Abstract

In this study, we examine two U.S. elementary teachers’ use of worked examples, representations, and deep questions in elementary mathematics lessons both before and after a project intervention designed to promote teaching improvement. This intervention was guided by a cognitive construct containing these three aspects, which were demonstrated via cross-cultural video lessons of both U.S. and Chinese teachers. We specifically analyze two teachers (Ann and Bea) who taught first-grade lessons on the topic of inverse relations. Although their lessons were rated similarly in year 1, the ratings of their lessons in year 4, post-intervention, showed different patterns of change. While Ann's use of worked examples and representations underwent no significant improvement, Bea spent more time unpacking a single worked example with a representational sequence fading from concrete to abstract (also called “concreteness fading”). Neither teacher showed improvement in asking deep questions. An analysis of the teachers’ responses to the project intervention revealed that their video noticing skills and personal takeaways were related to the areas of teaching where they improved. Other factors, such as teachers’ prior knowledge, beliefs, and textbook resources, also played a role in teaching changes. These findings shed light on a recent endeavor called the “science of improvement” in mathematics teaching, which seeks to document teaching changes and the underlying mechanisms that might induce these changes. Implications for both research and practice are discussed.

Promoting teaching improvement in elementary mathematics: A case with inverse relations

Despite a continuous effort to improve classroom teaching in the United States, “the core of teaching—the way teachers and students interact about content—has remained the same for a century or more” (Hiebert & Morris, 2012, p. 96). Studies of randomly selected videos of U.S. eighth-grade mathematics lessons from the Trends in International Mathematics and Science Study's (TIMSS) project have revealed that typical teaching in the United States has remained similar to traditional, procedure-based teaching styles that have been reported in the past (Jacobs et al., 2006). Comparison studies on elementary mathematics classroom teaching have revealed similar findings (Ding et al., 2019, 2021; Huang et al., 2019). Recently, the mathematics education field, inspired by research from medicine and health care (Berwick, 2008), is calling for a science of improvement in teaching that would document the teaching changes and their underlying mechanisms as part of the development of an intervention (Cai et al., 2019). This study aims to contribute to this endeavor by examining the teaching changes of two U.S. elementary teachers who participated in a project intervention based on cross-cultural videos. We acknowledge that although the current study is not inherently cross-cultural comparative, the cognitive constructs at the core of this project intervention were derived from previous cross-cultural studies and illustrated by cross-cultural videos (Ding, 2021; Ding et al., 2019, 2023). We seek to understand the successes achieved and challenges faced by the teachers who participated in our intervention and to determine the extent to which their teaching changes were related to their experiences during the project intervention. To situate the current study, we will first review the literature concerning the necessary changes in U.S. elementary classrooms and the relationship between current professional development (PD) efforts and the science of improvement. 
Then, we discuss the two primary features utilized by our project intervention: cross-cultural videos and a cognitive construct consisting of worked examples, representations, and deep questions (Pashler et al., 2007).

Literature review

Procedural-driven teaching in U.S. elementary mathematics

Results from international studies indicate that a large percentage of U.S. students are underperforming in mathematics when compared to their international counterparts (Programme for International Student Assessment [PISA], 2016), even as early as in elementary school (e.g., Cai, 2004; Li et al., 2008; TIMSS, 2016). Although various factors contribute to students’ low achievement (e.g., curriculum, parent support), the quality of mathematics teaching has been widely recognized to be one of the most critical factors (Hiebert & Stigler, 2004). Despite an ongoing debate in educational research about varied teaching styles (e.g., Anderson et al., 2000; Hmelo-Silver et al., 2007; Kirschner et al., 2006; Schmidt et al., 2007), the core of mathematics teaching in the United States remains procedurally driven (Hiebert & Stigler, 2004; Huang et al., 2019; Stigler & Hiebert, 1999). One effort to respond to the unsatisfactory learning results in K-12 classrooms has been the issuing of the Common Core State Standards for Mathematics (Common Core State Standards Initiative [CCSSI], 2010), which emphasize eight practices, including making sense of problems, reasoning abstractly and quantitatively, and constructing viable arguments. Some recent studies (e.g., Hill et al., 2018) have observed teaching improvement in urban elementary classrooms following the implementation of these practices. However, other studies (e.g., Huang et al., 2019) have indicated that teachers in U.S. elementary classrooms still largely focus on procedures. For instance, Barnett and Ding (2019) found that when teaching the associative property, teachers in grades 1–4 tended to emphasize computational strategies, with the underlying property rarely being made explicit. Additionally, although some lessons involved concrete contexts and abstract number sentences, there were insufficient links between these representations. 
More recently, a national survey with parents of school-aged children, teachers, and adults has shown that there is widespread dissatisfaction with the way mathematics is commonly taught in U.S. classrooms (Jimenez, 2023). These findings confirm the importance of the ongoing effort to promote teaching improvement in U.S. classrooms.

In this study, we focus on the teaching of inverse relationships between addition and subtraction (also called “additive inverses”), a critical topic for which research findings underscore the need for teaching improvement. The inverse relation is a fundamental mathematical idea that should be learned in elementary school (CCSSI, 2010). An understanding of the inverse relation contributes to one's full comprehension of the four arithmetic operations (Wu, 2011), algebraic thinking (Carpenter et al., 2003), and mathematical flexibility (Nunes et al., 2009). Students can initially learn this relationship through a fact family that is formed by a set of related addition and subtraction facts involving the same numbers (e.g., 7 + 5 = 12, 5 + 7 = 12, 12 − 7 = 5, and 12 − 5 = 7). In a similar vein, they can develop an understanding of this relationship through a group of inverse word problems, the solution of which forms what is known as a “fact family” (Carpenter et al., 2003; Howe, 2009). Moreover, students can learn this relation by applying it to find missing numbers, perform computations, and check answers (Baroody, 1987; Carpenter et al., 2003; Nunes et al., 2009). Despite the importance of the topic and the various ways in which it can be taught, students have often been found to lack understanding of this concept (Baroody, 1987; Ding & Auxter, 2017; Nunes et al., 2009; Torbeyns et al., 2009). This may be partially related to procedural-based classroom teaching that focuses on number manipulation (Ding et al., 2019, 2021). For instance, through an analysis of eight U.S. 
elementary expert teachers’ 32 lessons on inverse relations (Ding et al., 2021), we found that some teachers taught repetitive worked examples followed by irrelevant practice tasks; other teachers failed to help students understand the representational connections; still others focused on the use of keywords or provided direct explanations instead of using deep questions to elicit student explanations of inverse relations. These findings echo observations in other studies (Ding & Carlson, 2013), where teachers commonly expected students to generate a fact family based on the location of the numbers on a “fact triangle” flashcard. Without a conceptual understanding of the meaning of the number sentences, students often produce mistakes such as 7 − 12 = 5 and 5 − 12 = 7 (Ding & Carlson, 2013). These observations indicate a need for change in elementary mathematics teaching to support students with both procedural and conceptual knowledge.

Professional development and the science of improvement

There have been ongoing PD efforts to deliver instructional interventions capable of improving classroom teaching. Traditional research suggests the adoption of a linear approach, which consists of three phases: Researchers should first explore an intervention, demonstrate the effectiveness of the intervention, and conclude with a scaled-up implementation of the successful intervention (Borko, 2004). As such, traditional empirical studies in mathematics education have often explored interventions through randomized control trials (Blanton et al., 2015; Carpenter et al., 1989; Clements et al., 2011; Jitendra et al., 2013; Lewis & Perry, 2017). Despite the reported successes, such studies often lack detailed descriptions of how participants interacted with the interventions (Cai et al., 2019). As these authors themselves acknowledge, this area of uncertainty makes it difficult to guarantee the success of a given intervention when it is implemented in a classroom setting. Reviews of 1343 studies on PDs (Guskey & Yoon, 2009; Yoon et al., 2007) have revealed that teachers who receive substantial PD hours can boost their students’ achievement. However, out of these 1343 studies, only nine met the evidence standards of the What Works Clearinghouse and were included for detailed analysis in the authors’ findings. Similarly, while large-scale teacher surveys (Garet et al., 2001; Porter et al., 2000) have indicated that PD activities that focus on specific instructional methods can contribute to changing teachers’ practices, they also found that the average teacher's PD experiences did not have the potential to foster lasting teaching changes.

The educational research referenced above confirms the promise of PD interventions but also suggests that careful tracking of post-PD teaching is necessary to understand whether an intervention has promoted long-term teaching changes. To address these issues, there has been a recent call for an alternative research path in mathematics education, referred to as the science of improvement (Cai et al., 2019). This notion was developed in the field of medicine and found to be effective at improving the quality of healthcare systems. The primary feature of the science of improvement is that of incremental change (Berwick, 2008), established through an iterative process of “plan-do-study-act” (PDSA) with a concurrent analysis of the mechanisms that enable observed changes. This model is designed to address three fundamental questions: (a) What are we trying to accomplish? (b) How will we know that a change is an improvement? and (c) What change can we make that will result in improvement? (Langley et al., 2009). These critical questions, combined with the iterative PDSA process, distinguish this improvement model from traditional research, which is often linear in nature. Due to its rigorous nature in exploring fine-grained changes, the science of improvement has received increasing attention in educational research as a tool for enhancing teaching practices (Bryk, 2009; Lewis, 2015). Chen and Terada's (2021) recent development of an observation-based protocol for evaluating teaching practices in science classrooms exemplifies such an effort. In our study, we join in this effort by exploring possible incremental changes in the mathematics teaching of two elementary teachers who participated in an intervention based on cross-cultural videos.

Cross-cultural videos in enhancing teacher noticing

In general, video-based PD workshops were found to be effective in supporting teacher learning, especially in the area of teachers’ noticing skills (Borko et al., 2008; Clarke & Hollingsworth, 2002; Hollingsworth & Clarke, 2017; Santagata & Guarino, 2011; van Es & Sherin, 2008). Developing skills of noticing and interpreting student interactions is important for utilizing instruction that supports students’ mathematical reasoning and communication (CCSSI, 2010). The Learning to Notice framework (Van Es & Sherin, 2002) emphasizes three central characteristics of noticing: attending to what is important in a teaching situation (e.g., student thinking), connecting specific events to broader ideas, and utilizing content knowledge to interpret specific situations. However, teachers can have vastly different takeaways when watching the same videos. While some may notice surface features of a classroom (e.g., environment), others may attend to the essential aspects such as how teaching moves are used to promote students’ thinking (Van Es, 2011). In addition, while some teachers describe the features they notice, others provide knowledge-based reasoning such as explanations, interpretations, and predictions. These differences in the “what” and “how” dimensions of teacher noticing are indicative of different levels of teaching expertise (Seidel & Stürmer, 2014; van Es, 2011; van Es & Sherin, 2008) that are inseparable from teachers’ knowledge and beliefs (Clarke & Hollingsworth, 2002; Jacobs et al., 2010).

However, through effective guidance and support, teachers have been able to shift their noticing from surface to essential features of classroom interactions and increase their levels of reasoning (Amador et al., 2021; van Es & Sherin, 2008). Van Es et al. (2020) reviewed the literature to identify features and characteristics of video-based systems that support teacher learning. These features included aspects of audience, goals, video selection, task design, planning and facilitation, and learning assessment. Therefore, PD providers should consider the number and length of the selected video segments as well as the sequence of videos within a PD session that best supports teachers’ noticing skills. More importantly, the video tasks should be designed to scaffold teachers’ attention to noteworthy features related to student thinking and the pedagogical approaches that are utilized in the video.

Cross-cultural videos have been found to be particularly powerful for supporting teachers as they learn to notice. Prior studies (e.g., Ding et al., 2019; Huang et al., 2019) indicate that analyzing the differences and commonalities between U.S. and Chinese mathematics classroom teaching can provide opportunities for educators to learn from their international peers. For instance, Huang et al. (2019) studied the teaching of additive comparison problems in two first-grade classrooms. Both the U.S. and Chinese lessons focused on understanding comparison word problems using multiple representations. However, the U.S. lessons emphasized using representations to compute answers, whereas the Chinese lesson stressed using them to illustrate the structure of additive comparison. PD settings that incorporated cross-cultural videos offered the opportunity for teachers to make profound reflections about their own teaching and question their engrained beliefs (Jacobs & Morita, 2002; Kleinknecht & Schneider, 2013; Moran et al., 2017) when the teachers were asked to notice the subtle cross-cultural differences that affect students’ thinking. Because of the potential advantage of cross-cultural videos, TIMSS released 53 cross-cultural videos about eighth-grade mathematics and science lessons to support teacher learning (Richland et al., 2007). Ding et al. (2023) also subsequently reported on the power of cross-cultural mathematics videos in facilitating how teachers learn to notice. Teachers from different countries may notice different instructional aspects when viewing the same cross-cultural videos (Ding, Li et al., 2023). Miller and Zhou (2007) found that when watching the same mathematics lesson episodes, U.S. teachers tended to notice general pedagogical strategies, whereas Chinese teachers attended to more mathematics content. Additionally, the timescale and duration of video-based interventions (e.g., a single instance, a semester long, or between) should be considered. 
Amador et al.'s (2021) synthesis of the literature suggests that extending teachers’ learning experience over the course of a semester would be helpful for enhancing their noticing skills.

Prior studies on learning to notice through videos are informative. However, few studies have explored teachers’ follow-up classroom instruction to identify which aspects of instructional improvement, if any, are observed. For instance, there were no reports on whether teaching changes were exhibited by those who watched the TIMSS videos. In this study, we analyze teaching changes for two U.S. elementary teachers who participated in a cross-cultural video-based intervention to further this line of research.

A cognitive construct: Worked examples, representations, and deep questions

To promote teacher changes through video learning, it is important to orient teachers’ noticing toward instructional features that promote student thinking (van Es et al., 2020). In this study, our video-based intervention focused on three aspects (worked examples, representations, and deep questions) recommended by the Institute of Education Sciences (IES) in the United States for organizing classroom instruction to improve student learning (Pashler et al., 2007). These recommendations were concrete and applicable principles that emerged from research findings on learning and memory. Our recent work (Ding, Byrnes et al., 2023a) found that teaching aligned with these three aspects significantly predicted students’ learning of inverse relations. As such, we anticipated that a video-based intervention that focused on teachers noticing these three instructional aspects could serve as a path to enhancing mathematics teaching in elementary classrooms.

Worked examples

Worked examples refer to instructional tasks with solutions given, which help students develop a mental schema that decreases their cognitive load during problem solving (Gog et al., 2011; Sweller & Cooper, 1985). Classical research on worked examples has mainly been conducted in laboratory settings where completely solved problems were provided to students. This process is relatively passive and thus has been criticized as being more akin to direct instruction (Kirschner et al., 2006). Recent research on worked examples has taken student involvement into consideration. For instance, Renkl et al. (2004) invited students to complete partially filled steps of their worked example's solution. However, empirical studies have found that many U.S. teachers tended to teach a set of repetitive worked examples instead of unpacking a single example in depth (Ding & Carlson, 2013). Additionally, worked examples should be interleaved with relevant practice tasks to reinforce and strengthen student learning (Pashler et al., 2007).

Representations

Concrete representations, such as real-world situations, can help activate students’ personal experiences and make abstract mathematics more comprehensible (Gerofsky, 2009; Resnick & Omanson, 1987). However, concrete representations often contain irrelevant information that may distract students from the targeted concept (Goldstone & Son, 2005). Thus, it is also important to link concrete and abstract representations that present the same mathematical concept in purely symbolic and numerical terms (CCSSI, 2010; Kaminski et al., 2008; Pashler et al., 2007). Recent research has found that a sequence of examples that begin with concrete representations and transition to the abstract (called “concreteness fading”) is the most beneficial progression for student learning (Fyfe et al., 2015; McNeil & Fyfe, 2012). During this process, semi-concrete representations such as manipulatives (e.g., cubes) and schematic drawings (e.g., number lines, bar models) can help model problem situations and serve as a transition from concrete to abstract (Pashler et al., 2007). However, there are several ways that teachers have been observed to use representations that do not maximize their potential as an educational tool. Some U.S. teachers have favored students’ using semi-concrete representations as problem solutions instead of tools to solve the problems (Cai, 2005). Other teachers have encouraged students to use both concrete and abstract representations without establishing connections between the two (Ding & Carlson, 2013). Still, other teachers used representations for the purpose of finding computational answers rather than helping students make sense of the problem structures (Ding et al., 2019; Huang et al., 2019).

Deep questions

Deep questions refer to queries that target underlying concepts and structures (Craig et al., 2006; Pashler et al., 2007). These questions should be open-ended in nature (Chen et al., 2017) and require students to make inferences that go beyond the presented materials (Morris et al., 2020). When teachers ask deep questions, students are prompted to examine relationships, identify patterns, construct explanations, and resolve uncertainty, resulting in a high level of cognitive engagement and deep learning (CCSSI, 2010; Chen et al., 2017; Chen & Techawitthayachinda, 2021; Chi, 2000; Morris et al., 2020). Practices that meet the standards of deep questions include asking questions that encourage deep explanations, typically starting with how, why, why not, what if, what if not, how does X compare to Y, what is the evidence for X, and why is X important? (Pashler et al., 2007).

However, prior research has consistently reported that asking deep questions (especially follow-ups) is a considerable challenge in classroom teaching. For instance, even in Cognitively Guided Instruction (CGI) mathematics classrooms, many teachers found it challenging to ask a probing sequence of specific questions to facilitate students’ thinking instead of stopping after a single question (Franke et al., 2009). Studies (Mason, 2000) have also reported a phenomenon of teacher questioning called “funneling,” in which teachers begin with a deep question but then gradually reduce the depth of their questions, often to the point of giving full explanations to students. Nevertheless, recent research has found that with substantive support, teachers may improve their deep questioning skills. Chen et al. (2017) analyzed 30 science lessons taught over a 4-year span and found that teachers could be trained to shift away from asking questions that require low-level cognitive responses (e.g., reproducing the correct answers) toward asking questions that require higher-level cognitive responses (e.g., providing longer “responses to defend, challenge, synthesize, and articulate claims and evidence,” p. 387).

The current study

In this study, we aimed to explore the teaching changes of two elementary teachers who participated in a project intervention where the aforementioned elements of the cognitive construct were illustrated by cross-cultural video clips of U.S. and Chinese expert teachers. To achieve this overarching research goal, we asked two research questions:

1. What changes do teachers make in terms of using worked examples, representations, and deep questions in their lessons?
2. How do teachers respond to the project intervention that aims to promote teaching changes in using worked examples, representations, and deep questions?

Method

This study employs a case study research approach (Creswell & Poth, 2018). The case is defined as the event of teaching change demonstrated during the inverse relation lessons that occur before and after our project intervention. Two teachers were included in this case study for comparison, with the goal of understanding what aspects of teaching were changed and how these changes may relate to the targeted intervention.

The larger project

Although the current study is not cross-cultural comparative (as we only studied two U.S. teachers), our video-based project intervention benefited from cross-cultural insights. In particular, the current study is part of a five-year cross-cultural study supported by the National Science Foundation in the United States. The goal of the larger project was to identify algebraic knowledge for teaching (AKT) in elementary schools, drawing insights from expert teachers’ lessons in the United States and China.

The overall design of the larger project is design-based research, which is aligned with the science of improvement's PDSA model (Langley et al., 2009). In particular, during years 1 and 2, we invited expert teachers to plan and teach our selected lessons in their respective countries (the “Plan and Do” in PDSA). During year 3 of the project, we conducted systematic video analysis based on the teachers’ use of worked examples, representations, and deep questions. This culminated in the development of our project intervention materials, which were then shared with teachers through professional development at the end of the third year (the “Study” phase in PDSA). Finally, we encouraged teachers in each country to re-teach the lessons, incorporating the learned intervention in year 4 of the project (the “Act” phase in PDSA).

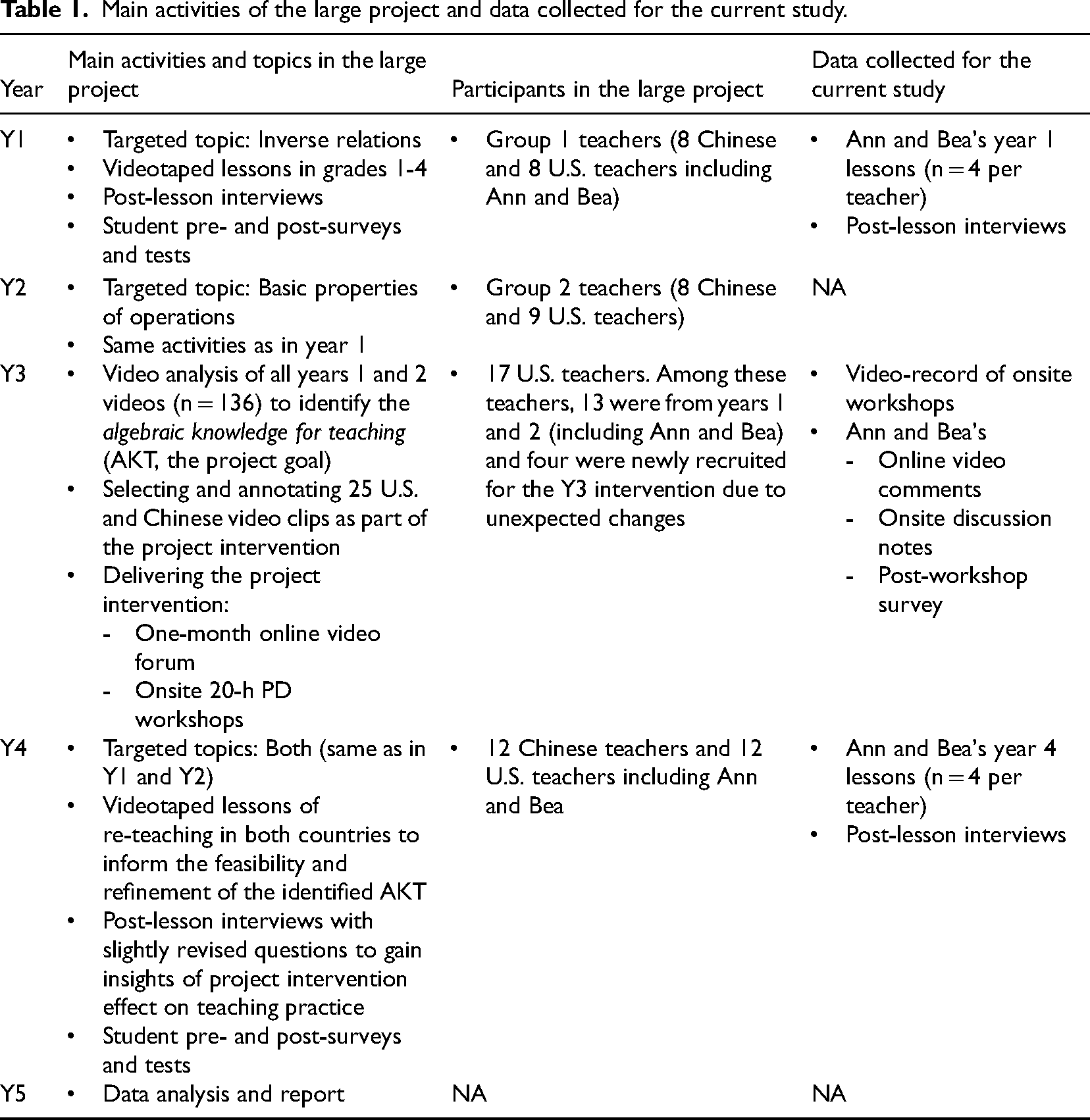

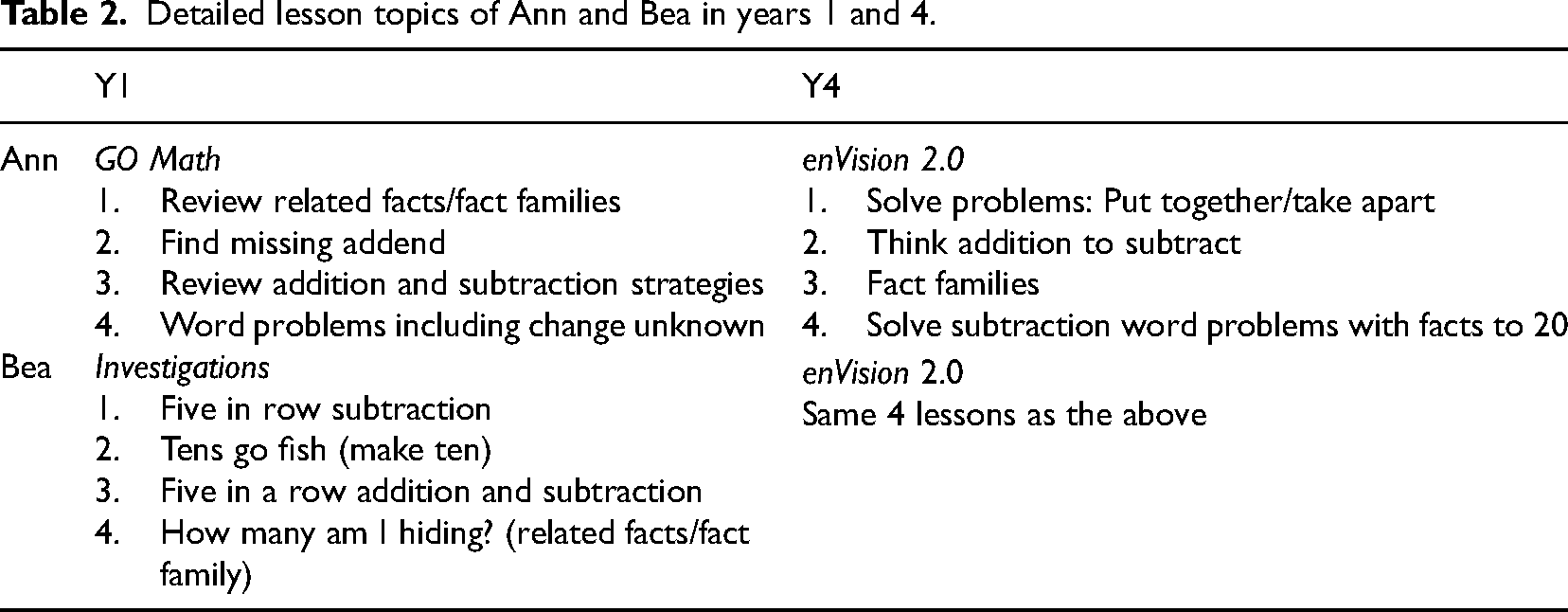

Table 1 presents the main activities of each year along with the respective teacher sample. In year 1, a group of elementary teachers (China: N = 8; U.S.: N = 8) taught lessons on inverse relations. In year 2, a different group of teachers (China: N = 8; U.S.: N = 9) taught lessons on the basic properties of operations. All teachers from both years taught grades 1–4. Year 3 was devoted to video analysis and project intervention. Due to unexpected project changes, we recruited one more Chinese teacher who taught the inverse relation lessons, resulting in a total of 17 Chinese and 17 U.S. teachers in this project. In year 4, there were 12 teachers in each country who taught post-intervention lessons (see Table 1).

Table 1. Main activities of the large project and data collected for the current study.

Given that this large project aimed to study expert teachers, we recruited teachers with at least 10 years of teaching experience and with good teaching reputations. All Chinese teachers received national- and/or province-level teaching awards. All U.S. teachers were recommended by the school district and/or principals, and five of them were National Board-Certified Teachers. Each teacher taught four lessons, totaling up to 136 videos on either inverse relations (year 1 topic) or the basic properties (year 2 topic). In year 4, we collected 96 post-intervention lessons on the same topics that had been previously taught.

For the current study, we examined how teachers may or may not have improved their teaching after participating in our intervention. For in-depth analysis, we employed purposeful sampling (Mertens, 2020) by selecting two first-grade teachers in the United States, Ann and Bea (see Table 1). Across our project, we observed teachers who made an effort to incorporate our project interventions into their teaching and those who did not. Our observations of Ann and Bea's participation were representative and together serve as contrasting cases (Mertens, 2020). We chose to study teachers who reside in the United States because the literature (Barnett & Ding, 2019; Hiebert & Stigler, 2004; Huang et al., 2019) indicates an urgent need for teaching improvement in the United States. Additionally, studying teachers from the same country and grade-level served to limit possible distracting factors such as cultural and mathematical content differences. By identifying possible incremental changes and studying their underlying mechanisms, we hope to contribute to furthering the science of improvement in elementary mathematics teaching.

Both Ann and Bea were Caucasian and taught in the same large, high-need, urban school district on the East Coast of the United States. This school district has a student population with 86.19% of its students being non-White, 85.00% being economically disadvantaged, 10.47% being English language learners, and 14.05% receiving special education services. At the time of joining the project, both teachers had taught for over 15 years in different schools. Although they were not National Board-Certified Teachers, both were highly recommended by their principals. Nevertheless, both teachers’ average video scores ranked below average among the participating U.S. teachers (Ding et al., 2019) for the inverse relations lessons they gave during year 1 of the project. Subsequently, both teachers participated in our year 3 project intervention and re-taught their lessons in year 4. Given that they had similar experiences in the project, we selected these two teachers to explore possible teaching improvement by comparing their year 1 and year 4 videos. We purposefully chose two teachers whose lessons demonstrated similar use of elements from the cognitive construct (with low ratings) prior to the intervention, but who experienced different outcomes afterward. We then sought to contrast their experiences during the intervention to determine how the features they attended to and their personal beliefs about math pedagogy may have affected their outcomes. Note that no video data from year 2 was used in the current study because our year 2 project addressed a different mathematical topic.

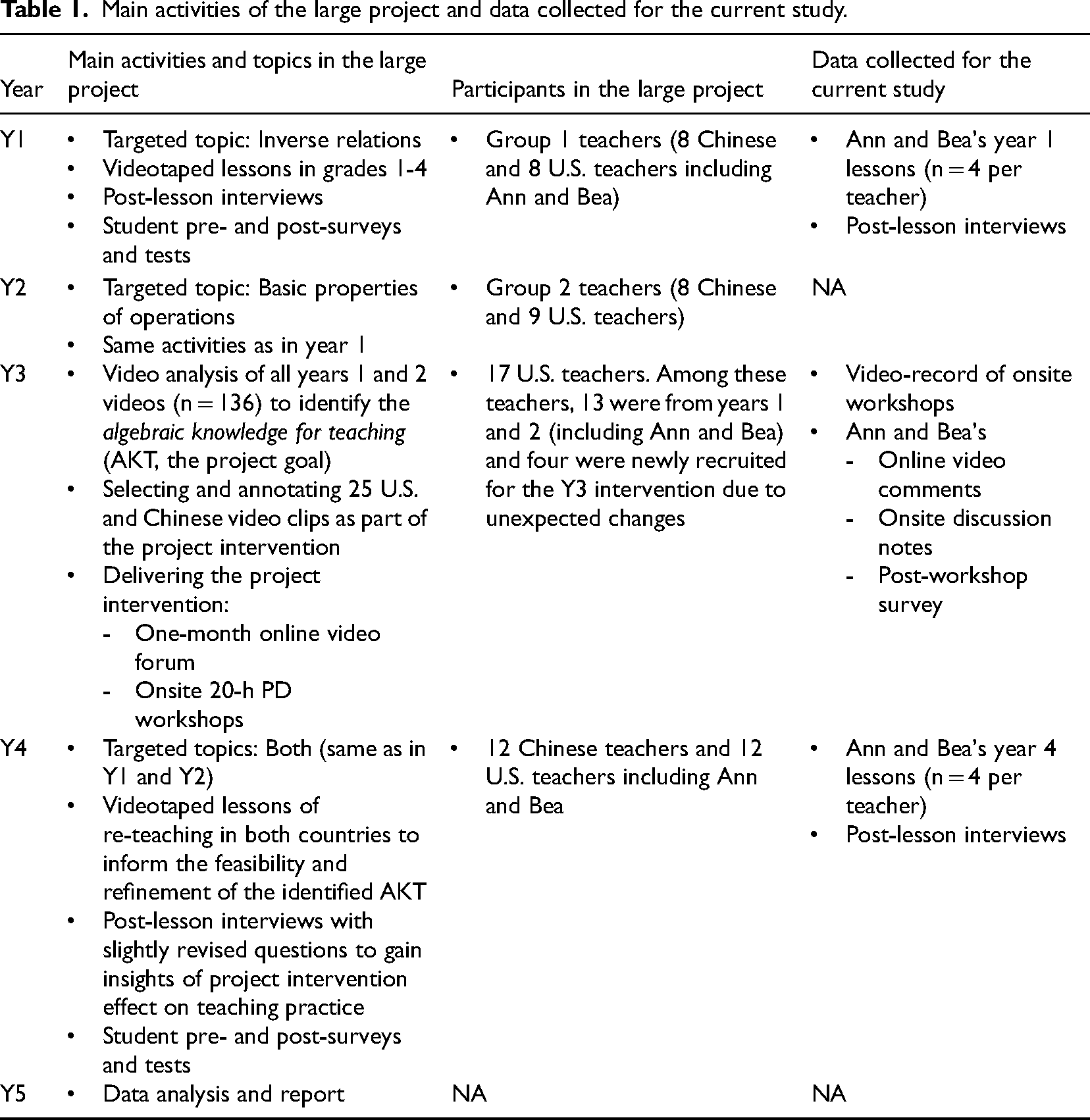

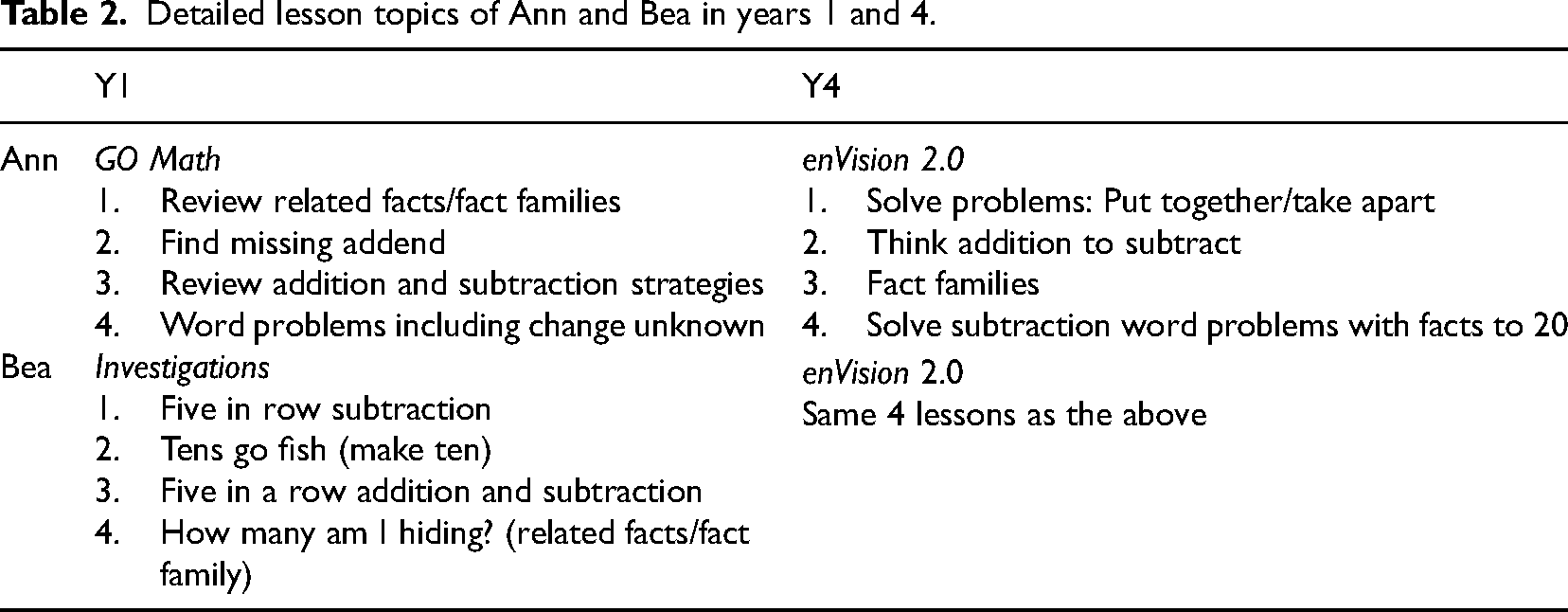

Instructional tasks

Table 2 lists the detailed textbook lessons taught by the teachers. According to the literature, typical additive inverse lesson tasks include fact families/related facts, finding missing numbers, using addition to do subtraction, and initial unknown problems (Baroody, 1987; Carpenter et al., 2003; Nunes et al., 2009). In our project, we used the literature to identify representative textbook lessons in consultation with the participating teachers. In year 1, Ann used the GO Math textbook while Bea used Investigations. For these selected lessons, GO Math primarily used semi-concrete models such as connected cubes and fact triangles to model the additive inverse relations. Investigations mainly used games to assist student learning and occasionally interspersed these with story problems. In year 4 of the project, there was a district-wide curriculum change and the participating schools switched to enVision Math 2.0, resulting in both teachers teaching the same four lessons (see Table 2). Most of the selected lessons contained word problems as part of the worked examples and all the lessons provided opportunities to discuss inverse relations. For these reasons, we felt comfortable making within-teacher and across-teacher comparisons at a fine-grained level despite the curricular changes.

Table 2. Detailed lesson topics of Ann and Bea in years 1 and 4.

Project videos and teacher interviews in years 1 and 4

To answer our research questions, we collected relevant data from Ann and Bea across the course of the project (see Table 1, rightmost column). Four lessons per teacher were videotaped in both years 1 and 4 (before and after the project intervention), resulting in a total of 16 observations. The average lengths of videotaped lessons in years 1 and 4 were 50 and 53 min respectively for Ann and 57 and 31 min respectively for Bea. Bea's Y4 lessons were likely shortened due to her position change—her school assigned her to be a third-grade teacher that year and thus she had to “borrow” a first-grade class to teach for our project.

Post-instructional interviews were conducted right after each lesson to elicit reflections about teachers’ use of worked examples, representations, and deep questions. Example questions included, “What do you think about the questions you asked in today's class?” or “Were they helpful for eliciting students’ deep understanding of mathematics? Explain.” In particular, with the year 4 interviews, we purposefully added questions about teachers’ implementation of the strategies discussed in the project intervention. This included a general question: “During this lesson, did you incorporate any ideas from the summer workshop? If so, please elaborate on what you’ve incorporated.” Additionally, we asked a specific version of this question for each element of our cognitive construct (e.g., Did your use of representations incorporate any strategies from the summer workshop?). Furthermore, due to textbook changes, we added an interview question asking teachers whether the new textbook materials were used in the videotaped lessons.

Project intervention in year 3

The project intervention took place at the end of year 3 of the project (see Table 1), and its design was informed by the first two years of videotaped lessons. The intervention was focused on illustrating the three aspects of our cognitive construct via cross-cultural videos. First, we carefully selected 25 U.S. and Chinese video clips (13 U.S. and 12 Chinese) on either inverse relations (15 clips) or basic properties of operations (10 clips). For each subtopic covered (e.g., fact families), there were matching U.S. and Chinese clips with an average length of about 10 and 13 min, respectively. For instance, for the topic “expressing inverse relations as a fact family,” we had both U.S. videos (e.g., “US_G2_T3_FactFamily”) and corresponding Chinese videos (e.g., “China_G1_T2_FactFamily”). Although these video clips have matched topics, the overall instructional approaches (especially in the use of representations and questioning) across the two countries are different (see the details in Ding et al., 2019; Ding, Li et al., 2023b) and thus we consider this set of U.S. and Chinese videos as “cross-cultural videos.” Closely matched topics were chosen to enable teachers to make comparisons and help avoid construct-irrelevant variance due to different content (Dreher et al., 2021). We selected each video with an eye toward our targeted cognitive construct. As such, each video included an episode of teaching with a worked example that lent opportunities for viewers to discuss the teachers’ use of representations and questioning. Each video clip was translated and annotated with subtitles in the language of the peer country.

Next, we delivered the intervention to all participating teachers through an online forum with a subsequent summer PD workshop. All 25 annotated video clips were uploaded to two functionally equivalent online platforms (YouTube and Youku) so that U.S. and Chinese teacher participants (including Ann and Bea) could watch and comment on the same set of videos. Teachers were encouraged to watch the videos, identify observations that interested them, and make comments based on the given prompts (elaborated below). We did not require teachers to watch all of the videos due to time commitment concerns. For each video clip observed, teachers were expected to respond to the following three open-ended prompts: (1) What do you notice? What stood out to you? (2) What questions do you have in terms of this video or in general? and (3) Other comments? Teachers could read each other's comments within each platform, if interested. This online video forum lasted for one month, with five email reminders sent to the teachers. Ann commented on 10 video clips and Bea on three. Ann's comments were generally brief descriptions of what was observed, whereas Bea's comments were much longer and involved both descriptions and reasoning. Detailed evidence of noticing from the cross-cultural videos will be reported in the Results section.

After the online video forum, we conducted a three-day onsite summer workshop (20 h total) with the U.S. and Chinese teacher participants (in their own nations, respectively). The main contents for each workshop were documented in six PowerPoints with modifications for the United States and China based on their specific needs. The majority of the workshops were video recorded and the format of the workshop had the following structure: (a) an introduction to worked examples, representations, deep questions (Pashler et al., 2007) and the strategy of concreteness fading (McNeil & Fyfe, 2012), (b) four topic-specific sections on teaching and learning inverse relations or the basic properties of operations, and (c) a concluding session. In the introduction section, features from the targeted instructional strategies (e.g., concreteness fading, deep questioning) were introduced to teachers as recommended by the literature. For instance, the common stems of deep questions were shared with teachers. In each topic-specific section, we replayed a few parallel video clips from both countries. In particular, we put the teachers in small groups and asked them to share and elaborate on what they noticed about a teacher's use of representations and questioning, or what they might have previously shared through the online video forum. All teachers were actively engaged in group discussions and group representatives reported what each small group noticed. Based on the teachers’ discussions, the PD providers prompted teachers for further reflections on the targeted aspects. When a deep question was identified, we discussed what made that question deep and what student explanations were elicited. In contrast, when a video lacked deep questions, we encouraged teachers to think of possible questions by recalling what they had learned from the cross-cultural videos.
In the concluding session, teachers were asked how they could implement what they had learned for the upcoming year using their new textbooks. We purposefully grouped teachers based on their grade levels so that they could share their thinking about similar concepts. We encouraged teachers to consider using the teaching strategies that the workshop emphasized during their lesson planning to maximize their students’ learning opportunities. At the end of the workshop, a written survey was distributed to teachers asking, “What have your learned that you feel is useful and may try out in your own classrooms?” Teachers’ survey responses and a few post-email responses were collected. For the current study, we retrieved the workshop recordings, identified Ann and Bea's onsite responses whenever possible, and collected their responses to our post-workshop survey.

Data analysis

Coding and analyzing project videos

To identify teaching changes, we coded Ann and Bea's years 1 and 4 videos and made comparisons within and across the videos. Below we introduce our coding framework, coding examples, procedures, and reliability checking.

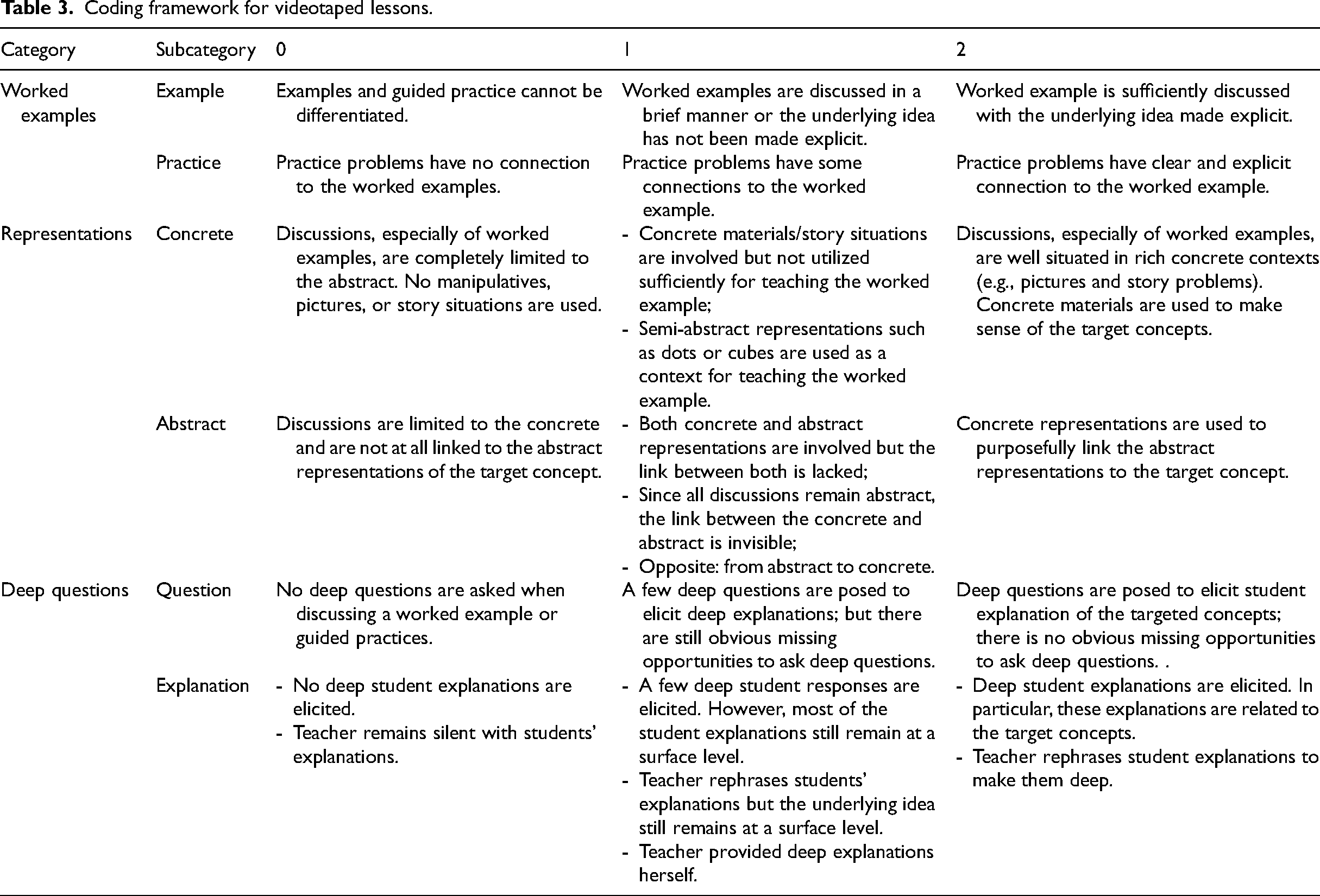

Coding framework. The coding framework was modified from our prior study on teachers’ lesson planning (Ding & Carlson, 2013). As seen in Table 3, the main elements are the three aspects of the cognitive construct with each aspect further divided into two subcategories: worked examples (example, practice), representations (concrete, abstract), and deep questions (question, explanation). For an ideal lesson, we expected the teacher to (a) pay sufficient attention to at least one worked example, followed by relevant practice problems, (b) situate the lesson in a concrete context, which would be gradually faded into abstract representations, and (c) ask deep questions to elicit students’ self-explanations of the targeted concept. To obtain a general sense of the lesson quality, we used a 0–2 scale to score each subcategory of each lesson, with “2” indicating that all expected elements of the construct were utilized fully and “0” indicating the lack of expected evidence. Some codes unavoidably incorporated the authors’ subjective interpretations. For instance, we evaluated whether a worked example was sufficiently unpacked based on the length of the worked example. We were aware that “length” is not equivalent to quality; yet, an over-brief worked example did not lend itself to in-depth discussion. Therefore, we assigned a score of 2 to lessons containing a worked example of at least 8 min, the average length of all worked examples in our year 1 U.S. videos (Ding et al., 2019).
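As a rough illustration of the length criterion described above, the following sketch checks whether a lesson's longest worked example reaches the 8-min threshold. It models only that one criterion; the conditions separating scores of 0 and 1 are not specified here and are not modeled. The function name and constant are hypothetical, and the durations are those reported elsewhere in this article.

```python
# Threshold stated in the coding framework: the average worked-example
# length (in minutes) across the year 1 U.S. videos.
Y1_US_AVERAGE_MIN = 8.0


def meets_length_criterion(example_minutes, threshold=Y1_US_AVERAGE_MIN):
    """Return True if the longest worked example lasts at least `threshold` minutes.

    Hypothetical helper: it covers only the length criterion for a score of 2,
    not the full rubric.
    """
    return max(example_minutes) >= threshold


# Durations (in minutes) as reported in this article.
bea_y1_l2 = [5.5, 6, 5.5]   # three short worked examples
beach_ball_y4_l2 = [18]     # the Y4-L2 worked example in the coding example

print(meets_length_criterion(bea_y1_l2))
print(meets_length_criterion(beach_ball_y4_l2))
```

Length serves here only as a proxy for how thoroughly an example was unpacked, which is why the framework pairs it with the representation and questioning codes.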

Coding framework for videotaped lessons.


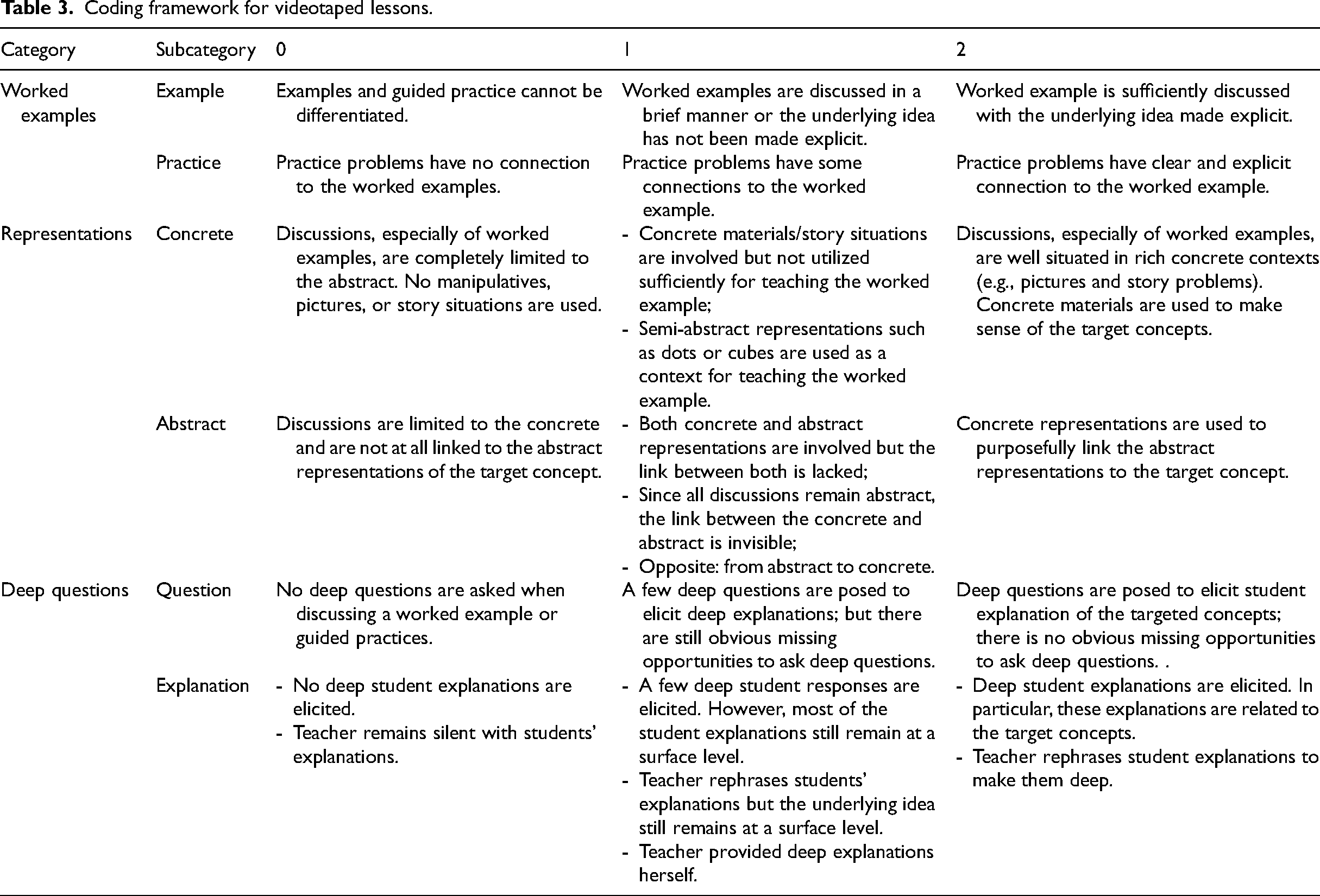

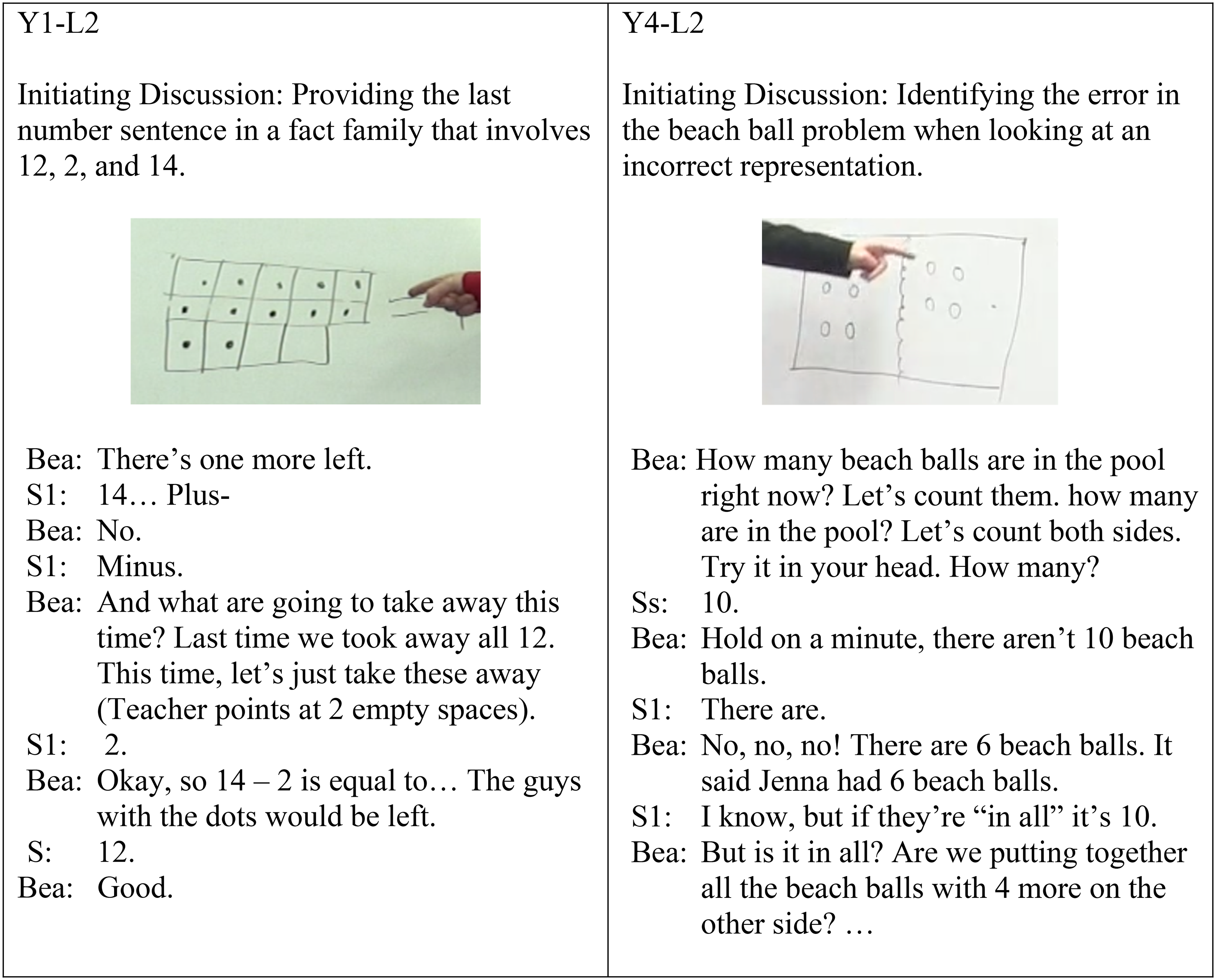

Coding examples. To illustrate how we coded the lessons, we will analyze Ann and Bea's lesson 2 from year 4 (briefly: Y4-L2). Both teachers taught the same lesson titled “Think addition to subtract” using the same example task, “Jenna has 6 beach balls, 4 blew to the other side of the pool. How many beach balls are left?” The textbook expected students to solve this task using subtraction 6 – 4 = ? and then consider the related addition fact 4 + 2 = 6 to find the answer. Both teachers spent 18 min on this worked example, exceeding the year 1 average. Thus, we scored both teachers’ examples as 2, indicating that both teachers spent sufficient time unpacking the worked example. Ann's lesson contained two brief practice tasks about related facts; however, since the majority of her practice time was devoted to a domino game that only utilized subtraction we scored her practice as 1. Bea's lesson contained two tasks that directly related to the worked example (writing related facts) and thus she was scored as 2 for her practice problems.

For Ann's lesson, both representations (concrete and abstract) were scored as 1 while both of Bea's representations were scored as 2. For the beachball problem, both teachers used the representations in different ways. For instance, students in both classes misrepresented the story situation as illustrated in Figure 1(a), resulting in the incorrect solution, 6 + 4 = 10. To address this mistake, Ann guided students to draw a semi-concrete bar diagram (see Figure 1b) without referencing the context of the problem to clarify the confusion. This led to the students making further abstract mistakes (6 + 4 = 2, 4 + 2 = 10) which will be elaborated upon below. In contrast, Bea directed her students back to the beach ball context to deduce whether 6 + 4 = 10 was relevant to the original problem. Students suggested that since four balls blew away, they could draw them on the right side of their diagram and erase the balls on the left (see Figure 1c). This led to students’ self-correction of mistakes and the correct transition from concrete to abstract (6 – 4 = 2 and 4 + 2 = 6).

Representations in both teachers’ Y4-L2. The picture in “a” is a reconstruction by the authors. a. Student representational mistake in both classes. b. Ann's representation. c. Bea's representation.

In terms of deep questions, Ann's typical question was, “What was your strategy?” which elicited student explanations of procedural steps. In addition, when facing the aforementioned student mistake, Ann suggested, “If we use addition to solve subtraction…, our numbers should be the same.” This suggestion was meant to imply that the numbers 6, 4, and 2 needed to be a part of the addition sentence, but inadvertently led students to change their initial response of “6 + 4 = 10” to “6 + 4 = 2.” Ann then modeled the impossibility of 6 + 4 = 2 using cube manipulatives. We therefore scored Ann's questioning and explanations as 0 because her choices reinforced a procedural focus on computational answers rather than on quantitative relationships. In contrast, Bea posed several open-ended questions such as, “This is a great mistake. Does anyone see why?” and “What is the problem with 6 right here and 4 right there?” These questions allowed for a greater depth of student responses than Ann's approach did. However, once Bea had concluded her discussion of how to set up the beach ball diagram, her questions focused more on computation and often elicited single-word responses. Because of her lack of consistency, Bea's questions and explanations were both rated as 1.

Coding reliability. The first author, who developed the coding framework (Ding & Carlson, 2013), had experience coding all the project videos and trained the other two co-authors. Using Ann and Bea's years 1 and 4 videos, each co-author independently watched and scored the eight lessons (two lessons per teacher each year) and compared their scores with the first author, resulting in initial reliability of 85% and 75%, respectively. Discrepancies were discussed. This ongoing process resulted in a shared understanding of the coding process. Next, each of the authors independently coded the rest of the videos for both Ann and Bea. The scores were compared and discussed until an agreement was achieved. Additionally, we summarized our observations of each teacher's instructional patterns within each observed year and across both years, as well as comparisons/contrasts between the two teachers.
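One common way to read reliability figures such as those above is as simple percent agreement between two coders. The sketch below assumes that reading; the agreement statistic actually used is not specified here. The function name `percent_agreement` is hypothetical and the scores are invented for illustration, not the study's data.

```python
def percent_agreement(coder_a, coder_b):
    """Percentage of items on which two coders assigned the same score."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("score lists must be non-empty and of equal length")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return 100.0 * matches / len(coder_a)


# Invented 0-2 scores for 20 coded subcategories (NOT the study's data).
first_author = [2, 1, 1, 0, 2, 2, 1, 0, 1, 2, 2, 1, 0, 0, 1, 2, 1, 1, 2, 0]
co_author = [2, 1, 0, 0, 2, 2, 1, 1, 1, 2, 2, 1, 0, 0, 1, 2, 2, 1, 2, 0]

print(percent_agreement(first_author, co_author))
```

Percent agreement does not correct for chance agreement, which is one reason discrepancies were also resolved through discussion.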

To triangulate our video analysis, we qualitatively coded teacher interviews after each lesson in both years 1 and 4. Using the transcripts, we identified instances where the teachers reflected on their use of worked examples, representations, and deep questions. We then wrote memos as side comments on each of the aspects based on a teacher's typical responses. These reflections provided rationales for the teachers’ instructional choices and were used to determine the validity of our inferences about any observed patterns. The interview data in year 4 also provided each teacher's input regarding the extent to which the project intervention made an impact on their teaching. For instance, when Ann was asked if her questioning in Y4-L2 had incorporated any strategies from the summer workshop, she responded, “Probably asking them to explain was a strategy, although I’ve done that before.” Based on this, we added a comment, “It seems she did not learn or apply new questioning techniques.”

Coding and analyzing project intervention

To understand the factors behind the teaching changes, we analyzed teacher responses to the project intervention. First, we qualitatively explored the teachers’ video comments focusing on the “what” and “how” dimensions (van Es, 2011; van Es & Sherin, 2008) with a focus on whether they attended to surface or essential teaching features (e.g., student thinking, classroom instruction that support student thinking) and whether they provided simple descriptions or knowledge-based reasoning (Seidel & Stürmer, 2014). For each teacher's video comment, we documented the results using two dimensions (what and how), along with example responses. Note that for the “what” dimension, while teachers might notice many aspects, we hoped to see whether they would notice the elements in our cognitive construct (e.g., worked examples, representations, deep questions). A similar process occurred with analyzing teachers’ onsite video comments which we relistened to while jotting down relevant comments. Finally, teachers’ post-intervention surveys from the summer workshop were read and coded with the same areas of focus.

Based on the above data, we inferred possible connections between what each teacher attended to during the intervention and whether she achieved the outcome we desired from the intervention. We also compared our observed connections with teachers’ year 4 interviews in which they reflected upon how they implemented strategies from the summer workshop.

Results

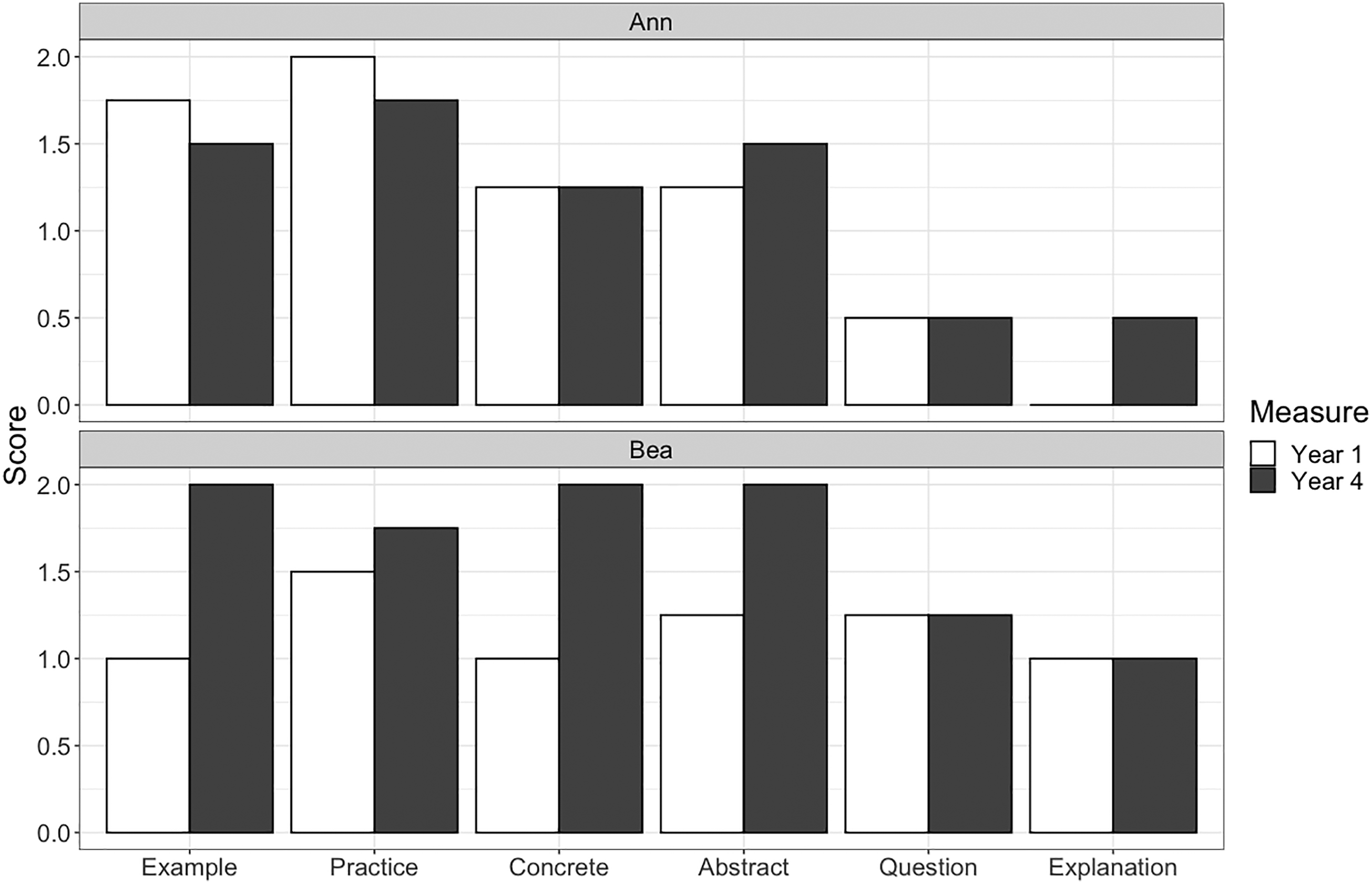

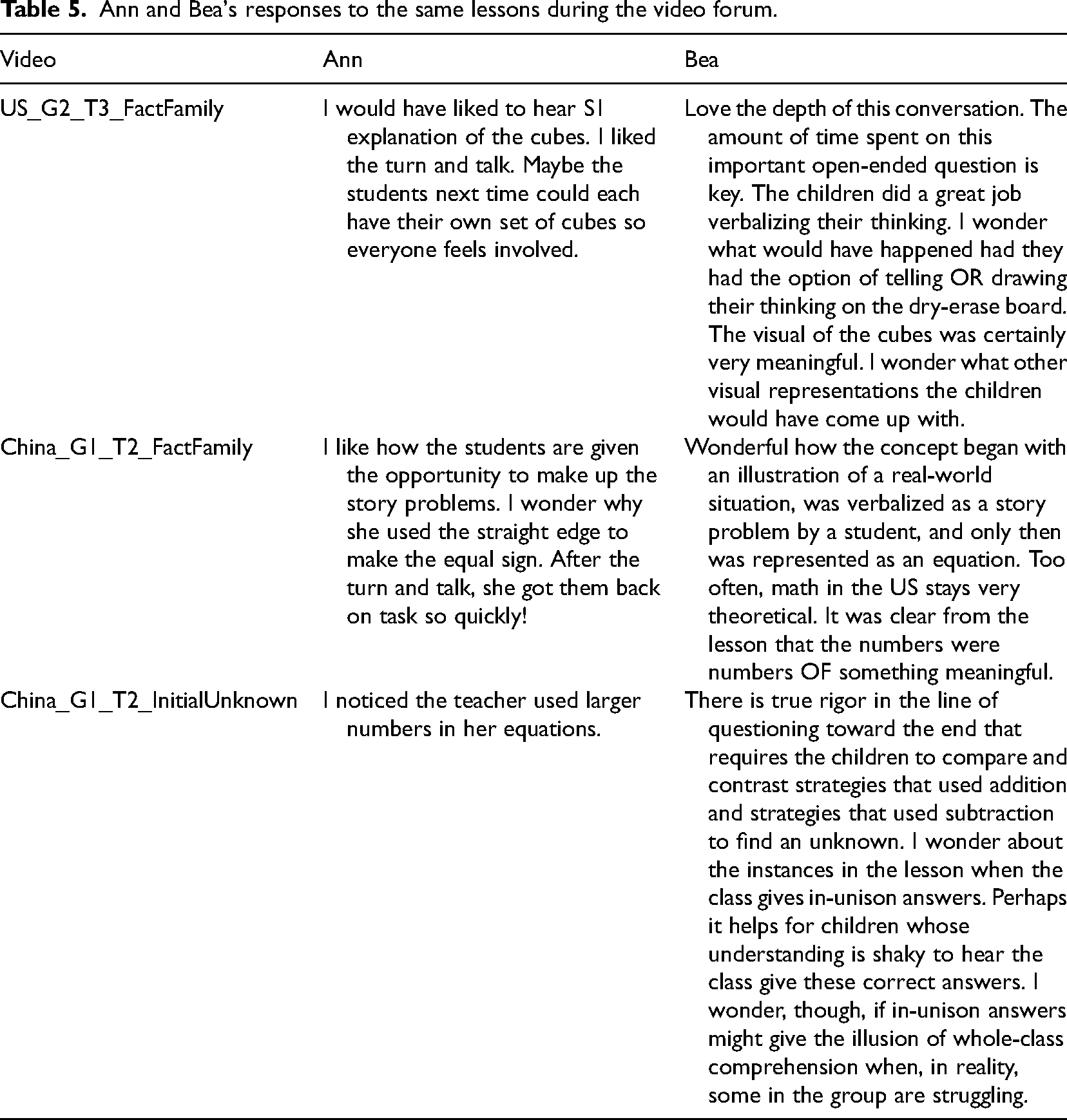

Figure 2 shows the average scoring in each of the coding categories for both teachers in years 1 and 4 (briefly, Y1 and Y4). By comparing the category averages between Y1 and Y4, we can get a sense of the overall improvement that occurred after the Y3 intervention. Due to the limited sample size, we did not conduct statistical tests to identify what amount of change could be considered significant. Moreover, the scores are not intended to be a quantitative measure of teaching quality but rather an indicator of whether certain desired instructional priorities were observed. In the categories of examples and representations, Bea's scores indicated a clear increase in using worked examples and relevant practices, employing concrete representations, and linking the concrete to the abstract. In contrast, Ann's score for using worked examples decreased, and no changes occurred for using concrete contexts. With regard to questioning practices, there were few observed changes for either teacher. Although Ann's lessons in year 4 seemed to contain more deep explanations than in year 1, Bea was rated higher than Ann in her overall questioning usage. Below we first compare the lessons of each teacher in years 1 and 4, addressing our first research question regarding whether teachers made changes in their use of worked examples, representations, and deep questions. We then report the teachers’ responses to the project intervention in year 3 in order to address our second research question.

An overview of Ann and Bea's implementation of each construct during years 1 and 4.

Ann's overall instructional style appeared to be relatively stable. Ann indicated in her post-intervention interviews that when changes did take place, those adjustments were likely related to curriculum changes as opposed to PD intervention.

Worked examples

Ann's average score in worked examples decreased from 1.75 to 1.5. During Y1, she unpacked at least one worked example in each lesson except for L3, which contained multiple short examples. However, during Y4, she had two lessons (L1, L3) in which her longest worked example came in the form of interactive videos provided by the textbook publisher and took 6 and 4.5 min, respectively. Despite this slight difference across the years, Ann consistently used multiple worked examples per lesson. Although she used more examples in Y1 lessons (about 4 per lesson) than in Y4 (about 2 per lesson), her choice of using fewer examples in Y4 seemed to be motivated by the changes in curricular requirements. In the interview following the beach ball lesson, Ann mentioned feeling constrained by her textbook, “They don’t give a lot of examples. They give two that we are supposed to work on together. They’re supposed to go back to their seat and do their own stuff. It's not enough.”

Although nearly all practice problems were aligned to worked examples, we identified a slight decrease in Ann's practice problem scores (from 2 to 1.75) due to the Y4-L2 lesson. Her use of dominos (e.g., This side is 4, this side is 2. I want to know how many dots are different. What is the difference?) led to several issues. First, these practice exercises were structured as comparison problems, as opposed to the takeaway problems from the worked example (CCSSI, 2010). Second, Ann directed her students to solve by “taking away” cube manipulatives rather than making use of the inverse understanding targeted by the worked example. These observations may suggest limitations of teachers’ knowledge about the basic additive word problem structures, which were discussed using CCSSI's (2010) Table 1 (p. 88) in our summer PD. However, the initial study did not assess teachers’ learning gains in this content knowledge area, and we have identified this as something to be improved in future PD efforts.
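The arithmetic behind a category average such as 1.75 can be sketched as follows. The Y4-L2 practice score of 1 is reported in the coding example. The scores of 2 for the other three Y4 lessons are an inference rather than reported values: assuming whole-number scores on the 0–2 scale, they are the only values consistent with an average of 1.75 across four lessons. The variable names are illustrative.

```python
from statistics import mean

# Ann's Y4 practice scores by lesson.
# L2 = 1 is reported in the coding example; L1, L3, and L4 = 2 are INFERRED
# from the reported average of 1.75, assuming whole-number scores.
ann_y4_practice = {"L1": 2, "L2": 1, "L3": 2, "L4": 2}

average = mean(list(ann_y4_practice.values()))
print(average)
```

With only four lessons per year, a single lesson scored one point lower shifts the category average by 0.25, which is worth keeping in mind when reading small differences in Figure 2.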

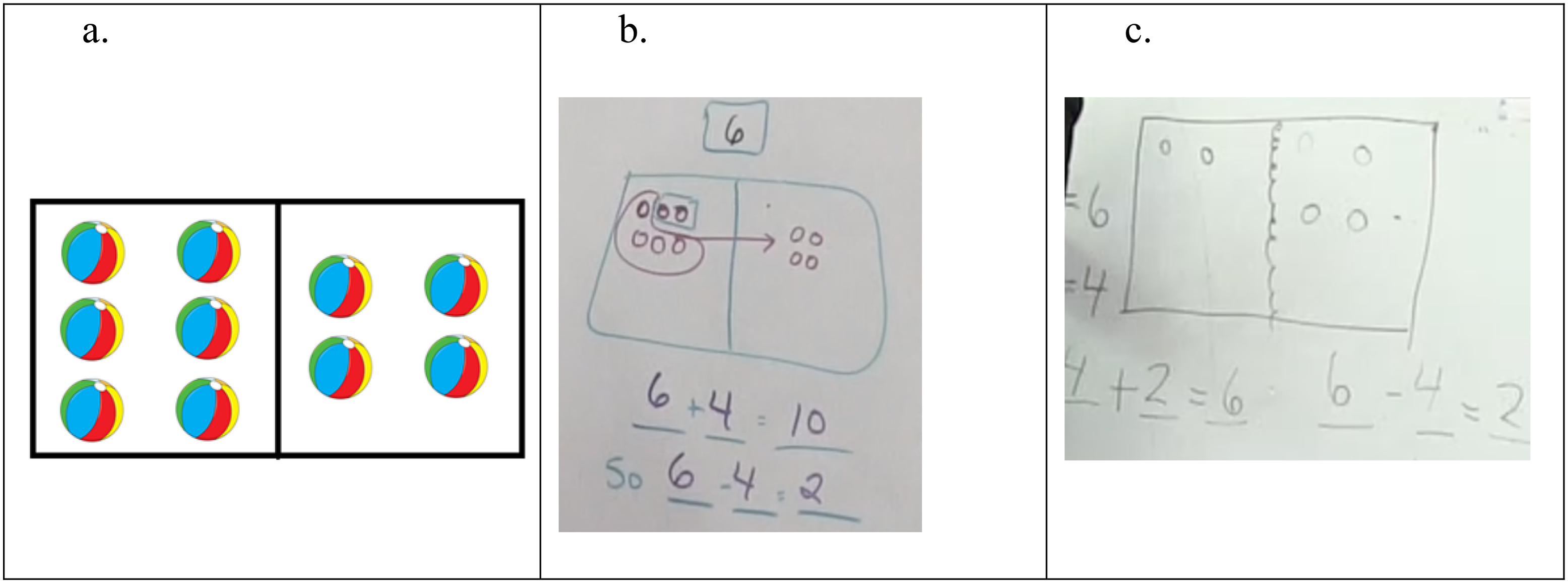

Representations

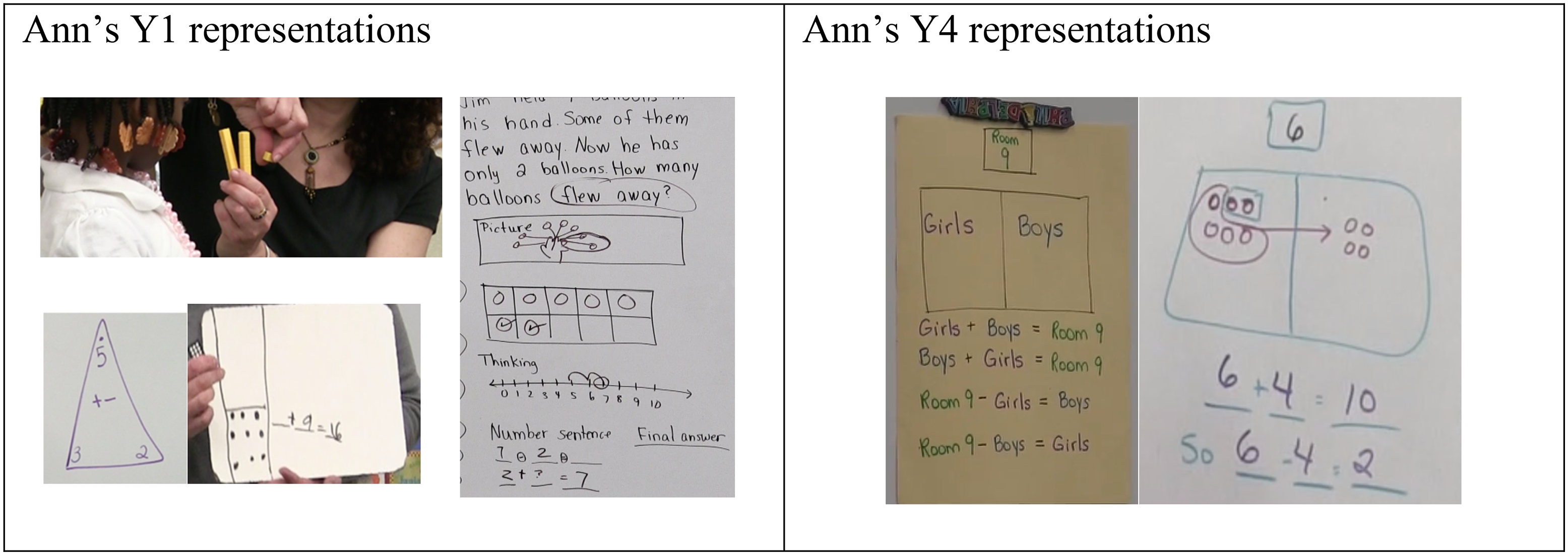

Ann's score for concrete representations was 1.25 for both years. In Y1, one of Ann's lessons situated the worked examples in a concrete context. The rest of her lessons contained only semi-concrete representations such as cubes, fact triangles, dominos, and 10-frames (see Figure 3, left). In Y4, Ann increased the use of concrete contexts in three lessons that relied on textbook presentations (Y4-L2) or interactive videos (Y4-L1 and Y4-L4). There was also an increased use of bar models (see Figure 3, right) in all Y4 lessons, as opposed to only one of the Y1 lessons; however, Ann used concrete contexts mainly as a pretext for computation. The concrete context in Y4-L2 (as described in the coding example in the Method section) was referenced only tangentially after the corresponding bar diagram had been completed, even though there was a situation where the context could have been cited to help students identify and correct errors (see Figure 3, right).

Typical representations in Ann's years 1 and 4 lessons.

Ann's average abstract representation score changed from 1.25 to 1.5, which coincided with her increased use of video examples provided by the textbook. There were four total instances of video usage across Ann's lessons (one from Y1 and three from Y4) and each was projected from a computer with some built-in opportunities for teacher–student interactivity (such as invitations to pause and solicit student responses to questions posed by the video). The videos occasionally connected story problems and their semi-concrete representations with the corresponding abstract numerical expressions.

Ann consistently used questioning techniques that aimed at having students describe computational strategies (scored as 0.5 on average in both years). For instance, Figure 3 (left) shows a worked example of a change-unknown problem from Y1. To solve this story problem, Ann drew a picture, a 10-frame, and a number line on the board and directly provided the number sentences (2 + ? = 7; 7–2 = ?), then guided her students to obtain the answer through counting with questions such as, “I subtract 2 from 7, and the answer is?” This questioning style appeared resistant to change in Y4, as the focus of Ann's interaction with the students, such as during Y4-L2, was also to solicit computational strategies.

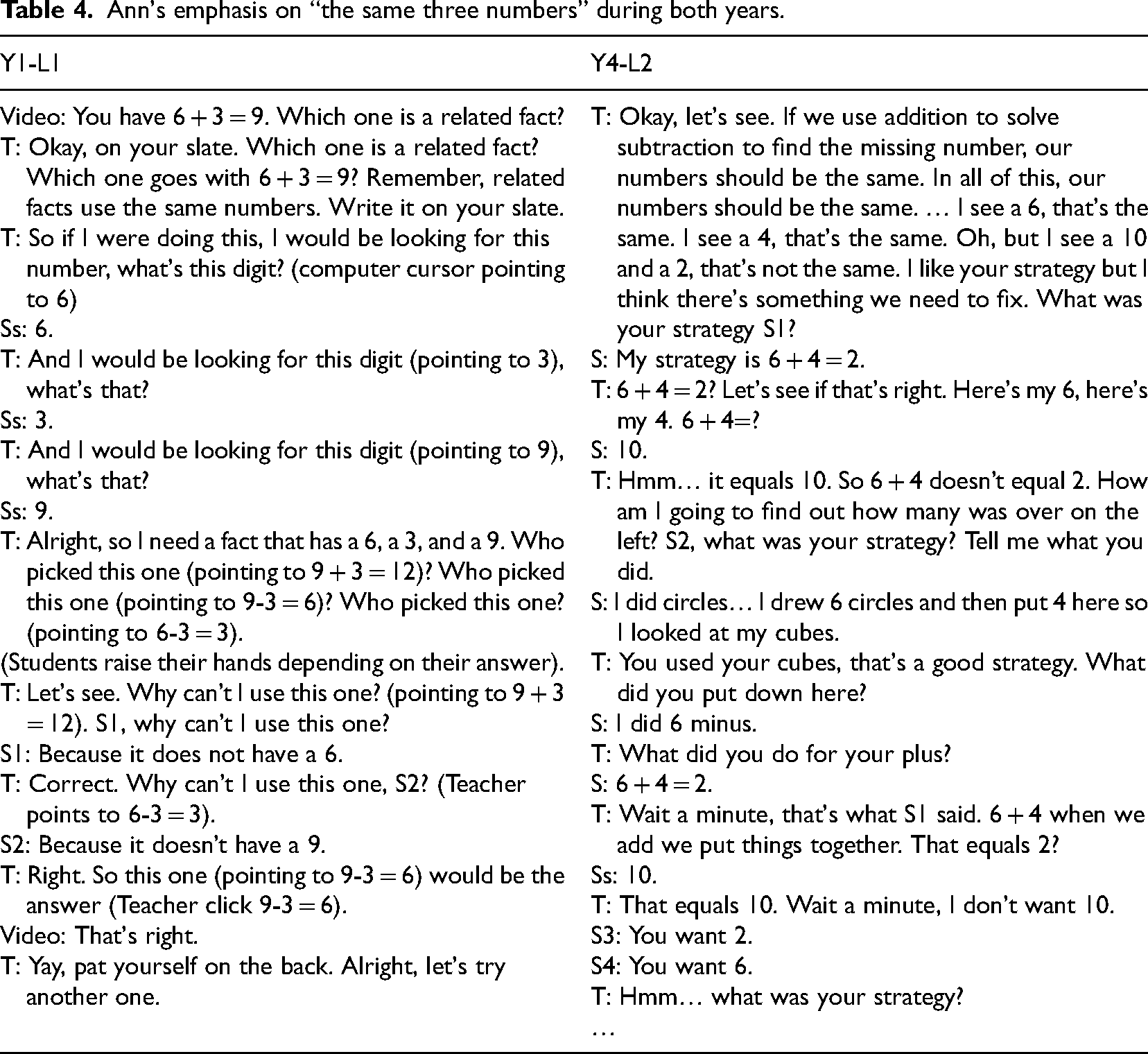

Ann's average explanation score increased from 0 to 1 over the period of observation. Much of this increase was related to her use of the interactive videos in Y4 (L1, L3, and L4) which sometimes provided explanations about conceptual choices (e.g., referring to the part-whole structure). In both years of observation, Ann often prompted students to justify their choice of number sentences by stating that additive inverses contain “the same three numbers.” Table 4 illustrates typical excerpts. Sometimes this explanation worked, as in the Y1-L1 (Table 4, left) excerpt, where Ann prompted students to pick a related fact for 6 + 3 = 9 from three pre-chosen options (9 + 3 = 12, 9–3 = 6, 6–3 = 3). However, as seen in the Y4-L2 excerpt (Table 4, right), this method was insufficient to help students connect a concrete problem situation to abstract number sentences. During the interviews for both lessons, Ann expressed her belief that her students provided deep explanations. However, as demonstrated by the excerpt, her student responses focused mainly on surface features without referring to the part-whole relationship.

Ann's emphasis on “the same three numbers” during both years.


Across both years, we observed specific improvement in Bea's implementation of our cognitive construct in her classroom teaching. Our scoring of Bea's post-intervention lessons was generally higher than her Y1 ratings in the areas of worked examples and representations. Post-instructional interviews indicated that these changes were intentional and that Bea connected them directly to the project intervention.

Worked examples

Bea's scores for worked examples changed from 1 to 2 (see Figure 2). In Y1, Bea tended to use multiple short, worked examples with a relatively uniform length (e.g., her three examples in Y1-L2 took 5.5, 6, and 5.5 min respectively; her three examples in Y1-L4 took 2.5, 2, and 2 min, respectively). In Y4, Bea implemented a different routine of using one primary worked example with a concrete context and increased length as the main body of her lesson (e.g., the primary example in L1 took 17 min; the example in L2 took 14 min). Bea would extend her Y4 primary worked example by rephrasing it in different ways moving from concrete to semi-concrete, and then to abstract. In addition, there was a shift in how well Bea's practice problems aligned with her worked examples, thereby resulting in a score increase from 1.5 to 1.75. In Y1, her practice problems were consistently games with dice or number cards, which requested that the students identify isolated addition or subtraction facts (e.g., 3 + 6 = 9; 8–3 = 5) rather than connect related facts that compose inverse relations. In contrast, the majority of Bea's practice tasks in Y4 demanded related facts (e.g., 3 + 6 = 9; 9–3 = 6).

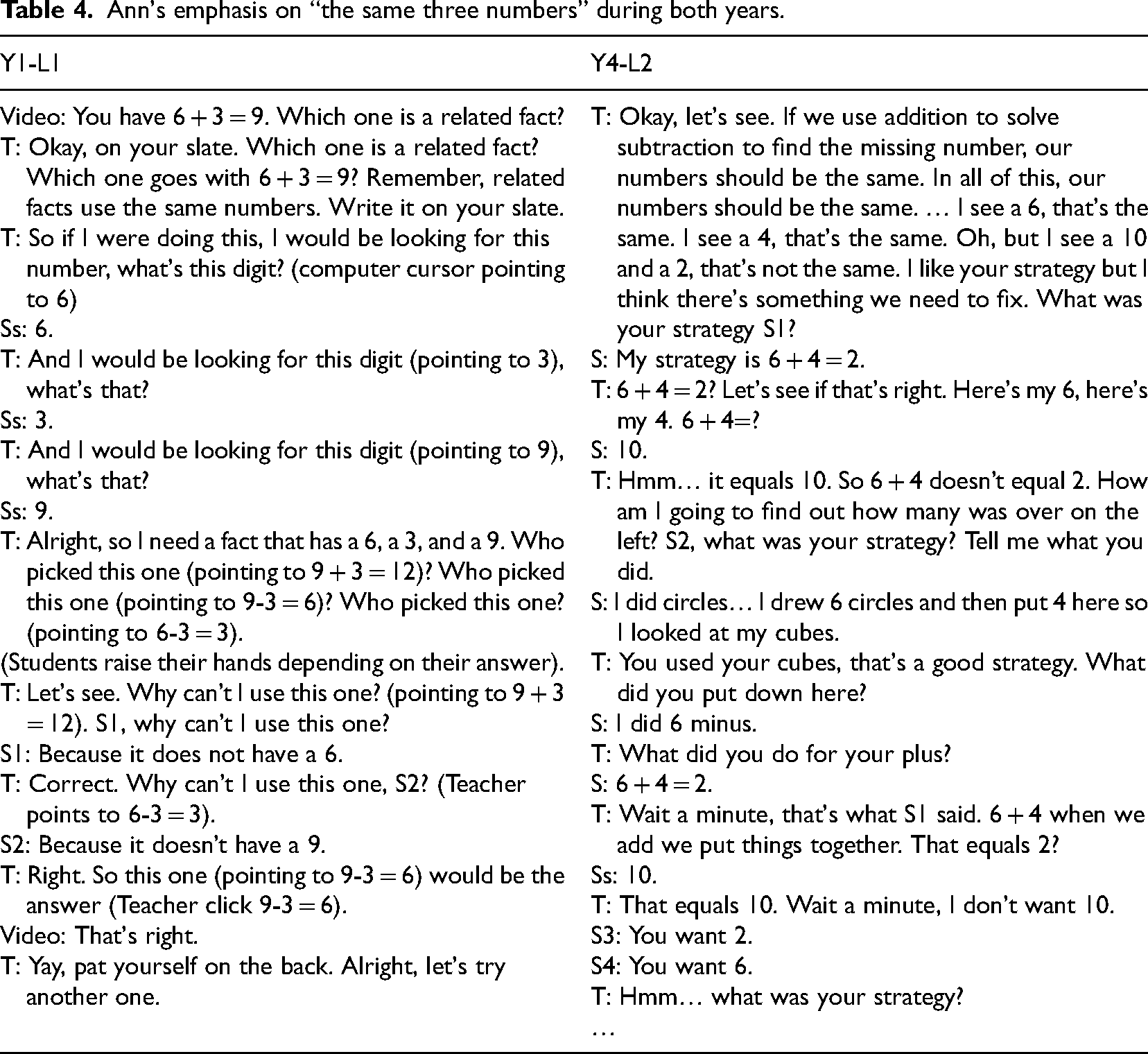

Representations

Bea's concrete representation scores improved from 1 to 2, due to her increased use of concrete contexts when teaching with worked examples. In most of her Y1 lessons, Bea's worked examples involved semi-concrete representations (e.g., cubes, 10-frames, dice, and game boards) without contextual support (see Figure 4, left). By contrast, Bea's Y4 lessons always started with a story context. She introduced Y4-L1 with a familiar situation in which she used magnetic figures to model children playing inside the classroom and outside on the playground (see Figure 4, right). She also modeled four student-generated story scenarios in Y4-L3 to represent the inverse relation concept of a fact family.

Typical representations in Bea's Y1 and Y4 lessons.

In Y1-L4, Bea had students decompose the number 10 into 2 numbers and then generate abstract fact families from each triad (see Figure 4, left). In Y4, however, Bea systematically implemented concreteness fading by shifting from concrete story representations to semi-concrete (e.g., bar diagrams and magnetic manipulatives) which were further faded into abstract number sentences (see Figure 4, right). In her Y4 interviews, she regarded concreteness fading as her “new paradise” for any lesson and elaborated, “That idea of concrete fading really resonated with me and so I really made an effort to modify the lesson that was written in order to start from a real concrete example before we got into an equation or even a part-part-whole model.”

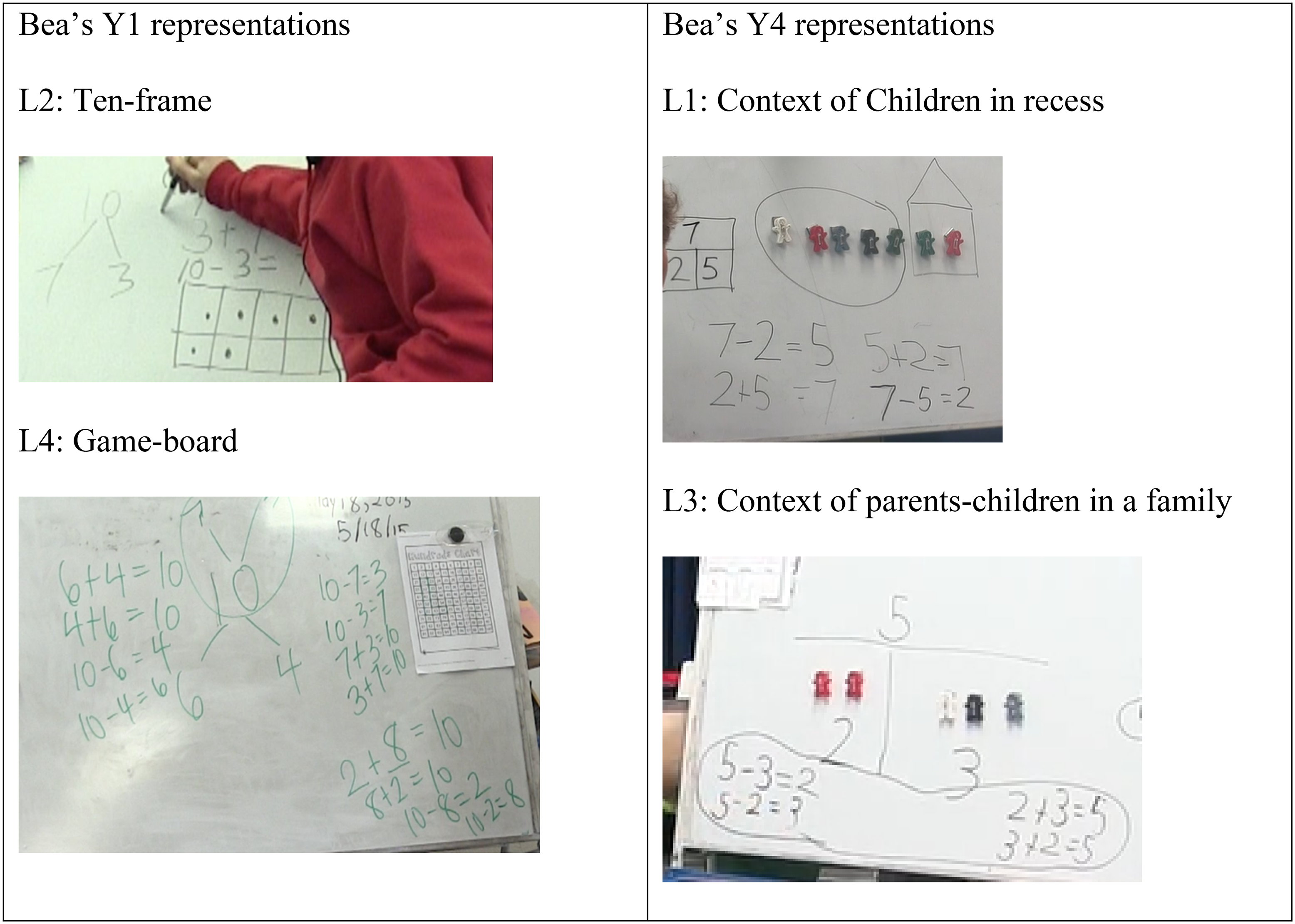

Bea's average scores for questioning and explanations remained unchanged in Y4 in comparison to Y1 (both rated 1). She expressed consistent beliefs about the importance of deep questioning in her interviews, “I use questions that put the lifting on [the students’] end” (Y1-L3) and “I really wanted to ask probing questions pushing children's thinking, like, ‘how did you know?’ questions” (Y4-L1). During the lessons, Bea showed skills at prodding students when they offered correct explanations that lacked detail. For example, in Y4-L4 she asked students to compare related number sentences of 13–6 = ? and 6 + ? = 13, “Why do you say this is the same thing?” followed up with the prompt, “Say more.” Consequently, students in her class provided deep explanations such as “They have the same parts and whole.” This type of explanation emphasized structural relationships and was much deeper than Ann's emphasis on the surface feature of “the same three numbers.”

Although Bea's questioning extended her students’ on-track explanations, she was less successful in asking deep questions that addressed students’ mistakes. Throughout Y1 and Y4, there were instances in which she would react to incorrect responses through a process of funneling (Mason, 2000) during which she would gradually reduce the depth of her questions until she was giving out explanations directly. Figure 5 illustrates two examples. In the Y1-L2 excerpt, Bea corrected a student's stated operation and then indicated which part (2 or 12) should be used. In the Y4-L2 excerpt, Bea pointed out the “conflict” between the problem statement and the current finding and directly informed students about the story situation.

Bea's consistent tendency of giving out answers and explanations across both years.

We now report both teachers’ responses to our project intervention. Since the purpose of the PD was to promote teaching changes aligned with our cognitive construct, the teacher responses may indicate the existence of factors that underpin the observed teaching changes (or lack thereof) post-intervention. As mentioned in the Method section, our year 3 intervention included both an online video forum and three-day onsite workshops.

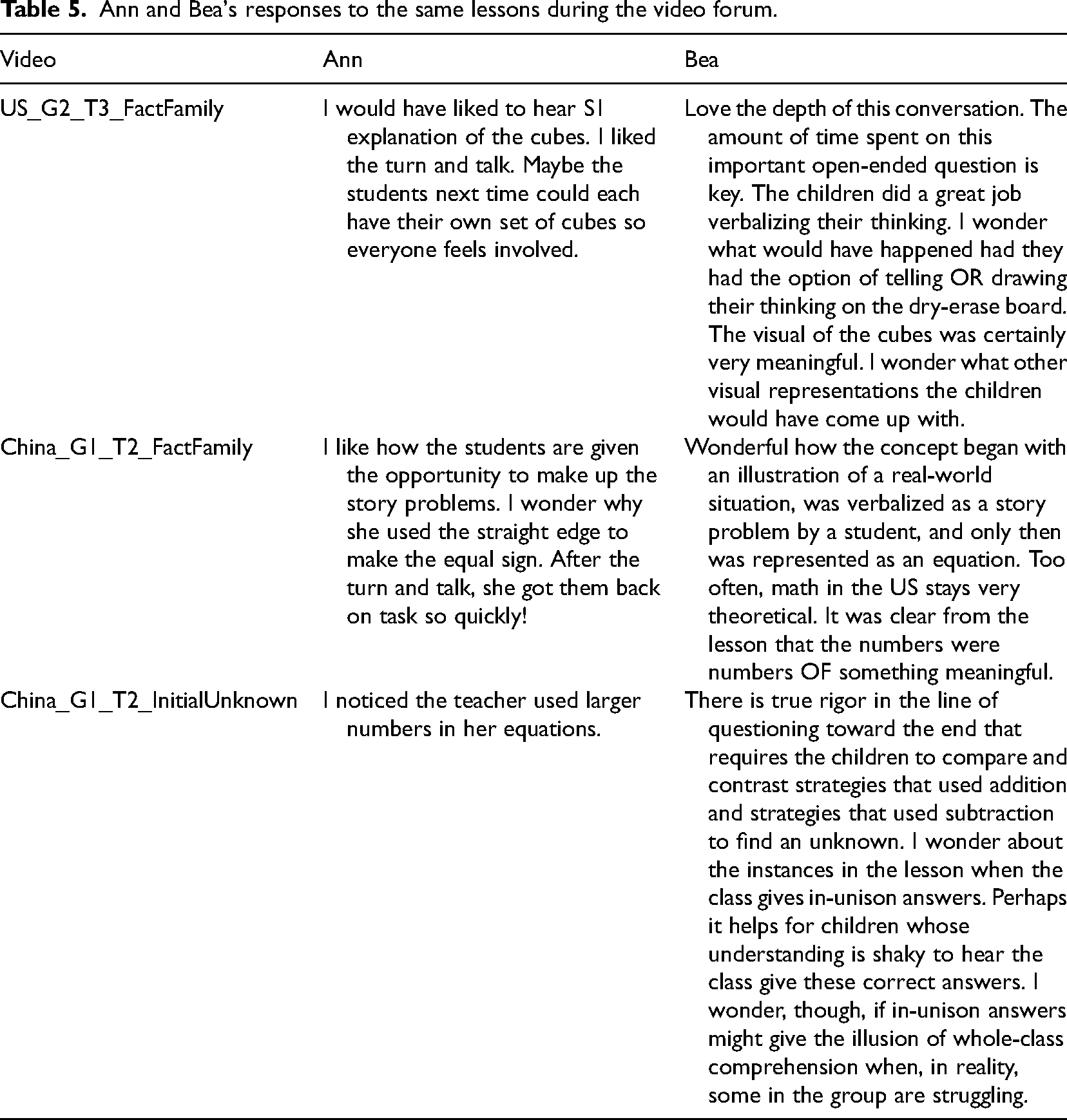

Teacher responses to online videos

Overall, there is a difference in “what” teachers noticed and “how” they reasoned about their noticing from the cross-cultural videos. Table 5 lists both teachers’ comments on the same three cross-cultural video clips.

Ann and Bea's responses to the same lessons during the video forum.


In Table 5, Ann's video comments (left) mainly described communication styles, classroom environment, and the physical appearance of mathematics tools, which indicated a low level of video noticing skill (van Es, 2011). For instance, Ann expressed in a few places that she liked the communication style “turn and talk” utilized in both U.S. and Chinese classrooms. With the U.S. video (“US_G2_T3_FactFamily”), Ann stated, “I liked the turn and talk.” With the Chinese video (“China_G1_T2_FactFamily”), she reiterated, “After the turn and talk, she got them back on task so quickly!”. Ann also noticed that Chinese teachers used a “straight edge” to draw equal signs on the chalkboard and broadly referenced her U.S. peers using manipulatives as relevant uses of mathematical tools. Moreover, Ann mentioned that she liked how the students were given the opportunity to make up the story problems, but she did not reason further about the specific benefits of the practice. Overall, the above comments across these videos indicate that Ann's video noticing was largely devoted to the surface features of the lessons.

In contrast, Bea's video comments (Table 5, right) focused on teachers’ questioning and the use of representations, which were essential aspects of our cognitive construct (Pashler et al., 2007). For instance, in the same Chinese lesson about fact families (“China_G1_T2_FactFamily”), Bea was impressed with how Chinese lessons situated math concepts in real-world contexts: Wonderful how the concept began with an illustration of a real-world situation, was verbalized as a story problem by a student, and only then was represented as an equation. Too often, math in the U.S. stays very theoretical. It was clear from the lesson that the numbers were numbers OF something meaningful.

While the online forum was designed to facilitate teachers’ learning, our follow-up onsite workshop was meant to directly deliver the targeted project intervention to teachers. Ann's written comments on the workshop's individual videos were not available; however, her overall written responses were collected. Her typical notes included, “China children wore coats, lessons were similar” and “Chinese children were respectful.” These comments tended to describe surface features of teaching and were broad in nature. In her responses to our survey, she stated that she enjoyed discussing the Chinese way of schooling and learning about other cultures. She also stated that she would implement what she learned as follows: During my math lessons, I am going to try and question my students more, but at the same time, try not to spend too much time on one problem. I already have them turn and talk, but maybe they can explain to others as well and have two groups join to discuss solutions or thoughts.

In contrast to Ann's responses, Bea's onsite reactions to the chosen videos showed she was paying attention to essential teaching elements and students’ mathematical thinking. For instance, Bea expressed great interest in Chinese teachers’ use of representations, that is, starting a lesson with illustrations of “a real-world situation,” followed by “concreteness fading.” In her post-intervention survey, she shared that she had been implementing the concreteness fading approach in her lessons:

“Our workshop in August truly lit a fire for me. I am particularly inspired by the idea of concreteness fading. I have endeavored to work this concept into every math lesson that I've taught this year. Embarrassingly, it never occurred to me before August how highly conceptual a notion an equation actually is. I am making sure to build with my students considerable real-world meaning about math concepts before I introduce how these math relationships can be communicated in equation form.”

Discussion

International assessments suggest a need to improve U.S. students’ mathematics learning (Cai, 2004; PISA, 2016; TIMSS, 2016; Li et al., 2008). Given that teaching is a critical factor responsible for learning, this study is a response to a recent call for the application of a science of improvement to classroom teaching (Bryk, 2009; Cai et al., 2019; Lewis, 2015). We carefully analyzed two teachers’ videotaped lessons before and after a project intervention that was based on cross-cultural videos and aligned with cognitive research assertions. Such a fine-grained analysis of teacher learning is different from previous large-scale, quantitative reports on how an intervention might have impacted teaching and learning at a macro level (Blanton et al., 2015; Carpenter et al., 1989; Clements et al., 2011; Jitendra et al., 2013). In our study, we do not intend to make causal claims. Instead, we have taken an individual account of teaching behaviors after an intervention, observed which teaching aspects changed, and analyzed how those changes were applied. It is our hope that our detailed findings comparing these two teachers can contribute to building a knowledge base about elementary mathematics teaching (Cai et al., 2019). Below we first discuss the successes and challenges in promoting our cognitive construct via this intervention, followed by discussions of possible factors that may have supported or hindered this PD effort.

Successes and challenges in implementing the cognitive construct

In this study, we predicted that teachers could learn and implement the targeted cognitive construct (Pashler et al., 2007) to support students’ mathematics learning. Inspired by the literature on the worked example effect (Gog et al., 2011; Renkl et al., 2004; Sweller & Cooper, 1985), we expected that post-intervention the teachers would spend sufficient time unpacking a single worked example to develop students’ mental schemas. As acknowledged, the length of an example is not equivalent to its quality. However, multiple, short, worked examples do not allow students to unpack the conceptual elements of problems and thus do not produce the desired worked example effect. In Y4 of this study, both teachers used fewer examples with greater amounts of class time spent on at least one example in each lesson. However, this choice was made intentionally by Bea and reluctantly by Ann. The difference in the teachers’ use of worked examples may reflect their different beliefs about teaching (elaborated upon in the next section). However, it may also relate to their different uses of representations and deep questions—the other two aspects stressed by our intervention.

In this study, our intervention encouraged teachers to make use of real-world contexts and the concreteness fading approach (Fyfe et al., 2015; McNeil & Fyfe, 2012). Ann and Bea increased the use of concrete situations post-intervention; however, only Bea routinely did so with intention. Ann's concrete situations, in contrast, often remained pretexts for computational discussions (Pashler et al., 2007). Therefore, we noted that an increase in the application of concrete situations was incomplete without genuinely fading the concrete into abstract to unpack these problems. Bea demonstrated great interest in the concreteness fading approach and intentionally integrated the technique in her post-lessons, while Ann did not.

We also instructed teachers to ask deep questions, especially those targeting conceptual meanings and facilitating comparisons (Ding, 2021), which promote students’ inference making (Morris et al., 2020) and help elicit deep explanations (Chi, 2000; Craig et al., 2006; Pashler et al., 2007). Nevertheless, both teachers retained their initial questioning styles with few alterations. Even though Bea asked open-ended questions in both years, her habit of funneling questions (Mason, 2000) seemed to be an ingrained reaction that remained unchanged. This is similar to the findings of Franke et al. (2009) in which the CGI teachers demonstrated the ability to ask initial deep questions but not a probing sequence of specific questions. Similarly, Ann's satisfaction with student computational strategies and her own procedural responses persisted over both years of observation. Note that our concerns about procedural responses are not meant to imply that the learning of procedures lacks value. Both procedural and conceptual knowledge are critical components of mathematics learning (NRC, 2001). However, we observed (to varying degrees) that both teachers had difficulties incorporating conceptual elements into their lessons, likely due to their unimproved questioning skills.

Factors that may affect teaching improvement: Linking to intervention

To promote instructional changes, we provided teachers with a PD intervention that was centered on cross-cultural videos. As reported in the Methods section, the selected video clips together provided opportunities to discuss the extent to which the targeted cognitive constructs were used in U.S. and Chinese classrooms. According to the literature, cross-cultural videos are powerful tools in supporting teacher learning and noticing skills (Ding, Li et al., 2023b). This is because culturally contrasting instructional features may draw teachers’ attention to targeted aspects and facilitate teachers’ deep reflections on their own culturally-based teaching habits (Jacobs & Morita, 2002; Moran et al., 2017). However, in our study, the two teachers we observed had exceedingly different takeaways from the same cross-cultural video intervention. While Ann's video noticing mainly focused on the surface teaching features that were not stressed by our project intervention, Bea noticed the teaching elements that aligned with our targeted cognitive construct (Pashler et al., 2007). In addition, while Ann tended to describe what she noticed, Bea applied knowledge-based reasoning to her observations (van Es, 2011). These differences in the “what” and “how” of teacher noticing during cross-cultural videos provide a possible reason why the teachers’ classroom implementations of the cognitive construct showed differing levels of success.

What the teachers noticed during the videos may be tied to their own knowledge and beliefs about mathematics teaching (Clarke & Hollingsworth, 2002; van Es & Sherin, 2008). For instance, in her Y1 interviews, Ann expressed her interest in “turn and talk” and frequently mentioned this in her video comments during our project intervention. In addition, Ann's preference for using multiple worked examples also remained unchanged, which seems consistent with a common belief held by many U.S. teachers that “the more examples, the better” (Ding & Carlson, 2013). In Bea's case, her adoption of concreteness fading also seems related to her belief that “math in the US stays very theoretical” (see her video comment in Table 5). In her interviews, she attributed her purposeful teaching changes to our project intervention. Thus, it seems that when teachers’ initial beliefs are aligned with the proposed intervention, there is a greater likelihood that they will try to implement the intended approach.

Nevertheless, we also observed a gap between what the teachers believed and what they actually performed. For instance, Ann and Bea articulated in their Y4 interviews that they had tried to incorporate deep questioning and increased student explanations. Yet, our observations indicate that neither teacher implemented a significantly different question/explanation strategy than she had in Y1. Perhaps this was due to a lack of deep knowledge about the targeted mathematical concepts, or a satisfaction with their previous questioning methods that reduced their motivation for change. These observations call for our reflections on how PD interventions can be better delivered to teachers while considering hidden factors such as teachers’ prior knowledge and beliefs. In addition, Bea borrowed a class to teach in year 4. It is unclear how her unfamiliarity with the students may have affected her teaching moves. Furthermore, the literature indicates that effective video-based intervention also takes time (Amador et al., 2021).

Finally, we found that textbook materials seemed to be an influential factor in teaching changes. This is especially true with Ann, who stated that she felt constrained by the textbook in terms of the number of worked examples it provided. Ann also used more interactive videos provided by the textbook publisher in her Y4 lessons, which offered deeper explanations than those she had provided on her own in either year of observation. Unfortunately, our interview data could not reveal her curriculum beliefs, such as whether she felt obligated to teach the given materials in particular ways. In addition, we found that when semi-concrete diagrams were emphasized by both the textbooks and our project intervention, both teachers increased the use of bar models in their year 4 lessons. Even though differentiating between the impact of our project's intervention and the textbook's influence on observed teaching changes is hard, it is safe to argue that if textbook resources are designed to incorporate cognitive research assertions, then interventions like ours will likely be reinforced and implemented with greater success in classrooms.

Implications and future studies

Our findings from this study have broader implications and suggest future directions for inquiry. While prior studies have often reported overall successes of video-based PD intervention for teacher learning (e.g., Santagata & Guarino, 2011) and interventions that enhanced students’ mathematical learning (e.g., Blanton et al., 2015; Jitendra et al., 2013), our study demonstrates the possibility of variations existing among individual teachers. In particular, we found that teachers’ noticing skills (van Es, 2011; van Es et al., 2020) could be quite different, even when applied to the same set of videos. Moreover, the aspects of the PD that the teachers attended to seem to be associated with whether they made pedagogical changes post-intervention. Establishing causal relationships between teachers’ video-based learning and their teaching changes would require rigorous experimental design beyond the scope of this study. However, our findings contribute to a science of improvement (Bryk, 2009; Cai et al., 2019; Lewis, 2015), which encourages follow-up analysis of teachers’ lessons after receiving interventions. Future studies may continue this line of research to document and analyze more cases so that the field of mathematics education might mirror the advances made by medical and health professionals (Bryk, 2009; Cai et al., 2019; Lewis, 2015).

This study echoes the literature (Kleinknecht & Schneider, 2013; Moran et al., 2017; Richland et al., 2007) suggesting that cross-cultural videos have the potential to support teachers as they learn to notice. By “cross-cultural,” we refer to a set of U.S. and Chinese videos with closely matched topics, enabling direct comparisons. Nevertheless, it is important to note that there are many factors (e.g., students’ prior knowledge, teachers’ own mathematical knowledge, teachers’ knowledge and beliefs about curriculum and pedagogy) that may affect what the teachers notice from the PD materials and, in turn, may affect their subsequent classroom implementation of our constructs. Future studies may consider ways to measure these constructs more objectively and explore in fine-grained ways how other factors may interact with the given PD intervention to support teachers’ learning based on cross-cultural videos. Moreover, successful teaching approaches in other countries may not necessarily translate directly across cultures. Future studies may document more cases with different teacher groups when teaching different topics in this regard.

Finally, with our targeted cognitive construct, we found that teachers’ questioning skills are relatively hard to change when compared to the other elements of our construct. Asking deep questions and responding to improvisational situations demands alterations to a teacher's structural knowledge that are difficult to plan for (Ding, 2021; Franke et al., 2007; Morris et al., 2020). However, we acknowledge that we did not administer a formal assessment of how teachers attended to instances of deep questioning after they received the PD intervention, or of whether that attention was linked to their choices for their follow-up lessons. Future research and PD efforts may give particular focus to teachers’ understanding of deep questions and anticipated deep explanations. Carefully designed assessments across different stages of PD might be used as an outlet to improve classroom questioning techniques. Curricular materials (e.g., textbooks, interactive videos) should also play a supportive role in enhancing teachers’ deep questioning and explanations.

Limitations and conclusion

This study reports the instructional changes of two teachers after receiving our project intervention. The findings reported do not provide a complete picture of the entire study, nor are they generalizable to all teachers. However, we argue that generalization is not our study's purpose. Given that the two selected teachers had the same initial video scores and both taught the same concepts across both years of observation, their cases provide a compelling picture about the forms of teacher noticing that can affect whether an intervention is implemented successfully. Our next step is to analyze all the videos that have been collected from both years of the project intervention to obtain a more complete picture that might enrich the findings reported in the current study. Based on future “studies” of additional cases, we can further the PDSA cycle, refining and implementing the intervention for our cognitive construct. With continuous effort, we hope to contribute to the science of improvement (Cai et al., 2019), enhancing the quality of mathematics teaching and, consequently, students’ mathematical learning in the United States and abroad.

Footnotes

Acknowledgements

This work is supported by the National Science Foundation CAREER award (No. DRL-1350068) at Temple University. Any opinions, findings, and conclusions in this study are those of the authors and do not necessarily reflect the views of the funding agency.

Contributorship