Abstract

This study examined the alignment and predictive power of instructional practices as reported by teachers, students, and external raters by using the Shanghai data that included 85 teachers and 2,613 students who participated in the Global Teaching Insights study. Results from exploratory and confirmatory factor analysis along with ordinary least squares regression indicate that the same four conceptual components, namely classroom discourse (e.g., allowing students to explain their ideas and engage in peer discussions), meaning-making (e.g., explaining why a mathematical procedure works), cognitive activation (e.g., encouraging students’ critical thinking in solving complex tasks), and clarity instruction (e.g., teachers’ giving clear explanations of subject matter), were identified in the instructional practices reported by teachers and their students. The cognitive activation factor in the data reported by teachers emerged as the most significant predictor of students’ post-test scores, whereas the classroom discourse factor in the data reported by students accounted for the largest portion of variance in students’ post-test scores. Furthermore, our analysis revealed that the alignment between ratings reported by students and external raters was the highest, and student ratings of their mathematics teachers’ instructional practices demonstrated the highest predictive power for students’ post-test scores. Results of this study provide important empirical evidence for the merit of cognitive activation and classroom discourse in mathematics teaching and inspire researchers, practitioners, and policy-makers to pay careful attention to student-reported instructional practices, which can serve as a better source of data in measuring mathematics teaching quality.

Introduction

In recent decades, teaching quality has been recognized as the crucial driving force for shaping student achievement, and identifying the elements of effective instructional practices has thus become an important task for researchers, especially in the field of mathematics education (Barber & Mourshed, 2007; Bokhove, 2022; Cheng, 2014; Stigler & Hiebert, 2009). Referring to the overall effectiveness of teaching as often measured by improvement in student academic achievement (Hill et al., 2012; Little et al., 2009), teaching quality is a multifaceted theoretical construct that encompasses various aspects of instructional practices, including specific strategies, techniques, and methods that teachers utilize to facilitate effective teaching and learning experiences in the classroom (Bell et al., 2020; Praetorius et al., 2017, 2018; Van der Lans, 2018). Previous studies have commonly relied on teachers’ self-reported instructional practices, student surveys, in-classroom observations, or lesson recordings to evaluate the quality of mathematics teachers’ instructional practices. Although useful insight into what constitutes teaching quality, either within a specific education system or internationally, has emerged, the inconsistent level of agreement among the different types of data remains a critical issue, which leads to confusion or uncertainty regarding the merit of each type of data used in evaluating teaching quality (e.g., Begrich et al., 2020; Desimone et al., 2010; Fauth et al., 2014, 2020; Kaufman et al., 2016; Wagner et al., 2016; Wisniewski et al., 2020).

The discrepancy in the findings of the existing literature regarding the alignment of different types of data warrants further exploration of this issue. More importantly, it suggests that multiple sources of evaluation need to be considered when assessing instructional quality, as each source provides valuable but potentially different perspectives on instructional practices. Additionally, achieving higher correlations among the measures provided by teachers’ self-report, student ratings, and observer ratings would provide strong evidence of construct validity for teaching quality that can be used to inform classroom instruction and teaching evaluation (Kunter & Baumert, 2006; Wagner et al., 2016). However, to date, few studies have compared all three types of data within one study setting. Therefore, the objective of this study was to address this gap by examining the alignment among the three types of data and their respective predictive power for student mathematics achievement, which serves as a critical indicator of the quality of mathematics teaching (Hill et al., 2012; Little et al., 2009).

Literature review

Instructional practices and student mathematics achievement

Although student mathematics achievement can be influenced by various contextual factors at the school, classroom, and student levels, research has highlighted the pivotal role of teaching quality in contributing to student learning (Hill et al., 2012; Little et al., 2009; Stigler & Hiebert, 2009). Research on teaching quality has largely followed the teacher effectiveness tradition that focused on two important components, that is, the process (teachers’ actual teaching in the classroom) and the product (student learning outcomes that teachers eventually foster) (Brophy, 2000; Fenstermacher & Richardson, 2005). Therefore, teachers’ instructional practices in the classroom and student learning outcomes are the two essential elements in assessing teaching quality. Accordingly, selecting valid and reliable approaches to measure teaching quality and to identify the effective instructional practices that constitute quality teaching becomes important.

The common approach to evaluate teaching quality has been to utilize ratings obtained from external observers, while collecting reported data by teachers and students from questionnaires has also been used (Goe et al., 2008; Little et al., 2009). Although other student learning outcomes such as engagement and motivation have been used in measuring teaching quality, standardized student achievement test scores were more often selected due to their affordance for comparisons across different studies (Goe et al., 2008; Grossman et al., 2014; Rieser et al., 2013) and our current study adopts this approach. In the following, we will review the merits of the three types of rating data, the alignment among them, and their predictive power for student mathematics achievement on standardized tests.

Teachers’ perceived and self-reported instructional practices

Although self-reported data have been widely used in a large number of studies, including those in the field of mathematics education, due to their relatively low cost, ease of administration, and advantage of involving a large number of teachers to produce findings of external generalizability (Newfield, 1980; Schneider et al., 2007), debate is still going on with regard to the accuracy of such data related to teachers’ self-reported classroom instructional practices. Proponents argue that surveys about teachers’ instructional practices are reliable and can be used (e.g., Mayer, 1999; McCaffrey et al., 2001; Porter et al., 1993; Ross et al., 2003), and in particular, that high reliability and accuracy can be achieved when certain conditions are met, which include that surveys are conducted anonymously (Aquilino, 1994, 1998), and that respondents are asked to provide demographic information, self-efficacy, and interest (Chan, 2009; Stone et al., 2000) or to report on unconnected happenings that occurred in recent and unique scenarios (Bradburn, 2000; Tourangeau et al., 2000). Additionally, high reliability and accuracy can also be obtained when teachers are asked to describe their teaching behaviors instead of judging the quality of their teaching (Mullens & Gayler, 1999), and when researchers use composite variables instead of a single variable from teachers’ self-reported data in subsequent data analysis (Mayer, 1999). Teachers’ perceived and self-reported instructional practices appear to align with what is obtained from external observations and interviews (Kaufman et al., 2016; Mayer, 1999; Ross et al., 2003), especially when teachers are prompted to report on the instructional practices they use for a single class or within a limited time period (Newfield, 1980; Porter et al., 1993).

However, using teachers’ self-reports to measure instructional quality can be challenging as some researchers found self-reported data to have low reliability and less accuracy when respondents are asked to report on their own experiences, attitudes, attributes, or behaviors that are either socially desired or frowned upon (Bradburn, 2000; Devaux & Sassi, 2016; Little et al., 2009; Tourangeau et al., 2000; Van de Vijver & He, 2014). Still, other researchers found that teachers’ self-reported data is unreliable when compared side by side with in-classroom observations in portraying teachers’ instructional practices (Bodzin & Beerer, 2003; Brophy & Good, 1986; Koziol & Burns, 1986) and specifically when this type of data is used to infer the quality of instructional practices (Kaufman et al., 2016; Mayer, 1999). This can be due to various factors, such as a desire to present themselves in a positive light, fear of negative consequences for admitting weaknesses or shortcomings, or a belief that their responses will be used to evaluate their performance or effectiveness (Reddy et al., 2015). Because of these factors, teachers may not always provide accurate or complete information about their instructional practices or the quality of their teaching. As a result, self-reports may not always provide a reliable or valid measure of instructional quality.

Despite the debate related to the strengths and weaknesses of self-reported data, teachers’ self-report on their instructional practices is still commonly used in the field of educational studies. Previous studies using teachers’ self-reported data have provided valuable information about the potential factors influencing teaching and learning practices, though they are limited in their ability to inform researchers with regard to the actual teaching and learning that take place in the classroom in order to improve such practices (Grossman et al., 2014; Hill et al., 2011; Pianta & Hamre, 2009). The alternative approaches, such as on-site observations or ratings based upon lesson recordings, tend to be rather time-consuming and costly, and also suffer from threats that may reduce the overall reliability and accuracy of the data collected (Praetorius et al., 2012; Reddy et al., 2019). Another popular approach is to involve the students who actually are part of the teaching and learning process in the reporting of instructional practices. In the next section, we will review relevant studies that examined the trustworthiness of this type of data.

Student rated instructional practices

Similar to teachers’ perceived and self-reported data, students’ reported instructional practices have both merits and limitations. In terms of the merit of this type of data, previous research provided compelling evidence supporting the reliability and validity of student ratings of teaching quality (Fauth et al., 2014; Praetorius et al., 2012; Wagner et al., 2016). In recent years, researchers placed greater emphasis on investigating the degree of stability of student ratings across different school years, classes, or subjects, and on critical reflections on the merit of student ratings. For instance, Gaertner and Brunner (2018) found high stability in student-rated instructional practices, unaffected by factors such as elapsed time between ratings, subject taught, or grade level. Similarly, Fauth et al. (2020) found relatively stable student ratings of teaching quality when students from the same class rated the same teacher within two consecutive school years and when the same students rated different teachers. However, Fauth and colleagues (2020) also noted rather low stability in ratings across different classes taught by the same teachers. Reflecting on the value of student ratings, Lauermann and ten Hagen (2021) argued that students, being the authentic participants in the teaching and learning process and natural recipients of teachers’ knowledge transmission, possess a unique perspective in the classroom to observe their teacher's instructional practices on a daily basis. This proximity allows them to critically reflect on and evaluate their teachers’ teaching performance, as their primary purpose is not mere observation but active engagement in the learning. The reliability of student-rated instructional practices is improved since such ratings are aggregated across many lessons in which the students spent a significant amount of time (Gaertner & Brunner, 2018; Lauermann & ten Hagen, 2021). Therefore, instructional practices reported by students have good merit, and this type of data has been collected in teaching evaluations and educational studies.

However, there exist limitations that can lead to bias in student-rated instructional practices. Some researchers expressed their concerns about the reliability of this type of data by citing students’ young age (Fauth et al., 2019) and untrained nature as raters compared with professional raters who might be well equipped with the necessary skills and expertise to use the evaluation instrument proficiently after going through a rigorous training process, since the evaluation instrument contains terms or dimensions of constructs that are beyond the comprehension of young students (Fauth et al., 2020). Accordingly, a potential disagreement might arise between the data obtained from students who experience their teachers’ teaching and those from professionally trained raters, even though previous research has provided some evidence for the reliability and validity of ratings from students and external observers (Fauth et al., 2014, 2020; Begrich et al., 2020). Nevertheless, researchers in a recent study (Tsai et al., 2022) found that student-reported teaching effectiveness is in alignment with the theory-based structure of the survey completed by students, which helps clear up the concerns expressed by Fauth et al. (2019, 2020) and thus supports the view that young students at the elementary and secondary level have the capacity to discern various dimensions of teaching. In the next section, we will review relevant studies that examined the trustworthiness of another commonly used approach to assessing instructional quality, that is, observer ratings.

Observer rated instructional practices

There is ongoing debate about the extent to which observer ratings can be used to judge teachers’ instructional quality. Some researchers argue that observer ratings should be used as one piece of evidence in a broader evaluation process (e.g., Lei et al., 2018; Van der Lans, 2018), while others question the validity and fairness of using such observer ratings (e.g., Praetorius et al., 2014; Weston et al., 2021). On the one hand, some prior studies have found that observer ratings of instructional quality are consistent across different observers and occasions, and that they correlate with other measures of instructional quality, such as student ratings and student learning outcomes (Hill et al., 2012; Van der Lans, 2018). Additionally, Reddy et al. (2019) found that when observers use well-validated standardized observation tools and rubrics to assess instructional quality, the resulting ratings are generally reliable and valid. This type of rating provides a comparatively objective measure of teaching effectiveness that is not based solely on student ratings or teacher self-reports, which can be useful in providing a more complete picture of instructional quality. Moreover, observer-rated instructional quality based upon standardized instruments or rubrics allows for comparison across different classrooms and teachers, which can be useful in identifying areas of strength and weakness, making decisions about resource allocation, and supporting accountability for teaching effectiveness (Lei et al., 2018).

However, as argued by some researchers, the weaknesses of this type of rating are also obvious. First, observer ratings are inherently subjective, as observers may bring their own biases, beliefs, and interpretations of teaching behaviors to the rating process, causing potential variability in ratings and negatively impacting the reliability and validity of such ratings (Praetorius et al., 2014). Because of this, observers need to be rigorously trained on the observation tool or rubric, and then subsequently calibrated to ensure that they are applying the tool or rubric consistently from the beginning to the end (Briggs & Alzen, 2019). Additionally, observers are typically only able to observe a limited portion of a teacher's practice and may not have a complete understanding of the context in which teaching is occurring; such limited scope may also negatively impact the validity and reliability of the ratings (Weston et al., 2021). Furthermore, observer ratings can be resource-intensive, requiring trained observers, observation time, and rating instrument development and validation, which can severely limit the feasibility of using observer ratings in large-scale evaluation systems (Hill et al., 2012; Reddy et al., 2019).

Despite these limitations, observer-rated instructional quality has remained a valuable tool. Its validity and reliability largely depend on those of the observation tool or rubric (Reddy et al., 2019), on whether observers are rigorously trained, and on whether rating calibration is considered (Briggs & Alzen, 2019). Since data collected from observer ratings can provide researchers with rich information about the actual teaching and learning process (Grossman et al., 2014; Hill et al., 2011; Pianta & Hamre, 2009), particularly when video recordings of teachers’ lessons are obtained and then analyzed by trained raters, this approach has gained more popularity in recent decades with the affordance of modern video technology, as evidenced by the Video Study of Trends in International Mathematics and Science Study (TIMSS) in the 1990s (Stigler & Hiebert, 2009) and then the Organization for Economic Co-Operation and Development (OECD)'s large-scale Global Teaching Insights (GTI) study in 2018 (OECD, 2020a). Having reviewed the merits and limitations of the three types of data collected from teachers, students, and observers, we will examine the correlations among them and their predictive power in relation to student achievement.

Alignment of three types of ratings and their predictive power

Studies on the alignment between ratings reported by teachers, students, and external observers have provided some good evidence of their associations but the results are quite divergent. First, while there is no consistent pattern across studies, the majority of research suggests that teachers tended to rate their own teaching practices higher than external observers did (e.g., Debnam et al., 2015; Hansen et al., 2014; Kaufman et al., 2016), as teachers have a vested interest in presenting their teaching in the best possible light, while external observers are more objective and have no personal investment in the outcome. Additionally, external observers may have a broader perspective on teaching and may focus on different aspects of instructional quality than teachers themselves.

Regarding the alignment between the ratings reported by teachers and their students, discrepancies also exist and only a low to moderate correlation was found in the studies that were conducted in the past two decades (Desimone et al., 2010; Kunter & Baumert, 2006; Wagner et al., 2016; Wisniewski et al., 2020). Similarly, regarding the alignment between the ratings by students and external observers, some earlier studies found that correlations between these two types of ratings typically fall within the 0–0.50 range (Fauth et al., 2014; Begrich et al., 2020). However, Fauth et al. (2020) found that the correlations between teaching quality as rated by students and by external observers varied considerably, from 0.39 to 0.85, for the various dimensions of teaching quality between classes, with student motivation as a significant predictor of such variations in teaching quality as rated by external observers and students. As argued by some researchers (Kunter & Baumert, 2006; Wagner et al., 2016), attaining higher correlations among the three types of ratings from teachers, students, and observers would provide robust evidence for the construct validity of teaching quality, which can subsequently inform classroom instruction and teaching evaluation. However, the low to moderate correlations observed among the three types of ratings, coupled with the discrepancies in results identified in previous studies, suggest that more research is needed to examine the causes of such discrepancies.
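To make concrete what a correlation between two rating sources means in this context, the following is a minimal sketch, assuming that student ratings are first averaged within each class and then correlated with one observer score per class. The class labels and all rating values are hypothetical and are not drawn from any study cited here; the cited studies may have used different aggregation or multilevel procedures.

```python
from math import sqrt
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return sxy / sqrt(sxx * syy)


def class_means(ratings_by_class):
    """Aggregate individual student ratings to one mean rating per class."""
    return {cls: mean(vals) for cls, vals in ratings_by_class.items()}


# Hypothetical data: student ratings on a 1-4 scale, one observer score per class.
student_ratings = {
    "class_a": [3, 4, 3, 4],
    "class_b": [2, 2, 3, 2],
    "class_c": [4, 4, 3, 4],
    "class_d": [1, 2, 2, 2],
}
observer_ratings = {"class_a": 3.0, "class_b": 2.5, "class_c": 3.5, "class_d": 1.5}

student_means = class_means(student_ratings)
classes = sorted(student_means)
r = pearson_r(
    [student_means[c] for c in classes],
    [observer_ratings[c] for c in classes],
)
print(f"student-observer correlation: {r:.2f}")
```

Aggregating to the class level before correlating is one common choice because observers rate the lesson as a whole rather than individual students; it is shown here only as an illustration.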

Regarding the predictive power of different types of ratings for student mathematics achievement, some studies found that student-reported instructional quality exhibits greater predictive power for noncognitive learning outcomes, such as engagement (Lauermann & Berger, 2021) or motivation (Schiefele & Schaffner, 2015). The noticeable limitations of these studies include their predominant use of one or two types of data in analyzing teachers’ instructional practices and a weak focus on student cognitive learning outcomes. These limitations are significant considering the low level of agreement among the ratings of instructional practices provided by teachers, students, and external observers (Desimone et al., 2010; Fauth et al., 2020; Kunter & Baumert, 2006). Such limitations, along with the discrepancies related to the alignment of the three types of ratings in the existing studies, necessitate the inclusion of different perspectives in order to better analyze teachers’ instructional practices. This multifaceted assessment approach will enable researchers to triangulate the data and potentially obtain a more thorough and accurate depiction of teachers’ instructional practices, which play a critical role in shaping student learning outcomes.

The current study

In this study, we aim to address the gaps and limitations in the existing literature by utilizing the data of the Shanghai sample from the large-scale study of GTI, which is a video study of teaching administered by OECD. The GTI study collected valuable data related to the instructional practices self-reported by mathematics teachers as well as observed by students and trained raters (Opfer, 2020). This valuable data provided a richer picture of teachers’ instructional practices that can be analyzed to explore the alignment of the three types of ratings and their relationship with the critical indicator of teaching quality, that is, students’ mathematics achievements (Goe et al., 2008; Hill et al., 2012; Little et al., 2009). More importantly, the GTI study purposefully designed the same sets of questions that focus on mathematics teachers’ instructional practices in the student and teacher questionnaires, which enabled the researchers to examine whether the proposed conceptual components outlined in the Quality Teaching framework of the GTI study below can be identified in teachers’ self-reported practices and those reported by their students (Praetorius et al., 2020a, 2020b).

The quality teaching framework of the GTI study

To measure mathematics teachers’ teaching quality across different countries and economies, the GTI study team first conceptualized what constitutes teaching quality and then developed its own instrument to achieve this goal. The GTI study's conceptualization process of teaching quality drew on various sources that include the specific conceptualizations of quality teaching and standards on teaching from each participating country and economy, a critical review of global observation literature on teaching quality and teaching effectiveness, and the pertinent conceptual frameworks on teaching quality from the Teaching and Learning International Survey (TALIS) 2018 and the Program for International Student Assessment (PISA) administered by OECD to ensure close alignment (Klieme, 2020). The resulting six-domain quality teaching framework consists of classroom management, social-emotional support, discourse, quality of subject matter, students’ cognitive engagement, and assessment of and responses to students’ understanding (Bell et al., 2018, 2020).

Since the framework was constructed based on theoretical considerations rather than empirical data (Castellano & Bell, 2020) and a more simplified domain structure might exist, the GTI study team combined the latter four domains into the general realm of “Instruction,” which is a latent construct specifically focusing on mathematics instructional practices, and then tested it with confirmatory factor analysis. The team found that the model that contains the general realm of “Instruction” had good fit statistics (robust CFI = 0.96, robust TLI = 0.92, RMSEA = 0.10), which allow it to become an overarching latent construct encompassing the four sub-domains, that is, discourse, quality of subject matter, cognitive engagement, and assessment of and responses to student understanding (Castellano & Bell, 2020). Therefore, this latent construct was used as the coding framework by trained raters to rate mathematics teachers’ instructional practices, and the data collected were adopted in our analysis to answer research questions 2 and 3 as listed below, together with teachers’ and students’ rating data. Additionally, the framework of the latent construct “Instruction” was also used to guide our analysis to answer our first research question. We will discuss these in more detail in the next section.
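For readers less familiar with the fit indices named above, the following is a minimal sketch of the conventional, unscaled formulas for CFI, TLI, and RMSEA computed from the chi-square statistics of a fitted model and its baseline (independence) model. All input values are hypothetical and are not taken from the GTI analysis; the "robust" versions reported by the GTI team apply scaling corrections that are not implemented here, and some software uses N rather than N - 1 in the RMSEA denominator.

```python
from math import sqrt


def rmsea(chi2, df, n):
    """Root mean square error of approximation (unscaled, N - 1 convention)."""
    return sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


def cfi(chi2_model, df_model, chi2_base, df_base):
    """Comparative fit index relative to the baseline model."""
    d_model = max(chi2_model - df_model, 0.0)
    d_base = max(chi2_base - df_base, d_model)
    if d_base == 0:
        return 1.0
    return 1.0 - d_model / d_base


def tli(chi2_model, df_model, chi2_base, df_base):
    """Tucker-Lewis index; can fall outside the 0-1 range."""
    ratio_base = chi2_base / df_base
    ratio_model = chi2_model / df_model
    if ratio_base == 1:
        raise ValueError("TLI is undefined when the baseline chi2/df equals 1")
    return (ratio_base - ratio_model) / (ratio_base - 1.0)


# Hypothetical chi-square values, degrees of freedom, and sample size.
chi2_m, df_m = 85.0, 40
chi2_b, df_b = 900.0, 55
n_obs = 500

fit = {
    "CFI": cfi(chi2_m, df_m, chi2_b, df_b),
    "TLI": tli(chi2_m, df_m, chi2_b, df_b),
    "RMSEA": rmsea(chi2_m, df_m, n_obs),
}
for name, value in fit.items():
    print(f"{name} = {value:.3f}")
```

CFI and TLI compare the fitted model with the baseline model, so higher values indicate better fit, whereas RMSEA summarizes misfit per degree of freedom, so lower values indicate better fit.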

Specifically, the current study seeks to answer the following three research questions:

RQ1: What are the different conceptual components of the instructional practices reported by teachers and students?

RQ2: To what extent do instructional practices reported by teachers, students, and external raters align with each other?

RQ3: How do the instructional practices reported by teachers, students, and external raters compare in predicting students’ mathematics achievement?

Method

Data source

To answer our research questions, we used the Shanghai dataset from the large international GTI study conducted between 2016 and 2020 collaboratively among a total of eight OECD member countries and partner economies with the aim to measure mathematics teachers’ instructional practices and their relation to student cognitive learning and non-cognitive outcomes (Opfer et al., 2020). To identify any possible shared or divergent patterns of mathematics teaching across the eight countries and economies, the GTI study purposefully selected the topic of quadratic equations that are typically taught at the secondary school level in all countries and economies. In Shanghai, this important topic is taught at the eighth-grade level. A total of 2,613 students around the age of 14 along with their 85 teachers across 85 schools from Shanghai participated in the GTI study.

Instruments

Student and teacher prequestionnaire and postquestionnaire

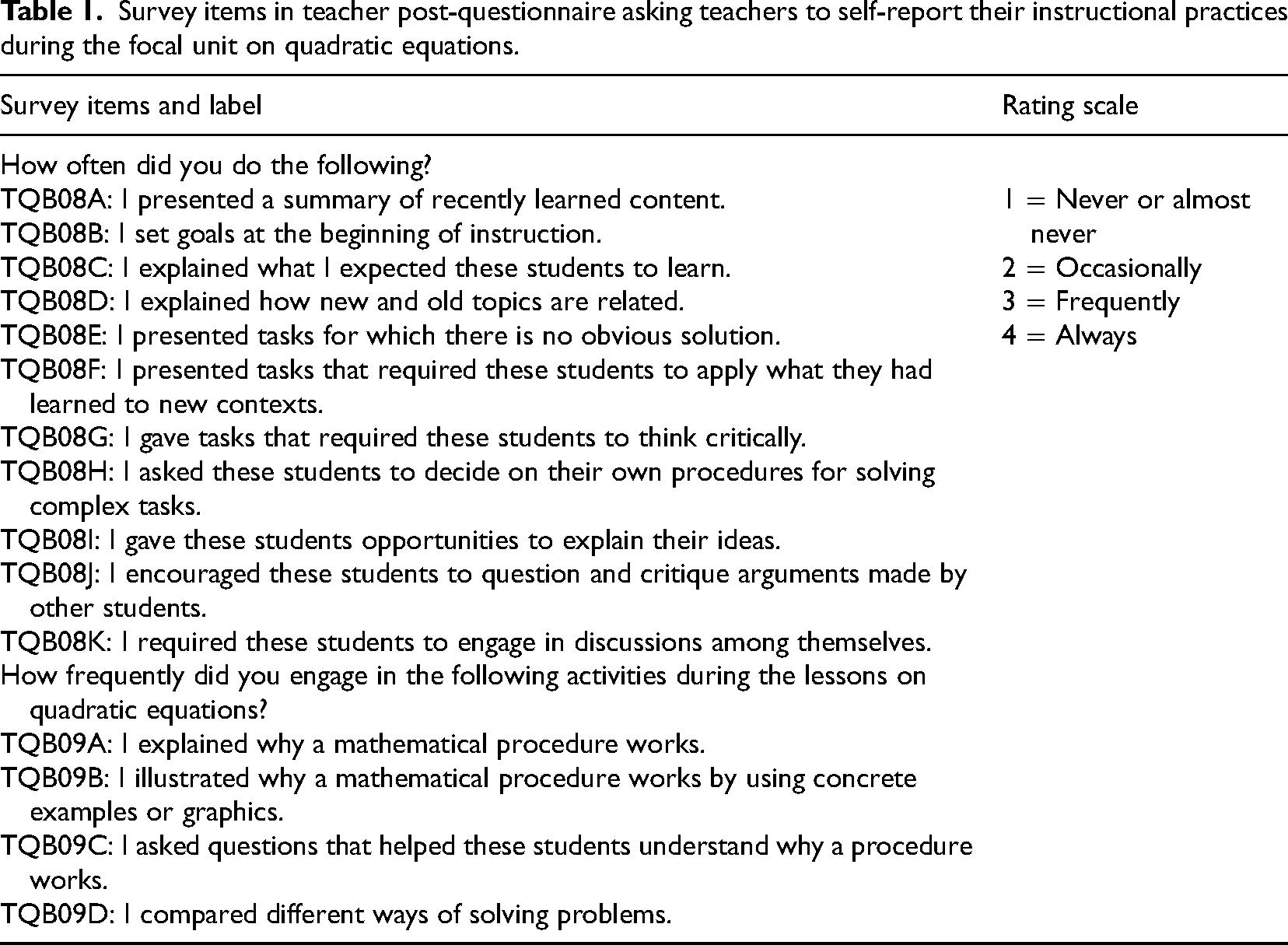

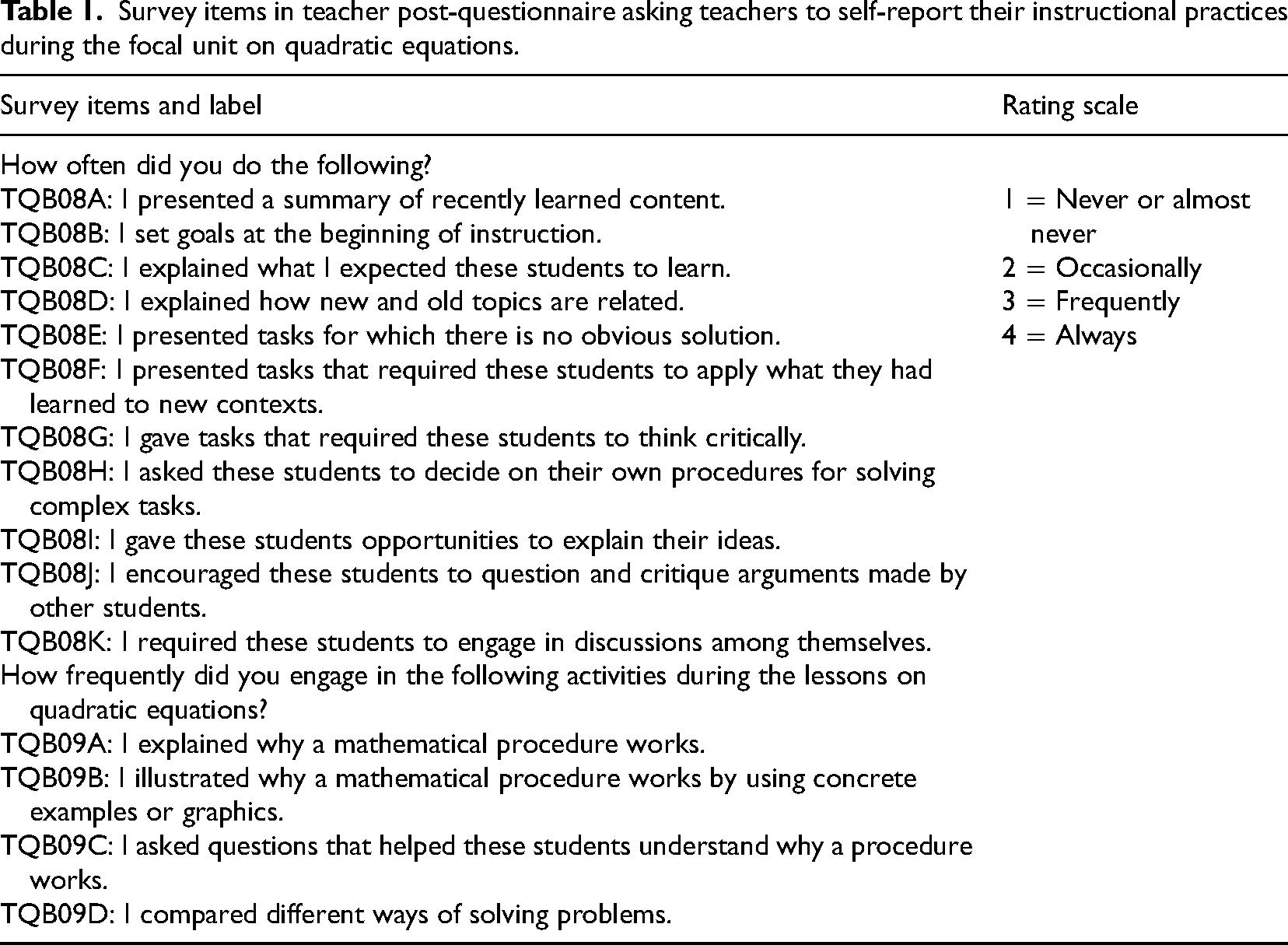

The GTI study administered both a student prequestionnaire and a student postquestionnaire to collect student background and learning-related information. The prequestionnaire was administered prior to the focal unit instruction on quadratic equations, while the postquestionnaire was administered at the conclusion of the unit. Additionally, the GTI study administered a teacher prequestionnaire and postquestionnaire at the same times as the student questionnaires to collect teachers’ background and focal unit-related information such as lesson goals and content covered. Students reported their ratings of teachers’ instructional practices in the student postquestionnaire, while teachers’ self-reported instructional practices were collected in the teacher postquestionnaire; both used a scale of 1–4, with 1 indicating “never or almost never” and 4 indicating “always” for the use of each specific instructional practice (see Tables 1 and 2).

Survey items in teacher post-questionnaire asking teachers to self-report their instructional practices during the focal unit on quadratic equations.

Survey items in student postquestionnaire asking students to rate their teachers’ instructional practices during the focal unit on quadratic equations.

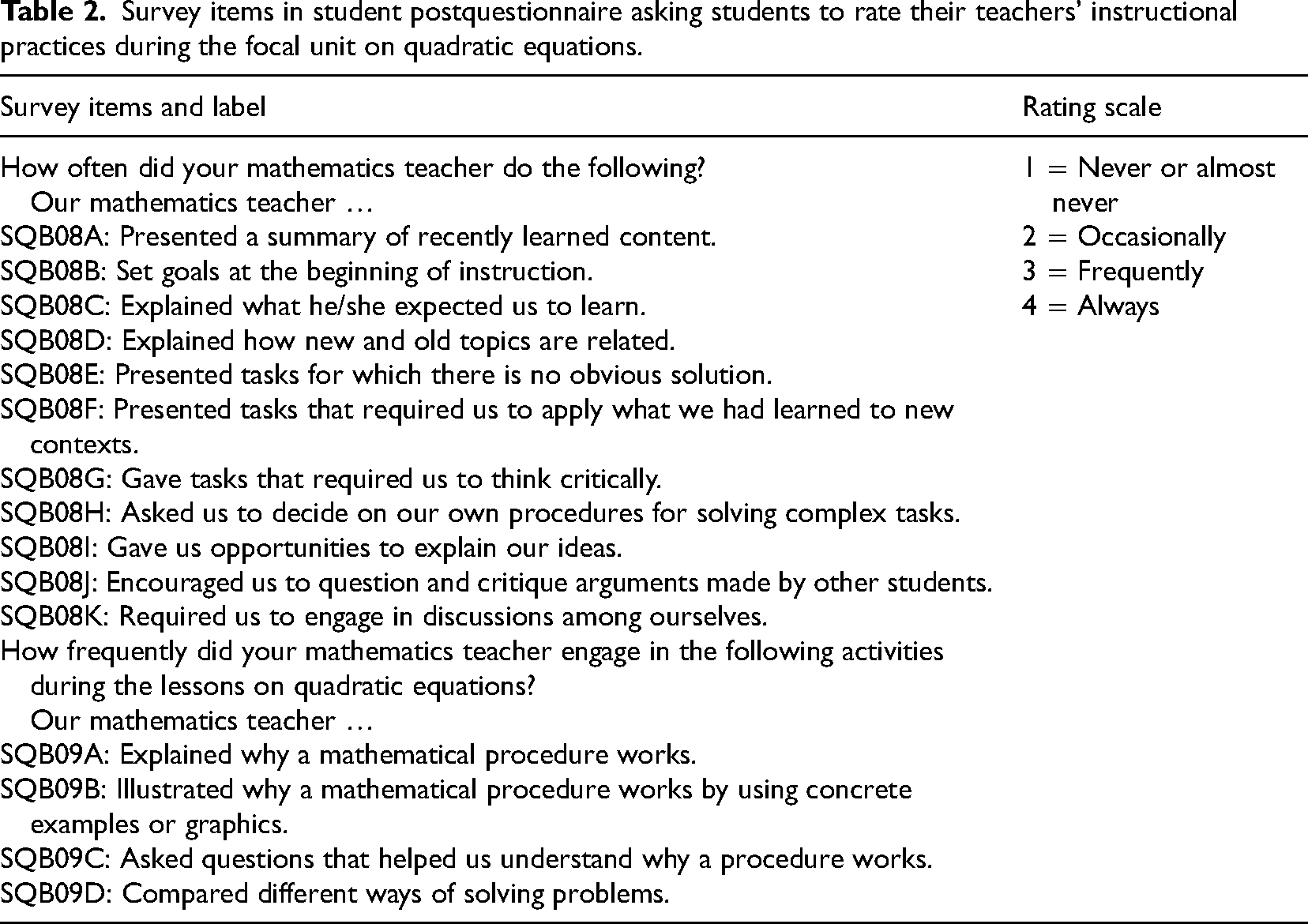

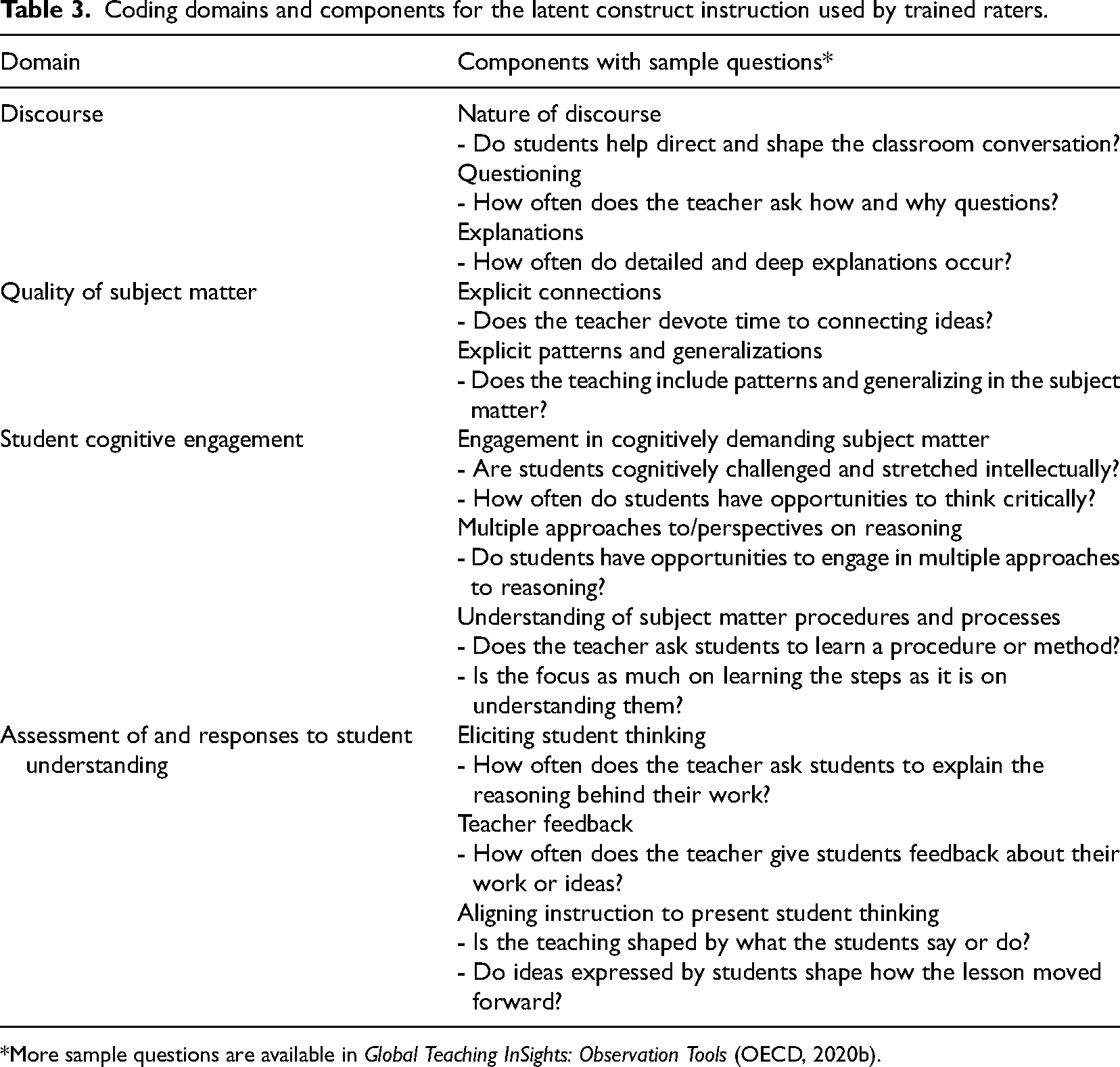

The aforementioned theoretical construct “Instruction” formulated by the GTI study team consists of four domains and was used as the blueprint for designing the coding instrument, which was then used by trained raters to rate each mathematics teacher's instructional practices. In the following, we will explain what each of the four domains entails and how the coding instrument was used to rate the teachers’ instruction.

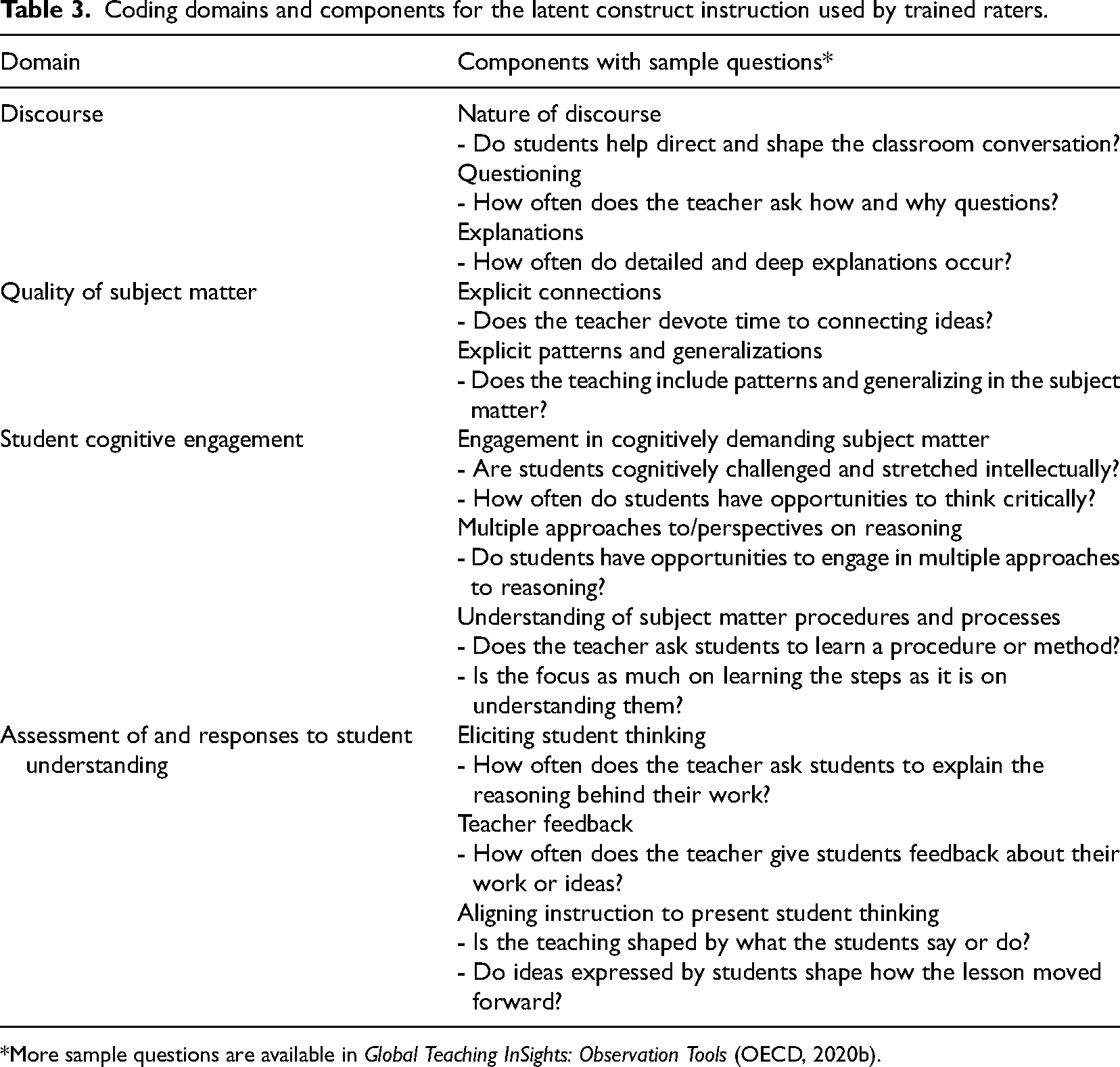

The discourse domain refers to the conversational interactions between and among teachers and students that promote active engagement, critical thinking, and the exchange of ideas. Effective discourse provides students with opportunities to articulate their ideas, respond to the ideas of others, and engage in productive conversations that deepen their understanding of the subject matter (Praetorius et al., 2020a, 2020b). This domain was assessed with three components from a 4-point Likert scale that includes the nature of discourse (whether students actively contribute to the discourse), questioning (whether the questions have a good mix of various levels of cognitive involvement and help to engage the students in critical analysis, synthesis, or justification), and explanations (whether such explanations emphasize extended and profound mathematical content) (see Table 3).

Coding domains and components for the latent construct instruction used by trained raters.

*More sample questions are available in Global Teaching InSights: Observation Tools (OECD, 2020b).

Quality of subject matter refers to the clarity and accuracy of the content and tasks as well as the ability of students and teachers to make explicit connections between the subject matter, procedures, viewpoints, and clear and appropriate representations or equations (Bell et al., 2020). This domain consists of two components: explicit connections, and explicit patterns and generalizations. Explicit connections refer to connections among subject matter ideas, procedures, or equations, between the content being learned in mathematics class and real-world contexts, and across different subject areas. Explicit patterns and generalizations refer to the extent to which teachers and students actively look for patterns in mathematical problems and use those patterns to generalize and draw conclusions (see Table 3).

Student cognitive engagement domain refers to students’ active and thoughtful interaction with the subject matter, and it encompasses a range of activities that require them to analyze, create, and evaluate information (Praetorius et al., 2020a, 2020b). It has three main components that include demanding subject matter, multiple approaches to/perspectives on reasoning, and understanding of subject matter procedures and processes, with which teachers can create a rich learning environment that promotes students’ cognitive engagement and supports their development of knowledge and skills. This domain was assessed with three components from a four-point Likert scale that includes engagement in cognitively demanding subject matter (whether teachers engage students in analytic, creative, or evaluative tasks that are also cognitively challenging and thoughtfulness demanding), multiple approaches to and perspectives on reasoning (whether students employ more than two techniques or lines of reasoning to thoroughly solve the problem), and understanding of subject matter procedures and processes (whether students regularly focus on the justification of the procedures and processes when encountering them) (see Table 3).

Assessment of and response to student understanding involves teachers aligning their instruction with their students’ thinking to provoke conceptual attainment, ensure better evaluation, and provide feedback in order to foster deeper learning (Praetorius et al., 2020a, 2020b). By eliciting students’ thinking, providing teacher feedback, and aligning instruction with students’ thinking, teachers can create a learning environment that supports the development of students’ knowledge and skills and promotes deeper learning (Praetorius et al., 2020a, 2020b). This domain was assessed with three components rated on a four-point Likert scale: eliciting student thinking (whether the questions, academic prompts, and meaningful tasks teachers use can bring about a variety of student contributions), teacher feedback (whether there are common feedback loops and thorough teacher-student discussions), and aligning instruction to present student thinking (whether teachers regularly provide scaffolding to help students reach conceptual understanding when they get stuck) (see Table 3).

The rigorously trained and certified raters of the GTI study coded the recorded lessons of each mathematics teacher according to the component rating rubric for each of the domains. All component scores were rated on a 4-point scale, with 1 indicating the lowest level or least frequency and 4 indicating the highest level or highest frequency in teachers’ use of certain components during their teaching on quadratic equations (Bell et al., 2020). The detailed rubrics for each component code can be accessed in the Chapter Annex of the GTI Technical Report (International Project Consortium, 2020).

The GTI study administered both a pretest and a post-test to the participating students: the pretest within the two weeks before their teachers started the instruction on quadratic equations, and the post-test in the two weeks immediately following the completion of that instruction (Praetorius et al., 2020a, 2020b). Since the 30-item pretest measured students’ mastery of general mathematics knowledge while the 25-item post-test purely assessed students’ mastery of knowledge and skills related to quadratic equations, this study used student post-test scores as the measure of student mathematics achievement to answer the third research question. Both the pretest and post-test scores were first standardized according to the mean of the Shanghai sample (mean = 231.11) and standard deviation (SD = 13.93), and were then rescaled on the basis of the IRT model so that they range from 100 to 300, with 200 as the mean and 25 as the standard deviation across the entire international sample, in order to make the achievement data comparable across the participating countries or economies (Doan & Mihaly, 2020). There were 4 missing values in the teacher dataset (TQB08A-D) and 59 missing values in the Shanghai student dataset (SQB08 and SQB09). Missing values in TQB08A-D, SQB08, and SQB09 were replaced by 0 using the zero imputation method, and the 37 cases with missing student post-test scores were deleted from the dataset, following the analytical recommendations of the GTI study team (Doan & Mihaly, 2020).
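To make the data-preparation steps described above concrete, the following minimal Python sketch linearly rescales a set of scores to a target mean of 200 and standard deviation of 25 and replaces missing survey responses with 0. It is a simplification under stated assumptions rather than the GTI team's procedure: the actual rescaling was IRT-based and anchored to the international sample, and the function names and toy values below are hypothetical.

```python
import numpy as np


def rescale(scores, target_mean=200.0, target_sd=25.0):
    """Linearly rescale scores to a chosen mean and standard deviation.

    Assumption: a plain z-score transformation stands in for the
    IRT-based scaling used in the GTI study.
    """
    scores = np.asarray(scores, dtype=float)
    z = (scores - scores.mean()) / scores.std(ddof=0)
    return target_mean + target_sd * z


def zero_impute(responses):
    """Replace missing survey responses (NaN) with 0."""
    responses = np.asarray(responses, dtype=float)
    return np.where(np.isnan(responses), 0.0, responses)


# Hypothetical raw scores and survey responses, for illustration only.
raw_scores = np.array([12.0, 18.0, 25.0, 9.0, 21.0])
scaled = rescale(raw_scores)

items = np.array([[3.0, np.nan, 2.0],
                  [4.0, 1.0, np.nan]])
filled = zero_impute(items)
```

Note that zero imputation treats a skipped item as the lowest possible response, which is why it is worth reporting how many values were affected, as the paragraph above does.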

Data analytic approach

To answer the first research question, exploratory factor analysis (EFA) using principal axis factoring (PAF) extraction method and a promax (oblique) rotation with Kaiser normalization was performed for teacher self-reported teaching practice and observed practices as reported by students. EFA is typically used to identify the underlying factors that may explain the correlations among a set of observed variables (Finch, 2019); therefore, it is the appropriate analytic approach for the first research question that seeks to identify the conceptual components of instructional practices as reported by teachers as well as their students. Approximate fit indices including Kaiser-Meyer-Olkin (KMO) Measure of Sampling Adequacy and Bartlett's Test of Sphericity were used in determining the model fit during the EFA analysis (Finch, 2019).
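The two factorability checks named above can be computed directly from a correlation matrix. The sketch below uses the standard textbook formulas for the overall KMO statistic and for the chi-square statistic of Bartlett's test; it is not the software the authors used, and the function names and the small equicorrelation matrix are hypothetical.

```python
import numpy as np


def kmo_overall(R):
    """Overall Kaiser-Meyer-Olkin measure for a correlation matrix R."""
    R = np.asarray(R, dtype=float)
    inv_R = np.linalg.inv(R)
    d = np.sqrt(np.diag(inv_R))
    # Partial (anti-image) correlations derived from the inverse of R.
    partial = -inv_R / np.outer(d, d)
    off_diag = ~np.eye(R.shape[0], dtype=bool)
    r2 = np.sum(R[off_diag] ** 2)
    p2 = np.sum(partial[off_diag] ** 2)
    return r2 / (r2 + p2)


def bartlett_sphericity(R, n_obs):
    """Chi-square statistic and degrees of freedom for Bartlett's test."""
    R = np.asarray(R, dtype=float)
    p = R.shape[0]
    _, logdet = np.linalg.slogdet(R)
    chi2 = -(n_obs - 1 - (2 * p + 5) / 6.0) * logdet
    df = p * (p - 1) // 2
    return chi2, df


# Hypothetical 4-item correlation matrix with all correlations equal to 0.5.
R_toy = np.full((4, 4), 0.5)
np.fill_diagonal(R_toy, 1.0)

kmo = kmo_overall(R_toy)                     # 0.8 for this matrix
chi2, df = bartlett_sphericity(R_toy, n_obs=85)
```

A KMO value close to 1 means the partial correlations are small relative to the raw correlations, which is the condition under which a common-factor model is plausible.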

To answer the second research question, we used correlation analysis to identify the possible relationships among the EFA factors extracted in the previous step and the Instruction factor based upon external observers’ ratings. Student-level data were aggregated to the class level before the correlational analysis was run. Ordinary least squares (OLS) regression was then used to answer the third research question regarding the predictive power of instructional practices reported by teachers, students, and external raters in explaining the variance of student post-test scores obtained after the unit instruction on quadratic equations.
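The sequence described above (aggregate student data to the class level, correlate class-level scores across sources, then regress post-test scores on a predictor) can be sketched in a few lines of Python. This is an illustrative outline only: the helper names, class labels, and numbers are hypothetical and do not come from the GTI data.

```python
import numpy as np


def class_means(class_ids, values):
    """Average student-level values within each class."""
    labels, inverse = np.unique(np.asarray(class_ids), return_inverse=True)
    inverse = inverse.ravel()
    sums = np.bincount(inverse, weights=np.asarray(values, dtype=float))
    counts = np.bincount(inverse)
    return labels, sums / counts


def pearson_r(x, y):
    """Pearson correlation between two equally long sequences."""
    return float(np.corrcoef(x, y)[0, 1])


def ols_simple(x, y):
    """OLS of y on x with an intercept; returns (intercept, slope, R^2)."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    design = np.column_stack([np.ones_like(x), x])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    residuals = y - design @ coef
    r_squared = 1.0 - (residuals @ residuals) / np.sum((y - y.mean()) ** 2)
    return float(coef[0]), float(coef[1]), float(r_squared)


# Hypothetical student ratings nested in four classes.
class_ids = ["c1", "c1", "c2", "c2", "c3", "c3", "c4", "c4"]
student_ratings = [3, 4, 2, 2, 4, 5, 3, 3]
labels, rating_means = class_means(class_ids, student_ratings)

# Hypothetical class-level rater scores and post-test means.
rater_scores = [2.6, 1.9, 3.1, 2.4]
post_means = [205.0, 196.0, 212.0, 201.0]

alignment = pearson_r(rating_means, rater_scores)
intercept, slope, r2 = ols_simple(rating_means, post_means)
```

With a single predictor, the R-squared from the regression equals the squared Pearson correlation between predictor and outcome, which links the correlational and regression results.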

Results

RQ1: Conceptual components of instructional practices reported by teachers and students

Results from the EFA show that the correlation matrices of the instructional practices reported by teachers and students are factorable: some correlation coefficients are greater than 0.30; the KMO Measure of Sampling Adequacy is 0.80 for the teacher-level data and 0.94 for the student-level data; and Bartlett's Test of Sphericity is significant at the 0.001 level for both datasets. The values in the anti-image correlation matrix are small, with 3% of these values being larger than 0.20 for the teacher-level variables and all values being smaller than 0.08 for the student-level variables. The Measures of Sampling Adequacy (MSA) values for individual variables range from 0.63 to 0.87 at the teacher level and from 0.91 to 0.96 at the student level, all larger than 0.50, suggesting adequate model fit (Cerny & Kaiser, 1977; Tabachnick & Fidell, 2018).

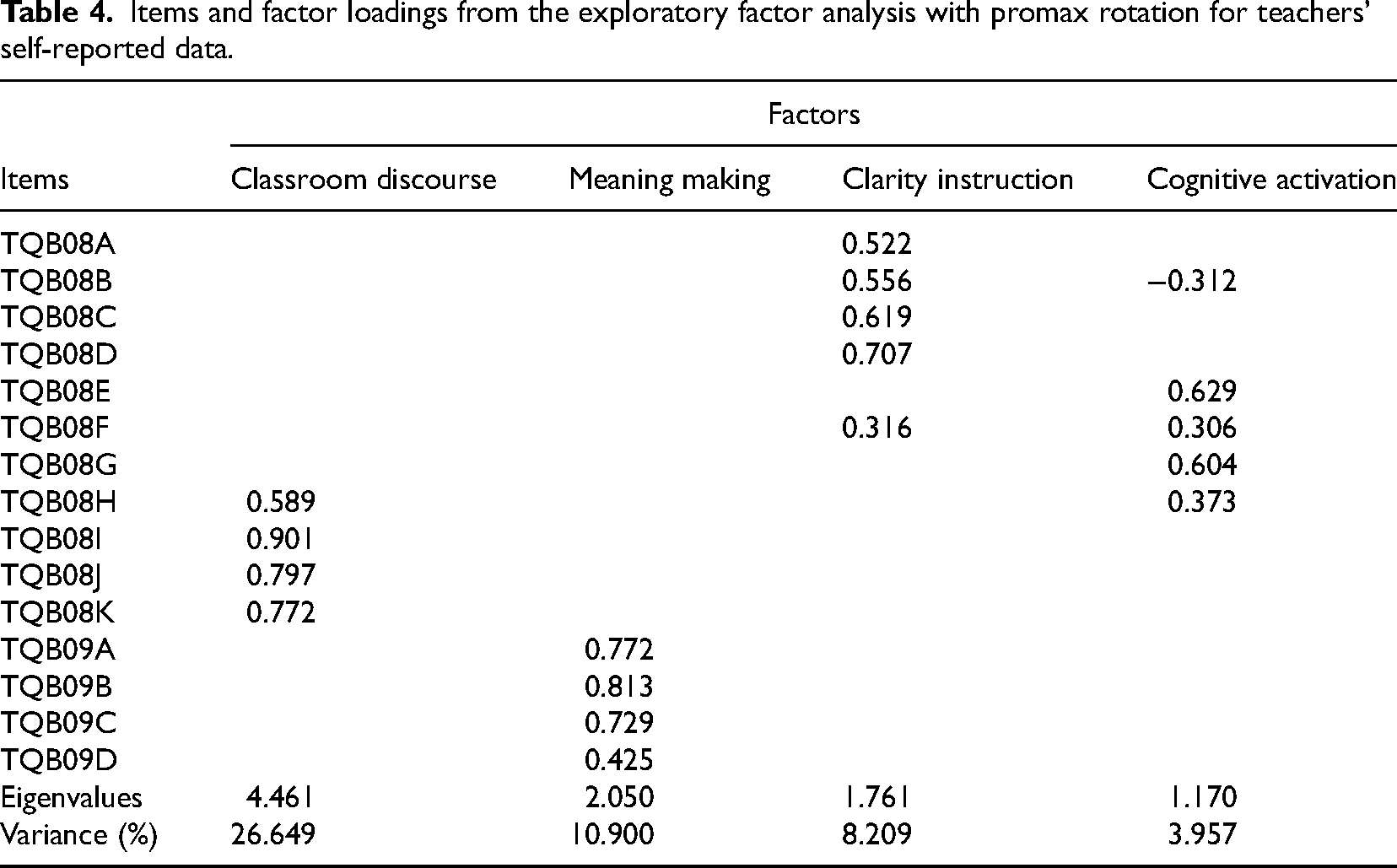

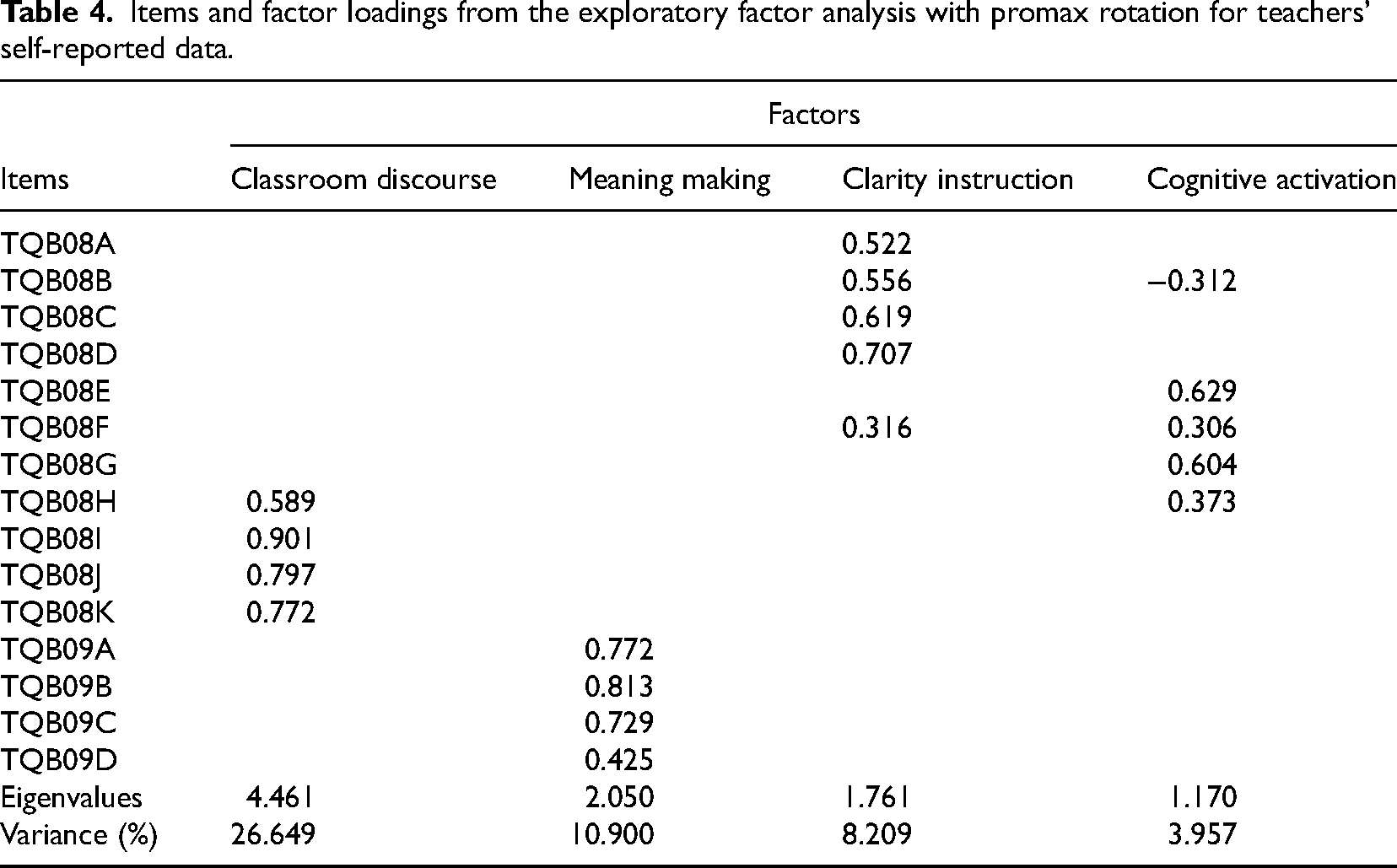

Principal axis factor analysis with Promax rotation and Kaiser normalization for the 15 teacher-reported survey items of TQB08A-K and TQB09A-D yielded four factors with eigenvalues greater than 1.0, explaining 49.72% of the total variance after rotation. Table 4 showed the factor loadings for each item on each of the four extracted factors. Items with factor loadings greater than or equal to 0.30 were considered to load significantly on a given factor. The results indicated that Factor 1 (Classroom Discourse) was primarily defined by items TQB08H, TQB08I, TQB08J, and TQB08K, Factor 2 (Meaning Making) by items TQB09A, TQB09B, TQB09C, and TQB09D, Factor 3 (Clarity Instruction) by TQB08A, TQB08B, TQB08C, TQB08D, and TQB08F, and Factor 4 (Cognitive Activation) by TQB08B, TQB08E, TQB08F, TQB08G, and TQB08H. The negative loading of TQB08B in Factor 4 might mean that this item described the opposite of this factor.

Items and factor loadings from the exploratory factor analysis with promax rotation for teachers’ self-reported data.

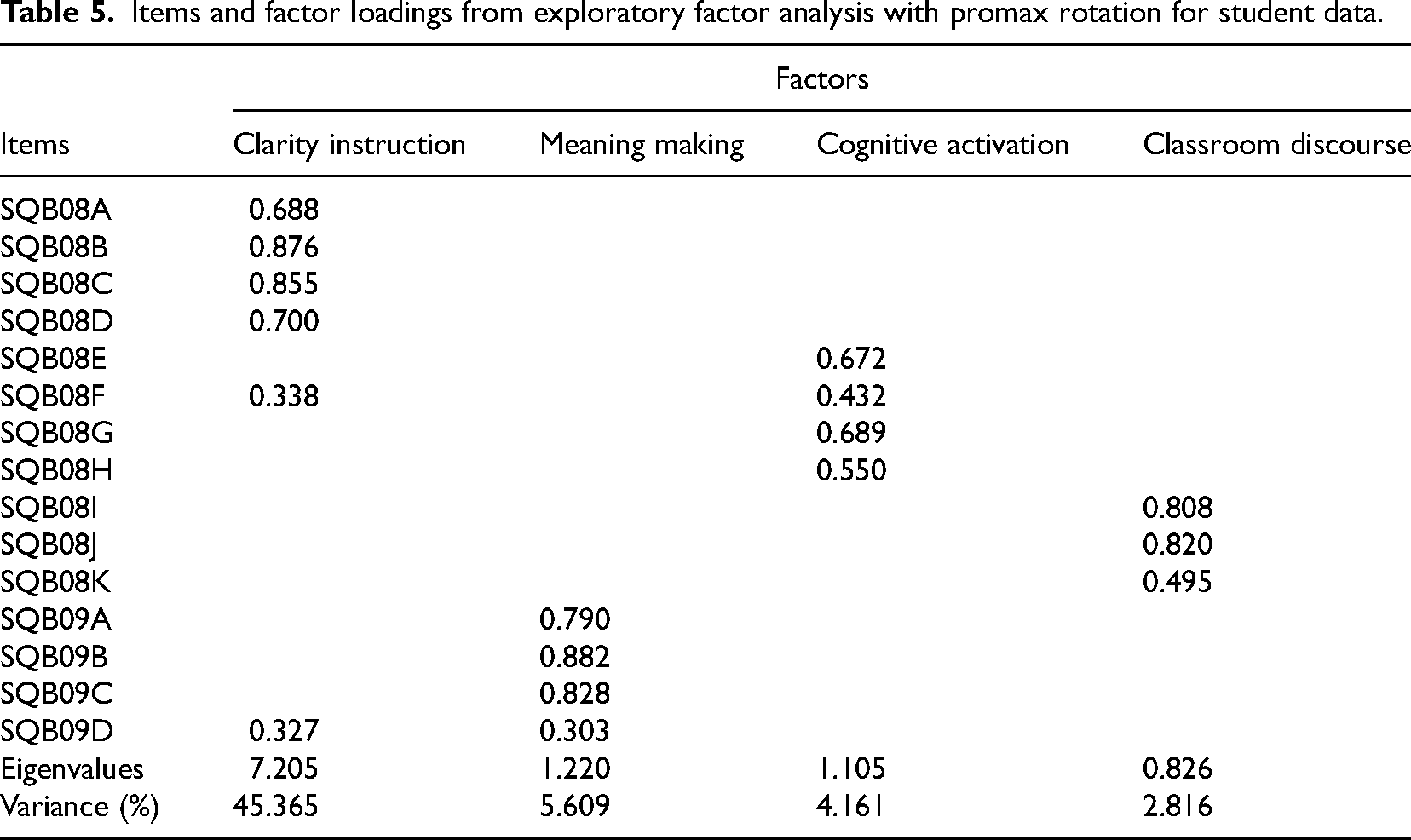

Principal axis factor analysis with Promax rotation and Kaiser normalization for the 15 student-reported survey items of SQB08A-K and SQB09A-D yielded three factors with eigenvalues greater than 1.0. Although the eigenvalue of Classroom Discourse was 0.83, it explained over 5% variance before rotation. Therefore, we included this factor in the four-factor structure that explained 57.95% of the total variance after rotation. Table 5 showed the factor loadings for each item on each of the four extracted factors. The results indicated that Clarity Instruction was primarily defined by items SQB08A, SQB08B, SQB08C, SQB08D, and SQB08F, Meaning Making was defined by items SQB09A, SQB09B, SQB09C, and SQB09D, cognitive activation was defined by items SQB08E, SQB08F, SQB08G, and SQB08H, and Factor 4, classroom discourse, was defined by items SQB08I, SQB08J, and SQB08K. Both SQB08F and SQB09D had loadings that exceed 0.30 onto two factors.

Items and factor loadings from exploratory factor analysis with promax rotation for student data.

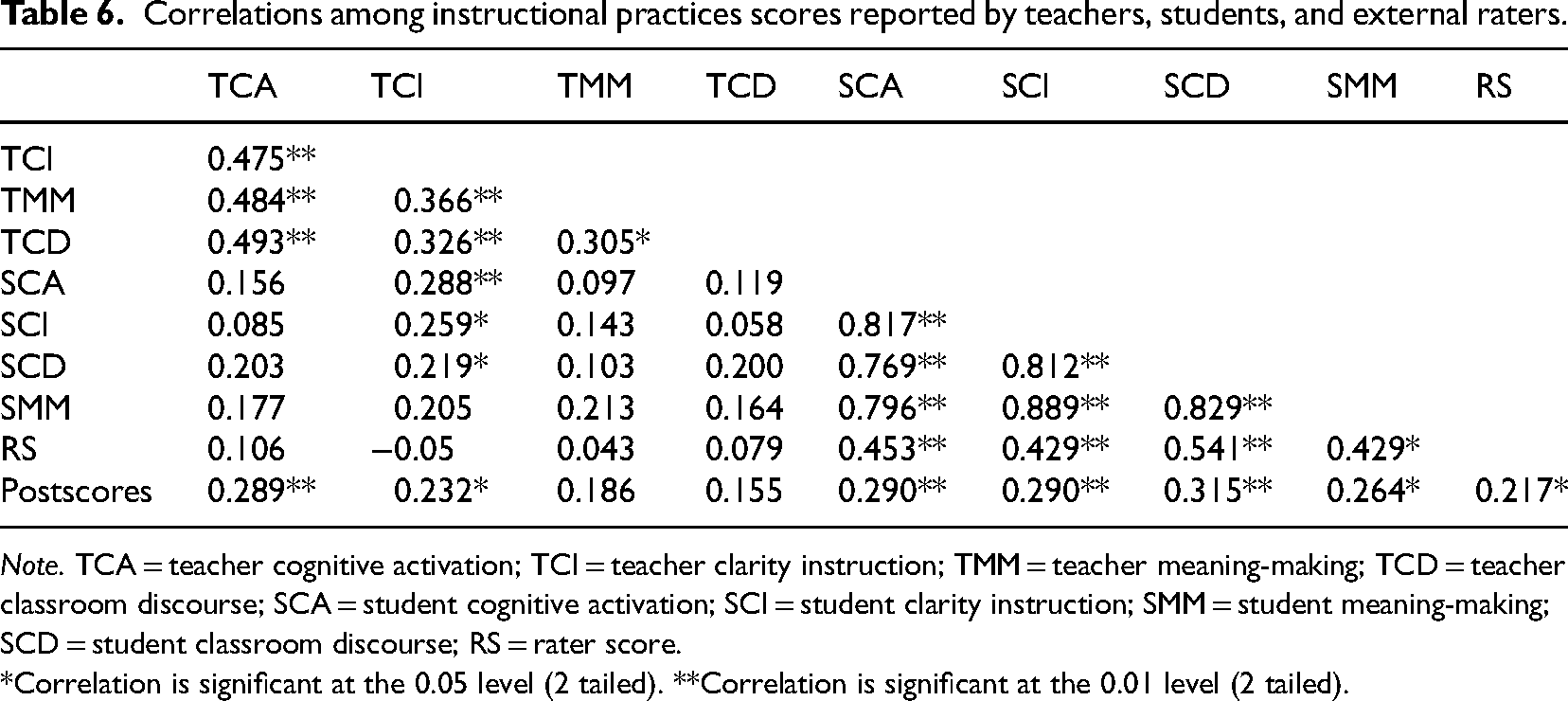

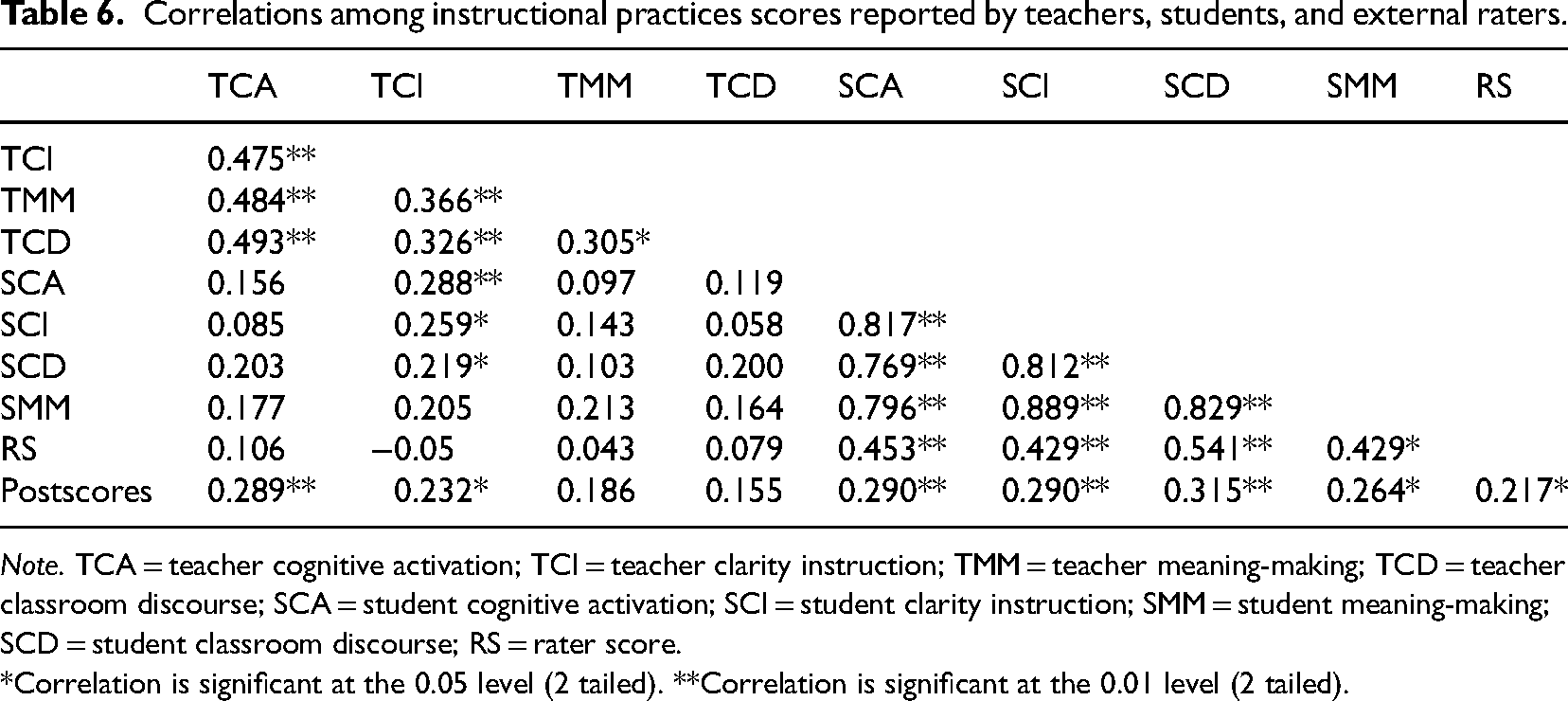

Results from the correlation analysis are presented in Table 6. The correlation coefficients of each pair of the four teacher self-reported factors range from 0.305 to 0.493, and those between the four student-reported factors range from 0.769 to 0.889, p < .01. The correlation coefficients between the four corresponding pairs of factors at the student and teacher levels were all positive but quite weak, and of the four pairs, only the clarity instruction pair was significantly correlated, r = 0.259, p < .05. Additionally, all the student-reported teaching practice factors had moderate and significant positive correlations with the rater-reported teaching practice factor (r(RS, SCA) = 0.453, p < .01; r(RS, SCI) = 0.429, p < .01; r(RS, SCD) = 0.541, p < .01; r(RS, SMM) = 0.429, p < .01). Lastly, three of the four teacher self-reported factors had non-significant but positive, weak correlations, ranging from .043 to .106, with the rater-reported teaching practice factor, and one factor, Clarity Instruction, had a very weak, non-significant, negative correlation with the rater-reported teaching practice factor.

Correlations among instructional practices scores reported by teachers, students, and external raters.

Note. TCA = teacher cognitive activation; TCI = teacher clarity instruction; TMM = teacher meaning-making; TCD = teacher classroom discourse; SCA = student cognitive activation; SCI = student clarity instruction; SMM = student meaning-making; SCD = student classroom discourse; RS = rater score.

*Correlation is significant at the 0.05 level (2 tailed). **Correlation is significant at the 0.01 level (2 tailed).

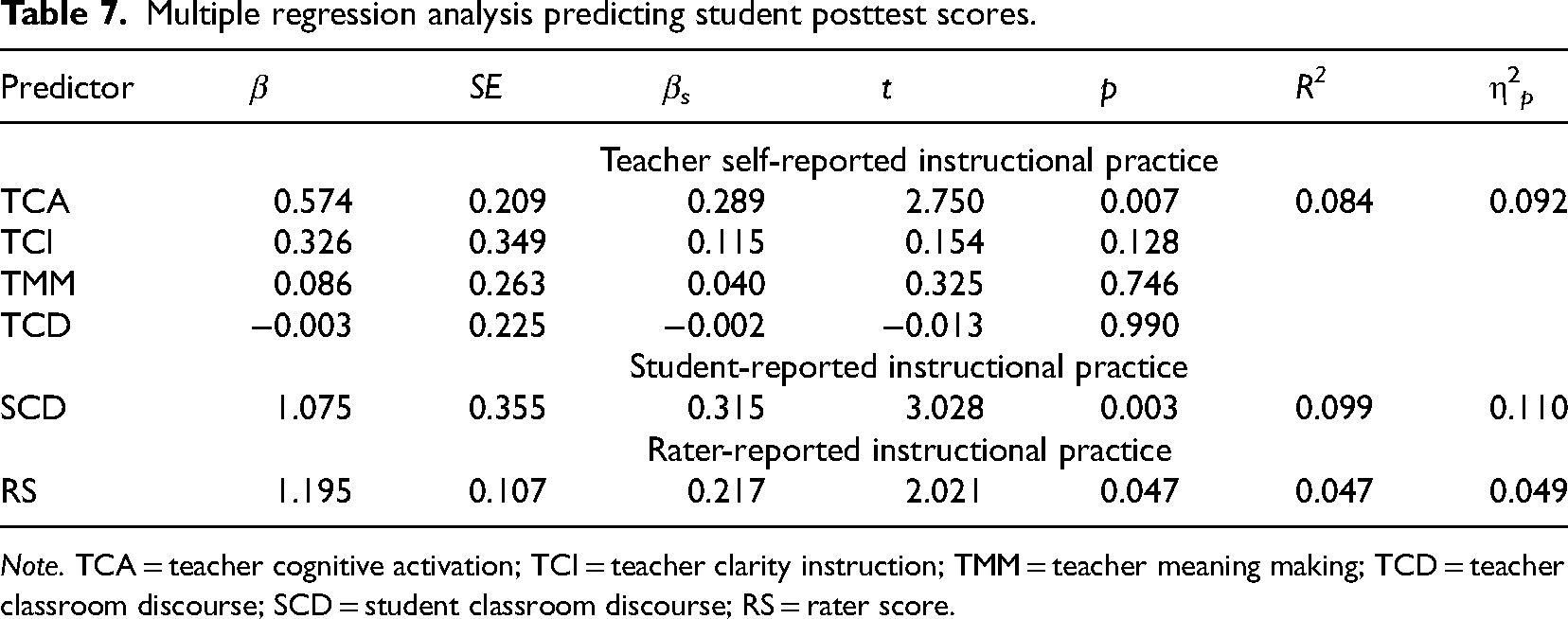

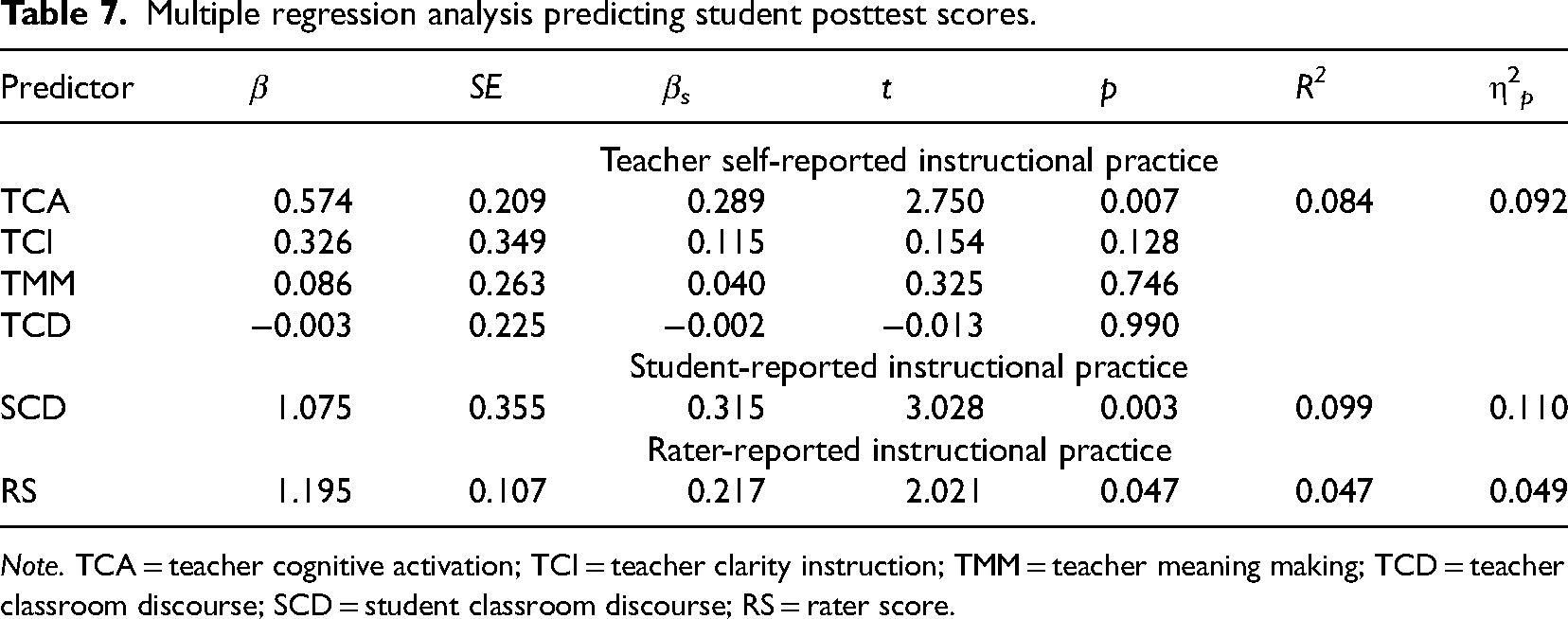

Multiple regression analyses were conducted to answer research question three. Given that the evaluation of instructional practices involves variables from three different perspectives, it is not theoretically reasonable to include all observed variables (such as teacher cognitive activation [TCA], student cognitive activation [SCA], and rater score [RS]) in the same multiple regression equation. As a result, this study conducted multiple regression analyses for each distinct perspective separately, and the results are presented in Table 7. Furthermore, due to the high correlations (rs > 0.70) among the four factors from student-reported instructional practices, this study adopted a stepwise method in the multiple regression analysis for student-reported instructional factors. As a result, only one significant factor, student classroom discourse (SCD), was identified and thus reported in Table 7.

Multiple regression analysis predicting student posttest scores.

Note. TCA = teacher cognitive activation; TCI = teacher clarity instruction; TMM = teacher meaning making; TCD = teacher classroom discourse; SCD = student classroom discourse; RS = rater score.

Teacher perspective

Results from the correlational analysis (see Table 6) indicated that two of the four teacher self-reported factors were significantly correlated with student post-test scores, namely TCA, r(PS, TCA) = 0.289, p < .01, and teacher clarity instruction (TCI), r(PS, TCI) = 0.232, p < .05, whereas the other two were not. The multiple regression analysis conducted to examine the relationship between teacher-reported instructional practices and student achievement included four predictor variables in the model: TCA, TCI, teacher meaning making (TMM), and teacher classroom discourse (TCD). The analysis revealed that the model was significant, F(1, 83) = 7.562, p = .007, ηp² = .092. Specifically, TCA was a significant predictor of the outcome variable (β = .574, p = .007), while TCI, TCD, and TMM did not significantly predict student post-test scores (β = .326, p = .128; β = −.003, p = .990; β = .086, p = .746, respectively). The model yielded an R-square value of .084, indicating that the predictors together explained 8.4% of the variance in the outcome variable. The variance inflation factor scores (all VIFs < 1.5) indicated that multicollinearity was not a concern in the analysis.
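For readers unfamiliar with the multicollinearity check mentioned above, the variance inflation factor of predictor j is 1 / (1 − R²j), where R²j comes from regressing that predictor on the others; equivalently, the VIFs are the diagonal of the inverse of the predictors' correlation matrix. The following Python sketch illustrates that identity with hypothetical data; the function name and values are assumptions, not the authors' code.

```python
import numpy as np


def variance_inflation_factors(X):
    """VIF for each column of X (rows are observations, columns predictors).

    Uses the identity that the VIFs are the diagonal elements of the
    inverse of the predictors' correlation matrix.
    """
    R = np.corrcoef(np.asarray(X, dtype=float), rowvar=False)
    return np.diag(np.linalg.inv(R))


# Hypothetical two-predictor example whose predictors correlate at r = 0.8.
X_toy = np.array([[1.0, 1.0],
                  [2.0, 3.0],
                  [3.0, 2.0],
                  [4.0, 4.0]])
vifs = variance_inflation_factors(X_toy)   # both equal 1 / (1 - 0.8**2)
```

Values near 1 indicate nearly uncorrelated predictors, so VIFs below 1.5 mean that each predictor shares little variance with the rest.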

Student perspective

Results from the correlation analysis (see Table 6) indicated that all the student-reported teaching practice factors had moderate and significant positive correlations with student post-test scores (r(PS, SCA) = .290, p < .01; r(PS, SCI) = .290, p < .01; r(PS, SCD) = .315, p < .01; r(PS, SMM) = .264, p < .05). Similar to the teacher-level model, four predictor variables were included in the multiple regression analysis model to examine the relationship between student-reported instructional practices and student achievement: SCD, student meaning making (SMM), student clarity instruction (SCI), and SCA. Results from the analysis showed that the overall model was significant, F(1, 2574) = 9.171, p = .003, ηp² = .110. SCD was found to be the only significant predictor of student post-test scores, β = 1.075, t(2574) = 3.028, p = .003, and it explained 9.9% of the variance in student post-test scores (R² = .099). The excluded variable analysis showed that SMM, SCI, and SCA were not significant predictors of student post-test scores.

Rater perspective

Results from the correlational analysis (see Table 6) indicated that the rater score was positively and significantly correlated with students’ post-test scores, r = .429, p < .01. The regression analysis model included one independent variable, rater-reported instructional practice score (RS), and the dependent variable was student post-test scores. The analysis yielded an R-square value of 0.047, indicating that rater-reported instructional practices in the domain of instruction accounted for 4.7% of the variance in post-test scores. The ANOVA results indicated that the regression model was significant, F(1, 83) = 4.083, p < .05, ηp² = 0.049. The coefficient for RS was significant, B = 1.195, t(83) = 2.021, p < .05, indicating that a one-unit increase in the rater-reported instructional practice score was associated with a 1.195-unit increase in student post-test scores.
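The reported F statistic and R-square value are two views of the same fit. For an OLS model with an intercept, F = (R² / df1) / ((1 − R²) / df2), so R² = F·df1 / (F·df1 + df2). The short Python sketch below applies this standard identity to the values reported for the rater and teacher models; the function name is hypothetical, and the calculation is offered as an arithmetic illustration rather than as part of the authors' analysis.

```python
def r_squared_from_f(f_value, df_model, df_residual):
    """Recover R^2 from an overall F statistic and its degrees of freedom."""
    return (f_value * df_model) / (f_value * df_model + df_residual)


# Rater model: F(1, 83) = 4.083.
rater_r2 = r_squared_from_f(4.083, 1, 83)      # about 0.047

# Teacher model: F(1, 83) = 7.562.
teacher_r2 = r_squared_from_f(7.562, 1, 83)
```

Both results round to the R-square values reported in the text (.047 and .084).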

Discussions

Using the Shanghai sample of the GTI study, the current study first examined the underlying conceptual components of the instructional practices reported by teachers and their students to answer our RQ1. Results from the EFA revealed that essentially the same four-factor structure was identified from both sources of survey items, though several survey items loaded differently on three of the four factors. Specifically, the factor Meaning Making was defined by the same items from both sources, and the items that define the other three factors, that is, classroom discourse, clarity instruction, and cognitive activation, are mostly overlapping. Such results can serve as important empirical evidence for the trustworthiness of the two types of data and we deem these findings to be very significant considering the ongoing debate on the reliability and validity of the data perceived by teachers (Mayer, 1999; McCaffrey et al., 2001; Mullens & Gayler, 1999; Porter et al., 1993; Ross et al., 2003) or reported by their students (Fauth et al., 2014; Praetorius et al., 2012; Wagner et al., 2016) in helping evaluate instructional quality. Especially, the above results resonate with what Tsai et al. (2022) found in their study that students’ reported teaching effectiveness is in alignment with theory-based structure of the survey completed by students, and therefore further clarify the concerns of some researchers (Fauth et al., 2019, 2020) who considered students’ young age and lack of survey instrument training as the potential causes in lowering the validity and reliability of student ratings of instructional practices. Nonetheless, there are still differences in both the items that define three of the four factors, and in the order and weight of the factors that contribute to the total variances of instructional practices reported by teachers and students. 
The differences, along with the mostly weak and non-significant correlations between each pair of the factors, might result from the inherent nature of self-reported data (Debnam et al., 2015; Hansen et al., 2014; Kaufman et al., 2016), students’ young age, or students’ lack of training before completing the questionnaire (Fauth et al., 2019, 2020).

For RQ2, with regard to the alignment among the three types of ratings, our study provided new evidence that all the student-reported teaching practice factors correlate significantly and positively with the rater-reported teaching practice factor, in the 0.429 to 0.541 range. This range falls within the 0.39–0.85 range that Fauth et al. (2020) found in their study but partially differs from the 0 to 0.50 range found by other researchers (Fauth et al., 2014; Begrich et al., 2020). The current study also found weak but positive correlations between the four pairs of factors obtained from instructional practices reported by teachers and their students; among the four pairs, a significant correlation was found only between the pair of clarity instruction factors. This confirms the low to moderate relationship between the instructional practice ratings reported by teachers and their students that previous researchers have found in the past decades (Desimone et al., 2010; Kunter & Baumert, 2006; Wagner et al., 2016; Wisniewski et al., 2020). With regard to the alignment between ratings reported by teachers and external observers, our study found that three of the four teacher self-reported factors had nonsignificant but positive, weak correlations, ranging from 0.043 to 0.106, with the rater-reported factor; interestingly, one factor, clarity instruction, had a very weak, negative correlation with the rater-reported factor. Our results resonate with some previous studies that reported a discrepant, inconsistent pattern between the two sources of evaluation (Debnam et al., 2015; Hansen et al., 2014; Kaufman et al., 2016), possibly due to the common concerns about teachers’ self-reported data (e.g., Bradburn, 2000; Devaux & Sassi, 2016; Kaufman et al., 2016; Little et al., 2009; Tourangeau et al., 2000; Van de Vijver & He, 2014).
Considering that GTI external raters were rigorously trained in their use of a well-validated observation instrument and quality control was ensured in the rating process (Bell, 2020a, 2020b), results reported by the raters are deemed highly reliable and valid (Reddy et al., 2019). The nonsignificant, weak, and even negative correlations between these two sources encourage researchers to further examine the possible underlying causes.

Additionally, when comparing the predictive power of the three types of ratings to answer RQ3, our study found that only one of the four teacher-reported factors, cognitive activation, was a significant predictor, explaining 8.4% of the variance in the outcome variable; in a similar vein, only one of the four student-reported factors, classroom discourse, was found to significantly predict student post-test scores, and it explained 9.9% of the variance in those scores. The instructional practice factor obtained from external raters was also found to be significant, but it explained only 4.7% of the variance in the outcome variable, which is the lowest of the three. The results indicate that student-reported instructional quality had greater predictive power for student mathematics achievement, and they further support the reliability and validity of this type of data. The evidence also echoes the findings of some previous studies, although non-cognitive learning outcomes were often used in those studies (e.g., Lauermann & Berger, 2021; Schiefele & Schaffner, 2015; Wagner et al., 2016). As argued by Lauermann and ten Hagen (2021), students are the authentic participants and direct recipients of teachers’ instruction, which enables them to have firsthand experience of the teaching methods and strategies used in the classroom. As participants in the classroom, especially in inquiry-based mathematics classrooms characterized by active learning and a collaborative environment, students are immersed in inquiry-based activities and are encouraged to explore concepts, ask questions, and construct their own understanding (NCTM, 2000, 2014).
Studies have shown that when students engage in inquiry-based collaborative activities, they are more likely to develop a deeper understanding of mathematical concepts, improve problem-solving skills, and enhance critical thinking abilities (Elbers, 2003; Goos, 2004; Staples, 2007), along with better student engagement, motivation, and active participation, all leading to improved academic performance and long-term retention of mathematical concepts (Blazar, 2015; Carpenter et al., 1996). Students’ active involvement in the learning process may allow them to provide valuable feedback on the effectiveness of teaching methods, clarity of explanations, and overall instructional quality, especially considering that this type of rating is aggregated across multiple lessons, which allows for a more comprehensive and representative view of the teacher's instructional practices (Gaertner & Brunner, 2018; Lauermann & ten Hagen, 2021). Consequently, their perceptions offer valuable insights into the effectiveness of instructional practices. By considering their perspectives, researchers and educators may gain a better understanding of the factors that contribute to successful learning in mathematics.

Lastly, it is interesting that the two factors found to be significant in predicting student mathematics learning outcomes, that is, cognitive activation in the teacher data and classroom discourse in the student data, both point to the active learning category. This suggests that teaching practices oriented towards fostering higher-order critical thinking skills that activate deep cognitive processes, and towards encouraging meaningful, relevant classroom discussions in mathematics classrooms, are important in helping students achieve better learning results. These valuable quality instructional practices are endorsed by researchers and policy makers in both the East Asian and US contexts (Cai & Ding, 2017; Carpenter et al., 1996; Ding et al., 2022; Fennema et al., 1996; Leung, 2001; Leung & Li, 2010), and should be promoted in all mathematics classrooms.

Limitations, implications, and future directions

Before discussing the implications of the results, we would like to note two limitations of this study. First, results from the current study are based on the Shanghai data from the GTI study that included 85 teachers and 2,613 students. Shanghai is a developed urban city in China, and generalization of the results to other contexts should be made with caution. Second, the GTI study is specifically designed to explore classroom instructional practices related to one essential topic in mathematics, quadratic equations. Hence, the results should be interpreted accordingly, and the implications discussed below are bounded by this scope.

Results from our study indicate that the alignment between ratings reported by students and external raters is the highest among the three types of ratings, and that student-reported instructional practices have more predictive power than the other two for student mathematics achievement on the post-test taken after the quadratic equations unit. These results suggest that student-reported mathematics teaching practices are a better source of data for evaluating mathematics instructional quality. In the future, program designers might need to give more weight to student-reported instructional practices and provide students with training before using the instrument to help students discern various dimensions of teaching and therefore improve the validity and reliability of such data (Fauth et al., 2019, 2020; Tsai et al., 2022). Additionally, more studies can be conducted to evaluate whether the same result can be found in other grade levels and subject areas such as science or language arts education.

Furthermore, the result that classroom discourse in student-reported instructional practices emerged as the most significant factor contributing to student achievement suggests that in mathematics teaching, teachers should prioritize the development of a supportive learning environment and positive classroom norms to foster strong teacher-student and student-student relationships. When students feel safe and have a positive rapport with their teachers and peers, they are more likely to actively participate in class discussions, ask questions, and share their thoughts and ideas related to the concepts and skills they are learning (Nathan & Knuth, 2003). This engagement can enhance their understanding and ultimately contribute to improved mathematics achievement. Classroom discourse has gained much support from the mathematics education reform initiatives in China (Ministry of Education of the People's Republic of China, 2011, 2022) as well as in the West, especially in the United States (Council of Chief State School Officers, 2010; National Council of Teachers of Mathematics, 2000, 2014), and has also been endorsed by numerous studies (e.g., Nathan & Knuth, 2003; Zhao et al., 2016). More effort should be devoted to analyzing the nuances of classroom discourse, with the aim of enhancing its effectiveness in facilitating the optimal learning and teaching of school mathematics.

Lastly, GTI's design is unique in that it incorporates three different types of measures from teachers, students, and external raters to evaluate instructional practices (Opfer, 2020). Given that only teachers and students responded to the same types of survey questions in GTI, efforts should be made to better align the perspectives of teachers, students, and external observers on what constitutes effective instructional practices; thus, new waves of GTI and other large-scale studies might consider designing the same instrument that can be modified to be used by teachers, students, and external raters in their rating of classroom instructional practices, which would potentially better facilitate the comparison of the validity and reliability of the three types of measures, ultimately clarifying the inconsistencies and discrepancies found in existing studies.

Conclusion

Using the Shanghai data from the GTI study, this study first compared the conceptual components in the instructional practices reported by teachers and their students. Subsequently, it examined the level of alignment among the instructional practices reported by the three sources. Lastly, it investigated the predictive powers of these three types of ratings for student mathematics achievement. It was found that the same four conceptual components were identified in the instructional practices reported by teachers and their students, with the cognitive activation factor in teacher-reported data and the classroom discourse factor in student-reported data as the most significant ones respectively in predicting student post-test scores. Furthermore, when comparing the three ratings, we found the alignment between ratings reported by students and external raters to be the highest. Notably, student ratings of their mathematics teachers’ instructional practices displayed the highest predictive power for students’ post-test scores. These findings from our study provide crucial empirical evidence that highlights the importance of cognitive activation and classroom discourse in mathematics instruction. We urge researchers, practitioners, and policy makers to pay close attention to the instructional practices reported by students, as they can serve as a valuable and reliable source of data for evaluating the quality of mathematics instruction.

Footnotes

Acknowledgements

The authors would like to extend their sincere appreciation to the anonymous reviewers for their insightful comments, and to Jeanine Raush, Ed.D., for her professional assistance in proofreading the draft of the manuscript.

Contributorship

Qiang Cheng conceptualized and designed the study with inputs from Shaoan Zhang and Jinkun Shen. Jinkun Shen and Qiang Cheng contributed to the data analysis and result reporting. The first draft of the manuscript was written by Qiang Cheng. Shaoan Zhang and Jinkun Shen provided comments on previous versions of the manuscript.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.