Abstract

The National Institutes of Health (NIH) supported the Rapid Acceleration of Diagnostics-Underserved Populations (RADx-UP) program, which aims to ensure that all Americans have access to COVID-19 testing and promotes health equity, especially in underserved communities. Under RADx-UP, there are two pilot programs – Community Collaboration Grants (C2G) and Rapid Research Pilot Program (RP2) – that fund projects to conduct community-engaged outreach and research to address COVID-19 testing disparities. As such, we sought to assess projects’ health equity impacts within their priority populations. Our evaluation team used cognitive interviews with C2G and RP2 project representatives to revise health equity metrics within an existing Health Impact Assessment (HIA) tool to measure health equity impacts within these community-engaged research projects. During interviews, participants indicated that we needed to improve the clarity and readability of key terms and phrases related to health equity, and they provided suggestions for how to tailor metrics to community partners. In this paper, we highlight our process of adapting the original metrics and share lessons learned that other evaluators may apply to their work. Our project highlights the importance of cognitive interviewing as a critical methodology to tailor an existing pilot-tested HIA to a community-based audience; however, it also sheds light on the difficulties of measuring health equity within community-engaged research initiatives, and we offer recommendations for future work.

Introduction

Cognitive interviewing is a useful method for researchers and evaluators to assess whether survey instruments are being interpreted correctly by the intended audience. 1 Research demonstrates that items and abstract terms are often subject to misinterpretation by the intended audience, even when they seem clear to researchers. 1 This is especially true for researchers attempting to assess health equity within large research initiatives in the area of public health. We sought to evaluate the health equity impacts of a community-engaged research (CEnR) initiative, the National Institutes of Health (NIH)-supported program, Rapid Acceleration of Diagnostics-Underserved Populations (RADx-UP). To measure the health equity impacts of this initiative, we identified an existing Health Impact Assessment (HIA), 2 and utilized cognitive interviews to measure how RADx-UP community partners were interpreting health equity-related items within the HIA. In this paper, we detail our process of utilizing cognitive interviews to adapt existing health equity measures, and share lessons learned for other evaluators to consider.

First, it is important to contextualize the RADx-UP program, which was established in 2020 to ensure that all Americans have access to COVID-19 testing and to promote health equity, especially in underserved and COVID-19 vulnerable communities. 3 Under RADx-UP, there are two pilot programs that provide funding to projects conducting CEnR to address COVID-19 testing disparities: the Community Collaboration Grant (C2G) program and the Rapid Research Pilot Program (RP2). The C2G program aimed to improve capacity for COVID-19 testing in vulnerable and underserved populations, as defined by the NIH. 4 Similarly, RP2 aimed to increase the reach, access, acceptance, uptake, and sustainment of Food and Drug Administration (FDA)-approved COVID-19 diagnostics among vulnerable populations in geographically underserved areas. 5 Both C2G and RP2 projects implemented CEnR using a collaborative approach to engage community members, organizational representatives, and researchers throughout their work. 6

Therefore, CEnR, conducted through projects like C2G and RP2, has the potential to greatly impact health equity, and evaluators must figure out ways of assessing health outcomes and evaluating progress toward closing health gaps between advantaged and disadvantaged populations.7,8 By focusing on health equity, evaluators can frame findings in a positive light, rather than highlighting existing inequalities and disadvantages, 7 and share how research initiatives contribute to progress. However, scholars have documented the ambiguity in the definitions of health equity, 9 which means measuring health equity is also a challenge, one exacerbated by limited methods to document best practices and strategies for doing so.8–11

Not only is health equity difficult to measure, but few tools, if any, take into consideration the nuanced ways CEnR impacts health equity. Nonetheless, HIAs have emerged as a tool for public health practitioners, researchers, and evaluators to assess the health impacts of a program, policy, project, or plan and describe potential distributions among populations.10,12–14 While equity is considered a core tenet of HIAs, there is a need for more guidance and practical resources on how to address equity in the HIA process.2,10 In this study, we detail how we utilized cognitive interviews to adapt an existing HIA tool for RADx-UP pilot projects' community partners. 2

About the Original HIA Tool

The original HIA tool 1 that we adapted was created and piloted by the Society of Practitioners of Health Impact Assessment (SOPHIA) Equity Working Group in 2014. The HIA tool was created for HIA practitioners and evaluators to measure progress toward equity. The authors of the original tool indicated that the tool and its metrics could be used by practitioners and evaluators for various purposes, including employing the metrics as a self-reflective exercise, comparing several HIAs using a subset of the metrics, or planning approaches to measuring equity. As such, we sought to adapt the tool into a survey to measure C2G and RP2 projects’ health equity-related outcomes.

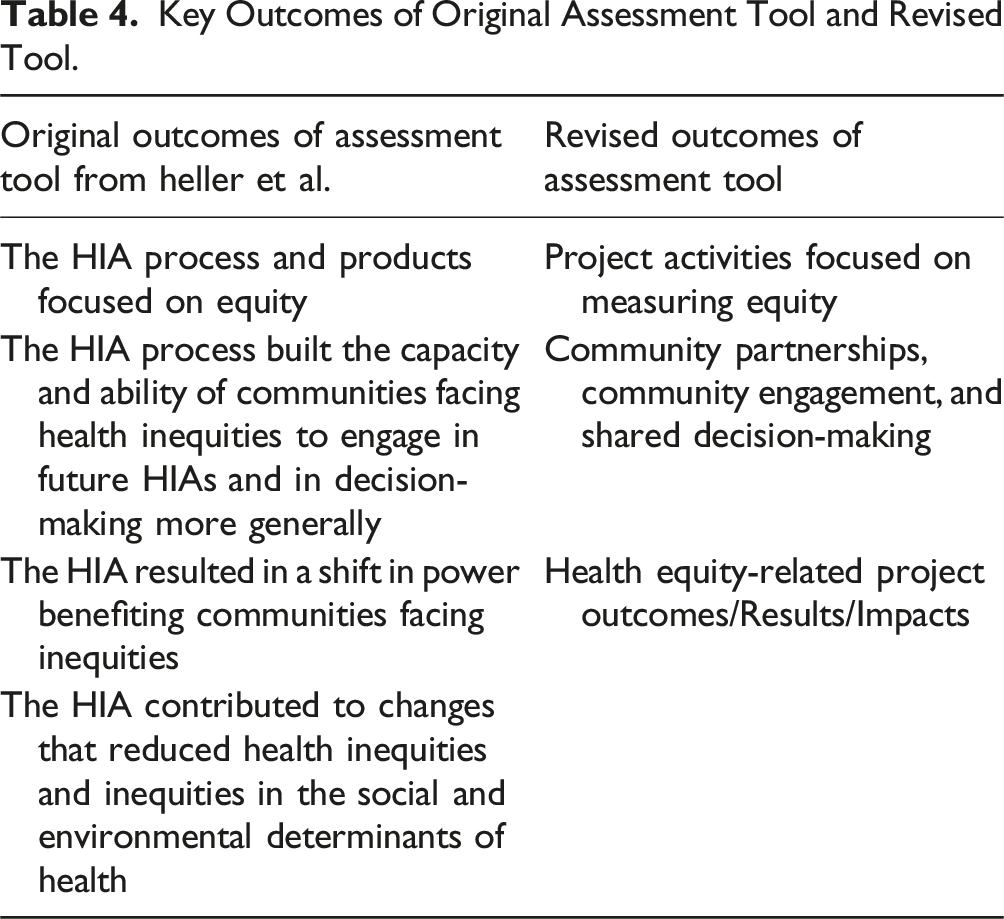

The HIA tool has 23 metrics of health equity that are organized into four outcome areas: (1) the HIA process and products focus on equity; (2) the HIA process built the capacity and ability of communities facing health inequities to engage in future HIAs and in decision-making more generally; (3) the HIA resulted in a shift in power benefiting communities facing inequities; and (4) the HIA contributed to changes that reduced health inequities and inequities in the social and environmental determinants of health. 2 SOPHIA suggests that the final two outcomes are achieved when HIAs successfully achieve the first two. Beyond the metrics, the tool also includes data collection suggestions for practitioners and evaluators, as well as measurement scales for each metric.

Current Study

In this study, we adapted the original tool developed by the SOPHIA Equity Working Group for community partners and transformed the HIA tool into a revised survey format. We utilized cognitive interviews to assess C2G and RP2 community partners’ interpretation of the original tool’s four outcome areas, 23 metrics, and various measurement scales. After the interviews, we revised the outcomes, metrics, and scales and transformed the original tool into a survey. In this paper, we describe how we utilized cognitive interviews as a method to adapt the existing HIA metrics to fit the target RADx-UP program population, and we provide an overview of the findings from cognitive interviews, highlighting key revisions made to the original HIA tool. We conclude with a reflection on the challenges of measuring the health equity impacts of individual projects and entire programs and on the usefulness of cognitive interviews for revising existing HIAs.

Methods

Design and Rationale

We selected a mixed methods approach to adapt and revise the original HIA evaluation tool as outlined by Heller et al. 2 We utilized both qualitative and quantitative data collection and analysis methods, recognizing that each approach offers unique insights into the challenges of measuring health equity in the context of CEnR. First, we copied the HIA evaluation outcomes, metrics, and measurement scales into a basic document and retained all the original language from the tool. We shared this document with participants in real time over Zoom during cognitive interviews to assess C2G and RP2 participants’ understanding of health equity concepts, the original HIA tool’s language, and their perspectives on the HIA’s applicability to their projects. Prior research has documented how cognitive interviewing can be a powerful technique to help survey developers understand respondents’ interpretation of and thought processes while engaging with the survey.1,14

Second, we revised the HIA tool’s outcomes, metrics, and measurement scales based on cognitive interview findings. As we analyzed the cognitive interviews, we discovered opportunities to identify and address flaws in the HIA design or misinterpretation of metrics or scales. Previous research finds that this process can enhance a survey’s reliability and validity.14,15

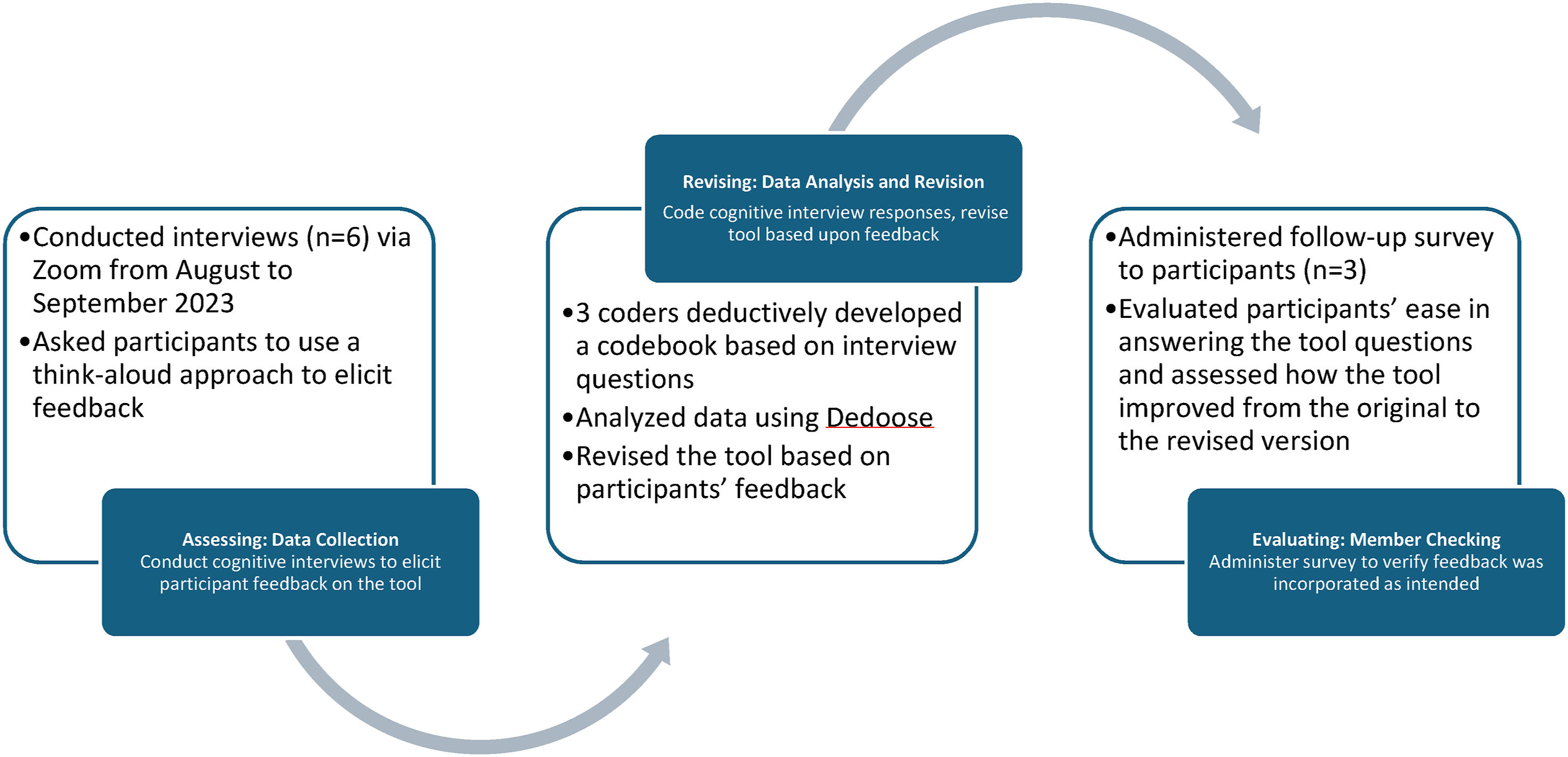

Third, after we revised the HIA tool’s outcomes, metrics, and scales, we transformed the tool into a revised survey format. We then asked participants to re-evaluate the updated tool through this new survey. We sent participants the revised tool via Qualtrics and asked them to review the revised items and answer questions about the usability, relevance, and ease of completing the new survey. We did this as a form of member checking, a process used to determine the validity of data by actively involving participants in the research process to check the interpretation of qualitative results,16,17 and, in this case, how successfully we implemented the survey revisions utilizing the qualitative data. Figure 1 details our mixed methods approach to assess, revise, and evaluate our adaptation of the original tool. In this paper, we highlight our findings from analyzing both the cognitive interview and survey data, and we present our findings in alignment with our methods – assessing the original HIA; revising the original HIA; and evaluating the revised HIA.

Assessing, revising, and evaluating process to adapt the impact assessment tool.

Sample and Recruitment

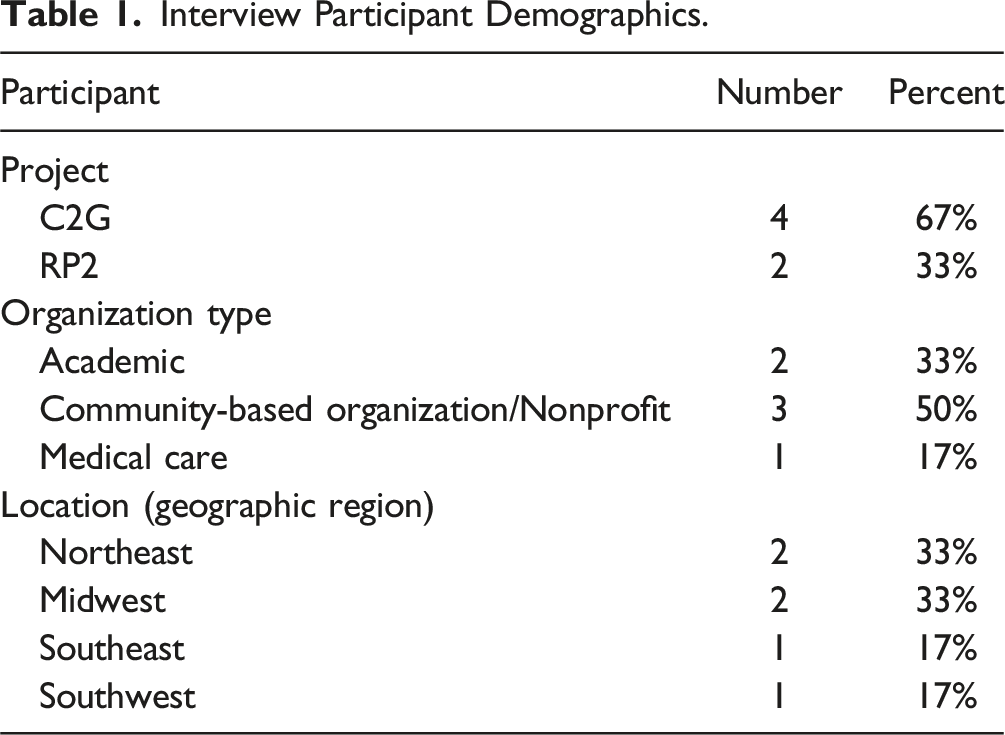

To ensure representation from both RADx-UP programs (C2G and RP2) and to maximize insights gained through cognitive interviewing, we employed a purposive sampling strategy.18,19 Previous research indicates that the sample size for cognitive interviews varies based on several factors, including the purpose of the interview, how long it takes to reach saturation, and economic and time-related factors.19–22 Because the goal of cognitive interviewing is to improve a survey questionnaire’s quality, research suggests that a small sample size is sufficient to produce informative observations about the survey quality.21,23,24 With this in mind, we recruited six participants, recognizing that a smaller sample size could provide valuable insights and improve the tool’s items.

Interview Participant Demographics.

Cognitive Interview Data Collection and Analysis

From August to September 2023, we conducted cognitive interviews via Zoom. The interviews lasted about one hour and were recorded and transcribed. One member of the team facilitated the interview while another member took notes using a structured note-taking template. We developed a semi-structured interview guide to assess participants’ interpretation of the original HIA metrics. 2 During interviews, we shared a document with the original HIA tool’s outcomes, metrics, and measurement scales with participants on the Zoom screen, and we used a ‘think-aloud’ approach, prompting participants to verbalize their thought processes as they interacted with each outcome, metric, and scale.1,15 Probing questions included asking participants to explain what the metric is asking using their own words, to indicate if any words or phrases were confusing or difficult to understand and why, and to describe in what ways a question was difficult to answer given the scale provided. This technique allowed us to gather rich, qualitative data on comprehension, clarity, structure, and perceived difficulty. A team of three coders deductively developed a codebook based on the interview questions. See Appendix A for our codebook. Then, we analyzed data using Dedoose, 26 a web-based qualitative analysis software, and converted the original HIA tool into a revised survey based on participants’ feedback.

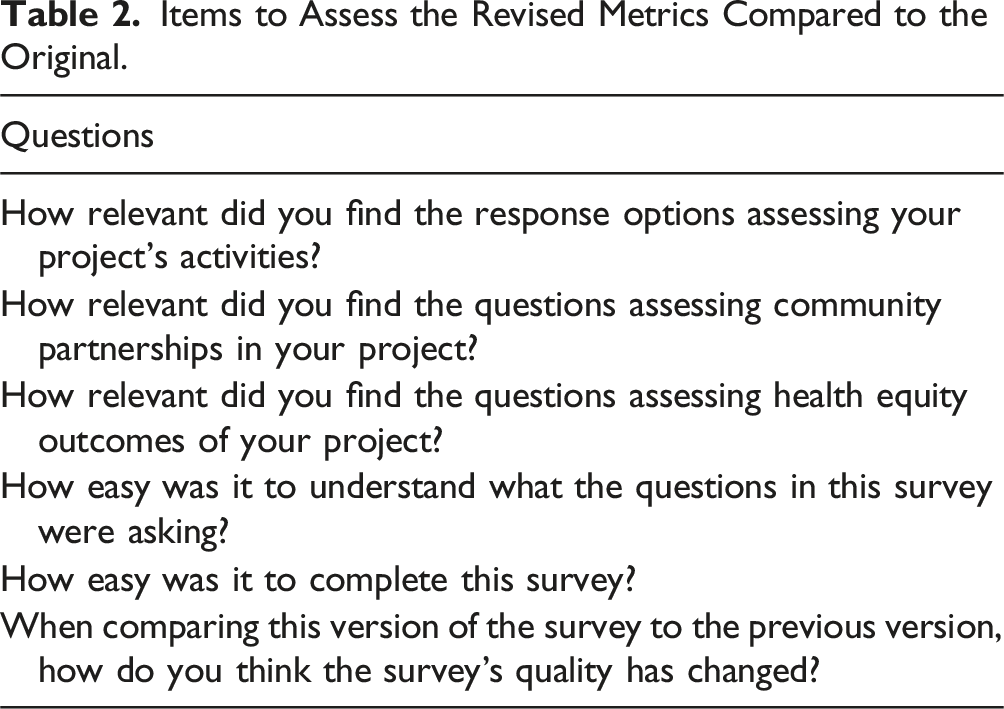

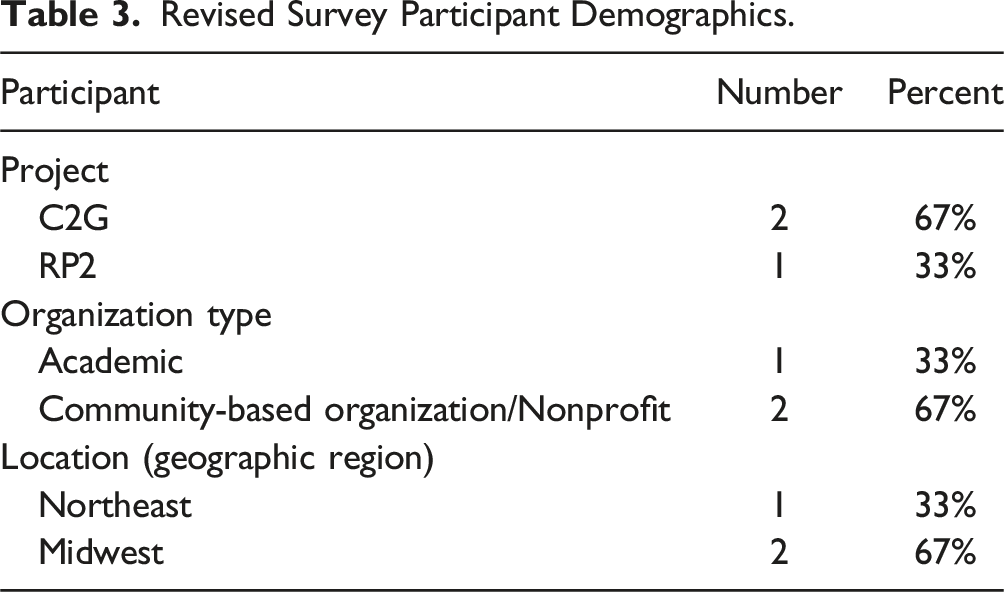

Revised Survey Data Collection and Analysis

Items to Assess the Revised Metrics Compared to the Original.

Revised Survey Participant Demographics.

Findings

Assessing the Original Tool – Cognitive Interview Findings

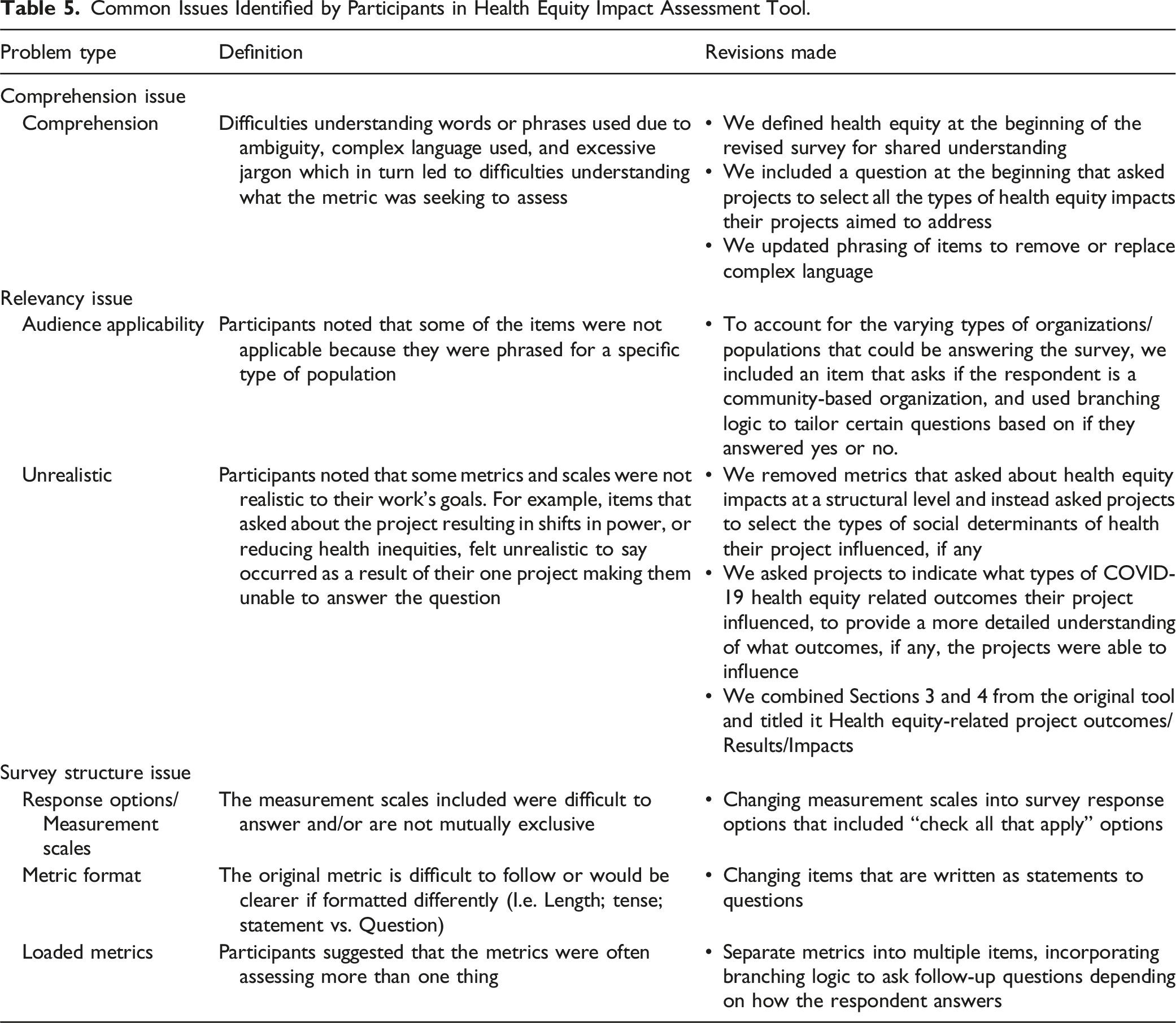

We asked each participant to read through each outcome, metric, and measurement scale as they were originally written in the HIA during facilitated Zoom discussions. The cognitive interviews revealed that the original HIA tool was not easily applicable to the work that C2G and RP2 projects were doing, and that health equity language held different meanings for different participants. Participants highlighted two key challenges with the original tool: (1) comprehension barriers – many struggled to understand some of the metrics, the language around health equity, and their underlying concepts; and (2) relevance gaps – participants found it difficult to connect metrics related to power and reduced inequities to their specific projects. This section explores these two themes in greater detail, examining participants’ feedback on the tool’s limitations in capturing their experiences and perspectives on health equity.

Health Equity Language and Related Survey Items Were Difficult to Comprehend

Participants indicated that they had difficulty with certain words or phrases throughout the tool and with dissecting long, complex metrics related to health equity. Both the language around health equity and the structure of the metrics and measurement scales were difficult for participants to comprehend, which prevented participants from providing reliable answers about how their projects impacted health equity.

As participants talked through how they were interpreting metrics, they articulated that certain words and phrases used throughout the tool (institution, community partners, social health outcomes, equity impact, and adverse impacts) were difficult to understand or could hold various meanings. For example, one item asked participants if their research included follow-up items for adverse impacts; interviewees indicated they were not sure what was meant by adverse impacts: “‘Adverse impacts’ makes me think like – oh, gosh! We did something terrible to offend somebody! Or our survey is like causing someone a challenge. That, to me, seems more extreme. But I don't think that's what you're asking... But adverse impacts might be interpreted differently by different people. So, clarifying exactly what that means, because adverse impacts could be like is there something negative happening to this population because of? Or is it we didn't reach as many people as we planned?” (R1).

Thus, this participant indicated that an adverse impact could be interpreted differently by different people, which poses a problem in collecting reliable data to understand how projects accounted for adverse project impacts, and how that influenced equitable project outcomes.

Most notably, the term “equity impact” itself was not clear to participants. While reading a metric that asked if the project plan included clear goals to monitor health equity impacts, a participant asked, “what’s an equity impact?” (C1). Without a clear understanding of an equity impact, the participant had a difficult time determining how best to answer the question, and the interviewer and participant had to negotiate a shared understanding for the participant to answer the question.

Some of the metrics and measurement scales assessing health equity were also long and complex, which impacted participants’ comprehension. For instance, one participant had difficulty answering the following item: “Distribution of health and equity impacts across the target population were analyzed (e.g. existing conditions, impacts on specific populations predicted) to address inequities.” The participant thought aloud: “Distribution of health inequity impacts across the target population were analyzed, existing conditions, impacts on specific populations predicted. Oh, man, that's a lot of words... Oh, let's see...Distribution of health inequities, impacts across target population were analyzed to address inequities... I don't know what I'm answering, this one is throwing me off. I can't. I don't know how to apply these answers to what I just read... I don't even I don't know what we're talking about anymore. I think I'm gonna have to pass on this one” (C1).

Although the metric aimed to assess if equity impacts were analyzed across projects’ priority populations, participants could not easily understand what the question was asking or the measurement scale, and thus, were not able to answer the question on how they analyzed health equity impacts across populations.

Finally, participants indicated that questions included too much jargon overall: “I don’t know who the target for these for this survey is, but it’s not easy to read and the questions [are] too wordy, too many big words” (C4). Therefore, participants suggested simplifying the tool to increase readability and response rates: “The simpler you make it for people to just respond and click through the more responses you get, which is great. And then also, the more likely it is that you’re going to get actually usable data because everyone will understand what’s being asked of them” (R1).

Overall, participants were not able to answer most of the original survey questions easily or quickly, which impacted their ability to complete the survey and provide reliable answers related to health equity impacts. We recognize that participants’ difficulty answering metrics was due in part to the fact that the original HIA tool was not written in a survey format, but these interview insights allowed us to apply participants’ feedback to our revised survey.

Survey Sections That Assessed Health Equity at a Structural Level Were Not Applicable to Individual Projects’ Work

Participants also had a difficult time relating metrics in two outcome sections of the original tool, “shifts in power” and “reduced inequities,” to their projects’ work. They felt these outcome categories are embedded within steep power hierarchies and structural inequalities that their projects alone could not improve.

For example, one metric asked if projects led to more responsive, transparent, and inclusive institutions; a participant read this aloud and noted that this was not a realistic goal or result of their project: “As a result of your project's work, institutions are more transparent, inclusive, responsive and collaborative with communities facing inequities and community organizations that serve these communities. Again, the question is asking for things that I don't think are possible... I just don't think it's realistic that these things will happen as the result of one project” (C4).

Similarly, participants felt that improvements in health outcomes beyond COVID-19 may not have been possible to measure from their individual projects. For example, one item read, “In addition to your project’s work on COVID-19 health outcomes, has the project influenced physical, mental and social health outcomes within the community?” The response options included: (1) No change in other health outcomes; (2) Communities facing inequities experience improvements in additional health outcomes; (3) Communities facing inequities experience improvements in additional health outcomes and minimize health disparities; and (4) Data not available yet. When thinking aloud, one participant said: “I don't feel quite confident that we say, hey, a physical, mental, and social health outcome have been definitely improved [from] our project. But I certainly can see there are many overlaps or work that we've done with the community members that are involved in improving the physical, mental, and social health for the community members. So that's my answer, and I don't really see [an option] that fits for my answer” (R2).

Although all participants’ work sought to improve health outcomes, they did not feel it was appropriate for them to say whether their C2G or RP2 projects led directly to any shifts in power or reduced inequities beyond COVID-19. This finding suggests that metrics assessing health equity at a structural level were not applicable to individual projects’ activities.

Revising the Original Impact Tool

Based on interview findings, we revised the tool’s outcomes, metrics, and measurement scales into a revised survey format. The changes that we made to the original tool are detailed below, and the revised survey is included in Appendix B.

Revising Outcomes

Key Outcomes of Original Assessment Tool and Revised Tool.

Revising Metrics and Measurement Scales

Common Issues Identified by Participants in Health Equity Impact Assessment Tool.

To address comprehension issues, we updated phrasing to remove excessive jargon and remove or replace overly complex terms so that the language used was easy to understand. We also included definitions of terms such as ‘health equity’ and ‘community capacity’ to ensure a shared understanding among individuals.

Given participant feedback regarding audience applicability, we included an item that asked if the respondent is from a community-based organization, and used branching logic to tailor certain questions based on whether they answered yes or no. To address feedback regarding unrealistic questions, specifically challenges related to assessing health equity impacts on a structural level, we simplified relevant questions to better fit project activities. For example, rather than asking about shifts in power and reduced inequities, we asked projects if they influenced COVID-19 health equity-related outcomes as well as social determinants of health, utilizing ‘check all that apply’ to allow projects to select what item(s) their project had influenced.

Survey structure changes included updating the structure and format of the tool. The metrics of the original tool were written as statements, rather than questions, so we revised all the metrics to be written in a question format. Beyond that, we added branching logic to avoid stacked questions; both updates were intended to present information in an easier-to-read format. We also added more ‘select all that apply’ options to the measurement scales to give participants more flexibility in their responses.
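The branching logic described above can be illustrated with a minimal Python sketch. It is not the Qualtrics configuration used in the study: the question identifiers, the wording of the follow-up items, and the `visible_questions` helper are all hypothetical and serve only to show how a yes/no screening item can route respondents to tailored questions while a ‘check all that apply’ item stays visible to everyone.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass
class Question:
    qid: str
    text: str
    multi_select: bool = False  # True for 'check all that apply' items
    # Display rule (question id, required answer); None means always shown.
    show_if: Optional[Tuple[str, str]] = None


# Hypothetical items; only the screening question and the use of
# 'check all that apply' are taken from the description in the text.
SURVEY: List[Question] = [
    Question("is_cbo", "Are you from a community-based organization?"),
    Question("cbo_role", "How was your organization involved in the project?",
             show_if=("is_cbo", "yes")),
    Question("partner_role", "How did your project engage community partners?",
             show_if=("is_cbo", "no")),
    Question("sdoh", "Which social determinants of health did your project "
             "influence? (check all that apply)", multi_select=True),
]


def visible_questions(survey: List[Question], answers: Dict[str, str]) -> List[str]:
    """Return the ids of questions a respondent should see given answers so far."""
    shown = []
    for q in survey:
        if q.show_if is None:
            shown.append(q.qid)
        else:
            depends_on, required = q.show_if
            if answers.get(depends_on) == required:
                shown.append(q.qid)
    return shown


print(visible_questions(SURVEY, {"is_cbo": "yes"}))
```

Splitting the two follow-up items behind a display rule, rather than combining them into one stacked question, is the design choice the sketch is meant to make visible.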

Evaluating the Revised Survey – Member Checking

After revising the tool and formatting it into a new survey, we asked participants to re-evaluate the tool to ensure that we improved its quality and applicability to C2G and RP2 projects. We asked all six participants to take the revised survey and answer six questions to assess whether and how the survey improved from the original to the revised version. Refer to Table 1 to review our evaluation questions. Three of the six participants completed the revised survey. These respondents indicated that the survey improved; two of the three indicated it was “much better” and one indicated it was “somewhat better.” All three respondents indicated that the survey was “very easy” to complete. Finally, respondents found the revised sections of the survey either “very relevant” or “somewhat relevant” to their C2G and RP2 projects. To review the survey results, refer to Appendix C.
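The arithmetic behind these member-checking figures can be sketched in a few lines of Python. The counts come from the paragraph above; the variable names are illustrative, and the per-respondent list is simply a reconstruction of the reported tallies, not the underlying data file.

```python
from collections import Counter

# Figures reported in the text: six participants invited, three responded.
invited = 6
improvement_ratings = ["much better", "much better", "somewhat better"]

response_rate = len(improvement_ratings) / invited
rating_counts = Counter(improvement_ratings)

print(f"Response rate: {response_rate:.0%}")
for rating, n in rating_counts.items():
    print(f"{rating}: {n} of {len(improvement_ratings)} respondents")
```

With only three respondents, the counts are more informative than percentages, which is why the sketch reports both.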

Discussion

As previous literature demonstrates, cognitive interviews reveal that participants may interpret survey metrics differently than intended by the researchers who develop them.15,27,28 This study was useful in illustrating the use of cognitive interviews to elicit in-depth information from partners on how they think about health equity. Cognitive interviews revealed that community partners often struggled with language and concepts related to health equity, particularly in the context of structural factors and systemic inequalities. This suggests the need for evaluators to develop more accessible and relatable equity measures that align with the lived experiences of communities. The revised HIA tool was generally well received by community partners, who reported improved clarity, relevance, and usability. However, further refinement may be necessary to ensure that all relevant community partners can readily understand and use the tool.

Findings from cognitive interviews echoed prior research findings that health equity can be difficult to measure and operationalize.8,10 Health equity language, at least the language used in the HIA tool described by Heller et al., 2 can have multiple meanings and interpretations. If the language is not clear to those conducting health equity-related research, then the data collected by evaluators trying to understand health equity impacts may not be reliable or valid. Therefore, it is imperative for evaluators who are assessing the health equity outcomes of programs or projects to measure and operationalize health equity in a way that is easily understood by program participants, like RADx-UP community partners.

As such, it may not be realistic to ask program recipients directly about how their research has led to health equity impacts at the structural level. While the RADx-UP pilot projects provided time-limited funding support to projects conducting CEnR to address COVID-19 testing disparities, it may not be feasible for these time-limited grants to also address the structural issues that led to the disparity in COVID-19 risk, exposure, and access to relevant resources. Rather, participants indicated that constructs used to measure equity must be simplified and scaled down to match actual program activities. As Penman-Aguilar et al. 8 suggest, it may be more feasible to measure social determinants of health, which can then be linked to equities or inequities. Although the SOPHIA group suggests that successful outcomes in the first two outcome areas of the original HIA tool can contribute to the outcomes in the last two areas, 2 which take longer to achieve, we found that participants were uncomfortable answering the metrics in the last two outcome areas for their project. As such, we revised the original tool’s outcomes and metrics related to “shifts in power” and “reduced inequities” to measure the types of social determinants of health that C2G and RP2 projects addressed instead. Based on data from these questions, evaluators may be able to make inferences about structural shifts in power and reduced inequities. Nonetheless, the revisions made to the HIA tool demonstrate the importance of incorporating community-informed perspectives in the development of health equity measurement tools. By ensuring that tools are clear, relevant, and sensitive to the cultural nuances of the communities being studied, researchers and evaluators can obtain more accurate and actionable data to inform policy and practice.

Although we found cognitive interviews to be a useful strategy for revising and tailoring the HIA tool, there are some methodological drawbacks that other evaluators may consider. Cognitive interviews can be time and resource intensive. Evaluators must have the time and capacity to conduct and analyze the interviews, revise the original tool, and verify the accuracy of revisions. If evaluations have a strict timeline, cognitive interviews may not be feasible. If time is not a limitation, cognitive interviews are a reliable way to ensure that evaluators are tailoring tools to their audience, and that audience members interpret health equity measures consistently. While member checking is not always necessary, it can ensure that evaluators interpreted cognitive interview results correctly. This study received only a 50% response rate during member checking (three of six participants), which limits our confidence in the revised tool. Had time allowed, an expanded pilot of the survey would have been beneficial to ensure the validity and reliability of the revised tool. Regardless, cognitive interviews are a useful option for adapting existing HIA metrics to evaluators’ target populations and identifying appropriate ways to assess and measure health equity.

Conclusion

This study underscores the crucial need for participatory approaches when developing and adapting health equity measurement tools. Beyond this, involving those who do health equity research, particularly community partners, can lead to more participatory and equitable evaluation research practices. While we were able to adapt the tool to better fit the study population, our findings reiterate the existing challenges around defining and assessing health equity impacts.

One key takeaway from this study is that surveys may not be the best method for measuring nuanced health equity impacts of programs or initiatives. Alternatively, future studies may want to consider qualitative approaches to evaluate and assess health equity impacts. During interviews or focus groups, evaluators and program participants may be able to come to a shared understanding of health equity related concepts and then have more in-depth discussions around how CEnR projects impact health equity in priority populations and underserved communities.

Nonetheless, cognitive interviews were invaluable for assessing how community partners understand and think about health equity, and our findings allowed us to tailor survey metrics to RADx-UP community partners. By actively engaging community members in the development and revision process, researchers can create more accurate, relevant, and accessible measures that truly reflect the complexities of health equity. Therefore, future research should prioritize participatory approaches that involve communities in every stage of the measurement process. This includes ensuring that tools use language that is readily understood, incorporate indicators that are relevant to community priorities, and consider the unique cultural nuances and experiences of the populations being studied. Future studies should continue to explore ways to accurately and effectively measure health equity.

Supplemental Material

Supplemental Material - Adapting a Health Impact Assessment Tool for Community-Engaged Research to Improve Health Equity Measurements for NIH RADx-UP Pilot Projects

Supplemental Material for Adapting a Health Impact Assessment Tool for Community-Engaged Research to Improve Health Equity Measurements for NIH RADx-UP Pilot Projects by Shelly Ann Maras, Rossana Roberts, Caroline Taheri, Bola Bilikis Yusuf, Brittany Choate, Ania Wellere, Tara Carr, Abisola Osinuga Snipes, Leah Frerichs in Community Health Equity Research & Policy.

Footnotes

Acknowledgements

We would like to acknowledge the following groups for their help on this project: the RADx-UP Tracking & Evaluation Team; C2G Administrative Team & Projects; RP2 Administrative Team & Projects; Interview Participants.

Author Contributions

RR, LF, TC, AS, and SM conceived the study and designed the evaluation protocol and tools in consultation. SM, RR, BY, AW, and CT contributed to participant recruitment, data collection, and data analysis. RR, SM, AW, CT, BY, AS, TC, BC, and LF contributed to the interpretation of the analysis and contributed to writing the manuscript drafts. All authors reviewed and revised manuscript drafts and approved the final manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Research reported in this RADx® Underserved Populations (RADx-UP) publication was supported by the National Institutes of Health under Award Number U24MD016258. The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health.

Ethical Considerations

The Institutional Review Board (IRB) of the University of North Carolina at Chapel Hill reviewed the evaluation protocol and determined it to be non-human subjects research (RP2 IRB#: 21-1592; C2G IRB #: 21-1676).

Data Availability Statement

Data supporting this study cannot be made available because participants did not agree to have their data shared.

Supplemental Material

Supplemental material for this article is available online.

