Abstract

Machine interpreting (MI) has become an influential form of computer-assisted interpreting, shaping both interpreting practice and pedagogy. Although prior studies have explored MI’s effects on interpreting processes and outputs, little is known about how directionality operates in MI-assisted consecutive interpreting (CI). This study addresses this gap by examining directionality effects in MI-assisted CI through a mixed-methods design combining product evaluations, self-assessments, and perceived cognitive load measures. Results revealed a consistent directional asymmetry: both professional and self-assessments showed higher holistic performance in the English-to-Chinese direction, with self-evaluations displaying stronger contrasts. NASA-TLX data further indicated significantly higher perceived cognitive demands and frustration when interpreting into English. Post-task questionnaires revealed how participants managed MI-generated visual input and adapted their strategies across directions. This study provides empirical evidence of how directionality interacts with MI support in CI, offering novel insights into the cognitive and performance implications of integrating MI into interpreter training and practice.

Keywords

1. Introduction

The interpreting profession is undergoing a significant technological transformation, conceptualised as the “technological turn in interpreting” (Fantinuoli, 2018b, p. 3). Within this shift, machine interpreting (MI) represents a major game changer, driven by advances in automatic speech recognition (ASR) and other artificial intelligence (AI) technologies (Fantinuoli, 2018b; Fantinuoli & Dastyar, 2022). Originally designed to replace human interpreters (Fantinuoli, 2018b), MI, including both speech-to-text and speech-to-speech real-time translation, can be conceived as an in-process supporting tool and used to “augment professional interpreters” (Fantinuoli, 2023, p. 58). As a process-oriented computer-assisted interpreting (CAI) technology that can offer both source and target language texts, MI holds promise for alleviating interpreters’ cognitive load and enhancing their performance, although its use remains under-explored (Chen & Doherty, 2024). The application of MI during the interpreting process has recently attracted scholarly attention, focusing on its integration into interpreting workflows (Chen & Kruger, 2024a) and the effects of MI’s components, such as ASR, on interpreters’ cognitive processes and performance (e.g., Li & Chmiel, 2024).

The consideration of directionality is driven by the contemporary market requirement of interpreters’ bidirectional interpreting capabilities, including interpreting into B language (non-native language) (Lim, 2005). This challenges some institutional policies that favour interpreting into A language (the native language) based on the conventional belief that interpreters perform better in this direction (Graves et al., 2022). The demand for bidirectional interpreting competence is particularly pronounced in emerging interpreting markets (Donovan, 2004) and for language combinations involving languages “rarely learned by non-native speakers” (Whyatt & Pavlović, 2021, p. 143). Extensive research has demonstrated that directionality, the distinction between interpreting into one’s A language versus B language, significantly influences interpreters’ cognitive processes and performance in traditional interpreting tasks without AI assistance (e.g., Bartlomiejczyk & Gumul, 2024; Chou et al., 2021). However, a lacuna remains in empirical research regarding the impact of directionality on performance and cognitive processes in CAI, particularly MI-assisted consecutive interpreting (MI-assisted CI).

To bridge this gap, the present study explores the effects of directionality on MI-assisted CI by comparing student interpreters’ output quality and their subjective responses collected through post-experiment questionnaires in both Chinese-to-English and English-to-Chinese directions. Rather than offering a blanket assessment of MI as either beneficial or distracting, this study adopts an exploratory lens to examine the role of interpreting direction in shaping MI-assisted CI performance and perceived cognitive processes. The findings are expected to contribute to a more nuanced theoretical understanding of directionality effects in technology-enhanced interpreting and offer practical implications for interpreter training in the era of human–AI collaboration.

2. Literature review

2.1 MI as a computer-assisted interpreting tool

Fantinuoli (2018a) categorised CAI tools into two main types: process-oriented and setting-oriented. Process-oriented CAI tools refer to various programmes that are able to support interpreters “in at least one of the different sub-processes of interpreting” (p. 155). The tools could include “non-dedicated,” such as search engines and other tools for generic purposes, and “dedicated” tools, such as terminology management systems and other tools specifically designed to improve interpreting performance (Chen & Doherty, 2024, p. 406), encompassing the stages of preparation, in-interpreting, and post-interpreting. According to Fantinuoli (2023, p. 58), MI refers to “the process of converting a spoken text from one language into written or spoken text in another language in real-time,” and both forms of speech-to-speech and speech-to-text translation could be in-process CAI tools. Though not specifically designed to support interpreters, such a form of MI has “considerable potential for changing the way interpreting is practiced” (Pöchhacker, 2022, p. 197). Meanwhile, recent scholarship emphasises that the core of MI lies in its translation component. Liu and Liang (2024, p. 3) argue that text-to-text translation is the essential module of MI, as it is “where translational activity really happens,” while speech recognition and synthesis merely serve as technological interfaces. Similarly, Chmiel and Spinolo (2025) conceptualise MI post-editing (MIPE) as the real-time use of machine-translated subtitles as a source for interpreter reformulation. Therefore, the present study builds on this understanding to analyse caption-based ASR–MT integration, viewing it as a text-output instantiation of MI that directly supports the interpreter’s interpreting process.

One major component of MI is ASR, which transcribes “spoken languages into written text” in real time (Fantinuoli, 2023, p. 56). Several empirical studies have examined the effects of ASR on interpreters’ performance and cognitive processes. The pioneering research by Defrancq and Fantinuoli (2021) demonstrated that simultaneous interpreters utilising ASR-based support tools achieved significantly enhanced numerical accuracy in their renditions. Defrancq et al. (2024) reanalysed the dataset, and their comparative analysis of ASR-supported and non-ASR interpreting yielded similar results in output accuracy and acceptability. More recently, the eye-tracking study by Yuan and Wang (2024) revealed that ASR-supported simultaneous interpreting (SI) showed better overall performance but higher cognitive load. The investigation by Li and Chmiel (2024) showed that ASR implementation could significantly enhance SI output accuracy, with performance benefits becoming particularly pronounced at precision thresholds of 90% and above.

While ASR-based “monolingual live captioning” (Zhang & Xie, 2025) has been relatively well-studied, MI represents a promising yet underexplored tool in CAI (Chen & Doherty, 2024). As a CAI tool with full automation, MI warrants particular attention due to its “significant untapped potential to support and enhance” interpreters (Fantinuoli, 2025, p. 2). Chen and Kruger (2023) introduced a novel methodological framework through their two-step computer-assisted consecutive interpreting (CACI) mode between Chinese and English, which incorporated iFlytek ASR and Baidu Translate machine translation (MT), with resemblance to MI. Their empirical study revealed a significant effect of directionality, with overall quality scores being higher in the into-A direction than in the into-B direction across both conventional CI and CACI. However, the comparative analysis by Ünlü and Doğan (2024), which utilised Sight-Terp, a self-built CAI tool that synthesises ASR and Speech Translation capabilities and delivers both source and target texts in parallel form, revealed a nuanced trade-off: while Sight-Terp resulted in enhanced accuracy, it simultaneously led to increased disfluency and longer interpreting duration in the into-A language CI. This divergence highlights the complexity of technological integration in interpreting contexts.

In addition, Su and Li (2024) examined Tencent’s commercial MI system in Chinese-to-English SI and based on eye-tracking and accuracy data, found that MI reduced cognitive load and improved performance. However, the benefits of MI in SI are not consistently observed. Zhang and Xie (2025) compared monolingual live captioning (YouTube ASR) and bilingual captioning (iFlytek MI) in English-to-Chinese SI among student interpreters and reported that both captioning conditions lowered interpreting quality relative to SI without captions. Moreover, MI-assisted SI yielded poorer accuracy, fluency, delivery, and overall performance than monolingual ASR.

Therefore, the shift from monolingual ASR-based captioning to bilingual MI-based captioning is not merely technological but also cognitive. Whereas ASR provides interpreters with real-time transcription of the source speech in the same language, MI introduces an additional translated layer in the target language. This bilingual captioning format may alter interpreters’ processing dynamics by changing the distribution of comprehension and reformulation effort. Instead of relying solely on auditory input, interpreters may engage in cross-modal verification, post-editing, or monitoring of machine-generated target text (e.g., Chernovaty, 2024). Such processes potentially introduce new cognitive demands, such as managing interference between inputs and aligning source and target representations. Therefore, extending findings from ASR to MI warrants dedicated investigation, as bilingual captions introduce additional processing demands that may reshape interpreters’ cognitive load and strategies.

However, MI’s application remains “a less chartered territory” (Chen & Doherty, 2024, p. 407), and the “added and freed cognitive capacities” it potentially affords still demand closer empirical investigation (Fantinuoli, 2023, p. 64). The inconsistent findings across prior studies may be conditioned by contextual factors, most notably, interpreting direction. For instance, the contrasting results reported in Su and Li (2024) and Zhang and Xie (2025) raise the possibility that MI assistance interacts differently with Chinese–English and English–Chinese interpreting. This possibility is theoretically plausible given the well-documented asymmetry between into-A and into-B interpreting, which involves different cognitive and linguistic demands (e.g., Chmiel, 2016; Kajzer-Wietrzny & Chmiel, 2023). If MI introduces additional visual input and monitoring requirements, these demands may interact with direction-specific processing constraints rather than affecting both directions uniformly. The following section therefore reviews research on directionality to establish the theoretical basis for examining how MI support may differentially influence into-A and into-B processing.

2.2 Directionality in interpreting

Directionality, or the direction of transfer between interpreters’ A and B languages (International Association of Conference Interpreters, 2023, p. 5), is a foundational concept in interpreting studies due to its implications for cognitive load and processing efficiency (Gile, 2005). While extensively studied in traditional interpreting contexts, its significance becomes even more pronounced in technology-assisted interpreting settings, where additional cognitive demands introduced by tools may interact differently with into-A and into-B interpreting.

Research on directionality in interpreting without technological assistance has primarily examined three dimensions: cognitive load, linguistic features, and quality of output. Findings consistently point to asymmetries between interpreting directions, though not always in the same dimension. For instance, into-A interpreting has been shown to impose a heavier cognitive load during note production but facilitate faster lexical retrieval (Chen, 2020; Chmiel, 2016), while into-B interpreting is often associated with higher demands during reformulation and more complex syntactic structures (Kajzer-Wietrzny & Chmiel, 2023). Studies also suggest directional effects on delivery and fluency, though evidence is mixed (Adler, 2024; Chou et al., 2021). Such mixed findings indicate that directionality effects may be conditioned by factors such as interpreting mode, interpreter expertise, and language pair. Nevertheless, this body of research confirms directionality as a key determinant of interpreter performance, suggesting that its influence may extend in distinctive ways to technology-assisted interpreting settings.

However, relatively few studies have examined how directionality interacts with CAI tools. Recent investigations indicate that MI may alter established directional asymmetries. Chen and Kruger (2024a) found that MI’s effectiveness on students’ CI performance was conditioned by directionality, with better performance observed in the into-B direction. A later study by Chen and Kruger (2024b) also revealed different cognitive resource allocation patterns in the two directions of CI. In the same Chinese–English language pair, Su (2025) found contrasting patterns in MI-assisted SI: higher accuracy in the into-B direction but lower cognitive load in the into-A direction. These studies highlight the complexity of combining directionality with technological support, yet the evidence remains fragmented.

Despite the inherently complex nature of CI, which involves sub-tasks such as note-taking, memory retention, and message encoding, empirical investigations into the interaction between directionality and MI-assisted CI remain scarce. As Chen and Kruger (2024a) have noted, the impact of interpreting direction warrants closer examination. Addressing this, the present study treats directionality as a key analytical variable rather than a mere moderator. Moreover, to enhance ecological validity, we adopt an authentic commercial MI system that provides bilingual captioning support, alongside a standardised CI workflow that mirrors prevalent pedagogical practices. This design minimises interference from unfamiliar protocols and reflects current teaching realities. Specifically, the research questions (RQs) are as follows:

RQ1. How does directionality affect the quality of student interpreters’ MI-assisted consecutive interpreting, as evaluated by professional interpreters?

RQ2. How do student interpreters evaluate both the product and process of their MI-assisted consecutive interpreting across language directions?

3. Research methods

This study employed a within-subject experimental design to investigate the influence of directionality on the effect of MI assistance on the CI process and performance. Participants’ MI-assisted CI process and performance were assessed in two language directions: Chinese-to-English (C–E) and English-to-Chinese (E–C). The interpreting performance was evaluated through professional assessments conducted by three experienced interpreters, student interpreters’ self-assessments, cognitive load ratings, and post-task written reflections. The inclusion of both professional and student perspectives aimed to combine objective evaluations of interpreting quality from professional raters with subjective self-assessments reflecting students’ perceptions of performance and MI support.

3.1 Participants

Twenty-one student interpreters were recruited through convenience sampling from a highly competitive Master’s programme in Simultaneous Interpreting at a renowned university in China. Participants included 7 males and 14 females, with a mean age of 23.29 years (SD = 1.55). All participants were native speakers of Chinese (L1) and used English as their second language (L2). Their English proficiency was comparable, as reflected in their average International English Language Testing System (IELTS) score of 7.2 (SD = 0.28), indicating a high level of language competence. Each participant had completed at least one semester of intensive CI training, ensuring a comparable level of interpreting competence for the experimental tasks. This homogeneity in training and language proficiency helped minimise individual variation, allowing the analysis to focus on directionality and the impact of MI support rather than proficiency-related factors. Although still developing professional expertise, student interpreters with similar backgrounds have been shown to engage effectively with technological tools such as live captioning (Yuan & Wang, 2024). Their inclusion therefore provides valuable insight into the early-stage integration of MI support in interpreter training and practice.

3.2 Materials

The experiment materials included two source speeches, one in Chinese and the other in English. Both speeches addressed the broader technological domain but with different focuses. The Chinese speech was extracted from Xiaomi CEO Jun Lei’s Speech at the 2024 Press Conference of Xiaomi Mix Fold,¹ focusing on mobile telecommunications. The English speech was sourced from NVIDIA CEO Jensen Huang’s Keynote at COMPUTEX 2024,² addressing computing systems. Although the two speeches differed in specific subtopics (mobile telecommunications vs. computing systems), both belonged to the same overarching technological domain and were delivered in comparable corporate keynote settings by technology CEOs. To mitigate potential topic-related variability, preparatory measures were implemented (see section 3.5 “Procedure”), ensuring that participants were adequately familiarised with the content before each task.

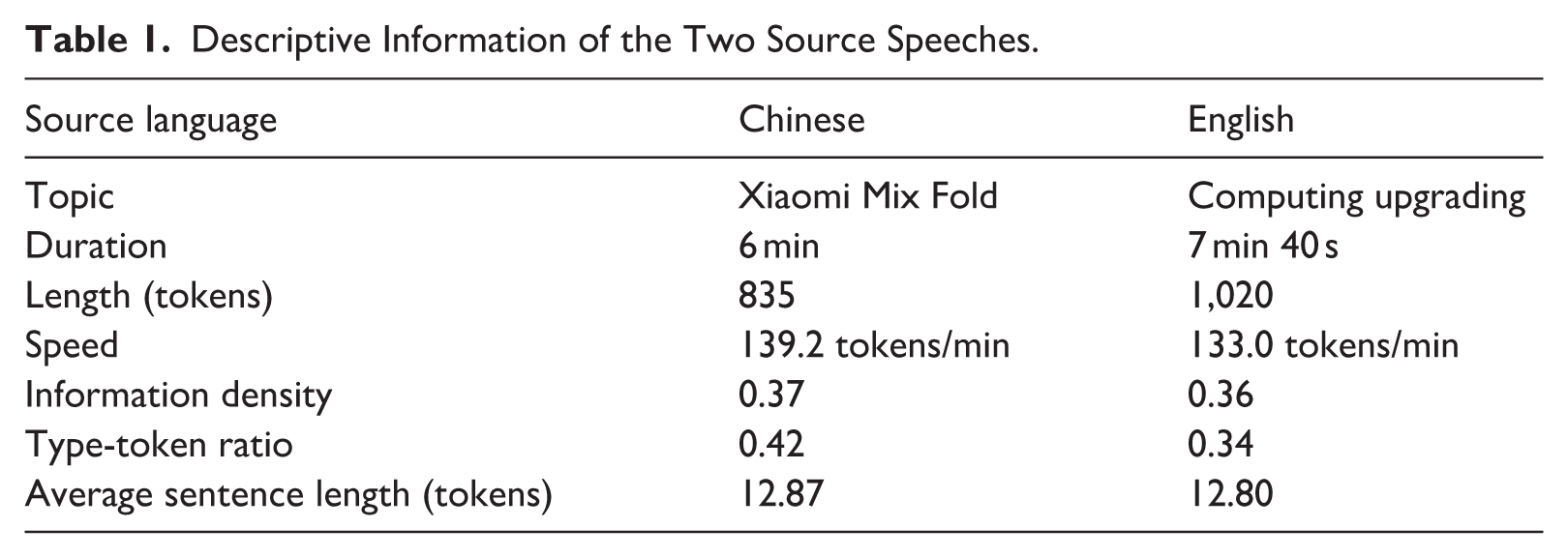

Both the English and Chinese texts were tokenised using Stanford NLP, which segmented the texts into tokens. Subsequently, speech duration, length, speed, and multiple linguistic parameters, including information density, type-token ratio, and average sentence length, were calculated to ensure the comparability of the two source speeches (see Table 1).

Table 1. Descriptive Information of the Two Source Speeches.
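As a rough illustration of two of the lexical measures named above, the following Python sketch computes a type-token ratio and an average sentence length from text that has already been tokenised. The function names and the toy sentences are hypothetical. The study's tokenisation was carried out with Stanford NLP, and its exact operational definitions (for example, whether tokens were lowercased before counting types) are not stated, so this is only one plausible reading.

```python
def type_token_ratio(tokens):
    """Number of distinct tokens divided by the total number of tokens."""
    if not tokens:
        raise ValueError("token list is empty")
    return len(set(tokens)) / len(tokens)


def mean_sentence_length(sentences):
    """Average number of tokens per sentence."""
    if not sentences:
        raise ValueError("sentence list is empty")
    return sum(len(sentence) for sentence in sentences) / len(sentences)


# Hypothetical, already-tokenised sentences (not taken from the source speeches).
sentences = [
    ["the", "chip", "powers", "the", "phone"],
    ["the", "phone", "folds"],
]
tokens = [token for sentence in sentences for token in sentence]

print(type_token_ratio(tokens))         # 5 types / 8 tokens = 0.625
print(mean_sentence_length(sentences))  # (5 + 3) / 2 = 4.0
```

Because type-token ratio falls as texts get longer, comparisons of this kind are most meaningful when the two speeches are of similar length.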

Regarding the visual modality, both speeches were delivered in a typical keynote format, primarily featuring close-up shots of the speaker, with occasional presentation slides or product images displayed on the screen. While differences in slide content and layout were inevitable, the overall visual structure and modality were comparable across tasks and remained constant for all participants within each condition.

3.3 MI system

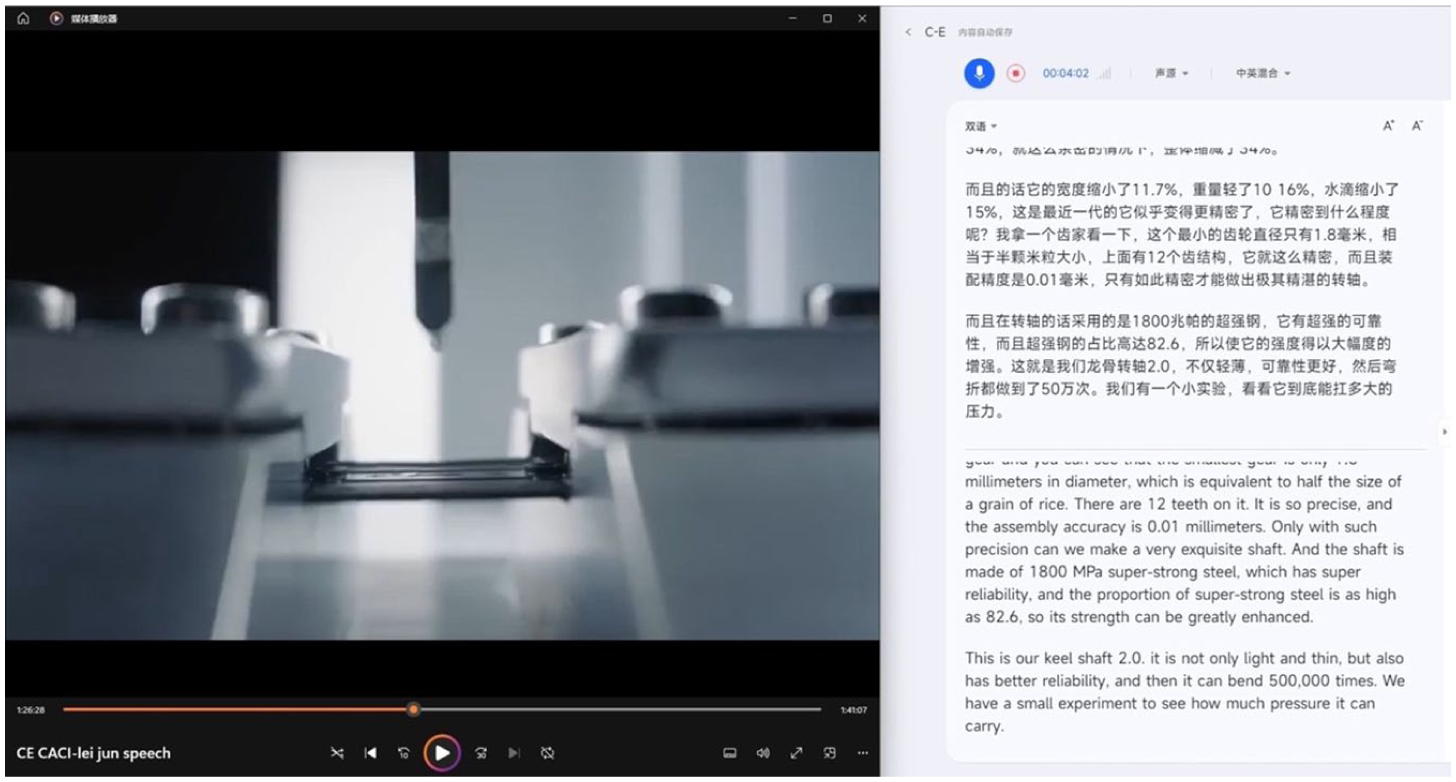

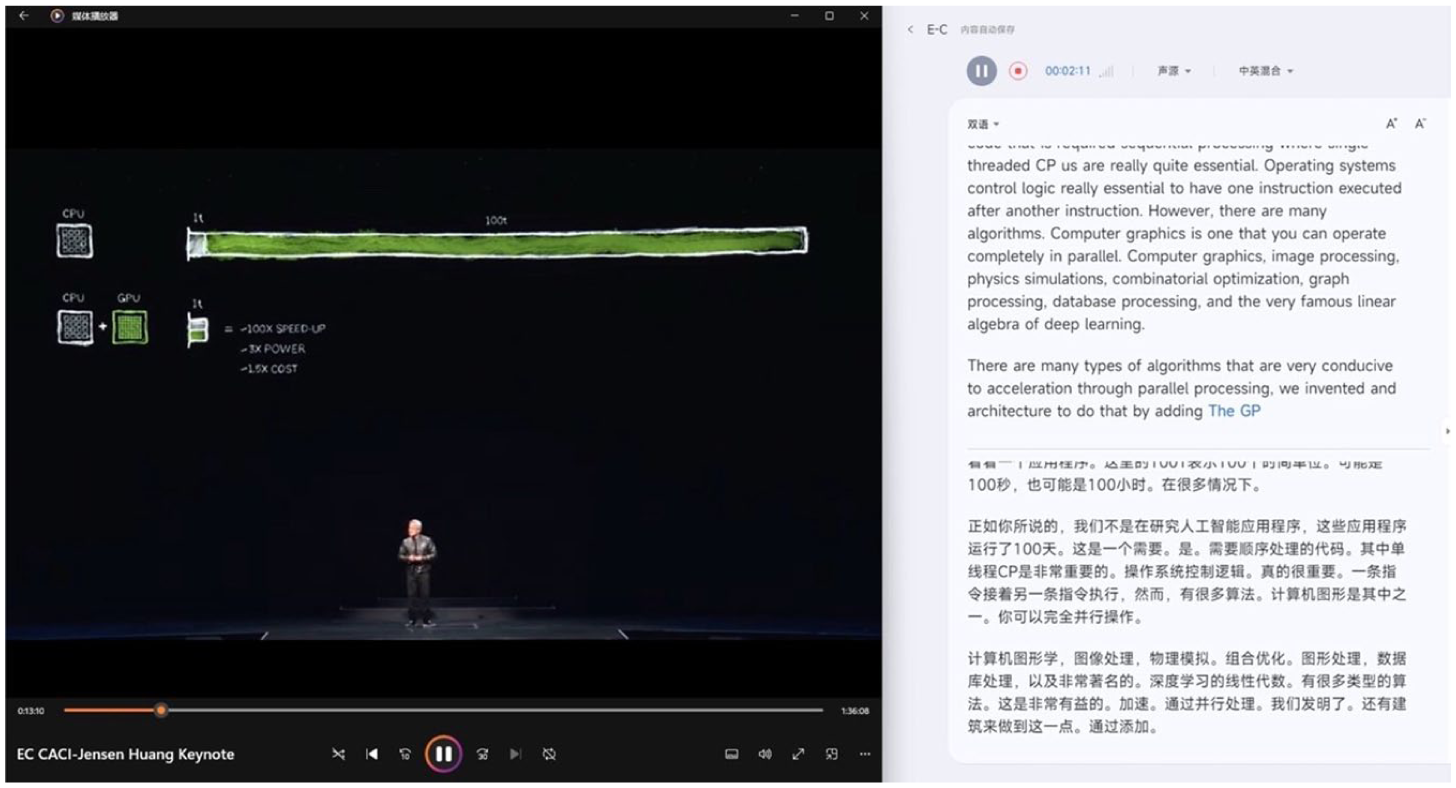

iFlytek’s MI system served as the in-process CAI tool in this study, providing a continuous, simultaneous display of source and target texts within a unified interface during the speaker’s delivery. It was selected for its strong natural language processing capabilities and its marketed speech-recognition accuracy of 97.5%.³ The interface displayed the source text in the upper-right section and the target text in the lower-left section (see Figures 1 and 2). The subtitles appeared dynamically in real time, following the speaker’s rhythm and pacing, thus resembling a live MI-assisted CI setting. The original speech videos were also displayed on the left side of the screen throughout the tasks, providing participants with full access to the visual context, including the speaker’s image, gestures, and presentation materials. This design aimed to replicate a realistic interpreting environment where both auditory and visual cues are available.

Figure 1. iFlytek Machine Interpreting (C–E Direction).

Figure 2. iFlytek Machine Interpreting (E–C Direction).

The self-reported sense of comfort with the system was 5.00 out of 10 (SD = 1.90) in the C–E direction and 6.19 out of 10 (SD = 2.01) in the E–C direction. The self-reported reliance on the system was 6.48 out of 10 (SD = 2.36) in the C–E direction and 6.14 out of 10 (SD = 2.53) in the E–C direction. These results indicate that student interpreters actively engaged with the captions, though comfort and reliance varied descriptively across directions. Wilcoxon signed-rank tests, however, revealed no statistically significant differences between the two directions in either comfort level (W = 52.500, p = .087, r = −.478) or reliance (W = 88.500, p = .582, r = −.205). A Pearson chi-square test of independence indicated that caption type preference did not differ significantly across interpreting directions (χ²[2] = 1.82, p = .403). All expected cell counts exceeded 5, confirming that the assumptions of the test were met.
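The chi-square result can be sanity-checked by hand, because a chi-square statistic with 2 degrees of freedom has the closed-form upper-tail probability exp(−χ²/2). The plain-Python sketch below recomputes that p value and shows how expected cell counts are obtained for a 2 × 3 table (direction by caption preference). The counts in `observed` are invented purely for illustration and are not the study's data; the function names are likewise hypothetical.

```python
import math


def chi_square_independence(table):
    """Pearson chi-square statistic, degrees of freedom, and expected counts
    for a contingency table given as a list of rows."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    expected = [[rt * ct / n for ct in col_totals] for rt in row_totals]
    stat = sum(
        (obs - exp) ** 2 / exp
        for obs_row, exp_row in zip(table, expected)
        for obs, exp in zip(obs_row, exp_row)
    )
    dof = (len(table) - 1) * (len(col_totals) - 1)
    return stat, dof, expected


def chi2_upper_tail_df2(stat):
    """Upper-tail p value of a chi-square statistic; valid for 2 df only."""
    return math.exp(-stat / 2)


# Reported statistic from the text: chi-square(2) = 1.82.
print(round(chi2_upper_tail_df2(1.82), 3))  # 0.403

# Hypothetical counts: rows = C-E, E-C; columns = source, target, both.
observed = [[6, 7, 8], [8, 6, 7]]
stat, dof, expected = chi_square_independence(observed)
print(dof)       # 2
print(expected)  # [[7.0, 6.5, 7.5], [7.0, 6.5, 7.5]]
```

The first printed value agrees with the reported p = .403, and the expected-count table illustrates the kind of check behind the statement that all expected cells exceeded 5.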

3.4 Questionnaire

The post-experiment data collection employed a multi-component questionnaire via wenjuanxing, an online survey platform.⁴ The questionnaire, presented bilingually in Chinese and English, comprised four main sections designed to capture various dimensions of participants’ experience with MI-assisted CI. In addition to closed-ended items, open-ended questions were included to allow participants to elaborate on their experiences and management of MI support (see Appendix).

The first section consisted of Likert-type-scale items assessing student interpreters’ perceived comfort level with MI, their reliance on the system, and task difficulty evaluations. The second section elicited participants’ preferences regarding caption language usage: source language, target language, or both. The third section required participants to rate their performance on three key interpreting parameters: fluency of delivery, information completeness, and target language quality. The fourth section incorporated the NASA Task Load Index (NASA-TLX), a validated instrument for measuring subjective workload in interpreting studies (Chen, 2017; Li & Chmiel, 2024). Given the cognitive focus of interpreting tasks, this section mainly evaluated participants’ perceived cognitive effort, task demands, frustration levels, and holistic performance during MI-assisted CI tasks. Physical demand was excluded due to the short duration of the tasks involved in the current study, and temporal demand was not examined separately as task duration and speech rate were controlled. Raw TLX scores were used for analysis. As the NASA-TLX included a holistic self-rating of performance, we did not administer a separate overall quality assessment in the third section.

3.5 Procedure

The experiment was conducted in a specialised interpreting laboratory equipped with professional audio-video devices and an integrated teaching system across two sequential sessions from December 2024 to January 2025. Twenty-one student interpreters with comparable proficiency levels participated in the study, with 10 in the first session and 11 in the second.

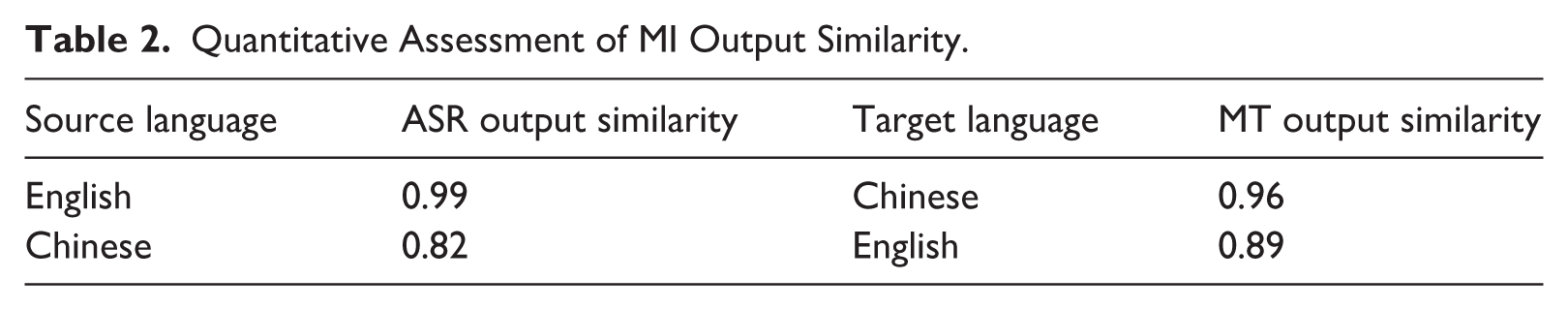

To validate the consistency of MI outputs between the two sessions, we conducted a semantic similarity analysis using a fine-tuned Sentence-BERT model for English and a fine-tuned Chinese-BERT model for Chinese (Devlin et al., 2019). Cosine similarity was computed to quantify the semantic alignment between corresponding outputs, comparing the ASR outputs shown to the first cohort with those shown to the second, and likewise for the MT outputs. A cosine similarity of 1 indicates that two embedding vectors point in the same direction (maximal semantic overlap), 0 indicates orthogonal vectors (no similarity), and −1 indicates opposite vectors (complete dissimilarity). Thus, a cosine similarity value closer to 1 reflects a higher degree of semantic similarity (Wang & Ge, 2025), and values above 0.80 are generally considered indicative of high similarity (Herbold, 2023). This approach is widely used in natural language processing and MT evaluation (e.g., Sun & Wang, 2024) because it can capture semantic overlap even when lexical or syntactic differences are present, making it a valid method for assessing the stability of MI outputs. Importantly, this analysis was not intended to assess the quality of the ASR or MT components per se, but rather to ensure that participants across cohorts received semantically comparable MI input. The observed cosine similarity values, ranging from 0.82 to 0.99 (Table 2), indicate high semantic consistency, thereby supporting the comparability of interpreting conditions across the two groups.

Table 2. Quantitative Assessment of MI Output Similarity.
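The cosine similarity measure described above reduces to a short formula once each text has been mapped to an embedding vector: the dot product of the two vectors divided by the product of their lengths. The sketch below implements only that final step in plain Python. The short vectors are toy stand-ins, not real sentence embeddings, and the function name is hypothetical; producing actual embeddings would require the BERT-based models mentioned in the text, which are not reproduced here.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)


# Toy vectors standing in for embeddings of two cohorts' MI outputs.
same_direction = cosine_similarity([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])
orthogonal = cosine_similarity([1.0, 0.0], [0.0, 1.0])
opposite = cosine_similarity([1.0, 0.0], [-1.0, 0.0])

print(round(same_direction, 6), orthogonal, opposite)
```

Because the measure depends only on the angle between vectors, scaling an embedding (as in the first pair) leaves the similarity unchanged.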

Participants performed CI tasks in two language directions, C–E and E–C, with support from the iFlytek MI system. To ensure task preparedness and procedural familiarity, they were provided with glossaries, a 10-min preparation period, and a researcher-led briefing on the speech content and system functionalities. A 5-min trial session preceded each formal task to help participants adapt to the interface and the simultaneous display of source and target texts. The MI system was operated solely by the researchers and projected on each participant’s screen via the integrated teaching system, which ensured identical visual input for all participants and eliminated variability in system handling.

The experiment followed a fixed order, with the E–C task completed first and the C–E task second. CI was delivered in 30- to 40-second segments, and participants were permitted to take notes, in line with standard practice, to maintain ecological validity. During each source segment, machine-generated captions (including both source transcription and translated output) were displayed continuously while the speaker was speaking. Each segment followed a consecutive structure: participants listened to the entire source segment (with captions visible) and took notes if necessary, and target-language production commenced only after the speaker had finished the segment. No temporal overlap between source speech and target production was allowed. A 10-min break was provided between tasks to reduce fatigue and potential carry-over effects. After each task, participants completed a post-experiment questionnaire to capture their immediate perceptions. All MI-assisted CI outputs were audio-recorded for subsequent analysis.

3.6 Professional assessment

3.6.1 Rating criteria

To address the research questions, we adopted a comprehensive assessment framework combining holistic and analytic rating scales. The assessment covered four dimensions: a holistic score reflecting overall interpreting quality and three analytic dimensions, namely information completeness (InfoCom), fluency of delivery (FluDel), and target language quality (TLQual), each targeting a specific aspect of performance. This multidimensional approach provides a balanced evaluation by integrating accuracy- and delivery-related indicators. The operational definitions of these rating criteria follow those of Chen et al. (2022), which provide detailed descriptions.

3.6.2 Raters

Three raters, one male and two females, were selected based on their expertise and qualifications to evaluate CI performances. All raters were certified conference interpreters, having passed the Level 2 China Accreditation Test for Translators and Interpreters (CATTI) Interpreting Exam, a nationally endorsed professional certification examination, and possessing a minimum of 5 years of professional interpreting experience. They also had experience in interpreting assessment and were familiar with the scoring scales used in the present study.

Prior to formal evaluation, the three raters completed a training session to ensure consistency and scoring accuracy. They were provided with detailed holistic and analytic rating scales, along with clarification of all criteria to avoid ambiguity. Raters then received the source transcripts, speech videos, and CI audio recordings and were given sufficient time to familiarise themselves with the materials. Next, they independently assessed the first three CI performances in each direction, followed by a moderation session to establish consensus on scoring standards and enhance inter-rater consistency.

3.7 Data analysis

To evaluate the consistency of the scoring results by professional interpreters, inter-rater reliability was examined using intraclass correlation coefficients (ICCs). ICCs were computed for both the holistic and analytic scores in both directions of C–E and E–C to ascertain the degree of agreement among the raters.
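To make the reliability index concrete, the sketch below computes one common form of the intraclass correlation, ICC(2,1) (two-way random effects, absolute agreement, single rater), from the mean squares of a performances-by-raters score matrix. Which ICC form the study used is not stated, so the choice of ICC(2,1) is an assumption, as are the function name and the toy ratings, which are not the study's data.

```python
def icc_2_1(ratings):
    """ICC(2,1) for a matrix with one row per performance and one column per rater."""
    n = len(ratings)      # number of rated performances
    k = len(ratings[0])   # number of raters
    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(col) / n for col in zip(*ratings)]

    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Hypothetical scores: three raters rank four performances identically,
# but the third rater is consistently one point more lenient.
toy_ratings = [
    [5, 5, 6],
    [4, 4, 5],
    [6, 6, 7],
    [3, 3, 4],
]
icc = icc_2_1(toy_ratings)
print(round(icc, 3))  # 0.833
```

The toy example shows why the form matters: the raters agree perfectly on the ordering, yet the constant leniency offset pulls an absolute-agreement ICC below 1, whereas a consistency-type ICC would not penalise it.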

Due to the paired nature of the data, the small sample size (n = 21), and the lack of normality among the data, the Wilcoxon signed-rank test was conducted to analyse the differences in raters’ assessments as well as participants’ self-reported evaluations between the two directions. Given that 11 separate paired comparisons were performed (four professional assessment dimensions, four self-assessment dimensions, and three NASA-TLX dimensions), p values were adjusted using the Benjamini–Hochberg procedure to control the false discovery rate. All statistical analyses were performed using R statistical software (version 4.4.1).
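The two procedures named in this paragraph can be sketched compactly. The study ran its analyses in R; the plain-Python version below is only an illustration of the underlying arithmetic. It computes the signed-rank sums for paired scores (zero differences dropped, tied absolute differences given average ranks), a matched-pairs rank-biserial correlation as one possible effect size, and Benjamini–Hochberg adjusted p values. The source does not state which effect-size formula produced its r values, so the rank-biserial choice is an assumption, and all data and function names here are hypothetical.

```python
def signed_rank_sums(x, y):
    """Return (W_plus, W_minus) for paired samples x and y."""
    diffs = [a - b for a, b in zip(x, y) if a != b]  # drop zero differences
    order = sorted(range(len(diffs)), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * len(diffs)
    i = 0
    while i < len(order):
        j = i
        # Extend j over a run of tied absolute differences.
        while j + 1 < len(order) and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        average_rank = (i + j) / 2 + 1  # mean of 1-based ranks i+1 .. j+1
        for pos in range(i, j + 1):
            ranks[order[pos]] = average_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    return w_plus, w_minus


def rank_biserial(w_plus, w_minus):
    """Matched-pairs rank-biserial correlation (assumed effect-size definition)."""
    return (w_plus - w_minus) / (w_plus + w_minus)


def benjamini_hochberg(pvals):
    """Benjamini-Hochberg adjusted p values, returned in the input order."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from the largest p value down
        idx = order[rank - 1]
        running_min = min(running_min, pvals[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


# Hypothetical paired ratings for five participants in two conditions.
w_plus, w_minus = signed_rank_sums([6, 5, 7, 4, 6], [5, 5, 4, 6, 4])
print(w_plus, w_minus, rank_biserial(w_plus, w_minus))  # 7.5 2.5 0.5

# Hypothetical raw p values from four comparisons.
adjusted = benjamini_hochberg([0.01, 0.04, 0.03, 0.005])
print([round(p, 3) for p in adjusted])  # [0.02, 0.04, 0.04, 0.02]
```

Turning a signed-rank sum into a p value additionally requires the exact or normal-approximation null distribution, which is omitted here to keep the sketch short.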

4. Results

4.1 Rating reliability in performance assessment

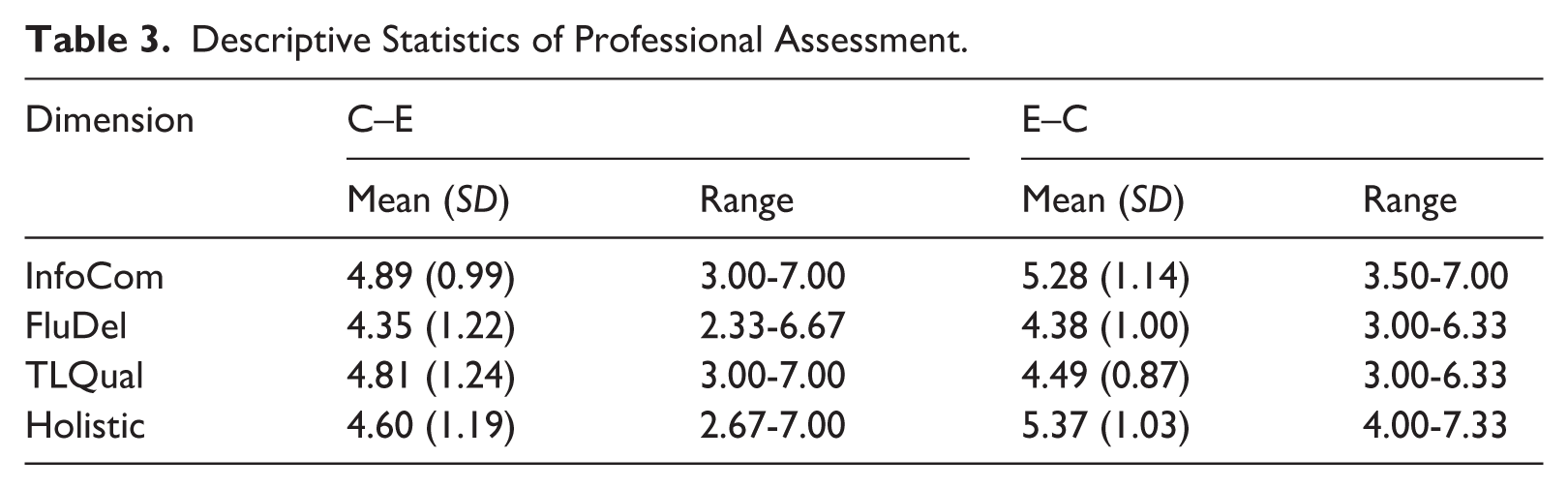

ICC results demonstrated high inter-rater reliability across all dimensions of InfoCom, FluDel, TLQual, and Holistic in both interpreting directions. For the C–E direction, the ICC values indicated excellent inter-rater reliability for all dimensions, with InfoCom (ICC = 0.91, 95% CI [0.80, 0.96], p < .001), FluDel (ICC = 0.88, 95% CI [0.76, 0.95], p < .001), TLQual (ICC = 0.94, 95% CI [0.87, 0.97], p < .001), and Holistic (ICC = 0.93, 95% CI [0.85, 0.97], p < .001). For the E–C direction, the ICC values ranged from 0.89 to 0.96, with InfoCom exhibiting the highest reliability (ICC = 0.96, 95% CI [0.91, 0.98], p < .001), followed by Holistic (ICC = 0.93, 95% CI [0.85, 0.97], p < .001), TLQual (ICC = 0.92, 95% CI [0.83, 0.96], p < .001) and FluDel (ICC = 0.89, 95% CI [0.78, 0.95], p < .001). These results suggest that the ratings provided by professional interpreters were highly consistent across both interpreting directions and dimensions, supporting the reliability of the evaluation framework. Given the high degree of agreement among raters, we averaged their scores across each dimension for subsequent statistical analysis. Table 3 shows the descriptive statistics of the professional assessment.

Table 3. Descriptive Statistics of Professional Assessment.

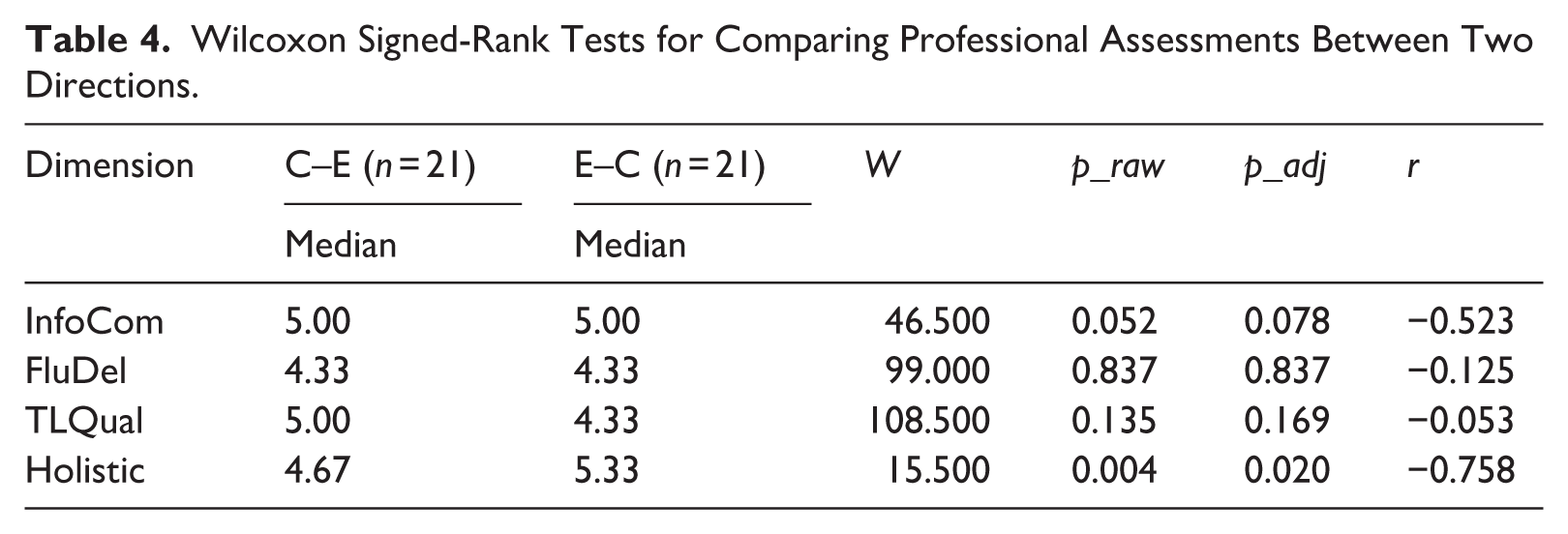

4.2 Professional assessment

Regarding RQ1, which investigated the quality differences in MI-assisted CI between two directions of C–E and E–C from the professional perspective, the E–C direction (Median = 5.33) registered a significantly better performance than the C–E direction (Median = 4.67; W = 15.500, p_adj = .020, r = −.758) in the Holistic dimension (see Table 4 and Figure 3), and the effect size of −0.758 indicated a substantial impact of directionality on MI-assisted CI. This finding suggests that the professionals perceived the overall quality of the E–C MI-assisted CI to be superior to that of the C–E counterpart.

Table 4. Wilcoxon Signed-Rank Tests for Comparing Professional Assessments Between Two Directions.

Figure 3. Quality Differences by Professional Assessment Between Two Directions.

However, no statistically significant differences were found between the two directions in the other three dimensions: InfoCom (Median C–E: 5.00, Median E–C: 5.00, W = 46.500, p_adj = .078, r = −.523), FluDel (Median C–E: 4.33, Median E–C: 4.33, W = 99.000, p_adj = .837, r = −.125), and TLQual (Median C–E: 5.00, Median E–C: 4.33, W = 108.500, p_adj = .169, r = −.053). The InfoCom dimension approached but did not reach statistical significance after multiple-comparison correction (p_adj = .078). Notably, its effect size was large (r = −.523), suggesting a potentially meaningful directional difference in information completeness, with E–C MI-assisted interpreting showing descriptively higher performance. However, given the limited sample size (n = 21), this result should be interpreted cautiously and warrants further investigation with larger samples. In summary, the Wilcoxon signed-rank tests revealed a significant directional asymmetry in holistic quality from the professional raters’ perspective, with E–C MI-assisted CI demonstrating superior holistic performance compared to C–E, whereas no significant differences between the two directions emerged for InfoCom, FluDel, or TLQual.

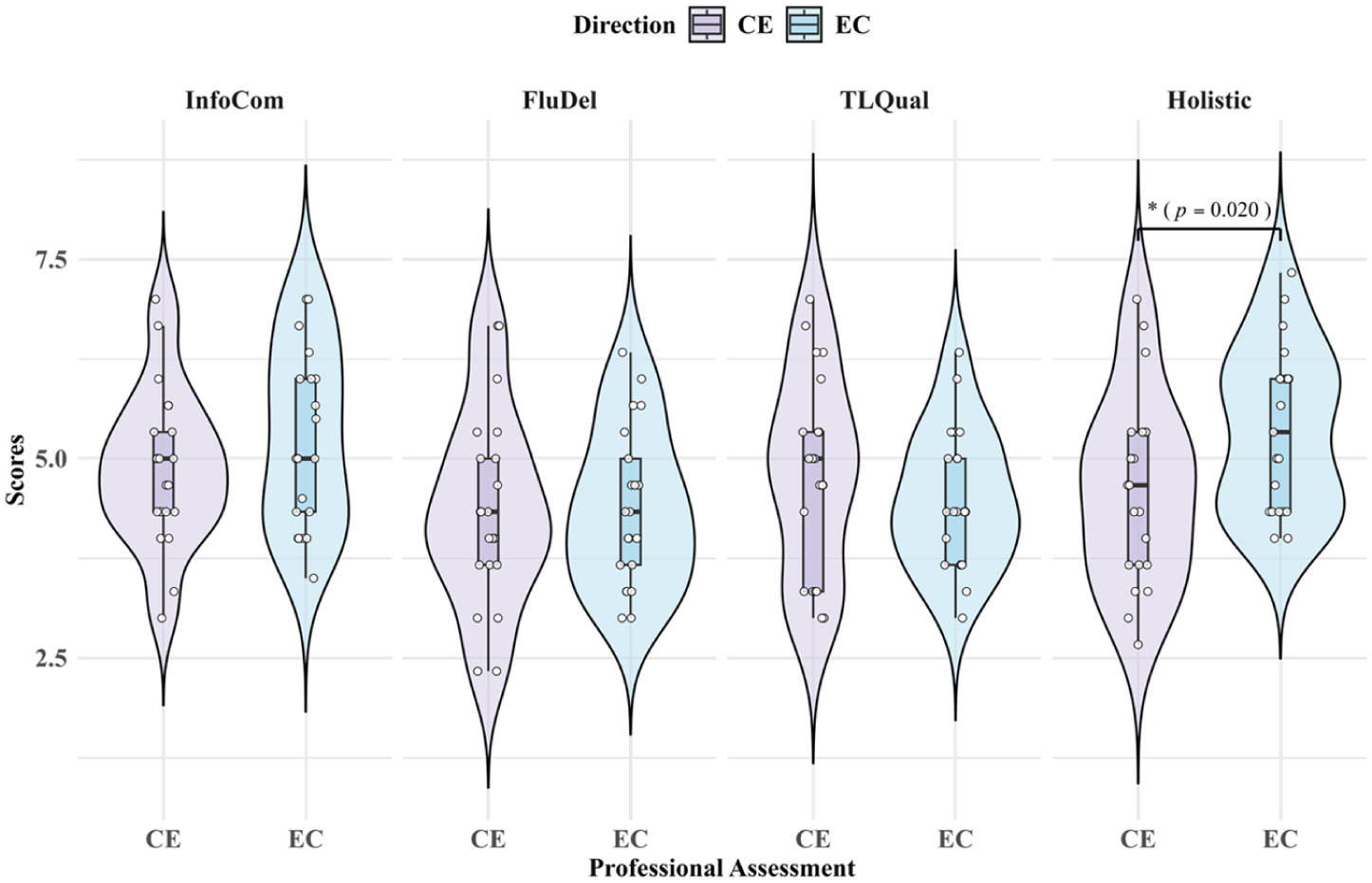

4.3 Self-assessment in quality and process

Table 5 shows the descriptive statistics of self-assessment data.

Table 5. Descriptive Statistics of Self-Assessment.

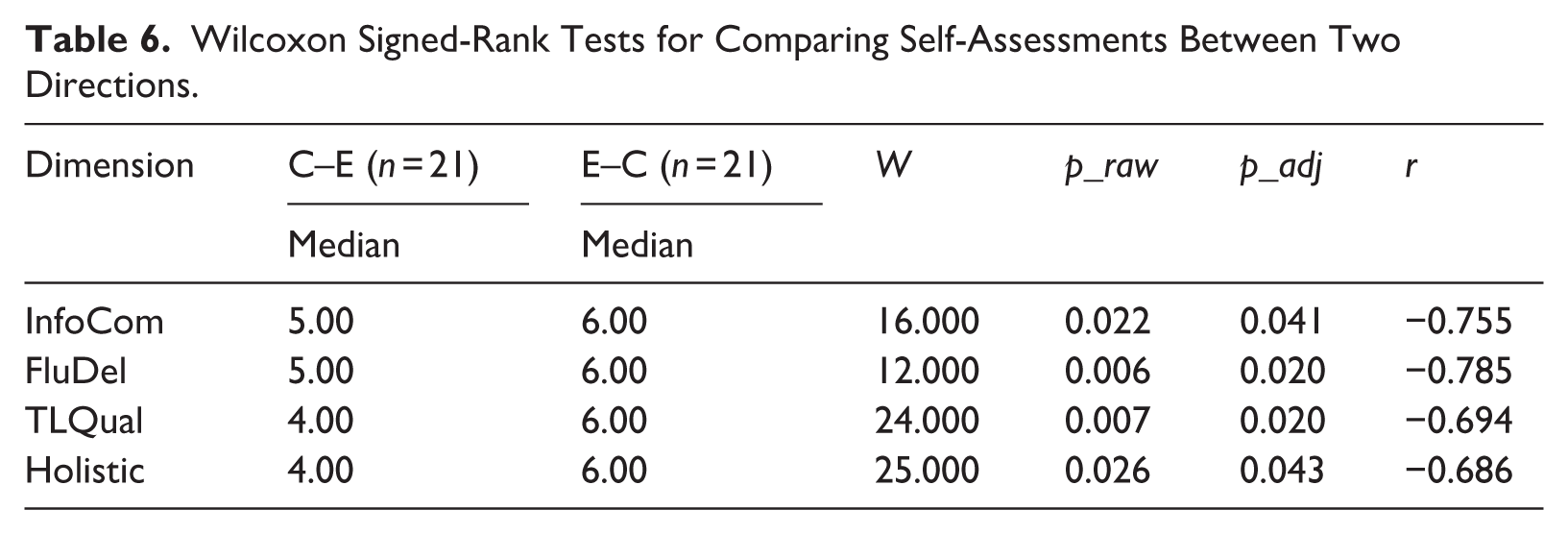

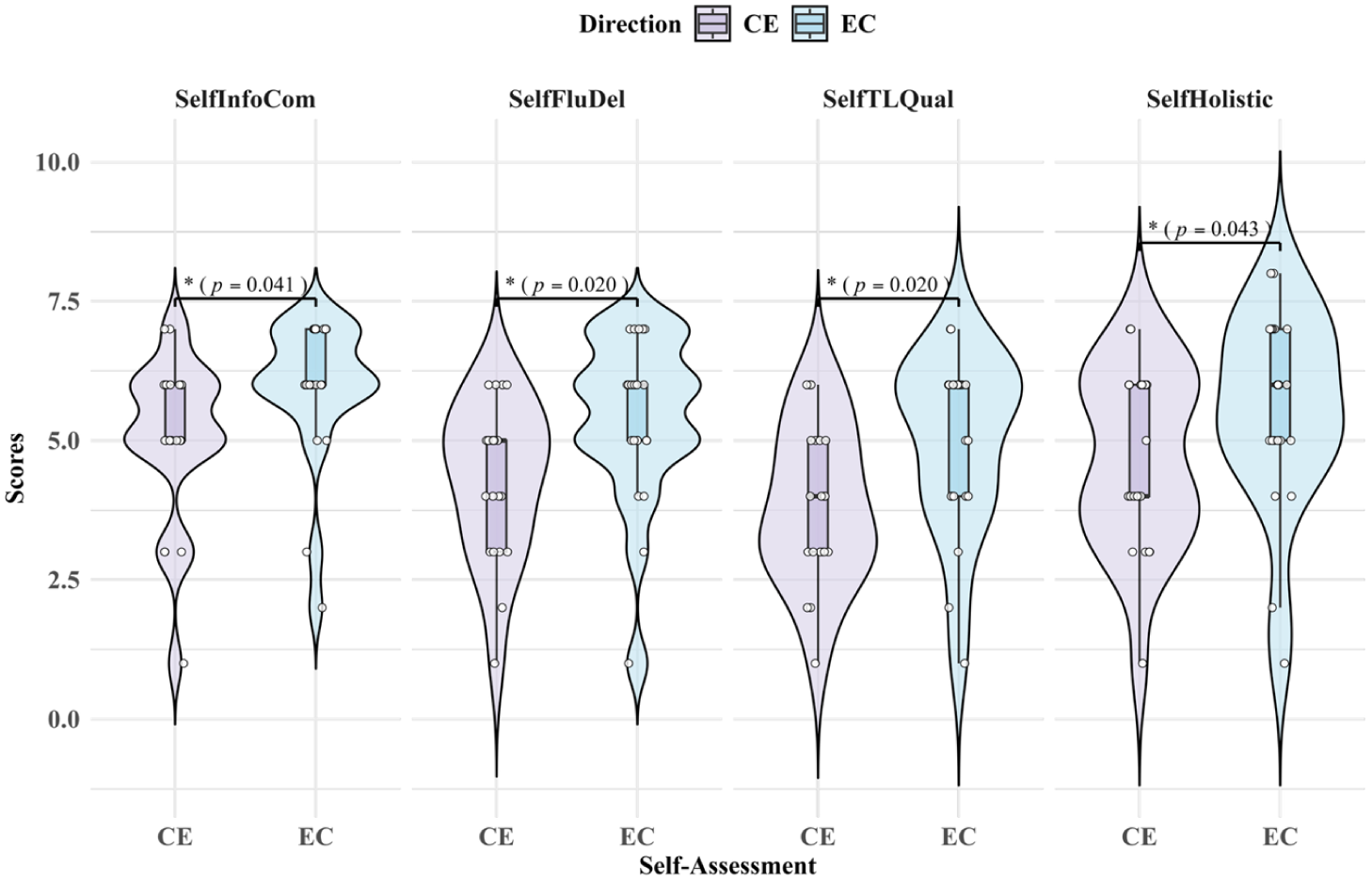

Regarding RQ2, which examined the impact of directionality on the self-perceived quality assessment in MI-assisted CI, the Wilcoxon signed-rank tests revealed significant differences between the C–E and E–C directions across all four dimensions (see Table 6 and Figure 4). The E–C direction consistently received significantly higher quality scores than the C–E direction in InfoCom (Median E–C: 6.00, Median C–E: 5.00, W = 16.000, p_adj = .041, r = −.755), FluDel (Median E–C: 6.00, Median C–E: 5.00, W = 12.000, p_adj = .020, r = −.785), TLQual (Median E–C: 6.00, Median C–E: 4.00, W = 24.000, p_adj = .020, r = −.694), and Holistic (Median E–C: 6.00, Median C–E: 4.00, W = 25.000, p_adj = .043, r = −.686) dimensions. The effect sizes for all four dimensions were also large, with r values ranging from −.686 to −.785, indicating that the directionality exerted a strong impact on the MI-assisted CI performance from the self-assessment perspective. These findings suggest that participants perceived their MI-assisted CI performance in the E–C direction as superior to that in the C–E direction across all quality aspects.
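The p_adj values above are p-values adjusted for multiple comparisons. As a minimal sketch of how such an adjustment works, the Python function below applies the Holm step-down procedure. The choice of Holm is an assumption made for illustration only, since this section does not name the correction method; the function name `holm_adjust` and the raw p-values are hypothetical.

```python
def holm_adjust(p_values):
    """Return Holm step-down adjusted p-values in the input order."""
    m = len(p_values)
    # Indices of the p-values from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, i in enumerate(order):
        # The k-th smallest p-value (k = rank + 1) is multiplied by m - k + 1.
        candidate = min(1.0, (m - rank) * p_values[i])
        # Adjusted values may never decrease along the sorted order.
        running_max = max(running_max, candidate)
        adjusted[i] = running_max
    return adjusted


# Hypothetical raw p-values for four quality dimensions (not study data).
raw = [0.01, 0.04, 0.03, 0.005]
print([round(p, 3) for p in holm_adjust(raw)])  # [0.03, 0.06, 0.06, 0.02]
```

In this invented example, two of the four raw p-values that fall below .05 no longer do so after adjustment, which is why adjusted rather than raw values are the relevant basis for the significance statements.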

Table 6. Wilcoxon Signed-Rank Tests for Comparing Self-Assessments Between Two Directions.

Figure 4. Quality Differences by Self-Assessment Between Two Directions.

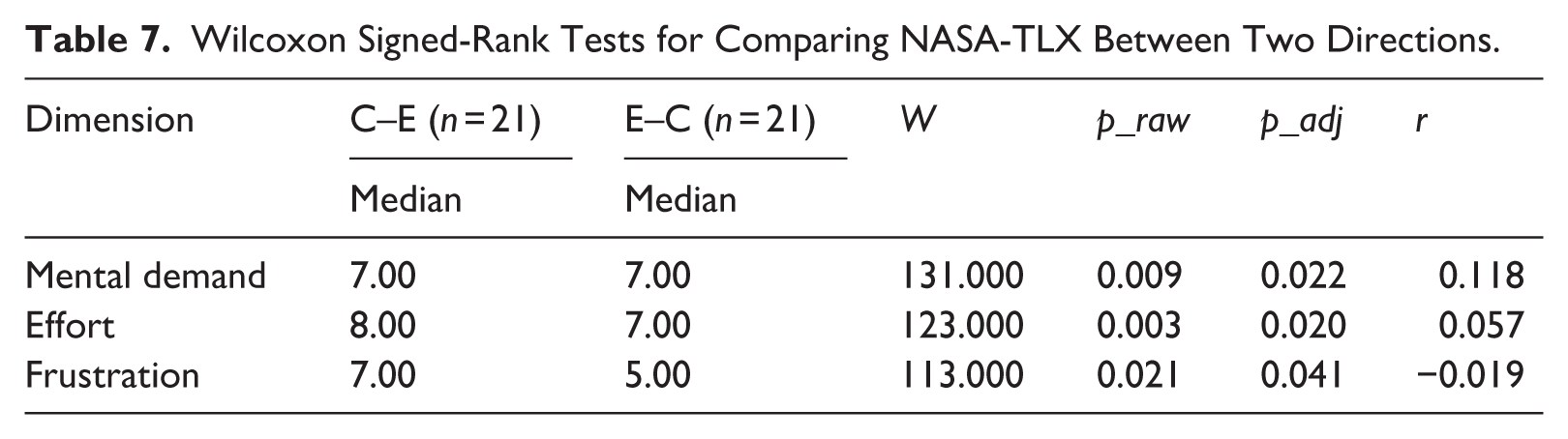

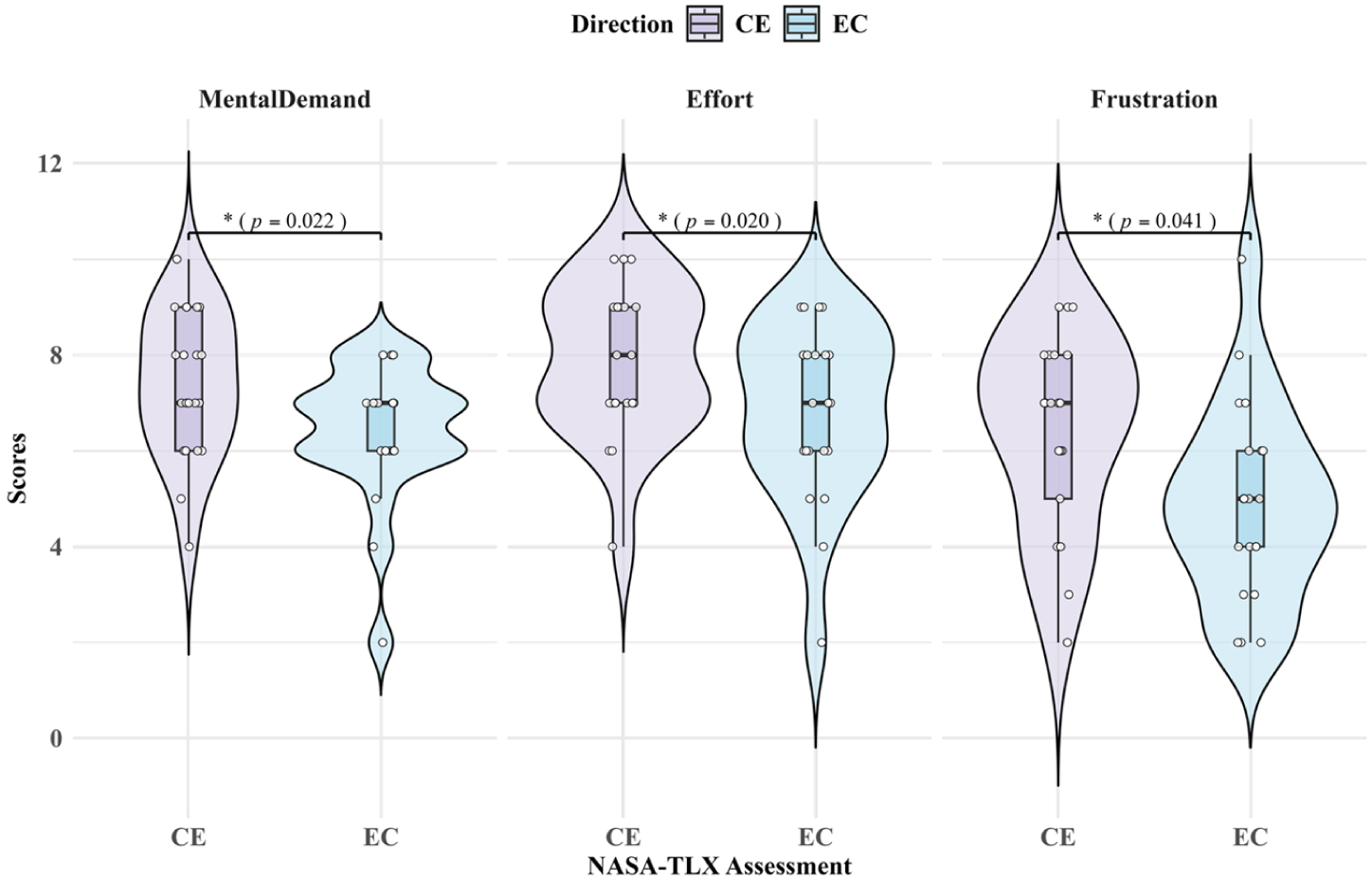

To examine student interpreters’ perceived cognitive process during MI-assisted CI in both directions, three dimensions of the NASA-TLX were analysed: mental demand, effort, and frustration. The results (see Table 7 and Figure 5) showed that the C–E direction was associated with significantly higher effort (Median C–E: 8.00, Median E–C: 7.00, W = 123.000, p_adj = .020, r = .057) and frustration levels (Median C–E: 7.00, Median E–C: 5.00, W = 113.000, p_adj = .041, r = −.019) compared to the E–C direction. Mental demand also showed a statistically significant directional effect (W = 131.000, p_adj = .022, r = .118) despite the same median scores in both directions. These findings collectively indicate that student interpreters experienced heightened perceived cognitive load during C–E MI-assisted CI, characterised by increased mental demand, greater effort, and elevated frustration.
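A paired test can detect a directional effect even when the two medians coincide, because it operates on within-participant differences rather than on the two marginal distributions. The short Python sketch below illustrates this point with invented scores; it is not a reconstruction of the mental demand data, and the variable names are hypothetical.

```python
from statistics import median

# Hypothetical paired scores for seven participants (not study data).
c_e = [4, 5, 7, 7, 7, 9, 10]
e_c = [3, 4, 7, 7, 7, 8, 9]

# Within-participant differences, C–E minus E–C.
diffs = [a - b for a, b in zip(c_e, e_c)]
n_higher_ce = sum(d > 0 for d in diffs)
n_higher_ec = sum(d < 0 for d in diffs)

print(median(c_e), median(e_c))  # 7 7
print(n_higher_ce, n_higher_ec)  # 4 0
```

Both medians equal 7 here, yet every non-zero difference points in the same direction, which is the kind of pattern a signed-rank test is sensitive to.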

Table 7. Wilcoxon Signed-Rank Tests for Comparing NASA-TLX Between Two Directions.

Figure 5. NASA-TLX Assessment Between Two Directions.

In summary, these findings demonstrate the significant influence of directionality on MI-assisted CI, revealing systematic differences in interpreting quality and perceived cognitive processes. The C–E direction yielded lower performance while imposing a higher cognitive burden, suggesting that directionality mediates the effectiveness of MI support in CI.

5. Discussion

This study investigated the impact of directionality on MI-assisted CI by systematically comparing the C–E and E–C directions. The research employed a triangulated evaluation framework comprising four components: professional assessments of interpreting quality, participants’ self-assessments, cognitive load ratings using NASA-TLX, and retrospective reflections. These measures provide a multi-perspective account of how MI assistance interacts with directionality in CI.

5.1 Directionality and MI-assisted CI quality

Both self- and professional assessments indicated a general tendency towards better MI-assisted CI performance in the E–C direction compared to C–E, though the strength and consistency of this effect varied across dimensions. Self-assessment data demonstrated statistically significant directional differences across all four quality dimensions of InfoCom, FluDel, TLQual, and holistic performance, with consistently higher ratings for the E–C direction. Professional assessment identified a significant directional effect in holistic performance, while other dimensions showed minimal variation across directions with no statistical significance. Meanwhile, TLQual slightly favoured the C–E direction, but the difference was not statistically significant. This divergence may reflect the distinct evaluative focus of holistic versus analytic ratings. Holistic assessments capture overall communicative adequacy and listener impression (Chen et al., 2022). These global perceptual benefits may accumulate across multiple analytic dimensions, such as marginal improvements in information completeness and delivery, to produce a significant overall advantage in the E–C direction, even when individual analytic dimensions do not reach significance. As suggested by Chen et al. (2022), holistic assessments exhibit greater dependability, and the observed significantly higher scores may reliably indicate higher MI-assisted CI performance in the E–C direction. By contrast, analytic ratings assess specific interpreting components independently and may be less sensitive to such cumulative perceptual effects, especially under sample-size constraints. The slight C–E advantage in TLQual likely reflects the scaffolding effect of MI captions, which can assist interpreters in their B language by providing lexical prompts and syntactic templates, temporarily enhancing surface linguistic quality. 
Moreover, raters are generally more critical when evaluating TLQual in the interpreter’s A language, where higher standards of idiomaticity and stylistic precision are expected (Han et al., 2023). Therefore, the slight C–E advantage observed in TLQual in professional assessment may result from the distinct natures of the scales, the potential facilitative effect of MI on language quality, and the raters’ assessment behaviours.

The discrepancy between professional assessments and student interpreters’ self-assessments in MI-assisted CI may reflect different rating patterns documented in previous studies. Professional assessors’ adherence to stringent evaluation criteria (Lee, 2022) contrasts with student interpreters’ tendency towards high self-criticism, manifested in a strong focus on negative aspects of their performance (Bartłomiejczyk, 2007). In addition, this self-critical orientation shows directional variation, with greater assessment accuracy in E–C interpreting (Han & Riazi, 2018), potentially due to students’ greater confidence in their native-language comprehension and output monitoring capabilities. In MI-assisted contexts, this directional effect may be further amplified by the presence of real-time machine-generated captions, which could heighten students’ sensitivity to linguistic mismatches, especially in their stronger language, thereby reinforcing the directional asymmetry in self-perceived performance.

Notably, student interpreters’ significantly lower self-assessments of MI-assisted CI quality in the C–E direction appear to contrast with the over-scoring tendency previously documented in traditional C–E interpreting. Prior research has shown that students tend to overestimate their C–E interpreting performance due to limited awareness of subtle linguistic errors (Han & Riazi, 2018), whereas our findings suggest that students evaluate their MI-assisted C–E performance considerably more critically. It is also worth noting that the participants in our study, as advanced language learners with sophisticated analytical capabilities, may possess enhanced linguistic literacy and metacognitive competence, which could contribute to their more discerning self-assessment in the C–E direction.

The directionality effects observed in MI-assisted CI quality in the present study show points of both convergence and divergence with prior CACI findings. Our professional assessments revealed significantly better holistic performance and descriptively higher InfoCom in the E–C direction, aligning with Wang and Wang’s (2019) earlier finding that higher E–C interpreting accuracy could be observed with the use of MI assistance. However, our results diverge from Chen and Kruger’s (2024a) later study, which reported higher performance in the C–E direction with the CACI mode, especially in accuracy as measured by propositional ratings. In contrast, our findings suggest that overall performance and InfoCom favoured E–C, a reversal of their pattern. Several factors may account for these differences. Methodologically, Chen and Kruger’s (2024a) workflow relied on respeaking rather than note-taking, which may alter cognitive demands and restrict participants’ simultaneous interaction with ASR and MT. As mentioned in their study, respeaking may also create greater scope for improvement in C–E production, which could help account for the stronger quality advantages they observed in that direction. By contrast, our study employed a more conventional CI workflow involving note-taking, comprehension, and reformulation, which remains the standard in interpreter training and more closely mirrors real-world CI practice. This difference in mode may have shifted how participants integrated MI support, particularly in relation to directionality. Participant profiles also differed in that Chen and Kruger (2024a) investigated undergraduate language majors, whereas our study focused on postgraduate interpreting students with more advanced bilingual proficiency and training, whose greater expertise could have shaped strategy use and performance differently from the earlier study.

The marginal directional InfoCom advantage observed in professional assessments may demonstrate MI’s potential as a content enhancement tool in the into-A interpreting direction, given that Chou et al. (2021) observed no such advantage in traditional CI, namely CI without technological assistance. However, professional assessments indicated no significant directional differences in FluDel or TLQual, nor in InfoCom. The absence of the typical into-A advantages in these dimensions, as previously documented in traditional CI (Chou et al., 2021), suggests that integrating MI’s visual input introduces novel cognitive demands. Interpreters may require additional time and strategic adaptation to effectively incorporate this new element into their cognitive resource allocation patterns, particularly in quality dimensions related to delivery and language production.

Student interpreters’ self-assessments revealed significant directional differences across all four quality dimensions, with consistently higher perceived performance in the E–C direction. Beyond InfoCom and holistic scores, perceived advantages in FluDel and TLQual may suggest that students tend to associate interpreting into their A language with stronger delivery and language quality, when supported by MI. Since self-assessment serves not only as a reflective learning tool (Lee, 2022) but also as a window into learners’ subjective experiences and metacognitive processes (Fernández Bravo, 2019), these findings should be interpreted as reflections of how students perceive MI’s usefulness across directions rather than as direct indicators of actual performance. However, as observed by Han and Riazi (2018), students evaluate their E–C output more accurately than their C–E output, likely due to greater confidence in and familiarity with their L1; therefore, the observed self-assessed quality advantages in the E–C direction may not only reflect students’ subjective perceptions but also suggest that MI support could be more effectively leveraged when interpreting into the native language.

5.2 Directionality and cognitive processing in MI-assisted CI

The NASA-TLX results revealed significantly higher perceived cognitive demands in the C–E direction of MI-assisted CI, as reflected in higher ratings of mental demand, effort, and frustration reported by student interpreters. However, given the relatively small effect sizes observed across these dimensions, the difference should be interpreted as a modest increase in perceived cognitive demand rather than a substantial cognitive burden. This directional asymmetry in cognitive load corresponds with the observed performance disparities in quality assessments, suggesting that even limited increases in cognitive demand may influence performance efficiency in C–E interpreting. The convergence of subjective load measures and quality evaluations thus supports the presence of directional differences in MI-assisted CI, with the E–C direction showing relative advantages in both performance outcomes and perceived processing efficiency.

This finding may contrast with Chen and Kruger (2024a), who reported higher mental demand, effort, and frustration in the E–C direction. A likely explanation lies in methodological differences between the two studies: their design required respeaking, an additional oral task that could occupy interpreters’ limited attentional resources and place greater strain when performed in the L2 (De Jong et al., 2015). Consequently, participants in their study experienced greater cognitive load when respeaking into English than into their native language. By contrast, the present study preserved the note-taking practice typical of CI, thus avoiding the additional oral burden and possibly yielding a different directional pattern of cognitive load.

This directional asymmetry in cognitive load aligns with Su’s (2025) findings on technology-assisted SI, where reduced cognitive load was observed in the E–C direction, as evidenced by decreased mean fixation duration. This consistency in directional effects across different interpreting modes suggests a pattern in which technological assistance tends to reduce cognitive load in the into-A direction, while offering less mitigation of the inherent processing challenges in the into-B direction. The elevated cognitive demands observed in the into-B direction corroborate Chen’s (2020) findings on CI without MI assistance, where interpreting into B language showed intensified cognitive burden during speech production. Despite the integration of MI assistance in our study, this persistent heightened cognitive load suggests that technology integration might not fully mitigate these inherent directional processing challenges in into-B CI.

The directional asymmetry observed in MI-assisted CI may be interpreted through a cognitively oriented perspective, whereby multiple interacting factors influence interpreters’ allocation of cognitive resources. A primary factor lies in the asymmetry of language proficiency between interpreters’ A language (Chinese) and B language (English), which heightens processing demands during target-language production. When interpreting into their B language, interpreters face a dual cognitive challenge: while they can comprehend the source message in their A language with relative ease, they tend to invest more cognitive resources in B language production due to lower proficiency levels, focusing more on language expressions (Xu, 2021) or target language quality (Lee, 2022). Even with MI assistance, student interpreters still had to verify, monitor, and often reformulate machine-generated suggestions in their B language, a process requiring considerable cognitive resources for lexical selection (Tomczak & Whyatt, 2022) and retrieval (Adler, 2024), grammatical restructuring (Ma et al., 2021), linguistic processing (Shen et al., 2023), and output monitoring and self-repair (Dailidėnaitė, 2017). As such, technological assistance, while supportive in principle, may not neutralise this basic linguistic disparity. In addition, specific features of the MI system itself may have exacerbated these challenges. Caption inaccuracies (Li & Chmiel, 2024), latencies (Fantinuoli & Montecchio, 2022), and the need to navigate bilingual output (Zhang & Xie, 2025) required sustained attention and occasional repair, adding further strain to already effortful into-B production. Open-ended questionnaire data confirmed that some participants found the captions incoherent or had difficulty splitting their attention, reporting that the captions sometimes disrupted their memory or led to prolonged pauses in delivery.
These observations suggest that MI support did not consistently function as a cognitive aid in the C–E direction, and in some cases, may even have increased processing load.

The second factor contributing to this asymmetry may be explained by the task enhancement and disengagement mechanisms of the language control model in interpreting proposed by Dong and Li (2020). This model is applicable to both SI and CI, as it captures general attentional control processes involved in bilingual language production. Task enhancement involves deliberately strengthening and prioritising the mental representations of currently relevant information. Task disengagement, on the other hand, refers to the cognitive flexibility to release or suppress previously processed information that is no longer relevant, allowing attention to shift efficiently to new incoming information (Zhao et al., 2023). In MI-assisted CI, task enhancement and disengagement operate in a more complex cognitive environment than in CI without technological assistance due to the presence of bilingual live captioning. Task enhancement involves not only strengthening mental representations of auditory information but also managing concurrent processing of machine-generated captions (Zhang & Xie, 2025). On the one hand, when interpreters attend to bilingual captions, they must enhance the representation of relevant caption information while simultaneously maintaining their own interpreting flow. This dual enhancement becomes particularly demanding when interpreting into the B language, where more cognitive resources are already allocated to target-language production. Unlike the into-A direction, where the translation and interpreting process is more automatic, the into-B direction demands heightened attention to linguistic accuracy and stylistic appropriateness (de Bot, 2000; Tomczak & Whyatt, 2022). This conscious effort competes for cognitive resources with the need to process and enhance the source message, potentially making the into-B direction particularly challenging in MI-assisted CI.
On the other hand, interpreters need to efficiently disengage from information that is no longer relevant to free up cognitive resources for incoming input. This process is especially crucial in the into-B direction, where the slower, more effortful production of the target language might lead to interference if disengagement is not executed effectively.

The cognitive complexity of task enhancement in the into-B direction manifests in interpreters’ interaction with MI assistance, as evidenced by one participant’s reflection: “In the C–E direction, the translated text sometimes provides me with a possible translation when I have no idea. However, I often don’t know where to start and have to spend some time figuring it out.” (Translated by the Authors from Chinese, and emphasised by the Authors)

Another participant also reported: “The Chinese recognition is very inaccurate, and there are many sentence segmentation issues, which make the corresponding English translation incoherent and unreliable. Sometimes, it’s so problematic that it even disrupts my memory. In such cases, I often forget the content of the subsequent segments and have to extract keywords from the given translation while trying to locate the Chinese original text in the subtitles to revive my memory. However, tracing the original text is also cumbersome and makes it hard to quickly locate the needed information, which prolongs the pauses in my interpreting output. As a result, I have to slow down my speaking pace and resort to vague and interpreted statements until I can find the original text and continue.” (Translated by the Authors from Chinese, and emphasised by the Authors)

These qualitative accounts illuminate the cognitive complexity of MI-assisted CI, especially in the into-B direction, not merely because of inherent linguistic asymmetry but also because of the system’s limitations in input clarity and segmentation. Interpreters must deal with segmented captions, disrupted working memory, and cross-modal verification, which collectively increase effort and frustration. Meanwhile, the adaptive strategies reported in the responses, including keyword extraction, backtracking captions, and vague reformulations, highlight interpreters’ resilience and agency but also demonstrate the cognitive cost of compensating for occasional system unreliability.

In summary, MI provides potentially beneficial translation suggestions, but the cognitive demands of utilising these resources create additional processing burdens. Interpreters have to grapple with multiple concurrent processes: identifying relevant segments in the continuous captions, evaluating the appropriateness of MI-suggested translations, and maintaining their own interpreting flow. In addition, task disengagement mechanisms are activated when interpreters must suppress interference from previously activated linguistic information or prior processing tasks before transitioning to the target output (Zhao et al., 2023). In MI-assisted CI, task disengagement may thus include suppressing interference from previously displayed captions, disengaging from ongoing visual search processes, inhibiting irrelevant MI-generated texts, and reducing activation of the non-target language representations. The participant’s reported difficulty in efficiently locating starting points in captions further suggests greater cognitive demand in MI-assisted CI in the into-B direction, as interpreters must simultaneously manage multiple streams of visual input while maintaining efficient task disengagement from previous processing phases.

This multifaceted analysis suggests that technological assistance, despite its supportive functions, does not fundamentally offset the inherent cognitive asymmetries in bidirectional interpreting. Rather, it introduces new dimensions of complexity related to unique task enhancement and disengagement issues in MI-assisted CI, which interact with existing directional asymmetries in language processing and production. Rather than neutralising these asymmetries, MI support may sometimes amplify them, especially in cognitively demanding into-B tasks. These findings have important implications for both interpreting research and pedagogy. From a pedagogical standpoint, despite the limits of the present experimental design, the findings should not be taken as evidence against integrating MI tools into interpreter education; rather, they tentatively suggest the need for a guided, staged integration. As interpreting technologies become increasingly embedded in professional practice (Fantinuoli, 2023), early-stage exposure under instructional supervision may offer opportunities to develop technology literacy and strategic control. Although MI systems may produce imperfect or visually demanding outputs, such conditions reflect real professional environments where interpreters deal with hybrid human–machine interaction (Gile, 2025). When integrated progressively, MI-assisted tasks may function as effective scaffolds for developing essential cognitive and metacognitive skills, including selective attention, information management, and self-monitoring. Accordingly, interpreter training should focus not on replacing human competence with machine support, but on preparing students to engage with MI critically and selectively, particularly in into-B interpreting where cognitive demands are greater. 
In this light, the cognitive strain identified in our study represents not a pedagogical risk but a natural part of the learning curve required to develop technological agency in modern interpreting practice. However, given that the present study was based on short-term exposure to MI assistance, the pedagogical implications should be interpreted as exploratory and context-bound. From a research perspective, the results underscore the importance of investigating not only whether MI is beneficial, but also under what conditions, in which directions, and for which learner profiles such benefits are more effectively realised. Moving forward, longitudinal designs and interdisciplinary collaboration will be key to unpacking these nuanced interactions and informing training approaches that are responsive to the evolving demands of technologically enhanced interpreting.

6. Conclusion

This study examined the effect of directionality on MI-assisted CI, and the findings revealed a directional asymmetry across the evaluation measures. Interpreting performance was rated higher in the E–C (into-A) direction than in the C–E (into-B) direction, as evidenced by professional holistic ratings and interpreters’ self-evaluations. This directional disparity was particularly pronounced in self-evaluations, where participants reported marked differences across all four quality dimensions: information completeness, fluency of delivery, target language quality, and holistic quality. Cognitive load measurements through NASA-TLX revealed significantly elevated effort, mental demand, and frustration levels during into-B interpreting, suggesting that technological assistance cannot fully mitigate the inherent challenges of into-B interpreting. These findings offer empirical observations that may inform ongoing discussions of task enhancement and disengagement in MI-assisted interpreting. While they do not constitute a direct theoretical advancement, they highlight patterns that merit further conceptual and longitudinal investigation. Pedagogically, the results point to considerations for technology-integrated interpreter training rather than prescriptive instructional models. First, they support differentiated instruction when introducing MI tools, or AI tools in general, with more systematic scaffolding and practice time allocated to into-B interpreting tasks. Second, they highlight the importance of developing direction-specific coping strategies and competencies in interpreter training to optimise the benefits of AI-powered technologies. Finally, they can guide the development of targeted class exercises that help student interpreters manage multiple information inputs while delivering satisfactory interpreting, especially when interpreting into their non-native language.

The present study has several methodological limitations. First, the small sample size limits generalisability and reduces the statistical power of quantitative analyses. Second, participants received only brief familiarisation with the MI system, rather than the systematic training typically required for effective CAI use. Given that even professionals experience a learning curve, limited training likely influenced how students interacted with the tool, and the findings primarily reflect early-stage adaptation. Future work should adopt larger-scale, longitudinal designs incorporating structured training phases to more accurately assess how expertise and directionality influence MI-assisted CI. Third, tasks were administered in a fixed order; randomising task order in future studies would help isolate potential sequencing effects. Fourth, the sample consisted of student interpreters, whose developing expertise and limited exposure to technological tools may have heightened cognitive load and constrained their ability to strategically integrate MI support. Including professional interpreters and diversifying rater profiles would allow deeper examination of how expertise mediates technological assistance and direction-sensitive evaluation. Finally, incorporating process-oriented measures such as eye-tracking or EEG would provide more granular insight into the cognitive mechanisms underlying MI-assisted interpreting and complement the self-report and performance data used here.

Acknowledgements

We would like to express our sincere gratitude to the participants for their time and contribution.

Author contributions

Wenkang Zhang: Data curation, Formal analysis, Investigation, Methodology, Software, Visualization, Writing – original draft, Writing – review and editing.

Rui Xie: Conceptualization, Data curation, Formal analysis, Investigation, Methodology, Software, Supervision, Writing – original draft, Writing – review and editing.

Yongqi Xian: Methodology, Validation, Writing – review and editing.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Ethical approval and consent to participate

This study obtained ethical approval from the Ethics Review Committee of The Chinese University of Hong Kong, Shenzhen (EF20241202001). All methods performed in the study were carried out in accordance with the 1964 Helsinki Declaration and its later amendments or comparable ethical standards. Informed consent was obtained from all individual participants involved in the study.

Data availability statement

Data are available from the corresponding author upon reasonable request.