Abstract

The remote and fragile Kizil Cave murals, ancient Buddhist artworks on the Silk Road in China, hold rich educational value. Virtual reality (VR) offers a sustainable and non-invasive medium for experiencing these murals, expanding access while protecting the original site. This article introduces a controller-free, multisensory VR teaching experience that uses full-body motion and haptic interaction to support embodied cognition and immersive learning. By allowing learners to see, hear, and physically engage with mural narratives, the system fosters both a deeper understanding of the heritage and an emotional connection to it. This approach advances the United Nations' sustainable development goal (SDG) 4 (quality education) by enhancing learning outcomes, SDG 10 (reduced inequalities) by broadening access across diverse user groups, and SDG 11.4 (heritage protection) by supporting digital preservation and public awareness. Drawing on recent research in haptic democratization and inclusive design, the study illustrates how user participation, multisensory interaction, and accessibility can be integrated into a practical and equitable model for heritage education. The interdisciplinary framework offers relevant knowledge for scholars and practitioners in heritage studies, immersive learning, and sustainable development.

Introduction

The ancient Buddhist murals of the Kizil Caves in Xinjiang, China, dating from the third to ninth centuries CE, are among the earliest and most important painted cave shrines along the Silk Road (UNESCO, n.d.). Carved into the cliffs of the remote Kuqa region, these grottoes once served as vibrant cultural crossroads, where Indian, Iranian, and Chinese artistic and religious traditions converged (Daniel, n.d.). The murals vividly depict Jātaka tales (stories of the Buddha's past lives), monastic life, and secular scenes along ancient trade routes, making them not only masterpieces of Central Asian art but also invaluable pedagogical resources (Sächsische Akademie der Wissenschaften zu Leipzig, n.d.). Through their rich visual narratives, the Kizil murals offer unique opportunities for learning about Buddhist ethics, cross-cultural exchange, and pre-modern Eurasian lifeways (Daniel, n.d.).

However, despite their cultural significance, the murals face a multifaceted crisis of accessibility, fragility, and historical loss. Since the late nineteenth century, numerous mural fragments were forcibly removed by foreign expeditions—including German, British, and Japanese teams—and are now dispersed across museum collections in Berlin, London, St Petersburg, and elsewhere (Elikhina, 2013; Morita, 2015; Staatliche Museen zu Berlin, n.d.). The original site itself is situated in a harsh desert environment, and centuries of natural weathering, vandalism, and earlier excavation damage have rendered many paintings extremely fragile. Visitor presence introduces light, humidity, and temperature fluctuations that threaten the delicate pigments and microclimate within the caves (Agnew, 2010). Today, public access to most of the 230+ caves is strictly prohibited, with only a small number of chambers open under tight conservation controls. Photography is banned, and entry time is severely limited. This reality presents a dual challenge: how can we educate people about this extraordinary yet endangered cultural legacy, and how can we do so inclusively—across geographic, linguistic, and physical boundaries—without further endangering the site?

Virtual reality (VR) offers a sustainable and non-invasive solution to this dilemma. By digitally reconstructing the Kizil Cave spaces in immersive form, VR allows learners around the world to explore and engage with the murals without putting physical stress on the originals (Addison, 2001; Champion, 2021). It enables the creation of multisensory, embodied learning environments where students can see, hear, and “interact with” mural narratives, fostering emotional connection and deeper understanding (Johnson-Glenberg, 2018). More importantly, VR lowers barriers to access: users from remote regions or with mobility limitations can experience the site meaningfully, aligning with global equity and inclusion goals and accessibility guidance (Dudley et al., 2023; O’Connor et al., 2021). From the perspective of cultural sustainability, VR supports both the conservation of heritage (sustainable development goal (SDG) 11.4) and the expansion of high-quality, accessible learning (SDG 4), while also contributing to the reduction of educational inequality (SDG 10) (UNEP, 2025; United Nations, 2022, 2025).

In this paper, we present a controller-free, multisensory VR learning system designed specifically for the Kizil Cave murals. Using full-body gesture recognition, spatial interaction, and multimodal feedback (visual, auditory, and simulated tactile cues), the system supports embodied cognition and user-driven learning (Johnson-Glenberg, 2018). We describe the pedagogical logic behind the experience design, as well as the results of a user study evaluating the system's effectiveness in promoting immersion, skill learning, and engagement. Drawing on recent research in haptic democratization and inclusive design, we propose this VR model as an equitable, scalable, and culturally responsible approach to heritage education (Elbaggari, 2022; O’Connor et al., 2021). Ultimately, this research demonstrates how advanced media technologies can safeguard fragile legacies while transforming how we teach and learn from them.

Our work draws upon and contributes to three key strands of research: (1) the use of VR in cultural heritage visualization (Champion, 2021), (2) multisensory and embodied interaction in learning environments (Johnson-Glenberg, 2018; Lindgren & Johnson-Glenberg, 2013), and (3) sustainable and inclusive design practices in digital education (Dudley et al., 2023; O’Connor et al., 2021). Below, we situate our contribution in relation to these domains.

Related Work

Virtual Reality in Cultural Heritage

Recent years have seen immersive VR increasingly adopted for reconstructing fragile or remote heritage sites, enabling virtual access while preserving physical authenticity. Notable projects such as Virtual Giza (Manuelian, 2013), Lascaux VR (France-Voyage, n.d.), and ScanPyramids illustrate the potential of immersive environments for engaging users with endangered archaeological spaces. These endeavors elevate cultural presence and storytelling through spatial immersion (Brooks, 2019), yet often remain visually focused and under-exploit multimodal interactivity (Kidd et al., 2019).

Multisensory and Embodied Interaction in Learning

Growing evidence in educational technology and human-computer interaction suggests that multisensory, embodied engagement significantly enhances learning outcomes, especially when dealing with cultural and artistic subject matter. Embodied cognition theories emphasize how gesture, bodily movement, and sensory feedback anchor understanding and emotional retention (Johnson-Glenberg, 2018; Lindgren & Johnson-Glenberg, 2013). While there is exploratory work integrating auditory, haptic, and olfactory cues to enrich cultural learning (Zhang & Du, 2025), few systems allow for natural, controller-free interaction throughout the learning experience (Ma et al., 2023).

Sustainability and Inclusive Design in Cultural Education

Digital heritage education is increasingly framed within sustainability and inclusion discourse, with frameworks like UNESCO's advocating for equitable access and preservation aligned with SDG 4 (quality education) (United Nations, 2022), SDG 10 (reduced inequalities) (United Nations, 2025), and SDG 11.4 (heritage protection) (UNESCO, 2016). Previous approaches have leveraged low-cost mobile apps or accessible interface designs (e.g., sign language-enabled platforms) to broaden participation (Gkagka et al., 2023; Guerrero-Contreras et al., 2024). For extended reality (XR) specifically, technical guidance highlights requirements for multimodal support, customization, and safety to ensure accessibility (O’Connor et al., 2021). Nevertheless, there remains a gap in VR systems that unite embodied interaction, inclusive access, and cultural interpretive depth at scale.

Positioning Our Contribution

Our study advances the field by delivering a controller-free, multisensory VR learning system for the Kizil Cave murals—a site of immense cultural importance yet limited accessibility. Unlike predominantly visual reconstructions, our design emphasizes gesture-based full-body interaction, multimodal sensory feedback, and inclusive, accessibility-conscious features (O’Connor et al., 2021). This approach addresses the current limitations in embodied engagement, equity, and sustainable heritage mediation.

Methods

System Design: Controller-Free Multisensory VR Learning

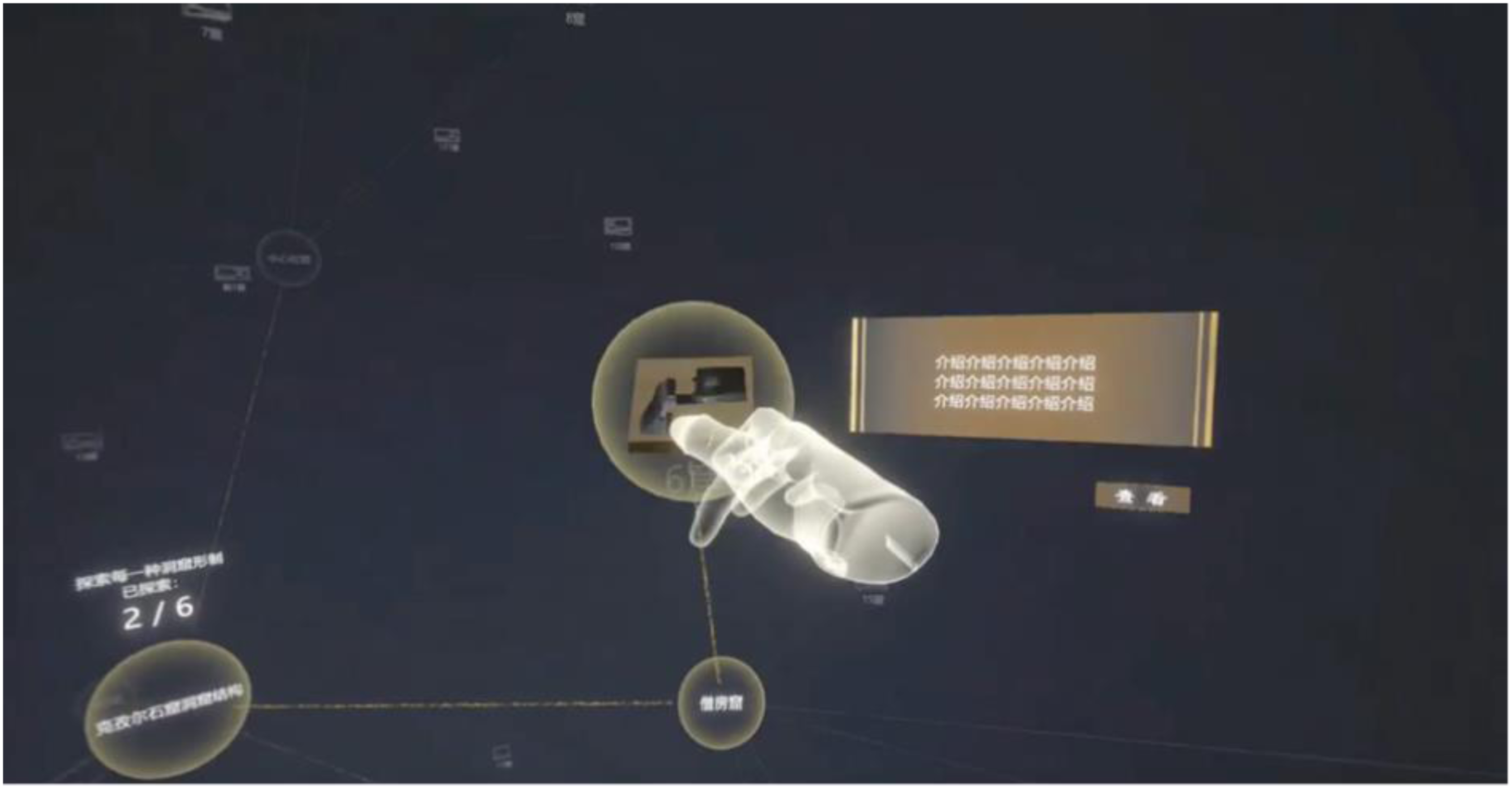

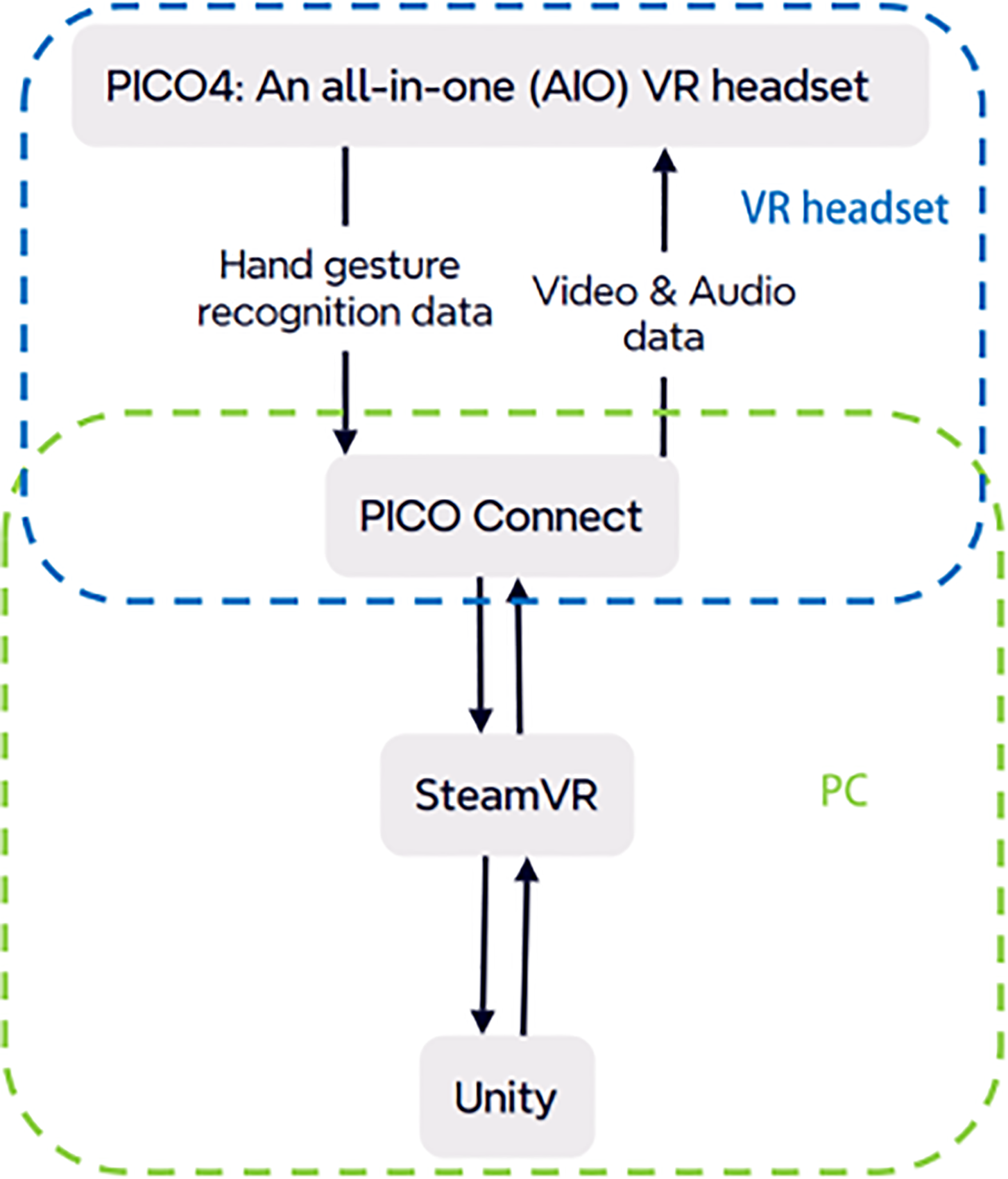

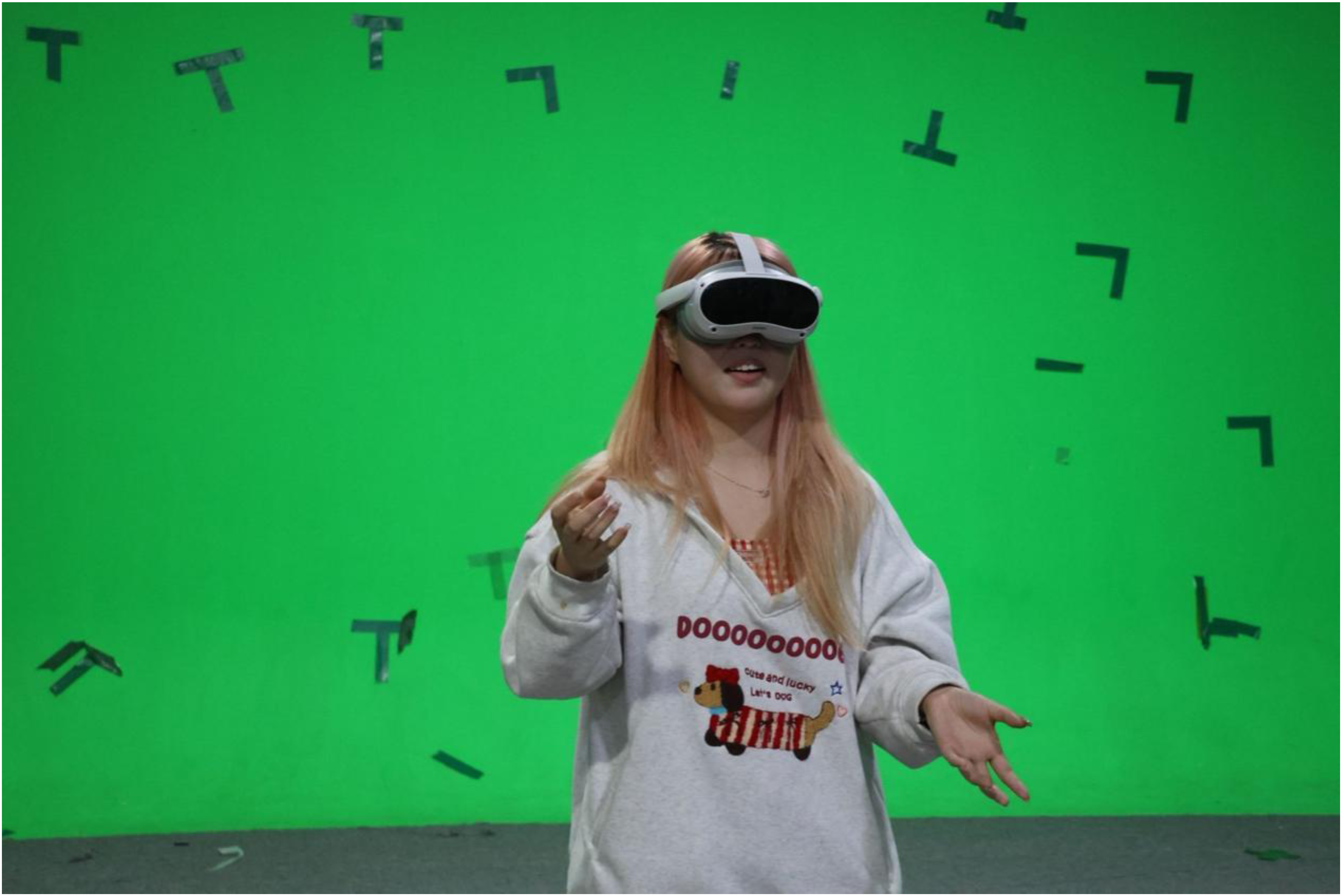

To leverage these strengths of VR for the Kizil murals, we designed a controller-free, multisensory VR system. Controller-free means the user does not need to operate handheld VR controllers; instead, the system uses natural body movements and gestures as input (O’Connor et al., 2021). This decision lowers the barrier to entry and increases embodiment. Many first-time VR users (especially young students or non-gamers) find game controllers intimidating or abstract—they have to learn which button or joystick does what. By removing physical controllers, we let users interact as they normally would in the real world: moving their hands, arms, or whole body, and using voice or gaze (Figure 1). Modern VR headsets with built-in hand tracking and external depth sensors enable recognition of hand gestures and body posture. For example, in our Kizil VR Experience System, a student can reach out to “touch” a mural with their hand and the system registers that action, or they can nod/shake their head in response to a question and the system interprets it as “yes” or “no.”

Gesture-Based Interaction Scene From the Kizil VR Experience System. VR = virtual reality.
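The head-gesture input described above can be illustrated with a minimal sketch. It assumes the headset reports head pitch and yaw in degrees over a short time window, and it treats a dominant pitch swing as a nod (“yes”) and a dominant yaw swing as a shake (“no”). The function name, the sample format, and the 10-degree threshold are hypothetical and are not taken from the Kizil VR Experience System.

```python
from typing import Optional, Sequence, Tuple


def classify_head_gesture(
    samples: Sequence[Tuple[float, float]],
    min_swing_deg: float = 10.0,  # assumed threshold, not from the paper
) -> Optional[str]:
    """Return "yes" for a nod, "no" for a shake, or None if unclear.

    samples: (pitch_deg, yaw_deg) head angles collected over a short window.
    """
    if len(samples) < 2:
        return None
    pitches = [pitch for pitch, _ in samples]
    yaws = [yaw for _, yaw in samples]
    pitch_swing = max(pitches) - min(pitches)  # up/down range
    yaw_swing = max(yaws) - min(yaws)          # left/right range
    if max(pitch_swing, yaw_swing) < min_swing_deg:
        return None  # head is essentially still
    if pitch_swing == yaw_swing:
        return None  # ambiguous movement; ask again rather than guess
    return "yes" if pitch_swing > yaw_swing else "no"
```

Returning `None` for small or ambiguous movements lets the guide repeat the question instead of acting on an accidental head motion.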

Full-Body Interaction and Embodiment

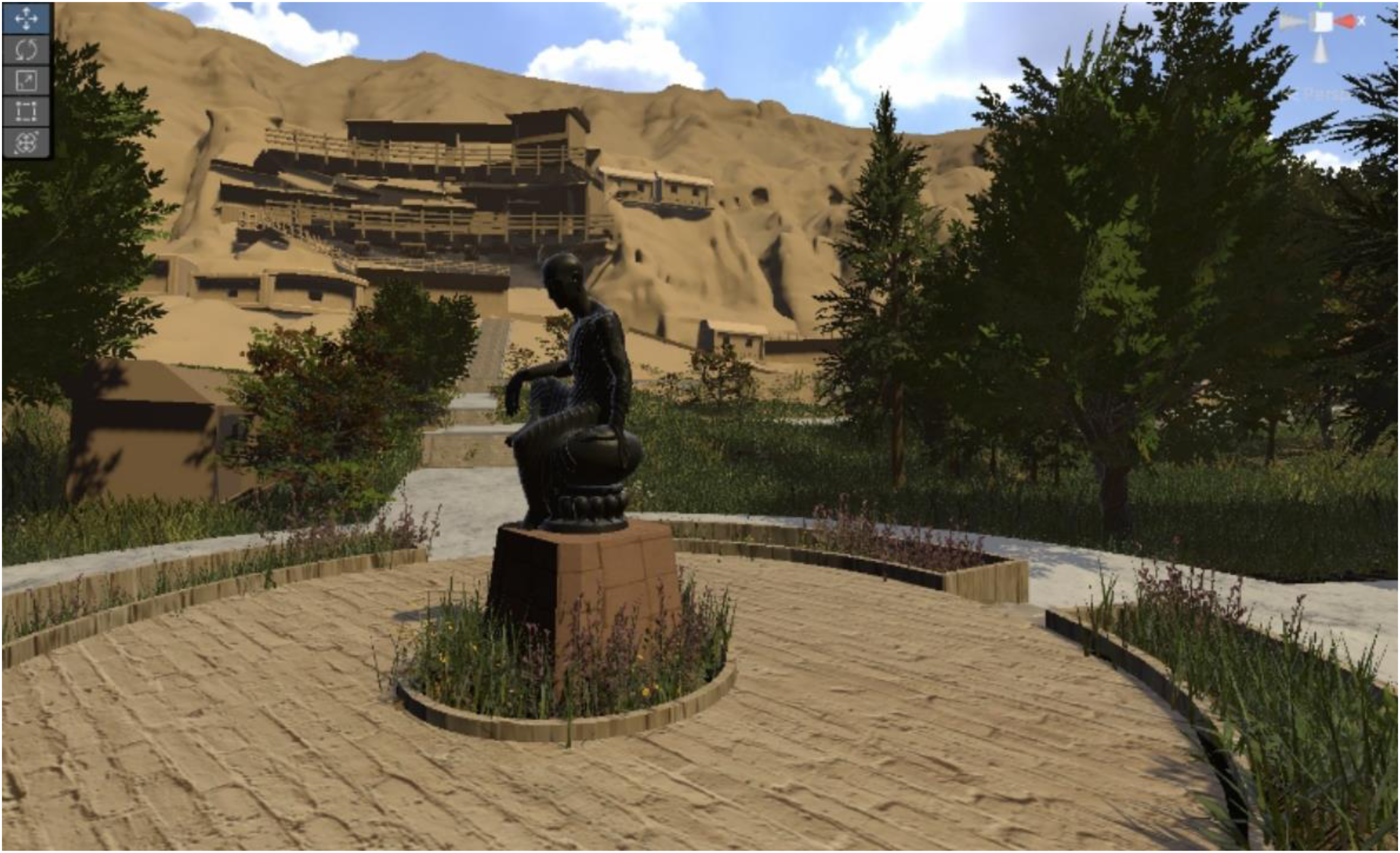

The learner's entire body becomes an active interface in our virtual environment. Users can walk around the virtual cave (or teleport short distances if physical space is limited) to explore different sections (Figure 2). They may crouch to get a closer look at lower parts of a wall painting, or extend an arm to point at and select an item of interest on the mural. Because there are no artificial input devices mediating these actions, the interactions feel intuitive—much like how one would behave when physically present in a cave (with some VR-only abilities like teleportation or summoning info panels). This design taps into embodied cognition: learning can be deepened when it involves bodily experience in addition to mental processing (Makransky & Petersen, 2021). By physically navigating the space, students form mental maps of the cave layout and remember the relative positions of murals better than if they only saw static images in a book (Wei et al., 2025). Embodied interaction also makes abstract concepts tangible. For instance, if a mural depicts a musical performance, our system allows the user to mimic playing an instrument to trigger corresponding sounds, actively linking the image to its meaning. Such learning-by-doing particularly benefits kinesthetic learners, keeping their attention and enjoyment high.

Rendered Model of the Virtual Kizil Cave Environment.

This full-body approach also promotes inclusivity. Not everyone has the dexterity or ability to use game-style controllers—this could include young children, people with certain disabilities, or simply those unfamiliar with gaming. Our reliance on natural gestures and motion means that if a user can move their hand (or even just direct their gaze), they can interact. We implemented multiple input modes: hand gesture recognition, gaze dwell (staring at an interactive object for a couple of seconds to select it), and voice commands for key functions. For example, a user can say “Next” to advance a narration, or look at a glowing icon to activate it, if they prefer not to (or cannot) use their hands. This multimodal input design ensures the experience accommodates a broad range of users, aligning with inclusive design principles. By not requiring specialized hardware or complex button presses, we lower technological and skill barriers, enabling more people to comfortably use the system. This supports the SDG 10 goal of reduced inequalities: the VR tool is built so diverse learners—regardless of physical ability or gaming experience—can participate equitably.
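As an illustration of the gaze-dwell mode described above, the following sketch fires a selection once the user has looked at the same object for a fixed time. The class name, the per-frame `update` call, and the 2-second default (our reading of “a couple of seconds”) are assumptions for illustration only.

```python
from typing import Optional


class GazeDwellSelector:
    """Select an object after the gaze rests on it for dwell_seconds."""

    def __init__(self, dwell_seconds: float = 2.0) -> None:
        self.dwell_seconds = dwell_seconds
        self._target: Optional[str] = None
        self._elapsed = 0.0
        self._fired = False

    def update(self, gazed_target: Optional[str], dt: float) -> Optional[str]:
        """Advance by dt seconds; return the target id once when dwell completes."""
        if gazed_target != self._target:
            # Gaze moved to a new object (or away): restart the timer.
            self._target = gazed_target
            self._elapsed = 0.0
            self._fired = False
            return None
        if gazed_target is None or self._fired:
            return None  # nothing to select, or already selected
        self._elapsed += dt
        if self._elapsed >= self.dwell_seconds:
            self._fired = True
            return gazed_target
        return None
```

Resetting the timer whenever the gaze leaves the object avoids accidental selections while the user is simply looking around the cave.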

Multisensory Feedback (Visual, Audio, Haptic)

A hallmark of our system is engaging multiple senses to recreate the atmosphere of the caves and the narratives on their walls. Visually, the VR presents a high-fidelity three-dimensional (3D) reconstruction of a Kizil Cave interior, textured with photogrammetric images of the actual murals (from conservation archives) (Bentkowska-Kafel et al., 2012; Stylianidis, 2020). But we go beyond visuals. Audio elements are richly layered to enhance immersion and understanding. Upon entering the virtual cave, students hear ambient sounds like the faint drip of water, echoing footsteps, and wind whistling from the desert outside. As they focus on a particular mural, spatial audio cues play: for example, a gentle narration explaining the scene, or sound effects related to the story (the roar of a painted lion, the chant of a meditating monk) (Kern & Ellermeier, 2020; Potter et al., 2022). Audio provides context and emotional tone that visuals alone cannot, helping to situate the learner historically and thematically. We offer narration in multiple languages to broaden accessibility, and even a voice-based query system: a student can ask aloud “Who is this figure?” and a voice from a virtual guide will answer, making the experience feel like a dialogue with a knowledgeable companion rather than a one-way lecture (Figure 3).

One of the Virtual Guides in the Kizil VR Experience System. VR = virtual reality.

The most innovative aspect of our design is the use of haptic feedback and tactile cues to incorporate the sense of touch. Obviously, virtual objects cannot be physically felt, so we employ wearable devices and illusions to simulate touch sensations. Learners wear a lightweight pair of haptic gloves and two small haptic pads (one on the chest, one on the forearm) during the session. These devices produce vibrations and gentle pressure forces. Whenever the user “touches” something in VR, the gloves buzz or resist to convey contact. For instance, if the user runs their hand along a virtual cave wall to feel the texture of a mural, a fine-grained vibration in the glove simulates the roughness of ancient plaster. If they pick up a virtual artifact (say, a pottery lamp), the gloves create a sensation of weight and surface contact in their palm. The chest/forearm pads provide larger feedback: for example, during a story scene with a thunderclap or an impact, the chest pad thumps to impart a mild shock, enhancing the drama. We also implemented mid-air haptics using focused ultrasonic waves, allowing users to feel some feedback without wearing gloves for certain interactions (Carter et al., 2013; Long et al., 2014). As a user's hand approaches a wall painting, for example, we project a faint pressure sensation on their palm—as if feeling the “aura” or radiated warmth of the painting before contact. This subtle touch not only enriches realism but can guide behavior (a slight resistance as a boundary, preventing a user's avatar from “passing through” a solid wall) (Neate et al., 2023).
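The proximity cue described above (a faint pressure that grows as the hand nears a painting) can be sketched as a simple distance-to-intensity mapping. The linear ramp, the 0.30 m onset distance, and the function name are assumptions chosen for illustration; the paper does not specify the actual mapping.

```python
def proximity_intensity(
    distance_m: float,
    onset_m: float = 0.30,       # assumed distance at which the cue begins
    max_intensity: float = 1.0,  # normalized drive level at contact
) -> float:
    """Linear ramp: 0 at or beyond onset_m, max_intensity at contact."""
    if onset_m <= 0:
        raise ValueError("onset_m must be positive")
    # Clamp so that penetration (negative distance) saturates at the maximum.
    clamped = min(max(distance_m, 0.0), onset_m)
    return max_intensity * (1.0 - clamped / onset_m)
```

Saturating at contact gives the boundary effect mentioned above: the cue is strongest exactly where the hand would otherwise pass through the wall.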

Research indicates that adding tactile feedback in VR enhances sensory engagement and reinforces presence (Sallnäs, 2010). In our context, feeling the textures and forces makes the virtual cave more convincing and “grounded” for the user. It also adds an experiential learning dimension: when learners both see and feel something, they form stronger memories of it than by sight alone. Our design strategically ties every haptic or audio cue to a pedagogical purpose, not just novelty. For example, when a student correctly identifies a character in a mural, the system provides positive feedback through a gentle vibration pulse in the glove coupled with a congratulatory chime (Figure 4). This pairing of sensory reward with achievement reinforces the learning moment (analogous to a teacher's approving nod, but here you feel it). If a student's attention is needed on a certain detail, we may use a subtle cue—like a directional sound or faint vibration—to draw their gaze. By mindfully designing these cues, the multisensory system provides a continuous feedback loop: the student acts or responds, and the system answers through visual, auditory, or haptic feedback. The experience thus becomes highly interactive and student-centered, with the sensory richness serving the educational narrative at every step.

Optical Visual Feedback.

User Interface and Interaction Flow

From the user's perspective, the session feels intuitive, engaging, and responsive. Upon donning the VR headset, the learner finds themselves embodied as an explorer avatar inside a virtual Kizil Cave. The perspective is first-person; they see the environment through their own eyes and use their own hands/bodies to interact. We provide a minimal avatar representation (semi-transparent hands and a faint body shadow) so that the user perceives a self-presence in the virtual space without a full distracting mirror image (Figure 5). This first-person embodiment is crucial: it gives the student a personal viewpoint within the historical scene. For example, if the mural depicts monks and patrons gathered around the Buddha, the student can stand among them, at the Buddha's feet—looking up and experiencing the scale as if they were a pilgrim in that scene. Such a perspective can build empathy and understanding by letting one experience the art rather than just observe it.

Hardware System.

The interface is designed to be unobtrusive and diegetic (embedded in the environment). There are no floating toolbars or game-like heads-up displays by default. Instead, the environment itself acts as the interface: looking at an interactive item or making a gesture toward it will highlight it subtly, and an icon might appear next to it indicating possible actions (e.g., a hand icon if you can “grab” an object, or an ear icon if an audio story is available there) (Beck & Roberts, 2021). A virtual guide character is available in the environment as well—for instance, a friendly monk non-player character who can be summoned via voice command. This guide offers instructions and context when needed (“Try touching the mural to feel its texture,” or “This painting was made in the 5th century during the Kucha kingdom…”), and even asks the learner questions to prompt reflection (“What emotion do you think the artist conveyed here?”). The guide's presence is optional and user-triggered; learners can explore independently for a more discovery-driven experience, calling on the guide only if they want help or deeper explanations. The key idea is to empower the user to drive their own learning: they can follow their curiosity within the virtual cave, while the system dynamically supports and educates them at their chosen pace.

Throughout the experience, learning feedback is woven into the interactions. If the user engages with a mural and tries to interpret it, the system provides immediate informative feedback. For example, suppose a student looks at a mural panel and guesses aloud, “This looks like the story of Prince Siddhartha and the hungry tigress.” The system's voice recognition would catch this and the guide avatar might respond, “Excellent, you recognized the Hungry Tigress Jātaka! Indeed, it shows Prince Mahasattva offering himself to feed a starving tigress, exemplifying selfless generosity.” If the guess was wrong, the guide would gently correct them: “That's a good guess. Actually, this story is about King Sibi's sacrifice—let's explore how it unfolds.” In both cases, the student immediately knows whether they were on the right track and learns the correct narrative, much like a teacher would provide instant feedback in a tutoring session.

The system also tracks progress and provides subtle progress feedback. The VR lesson can be structured as a series of micro-activities or discoveries (for instance, finding and examining all key murals in the cave might be a goal). The system notes which important spots the user has visited and, if some are missed, will prompt the user before concluding: “Before you leave, note that there's another significant mural on the right wall you haven’t explored yet.” As users complete each section (e.g., fully explore a mural and answer a question about it), a gentle indicator updates (such as ticking off that mural in a virtual notebook). This gives learners a sense of accomplishment and orientation (“I’ve covered these topics, a few more to go”). It also provides teachers or facilitators monitoring the session a gauge of how far the student progressed and what they interacted with, useful for debriefing afterward (Tao et al., 2025).
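A minimal sketch of this progress tracking is given below. It records which key murals have been visited and produces an exit reminder if any remain. The class, the mural identifiers, and the wording template are hypothetical placeholders rather than the system's actual data model.

```python
from typing import Iterable, List, Optional


class ProgressTracker:
    """Track which key murals a learner has explored."""

    def __init__(self, key_murals: Iterable[str]) -> None:
        self.key_murals: List[str] = list(key_murals)
        self.visited = set()

    def mark_visited(self, mural_id: str) -> None:
        if mural_id in self.key_murals:
            self.visited.add(mural_id)

    def missing(self) -> List[str]:
        """Key murals not yet explored, in their original order."""
        return [m for m in self.key_murals if m not in self.visited]

    def exit_prompt(self) -> Optional[str]:
        """Reminder to show before leaving, or None if everything was seen."""
        remaining = self.missing()
        if not remaining:
            return None
        return f"Before you leave, note that you haven't explored: {remaining[0]}"
```

The same `visited` set could feed the notebook indicator for the learner and the summary a facilitator sees during debriefing.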

We incorporate a short debriefing phase at the end of the VR session. When the learner finishes exploring (either by their choice or after covering all required content), the system transitions to a calm virtual space (or simply stays in the cave) and the guide avatar poses a few reflective questions: “How did it feel to stand in front of these ancient paintings?” “What lesson from the stories resonated with you the most?” The student can respond freely (speaking or selecting from multiple-choice options). The system doesn’t judge these answers; rather, the aim is to encourage the learner to articulate their thoughts and feelings. The guide then offers a closing summary highlighting the key takeaways: for example, “From the murals here, you learned about the virtue of self-sacrifice in Buddhism, the cultural diversity of the Silk Road, and how technology can help preserve such heritage. We hope this experience inspires you to learn more and help protect our world heritage.” This recap reinforces the learning objectives and ties the personal experience back to broader themes, including an explicit mention of preservation values (for example, a note that the project itself is an effort to preserve heritage through technology). We found that this reflection phase helps consolidate what students gained from the VR and connects it to real-world significance.

Finally, user comfort and safety are carefully safeguarded. VR can be intense for newcomers, so the system monitors for signs of discomfort (for example, a user remaining still for too long or verbally indicating dizziness), and the guide gently intervenes when they appear, for instance by offering to teleport the user to a neutral, brightly lit area if they feel overwhelmed. Periodically, the guide asks “Are you okay to continue?”, especially after any intense sequence. This ensures the experience remains positive and user-centered, prioritizing the learner's well-being as much as their learning.

User Study Design

After building the VR system and example content, we conducted a user study to evaluate its effectiveness on several fronts: immersion, gesture accuracy and skill learning, knowledge retention, and user engagement, compared to a more traditional learning method (Jennett et al., 2008). We focused the evaluation on a specific interactive task drawn from the murals—a culturally significant musical instrument depicted in Kizil art—as this allowed measurable performance comparison (UNESCO, n.d.). In the Kizil mural known as “Tian Gong and Musical Entertainment” (a scene of celestial musicians), the ancient Chinese lute Ruan Xian is shown being played. We developed a module where learners could virtually learn and practice the Ruan Xian as part of the mural's story, using hand-gesture recognition and audio feedback to teach basic playing techniques (plucking and strumming patterns depicted) (Volioti et al., 2023). We then compared this VR-based learning against a conventional video tutorial for the same instrument.

Participants

We recruited 20 participants (college-aged, mixed gender) with diverse backgrounds in music. To ensure a balanced comparison, participants were split into two equal groups of 10: an experimental group using the VR gesture-learning system, and a control group who learned via traditional video instruction. Each group had five participants with some prior musical instrument experience and five with no musical experience, and notably, none had experience with the Ruan Xian itself. This balanced design ensured we could evaluate the VR system's impact across both novices and those familiar with learning instruments (albeit a different one), while controlling for baseline skill differences.
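The balanced split described above can be expressed as a stratified assignment: participants are divided by prior musical experience, and each stratum is halved between the two conditions. The sketch below assumes random allocation within strata, which the study description does not state; the function name, group labels, and seed are illustrative.

```python
import random
from typing import Dict, List, Sequence, Tuple


def assign_groups(
    participants: Sequence[Tuple[str, bool]],
    seed: int = 0,
) -> Dict[str, List[str]]:
    """Split participants evenly between VR and video within each stratum.

    participants: (participant_id, has_music_experience) pairs.
    """
    rng = random.Random(seed)  # fixed seed keeps the allocation reproducible
    groups: Dict[str, List[str]] = {"vr": [], "video": []}
    for experienced in (True, False):
        ids = [pid for pid, exp in participants if exp == experienced]
        rng.shuffle(ids)
        half = len(ids) // 2
        groups["vr"].extend(ids[:half])
        groups["video"].extend(ids[half:])
    return groups
```

With 10 experienced and 10 inexperienced participants, each condition receives five of each, matching the design reported above.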

Procedure

All participants first underwent a pre-test to assess their baseline knowledge and skills. They filled out a questionnaire about their familiarity with the Ruan Xian instrument, any prior VR usage, and basic hand coordination skills. They also performed a simple finger gesture test (unrelated to Ruan Xian) to gauge initial gesture control ability (Volioti et al., 2023).

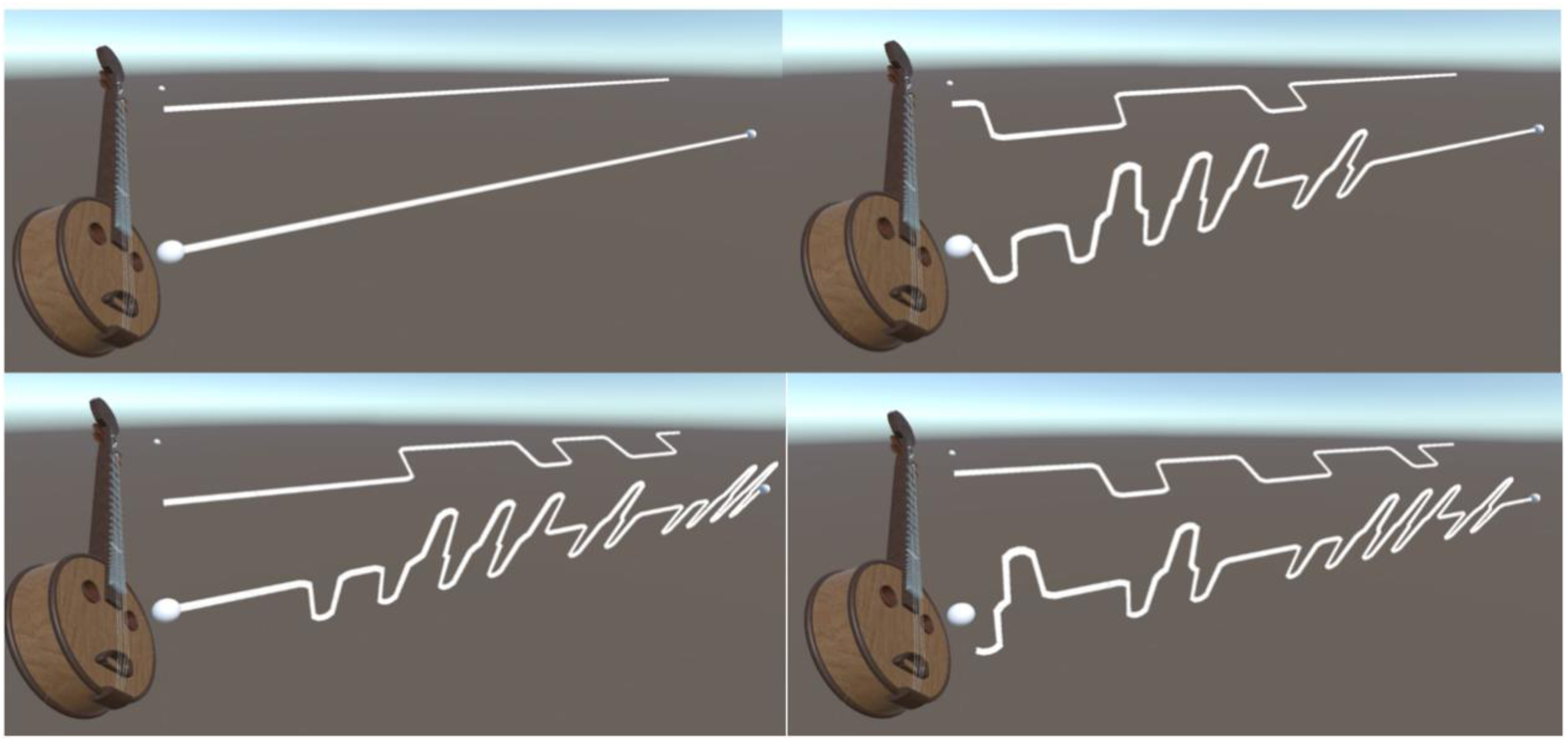

Each participant then proceeded with their assigned learning session. The experimental group entered our VR environment wearing the headset and haptic gloves, and followed an interactive Ruan Xian tutorial in VR—essentially an adapted lesson where a virtual instrument was placed in their hands and visual/auditory cues guided them to pluck strings in certain sequences (Figure 6). The system provided real-time feedback on their hand positions and timing: if they moved correctly, they would hear the proper musical notes and see a visual glow on the instrument; if they erred, the system would highlight the mistake and play a corrective sound or voice prompt (e.g., “move your index finger higher”) (Boutin et al., 2023; Gani et al., 2022). In contrast, the control group watched a video tutorial on a screen, demonstrating how to play the same basic Ruan Xian notes and rhythms, but with no interactivity or personalized feedback (Figures 7 to 9). They could imitate along with the video, but they did not receive any cues whether they were doing it right or wrong.
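The real-time feedback rule for the experimental group can be sketched as a comparison between an expected pluck and the detected one. The data structure, the 0.15-second timing tolerance, and the mapping from error type to cue are assumptions for illustration; the paper only states that correct actions trigger the note and a glow, while errors trigger a highlight and a corrective sound or voice prompt.

```python
from dataclasses import dataclass


@dataclass
class Pluck:
    string: int    # index of the string that should be (or was) plucked
    time_s: float  # time of the pluck in seconds from the start of the exercise


# Hypothetical cue table; the specific pairing of error and cue is assumed.
CUES = {
    "correct": "play note and show glow on instrument",
    "wrong_string": "highlight mistake and give voice prompt",
    "off_beat": "highlight mistake and play corrective sound",
}


def feedback_for(expected: Pluck, actual: Pluck, tolerance_s: float = 0.15) -> str:
    """Classify a detected pluck against the expected one."""
    if actual.string != expected.string:
        return "wrong_string"
    if abs(actual.time_s - expected.time_s) > tolerance_s:
        return "off_beat"
    return "correct"
```

For example, `CUES[feedback_for(Pluck(2, 1.0), Pluck(2, 1.4))]` selects the corrective-sound cue because the pluck arrives 0.4 s late.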

Experimental Group Received Complete Visual and Audio Feedback During the VR-Based Ruan Xian Exercise. VR = virtual reality.

Control Group Learned Ruan Xian According to a Traditional Video Tutorial.

Curve Drawn by LineRenderer.

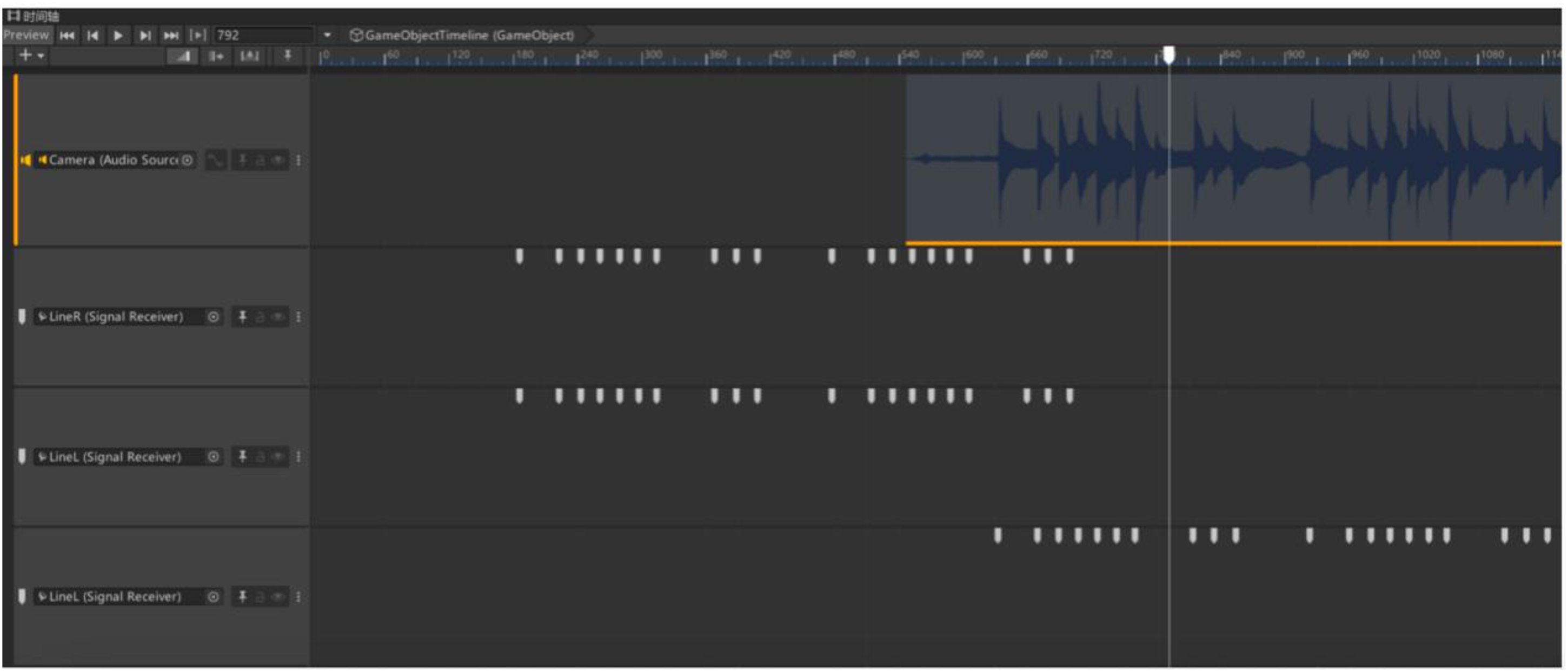

Music Rhythm Timeline.

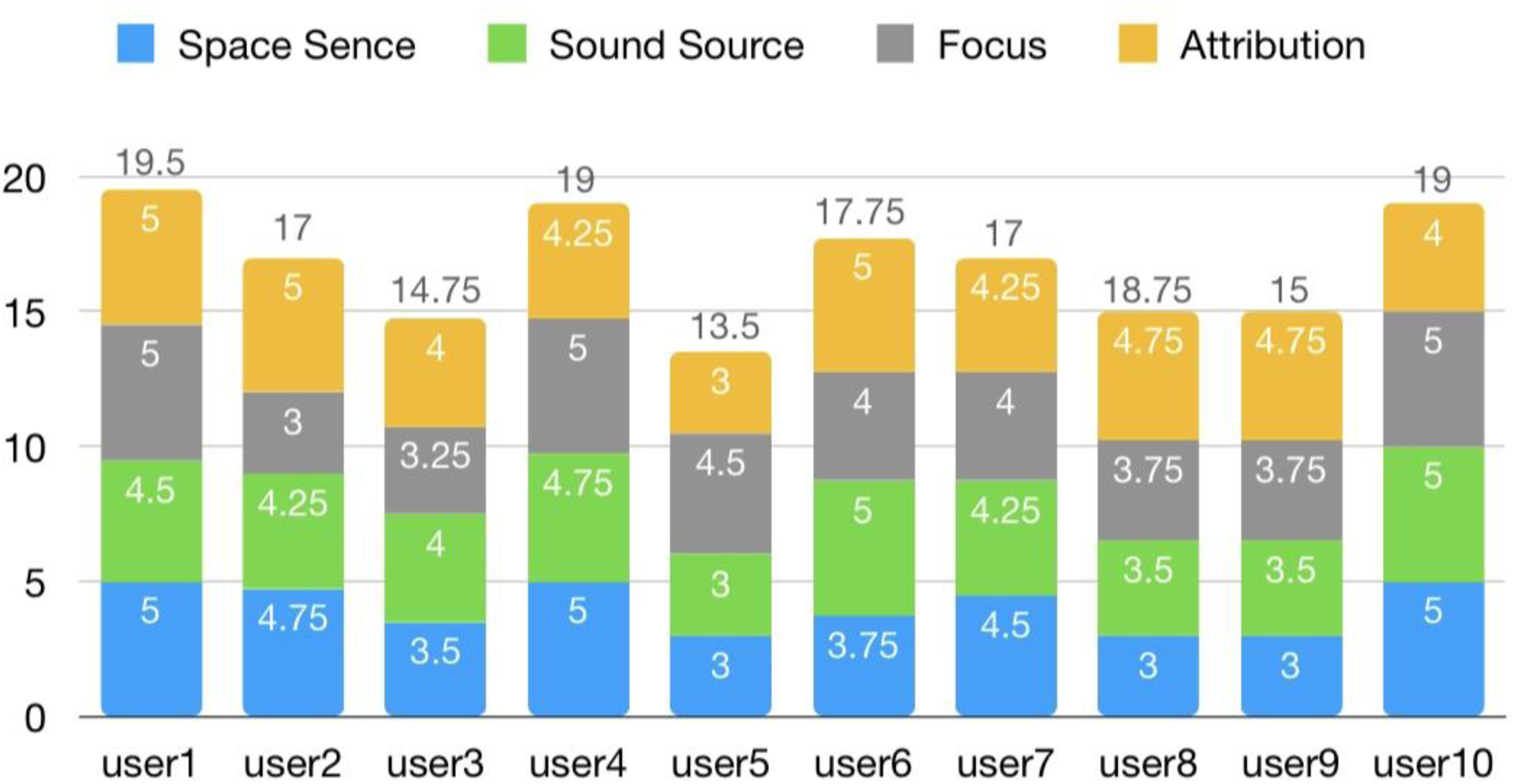

After this learning phase, we measured immersion and user experience. The experimental group, having used VR, and the control group, after watching the video, both completed an Immersion Questionnaire designed to capture their sense of presence and engagement. We adapted questions from a perceived immersion (PI) framework covering four dimensions: (1) room perception—did they feel surrounded by a complete virtual space or scene; (2) sound perception—could they locate and distinguish sounds clearly (important for the VR group's spatial audio vs the stereo audio in video); (3) attention—how absorbed were they in the activity, losing awareness of the real environment; and (4) attribution—did the controls and feedback feel natural and helpful for the task (Figure 10) (Hammond et al., 2023; Jennett et al., 2008). These questions were answered on a Likert scale. Additionally, participants were interviewed for qualitative feedback on their experience—what they found effective or challenging, and their overall enjoyment (Bareišytė et al., 2024).

User Scores in Four Factors in VR. VR = virtual reality.
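One plausible way to combine the four questionnaire dimensions into a single score is to average the item ratings within each dimension and then average the four dimension means. The sketch below assumes a 1 to 5 Likert scale and equal weighting; the aggregation rule, the dimension keys, and the function name are assumptions, since the exact scoring procedure is not specified.

```python
from statistics import mean
from typing import Dict, List

DIMENSIONS = ("room", "sound", "attention", "attribution")


def composite_immersion(responses: Dict[str, List[int]]) -> float:
    """Mean of the per-dimension means for one participant."""
    for dim in DIMENSIONS:
        ratings = responses[dim]
        if not ratings or any(r < 1 or r > 5 for r in ratings):
            raise ValueError(f"invalid ratings for dimension {dim!r}")
    # Equal weight per dimension, regardless of how many items each has.
    return mean(mean(responses[dim]) for dim in DIMENSIONS)
```

Averaging per dimension first prevents a dimension with more items from dominating the composite.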

Finally, to assess learning outcomes, participants performed a post-training test on the Ruan Xian. We asked each to play a short sequence of notes they had just learned, this time without any guidance or feedback (the experimental group removed the headset and gloves; both groups just had the real or virtual instrument without cues). This test was recorded for analysis (Figure 8). Specifically, we evaluated gesture accuracy (whether participants used the correct finger placements and motions as taught) and timing consistency (whether they hit the beats in the correct rhythm; Figure 9) (Volioti et al., 2023). These performance metrics were quantified by reviewing the recordings (for the control group) and logs from the VR system (for the experimental group, which automatically tracked their finger movements during the test). We also noted whether participants could recall the meaning and context of the instrument and its cultural significance from the tutorial (a simple verbal quiz for knowledge retention).
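The two performance metrics can be made concrete with a short sketch. Gesture accuracy is computed here as the percentage of required gestures that were executed correctly, and timing deviation as the mean absolute difference between expected and actual onset times. Both definitions, along with the gesture labels and function names, are our assumptions about how such metrics might be operationalized.

```python
from typing import Sequence


def gesture_accuracy(expected: Sequence[str], performed: Sequence[str]) -> float:
    """Percentage of required gestures performed correctly, position by position."""
    if not expected:
        raise ValueError("expected sequence must not be empty")
    # Gestures missing from a shorter performance count as incorrect.
    correct = sum(1 for e, p in zip(expected, performed) if e == p)
    return 100.0 * correct / len(expected)


def mean_timing_deviation(expected_s: Sequence[float], actual_s: Sequence[float]) -> float:
    """Mean absolute difference between expected and actual onset times."""
    if not expected_s or len(expected_s) != len(actual_s):
        raise ValueError("sequences must be non-empty and of equal length")
    return sum(abs(a - e) for e, a in zip(expected_s, actual_s)) / len(expected_s)
```

Dividing by the number of required gestures, rather than the number performed, penalizes a participant who stops early.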

In summary, our user study collected both quantitative data (immersion ratings, performance metrics on playing accuracy/timing) and qualitative data (user feedback and observations). Next, we present the results comparing the VR gesture-learning experience to the traditional video learning (Bareišytė et al., 2024).

Results

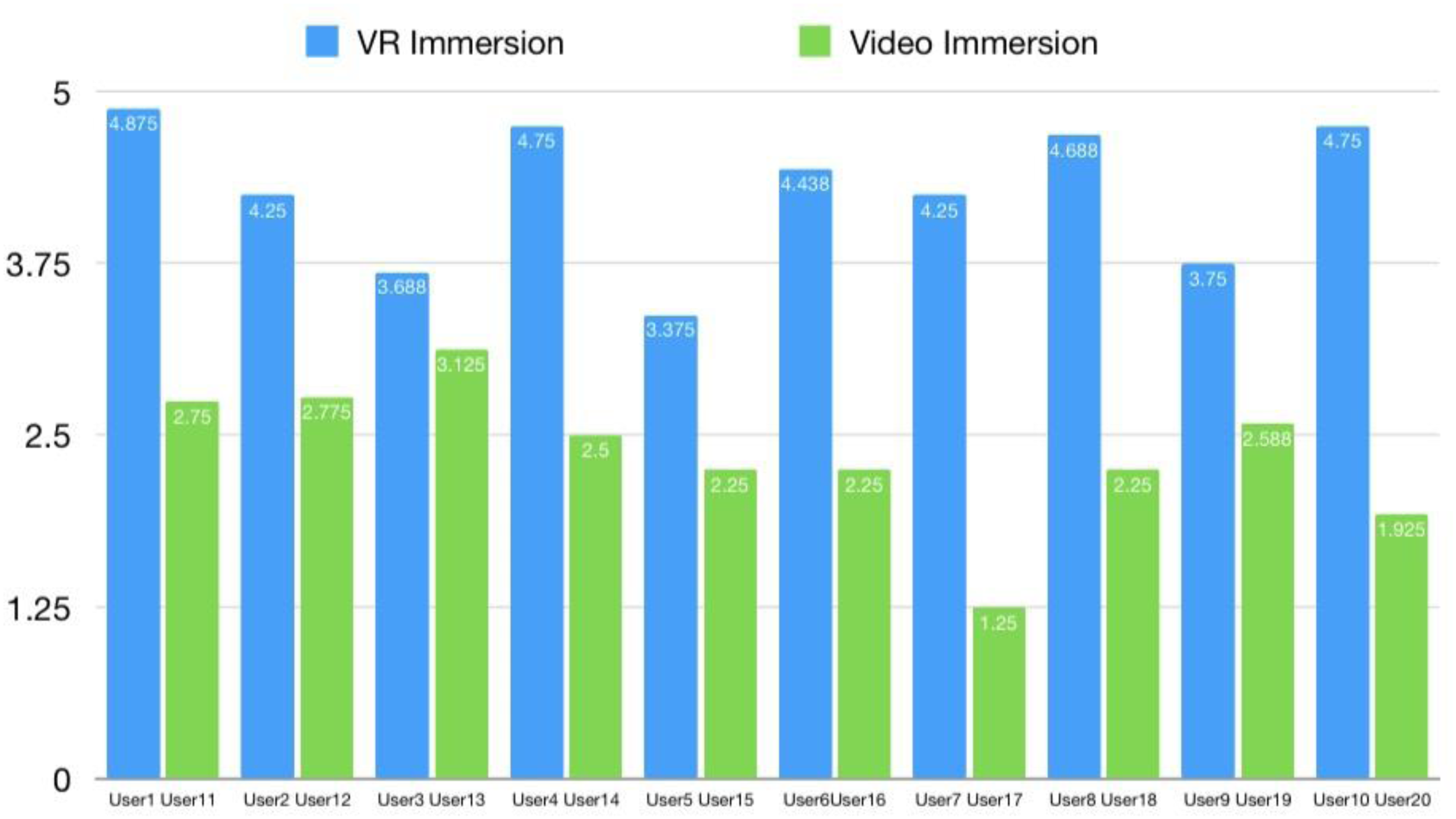

Immersion

The VR system substantially improved participants’ sense of immersion compared to the traditional method. On a composite 5-point scale (combined score derived from the PI questionnaire dimensions) (Jennett et al., 2008), the experimental group reported an average immersion score of 4.28 (SD = 0.49), whereas the control group averaged 3.29 (SD = 0.78) (Figure 11). The VR learners often described feeling “present in the scene,” surrounded by the virtual cave and instrument (Cummings & Bailenson, 2016), while video learners remained aware they were just watching a screen. The difference in immersion scores was statistically significant (independent-samples t-test, p < .01), indicating the controller-free, multisensory VR provided a markedly stronger feeling of presence and engagement than the flat video-based approach (Cummings & Bailenson, 2016). In particular, VR participants gave high ratings to the room perception and attention sub-scales, reflecting that the 360° environment and interactive feedback kept them focused and oblivious to outside distractions (Makransky & Petersen, 2021). Some control group participants, by contrast, noted their attention wandered during the video.

Immersion Scores of the Users.
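As a consistency check, the reported means and standard deviations can be plugged into a pooled-variance independent-samples t statistic. The sketch assumes 10 participants per group and equal variances; the critical value 2.878 is the standard two-tailed t-table entry for 18 degrees of freedom at α = .01. The function name is illustrative.

```python
import math
from typing import Tuple


def pooled_t(m1: float, s1: float, n1: int,
             m2: float, s2: float, n2: int) -> Tuple[float, int]:
    """Student's t statistic and degrees of freedom from summary statistics."""
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * s1 ** 2 + (n2 - 1) * s2 ** 2) / df
    se = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    return (m1 - m2) / se, df


# Reported immersion scores: VR 4.28 (SD 0.49), video 3.29 (SD 0.78); n = 10 each (assumed).
t_stat, df = pooled_t(4.28, 0.49, 10, 3.29, 0.78, 10)
T_CRIT_01_DF18 = 2.878  # two-tailed critical value for df = 18, alpha = .01
significant = t_stat > T_CRIT_01_DF18
```

Under these assumptions the statistic is about 3.40 on 18 degrees of freedom, which exceeds the critical value and is therefore consistent with the reported p < .01.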

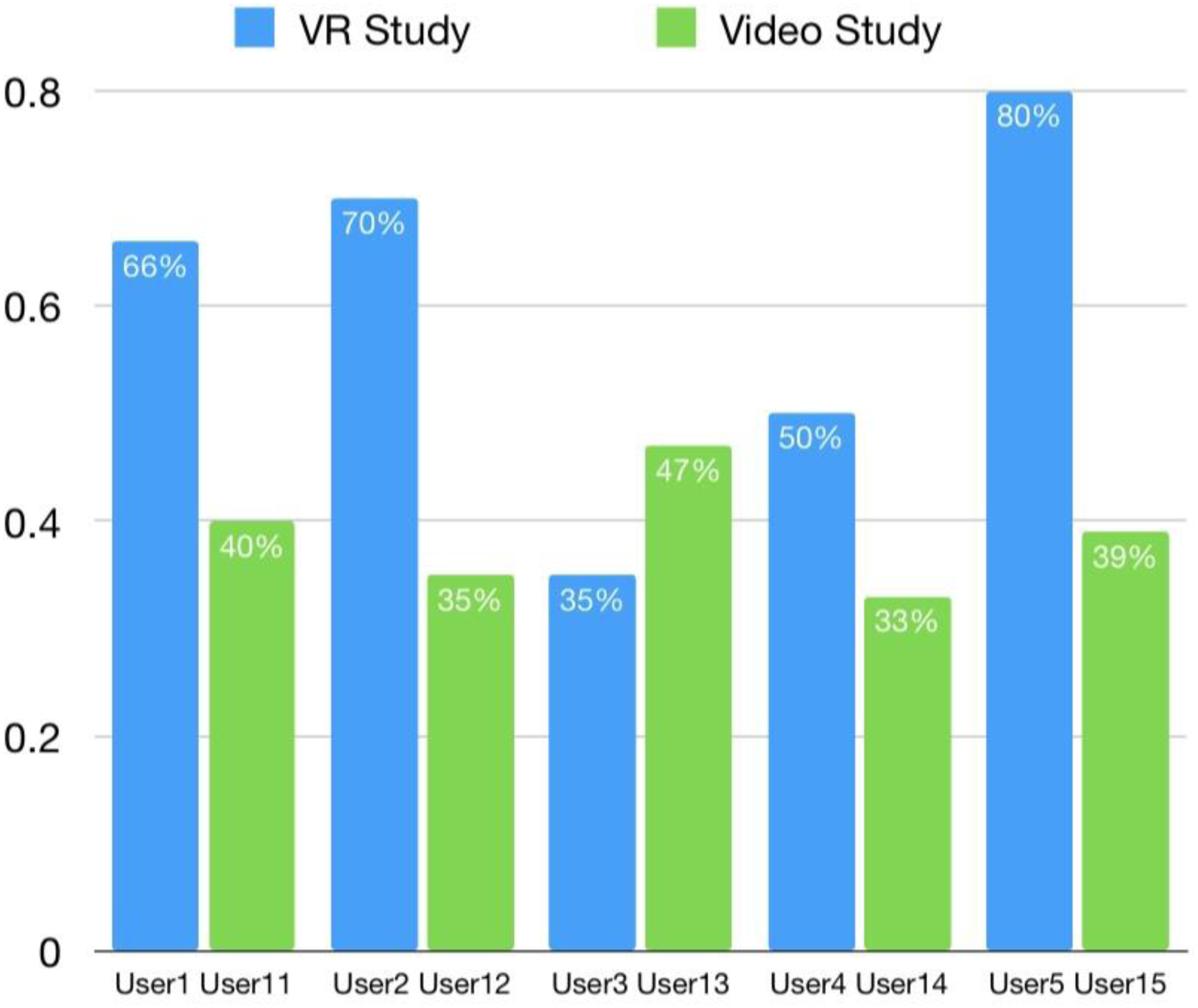

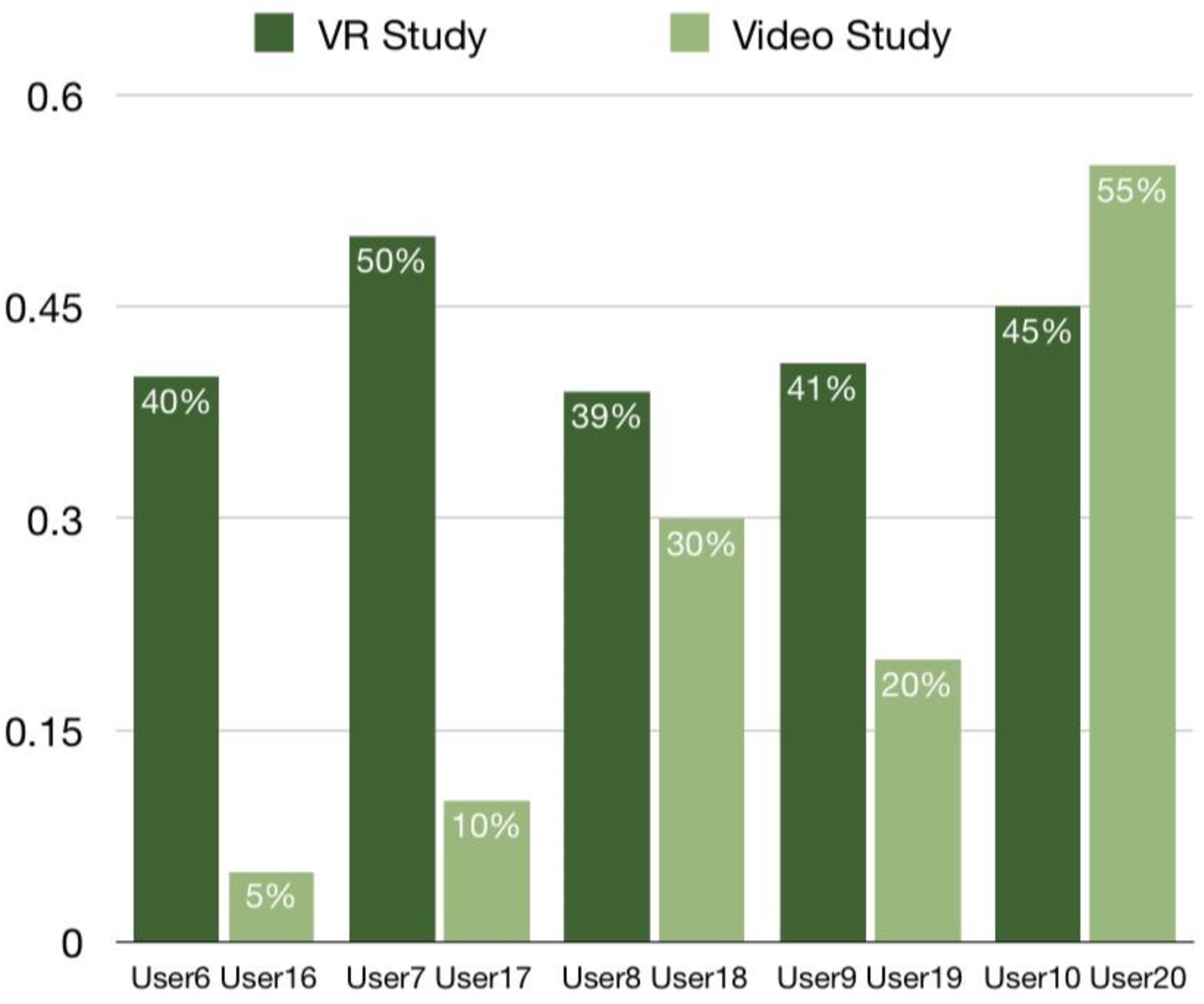

Gesture Accuracy and Skill Performance

Objective performance metrics showed the VR group learned the Ruan Xian technique more effectively (Sigrist et al., 2013). During the final unguided practice test, the experimental group achieved an average gesture accuracy of 51.6% (SD = 14.4%), whereas the control group achieved only 31.4% (SD = 15.0%). This accuracy score was based on how many of the required finger positions and motions were correctly executed. Likewise, timing consistency was better in VR: the experimental group's average timing deviation was 55.4 s (SD = 26 s) off from the ideal tempo (over the length of the exercise), compared to 94.6 s (SD = 62 s) for the control group. In other words, the VR-trained participants not only learned the finger movements more accurately, but kept a steadier rhythm when performing, while control participants tended to falter or pause more often. The differences suggest that real-time multimodal feedback in VR (visual cues when a finger was misplaced, audio rhythm guidance, etc.) helped users quickly correct mistakes and internalize the skill, leading to better post-training performance (Islam & Lim, 2022; Seymour et al., 2002). These improvements in both accuracy (+20 percentage points) and timing (about 41% lower mean deviation) are notable given the short training period, highlighting the effectiveness of the interactive, embodied learning approach.
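The two summary figures quoted above follow directly from the reported group means, as the short calculation below shows. The helper names are illustrative; the inputs are the means given in the text.

```python
def percentage_point_gain(treated_pct: float, baseline_pct: float) -> float:
    """Absolute difference between two percentages, in percentage points."""
    return treated_pct - baseline_pct


def relative_reduction(treated: float, baseline: float) -> float:
    """Reduction of treated relative to baseline, as a percentage of baseline."""
    return 100.0 * (baseline - treated) / baseline


# Gesture accuracy: 51.6% (VR) vs 31.4% (video).
accuracy_gain = percentage_point_gain(51.6, 31.4)
# Timing deviation: 55.4 (VR) vs 94.6 (video), lower is better.
timing_improvement = relative_reduction(55.4, 94.6)
```

Rounded to one decimal place, the accuracy gain is 20.2 percentage points and the timing deviation is 41.4% lower for the VR group.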

Learning Outcomes and Knowledge Retention

Beyond motor skills, we observed differences in how well participants understood the content. In a brief oral quiz about the cultural context (e.g., “What is the significance of the Ruan Xian instrument in the mural?”), 7 out of 10 VR group participants could correctly recall that it was an ancient lute depicted in a celestial music scene and mention its role, compared to only 3 out of 10 in the control group. Moreover, VR participants often added descriptive details unprompted (e.g., describing the cave setting or how the music felt), indicating a richer memory trace. Many VR users formed an emotional or narrative connection (“I felt like I was actually performing in the cave orchestra”), which seemed to aid retention. In contrast, several control users admitted the video felt abstract and they “zoned out” or didn’t remember much beyond the finger movements demonstrated. While our sample size is small for robust statistical claims on knowledge retention, these trends align with the idea that active immersive learning leads to deeper cognitive processing of the material (Makransky & Petersen, 2021).

User Experience Feedback (Qualitative)

Interviews and observation notes provided insight into the learners’ experiences:

Participants in the VR group overwhelmingly reported the experience as engaging and enjoyable. They described the session as “fun and immersive,” often highlighting how the real-time feedback helped them improve. “It was like the system was a personal tutor: when I moved my hand wrong, it instantly showed me how to fix it,” said one user, who felt this saved time compared to trial-and-error with a video (Sigrist et al., 2013). Many noted that being inside the virtual cave and seeing the instrument in context made the learning more meaningful: “I wasn’t just practicing music, I felt part of a story.” Several VR participants commented that the experience was less intimidating than a traditional class or video; mistakes didn’t feel embarrassing because feedback was gentle and the environment was playful.

A few challenges were noted for the VR system. At first, some users had trouble getting oriented to the gesture interface, for example, understanding where to position their hands so that the system would correctly track the Ruan Xian strings. “At the beginning I kept missing the virtual strings because I wasn’t used to mid-air tapping,” one participant laughed, though adding that “after a bit of practice it clicked.” Indeed, most users reported that these initial adaptation issues were overcome within minutes, suggesting a short learning curve. Another minor issue was physical fatigue: two participants mentioned that holding their arms up (to play the virtual instrument) grew tiring faster than expected, something to consider in future design (perhaps by allowing more breaks or posture adjustments). Importantly, no participants reported any motion sickness or serious discomfort; the gentle pacing and ability to move at will likely helped.

In stark contrast, the control group participants often expressed frustration. Many found it challenging to learn complex finger movements from the video alone, especially with no feedback on whether they were doing it right. One person noted, “I couldn’t tell if my hand position was correct, so I kept rewinding the video and still wasn’t sure.” Several control subjects said they felt less motivated to continue practicing without any interactive element: “It's hard to stay interested when it's just a video and you’re not sure if you’re improving,” said one. Observationally, some in the control group grew visibly frustrated, sighing, pausing the video frequently, or giving up on certain parts of the practice. This contrasts with the VR group, where we observed many participants continuing to play around even after completing the required task, indicating higher engagement.

Overall, the results demonstrate that the controller-free, multisensory VR system was effective in enhancing immersion, performance, and engagement in learning the cultural heritage material (Sigrist et al., 2013). Participants using VR were more present, learned the gestures more accurately, stayed on rhythm better, and enjoyed the process more than those using traditional media. Qualitatively, the VR approach appears to boost learners’ confidence and interest—some even kept practicing longer than instructed, purely out of enthusiasm (Figures 12 and 13). Meanwhile, the control group's difficulties underline the importance of interactive feedback in acquiring new skills and knowledge (Islam & Lim, 2022).

Performing Accuracy of the Users With Basic Skills of Instruments (%).

Performing Accuracy of the Users Without Any Skills of Instruments (%).

These findings align with our expectations that an embodied VR environment can provide superior support for learning complex, sensorimotor tasks (like playing an instrument) and for contextual learning (situating the skill in a meaningful story) (Islam & Lim, 2022; Makransky & Petersen, 2021). In the next section, we discuss the implications of these results, along with considerations for sustainable heritage education and the broader impact of such technology.

Discussion

Advancing Heritage Education Through Immersive Design

The development and evaluation of the Kizil VR teaching experience exemplifies how interdisciplinary collaboration can yield innovative solutions at the crossroads of technology, education, and cultural heritage. We integrated insights from heritage studies (to ensure authentic content and context) (Bendicho, 2013), from immersive learning research (to leverage VR's engagement effects), and from sustainable development frameworks (to align with inclusion and preservation goals) (United Nations, 2025). This deliberate blending of perspectives was crucial to creating a system that speaks to multiple stakeholders: museum curators, teachers, technologists, cultural policymakers, and of course the learners themselves. By focusing on the human impact—how this helps people learn and care about heritage—we avoided technology for technology's sake and kept the design intent clear across disciplines.

Our approach resonates with emerging ideas of telexistence and haptic democratization in new media. Telexistence technology, as Lee (2024) describes, can bridge distances and make remote experiences tangible, essentially allowing people to be “present” in places they physically aren’t. However, Lee also cautions that these technologies must be developed to empower and include people rather than alienate or isolate them. Our VR system is a practical realization of that vision: it uses immersive telepresence and rich haptic feedback to bridge the gap between a learner and a far-off heritage site—bringing Kizil to anyone, anywhere—while ensuring the experience is inclusive and user-centric. We are not simply building a high-tech gadget, but a bridge that carries knowledge from the past to learners of today across barriers. This democratization of access reflects what Lee frames as striving for equity with emerging media. Cultural education has often been a luxury for the few (those who can travel to sites or attend prestigious schools); our project treats it as a shared resource for the many, enabled by technology.

Likewise, principles of inclusive design and what has been termed “creative democratization” guided our development. Inclusive design means accommodating diverse users in the experience, exemplified by our controller-free, multimodal interaction that can be used by novices, people with limited mobility, and speakers of different languages. The creative democratization aspect suggests technology should allow people to participate in cultural creation or interpretation, not just consume passively. In our current project, users are primarily consuming an experience, but even within that, they are co-creating their path by choosing what to explore and how to interact (each user's journey in the cave is unique). We envision extending this further: for example, allowing students to build their own virtual exhibits from what they learned, or enabling local communities to add their narratives or perspectives into the VR as optional layers (imagine an Uyghur cultural perspective voice-over for Kizil, contributed by the local community). This would turn learners into creators and stewards of the digital heritage content, truly democratizing the cultural discourse.

Balancing Authenticity and Engagement

One challenge we encountered is balancing historical authenticity with creative interactivity. To truly preserve heritage values, the VR must faithfully represent the art and context (the colors, designs, spiritual meanings should not be distorted) (Bendicho, 2013). Yet, to engage learners, we sometimes introduce creative elements—animations, interactive story events—that go beyond what's literally on the cave walls. We navigated this by working closely with historians and art experts, and grounding any embellishments in canonical sources or logical inference. For instance, if we gave King Sibi spoken dialogue in the VR reenactment, we based his words on textual versions of the Jātaka tale. This ensures we don’t stray into pure fiction or misleading interpretation. This experience underlines the importance of interdisciplinary teamwork: technologists working hand-in-hand with historians, educators, and cultural advisors to maintain credibility while innovating. Future projects in immersive heritage should emulate this model—involve curators and scholars early in the design to vet content accuracy, and involve designers in heritage discussions to brainstorm engaging yet respectful ways to present the material (Bendicho, 2013).

Accessibility and Scalability

Another consideration is access to the required technology. While VR hardware is far more accessible than a decade ago, it's still not ubiquitous, especially in under-resourced schools or regions. If our aim is to reduce inequalities (SDG 10) in access to cultural education, we must plan for how to get this technology into the hands of those who could benefit most—perhaps through partnerships with educational institutions, government grants to set up VR labs in rural schools, or traveling VR kits provided to museums and community centers (United Nations, 2025). The cost of hardware and the need for technical support could otherwise become a barrier that ironically reintroduces inequality. We see solutions in the form of community VR stations (e.g., a local library with a VR setup for public use) and adjusting our software to run on lower-cost or even mobile VR platforms as they improve.

Content longevity is also an issue: digital projects can become obsolete as software and hardware evolve. From a sustainability perspective, we need to preserve not just the real murals but also the digital experience itself. We are addressing this by using open, cross-platform standards where possible, such as OpenXR for runtime portability and glTF 2.0 for 3D asset interchange, which also has ISO/IEC recognition (The OpenXR™ Specification; glTF™ 2.0 Specification; Khronos glTF 2.0 Released as an ISO/IEC International Standard). This makes it easier to port the experience to future systems without a complete rebuild. Even so, VR content, like films or digital archives, requires long-term maintenance to remain functional and publicly accessible.
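One small, concrete aid to this kind of portability is confirming that exported assets declare glTF 2.0 before they are archived. The sketch below is an illustration under stated assumptions, not the project's actual tooling: it inspects only the `asset.version` field that the glTF 2.0 specification requires in a JSON-based `.gltf` document, and the helper name and sample document are hypothetical.

```python
import json

def declares_gltf2(gltf_text: str) -> bool:
    """Return True if a JSON .gltf document declares a 2.x asset version.

    This is a version check only; it does not validate the rest of the file.
    """
    try:
        doc = json.loads(gltf_text)
    except json.JSONDecodeError:
        return False
    if not isinstance(doc, dict):
        return False
    asset = doc.get("asset")
    if not isinstance(asset, dict):
        return False
    version = asset.get("version")
    return isinstance(version, str) and version.split(".")[0] == "2"

# Hypothetical minimal documents for illustration
sample_ok = '{"asset": {"version": "2.0", "generator": "example-exporter"}}'
sample_old = '{"asset": {"version": "1.0"}}'
sample_missing = '{"scenes": []}'

print(declares_gltf2(sample_ok), declares_gltf2(sample_old), declares_gltf2(sample_missing))
```

A check like this could run as part of an archival step, with full conformance testing left to a dedicated validator.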

User Feedback and Iterative Design

The user testing was invaluable in refining the system. Early pilot sessions (prior to the formal study) revealed, for example, that some students found the initial haptic feedback intensity surprising or even distracting. We responded by dialing the default vibration strength down, and providing settings for users or instructors to adjust it. This way, the tactile cues accentuate the experience without overwhelming. We also learned which narrative elements resonated most. Interestingly, many students reported that the emotional impact of the stories was much stronger in VR: one student shared, “I had read about these Jātaka stories before, but in VR I felt the sacrifice—it was almost real.” This kind of comment highlights VR's ability to generate empathy and emotional engagement, which is a double-edged sword (Herrera et al., 2018; Madary & Metzinger, 2016). On the one hand, emotion can greatly enhance learning (as emotional arousal aids memory and empathy broadens understanding); on the other, we must be careful not to traumatize or overwhelm learners, especially younger ones. We addressed this by pacing the experience—ensuring moments of intensity are followed by calm periods for reflection—and by having the guide avatar contextualize and reassure. For example, after the dramatic King Sibi scene, the guide reminds the student that “no one was truly harmed in the end; it was a test of virtue,” to alleviate any lingering distress. This kind of emotional modulation is a lesson we impart to future immersive content creators: leverage VR's power to engage the heart, but also provide grounding and comfort (Madary & Metzinger, 2016).
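The adjustable vibration strength described above can be pictured as a conservative default combined with a user- or instructor-controlled scale. This is a minimal sketch with assumed names and values; the default of 0.4 and the 0 to 1 intensity range are hypothetical and do not describe the system's real settings.

```python
from dataclasses import dataclass

@dataclass
class HapticSettings:
    """Hypothetical haptic configuration with a reduced default strength."""
    default_intensity: float = 0.4   # assumed reduced default on a 0..1 scale
    user_scale: float = 1.0          # multiplier set by the learner or instructor

    def effective_intensity(self, cue_strength: float = 1.0) -> float:
        """Scale a cue by the default and user settings, clamped to [0, 1]."""
        raw = self.default_intensity * self.user_scale * cue_strength
        return max(0.0, min(1.0, raw))

default_level = HapticSettings().effective_intensity()
muted_level = HapticSettings(user_scale=0.0).effective_intensity()
capped_level = HapticSettings(user_scale=5.0).effective_intensity()

print(default_level, muted_level, capped_level)
```

Clamping the result keeps an instructor's adjustment from pushing any single cue beyond the device's maximum, while a scale of zero gives a haptic-free mode.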

Wider Implications and Future Work

Our project demonstrates one way to achieve sustainable, inclusive heritage education, and we believe the model can scale far beyond Kizil. The methodology—digitize a heritage site, design multisensory interactions around its stories, align with educational and sustainability goals—is broadly applicable. One can imagine similar VR learning experiences for Mayan temples, Egyptian tombs, medieval cathedrals, indigenous cultural sites, and more. The specifics would differ (each heritage has unique stories and sensory elements), but the blueprint of “immerse, interact, and inspire” holds true. By sharing our case study, we contribute to the growing body of knowledge on using VR for heritage sustainability and education. We show that when done thoughtfully, VR doesn’t isolate people in a tech bubble; it can connect them more deeply with human culture and even with each other. In fact, a compelling extension would be multiuser VR sessions, where students from different countries explore the same virtual cave together and discuss in real time—fostering cross-cultural dialogue and mutual understanding (Hein et al., 2022; Knutzen et al., 2025). This social dimension (imagine Chinese and American students jointly experiencing Kizil and sharing perspectives) could indirectly support goals like peace and partnership (SDGs 16 and 17) by building empathy across borders.

Our initial positive results give us confidence that VR, wielded with purpose, can indeed enhance learning and make it more inclusive. To temper this enthusiasm, we acknowledge a few limitations: the current evaluation had a relatively small sample size (20 participants) and focused on a single type of interactive lesson (musical instrument training). In future research, we intend to conduct studies with larger and more diverse participant groups, including younger students and possibly international classrooms, to validate and extend our findings. We also plan to test different content types—for example, using a VR system to teach about traditional art conservation techniques or ancient languages—to see if the benefits generalize to other kinds of heritage learning. Additionally, while our design strove to be inclusive, the requirement of a VR headset and haptic gear means there is a technological learning curve for some users. A few participants, as noted, needed time to adapt to interacting in VR. Future versions could incorporate an onboarding tutorial (a brief “VR 101” practice session) to help users get comfortable before diving into the historical content. We are also exploring integrating more affordable haptic solutions or even haptic-free options (using just visual/audio cues to simulate touch, as an entry-level mode) to accommodate schools that might not have glove devices (Elbaggari, 2022).

In conclusion, the Kizil VR project shows what is possible when emerging media are guided by educational and ethical goals. It stands at the confluence of preserving the past and innovating for the future. By emphasizing user experience, sensory richness, and alignment with societal needs (like education access and heritage conservation), we ensured that this high-tech venture remains fundamentally human-centered. As one collaborator phrased it, “We are not just building a virtual cave; we are building a bridge: a bridge that carries knowledge from the past to learners of today, across distances and barriers.” With that spirit, we look forward to expanding this work and seeing many more such virtual bridges built around the world.

Conclusion

The exploration of the Kizil Cave murals through a controller-free, multisensory VR learning experience offers a compelling vision for the future of heritage education—one that is immersive, inclusive, and impactful (Radianti et al., 2020). We set out to solve a dual problem: how to educate people about a fragile, remote cultural treasure, and how to do so in a way that anyone can participate and benefit. Our full-body VR approach demonstrated that it is possible to bring learners “into” a heritage site virtually, allowing them to engage with ancient art and narratives in a deeply experiential manner (Cummings & Bailenson, 2016). By removing traditional barriers (both the physical barrier of geographic distance and the technical barrier of complex interfaces), we created an educational space where diverse learners can connect with history on a personal level (O’Connor et al., 2021). The incorporation of visual, auditory, and haptic elements meant that learning was not confined to the intellect, but also touched the senses and emotions—a holistic approach that led to more profound understanding (Sigrist et al., 2013).

This project underscores that technology, when guided by humanistic goals, can greatly enhance sustainable development targets in education and culture. In advancing quality education (SDG 4), we showed that learning about heritage can be far more engaging and effective when students are active participants in an immersive narrative rather than passive recipients of information (United Nations, 2022). The VR experience became a vivid memory for learners, likely to stay with them much longer than a textbook chapter (Makransky & Mayer, 2022). In addressing reduced inequalities (SDG 10), we put the democratization of cultural access front and center (United Nations, 2025). A student from a rural village or a person with limited mobility can now stand in a legendary cave and closely examine its art—an opportunity previously reserved for a privileged few. Here, technology acted as an equalizer, reinforcing the notion that everyone deserves access to humanity's cultural heritage. In terms of heritage protection (SDG 11.4), our work contributes both by directly reducing the need for physical interaction with a vulnerable site (thereby lowering risk of damage) (Agnew, 2010), and by cultivating a global audience that values and wants to protect such sites. Every learner who comes out of the VR with a sense of wonder or respect for the Kizil murals is potentially a new advocate for cultural preservation.

Our findings and user feedback confirm that VR enriched with multisensory engagement can make heritage education more equitable and impactful (Yu & Xu, 2022). Students in our trials not only learned factual information (dates, names, symbolism), but did so with a level of enthusiasm and personal connection that is hard to achieve with conventional methods (Jongbloed et al., 2024). Many described the experience as “eye-opening” or “inspiring,” and importantly, as fun—not typically an adjective for history lessons. This positive emotional response matters because it can spark further interest: a student who enjoys a virtual visit to Kizil may go on to learn about other Silk Road art, or simply become more curious about world history and cultural diversity. In this way, the project's impact ripples outward beyond just the immediate lesson.

We also gleaned valuable design lessons for future projects. One is that embodiment and interactivity need to be purposefully directed—adding technology features for their own sake can distract or overwhelm (Makransky & Petersen, 2021). Instead, success lies in integrating the right features that serve the story and learning goals. Another lesson is the paramount importance of cultural sensitivity and accuracy; working with domain experts (historians, archaeologists, local cultural representatives) was key to keeping the experience authentic and respectful (Bendicho, 2013). Additionally, providing user options and adaptability proved crucial for inclusivity: users have different comfort levels and abilities, so a one-size-fits-all immersion can inadvertently exclude some (O’Connor et al., 2021). Our design of multiple input modes and adjustable feedback intensity addressed this, and we recommend future developers of educational VR do the same.

In summary, the marriage of VR technology with cultural heritage content—under the guidance of pedagogical and sustainability values—is a promising pathway to equitable, high-quality education and heritage conservation (Radianti et al., 2020). The Kizil Cave murals VR project is just one example, but a successful one, of how this fusion can look in practice. It virtually transports a piece of the Silk Road into classrooms and homes around the world, teaching not just art history but also empathy, creativity, and global awareness (Wei et al., 2025). As we move forward, we envision expanding such initiatives and creating a virtual library of world heritage sites accessible to all. In doing so, we keep the torch of cultural knowledge burning brightly and pass it to the next generation in a form they find illuminating and inclusive. Just as the Kizil murals themselves were a convergence of cultures and ideas along the Silk Road, our project is a convergence of past and future—an ancient legacy preserved through cutting-edge means, for the benefit of present and future generations.

Footnotes

Ethical Statement

This study was conducted according to the guidelines of the Declaration of Helsinki and received academic ethics review and approval from the review committee of the Ministry of Social Science, Changshu Institute of Technology (No. CIT MSS-E-2024-041). Informed consent was obtained from all subjects involved in the study. Written informed consent has been obtained from the participant(s) to publish this paper.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the China Postdoctoral Science Foundation and by the Research Project of the Xinjiang Uygur Autonomous Region Key Research and Development Program, “Digital Restoration and Immersive Experience Demonstration of Key Cave Murals in the Kizil Caves” (grant numbers 2024M751597 and 2022B03035-3).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.