Abstract

This study explores the socio-technological barriers to the adoption of artificial intelligence (AI)-powered solutions in five countries of the global south – South Africa, Lesotho, Eswatini, Botswana and Zimbabwe. Through 20 in-depth interviews with key stakeholders, it examines the distribution and circulation of AI technologies within selected newsrooms. Furthermore, the article explores socio-technological obstacles to the integration of AI among journalists. Lastly, it examines the consequences of these socio-technological obstacles for journalism. The article specifically seeks to answer three questions: How are AI technologies integrated in southern African newsrooms? What are the socio-technological barriers attendant to the use of AI in selected news organisations of sub-Saharan Africa? What are the implications of these socio-technological barriers to the process of news production in these newsrooms? The article reveals the challenges hindering the development and deployment of AI in these organisations, and I highlight the detrimental effects of limited AI access, which places Southern African media organisations at a disadvantage on the global stage, perpetuating socio-technological disparities between the global north and global south. The findings underscore the urgency of addressing these barriers in order to reduce information inequality, increase efficiency and productivity and improve audience engagement. Reducing technological barriers and democratising AI integration can enhance the influence of Southern African media in promoting domestic and international equity. The article, therefore, proposes a model for AI adoption and the reduction of technological barriers in adopting these technologies. This study emphasises the need for collaborative efforts among policymakers, industry leaders and stakeholders to create an inclusive environment that maximises the potential of AI for the greater benefit of parts of Southern African societies.
The study adds to extant literature at the intersection of AI and media, particularly how AI technologies are impacting on specific communities of practice, like newsroom organisations.

Introduction

The artificial intelligence (AI) race, across the tech industry, is growing around the production and design of AI models that are faster, stronger and more profitable (Lindgren, 2023a). With this advent of AI technologies, and their almost universal ubiquity, several debates have emerged about the quotidian integration and usage of these technologies. These debates have centred on the ethics of AI, and the responsible use of these technologies (Dignum, 2019). Yet, in the developing world, the question of integration is still central to AI debates as the technologies percolate into society (Adamu & Nkwo, 2023).

To what extent, for example, will AI technologies remain completely value-free considering that they are an ‘…active social construct….’ (Lindgren, 2023a, p. 3)? How will these technologies make certain decisions and assumptions without reproducing inequalities as they interact with people in society? There are also enduring arguments about responsible AI (de-Lima-Santos, unpublished). This argument revolves around how AI can be made responsible. Bassett and Roberts (2023) argue that the notion of a responsible AI can be self-immolating because it assumes the existence of a normative model of AI built on the acceptance of AI in decision-making. Lastly, in this debate, there is also the question of how to ensure equity in the use of AI.

These arguments make an incorrect assumption – the assumption that AI has already been fully embraced in every context such that society is focused on its humanness, ethics, equity and responsible AI, because the stage of uptake, adoption, adaptation and usage has been passed. Yet, as AI technologies explode, the socio-technological barriers to their deployment ought to be understood. This is crucial in contexts like southern Africa, considered to be traditionally net importers of technologies like AI (Simon Ramaoka & Kraemer-Mbula, 2022). Jobin and Katzenbach (2023) agree, noting that AI takes shape as an important socio-technical institution in today's society. Thus, AI is not only technologically driven but also driven by people's capacity to adopt and adapt to it. This, in turn, is affected by socio-technological factors that may hinder the integration of these technologies. By socio-technological barriers, I adopt Mullakhmetov's (2018) definition (supported by Lindgren's (2023a) view) of the concept to mean technological change and its complex interactions with various elements like individual predispositions, existing cultures (for instance, journalism cultures), regulations, practices and cultural meanings at different scales. Lindgren (2023a, p. 6) articulates this clearly: ‘AI interaction with society cannot be understood according to any simple or universal “natural” laws. Rather, [the adoption of technology in] society is not only affected by a range of historical and contextual factors—it is also structured according to power relations by which technology that is beneficial for some may be perilous for others. Such relations of power must be the object of study for critical studies’.

This article is a qualitative study that explores and examines the socio-technological barriers to the deployment of AI technologies in five African countries’ newsrooms. The article answers three related questions: How are AI technologies integrated in selected Southern African newsrooms? What are the socio-technological barriers attendant to the integration of AI in these selected news organisations of sub-Saharan Africa? What are the implications of these socio-technological barriers to the process of news production?

The socio-technological barriers to the uptake of AI technologies within newsrooms ought to be understood in order to make sense of the affordances and capabilities of these technologies within these newsrooms. Considering that journalists have always been affected by technologies, it is important to understand these barriers and their implications from a journalistic perspective. Furthermore, a deep-cutting focus on techno-social factors hindering the integration of these technologies is an intellectually productive endeavour, especially in an African context where these institutions import most of the technologies from the West and elsewhere. Emerging technologies impose barriers on the acceptance, adoption and use of technologies. If the socio-technological factors hindering the integration of these technologies are appreciated, we can, therefore, problematise issues around the autonomy of journalism in the application of these technologies. The paper makes several interrelated arguments. First, it argues that a major socio-technical barrier is the ‘black-boxed’ nature of these AI technologies, which provokes a violent skepticism among African journalists. Second, deciding which AI technologies to use is a serious socio-technological barrier to the deployment of AI in African newsrooms. This has implications for news production, journalistic capital, journalistic habitus and newsroom power dynamics. This article addresses AI from a broader perspective. This means I address AI as forms of (newsroom) technologies integrated in news gathering, editing, production and dissemination. As such, it has both positive and negative disruptions to extant newsroom cultures, and it alters journalism practices. Therefore, I take AI as a gamut of technologies, a culture and a set of practices within newsrooms that, however, operates within the prisms of journalists’ agency.

South Africa, Eswatini (formerly Swaziland), Botswana and Zimbabwe are neighbours in Southern Africa, and Lesotho is an enclave within South Africa. South Africa has the most industrialised and technologically advanced economy on the African continent. As such, its newsrooms have advanced relatively well in terms of AI uptake and usage (Munoriyarwa et al., 2023). The stability of the economy has meant that South African newsrooms can relatively afford these technologies since most of them come at a cost. On the other hand, Botswana is economically stable, but it has small newsrooms compared to South Africa. The stability of its economy allows for stable investments in AI, not only in media, but in other industries as well. Zimbabwe's media have been decimated by an unstable and poorly managed economy. The newsrooms have been suppressed by the ruling party ZANU PF. This has led to a gradual attrition of media freedoms, unlike in Botswana and South Africa. A collapsed economy means that newsroom investments in technologies have been limited, especially relative to Botswana and South Africa. Lesotho has a stable and relatively free media. Eswatini has mimicked Zimbabwe in terms of eroding media freedoms. However, in all these countries, AI technologies have largely been imported from the West – America and Europe.

This article proceeds as follows. In the next section, I review literature on AI integration in newsrooms. This is followed by a section which deals with the theory of technological appropriation (Feenberg, 2008). It is followed by the methodology of the paper. After that, the findings are presented. I conclude the paper with a discussion of the major findings.

AI integration within newsrooms: a review of literature

AI technologies are relatively new technologies making inroads into journalism practice. The impact of these technologies on journalism institutions is yet to be fully grasped and understood (Lindgren, 2023b; Munoriyarwa et al., 2023). Anecdotal evidence is, however, beginning to emerge across different contexts, examining and exploring the uptake and deployment of these technologies in newsrooms (Finnset, 2020; Kioko, 2022; Moran & Shaikh, 2022; Munoriyarwa et al., 2023; Simon, 2024). There is not much agreement in scholarship yet as to which of the varied AI technologies have become more dominant than others within newsrooms. Generative AI does, however, seem to be the dominant type of AI that has become common in many newsrooms (Nishal & Diakopoulos, 2024).

In journalism contexts, basic AI tools can produce text, personalise reading experiences, analyse documents and public statements for journalists and edit texts (Beckett, 2019; Diakopoulos, 2019; Marconi, 2020, cited in Finnset, 2020). These AI technologies are being integrated in newsrooms at a time when journalism itself is surviving in a competitive and disrupted digital environment, making it pertinent to understand their contribution either to newsroom disruptions or to their alleged ‘Messianic’ saviour role for a journalism beleaguered by these disruptions in the digital ecosystem. AI technologies offer opportunities for newsroom innovation (Finnset, 2020). Yet, this intended innovation needs to be funded. This explains why big techs, like Google, have become central to funding these initiatives (de-Lima-Santos et al., 2023). This extant research suggests that some AI technologies may, admittedly, encourage innovation in newsrooms, but will have to be funded by big techs themselves. This shows how these big techs, through their technologies, are beginning to exert a huge influence on processes of news production. News organisations rich enough to purchase AI products, or those able to access funding from big techs, are most likely to be beneficiaries of AI. We, therefore, should be aware of both the power imbalances and the exclusionary tendencies that AI technologies may exacerbate within newsrooms.

In Africa, research at the intersection of AI and journalism is still emerging. The relatively little research available scopes the uptake of AI in different contexts of the continent. In Kenya, Kioko et al. (2022) note that Kenyan newsrooms have begun to feel the impact of AI and are increasingly integrating these technologies in their everyday work. They further note that there is a common feeling among working journalists – the fear of job redundancies. This is supported by Radoli (2024), who notes further that legacy media in Africa are being pushed to adapt their practices and exploit the technological innovation of AI in news production, creativity and efficiency. Makwambeni et al. (2023) note that the integration of AI technologies in South African newsrooms has hovered between utopia and dystopia. Age and newsroom resources are, according to Makwambeni et al. (2023), determining factors in the uptake of AI in newsrooms.

A growing body of extant literature exposes AI faults, especially when adopted within newsrooms (e.g., Zagorulko, 2023). Zagorulko (2023) argues that generative AI tends to generate biased content, often shaped by users’ queries, and does not respect balanced reporting. Furthermore, Zagorulko (2023) notes that AI-generated stories are notorious for the opacity of their news sources, their lack of up-to-date sources and their violation of journalism standards. Tensions exist between AI and journalism in highlighting the pitfalls of AI (Moran & Shaikh, 2022). For example, journalism asks: What, eventually, will turn out to be the role of AI in newsmaking? Can decision-making around production of news be shared between journalists and AI models? Is AI integration even inevitable? Can AI be resisted within newsrooms? These questions are very central in AI discourses, especially when juxtaposed with the economic, political and socio-technical complexities of newsmaking (Moran & Shaikh, 2022) and the traditional normative ideals of journalism and expectations on journalists. Yet, it should be acknowledged that integration of these technologies happens within specific journalistic cultures that lead to them being accepted or resisted. Integrating a technology, like AI, is also a question of individual users’ attitudes towards the technology. Therefore, it should not be taken for granted that when a technology is introduced, it is accepted unquestioningly. Journalists, with their individual agency, may resist, and in worst-case scenarios, sabotage these technologies.

A key take-away from this literature on AI integration in newsrooms is that much of it adopts a ‘tip of the iceberg’ approach in researching AI. This means it is very much exploratory and does not offer detailed analysis that addresses fundamental issues around, for example, socio-technical hindrances to the integration of AI in newsrooms. For instance, two questions have not been addressed in extant scholarship: What are the socio-technological barriers attendant to the deployment of AI in selected news organisations of sub-Saharan Africa? What are the implications of these socio-technological barriers to the process of news production in these newsrooms? Yet, addressing these questions is essential for a comprehensive understanding of the uptake of AI technologies and of the socio-technical obstacles to their adoption. Another take-away from this literature is the paucity of research on AI adoption in African newsrooms. Much of this literature (see Makwambeni et al., 2023) is still emerging. However, what lacks in this literature is an understanding of socio

Conceptual framework

Conceptualising technological appropriation and socio-technical approaches to technology adoption

In order to holistically understand socio-technological obstacles to the adoption of AI, the present article draws on an integration of two related concepts – the technological appropriation theory (Feenberg, 2008) and the socio-technical concept of technology adoption (Bijker et al., 1987). The socio-technical concept of technology adoption (Bijker et al., 1987) states, among other tenets, that society is not determined by technology, nor does society determine technology. The two are closely intertwined (Costley, 2016); they shape one another (Munoriyarwa & Chiumbu, 2024). Social factors are as important as technological factors in enabling technological transitions. This means that if we do not understand social factors, which interact with technological factors, we cannot have a clear picture of the obstacles that may hinder adoption of, or adaptation to, specific technologies. The two converge as two sides of the socio-technical coin (Nuru et al., 2021).

How a technology is accepted in society largely depends on these dualistic effects of social and technical contexts. What this means is that as technologies, like AI, are introduced, or gradually take shape within society, they have to contend with intangible dimensions ranged in favour of, or against, their success (Hughes, 1994). These intangible dimensions include technological practices, perhaps already entrenched in a community, or within a community of practice like journalism, beliefs and the knowledge that actors have about a technology (Hughes, 1994; Nuru et al., 2021). The acceptance of technology, hence, is the sum total of the interaction between the technology itself and the society/community of its intended adoption. This stage is often referred to as ‘technological momentum’ (Hughes, 1994; Nuru et al., 2021, p. 2; Sovacool, 2009). Only when technological and social obstacles are addressed can a technology's adoption gather momentum within a community. Feenberg (2008) argues that in order to understand how these technologies are used, we need to understand how, in the first place, they are adopted – do communities readily accept them, or do communities often view them with scepticism, confusion, awe, etc., before they finally integrate them into their quotidian practices? This brings to the centre of the debate the socio-technological obstacles hindering the adoption of these technologies, which this article, hence, explores in a sub-Saharan African context. Feenberg (2008) further notes that how technologies, like AI, are imagined collectively affects how they are likely to be understood and accepted. How a technology is integrated or not integrated into newsrooms, and any other institutions, is not only determined by the technology itself. There are a host of other factors, which include existing cultures, individual perceptions of the technologies, individual predilections on the profession itself and many other factors.
In the absence of social acknowledgement and support for its adoption, a technology, like AI, cannot sustain itself (Sovacool, 2009). These two concepts offer a strategic and holistic understanding of the technological and social barriers to adoption of technologies, and they will be deployed as lenses to understand the obstacles to the adoption of AI technologies by journalists in selected southern African newsrooms. Therefore, we need to avoid an overly deterministic understanding of technology. We should consider how newsroom structures and AI systems co-evolve in unpredictable ways. Some technologies may be less flexible and have a more deterministic effect than others. The agency of the journalist is central in the integration of AI technologies within newsrooms, and ultimately, the impact these technologies will make within newsrooms.

Note on methodology

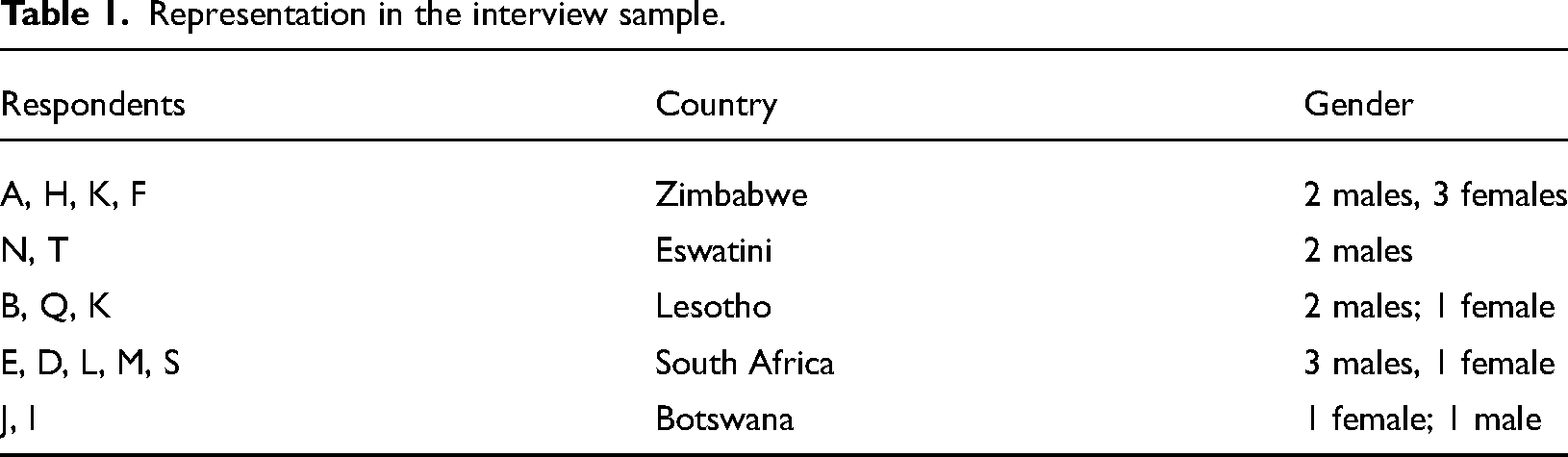

This article's data is part of ongoing research on data journalism and AI-driven journalism in southern Africa (see Munoriyarwa, 2022; Munoriyarwa & Chiumbu, 2024). By southern Africa, I refer to South Africa, Zimbabwe, Botswana, Lesotho and Eswatini. The journalists were recruited through convenience and snowball sampling. The journalists interviewed were available for the interviews during workshops where the researcher was present. These included USAID, Internews and investigative Hub workshops held in the past two years, from 2022. Some were interviewed outside conferences – in their newsrooms. Some of the interviewees referred me to others. The data was collected through in-depth face-to-face interviews and telephonic interviews with practising journalists from some African newsrooms, over a number of conferences held with journalists, which I attended. These interviewed journalists presented themselves during workshops. The data collection stretched over two years from 2022. Some of the interviews have already yielded outputs (see, e.g., Munoriyarwa et al., 2022; Munoriyarwa & Chiumbu, 2024). Some of the interviewees came from popular newsrooms like CNBC Africa (South Africa), NewsDay (Zimbabwe), The Independent (Zimbabwe) and The Mail and Guardian (South Africa). These are mainstream newsrooms in their respective countries. There were three journalists sampled from three community newspapers in South Africa and one community newspaper from Eswatini. Eswatini has been undergoing massive repression of journalism, such that my respondents were not comfortable having their newsrooms and their own names revealed. For example, on 4 July 2021, two New Frame journalists, Magnificent Mndebele and Cebelihle Mbuyisa, who were on assignment in Eswatini, were detained, assaulted and tortured by security forces. The same has been noted about Botswana, Lesotho and Zimbabwe.
For these respondents, the fear was not physical assault, but that they were not certain if they needed approval from their newsroom leaders before granting interviews. Because of that, we agreed on anonymising the respondents and the newsrooms as well. My Botswana interviewees were part of a Freedom of Expression Conference held in Sandton, South Africa in January 2022. The main journalism conferences from which the researcher found respondents were: the Internews conference of July 2022; Journalism Hub (an online conference held in November 2022); and the Freedom of Expression Conference held in Sandton, South Africa in January 2022. These face-to-face interviews were augmented with telephone interviews with some journalists, and all were in-depth interviews. There were 20 interviews altogether – eight from South Africa, three from Eswatini, three from Lesotho, four from Zimbabwe and two from Botswana – even though some of the interview data was not used in this research. South Africa is the biggest country in the region in terms of population, has more media institutions and has the most technologically advanced economy in the region. This explains why it has more respondents than any other country. The journalists I interviewed (and whose data was eventually used) come from different newsrooms: three from Eswatini, two from Lesotho, three from Zimbabwe, two from Botswana and five from South Africa. From Zimbabwe, respondents came from news organisations like Zimpapers, The Newsday, and The Independent. From Botswana, my respondents came from The Sunday Standard and The Voice. In South Africa, I had respondents from Amabhungane, The Mail and Guardian, The Times and Sunday News. These are all newspapers. I also got respondents from CNBC Africa, a television broadcaster in South Africa. However, it suffices to mention that the number of respondents was determined by their availability at the given time.
For uniformity, I anonymise the responses (using labels such as Respondent A, B, C, etc.). Journalists’ ages ranged from 26 to 54. This gave an average age of 40 years. Some of the questions I asked my respondents during the interviews were: How do they understand AI in their daily news gathering and production routines? What role do they accord to it? What are their views about AI in newsrooms? What factors enable them to embrace AI or hinder them from doing so? Of these factors, which ones do they consider to be the biggest obstacles to their acceptance of AI? By answering these questions and others, journalists allowed me to answer my three major research questions, which I have outlined in this paper. These are: How are AI technologies integrated in southern African newsrooms? What are the socio-technological barriers attendant to the uptake of AI in selected news organisations of sub-Saharan Africa? What are the implications of these socio-technological barriers to the process of news production in these newsrooms? (Table 1).

Table 1. Representation in the interview sample.

Findings

AI integration: Distribution processes and circulation dynamics within newsrooms

The integration of AI technologies in newsrooms is not only determined by the availability of the technologies. Journalists’ individual predilections and their perceptions of these technologies are central to this integration. AI technologies are, therefore, not simply praised and worshipped as a panacea to news gathering, production and distribution. These technologies conjure up fears, job loss alarm and sometimes foster skepticism and near-resistance among journalists. Southern African journalists sampled perceive AI as inconsistent with their long-held journalistic values and motivations, which makes them resist the ‘…encroachment of a technology in a terrain they have owned and arbitrated with success for centuries… a technology totally alien to what we believe journalism should represent…’ (Respondent E, The Times, South Africa). For some journalists, AI cannot be embraced by everyone in the newsroom. ‘It does not belong to this age…perhaps, in the future… today's journalism cannot be served by AI technologies…journalism has survived many of these technologies, but this one is simply the wrong technology, in the wrong place… at least in our African context…’ (Respondent B, Lesotho). Despite such obstacles, there is a steady surge in the uptake of AI technologies within newsrooms. What differs is the speed at which these technologies are being adopted, and the kind of AI technologies being used. Basic AI tools seem to be the most popular because they are available at no cost to newsrooms. These include document analysis tools and transcription robots, especially in electronic news organisations like CNBC Africa. An informant said, ‘We use robots to transcribe our interviews. We actually can use a transcribed interview in real time…’ (Respondent G, CNBC Africa). A news transcription robot, however, can hardly be described as a simple AI tool.
South Africa's largely stable and technologically more advanced newsrooms have acquired more advanced AI technologies like robots, unlike newsrooms in Zimbabwe, Botswana, Eswatini and Lesotho. In these contexts, basic AI technologies adopted include text-editing AI technologies that, according to some journalists, ‘have encouraged some exciting news production and interactive scenarios that we have not experienced in a while, and new appreciative approaches to storytelling…’ (Respondent A, Zimpapers, Zimbabwe). However, despite these differences in acquisition and usage of AI across these different newsrooms, it is evident that content creation AI technologies do seem to be common, and there is a growing belief among journalists that these technologies will, perhaps, lead to streamlined operations and efficiency across newsrooms. For example, AI technologies that can write news scripts have been adopted because, ‘In text-intensive beats like investigative journalism, they can potentially help with the production of the news text itself…but I should admit, it is so far, a forlorn hope because my experience is that they are not matching human capabilities…’ (Respondent B, The Independent).

In relatively technologically advanced journalism systems like that of South Africa, financial news production has also benefited from AI technologies. For example, at Bloomberg Africa, in Johannesburg, AI-driven cyborgs help pull out pertinent facts and figures that would take long to uncover manually (Respondent C, Bloomberg Africa). AI technologies also help financial news producers to automate research for investigative news reporting and other in-depth stories (Respondent C, Bloomberg Africa). This is in addition to ‘normal’ AI chores like editing financial news articles. The overall consequence is a reduction in the time used in the production of financial and business news, and this time can be redeployed elsewhere (Respondent I, Botswana). The use of basic AI technologies has been noted in other newsrooms as well (see Phule, 2024). Generative AI technologies do seem to have been the most widely adopted across African newsrooms.

There are key issues to note about the current AI practices within newsrooms in southern Africa. There is no known acquisition strategy for these technologies. One newsroom editor said, ‘With these technologies, you just make use of what you come across… the way they do change means it is really hard to plan for an overarching AI acquisition strategy…’ (Respondent C, Bloomberg). The lack of an acquisition strategy means that AI technologies differ widely within newsrooms, as each newsroom focuses on the kind of technology that it comes across, that fits its purposes, or that is likely to generate little skepticism amongst journalists and garner wider acceptance. While there is no known acquisition strategy for AI technologies within newsrooms, the trend I discussed above shows an incremental approach to acquisition even without a clear strategy. As more AI technologies are rolled out, newsrooms that can afford them acquire and integrate them. There is, however, a lack of uniformity in the integration of AI technologies. Journalists interviewed noted that there are no clear guidelines as to how AI technologies will be purchased and then integrated into news work. Botswana, which, like South Africa, has a stable economy, has not made as many inroads as South Africa because of its small economy, which means it cannot attract much advertising revenue to sustain AI technologies’ acquisition. One respondent said, ‘We are not sure how these technologies are purchased. Sometimes you are just told that now you can do this…we are subscribed to this and that technology’ (Respondent I, Botswana). Another respondent from Eswatini agreed, ‘You can just be told to attend a workshops on how to use these technologies. You are not sure of its need in your day-to-day work…sometimes you just go, learn and forget…’ (Respondent T, Eswatini). In Zimbabwe, AI acquisition has been chaotic – no known strategy and no known systematic practice of acquisition.
One journalist noted that purchasing was a decision made by senior editors and newsroom managers. This points to the need for journalists’ buy-in when these technologies are being purchased, in order to ease the process of integration. Media managers might argue that purchasing decisions are not a journalistic function, and will, perhaps, oppose such mission creep. However, for an unfamiliar technology that is new and is already garnering both controversy and hostility, there is a need for a shared view and wider consultations.

AI and its inconsistency with long-held journalistic values and motivations

AI technologies themselves provide strong grounds for resistance and alienation by journalists. As one journalist put it, ‘Some of the technologies are not meant for African newsrooms. The degree of repurposing that had to be done borders on humiliation of African journalism practices… they should just stay where they are meant to stay…’ (Respondent D, South Africa). AI constrains some forms of storytelling, for instance storytelling anchored in communal suffering and struggles, understood by journalists operating in these contexts, some of whom grew up there.

According to some journalists, the designs of most AI technologies are inconsistent with African journalism values and do not sync neatly with (African) journalistic motivations. The genesis of their resistance to these types of technologies is that they are, firstly, ‘designed for profit-making….to churn out news quickly, and in real time… ignoring other dimensions of newsmaking…generative AI is not what we can embrace seriously and wholeheartedly if we are to be taken seriously as newsmakers…’ (Respondent F, The Herald). In the African context, journalists stand for something else beyond just newsmaking. Journalists in Africa, ‘Tell deep stories of exploitation, harm, suffering…rooted in the communities in which they live…this is unique, and different from of storytelling elsewhere… and our rejection of AI is based on the fact that this cannot be done by machines…algorithms… whatever you call these…’ (Respondent J, Botswana). One journalist added that AI is an ‘…unAfrican, trial and error technology which threatens the democratic role of journalism by allowing it to be arbitrated by machines…’ (Respondent R, South Africa). The issue with generative AI technologies is that they are built on large language models (LLMs), which are trained on existing text (Floridi, 2023). This means they can only generate text using information already in existence (Barreto et al., 2023). This is not often how journalism works. Journalism works by original content creation. ‘It protects a whole way of life through storytelling…and attempting to build a society on AI models of news production is simply inadequate, impossible, unfathomable and in retrospect, not adequate to protect society’ (Respondent K, Lesotho).

There is a story told by one informant about an AI-driven robot news reader in a broadcasting newsroom. The respondent said that when the robot was brought into the newsroom, ‘…it could not pronounce African names…it had to be trained [in the] Igbo language…then it was repurposed to pronounce words using the Kenyan accent …’ (Respondent L, South Africa). The fact that it had to be repurposed and refitted to suit the African context is enough to tell us for whom these technologies are meant. ‘…It's a very white and very English AI-driven robot, it had to be tweaked in order to suit the African news audience. Our first act of resistance was to refuse to have the robot named in our names…we all refused until they had to outsource someone…’ (Informant L, South Africa). It is this incompatibility that has become an obstacle to the adoption of AI technologies within African newsrooms. AI technologies are products of capitalist industries (Zhikharevich, 2021). They are designed to churn out quick news in real time. Several journalists, furthermore, noted that AI cannot respond to the requirement, with which African journalists are burdened, to save democracy in Africa. One journalist said, ‘Generative AI produces vast amounts of news in real time, and this is a capitalist practice, and we are not forever saving capitalism through our media, some of us are serious about democracy, we write to save democracy, AI will never be able to do this…this is what motivates us in journalism…’ (Respondent M, South Africa).

How news is produced is important to understanding how audiences encounter the news and how they might use it. AI technologies alter these news production routines and the strategies traditionally deployed by news institutions to win audiences and keep them. And, since AI is designed for a different type of audience, it is difficult to see how it can produce news that speaks to news audiences in an African context. One journalist said, ‘I know for a fact that my community expect news around crime in their community, what is happening to fight it and I know AI cannot produce the news that suits my community. It is not anchored in my community’ (Respondent M, South Africa).

Several studies have critiqued the design of AI technology, arguing that how the technology is designed, and for whom it is (primarily) designed, is important to its adoption and can be a serious obstacle to its adoption in other contexts (Bijker et al., 1987; Nuru et al., 2021). This literature underlines the fact that technology is socially constructed and the social is also technically constructed. The technical component of AI includes its (text) generation capabilities, and its efficiency and accuracy in the production of news. In addition to this, we should evaluate the technical affordances of AI in terms of the news ideas it generates in certain contexts and the values it brings to news processes. Lastly, AI should be evaluated technically through the knowledge that the actors who interact with it, that is journalists, have in utilising it. When these forces interact, it means the social and the technical have integrated. When the social and the technological fail to interact, there are serious obstacles to adoption of AI technologies. Southern African journalists are aware of the failings of AI in Western contexts. For instance, Zagorulko (2023) notes that AI-generated stories are notorious for the opacity of their news sources, their lack of up-to-date sources and their violation of journalism standards. One journalist said, ‘If the AI technology is doing this in Western context where it was trained using large Language Models of English, what more of our native languages? It will obviously make more errors. Remember these are not local technologies… their purchase for me, is my slightest worry, than their usage and whether we should embrace them…’ (Informant K, Zimpapers, Harare).

‘I know of different AI-driven softwares like DALL.E 2 that can generate images and transcribe speech. I do not know of any native African languages, especially in my own country of Lesotho, that can be transcribed by these AI models. Why then should we embrace them? I would not want to embrace AI as long as it does not cater for my own language interests as well…’ (Informant K, Lesotho).

Journalism is a form of writing with storytelling at its centre. When journalists are inducted into their profession, they become absorbed into a social organisation that has its own culture, logics, tradition and history. One South African journalist said, ‘We grew up in the apartheid era…we knew that we had to use journalism to fight an evil system… after apartheid, we knew we had to protect democracy…if you ask veteran journalists, they will tell you that this desire to protect the country's hard-won rights is a deeply shared motivation for us…and when you tell me that AI can do this, I really do not understand how? These are technologies. They cannot write passionately like us… and where, in the first place, will they get the facts…of events happening right now…and provide an analysis the way we do for our audiences?’ (Respondent M, South Africa).

Journalists resist AI in this regard because they are worried about the ways through which AI can recognise the various journalistic cultures existing in the world. Some African journalists, therefore, see AI as a constraint to their storytelling abilities.

Furthermore, journalism practice itself involves complicated and dynamic decision-making processes. As such, news always carries an ‘emotive dimension’ (Soroka & Krupnikov, 2021). The emotive dimension of news arises out of two factors that interplay. The first is the events to be newsfied. Events like war, murder and natural disasters often cause journalists to express emotions such as anxiety, sympathy and anger (Soroka & Krupnikov, 2021). Journalists are agents who act and react in specific situations in ways that are not calculated. Journalists’ decision-making intersects with processes of enculturation and socialisation from the people that journalists work with (Burns, 2004). This is where AI becomes questionable. One journalist said, ‘AI is not emotional… journalists are emotional beings. It cannot factor in all these personality issues that journalists bring in news writing. We cannot substitute news writing with machines, it does not matter how you claim it is good… This is a machine and nothing much…’ (Informant S, South Africa).

‘I was there when Cyclone Idai devastated Chimanimani. You could not avoid being emotional. It was hard…I felt for the community, and often, your writing reflects how you felt. I think journalism connects more with society… This is not what AI can do… I do not think we want this technology in our newsroom…’ (Respondent H, Newsday).

The genesis of the resistance is multidimensional. AI, according to these journalists, is nothing but a series of ‘aloof technologies’. These technologies are, as journalists explain, very distant from the society in which journalism is anchored. This generates anxiety amongst journalists, who fear that AI could alienate them from the communities that they serve. Furthermore, there is a palpable perception that AI is an import into a totally alien field, and this perception resonates with a majority of journalists. It has consistently been the basis of an ‘anti-AI’ movement questioning the technologies’ effectiveness in African newsrooms.

‘Tech exhaustion’ as an obstacle to AI deployment

Tech fatigue is one of the most talked about issues in today's newsrooms (Samir, 2019). One major characteristic of newsrooms is their high-speed innovation and experimentation with new technologies. There is always a danger with this approach. If newsrooms focus more on innovation, their focus might become monetisation rather than content production (Samir, 2019). For some African journalists, AI, if not resisted, will drive journalism toward an obsession with monetisation of services rather than producing journalism content that serves society. For African newsrooms that largely depend on imported newsroom technologies, the rate of adoption of, and adaptation to, new technologies can take an emotional toll and sometimes breed resistance cultures which, in turn, act as obstacles to adoption of these technologies. Earlier on, I mentioned that Africa is largely a net importer of the most advanced technologies (Simon Ramaoka & Kraemer-Mbula, 2022). More recently, countries like Kenya, Ghana, South Africa and Nigeria have started establishing innovation hubs with the hope of developing their own technologies. And for journalists, the stakes are high. One journalist questioned the relevance of what she called ‘the ever-present pressure to adopt new technologies’ (Respondent A, Zimpapers, Harare). Transitioning from classical/traditional journalism to AI-driven journalism is in itself a fundamental shift for journalists. Here is a profession that prides itself on writing and informing society. This was its raison d'être in the first place. This was the essence of J-Schools as well. This fundamental shift in the profession, driven by AI, causes cognitive overload and cognitive fatigue on the part of journalists. The danger is an inability for journalists to focus on what matters the most – newswriting – which can lead to disengagement from core journalism practices.

Journalism has survived many technological revolutions. Recently, it survived the ‘data journalism revolution’ (Munoriyarwa, 2022) that threatened to unleash mathematicians, statisticians and computer/software experts into newsrooms (Bro et al., 2016; Kiberenge, 2020; Munoriyarwa, 2022). The adoption was enabled by several factors. Firstly, journalists were/are eager to learn new skills to improve on their capabilities (Himma-Kadakas & Palmiste, 2019). Secondly, some of these technologies were couched in discourses that elevated them to unavoidable newsroom infrastructure. But according to several journalists interviewed, some are now tired of embracing new technologies. Technology fatigue has set in. One journalist from Eswatini said this, ‘I am inspired by storytelling…I am inspired by the desire to be a great writer in my country…I cannot continue learning every technology thrown our way as necessary for everyday journalism…Sometimes it is not necessary…and this is what I think about AI’ (Respondent N, Eswatini).

Overuse of, and overdependence on, these technologies in the newsroom has created some form of ‘tech colonialism’ amongst journalists. Tech colonialism, in this sense, means that critical journalism responsibilities are rented out and outsourced to AI. As one journalist said, ‘The modern-day journalist will not be able to think critically if newsrooms don’t halt this dependency on AI. He/she will be digitally colonised…will never be able to write a story without these technologies…it's a slippery slope… we should avoid.’ (Respondent J, Botswana).

The efficacy of AI technology in specific African contexts

AI technologies require us to think broadly about whether all journalism should be AI-led. Africa exhibits peculiar social circumstances that require deep and serious consideration of AI efficacy. For instance, can AI technologies inform rural reporting in Africa? Africa's rural areas lie on the deep peripheries of the modern state. Some of these rural areas do not have running water – they subsist on shared boreholes and underground wells, the children walk to school, some have no electricity. What kind of journalism do these communities need? Can it be AI-driven? Some communities pride themselves on very peculiar cultural practices. Let us take, for instance, the Xhosa people of the Eastern Cape, South Africa. They have a long tradition of circumcision that goes back generations (Mhlahlo, 2009). In fact, their circumcision ceremonies are a hallmark of their cultural peculiarity (Gwata, 2009). Can ChatGPT, for instance, generate text that captures the rich peculiarities, nuances and cultural tapestries that have endured for generations? Can software designed in San Francisco, California, capture the rich and long history of a cultural practice that is an annual source of pride for an ethnic group in Hlankomo and Mdeni, Kwabhaca and Khowa, for example? Can AI capture the personal feelings of loss, and the enduring psychological consequences of Cyclone Idai in Biriiri, Zimbabwe, or the environmental, economic and social travails of the Khoisan groups in the southwestern districts of Botswana? These are serious questions about the technical design of AI, which some journalists scorn, and they are hence an obstacle to a broader adoption of these AI technologies by some journalists.

Age as an obstacle to adoption of AI

An enduring social obstacle to the adoption of AI is the age factor. My interviews reveal that journalists’ age has an impact on the extent of acceptance of, and resistance to, AI technologies within newsrooms: the old generation of journalists resists AI technologies more than the young, tech-savvy generation. Similar research (see Van Deursen & Van Dijk, 2019) has revealed the centrality of age in either accepting or rejecting technological changes, even though that research was conducted in contexts that are not African. The old generation of journalists still prefers traditional news sourcing and journalism decision-making practices. One journalist from Lesotho said, ‘I have worked as a journalist for many years. I have learnt to use many technologies in the newsroom. I am uncomfortable with a technology that writes a whole story for you, while I am reduced to a copy editor. I think this is past me now. I just need to go and retire, and watch journalism embrace all this’ (Respondent Q, Lesotho).

This obstacle has a historical genesis. The old generation of journalists grew up in newsrooms that had few technological resources. Some of their contemporaries who left the newsroom still did so in conditions of resource constraints. It is, arguably, this lack of resources, which permeated and punctuated most of their journalism years, that has affected their attitude towards new journalism technology tools like AI. An environment where technology is available often stimulates positive attitudes towards technology (Moravec et al., 2024).

The fact that these journalists had never had any previous experience with such an extremely disruptive technology should not be underestimated. Journalists argue that AI represents a higher level of newsroom disruption, one unknown and never before experienced in newsrooms. One journalist noted, ‘We have witnessed and lived in the era of newsroom disruptions. But the level of AI-induced disruptions is unprecedented. Some of us are not willing to swallow some of these technologies. We want to let this be whatever it can, but I personally want this new generation to explore this, but as for me, I think I would rather let sleeping dogs lie…’ (Respondent N, Eswatini).

The ‘old class’ journalists, as Respondent B called them, envision themselves as having a strong and enduring bond to both the community they newsfy and the profession they serve. Accordingly, this relationship that binds them to their communities cannot be arbitrated by technologies like AI. This ‘generational divide’ highlights different approaches to AI in newsrooms where there are several generations of journalists. The inclusion of AI in news production is likely to divide newsrooms further. Furthermore, there is a difference between a recently trained journalist with AI awareness and the old school journalists who refuse or deliberately want to avoid AI conversations and practices. Consequently, there is a view amongst the old school journalists that the young journalists are praising and worshipping AI and raising it to the level of newsroom infrastructure that cannot be dispensed with. The overall consequence is that these divided perspectives on AI adoption will present serious obstacles to collective newsroom responses to AI technologies. Some journalists will resist, while others can easily integrate. Already, in the previous sections of this paper, I have explored the genesis of this scepticism. If age becomes a factor, the overall danger is that cooperation within and outside newsrooms on important journalistic assignments might be hampered by different perceptions of AI.

AI newsroom integration and implications for news production

This section of my findings answers the question: What are the implications of these socio-technological barriers to the process of news production in these newsrooms? In answering this question, I argue that, for journalists, one of the implications is their concern with the diversity of news sources in AI-generated news. Secondly, I argue that AI deepens already existing scepticism in newsrooms, which has further implications for its integration in the future. For most journalists interviewed, AI's ‘inability’ to diversify news sources remains a serious concern that drives local scepticism and resistance to its adoption. Journalists fear that some underrepresented and marginalised voices might be further marginalised if AI is adopted as the central technology in news production. This is more so for journalists who have always been sceptical of AI, and who work in community newspapers that are closely linked to their specific society. A Swazi journalist who works for a relatively large community newspaper said, ‘Every community has its own demands for news. These are the demands that AI will never be able to wholly meet. We cannot pretend like it is the best technology in town. Communities are specific’ (Informant T, Eswatini). Furthermore, as one journalist stated, there are very important sources that are not easy to find. This is mostly true when dealing with either community news or investigative news. Respondent A (Zimpapers) noted, ‘AI has limitations. It cannot dig a story for you. It cannot approach the real sources, or whistleblowers for you. I do not think we can sit here and argue that AI is the best news-producing tool we can have. Some sources are not easy to find…and AI cannot do it for you’.

The issue of voice marginalisation/invisibilisation is about the affordances of the AI technologies. For a start, AI technologies often, through their algorithmic models, push journalists to sources that have already been quoted frequently (Westlake, 2024). We therefore need to interrogate more carefully the claim that AI solutions have the potential to revolutionise news production, such as through automated news processes and enhanced investigative reporting using computer vision models or expert systems (De-Lima-Santos & Ceron, 2021). This claim cannot be generalised to African contexts where, in the first place, investigative journalism sources are hard to find and can threaten the journalist (Munoriyarwa, 2022), and where much of the evidence can be destroyed before it ever appears online. In 2021, South Africa's Steinhoff CEO Markus Jooste allegedly destroyed company documents as auditors circled and investigators became interested in the company's affairs 3. This had happened years back in the USA when former Enron bosses destroyed documents 4. AI will never follow up with the people involved in such cases and will never dig into what happened. We should, therefore, understand the limits of AI in this regard. It is not a saviour; the human element will forever be required in journalism.

For some senior journalists, the fear is about their own marginalisation or alienation, which in itself upends the newsmaking process. These journalists are the most crucial cogs in the newsmaking process. They keep institutional memory, and they have habitus, in addition to the journalistic capital they have accumulated over time. Once they feel alienated, newsmaking processes can easily be imperilled through the creation of ‘Towers of Babel’ – where senior journalists resist AI integration, and the young generation of reporters embraces it. One journalist from Zimbabwe said, ‘In our newsroom, the old generation of journalists do not worry much about AI, and how to use it. Even senior management are in the old generation of media managers. How would you expect these people to even think about purchasing AI technologies? And even newsroom conversations are different between the old and new generation of journalists. The danger is how not to frustrate the old group experience we always rely on…’ (Respondent A, Zimpapers).

For the old generation of journalists, there are more questions to ask. One journalist (Respondent B, Lesotho) asked, ‘With AI, we need to ask, how is this technology tailored for African audiences as well? We have heard in some instances, of its biases. This is my worry. Is there a clear path that AI can lead to reader satisfaction, and how we could measure?’

The overall consequence is the dichotomisation of the newsroom between the old and the new generation of journalists. Thus, the obstacle to adoption becomes largely individualistic and newsroom-based. The question of what exactly AI requires in newsrooms has not been settled, even between the old and new generation of journalists. As I have noted, even the tech-savvy generation has no clear understanding of what AI requires. Does AI require an upgrade of existing newsroom infrastructures? Or does it require a radical overhaul of newsrooms? These questions have not been clearly understood in African newsrooms.

Discussion and conclusion

This article sought to answer three questions: How are AI technologies deployed in southern African newsrooms? What are the socio-technological barriers attendant to the integration of AI in selected news organisations of sub-Saharan Africa? What are the implications of these socio-technological barriers to the process of news production in these newsrooms? The findings show that there is a steady uptake of AI technologies in newsrooms. However, they also show that the integration of AI technologies is happening within a ‘regulatory vacuum’ within newsrooms. There are no clear guidelines in different newsrooms to regulate the integration of AI technologies. Furthermore, findings show that the uptake is not consistent; it is determined by different levels of capital within newsrooms. Highly capitalised newsrooms have surged ahead of their counterparts in the region. This is because of the different levels of affluence within news organisations.

Major concerns that militate against journalists’ integration of AI include the fear that AI will not be able to serve emerging democracies on the continent. The watchdog role of the media is much more complex than AI would understand. For instance, in the service of the watchdog role of the media, journalists undertake investigative reporting and speak to sacred sources, including whistleblowers. AI cannot help journalists to reach out to these sources. AI, as already noted earlier, and in other research work (see Westlake, 2024, for example), relies on sources already fed into the system. In Africa, as respondents note, there are very few such sources already fed into existing systems. Therefore, there is a disjuncture between what AI can provide and what watchdogism, which is a core journalistic function in the service of democracy, can provide. Furthermore, issues of corruption, where the corrupt leave no digital footprints on which journalism can rely, cannot be solved by AI. The human element, as one journalist pointed out, will always be imperative if journalism is to serve democracy in the AI age.

In addition to this, Africa's peculiar social circumstances require consideration when thinking about AI in newsrooms. I raised, for example, the cultural peculiarities on the continent. Cultural practices like religion, circumcision ceremonies and marriage (e.g., polygamy) require emotive human understanding of the specific cultures in which they exist. An understanding of these practices cannot, arguably, happen by simply drawing information from AI algorithms. In addition to this, there is also the question of AI designs. Several points stand out here. As I noted in the preceding sections, African newsrooms largely import these technologies from designers based in Europe, North America and elsewhere. (The capabilities of InkubaLM, arguably the first African-made Large Language Model (LLM), are not fully known.)

More so, journalists view algorithms as very ‘unAfrican’ as they ignore African languages. In that respect, they will never be able to report African news in their various contexts. This is why journalists noted that most of these AI technologies are trained on English-language data. This is a constraint in the sense that local languages are not catered for. For example, Khoisan languages, with their click sounds, are not supported by the AI technologies that can be integrated in newsrooms. The overall suspicion of algorithms (see Westlake, 2024), namely that they direct newsmakers to sources already fed into the database, worsens these perceptions. So, by their design, AI technologies are not meant to completely serve African newsrooms. Some functions will work, and others will never work. This feeds into growing scepticism. The ‘black box’ nature of AI acquisition decision-making is yet another source of resistance. New technologies are, arguably, supposed to have institutional buy-in. In the long run, this helps journalists understand how they work and lessens resistance to their integration.

Findings, furthermore, show that integration of AI within African newsrooms is an intense generational issue. Newsrooms become dichotomised due to the absence of a ‘generational consensus’ on AI between the new tech-savvy entrants and the old class generation. Thus, the adoption of AI and the obstacles to it become a game of power within newsrooms. AI becomes a way through which those with knowledge of its usage dominate news production spaces by elevating AI into a critical infrastructure of the news production process, and a midwife of news. The old generation of journalists lack knowledge of AI protocols and processes in newsrooms. The new generation have knowledge, and are amenable to technological change, but lack the journalistic experience of the old generation. For the old generation, AI-mediated news production means that they cannot depend on their journalistic capital, built over time. The growing perception is that AI is a disruptive technology in the newsmaking process.

There is a persistent concern, noted in the findings, that AI marginalises certain communities by neglecting them as news sources. This concern raises real fears amongst African journalists who grew up accustomed to newsfying rural communities. It raises the question of how AI can diversify news sourcing if it is to be embraced by journalists. There are many voices in marginalised communities that are sources of news on relevant topics, but the data used to train the LLMs behind AI often do not include these voices from Africa, at least for now. A cursory look at business news, for example, on AI-driven business news sites would prove the point. There is an overt preponderance of top companies and organisations, and of commentary from top economists and well-known business analysts. What about smaller businesses operating on the margins of communities, where they have made a larger and more impactful contribution? Will AI provide journalism with storytelling potential on an egalitarian and democratic basis? Westlake (2024) notes that if this marginalisation persists, there is no more fairness and trust in news.

My findings have several implications for literature and theory. For theory, my findings confirm the interplay between journalism and technology (Moran & Shaikh, 2022). Furthermore, my findings converge with extant literature holding that technologies like AI have the potential to transform journalism. However, in southern Africa, the specific cases I explored show that while journalism practices are overtly determined by technology, journalists’ willingness to adopt the technology ultimately determines how much of it is integrated into newsrooms. Ultimately, journalists have a say in how much AI they want to use, as my data show. In addition to this, my findings upend ‘tech evangelism’, arguing that for old generation journalists in some southern African newsrooms, serving the community and journalistic storytelling are not determined by the adoption of technologies like AI. Rather, these are achieved by journalists sticking to their profession and building a critical mass of readers to build capital, and ultimately, trust in their news. Thus, socio-technological factors often intervene to hinder or promote adoption of these AI technologies. We therefore need to move away from a techno-deterministic perspective that sees these AI technologies as a panacea for journalism. Other non-newsroom factors influence this uptake. There is a co-evolution, happening in very unpredictable ways, amongst AI, journalists’ own predilections towards AI, and newsroom structures in Africa. Any understanding of socio-technological barriers to AI adoption should, therefore, consider factors at this intersection. Some AI technologies may be less flexible and have a more deterministic effect than others. The agency of the journalist is central in the integration of AI technologies within newsrooms, and ultimately, the impact these technologies will make within newsrooms. Feenberg (2008) argues that the usage of technology is determined by its availability. My case diverges from his view, noting that availability alone is not sufficient to determine usage. Rather, as the case studies examined here show, socio-technological factors, journalistic predilections towards these technologies, and journalists’ overall perceptions of them trump availability as a consideration for usage. Technologies like AI are adopted within a cultural, political and social system which, depending on whether they are adopted or not, they can dominate and control. There are, however, trade-offs between journalists’ needs as a community of practice and technologies. Journalists can choose which AI technologies to adopt and which ones to reject if these technologies do not sync with their newsroom/journalistic values and beliefs.

In this research, I acknowledge several limitations. One of them is that it is based on a few (twenty) interviews in a sub-continent with hundreds of newsrooms. Future research should expand on the number of interviews with more journalists across several newsrooms and countries that are not part of this research. Secondly, the article would have benefitted from exploring the political economy of newsroom funding in the adoption of AI. Future research can expand on these two important issues through interviews, and more document analysis on AI and how this technology can spur further innovation in newsrooms. Future research may also delve into comparative analysis of socio-technological barriers attendant to the integration of AI in Africa, compared to Asia, Europe, and other parts of the world, bearing in mind that these newsrooms exist in their unique cultural, social, political, and economic settings. This article is an important starting point on AI adoption in southern African newsrooms.

Footnotes

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Ethical statement

This study involves non-vulnerable human subjects. These are journalists in Southern African newsrooms. The journalists interviewed during this research agreed and were fully aware that the data would be used for different academic research outputs, which include this one: https://www.taylorfrancis.com/chapters/edit/10.4324/9781003327639-12/artificial-intelligence-skepticism-news-production-allen-munoriyarwa-sarah-chiumbu. The University of Johannesburg funded the research in 2022, and consent was granted. However, to further protect the subjects of this research, as non-vulnerable as they are, we have anonymised all responses and all newsrooms in this research. No identifier has been left on the data.

Funding

The author received no financial support for the research, authorship and/or publication of this article.