Abstract

In May 2020, the social media giant Meta, parent company of the platforms Facebook and Instagram, established an Oversight Board to allow some recourse against the content moderation decisions of the company. The Oversight Board is an experiment in self-regulation (where ‘self’ refers to the company, not the industry) by one of the global platforms to address content regulation. The Board is structured on the ‘arm's length principle’ to ensure independence from Meta. This paper analyses the structure for legitimacy and takes a bird's eye view of the decisions made to date for efficacy. Of the more than 100 decisions by the Board, 80% overturned Meta's original decision. Interestingly, the percentage of overturned decisions has been increasing. This suggests that the company was indeed not able to handle content decisions well by itself. Further, the Board reported the need for Meta to improve the answerability and comprehensiveness of its responses to the Board's recommendations. The paper suggests a shift in the tone of the global debate on content moderation from a near-absolutist position against moderation to a willingness to consider content governance. It concludes that while there is room for improvement, the structure could serve as a model for other companies that face a similar need to moderate content globally.

Introduction

Social media platforms the world over are facing political pressure to police content posted by their users. Such content moderation creates a tension between the need to filter harmful content such as hate speech, graphic violence and disinformation and the desire to maintain freedom of expression. Ideally, the decisions would be made transparently and in such a manner that the platform cannot be accused of deciding in favour of profits over possible societal harm.

Among the platforms, Meta, the parent company of Facebook, Instagram and WhatsApp, has embarked on what might be regarded as an experiment to set up a system that would allow for a more objective decision-making process that accommodates cultural sensitivities.

Meta launched its Oversight Board on 6 May 2020 with much buzz and excitement, naming the first 20 of the 40 members. But as might be expected of an undertaking with such inherent complications, expectations were high.

This paper aims to answer three critical questions regarding the Board:

What is the governance of the Board in structure? Our definition of governance is rule, and rules about rules (Ang, 2020). That is, governance encompasses both rules and the process of rule-making. Zuboff in ‘The Age of Surveillance Capitalism’ has a crisper if less comprehensive definition of ‘who decides and who decides who decides’ (Zuboff, 2018, p. 231).

How legitimate is the Board in theory and in practice? There is a natural tendency to be suspicious of such a Board when both funding and appointment of key members are by the company itself. Although there will never be a perfect structure for governance over content, as will be demonstrated, there has been at least one significant proposal for a structure that comes close to maximising independence. How close is the Oversight Board to that ‘ideal’?

Finally, how efficacious has the Board been? That is, how close have the decisions, in both process and outcome, been to the intended aim of the Board as enunciated by Facebook and Board members?

Why did Facebook create the oversight board

Meta had several reasons for the creation of such a Board that functions in a manner similar to an ombudsman, a Scandinavian innovation in which decisions by an organisation may be reviewed in an open and independent process. An ombudsman, independent from the government, would be in line with the free speech doctrine that governments should have no say in regulating content (Youm, 2008).

At a macro level, such an ombudsman office engenders greater confidence in the organisation, which is particularly relevant for Meta because its business model is reliant on advertising. The advertising industry is dependent on a high element of trust – that the product or service works as advertised. To be credible, the Board's decisions must therefore bind Meta.

Nobel-prize-winning economist George Akerlof's (1970) work on ‘the market for lemons’ is instructive. His insight is that in a market where there is asymmetry of information, that is, in the absence of signals that indicate quality, the market will tend to self-destruct. The advertising industry, a market that Meta is in, fits the conditions: the advertiser knows the quality of the product or service but the buyer does not. One of Akerlof's recommendations to reduce quality uncertainty, and so counter the tendency of such a market toward self-destruction, is licensing, which includes self-regulation. Facebook's Oversight Board, which is a form of self-regulation, may thus be seen as quality-signalling by the company.

Here, it should be observed that much of the direct regulation of advertising is performed on a self-regulatory basis, with the ‘self’ referring to the industry in general. That is, much of the world's advertising is governed by industry self-regulation, viz., the industry regulating itself. The shifting understanding of ‘self-regulation’ is evidenced in how the regime that has been established has been described as a form of ‘enhanced self-regulation’ through the insertion of an intermediary, as opposed to the ‘thin self-regulation’ exercised earlier, where the ‘self’ refers to the organisation (Medzini, 2022). Thin self-regulation (a company ‘regulating’ itself) has little credibility because of questions around legitimacy and independence. The structure of the Oversight Board is aimed at addressing those concerns.

The idea of such a ‘Supreme Court’ of Facebook had come from Stanford law professor, Noah Feldman, a friend of the then-chief operating officer Sheryl Sandberg (Klonick, 2021). Zuckerberg announced a ‘blueprint for a new system for content governance and enforcement’ in 2018 (Clegg, 2020). The backdrop of the announcement was the series of scandals and public relations crises concerning the misuse and abuse of personal data such as Cambridge Analytica (Cadwalladr, 2018) and the whistleblower Frances Haugen (Mac & Kang, 2021).

Self-regulation as its own court

A court can only function when it is independent. Indeed, Meta has structured the Oversight Board so that it aims to achieve that end. The major concern is the independence of the Board members from its appointer. To that end, there are three components of the Oversight Board: first, a Trust that will be financed from what in essence is an endowment model; second, the Board itself, which will be appointed by trustees of the Trust and not Meta; and, third, an administration that is dedicated to serve the Board.

How can the appointees (viz., Board members) making the decisions be independent from the appointer? The 2012 Report of the Leveson Inquiry following the UK scandal around the Murdoch-owned newspaper News of the World, more formally known as ‘An Inquiry into the Culture, Practices and Ethics of the Press’, offers a helpful suggestion: have an appointment panel that then selects the Board. In other words, have an appointing committee to appoint the working committee (pp. 1759–1760). The committee members appointed must be perceived to be independent and free from conflicts of interest. That way, the Board is at arm's length from the entity it is regulating. In the UK, the Leveson Inquiry recommended the structure for a new press council to replace the existing one (Leveson, 2012). The recommendations, however, were never implemented in the UK.

The Oversight Board comes close to achieving the structure recommended by the Leveson Report. Facebook did in effect create an appointment panel, by first appointing the four co-chairs. Section 8 of the Oversight Board Charter, titled ‘Selection and removal of Board members’ (Facebook, 2019, p. 4), states:

To support the initial formation of the board, Facebook will select a group of co-chairs. The co-chairs and Facebook will then jointly select candidates for the remainder of the board seats. The trustees will formally appoint those members.

The four co-chairs, along with Facebook executives, then interviewed and selected 16 other Board members. In May 2020, Facebook unveiled these 20 of the 40-strong Oversight Board. The remaining 20 Board members are to be interviewed and selected by the four co-chairs. In other words, the other 36 members of the Board (90% of membership) will not be appointed only by Meta. It is not quite the recommendation of the Leveson Report that 100% of the Board be appointed by the appointment panel but 90% of the Board is a cup more than half full.

Further, section 8 paragraph 2 of the Oversight Board Charter states that future Board members will be appointed by a committee of the Board. This means that Meta will have no say in appointments to the Board. These future members may be proposed by the public or by Facebook. Section 8 (paragraph 2) states:

Thereafter, a committee of the board will select candidates to serve as board members based on a review of the candidates’ qualifications and a screen for disqualifications. Facebook and the public may propose candidates to the board. The trustees will formally appoint the members.

Board members may only be removed for violation of a code of conduct and apparently only by ‘the trustees’, five of whom were appointed in December 2020 (Oversight Board, 2020b). The trust itself is a company, Oversight Board LLC, registered in October 2019 in Delaware to manage the funds to run the Board (Oversight Board, 2019).

Oversight Board members are compensated by money from Meta, Facebook's parent company, but not by Meta directly. In July 2022, Meta made a commitment to contribute US$150 million on top of the US$130 million that was given when the Board was set up in 2019 (Oversight Board, 2022). This is the application of the arm's length principle used by Nordic governments when they subsidise their media organisations (Mangset, 2009). Having the funds earmarked for running the Board eliminates the question of financial independence.

The Oversight Board is intended to be 40 strong. Why 40? In the online space, two groups of 40 spring to mind: the Working Group on Internet Governance (WGIG) (2004–2005) and the Multistakeholder Advisory Group (MAG) (from 2006) that plans the Internet Governance Forum programme for the United Nations. The MAG may be viewed as an offshoot of the WGIG.

It should be noted, however, that the WGIG and the first MAG were chaired by highly skilled international civil servants. They were diplomats of the highest order, and the fact that this Board is emulating the composition alone speaks volumes of the high regard in which they (WGIG and MAG) are held.

If Facebook intends the Oversight Board to parallel the groups, that is a good thing. The WGIG and the MAG are both well regarded. They aimed to be inclusive in representation and in incorporating views, their meetings were open, and their outputs have been generally accepted by the Internet community.

The first 20 Board members who have been appointed have generally earned the thumbs up online. This is important: good people with reputations to protect are more likely to do the right thing than not.

Terms of reference of the board

Notwithstanding its misleading name, the Facebook Oversight Board is not an oversight board over Facebook. The Oversight Board should more accurately be called the Content Standards Board. As stated in Article 2 of the Board's Charter, it is oversight over content: Article 2 Authority to Review People using Facebook's services and Facebook itself may bring forward

It is not just any kind of content. Article 2 Section 1 restricts the content to those contentious cases where the user disagrees with the outcome of Facebook's decision to remove content and is at a dead end with no other means of recourse or appeal. Article 2 Section 1 of the Board's Charter under ‘Authority to Review’ says: In instances

In April 2021, the Board expanded its scope beyond appeals to restore content that Facebook had removed, to take on appeals asking Facebook to remove content (Oversight Board, 2021). In all cases, the Board can only take on cases after all appeals to Facebook have been exhausted.

The approach uses a complaints regime and contrasts with active monitoring that involves actively seeking out issues to address. The complaints regime is the most efficient mode of enforcement because, first, action is taken only when there is a complaint so no action is ‘wasted’ and, second, no resources are spent on active monitoring.

Given the size of Facebook as a company, and a 40-member Board, it would not be possible, nor wise, to have every member weigh in on every case. Instead, the workload would be divided up so that five members review each case (Oversight Board, 2021). Here, the Secretariat does the allocation of caseload. It will be the Secretariat that will filter many of the complaints. But the Board has the discretion to select what to review.

Separately, Facebook can submit requests for review, including additional questions related to the treatment of content beyond whether the content should be allowed or removed completely. Detailed procedures on submission and requirements for review by the Board continue to be fleshed out (Facebook, 2019). Article 2(1) states:

The board has the discretion to choose which requests it will review and decide upon. In its selection, the board will seek to consider cases that have the greatest potential to guide future decisions and policies. In limited circumstances where the board's decision on a case could result in criminal liability or regulatory sanctions, the board will not take the case for review.

Here, some types of content are excluded: those that could result in ‘criminal liability or regulatory sanctions’. At one level, this does make sense: if Facebook decides that someone's post in a country could result in the person being executed there, then, even in the absence of such a clause, Board members would likely think twice about their decision because they would in effect be condemning the person to death. The wrinkle is that because Facebook is an international organisation, setting the rule in one locale could affect the global position. In this case, it would mean not ruling, which would preserve the status quo: Facebook's final decision stays final.

Decisions 2020 to mid-2024

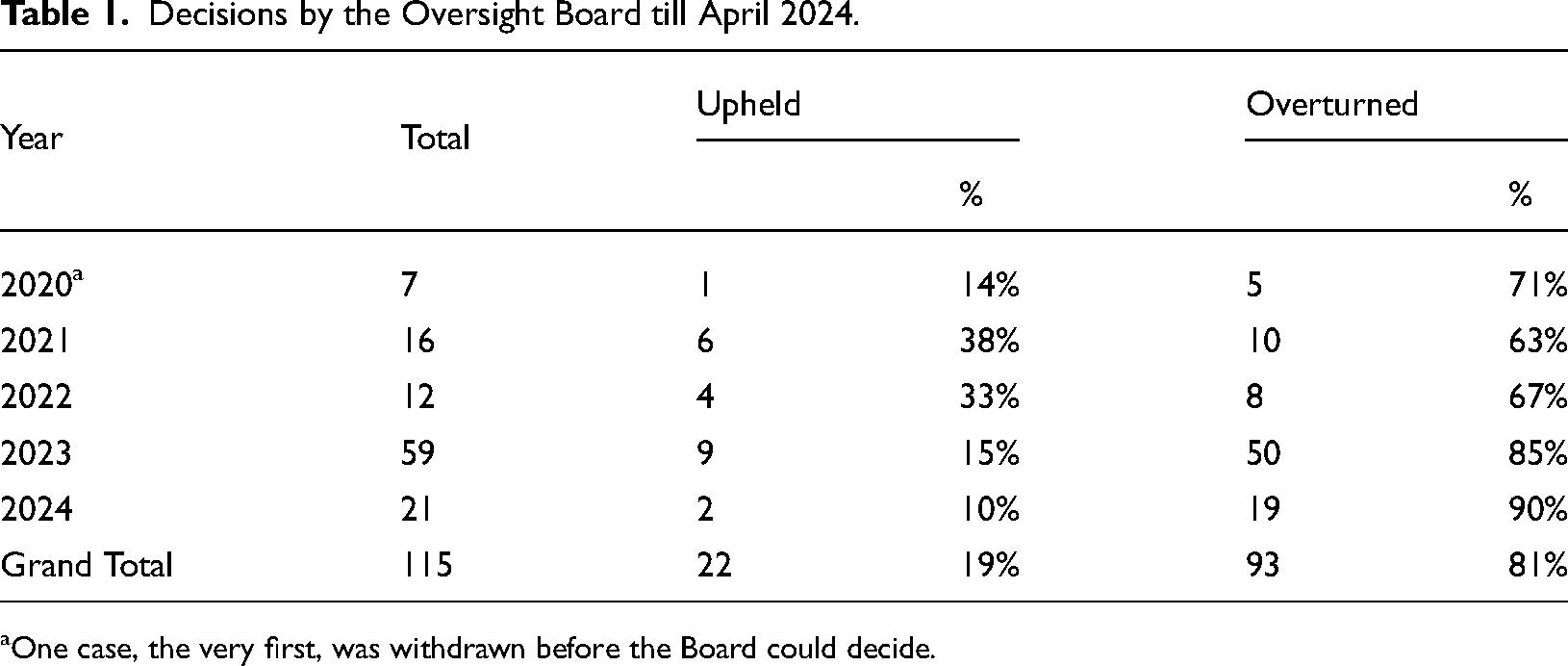

When the doors opened for appeals in October 2020, the Board received 20,000 cases. The Board chose six to review in the three months to December 2020, ‘prioritising cases that have the potential to affect lots of users around the world, are of critical importance to public discourse or raise important questions about Facebook's policies’ (Oversight Board, 2020a). Table 1 lists the decisions by the Board from that start to April 2024.

Table 1. Decisions by the Oversight Board till April 2024.

One case, the very first, was withdrawn before the Board could decide.

Table 1 shows that from the start, most of the cases were decided against Meta. In fact, over the years, the percentage of overturned cases has risen. One would expect that with experience, the percentage would decline. The rising proportion of overturned cases suggests that more difficult cases were being sent to the Board. Nevertheless, that so many cases are overturned suggests that Facebook could strengthen its competency and capacity in this area.

Issues encountered and room for improvement: The relationship between the Board and Meta

For the Board to function well, the governance, legitimacy and efficacy of the Board are closely related to the performance of Meta in meaningfully considering and incorporating the decisions of the Board into Meta's content policies and practices, whether carried out by humans or by algorithms (Price & Price, 2023). In other words, there should be clear and open dialogue and coordination mechanisms between Meta and the Board to ensure the transparency, accountability and compliance of Meta. These include the answerability of Meta to (1) any inquiries posed by the Board on Meta's content moderation rules and enforcement processes, (2) the content moderation review decisions of the Board and (3) the Board's policy recommendations.

So how has Meta fared in responding to the Board's inquiries? The Board has highlighted through its transparency reports that it initially ‘spent eight months attempting to gain access to Meta's CrowdTangle tool to give them more information when selecting cases and assessing recommendation impact’ (Oversight Board, Annual Report, 2022, p. 20). Meta's responses to the Board's questions about its reasons and mechanisms for the content moderation decisions in question improved over time. The Oversight Board's 2022 Annual Report noted (p. 38):

The share of questions that Meta answered fully in 2022 – 86% – was identical to 2021. The share of questions Meta did not answer, however, fell from 6% (19 out of 313 questions) in 2021, to 4% (13 out of 356 questions) in 2022. We believe that Meta's answers in 2022 were more comprehensive than in 2021. When it could not answer a question, Meta explained why this was the case more often than in 2021.

After releasing its decisions, the Board often makes policy recommendations to Meta ‘to improve its rules and to act in a way that is principled, transparent and treats all users fairly’ (Oversight Board Annual Report, 2021, p. 2). The Board has continually urged Meta to have accessible, clear and understandable rules in its users’ languages and to be clear about enforcement. This is reflected in the 2021 Annual Report: ‘Our recommendations have repeatedly urged Meta to follow some central tenets of transparency: make your rules easily accessible in your users’ languages; tell people as clearly as possible how you make and enforce your decisions; and, where people break your rules, tell them exactly what they’ve done wrong’ (Oversight Board Annual Report, 2021, p. 55).

Can Facebook ignore the decisions and policy recommendations of the Board? Article 4 of the Board's Charter states: ‘While Meta must accept the content moderation review decisions of the Board, it has a liberty to consider the policy recommendations from the Board’. Moreover, although Meta can ignore the Board's policy recommendations, it is difficult to see how it can do so when a recommendation presumably arises from a case whose decision must itself be implemented. In any event, Meta must respond publicly on how it is responding to and complying with the Board's policy recommendations. In other words, Meta has to explain why it did or did not adopt the Board's recommendations.

How has Meta performed thus far with respect to the decisions and policy recommendations of the Board? In general, as described in the Transparency Report of the Board: Second Half of 2023 (Oversight Board, 2023), Meta has complied with the Board's reversal decisions. To strengthen Meta's response to policy recommendations, the current rule states that Meta must respond publicly to the Board's policy recommendations within 60 days. In 2022, Meta began hiring data science teams to evaluate and demonstrate the implementation and impact of the Board's policy recommendations (Oversight Board, 2022 Annual Report). Since then, the number of recommendations fully or partially implemented by Meta has been increasing between transparency reports. Of the total 251 recommendations made by the Board since 2021, 146 have been fully or partially implemented, with published information demonstrating the implementation or the ongoing implementation. However, also since 2021, there are still 46 recommendations (18.3% of the total 251 recommendations) that Meta had implemented without providing public information to demonstrate the implementation (Oversight Board, 2023).
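The shares quoted above can be checked with a few lines of arithmetic. The following is a minimal sketch using only the three counts given in the text (251, 146 and 46); the function and variable names are illustrative, and the remainder is simply whatever is not covered by the two categories mentioned, not a category the Board itself reports under that name.

```python
def share(part: int, whole: int) -> float:
    """Return part as a percentage of whole, rounded to one decimal place."""
    return round(100 * part / whole, 1)


# Counts taken from the text (Oversight Board, 2023).
total_recommendations = 251
implemented_with_published_information = 146   # fully or partially implemented
implemented_without_published_information = 46

# Remainder not covered by the two categories above (illustrative label only).
remaining = (total_recommendations
             - implemented_with_published_information
             - implemented_without_published_information)

print(share(implemented_with_published_information, total_recommendations))
print(share(implemented_without_published_information, total_recommendations))
print(remaining, share(remaining, total_recommendations))
```

On these counts, a little under three-fifths of all recommendations have implementation demonstrated through published information, while the 46 recommendations correspond to the 18.3% stated in the text.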

In 2023, the Board also began refining its assessment of the comprehensiveness of Meta's responses, which is based on three factors: whether Meta has addressed all components of the recommendation, whether it has committed to concrete action, and whether it has provided a timeline for action. A response that meets 1 of the 3 factors is categorised as not comprehensive, 2 of 3 as somewhat comprehensive, and 3 of 3 as comprehensive. In its assessment, the Board said that of the 16 recommendations it gave Meta in the second half of 2023, 11 were classified as progress reported, although the Board had no evidence of implementation. Progress reported means that Meta has committed to implementing the recommendations but has not completed the implementation (Oversight Board, 2023).
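The three-factor categorisation described above can be expressed as a simple counting rule. The sketch below is illustrative only and is not the Board's own tooling: the function and parameter names are hypothetical, and the treatment of a response that meets none of the factors is an assumption, since the text only specifies the cases of 1, 2 and 3 factors.

```python
def classify_response(addresses_all_components: bool,
                      commits_to_concrete_action: bool,
                      provides_timeline: bool) -> str:
    """Categorise a response by how many of the three factors it meets."""
    factors_met = sum([addresses_all_components,
                       commits_to_concrete_action,
                       provides_timeline])
    if factors_met == 3:
        return "comprehensive"
    if factors_met == 2:
        return "somewhat comprehensive"
    # 1 of 3 is "not comprehensive" per the text.
    # ASSUMPTION: 0 of 3 is also treated as "not comprehensive";
    # the text does not say how such a response is categorised.
    return "not comprehensive"


# Example: a response that addresses every component and commits to action
# but gives no timeline meets 2 of the 3 factors.
print(classify_response(True, True, False))
```

Counting factors rather than weighting them mirrors the description in the text, in which each factor contributes equally to the category.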

The above summary of Meta's responses suggests that there is an improved communication flow between it and the Board. Furthermore, the Leveson Inquiry recommended that a ‘named senior individual within each [newspaper] title should have responsibility for compliance and standards’ (Part K, Chapter 7, para 4.28). Applied to Facebook, this means a senior executive should be appointed to ensure that the organisation complies with the decisions by the Board.

Can such a structure work for other tech companies

The obvious question is the applicability of the structure to companies such as YouTube, TikTok and Twitter, with millions of users and where some objectionable content will inevitably be uploaded. In principle, the answer has to be an unequivocal ‘Yes’. Having such an independent body fosters greater trust in the company. And as the business model for such companies depends on advertising for revenue, it will be good in the long run.

There are, however, costs, both short run and long run. The long-run cost is the cost of maintaining such a board. Meta has committed a total of US$280 million. Assuming a 3% return on investment, a rate of return used by many university endowment funds, this amounts to US$3.6 million a year.
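As a rough check on the endowment arithmetic, the annual return is the principal multiplied by the rate of return. The sketch below assumes, purely for illustration, that the full US$280 million committed is held as principal and earns 3% a year; the symbols P, r and R are introduced here and do not come from the source.

```latex
\[
R = r \times P = 0.03 \times \text{US\$}280\ \text{million} = \text{US\$}8.4\ \text{million per year}
\]
\[
P = \frac{R}{r} = \frac{\text{US\$}3.6\ \text{million}}{0.03} = \text{US\$}120\ \text{million}
\]
```

At the same 3% rate, an annual return of US$3.6 million corresponds to a principal of US$120 million, so the yearly figure depends heavily on how much of the commitment is treated as invested principal rather than spent on operations.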

Conclusion

The way the Oversight Board has been established suggests that its structure and governance could make it an independent voice, a model perhaps in the governance of online content. There is a combination of institutional rules as well as personalities with reputations to protect. How well the Board coheres as a group will also depend on the leadership of the four co-chairs.

Whether the Board functions may well also depend on the issues it is allowed to address. Currently, its remit appears to be very narrow. Only when all appeals have been exhausted can the Board consider the case. Even so, there are tens of thousands of appeal cases every month.

The 115 cases reviewed up to April 2024 show the repeated failures of Facebook in making the appropriate content moderation decision. In those cases, Facebook was twice as likely to be wrong as right.

Nevertheless, there are hopeful signs. Facebook, after what appears to be some internal foot dragging, is more responsive and more transparent. The legitimacy and independence of the Board are not seriously challenged.

The Board structure is not perfect, and there is room for improvement on three principal points. First, Meta should adopt a complete hands-off position on appointments, including those of the trustees, now that they are established. Second, there should be detailed dialogue and coordination mechanisms between Meta and the Board in order to advance the transparency, answerability and compliance of Meta to the Board. Third, there should be a senior executive within the organisation (one for each of its services) to ensure compliance with the Board's policy recommendations. Article 4 of the Board Charter binds Meta to ‘accept the content moderation review decisions of the Board’ but not the policy recommendations.

Hopefully, the defects in structure and governance will be fixed down the road. But one should not fall into the nirvana fallacy of letting the wait for perfection prevent action.

Afternote

When this project was beginning, Elon Musk bought Twitter (Paul & Milmo, 2022), promised to set up his own content moderation council (Guardian, 2022), and then posted and subsequently deleted an offensive tweet (Bekiempis, 2022). It would appear that the model of the Oversight Board may find application sooner than one might expect.

Data availability

Data are publicly available and so the study is IRB-exempt under the guidelines of Nanyang Technological University, Singapore IRB-2022-1015 (2.0).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Ethical statement

This study does not involve human subjects as participants. All use of materials and all analyses conform to the protocols of research ethics.