Abstract

This paper explores the transformative potential of haptic design and human augmentation, inspired by Dr. Minamizawa's keynote address at the 2024 Emerging Media for Communicating Sustainable Development Goals conference (held at Yang Ming Chiao Tung University, 30 March, 2024). By integrating tactile feedback into prosthetics and wearable devices, and employing robotic avatars and virtual interfaces, these technologies bridge the physical and virtual realms, redefining presence and interaction. The convergence of haptic design with telexistence and human augmentation enhances sensory engagement and bodily awareness, challenging traditional boundaries between biology and technology. However, these advancements might also raise ethical considerations and societal concerns about privacy, accessibility, autonomy, and safety. The future of human augmentation demands responsible development and inclusive dialogue to ensure empowerment, equity, and collective progress. This paper highlights the need for a human-integration approach to navigate the opportunities and challenges presented by these emerging technologies.

Introduction

In the realm of technological advancement, the convergence of haptic design and human augmentation stands as a beacon of innovation, promising to revolutionize the way we interact with and perceive the world around us. Haptic design, with its emphasis on tactile feedback and sensory immersion, has long been recognized for its potential to enhance user experience in various domains, from entertainment and gaming to rehabilitation and healthcare. On the other hand, human augmentation, fueled by breakthroughs in fields such as robotics, prosthetics, and wearable technology, seeks to augment human capabilities, bridging the gap between humans and machines.

Dr. Kouta Minamizawa is a professor at the Graduate School of Media Design at Keio University. He directs the KMD Embodied Media Project, where he conducts research on the technology, design, and social deployment of haptics and embodied interaction to facilitate the transmission, augmentation, and creation of human bodily experiences through interdisciplinary endeavors. Dr. Minamizawa is widely recognized as a leading figure in the field of embodied interaction technologies. His scholarly contributions focus on advancing knowledge in haptic design and human augmentation, fostering interdisciplinary collaborations, and driving innovation in the realm of sensory enhancement. His expertise and leadership in this domain are instrumental in promoting academic discourse and research activities aimed at shaping the future of human–computer interaction and immersive technologies.

Below are a few thoughts stemming from Dr. Minamizawa's keynote address at the 2024 Emerging Media for Communicating Sustainable Development Goals (SDGs) conference (held at Yang Ming Chiao Tung University, 30 March, 2024).

Haptic design

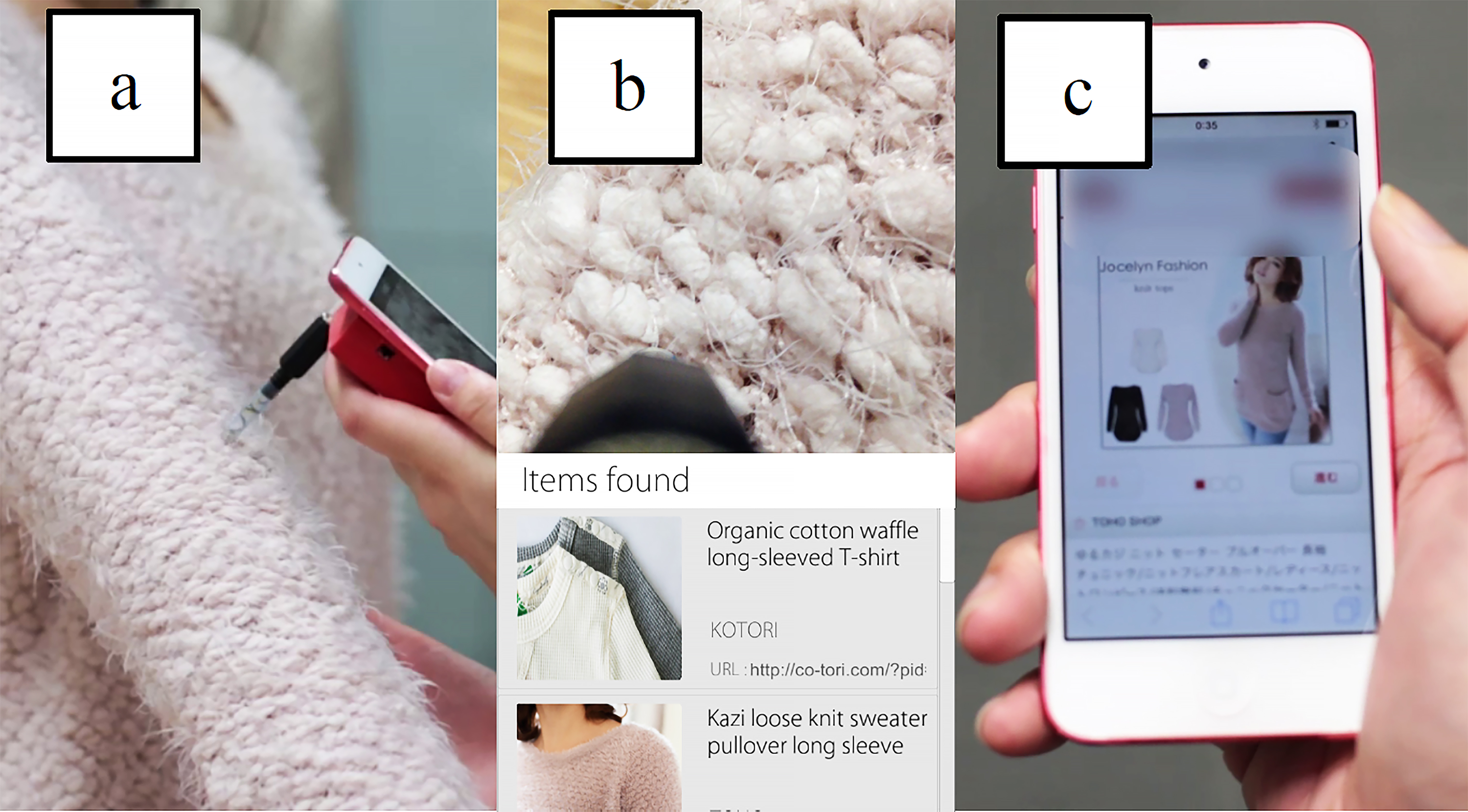

In his presentation on “Haptic Designer / 触業”, Dr. Minamizawa elaborated on the multifaceted nature of tactile perception, emphasizing the array of stimuli received through fingertips and palms. These stimuli encompass sensations such as pressure, texture, temperature, and pain, collectively constituting the role of touch in sensory perception. Drawing an analogy to equipped sensors, he elucidated how haptic technology functions as a mechanical sensor, mirroring the sensory capabilities of human cells and nerves and translating them into digital data. Through network transmission and reproduction, this technology enables the sharing of tactile sensations across distant locations, akin to the transmission and playback of visual and auditory content. Dr. Minamizawa's presentation showcased successful outcomes from his research endeavors, including the TECHTILE Toolkit (Minamizawa et al., 2012) and the CNN-based Tactile Search Engine (Hanamitsu et al., 2015), underscoring haptic design's ability to bridge physical and virtual tactile experiences (Figure 1).

The evolution of haptic technologies, from rudimentary force feedback mechanisms to sophisticated tactile displays, has been propelled by advancements in engineering, material science, and neuroscience. While early research primarily focused on basic haptic feedback for simulating physical interactions in virtual environments, contemporary efforts delve into nuanced forms of tactile communication and sensory augmentation. Fundamental to comprehending haptic design are theoretical frameworks elucidating the perceptual and cognitive mechanisms underlying tactile sensation. Concepts such as active touch, pioneered by James J. Gibson (1962), emphasize the dynamic interplay between sensory input and motor action in haptic exploration. Psychophysical models like Weber's Law and the Just Noticeable Difference (JND) offer quantitative insights into tactile perception thresholds and discrimination abilities. Recent studies by Cheng et al. (2023) and Kappenman et al. (2021) have expanded upon these foundational theories, providing nuanced perspectives on the neural mechanisms of haptic perception and the integration of tactile feedback with other sensory modalities. These theoretical advancements deepen our understanding of haptic processing in the brain and inform the design and evaluation of haptic interfaces and applications.
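As a brief worked illustration of the psychophysical models mentioned above, Weber's Law can be written as a single ratio. The symbols are notation introduced here for illustration: $I$ is the baseline stimulus intensity, $\Delta I$ is the JND at that baseline, and $k$ is the Weber fraction. The numeric value of $k$ used below is a hypothetical placeholder, not a figure taken from the cited studies.

```latex
\[
\frac{\Delta I}{I} = k
\qquad\Longrightarrow\qquad
\Delta I = k \, I
\]
```

Under the assumed value $k = 0.1$, a pressing force of 2 N would need to change by about 0.2 N to be noticed, whereas a force of 10 N would need to change by about 1 N. The practical implication for haptic interfaces is that the smallest useful step in feedback intensity grows with the intensity already being rendered.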

Figure 1. CNN (deep learning)-based tactile search engine (Hanamitsu et al., 2015).

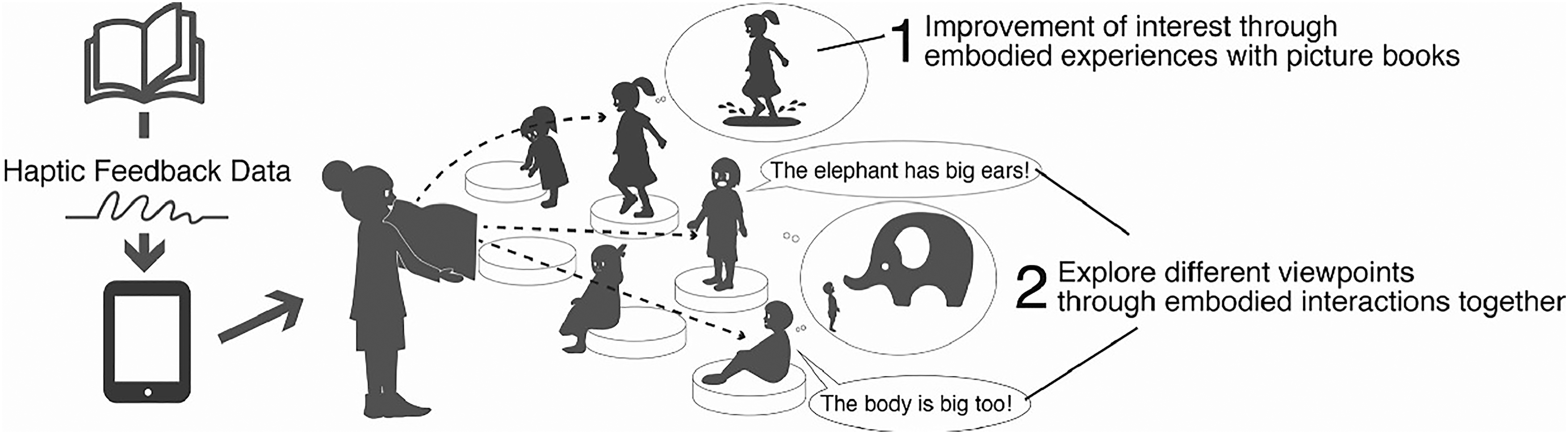

Another exciting application of haptic technologies is their incorporation into societal settings, which can yield unexpected benefits and significantly advance embodied learning for children and deaf individuals. This innovation enriches their capacity to engage with previously inaccessible experiences, such as dance performances and musical displays. Dr. Minamizawa's related developments include the “Kinder Buru Buru Cushion,” a joint project with Toppan Printing Co., Ltd., and Froebel-kan Co., Ltd. (Shibasaki et al., 2020). This cushion vibrates in sync with the content of children's picture books (Figure 2). Another development, “Karada Tap,” transfers haptic sensations and rhythmic cues from the stage to audience seats, enabling deaf and disabled individuals to enjoy tap dance performances or stage plays (Shibasaki et al., 2016) (Figure 3). Finally, FeelTech™ (NTT Docomo, 2023), developed for NTT Docomo, digitizes touch and allows the sharing of tactile sensations with people in distant locations. Just as a camera captures video for display on a TV or a microphone records audio for broadcast over radio, FeelTech™ facilitates real-time communication through touch, compensating for information that sight and hearing alone cannot fully convey and thereby enhancing life and memory (Figure 4).
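The capture, transmission, and playback analogy can be made concrete with a minimal sketch. It assumes that a tactile signal is represented as a stream of vibration samples normalized to the range [-1, 1], much like audio, and that each frame is quantized to 16-bit integers before being sent over a network. The sample rate, frame format, and function names are hypothetical choices made for illustration; they do not describe the actual FeelTech™ or TECHTILE implementations.

```python
import struct
from typing import List

# Assumed sampling rate for the vibration signal (illustrative only).
SAMPLE_RATE_HZ = 1000


def encode_frame(samples: List[float]) -> bytes:
    """Quantize normalized samples to signed 16-bit little-endian integers.

    Values outside [-1, 1] are clipped so they cannot overflow the format.
    """
    clipped = [max(-1.0, min(1.0, s)) for s in samples]
    ints = [int(round(s * 32767)) for s in clipped]
    return struct.pack(f"<{len(ints)}h", *ints)


def decode_frame(payload: bytes) -> List[float]:
    """Recover normalized samples from a payload produced by encode_frame."""
    count = len(payload) // 2
    ints = struct.unpack(f"<{count}h", payload[: count * 2])
    return [i / 32767 for i in ints]


if __name__ == "__main__":
    captured = [0.0, 0.5, -1.0, 2.0]   # sensor side (2.0 will be clipped)
    payload = encode_frame(captured)    # bytes that would travel over the network
    replayed = decode_frame(payload)    # actuator side
    print(len(payload), replayed)
```

On the receiving side, the decoded samples would drive a vibration actuator in the same way that decoded audio drives a loudspeaker. A real system would also need timestamps and buffering to cope with network jitter, which this sketch omits.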

Figure 2. Kinder Buru Buru Cushion (Minamizawa, 2018).

Figure 3. Karada Tap (Li et al., 2024).

Figure 4. FeelTech™ (NTT Docomo, 2023).

Moreover, the intersection of haptic design with the burgeoning field of human augmentation heralds a new era of sensory enhancement and bodily awareness. Human augmentation technologies, encompassing prosthetics, exoskeletons, and wearable devices, seek to augment or enhance human capabilities, bridging the gap between the biological and the technological. Haptic design plays a pivotal role in this convergence by providing tactile feedback that not only enriches user experience but also fosters a deeper sense of embodiment and body awareness. Research by Nishio et al. (2012) has demonstrated how haptic feedback can be integrated into prosthetic limbs to provide users with a more intuitive sense of proprioception and limb position, facilitating smoother motor control and enhancing overall body awareness. This symbiotic relationship between haptic design and human augmentation underscores the transformative potential of tactile feedback in fostering a more profound connection between humans and their augmented selves. As such, the exploration of haptic design in the context of human augmentation opens up new avenues for enhancing not only sensory perception but also telexistence and agency.

Telexistence

In Dr. Minamizawa's view, the concept of “Telexistence,” a groundbreaking fusion of “Tele” and “Existence,” redefines the boundaries of human interaction with remote environments. Through the utilization of robotic or virtual avatars, users navigate and engage with distant locales, transcending physical barriers and limitations. He highlighted pioneering projects such as the Telexistence Surveillance Vehicle in collaboration with Obayashi Corp. (Kamijo et al., 2016) and the HUG Project in partnership with Ducklings Corp. (Saraiji et al., 2015), showing that the related technology not only revolutionizes disaster surveillance but also presents a solution to age-related challenges. By embodying human capabilities in alternative forms, telexistence opens new horizons in human-robotic or human-avatar synergy, promising to reshape our understanding of spatial relationships and redefine the very essence of presence in scenarios of agent-based interaction. The effectiveness of telexistence might depend heavily on the user's sense of presence, wherein they feel immersed in the remote environment rather than their physical surroundings. This concept of presence is a subjective experience that requires focusing on a coherent set of stimuli provided by remote functions. Further elaboration is warranted regarding the different levels of achieving the complex phenomenon of presence, particularly from the embodiment perspective: the phenomenology includes the sense of self-location (SoS), the sense of agency (SoA), and the sense of body ownership (Braun et al., 2018; Kilteni et al., 2012).

Sense of self-location

In robotic teleoperation, the SoS plays a critical role in enhancing operator performance and task execution efficiency. Self-location, or the operator's perceived position relative to a remote environment, significantly influences the teleoperator's spatial awareness and interaction capabilities. Humans represent space in several coordinate systems, such as head-centered, arm-centered, body-centered, and world-centered coordinates, and switch among them unintentionally when performing different tasks (Andersen et al., 1993). Advanced teleoperation systems thus aim to create an immersive experience where the operator feels physically present at the robot's location. This immersive SoS can be achieved through high-fidelity sensory feedback, such as visual, auditory, and haptic cues. Effective self-location is essential for tasks requiring precise manipulation and navigation, as it directly impacts the operator's ability to make real-time decisions based on spatial relationships.
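To illustrate what switching between such coordinate systems involves computationally, the following sketch converts a point between a head-centered frame and a world-centered frame. It is a deliberately simplified 2D case that assumes the head pose is fully described by a position and a yaw angle; the function names are hypothetical and the example is not drawn from Andersen et al. (1993) or from any particular teleoperation system.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def head_to_world(point_head: Point, head_pos: Point, head_yaw_rad: float) -> Point:
    """Express a head-centered point in world-centered coordinates.

    The point is rotated by the head's yaw and then shifted by the
    head's position in the world.
    """
    x, y = point_head
    c, s = math.cos(head_yaw_rad), math.sin(head_yaw_rad)
    return (head_pos[0] + c * x - s * y, head_pos[1] + s * x + c * y)


def world_to_head(point_world: Point, head_pos: Point, head_yaw_rad: float) -> Point:
    """Inverse mapping: express a world-centered point in the head frame."""
    dx = point_world[0] - head_pos[0]
    dy = point_world[1] - head_pos[1]
    c, s = math.cos(head_yaw_rad), math.sin(head_yaw_rad)
    return (c * dx + s * dy, -s * dx + c * dy)


if __name__ == "__main__":
    # A target one unit straight ahead of a head located at (1, 2)
    # and turned 90 degrees counterclockwise.
    target_world = head_to_world((1.0, 0.0), (1.0, 2.0), math.pi / 2)
    print(target_world)
    print(world_to_head(target_world, (1.0, 2.0), math.pi / 2))
```

A teleoperation interface performs many such conversions, for instance between the operator's head, the robot's camera, and the robot's hand, which is one reason a mismatch between frames can disturb the operator's sense of where they are.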

Several user interface factors that influence the SoS have been discussed in previous studies. One of the frequently examined factors is the field of view (FOV) (Nakano et al., 2021). The display perspective presented by the virtual or digital camera determines how users can remotely interact with the virtual or real environment, what they focus on, and how their controlled agents adapt to the context. The impact of first-person FOV versus third-person FOV on the SoS remains uncertain. Pfeiffer et al. (2013) suggest that developing full-body illusions (FBIs) from a third-person perspective (3PP-FBI) could better enhance the SoS. These 3PP-FBIs are characterized by self-identification with an entire body in extrapersonal space rather than with a body part in peripersonal space. Conversely, other researchers (Huang et al., 2017; Petkova et al., 2011) argue that collocation between the virtual and real body (first-person perspective) elicits a stronger SoS than non-collocated perspectives (third-person perspective). Haptic design is another potential approach to increasing the SoS. Synchronous tactile and visual stimuli have been shown to enhance the SoS with the remote agent (Salomon et al., 2013). The well-known Rubber Hand Illusion experiment (Botvinick & Cohen, 1998) demonstrates this principle: self-location can be altered when the touch seen on a rubber hand (visual) is synchronized with the touch felt on the hidden real hand (tactile). In the context of robotic teleoperation, recognizing the coordinates of the robotic hand can significantly improve operability.

Self-consciousness is another possible issue that has been discussed for its effects on the spatial relationship between the body consciousness and the agent (Lenggenhager et al., 2009; Serino et al., 2013). It encompasses self-identification and awareness of one's own body, influencing how individuals perceive their location and interact with their environment. This intrinsic awareness affects the ability to accurately judge spatial relationships and navigate effectively, particularly in contexts where real and virtual environments intersect, such as in robotic teleoperation and virtual reality. Most previous studies on artificial avatars have focused on using highly human-like avatars or mannequins to enhance the sense of presence and realism for the operator. While findings from these studies (including those on artificial limbs) are often referenced in robotic teleoperations (Kheddar, 2001; Kheddar et al., 2014), technological research in this field has generally concentrated more on the high fidelity of sensory feedback displays (such as tactile feedback) at the “master” station, task performance, and the motions of the robot in remote locations rather than on issues related to the operator's self-consciousness.

Sense of agency

SoA encapsulates the subjective sensation of instigating and regulating an action, epitomizing the sense of authorship evoked when stating phrases such as “I am the one steering this tank” or “I must have been the one to hit the ball.” As delineated by Cornelio et al. (2022), this feeling of initiating and controlling actions allows individuals to distinguish their own actions from those generated externally. While SoA is often associated solely with motor control, it also encompasses intentional aspects that extend beyond the body's confines. As indicated by Lopez-Sola et al. (2021), SoA is a belief-based action-effect paradigm suggesting that the user's intention to act and the resulting action are sufficient to generate a SoA, irrespective of how the action is executed, whether mentally or through physical motor movements. This broader understanding of SoA underpins various ethical and legal concepts, including moral responsibility, free will, and culpability.

In essence, the SoA emerges when individuals act as agents to take control of their own actions. Through our interactions with our bodies, we exhibit precise motor control and maintain awareness of our movements, exemplified by proprioception. Agency involves the coordination of bodily movements with our intentions and the perception of movement, intricately linked with awareness and planning (Blanke & Metzinger, 2009). This SoA extends to the manipulation of tools, where understanding sensorimotor control and the relationship between actions and outcomes is crucial. The alignment between intention and outcome further enhances this SoA. Even in scenarios involving the control of robots or virtual avatars, individuals retain a SoA despite deviations from physical reality (Kilteni et al., 2012). That is, this resilience is apparent even when interacting with non-realistic avatars or those substantially different from one's physical body. Although adjustments may be necessary when the effector (e.g. robot hands, avatar gestures) differs from the real body, individuals can adapt through processes like proprioceptive adjustments, motor learning, or recalibration of virtual space (Henriques & Cressman, 2012). However, ensuring a high level of perceptual-motor fidelity between individuals and their agents remains paramount.

Bodily ownership

In addition to the SoA achieved through regulating actions, body ownership is described as the feeling of owning one's body (or an artificial body) (Longo et al., 2008) and experiencing the body (real or artificial) as the source of sensations (Tsakiris, Prabhu, & Haggard, 2006). Specifically, while SoA arises solely from voluntary actions, body ownership is experienced during both voluntary actions and passive experiences. Body ownership denotes the unique perceptual status of one's own body, imbuing bodily sensations with a distinctly personal quality in remote contexts and virtual reality, significantly impacting the sense of presence. Recently, the cognitive neuroscience community has increasingly agreed that the perception of one's own body in space critically relies on multisensory integration (Ehrsson, 2012; Kilteni et al., 2015; Lopez et al., 2008). That is, ownership illusions have demonstrated that users not only respond to threats to their virtual or rubber body parts (Botvinick & Cohen, 1998; Yuan & Steed, 2010), such as virtual arms (Slater et al., 2008), legs (Kokkinara & Slater, 2014), or FBIs (Petkova et al., 2011), but also integrate information from afferents in the skin, muscles, and tendons, as well as visual, vestibular, and auditory signals, in the cortical convergence of body signals (Angelaki & Cullen, 2008; Hagura et al., 2007). These findings underscore the importance of bodily agency perception and the sensation of ownership.

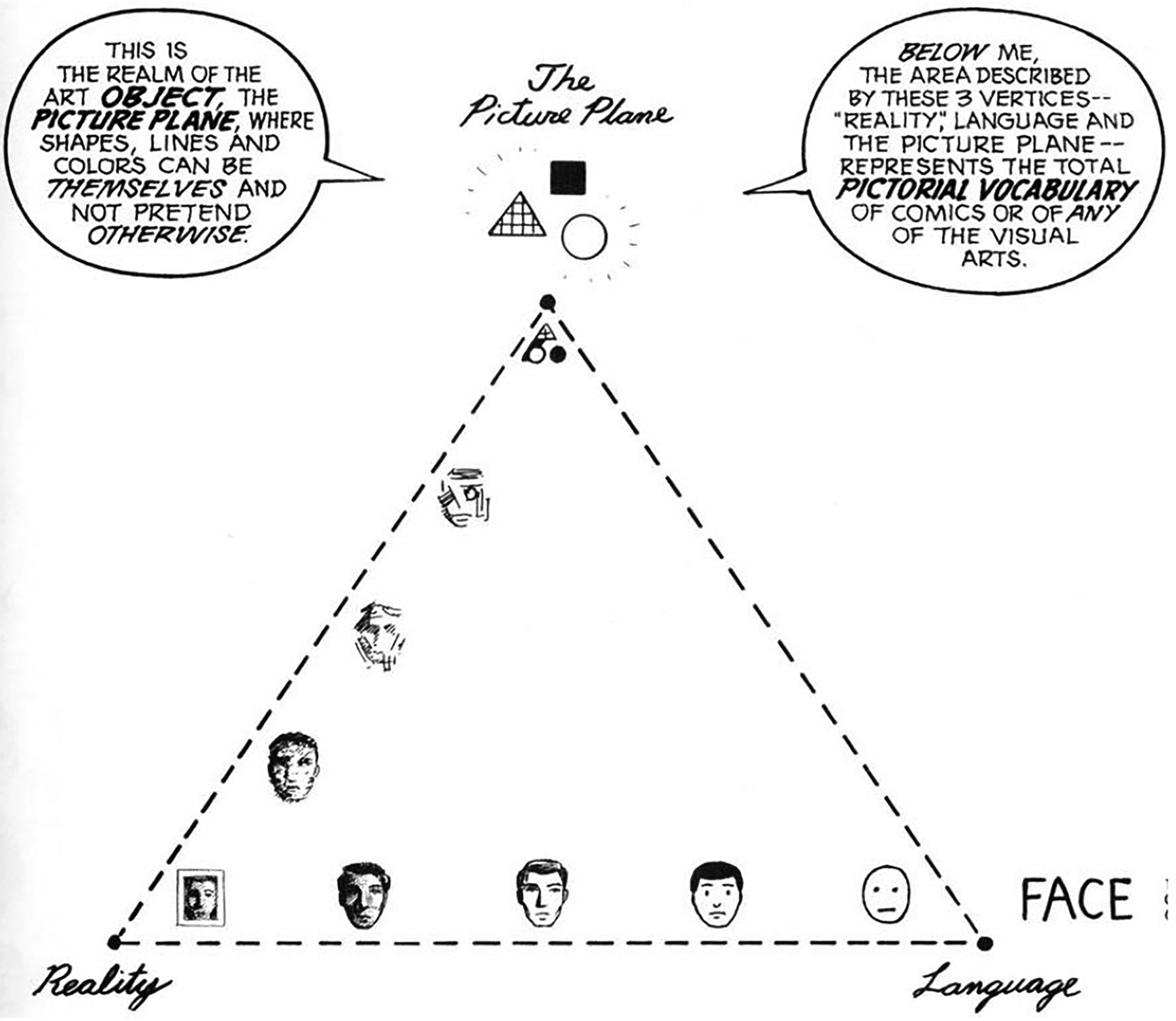

While considerable attention has been devoted to examining the resemblance between the user and the controlled agent (Guterstam et al., 2015; Piryankova et al., 2014), there remains a notable gap in research regarding how structural changes in the agent's morphology impact users’ perceived body ownership. Besides visual and visuotactile stimuli, the design of avatar appearances significantly impacts the illusion of body transfer and the sense of body ownership. McCloud's (1993) Realism Triangle Model and Peirce's (1894) Theory of Signs are widely used frameworks for categorizing graphic interfaces and character appearances. Using two dimensions, detailed features and abstraction level, McCloud (1993) classifies visual representations by their physical resemblance (Realism), causal relationships or metaphors (Index), and abstractions or arbitrary definitions (Symbol). As shown in Figure 5, the horizontal axis shows a decrease in the level of detailed features from left to right, with the most realistic images (such as photographs) located at the bottom left, presenting detailed features realistically. In contrast, the bottom right depicts iconic representations that utilize metaphors or references. Lastly, the geometric figures at the top represent abstract images, whose symbolic meanings are culturally or thematically influenced.

Figure 5. Realism triangle model (McCloud, 1993).

Peirce's (1894) Theory of Signs classified symbols based on the principles of semiotics. Symbols are meaningful basic units defined in two parts: the signifier and the signified. The former is a sign with specific meaning (often represented as sounds or images) that evokes associations with specific objects or concepts, while the latter refers to the concept associated with this sign image. Although McCloud's Realism Triangle Model may differ in terminology, it is quite similar to Peirce's types of symbols in terms of representational meaning, namely realistic, metaphorical, and abstract symbols. Integrating the perspectives of these scholars, Rogers (1989) proposed four typologies that might be more suitable for agent morphology design:

Resemblance: Direct similarity to the objects they represent, such as the lens icon for a phone camera.

Exemplar: Common examples of the object category they represent, such as using a trowel or broom to represent gardening.

Symbolic: Conveying abstract concepts, such as a broken wine glass representing fragility.

Arbitrary: Having no inherent relationship with the object or concept, requiring learning of their relationship, such as the on/off button of a computer.

The above theoretical frameworks corroborate the critical impact of agent morphology on user experience in virtual or remote scenarios. Agents closely resembling or personalized by the user can enhance the illusion of body transfer, body ownership, and presence (Gorisse et al., 2019; Waltemate et al., 2018). However, the findings of Lugrin et al. (2015) highlight the nuanced nature of this relationship. While robotic and cartoon agents excel in inducing a strong body transfer illusion, overly realistic humanoid agents may evoke discomfort due to the uncanny valley effect. This underscores the importance of understanding how different typologies of agent morphology influence user perception and experience in virtual environments, offering insights for optimizing design choices to create immersive and engaging embodiment experiences.

Human augmentation

Not a “Tool” but an “Integration”

Since the emergence of direct manipulation and graphical user interfaces (GUIs) (Engelbart, 1968; Lipkie et al., 1982; Shneiderman, 1982), mainstream human–technology interaction has undergone gradual evolution. As the foundational interaction model, GUIs have enabled more direct, efficient, and robust tool usage. Recent research in the field has moved quickly towards pervasive and intelligent interaction, including agent-based interaction (Macy and Willer, 2002), embodied interaction (Dourish, 2001), and conversational interfaces (Kocaballi et al., 2019). Despite these advancements, GUIs maintain a clear delineation between the user and the system, viewing computing devices primarily as tools. Human augmentation presents a paradigm shift that builds upon these established frameworks by prioritizing human action in interaction design. This approach entails enhancing human actions with technologies focused on perception and manipulation.

In his presentation, Dr. Minamizawa emphasized the immense potential of human augmentation technology in digitally transforming human bodily experiences, encompassing sharing, enhancement, and creation. In addition to the telexistence experience, which enables control over action, sensation, and authorship, Dr. Minamizawa advocates for cybernetic avatars (CAs) not as a conventional “tool” but as “alternative bodies,” conceived through three core constructs: (1) cognitive augmentation, (2) parallel agency & experience sharing, and (3) collective abilities. Cognitive augmentation offers users an alternative body capable of dynamically harnessing their potential in response to varying situations and environments. Parallel agency & experience sharing presents an alternative body equipped to perceive and engage across multiple spaces simultaneously. Lastly, collective abilities introduce an alternative body capable of amalgamating the diverse skills of multiple individuals, transcending individual limitations to achieve collective goals.

The “Moonshot” project stands as one of Dr. Minamizawa's most ambitious research endeavors. Centered on these three constructs, it advances CA technology to empower individuals to unlock their full potential and to facilitate the global exchange of diverse skills and experiences. Recognizing the complex social and ethical considerations inherent in the shared utilization of physical skills and experiences, the “Moonshot” project is dedicated to establishing the groundwork for the seamless integration of embodied skills and experiences into societal frameworks. This initiative not only aims for these advancements to become commonplace but also strives for their harmonious integration into the fabric of society. Looking forward, the “Moonshot” project vision extends to 2050, envisioning a society where individuals can seamlessly connect and collaboratively craft embodied experiences through CAs. Such a vision aligns with the UN SDGs 3, 10, and 16, underscoring the commitment to promoting health and well-being, reducing inequalities, and fostering peaceful and inclusive societies.

The Avatar Robot Cafe DAWN ver.β (https://dawn2021.orylab.com/en/) (Figures 6 and 7) serves as a highly successful showcase of the “Moonshot” project. This permanent experimental cafe offers a unique environment where individuals facing challenges in mobility can remotely operate the avatar robots OriHime and OriHime-D from their homes or hospitals, thereby seamlessly providing services to patrons. These avatar robots serve as interactive digital extensions of human capabilities, made possible through sophisticated sensing and actuation technologies, as well as the fusion and fission of information. The design of DAWN aligns with Moore’s (2008) concept of “human enhancement,” which entails efforts to surpass the current limitations of the human body through both natural and artificial means, either temporarily or permanently. DAWN exemplifies various forms of human augmentation as described by Raisamo et al. (2019): augmented senses, augmented action, and augmented cognition.

Augmented senses: This enhancement extends the range of reality humans can perceive by integrating multisensory information.

Augmented action: This focuses on improving human physical abilities by sensing human actions and mapping them to actions in local, remote, or virtual contexts. Technological advancements such as artificial limbs, speech recognition, gaze controls, and teleoperation have enabled more precise functions, with robotics playing a significant role.

Augmented cognition: This refers to a form of human–system interaction that closely couples user and technology through physiological and neurophysiological sensing of the user's cognitive state. This paradigm aims to transform human–technology interaction by adapting user–system interactions in real-time based on cognitive state.

Figure 6. Reception of cafe DAWN ver.β.

Figure 7. Order delivery of avatar robot.

The remote operation of avatar robots at Cafe DAWN enhances user capabilities by enabling individuals with physical limitations to perform tasks and provide services in a physical space. The multisensory interfaces employed to control the robots augment sensory perception, offering users with limited mobility a more immersive and effective means of interacting with their environment. Additionally, the system's capacity to adapt to the cognitive state and operational needs of the users illustrates augmented cognition, optimizing the interaction between the user and the robotic system to ensure a seamless and efficient service experience.

A new paradigm

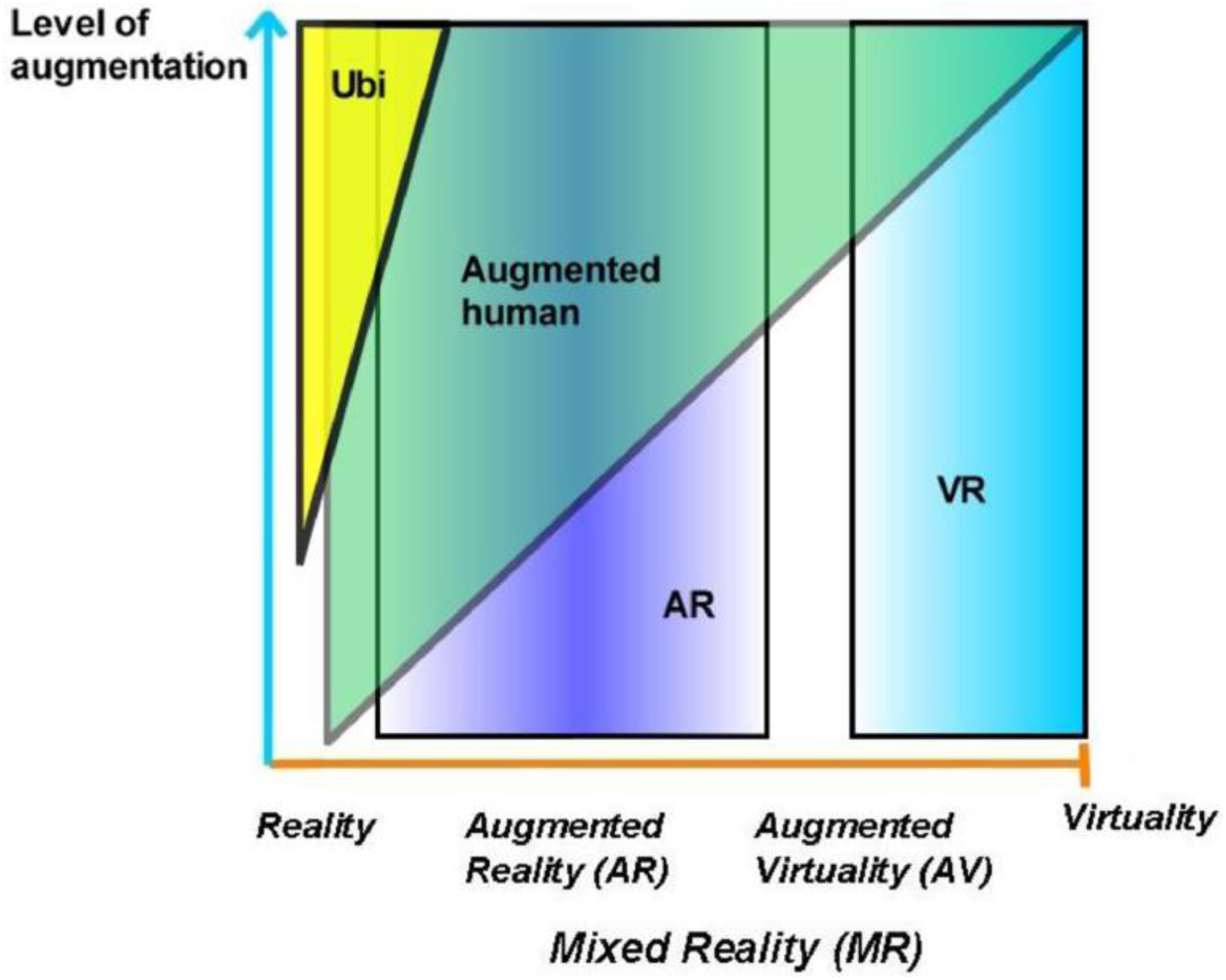

As augmented technology has rapidly evolved, the original framework has expanded to address the growing complexity and capabilities of human augmentation technologies. This evolution has led to the conceptualization of a new Human-Computer Integration paradigm, which not only includes augmented reality (AR) and augmented virtuality (AV) but also integrates essential components of wearable interactive technology with advancements in augmented senses, augmented action, and augmented cognition. To gain a comprehensive understanding of this emerging technology for effective research and design, Raisamo et al. (2019) extended Milgram and Kishino's reality-virtuality (RV) continuum (1994) to form the human augmentation technology continuum (Figure 8). Milgram and Kishino's RV continuum, introduced in 1994, has been instrumental in framing the development and understanding of virtual and AR technologies. This continuum spans from the completely real environment to the completely virtual environment, encompassing AR and AV in between. Figure 8 illustrates the extension of Milgram and Kishino's RV continuum by adding a y-axis representing the level of augmentation, which includes the number of employed sensors or tools and the integration level of augmented sense, augmented action, and augmented cognition.

Figure 8. Human augmentation continuum (Raisamo et al., 2019).

The augmented human paradigm utilizes elements from AR, VR, ubiquitous computing, and other user interface paradigms, merging them in novel ways. This continuum reflects a broader spectrum of human enhancement possibilities, from sensory extensions and physical augmentations to cognitive enhancements. It enables a more comprehensive understanding of how technology can enhance human capabilities in diverse contexts.

Based on this human augmentation continuum, it is essential to identify which forms of human augmentation technologies are best suited to address specific user situations or problems. Additionally, understanding the social impacts and considerations associated with these technologies, both positive and negative, has become a critical area for further exploration. On the positive side, human augmentation technologies can significantly enhance quality of life by improving accessibility, increasing productivity, and enabling new forms of interaction and experience. For instance, AR can assist individuals with visual impairments by overlaying critical information in their FOV, while augmented action technologies can aid those with mobility issues in performing tasks that would otherwise be difficult or impossible. Augmented cognition can enhance learning and memory, providing significant benefits in educational and professional settings.

However, there are also potential negative social impacts to consider. Privacy concerns arise as augmented technologies often rely on extensive data collection and monitoring. There is also the risk of exacerbating social inequalities, as access to these advanced technologies may be limited to those who can afford them (Kapeller et al., 2021). Moreover, there may be psychological effects, such as dependency on technology or a diminished sense of personal achievement. Therefore, it is imperative to explore these issues comprehensively to ensure that the deployment of human augmentation technologies maximizes benefits while minimizing potential harms. This involves not only technological development but also ethical, social, and policy considerations to guide the responsible use of these innovations.

Opportunity and challenges

From an innovation adoption perspective, user and societal perceptions of new and emerging technologies are crucial for future development. From the user's view, Whitman (2018) conducted a survey to understand the characteristics of individuals who support or oppose human enhancement technologies. The study investigated five types of human enhancements: (1) Therapeutic use to restore ability, (2) Prevention with known risk or relevant family history, (3) Prevention without known risk or family history, (4) Enhancement beyond normal human ability, and (5) Enhancement significantly beyond normal.

The survey began with an assessment of technologies aimed at correcting health deficiencies and progressed to capture public opinion on using these technologies to enhance normal human abilities. Results showed that 96% of respondents supported vision enhancements as therapeutic interventions or preventive measures. However, support dropped significantly when these enhancements were elective or exceeded normal human capabilities. Additionally, over 95% of respondents agreed that technological aids, such as medications for cognitive decline due to aging or illness, are appropriate as therapeutic interventions. With the increasing prevalence of an aging society, finding new ways to address age-related disabilities is imperative. Human augmentation technologies have the potential to enable better and longer independent living, addressing the urgent need for innovative solutions to cope with and combat age-related disabilities.

A similar conclusion was reached by Villa et al. (2023), who conducted two scenario-based studies with 506 participants from four countries. Their research indicated that respondents were more positive about augmentations aimed at compensating for a missing skill. This study also suggested that different types of augmentations elicit varying levels of concern: cognitive augmentations were less well-received than motor augmentations, and sensory augmentations raised higher privacy concerns. Rousi and Renko (2020) examined cognitive enhancement from the body's perspective, categorizing integration levels as Endo (in-body), Exo (wearable/embodied), and External (environment).

In particular, their study addressed users’ emotional attitudes toward human augmentation technologies, wearable devices, smart clothing, smart glasses, and cognitive enhancement games. They found that people are less willing to use brain or eye implants than smart glasses or smart textiles, suggesting that the level of integration significantly affects acceptance of augmentation. Collectively, these studies highlight the nuanced perceptions of human augmentation technologies and underline the importance of developing targeted and acceptable solutions.

From a societal perspective, it is widely acknowledged that public perception of a technology can significantly shape its development and adoption. Social acceptability is the most well-known and extensively studied factor influencing the adoption of emerging technologies (Lu et al., 2022; Muehlhaus et al., 2022). Although human augmentation can enhance the user's quality of life (Menuz et al., 2013), concerns about its broader societal impacts persist (Bavelier et al., 2019). Lack of social acceptability can affect the self-perception of augmented users and deter early adopters from embracing new technologies (Koelle et al., 2017). Factors influencing public attitudes towards human augmentation include social manipulation, accessibility, autonomy, and safety (Fitz et al., 2014; Harborth, 2022; Ho et al., 2022).

Concerns about social manipulation stem from the ability of augmented sensing technologies to generate fabricated information, potentially subjecting users to the influence of large corporations and compromising the credibility of information (Maras & Alexandrou, 2019). Accessibility is another significant concern, as the high cost of these technologies may make them unattainable for socially vulnerable groups, such as the impaired or elderly, who stand to benefit the most from them (Obrenovic et al., 2007). Autonomy can be compromised when technologies such as sensory sharing encroach on personal independence (Wolf et al., 2016). The ethical complexities are particularly pronounced with cognitive augmentations, as neurotechnological implants and chemical stimulants carry risks of adverse side effects and potential for misuse, including in malicious activities such as terrorism (Harborth, 2022; Ho et al., 2022; Tennison & Moreno, 2012). Public concerns about safety center predominantly on the extensive reach of augmentation technologies, including big data, sensors, and surveillance systems. These technologies have the potential to exert unprecedented control over individuals, fueling fears of invasive monitoring and privacy erosion. Unauthorized access to large volumes of personal data could also result in identity theft and other forms of cybercrime (Davies et al., 2015; Khamis & Alt, 2021; Raisamo et al., 2019).

Nevertheless, human augmentation represents a new technological paradigm with considerable potential for innovation. It is also a venture into uncharted territory, and overlooking potential adverse effects on individuals and society could lead to undesirable outcomes in the future.

Conclusion

In 1964, McLuhan's theory of the “extensions of man” posited that technology and media serve as extensions of human faculties, enhancing our ability to interact with and perceive the world. Each technological advancement extends a part of the human body or mind, thereby transforming societal structures and personal experiences. McLuhan's concept from decades ago resonates with today's developments in human augmentation. Artificial enhancements, such as prosthetics, wearable technology, and cognitive implants, embody McLuhan's idea by extending human capabilities beyond their natural limits. These modern augmentations not only supplement human abilities but also redefine the boundaries of what it means to be human.

Reflecting on the intricacies of haptic design and human augmentation, as elucidated in Dr. Minamizawa's keynote address, one is drawn into a realm where technology and human experience intertwine, promising transformative possibilities for our engagement with the world. The journey into haptic design reveals a rich tapestry of sensory exploration, where the nuances of touch are dissected and reconstructed to bridge physical and virtual realms. The concept of telexistence further expands these horizons, offering a glimpse into a future where physical presence transcends spatial limitations. Through robotic avatars and virtual interfaces, users navigate remote environments with a sense of agency (SoA) and of ownership, challenging traditional notions of presence and interaction.

Moreover, the convergence of haptic design with telexistence and human augmentation unveils a new frontier in sensory enhancement and bodily awareness. By integrating tactile feedback into prosthetics and wearable devices, Dr. Minamizawa and his peers propel us towards a deeper connection with our augmented selves, blurring the boundaries between biology and technology. This symbiotic relationship between humans and machines heralds not just enhanced capabilities but a reimagining of what it means to be embodied in an increasingly digital world.

Yet, amidst these advancements lies a call for deeper understanding and ethical consideration. As we delve into the realms of self-location, agency, and bodily ownership, we confront questions of identity and perception in virtual and remote contexts. The exploration of user interfaces and avatar morphology underscores the importance of design choices in shaping our experiences, urging us to tread carefully as we navigate this ever-expanding landscape. The journey towards human augmentation is not without its challenges. Perceptions of these technologies vary widely, from therapeutic interventions to enhancements significantly beyond normal human abilities. Understanding these nuanced perspectives is crucial for the responsible development and deployment of human augmentation technologies. Moreover, societal concerns about privacy, accessibility, autonomy, and safety underscore the need for ethical, social, and policy considerations to guide their implementation.

In navigating these opportunities and challenges, it becomes evident that telexistence and human augmentation represent not just technological advancements but also societal transformations. By embracing a human-integration approach and fostering inclusive dialogue, we can ensure that the future of human augmentation is one of empowerment, equity, and collective progress.