Abstract

Misinformation is a societal practice and challenge that demands sustained attention worldwide. In this essay, we discuss theoretical advancements and empirical evidence in misinformation research, reviewing definitions of misinformation, research orientations, research perspectives, and vulnerable groups. We then review misinformation fueled by generative artificial intelligence (AI) and the evolving conceptualization of literacy. To counter AI-fueled misinformation, we argue that developing ethical AI requires regulation both by AI practitioners and through legislation, and that using AI ethically requires efforts in AI literacy education and research. AI literacy should include (a) users’ understanding and critical evaluation of the knowledge, values, and cultures within which AI systems function, and their implications for AI-generated content, (b) users’ strategic interpretation and proper use of AI-generated content, and (c) users’ utilization of feedback mechanisms to promote institutional management of AI power.

Introduction

With the emergence of social media and mobile media in the early 2000s, misinformation, a communication phenomenon that has existed for thousands of years, began to spread faster than ever (Ha et al., 2021). Around the 2016 U.S. presidential election, misinformation and its news form, fake news, were suggested to have played a prominent role in influencing voters’ choices and accelerating political polarization (Howard et al., 2018). Later, the COVID-19 pandemic fueled a worldwide surge in misinformation and fake news with devastating consequences (Roozenbeek et al., 2020). Because such misinformation is inextricably interwoven with social events and realities, it has attracted widespread public attention and concern; in other words, it has become an important public agenda item around the globe.

Since 2017, academia has witnessed exponential growth in research on misinformation and fake news. In light of the prevalence and persistence of misinformation and fake news, researchers have examined not only individuals’ exposure to, consumption of, and sharing of fake news during the U.S. election season (e.g. Allcott & Gentzkow, 2017; Grinberg et al., 2019) but also the spread of fake news in other social domains, such as natural disasters, science, terrorism, finance, and health (e.g. Krishna & Thompson, 2021; Vosoughi et al., 2018), and in non-Western socio-cultural contexts, such as Asian societies (Chen et al., 2022). Following the investigation of the components and communication of misinformation, research on misinformation correction has surged. Studies have probed the effectiveness of various message strategies for correcting misinformation, such as politeness (Malhotra & Pearce, 2022), debiasing (Dai et al., 2021), and prebunking and inoculation (Lewandowsky & van der Linden, 2021).

The importance of media literacy and related interventions in misinformation correction campaigns has been increasingly emphasized. Media literacy originally described the skill-based ability to access, analyze, evaluate, and communicate media information, typically applied in K-12 and higher education (Aufderheide, 1997; Christ & Potter, 1998). As the representation of knowledge and the conduct of communication constantly change with advances in media and technology, media literacy can be broadly considered “part of a package of measures to lighten top-down media regulation by devolving responsibility for media use from the state to individuals, a move which can be interpreted either as ‘empowering’” (Livingstone, 2004, p. 10). The prevalence of misinformation and fake news on social media poses challenges to media literacy education and warrants research on digital media literacy programs “outside the traditional classroom” (Lee, 2018, p. 461).

Today, as generative artificial intelligence (AI) boosts the creation and spread of misinformation (Galaz et al., 2023; Zhou et al., 2023), both misinformation and the literacy needed to correct it should be reconsidered. This essay first provides a brief overview of theories and empirical research under the umbrella term misinformation. We then discuss how generative AI may influence the production and communication of misinformation and how the definition of literacy, and literacy interventions, should adapt to the development of generative AI. We close with a call for future research on misinformation and literacy.

An Overview of Misinformation: Theory and Empirical Evidence

Characterizing misinformation and related research

To avoid blurring the definitions and conceptualizations of misinformation-related terms, in this essay we primarily adopt the term

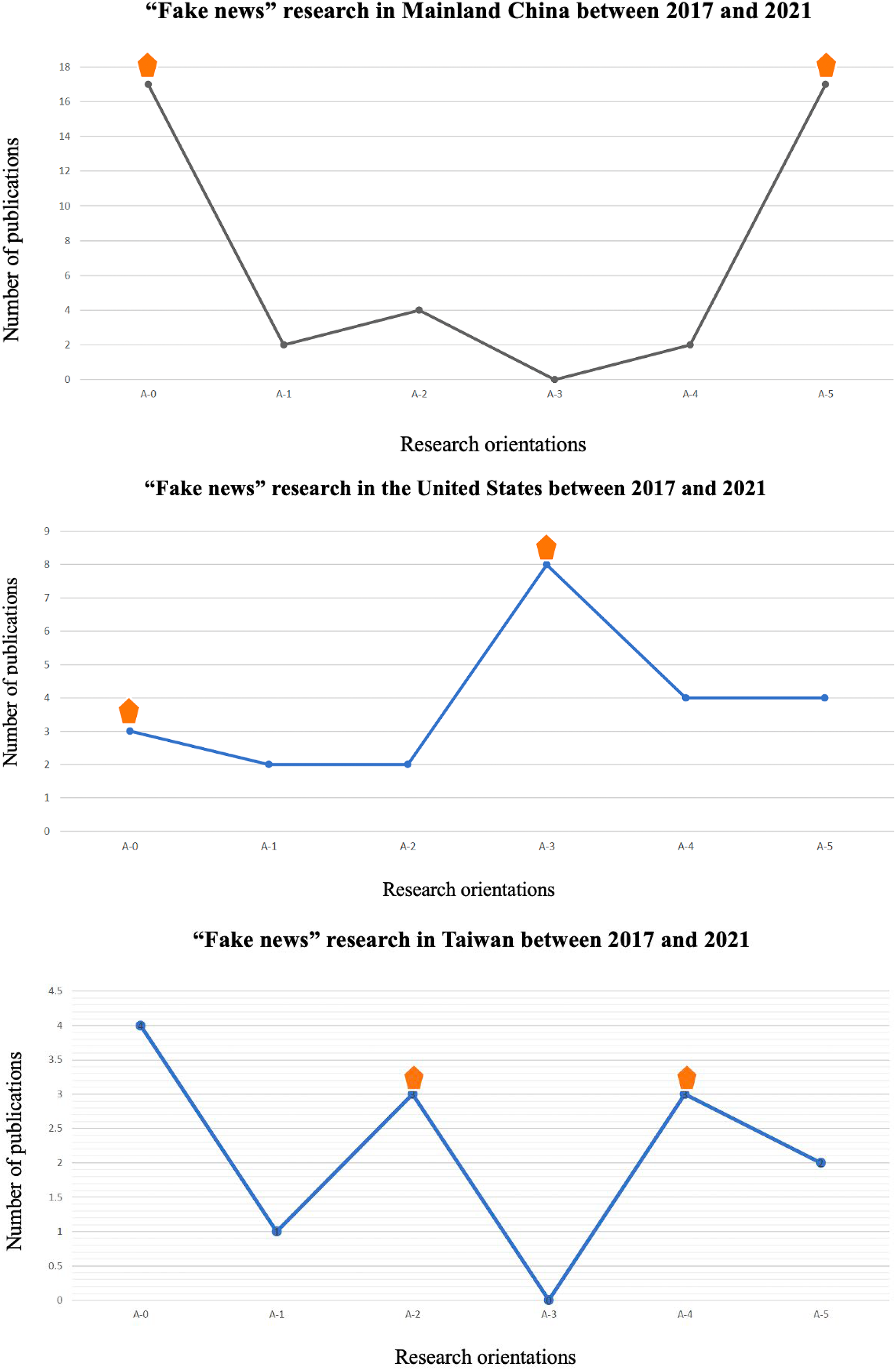

The orientations and topics of misinformation research can differ across regions. The authors conducted a brief literature review of articles related to “fake news” published in leading communication journals in mainland China, Taiwan, and the United States to explore regional differences in research orientation. Because the 2016 U.S. presidential election was a catalyst for the spike in misinformation research (Li, 2020), the review covered the period from 2017 to 2021. The preliminary analyses (see Figure 1) showed that a large proportion of the articles published in mainland China and Taiwan were review articles, case analyses, and essays (often non-empirical), and that they placed great emphasis on institutional-level factors surrounding fake news and on legislation against fake news. In comparison, most articles published in the United States examined the influence of fake news on individuals’ attitudes, behaviors, and social interactions.

Figure 1. Research orientations in three regions between 2017 and 2021.

Misinformation, media bias, and psychological determinants

Research on the production of misinformation can be traced back to investigations of media bias, which are anchored in the perspective of structural influences. As people increasingly experience institutions, events, and processes beyond their direct reach through the media, the media have become a vital channel for information exchange and communication and have bred mediated politics (Strömbäck, 2008). This mediated communication often goes hand in hand with bias in media coverage, characterized by a lack of accuracy and realism, a blurring of fact and opinion, and slant (Hackett, 1984; McQuail, 1996). In political communication, media bias manifests as partisanship and biased reporting that favors one political actor, position, or stance over another. More specifically, bias in political coverage may appear in the visibility of political actors or parties (i.e. visibility bias), in their evaluation (i.e. tonality bias), and in the association between political actors or parties and certain issues (i.e. agenda bias) (Eberl et al., 2017). Nevertheless, misinformation in other social domains (e.g. health-related misinformation) has been found to be created mostly by individuals with no official or institutional affiliations (Wang et al., 2019), which has shifted many researchers’ attention from the production of misinformation to individuals’ reception and formation of misinformation at the micro level.

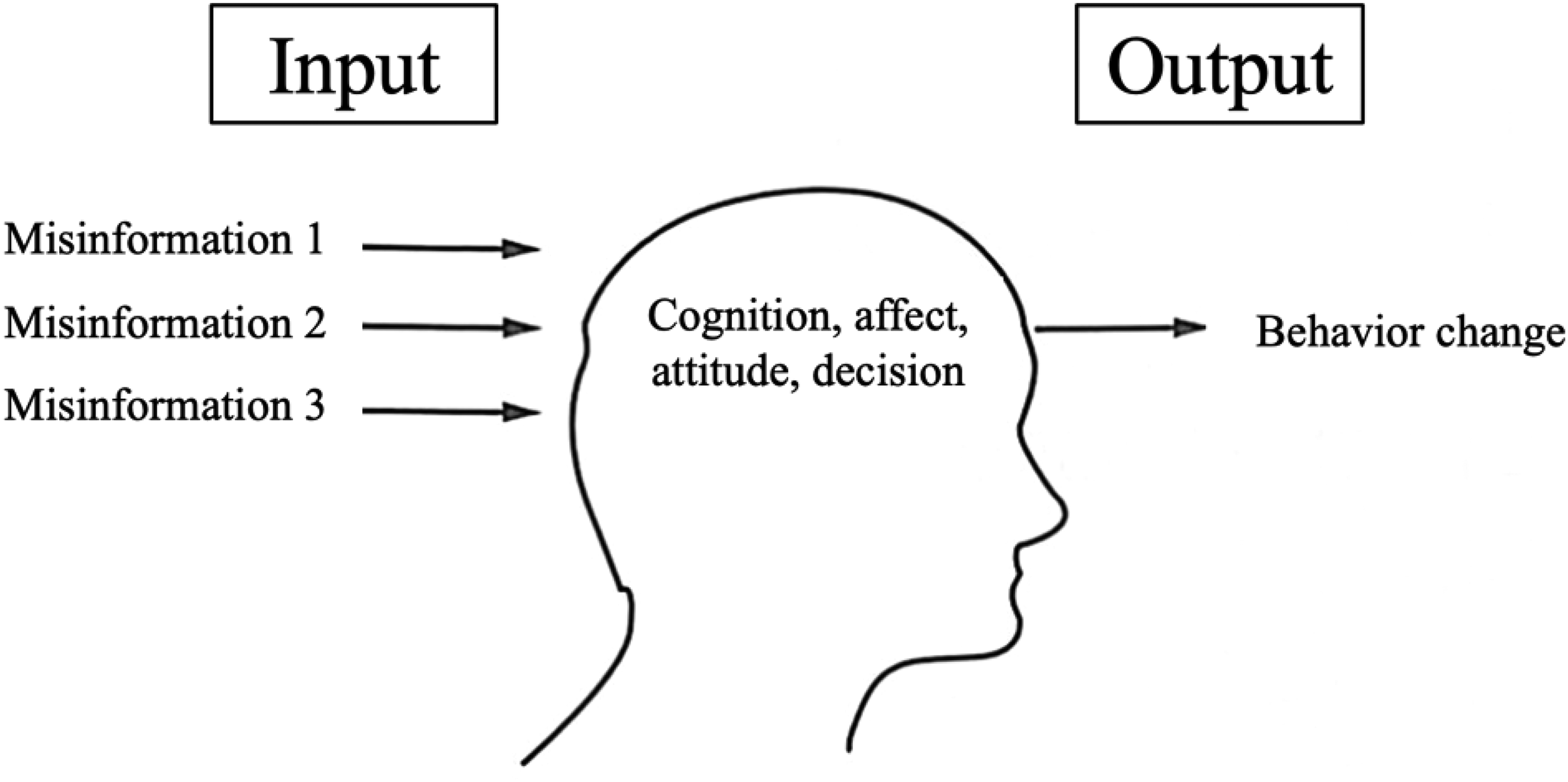

Micro-level misinformation research emphasizes how people form false and unsubstantiated beliefs. According to Nyhan and Reifler (2010), misinformation refers to a situation where “people's beliefs about factual matters are not supported by clear evidence and expert opinion” (p. 305). This definition opens the formation of such beliefs to examination from psychological perspectives. Accordingly, a general pathway is as follows: individuals exposed to misinformation first evaluate or assess the information, during which rational thinking and heuristics may intertwine and emotions enter the decision process; consequently, attitudinal changes and decision biases occur, and a behavioral decision is made (see Figure 2) (Chu-Ke, 2022). Amazeen (2023) recently proposed a misinformation recognition and response model that treats the reception process in considerable detail. For instance, problem identification and issue motivation are important prerequisites for activating misinformation recognition, after which individuals may adopt different cognitive coping strategies and display corresponding responses.

Figure 2. Misinformation and individual information processing.

Psychological decision theories on the role of heuristics may shed light on individuals’ reception and formation of misinformation. As behavioral decision theory suggests, humans use heuristics to simplify decision-making under uncertainty. Three heuristics often used to assess the probabilities and values of uncertain information are the representativeness heuristic (i.e. judging probability by the extent to which an object or event is representative of existing knowledge or stereotypes), the availability heuristic (i.e. judging probability by how readily instances or occurrences of an object or event can be brought to mind), and the adjustment and anchoring heuristic (i.e. producing estimates biased toward initial values that are either provided by others or produced by insufficient computation) (Slovic et al., 1977; Tversky & Kahneman, 1974). Although these judgmental heuristics are economical and sometimes beneficial (Bílek et al., 2018), they can lead to serious cognitive biases and facilitate the reception of political and health misinformation among citizens (Dancey & Sheagley, 2013; Hwang & Jeong, 2023).

Emotions may bias how individuals identify misinformation and may motivate them to spread it. According to a review of research on emotion and decision-making by Lerner et al. (2015), emotions, both incidental and integral, can “powerfully, predictably, and pervasively influence decision-making” (p. 802) by shaping the content of thought, depth of thought, goal activation, and so on. Anger, specifically, is associated with approach motivation (Harmon-Jones & Sigelman, 2001) and, together with counterarguments, can lead people to resist otherwise beneficial advocacy and drive unwise decisions (Brehm, 1966; Rosenberg & Siegel, 2018). A systematic review of the association between emotions and misinformation concluded that moderate levels of stress and short-term distress can decrease susceptibility to misinformation, whereas anger may increase it (Sharma et al., 2023).

Misinformation and vulnerable groups

Empirical research suggests that senior citizens are susceptible to misinformation. In their study of political fake news articles presented as advertisements on Facebook, Loos and Nijenhuis (2020) found that older adults were more likely than younger adults to be exposed to such articles. Yousuf et al. (2021) also found that senior citizens in the Netherlands generally believed misinformation related to COVID-19 vaccines. Brashier and Schacter (2020) summarized several reasons for the positive relationship between age and susceptibility to misinformation: senior citizens may have greater misplaced trust, more difficulty detecting misinformation, and less emphasis on accuracy when consuming information, all of which facilitate their reception of misinformation. In a cross-national survey, Jo et al. (2022) found that senior citizens are less likely to check suspicious content. These tendencies may also be related to senior citizens’ digital literacy: some are newcomers to the Internet and have insufficient ability to detect sponsored or manipulated content. In addition, the reception of misinformation among senior citizens can be attributed to both media exposure and social influence. For instance, family members and friends who are exposed to misinformation can actively relay it to senior citizens (Chia et al., 2023).

In addition to senior citizens, adolescents are also at risk of falling victim to misinformation. Baumel et al. (2021) suggest that adolescents may be frequently exposed to misinformation and “at a substantial risk of misinformation” because of their deep engagement with social media (p. 1021). Individuals with certain political stances can also be vulnerable. For instance, a conservative political orientation was found to be associated with higher acceptance of COVID-19 misinformation than a liberal orientation (Xiao, 2022). In communication about stigmatized health topics, stigma-aware individuals have been found to avoid information, which encourages belief in misinformation (Dong et al., 2024). Misinformation acceptance may also depend on information-processing styles and states. Those who experienced message fatigue and information overload showed greater acceptance of COVID-19 misinformation via increased information avoidance (Hwang et al., 2022; Tandoc & Kim, 2022). Among those who engaged in heuristic processing, the effect of exposure to COVID-19 misinformation on its acceptance was greater (Hwang & Jeong, 2023).

A case analysis of misinformation: pesticides into the air via aerial spraying

The emission of pesticides into the air via aerial spraying once played an important role in preventing the spread of diseases and insect infestations in many parts of the world. In May 2016, a piece of misinformation about “the emission of pesticides into the air via aerial spraying for the control of fall webworms” began to circulate in group chats on WeChat, the most popular social media platform in China. The message told citizens that the local Forestry and Park Bureau planned to use aircraft to spray pesticides to prevent and control fall webworms and advised them to avoid outdoor activities during the spraying period. The misinformation spread rapidly from the east of the Chinese mainland across the whole Chinese mainland and to the Taiwan area, drawing the attention of mainstream media and the local government. Media coverage followed soon after the misinformation went viral and confirmed that the local government would not release pesticides into the air via aerial spraying. Every year, similar misinformation about “the emission of pesticides into the air via aerial spraying” goes viral on Chinese social media, followed by corrections.

First, this misinformation relies heavily on the authority heuristic. By citing either the Forestry and Park Bureau or the Department of Agriculture as its (unfounded) source, the misinformation attracted public attention and, via heuristic processing, significantly increased its credibility. Second, over time the content of the misinformation evolved and became inextricably interwoven with trending social events. In 2019, the misinformation on “the emission of pesticides into the air via aerial spraying for the control of fall webworms” included more details than in 2016, such as linking outbreaks of fall webworms to swine fever. Since 2020, the misinformation has combined pesticide-spraying rumors with COVID-19 information.

Senior citizens have been found to play an important role in spreading and accepting this misinformation. In the eight counties and cities in the Taiwan area where the misinformation about “the emission of pesticides into the air via aerial spraying” gained the greatest popularity, senior citizens account for over 14% of the local population. Because pesticide misinformation is closely tied to people’s health and environment, it may draw considerable attention from senior citizens and raise their rumor anxiety, which in turn increases the likelihood of spreading misinformation (He et al., 2019).

Misinformation and Literacies in the Age of Generative AI

Generative AI and misinformation

The debate about AI flooding the public sphere can be traced back to social bots and deepfakes. Social bots, “a computer algorithm that automatically produces content and interacts with humans on social media, trying to emulate and possibly alter their behavior” (Ferrara et al., 2016, p. 96), have been criticized for posing great risks to the digital environment and to society. They amplify interactions with low-credibility content and help circulate it to influential users so that it can go viral (Shao et al., 2018; Shu et al., 2020). Networks of bots help diffuse misinformation and fake news (Miró-Llinares & Aguerri, 2023). Deepfakes are another contested generative AI technique that has been exploited to create fake media. In a study of discussions about deepfakes in Reddit communities, Gamage et al. (2022) revealed that niche social media platforms such as Reddit serve as an incubator for deepfake generators and creators, particularly for expert users who support the creation of customized pornography.

Generative AI is defined as “AI based on advanced neural networks with the ability to produce highly realistic synthetic text, images, video and audio (including fictional stories, poems, and programming code)” (Galaz et al., 2023, p. 5). Such systems are characterized by increased accessibility (i.e. being easily available), sophistication (i.e. the capacity to create longer pieces of synthetic text, video, and voice from specific prompts), and persuasive capability (i.e. the capacity to elaborate content persuasively). With these characteristics, generative AI can now help create and spread false yet seemingly credible information quickly and effortlessly (Zhou et al., 2023). For instance, ChatGPT, a chatbot developed by OpenAI and launched on 30 November 2022, has been found to produce convincing text containing conspiracy theories and misleading narratives (Hsu & Thompson, 2023) and to manufacture fake news articles that claim trusted news organizations and journalists as their source (Moran, 2023). Moreover, because generative AI is easy to access and requires minimal programming knowledge, users who originally deploy it as an assistive technology can unintentionally become creators and spreaders of misinformation.

Given the capabilities of generative AI and its potential impact on misinformation, three dimensions need to be considered for the development of ethical AI and the ethical use of AI in counteracting misinformation. The first two concern AI practitioners and legislation; the third, at the user end, is discussed with a focus on its implications for literacy programs and communication studies.

Making ethical AI: regulations from inside and outside

Ethical AI for combating misinformation requires efforts from AI practitioners, especially those who design and develop models, platforms, and APIs. First, transparent AI development practices should be encouraged. It is critical that AI practitioners, platforms, and organizations come together to create a culture that nurtures collaboration and openness. Specifically, AI algorithms, data, and methodologies should be openly accessible, within ethical bounds, to promote transparency and collective understanding. Moreover, it is important to deploy interpretable models and explainable user interfaces. With greater interpretability and explainability, users can better understand how generative AI arrives at specific decisions and predictions and can detect potential falsehoods. Second, there is a need for user guidance and for monitoring of misuse (Zhou et al., 2023). Although ChatGPT currently notifies users that “ChatGPT can make mistakes. Consider checking important information” at the bottom of its interface, such a warning cue may not be salient enough to raise users’ awareness of potentially flawed information. Cues that appear right after a copy-and-paste action may also help remind users to fact-check when necessary. To help users verify information, such interfaces could also provide links to fact-checking websites, accredited news organizations, and relevant government agencies.

Ethical AI for combating misinformation also entails legal intervention. On December 8, 2023, negotiators from the European Parliament and the Council reached a provisional agreement on the Artificial Intelligence Act (i.e. the “AI Act”). It is the first comprehensive law on AI by a major regulatory body, aiming to “ensure that fundamental rights, democracy, the rule of law and environmental sustainability are protected from high risk AI, while boosting innovation and making Europe a leader in the field” (Artificial Intelligence Act, 2023). Although the AI Act does not directly target misinformation, its provisions on prohibited AI practices and its requirements for high-risk AI can contribute to the development and deployment of more reliable AI systems, which in turn helps combat misinformation. For instance, in line with the interpretability and explainability discussed above, the AI Act emphasizes the right of individuals who are subject to decisions produced by high-risk AI that adversely affect their health, safety, etc., to request from the AI deployer clear and meaningful explanations of the role the AI system plays in the decision-making process. Some general-purpose models that are often used to produce misinformation and disinformation, such as generative models that create images, code, and video, are also regulated accordingly (Gibney, 2024). The Act also establishes enforcement mechanisms and penalties for non-compliance with provisions on data governance, transparency obligations, monitoring, and surveillance.

Promoting ethical use of AI: constructing AI literacies

Faced with a changing media landscape and pervasive misinformation, scholars have conceptualized and investigated a variety of literacies. For instance, Khan and Idris (2019) contribute to the conceptualization of information literacy by specifying information-seeking, sharing, and verification skills. Specifically, information verification skills refer to the capacity to verify online information using online tools and to use search engines. Carmi et al. (2020) argued for the significance of data literacies in an increasingly datafied society. They emphasized what data literacies encompass beyond earlier literacies, e.g. networked literacies, critical thinking about the online ecosystem, and literacies that support active citizenship, to help citizens identify and combat the misinformation crisis. In light of the development of generative AI, Long and Magerko (2020) further devised a conceptual framework for AI literacy composed of five overarching themes:

Here, we engage in a dialogue with Livingstone’s (2004) conceptualization of literacy and explore a few points that may offer directions for future literacy education and research on AI-fueled misinformation. According to Livingstone (2004), literacy is rooted in the historically and culturally conditioned relationship among three processes:

Conclusion

Misinformation is a social practice and challenge that demands our substantial attention. With the proliferation of falsehoods, the erosion of trust and deepening societal divisions are inevitable and undesirable consequences. Misinformation research is therefore not only a scholarly investigation but also a social inquiry. In this essay, we briefly reviewed theoretical advancements and empirical evidence regarding misinformation. We suggest that the definition and study of misinformation can vary across disciplines and regions owing to differences in research orientations, methodologies, and cultural contexts. Macro-level misinformation research primarily addresses the media bias and institutional inadequacies that facilitate the information crisis, whereas micro-level studies mainly examine the (conditioned) process of individuals’ reception and formation of misinformation. We also discussed populations that are particularly vulnerable to misinformation and a case of misinformation about “pesticides into the air via aerial spraying.”

In the age of generative AI, misinformation, and the AI literacy needed to counter it, should be reconsidered. It is imperative that we pursue ethical AI development and ethical AI use to combat the rising tide of misinformation. The call for AI practitioners and lawmakers alike to embrace ethical guidelines and regulations has increasingly been made, and should continue to be. Furthermore, empowering users through AI literacy programs and communication research is necessary. We suggest that at least three aspects of AI literacy should be incorporated into current literacy education and research: (a) the understanding and critical evaluation of the knowledge, values, and cultures within which AI systems function, and their implications for the content they generate; (b) the strategic interpretation and proper use of AI-generated content; and (c) the utilization of feedback mechanisms to promote institutional management of AI power.


Acknowledgment

All authors have agreed to the submission and that the article is not currently being considered for publication by any other print or electronic journal.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.