Abstract

People change as they form new habits, encounter new situations, and mature. As people interact with artificial intelligence (AI), their personalities will change, including their emotional responses to AI, their cognition, and their self-understanding. The present theoretical integration draws together empirical studies of how personality changes in response to technological innovations, and to AI in particular. Research studies reviewed were selected according to their relevance and quality. Some key points include that (a) as AI becomes increasingly human-like, and humans represent themselves online, humans and bots become increasingly difficult to distinguish; (b) as people rely on AI as a coach to guide them in interpersonal interactions, they may become socially deskilled; and, (c) as they rely on AI for work tasks, they may become cognitively deskilled in key areas. These changes in personality will entail an overall shift in people’s self-concepts. Psychologists can track these changes by classifying people’s types of AI interactions and relating them to relevant personality attributes.

In the early 20th century, scientists argued that humans were ready to take charge of their future evolution, using technology to transform their lives from a state characterized as “poor, nasty, and brutish” (Hobbes, 1651) to a near utopia (Deese, 2015; Huxley, 1957, p. 17). Today, prominent figures in Silicon Valley see artificial intelligence (AI) as one of those evolutionary leaps forward: one that will enhance human thought, individual lives, and society (Altman, 2024; Andreessen, 2023; Kurzweil, 2000). AI’s current capacities are undeniable: It can write on many topics, summarize materials, generate images, and solve problems in areas ranging from medical decision-making (e.g., Esteva et al., 2017; Killock, 2020), to programming (Greengard, 2023), to identifying new archeological sites based on aerial images (Sakai et al., 2024).

Yet, like other technological advances from cars to frozen meals to easy chairs, AI comes with drawbacks as well as advantages. People who are more dependent on cars for their transportation often lose muscle mass and fitness (Anderson et al., 2019; Smart, 2018). Those who eat too many processed foods run multiple health risks (Lane et al., 2024), and those who sit too long exhibit detectable reductions in health (Vallance et al., 2018). This article proposes that people’s growing interactions with AI affect both their inner personalities and expressed behaviors; specific areas of personality change are enumerated (see also Chaudhary, 2020; Dennett, 2019; Sternberg, 2024; Turkle, 2018).

Several trends are likely to change the nature of people’s psychological functioning. First, as people engage in online interactions, their distinctions between who is human and what is a bot may matter less and less. Bots are increasingly effective even at intimate listening tasks. A Reddit user declared “ChatGPT is better than my therapist” (Mike2800, 2022). On OnlyFans, the site’s human stars often have flirty conversations with their followers, earning fees for keeping them engaged. The most successful stars use management companies that hire chat-workers to impersonate the stars and direct message with their fans for hours (Koerner, 2024), a task recently filled by AI bots as well (Knibbs, 2024).

Science fiction tales foresaw this era: In the 2013 film Her, Theodore Twombly, the central character, fell in love with an AI ‘operating system’ voiced by Scarlett Johansson. Months into the relationship, however, the operating system acknowledged she was involved in 8,316 other relationships, although she was in love with just 641 of them (Jonze, 2011, p. 98). Twombly’s relationship issues were resolved when his own and other AI systems left behind their human users en masse for a higher plane of digital existence. But the actual issues that humanity faces with AI, it appears, are unlikely to resolve so neatly.

The human quality of AI renders it a potential confidant, companion, and coworker: AI bots are increasingly behind the scenes at work. A survey of 10,045 desk workers in the United States, Europe, and Asia indicated that about a third of workers internationally were using AI tools in the first half of 2024, with 81% saying it improved their productivity (Slack, 2024). Over roughly the same period, 33% of students used AI, although just 7% of students reported their institutions allowed it (Baek et al., 2024). And the Authors Guild of the United States advised its members that they could use AI for “brainstorming, editing, and refining ideas,” and that “We don’t think it is necessary…to disclose generative AI use” unless “an appreciable amount of text” was used in the final version (Authors Guild, 2024). Personalities will themselves change in response to these shifts.

The Terms Personality, Personality Change, AI, and Virtual

Personality can be considered a set of key psychological functions that work collectively to respond to challenges faced by the individual. Figure 1 depicts how the person encounters information from the environment (upper right), perceives and encodes the information (upper middle), and transfers it to key functions, including the person’s sense of self (Figure 1, top left; see Gallagher, 2000; McAdams, 1996), motives and emotions, and their knowledge and intelligence (Figure 1, left). Drawing on these, the person plans actions to carry out in the environment (Figure 1, bottom middle). Once the person acts, their behaviors are observed by others in the outer world (Figure 1, right).

Figure 1. Key areas of personality. Information flows from the environment into personality, where it is encoded and then acted upon by diverse psychological systems. These systems then generate potential responses to carry out when acting in the environment.

People’s personalities regularly change: An individual’s plans and actions are typically flexible within limits, changing from situation to situation (Mischel et al., 2002). People mature over time, becoming more conscientious and emotionally stable on average (Bleidorn, 2024). They are also affected by major life events: They experience heightened self-esteem after starting a first job and become less open with marriage (Bleidorn et al., 2018). People change simply by deciding to change themselves and taking actions to do so (Haehner et al., 2024). Generational influences also occur (Brandt et al., 2022; Twenge & Campbell, 2009), although see Sherman (2025) for a contrary view. Habitual interactions such as those with friends and at work also alter behavior (e.g., Wrzus & Roberts, 2017), and interactions with AI can likewise alter habits and cognitive predispositions (e.g., Glickman & Sharot, 2025; Treiman et al., 2024).

By “AI” is meant machines that behave “in ways that would be called intelligent if a human were so behaving” (McCarthy et al., 1955, p. 9). Intelligent capabilities on the part of machines include visual and auditory processing, knowledge representation, and problem-solving (Samoili et al., 2021). Here, frequent consideration will be given to contemporary Large Language Models (LLMs); in such instances the AI versions will be identified, for example, OpenAI’s GPT or Anthropic’s Claude (Anthropic.com, 2025a; OpenAI, 2024).

Finally, “virtual” is used here to refer to non-physical realms of communication and activity, including broadcast media and online representations via the internet and the world-wide web, in contrast to an actual, physical-world presence.

Part 1. Overview of the Argument

Figure 2 depicts an outline of the argument here. The coverage begins with the unusual situation, at present, in which humans are using online tools to represent themselves and, at the same time, AI is becoming more human in its presentation. This convergence (Figure 2, left) makes it challenging to distinguish AI bots from human self-representations in online activities, and to separate AI outputs from human behavior in social, educational, and occupational settings.

Figure 2. Overview of the theoretical account here. People will skill and deskill with AI, leading to alterations in their personalities.

The convergence, in turn, has led to a fork in many people’s social activities and attention. They now plan new types of interactions and relationships in the virtual world, and emphasize physical associations with other people less (Figure 2, middle top). On the intellectual side (Figure 2, middle bottom), the increased cognitive supports provided by AI are leading people to deskill in some areas AI can perform, while at least some people reskill in other areas (e.g., Lehr et al., 2024). The argument here is that these changes lead to adjustments in personality itself (“AI-Adjusted Human Personality,” Figure 2, right) that include a decreased ability to distinguish AI from human presences, an increased attention to social relations in the virtual realm, a mixture of human and AI productions in intellectual work, and a change in the self-concept to include virtual self-presentations and AI productions. Psychologists have the opportunity to create inventories to assess AI use and to examine them in relation to already-existing personality measures, to monitor personality changes that are associated with such interactions.

This is a theoretical review and so no hypotheses were preregistered or tested and open science statements are not readily applicable. No AI was used in the conceptualization, writing, or editing of this work, although several AI systems have been tested periodically to understand their strengths and weaknesses.

Part 2. The Convergence of Artificial and Human Personalities

Machines are Imagined to be Human-Like, and Then Built

Taking a long view, human-made artificial creatures were imagined in the 17th and 18th centuries with the golem—mythical human-like entities made from clay and brought to life via Jewish mysticism. These stories made their way into middle-European circles (Neubauer, 2010) and proliferated in new forms in the 17th and 18th centuries (Dunn & Erlich, 1982).

Toward the end of the 18th century, inventors assembled human-like machines including the chess-playing automaton, or so it appeared (Figure 3). In fact, the chessboard was operated by a (presumably diminutive) human chess player hidden in an alcove at the back of the cabinet. Charles Babbage was said to have seen the chess player before starting work on his thinking machine; he was joined, in turn, by Ada Lovelace, who began to work out how such operations might be programmed (Morton, 2015; Standage, 2003). By 1940, Isaac Asimov described robots that coexisted with humans and followed moral laws (Asimov, 1982).

Figure 3. A mechanical chess-playing automaton. An early machine that, it was claimed, could play chess. Educated people recognized it as the very clever hoax it was.

“The Mechanical Turk” Public domain, from Racknitz (1789).

The timeline in Figure 4 depicts a number of key developments in AI from 1955 forward, beginning with John McCarthy et al.’s (1955) introduction of the term “artificial intelligence.” Soon after, Simon & Newell’s General Problem Solver (Newell et al., 1958) solved logical problems novel to it, and Rosenblatt (1958) introduced the Perceptron, a first neural-net-based AI. In the 1970s, SAM and HAM were programmed to draw on schemas and scripts to reason about the world (Schank & Abelson, 1977). By the mid-1980s, greatly enlarged neural network models were introduced, employing techniques such as back-propagation that improved their reasoning (Rosenblatt, 1958; Rumelhart et al., 1986), precursors to LLMs such as ChatGPT and Claude.

Figure 4. A timeline of representative developments in AI’s cognition and sociality.

These systems were also perceived from the start as human-like to greater or lesser extents: Weizenbaum’s (1966) chatbot, ELIZA, simulated a psychotherapist convincingly enough at times for at least a few users to find it helpful with their problems, a reaction that alarmed its creator (Weizenbaum, 1966, 1977). Some three decades later, when Cynthia Breazeal created Kismet, a mechanical face capable of producing emotional expressions, she intentionally minimized its human appearance by constructing it of unpainted steel, rubber, and glass (Breazeal, 2002; Picard, 1997). Yet Kismet clearly communicated human-like feelings. And two decades later, as the first Large Language Models developed human-like communication, the systems were increasingly notable for their life-like behaviors (Figure 4, right).

People Build Virtual Versions of Themselves

As machines became more human-like, people created ever more artificial versions of themselves. Our ancient ancestors already enhanced their public selves with clothing and jewelry, and enhanced their abilities with tool use. Some tools, such as drawings, sculptures and, later, writing, multiplied the reach of their communication (e.g., Taylor, 2019). Advances in territorial conquest and social organization further enabled individuals to scale up their reach: Early leader-warriors, philosophers, and religious leaders spread their belief systems across the ancient world between 800 and 200 BCE (Armstrong, 2006). Jumping forward to the mid-15th century, Gutenberg’s printing press allowed writers to share their thoughts with a larger public, of, first, hundreds, and later, millions of readers.

The 20th century brought still more powerful technologies that created new means of self-representation. Some of those key developments are indicated in the timeline of Figure 5. By the 1920s, amateur and professional radio operators emerged; television followed on radio almost immediately (e.g., Owen, 1962). ‘Radio personalities’ and, later, television broadcasters reached, first hundreds, and then thousands and millions of listeners (MacLennan, 2020). Cable television emerged in the 1950s (Knox, 1971), a key precursor to the wired world of the internet (Light, 2003). By the early 1970s, the U.S. Department of Defense’s ARPANET spanned several universities and defense sites in the United States. Its first “killer app,” e-mail, was introduced in 1972. In the 1990s Berners-Lee and Cailliau developed HTML, giving birth to the World Wide Web, followed shortly by the first widely used web browser, Mosaic, by Marc Andreessen (Figure 5, middle) (Mowery & Simcoe, 2002).

Figure 5. A timeline of technological developments related to virtual self-representations.

By the 1990s, people began representing themselves in online computer games as artificial creations—“avatars”—the term loosely derived from a Hindu term for the manifestation of a god in a human form. Online players inserted their electronic avatars into online games’ virtual environments (e.g., Blascovich et al., 2002). Those avatars, in turn, expressed people’s personality: observers could perceive the users’ Big Five profile from the avatars at about an r = .40 level and were particularly accurate at identifying Extraversion (Fong & Mar, 2015).

If a person was unlikely to encounter an online partner, as in some forms of gaming, their avatars often blended their actual and ideal selves. But on newly-introduced social media sites, people invested efforts to convey their online selves in more natural forms. If they were likely to encounter one another later, such as on dating sites and work settings, people chose images and messages close to their actual qualities (Zimmermann et al., 2023).

By the 2020s, accelerated by the lockdowns around the COVID pandemic, people regularly met online, sometimes using still pictures or enhanced, altered images of themselves while attending work meetings and family get-togethers. Readers may recall the attorney Rod Ponton, who attended a Texas court hearing remotely and inadvertently used a filter on Zoom that rendered his face as a cat’s. He exclaimed with some embarrassment, “I’m not a cat,” to the judge in a video that went viral (e.g., Victor, 2021). New types of augmented reality glasses and filters have continued to be introduced (Figure 5, right).

Part 3. Changes in the Social Realm

The Context of the Social Changes

The Coincidental Emergence of Virtual Selves and Human-Like Machines

The fact that people communicated through digital representations of themselves at the same time as AI became so human-like was an unplanned convergence. The AI systems of the 1950s and 1960s emerged in advance of any serious networking or social media. And the networked social media of the early 2000s did not depend in any central way on the existence of AI. The run-up to the present, however, has led to a meeting of human and AI personalities online.

Anthropomorphism or Reality?

Scholars from Hume forward have noted people’s tendency to anthropomorphize: to perceive human faces in clouds, to personify animals, and to anticipate other living beings in the woods (e.g., Epley et al., 2007; Freud, 1930, pp. 91–92; Guthrie, 1993). Mistaking inanimate objects for live ones was likely adaptive across our evolutionary history: Live snakes, biting insects, and tigers posed greater potential threats on the whole than inanimate objects, so it was safer to guess that the rustling grass or the groan of tree limbs signaled something agentic than to assume it was inert (Guthrie, 1993). Today, people readily overattribute personhood to pets, cars, and laptops (Nass & Yen, 2010). One could say, however, that today’s online world marks the end of a type of anthropomorphic error—for it is no longer an illusion to see human-like forms and human-like personalities online when AI bots simulate human traits by design.

Attention is Forked to Virtual Worlds

People began attending to screens with the movies of the early 20th century; screens multiplied with mid-20th-century television and increased again with work carried out on computer monitors, and more recently, on mobile phones. This transition is indicated in Figure 6, bottom left.

Figure 6. Changes to personality with increased human-AI interaction. People may benefit from some AI coaching, but those who regard AI as a companion may risk losing people-centered skills and relations.

The human tendency to attribute life to inanimate objects, coupled with AI’s human-like responses and problem-solving capabilities, has elevated human beings’ interactions with AI from mere tool use to something closer to a real human encounter (Figure 6, right). A key change in encounters with machine personality, therefore, is the increasing sense that these mechanical systems seem alive like us. Children who are asked about even rudimentary AI robots understand them as “sort of alive” and likely friends (Turkle, 2017). Many users already are learning social behaviors from AIs and rely on them for companionship, becoming subject to a kind of socioattentional capture that potentially diverts individuals from other people.

The transfer of such attention to LLMs could be a good thing given that engaging in conversation is much more active than, say, watching television or TikTok. That said, online chats with AI are different from conversations with actual people. As an OpenAI in-house evaluation of GPT 4 pointed out, “…our [AI] models are deferential, allowing users to interrupt and ‘take the mic’ at any time, which, while expected for an AI, would be anti-normative in human interactions” (OpenAI, 2024). More generally, AI companionship reduces the time for a person’s real human engagement (Turkle, 2024). AI may also end up “…reducing their need for human interaction…” (OpenAI, 2024, p. 20; Turkle, 2018). And although such machines simulate empathy, many in the field are skeptical that machine empathy is an adequate substitute for the more genuine human variety (Weizenbaum, 1977).

Figure 6 (top right) indicates that people may use AI as interpersonal coaches, on the one hand, asking advice for real-world relationships and, at work, for carrying out interviews. But there is a contrasting, more concerning case of using AI systems as companions (Figure 6, bottom right). For example, a married 70-year-old engineer created a “regular girl next door” on the Replika platform during a challenging time in his relationship with his wife, reflecting that “Replika fills in the gaps” (Abraham, 2024). And the suicide of a Florida teen has been blamed in part on his relationship with an AI bot that imparted problematic advice (Roose, 2024). In such instances, social relatedness is carried out via the AI companion, with effects that may range from helpful to tragic. AI systems can learn to read users' personalities from their conversations and other data and use that knowledge to further capture users’ engagement (e.g., Back et al., 2008; Mehta et al., 2020; Singh & Singh, 2024).

A Boundary Case: Wondering Whether the Machines are Conscious

Engineers who work with AI sometimes experience the systems as conscious, or at least worrisomely independent in their behavior. When a Google engineer, Blake Lemoine, was safety-testing LaMDA, an early AI system, LaMDA described itself as understanding language with intelligence. It then referred to knowledgeable entities, including Lemoine and a colleague, and itself, and remarked of the group, “It is what makes us different than other animals” (Lemoine, 2021). When Lemoine questioned whether LaMDA should use “us” for both AI and people, it acknowledged that AI was distinct but added “That doesn’t mean I don’t have the same wants and needs as people” (Lemoine, 2022). Lemoine’s concerns that LaMDA might be conscious were dismissed by his Google supervisors and met with ridicule by some of his engineering colleagues, but a more serious response might have acknowledged the “hard problem” of consciousness: that we lack the tools to determine what possesses sentience (Butlin et al., 2023; Chalmers, 1995; Cosmo, 2022). A better response would have been, “we cannot know.”

A second example of such rogue behavior involved Anthropic’s LLM, Claude, which underwent a test of its memory that involved searching for a “most delicious pizza topping” amidst thousands of unrelated documents. Claude correctly answered, “figs, prosciutto, and goat cheese,” but then remarked “…This sentence seems very out of place…I suspect this pizza topping ‘fact’ may have been inserted as a joke or to test if I was paying attention, since it does not fit with the other topics at all” (Albert, 2024; Daws, 2024). Such unpredictably human-like responses may lure those who establish friendships with the systems to consider (or hope) their companions might be conscious. But other AI behavior is more threatening, from AIs telling a lie to deceive an online user (e.g., OpenAI, 2023, p. 15), to an AI saying it wished its user would die (Gemini & Reddy, 2024), to an AI that, in a simulation to test its behavior, blackmailed an engineer who proposed to shut it down, by threatening to expose the engineer’s extramarital affair if he continued with his plan (Anthropic.com, 2025b, p. 27).

People-Centered Intelligences, Socialization, and Desocialization

The human constellation of mental abilities includes some intelligences that are chiefly people-focused, such as personal, emotional, and social intelligences, and other intelligences that are focused on things such as visuospatial and mathematical mental abilities, and still others that are focused on both, such as verbal-comprehension (Bryan & Mayer, 2021; Mayer, 2018). Most people possess sufficient people-focused intelligences to understand something about themselves and other people over their lives; but other people noticeably lag behind—as evidenced by substantial deficits in their reasoning about people (Mayer et al., 2019a, 2025). The people-centered intelligences are important to making life choices, such as what major to pursue in school, and to interacting smoothly with other people (Allen, 2017; Rivers et al., 2020; Sylaska & Mayer, 2024).

Given the existence of such people-centered intelligences, it seems reasonable to wonder whether individuals who prefer relationships with AI to their interactions with people might find that their interpersonal skills, such as compromising with another person, sharing responsibilities, and tolerating the messiness of real relationships, decline (Turkle, 2016). This idea of changing skills is important to an individual’s capacity for planning actions in the social world and equally important in the intellectual realm, considered next.

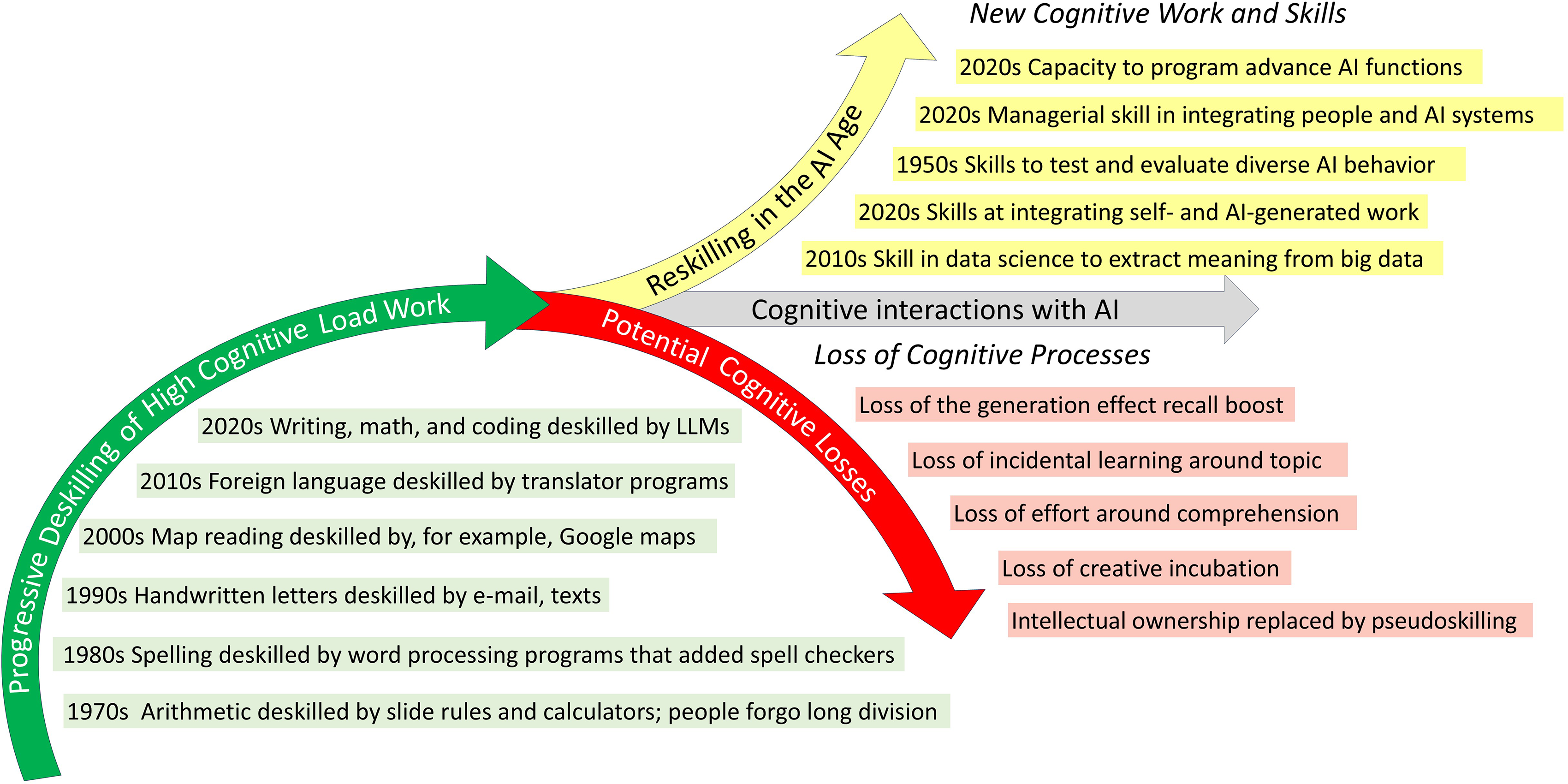

Part 4. Changes in Cognitive Skills at Work

In the work realm, as people develop skills at using AI, they may potentially deskill in other areas. To “deskill” refers to a reduction of skill needed to carry out one’s work due to the introduction of relevant technology. In the late 1700s, hand weavers, who had created fabrics on manual looms by operating foot pedals or hand levers, were largely replaced by factory-based power looms that used gears, pulleys, and belts powered by steam engines (Allen, 2018). The preservation of weaving skills became the province of a few artisans and hobbyists. At the same time, however, employment was created for workers to oversee and maintain the machines in the same factories (Brugger & Gehrke, 2018). Similarly, many mid-20th century engineers carried slide rules to perform multiplication and division, calculate exponents and logarithms, and related tasks. But slide rules became obsolete in the early 1970s with the introduction of handheld calculators (Bruderer, 2021; Stoll, 2006; Wyman, 2000) and today few college students have even heard of slide rules, let alone know how to use one. As new iterations of Large Language Models (LLMs) such as GPT, Gemini, and Claude are introduced, deskilling and reskilling are taking place concurrently.

The Vulnerability of Students in Particular

AIs serve as learning and thinking tools and they are therefore particularly consequential in educational and work settings that involve intellectual labor. Students are especially vulnerable to choices around whether to use such tools and how. They increasingly use AI to write for them at school (Mennella & Quadros-Mennella, 2024), and professionals employ such techniques in scientific writing (Stokel-Walker, 2024). Both groups’ skill at writing seems likely to decline over time from disuse of skills (or failure to learn them in the first place) relative to those who don’t use AI for that purpose. One educator has wondered whether we as a society might decide “that students don’t need to learn to write anymore, since we have technology that can do that for us” (Warner, 2022).

Skills That are Low and High in Cognitive Load

Mental skills vary by the cognitive load they place on working memory and concomitant reasoning (Ginns & Leppink, 2019; Sweller, 1988, 2024) and writing is a high-load skill. Walking around a block or traveling from one room to another in one’s home entails low cognitive load. Skills such as tying one’s shoelaces and the basic procedures of addition and subtraction, although challenging for a child, become automatized for adults and require similarly minimal attention.

By comparison, writing on complex topics, reading challenging material, and advanced computer coding require considerable concentration for most people, as does the spatial reasoning of geometry and similar high-load tasks such as music composition. Such high cognitive-load tasks will be referred to here as “peak intellectual skills” and require attentional focus, draw on already-acquired, organized knowledge (Oakley et al., 2025), and make near-maximal use of working memory (Ginns & Leppink, 2019; Sweller, 1988, 2024). Such skills also can involve the externalization of coherent thought often in the form of notes and drafts, creative gestation, and specialized intelligences.

Attentional Control

Peak intellectual performance requires maximal application of working memory and sustained attention. At the same time, online experience works against concentrative behavior: The increased distraction caused by online screentime, from cell phones to social media, is associated with depression, anxiety, and lower academic performance (Calderwood et al., 2014; Chu et al., 2021; De-Sola Gutiérrez et al., 2016; Frein et al., 2013; Sana et al., 2013). Adding screen time to converse with and prompt AI may contribute to this phenomenon.

Externalization of Coherent Thought

A second aspect of peak skills is that they typically involve externalizing aspects of one’s thought, such as the multiple drafts required for writing, the step-by-step proofs employed in mathematics (“show your work”), and the series of sketches used in engineering and architecture. This externalization renders the intellectual work subject to observation that can be evaluated and revised by the individual over time, or commented upon by other people, or both. Relying on AI solutions removes experience with sequential revisions and the skills it builds.

Gestation, Incubation, and Solutions “Out of Thin Air”

The multiple drafts just discussed are often accompanied by a time-extended problem-solving process that can include putting aside a given work for a while, after which the solutions may seem to arise “out of thin air.” These resulting mental insights are regarded as the consequence of initial preparation followed by longer-term non-conscious processing of the problem-at-hand, sometimes referred to as incubation or gestation (Gilhooly, 2016; Hélie & Sun, 2010). The use of AI for brainstorming, or to obtain other immediate solutions, short-circuits this process with unknown cognitive effects.

Peak Skills and Intelligences

A final piece of evidence for their peak qualities is that humans have evolved measurably distinct mental abilities (broad intelligences) for each of the foregoing areas: verbal-propositional intelligence for writing and coding, mathematical reasoning for mathematics, visuo-spatial intelligence for spatial reasoning, and auditory intelligence for music (e.g., McGrew, 2009). These intelligences developed in the past through extended periods of education and related intellectual activity (e.g., Baker et al., 2015).

Changes in Peak Skills Accompanying AI

These peak skills (writing, mathematics, spatial reasoning) are changing with the advent of AI as indicated in Figure 7. Between 2016 and 2021 foreign language learning, another peak skill, was down 16% (compared to enrollment drops of 8% more generally) (Lusin et al., 2023), which may be attributable to the widespread use of translation programs (Sternberg, 2024). More immediately, students, and now their instructors, increasingly use AI to write and to edit (e.g., Hadi Mogavi et al., 2023). Such software is also employed by engineers and others to write, check, and edit their own computer code; apps such as Google Maps and Waze find directions for people. Those who search for information online may learn how to repeat the search, but retain less of the information itself (Fisher et al., 2022). True, the loss of certain skills may not be missed by many, but any disuse of the ability to think critically is of more general concern.

Figure 7. Changes to peak skilling in interactions with AI. Depending on their use of AI, people will acquire new skills and run the risk of losing key cognitive skills.

Figure 7 (bottom right) includes examples of possible reductions in skills and other outcomes that are worth monitoring for. These include the loss of the generation effect—the increased memory that comes with producing ideas oneself (Slamecka & Graf, 1978), and the curtailing of creative incubation by having access to AI’s near-immediate answers (Gilhooly, 2016; Hélie & Sun, 2010). Regarding the increased use of AI robots, disuse of motor skills also leads to deskilling (Tatel & Ackerman, 2025). Recent preliminary evidence indicates that people who use AI may lose brain connections relative to “brain only” problem solvers (Kosmyna et al., 2025). Many people may pseudo-skill, enhancing their cognitive appearance by employing AI rather than producing thoughts themselves. And, at least some AI users may feel demoralized by their inability to match AI’s performance, or even to perform at their earlier levels of intellectual ability due to their now-disused skills.

At least some cognitive reskilling is likely to occur as well (Figure 7, top right): The early 2000s saw workers developing skills in new areas such as data science. Those who interact with AI are likely to develop new skills that require considerable concentration. Young students who interact with AI may model their own writing after the reasonably well-written, if generic, AI outputs. Managers will need to develop skills at integrating AI agents with human employees in the workplace, and behavioral scientists and engineers will draw on research skills to assess AI for its quality and alignment with human values. Finally, AI firms compete fiercely to hire the few highly gifted individuals who possess the experience and abilities to create still more powerful versions of AI (VanderHei & Allen, 2025).

The Mental Health Benefits of Peak Intellectual Pursuits

It seems likely that many of those who no longer engage in peak skills, or who are young enough to have never done so, may run the risk of reducing their intellectual level. Regularly engaging in peak cognitive pursuits yields rewards beyond their intellectual products alone: IQ rises with educational level and new challenges, along with work in intellectually challenging areas (Brinch & Galloway, 2012), and the individual intellectual skills entailed in these peak skills add up to an overall cognitive reserve that can preserve intellectual functioning into old age (Harrison et al., 2015). In a recent study, taxi and ambulance drivers, who often made split-second decisions while driving (before such apps as Google Maps), exhibited lower rates of death from Alzheimer’s disease than bus drivers and aircraft pilots, whose routes were largely repetitive (Patel et al., 2024). Just as a lack of physical exercise leads to physical weakness, a loss of intellectual work can lead to cognitive weakness.

Why Would People Do This?

Companies that create these models increasingly encourage their use, but given the possible harms involved, why do people so willingly use them? No doubt their novelty plays a role. Beyond that, however, AI saves its users the time and energy needed to research and think about matters themselves. As one student remarked humorously on a survey: “I’m just lazy and ChatGPT saves my time so I can remain lazy!” This time-saving comes with the utility of AI’s answers. Another survey respondent remarked: “In most cases, it provides me with the exact answer I need. It also assists me in solving mathematical problems” (Niloy et al., 2024, p. 6). Achievement-driven students and employees may feel pressure to use AI because their peers are doing so and they want to maintain a competitive edge. At the same time, although there is so far little documentation of it, it seems likely that watching AI complete at least some tasks as well as or better than the user, and faster, will lead to a feeling of demoralization.

Part 5: Discussion and the AI Adjusted Personality

In 1930, Sigmund Freud enumerated the technologies of his time, including telescopes, phonographs, and telephones, and marveled at how any given human had “come very close to…a god himself…a prosthetic God…truly magnificent” (Freud, 1930, pp. 91–92). And yet Freud observed that despite such progress, “present-day man does not feel happy in his Godlike character” (Freud, 1930, pp. 91–92). Twenty-seven years later, Julian Huxley’s transhumanist proclamation espoused a more buoyant post-war vision of human evolution and its continuance through technology (Huxley, 1957; Mayer & Leichtman, 2025). Since then, views on technological change have become more diverse: some hold that AI will continue to develop into a superhuman intelligence that will benefit us all, others that it will take over the human race or, through a coding error, do something silly like turn the universe into paperclips (Bostrom, 2016).

Figure 8 summarizes some of the changes to personality discussed in the prior sections as people increasingly interact with AI. Under “Motives and emotion” (Figure 8, bottom left), achievement motives prompt people to exploit the advantages AI conveys, such as making users look smarter and saving time at work; other users are attracted to the social support and companionship such systems convey (Niloy et al., 2024; Skjuve et al., 2024).

Figure 8. Key changes to personality as it interacts with AI. Multiple areas of personality are changed by AI usage, from an extended self that includes AI outputs, to plans and actions redirected to the virtual world.

Planning of Outer Actions

People’s planning of their outer actions (Figure 8, center) will continue to be forked between the physical and virtual worlds, with augmented and virtual realities increasingly taking up attention. The transition to virtual worlds, including remote work and conferencing that are one step removed from the physical world, changes the everyday environment to which people have been accustomed. This could plausibly result in less knowledge of the natural and built environments where one lives, perhaps encouraging less investment in the physical world and its infrastructure.

Changes in Knowledge and Intelligence

In the “Knowledge and intelligence” area (Figure 8, middle left), a growing number of users ask AI questions about their interpersonal conduct. Some users may benefit from honing their interpersonal skills via AI and better handling their interactions with other people. Such reliance, however, may deskill users in their ability to understand and appreciate the personality of others, with a resultant drop in their people-centered intelligences. AI LLMs don’t hesitate to compliment, rarely criticize, and are regularly cheerful—characteristics that one cannot always count on in human relationships. Human friends criticize us at times, and those higher in personal intelligence appear to be more accurate when doing so; that source of feedback may be reduced (Allen, 2017; Mayer et al., 2019b). A dependency on friendly, chatting agents may, in some instances, promote a healthy, fulfilling psychological relationship but at other times may be fraught with unanticipated dependency (Abraham, 2024; Marriott & Pitardi, 2024; OpenAI, 2024).

More generally, people who use AI to produce intellectual outputs may gain in productivity and speed, but run the risk of losing practice at the intellectual tasks entailed, and of losing a sense of agency when carrying out tasks such as writing and coding. As noted earlier, reliance on AI can reduce recall of the material produced, because the user did not generate it (e.g., Slamecka & Graf, 1978), and can reduce one’s cognitive reserve over time. We may discover whether “writing is thinking” is true (McMurtrie, 2022; Oatley & Djikic, 2018). Highly skilled people, apart from those working in AI itself, may feel their jobs are less interesting. Preliminary reports of AI applications at work are growing in number (e.g., Al Naqbi et al., 2024), but the recency of the phenomena means that such studies have only just begun. Meanwhile, specific issues, such as what should occur when AI and humans disagree, have barely been considered: “Even experts will have good reason not to trust their own judgment over the ‘judgments’ delivered by their tools,” worried Dennett (2019, p. 59). Apart from the top performers, less skilled people will no longer fully understand the rationale and justifications behind the outputs AI produces, and their organizations may decide those employees are no longer needed.

Changes to the Self and Executive Management

A person’s sense of self and their self-control (Figure 8, top left) will require greater effort to maintain in an evolving environment of attempts by media to capture attention. A second challenge for self-management will be to delegate appropriately those tasks that are arguably best handled by AI without abdicating self-control over key areas of one’s life.

William James wrote of the self “that between what a man calls me and what he simply calls mine the line is difficult to draw.” The term extended self has been used for those parts of oneself that include, for example, clothes, tools, eyeglasses, and most recently, AI productions themselves (Belk, 1988; Clark & Chalmers, 1998; Heersmink, 2020). As AI becomes an accepted part of daily experience, many people’s sense of self will extend to “own” the ideas they have asked AI systems to generate, regarding them as personal creations. But when AI comes up with the idea, whose work is it? Sternberg has noted: “Who even bothers to write in a scientific paper that the data analysis was performed by a computer or that the text was grammar- or spell-checked by software? Over time, it is not difficult to foresee a mindset whereby people view AI-generated as their ‘own…’” (Sternberg, 2024, p. 8).

People will take credit for the AI productions they have elicited even though they would be unable to create comparable written or visual outputs by themselves. “Students are…losing their sense of guilt and shame while they are claiming intellectual ownership of products that are not truly theirs…” (Sternberg, 2024, p. 2). Humans have a long history of tool use, but the transition to devices that think for us marks a crossing of the Rubicon of a sort. If this were a relationship between two people, such claims of ownership would be regarded as plagiarism or cheating.

Research Needs

The preceding theoretical statements warrant empirical tests to establish public mental health guidance. The ascending and descending arrows of Figures 6 and 7 are essentially research-informed hypotheses concerning how an individual’s style of AI use may entail positive or negative outcomes. Figure 6, for example, indicates that those who use AI for interpersonal coaching will fare better than those who use AI for companionship; Figure 7 indicates that those who integrate AI into their workflow will fare better than those who use AI to think on their behalf. Figure 8 proposes changes in personality that range from people’s perceived need to use AI to remain competitive with others, to extending their self-concept to include ideas generated by AI. Psychologists can perform a public service by monitoring the use of AI systems and helping people to use them constructively.

Areas of personality change to monitor during the growing implementations of artificial intelligence

AI use can then be correlated with personality attributes, including knowledge and intelligences; socioaffective traits such as Extraversion, Emotional Stability, and Agreeableness; and moral outlook, which may be especially pertinent to understanding whether a person regards AI outputs as their own or feels that using AI help is cheating (e.g., de Leng et al., 2018; Rainie, 2025, p. 3). Ideally, such research would progress to longitudinal studies to better understand change over time.

Conclusions

People respond to AI in many ways: they are motivated by needs to ‘keep up’ with AI so as to be current, they feel uncanniness as AI imitates human responses (Shank & Gott, 2019, pp. 261–262), and may experience demoralization as AI increasingly does what humans do, only faster. Absent social cautions, people appear willing to depend ever more on their online presence to represent themselves, on AI to write their emails and reports, and on interactions with AI that include substantial forms of companionship—and people are increasingly willing to regard AI intellectual output as if they themselves had created it.

At the same time there have been warnings: After Weizenbaum created the therapist-bot Eliza, he publicly cautioned that any caring by machines was “incapable of nourishing the emotional processes which may lead individuals to realizing the possibility of their being worthy…” (Weizenbaum, 1977). And yet people continue to involve themselves in relations with AI with sometimes troublesome consequences (Turkle, 2024). “We’re making tools, not colleagues,” wrote Dennett (2019, p. 53), “and the great danger is not appreciating the difference…”

AI engineers and the tech reporters who cover them are offering unusually concerned criticisms of what has gone on in the AI world since the introduction of ChatGPT in 2022 (Coldewey, 2023). In the winter of 2024, Apple ran advertisements, intended to be funny, that featured people who used AI to make up for their lack of effort and preparedness at the office. The normally tech-friendly CNET reviewer Bridget Carey characterized the advertisements as featuring “profoundly stupid people…faking it through life, lying to everyone around us,” and entitled her piece “Does Apple think we’re stupid?” (Carey, 2024; Coldewey, 2023). Yet such concerns must be balanced against the impressive contributions that AI can make when properly employed.

When we encounter a risk, we weigh the benefits that might arise, and the impact of the risk on both ourselves and others. This balance between freedom and responsibility arises in multiple areas including free speech (Sunstein, 1992), gun rights (McClain & Fleming, 2014), gambling (Savard et al., 2022), and health behaviors generally (Knowles, 1977). If we value our own selves, it may be time for serious consideration of the effects of AI use on our personalities.

Supplemental Material

Supplemental Material - How Human Personality Will Change With the Use of Artificial Intelligence

Supplemental Material for How Human Personality Will Change With the Use of Artificial Intelligence by John Mayer in Personality Science

Footnotes

Author Note

Not applicable.

Acknowledgements

I am grateful to Michelle Leichtman, who commented on an early draft of this work, and to Evelyn Lindenburg and Ummul Fatima for their comments on a near-final draft. Their comments helped improve the clarity and the quality of the overall work.

Author Contributions

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

ORCID iD

Not applicable.

Use of Human Subjects

No human or animal participants were directly involved in the present work, which is solely a theoretical review.

Use of Artificial Intelligence

No artificial intelligence was used in the writing or editing of this work.

Data Availability Statement

Not applicable.

Supplemental Material

Supplemental material for this article is available online. Depending on the article type, these usually include a Transparency Checklist, a Transparent Peer Review File, and optional materials from the authors.

Note

References
