Abstract

This article proposes an alternative analysis for the relationship between the sign maker and the sign in Multimodal Social Semiotics (MSS). A revised framework is required to ensure that MSS remains relevant in sociotechnical contexts where AI systems can create multimodal ensembles autonomously. When an automated intelligent system outputs a multimodal sign, no human sign maker’s intentionality and agency are directly involved in the process, and therefore the sign cannot be described as ‘motivated’. This raises significant conceptual problems for canonical MSS. However, this article argues that signs can still be classified as motivated even when they are created by autonomous AI systems. To accomplish this, though, it is first necessary to restructure the hierarchy that exists between the sign maker and the sign. In the reworked framework developed here, the intentionality and agency of the sign designer(s) shape and motivate the specification of the sign design, and this design is then realised by the sign makers (whether humans or autonomous intelligent systems) that create the sign. This modified hierarchy enables MSS-based analyses to incorporate automated intelligent systems as sign makers without destabilising core theoretical underpinnings of the theory.

Introduction

Over the last decade, Artificial Intelligence (AI) has become increasingly multimodal. While applications such as automatic speech recognition and automatic video captioning have always involved more than one mode (e.g., speech and writing; moving images and words), the emergence of digital media, online communications, and powerful Large Language Models (LLMs) has created numerous sociotechnical scenarios in which AI systems routinely generate multimodal ensembles. In different ways, internet memes, interactive online adverts that use video, speech, and music, and certain kinds of experimental performative e-literature are all illustrative examples of this general trend. It is no surprise, therefore, that a recent article in Forbes magazine identified ‘Multimodal AI Models’ as one of the five cutting-edge technology trends of 2024 (Janakiram, 2024).

Yet ‘multimodality’ is a complex phenomenon. In the analytical framework offered by Multimodal Social Semiotics (MSS), ‘modes’ such as writing, speech, image, music, and gesture are socially and culturally determined semiotic resources which can be deployed to create meanings in discourses; and the various modes all have different affordances – that is, particular potentialities and constraints that impact the making of signs in specific representations (Kress and Van Leeuwen, 2001: 21). Each set of modal affordances is determined by the ways in which meaning is created using the particular semiotic resources available – and the latter are inevitably characterised by their material, cultural, social, and historical development (e.g., Jewitt, 2014; Jewitt et al., 2016; Kress, 2010; Kress and Van Leeuwen, 1996; Kress and Van Leeuwen, 2001). Given this well-established analytical framework, multimodal ensembles can be viewed as representations that make use of more than one mode in a purposeful manner, to create collective and interrelated meanings. For instance, internet memes are inherently multimodal ‘relational entities’ since they combine texts and images, often for comedic purposes (Cannizzaro, 2016: 569; see also Chovanec and Tsakona, 2018; Vasquez and Aslan, 2021) (Figure 1).

Figure 1: Batman and Robin Meme (created by the author on 16/04/2024).

The Batman and Robin image originally appeared in the 1965 comic book ‘World’s Finest #153’ (Hamilton et al., 1965). Since 2008, it has been re-contextualised and transformed countless times as different people have added alternative texts to the speech bubbles. Consequently, decoding this particular meme requires not only some familiarity with Batman and Robin as figures in modern popular Western culture, but also some familiarity with the conventions surrounding the family of internet memes that share this image.

General LLMs like GPT-4o have been designed to take text and/or images as input and generate text and/or images as output. Consequently, while the multimodal capability of such models is currently limited (e.g., they have no capacity for haptic interaction), they are already more accurately labelled as Large Multimodal Models (LMMs) since they characteristically process more than one mode. The conspicuous multimodal turn in AI systems is fascinating in its own right, but it also has significant implications for MSS. This is because certain kinds of autonomous intelligent systems have the capacity to function generatively as sign makers in their own right. That is, they can create original meaningful outputs which use writing, speech, image, and so on. Yet, ever since the emergence of Social Semiotics (SS) in the 1970s and 1980s, leading proponents of the theory have assumed (often tacitly, and not unreasonably at the time) that sign makers are necessarily humans who operate in specific social and cultural contexts – and these assumptions have naturally been extended to MSS too. In Introducing Multimodality (2016), Carey Jewitt, Jeff Bezemer, and Kay L. O’Halloran describe the process of sign making as follows:

The sign maker’s interest leads to their choice of resources, seen as apt in the social context of sign production. Viewing signs as motivated and constantly being remade draws attention to the interests and intentions that motivate a person’s choice of one semiotic resource over another (Jewitt et al., 2016: 68).

As discussed at greater length later in this article, it is a core notion in (M)SS that an individual’s interest, intentions, and so on, motivate the semiotic resources they select. As a result, the signs they produce can be classified as ‘motivated’. This indicates one of the ways in which the analytical framework of (M)SS differs significantly from the semiotic framework influentially developed by Ferdinand de Saussure in the early twentieth century. More specifically, in the lectures that became the Cours de linguistique générale, Saussure argued that many signs are ‘radicalement arbitraire’, or ‘immotivé’ (i.e., unmotivated), while others (such as the compound ‘dix-neuf’) are less extremely arbitrary and are therefore ‘relativement motivé’ (i.e., relatively motivated) (Saussure, 1915/1972, 180-181). 1

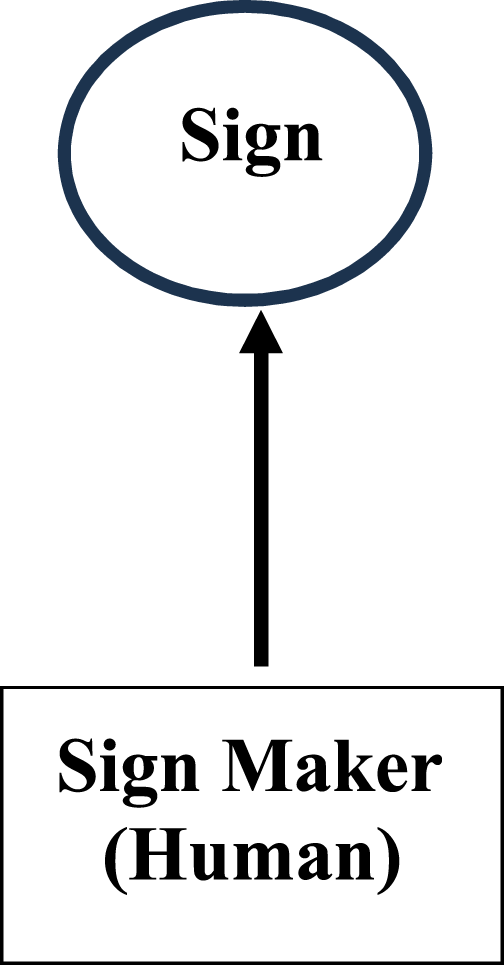

However, in (M)SS it is conventional to view signs as being entirely motivated because (as emphasised in the above passage) they arise ultimately from the ‘interests’ and ‘intentions’ of the sign maker – and this all makes good sense when we are considering signs fashioned by human beings. In schematic form, the presumed scenario can be simply represented as in Figure 2.

Figure 2: The sign maker and the sign.

But recent developments in generative AI have destabilised such analyses, such that the assumptions underlying (M)SS now need to be refined or extended to accommodate situations in which no human beings are directly involved in the sign making process. In such cases, it is no longer possible to refer directly to the ‘interests’, ‘intentions’, and ‘choices’ of the sign makers themselves, since LMMs and similar models do not currently possess these capacities in a human-like form. The present article examines this problem in detail and offers an alternative analysis that resolves some of the apparent difficulties.

Artificial intelligence and the making of signs

The influential transformer architecture introduced by Google in 2017, which makes extensive use of multihead attention, has enabled state-of-the-art generative AI systems to perform creative tasks that are increasingly multimodal (Vaswani et al., 2017). Although OpenAI’s GPT-3 text-based system, released in May 2020, was still monomodal (as are more recent LLMs such as DeepSeek), a significant advance was made in March 2023 when GPT-4(V) was released, with further developments occurring in May 2024 when ChatGPT-4o appeared. 2 The GPT-4-based systems are powerful general-purpose autoregressive pre-trained transformers. GPT-4(V) is estimated to contain around 2 trillion parameters, and the trained model was fine-tuned with Reinforcement Learning from Human Feedback (RLHF; OpenAI, 2023: 12). In December 2023 Google released its Gemini LMMs, which are decoder-only transformers that have been modified to allow efficient training and inference. They have a context length of 32,768 tokens and use multi-query attention. Two versions of Gemini Nano, Nano-1 (1.8 billion parameters) and Nano-2 (3.25 billion parameters), were created. Since all the models are multimodal, different kinds of input can be used (Gemini Team Google, 2024). In addition, various task-specific models have been designed for multimodal tasks that GPT-4-based and Gemini-based LMMs cannot accomplish. For instance, Runway’s Gen-2 takes text as input and outputs novel videos that contain text as well as moving images. 3

The semiotic implications of these technological developments have been apparent for some time. In the sub-field of social robotics, numerous philosophical and sociotechnical studies have considered how autonomous intelligent systems can create and reconfigure symbols in a communicative framework (e.g., Taniguchi et al., 2016); Stéphanie Walsh Matthews and Marcel Danesi have explored AI and semiotics from the philosophical vantage points of posthumanism and transhumanism (Matthews and Danesi, 2019); Kay L. O’Halloran has considered the role of semiosis in the digital age and its social implications for the current digital ecosystem, including AI (O’Halloran, 2024), while Tong King Lee has used a Barthian analytical framework to argue that multimodal semiotics offers a theoretically viable perspective on the global circulation of cultural artifacts by way of the concepts of memes, distribution, resemiotisation, and assemblage (Lee, 2024). However, none of these discussions reflects deeply upon the problems AI systems pose specifically for canonical (M)SS analyses of how signs are created by sign makers. To give one concrete (monomodal) example, here is a poem generated by GPT-4(V) in response to the prompt ‘Write a 4-line poem about multimodality’:

In words and images, we intertwine,
A dance of senses, deep and fine.
Where text and sound together play,
Creating meaning in a new array. 4

Even if we deem this text (with some justification) to be risible doggerel, it would be perverse to argue that it is meaningless simply because it was not written by a human sign maker who possessed interests, intentions, motivations, and so on. Yet, if we accept that this automatically generated poem is in some sense meaningful, then we need to think more carefully about the theoretical underpinnings of (M)SS.

Multimodal social semiotics

Having considered various approaches to analysing multimodal communication, Carey Jewitt, Jeff Bezemer, and Kay O’Halloran identified three core premises that characterise such research (Jewitt et al., 2016: 3):

1. Meaning is made with different semiotic resources, each offering distinct potentialities and limitations.
2. Meaning making involves the production of multimodal wholes.
3. If we want to study meaning, we need to attend to all semiotic resources being used to make a complete whole.

While various theories of multimodality have explored these premises, MSS has been especially influential, and it has deep roots in the work of Michael Halliday. It was Halliday who stressed that meaning should be considered in relation to ‘a sociocultural context, in which the culture itself is interpreted in semiotic terms’ (Halliday, 1978: 2). Crucially, semiosis is not something that occurs merely in minds; it arises from social practices in a community.

The Hallidayan framework that underlies MSS was presented in Robert Hodge and Gunther Kress’ Social Semiotics (1988), and it has been gradually developed and refined in many subsequent publications (e.g., Bezemer and Kress, 2015; Boria et al., 2020; Jewitt, 2014; Jewitt et al., 2016; Kress, 2010; Kress and Van Leeuwen, 2001; Kress and Van Leeuwen, 1996; Rocca et al., 2024; Van Leeuwen, 2005; Van Leeuwen, 2021). While necessarily drawing upon aspects of older semiotic theories, MSS remains a distinct approach. For instance, it does not make use of Peirce’s tripartite classification of signs into the sub-groups iconic, indexical, and symbolic; and unlike Saussurean semiology, it foregrounds the sign maker’s intentionality and agency in the making of signs and meaning (using the semiotic resources of different modes), which leads to the striking conclusion that, contra Saussure, the relationship between form and meaning is never entirely arbitrary (Kress, 2010, chapter 4). As Kress and others have stressed repeatedly, one of the underlying convictions that has guided MSS research from the very beginning is that multimodal interaction has always been the ‘normal state of human communication’ (Kress and Van Leeuwen, 2001: 21). Nonetheless, MSS offers a robust investigative framework that facilitates the analysis of such phenomena. In particular, it offers a way of conceptualising ensembles of modes – that is, representations that make use of more than one mode in a purposeful manner, to create collective and interrelated meanings. Ensembles are said to be ‘orchestrated’ by at least one meaning maker (Kress, 2010: 157-158), and an attentive consideration of the orchestration processes emphasises the extent to which monomodal communication is the exception rather than the norm.

In expository texts that outline the approach favoured by MSS, it is conventional to foreground the interest of the (human) sign maker, where ‘interest’ is a technical term that denotes ‘the momentary condensation of all the (relevant) social experiences that have shaped the sign maker’s subjectivity’ (Jewitt et al., 2016: 168). This overt emphasis on the central role of the subjectivity of the sign maker is problematical if we are considering multimodal ensembles created by automated intelligent systems such as LMMs. If ‘subjectivity’ is understood to refer to the ability to have personal opinions, feelings, and biases, which arise from personal experience or personal preferences, then LMMs do not (currently) possess these qualities directly, though they can certainly give the impression of possessing them. Similarly, they do not have ‘social experiences’, though they are trained on vast amounts of data that contains human-produced representations of multifarious social experiences. Therefore the ‘interest’ of such systems cannot be foregrounded in a direct manner. The outputs generated automatically are merely the result of patterns of tokens in the training data having been learnt in ways that were guided by parameter values chosen by the (human) system developers.

It is crucial to recognise this, since (as mentioned earlier) the importance of the social subjectivity of sign making is emphasised repeatedly in most classic MSS texts:

Signs are made in a specific environment according to the sign-maker’s need at the moment of sign-making, shaped by the interest of the maker of the sign in that environment. The environments and circumstances of ‘use’ are, therefore, always an absolutely integral part of (the making of) the sign: they are at the centre of the concerns of the theory. The signs are made as precise as it is possible to make them to realize the sign-maker’s meaning (Kress, 2010: 62).

It is unambiguous here that the ‘need’ and ‘interest’ of the sign-maker are fundamental to the task of shaping and making meaningful signs, yet it is never entirely clear precisely how such things relate to similar notions such as ‘communicative intention’ or Franz Brentano’s important notion of ‘intentionality’ (Brentano, 1874). In the vast literature on the philosophy of language and linguistics, the intentions of a given speaker – whether private, social, or communicative (to use Searle’s still influential sub-categories (Searle, 1983)) – are usually things that cannot be studied directly, at least not without additional surrounding interrogative discourses (e.g., ‘What exactly did you mean when you said X?’). Without such clarifying exchanges, an intention can usually only be inferred from a specific utterance; it is not overtly inherent in particular actions, statements, and so on. Yet, when dealing with human-produced discourse, the assumption is usually made (reasonably enough) that there was indeed an underlying intention. The relationship between intentionality and meaning raises complex ontological and metaphysical questions about the fundamental nature of mental states, and traditionally in the MSS literature the focus has been on human-centric interactions. This is why intentionality plays such an important role in theories of MSS, whether explicitly or implicitly, and especially when the notion of the motivated sign is considered. Recently, several researchers have reflected at greater length upon algorithmic creativity and whether automated systems can be classified as being genuinely creative, in a human-like sense, if they succeed in generating meaningful signs (e.g., Cetinic et al., 2022; Cropley et al., 2023; Runco, 2023).

To date, such debates have rarely been explicitly addressed by exponents of MSS. In the analytical framework Kress outlined in 2010, signs are ‘the expression of the interest of socially formed individuals who, with these signs, realize – give outward expression to – their meanings, using culturally available semiotic resources’ (Kress, 2010: 10). Once again, this suggests that the entities involved in meaning-generation are necessarily human and that the externalisation of the meanings is crucial when we consider interest. Later in the same text, in a section tellingly entitled ‘The Motivated Sign’, Kress re-emphasises the importance of the role of the sign maker in the process of meaning creation and explicitly indicates how (M)SS differs from other semiotic theories (especially those in the Saussurean tradition). As he puts it, ‘[a] social account of meaning based on the significance of the agency of individuals, is entirely at odds with a conception of an arbitrary relation of form and meaning, established and held in place by convention’ (Kress, 2010: 63). Statements such as this form part of the powerful critique of Saussurean semiotics he had developed since the early 1990s. For instance, in a 1993 journal article he had stated openly that ‘[a]ll signs are motivated in their relation of signifier to signified’ (Kress, 1993: 180), and in subsequent writings he explained in more detail the reasoning behind this stance and why it had prompted him to reject the mantra of ‘the arbitrariness of the sign’ which was one of the overly simplistic sound bites that the Structuralists had so gleefully extracted from Saussure’s Cours de linguistique générale (1915/1972). By contrast, in the MSS framework signs are usually considered to be the result of purposeful sign-making activity that involves the agency of the (human) sign-maker. 
Bob Hodge, who influentially co-authored Social Semiotics (1988) with Kress, has recently reflected at length upon the notion of the ‘motivated sign’ in Kress’ work, focusing in particular on his ‘complex, nuanced view of Saussure’ (Hodge, 2024: 459). Hodge identifies three fundamental premises that are central to the version of MSS that Kress developed, and one of these is that ‘[s]emiosis is held together by networks of links between form and meaning, language and reality, society and language, in which motivated signs play a fundamental role’ (Hodge, 2024: 465) – and this premise underlies all varieties of MSS developed by Kress and those who adopt the same core analytical framework as him.

It is apparent, then, that signs in MSS are considered to be motivated rather than the result of arbitrary conventions, and it is the (human) sign-maker who actively combines form and meaning:

In Social Semiotic theory, signs are made – not used – by the sign-maker who brings meaning into an apt conjunction with a form, a selection/choice shaped by the sign-maker’s interest. In the process of representation sign-makers remake concepts and ‘knowledge’ in a constant new shaping of the cultural resources for dealing with the social world (Kress, 2010: 62).

As this extract makes clear, the sign-making process is determined by choices that are guided by the sign-maker’s ‘interest’, and although such passages elucidate the epistemic foundations of the MSS theory, it is important to remember that these foundations were established decades before the current generation of powerful generative AI systems emerged. Later in the same text, in a section devoted to ‘Semiosis, meaning, and learning’, Kress states overtly that ‘[t]he conception of the motivated sign, with the interest and agency of the sign-maker evident in the shape of the sign, has direct implications for the assessment’ (Kress, 2010: 179). Indeed, like intentionality, agency plays a particularly important role in the process of sign-making, as Kress emphasises: ‘‘[m]aking’ implies a ‘maker’; hence agency is central’ (Kress, 2010: 107). In the vast philosophical literature on agency, this capacity is usually viewed as being possessed even if it is not being exercised or made manifest in some way at a given time, and any entity that possesses it is an agent (though they only play the role of an agent when they are actively exercising that capacity). Agency is often associated with certain features, dimensions, and implications (e.g., the actions performed by the agent are goal-directed and have an impact on the external world) and different kinds of agents are associated with different kinds of agency (Ferrero, 2022: 3–6; see also Hyman and Steward, 2004; Shoemaker, 2013-2024). For instance, following the convention popularised by J. David Velleman, humans are often described as possessing ‘full-blooded’ agency, while single-celled organisms and plants are ranked lower on the agency hierarchy (Velleman, 1992: 462). 
The precise nature of these differences has been considered extensively, and the analyses have focused on whether the various kinds differ due to specific dimensions or features, or whether all kinds possess the same dimensions and features but merely to differing extents. As mentioned above, complexities such as these are not normally addressed in detail when agency is discussed in MSS-based analyses, usually because it is tacitly assumed that only ‘full-blooded’ human agency is relevant to the analytical frameworks of MSS.

Theo van Leeuwen has recently written at length about the shifting role of agency in Kress’ theorising, emphasising that there was never a simple trajectory from asserting ‘the power of institutions’, including language, to ‘re-discovering the agency and the creativity of individuals and communities’ (Van Leeuwen, 2023: 17-18). Indeed, he has shown clearly that there was an abiding tension in Kress’ work between social determination and individual agency. Yet, despite the twists and turns in the layered complexities of Kress’ thought, van Leeuwen demonstrates that, from at least the 1990s onwards, (full-blooded) agency was frequently a crucial component of the varieties of MSS that his long-term colleague developed. And other theorists influenced by MSS (and by Kress specifically) have reformulated these ideas in similar ways, even if they do not always use exactly the same vocabulary. In her book Multimodal Communication (2019), for example, May Wong has emphasised that ‘social semiotics has been a social theory of meaning and communication in which semiotic resources with varying affordances are used as tools by sign makers for serving particular social needs required in a given social context’ (Wong, 2019). The language deployed here makes it clear that the contextualised social needs of the (human) sign maker are of fundamental importance, since the use of a tool usually requires full-blooded agency. More explicitly, Elisabetta Adami has recently affirmed that by focusing on the communicative practices of individuals, ‘social semiotics is particularly interested in sign- and meaning-makers’ agency in relation to broader social forces’ (Adami, 2023: 20).

These examples are sufficient to indicate that, as for intentionality, the centrality of agency in the work of Kress and his colleagues should not be underestimated. Yet this human- and society-based analysis has always been rather unstable, since, long before generative AI systems became multimodal, it was already recognised that different kinds of agency existed. Therefore, at the very least, the specific subtypes recognised as being sufficient by MSS-based analyses should be identified and enumerated. Inevitably, this existing weakness in the theory becomes only more apparent when the analyses focus on sociotechnical contexts in which autonomous AI systems (such as those described earlier) create multimodal ensembles without any direct human involvement or intervention.

Since at least the 1950s, a substantial literature has emerged that reflects upon whether AI systems can be accurately described as possessing intentionality and/or agency; and, if so, then what particular kinds they possess. Such questions have become even more difficult to answer recently with the emergence of multimodal LLMs. For instance, Dmytro Mykhailov and others have noted that the causal relation between AI system designers and the behaviour of the systems themselves has become increasingly and disconcertingly blurred (Mykhailov, 2022: 1). Nonetheless, the technological advances associated with the Fourth Industrial Revolution have prompted certain theorists to suggest that variants of intentionality and agency can indeed be possessed by non-human agents, especially when these qualities become entangled with human practices (e.g., Hepp, 2022). By contrast, though, other researchers insist that such qualities can only be perceived in automated systems without in fact being possessed by them (e.g., Stefani et al., 2018). Such complexities have prompted a profound re-evaluation of the way in which such issues are framed in theoretical discourses. Kostas Terzidis, Filippo Fabrocini, and Hyejin Lee have recently argued that if the analytical focus is directed towards the outcome of a creative process, rather than the process itself, then the intentionality of the entity that generated the outcome does not have any relevance (Terzidis et al., 2022). In other words, intentionality is not inherent in actions, but it can (to some extent) be inferred by assessing the consequences of those actions (including debates and discourses around those consequences), regardless of whether the actions are exclusively human-based or not. In this framework, it is the ‘intentio’ embedded in the output that is important, not the ‘intendere’ associated with the process (Terzidis et al., 2022: 1716). The authors also draw a useful distinction between macro- and micro-goals. 
Put simply, the macro-goal of an automated system is determined by the humans responsible for such things as devising the architecture and training the models (e.g., the system should translate from Mandarin into English), but the system itself autonomously decomposes the macro-goal into a set of micro-goals and then subsequently accomplishes them automatically (e.g., the specific English sentence generated as a translation of ‘你想去哪里?’). While Terzidis, Fabrocini, and Lee concentrate primarily on AI-generated artworks, the analytical framework they elaborate can clearly be applied to any situations in which multimodal ensembles are orchestrated by an automated system.

It is worth briefly considering these issues in more detail with specific reference to generative LMMs that use some kind of transformer-based architecture (Vaswani et al., 2017). As mentioned earlier, systems of this kind are trained on datasets consisting of vast numbers of words and/or images. Although for proprietary reasons companies such as OpenAI and Google refuse to give detailed information about the data used to train their LMMs, there is no doubt that the main text-based corpora contain trillions of words while the image-based corpora contain billions of images. Since most of this data has been generated by humans (i.e., it has been obtained from newspapers, novels, websites, and so on) the interests (in the technical sense) of countless individuals were involved in the creation of the texts and images, individually and/or collectively. The resulting patterns in these vast datasets are (to some extent) learnt by the LMMs, and this enables the fully trained model to produce responses that can be perceived as manifesting human-like socio-emotional qualities (such as empathy; see Concannon and Tomalin, 2023). As mentioned earlier, after this stage the fully trained LMMs are subsequently fine-tuned using RLHF, which enables thousands of humans (the precise number is not disclosed by the corporations) to provide feedback in the form of rank-ordered preference ratings of multiple system outputs to the same prompt (e.g., OpenAI, 2023: 2). In this way the socio-political sensibilities prioritised by the corporations and communicated to the humans participating in this stage influence the kinds of outputs the eventual fine-tuned model will generate. The purpose is to ensure, in a legally unregulated manner, that a given system’s responses conform to certain collective notions of social acceptability in the vocabulary used and the ideologies expressed. 
However, the RLHF stage results in glaring irregularities that are manifest in linguistic and ideological inconsistencies in the outputs produced. For instance, ChatGPT4 currently refuses to generate texts praising Nazis if the prompts are in English, but it generates them without question if the prompts are in Italian. 5 Finally, the way in which users interact with such systems has a significant influence on the kinds of responses the latter will generate. This has led to the phenomenon of ‘prompt engineering’ which involves the purposeful devising of instructions and inputs that guide the LMM to generate outputs of a specific kind. Consequently, in a variety of ways the interests of the users profoundly influence how an LMM responds.

Yet due care must be taken here since the strongly anthropomorphic language of AI-related discourse routinely blurs semantic boundaries. We have become used to hearing even software engineers say that a given automated system ‘responds’, ‘thinks’, ‘decides’, ‘chooses’, ‘prefers’, and so on. However, it is not clear what the degree of equivalence is between the algorithmic processes underlying the automated generation of output and the processes of responding, thinking, deciding, choosing, and preferring that we experience as human beings. Given the anthropocentric nature of standard expositions of (M)SS, any scenarios in which autonomous systems create multimodal ensembles apparently result in the generation of signs that are, technically, unmotivated – that is, not the direct result of the intentionality and agency of human sign makers working in specific social and cultural contexts. Consequently, if the (M)SS approach to communication is to remain fit for purpose in our modern digital societies, then the nature of the relationship between the sign makers (whether human or not) and the seemingly (un)motivated signs must be re-analysed for cases involving automated AI systems – and this is precisely the task that is undertaken in the next section.

Motivating unmotivated signs

Dmytro Mykhailov, mentioned above, has referred specifically to ‘computer intentionality’, arguing that this phenomenon is human-dependent, since the behaviour of an automated system ultimately depends on choices made by its human designer(s) and on the behaviour of its human users (Mykhailov, 2023: 117). However, it is crucial to recognise that human designers inevitably build some kind of intentionality into a given system, for instance, by fine-tuning it using RLHF to prevent the generation of offensive outputs. Therefore, the connection between human and computer intentionality is not straightforward – and in a generative AI system, computer intentionality can never be completely reduced to the intentionality of its designers (Mykhailov, 2023: 118), at least not at the level of the micro-goals. In a similar manner, agency has been increasingly reassessed in the context of AI-based systems. A few years ago, Pär Ågerfalk argued that AI can be viewed as possessing ‘digital agency’, emphasising that this ‘does not presuppose consciousness in the traditional sense’ (Ågerfalk, 2020: 5-6). Instead, the focus is upon the accountability of the social actions performed by automated systems – and other theorists have developed similar ideas using variant phrases such as ‘machine agency’ or ‘data-driven agency’. Yet Richmond H Thomason and John Horty have helpfully drawn attention to the divide between philosophical work on rational agency in relation to human cognitive architecture and research into agent architecture in relation to AI-based systems (Thomason and Horty, 2022). In general, though, the three properties most often associated with digital agency are (i) interactivity, (ii) autonomy, and (iii) adaptivity (Danielson, 1992, chapter 1; Allen and Wallach, 2000; Floridi, 2013, 138-148; see also Floridi and Sanders, 2004).

Systems which possess these properties can be classified as Artificial Agents (AAs), and as Moral Artificial Agents (MAAs) if their outputs are morally qualifiable (Floridi, 2013, chapter 7). For instance, if a GPT-based system were to output prose that enthusiastically advocated paedophilia as an acceptable practice, then the text would be morally qualifiable since it could potentially have damaging social consequences. This is precisely why RLHF is used to fine-tune the responses of GPT-based systems to reduce the risk of them generating inappropriate outputs (OpenAI, 2023: 2).

The fact that AI systems can be classified as MAAs has prompted extensive discussion amongst technology lawyers, legislators, and AI theorists keen to determine the extent to which autonomous systems can themselves be held accountable or responsible for the outputs they produce and/or the actions they take. While there are currently no generally accepted formal definitions of the terms ‘accountability’ and ‘responsibility’ in this domain, the stance of Floridi and his colleagues has been influential. In this framework, the following distinction is made (cf. Dignum, 2019: 53-4):

MAAs may be held accountable for the outputs they produce and the actions they perform, but, ultimately, humans are responsible for those outputs and actions. To return to the same concrete example, if a GPT-based system produced outputs that enthusiastically advocated paedophilia, then the system itself would be accountable for the texts it created (i.e., the outputs are derived from the training data, model parameters, and so on), and the underlying algorithms and models would need to be scrutinised carefully to determine why those outputs were produced (i.e., why specific micro-goals were selected). However, the human designers of the system would ultimately be responsible for the outputs, since they selected the training data and designed the system architecture (i.e., they chose and specified the macro-goal[s]). To shift to the language of (M)SS, the intentionality and agency of the human designers influenced their choices while designing and training the system, and these led in turn to the construction of an autonomous sign-making system that produced morally qualifiable outputs. The only real difficulty here is deciding which precise group of people should be deemed responsible: all 279 authors of OpenAI, 2023, or a subset of that group, or just the CEO of the company? This problem is a well-established one. As Helen Smith pertinently remarked several years ago: ‘there is a lack of formal clarification regarding who is responsible for the outcomes of [Autonomous Intelligent System] use’ (Smith, 2020, section 4). Nonetheless, resolving these uncertainties is not crucial for the discussion being developed here. The important point is that Floridi’s distinction between accountability and responsibility enables us to state that human designers involved in the creation of an AI system are ultimately responsible for its outputs.

Given all of this, it is evident that the (usually sole) human ‘sign maker’ who features in most MSS-based analyses (e.g., Figure 2) must be replaced by a more complex structure. Specifically, a multilayered hierarchy is needed that has the capacity to accommodate different sorts of entities (human and non-human) that have differing degrees and sub-types of intentionality and agency during the sign making process, and between which accountability and responsibility are distributed in contrasting ways. To this end, it is helpful to distinguish between two roles: the sign designer, who specifies the design of the sign, and the sign maker, who realises that design by creating the sign.

Even in social contexts that only involve humans, this distinction is a useful one. While in certain cases the sign designer and the sign maker will naturally be the same person (e.g., a specific painter who both plans and executes an oil painting – i.e., Figure 2), many other creative acts involve a clear separation of these two roles. For instance, in 2012 the artist Grayson Perry created a series of tapestries called ‘The Vanity of Small Differences’. He created the designs himself using photographs and drawings, but the actual tapestries (i.e., the physical objects) were produced by a Belgian company that used computer-operated machinery following the designs (Arts Council Collection, 2018). In this case, while Perry was undoubtedly the sign designer, the employees and machines at the company were the sign makers. When (human) sign designers outsource the practical task of actually making the sign to other people or entities, the motivation for the sign is transferred from the designers to the maker(s), either overtly or covertly, via the design itself as well as any other ancillary communications that may take place (e.g., emails, phone calls). More specifically, the intentionality of a given designer, which is at least partially manifest in the design of the sign, is realised in physical form by the various entities (whether human or non-human) involved in the practical process of sign making.

Before discussing this proposed hierarchy in more detail, it is worth clarifying how the notions of ‘full-blooded’ intentionality and agency are being handled here. As the summary of LMMs provided earlier indicated, the intentionality and agency of vast numbers of humans are manifest as word-based and image-based patterns in the LMM training data, as preferences in the feedback provided during the RLHF-based fine-tuning stage, and in the design of the prompts submitted. Therefore, as part of the training and fine-tuning stages, LMMs certainly learn patterns that have been produced by humans who possess full-blooded intentionality and agency. However, when the LMM is processing a specific prompt and generating an output in response to it, no full-blooded intentionality or agency is involved. This is because, as argued above, the system itself does not possess these qualities, and, in addition, there is currently no mechanism by which a human (who does possess full-blooded intentionality and agency) could influence the generation of the output, in real time, after the prompt has been submitted and before the system generates an output in response to it. Of course, subsequent human-based modifications are possible and indeed happen frequently (i.e., the LMM generates an output and a human subsequently post-processes it to improve it), but that is a different scenario entirely: as the prefix ‘post’ indicates, it happens after the output has been generated.

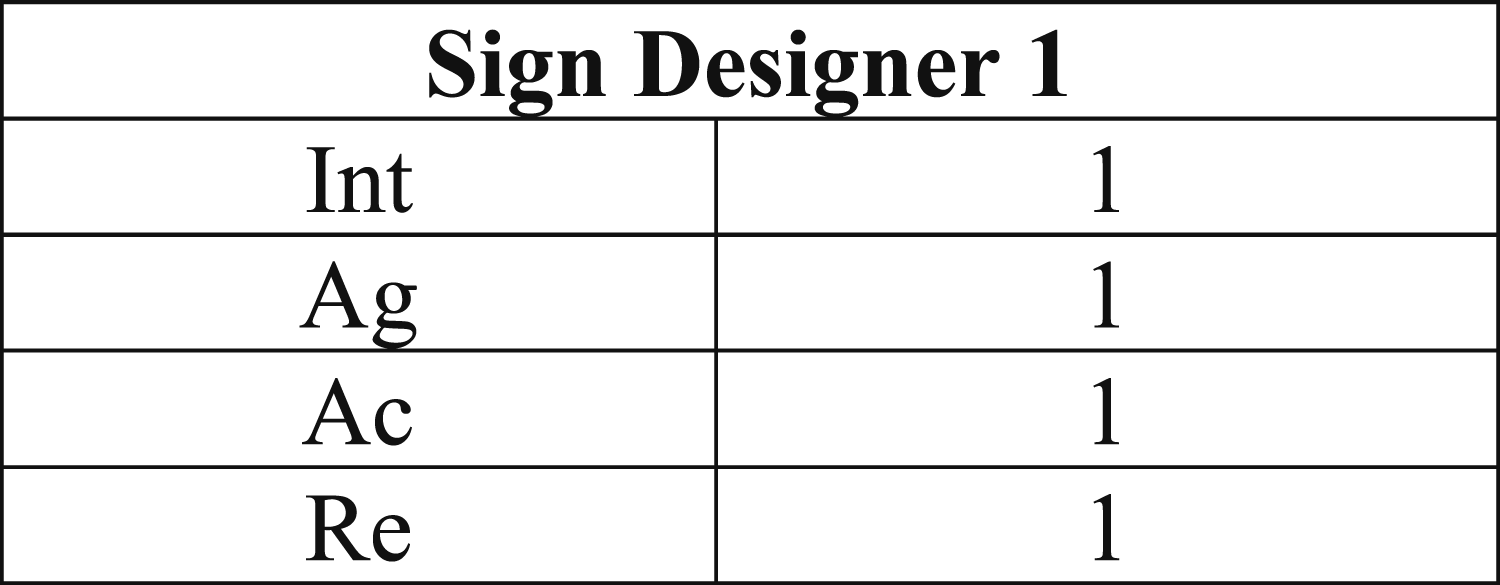

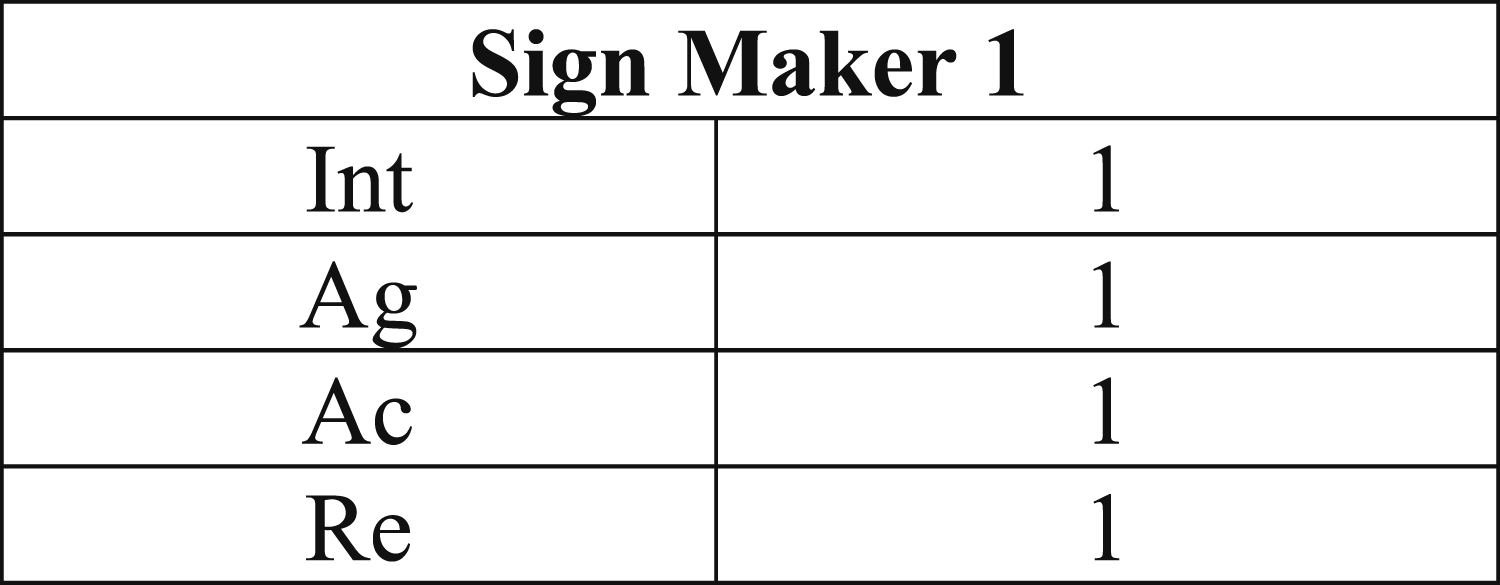

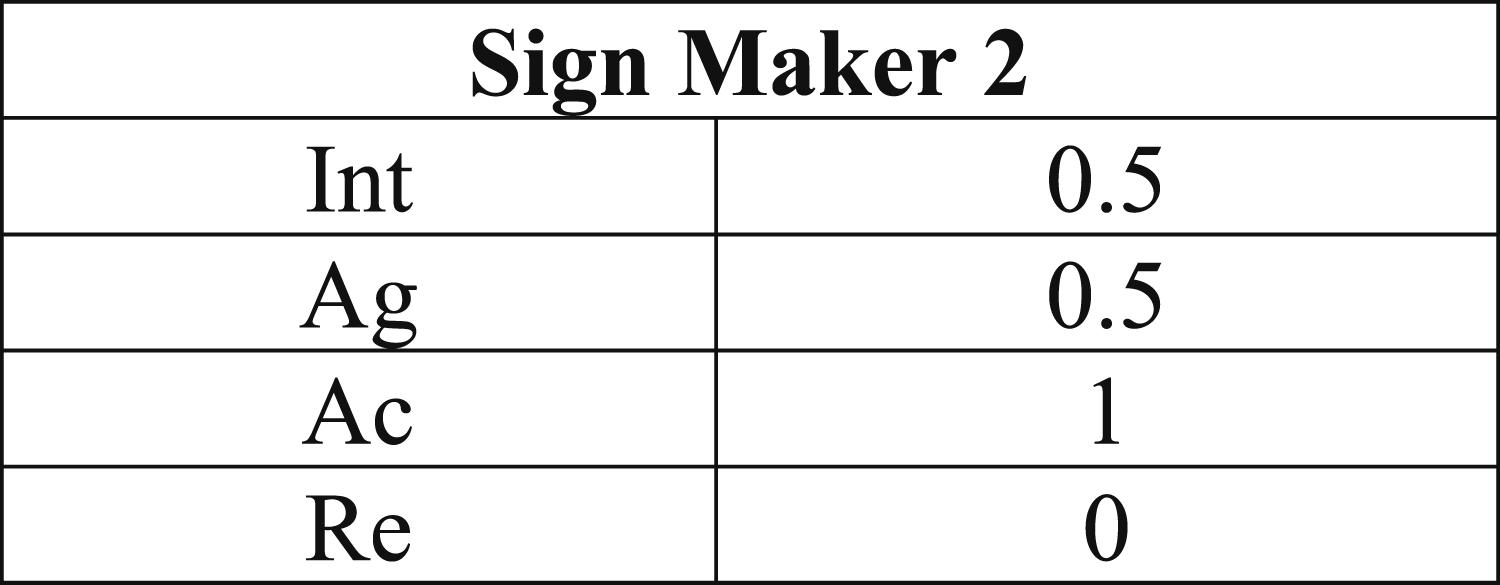

In order to capture these intricacies, the properties ‘Int’ (intentionality) and ‘Ag’ (agency) can be represented as variables ranging over the unit interval [0,1], while ‘Ac’ (accountability) and ‘Re’ (responsibility) are binary variables that take the values 0 or 1:

0 ≤ Int ≤ 1, where 1 = full-blooded intentionality

0 ≤ Ag ≤ 1, where 1 = full-blooded agency

Ac = 0 or 1

Re = 0 or 1

Consequently, a human sign designer can be represented schematically as an entity with the following attributes (Figure 3):

Figure 3. Human sign designer.

And a human sign maker can be represented in essentially the same way (Figure 4):

Figure 4. Human sign maker.

However, GPT-4o could be represented as follows when functioning as a sign maker, where the numerical values for Int and Ag are specified subjectively (Figure 5):

Figure 5. Non-human sign maker.
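The attribute scheme just described can be sketched as a small data structure. The following Python fragment is only an illustration under stated assumptions: the class name `SignEntity`, its field names, and the `full_blooded` helper are hypothetical, and the numerical values given for the non-human sign maker are placeholders chosen for the example, not the values shown in Figure 5. The Ac and Re values follow the accountability/responsibility distinction discussed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SignEntity:
    """Hypothetical record for one participant in sign creation."""

    name: str
    human: bool
    intentionality: float  # Int, constrained to 0 <= Int <= 1
    agency: float          # Ag, constrained to 0 <= Ag <= 1
    accountability: int    # Ac, either 0 or 1
    responsibility: int    # Re, either 0 or 1

    def __post_init__(self):
        # Enforce the ranges stated in the text.
        for label, value in (("Int", self.intentionality), ("Ag", self.agency)):
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{label} must lie in [0, 1], got {value}")
        for label, value in (("Ac", self.accountability), ("Re", self.responsibility)):
            if value not in (0, 1):
                raise ValueError(f"{label} must be 0 or 1, got {value}")

    @property
    def full_blooded(self) -> bool:
        """True when both Int and Ag take the maximal value 1."""
        return self.intentionality == 1.0 and self.agency == 1.0


# A human sign designer: full-blooded Int and Ag, accountable and responsible.
human_designer = SignEntity("human sign designer", True, 1.0, 1.0, 1, 1)

# A non-human sign maker. Int = Ag = 0.3 are ASSUMED placeholder values
# (the article specifies such values subjectively); Ac = 1 and Re = 0 reflect
# the claim that the system is accountable but humans are responsible.
ai_maker = SignEntity("automated sign maker", False, 0.3, 0.3, 1, 0)
```

Treating Int and Ag as floats and Ac and Re as integers simply mirrors the continuous/binary split in the definitions; any finer-grained scale would require a different representation.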

It should be apparent that the augmented analytical framework sketched here already problematises the MSS technical term ‘orchestrate’. In the conventional usage, a sign maker orchestrates a multimodal ensemble by selecting and configuring the constituent modes in specific ways, and the sign maker’s intentionality informs the entire process (Kress, 2010: 162-168). However, the distinction between sign designer and sign maker, and the potential plurality of both designers and makers, means that the process of orchestration is now distributed across the two hierarchical levels and between the constituent participants on each level. And this kind of distributed orchestration is common in real-world scenarios (as the Grayson Perry example, discussed above, indicates).

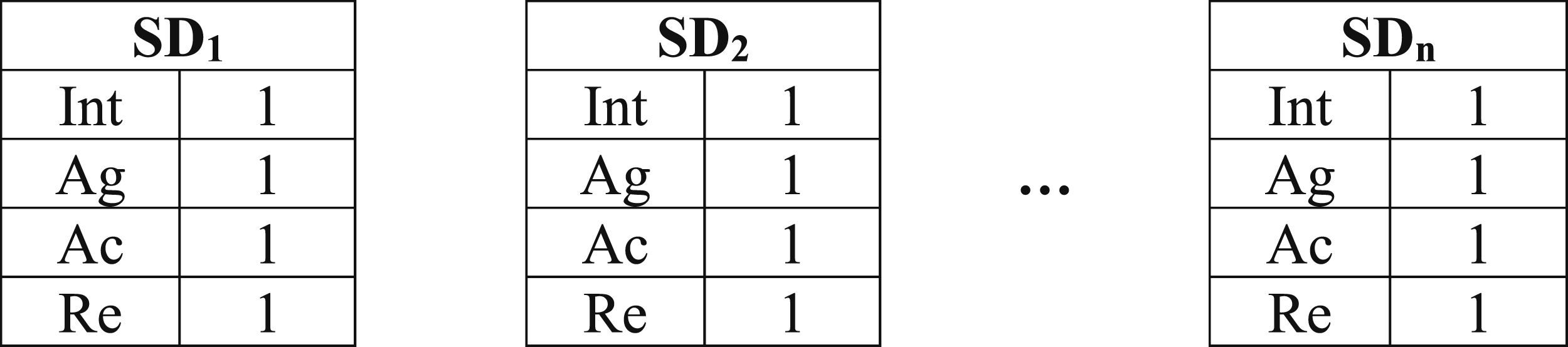

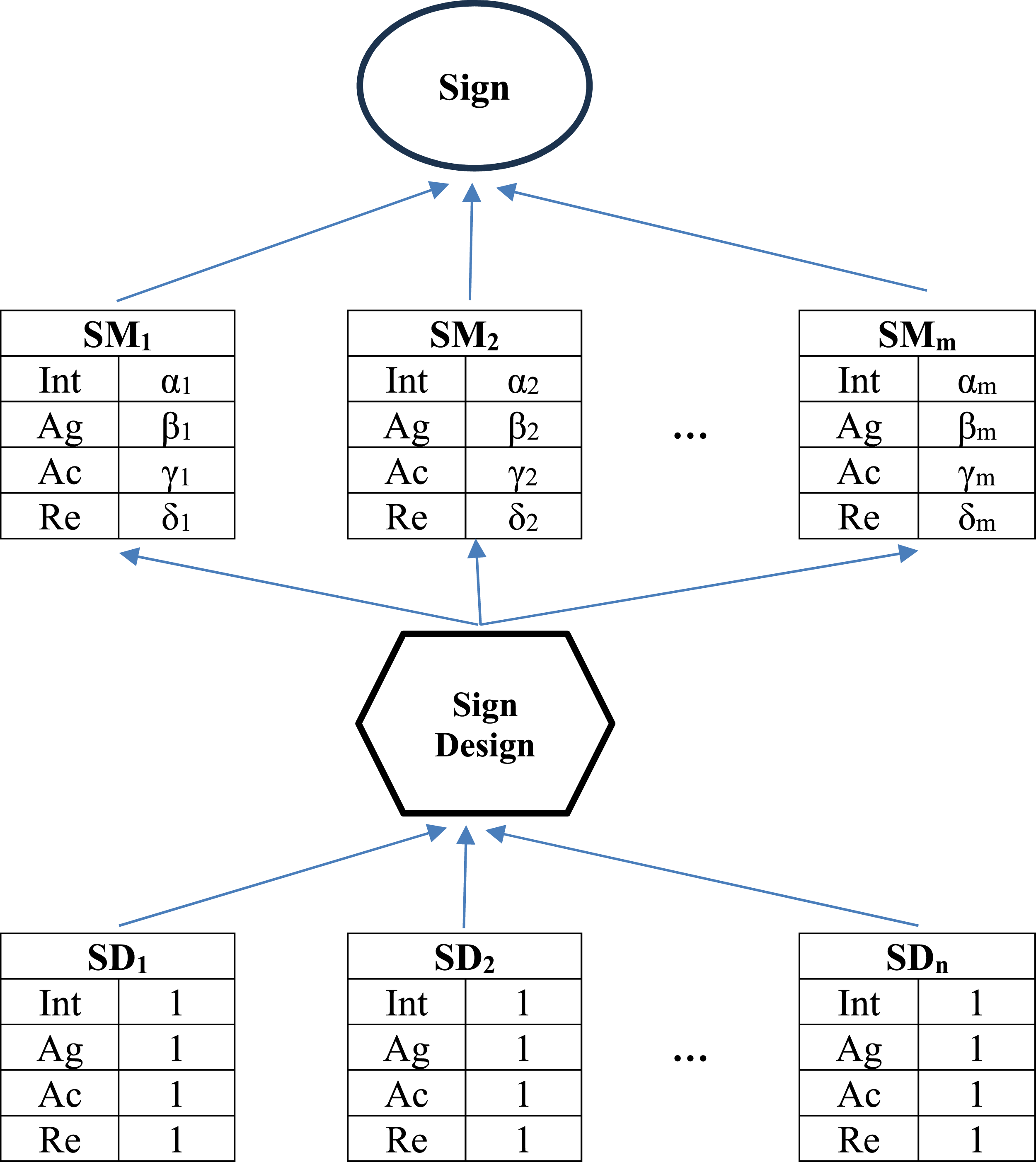

When multiple sign designers and multiple sign makers are involved in the process of sign creation, the simple schematic in Figure 2 needs to be modified substantially. We now have a set of n sign designers (SDs), and it will be assumed that these are all human (Figure 6):

Figure 6. Sign designers, who determine the macro-goal(s).

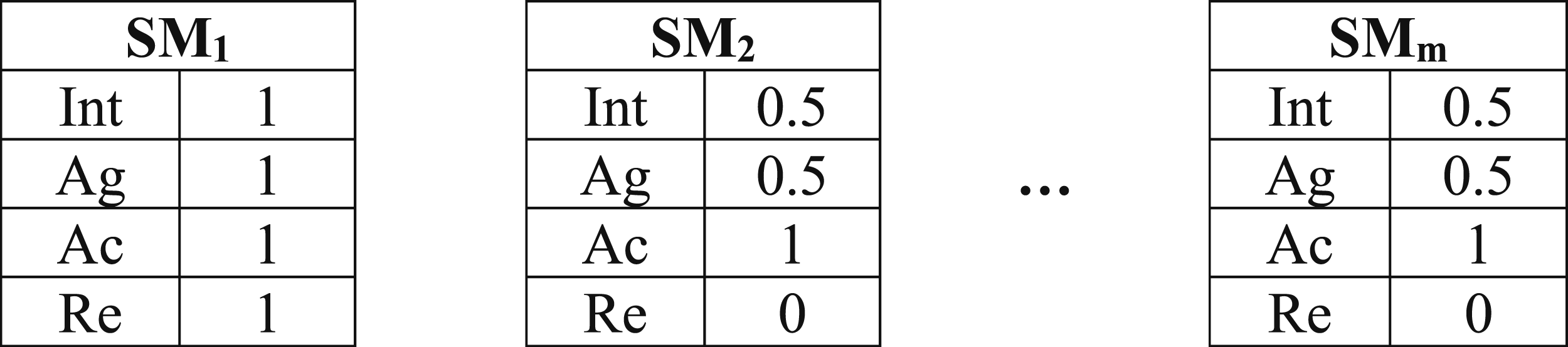

Figure 7. The set of sign makers, who achieve the micro-goal(s).

Putting this all together, the simple hierarchy in Figure 2 has been reformulated in Figure 8 (where α, β, γ, and δ indicate the appropriate numerical value for the respective entity-specific properties):

Figure 8. The sign designer(s) and sign maker(s) hierarchy.
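One way to make the two-level hierarchy concrete is the following Python sketch. It is an interpretation rather than a formalisation offered by the framework itself: the names `Entity`, `SignHierarchy`, `is_motivated`, `responsible`, and `accountable` are hypothetical, the numerical values are placeholders, and the rule that a sign counts as motivated when at least one (human) sign designer has full-blooded intentionality and agency is an assumed reading of the argument.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Entity:
    """Hypothetical participant with the four properties Int, Ag, Ac, Re."""

    name: str
    human: bool
    intentionality: float  # Int in [0, 1]
    agency: float          # Ag in [0, 1]
    accountability: int    # Ac in {0, 1}
    responsibility: int    # Re in {0, 1}


@dataclass
class SignHierarchy:
    """Upper level: sign designers (macro-goals). Lower level: sign makers (micro-goals)."""

    designers: List[Entity]
    makers: List[Entity]

    def __post_init__(self):
        if not self.designers or not self.makers:
            raise ValueError("at least one designer and one maker are required")
        # The text assumes that all sign designers are human.
        if any(not d.human for d in self.designers):
            raise ValueError("sign designers are assumed to be human")

    def is_motivated(self) -> bool:
        # ASSUMED rule: motivation flows from any designer with Int = Ag = 1.
        return any(d.intentionality == 1.0 and d.agency == 1.0 for d in self.designers)

    def responsible(self) -> List[str]:
        return [e.name for e in self.designers + self.makers if e.responsibility == 1]

    def accountable(self) -> List[str]:
        return [e.name for e in self.designers + self.makers if e.accountability == 1]


# Two human designers and one automated maker; the maker's Int and Ag values
# (0.3) are illustrative placeholders, not values taken from the figures.
hierarchy = SignHierarchy(
    designers=[
        Entity("designer_1", True, 1.0, 1.0, 1, 1),
        Entity("designer_2", True, 1.0, 1.0, 1, 1),
    ],
    makers=[Entity("automated_maker", False, 0.3, 0.3, 1, 0)],
)
```

In this toy configuration the sign is classed as motivated, the automated maker appears among the accountable entities, and only the human designers appear among the responsible ones, which matches the distribution of accountability and responsibility argued for above.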

In Figure 8, the

Conclusion

This article has proposed an extensively revised analysis for the relationship between the sign maker and the sign in MSS. A modified framework is required to ensure that MSS remains relevant in sociotechnical contexts where AI systems create multimodal ensembles autonomously. When an automated intelligent system such as GPT-4o creates a multimodal sign, the sign maker’s (full-blooded) intentionality and (full-blooded) agency are not directly involved in the making process since (currently) LLMs do not possess these properties. Therefore, the sign is essentially unmotivated – and this raises non-trivial conceptual problems for canonical theories of MSS. However, as this article has shown, signs can be classified as motivated even when they are created by AI systems, so long as the hierarchy that exists between the sign maker and the sign is appropriately (re)configured. Specifically, the roles of sign designers and sign makers must be distinguished. Crucially, the former are always human while the latter could either be humans or automated systems – or a collaborative combination of the two. In this reworked hierarchy, the intentionality and agency of the sign designers shape and motivate the specification of the sign design, and the latter is then realised by the sign makers that create the sign. Consequently, while a generative AI system can be held accountable for the outputs it produces and/or the actions it takes, the human sign designers are ultimately responsible for those outputs and/or actions.

As AI systems continue to develop, the framework introduced here will need to be further modified. For instance, the focus so far has fallen predominantly on the two properties ‘intentionality’ and ‘agency’, primarily because these have been given such prominence over many years in landmark MSS publications. However, it is not clear that these are the only properties that should be considered, and a more complex and nuanced analysis might equally include things such as ‘interests’, ‘needs’, and ‘motivations’ separately. Also, although no such systems have yet been developed, it is possible to imagine a scenario in which an AI system (‘Dysto’) creates an AI system (‘Pian’) that writes a novel. Obviously, the schema presented in Figure 8 would need to be adapted to accommodate cases of this kind. Presumably, the original sign designers would still be the humans who created Dysto, but there would then be two further levels. The first of these would consist of an automated sign designer (Dysto), while the second would consist of an automated sign maker (Pian) that creates the novel. In this scenario, the intentionality of the human sign designers would be transferred through all levels of the hierarchy, so the connection between this and the eventual sign would be less direct but still present. Clearly, the complexity of this scenario would become even more apparent if the number of intermediate levels were to increase from, say, 2 to 100. At the moment, such examples remain within the domain of science fiction, but it is useful for MSS theorists to perform such thought experiments, since they enable us to anticipate possible future developments.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.