Abstract

This paper presents three studies on the design, use and effectiveness of multimodal online baking blogs that present cookie recipes in two forms: illustrated step-by-step Instructions with Pictures and printable text-only Recipe Cards. Firstly, a corpus study describes how authors combine text and pictures in 15 blogs. Secondly, an eye-tracking study was conducted to explore how 12 participants read and evaluate baking blogs and the Instructions with Pictures in them. Finally, a user study was conducted to explore how 4 teams of participants execute and evaluate either the Instruction with Pictures or the Recipe Card of a typical baking blog. We collected and analysed questionnaire data on readers’ and users’ judgments of the comprehensibility and design of the instructions and of their (expected) performance, as well as eye-tracking data and videos capturing the reading and baking practices. The triangulation of these exploratory studies shows how different research methodologies, combining readers’ and users’ judgments with objective measurements, provide complementary insights into multimodal baking instructions in terms of information presentation, reading strategies and situated use, and how they inform which characteristics of multimodal presentations are relevant to study and evaluate.

Introduction

Cooking and baking recipes have been around for centuries, presented in manuscripts, cookbooks and various other written resources (Arendholz et al., 2013). In the current age of digital platforms, culinary enthusiasts have found a new home on the internet, where they can access a wealth of recipes on cooking and baking blogs, often accompanied by helpful pictures and videos. As the online realm continues to expand, it becomes useful to explore how the multimodal nature of online baking instructions affects the effectiveness of these culinary guides. To this end, this paper examines online baking blogs containing written step-by-step instructions and step-by-step pictures. Through triangulation of a corpus analysis, an eye-tracking study, and a user study, the research presented in this paper not only studies multimodal design and its impact on readers and users, but also shows how description and evaluation methods reinforce each other in terms of implementation, data processing and subsequent research questions.

Cookie baking instructions

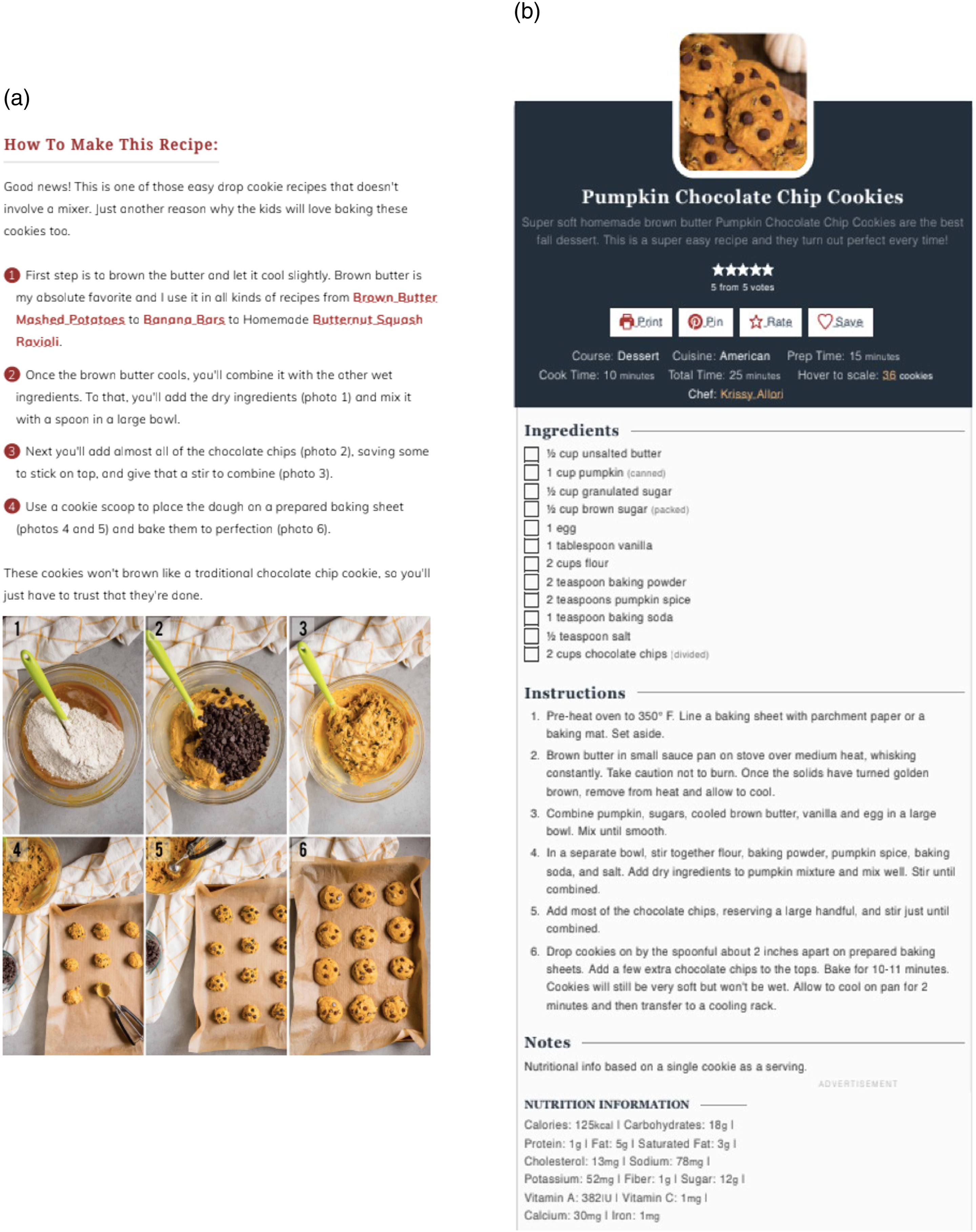

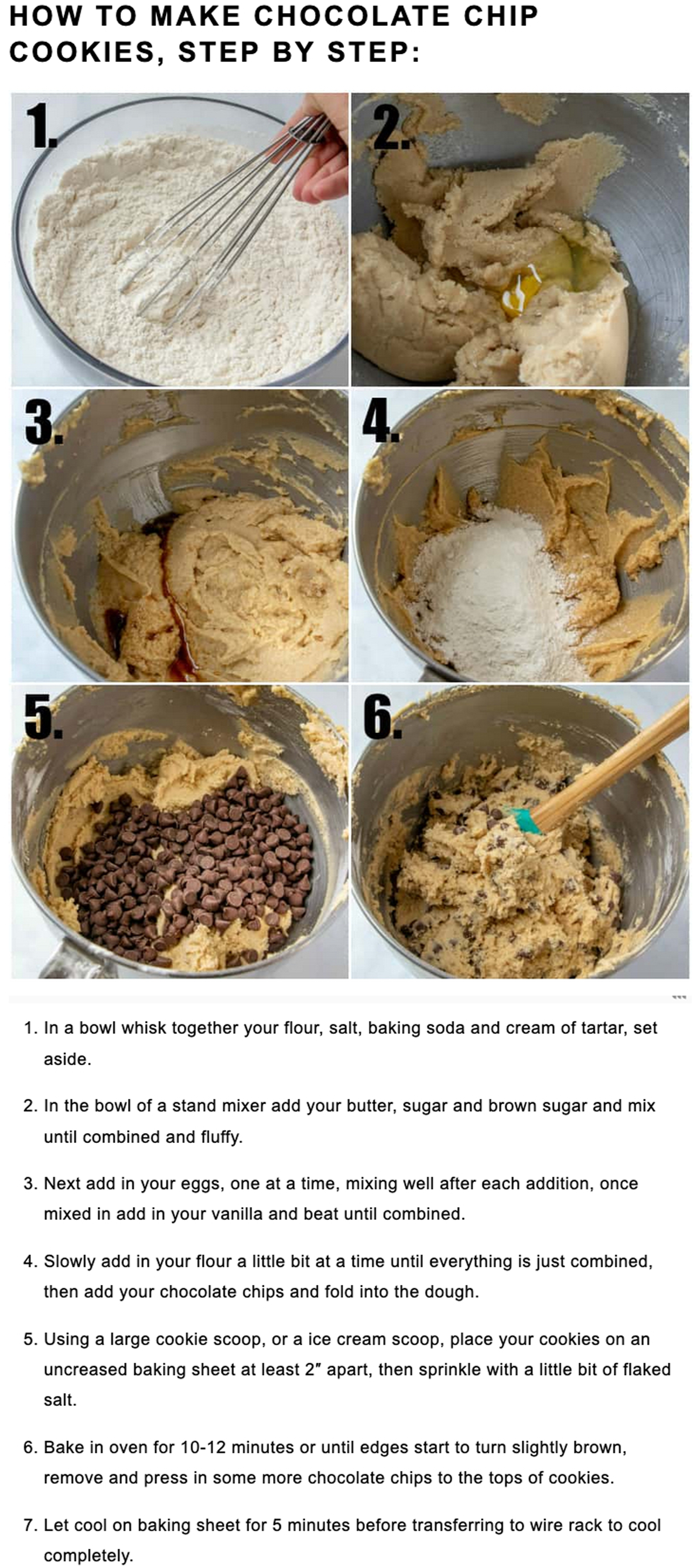

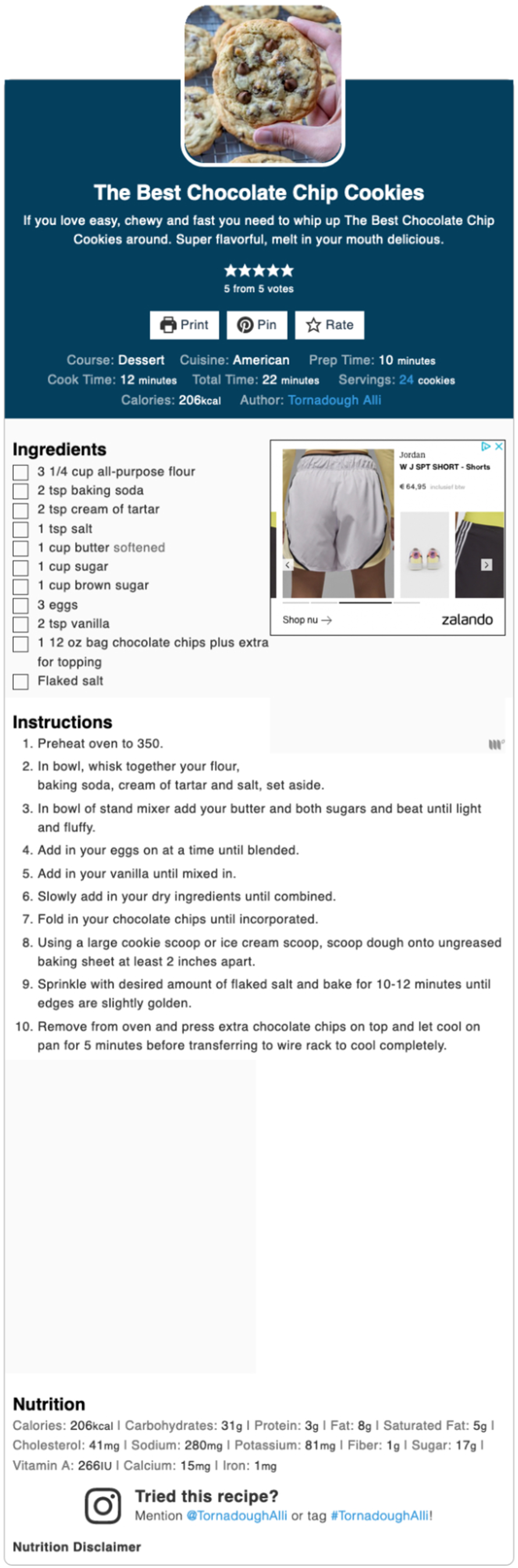

The baking recipes examined in the studies presented in this paper are considered multimodal instructions (MIs), which combine different forms of communication: text and pictures. Building upon existing research in multimodal analysis (cf. Bateman et al., 2017) and in reader and user studies (cf. Holsanova, 2014), this study specifically investigates multimodal baking instructions for chocolate chip cookies sourced from online baking blogs. Typically, these online baking blogs present recipes in two formats within the webpage. The blog text includes an embedded instruction with step-by-step pictures, which we will call the Instruction with Pictures. At the end of the blog, a printable card, which we will call the Recipe Card, provides instructions for the same recipe. Bowker (2021) distinguishes the blog content from the Recipe Card by pointing out that the Recipe Card contains all the necessary steps and ingredients, while the preceding blog content allows for additional information and visualizations of particular states in the procedure. The current study focuses on the Instruction with Pictures (IWP), which combines textual and pictorial step-by-step baking instructions, and on the Recipe Card (RC), which usually includes only a single picture of the end product. Examples of these two types of instructions can be found in Figure 1(a) and (b). Since both instructional texts present the same recipe, while only the IWP includes step-by-step pictures, the blogs provide a good opportunity for a comprehensive analysis of the presentation and effectiveness of text and pictures in baking instructions. Thus, a corpus study was undertaken to provide a systematic description of the text, the pictures, and the relations between them in both the IWP and the RC. Additionally, an eye-tracking study was designed to explore how individuals read and judge a baking blog as a whole and an Instruction with Pictures by itself.
Finally, in a user study, participants were instructed to bake cookies using either the Instruction with Pictures or the Recipe Card, to examine how the choice of instruction influences users’ performance. By presenting these three exploratory studies together, we show how different methods offer complementary views on the same material, and how different research methodologies inform which characteristics of multimodal presentations are relevant to study and how they can be evaluated.

(a) Instruction with Pictures of MI 15. (b) Recipe Card of MI 15. Source: https://selfproclaimedfoodie.com/pumpkin-chocolate-chip-cookies/.

Research questions

To gain insights into the design of online baking blogs, the research begins with a corpus study that examines 15 step-by-step instructions. The study’s central research question is: ‘How can we describe the instructions in online step-by-step baking instructions and what are the relations between the different modes used in them?’

Using an action-based annotation model (Van der Sluis et al., 2016a, 2016b, 2017, 2022a, 2022b; Van der Sluis and de Jonge, 2024), the analysis focuses on the differences and similarities between the IWP and the RC and on the multimodal coherence relations between text and pictures in the IWP. Building on this understanding of the multimodal design of baking blogs, the research continues with an exploratory eye-tracking study involving 12 participants. This study aims to answer the question: ‘How do people read and judge online baking blog recipes containing multimodal instructions?’

Participants were asked to read and judge a complete webpage as well as an individual IWP. Observing participants’ eye movements uncovers how readers engage with instructive baking blogs as a whole as well as with multimodal IWPs in particular. The readers’ evaluations of the blogs and the IWPs they processed were collected via a questionnaire and a short interview.

Because the participants in the reader study turned out to vary in their evaluations and interpretations of IWPs and RCs, a user study was set up to explore the actual use of baking blogs and to compare the judgments of readers and users. In this study, participants actively engaged in baking: divided into two groups, they followed either the IWP or the RC of a typical baking blog to bake cookies. The primary question addressed in this study is: ‘How does using either the Instruction with Pictures or the Recipe Card of a baking blog influence the user’s execution of the baking instruction and the user’s judgments of the comprehensibility, design and performance of the baking instruction?’

This study provides valuable insights into the users’ execution of a baking instruction, offering a glimpse into their decision-making processes. Through a comparison of the performance and experiences of IWP users and RC users, we also examined the effect of adding pictures to an instructional text.

With the three studies we aspire to contribute to the analysis, understanding and quality of online multimodal communication, especially multimodal instructions. This paper provides a deep dive into the design, human processing and use, and thus the effectiveness of baking blogs. The exploratory studies investigate the functionality and realization of recipe blogs and the relations between the text and pictures in them, uncovering how both readers and users judge and interact with these multimodal documents. Through a comprehensive approach encompassing a corpus analysis, an eye-tracking study, and a user study, we aim to illuminate the path toward enhanced baking instructions, to inspire future research on multimodal instructions, and to inform the development of research methods to investigate multimodality in our society.

Background

Multimodal instructions

Multimodality refers to a communicative situation where different forms of communication are used to make meaning (Bateman et al., 2017: 7). These different forms, or

Nowadays, communicative artifacts commonly use a variety of modes to present information (Bateman, 2014). When instructional texts are combined with instructional pictures, they form MIs. This raises the question of how these pictures are connected to the text. To address this, Barthes (1964) distinguishes three types of text-picture relations. The first is

Bateman (2014) further expands on text-picture relations, focusing on how one mode expands the meaning of the other. He introduces three categories to describe this interaction:

Ganier (2004) emphasizes the importance of using pictures alongside instructive text. Pictures may facilitate the human processing of instructions by reducing and/or distributing the load on human cognitive capacities, eventually helping people with action planning and integrating knowledge into their long-term memory. Sanchez-Stockhammer (2021) also supports this notion, highlighting that presenting instructions visually and verbally creates multimodal repetition, enhancing comprehension. Even sublexical cohesive relations, such as linking the word ‘apple pie’ with an image of an apple, contribute to the readability of a text through textuality (Sanchez-Stockhammer, 2021: 11).

A substantial body of research shows that instructions and learning materials combining text and pictures are often more effective than text-only documents (see, e.g., Butcher, 2014; Mayer, 2002). Much of the research on the effects of text and pictures in instructions has been conducted in healthcare and medicine (e.g., Cline et al., 1999; Dowse and Ehlers, 2005; Mansoor and Dowse, 2003; Morrow et al., 1998, 2005; Sata et al., 2003; Sojourner and Wogalter, 1998). These studies highlight the importance of adding pictures to healthcare information, as pictures proved to be an important source of information for patients. For example, in a study by Morrow et al. (1998), 72 participants were asked to study an instruction for taking a hypothetical medicine. The instruction was presented either in text-only format or in a format that combined text with a visual icon timeline indicating when to take the medication. After studying the instruction, the participants were asked questions about it. The results showed that questions about dose and timing were answered more accurately and more quickly when the icon timeline had been included in the instruction. The visual timeline also reduced the time participants needed to study the instruction.

Another large body of research focuses on the effect of text and pictures in a learning environment. For instance, it has been shown that pictures can facilitate and contribute to L2 learning (Andrä et al., 2020; Hagiwara, 2015; Morett, 2019). Andrä et al. (2020) investigated the effects of gesture-based and picture-based learning on 8-year-old children’s acquisition of new vocabulary in a foreign language. Three studies were conducted with German children over a period of 5 days. The results showed that both gesture and picture enrichment improved children’s performance in vocabulary recall and translation tests compared to non-enriched learning. These benefits persisted up to 6 months after the training, and they were observed for both concrete and abstract words. Contrary to the initial hypothesis, gesture and picture enrichment had similar positive effects on children’s language learning, suggesting that both modalities are effective in enhancing children’s learning outcomes over an extended period.

Morett (2019) compared the effects of viewing still images, iconic gestures, and glosses on the learning and retrieval of concrete words in early-stage second language (L2) acquisition, in a study with 28 Hungarian undergraduate students. The results showed that concrete L2 words learned through viewing still images were recalled better than those learned through viewing iconic gestures. Additionally, L1 glosses did not facilitate L2 word learning in novice learners. These findings suggest that images are more effective than gestures or glosses in facilitating the learning of concrete L2 words for learners unfamiliar with the target language, and that glosses are not always necessary for effective L2 word learning.

Lastly, Hagiwara (2015) investigated the use of pictorial support in processing morphemic elements in multiclausal sentences by second language (L2) learners. Thirty-two learners of Japanese participated in elicited imitation tasks with and without pictorial support. The results showed that learners performed significantly better when provided with pictorial support. However, the effectiveness of pictorial support was limited for recently learned elements in sentence-final position, suggesting that learners have difficulty automatizing such items regardless of cognitive support.

Limitations to the use of combinations of text and pictures

The value of adding pictures depends on various factors, including the context, the performance measures, and the learners (Fisk et al., 1986; Reid and Beveridge, 1986; Zhao et al., 2020). In a study by Fisk et al. (1986), 70 participants were shown one of five instructions for sign language, each with a different text-picture ratio. After studying the instructions, they were first asked to perform the signs and then, after a 2-min distraction task, to complete a picture and text recognition test of the signs. Participants who studied the instruction combining text and pictures significantly outperformed those in the picture-only and text-only conditions in terms of performance accuracy. In terms of speed, participants in the picture-only conditions performed best. In the memory test, participants in the picture-only condition were less accurate in recognizing textual sign instructions. Thus, even though pictures can improve performance speed and accuracy, text offers more flexibility in use and can therefore be applied more effectively in broader contexts.

Zhao et al. (2020) also examined the roles of text and pictures in a learning context. Secondary school students received text-picture units taken from geography and biology textbooks, and were asked questions about them either after reading (delayed-question) or before reading (preposed-question). Eye movement analysis showed that students in the delayed-question condition allocated more resources to text processing, while those in the preposed-question condition allocated more resources to picture processing. This suggests that texts provide explicit conceptual guidance during initial mental model construction, while pictures support mental model adaptation by providing specific information for task-oriented updates.

Additionally, Reid and Beveridge (1986) found that the effect of adding pictures can also depend on the learners’ ability level. They examined the impact of text and pictures on learning a science topic among 13-year-old children. In the study, 272 students received texts with varying pictorial content, and learning was assessed using an objective test. The results showed that pictures did not have a general motivational effect on learning, but specific pictures had a beneficial effect for higher-ability students while being distracting for lower-ability students.

In some studies, the benefits of combining text and pictures do not seem to occur at all (Liu and Chuang, 2011; Rasch and Schnotz, 2009). In Liu and Chuang (2011), eight college students viewed web pages with text and pictures about atmospheric pressure and wind formation. The results showed that participants focused more on the text than on the pictures and that segmenting the content in text and pictures did not increase attention to the pictures. Participants alternated their focus between different parts of the pictures while concentrating on weather system explanations in the text. The text provided more detailed information and served as the primary resource for understanding. Rasch and Schnotz (2009) tested the effects of adding interactive and non-interactive pictures to a hypertext about time and date differences on the earth. One hundred university students participated in the study and were assigned to groups with varying combinations of text and pictures. The results showed that adding pictures to text had no significant effect on learning, and that learning from text alone was more efficient than learning from text and pictures. Interactivity had a positive effect on one learning task but not on the other. The visualization format influenced participants’ interaction with the pictures but did not affect the learning outcomes. The authors give two possible explanations for these results. First, pictures can cause readers to process the text superficially, as the pictures partially replace the text as a source of information. Second, pictures can be redundant if they portray what readers have already constructed in their minds from the textual information (Rasch and Schnotz, 2009: 420).

Design choices and human processing

One thing that is clear from the studies on multimodal processing discussed in this paper is that the design of an MI plays a crucial role in facilitating human processing of the procedural instruction presented. When relevant visual information is easily accessible, comprehension and learning improve. Clear signaling and proper organization of multimodal content help to guide human attention and enhance efficiency and effectiveness (Ozcelik et al., 2010; Tenbrink and Maas, 2016; Van der Sluis et al., 2017).

A problem that can arise from poorly organized MIs is the split-attention effect (Chandler and Sweller, 1992; Schroeder and Cenkci, 2018). The split-attention effect is a cognitive phenomenon that occurs when readers have to process and integrate information from multiple sources that are spatially or temporally separated. Specifically, it refers to situations where learners need to integrate information presented in different modes, such as text and pictures. Because learners have to divide their attention, they can experience cognitive overload, which reduces learning efficiency (Schroeder and Cenkci, 2018).

Another design problem that occurs is the redundancy effect (Kalyuga and Sweller, 2014). The redundancy effect refers to the phenomenon where presenting the same information through multiple modalities, such as presenting the same information in both visual and auditory formats, can lead to cognitive overload and hinder learning. It suggests that including the same information via multiple modalities may not necessarily enhance learning outcomes and can even have a negative impact on cognitive processing (Kalyuga and Sweller, 2014).

The baking blogs studied in this paper could present potential challenges related to both the split-attention effect and the redundancy effect. Readers and users of these baking instructions are required to process information from different modalities, namely text and pictures, which could lead to the split-attention effect. Additionally, at first sight, the pictures in the baking instructions primarily serve as visual representations of the actions described in the text, which could make them partly redundant. It is not straightforward to reach a consensus about the degree of redundancy between verbal and pictorial content, but at least partial redundancy can be described, as the corpus analysis presented in this paper will also show.

Describing text-picture relations

In the field of computational linguistics and natural language processing, an increasing number of studies focus on the automatic description of procedural cooking instructions. These studies aim to build systems that allow computers to understand and extract practical knowledge from written instructions, enabling them to perform tasks based on human-like instructions (Zhang et al., 2012). To this end, large databases containing cooking instructions, as well as videos of people executing these instructions, have been collected (e.g., Regneri et al., 2013; Rohrbach et al., 2012; Salvador et al., 2017; Yagcioglu et al., 2018). Regneri et al. (2013) focus on the problem of linking textual descriptions of actions to visual information extracted from videos. The authors present a corpus that aligns videos with multiple natural language descriptions of the actions portrayed in those videos, and they demonstrate how combining text-based models with visual information from videos can significantly improve the understanding and similarity assessment of action descriptions. This action-based approach to annotating large databases can provide valuable insights into the structure of MIs. However, computational tools for automatically identifying and categorizing actions in instructive texts are still limited (e.g., Van der Sluis et al., 2018; Zhang et al., 2012). While Van der Sluis et al. (2018) concluded that accurate categorization of actions requires human intervention as an essential guiding factor, recent initiatives in natural language processing and generation are promising (e.g., Pustejovsky et al., 2021; Tu et al., 2022a; Tu et al., 2022b).

Manually annotating MIs can pose a significant challenge, not only because MIs contain multiple modes that cohere and make meaning together but also because the layout in which the text and pictures are presented varies considerably. The PAT annotation model is being developed within the PAT project.¹

Since 2016, various versions of the action-based PAT model have been used to describe (parts of) multimodal instructions according to the following principles:
1. The instructional text is split into clauses;
2. The clauses are identified as either Action clauses or Control Information clauses;
3. The text clauses and the accompanying instructional pictures are described using functional attributes (e.g., Action Type, Action Status, Action Aspect and Control Information, Specification) and using domain-specific content attributes (cf. Van der Sluis et al., 2016a, 2017, 2018, 2022a).
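The three principles above can be illustrated with a small data model for annotated units. This is a minimal sketch under our own assumptions, not the PAT project's tooling: the class `AnnotatedUnit`, its fields and the `problems` check are hypothetical, and only the attribute names (Action Type, Action Status, Action Aspect, Control Information) are taken from the text. Domain-specific content attributes are simply allowed as extra keys.

```python
from dataclasses import dataclass, field

# Functional attributes that every Action unit is assumed to carry (principle 3).
# Domain-specific content attributes may be added as extra keys.
REQUIRED_ACTION_ATTRIBUTES = {"Action Type", "Action Status", "Action Aspect"}


@dataclass
class AnnotatedUnit:
    """One clause or clause-like fragment of instructional text (principle 1)."""
    text: str
    kind: str  # principle 2: "Action" or "CI" (Control Information)
    attributes: dict = field(default_factory=dict)

    def problems(self) -> list:
        """Return annotation problems; an empty list means the unit is well-formed."""
        if self.kind == "Action":
            missing = REQUIRED_ACTION_ATTRIBUTES - set(self.attributes)
            return [f"missing {name}" for name in sorted(missing)]
        if self.kind == "CI":
            issues = []
            if "Control Information" not in self.attributes:
                issues.append("missing Control Information value")
            if REQUIRED_ACTION_ATTRIBUTES & set(self.attributes):
                issues.append("CI unit carries Action attributes")
            return issues
        return [f"unknown kind {self.kind!r}"]
```

Keeping Action and CI units in one record type with a `kind` field mirrors the model's first decision (every unit is exactly one of the two), while the attribute dictionary leaves room for the domain-specific content attributes that vary between domains.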

The categorization of the verbal and visualized content according to the same model allows for the specification of the text-picture relations in terms of, for example, elaboration and enhancement (cf. Bateman, 2014; Halliday, 1985: 216–221). The generalizability of the PAT model has been shown by annotating multimodal instructions in different domains, such as first-aid instructions (Van der Sluis et al., 2017) and cooking instructions (Van der Sluis et al., 2016a; Van der Sluis and de Jonge, 2024), as well as by annotating multiple types of documents, such as instructional videos (Vijfvinkel et al., 2018) and instructional comics (Wildfeuer et al., 2023). In this paper, a further development of the PAT annotation model is used to describe a corpus of online baking instructions. The description allows for a thorough examination of the multimodal nature of MIs, considering both the form and content of the text-picture relations.

Reader and user studies

After establishing the structure of baking instructions through corpus annotation, our focus shifts to examining the impact of text and pictures on readers and users. To understand and record how human users process text and pictures in instructions, and to enhance the instructions’ effectiveness, reader and user studies often use eye-tracking (Alemdag and Cagiltay, 2018; Fisk et al., 1986; Ganier, 2004; Liu and Chuang, 2011; Ozcelik et al., 2010; Van der Sluis et al., 2017; Zhao et al., 2020). Eye-tracking is a widely employed method for assessing human processing of MIs. Holsanova (2014) explains that this technology enables researchers to track the reading and scanning process in detail, revealing what users look at, where their gaze falls, when they shift their focus, and how often they do so. Such information is invaluable for understanding how users interact with multimodal messages, how they integrate information across different modes, and what captures their attention. By measuring eye movements, researchers can uncover the allocation of visual attention, which serves as a behavioral indicator of ongoing visual and cognitive processes (Holsanova, 2014).
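To make these measures concrete, the sketch below computes two common eye-tracking measures from a sequence of fixations that have already been mapped to areas of interest (AOIs): total dwell time per AOI and the number of gaze shifts between text and picture. It is a generic illustration under our own assumptions; the `Fixation` record and the AOI labels are hypothetical, and this is not the processing pipeline of any particular eye-tracker software or of the studies reported in this paper.

```python
from typing import NamedTuple


class Fixation(NamedTuple):
    """One fixation, already assigned to an area of interest (hypothetical record)."""
    aoi: str          # e.g. "text", "picture" or "other"
    duration_ms: int


def dwell_times(fixations):
    """Sum fixation durations per AOI (how long readers looked at each region)."""
    totals = {}
    for f in fixations:
        totals[f.aoi] = totals.get(f.aoi, 0) + f.duration_ms
    return totals


def transitions(fixations, a="text", b="picture"):
    """Count consecutive fixation pairs that move between AOIs a and b, in either direction."""
    return sum(
        1
        for prev, cur in zip(fixations, fixations[1:])
        if {prev.aoi, cur.aoi} == {a, b}
    )
```

Dwell time reflects where attention is allocated, whereas frequent text-picture transitions are one possible indicator of the integration effort discussed under the split-attention effect; which measures a given study reports depends on its design.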

Several studies have used eye-tracking to draw conclusions about human interactions with multimodal instructions. For instance, Zhao et al. (2020) used eye-tracking to reveal that participants allocated their attention differently to text and pictures depending on the given tasks, while in the work of Liu and Chuang (2011), the analysis of participants’ eye movements showed that the text received more attention than the pictures. Here, participants were observed to alternate their gaze between relevant components of illustrations while focusing on key elements in the text. Scan paths further demonstrated that decorative icons within the pictures caused distraction and split-attention effects. Consequently, the researchers concluded that eye-tracking is a valuable tool for investigating the cognitive processes involved in learning from multimodal documents (Liu and Chuang, 2011).

Eye-tracking studies are often combined with user studies, where performance measures such as speed and accuracy are used to investigate the human processing of MIs (e.g., Fisk et al., 1986). After all, the effectiveness of an instruction is determined by how well participants actually execute it. Holsanova (2014) also suggests that eye-tracking measurements are especially useful when combined with verbal protocols, interviews, comprehension tests, and/or questionnaires, which help researchers understand readers’ attitudes, habits, preferences, and problems in interacting with these messages (Holsanova, 2014). Van der Sluis et al. (2017) demonstrate how eye-tracking can be combined with performance measures, a comprehension test and a questionnaire. The participants’ eye movements and performance were recorded while they executed a tick-removal instruction. Subsequently, the participants were asked to fill out a questionnaire measuring their comprehension and recall of the instruction as well as their opinion on the instruction’s attractiveness. Finally, the participants took part in a short follow-up interview. The questionnaire and the interview provided valuable insights in addition to the eye-tracking and performance data. Participants were able to recall four out of five actions and demonstrated comprehension of the instruction; however, they reported difficulty in recalling the actions accurately. Overall, the questionnaire and interview complemented the eye-tracking data by providing a deeper understanding of participants’ experiences, perceptions, and preferences, enhancing the study’s completeness and validity.

To elicit as much information as possible from participants, Holsanova (2014) recommends using verbal protocols. This refers to the use of verbal reports as a technique to trace and understand the cognitive processes and knowledge underlying task performance (Ericsson and Simon, 1993). Verbal protocols involve participants verbalizing their thoughts and actions while working on a task or after completing it. Common techniques are the

The

Corpus study: Describing recipe blogs

The corpus study described below aims to describe the structure and content of the Instruction with Pictures (IWP) and the Recipe Card (RC) of online step-by-step cookie baking instructions, thereby exploring the relevance and generalizability of existing descriptive categories. As a starting point for the analysis, two existing annotation models were combined and adapted to fit the current corpus. This study aims to answer the question: ‘How can we describe the instructions in online step-by-step baking instructions and what are the relations between different modes used in them?’ The study analyzes the similarities and differences between the IWP and the RC, as well as the text-picture relations within the IWP. Because each MI in the corpus includes an IWP and an RC that present the same recipe, we do not expect significant differences between the two instructions in the types of verbalized instructions. Based on previous findings (Van der Sluis et al., 2016a; Van der Sluis and de Jonge, 2024), we do expect a difference between the verbalized and visualized actions within the IWP: the text is expected to present actions as processes, whereas the pictures are expected to present the results of actions. The findings of the corpus analysis are used to make an informed choice in determining and motivating the content for the reader and user studies also presented in this paper. Moreover, the corpus analysis is used to support the interpretation of the data collected in the reader and user studies.

Data set

The materials for this research consist of 15 recipes taken from online baking blogs. The 15 recipes were selected from a larger corpus of 40 baking blogs. We developed selection criteria that allowed us to obtain a subset of the corpus with seemingly comparable blogs that would also offer enough variation in verbal and visual instructional content to study multimodal instructions and the text-picture combinations in them. We applied the following selection criteria:
• The MI originates from a web source;
• The MI describes the process of baking chocolate chip cookies;
• The MI contains two ‘versions’ of the same recipe: an Instruction with Pictures, as well as a Recipe Card;
• The Instruction with Pictures contains at least 4 step-by-step pictures;
• The text in the Instruction with Pictures is split up into different steps;
• The text in the Recipe Card is split up into different steps, and does not have the form of a coherent paragraph.
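The selection criteria above can be read as a conjunction of checks on each candidate blog. The sketch below is a hypothetical illustration: the `BlogRecord` fields are our own encoding of the six criteria, assumed to have been coded by hand for each blog. Note that the criteria act as necessary conditions only; the final choice of 15 comparable yet varied blogs from the 40 candidates also involved the authors' judgment, which this sketch does not model.

```python
from dataclasses import dataclass

# Minimum number of step-by-step pictures in the IWP (fourth criterion).
MIN_STEP_PICTURES = 4


@dataclass
class BlogRecord:
    """Hypothetical hand-coded summary of one candidate blog."""
    url: str
    from_web_source: bool
    chocolate_chip_cookies: bool
    has_iwp: bool              # contains an Instruction with Pictures
    has_recipe_card: bool
    iwp_step_pictures: int     # number of step-by-step pictures in the IWP
    iwp_text_in_steps: bool
    rc_text_in_steps: bool     # False if the RC is one coherent paragraph


def meets_criteria(blog: BlogRecord) -> bool:
    """True if the blog satisfies all six selection criteria."""
    return (
        blog.from_web_source
        and blog.chocolate_chip_cookies
        and blog.has_iwp
        and blog.has_recipe_card
        and blog.iwp_step_pictures >= MIN_STEP_PICTURES
        and blog.iwp_text_in_steps
        and blog.rc_text_in_steps
    )


def select(candidates):
    """Keep only the candidates that pass every criterion, preserving order."""
    return [blog for blog in candidates if meets_criteria(blog)]
```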

Annotation model

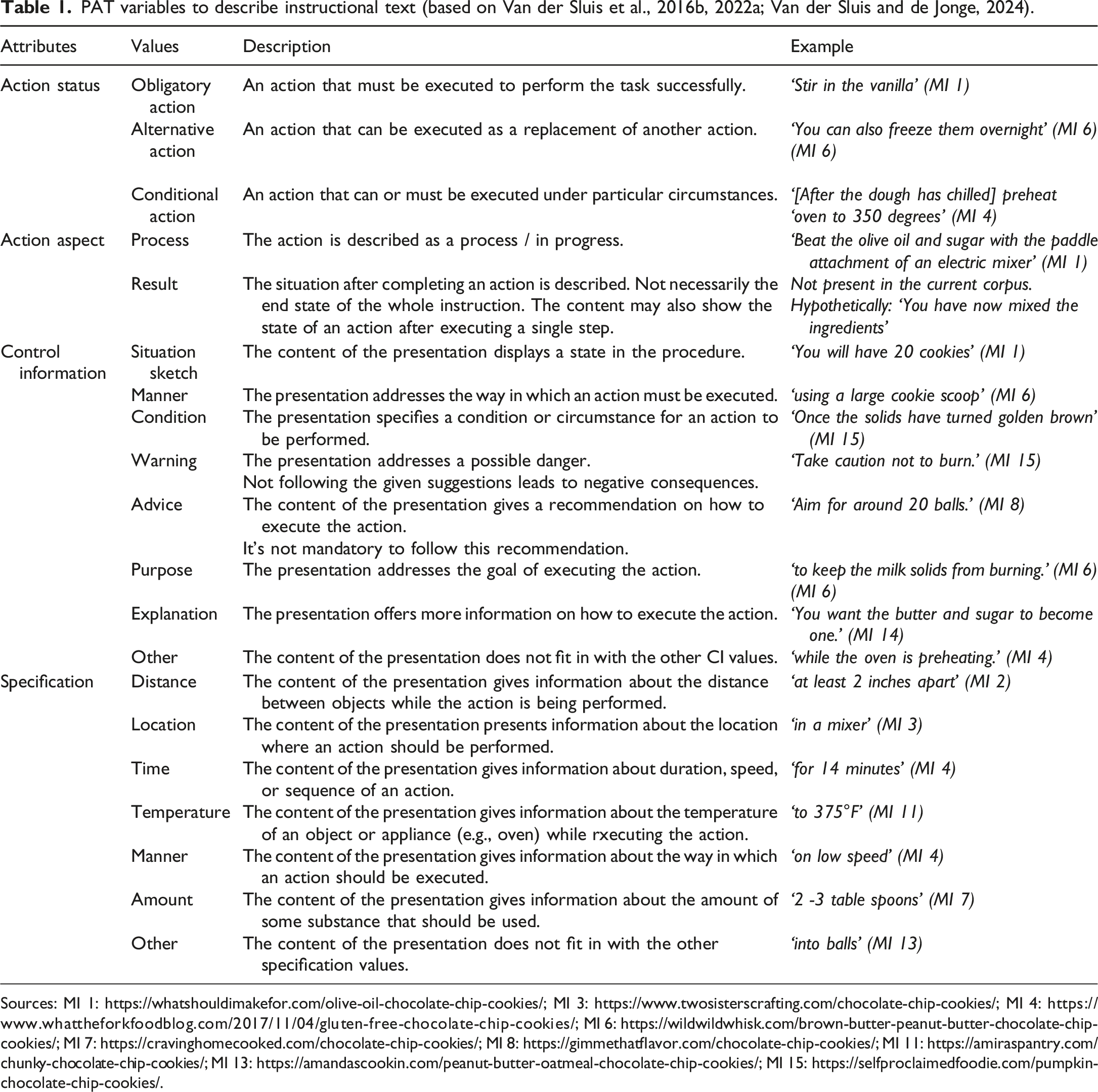

PAT variables to describe instructional text (based on Van der Sluis et al., 2016b, 2022a; Van der Sluis and de Jonge, 2024).

Sources: MI 1: https://whatshouldimakefor.com/olive-oil-chocolate-chip-cookies/; MI 3: https://www.twosisterscrafting.com/chocolate-chip-cookies/; MI 4: https://www.whattheforkfoodblog.com/2017/11/04/gluten-free-chocolate-chip-cookies/; MI 6: https://wildwildwhisk.com/brown-butter-peanut-butter-chocolate-chip-cookies/; MI 7: https://cravinghomecooked.com/chocolate-chip-cookies/; MI 8: https://gimmethatflavor.com/chocolate-chip-cookies/; MI 11: https://amiraspantry.com/chunky-chocolate-chip-cookies/; MI 13: https://amandascookin.com/peanut-butter-oatmeal-chocolate-chip-cookies/; MI 15: https://selfproclaimedfoodie.com/pumpkin-chocolate-chip-cookies/.

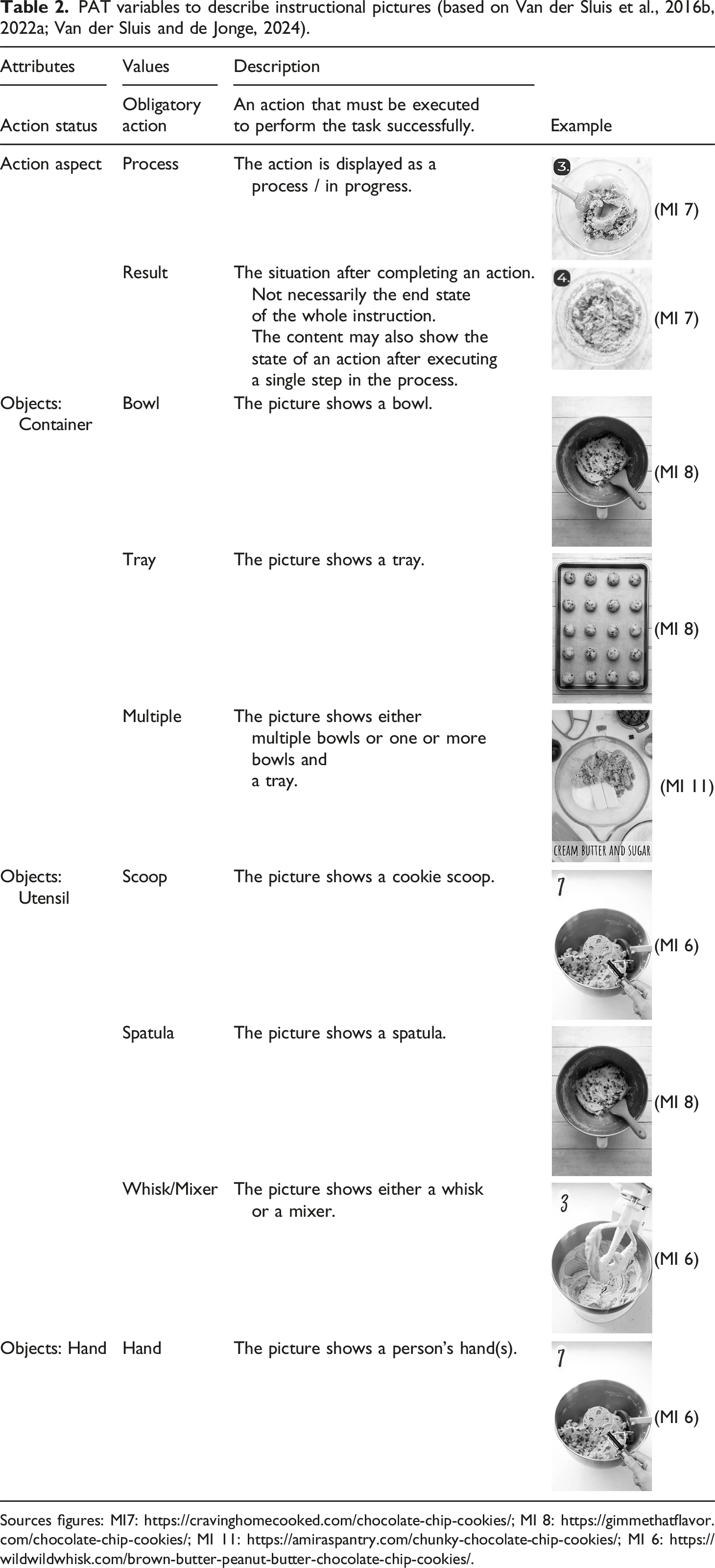

PAT variables to describe instructional pictures (based on Van der Sluis et al., 2016b, 2022a; Van der Sluis and de Jonge, 2024).

Sources of figures: MI 7: https://cravinghomecooked.com/chocolate-chip-cookies/; MI 8: https://gimmethatflavor.com/chocolate-chip-cookies/; MI 11: https://amiraspantry.com/chunky-chocolate-chip-cookies/; MI 6: https://wildwildwhisk.com/brown-butter-peanut-butter-chocolate-chip-cookies/.

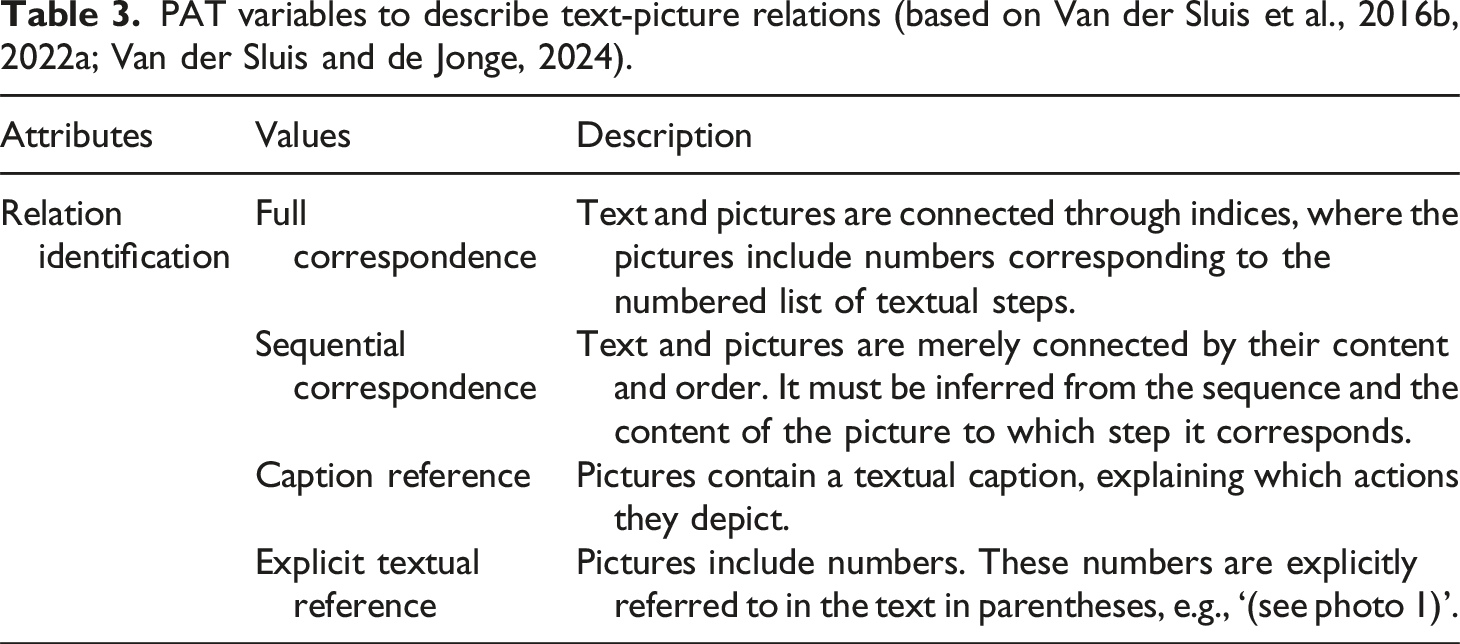

PAT variables to describe text-picture relations (based on Van der Sluis et al., 2016b, 2022a; Van der Sluis and de Jonge, 2024).

For the text, the annotation model distinguishes diverse types of Control Information and identifies Specifications within the Action clauses (Table 1). Note that the pictures in the corpus only include actions with Action Status

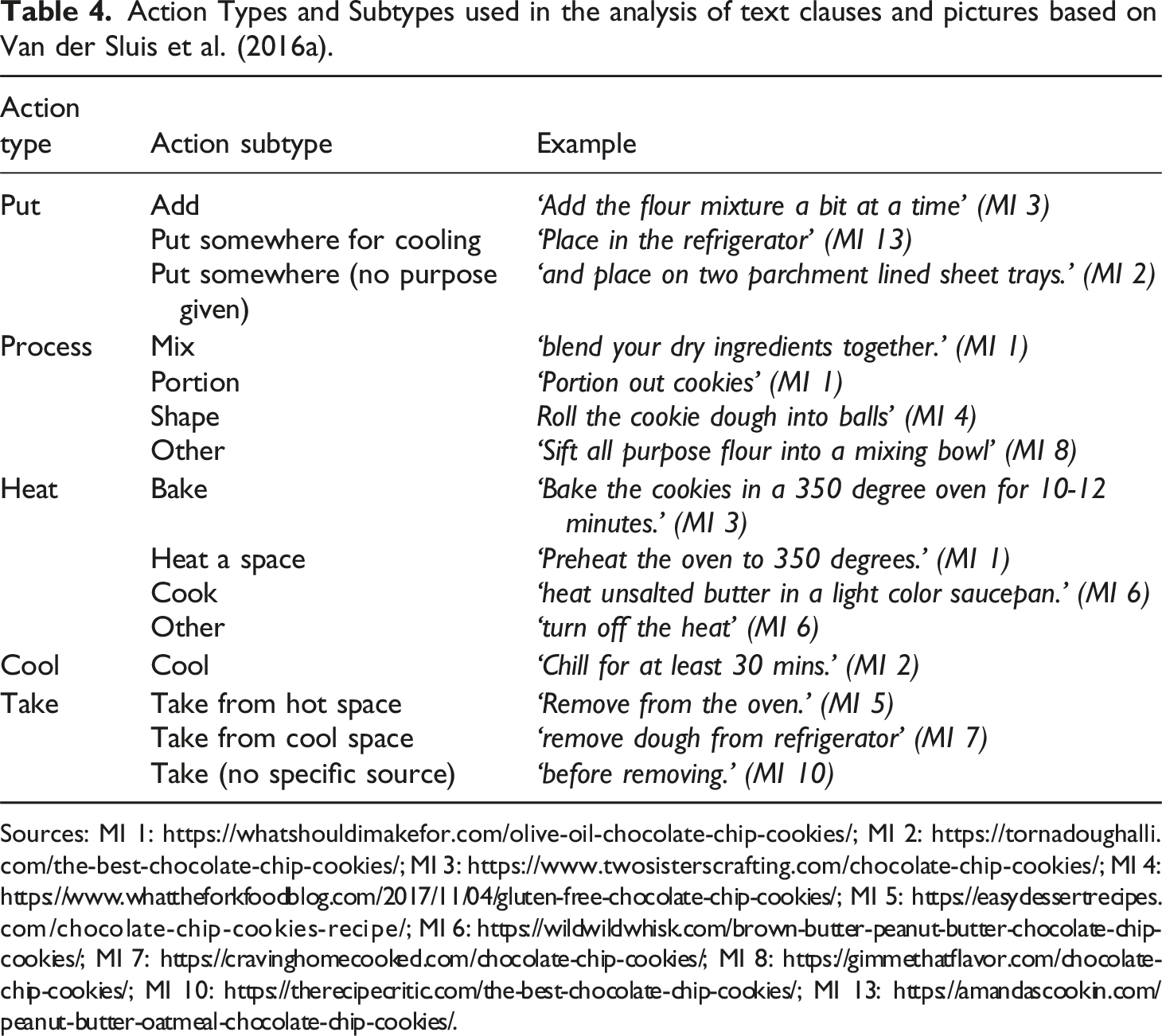

Action Types and Subtypes used in the analysis of text clauses and pictures based on Van der Sluis et al. (2016a).

Sources: MI 1: https://whatshouldimakefor.com/olive-oil-chocolate-chip-cookies/; MI 2: https://tornadoughalli.com/the-best-chocolate-chip-cookies/; MI 3: https://www.twosisterscrafting.com/chocolate-chip-cookies/; MI 4: https://www.whattheforkfoodblog.com/2017/11/04/gluten-free-chocolate-chip-cookies/; MI 5: https://easydessertrecipes.com/chocolate-chip-cookies-recipe/; MI 6: https://wildwildwhisk.com/brown-butter-peanut-butter-chocolate-chip-cookies/; MI 7: https://cravinghomecooked.com/chocolate-chip-cookies/; MI 8: https://gimmethatflavor.com/chocolate-chip-cookies/; MI 10: https://therecipecritic.com/the-best-chocolate-chip-cookies/; MI 13: https://amandascookin.com/peanut-butter-oatmeal-chocolate-chip-cookies/.

Corpus annotation

The analysis is based on grammatical units in which either actions or control information are described (cf. Van der Sluis et al., 2022a). The units can be full or reduced clauses, or stand-alone fragments that serve clause-like functions but lack the grammatical properties of clauses. Clauses can be subordinate, as in ‘If the kitchen is warm, keep the rest of the dough balls in the fridge while they’re waiting for their turn.’ (MI 6), which contains two clauses: [If the kitchen is warm,] and [keep the rest of the dough balls in the fridge while they’re waiting for their turn.]; or coordinated, as in ‘Take the reserved handful of chocolate chips and pop them on top of the cookies’ (MI 1), which also contains two clauses: [Take the reserved handful of chocolate chips] and [pop them on top of the cookies]. To annotate the corpus, the MI text was divided into clauses, and each clause was identified as either an Action clause or a CI clause. For each Action clause, the Action Status and Action Aspect were determined (Table 1), and the Action Type and Action Subtype (Table 4) were identified. Each CI clause was assigned one of the available CI values. Specifications within the Action clauses, for example adjectives, adverbs and prepositional phrases regarding the manner in which an action should be executed, were also annotated (Table 1).
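One way to hold these annotations is a single record per grammatical unit, with attributes filled depending on whether the unit is an Action or a CI clause. The sketch below is an assumption about data layout, not the authors' annotation format. Attribute values such as Action Status or Action Type are left empty because their actual labels are defined in Tables 1 and 4; only the segmentation of the MI 1 example into two Action clauses is taken from the text.

```python
from collections import Counter
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class ClauseKind(Enum):
    ACTION = "Action"
    CI = "Control Information"


@dataclass
class Clause:
    """One annotated unit: a full or reduced clause, or a clause-like fragment."""
    text: str
    kind: ClauseKind
    # Attributes annotated for Action clauses only (None until annotated).
    action_status: Optional[str] = None
    action_aspect: Optional[str] = None
    action_type: Optional[str] = None
    action_subtype: Optional[str] = None
    specifications: List[str] = field(default_factory=list)
    # Attribute annotated for CI clauses only.
    ci_value: Optional[str] = None


def count_by_kind(clauses: List[Clause]) -> Counter:
    """Frequency of Action vs CI clauses in one text."""
    return Counter(c.kind for c in clauses)


# The coordinated sentence from MI 1, segmented into its two Action clauses.
mi1 = [
    Clause("Take the reserved handful of chocolate chips", ClauseKind.ACTION),
    Clause("pop them on top of the cookies", ClauseKind.ACTION),
]
counts = count_by_kind(mi1)
print(counts[ClauseKind.ACTION], counts[ClauseKind.CI])  # 2 0
```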

Each picture of the IWPs was described in terms of the visualized objects and the Action (Sub)Types (Tables 2 and 4). Note that not all pictures explicitly show an action being executed; sometimes the

Picture 3 of the IWP of MI 13. Source: https://www.glutenfreepalate.com/paleo-chocolate-chip-cookies/.

It is important to note that some Action clauses and some pictures respectively describe and visualize multiple instances of the same action. For instance, in the clause ‘Add in the almond flour, flax seed meal, salt, and baking soda’ (MI 13), four different ingredients are added, and therefore the

The analysis of the annotations comprised two parts. First, the annotations in the IWP and the RC were compared to describe how the functional content is distributed and realized in these two different types of instructions. Next, the text-picture relations within the IWP were mapped out using the Relation Identification attribute (Table 3). To further investigate the realization of the text-picture relations within the IWP, the actions presented in the IWP text and the actions presented in the IWP pictures were compared. It was determined whether the text and pictures offer the same Action Type category, a different category, or whether the Action Type is only presented in the text, or only in the picture.
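This comparison assigns each action to one of four outcomes. A minimal sketch follows, assuming that the actions in a textual step and in its corresponding picture have already been aligned into pairs, with `None` where one modality lacks the action. The alignment step and the placeholder type labels are assumptions for illustration and do not correspond to the Action Type categories of Table 4.

```python
from collections import Counter
from typing import Optional


def action_type_relation(text_type: Optional[str], picture_type: Optional[str]) -> str:
    """Classify one aligned text/picture action by its Action Type category."""
    if text_type is None and picture_type is None:
        raise ValueError("at least one modality must present the action")
    if picture_type is None:
        return "text only"
    if text_type is None:
        return "picture only"
    return "same category" if text_type == picture_type else "different category"


# Hypothetical aligned pairs; the labels are placeholders, not the Table 4 categories.
pairs = [
    ("combine", "combine"),
    ("prepare", None),
    ("combine", "shape"),
    (None, "shape"),
    ("bake", "bake"),
]
tally = Counter(action_type_relation(t, p) for t, p in pairs)
print(tally["same category"], tally["different category"],
      tally["text only"], tally["picture only"])  # 2 1 1 1
```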

The annotation model was developed on the basis of the models described in Van der Sluis et al. (2016a, 2016b, 2022a) and Van der Sluis and de Jonge (2024). In multiple rounds of annotating a subset of the corpus, these models were adapted to fit the corpus. One annotator then used the resulting model to describe the corpus, and the annotation was refined in several thorough discussions with a second annotator until all inconsistencies were resolved. Subsequently, the annotators realized that the descriptions of the text clauses needed more detail, and it was decided to also annotate the Specifications included in the text clauses. In a further iterative process, two annotators annotated the Specifications until they were in agreement, resulting in the final annotation model and corpus description presented in this paper.

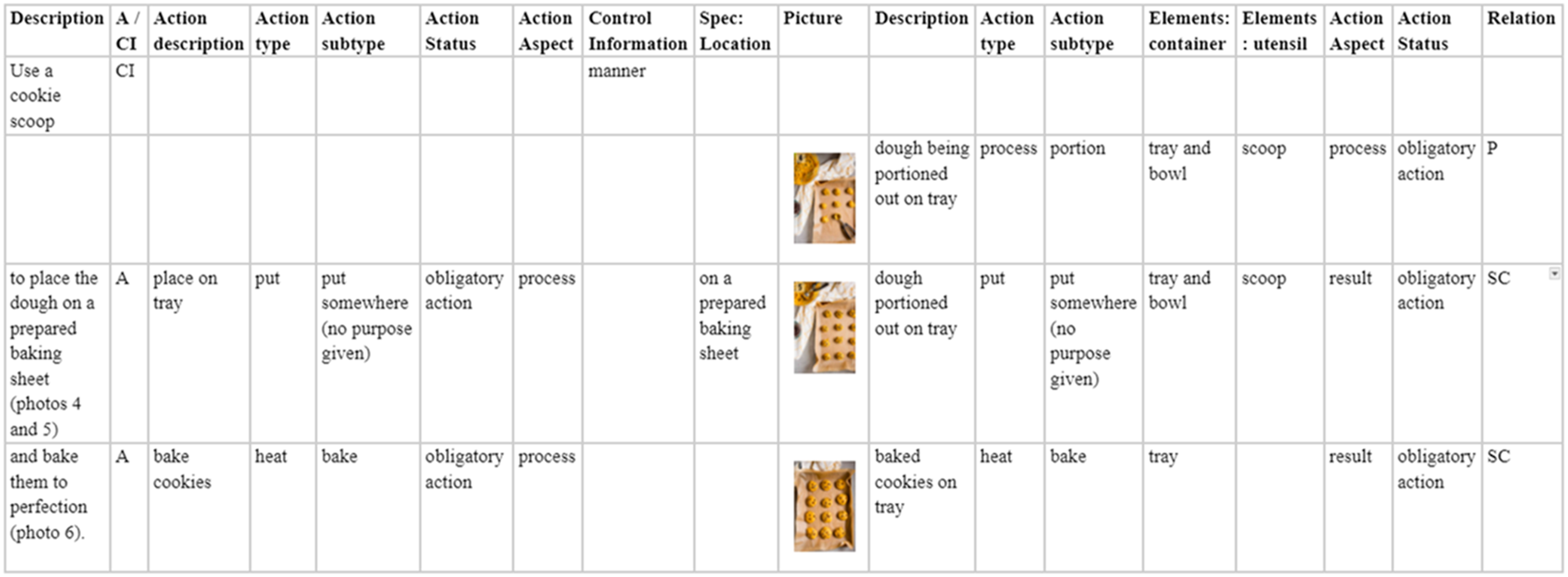

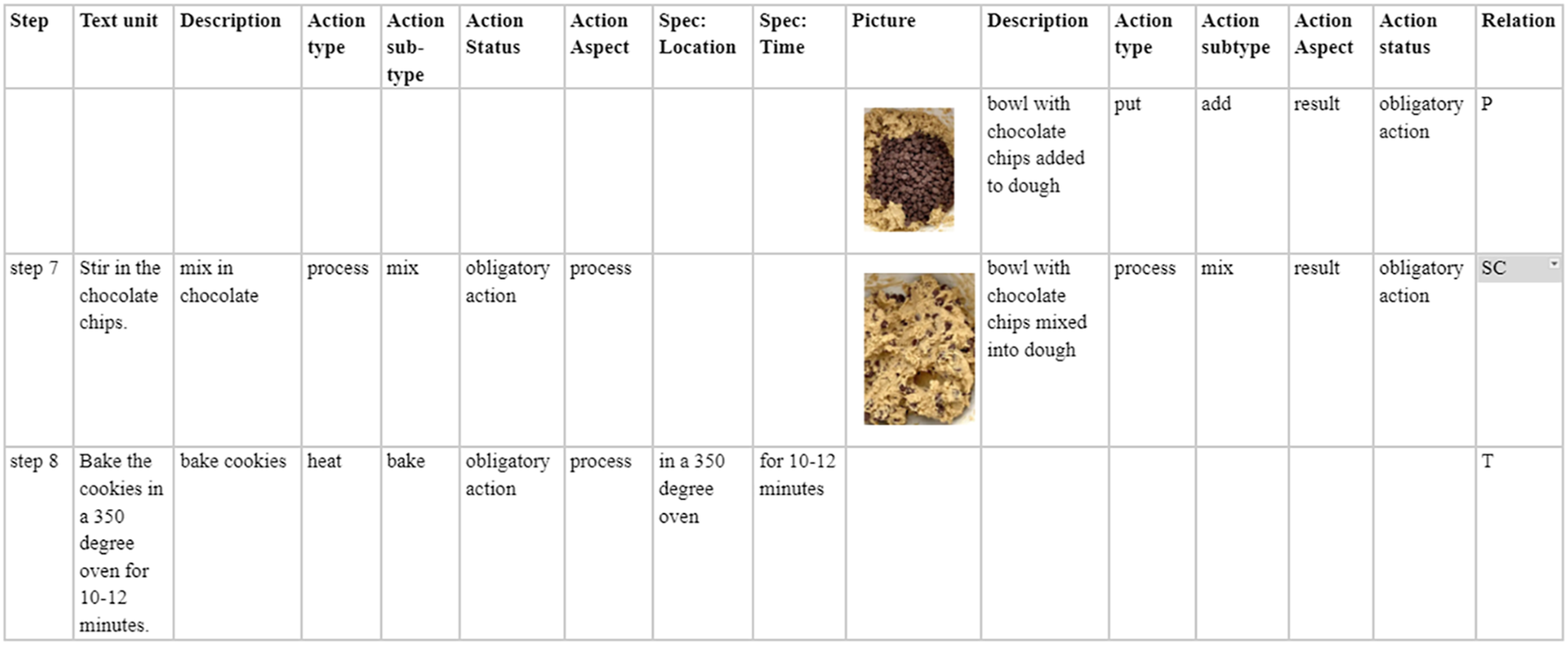

Worked examples

Figure 2 presents the annotation of Step 4 from MI 15 (see Figure 1). This example shows that the text clauses are annotated as either Action or Control Information clauses. The clause ‘Use a cookie scoop’ is identified as a Control Information clause, because it describes the

Figure 3 presents the annotation of the third and fourth pictures of MI 3. Figure 4 presents steps 7 and 8 of MI 3. In this example, the visualized elements are also described.

The annotation of the text and pictures in step 4 of MI 15, where the text clauses are annotated as either Action clauses or Control Information clauses (A/CI), which may include Specifications. The relation between the text and the pictures (i.e., Picture or same category) is based on the verbalized and visualized actions, which are described in terms of action types, action subtypes, action aspect and action status.

The annotation of the text and pictures in steps 7 and 8 and the third and fourth pictures of MI 3, where the relation between the text and the pictures (i.e., only picture, only text or same category) is based on the verbalized and visualized actions, which are described in terms of action types, action subtypes, action aspect and action status.

Results

IWP versus RC

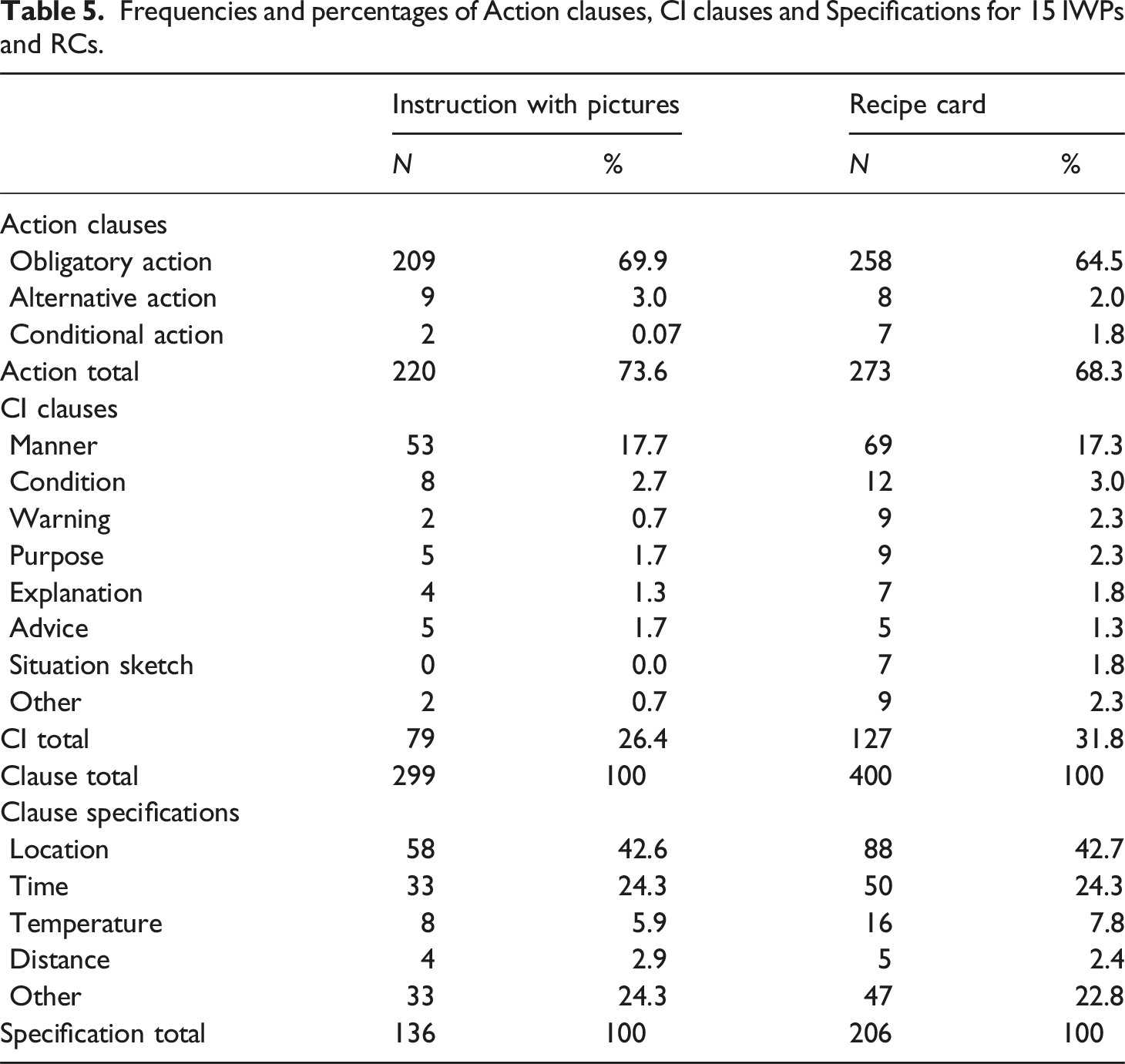

Frequencies and percentages of Action clauses, CI clauses and Specifications for 15 IWPs and RCs.

With respect to the CI clauses there is a clear difference between the two text types. As can be seen in Table 5, the RCs contain more CI clauses than the IWPs (127 vs 79). For both texts CI

The RC clauses also contain more Specifications than the IWP clauses (206 vs 136). The

In general terms, the percentages show that, as we expected, the distributions of the Action Status values, the Control Information values and the Specification values are similar in the IWPs and the RCs; the differences between IWP and RC for each of these categories are not significant.
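The percentages in Table 5 are relative frequencies computed within each text type, which makes the IWPs and RCs comparable despite their different lengths. The sketch below illustrates the computation with the clause totals reported for the corpus (220 Action and 79 CI clauses in the IWPs; 273 and 127 in the RCs). It deliberately does not reproduce the significance test, which is not specified in this section.

```python
from typing import Dict


def row_percentages(table: Dict[str, Dict[str, int]]) -> Dict[str, Dict[str, float]]:
    """Convert raw counts to percentages within each row (text type)."""
    result = {}
    for text_type, row in table.items():
        total = sum(row.values())
        result[text_type] = {label: 100 * n / total for label, n in row.items()}
    return result


clause_counts = {
    "IWP": {"Action": 220, "CI": 79},   # 299 clauses in total
    "RC": {"Action": 273, "CI": 127},   # 400 clauses in total
}
for text_type, row in row_percentages(clause_counts).items():
    print(text_type, {label: f"{pct:.2f}%" for label, pct in row.items()})
```

Normalizing per text type, rather than over the whole corpus, keeps the longer RCs from dominating the comparison.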

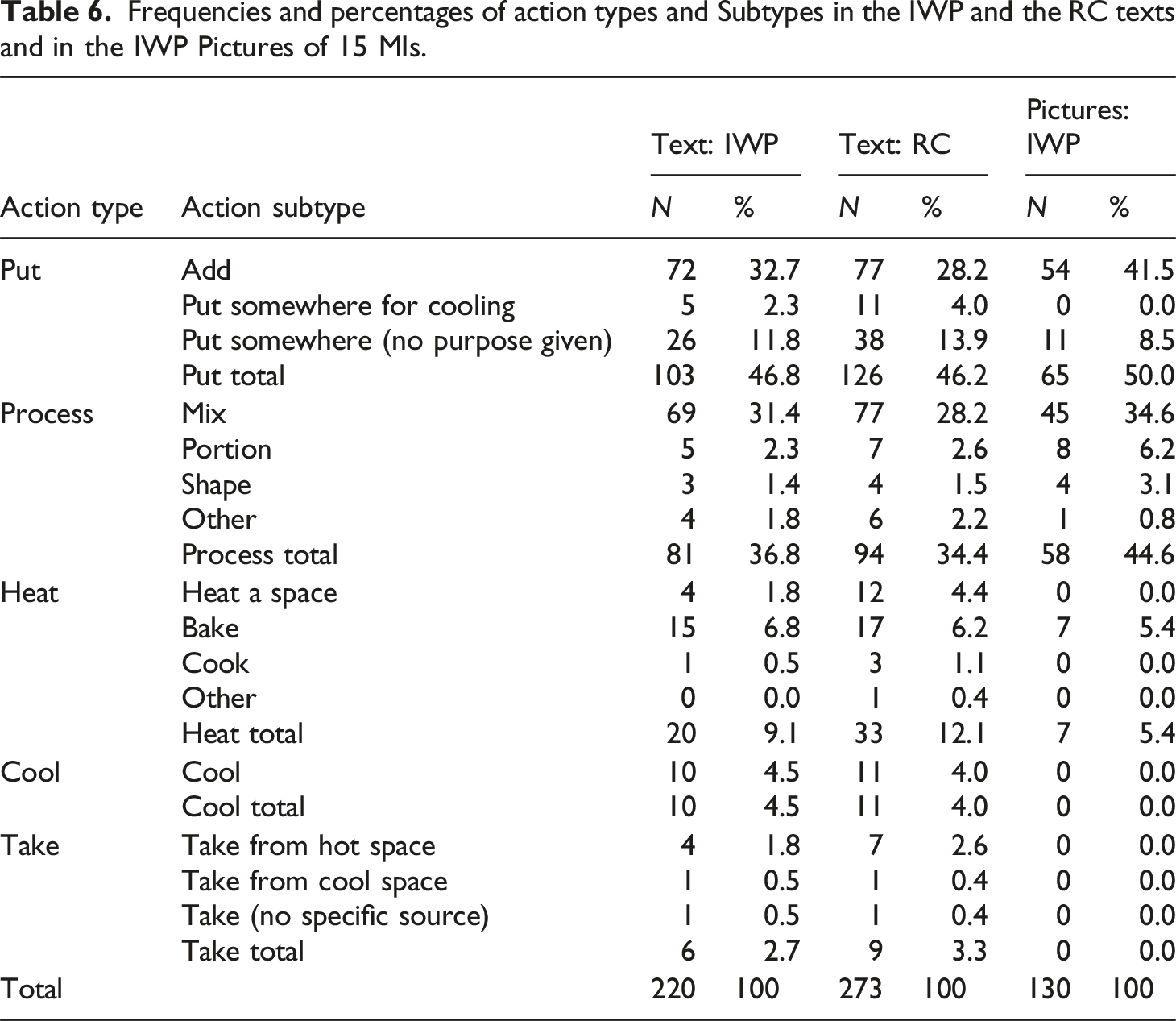

Frequencies and percentages of action types and Subtypes in the IWP and the RC texts and in the IWP Pictures of 15 MIs.

Text-picture relations in the IWPs

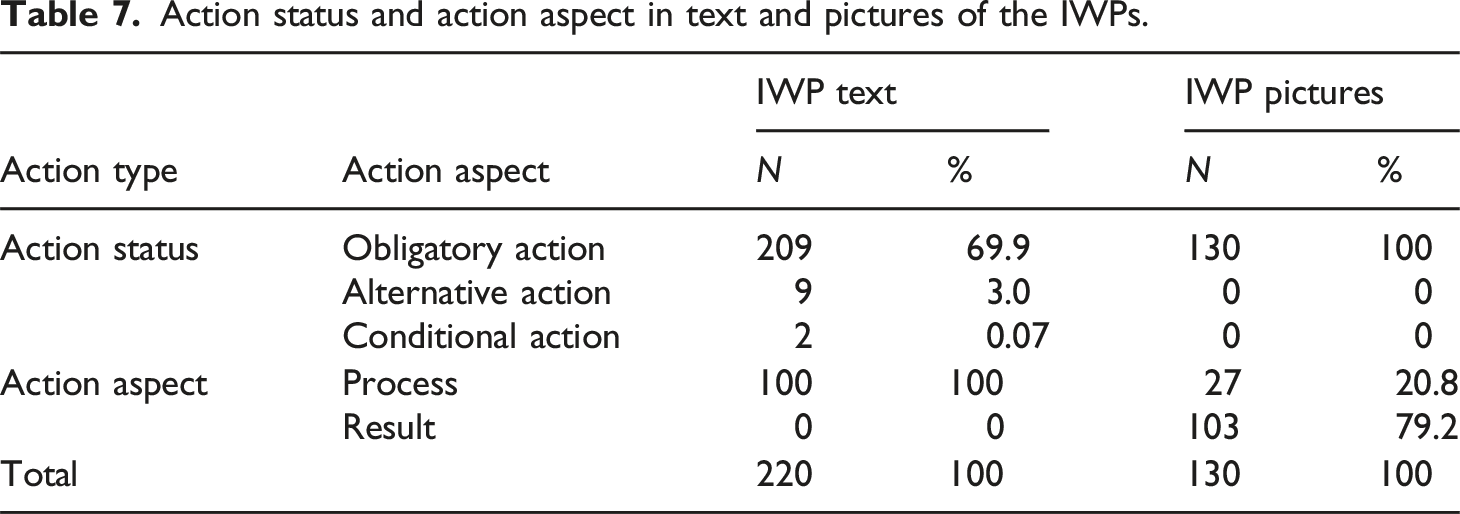

Action status and action aspect in text and pictures of the IWPs.

The Relation Identification category describes the forms in which the text and the pictures in the 15 corpus IWPs are linked.

Full correspondence

In MIs 1, 4, 6, 9 and 13, the steps in the text fully correspond with the steps in the pictures. A number added to each picture makes clear which textual step it refers to.

Sequential correspondence

In MIs 2, 7 and 14, a number is also added to the pictures. This number, however, does not necessarily correspond to the textual step that the picture visualizes, usually because there are fewer pictures than textual steps. In MIs 3, 5, 8 and 10, the pictures contain no indices at all. In these cases, the correspondence between the text and the pictures can only be inferred from the order and the content of the pictures and the text.

Caption reference

In MIs 11 and 12, the pictures contain a caption that explains which action is visualized in it. The caption allows the reader to link the picture to a part of the text. MI 12 also contains indices, but these indices do not correspond to the indices of the textual steps.

Explicit textual reference

In MI 15, the pictures do contain numerical indices, which are referred to in text between parentheses. For example, step 2 in text says ‘To that, you’ll add the dry ingredients (photo 1)’. Even though the number in the picture does not relate to the number of the text step, it is still clear which step and which picture are related.
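The four forms can be read as a decision procedure over a few observable features of an IWP. The sketch below is one possible ordering of those checks, assumed here so that the corpus cases described above come out as reported: MI 12 has captions as well as non-matching indices and counts as caption reference, and MI 15 has indices that are cited in the text. The function and feature names are hypothetical.

```python
def relation_identification(has_indices: bool, indices_match_steps: bool,
                            has_captions: bool, text_cites_pictures: bool) -> str:
    """Assign one of the four Relation Identification forms observed in the corpus."""
    if text_cites_pictures:          # e.g. '(photo 1)' in the text, as in MI 15
        return "Explicit textual reference"
    if has_indices and indices_match_steps:
        return "Full correspondence"
    if has_captions:                 # captions take precedence over non-matching indices (MI 12)
        return "Caption reference"
    # Non-matching indices, or no indices at all: order and content must be inferred.
    return "Sequential correspondence"


print(relation_identification(True, True, False, False))    # Full correspondence
print(relation_identification(False, False, False, False))  # Sequential correspondence
print(relation_identification(True, False, True, False))    # Caption reference
```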

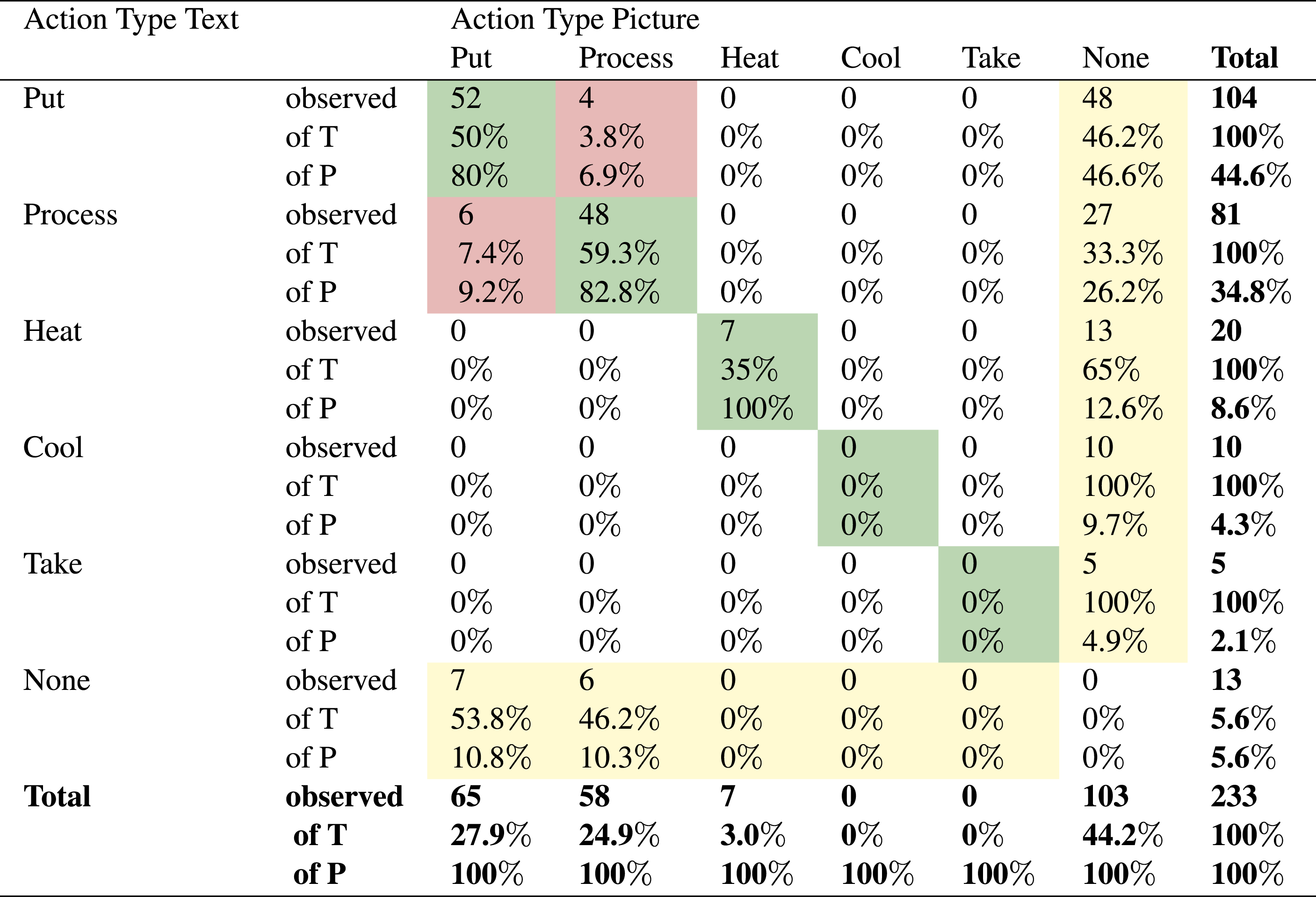

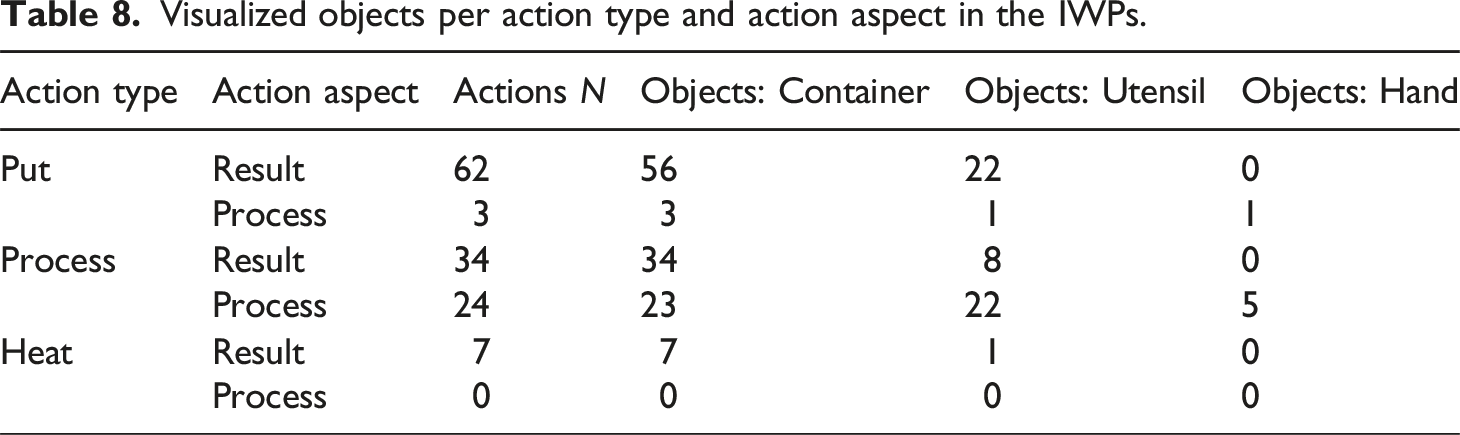

The text-picture relations within the IWP were also analyzed on the basis of Action (Sub)Types. Table 6 presents an overview of all the Action Types and Subtypes found in the pictures of the IWPs. There are several differences between the distribution of Action (Sub)Types presented in text and in pictures. In general, the pictures contain fewer actions than the texts. There are also several Action Types and Action Subtypes that occur in the text but are not visualized in the pictures, such as the

Visualized objects per action type and action aspect in the IWPs.

Cross table overview of text-picture action type relations in the IWPs.

In general, the

Preliminary discussion

In the annotation models used to analyse the corpus we have included functional categories (i.e., Action Status, Action Aspect, Control Information, Specification) as well as domain dependent categories to describe the realized Action Types (

Functional categories are useful variables for predicting meaning interpretation in different recipes, or eventually in multimodal instructions in other domains. In the presented corpus study, the IWPs and RCs of 15 MIs were analyzed by applying an annotation model that allows for the annotation of Actions and Control Information in the text and the pictures of the MIs. The corpus analysis shows that the IWPs and RCs in the baking blogs vary in terms of the number of text clauses (IWP Mean = 19.9, Std = 6.24; RC Mean = 26.7, Std = 7.83) and the number of pictures: the IWP includes pictures that visualize actions (Mean = 6.49, Std = 2.54), whereas the RC does not. A comparison of the IWP text and the RC text showed that, in general, the IWPs contain fewer clauses than the RCs (299 vs 400). Compared to the RCs, the IWPs contain fewer Action clauses (220 vs 273) and fewer Control Information clauses (79 vs 127). Within the text clauses in the IWPs and RCs also the number of Specifications, which mostly specified

Apart from the functional analysis, we conducted a domain dependent analysis to explore the way in which the text-picture relations in the IWPs are realized, and to support the analysis of the way in which the verbal and visual presentations are read and used in a situated context. The verbalized (

In the reader and user studies presented in the following sections of this paper, the effectiveness of the identified functionality and realization of the online baking instructions is explored in more detail to answer questions such as: ‘How are the baking blogs read?’, ‘How are the instructions judged?’, ‘Do readers observe differences between a blog’s IWP and RC?’, and ‘How do readers interpret and value such differences?’. The description of the 15 blogs resulting from this corpus study was used to make informed choices in determining the content for the exploratory reader and user studies. Consequently, the corpus analysis also supports the interpretation of the collected reader and user data.

Eye-tracking study: Reading and judging recipes

The eye-tracking study presented in this section was designed to answer the question ‘How do people read and judge online baking blog recipes containing a multimodal instruction?’ Twelve participants were asked to read through and evaluate one of three baking blogs and one of three Instructions with Pictures. The research question introduces two concepts that need to be measured,

Participants

Eye-tracking and questionnaire data from 12 participants was recorded (eight male and four female). All participants were students living in the Netherlands (

Materials and setup

Three MIs described in the corpus, MI 1, MI 3 and MI 14, were used for this study. The IWPs and RCs of MI 1, MI 3 and MI 14 are presented in Figures 5 and 6, respectively. To represent the variation in the corpus, the three MIs were chosen on the basis of the number of text clauses (IWP Mean = 19.9, Std = 6.24; RC Mean = 26.7, Std = 7.83) and the number of pictures in the IWP (Mean = 6.49, Std = 2.54). Table 10 presents the amount of textual and visual information in the IWPs and RCs per blog. The number of clauses in the MIs is similar, with MI 14 including the most clauses (

(a) IWP of MI 1. (b) IWP of MI 3. (c) IWP of MI 14. Source: MI 1: https://whatshouldimakefor.com/olive-oil-chocolate-chip-cookies; MI 3: https://www.twosisterscrafting.com/chocolate-chip-cookies/; MI 14: https://www.foodologygeek.com/fleur-de-sel-chocolate-chip-cookies/.

(a) RC of MI 1. (b) RC of MI 3. (c) RC of MI 14. Source: MI 1: https://whatshouldimakefor.com/olive-oil-chocolate-chip-cookies; MI 3: https://www.twosisterscrafting.com/chocolate-chip-cookies/; MI 14: https://www.foodologygeek.com/fleur-de-sel-chocolate-chip-cookies/.

Characteristics of MIs in terms of the number of Action and CI clauses in the IWP and RC, and the number of pictures in the IWP.

In each of the three blogs, two Areas of Interest (AOIs) were defined that covered the Instruction with Pictures and the Recipe Card. The IWPs of the three blogs are given in Figure 5, while Figure 6 presents the RCs.
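Dwell time on an AOI is commonly computed as the summed duration of all fixations that land inside it. The sketch below assumes rectangular AOIs in page coordinates (so scrolling has already been compensated for) and fixations given as (x, y, duration in ms). The AOI bounds and fixations are invented, and the code does not claim to reproduce Data Viewer's own computation.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class AOI:
    """Rectangular Area of Interest in page pixels (right/bottom exclusive)."""
    name: str
    left: float
    top: float
    right: float
    bottom: float

    def contains(self, x: float, y: float) -> bool:
        return self.left <= x < self.right and self.top <= y < self.bottom


def dwell_times(fixations: Iterable[Tuple[float, float, int]],
                aois: List[AOI]) -> Dict[str, int]:
    """Sum fixation durations (ms) per AOI; fixations outside all AOIs are ignored."""
    totals = {a.name: 0 for a in aois}
    for x, y, duration in fixations:
        for a in aois:
            if a.contains(x, y):
                totals[a.name] += duration
    return totals


# Invented page layout: the IWP and the RC occupy different vertical ranges.
aois = [AOI("IWP", 0, 2000, 1200, 5000), AOI("RC", 0, 6000, 1200, 8000)]
fixations = [(300, 2500, 250), (600, 4000, 180), (500, 6500, 300), (400, 500, 200)]
print(dwell_times(fixations, aois))  # {'IWP': 430, 'RC': 300}
```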

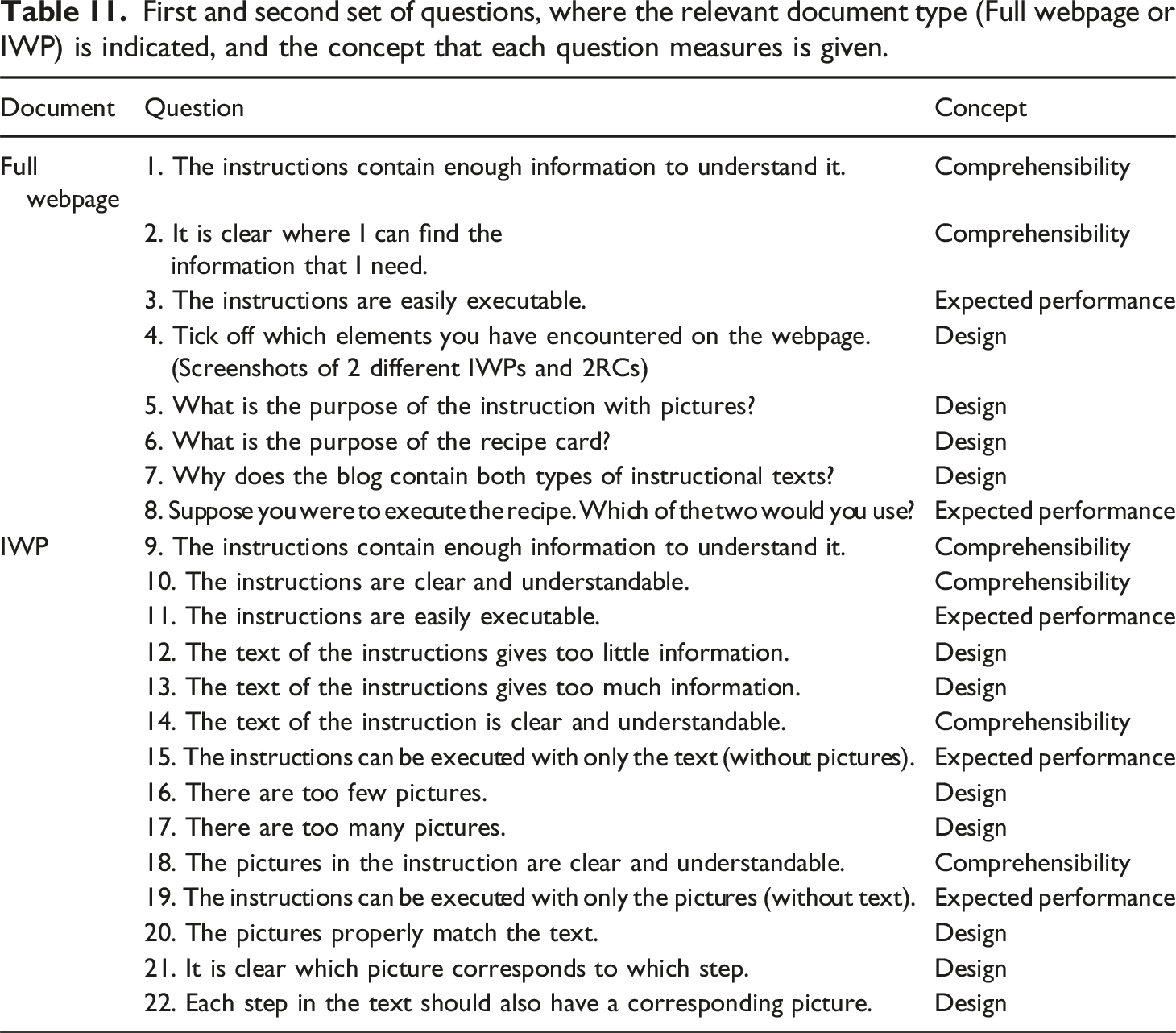

First and second set of questions, where the relevant document type (Full webpage or IWP) is indicated, and the concept that each question measures is given.

To obtain further insights into the participants’ observations and motivations, a short interview was held after the participant had filled out each part of the questionnaire. In these interviews, the instructor went over the participants’ responses to the questionnaire; no new questions were introduced. The questionnaire also contained a set of demographic questions, in which participants were asked for their name, gender, age, highest level of education, first language and ability to read/comprehend English texts. Arguably, demographic characteristics affect whether participants are familiar with cooking in general, with baking cookies more specifically, and with reading and using recipes in English. The questionnaire also recorded whether they had experience using baking recipes, specifically for baking cookies, and, if so, how often they had done this and how long ago they had last baked cookies. These demographic questions were included to control for potential confounding factors and to provide a more nuanced understanding of the research findings.

The materials were presented to participants on a laptop connected to an Eyelink Portable Duo eye-tracker. This eye-tracker has a binocular sampling rate of up to 2000 Hz, which allows eye movements to be recorded with high temporal precision. The questionnaire was presented on a separate laptop. Figure 7 presents a simulated picture of the study setup. In terms of software, Weblink was used to present the materials and record eye movements, while Data Viewer was used to process the data. Weblink is screen recording software by SR Research in which participants can view and interact with websites, documents and images while their eye movements are recorded. The software compensates for scrolling, which means that gaze data are recorded accurately across the whole webpage.

Simulation of the eye-tracking study setting, including questionnaire laptop (left) and eye-tracker laptop (right). The picture was generated with https://floorplanner.com/.
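Compensating for scrolling amounts to shifting each gaze sample from screen to page coordinates by the scroll offset in effect at that moment. Weblink does this internally; the sketch below only illustrates the idea under simplifying assumptions (vertical scrolling only, and a time-stamped log of scroll positions), and its function name and data format are hypothetical.

```python
import bisect
from typing import List, Tuple


def to_page_coordinates(samples: List[Tuple[int, float, float]],
                        scroll_log: List[Tuple[int, float]]) -> List[Tuple[int, float, float]]:
    """Map (t_ms, x, y_screen) samples to page coordinates.

    scroll_log holds (t_ms, scroll_y) pairs sorted by time; the most recent
    scroll position at or before each sample is added to its y coordinate.
    """
    times = [t for t, _ in scroll_log]
    mapped = []
    for t, x, y in samples:
        i = bisect.bisect_right(times, t) - 1
        offset = scroll_log[i][1] if i >= 0 else 0.0
        mapped.append((t, x, y + offset))
    return mapped


scroll_log = [(0, 0.0), (1000, 800.0), (2500, 1600.0)]
samples = [(500, 100.0, 300.0), (1200, 100.0, 300.0), (3000, 100.0, 300.0)]
print([y for _, _, y in to_page_coordinates(samples, scroll_log)])  # [300.0, 1100.0, 1900.0]
```

The same screen position thus maps to three different places on the page, which is why uncompensated screen coordinates could not be assigned to AOIs on a long webpage.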

Procedure

The participants took part in the study individually. Each participant was welcomed into the lab. After receiving a short oral introduction to the study, the participants signed a consent form and filled out the demographic questions on the questionnaire laptop. Next, the participants switched to the other laptop, where the instructor calibrated the eye-tracker to the participant’s pupils. From that point onward, all tasks and instructions were presented visually on the eye-tracker laptop. The instructor stepped back from the study setup but stayed in the lab at another desk. The participants read the task description, which asked them to look at a baking blog webpage on the eye-tracking laptop, imagining that they were planning to bake cookies from a blog recipe and had to decide whether the given recipe was to their liking. Note that with this task we intended to simulate a real-life context; the participants were therefore not explicitly asked to read the whole webpage. The instructions also stated that they would be asked to answer a set of questions related to the shape, content and function of different elements within the blog, to encourage them to pay attention to those aspects while reading. After the participants had read through the webpage, the laptop instructed them to switch to the questionnaire laptop and fill out questions 1–8. While the participants were reading the text and filling out the questionnaire, there was no conversation between the participants and the instructor. After the first questionnaire, the instructor briefly interviewed the participants about their answers and about the webpage in general. Next, the instructor invited the participants to switch back to the eye-tracking laptop and stepped back again.
The laptop then instructed the participants to look at an Instruction with Pictures (no specific prompt was given) and subsequently answer questions 9–22 on the questionnaire laptop. Again, the instructor was not involved during these steps. After the participants had filled out the questionnaire, the instructor conducted another short interview to discuss their answers and their views on the IWP. Finally, the participants were debriefed about the study and thanked for their participation.

Analysis

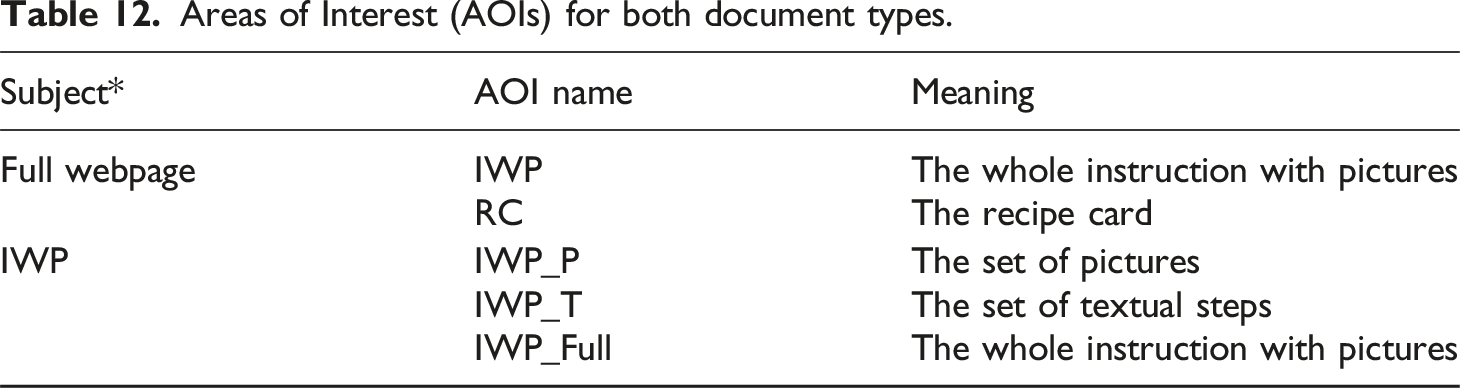

Areas of Interest (AOIs) for both document types.

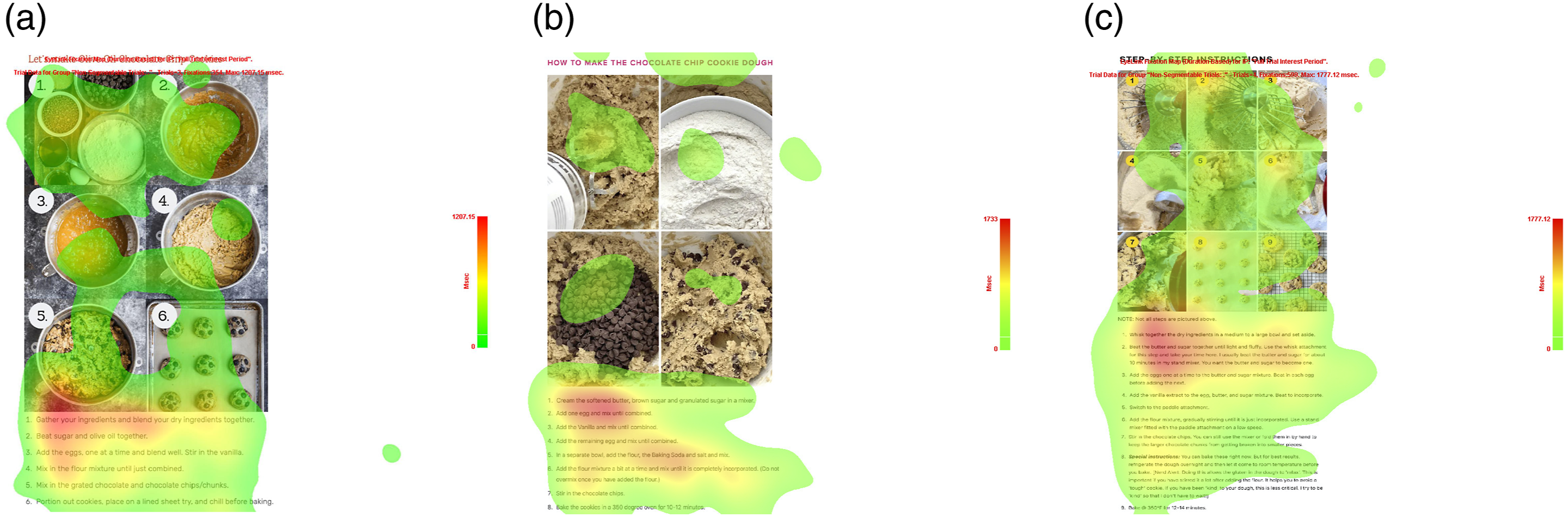

For the individual IWPs, the reading strategy was also analyzed using heat maps. Heat maps visualize the amount of attention paid within an AOI. In this case it was recorded how participants distribute their attention between the text and pictures within three different IWPs (i.e., AOI IWP_Full). No heat maps were generated for the full webpages, as the size of the documents made this infeasible and most likely uninformative given our research question.
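A heat map of this kind aggregates fixation durations over positions within the AOI. The sketch below bins fixations into a coarse grid and normalizes by the hottest cell. The cell size and data are hypothetical, and the spatial smoothing that heat-map tools usually apply before colouring is omitted for brevity, so this is an illustration of the principle rather than of the Eyelink implementation.

```python
from typing import Iterable, List, Tuple


def heat_map(fixations: Iterable[Tuple[float, float, int]],
             width: int, height: int, cell: int = 50) -> List[List[int]]:
    """Sum fixation durations (ms) into a grid of cell x cell pixel bins."""
    cols = -(-width // cell)   # ceiling division
    rows = -(-height // cell)
    grid = [[0] * cols for _ in range(rows)]
    for x, y, duration in fixations:
        if 0 <= x < width and 0 <= y < height:
            grid[int(y) // cell][int(x) // cell] += duration
    return grid


def normalize(grid: List[List[int]]) -> List[List[float]]:
    """Scale to 0..1 so that the most-dwelled cell maps to 1 (red in a colour scale)."""
    peak = max(max(row) for row in grid) or 1
    return [[v / peak for v in row] for row in grid]


grid = heat_map([(10, 10, 200), (20, 30, 100), (80, 80, 150)], width=100, height=100)
print(grid)             # [[300, 0], [0, 150]]
print(normalize(grid))  # [[1.0, 0.0], [0.0, 0.5]]
```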

The numerical data collected with the questionnaires was processed by calculating means and standard deviations. The numerical data relating to
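For each Likert item, the descriptive statistics reduce to a mean and a standard deviation over the participants' scores. A minimal sketch with Python's `statistics` module follows. The scores are invented, and the use of the sample standard deviation (n - 1 denominator) is an assumption, since this section does not state which variant was used.

```python
from statistics import mean, stdev
from typing import Dict, List, Tuple


def describe(scores_per_question: Dict[str, List[int]]) -> Dict[str, Tuple[float, float]]:
    """Return (mean, sample SD) for each question's 1-5 Likert scores."""
    summary = {}
    for question, scores in scores_per_question.items():
        if any(not 1 <= s <= 5 for s in scores):
            raise ValueError(f"{question}: score outside the 1-5 scale")
        summary[question] = (mean(scores), stdev(scores))
    return summary


# Invented responses from six hypothetical participants.
responses = {"Q1": [4, 5, 4, 3, 4, 5], "Q2": [2, 3, 3, 2, 4, 3]}
for q, (m, sd) in describe(responses).items():
    print(f"{q}: M = {m:.2f}, SD = {sd:.2f}")
```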

Results

The presentation of the results of the eye-tracking study below is split into the reading data of the eye-tracker, and the judgment results collected with the questionnaire.

Eye-tracker results

Webpage

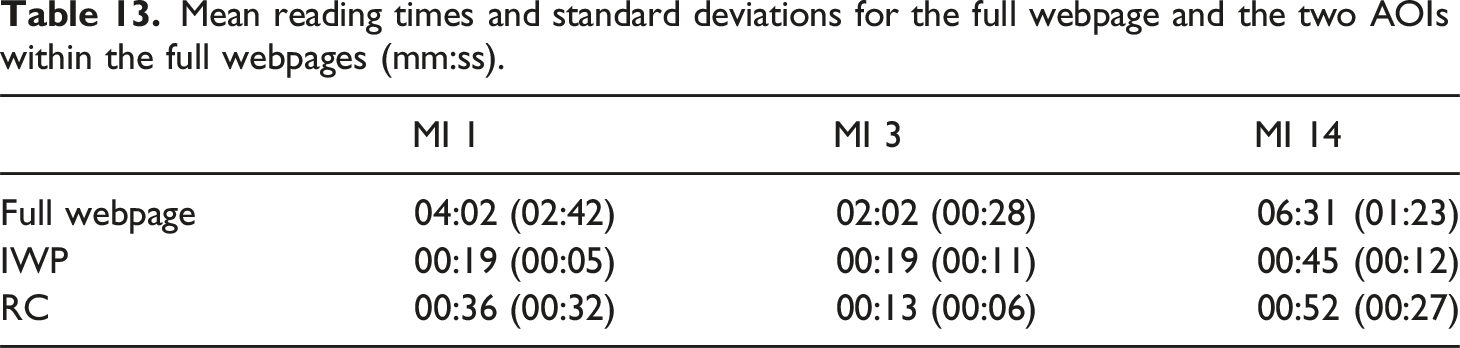

Mean reading times and standard deviations for the full webpage and the two AOIs within the full webpages (mm:ss).

Instruction with pictures

The reading strategy for the individual IWPs is very different from the reading strategy for the full webpage. When scrolling through the webpage, participants generally read linearly, and they also go through the IWP within the webpage linearly. However, when looking at the IWP as a separate component, participants switch back and forth between different elements. All participants begin and end their session looking at the pictures; in between, they switch back and forth between the text and the pictures. Some parts of the sequences show how participants attempt to link the textual steps with the pictures. For example, for Participant 1, who read the IWP of MI 14, the following gaze sequence was recorded for the first four steps of the IWP: T1
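Gaze sequences of this kind can be summarized by collapsing repeated fixations on the same element into visits and counting switches between text (T) and pictures (P). In the sketch below, the labelling scheme (`T3` for textual step 3, `P3` for picture 3), the assumption that picture n depicts textual step n, and the example sequence are all hypothetical; the example is not Participant 1's recorded sequence.

```python
from itertools import groupby
from typing import List


def visits(sequence: List[str]) -> List[str]:
    """Collapse consecutive fixations on the same element into one visit."""
    return [label for label, _ in groupby(sequence)]


def text_picture_switches(sequence: List[str]) -> int:
    """Number of transitions between a text element and a picture element."""
    v = visits(sequence)
    return sum(1 for a, b in zip(v, v[1:]) if a[0] != b[0])


def matched_links(sequence: List[str]) -> int:
    """Switches that connect a textual step with the picture of the same number."""
    v = visits(sequence)
    return sum(1 for a, b in zip(v, v[1:]) if a[0] != b[0] and a[1:] == b[1:])


gaze = ["P1", "T1", "T1", "P1", "T2", "P2", "P2", "T3"]
print(visits(gaze))                 # ['P1', 'T1', 'P1', 'T2', 'P2', 'T3']
print(text_picture_switches(gaze))  # 5
print(matched_links(gaze))          # 3
```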

In order to visualize the gaze distribution over the different elements within the IWPs, a heat map was created for each of the MIs. The heat maps are presented in Figure 8. The coloured overlay displays where the participants were looking, with red marking the areas that were dwelled on the most. The heat maps show that no pictures or textual steps were fully overlooked. They also highlight that the dwell time on the text is longer than the dwell time on the pictures. The first few textual steps of each IWP receive the most attention from the participants, and attention gradually declines towards the end of the text.

Heat maps for the IWPs of MI 1, MI 3 and MI 14 as generated with the Eyelink software (https://www.sr-research.com/eyelink-portable-duo/). (a) IWP of MI 1. (b) IWP of MI 3. (c) IWP of MI 14.

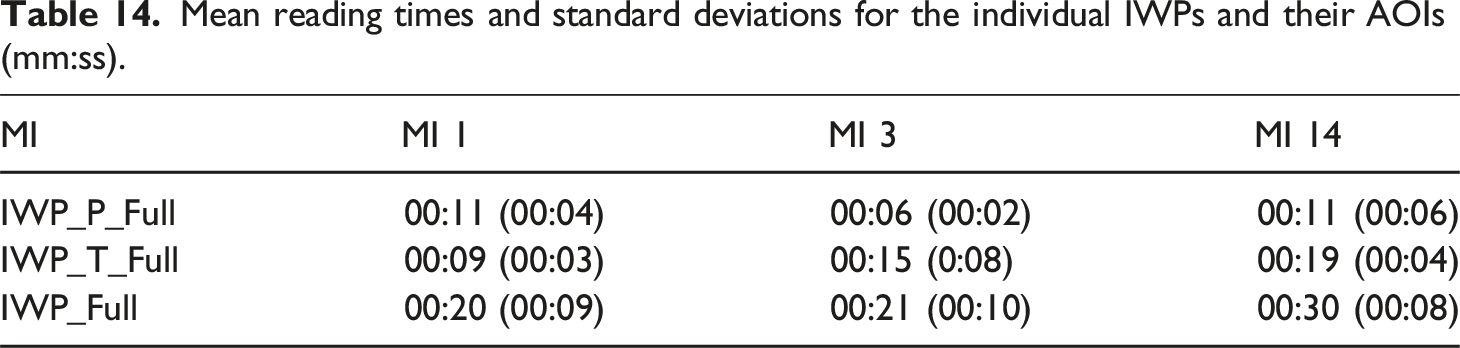

Mean reading times and standard deviations for the individual IWPs and their AOIs (mm:ss).

For the text, dwell time corresponds to the amount of text: MI 1 has the lowest dwell time (9 seconds), followed by MI 3 (15 seconds) and MI 14 (19 seconds), in line with the amount of text presented in each IWP.

Questionnaire results

Webpage - Comprehensibility

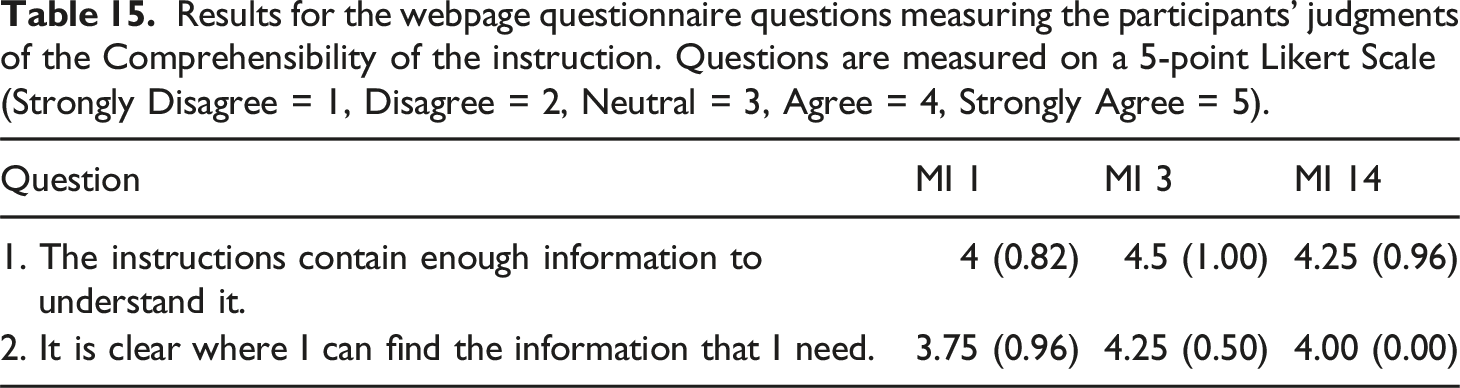

Results for the webpage questionnaire questions measuring the participants’ judgments of the Comprehensibility of the instruction. Questions are measured on a 5-point Likert Scale (Strongly Disagree = 1, Disagree = 2, Neutral = 3, Agree = 4, Strongly Agree = 5).

Webpage - Design

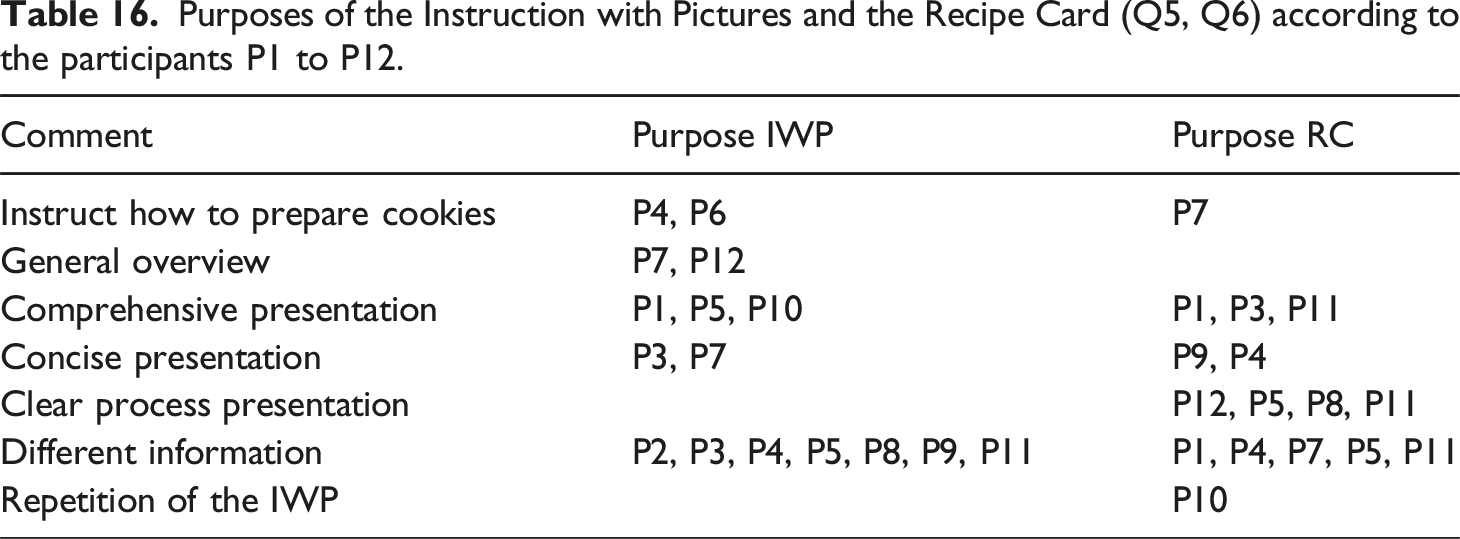

Purposes of the Instruction with Pictures and the Recipe Card (Q5, Q6) according to the participants P1 to P12.

Webpage - Expected performance

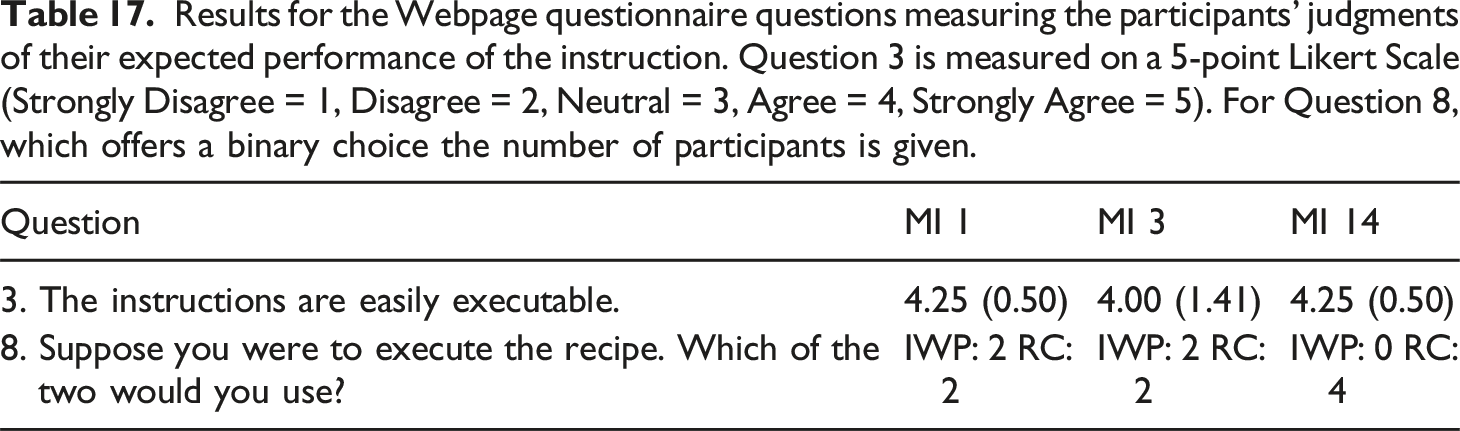

Results for the Webpage questionnaire questions measuring the participants’ judgments of their expected performance of the instruction. Question 3 is measured on a 5-point Likert Scale (Strongly Disagree = 1, Disagree = 2, Neutral = 3, Agree = 4, Strongly Agree = 5). For Question 8, which offers a binary choice, the number of participants is given.

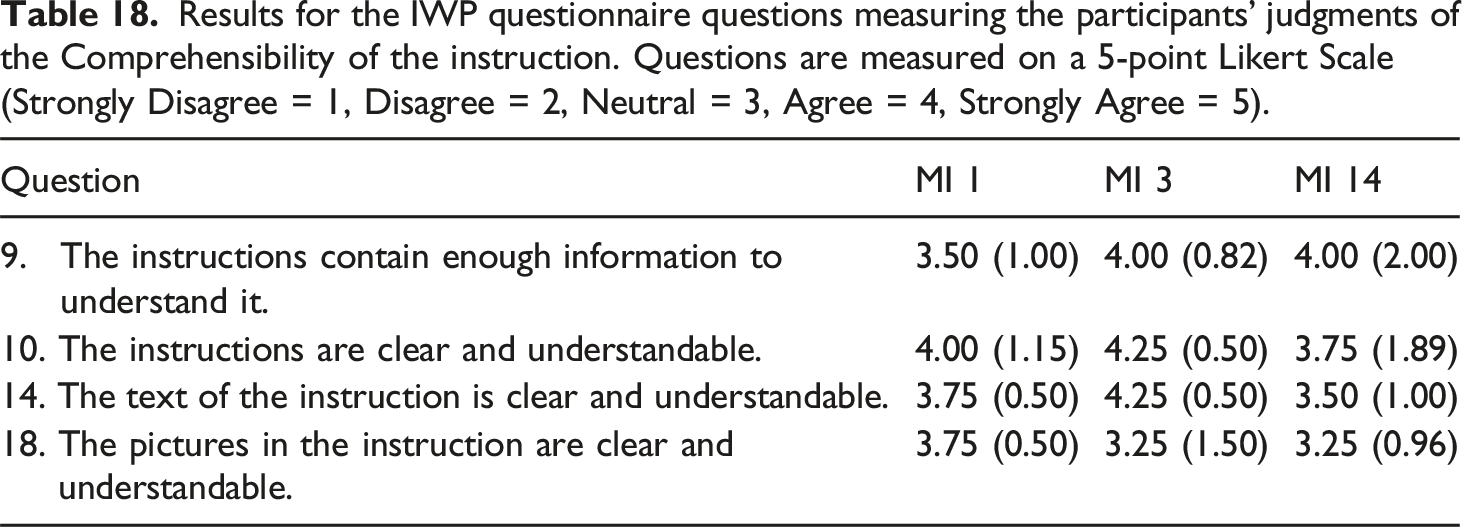

IWP - Comprehensibility

Results for the IWP questionnaire questions measuring the participants’ judgments of the Comprehensibility of the instruction. Questions are measured on a 5-point Likert Scale (Strongly Disagree = 1, Disagree = 2, Neutral = 3, Agree = 4, Strongly Agree = 5).

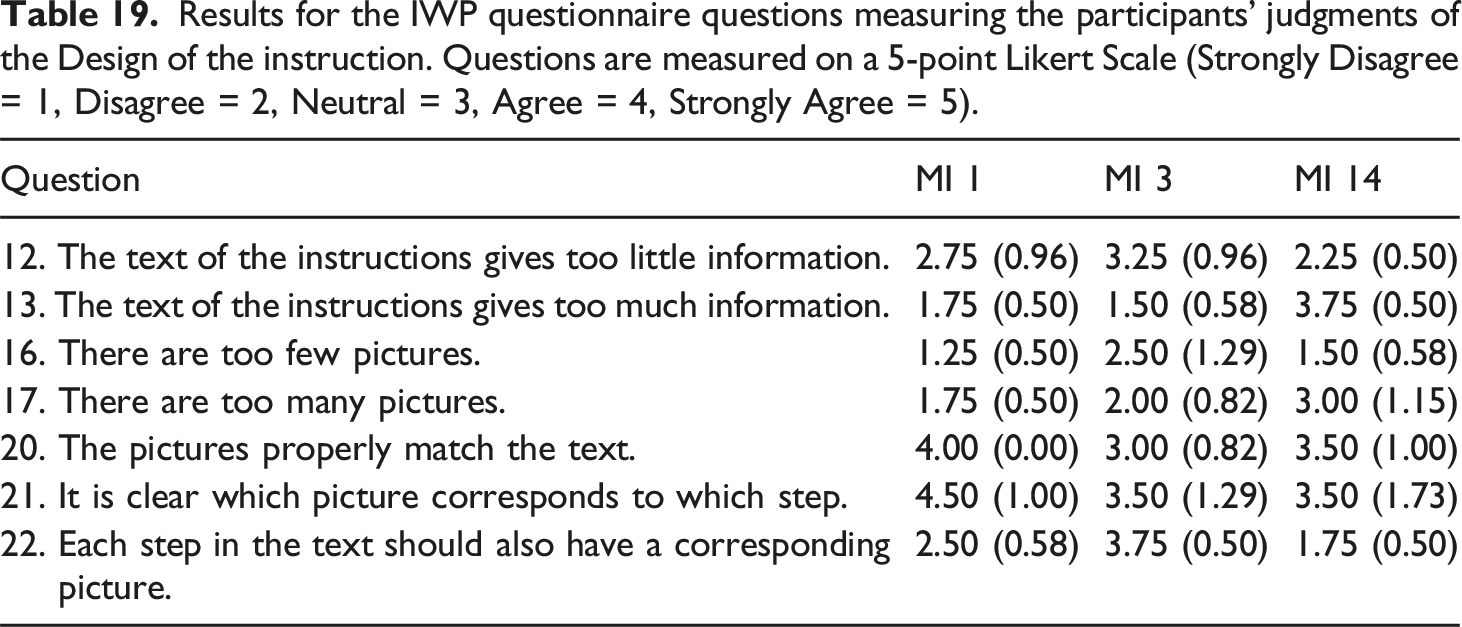

IWP - Design

Results for the IWP questionnaire questions measuring the participants’ judgments of the Design of the instruction. Questions are measured on a 5-point Likert Scale (Strongly Disagree = 1, Disagree = 2, Neutral = 3, Agree = 4, Strongly Agree = 5).

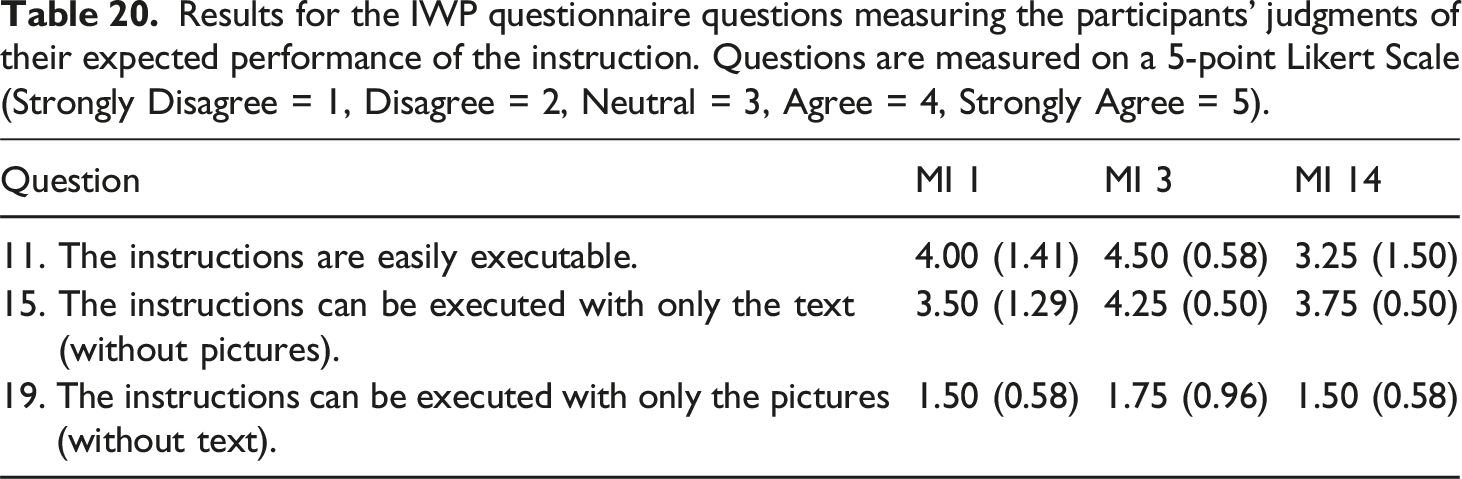

IWP - Expected performance

Results for the IWP questionnaire questions measuring the participants’ judgments of their expected performance of the instruction. Questions are measured on a 5-point Likert Scale (Strongly Disagree = 1, Disagree = 2, Neutral = 3, Agree = 4, Strongly Agree = 5).

Preliminary discussion

The amount of time that participants spent on the blogs and IWPs corresponds with the length of the documents. Notably, only a small portion of the time that participants spent on the baking blog was spent on the Instruction with Pictures and the Recipe Card in it. Most participants read the baking blogs, including the IWP within them, in a linear fashion. In contrast, the participants’ processing of the individual IWPs shows a different reading strategy, in which the participants move back and forth between the pictures and the text, which leads us to conclude that readers make an effort to establish content relations between the text and pictures. The time that participants spent on the IWP pictures suggests that a smaller number of pictures (

Although some participants remarked that the blogs were lengthy and difficult to navigate, all the participants found the instructions clear and understandable, especially the text of MI 3 which is of medium length compared to MI 1 and MI 14 and the pictures of MI 1 which show the results of six actions without specifying the utensils used to obtain these results. All the participants thought that they would be able to use the instructions to successfully bake cookies even without the pictures but not with only the IWP pictures. Interestingly, the participants were divided on which part of the blog they would use to bake the cookies, the Recipe Card or the Instruction with Pictures.

The variation in the readers’ evaluations and interpretations of IWPs and RCs raises questions about the use of IWPs and RCs in a real-life situation, and about whether users and readers differ in their judgments of the comprehensibility and design of IWPs and RCs and of their expected or actual performance. Accordingly, the exploratory user study presented in the next section was set up. The reader study results and the corpus analysis informed the selection of the IWP and RC offered to the participants in the user study in terms of verbal and visual content. Consequently, the results of the reader study and corpus analysis support the interpretation of the data collected through situated use of the RC and IWP. The reader study results also helped in compiling the materials for the user study. For instance, because the reader study revealed that the IWPs do not include the ingredient list that is crucial for preparing the cookie dough, we were able to prevent a situation in which users of the IWP and RC started from different points.

User study: Baking cookies

The user study presented in this section was designed to test the following question: ‘How does using either the Instruction with Pictures or the Recipe Card of a baking blog influence the user’s execution of the baking instruction and the user’s judgments of the comprehensibility, design and performance of the baking instruction?’ The research question introduces three concepts that need to be measured, the

Participants

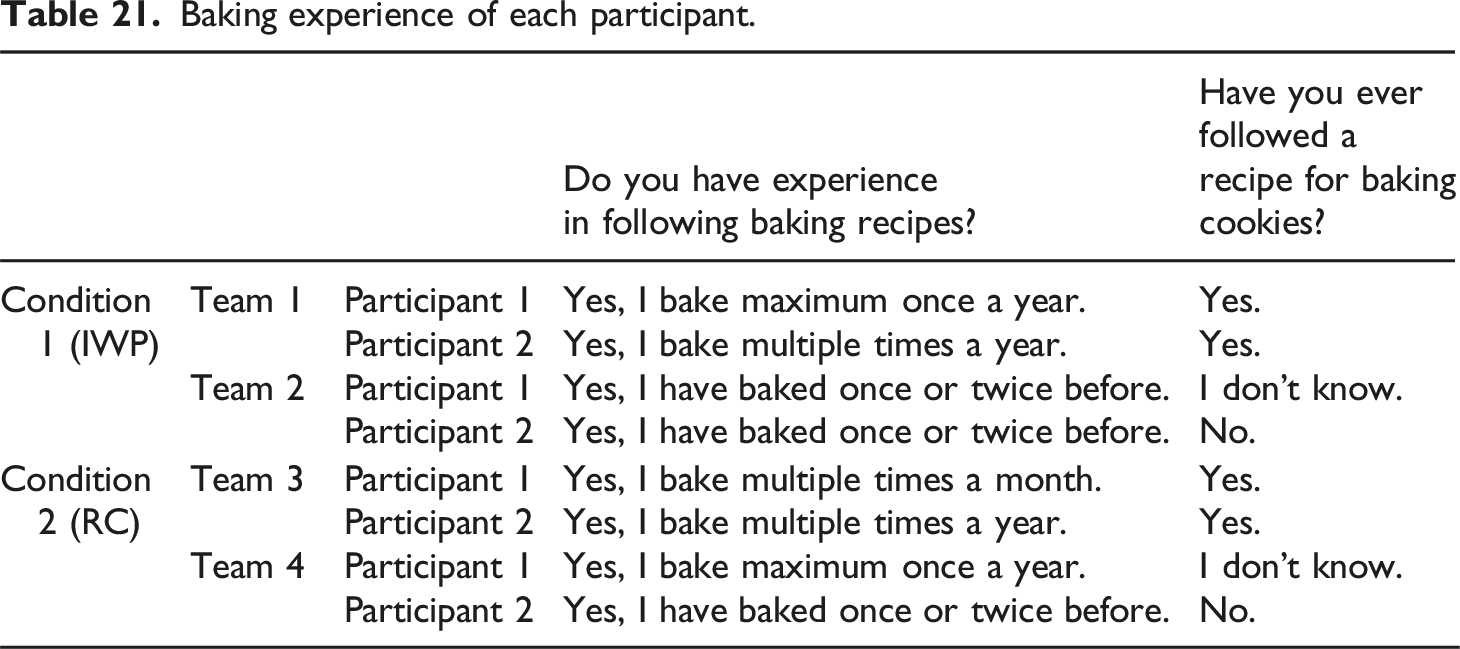

The study was conducted with four teams of 2 participants, resulting in a total of eight participants (three males and six females). All participants resided in the Netherlands (

The teams were divided into two conditions: the participants in the IWP Condition used the Instruction with Pictures to bake cookies, while the participants in the RC Condition used the Recipe Card of the same baking blog to bake cookies. After baking the cookies using their assigned instruction, the participants also read the other instruction from the same blog and subsequently rated it.

Baking experience of each participant.

Materials and setup

The Instruction with Pictures and the Recipe Card used in the user study are presented in Figures 9 and 10. The figures present the fragments from the webpage of MI 2 that were analyzed in the corpus study. The choice of MI 2 is motivated by the corpus analysis and by the reader study results presented in this paper. The corpus study shows that the main difference between the IWP and the RC is the absence of pictures in the Recipe Card. In order to investigate the added value of the pictures in multimodal baking instructions, we decided to use an IWP and RC that are similar in terms of their verbal content and include an average amount of text and pictures given the IWPs in the corpus (clauses: Mean = 19.9, Std = 6.24; pictures: Mean = 6.49, Std = 2.54). In addition, the amount of visual and verbal information presented in MI 2 is in line with the preferences of the participants in the reader study. Although the corpus study shows that the Recipe Card generally contains more Action clauses and CI clauses, this is not the case for all MIs. In MI 2, both texts contain virtually the same number of Action clauses: 18 in the Instruction with Pictures versus 19 in the Recipe Card. The action that is omitted in the IWP compared to the RC is the first step of preheating the oven (Action Subtype

Instruction with pictures (MI 2). Source: https://tornadoughalli.com/the-best-chocolate-chip-cookies/.

Recipe card (MI 2). Source: https://tornadoughalli.com/the-best-chocolate-chip-cookies/.

In the reader study the participants observed that a Recipe Card contains the indispensable ingredient list while the Instruction with Pictures does not. To make sure that teams in the two conditions worked from the same starting point, the participants were provided with the exact amount of each of the ingredients necessary for baking the cookies. Note that providing the exact amounts of the ingredients is also expected to reduce potential errors in the participants’ performances, the duration of the sessions in which the teams executed the baking procedure and our own efforts in analysing the user study data.

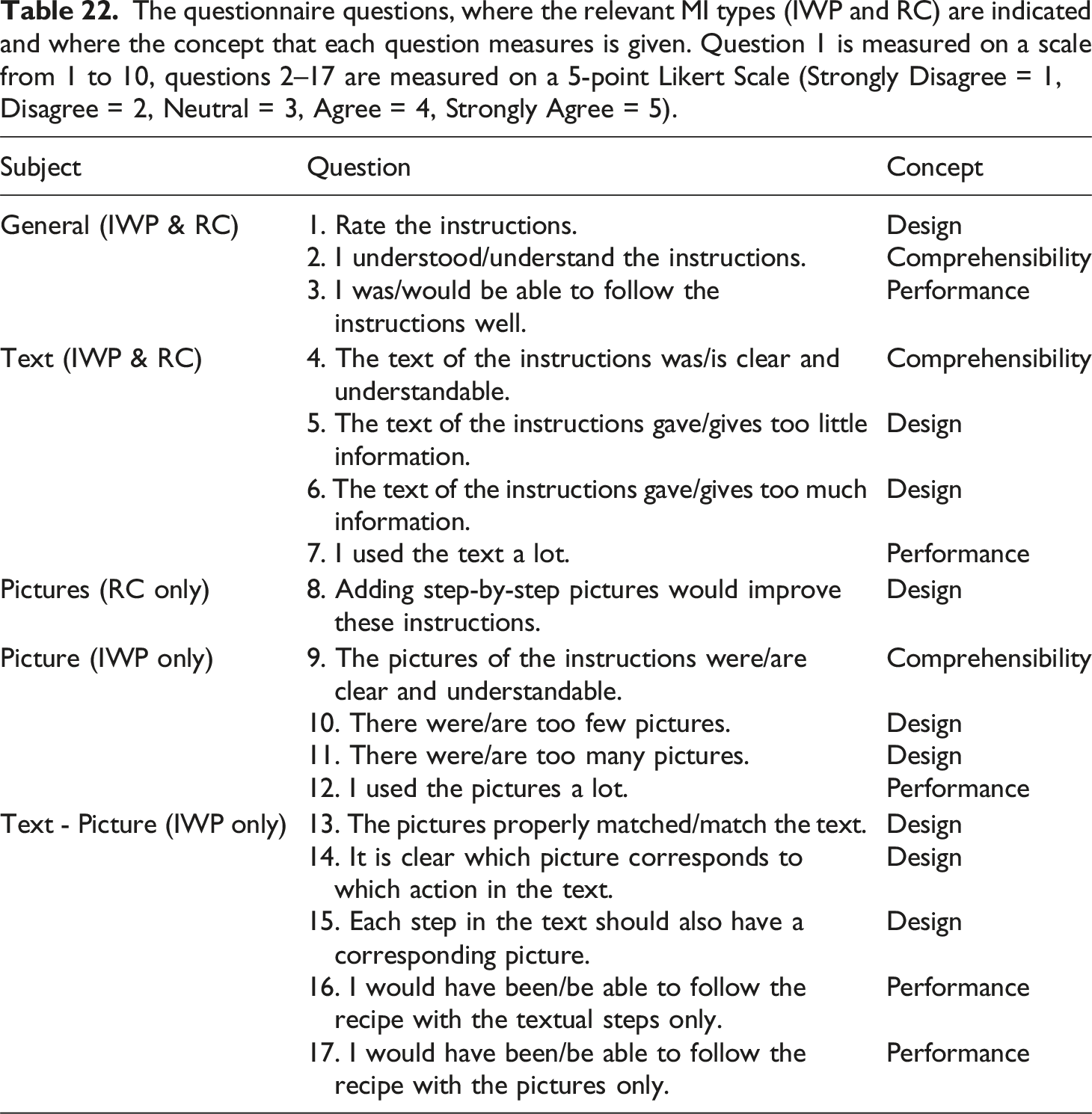

The questionnaire questions, where the relevant MI types (IWP and RC) are indicated and where the concept that each question measures is given. Question 1 is measured on a scale from 1 to 10, questions 2–17 are measured on a 5-point Likert Scale (Strongly Disagree = 1, Disagree = 2, Neutral = 3, Agree = 4, Strongly Agree = 5).

After filling out the questionnaire, the participants in the IWP Condition were shown the RC, and the participants in the RC Condition were shown the IWP. Participants did not have to execute these instructions, but were merely asked for their opinion on this alternative instruction using the relevant questions presented in Table 22. The participants in the IWP Condition answered Questions 1–6 and 8 to rate the RC, and the participants in the RC Condition answered Questions 1–6, 9–11 and 13–17 to rate the IWP.

By asking participants to answer the questions presented in Table 22, which address different aspects of the MI, on the basis of either their use or their reading of the IWP or the RC, we recorded a comprehensive evaluation of the instruction. The collected data allows us to make comparisons between the

At the end of the study, the participants were asked to fill out the demographic questions that were also used in the eye-tracking study, in which they were asked for their name, gender, age, highest level of education, first language and their ability to read/comprehend English texts. The questionnaire also recorded whether they had experience with following baking recipes, specifically for baking cookies, and, if so, how long ago this had happened.

Figure 11 shows the setup in which each pair of participants was recorded. Participants were asked to stand behind a table on which all of the ingredients were laid out in the correct amounts in bowls and/or containers. Participants were also provided with the utensils and tools necessary for executing the recipe, such as empty bowls, a mixer and an oven. The recipe (either the Instruction with Pictures or the Recipe Card) was presented to the participants on a laptop screen. The screen was recorded to monitor which parts of the instruction the participants looked at. A camera in front of the table with the bowls and the mixer recorded the baking process.

User study setting with the workspace and a detail of the ingredients in bird’s-eye views, and a view from the side showing the counter with the oven, utensils and the laptop that presents the instruction.

Procedure

The instructor welcomed the participants into the lab and invited them to position themselves at the table with the ingredients and the laptop. The participants first read a short introduction to the study and were then asked to sign a consent form regarding their voluntary attendance, privacy, confidentiality and our data collection. Subsequently, the participants were given a task description in which the setup of the study was explained. The introduction, the consent form and the task description were presented on the laptop. In the task description, the participants were advised to divide the task such that one of them kept track of the instructions on the laptop, while the other could mainly focus on executing the actions to bake the cookies using the tools and ingredients provided on the table. After the team had read the task description, there was one last opportunity to ask questions. When all questions were resolved, the instructor started the camera recording and the laptop screen recording, opened the baking instructions on the laptop for the participants, and left the room. From this point onwards, the participants were not allowed to speak with the instructor until they had finished the recipe. In the task description, the participants had been instructed to read the recipe before starting the baking process. Note that the participants were not offered the whole webpage: the recipe (IWP or RC) was presented on the laptop as a scrollable fragment of the webpage from which it originated. Once the cookies were in the oven, the participants called the instructor back into the room. The participants then sat down on a couch and were invited to fill out the questionnaire questions in Table 22 on their phones (they were, of course, offered only the relevant subset of questions). While filling out the questionnaire, they were able to look at the instructions they had used (IWP or RC) on a laptop in front of them.
Next, the participants who had used the Instruction with Pictures were shown the Recipe Card and vice versa; this alternative instruction was also presented on the laptop. After reading it, the participants filled out the questionnaire with the relevant questions presented in Table 22, followed by the demographic questionnaire. Participants were explicitly instructed not to discuss their answers while filling out the questionnaires. Finally, the participants were debriefed and thanked for their participation.

Analysis

The questionnaire data was processed by calculating means and standard deviations for each of the questions. Except for Question 1, which was rated on a scale from 1 to 10, the questions used a 5-point Likert scale with the values Strongly Disagree = 1, Disagree = 2, Neutral = 3, Agree = 4, Strongly Agree = 5. The responses about the instruction that participants executed were analyzed by comparing the scores of the IWP Condition and the RC Condition. The questionnaire data was organized into subsets with respect to the three concepts that the questionnaire measures: Comprehensibility, Design and Performance.

Variables used in the video analysis to measure user performance.

We also analyzed the second part of the questionnaire, in which participants answered questions about the alternative instruction, which they had only read. This data was used to compare users and readers of both the RC and the IWP.
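The descriptive analysis described above amounts to computing a mean and standard deviation per question and per condition. A minimal Python sketch follows. It assumes the sample (n - 1) standard deviation, which the paper does not specify; the function names and the response lists are hypothetical illustrations, not the study's data. Question 1, rated on a 1–10 scale, would be summarized directly from its numeric scores rather than through the Likert mapping.

```python
from statistics import mean, stdev
from typing import Dict, List, Tuple

# Numeric coding of the 5-point Likert scale described in the Analysis section.
LIKERT = {
    "Strongly Disagree": 1,
    "Disagree": 2,
    "Neutral": 3,
    "Agree": 4,
    "Strongly Agree": 5,
}


def summarize(responses: List[str]) -> Tuple[float, float]:
    """Return (mean, SD) for one question's Likert responses."""
    scores = [LIKERT[r] for r in responses]
    # Assumption: sample standard deviation (n - 1); 0.0 for a single response.
    sd = stdev(scores) if len(scores) > 1 else 0.0
    return float(mean(scores)), sd


def compare_conditions(
    by_condition: Dict[str, Dict[str, List[str]]]
) -> Dict[str, Dict[str, Tuple[float, float]]]:
    """Reorganize {condition: {question: responses}} into
    {question: {condition: (mean, SD)}} so the conditions sit side by side."""
    table: Dict[str, Dict[str, Tuple[float, float]]] = {}
    for condition, questions in by_condition.items():
        for question, responses in questions.items():
            table.setdefault(question, {})[condition] = summarize(responses)
    return table


# Hypothetical responses for illustration only (not the study's data).
example = {
    "IWP": {"Q2": ["Agree", "Strongly Agree", "Agree", "Agree"]},
    "RC": {"Q2": ["Neutral", "Agree", "Disagree", "Agree"]},
}

for question, row in compare_conditions(example).items():
    for condition, (m, sd) in row.items():
        print(f"{question} {condition}: M = {m:.2f}, SD = {sd:.2f}")
```

With only two teams (four participants) per condition, such means are descriptive and no inferential comparison is implied.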

Results

The results are presented in two parts: the findings in the user data collected with the questionnaire and video recordings, and a comparison of users and readers of the IWP and the RC based on the questionnaire data.

User results

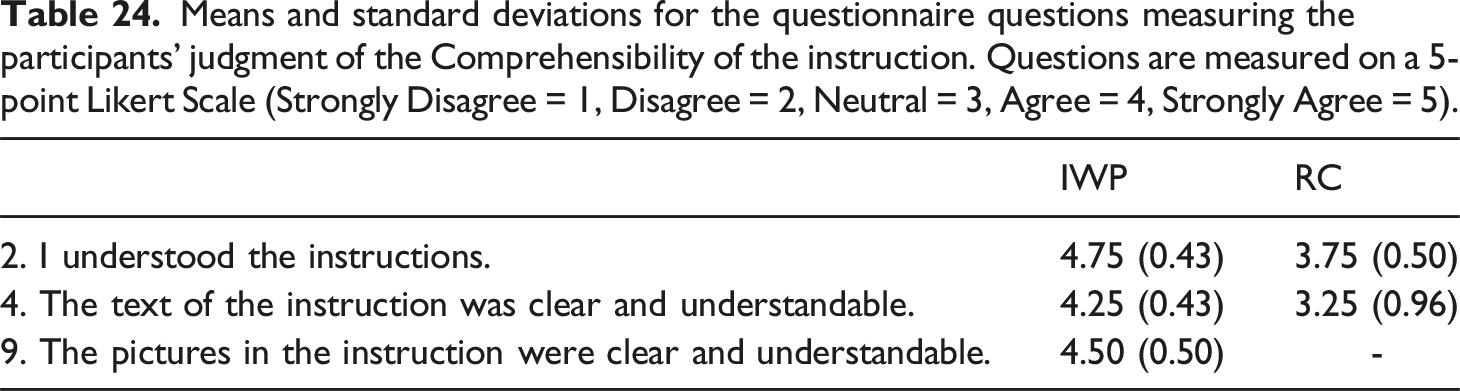

Comprehensibility

Means and standard deviations for the questionnaire questions measuring the participants’ judgment of the Comprehensibility of the instruction. Questions are measured on a 5-point Likert Scale (Strongly Disagree = 1, Disagree = 2, Neutral = 3, Agree = 4, Strongly Agree = 5).

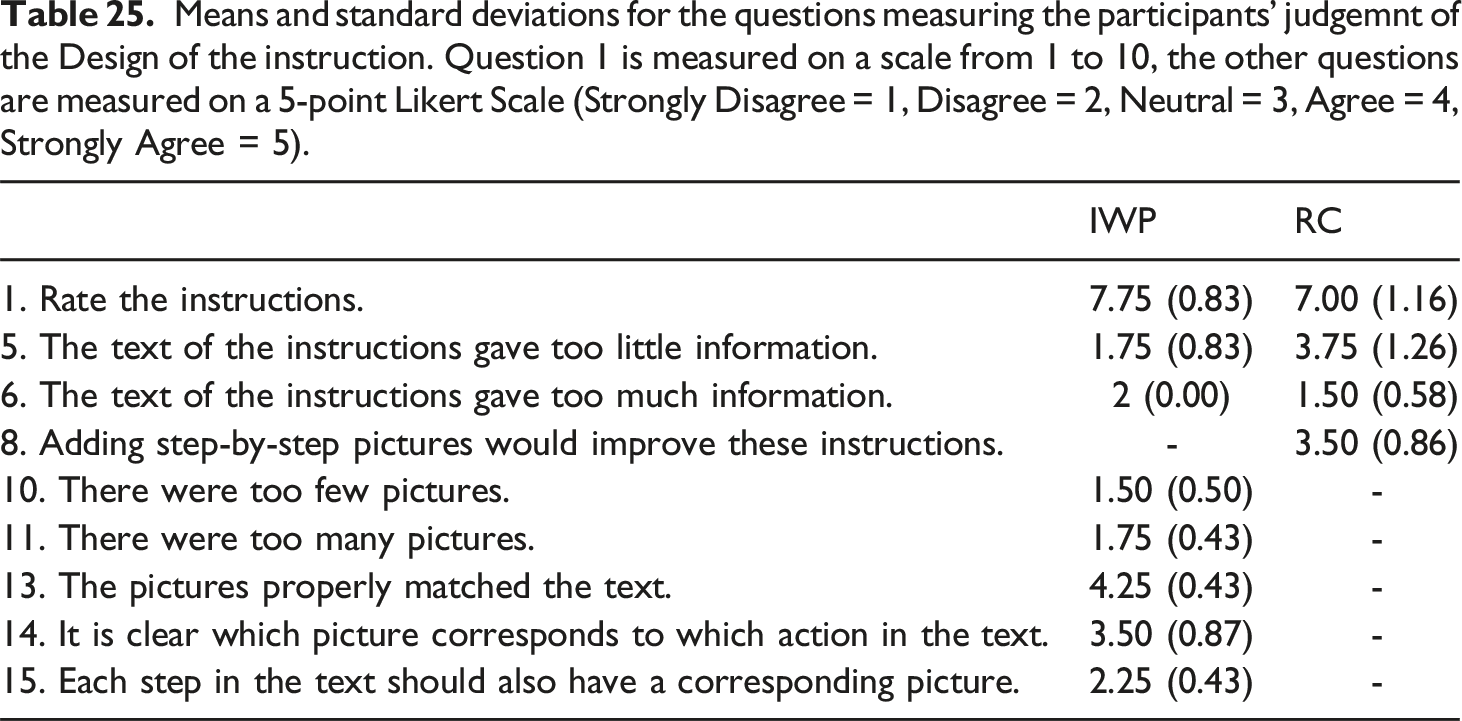

Design

Means and standard deviations for the questions measuring the participants’ judgment of the Design of the instruction. Question 1 is measured on a scale from 1 to 10; the other questions are measured on a 5-point Likert Scale (Strongly Disagree = 1, Disagree = 2, Neutral = 3, Agree = 4, Strongly Agree = 5).

IWP participants found that the instruction contains an appropriate number of pictures (Q10, Q11) and that these pictures properly matched the text (Q13). However, IWP participants also indicated that the clarity of the correspondence between the text and the pictures in the instruction could be improved (Q14). Still, IWP participants did not think that each verbal step needs a corresponding picture (Q15).

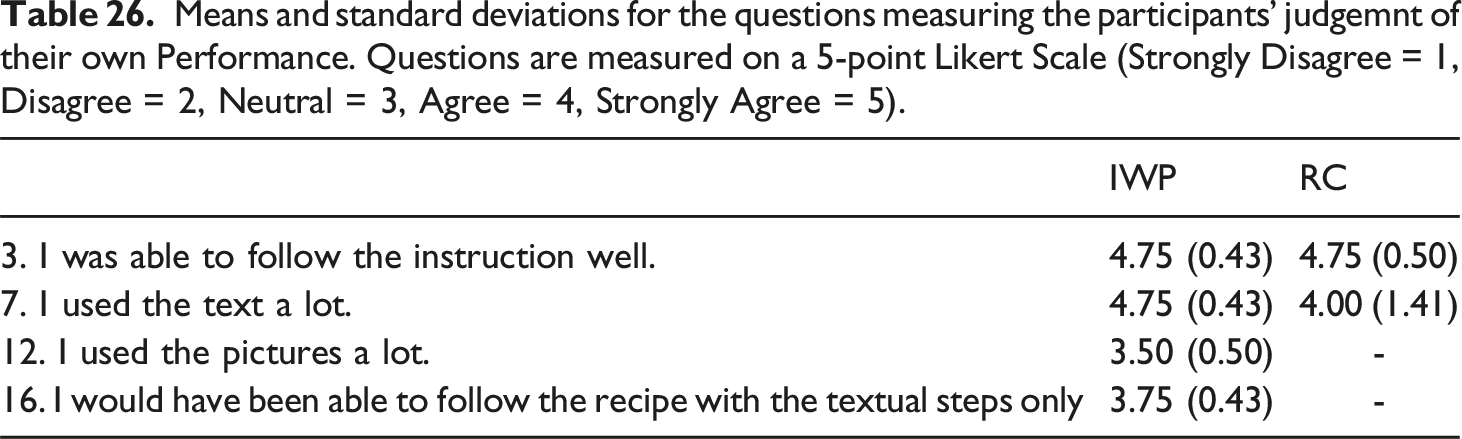

Performance

Means and standard deviations for the questions measuring the participants’ judgment of their own Performance. Questions are measured on a 5-point Likert Scale (Strongly Disagree = 1, Disagree = 2, Neutral = 3, Agree = 4, Strongly Agree = 5).

IWP participants rated their use of the pictures lower than their use of the text (Q12). They also indicated that they would be able to bake the cookies using solely the verbal instructions (Q16) and that the pictures were not really necessary (Q17).

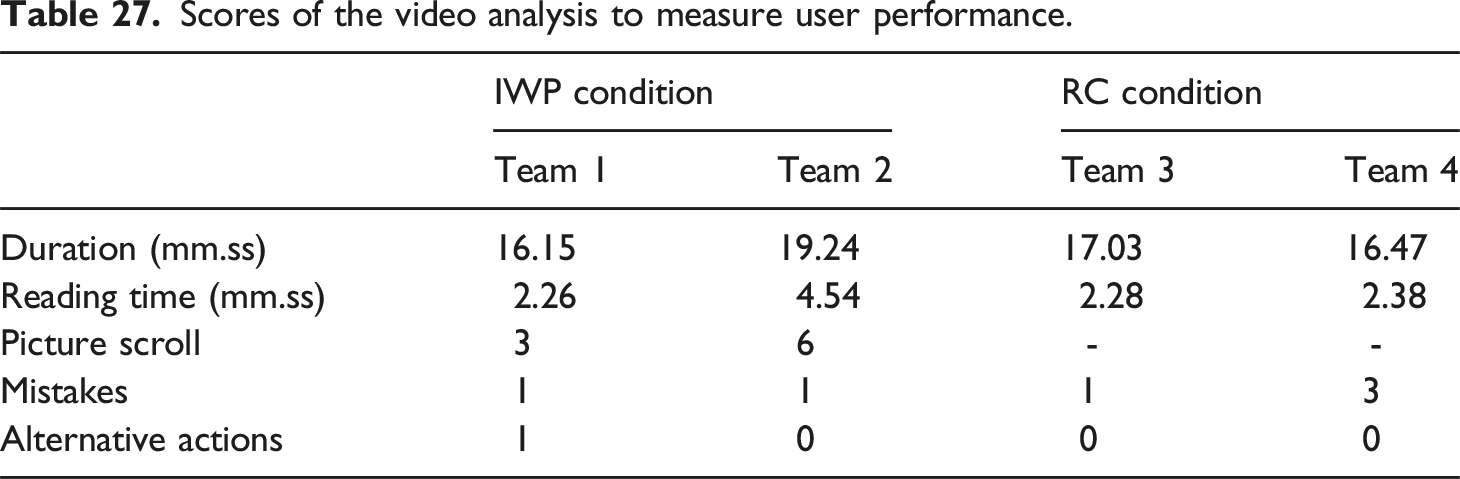

User performance

Scores of the video analysis to measure user performance.

Three types of mistakes were made. RC Team 4 added the dry ingredients to the bowl of the stand mixer instead of to a separate bowl; the team did not notice that in the RC text, ‘bowl’ in Step 2 and ‘bowl of stand mixer’ in Step 3 refer to different objects (see Figure 10). In contrast, the participants in the IWP Condition used the text as their main guide for baking and used the pictures mainly to resolve uncertainties that arose in the process. For example, both IWP teams discussed whether they had to add the dry ingredients to the bowl of the stand mixer or to another available bowl. After scrolling up to the pictures, both teams concluded that it had to be the other bowl.

RC Team 4 incorrectly mixed the chocolate chips in the dough with the stand mixer instead of with the spatula. Note that this RC team, who only had the text to work with, could not see the picture in which the spatula is shown with the mixed-in chocolate chips (See Figure 9: Picture 6). In the RC text, however, the verb

All teams made the mistake of not setting aside some of the chocolate chips to top off the cookies after baking. The teams could only have known that they had to save part of the chips if they had read the instructions as a whole before executing the individual steps.

Note that the IWP teams did not preheat the oven at the start of the baking procedure. Recall that the IWP, in contrast to the RC, does not instruct the user to preheat the oven. Therefore, the omission of the preheating action in the IWP Condition was not considered a mistake.

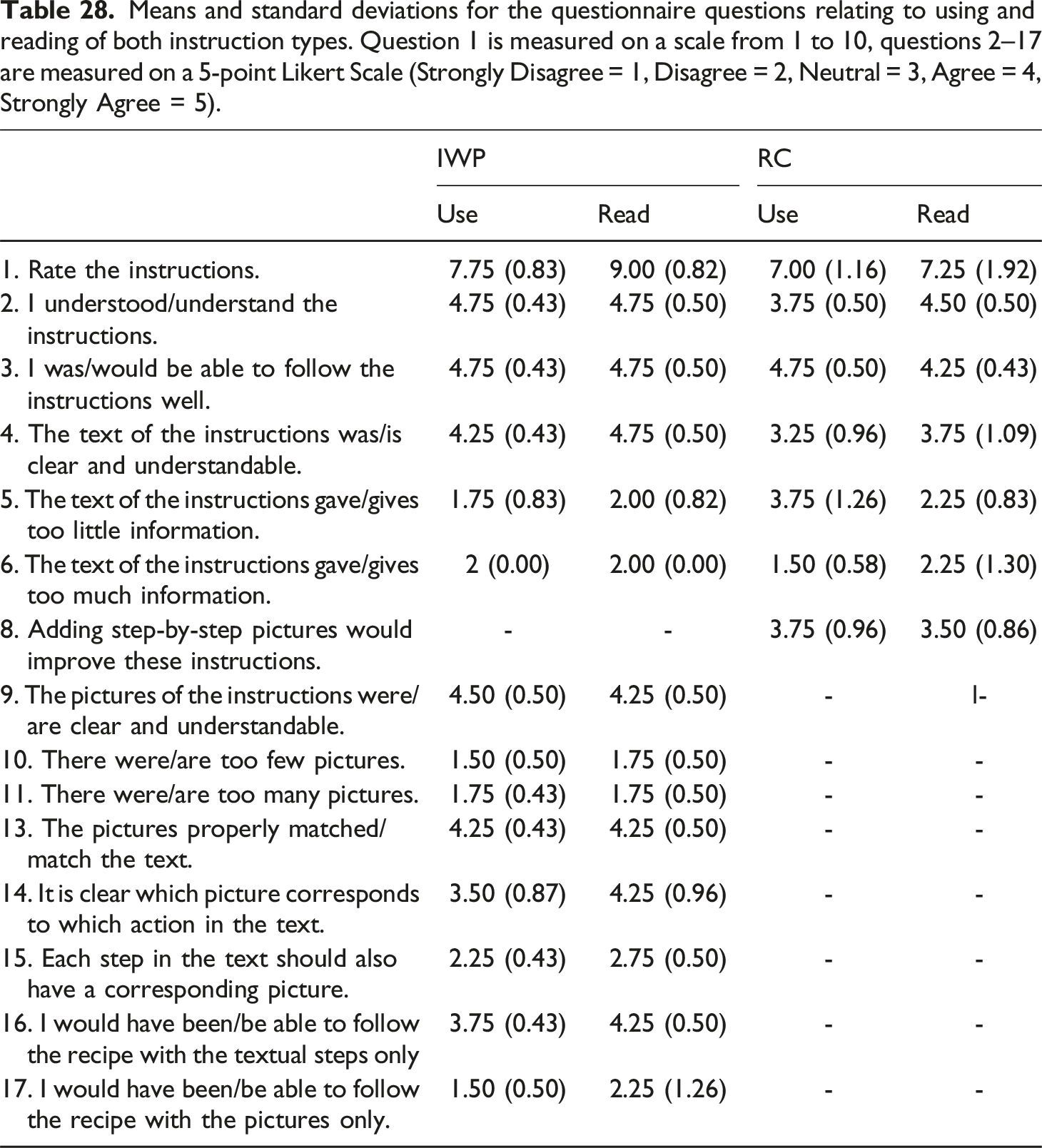

Users versus readers

Means and standard deviations for the questionnaire questions relating to using and reading of both instruction types. Question 1 is measured on a scale from 1 to 10, questions 2–17 are measured on a 5-point Likert Scale (Strongly Disagree = 1, Disagree = 2, Neutral = 3, Agree = 4, Strongly Agree = 5).

For the RC the ratings are similar for readers and users, but lower than for the IWP. There are a few questions in which RC readers give a more positive rating than RC users. For instance, in terms of

In terms of the

In terms of

Overall, the IWP is judged as slightly better by the participants in all groups, independent of whether they executed it or only read it.

Preliminary discussion

In terms of

In terms of

Overall, in terms of

Discussion

Corpus study

Results of the corpus study

In the corpus that we analyzed, the text of the 15 MIs was split into clauses. As expected, the RC and IWP texts do not differ significantly in the distribution of functional and realized text content, so the IWP and the RC can be considered comparable presentations of the same procedure. The IWPs contain a total of 299 clauses and the RCs 400 clauses. The RCs contain 53 more Action clauses, 101 more Control Information clauses and 70 more Specifications. These differences can be explained by a difference in the purposes of the two texts (Van der Sluis and de Jonge, 2024). The purpose of the IWP could be to attract potential users to the recipe by showing that baking is easy and results in delicious cookies. The IWP may also be considered a coarse guideline, whereas the RC contains the details needed to actually bake the cookies: the Recipe Card can be considered a comprehensive printable overview containing all the information needed to study the recipe, properly execute the instructions and ultimately obtain the envisioned results (cf. Bowker, 2021). It therefore makes sense that the Recipe Card contains more detailed verbal explanations. Moreover, in the multimodal IWPs, text and pictures work together to make meaning (Bateman et al., 2017: 7). Arguably, some of the verbal content available in the RCs is replaced by visualizations in the IWPs.
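The paper reports that the category distributions do not differ significantly but does not name the test used. As an assumption, a Pearson chi-square test of independence on a text-by-category contingency table is one standard way to make such a comparison. The sketch below computes the statistic and degrees of freedom; the p-value can then be read from the chi-square distribution (for instance with SciPy's `scipy.stats.chi2_contingency`). The counts are hypothetical, not the corpus figures.

```python
from typing import List, Tuple


def chi_square_independence(table: List[List[int]]) -> Tuple[float, int]:
    """Pearson chi-square statistic and degrees of freedom for an r x c
    contingency table (rows: texts, columns: functional categories).
    Assumes every row and column total is positive."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of text type and category.
            expected = row_totals[i] * col_totals[j] / grand
            stat += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(col_totals) - 1)
    return stat, dof


# Hypothetical clause counts per category (e.g. Action, Control Information,
# other clauses) for an IWP text and an RC text; not the corpus data.
iwp_counts = [10, 20, 30]
rc_counts = [20, 20, 20]

stat, dof = chi_square_independence([iwp_counts, rc_counts])
print(f"chi2 = {stat:.3f}, df = {dof}")
```

For these toy counts, the statistic is 16/3 (about 5.33) with 2 degrees of freedom. Note that the test compares proportions, so it is insensitive to the RCs simply being longer than the IWPs.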

As expected, the text-picture relations within the IWPs display a significant difference between the content of text and pictures in terms of Action Aspect. While all actions in the text are described as a

In the corpus, the organization of the relations between text and pictures varies across multimodal instructions (MIs). In some MIs, the links were consistent: the textual steps and their corresponding pictures were assigned the same numerical indices. In other MIs, however, the indices of the verbal steps did not correspond to those of the pictures, or indices were absent from the text, from the pictures, or from both. To support readers and users effectively, it is crucial for the writers of these documents to carefully establish and maintain connections between the text and the pictures. Previous research has demonstrated that a well-linked integration of text and pictures can significantly enhance the comprehensibility of instructions (Ozcelik et al., 2010; Tenbrink and Maas, 2016).
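The linking situations described above (matching indices, non-corresponding indices, and missing indices) could be operationalized roughly as follows. This is a hypothetical sketch, not the paper's annotation scheme. It assumes that an annotator has paired each picture with the textual step it depicts, and that the index printed on each is recorded (or `None` when no index is shown).

```python
from typing import List, Optional, Tuple

# One pair per picture: (index printed on the picture, index printed on the
# textual step that the picture depicts). None means no index is shown.
Link = Tuple[Optional[int], Optional[int]]


def classify_linking(links: List[Link]) -> str:
    """Classify how an MI links its pictures to its textual steps."""
    pictures_unindexed = all(p is None for p, _ in links)
    steps_unindexed = all(s is None for _, s in links)
    if pictures_unindexed and steps_unindexed:
        return "absent in both"
    if pictures_unindexed:
        return "absent in pictures"
    if steps_unindexed:
        return "absent in text"
    if all(p == s for p, s in links):
        return "consistent"
    # Includes partially indexed MIs, where only some items carry an index.
    return "mismatched"


print(classify_linking([(1, 1), (2, 2), (3, 3)]))  # matching indices
print(classify_linking([(1, 2), (2, 3)]))          # shifted numbering
```

A per-MI label like this could then be cross-tabulated with the eye-tracking data, for example to check whether consistent indexing co-occurs with more step-to-picture switches.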

Method of the corpus study

The corpus was annotated by two annotators, who each annotated parts of the corpus individually and then held multiple rounds of meticulous and detailed discussions, revising the corpus annotation until consensus was reached on all the encountered issues. In future work, we envision an evaluation of the proposed annotation model in which multiple trained annotators annotate a larger corpus of cooking instructions that also contains more variation in food types. To evaluate the annotation model and the resulting corpus annotation, multiple annotators will annotate the same MIs so that inter-annotator agreement can be calculated. Accordingly, the model will be further improved and the accuracy and consistency of the annotated MIs will be ensured.
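For the envisioned agreement study, Cohen's kappa is a common measure when two annotators label the same clauses; for more than two annotators, Fleiss' kappa or Krippendorff's alpha are the usual alternatives. The paper does not commit to a specific coefficient, so the sketch below is an assumption, and the category labels and annotation sequences are hypothetical.

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(a: Sequence[str], b: Sequence[str]) -> float:
    """Cohen's kappa for two annotators who labelled the same items."""
    if len(a) != len(b) or len(a) == 0:
        raise ValueError("annotations must be non-empty and equally long")
    n = len(a)
    # Observed agreement: share of items that received the same label.
    p_observed = sum(x == y for x, y in zip(a, b)) / n
    # Chance agreement, from each annotator's own label distribution.
    freq_a, freq_b = Counter(a), Counter(b)
    labels = set(freq_a) | set(freq_b)
    p_expected = sum(freq_a[c] * freq_b[c] for c in labels) / (n * n)
    if p_expected == 1.0:
        # Both annotators used one and the same label everywhere; kappa is
        # undefined, and 1.0 is returned by convention.
        return 1.0
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical functional-category labels for six clauses.
annotator_1 = ["Action", "Action", "CI", "Spec", "Action", "CI"]
annotator_2 = ["Action", "CI", "CI", "Spec", "Action", "CI"]
print(round(cohens_kappa(annotator_1, annotator_2), 3))
```

For these toy labels, observed agreement is 5/6 and chance agreement is 13/36, giving a kappa of 17/23 (about 0.74).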

The developed annotation models serve as a solid and broad foundation for the reader and user studies. The annotation models used to analyse the corpus include functional categories (i.e., Action Status, Action Aspect, Control Information, Specification). The functional categories are useful variables to describe and compare content presentations in different modalities (i.e., IWP text vs IWP pictures) and in different texts (IWP text and RC text). Potentially, the functional categories can also be used to describe and compare other recipes (cf. Van der Sluis and de Jonge, 2024), multimodal instructions in other domains (cf. Van der Sluis et al., 2022a, 2022b), and multimodal instructions presented in different text genres (cf. Vijfvinkel et al., 2018; Wildfeuer et al., 2023). The proposed annotation model also includes domain-dependent categories to describe the realized Action Types (

The distinction between Action and CI clauses is not always evident. For instance, phrases like ‘use a cookie scoop’, although containing an action verb, are classified as a CI clause instead of an Action clause. The rationale is that the clause describes the means to portion the dough and therefore should be annotated as CI

In addition, in future work a more detailed annotation model is envisioned to describe certain Specification categories in the verbal instructions. For instance, the

It should also be noted that the corpus study describes a relatively small number of MIs. The MIs, however, were carefully selected from a larger corpus and are representative of cookie baking recipes. Other recipes, for instance recipes in which a meal is cooked on a stove, or in which cold snacks or beverages are prepared, are likely to include different actions and different action sequences (cf. Van der Sluis and de Jonge, 2024). We are currently working on a recipe corpus that covers a wider range of food types. In general, the recipe blogs are composed similarly in that all of them include an Instruction with Pictures and a Recipe Card. Future work will expand the scope and representativeness of our MI description in the cooking domain, as well as the description of the blogs in which the MIs appear. A larger and more varied corpus will provide a more comprehensive understanding of the design features, and especially of the text-picture relations, in multimodal instructions.

Eye-tracking study

Results of the eye-tracking study

The participants in the reader study tended to use a linear reading strategy when reading the blog as a whole, without scrolling back and forth between the different parts of the page. In contrast, when examining the individual Instructions with Pictures, participants exhibited a different reading strategy: they switched back and forth between text and pictures, with some readers showing clear patterns of attempting to link textual steps to the corresponding pictures. Heat maps of the individual IWPs showed that readers focused more on the text than on the pictures within the IWPs, although every picture received some attention. Accordingly, we conclude that the readers focused on understanding the instructions (instruction-based reading) rather than on selecting specific information in an interactive way (task-based reading) (cf. Ganier, 2004). Just as the duration of the participants’ text processing depends on the length of the text, the duration of processing visual information seems to depend on the number of pictures: with only four pictures, the visual information in MI 3 received the least attention. Interestingly, however, the same amount of time was spent on the six pictures of MI 1 as on the nine pictures of MI 14. Further study is required to determine to what extent these findings can be attributed to the split-attention effect, whereby dividing attention among multiple elements hinders cognitive processing (cf. Schroeder and Cenkci, 2018).
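The back-and-forth reading and the dwell-time comparisons described above can be quantified from a fixation sequence mapped onto areas of interest (AOIs). The sketch below is hypothetical. It assumes that fixations have been exported as (AOI label, duration in ms) pairs with labels such as `text:3` or `picture:3`, which is not necessarily how the eye-tracking software in this study labels them. It sums dwell time per AOI kind, counts text-picture switches, and counts switches between a step and the picture with the same index, as a crude proxy for attempts to link the two.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical fixation format: (AOI label, duration in ms), where a label is
# "<kind>:<index>", e.g. "text:2" for textual step 2 or "picture:2".
Fixation = Tuple[str, int]


def split_label(label: str) -> Tuple[str, str]:
    kind, _, index = label.partition(":")
    return kind, index


def dwell_by_kind(fixations: List[Fixation]) -> Dict[str, int]:
    """Total fixation duration per AOI kind (e.g. text vs picture)."""
    totals: Dict[str, int] = defaultdict(int)
    for label, duration in fixations:
        totals[split_label(label)[0]] += duration
    return dict(totals)


def switch_counts(fixations: List[Fixation]) -> Tuple[int, int]:
    """Return (text<->picture switches, switches between a step and the
    picture carrying the same index)."""
    switches = matched = 0
    for (prev, _), (cur, _) in zip(fixations, fixations[1:]):
        prev_kind, prev_idx = split_label(prev)
        cur_kind, cur_idx = split_label(cur)
        if {prev_kind, cur_kind} == {"text", "picture"}:
            switches += 1
            if prev_idx == cur_idx:
                matched += 1
    return switches, matched


# Hypothetical fixation sequence for one reader of one IWP.
sequence = [("text:1", 300), ("picture:1", 200), ("text:2", 250),
            ("text:2", 150), ("picture:3", 100)]
print(dwell_by_kind(sequence))
print(switch_counts(sequence))
```

Picture dwell time divided by the number of pictures in an MI would give one simple way to compare MI 1 and MI 14 on a per-picture basis.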

The questionnaire data shows that, in general, the participants considered the baking blogs as a whole, and the IWPs in particular, to be understandable and executable. In terms of

Method of the eye-tracking study

In the reader study, we aimed to simulate a real-life context by asking the participants to imagine that they were looking for a recipe to bake cookies. It is, of course, questionable to what extent this context was achieved and whether it actually played a role. Note that the participants were not explicitly asked to read the whole webpage, but to determine whether the webpage contained a recipe that was useful to them in the given context. In this setting, the participants were expected to pay little attention to extraneous information on the webpage, such as the advertisements and the contextualization of the recipe. However, the participants spent very little time reading the IWP and the RC in the full blogs that were offered: in all three conditions, they spent less than a third of their dwell time on the blog on the actual recipes.
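The observation that less than a third of the dwell time went to the recipes is a ratio of AOI dwell times. Assuming that per-region dwell times have been exported for each participant (the region names and values below are hypothetical), the share could be computed as follows and then averaged per condition.

```python
from typing import Dict, Iterable


def recipe_share(dwell_ms: Dict[str, float],
                 recipe_regions: Iterable[str] = ("IWP", "RC")) -> float:
    """Fraction of a participant's total dwell time on the blog that fell
    on the recipe regions (hypothetical region names)."""
    total = sum(dwell_ms.values())
    if total <= 0:
        raise ValueError("no dwell time recorded")
    return sum(dwell_ms.get(region, 0.0) for region in recipe_regions) / total


# Hypothetical dwell times (ms) per page region for one participant.
page = {"IWP": 20_000, "RC": 10_000, "story": 45_000,
        "ads": 15_000, "other": 10_000}
share = recipe_share(page)
print(f"{share:.0%} of dwell time on the recipe")
```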