Abstract

This paper explores the notion of complementarity in the modeling of social systems. Complementary variables in physics exhibit a duality, and similar dualities are found in the analysis of social, political, cultural, and neurophysiological structures. In this paper, I show that the management of uncertainty in a hierarchical model exhibits certain dualities that closely parallel those observed in social systems. I argue that, due to the necessity of coordination within collectives, agents will mirror each other to a large extent, and will develop heuristics and tactics that are aligned with how each agent is managing uncertainty dualities. I connect sociological literature on social structures with neuroscientific, economic, and psychological developments, and show a simplified derivation of these connections from free energy principles. I then explore how these connections can be used to gain insights into observable socio-cultural processes.

“It is of course the ambition of every experimenter […] to make a discovery, to sail safely between the Scylla of intellectual prejudice which makes us reject evidence not really integrated without preconceived notions, and the Charybdis of irrelevance which has swallowed many working days spent in pursuit of instrumental artifice. (Deutsch, 1958: 98)”

Introduction

A collective of autonomous agents has a deeply embedded complementarity: each agent can be viewed as an individual, or as the collective, but not both at the same time. This is simply because one is the inverse of the other: individuals cannot be a collective, otherwise they would lose their individuality; and a collective cannot be made only of individuals, otherwise it would cease to be a collective. However, autonomous members of a collective (agents) are both, at the same time: individuality and collectivity are inextricable (Breiger, 1974). In order to handle this inextricability and complementarity, an agent can make use of a hierarchical model, which involves a duality or tradeoff between abstract/coarse and concrete/precise representations. Using a concrete representation for itself as individual, and a more abstract representation for the collective, an agent can model both at the same time. However, the agent must carefully trade off these two complementary representations, to avoid being either swallowed by the Charybdis of concrete details and losing sight of the collective, or foundering on the Scylla of abstract social demands and losing sight of itself. The same tradeoff occurs in scientific discovery between abstract prejudices and concrete empirical details, elegantly expressed by Martin Deutsch as quoted in the epigraph. 1

This is the same tradeoff as between Berlin’s hedgehog and fox (Berlin, 1961). The hedgehog uses a single policy (or model) which works under many conditions (it curls up in a ball), while the fox figures out the entire current situation and works out a method for getting his way. The fox is a precise, fragile agent who focuses on the concrete, and the hedgehog is a coarse, robust agent focusing on the abstract. Foxy strategies, however, are favored by a Bayesian learner (MacKay, 2003), simply because the likelihood of data for the fox will be higher as it uses a lower bias (more precise) model. The fox is only able to handle a very limited number of situations, although it does these very well. The hedgehog is able to handle many more situations, but does not perform optimally in any of them. The same tradeoff appears in machine learning as the bias/variance tradeoff (Bishop, 2006): learned patterns in data can be underfit (high bias, abstract, low variance models, hedgehogs who give power to the model), or overfit (low bias, concrete, high variance models, foxes who give power to the data).
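The hedgehog/fox contrast can be illustrated with a small simulation. The following is a minimal sketch, not a model taken from the cited works: the sine-shaped signal, the noise level, the sample sizes, and the two toy learners (`hedgehog_predict`, a global mean, and `fox_predict`, a one-nearest-neighbour lookup) are all assumptions chosen for illustration. It estimates squared bias and variance of each learner at one query point over many resampled training sets.

```python
import math
import random
import statistics


def true_signal(x):
    """Ground-truth pattern the agents try to learn (an assumed toy function)."""
    return math.sin(2 * math.pi * x)


def sample_training_set(rng, n=20, noise_sd=0.3):
    """Draw n noisy observations (x, y) of the true signal."""
    xs = [rng.random() for _ in range(n)]
    return [(x, true_signal(x) + rng.gauss(0.0, noise_sd)) for x in xs]


def hedgehog_predict(train, x0):
    """High-bias model: one policy for every situation (the global mean of y)."""
    return statistics.fmean(y for _, y in train)


def fox_predict(train, x0):
    """Low-bias model: copy the outcome of the single most similar past case."""
    _, y_nearest = min(train, key=lambda xy: abs(xy[0] - x0))
    return y_nearest


def bias_variance(predict, x0, trials=500, seed=0):
    """Estimate squared bias and variance of `predict` at x0 over resampled data."""
    rng = random.Random(seed)
    preds = [predict(sample_training_set(rng), x0) for _ in range(trials)]
    bias_sq = (statistics.fmean(preds) - true_signal(x0)) ** 2
    variance = statistics.pvariance(preds)
    return bias_sq, variance


if __name__ == "__main__":
    for name, model in [("hedgehog", hedgehog_predict), ("fox", fox_predict)]:
        b, v = bias_variance(model, x0=0.25)
        print(f"{name}: bias^2={b:.3f} variance={v:.3f}")
```

Under these assumptions the hedgehog shows the larger squared bias and the smaller variance, and the fox the reverse, which is the sense in which one gives power to the model and the other to the data.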

It is apparent that the same complementarity exists in the brain: abstract and concrete construals are complementary and inextricable from a neuroscientific perspective (Gilead et al., 2020). While concrete construals are complex and precise (e.g., a set of features, preferences, and probability distributions representing a domain ontically grounded in the world), abstraction allows for easier, more general, but less precise action selection (e.g., a general-purpose policy of action or apparently heuristic shortcut). Abstract construals gain importance in social situations due to an inability to estimate risk over concrete construals (ambiguity, Knightian uncertainty). Abstractions of social situations, however, are not descriptions of an objective world, but rather of an intersubjective, cultural, one. Part of the argument I present is that, for these abstractions to be useful, they must be shared among members of a collective, and so their tradeoffs between abstract and concrete construals will be similar.

Bohr (1950) may have been the first to propose that the complementarity between individuality (concrete) and collectivity (abstract) was a fundamental aspect of human social organization. Stephenson (1986a, 1986b) furthered this line of inquiry by describing social complementarity as between social structure and cultural structure, and Martin (2009) describes it succinctly in terms of passing from individual, instrumental, concrete tendencies or heuristics that shape the relationship between persons, to collective, relational, abstract tendencies that shape the relationships between sets of persons. That is, the social structure “allows for a generalization of the principles of action inherent in the structure, and the relationship seems like a ‘thing in itself’ that people can orient to, rather than orienting to one another” (Martin, 2009: 336). Martin (2009: 340) also notes that the “duality of heuristic and structure becomes generalized to the duality of value and position.” While heuristics and structures play a role when focusing on the individual, social evaluation (value), and social identity (position) play the same role when focusing on the collective. Once this shift has been made, an institution is created where individual heuristics become “unmoored” from their original social structure, and by entering the cultural structure, “it is these free-floating heuristics that we call institutions” (Martin, 2009: 339).

Martin (2009) also finds, based on the earlier sociometric work of Feld and Elmore (1982) and White (1992), that these tradeoffs are not binary, but ternary. Through analysis of three heuristics that are used by people to form collectives, Martin reveals that the complementarity between social and cultural structure may be expressed in three different ways in social groups. Martin shows how these three heuristics are mutually exclusive, in that using more of one means using less of the others, a ternary complementarity. 2 A social group is constrained to place shared weight on each heuristic, leading to a social organization for that particular balance of heuristics. I draw a parallel in this paper between these “styles” of social organization and three notions of freedom or equality arising in political science (Anderson, 2017). As agents adjust their representations to be more collective, higher bias models increase the equality of agents, while reducing their freedom to be individuals. These same higher bias models (hedgehogs) are more secure and robust against changing environments, that is, they have lower variance. Since there are three ways for agents to “be” a collective, there are three associated types of freedom and equality, as agents adjust three, mutually complementary tradeoffs (Hoey, 2021).

I show in this paper that these ternary complementarities are equivalent, and can be modeled with a two-level Bayesian model. Using a free energy based approach, I show how three dimensions of uncertainty (or bias) are revealed as three sets of parameters in the hierarchical model. These parameters represent three capacities of agents for representing information at a concrete or abstract level. Finally, I connect the three parameters of the Bayesian model to the three heuristics of cultural/social structures, and the three freedoms discussed above.

I start by reviewing related material from economics and psychology, and then introduce the basic concept of complementarity, followed by neuroscientific, social psychological, sociological, and economic versions of it, knitted together as expressions of the same thing. I then discuss how three different complementarities lead to three social structures and three notions of political freedom and equality. Following this, I introduce a simplified free energy model that can be used as a computational basis for linking together these various complementarities. Lastly, I go through a set of descriptive examples showing how this computational model could be used to explain some social and political behaviors.

Related work

An epistemic division of labor is implied by the formation of a collective, one that is in constant flux as the world interacts with itself. This division of labor involves a tradeoff, between what is beneficial to the group and what is beneficial to the individual. Many modern economic, game theoretic and artificial intelligence theorists account for this by using a two-factor preference or utility function, with one piece for the individual utility, and a separate piece for the utilities of others in the group, which are assumed to be known (a classic original paper is by Fehr and Schmidt, 1999). However, Adam Smith called such a modification of preference a “feeble spark of benevolence” (Smith, 1759: 159), and pointed to a “stronger power, a more forcible motive, […] reason, principle, conscience” (Smith, 1759: 159), which he associates with a love of honor, and a desire to avoid scorn. I argue here that praise and scorn (and other social emotions like shame and guilt) are in fact ladled out by the person being praised or scorned. He looks at himself through the mirror of society 3 and thus “man has […] been rendered the immediate judge of mankind” (Smith, 1759: 151). How should an agent know how to ascribe praise and scorn to his own actions? He is so guided through his learning process, and by emotional signaling from others, to construct exactly that world in which he will feel such praise and scorn for his own actions.

One may ask why this desire for praise and loathing of scorn should not simply be a modification of preference. To make use of such a preference, an individual would need to know which of his actions will elicit praise and which will elicit scorn. To do that, he would need to maintain a model of each other person he interacts with, in essence, a theory of mind (ToM), in which the beliefs, desires and intentions of other agents are inferred from their behaviors. Such approaches arise from a philosophical tradition of methodological individualism: each agent is considered to be an independent, intelligent entity who can believe, desire, and intend independently of any other agents. Intelligent systems based on methodological individualism typically maintain an (incomplete) model of each other agent (Doshi and Gmytrasiewicz, 2009; Sutton, 2022), and use inductive (theoretical) or deductive (simulation) processes to plan the best joint actions to take. In such approaches, agents and their behaviors are measurable, objective elements that are responsive to other agents’ actions, and these actions are completely under the actor’s control. Although the view of the mind as being able to infer a model of other minds has become nearly incontrovertible in psychology (Goldman, 2012), proposals that go beyond theory of mind are being investigated (Gallagher, 2008; Kiverstein, 2011; Overgaard, 2017; Slors, 2012), and the ideas presented here fall into this category. These proposals are for a “minimal theory of mind” in which “we have one system for computationally efficient but inflexible mindreading and another system for flexible but cognitively demanding mindreading” (Slors, 2012: 20). In this minimal view, social emotions such as praise and scorn take center stage as strong forces for group participation.
This view of synchronized diversity in groups means abandoning ToM as an explanation for individual behavior, advocating instead for a theory in which agent A internalizes marginal beliefs about what all other agents in A’s group feel that A should be doing. The marginal belief is an estimate about how other agents feel on average, collectively, and makes salient the possible futures it is consistent with. Individuals can only optimize preferences over these possible futures: “…all rational thought moves within a non-rational framework of beliefs and institutions” (Hayek, 1960: 269). How they do this is based on which sets of heuristics they use: how they manage uncertainty.

I emphasize that this marginal belief is not reducible to globally shared modifications of individual preference. Suppose that people had individual preference functions, each with an additional term representing the group. The additional term (primarily its weight relative to the individual term) needs to be shared among all group members. There is nothing preventing an agent in this situation from modifying this shared weight to her advantage. When she does so, everyone does so, and the shared weight vanishes.

Mechanism design

The modern economic construct most similar to this idea is that of mechanism design, in which a game is constructed so that the decision-theoretically rational move of each player includes adjustments for benefiting the group (Myerson, 1991). Each player acts as an individual, but the rules of the game are such as to limit his opportunity to only those actions that may also benefit the group. Most decision-theoretic approaches assume a principal, however, who fixes the mechanism. Further, it is assumed that the mechanism must be incentive compatible, that is, agents must prefer (have incentive) to follow the mechanism, given their individual utility functions. The approach I am presenting does not make either of these assumptions. Instead, the rules of the game are those patterns of neural activity that are brought into play by a given social context. Those not brought into play, those not made salient, and those that do not consume energy, are those which do not carry this group benefit alongside the benefit to the individual. The agent simply never notices these other, non-social options. No principal is needed (unless one considers the entire society as a principal), and agent preferences may not be aligned with the mechanism imposed on them. For example, many might prefer to go topless in the summer when it’s hot, but would simply not see that as an option, even though there is nothing preventing it. The social stigma associated with certain social prescriptions runs deep, and blinds many to opportunities. A similar process occurs in scientific investigation, in which a paradigm sets the rules brought into play, and the resulting scientific experiments benefit the accepted paradigm (Kuhn, 1962).

Dual-process models

The approach I am presenting in this paper is closely related to Bayesian dual-process models often used in cognitive science. Oaksford and Chater (2001) discuss how logical reasoning is often difficult for humans, especially in defeasible or ambiguous situations, and how probabilistic models and Bayesian reasoning can give more parsimonious explanations for human deviations from rationality. The ideas I am discussing fall very clearly under the same umbrella, and tackle the important question of “the balance of System-1 versus System-2 processes in human reasoning” (Oaksford and Chater, 2001: 356). This balance is well studied in social psychology (Chaiken and Trope, 1999), but many different terms are used to refer to the two levels of processing. “Cognitive” processing is often referred to as deliberative, reflective (Ortony et al., 2005), conscious (Smith et al., 2019) or “System 2” (Stanovich and West, 2000), whereas “emotional” processing is called automatic, routine (Ortony et al., 2005), or “System 1” (Stanovich and West, 2000). Behavioral economists have brushed against computational dual-process models by proposing a variety of mechanisms that explain the experimental evidence of pro-social (e.g., cooperative) behavior in humans. Early work on motivational choice (Messick and McClintock, 1968) proposed a probabilistic relationship between game outcomes (payoffs) and cooperative behavior. This led to the proposition that humans make choices based on a modified utility function that includes some reward for fairness (Rabin, 1993) or penalty for inequity (Fehr and Schmidt, 1999). Modifications to the utility function based on identity have also been proposed (Akerlof and Kranton, 2000). A similar idea was taken up by Hoey et al. (2021), in which the sociological affect control theory (Heise, 2007) is used as a “System 1” process encoding societal norms and prescriptions.
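To make the notion of a modified utility function concrete, the sketch below implements the inequity-aversion form associated with Fehr and Schmidt (1999), in which a player's material payoff is reduced by a weighted penalty for earning less than others and a second weighted penalty for earning more. The function name, argument names, and the numerical values in the usage note are illustrative assumptions, not values from any of the cited studies.

```python
def fehr_schmidt_utility(payoffs, i, alpha, beta):
    """Utility of player i under an inequity-aversion utility function.

    payoffs: material payoffs, one per player
    alpha:   weight on disadvantageous inequity (others earn more than i)
    beta:    weight on advantageous inequity (i earns more than others)
    """
    n = len(payoffs)
    if n < 2:
        raise ValueError("inequity aversion needs at least two players")
    own = payoffs[i]
    # Total shortfall of player i relative to every better-off player.
    envy = sum(max(x - own, 0.0) for j, x in enumerate(payoffs) if j != i)
    # Total surplus of player i relative to every worse-off player.
    guilt = sum(max(own - x, 0.0) for j, x in enumerate(payoffs) if j != i)
    return own - alpha * envy / (n - 1) - beta * guilt / (n - 1)
```

For example, with payoffs `[2, 8]`, `alpha=1.0`, and `beta=0.5`, the disadvantaged player's utility is -4.0 and the advantaged player's is 5.0. Note that the weights are fixed parameters of each individual, which is exactly the feature that the marginal-belief account in this paper does not rely on.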

Evans (2008) differentiates between parallel-competitive (PC) and default-interventionist (DI) dual process models. In PC, instrumental (System 2) plans of action are, if used sufficiently, hard-coded into an associative network that can later be quickly retrieved by System 1, and which then competes with ongoing System 2 reasoning for a given situation. In DI, the System 1 process sets a context in which the System 2 process can reason. The dual parallel constraint networks of Glöckner and Betsch (2008) are an example of DI in which the model has a “primary” network that rapidly settles to a maximally coherent description of the context and the revelation of an option to take, and a “secondary” network that is called upon if the primary network cannot find consistency.

In cognitive science, there are many examples of hierarchical or “dual process” models. Consider a recent example in Hawkins et al. (2023), which uses a Bayesian hierarchical model of language to explain a number of effects, such as the convergence to efficient communication strategies in a repeated reference game. Their model has three levels and three associated parameters, much like the model I discuss in this paper. The results in Hawkins et al. (2023) are applied to a specific case of a reference game, meaning that elements of the model take on more specific meanings and functional forms, but the underlying social uncertainty principle is the same. Some results in Hawkins et al. (2023) are presented over a range of parameter settings, but the main results are shown for one specific setting that highlights the effect they are looking for. The model I am presenting in this paper shows how the parameters are arbitrary, but mutually constraining, and is able to model what effects will occur with different parameter settings.

Basic concepts

In this section, I review complementarity from mathematical, neuroscientific, and social-psychological perspectives.

Complementarity

In physics, conjugate variables are sets of variables that are complementary in that a signal can be represented in one or the other, but not both or neither. 4

Further, it is often more helpful to frame a situation in terms of one feature or the other. Features f1 and f2 of some object are conjugate if (Goldstein et al., 2002). 5

Features related in this way exhibit complementarity because, as the measurement precision of one feature increases, the precision of the other must decrease, and so there is an associated (non-quantum) uncertainty principle,
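As a hedged sketch of the two relations referred to in this subsection, the conjugacy condition and the associated uncertainty principle can be written in the form below. This assumes the classical-mechanics reading in which one feature is the derivative of the action with respect to the other, matching the later verbal statements "abstractum = derivative of action with respect to concretum" and "cultural structure = derivative of action w.r.t. social structure." The symbol $S$ for the action and the unspecified positive constant $k$ are notation introduced here for illustration.

```latex
% Conjugacy: f_1 is the rate of change of the action S with respect to f_2.
f_1 = \frac{\partial S}{\partial f_2}

% Associated (non-quantum) uncertainty relation, with k > 0 left unspecified.
\Delta f_1 \, \Delta f_2 \;\ge\; k
```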

Neuroscientific complementarity

Gilead et al. (2020) describe a model of the functioning of the human brain as a hierarchical set of levels of abstraction, each of which has a two-fold structure. The abstractum is a general description of the situation at some level, while the concretum is the specifics of the situation at that level. A pair of concreta and abstracta lie in two distributed functional networks in the brain, and are mutually exclusive in the sense that information must be represented in one or the other, but not both. Shapira et al. (2012) review this as an “accuracy/detail” tradeoff that relates to the bias/variance tradeoff in machine learning. As something unknown is represented in greater and greater detail (lower bias model, less abstraction), it will be more variant in the world (higher variance), meaning that training the model on different instances of the same abstract category will result in different models. As the unknown is represented more and more abstractly (higher bias model), it will be less variant in the world. Gilead et al. (2020) use an example of trying to predict what a blind date will look like. A low bias, high variance model would be a complete description of all the characteristics of the date (e.g., 5′11″, blonde hair, blue eyes), but it would often be wrong. A high bias model would be “like a human,” which would be a poor description of any specific date, but would be correct in almost all cases (low variance).

Thus, I conjecture that the neuroscientific abstractum and concretum of Gilead et al. (2020) are conjugate:

abstractum = derivative of action with respect to concretum.

That is, the abstraction of a concept with respect to an action is the change of that action with respect to concrete instances of that concept. The action can be a physical interaction with the world or, more broadly, a change in mental or emotional state. As previously noted (footnote 5), it is more like a measure of energy flow than a discrete entity.

Consider also that neural processing (projection, posterior calculation) of abstracta and concreta will mutually affect each other, but the order in which they occur is significant. If I know exactly what the situation is (to precise detail, so this rules out most interactions with humans), then the abstractum is not necessary, and so is maximally variant. However, in an ambiguous situation, the concretum is more variant and the abstractum must be more precisely defined to obtain action. Conversely, if the abstractum is perfectly defined, all possible changes in the concretum still need be accounted for, and so the concretum would become maximally variant (relatively). If the abstractum is loosely defined, a single concretum is precisely specified. What this implies is that the degree of variation in abstractum and concretum must be related by a (non-quantum) uncertainty principle:

Equation (3) may be used to give a simple interpretation to the results in Shapira et al. (2012), in which it is shown that people use a more abstract categorization in situations of increased uncertainty. Indeed, increases in concrete uncertainty lead to ΔC increasing, which according to equation (3) implies that ΔA must be decreasing. As ΔA is associated with modeling uncertainty, this implies that the abstracta will be forced to use simpler models.
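The step from an increasing ΔC to a decreasing ΔA can be made explicit in a small worked case. This is a sketch under two assumptions that the text does not state in this form: that the uncertainty relation has a product form with an unspecified constant $k > 0$, and that the agent operates at the bound, so that the abstract uncertainty is as small as the relation allows.

```latex
% Assumed product form of the abstractum/concretum uncertainty relation.
\Delta A \, \Delta C \;\ge\; k , \qquad k > 0

% At the bound, the smallest admissible abstract uncertainty is
\Delta A_{\min} = \frac{k}{\Delta C}

% which falls as the concrete uncertainty grows:
\frac{d\,\Delta A_{\min}}{d\,\Delta C} = -\frac{k}{(\Delta C)^{2}} < 0
```

Read this way, greater concrete uncertainty lowers the floor on abstract uncertainty, which is consistent with the reported shift toward simpler, more abstract categorizations.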

Social psychological and sociological complementarity

The social psychology of complementarity originated with Bohr (1950) and was later taken up by Stephenson (1986a, 1986b). Stephenson’s idea was to do a factor analysis (called Q) in the space of people (across variables of interest) rather than one (called R) in the space of variables of interest (across people) (Burt, 1940). In doing so, the complementarity of these two approaches was revealed. In the one, Q, the world is viewed in terms of a cultural structure, that is, the inter-relationships between networks of types of people (e.g., a neighbor is someone who is helpful and friendly). In the other, R, the world is viewed in terms of a social structure, that is, how specific individuals are connected to each other (e.g., I see my neighbor Chad every day) (Wallace, 1983). It is perhaps easiest to think of social structure as a property of individuals (e.g., I am connected to Chad and he is connected to me), while cultural structure is a property of the enclosing nested set of groups to which an agent belongs (e.g., the particular social practices and norms of my neighborhood, enclosed in the western cultural feelings about neighborliness more generally). Factoring people in the space of features (R) gives a subspace spanned by vectors of features. Factoring features in the space of people (Q) gives a subspace spanned by vectors of people. These two subspaces are complementary: one (R) is estimating variance across data in a space of features, while the other (Q) is estimating variance across features in a space of data.
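The difference between the two analyses can be seen in the first step of each, which is to correlate either the columns or the rows of the same data table. The sketch below is a minimal illustration and stops short of a full factor analysis: the `ratings` table is hypothetical data invented for this example, and `pearson` and `correlation_matrix` are helper names introduced here.

```python
import math


def pearson(u, v):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(u)
    mu, mv = sum(u) / n, sum(v) / n
    cov = sum((a - mu) * (b - mv) for a, b in zip(u, v))
    su = math.sqrt(sum((a - mu) ** 2 for a in u))
    sv = math.sqrt(sum((b - mv) ** 2 for b in v))
    return cov / (su * sv)


def correlation_matrix(vectors):
    """All pairwise correlations among a list of vectors."""
    return [[pearson(u, v) for v in vectors] for u in vectors]


# Hypothetical ratings: rows are people, columns are features.
ratings = [
    [5, 4, 1, 2, 5, 3],  # person 0
    [4, 5, 2, 1, 4, 2],  # person 1
    [1, 2, 5, 4, 1, 4],  # person 2
    [2, 1, 4, 5, 2, 5],  # person 3
]

people = ratings
features = [list(col) for col in zip(*ratings)]  # transpose of the table

r_matrix = correlation_matrix(features)  # R: features x features, across people
q_matrix = correlation_matrix(people)    # Q: people x people, across features

if __name__ == "__main__":
    print(len(r_matrix), "x", len(r_matrix[0]), "R matrix")
    print(len(q_matrix), "x", len(q_matrix[0]), "Q matrix")
```

In this toy table the Q matrix groups persons 0 and 1 against persons 2 and 3 (types of people), while the R matrix groups features that vary together across people. The two matrices are built from the same data but live in spaces of different size.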

Now consider the size of these spaces. There are potentially thousands of features one can use to describe a person: race, age, clothing style, eyelash length, etc. In appraising a person in terms of features (R), one gets a very high dimensional vector, leading to a computationally intensive process. In contrast, appraising a person in terms of people (Q), one can use a much lower dimensional space, resulting in less computational overhead. Since cultural and social structures are complementary, I may re-write the definition of complementarity (equation (1)) as:

cultural structure = derivative of action w.r.t. social structure.

The cultural structure defines how my action should change as a function of changes to the social structure. This duality between structure (“objective pattern of relationships”) and culture (“subjective understandings guiding relationship formation”) is discussed at some length by Martin, who points out that the content of a relationship “must be one that makes reference to [the] subjectively understood action imperatives…” (Martin, 2009, all quotes p. 17).

This type of reasoning is well modeled by the social psychological Bayesian Affect Control Theory (BayesAct) (Hoey et al., 2016), which fuses the sociological concepts of identity with a control principle and a computational implementation, and is a model that stresses emotional consistency as a driver of action (i.e., is something humans seek). BayesAct is a sociological model in that it assumes individuals are aligned with their social embeddings (groups they belong to), but allows for individual deviance from the social average. That is, an individual may not share these average social meanings, perhaps because they are from a different cultural or sub-cultural group (e.g., people who distrust Western medicine). I will not go further into details of this method, but I overview these models briefly in the supplemental material.

Three freedoms and three forms of dominance

The search for a mechanism that connects individuals with collectives can be approached from the collective side, or the individual side. In the former (this section), I start by describing different types of freedoms from a political perspective, based on the work of Anderson (2017). In the latter (next section), I start from individual heuristics for connecting with others, based on the work of Martin (2009). In both cases, the complementarities that I find are ternary, not binary. Once I have sketched out these ternary complementarities, I consider a Bayesian model that exhibits the same properties.

Table 1. Three dimensions of freedom/equality in tabular form. Columns are the three freedoms, while rows are (1) the advantage gained by having that freedom; (2) the type of uncertainty; (3) the type of freedom (Graeber and Wengrow, 2021); (4) the corresponding type of dominance/equality; (5) the advantage gained by losing the freedom; (6) how private property is ensured when the freedom is lost.

The word “negative” sometimes causes confusion in this context, so I will clarify. Positive freedom means there are things you can do if you want: there is opportunity. Negative freedom means there are

The distinction between negative and republican equality is examined in depth by Durkheim (2014/1893), who labels them as organic and mechanical solidarity. Durkheim’s analysis is primarily about the social changes occurring during the industrial revolution, where the collectivity shifted from mechanical solidarity (solidarity through similarity, homogeneity of the population, rule by a prince) to organic solidarity (solidarity through division of labor, heterogeneity of the population, specific and complementary roles for individuals). Further, Durkheim (2014/1893) points to a difference in how the law is applied in each society. While mechanical solidarity requires punishment or retribution to enforce conformity, organic solidarity requires restitutive legal measures to ensure sufficient equality. I return to these different forms when discussing state emergence.

To summarize, the three freedoms are as follows:
• Positive freedom is opportunity: as positive freedom is increased, more opportunities for action are added. Opportunities for action are usually social opportunities, for example, other people. It is positive because as it increases, opportunities increase (added, positive).
• Republican freedom is freedom from violence, or having no one coercing anyone else to do something (usually with threats of force). It is also negative in the sense that it is increased by the relaxation of constraints (e.g., threats). However, these are concrete threats to a person or their property or their family, while negative freedoms are abstract constraints on the individual.
• Negative freedom is freedom from constraints from others (or the “freedom to build new social worlds” (Graeber and Wengrow, 2021)). It is negative because as it increases, agency increases, and constraints decrease (subtracted, negative).

Heuristics for forming collectives

Martin (2009) describes three mutually exclusive heuristics that are observed to occur in human collective formation, based on sociometric studies and reflecting the work of Feld and Elmore (1982) and White (1992). These three heuristics are used by humans when dealing with ambiguity, and I can connect them with the three freedoms discussed in the previous section as follows.
• Choose those who are chosen by others. This heuristic operates at the individual level and implies preferential attachment, meaning a reduction of positive freedom (there are fewer opportunities with only one patron).
• Choose alter if alter chooses ego. This heuristic operates at the level of dyads and implies reciprocity, meaning a reduction in republican freedom (to be really equivalent, the dyad must be controlled by a third party as neither can take control unilaterally).
• Form a collective or small world. This heuristic operates at the group level and implies transitivity, meaning a reduction of negative freedom (one must be labeled as a group member).
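The three heuristics leave different footprints in a network of choices, and each footprint can be measured. The sketch below is an illustration under assumptions, not Martin's own procedure: it treats choices as directed pairs (ego, alter) and uses three standard network statistics (in-degree, reciprocity, and transitivity) as proxies for the three heuristics. The `choices` set is hypothetical data.

```python
from collections import Counter


def in_degree(choices):
    """Proxy for heuristic 1 (popularity): how often each person is chosen."""
    return Counter(alter for _, alter in choices)


def reciprocity(choices):
    """Proxy for heuristic 2: share of choices ego -> alter that are returned."""
    if not choices:
        return 0.0
    return sum((alter, ego) in choices for ego, alter in choices) / len(choices)


def transitivity(choices):
    """Proxy for heuristic 3: share of paths i -> j -> k closed by i -> k."""
    paths = [(i, k) for i, j in choices for j2, k in choices if j == j2 and i != k]
    if not paths:
        return 0.0
    return sum(pair in choices for pair in paths) / len(paths)


# Hypothetical choice network: each pair (ego, alter) means "ego chooses alter".
choices = {("a", "b"), ("b", "a"), ("b", "c"), ("a", "c"), ("d", "c")}

if __name__ == "__main__":
    print("in-degree:", dict(in_degree(choices)))
    print("reciprocity:", reciprocity(choices))
    print("transitivity:", transitivity(choices))
```

In this toy network, "c" is the most frequently chosen person, two of the five choices are reciprocated, and both two-step paths are closed. A group that leans on one heuristic would be expected to score high on the corresponding statistic and lower on the others.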

Martin’s analysis starts by noting that observable characteristics, if stable and easy to estimate, are the source of preferential attachment (e.g., obey the bigger agent). However, if such characteristics are unstable or difficult to estimate (as in ambiguous social situations), then more interaction is needed. Thus, a complementarity is revealed between how precisely definable the environment is, and how much interaction with others is needed. To complicate matters, one can interact with others in two different ways, based on dyadic relationships of equality or on collective relationships of equality. Once again, a ternary structure is revealed between three complementarities. The apices of this ternary structure can also be related to the three notions of freedom discussed above.

I note these also appear related to the set of three evolutionary heuristics proposed by Nowak (2006). Kin selection involves choosing those whom others (your kin) choose, evolutionarily. Reciprocity maps to Martin’s second heuristic, while Nowak’s indirect altruism encourages transitivity (A’s action on B is observed by C, such that C expects the same from A, depending on C’s relationship with B), as Martin’s third heuristic.

Uncertainty

The biggest hurdle faced by an agent attempting to optimize success by modeling their social world is uncertainty. I take uncertainty to be grounded in the physical world, of which one component is the agent doing the optimization. Taking a Bayesian viewpoint, uncertainty is a degree of belief. However, it is also a property of the world, as in “I am uncertain whether the tree bough will break in this great wind.” The tree bough’s structural integrity is not easy to predict.

Uncertainty is handled by people in three complementary ways, which correspond to three things at play: the individual, the group, and the connection between the individual and the group. Another way of saying this is the objective (external, the group), the subjective (internal, the self), and the connective (membership in the group). The representations of the social context in an agent’s brain or mind pervade reason and thought, and the way in which each agent in each context trades off the social and individual contexts will be defined by, and will define, the social order and thus reality: “the relationship between the individual and the objective social world is like an ongoing balancing act” (Berger and Luckmann, 1967: 134).

Returning to Table 1, the second row (“Uncertainty”) shows the different types of uncertainty, and the fifth row shows the corresponding certainty/security that comes with a reduction in that uncertainty/freedom. Positive freedom corresponds to uncertain knowledge: the external states are highly sensitive to the ecological niche, including the actor himself. Each agent has many different models, or ways of seeing the world, and can exercise discretion over which one is used in a given situation. The actor may be in a state with great uncertainty (e.g., waiting for a test result), or in one of great certainty (where he is an expert, or where he is following a rational plan designed by an expert). Republican freedom corresponds to uncertain safety: without a coercive force enforcing a plan, agents’ individual plans are likely to cross paths, possibly leading to conflict and danger. As this freedom is reduced, safety is increased through the protection of the prince’s use of force. Finally, negative freedom corresponds to existential uncertainty, in the model of the “self” (which may include a model of the world as well). High confidence is usually a hallmark of a lot of negative certainty, and may be increased through rituals and schismogenesis.

Thus, different uncertainties in a two-level model lead to different freedoms and certainties in a social group. As I show in the next section, these three dimensions are a basic property of hierarchical Bayesian models, but it is my construction to map these three dimensions to three types of freedom. Since these three dimensions are properties of a Bayesian hierarchical model, they are highly likely to be at play in each individual’s brain, which means they are highly likely to be at play in how cultural and social structures develop. I further hypothesize that these same mechanisms are at play collectively. That is, both group and individual must be doing this in the same way.

Free energy

In this section, I derive a simple Bayesian model, used as a minimal working example, that ties these different complementarities together. The key idea is that it is the management of uncertainty across a set of (at least two) complementary levels of abstraction that defines how a social system connects individuals with the collective. I approach this from an information-theoretic standpoint, starting with a discussion of free energy.

The complexity of an agent’s environment, defined as the total number of configurations accessible to the agent, is related to the free energy (MacKay, 2003). A configuration is one potential arrangement of the state of the world in the future. The accessible configurations are those that the agent can achieve as a function of its actions (and possibly exogenous events including the actions of other agents). The accessible configurations are defined by (and define) the agent’s econiche (Bruineberg and Rietveld, 2019). This is the expected set of temporally dynamic configurations of other agents and objects which are accessible to the agent in its current environment. The dynamic stability of these configurations is crucial to the establishment of a long-lasting equilibrium or steady state. I will say an agent is aligned with his econiche (and thus to all other agents and objects in it), if the agent is at an equilibrium in which the number of expected configurations is minimized (or at least the probability over them is maximized, and the free energy is minimized). An agent wishing to assess the free energy and to use it as a guide for action needs to model the relative probabilities of all the future configurations of the world. Whether these configurations are known or not, a successful agent must predict how likely each one is. Importantly, it must include itself within this prediction.
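As a rough illustration of the link between the number of accessible configurations and surprise, the sketch below computes the expected surprise (the Shannon entropy, in nats) of a distribution over configurations. The function name `expected_surprise` and both example distributions are assumptions made for illustration; the sketch does not compute a full free energy.

```python
import math

def expected_surprise(probs):
    """Average of -log p over a distribution on configurations (entropy, in nats)."""
    return -sum(p * math.log(p) for p in probs if p > 0)

# Two hypothetical econiches over the same 8 configurations.
uniform = [1 / 8] * 8           # every configuration is equally likely
peaked = [0.93] + [0.01] * 7    # one configuration is strongly expected

print(expected_surprise(uniform))  # equals log(8): all 8 configurations count
print(expected_surprise(peaked))   # smaller: few configurations are really expected
```

In this reading, an aligned agent is one whose econiche looks like the peaked case: the count of configurations is unchanged, but the probability mass over them is concentrated.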

As an agent’s world becomes more complex (e.g., with the addition of other social agents), this true free energy becomes intractable to model within an agent’s resource bound, and so the agent must approximate. The agent, primarily interested in evaluating how surprising outcomes will be, will need to average over all possible future configurations, something that it cannot reasonably do. It can, however, construct something that is always bigger (more surprising) than the surprise of the environment, known as the variational free energy. An agent’s variational free energy is an internal measure of how well its (approximate) model fits the real world, combined with the true degree of surprise (the true free energy). A very well matched agent will not feel surprised very often, whereas a mis-aligned agent will often feel surprised. As surprise is a key factor in survival, this concept is supported by an intuitive evolutionary argument. In fact, even evolution is thought to follow a similar optimization of surprise, leading to species that are better and better adapted to their econiches (Badcock et al., 2019). Minimizing this variational free energy is the same as moving toward a state of true free energy which is minimal, and to a model that is the best possible abstraction of the real world given the resource bound, and is consistent with the econiche in which it is embedded (Bruineberg and Rietveld, 2019; Friston, 2010). Typically the variational free energy is optimized in an iterative approach where expectations over the future given a set of models are combined with expectations over the models (including the agent itself and its goals).

The variational free energy can be written by considering that, to survive, an agent can be viewed as constructing a generative model of its sensory readings, o, known as the evidence and written p(o). Agents want to maximize the evidence, because they do not want to be surprised by o. Agents can maximize this by minimizing −log p(o), which is the definition of surprise and of the true free energy: the degree of predictability and expressiveness of the future, from an absolutely collective perspective. To accomplish this, the agent can infer a set of states, s, which constitute its belief about the true state of the world, s*, and sum out these states before taking the negative logarithm,

$$-\log p(o) = -\log \sum_{s} p(o, s).$$

Sometimes (as in the next section) this is conditioned on model parameters, $-\log \sum_{s} p(o, s \mid \theta)$. This expression will be difficult to compute due to the summation, but we can use a variational method to approximate it. This involves multiplying through by a function q(s), and then using Jensen’s Inequality to derive the most commonly used expression for (what is now) the Variational Free Energy:
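The steps just described can be sketched in the standard textbook form below, using the symbols already introduced. The sketch assumes the factorization p(o, s) = p(s) p(o|s) and suppresses the parameters θ; the label F for the bound is an assumption of this sketch.

$$
\begin{aligned}
-\log p(o) &= -\log \sum_{s} q(s)\,\frac{p(o, s)}{q(s)} \\
&\le -\sum_{s} q(s)\,\log \frac{p(o, s)}{q(s)} \\
&= \underbrace{\sum_{s} q(s)\,\log \frac{q(s)}{p(s)}}_{\mathrm{KL}\left[\,q(s)\,\|\,p(s)\,\right]}
\;-\; \underbrace{\sum_{s} q(s)\,\log p(o \mid s)}_{\text{accuracy}}
\;\equiv\; F .
\end{aligned}
$$

The inequality is Jensen’s, applied to the convex function −log. Equality holds when q(s) is the exact posterior p(s|o), in which case F reduces to the surprise −log p(o).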

The first term is the KL-divergence, or difference, between the variational approximation of the posterior, q(s), and the prior expectation p(s). This is the complexity given by the number of bits needed in the model to predict the future from the prior. Futures that are less predictable (from p(s)) require more complex models. The second term is the accuracy: the expectation of the outcomes given the state. Notice the same complementarity here between complex models that are highly accurate but fragile, and simple models that are not accurate, but robust: both optimize the free energy in different ways. The next challenge is that an agent cannot estimate p(o|s) in a predictive model simply because it has not observed o in the future, and must therefore do so in expectation given its current model.
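A small numerical check can make the complexity/accuracy decomposition concrete. The sketch below assumes a hypothetical two-state model with one fixed observation; the numbers in `prior` and `likelihood` and all function names are made up for illustration.

```python
import math

# Hypothetical two-state model and one fixed observation o.
prior = [0.7, 0.3]        # p(s)
likelihood = [0.9, 0.2]   # p(o | s) for the observed o

def surprise(prior, likelihood):
    """True free energy: -log p(o) = -log sum_s p(s) p(o|s)."""
    return -math.log(sum(p * l for p, l in zip(prior, likelihood)))

def variational_free_energy(q, prior, likelihood):
    """Complexity KL[q(s) || p(s)] minus accuracy E_q[log p(o|s)]."""
    complexity = sum(qs * math.log(qs / p) for qs, p in zip(q, prior) if qs > 0)
    accuracy = sum(qs * math.log(l) for qs, l in zip(q, likelihood) if qs > 0)
    return complexity - accuracy

def exact_posterior(prior, likelihood):
    """p(s | o), obtained by normalizing the joint p(s) p(o|s)."""
    joint = [p * l for p, l in zip(prior, likelihood)]
    evidence = sum(joint)
    return [j / evidence for j in joint]

s0 = surprise(prior, likelihood)
posterior = exact_posterior(prior, likelihood)
for q in ([0.5, 0.5], prior, posterior):
    # Every choice of q gives a value at or above the surprise.
    print(q, variational_free_energy(q, prior, likelihood), ">=", s0)
```

Setting q to the prior makes the complexity term zero but sacrifices accuracy, while setting q to the exact posterior pays some complexity to reach the bound exactly.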

Policies of action (mappings from sensor readings to motor commands) can be computed using this variational free energy approach, and yield a quite general class of normative models of intelligence based on active inference (Ramstead et al., 2019). This class includes solution techniques that can be used on the type of deep Bayesian model I am introducing in this paper. The computation of a policy of action resolves to inferences about model parameters, coupled with inferences about expected futures. The optimization is usually termed the free energy principle, which states that agents try to maximize control of their perceptions. That is, agents use their output to try to control their input, and only indirectly have effects on the world itself. This is fundamentally different from the traditional approach in artificial intelligence and engineering of mapping from input to output. In the free energy approach, an agent’s primary goal is to build a sufficiently flexible internal model of the world in which he is embedded, and of his actions in that world and their effects. The agent can do this in two ways: by constructing a better internal model, or by modifying the outside world directly, both aimed at making the environment less surprising. One way to modify the internal model is through a set of parameters that governs how precise/sensitive the model is with respect to the incoming data distribution, roughly corresponding to the flexibility/security tradeoff I have been discussing in this paper. To ensure the model has sufficient flexibility and accuracy, a Bayesian learning approach is followed, as described in the following section.

Bayesian learning

A Bayesian learning problem is one of computing a distribution P(m|d) over models, m ∈

This learning problem is difficult because of the denominator on the right side, also known as the partition function, the negative logarithm of which is the free energy, G. This summation is over a potentially very large set of models,
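A small numerical sketch of this learning problem may help. It assumes a finite, hypothetical set of three candidate models (coins with different biases) and made-up data; the function name `model_posterior` and all values are illustrative assumptions. With only three models the partition function is trivial to sum, which is exactly what stops being true for large model sets.

```python
import math

def model_posterior(priors, likelihoods):
    """Return P(m|d) for each model, plus G = -log(partition function)."""
    joint = {m: priors[m] * likelihoods[m] for m in priors}  # P(d|m) P(m)
    partition = sum(joint.values())                          # the denominator, P(d)
    posterior = {m: j / partition for m, j in joint.items()}
    return posterior, -math.log(partition)

# Hypothetical model set: three coins with different probabilities of heads.
biases = {"tails-heavy": 0.2, "fair": 0.5, "heads-heavy": 0.8}
heads, tails = 7, 3                                          # made-up data d
priors = {m: 1 / len(biases) for m in biases}                # uniform prior P(m)
# Likelihood of one particular sequence with 7 heads and 3 tails under each model.
likelihoods = {m: b**heads * (1 - b)**tails for m, b in biases.items()}

posterior, G = model_posterior(priors, likelihoods)
print(posterior)  # most of the mass falls on the heads-heavy model
print(G)          # free energy of the data under this model set
```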

Simplified model

As a minimal working example, consider the graphical model shown in Figure 1, in which o is the observations: evidence coming from the boundary states or sensorium, interpreted denotatively (i.e., translatable into object labels that denote real objects in the world). While X is the concrete denotative state, related to the denotative evidence o, Y is a more abstract, connotative state (i.e., related to abstract “feelings”). Action by the agent requires some direct connection to the outside world, through some actuator, and so is part of X, but may be mirrored in the connotative state Y. This minimal model will allow a mathematical formula for the tradeoff to be derived, and will serve as a basis for the following sections.

Figure 1. Graphical model used as a basis.

Parameters of this model are α, γ, and δ, which I consider here to be simple variances. That is, higher values of these parameters mean models that can handle more uncertainty, in the sense of more diverse inputs from the level below. In Figure 1, I have drawn the parameters (which parameterize the links pointed to) in squares, and the links between X, Y and O as generative. This is not necessary: the arrows can be removed without changing the analysis substantially. I am deliberately avoiding introducing temporal thickness, which, although important for action selection in general, does not substantially modify the analysis I present here.

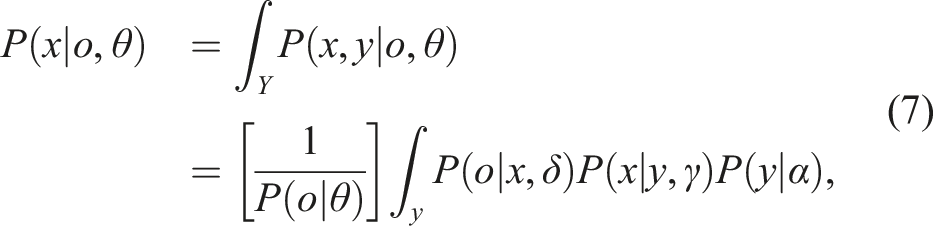

If using this model, an agent’s primary interest is to compute P(X = x|O = o, θ), or the probability of each of his states (including actions), x, given the evidence, o, and the model parameters, θ ≡ {α, δ, γ}. To do so, the agent must compute:

To better understand the mechanics of this model, I show a simple example of equation (8) using uni-modal Gaussian distributions over continuous valued spaces. That is, I will assume the following form for the partition function:

I now carry out the integrals in equation (9) in the simplest possible case where o, x and y all live in the same vector space. This is a highly unlikely latent space to discover, but any linear functions M would yield the same uncertainty relationships that I uncover with M = I, the identity function. I return to this question in the supplemental material, but for now, using M = I, equation (9) becomes

Each integral can be done by simply completing the squares (see supplemental material), yielding:

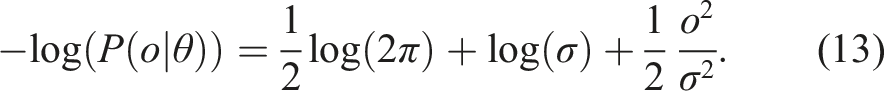

This is a measure of an agent’s individual free energy. I assume that an individual lives in a society that defines a free energy minimum, C. This minimum is the one at which agents in the society “fit” together best (or the minimum they have found so far). Thus, the individual agent is tasked with keeping this quantity minimal:

Returning to equation (5), this quantity is that over which the expectation is taken for the accuracy term in the agent’s approximation to the free energy (the variational free energy). Thus, decreasing σ to increase accuracy will result in an increase in complexity, which works in an opposing direction in terms of minimizing the variational free energy. This implies that the true variance in o must be matched by σ² in order to minimize the entire free energy, that σ² = α² + γ² + δ² must be constant (at least as constant as the agent’s econiche), and thus that X, Y and O must be jointly complementary at equilibrium: each pair of variables is complementary and induces a duality, an uncertainty principle, such that all three together form a ternary complementarity. In the restricted case where all M = I, this means the parameters must lie on a simplex, as shown in Figure 2.

Figure 2. Simplex on the three parameter dimensions of
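The additivity σ² = α² + γ² + δ² can be checked numerically for the M = I case. The sketch below is a minimal illustration under stated assumptions: a zero-mean Gaussian chain y → x → o, with γ, δ, and α used as the standard deviations of the three stages. Which parameter sits on which link is an assumption of this sketch (the sum is symmetric, so the conclusion does not depend on it), and the function names are hypothetical.

```python
import math
import random

def sample_observation(alpha, gamma, delta, rng):
    """One draw of o from a Gaussian chain y -> x -> o with identity maps (M = I)."""
    y = rng.gauss(0.0, gamma)    # abstract state (assumed zero-mean prior)
    x = rng.gauss(y, delta)      # concrete state centred on y
    return rng.gauss(x, alpha)   # observation centred on x

def surprise(o, alpha, gamma, delta):
    """-log of the Gaussian marginal of o, whose variance is the sum of squares."""
    var = alpha**2 + gamma**2 + delta**2
    return 0.5 * math.log(2 * math.pi * var) + o**2 / (2 * var)

rng = random.Random(0)
alpha, gamma, delta = 1.0, 2.0, 2.0
draws = [sample_observation(alpha, gamma, delta, rng) for _ in range(100_000)]
mean = sum(draws) / len(draws)
empirical_var = sum((d - mean) ** 2 for d in draws) / len(draws)
print(empirical_var)  # close to 1 + 4 + 4 = 9

# Trading one variance for another leaves the total, and so the surprise, unchanged.
print(surprise(1.5, 1.0, 2.0, 2.0), surprise(1.5, 2.0, 1.0, 2.0))
```

Because only the sum of squares enters the marginal, any reallocation among the three parameters that preserves the sum is invisible at the level of the observations, which is the sense in which the three are jointly constrained.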

Any linear M would also work the same (see supplemental material), but nonlinear M would be yet another story. Temporal depth could be added to this analysis, only encumbering the equations with additional (historical) values for x and y (and possibly θ). Once this is added, the model becomes a hierarchical partially observable Markov decision process, or h-POMDP, which has long been studied in operations research, decision science, and artificial intelligence (Åström, 1965). POMDPs are foundational models for artificial intelligence, and come with a large array of machine learning methods for their learning, solution and usage (Boutilier et al., 1999; Kaelbling et al., 1998).

One interesting thing to note is that while the parameter θ lies on a simplex if one is attempting to keep free energy constant, the variance of the posterior P(x|o, θ) given by equation (7) must lie on a simplex, but in the “inverse” space of

This overly simplistic model of information processing is meant only to show the three complementarities in the processing of information by a hierarchical Bayesian model. In the following, I will discuss examples of how this management of uncertainty maps to the dimensions of freedom and equality previously discussed.

Uncertainties and freedoms

I can now return to the discussion of heuristics and freedoms and draw a parallel by noting that, as one of an agent’s uncertainty parameters increases, the chance that it is using a model similar to those of others in its group decreases, implying that the freedom of individuals (to be different from others) has increased. Conversely, if uncertainty decreases, then coordinated agents will be much less free to be individual, and will become more homogeneous, more equal. In this sense, freedom and equality are opposites. Increasing equality means decreasing freedom, and vice versa. Returning to the hedgehog and fox, the fox represents freedom, individuality, flexibility and heterogeneity, while the hedgehog is equality, sociality, security and homogeneity. This pits the individual against the group, the “figure it all out alone” against the “do what everyone else does” strategies. While the fox sails close to the Charybdis of irrelevance, the hedgehog edges toward the Scylla of prejudice.

Now consider the simple Bayesian model from the previous section from an individual agent’s perspective. If

Consider now if

Finally, if

Individuals, groups, and social movement

This section connects the individual and group using the Bayesian model introduced above, and discusses some ideas of how social movement and change may be represented. In the following, I will simplify and draw the simplex as shown in Figure 3(a), in which I use dashed arrows to denote movement through this cognitive space, but off of the simplex and toward the origin. Solid arrows are the opposite (on the simplex, or off the simplex toward ∞). I will use the notation

Figure 3. Different configurations of a group’s movements: (a) movement of a social group across the simplex, where dashed arrows are off the simplex toward the origin and solid arrows are off the simplex toward ∞; (b) a small group of individuals moves coherently; and (c) incoherently.

Agents have an incentive to “copy” their neighbors to a certain degree, as this is a known route to survival (Deutsch, 2011; Henrich, 2016). Thus, I can draw (Figure 3(b)) a small group of people on the simplex, all moving in a coherent direction, or (c) I can imagine another small group moving in a rather more incoherent fashion. I can also summarize with a single arrow showing the whole group moving as in Figure 3(a).

I can now inquire as to what relationship these parameters and the simplex have to social organization and human intelligence, or how individual processing of information can lead to coordinated group behavior. To gain insight into this, I first look to James (1890) and Peirce (1955), founders of the pragmatic approach, who each discussed the nature of meaning as it relates to action. The pragmatic maxim holds that what is represented in the brain, language in particular, is not the objective world outside of us, but rather a complete description of the world outside and inside, and the relationship between the two (Peirce, 1955). For example, my concept of friend includes everything about a friendship, including what my actions might be within it. Once I (abstractly) decide someone is not a friend, I cross a very rapid gap in my comprehension of the world. This gap is what James referred to as the “flights and perchings” of thought (James, 1890: 243), and what underlies binocular rivalry (Hohwy et al., 2008). The gaps may also be the basis of transfer learning and mental travel, as I can cross the gap metaphorically, constructing potential solutions without having ever encountered the situation (Holyoak and Thagard, 1996; Lakoff and Johnson, 1980). Similarly, much phenomenological thinking revolved around the relationship between the world and an embodied agent’s perceptual and motor systems (Gallagher, 2020; Heidegger, 1927). The Gibsonian view, for example, was direct perception of the affordance of something, or how it is related to the perceiver’s actions (Gibson, 1986). Glenberg (1997) examines the role of memory and embodiment in making this connection, which allows for effective suppression of environmental noise and thereby for embodied action.

The analysis above shows that a two-level Bayesian model has three variance parameters, and these three parameters are co-dependent given the goal of estimating free energy or a posterior probability distribution over agent actions (e.g., settings of actuators) given evidence (e.g., sensor readings). Their co-dependence means that increasing one leads necessarily to a decrease in at least one of the others, in order to maintain the same variance in the posterior. This negative or inverse co-variation can be represented with a simplex as described by equation (12). If members of a group were processing information differently, they would be making different tradeoffs between individual and group. While some degree of variation will be unavoidable, group members not “fitting the mold” (e.g., free riders) will be ostracized from the group. I therefore argue that group members will also share these model parameters, and will be located at roughly the same location on the simplex in their model space. In fact, the location of any society on the simplex is more like a cloud of points that, on average, sits roughly on the simplex, but may be overall more or less equal, or more or less equal on any dimension. One could then picture the global political world as a constellation of these clouds of points, each of which represents a particular group or subgroup (and there may be hierarchical structure within the clouds).
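The picture of a society as a cloud of points sitting roughly on the simplex can be sketched as follows. The group members, the shared budget of 9.0 (standing in for the constant σ²), and the name `simplex_offset` are all hypothetical values chosen for illustration.

```python
def simplex_offset(alpha, gamma, delta, budget):
    """Signed difference between one agent's total variance and the shared budget."""
    return alpha**2 + gamma**2 + delta**2 - budget

# Hypothetical group: each tuple is one member's (alpha, gamma, delta).
group = [(1.0, 2.0, 2.0), (1.2, 1.9, 2.0), (0.9, 2.1, 1.9), (1.0, 2.0, 2.05)]
budget = 9.0  # assumed constant sigma^2 set by the econiche

offsets = [simplex_offset(a, g, d, budget) for a, g, d in group]
mean_offset = sum(offsets) / len(offsets)
print(offsets)      # individual members scatter on either side of zero
print(mean_offset)  # the group average stays near zero
```

A member whose offset grows large relative to the rest of the cloud is, in the terms used above, one who is not “fitting the mold.”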

A group of agents who are processing information using parameters

The second mechanism for social movement is larger-scale exogenous events which affect many group members at once. For example, a natural disaster throws whole groups of people off the simplex by massively decreasing

There may also be physical, structural, or emotional constraints on a group that cannot be circumvented, and which reduce the “operational” region of the simplex in a third mechanism for social movement. For example, widely dispersed hunting and farming lands might implicitly prevent any authority developing (and thus prevent

In the fourth and last mechanism, different forms of inference may be more salient in different regions of the simplex and take more or less important roles at different junctures in history, harking back to Hegel’s dialectic (Hegel, 1807). Such ideas have been explored in a free energy formalism (Beni and Pietarinen, 2021). I explore this idea further in Hoey (2022), but have mostly left this for future work.

Therefore, the structures at play in the society, formed by different processes of uncertainty management, as well as ecological and environmental pressures, combine to locate a group and to shape its trajectory. What humans do next is attempt to form larger and larger groups. Why do they do this? One reason is inequality between groups, leading to some wanting to subject their neighbors to their will, possibly due to a perceived need to differentiate their group from their neighbors (“we are different than them”). This “schismogenesis” (Graeber and Wengrow, 2021) often relies on “structures of refusal” and exists specifically to enhance group membership and motivated cooperative action.

Descriptive examples

For Plato, the abstract idea of a generic object (say, a bed) has an existence of its own, and is superior in some way to all instances of that object (e.g., all individual, real beds). The seventeenth-century disengagement from this restriction allowed for a more sophisticated analysis of the nature of knowledge, resulting in its attribution to three factors proposed by Locke (1690: 343): intuition, agreement of ideas, and sensation. This is further reflected in Locke’s political philosophy in which he discusses three freedoms defined by three constraints: permission from others, the will of others, and the laws of nature. While the permission from others is held intuitively (I have the negative freedom to act how I see fit without explicitly asking permission, but social prescriptions restrict the space of actions I can reasonably take), the actions of the self must cohere with the will of others, restricting republican freedom (increasing homogeneity and republican equality). Finally, the laws of nature are unavoidable and must be adhered to, restricting positive freedom to carry out whatever strategy we desire (e.g., the laws of nature prevent me from doing anything that involves walking on water).

My goal in this essay has been to show how these three elements of the nature of knowledge (and freedom) are closely related to different ways of managing uncertainty in the human mind, shared across a group. Uncertainty may arise from the external world, leading to a lack of “sensation” knowledge, or from the internal (mental) world, leading to a lack of “intuitive” knowledge, or from the agreement between the two, leading to a lack of “agreement of ideas.” I have attempted to show that these three are mutually exclusive, but the exact tradeoff used between the three is somewhat arbitrary (conditional on the environment): a group may function as a society using any of the infinitude of possible settings of these three uncertainty management elements (subject to remaining on the simplex), so long as the whole group is using the same settings. If we accept this, there is no longer any right and wrong setting, simply a need to understand, accept, and adapt to groups using different modes of operation. In this final section, I will discuss how these settings result in different structures in human social networks, in particular: how states emerge, the dynamics of public policy, the function of a separation of powers, the causes of political polarization, and the categorization and legibility of rules. I close each sub-section with a summary showing relationships to freedoms, free energy, and the simplex. My aim is to show how the two-level Bayesian approach I have proposed can be used to model a wide variety of human social and political phenomena, demonstrating increased generality. However, as I mentioned previously, the model is an h-POMDP which has a suite of well-developed techniques for machine learning of the parameters. Thus, confirmatory or falsifying evidence for each example could be generated by learning the model from different datasets and exploring the resulting parameters.

State emergence

One important aspect of classical political philosophy centers around the idea of a “state of nature,” from which modern society is thought to have arisen. It is hypothesized that security is the prize for giving up freedom, and that increasing security leads to state formation. Nozick (1974) discusses state emergence by pointing out that a Lockean state of nature differs from a Hobbesian one in that it allows for an immutable law of nature. In Hobbes’ original state, it is a war of all against all, corresponding to pure freedom on all three dimensions: no one forces, nor even suggests what a person should do, and everyone is free to do anything at all, including murdering those who have something enviable (Hobbes, 1651). In Locke’s state of nature, however, there are certain immutable laws (such as the right of everyone to stay alive) which must be respected. However, these laws can never be complete, and so there must be some “invisible hand” that guides the resulting society (Locke, 1690).

Indeed, if we let

Positive freedom lost

The two sides realize that the battle is unresolvable or at least inefficient, and create a set of laws and a bureaucracy to implement the laws. It must then be a common agreement that everyone follows the laws, and each individual loses opportunities as they must follow a pre-set path. As the paths are pre-set,

Uncertainty boundaries crossed in state emergence: (a) ecological (unresolved conflict, laws and rules); (b) force (winner takes all, loser conforms); (c) social (schismogenesis, separation).

Republican freedom lost

A winner takes over and forces the loser to conform

Negative freedom lost

Warring sides agree to disagree, and occupy different territories. The inhabitants of each territory (or clients of each protective association) are not free to redefine themselves as inhabitants of the other. They have lost this negative freedom of definition, and a schismogenesis occurs through structures of refusal: each party makes sure they do things to differentiate them from the other group (Graeber and Wengrow, 2021). The complementary identities that so form essentially modify the models used by each group, but they do so by making identities more precisely defined, such that

Nozick (1974) also considers in some detail how “meaningful” work and self-esteem are related to equality and freedom. For example, selling “green” products that are more expensive, but give the buyer and seller a sense of integrity. Consumers may band together to pay more for those products produced by the more “meaningful” workers. This is

Further, Nozick (1974: 247–252) discusses how meaningful work (as a proxy for self-esteem, essentially) may be achievable in three ways. The first way is described above, by increasing

Public policy

The standard model of policy changes is one in which policymakers have static (unchanging) preferences (also assumed in many economic theories emanating from the seminal work of Arrow (1951)), and in which elections are the process by which the people insert different preference functions into the legislature. However, Jones and Baumgartner (2012) note severe deficiencies in this model based on the leptokurtic (with fatter tails than normal) shape of the distribution in budgetary commitments. Instead, they describe a theory of government information processing that has many parallels with the approach I am presenting. They focus on a bounded rationality model of information processing that puts emphasis on attention and salience rather than on rationality. The resulting “stick-slip” dynamics of punctuated equilibria has many echoes in the work of Taleb (2001) on unimaginable events (Black Swans) arising out of the fat tails of complexity, and is analogous to the scientific revolutions arising from a new paradigm of investigation: “scientific investigation [is] a succession of tradition-bound periods punctuated by non-cumulative breaks” (Kuhn, 1962: 207). Similar dynamics have long been noted in other fields including literature, music, and the arts (Kuhn, 1962: 207).

The idea is that agents have a bound on the amount of information processing they can do, as proposed by Simon (1967). These agents would like to be able to figure everything out rationally, but simply cannot. Whatever is left behind by them (whatever overflows from the cup of their mind) may then possibly accumulate in institutional practices, eventually emerging in a catastrophic or sudden and unexpected event in which policy changes dramatically. These sudden changes arise from the institution itself, but look like they arise randomly. Indeed, “In a not-unfamiliar story line, a problem festers ‘below the radar’ until a scandal or crisis erupts; policymakers then often claim ‘nobody could have known’ about the ‘surprise’ intervention of exogenous forces, and then scramble to address the issue” (Jones and Baumgartner, 2012: 7). In general, the individual agents will not keep up with the institutional change, as it is the institutions they participate in that are suffering from this skewness. The punctuated equilibrium model of Jones and Baumgartner (2012) gives an account of these stick-slip dynamics with a “panic” button that participants can hit. The panic button essentially overweights by a great deal one of the possible indicators that are being attended to. It is like a super magnifying glass that is focused on one policy (Jones and Baumgartner, 2012: 135). Such a panic button can be accounted for in the model I am presenting as follows. The accumulation of misalignment typically triggers a disruptive event that arises from within, for example, from a group member “misbehaving” and being civilly disobedient. Once the offset becomes too great, individual agents will start to defect, and once this emigration hits a tipping point, another model (another location on the simplex) takes over and re-balances the society, in what may be a sudden and drastic change. 
Following the trigger event, there are individuals making determinations about the events that occurred. In many cases, individuals may suffer high misalignment (movement off the simplex), which in turn causes change in parameters for at least some participants. Once these changes occur, they are difficult to reverse. If many people have the same shifts (which is likely given the shared nature of the model: they will all be shifting so as to reduce the overall free energy of the group, which has been displaced by the trigger), then an overall shift occurs in the group, precisely because it is made up of the members making that shift. This can occur at the level of any group, including one of policymakers. These panics then induce events that overweight one indicator, and thus are the source of the leptokurtic distributions.
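The way accumulated misalignment can produce fat-tailed changes can be illustrated with a toy simulation. This is a deliberately crude sketch, not the model of Jones and Baumgartner (2012): the threshold rule, the parameter values, and the names `simulate_policy_changes` and `excess_kurtosis` are all assumptions made for illustration.

```python
import random

def simulate_policy_changes(steps=5000, threshold=3.0, absorb=0.05, seed=0):
    """Toy stick-slip process: small responses, with a backlog released in bursts."""
    rng = random.Random(seed)
    backlog = 0.0
    changes = []
    for _ in range(steps):
        signal = rng.gauss(0.0, 1.0)    # incoming pressure for change
        change = absorb * signal        # "stick": a small incremental response
        backlog += signal - change      # unaddressed misalignment accumulates
        if abs(backlog) > threshold:    # "slip": the panic button is hit
            change += backlog
            backlog = 0.0
        changes.append(change)
    return changes

def excess_kurtosis(xs):
    """Fourth standardized moment minus 3 (zero for a normal distribution)."""
    n = len(xs)
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n
    m4 = sum((x - mean) ** 4 for x in xs) / n
    return m4 / m2**2 - 3.0

changes = simulate_policy_changes()
print(excess_kurtosis(changes))  # positive: fatter tails than a normal distribution
```

Even though every input signal is Gaussian, the output distribution is leptokurtic, because most changes are tiny and a few release the whole backlog at once.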

The model proposed by Jones and Baumgartner (2012) is compelling in the effects it predicts, and the stick-slip dynamics seem to fit the evidence. What I am proposing fits well with their model, but the underlying reasons for the bound on rationality are different. In their Simonesque bounded rationality approach, each agent is struggling to rationally figure everything out, and is capable of doing only so much, meaning his attention must focus on one or the other option or model. The emphasis is on human inability to process information due to overload. In the more sociologically oriented approach I am presenting, the individual is not even trying to figure things out rationally; instead, he places the emphasis on the group, such that the bound is not an involuntary weakness of individuals, but rather a voluntary (but partly group-oriented) choice to let the society in which an individual is embedded do the heavy cognitive lifting. Notice the paradox created here, because the individual is the group.

In the view I am proposing, individuals can no longer arrogantly claim responsibility for the intelligence of the group, and must instead acknowledge the powerful role of socialization in creating and maintaining the longer-term momentum of evolutionary wisdom contained in the species as a whole. Indeed, presuming that individuals are responsible for consciously steering society where it ends up going, “reveals only the narrowness of an outlook uninformed by humility” (Polanyi, 1951: 199).

Separation of powers

The liberal democratic ideal of a separation of powers can be framed as a three-way tension across the simplex of parameters I have been discussing. This tension was first described by Montesquieu (1748/1989: 63): “In order to form a moderate government, one must combine powers, regulate them, temper them, make them act; one must give one power a ballast, so to speak, to put it in a position to resist another.” Here, I describe how this ballast can be viewed as uncertainty management.

While the democratically elected legislative body determines laws that reflect the understandings of people
• Prevention of violence and fraud: If the executive raises
• Protection of property and enforcement of contracts: If the legislature raises
• Recognition of equal rights to produce and sell: If the judiciary raises

One can visualize these effects on the simplex by showing a force toward one apex countered by two forces toward the opposite apices, as shown in Figure 5(a)–(c), corresponding to the three damping mechanisms (Montesquieu’s “ballast”), respectively (Hoey and Schröder, 2023). (a) Excessive increase in

Political polarization

A contradictory finding is that both non-exposure and exposure to opposing viewpoints increase political polarization (Bail et al., 2018; Facciani, 2020), but this may be partly due to experimental conditions making connotative interpretations more or less salient. Facciani (2020) argues that “including the variable with whom the participant discusses ‘important matters’ is crucial for accounting for these inconsistencies.” That is, whether the experiments ask for information about connotatively meaningful persons or not (i.e., friends or strangers) is important for gauging the resulting effect. These different polarization mechanisms are also related to different forms of social capital (Putnam, 2000). While “bonding” social capital refers to like-minded people in homogeneous organizations becoming deeply connected to one another, “bridging” social capital implies trust of out-group members (Putnam, 2000: 358). What distinguishes the individual in these two situations is precisely their connection to the people spreading misinformation. When the connection is more denotative (strangers), a bonding mechanism excludes these out-group others, resulting in polarization due to a confirmation effect (echo chamber). On the other hand, when the connection is more connotative (friends), then without a bridging mechanism to include out-group others, polarization results due to a conformity effect.

This same difference is noted in work on the political science of polarization, in which it is increasingly well known that there are two types of polarization being measured (Mason, 2013). While the first relates to the substantive issues being considered, such as a position on social welfare or government size (a denotative confirmation effect leading to polarization), the second relates to partisan furor (a connotative conformity effect leading to polarization). While some have argued that the former causes the latter (Webster and Abramowitz, 2017), others have argued for the opposite (Mason, 2013, 2015). In particular, Iyengar et al. (2012) discuss using social identity theory (Tajfel and Turner, 1986) as a basis for interpreting partisanship and polarization, arguing that affective identity is an important aspect of polarized beliefs, as echoed by Facciani (2020).

The two effects are also found in research on threat and uncertainty, and are shown to be somewhat separable (Haas and Cunningham, 2014). While uncertainty decreases political tolerance in the presence of threat (conformity with the group leads to ignoring evidence from strangers), it increases political tolerance in low-threat conditions (confirming evidence from friends is accepted). What Haas and Cunningham (2014) show is that if people feel safe (e.g., because they are surrounded by similar others and their values are not being confronted), they will be less politically tolerant when only non-conflicting information is presented, as in an echo chamber, but more tolerant when conflicting evidence is presented. When people feel unsafe (they are surrounded by strangers and exposed to alternative values), they will be less politically tolerant, and will discard conflicting information and stick to their guns.

The effects of incongruence are also noted by Marietta and Barker (2019: Chap. 11), who showed that people are more willing to work with someone who shows congruent beliefs. This is because when people confront incongruence, they rely more on affective meanings, which would label this incongruent person as part of the out-group, and therefore not someone good to work with. The focus on affective meanings in this case trumps the potential benefits of working with this person, reflecting the notion that people opt for acceptance in a group over accuracy of evidence. However, Pennycook et al. (2021) have noted that while people will act according to this maxim (acceptance over accuracy), they actually prefer accuracy over inaccuracy. The key is that in order to engage in exploratory behaviors that would reduce inaccuracy (uncertainty), one needs to be in a socially and emotionally coherent situation. Once this coherence is disrupted (e.g., by the presentation of incongruent information from strangers), the focus returns to exploitation of existing structures, reliance on affective meanings and stereotypes, and epistemological laissez-faire (uncertainty is ignored instead of reduced).

Conflicting evidence increases uncertainty both connotatively and denotatively, while non-conflicting evidence does the opposite. That is, if an agent encounters evidence that contradicts her beliefs, she will feel less certain about the world (denotative) and less certain about herself and her group (connotative). Her free energy will increase and she will be knocked off the simplex. If the conflicting evidence is coming from a stranger, the agent can resolve the extra uncertainty by discounting the information (as it is from a non-trusted other), leading to a conformity polarization. On the other hand, if conflicting evidence is coming from a friend, then connotative uncertainty remains low while denotative uncertainty increases. The agent can only “double down” on her friendship in this case, accepting the conflicting evidence, increasing political tolerance, and strengthening her friendship (the alternative is to disregard the information and forgo the friendship). Conversely, if non-conflicting evidence is coming from a friend, uncertainty is decreased both connotatively and denotatively, leading to increased polarization through confirmation (echo chamber).

To take a simple example, consider an agent who believes the earth is flat. Suppose this agent is presented with evidence from a stranger that the earth is round. The agent is likely to disregard this conflict, and conform to his in-group of flat-earthers, reinforcing his beliefs. On the other hand, the agent would easily accept evidence of the world being flat coming from a friend, confirming his beliefs and affirming his friendship (i.e., group membership). Conversely, evidence that the earth is flat from a stranger may decrease connotative polarization (maybe these strangers are really friends?), and evidence that the earth is round from a friend may decrease denotative polarization (maybe the earth really is round!). If I replace “the earth is flat” with any public policy decision (e.g., control guns, legalize drugs), the same effects are expected to hold.
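
The four combinations of source and evidence discussed above can be summarized as a small lookup table. This is only a restatement of the qualitative predictions in the text, not an implementation of the underlying model; the function name and the string labels are my own hypothetical choices.

```python
def predicted_effect(source, conflicting):
    """Qualitative prediction for evidence from a 'friend' or a 'stranger'.

    `conflicting` is True when the evidence contradicts the agent's beliefs.
    The labels paraphrase the text; they are not outputs of a fitted model.
    """
    table = {
        ("stranger", True): "conformity polarization (evidence discounted)",
        ("friend", True): "reduced denotative polarization (evidence accepted)",
        ("friend", False): "confirmation polarization (echo chamber)",
        ("stranger", False): "possibly reduced connotative polarization",
    }
    return table[(source, conflicting)]


if __name__ == "__main__":
    # Flat-earth example: a stranger says the earth is round.
    print(predicted_effect("stranger", True))
    # A friend says the earth is round.
    print(predicted_effect("friend", True))
```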

Such considerations are also taken up in the study of how groups integrate conflicting evidence, often becoming more firmly entrenched in their original beliefs, in a “backfire”-like effect. Hahn (2024) uses a naïve Bayes model to implement the integration of evidence, some of which may be conflicting, and shows how this can account for belief polarization in groups. Such a model falls under the umbrella of the type of hierarchical model I am considering in this paper, but does away with one parameter, leaving only two. Hahn et al. (2020) do a similar analysis but look at network structures and how these affect the flow of information. In an agent-based simulation, they create agents with parameter sets (credences) drawn from a narrow distribution around uninformative (high entropy) distributions. Essentially, in my model, they are starting all agents off from the exact same point on the simplex. This is restrictive in the sense that human groups may be far more diverse than this. The model I am proposing considers the non-homogeneous case, where parameters may not be shared, or may be significantly different in different groups. This could be used to extend the analyses in Hahn, for example, by considering multiple groups with different parameter settings, and how this can lead to conflict due to different interpretations of a situation (see preliminary examples in Hoey and Schröder, 2023). Falandays and Smaldino (2021) use a similar model to Hahn (2024) (a “mixture of Gaussians,” which is a naïve Bayes model with continuous outputs), implemented in a network of agents. A range of parameter values is investigated, which the model I am proposing considers in the whole (and adds a second, more abstract level of representation).
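
To make the non-homogeneous case concrete, the following sketch shows generic naïve Bayes updating of a credence in a binary hypothesis, with two agents who hold different likelihood parameters. It is not the model of Hahn (2024) or of Falandays and Smaldino (2021), and it is not the hierarchical model of this paper; the function name, the two-parameter likelihood, and all numerical values are assumptions chosen for illustration.

```python
def update_credence(prior, observations, p_support_if_true, p_support_if_false):
    """Posterior P(H | observations) under a naive Bayes assumption.

    Each observation is 1 (supports H) or 0 (opposes H), and observations are
    treated as conditionally independent given H. The two likelihood
    parameters are hypothetical agent-specific settings.
    """
    odds = prior / (1.0 - prior)
    for obs in observations:
        if obs:
            odds *= p_support_if_true / p_support_if_false
        else:
            odds *= (1.0 - p_support_if_true) / (1.0 - p_support_if_false)
    return odds / (1.0 + odds)


if __name__ == "__main__":
    mixed_evidence = [1, 0, 1, 0]   # two supporting and two opposing items

    # Agent A treats supporting and opposing evidence as equally diagnostic.
    agent_a = update_credence(0.5, mixed_evidence, 0.8, 0.2)
    # Agent B treats supporting evidence as far more diagnostic than opposing.
    agent_b = update_credence(0.5, mixed_evidence, 0.6, 0.1)

    print("agent A credence:", agent_a)
    print("agent B credence:", agent_b)
```

Both agents start from the same prior and see the same mixed evidence, yet they end with different credences because their parameters differ. This is the kind of divergence in interpretation that a homogeneous starting population cannot exhibit.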

In a similar vein, Connor Desai et al. (2020) examine a situation of informational conflict over time, resulting in the “continued influence effect” (CIE), in which misinformation is observed to persist even after corrections. The explanation given is based around the Bayesian probabilistic inertia of the perceiver’s hypothesis about whether some evidence from a source is real or not, given estimates of the reliability of the source. Again, three parameters surface in this model, which may be estimated as averages across all participants for any participant pool. However, for a different participant pool, and especially one from a different cultural background (there is no information on this given in Connor Desai et al., 2020), these parameters may be quite different, as may the predictions of the model. The model I am proposing is therefore at a higher level of generality in some sense than the specific model used by Connor Desai et al. (2020) to study CIE. While the model of Connor Desai et al. (2020) can be used to study the effects of misinformation on a specific group of participants, the model I am proposing generalizes this to any group of participants, with the important caveat that the parameters at play are not arbitrarily settable, but must remain on the simplex. Further, the model I am presenting can be used to model situations where people do not share these parameter settings.
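
The role of perceived source reliability in the persistence of misinformation can be sketched with a simple Bayesian update. This is a minimal illustration under my own assumptions, not the model of Connor Desai et al. (2020): the function name is hypothetical, and I assume that a source tells the truth with probability equal to its reliability and otherwise reports at random.

```python
def report_update(credence, says_true, reliability):
    """Update P(H) after a source asserts that H is true (or false).

    Assumption for illustration: with probability `reliability` the source
    reports the truth, otherwise it reports 'true' or 'false' at random.
    """
    p_yes_if_true = reliability + (1.0 - reliability) * 0.5
    p_yes_if_false = (1.0 - reliability) * 0.5
    if says_true:
        like_true, like_false = p_yes_if_true, p_yes_if_false
    else:
        like_true, like_false = 1.0 - p_yes_if_true, 1.0 - p_yes_if_false
    numerator = credence * like_true
    return numerator / (numerator + (1.0 - credence) * like_false)


if __name__ == "__main__":
    # A fairly trusted source makes a claim.
    after_claim = report_update(0.5, True, 0.8)
    # The claim is later corrected by a less trusted source ...
    after_weak_correction = report_update(after_claim, False, 0.4)
    # ... or by an equally trusted one.
    after_equal_correction = report_update(after_claim, False, 0.8)

    print("after claim:            ", after_claim)
    print("after weak correction:  ", after_weak_correction)
    print("after equal correction: ", after_equal_correction)
```

Under these assumptions, a correction from a less trusted source leaves the credence above its starting value, while a correction from an equally trusted source returns it to the prior. How much misinformation persists therefore depends on reliability parameters that may differ across participant pools.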

Categorization and legibility

As noted by Page (2007: 183), the “greater the manipulation envisaged [by the state of the people], the greater the legibility required to effect it.” Some of the biggest attempts at social engineering driven by high-modernist, rationalist ideals were founded on the ability to categorize and count people. In order to make large-scale social changes as envisioned by high-modernist thinkers, all people and all their activities need to be defined and represented in a way that makes the implementation of a rational plan possible. That is, the legibility of a people is intimately tied to the possibility of making the plan work. This state of positive equality requires a despot, but, as stated by Le Corbusier (1964), the “despot is not a man. It is the Plan.” (quoted in Scott, 1998: 112). However, such excessive bureaucracy results in an “arbitrary, myopic layer of officials presiding over a dispirited workforce putting in a bad-faith day on the factory floor” (Page, 2007: 177); that is, the state pretends to tax the people, and the people pretend to work.