Abstract

Why do bad methods persist in some academic disciplines, even when they have been widely rejected in others? What factors allow good methodological advances to spread across disciplines? In this paper, we investigate some key features determining the success and failure of methodological spread between the sciences. We introduce a formal model that considers factors like methodological competence and reviewer bias toward one’s own methods. We show how these self-preferential biases can protect poor methodology within scientific communities, and lack of reviewer competence can contribute to failures to adopt better methods. We then use a second model to argue that input from outside disciplines can help break down barriers to methodological improvement. In doing so, we illustrate an underappreciated benefit of interdisciplinarity.

Significance Statement

This paper uses agent-based models to show how interdisciplinary contact can improve poor methods in the sciences. Strong methodology is foundational to trustworthy science. However, poor methods that have high rates of false positives or low generalizability persist in some disciplines, despite being widely rejected in others. Why? We first show how poor methods can persist when peer reviewers are biased toward their own practices, especially if they are not fully competent to judge the quality of new, better methods. We then extend our model to include community structure and show how contact with other disciplines can help spread superior methodology through copying and credit-giving. In doing so, we illustrate an underappreciated benefit of interdisciplinarity and highlight the importance of communication about research methods between scientific fields. In general, this exploration shows that interdisciplinarity can improve the collective intelligence of the scientific community as a whole.

Introduction

Much has been written about the benefits of interdisciplinarity. Prior discussions have focused on two categories of benefit, which we might call “innovation” and “emergence.” Innovation occurs when methods and theories find applications in fields external to those in which they were developed, leading to new insights (Foster et al., 2015; Rzhetsky et al., 2015). Examples include the application of spin glass models from physics by neuroscientists to understand perceptual categorization (Hopfield, 1982) and the application of disease contagion models by social scientists to understand the diffusion of innovations (Bass, 1969; Centola, 2018). 1 Emergence goes beyond knowledge transfer to involve the creation of new amalgamate disciplines, often to great success. Examples include the relatively recently formed fields of cognitive science (Goldstone, 2019), network science (Brandes et al., 2013), and cultural evolution (Mesoudi, 2017), while cybernetics (Wiener, 1948) provides an earlier example. 2

In this paper, we describe an additional benefit of interdisciplinarity, usually omitted from the conversation, which we might call “methodological competition.” We propose that interdisciplinarity promotes the maintenance and spread of superior scientific methodologies. Superior methods as we consider them need not be innovative, and as such this discussion is orthogonal to the “innovation” benefit. Our main claim is that when inferior methods persist in individual disciplines, interdisciplinary contact can improve the chances that superior methods take over. Our argument is supported by a case study and by mathematical and agent-based modeling grounded in the framework of cultural evolution.

In many cases, scientific communities continue to use inferior methods even after they are abandoned in other disciplines. The replication crisis, for instance, has shed light on problematic practices in areas like social psychology and biomedical research (Open Science Collaboration, 2015; Ebersole et al., 2016). Even as some disciplines adopt reforms like open data, preregistration, and more rigorous statistical training, others remain resistant. 3 On the face of it, this pattern seems surprising. We expect scientific communities to share the normative goal of seeking truth, and thus expect that methods promoting this goal should be widely adopted. What is going wrong?

Our first model considers groups of scientists who choose between methods that are more or less likely to yield epistemic successes—that is, to contribute new knowledge or understanding. The research produced by these scientists is reviewed by peers, and those methods that yield publications tend to be adopted by others in the field. When review tracks only epistemic success, there is no problem. Communities adopt superior methods.

We are interested in cases where reviewers are either incompetent to judge between methods, or else show biases for the (possibly inferior) methods that they themselves are already using. We find that these factors can lead to the stable persistence of poor methods. This result extends previous work by Akerlof and Michaillat (2018), who focus on how self-preferential bias prevents the spread of superior theoretical paradigms.

Our second model shows how interdisciplinarity can break this pattern. In particular, we focus on two mechanisms by which this can happen: (1) credit assignment and (2) copying between disciplines. First, when reviewers from disciplines with different competences and biases are able to evaluate work within a problematic discipline, and assign credit based on their own standards, better methods can gain traction within the original discipline. Second, when individuals using poor methods occasionally copy the methods of successful peers in other fields, this increases the likelihood that better methods take over in their own community.

Our models are tailored to scientific communities, but the mechanisms at play may be generalized to other settings. For instance, it has been recognized that sub-optimal practices in a wide variety of organizations can get “locked in” by positive feedback effects (Arthur, 1989). When firms continue to use poor practices (or fail to adopt new innovations), when government bodies do not adopt ideal scientific standards, or when sports leagues do not try out best training regimes, contact with other, similar groups may help. Again, this can happen via outside credit-giving, that is, when feedback from outsiders allows innovative insiders to have more influence. It can also happen when practitioners copy the successful methods of another firm or team. In this way, the right levels of contact between various sorts of groups may improve the performance of all. To put it even more broadly, interconnectivity may improve the collective intelligence of groups by facilitating the adoption of superior problem-solving methods.

The remainder of the paper is organized as follows. In MBI and sports science, we present a case study which illustrates the importance of competence, self-preferential bias, and interdisciplinarity in determining methodological practice in the sciences. In Bias, competence, and interdisciplinary contact, we give some relevant background for our model and discuss previous research looking at the failure of good methods to spread in science. The one-community model outlines our first model, looking at the persistence of poor methods. We present an analysis of this model in Payoffs and invasion in a single community. We then move on to discuss interacting communities and interdisciplinarity in Interactions between communities. We conclude in Discussion by discussing implications for the optimal structure of scientific communities, and outlining, in more detail, the roles our models can play in reasoning about the real world.

MBI and sports science

In this section, we discuss a case study from the field of sports science. 4 Two factors we explore in our models—competence and self-preferential bias—seem to have played important roles in this case. Furthermore, interdisciplinary feedback now seems to be playing a key role in disciplinary reform. In the conclusion, we will briefly discuss a few additional examples.

Foam rolling involves lying down, face up, with a soft polymer cylinder under one’s back and rolling over it with the aim of relaxing muscles. MacDonald et al. (2014) purport to show that foam rolling reduces muscle soreness, improves range of motion, and even improves performance in activities like vertical jump height when used after exercise. Since publication in 2014, their paper has been cited hundreds of times. But their claims rely on a statistical method called magnitude-based inference (MBI), which statisticians have widely criticized as misleading and incoherent (Sainani, 2018). Over the years, hundreds of published papers in the discipline of sports science have used MBI, and the demonstrated unsoundness of the method throws many of these results into doubt (Lohse et al., 2020). A re-examination of the data from the foam rolling study using more rigorous statistical testing casts doubt on many of their claims (Lohse et al., 2020).

Magnitude-based inference was introduced by Batterham and Hopkins (2006). Briefly, it compares the risk that an intervention causes harm with the chance that it benefits an athlete. 5 Rather than providing equations or documented code detailing the workings of MBI, the authors developed and distributed Microsoft Excel spreadsheets that allow readers to implement their method without understanding it.

Since its introduction, statisticians have been raising red flags about MBI and calling for statistical reform in sports science (Barker and Schofield, 2008; Welsh and Knight, 2015; Sainani, 2018; Lohse et al., 2020; Sainani et al., 2020). Biostatistician Andrew Vickers has described MBI as, “a math trick that bears no relation to the real world” (Aschwanden and Nguyen, 2018). Recently, there has been movement to suppress the method in sports science, including a ban by the well-respected journal Medicine and Science in Sports and Exercise (MSSE). Despite this, at the time of writing, numerous articles continue to be published using variations of MBI.

Several factors contributed to the uptake and persistence of MBI. First, its originators, who are prominent members of the sports science community, actively promoted the method through a website and at sports science conferences. 6 Second, the ease of use of the spreadsheets seems to have been attractive to users. Third, many sports scientists (like many other scientists) are relatively unfamiliar with the mathematical details of their statistical methods, and thus unable to adjudicate the quality of such methods themselves (Vigotsky et al., 2020). Last, the method has a high false positive rate. This means that researchers in sports science, who are often looking for small effects in small samples, were able to publish results using MBI that would otherwise have been statistically insignificant. 7

There are a few things to highlight about this case. First, for the duration of its use, statisticians have universally labeled MBI as a misleading methodology. Nevertheless, reviewers within sports science, in judging the research of their peers, have approved its use. As mentioned, this seems to be at least partly due to a lack of statistical competence among these reviewers. It also seems to be due to the strength of self-preferential biases. Once the use of MBI became widespread, researchers who employed it themselves were perfectly willing to accept other papers using it for publication. In an editorial on MBI, biostatistician Doug Everett writes, “I almost get the sense that this is a cult. The method has a loyal following in the sports and exercise science community, but that’s the only place that’s adopted it” (Aschwanden and Nguyen, 2018).

A second thing to highlight is the role of statisticians in the slow move toward rejecting MBI. This includes their role as reviewers in the field. For example, when the originators of MBI tried to publish a defense of the method in the journal MSSE, reviewers in statistics rejected the paper. 8 In addition, some sports scientists have worked directly with statisticians to promote better methods and call for reform (Sainani et al., 2020). These forms of contact with statisticians seem to have been crucial in the current turn against the method.

Bias, competence, and interdisciplinary contact

In the next section, we use models to systematically investigate some of the key features at work in the case of MBI and others like it, and test their relevance to the persistence of poor methodology. Before doing so, let us first address these key features—self-preferential bias, competence, and interdisciplinary contact—in greater detail. We will follow this with a discussion of previous, relevant modeling literature.

Bias and competence

One focus of our investigation here is researcher bias toward one’s own methods. There is a cluster of causes for this sort of bias. Many communities have norms for researchers to use the standard methods of the field. Journals often act as gatekeepers standardizing a field’s practices. Novel methods must often overcome conservative biases for existing methods, a point noted by Kuhn (1962) and others. Recently, concerns have been raised about the adverse effects of conservative norms in rejecting both novel methodologies and novel questions in the social sciences (Akerlof, 2020; Barrett, 2020a; Stanford, 2019). In addition, a wide body of empirical work has found that scientists show preferences for work like their own during peer review. 9

Despite the influence of self-preferential biases, we expect new methodologies to spread in a scientific community if researchers discern a clear advantage to adopting them. For this reason, competence to assess methodological quality is critical. By competence, we mean the ability of researchers within a research tradition to assess the relative quality of methodologies in producing epistemic value. Several studies show low inter-rater reliability among peer reviewers (Cole et al., 1981; Cicchetti, 1991; Mutz et al., 2012; Nicolai et al., 2015) suggesting that competence may sometimes be highly variable. While competence will not be the same for all researchers within a discipline, shared norms and training practices may create systematic differences in competence across fields, as in the case of MBI. Exacerbating this effect, in some fields, the rewards for adopting new methodologies may be especially hard to evaluate compared to others, particularly when effect sizes are small and data is hard to gather.

We have just discussed self-preferential bias and competence to assess methods. Before continuing, it is worth stepping back to clarify how we are using the term “method” here. There are a number of related things that the models we develop can apply to. Paradigmatic applications include cases like the MBI one, where scientists use discrete, easily labeled practices, which they learn from other scientists. Things like statistical practices (MBI vs standard frequentist methods), experimental paradigms (like induced valuation in economics or looking times in developmental psychology), quality control methods (preregistration or open data), and mathematical frameworks (like using Markov chain models to capture genetic drift) fall within these clear cases.

The models can also apply to other things, though there the mapping between models and reality will be less precise. These might include guiding theories or paradigms like natural selection or relativity theory. These shape practice and spread between scientists but are embedded in many other theoretical commitments and aspects of practice. In addition, as noted, we might apply the models to practices in non-scientific communities, such as the use of checklists by airlines to prevent fatal accidents, or interval training by cross-country coaches. Readers should ideally keep the sorts of cases discussed in the previous paragraph in mind when assessing our models, although we leave open the possibility of wider applications.

Interdisciplinarity

Many scientific fields are linked via shared interests, forming a loose network of communities (Vilhena et al., 2014). Such links provide a social structure for fruitful interdisciplinary contact. Interdisciplinarity allows different fields or sub-communities to retain their cultural identity and norms, while also receiving and sharing knowledge, ideas, and methods.

There are different ways in which interdisciplinary contact occurs. Journals receiving work that cannot be reviewed within their field may seek input from outsiders. Alternatively, a researcher may submit work for evaluation in adjacent fields. An academic might attend a conference in another field and learn about new methods or theories. Two researchers might collaborate across disciplines and, in doing so, train each other in their different methods and assumptions. A researcher trained in one discipline might even change fields, bringing methods and ideas from her original discipline to her new one.

There are contextual factors that determine when and whether researchers will benefit from such contact. Some fields have norms discouraging interdisciplinary publication, and in some fields, outside publications or collaborations will not support tenure or promotion decisions. 10 Some disciplines have practices that cannot be appropriately critiqued from without, such as when they use specialized, expensive technology that requires extensive training. In still other cases, the costs of training and technology will mean that members of one discipline are unable to learn good methods from another, even if doing so might be epistemically beneficial.

In other cases, though, researchers can be successful by being relatively interdisciplinary: by publishing in the journals of adjacent fields, receiving grants centered in adjacent disciplines, collaborating with outsiders, or learning methods from adjacent fields. As we will argue, in these cases, it may be possible for better methods to gain traction even in communities marked by strong self-preferential bias or low competence. In this way, contact between disciplines, or other sorts of groups, can potentially improve the performance of all. In the MBI case, for example, interdisciplinary contact of these sorts seems to be helping to eliminate poor statistical practice.

Previous work

In developing our models, we (fittingly) draw on work from several disciplines. We follow previous authors in assuming that scientists are part of a “credit economy.” That is, scientists strive for publications—and the citations, talk invitations, job offers, grant money etc. that ensue—in the same way that normal people strive for wealth or happiness.

Many credit economy models focus on scientists as rational credit seekers (see, for example, Kitcher (1990); Bright (2017)). Our model, in contrast, falls in line with previous work on “selection” models, where scientists are part of a population where certain behaviors are selected by dint of their success. In particular, as will become clear, we assume that methods which generate credit tend to spread. This could be because prominent role models tend to be imitated, as with MBI in sports science. It could be due to conscious choices to copy methods that generate credit or it could stem from differential success of students whose advisors use credit-producing methods. Previous models have shown that these sorts of selection processes can help explain failures of methodology and discovery in science (Smaldino and McElreath, 2016; Smaldino et al., 2019; Holman and Bruner, 2017; O’Connor, 2019; Stewart and Plotkin, 2021; Tiokhin et al., 2021).

Such models are related to biological models looking at the selection of beneficial behavioral traits. In particular, the multi-group model we present in Interactions between communities reflects a cultural evolutionary model developed by Boyd and Richerson (2002). They consider a population where different cultural groups adopt cultural variants via imitation. They assume, as we do, that imitation tracks payoff success and show how beneficial variants can spread between sub-groups. 11 We, likewise, are interested in cases where beneficial epistemic practices can spread to other sub-fields. Our models, however, are specifically designed to represent details of scientific communities. In addition, when it comes to the spread of methods, we focus both on imitation between groups and the possibility of interdisciplinary credit allocation.

Akerlof and Michaillat (2018) model the persistence of “false paradigms.” That is, they investigate why a scientific community might continue to adhere to a set of sub-optimal guiding theories. They consider the possibility that scientists are biased toward their own paradigms in tenuring younger faculty. As they show, such a bias can stabilize poor paradigms, especially when tenure judgments are less sensitive to the quality of the work performed. While we make some different modeling assumptions, our findings concerning self-preferential bias are very similar to theirs, adding robustness to this idea. Even with different modeling choices, self-preferential bias can stabilize poor practice, particularly in the face of incompetence. Our second model further departs from Akerlof and Michaillat (2018) by investigating the role of outside influences in methodological change. They propose that paradigm change is largely contingent on improvements in the competence of community members. This may be the right focus for paradigms that are strictly discipline specific. As we show, however, methods which are used across disciplines can spread through contact between communities.

The one-community model

We consider a community where each scientist employs one of two characteristic methods: an “adequate” method, (A), or a “superior” method, (S). We assume that the quality of methodology is relevant to the epistemic products of these scientists. The expected epistemic value produced by a researcher using the adequate method is a baseline of $x_A = 1$, while a researcher using the superior method produces an expected epistemic value of $x_S = 1 + \delta$. A higher value of δ corresponds to a greater distinction between the quality of the methods. In the sports science case, this difference would track the gap between MBI, which produces low-quality results that are poor guides to action in the world, and more standard statistical methods. As another example, we might compare randomized control trials to trials without a control. The former method is better at eliminating spurious correlational findings, and thus is better at adding to existing bodies of knowledge.

The first question we ask is: under what conditions do these methods yield more or less credit for their users? On the assumption that high-credit methods tend to be imitated, answering this question will allow us to predict whether communities will adopt one method or the other.

Scientists employ their methods to generate results and are then assigned credit by reviewers in their field. Reviewers here could represent those who literally review work for publication. But reviewers could also represent other credit assignment mechanisms in science, including, for instance, those who read papers and cite them, or invite the author to apply for a job, or to speak at a conference. Reviewers are characterized by two field-specific properties: competence and self-preferential bias. Competence, ω ∈ [0, 1], is the ability to discern the relative value of another scientist’s methods. At the extremes, when ω = 1, reviewers are perfectly accurate in their assessment, and when ω = 0, reviewers cannot distinguish the value of a method from the average method used in their field. The competence-based credit rating assigned to scientist i is given by
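A form consistent with the two extremes just described is a linear blend of the method's true value and the field mean. The block below is a sketch under that assumption: the symbols $M_i$ and $\bar{x}$, and the linear form itself, are labels and choices made here for illustration, with p denoting the proportion of the community using method S.

```latex
M_i = \omega\, x_i + (1 - \omega)\,\bar{x},
\qquad
\bar{x} = p\,x_S + (1 - p)\,x_A = 1 + p\,\delta
```

On this form, ω = 1 gives $M_i = x_i$ (perfectly accurate assessment), and ω = 0 gives $M_i = \bar{x}$ for every scientist regardless of method.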

Notice that when competence is very low (i.e., ω ≈ 0), reviewers rate all papers as having the same quality. In computational versions of the model, detailed below, we can make the model more realistic by adding an error term where reviewer judgments are noisy. In such cases, different papers will receive different quality ratings, but incompetent reviewers will draw all these ratings from the same distribution. 12

Self-preferential bias, α, is the extent to which reviewers prefer research that uses similar methods to their own. When α = 1, reviewers assign credit entirely based on similarity to their own methods, whereas when α = 0, they view methodology solely in terms of its (perceived) objective merit.

The total credit given to research produced by scientist i using method $x_i$ by reviewer j is thus
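Given that α weights similarity against perceived merit, a weighted sum is the natural candidate. The sketch below assumes that form, writing $M_i$ for the competence-based rating of scientist i and $B_{ij}$ for an indicator that i and j use the same method. Both symbols and the exact weighting are assumptions chosen to agree with the description of α, not a quotation.

```latex
C_{ij} = (1 - \alpha)\,M_i + \alpha\,B_{ij},
\qquad
B_{ij} =
\begin{cases}
1 & \text{if } x_i = x_j \\
0 & \text{otherwise}
\end{cases}
```

With α = 1 credit depends only on whether the reviewer shares the author's method, and with α = 0 it depends only on perceived merit.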

To review, when α—the weight determining bias versus competence in judgments—is very low and ω—the actual competence of reviewers—is very high, scientists receive a payoff commensurate with the quality of their methods. When ω decreases, reviewers are not biased to a particular method but are unable to distinguish the underlying quality of the two methods. When α is higher, self-preferential bias plays a role in review. The credit a researcher can expect to receive becomes dependent on the current distribution of methods in the field, such that more prominently used methods tend to get higher payoffs because more reviewers are familiar with them and prefer them.
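The verbal summary above can be made concrete with a short Python sketch. It assumes, as a reconstruction rather than the authors' stated equations, that the competence-based rating is a linear blend of a method's true value and the field mean, and that total credit weights this rating by 1 − α and the share of like-minded reviewers by α. The function names are hypothetical.

```python
def competence_rating(x_i: float, x_mean: float, omega: float) -> float:
    """Perceived quality of a method whose true value is x_i.

    ASSUMED form: linear blend of the true value and the field mean.
    """
    return omega * x_i + (1.0 - omega) * x_mean


def expected_credit(uses_superior: bool, p: float, delta: float,
                    omega: float, alpha: float) -> float:
    """Expected credit for one scientist, averaged over reviewers.

    p is the share of the community using the superior method S.
    ASSUMED form: (1 - alpha) * rating + alpha * share of reviewers
    who use the same method as the author.
    """
    x_i = 1.0 + delta if uses_superior else 1.0
    x_mean = 1.0 + p * delta
    same_method_share = p if uses_superior else 1.0 - p
    rating = competence_rating(x_i, x_mean, omega)
    return (1.0 - alpha) * rating + alpha * same_method_share


if __name__ == "__main__":
    # Compare the two methods when S is rare (p = 0.1) and delta = 0.5.
    for alpha, omega in [(0.0, 1.0), (0.0, 0.0), (0.8, 1.0)]:
        c_a = expected_credit(False, 0.1, 0.5, omega, alpha)
        c_s = expected_credit(True, 0.1, 0.5, omega, alpha)
        print(f"alpha={alpha}, omega={omega}: C_A={c_a:.3f}, C_S={c_s:.3f}")
```

Under these assumptions the three cases in the loop mirror the text: unbiased, competent reviewers reward each method at its true value; unbiased but incompetent reviewers give both methods the same credit; and strongly biased reviewers reward the common method A more, even though it is inferior.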

The model we have presented to this point is a simple and highly idealized one. The goal is to investigate how just a few key factors in a community can impact the adoption of methods. In addition, this simplicity will allow us to naturally extend the model to the case of interdisciplinarity.

Payoffs and invasion in a single community

With our model defined by the equations above, we can calculate the expected credit that will be assigned to scientists using either of the two methods. Again, let p be the proportion of scientists using the superior method. The expected credit contribution from reviewer bias, $B_i$, is 1 − p for an adequate scientist and p for a superior scientist. A scientist using method A should thus expect to receive credit of
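The expected credits can be written out under the assumption that credit is a weighted sum of a competence-based rating (a linear blend of a method's true value and the field mean, $1 + p\delta$) and the bias term. The expressions below are a reconstruction from the definitions in the text, offered as a sketch rather than a quotation.

```latex
\begin{aligned}
C_A &= (1-\alpha)\bigl[\omega + (1-\omega)(1 + p\delta)\bigr] + \alpha(1-p), \\
C_S &= (1-\alpha)\bigl[\omega(1+\delta) + (1-\omega)(1 + p\delta)\bigr] + \alpha p, \\
C_S - C_A &= (1-\alpha)\,\omega\,\delta + \alpha(2p - 1), \\
C_S > C_A \;&\Longleftrightarrow\; \delta > \frac{\alpha\,(1 - 2p)}{\omega\,(1-\alpha)}
\qquad (\omega > 0,\ \alpha < 1).
\end{aligned}
```

The field-mean terms cancel in the difference, so only the epistemic advantage scaled by competence, and the bias term scaled by how common each method is, remain.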

In other words, method S spreads whenever the epistemic advantage to adopting the superior method (δ) is large enough to overcome limitations from the competence and bias of reviewers. Greater competence (ω) lowers this threshold. Greater bias (α) increases it.

The distribution of methods in the community (p) also matters. If a substantial number of individuals have already adopted method S, bias can work in favor of the superior method. In particular, there is a threshold frequency of users of method S which, if reached for whatever reason, will allow the superior method to take over. We can compute this threshold by solving for the minimum proportion of scientists using method S necessary for it to increase in frequency, p*. This is the value of p for which $C_S > C_A$
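Assuming the credit difference takes the form $(1-\alpha)\,\omega\,\delta + \alpha(2p-1)$, which follows from a linear competence rating combined with a weighted bias term, solving for the frequency at which the two methods earn equal credit gives the following. This is a reconstruction under that assumption.

```latex
p > p^{*} = \frac{1}{2} - \frac{(1-\alpha)\,\omega\,\delta}{2\alpha}
\qquad (\alpha > 0)
```

A non-positive value of $p^{*}$ means the superior method earns more credit at every frequency and can spread from any starting point.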

If decisions are made entirely based on bias (α = 1), then whichever method is more common will spread (p* = 1/2). As bias goes to zero, the better method is increasingly guaranteed to spread at lower threshold frequencies. For intermediate values of bias, increased competence can move the critical threshold lower, so that a better method held by the minority can still spread even in the presence of bias. Similar logic holds for when competence increases from zero. Figure 1 shows threshold values of p* under several parameter values, along with the results of agent-based simulations of the model (described below). Note the close match between analytic and agent-based results.

Figure 1. Evolution of superior methods as a function of their initial frequency, p. The left graph (a) varies self-preferential bias, α, while holding competence fixed at ω = 0.5. The right graph (b) varies competence, ω, while holding bias constant at α = 0.5. Dashed lines are analytical calculations from equation (7). Solid lines are the proportion of 100 runs of an agent-based model in which superior methods spread to fixation.

Let us focus on the case in which method A is firmly entrenched in a scientific community, so that almost everyone is using method A. This case is perhaps the most interesting because it allows us to ask: under what conditions can an objectively better method increase in frequency when it is rare (or “invade” in the language of evolutionary game theory)? We can calculate the criteria for invasion by setting p ≈ 0. The following inequality shows the minimum epistemic value advantage for method S to invade

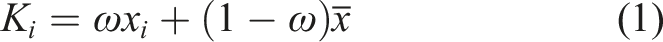
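In text form, and assuming the credit difference between the methods is $(1-\alpha)\,\omega\,\delta + \alpha(2p-1)$ (a reconstruction from the model's verbal definitions), setting p = 0 gives the following invasion condition. The rearrangement on the right expresses the same condition as a minimum competence.

```latex
\delta > \frac{\alpha}{\omega\,(1-\alpha)}
\quad\Longleftrightarrow\quad
\omega > \frac{\alpha}{\delta\,(1-\alpha)}
\qquad (\omega > 0,\ \alpha < 1)
```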

Figure 2 illustrates how competence and bias both contribute to the spread of better methods into a community that is currently adopting poor ones. This figure shows the minimum competence, ω, needed for method S to spread when rare as a function of bias, for several values of the method’s epistemic advantage, δ. 13 The graph could also be interpreted as the maximum bias allowable for the invasion of superior methods for a given level of competence. Either way, the greater the extent to which reviewers are biased toward their own methods, the more competence they must possess to accurately identify superior methods. The smaller the improvement of the method, the less likely superior methods are to spread when reviewers are even somewhat biased and imperfectly competent. This indicates that there may be a wide range of conditions under which novel superior methods fail to spread in a community of scientists.

Figure 2. Minimum competence, ω, necessary for superior methods to invade a population of adequate methods as a function of bias, α. The shape of the curve depends on the epistemic advantage of better methods, δ.
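The threshold and invasion conditions discussed in this section can be evaluated numerically. The Python sketch below assumes the credit difference between methods is (1 − α)ωδ + α(2p − 1), a reconstruction from the verbal definitions of ω and α rather than the authors' published equations. The function names are hypothetical.

```python
import math


def threshold_frequency(alpha: float, omega: float, delta: float) -> float:
    """Share of S users above which S earns more credit than A.

    ASSUMED closed form: p* = 1/2 - (1 - alpha) * omega * delta / (2 * alpha),
    clipped to the interval [0, 1].
    """
    if alpha == 0.0:
        return 0.0  # with no bias, S never earns less than A
    p_star = 0.5 - (1.0 - alpha) * omega * delta / (2.0 * alpha)
    return min(1.0, max(0.0, p_star))


def min_competence_to_invade(alpha: float, delta: float) -> float:
    """Smallest competence at which a rare method S (p close to 0) out-earns A.

    ASSUMED closed form: omega > alpha / (delta * (1 - alpha)).
    A result above 1 means no achievable competence permits invasion.
    """
    if alpha >= 1.0:
        return math.inf
    return alpha / (delta * (1.0 - alpha))


if __name__ == "__main__":
    for alpha in (0.1, 0.3, 0.5, 0.7):
        print(alpha,
              round(threshold_frequency(alpha, 0.5, 0.5), 3),
              round(min_competence_to_invade(alpha, 0.5), 3))
```

For example, with α = ω = δ = 0.5 the assumed threshold is p* = 0.375, so the superior method would need to be used by more than 37.5% of the community before bias stops working against it.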

Agent-based simulations

Agent-based simulations allow us to add noise and error to the model, to explore finite populations, and to add additional community structure. We find that they support the findings of the analytic model just presented. The simulation model described here will be extended in the subsequent section to allow us to examine multiple interacting communities.

In these simulations, we generate a population of N = 100 agents. We assume that self-preferential bias and competence are homogeneous across the group. In each round, each agent produces some epistemic product using their method. We calculate the credit an agent expects to receive for this work given the make-up of their community, just as in the analytical model. 14 Credit assignment reflects self-preferential bias and competence but might also involve the introduction of noise, which we will describe below.

We then use a process similar to the Moran process from evolutionary biology to determine how methods change over time (Moran, 1958). An individual i is randomly chosen to adopt a new method. They do so by randomly selecting another researcher j as a target for possible imitation. Agent i copies the method of agent j with a probability given by the following sigmoid function
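A logistic function of the credit difference is one standard choice for an imitation rule of this kind. The sketch below assumes that form. The symbol $s$ (a parameter controlling how sharply copying tracks credit differences) and the exact argument are assumptions introduced here for illustration.

```latex
\Pr(i \text{ copies } j) = \frac{1}{1 + \exp\bigl[-s\,(C_j - C_i)\bigr]}
```

On this form the probability is 1/2 when both agents receive equal credit, approaches 1 when j earns much more than i, and approaches 0 when j earns much less.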

We ran simulations long enough that they reached stable states (where one of the two methods reached fixation) to see whether the population evolved to use the superior method over the course of simulation. A full description of the agent-based model, including all parameters used and a link to the source code, is provided in the Appendix.
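The update process described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' source code: it assumes a linear competence rating, a weighted bias term, and a logistic copying rule with a hypothetical selection-strength parameter `s`, and it uses expected credit without noise.

```python
import math
import random


def expected_credit(uses_s, p, delta, omega, alpha):
    """ASSUMED credit: weighted sum of a blended quality rating and a bias term."""
    x_i = 1.0 + delta if uses_s else 1.0
    rating = omega * x_i + (1.0 - omega) * (1.0 + p * delta)
    same_share = p if uses_s else 1.0 - p
    return (1.0 - alpha) * rating + alpha * same_share


def simulate(delta, omega, alpha, n=100, initial_s=5, s=20.0,
             max_steps=100_000, seed=0):
    """Run one Moran-like imitation process; return the final share using S."""
    rng = random.Random(seed)
    uses_s = [True] * initial_s + [False] * (n - initial_s)
    n_s = initial_s
    for _ in range(max_steps):
        if n_s in (0, n):                 # one method has reached fixation
            break
        i, j = rng.sample(range(n), 2)    # learner i, potential model j
        if uses_s[i] == uses_s[j]:
            continue                      # same method, nothing to copy
        p = n_s / n
        c_i = expected_credit(uses_s[i], p, delta, omega, alpha)
        c_j = expected_credit(uses_s[j], p, delta, omega, alpha)
        # ASSUMED sigmoid: copying is more likely when j earns more credit.
        copy_prob = 1.0 / (1.0 + math.exp(-s * (c_j - c_i)))
        if rng.random() < copy_prob:
            uses_s[i] = uses_s[j]
            n_s += 1 if uses_s[i] else -1
    return n_s / n


if __name__ == "__main__":
    print(simulate(delta=1.0, omega=1.0, alpha=0.0))  # competent, unbiased
    print(simulate(delta=0.1, omega=0.1, alpha=0.9))  # incompetent, biased
```

Repeating `simulate` over many seeds and parameter combinations, and recording the fraction of runs ending at 1.0, would give the kind of invasion proportions reported in the figures, subject to the assumptions listed above.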

Figure 3 shows the proportion of simulation runs in which method S successfully invaded and went to fixation. For these results, we initialize the population with 95% of agents using method A, and with 5% using method S. As is evident, the analytic predictions (pictured as magenta lines) correspond very well to the outcomes of these simulations. 15

Figure 3. Bias and competence trade-off in determining whether the better method can invade. The epistemic difference between methods, δ, shifts the degree of competence necessary to offset bias. Proportion of simulation runs in which superior methods successfully invaded a population of adequate methods, as a function of competence, ω, and self-preferential bias, α, for several values of epistemic difference, δ. Colored cells indicate the proportion of runs in which method S dominated after $10^5$ time steps, averaged across 100 runs for each combination of parameter values. Magenta curves are predictions from the analytical model seen in Figure 2.

As any academic knows, the peer review process is not always a consistent one. For this reason, we also ran simulations in which error was introduced into credit assignment. Under this assumption, the credit assigned to scientist i was

Interactions between communities

We have seen that if bias for existing methods is strong or if competence to assess the quality of new methods is low, inferior methods can persist and superior methods cannot spread. However, this conclusion so far holds only for an isolated community, in which community members always evaluate one another and in which copying always occurs within the community. In this section, we consider what happens when two scientific communities interact. This model is similar to the one-community version, with two exceptions (see Appendix for more details). First, when a scientist is evaluated for credit, the reviewer is chosen from the other community with probability c, which we call out-group credit assignment. Second, when a target is chosen for the potential transmission of methods, the target is chosen from the other community with probability m, which we call out-group copying. These represent two types of interdisciplinarity within a research area. The full two-community model is illustrated in Figure 4, and Table 1 summarizes parameter values we consider.

Figure 4. Model diagram.

Table 1. Parameters used in the multi-community model.
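The two exceptions can be sketched in a few lines. This is an illustration under stated assumptions rather than the authors' code: the function names are hypothetical, communities are represented as plain lists of method labels, and for simplicity the sketch does not prevent an agent from being drawn as their own reviewer or target.

```python
import random


def choose_community(own, out_prob, rng):
    """Return the index (0 or 1) of the community a partner is drawn from.

    With probability `out_prob` the partner comes from the other community;
    otherwise from the focal agent's own community.
    """
    return 1 - own if rng.random() < out_prob else own


def draw_partners(own, communities, c, m, rng):
    """Draw a reviewer and a copying target for an agent in community `own`.

    c: probability of out-group credit assignment (reviewer from other group).
    m: probability of out-group copying (target from other group).
    Returns ((reviewer_group, reviewer_index), (target_group, target_index)).
    """
    reviewer_group = choose_community(own, c, rng)
    target_group = choose_community(own, m, rng)
    reviewer = rng.randrange(len(communities[reviewer_group]))
    target = rng.randrange(len(communities[target_group]))
    return (reviewer_group, reviewer), (target_group, target)
```

Setting c = m = 0 recovers two isolated communities, which is the baseline against which the effects of interdisciplinary contact are compared.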

In particular, we are interested in cases where one community has adopted the superior method, and another the worse one. We consider two communities of size N = 100. We initialize community 1 as before, with 95% of agents using method A and only 5% using the superior method. We choose parameters such that in the absence of inter-group interaction (c = m = 0), the superior method will not spread in community 1. We initialize community 2 with 95% of agents using method S and 5% A, so that the superior method S is stable in the absence of interdisciplinarity. Our analyses show that a moderate amount of interdisciplinary contact can lead to the spread of the superior method in community 1. The rest of this section will elaborate this finding and point to a few cases where it does not always hold.

We begin by focusing on cases where method A is common in a community with either (1) low competence or (2) high self-preferential bias, such that method S fails to spread when rare. The parameters of bias and competence used here are illustrated in Figure 5. The pink horizontal axis represents the case in which superior methods fail to spread because of high bias. The two communities have the same competence, ω = 0.5, but differ in bias with α1 = 0.8 and α2 = 0.2. The turquoise vertical axis represents the case where one community has relatively low competence. Our two communities have the same bias, α = 0.5, but differ in competence with ω1 = 0.2 and ω2 = 0.8.

Figure 5. Relation between competence and bias for the invasion of better methods within a community, for δ = 2. The gray area denotes a region where superior methods cannot invade, and the peach-colored area denotes a region where they can. The points show the scenarios comparing adequate (circles) and superior (squares) communities, with the turquoise points on the vertical line differing on competence (ω = {0.2, 0.8}) and the pink points on the horizontal line differing on bias (α = {0.2, 0.8}).

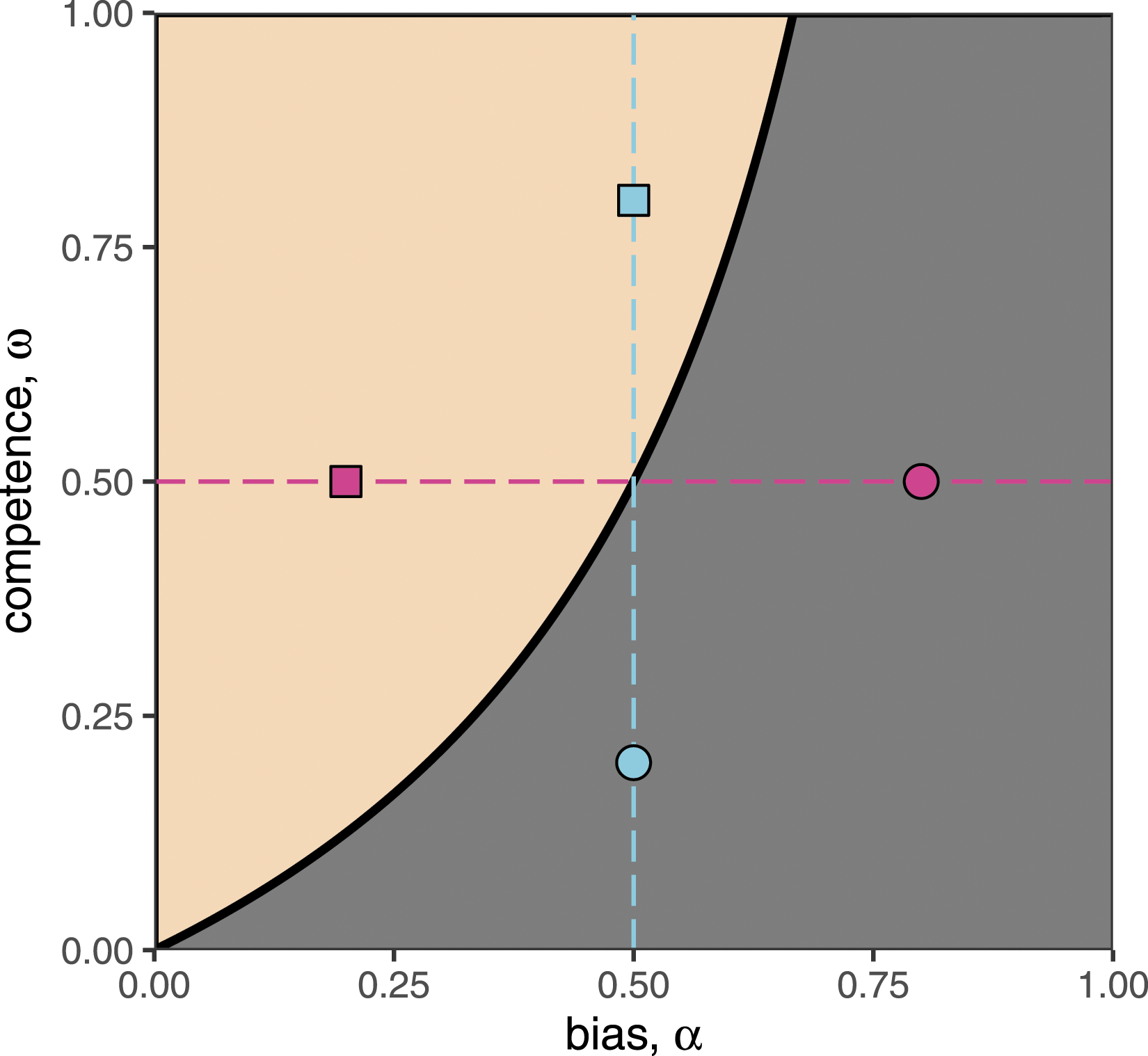

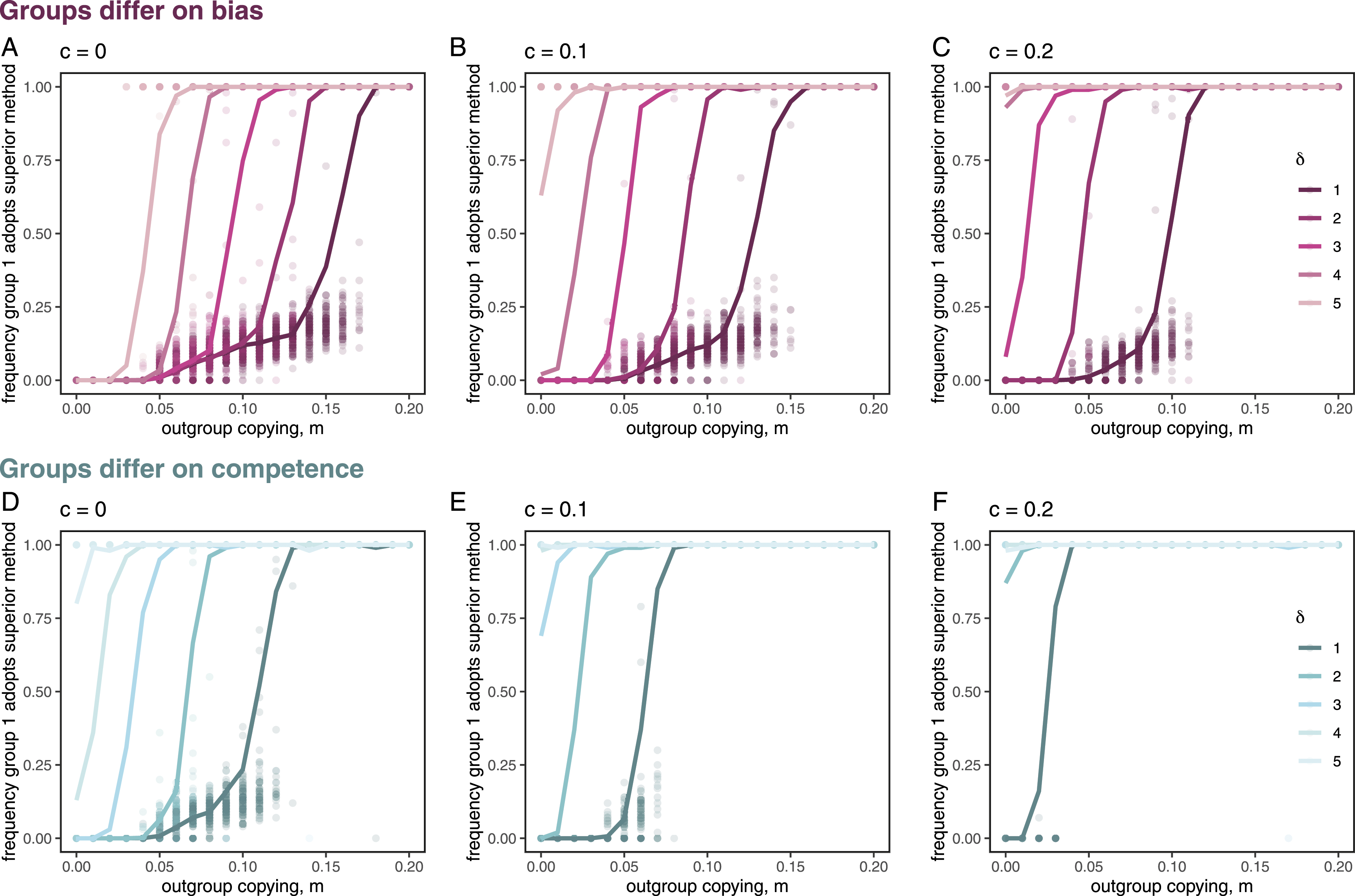

If a small but sufficient number of credit assignments and/or copying observations are made between the communities, the superior method can spread within both the high bias and the low competence communities. The greater the level of out-group copying and/or out-group credit assignment, the greater the likelihood that the superior method ends up spreading through community 1. In addition, these two sources of interdisciplinarity interact additively. Figures 6 and 7(a, b) display these results.

Figure 6. Interdisciplinarity allows the spread of better methods into a group that would otherwise not adopt them. Circles represent the proportion of agents in community 1 (the “adequate” community) who have adopted method S at t = 10⁵ time steps on individual runs of the model, for several values of δ. Lines are the averages across 100 runs for each set of parameter values. Top row (a–c): Superior methods spread to a highly biased group when there is sufficiently high out-group copying, m, and/or out-group credit assignment, c. Here α1 = 0.8, α2 = 0.2, ω1 = ω2 = 0.5. Bottom row (d–f): Superior methods spread to a low competence group when there is sufficiently high out-group copying, m, and/or out-group credit assignment, c. Here ω1 = 0.2, ω2 = 0.8, α1 = α2 = 0.5.

Figure 7. Positive effects of out-group credit assignment and out-group copying are additive. Heat maps display the mean frequency of agents using method S in group 1, p1, after 10⁵ time steps, averaged over 100 runs for each combination of parameter values. This effect holds when groups differ on bias (a: α1 = 0.8, α2 = 0.2, ω1 = ω2 = 0.5) or competence (b: ω1 = 0.2, ω2 = 0.8, α1 = α2 = 0.5). However, interdisciplinarity can also aid the spread of superior methods even when both communities are plagued by high bias (c: α1 = α2 = 0.8, ω1 = ω2 = 0.5) or low competence (d: ω1 = ω2 = 0.2, α1 = α2 = 0.5). For all cases, δ = 2.

Out-group credit assignment from community 2 means that those in community 1 using the superior method receive more credit than their colleagues using the adequate method, even when the latter method is more common in their community. This leads to greater prominence for these individuals and more copying of their methods. The effect of out-group copying is even stronger. Credit assignment is accumulated over multiple interactions, only some of which involve the out-group. Out-group copying, on the other hand, has the potential to introduce superior methods into a community in one fell swoop. Notice that these effects interact with the epistemic distinction between the methods, δ. The greater the epistemic benefit of the superior method, the less interdisciplinarity is needed for its spread.

Strikingly, the spread of better methods by interdisciplinarity does not actually require that community 2 be particularly competent or unbiased. Instead, if a community adopts superior methods by any means, perhaps through some accident of history or through influence from another discipline, they can still pass on these beneficial practices. Figure 7 shows heat maps indicating the spread of superior methods both for the conditions in which communities differ, and for the conditions in which both communities are low in competence or high in self-preferential bias. As we see in Figure 7(c) and (d), even if both communities are incompetent or biased, superior methods still spread through interdisciplinary contact. This indicates that superior methods could potentially spread throughout many communities via a cascade of interdisciplinary contact.

To summarize, interdisciplinary contact, in the form of credit-giving between disciplines and out-group copying, can unseat poor methods and replace them with better ones. This finding holds under a wide range of conditions. In addition to the parameters featured in our figures, we ran additional simulations with varying levels of competence, bias, epistemic advantage, and population size, and found that our results are robust across a very wide range of parameter values. In particular, the precise levels of self-preferential bias and competence seem to matter little as long as method S is stable in group 2. However, while superior methods are usually stable once common, there remain some rare cases in which backsliding is possible. We explore these in the next section.

Can worse methods ever replace superior methods?

We have shown how superior methods can spread from a community in which they are common to a community in which they are rare. But can poor methods also spread due to interdisciplinary contact? Our analyses suggest this outcome is unlikely but not impossible. Consider a scenario in which there is widespread lack of competence to compare two different methodologies. That is, scientists in both communities cannot tell if one method is better than another. Instead they rely entirely on self-preferential bias in making credit assignments. If both communities are strongly biased toward their existing methods, then nothing should change. But what if bias is strongest in the community that has adopted worse methods, and weaker in the community that has adopted superior methods?

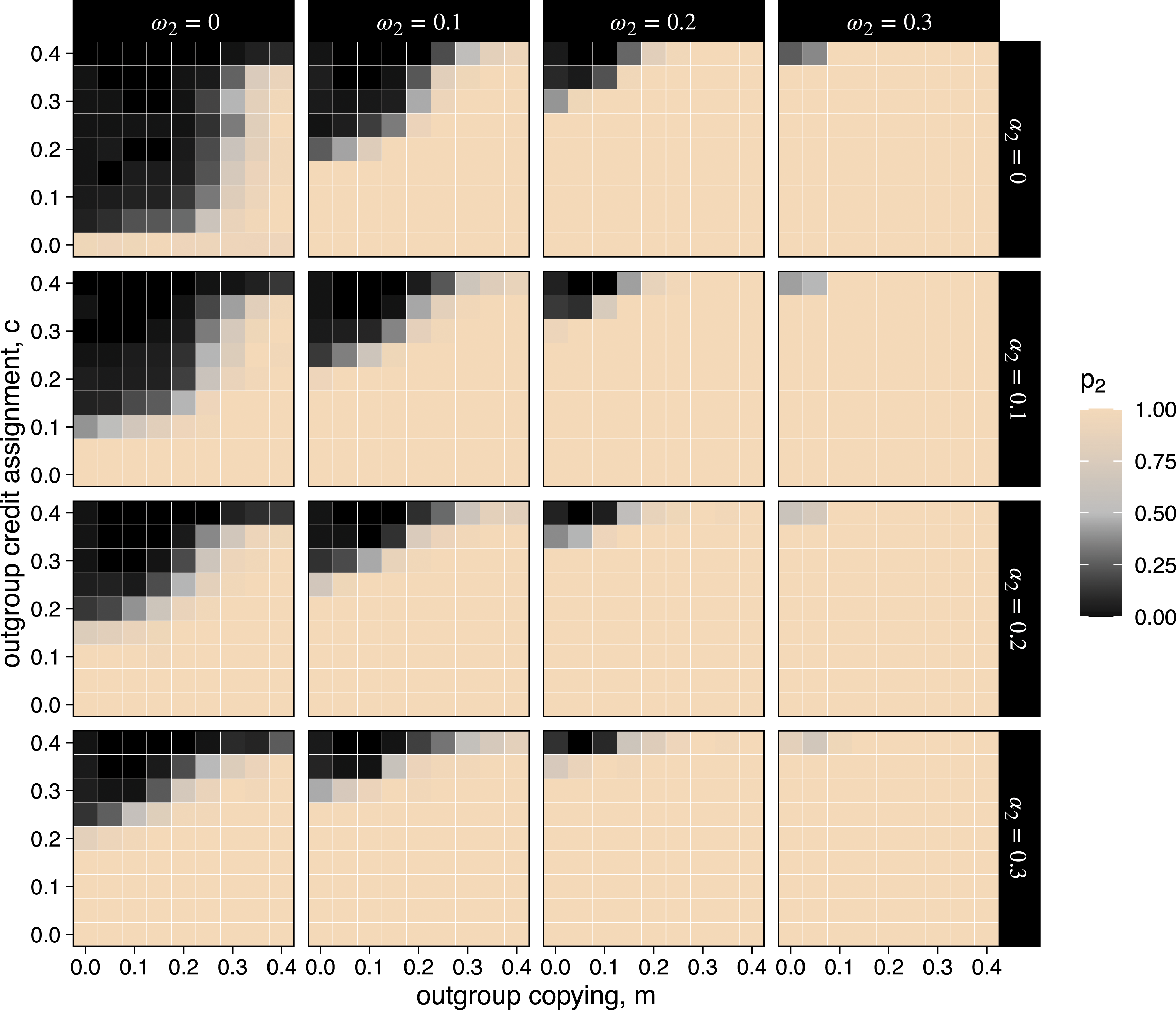

We analyze this “worst case” scenario by again considering two communities in which the superior method is initially rare in community 1 and common in community 2. Both communities lack any competence to compare methods (ω1 = ω2 = 0), and members of community 1 are strongly biased while members of community 2 are completely open minded (α1 = 1, α2 = 0). We find that under these circumstances, the worse method can spread to group 2, and that out-group credit assignment is actually the primary driver of this backslide (Figure 8, top left square). This is because reviewers in group 2 rate all scientific products equally, while reviewers in group 1 give strong ratings only to research using method A.

Figure 8. Frequency of the superior method in group 2 after 10⁵ time steps under the “worst case” scenario. In all cases, ω1 = 0, α1 = 1, and δ = 2. Each cell represents the average over 100 simulation runs for that combination of parameter values, and each box shows the long-term frequency of method S as a function of out-group credit assignment, c, and out-group copying, m. Each column represents a different value of ω2 and each row represents a different value of α2.

Further analysis, however, shows that increasing competence and self-preferential bias in group 2 prevent this backsliding (Figure 8). In other words, worse methods are unlikely to spread between communities as long as those communities have at least some competence or self-preferential bias. This points to a surprising possibility for why such a bias might sometimes be beneficial. In addition, some out-group copying also helps prevent backsliding to worse methods, as it helps both groups to maintain a population of individuals using the superior method.

Productivity advantages for worse methods

Our models suppose that good methods are no more difficult to employ than bad ones. But in many cases, rigorous methods are costly and time-consuming, and part of the draw of non-rigorous ones is that scientists using them can publish more quickly and easily (Smaldino and McElreath, 2016; Heesen, 2018). This was almost certainly part of the draw in the case of MBI in sports science. We might ask: in cases where poorer methods are highly productive, do they instead spread via interdisciplinary contact? The answer is, in certain cases, yes.

In our model, the parameter δ determines the degree to which the superior method is, in fact, superior. Suppose, however, that the adequate method increases productivity relative to the superior method. We can imagine adding a productivity boost, ρ, to the output for this method. In this regime, scientists employing the worse method tend to publish more papers per unit time, as captured by ρ. So although each paper might not be as epistemically important, researchers could still potentially gain a credit advantage by publishing more. The addition of ρ will tend to close the epistemic gap between the methods. This will, in turn, shift the threshold levels of bias and competence for which the better method will take over within one community, as well as the threshold level of community contact for the better method to spread to a new discipline. If ρ is high enough, the worse method will actually yield more epistemic output than the better one. In such a regime, our results indicate that the worse method will instead be the one that spreads when communities come in contact.
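The way ρ closes and then reverses the gap can be made explicit with a short worked comparison. As an assumption for illustration only, suppose the boost is additive, so that the output of method A becomes 1 + ρ while that of method S remains 1 + δ. The symbols v_A and v_S are introduced here and do not appear in the model description; a multiplicative boost would shift the threshold but not the qualitative picture.

```latex
\begin{align*}
v_S &= 1 + \delta, \qquad v_A = 1 + \rho, \\
v_S - v_A &= \delta - \rho .
\end{align*}
% The effective gap shrinks as \rho grows, vanishes at \rho = \delta,
% and reverses for \rho > \delta. Example with \delta = 2:
%   \rho = 1  =>  v_S - v_A =  1   (method S still ahead)
%   \rho = 3  =>  v_S - v_A = -1   (method A ahead)
```

Under this reading, the regime in which the worse method spreads through contact corresponds to ρ > δ.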

Notice that for this to happen in our model, it must be the case that the worse method is actually producing greater net epistemic value on average, even if each individual paper is of worse quality. In the model world, the spread of the worse method in this case actually increases the output of the discipline. Thus, there is some question about whether the spread of the “worse method” counts as an epistemically poor outcome here. Presumably, this regime does not track a case like MBI where, despite its high productivity, the method is so poor that it produces results that are highly questionable. Indeed, it may often be the case that output produced using low-quality methods is worse than producing nothing at all. Imagine a case, though, where a scientist develops off-the-shelf statistical packages that are easily employed and fairly dependable. These packages might replace a practice by medical researchers of hiring trained statisticians to analyze their experimental data. Clearly, the trained statisticians will produce more dependable, accurate analyses and thus more trustworthy and useful epistemic outputs. But using these packages might greatly improve speed of production and allow researchers to direct grant money to new experiments rather than data analysis. For this reason, the use of these easy packages might replace a “superior” practice, but simultaneously increase output in the discipline in a way that is not clearly epistemically worse. In this case, the tradeoffs between short- and long-term costs and benefits to science must be considered in ways that are beyond the scope of the present analysis.

Discussion

Strong bias for current methods or low competence to assess new methods can impede scientific progress. The case of MBI in sports science fits well with the analysis provided here, and there are many other cases that do as well. For instance, the discipline of evolutionary psychology has been widely criticized for sometimes publishing findings that are unsupported and even irresponsible. A central part of these criticisms involves the development of ultimately speculative narratives about the evolution of the human mind. As critics argue, these narratives are too unconstrained, and thus are unlikely to accurately capture facts about human evolution (Gould, 1991; Lloyd, 1999; Lloyd and Feldman, 2002; Gannon, 2002). When such work is published in journals edited and assessed by evolutionary psychologists, both self-preferential bias and lack of competence can contribute to the maintenance of these uncritical applications of evolutionary theory. Competing traditions of more rigorous work in the evolutionary human sciences, including in human behavioral ecology, gene-culture coevolution theory, and also work by more rigorous evolutionary psychologists (cf. Amir and McAuliffe, 2020; Barrett, 2020b; Gurven, 2018), point to promising avenues for future reform. Other candidate examples include the past use of factor analysis in marketing research (Stewart, 1981) and applications of unit root econometrics in macroeconomics (Campbell and Perron, 1991).

A final example worth highlighting is the field of social psychology, which has recently undergone serious methodological changes. Retrospective studies found that, particularly pre-2011, the field was rife with poorly designed studies and improperly analyzed data. Many attempts to replicate high profile findings in the field have failed (Open Science Collaboration, 2015; Ebersole et al., 2016). Some problematic practices like small sample sizes, lack of credible priors, and use of invalid measurement instruments may have persisted for a long time in this discipline because of the types of factors we identify here.

This field, though, also illustrates that not all problematic methods are well-captured by the models we develop. Consider HARKing (hypothesizing after results are known) and p-hacking (selectively reporting or manipulating data to produce desirable inferential statistics). Both of these are what we might call implicit methodologies. They are not typically reported in the details of published work. Reviewers thus do not typically know whether manuscripts they review involve p-hacking or HARKing, and so their self-preferential biases and competence to assess these methods do not bear on their judgments. Instead, the issue stemmed from the competence of the researchers themselves, who likely did not have the statistical and/or philosophical training to understand the problems with these practices. Subsequent improvements in social psychology have benefited from interdisciplinary contact and feedback but appear to have been driven largely by critiques from within the field. This is to say that the mechanisms we focus on throughout this paper are important, but not obligatory, for explaining the persistence, and eventual improvement, of poor methodology.

Our analysis suggests that interdisciplinarity should be promoted, especially contact related to methodological practice, as such contact may facilitate the spread of better methods to new disciplines. We have already talked about some key mechanisms for this contact: the use of competent reviewers from neighboring disciplines; academics receiving grants from, or publishing in, other disciplines; academics attending conferences outside their discipline; and cross-disciplinary collaboration. In addition, the advent of social media platforms has led to increased contact between those in different disciplines, and especially to opportunities for feedback between these groups. This may help to lessen the siloing of academic fields. Furthermore, online platforms may improve opportunities for interdisciplinary credit-giving when academics share or laud cross-disciplinary work. Likewise, explicitly interdisciplinary conferences, publishing outlets, and institutes may play an important role in the spread of good methods. Such institutions bring academics from different disciplines into contact, increasing chances of credit-giving through citations and invitations, and of methodological copying.

Other communities outside academic science, such as governmental bodies and firms, may benefit from similar sorts of contact. Arthur (1989, 1990) describes how accidents of history can lead to lock-in effects in industry where once technologies or practices become common, they are not easily replaced by superior options. Fostering a diversity of practice in different communities, and then bringing these communities into contact, may help. This could involve regular conferences where workers discuss and share their methodologies, presentations about the internal workings of these organizations to other groups, or collaborative efforts between organizations. These sorts of events may improve function at a wider level.

We might also ask: what stands in the way of sufficient interdisciplinarity in science? There are norms against interdisciplinarity in some fields. For instance, some fields consider publication in top insider journals a requirement for promotion. This limits the possibility of interdisciplinary credit-giving. In some cases, these norms might arise from in-group favoritism and biases against the out-group. In other cases, they might arise out of a desire to preserve the special status of a discipline. In such disciplines, moves to change these norms may have positive epistemic impacts. Other fields may be siloed as a result of an inability to understand or engage with outside disciplines. In these cases, improved training may help, such as required graduate courses in methods from nearby fields.

One might conclude from our discussion that the best structure for scientific communities is a flat one, without disciplinary boundaries. But our results do not actually support this conclusion. A single unified scientific community would suffer from the problem indicated by our baseline model: the risk that new and improved methods fail to spread. Many have pointed to the benefits of certain types of diversity in academia. Longino (1990) in particular has advocated for critique across diverse views as important for rooting out poor assumptions and practices in science. Feminist philosophers of science like Longino (1990) and Okruhlik (1994) have lauded personal identity as an important source of beneficial cognitive diversity. Another such source stems from a diversity of educational regimes. However, several forces act to decrease diversity of practice within close-knit communities like academic disciplines, including human tendencies toward conformity, norm following, and practices of indoctrination. Some disciplinary structure may be important in preserving diversity of methods and assumptions. The aim, though, is to have enough contact between disciplines so that this diversity can prove beneficial to science as a whole.

Before concluding, we want to say a little more about the usefulness of our models in informing real world systems. These models are obviously highly simplified, and, as such, may fail to incorporate relevant features of actual scientific communities. This means that in real cases, interdisciplinary contact may not work just as our results suggest. The models, however, can play several epistemic roles in reasoning about interdisciplinary contact. First, they play a “how-possibly” role. At minimum, we see that it is in principle possible for interdisciplinary contact to play a direct causal role in improving poor scientific methodology. More importantly, our models are useful in directing our attention to real world interventions that have real promise vis-à-vis methodological improvement. Our models give good reasons for researchers to pay further attention to, and to further investigate, how and whether interdisciplinary contact can reform areas of the sciences that have fallen behind methodologically. Of course, a number of other researchers have already argued for various benefits to interdisciplinarity. But the models here outline specific causal pathways involving interdisciplinary credit-giving and copying that lead to the spread of superior methods. The viability of such pathways should be a topic for further research.

Acknowledgments

Many thanks to Christie Aschwanden, Kristin Sainani, and Aaron Caldwell for communications about methods in sports science. Thanks to Andrew Vigotsky for sharing unpublished work on sports science. For comments on previous drafts of this manuscript, we thank Liam K. Bright, Remco Heesen, Colin Holbrook, Aaron Lukaszewski, Leo Tiokhin, and Pete Richerson. Thanks to Scott Page and several anonymous referees for feedback. Finally, thanks to the organizers of the Metascience 2019 conference, which provided the interdisciplinary setting at which this paper was first conceived.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This material is based upon work supported by the National Science Foundation under Grant No. 1922424.

Agent-based model description

Consider a population consisting of communities of scientists. We considered cases in which there were always either one or two communities. Each community is made up of N = 100 scientists, each of whom keeps track of their group identity and is characterized by a method x_i ∈ {A, S}. The true epistemic value of method A is set at 1 without loss of generality; the true epistemic value of method S is set at 1 + δ, so that δ represents the epistemic advantage of method S. Each community is initially characterized by a dominant method, which is used by 95% of its population. The remaining 5% of scientists use the non-dominant method. Each community k is further defined by levels of bias, α_k, and competence, ω_k.
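The initialization just described can be sketched as follows. The function and argument names are hypothetical, chosen for illustration; only the values N = 100, the 95%/5% split, and the true values 1 and 1 + δ come from the description above.

```python
import random


def init_community(n=100, dominant="A", minority_share=0.05, rng=None):
    """Return a shuffled list of method labels for one community.

    `dominant` is used by (1 - minority_share) of agents; the other
    method is used by the remaining agents.
    """
    rng = rng or random.Random()
    other = "S" if dominant == "A" else "A"
    n_minority = round(n * minority_share)
    methods = [other] * n_minority + [dominant] * (n - n_minority)
    rng.shuffle(methods)
    return methods


def true_value(method, delta):
    """True epistemic value: 1 for method A, 1 + delta for method S."""
    return 1.0 + delta if method == "S" else 1.0


# Two-community setup: community 1 mostly uses A, community 2 mostly uses S.
community_1 = init_community(dominant="A", rng=random.Random(0))
community_2 = init_community(dominant="S", rng=random.Random(1))
```

Bias and competence (α_k, ω_k) would be stored per community alongside these lists, since the model treats them as homogeneous within each community.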

The dynamics of the model proceed in discrete time steps, each of which consists of two stages: Science and Evolution.