Abstract

Background

Cognitive behavioral therapy (CBT), an umbrella term for therapeutic techniques guided by cognitive behavioral theory, is an evidence-based approach for many psychiatric conditions in youth. A stronger dose of CBT delivery is thought to improve youth clinical outcomes. Although use of CBT techniques is a critical indicator of care quality, measuring it feasibly and affordably is challenging. Certain CBT techniques (e.g., those that are more concrete and observable) may be easier than others to measure using low-cost methods, such as clinician self-report; however, this has not been studied.

Method

To assess the concordance of three methods of measuring CBT technique use with direct observation (DO), clinicians from 27 community agencies (n = 126; Mage = 37.7 years, SD = 12.8; 76% female) were randomized 1:1:1 to a self-report, chart-stimulated recall (CSR; semistructured interviews with the chart available), or behavioral rehearsal (BR; simulated role-plays) condition. In previous work using a global score aggregating 12 CBT techniques, only BR produced scores that did not differ from DO. This secondary analysis examined the concordance of these alternate methods with DO for each discrete CBT technique, testing for differential concordance across cognitive techniques (e.g., cognitive education) compared to behavioral techniques (e.g., behavioral activation).

Results

Results of three-level mixed effects regression models indicated that BR scores did not differ significantly from DO for any technique, and CSR scores did not differ significantly from DO for nine techniques (all ps > .05). In contrast, self-report scores differed from DO for all but one technique, with greater concordance for behavioral than cognitive techniques (z = −3.29, p < .001).

Conclusions

Unlike previous findings using an aggregate score, we found that both BR and CSR did not differ significantly from DO for most techniques tested. These findings have implications within implementation research and usual care settings; they support multiple viable measurement methods that are less resource-intensive than DO.

Plain Language Summary

Psychotherapy interventions typically consist of a combination of techniques used to support client improvement. To assure quality of intervention delivery, it is valuable to measure technique use within session. One way to capture what occurs in session is direct observation (DO). However, this is expensive, time consuming, and often infeasible. Previous research has examined alternate methods of capturing session content that are more feasible and maintain client anonymity. A previous study examining use of cognitive behavioral therapy (CBT), an evidence-based psychotherapy for many disorders in youth, tested three alternatives to DO: self-report (indicate techniques used on standardized form), chart-stimulated recall (CSR; semistructured interviews, with access to session notes), and behavioral rehearsal (BR; simulated role-plays, demonstrating techniques used in session). In this previous study, each method generated a global score that estimated overall CBT use by combining ratings of 12 CBT techniques into a single score. Findings suggested that only one method, BR, was concordant with DO. However, it may be that these alternate methods are more concordant with DO for certain techniques (e.g., behavioral activation vs. cognitive education). In this study, we analyzed these same three methods compared to DO at the level of individual techniques. Unlike previous findings that supported only one alternate method, we found that both BR and CSR were concordant with DO for most techniques. These findings suggest that for several CBT techniques, use can be measured through multiple alternate methods, providing greater flexibility for researchers and clinicians.

Introduction

Cognitive behavioral therapy (CBT), an umbrella term for a set of techniques guided by cognitive behavioral theory (McMain et al., 2015), is considered an evidence-based approach for many psychiatric conditions in young people, including depression, anxiety disorders, and posttraumatic stress disorder (Benjamin et al., 2011; Dorsey et al., 2017; Higa-McMillan et al., 2016; Hofmann et al., 2012; Weisz et al., 2017). Given this, CBT has been the focus of many implementation efforts in public mental health systems serving youth (e.g., Beidas et al., 2019; Dorsey et al., 2016; Hoagwood et al., 2014). While specific CBT protocols differ depending on the condition treated, they typically include a mix of cognitive and behavioral techniques that can be combined or sequenced in different ways (Chorpita & Daleiden, 2009; Chorpita et al., 2005; McLeod et al., 2013; Rector et al., 2014; Weersing et al., 2002; Weisz et al., 2012).

The extent to which these core therapeutic techniques are delivered is theorized to be essential for achieving positive clinical outcomes; enhancing breadth and depth of CBT delivery in routine settings is a priority for implementation initiatives (Chiapa et al., 2015; Hogue et al., 2008; Huey Jr et al., 2000; McLeod et al., 2013; Miller & Rollnick, 2014; Proctor et al., 2011; Schoenwald et al., 2011). The use of techniques can be measured in a continuous manner by examining extensiveness (i.e., how thoroughly a technique is delivered) or categorically by examining occurrence (i.e., the presence or absence of a technique). Further, CBT techniques can be measured in the aggregate (e.g., using a composite score to capture global use of CBT techniques) or at the level of discrete techniques (e.g., separate scores for each technique used). Given the heterogeneous nature of CBT protocols, which often vary in the particular techniques used and the extent to which they are emphasized, measuring use at the level of discrete techniques has practical utility.

There are numerous challenges to feasibly and affordably measuring clinician use of CBT techniques, particularly in community mental health (CMH) settings, where resources are limited (Schoenwald, 2011). Direct observation (DO), which typically involves coding live or recorded sessions, is often used as a proxy for what occurs within a session. This method comes with challenges: it is expensive, logistically challenging, and requires client consent and substantial expertise and infrastructure (Rodrigues-Quintana & Lewis, 2018; Simons et al., 2010). More pragmatic, less costly measurement methods that preserve client anonymity have been developed to decrease research barriers and improve care quality (Glasgow & Riley, 2013; Lewis et al., 2021; McLeod et al., 2013; Proctor et al., 2011). However, studies comparing the concordance of alternate, more pragmatic methods with DO have had mixed results. Many studies suggest that these methods overestimate use of techniques relative to DO, especially when rating extensiveness, rather than occurrence, of a technique (Brosan et al., 2008; Hurlburt et al., 2010; McLeod et al., 2023). For example, clinician self-report (i.e., clinicians report on a questionnaire the extent to which they delivered a technique in a session) is the most widely studied method of measuring technique use and has been shown across studies to have poor concordance with DO (Creed et al., 2016; Martino et al., 2009).

That said, recent conceptual work suggests that self-report may be more concordant with DO when reporting on more concrete techniques than more abstract ones (McLeod et al., 2023). This is supported by previous studies that found differences in concordance between various measurement methods depending on the specific therapeutic technique measured (e.g., Borntrager et al., 2015; Brookman-Frazee et al., 2021; Hogue et al., 2015; Ward et al., 2013). For example, in one study examining concordance between extensiveness ratings made by clinicians and observers, concordance was higher for a behavioral technique (i.e., the “behavioral interventions” item) compared to a cognitive technique (i.e., the “cognitive monitoring” item; Hogue et al., 2015). The literature on informant disagreement supports this; psychopathology symptom scales across multiple types of raters suggest greatest concordance for constructs that are concrete, observable, and unambiguous compared to those that are abstract (Comer & Kendall, 2004; De Los Reyes & Kazdin, 2005; Karver, 2006; Salbach-Andrae et al., 2009). Behavioral techniques (e.g., use of reward charts) are typically more concrete and operationalizable while cognitive techniques are often more diffuse and conceptual (e.g., explaining the cognitive model); thus, we might expect to see greater concordance across measurement methods for behavioral relative to cognitive techniques.

Identifying the techniques for which pragmatic measurement methods are concordant with DO will inform how best to deploy them as research and care quality management tools. A recently completed randomized controlled trial (Becker-Haimes et al., 2022) compared three alternate methods to DO to measure global use of CBT techniques: self-report, chart-stimulated recall (CSR; i.e., clinicians report which techniques they used through a structured interview, referring to the client chart to aid in the recall; Beidas et al., 2016; Guerra et al., 2007; Sinnott et al., 2017) and behavioral rehearsal (BR; i.e., clinicians demonstrate the techniques delivered within session through roleplay with a trained interviewer; Beidas et al., 2014; Epstein, 2007). Unlike DO, these methods do not use identifiable client information or require client consent. Results indicated that when employing a global measure of CBT technique use, only BR produced scores that did not differ significantly from DO scores; self-report and CSR overestimated technique use compared to DO with self-report being the most discrepant (Becker-Haimes et al., 2022). However, this global rating may obscure nuances in how each measurement method performed at capturing discrete CBT techniques. Further, while an aggregate score may be useful for research purposes, to most effectively engage in quality management, it is important to understand the performance of each method at the level of discrete techniques.

We conducted a secondary analysis to (a) examine the extent to which, compared to findings at the aggregate level, there is concordance between each of the three alternate measurement methods and DO for 12 discrete CBT techniques and (b) investigate whether concordance with DO differs for cognitive versus behavioral techniques. We hypothesized that in contrast with findings from the parent trial (that only BR produces scores that do not differ significantly from DO), there may be specific CBT techniques for which more than one alternate method is concordant with DO. Second, we hypothesized that consistent with literature suggesting greater concordance of concrete constructs than abstract constructs, concordance will be greater for behavioral than cognitive techniques across the three conditions.

Method

Procedure

The City of Philadelphia (Approval No. 2016-24) and University of Pennsylvania (Approval No. 834079) Institutional Review Boards approved this study. We obtained consent or assent from all participants. Fifty-three agencies in the Philadelphia tristate area were informed about the study, and 40 agencies expressed interest. Of those agencies, 39 were eligible (i.e., had clinicians who delivered CBT to study-eligible youth), and 27 agencies geographically spread across the Philadelphia tristate area participated (i.e., allowed clinician recruitment at their site). Clinicians at these sites were recruited via staff meetings. All agencies were publicly funded and accepted Medicaid; most had participated in city-sponsored implementation efforts for CBT for youth (Beidas et al., 2013, 2019; Powell et al., 2016). Agencies primarily offered outpatient care and varied in size with regard to youth served annually. Across agencies, a mean of 15.5 clinicians provided services to youth (SD = 2.8; range: 3–43) and a mean of 2.5 clinicians in primarily supervisory roles also provided services to youth (SD = 2.5; range: 1–9).

Clinicians who consented were randomized 1:1:1 to one of three alternate measurement conditions (i.e., self-report, CSR, and BR). To be eligible, clinicians had to identify at least three sessions with eligible clients in the month following enrollment. Eligible sessions were those in English involving clients aged 7–24 (with a legal guardian who could provide consent), in which clinicians intended to use at least one of 12 CBT techniques (see Beidas et al., 2016 for detailed eligibility criteria). All client sessions were audio-recorded, stored in a HIPAA-compliant platform, and later coded using DO procedures. Clinicians and clients were compensated for their participation (see Becker-Haimes et al., 2022 for further details beyond what is provided below).

Participants

We recruited 126 clinicians from the Philadelphia tristate region from participating agencies between October 2016 and May 2020; 103 of those recruited enrolled at least one client included in the current analysis. Clinicians were primarily female (76%), White (71%), master's-level (95%), and a mean of 37.7 years old (SD = 12.8). A slight majority of clinicians were salaried (53.5%) while the rest were fee-for-service clinicians; approximately half (48%) were licensed. Clinicians had worked a mean of 10.4 years (SD = 1.0) in human services and a mean of 3.9 years (SD = 0.5) at their present agency; they carried a mean caseload of 20.7 active patients (SD = 1.4). The demographics of the clinicians within these agencies reflected those of mental health clinicians nationally and in previous work within public mental health agencies in Philadelphia. Clients were primarily male (58%), and the majority identified as Black (42%) or White (33%); they were a mean age of 13.4 years old (SD = 3.9). Client diagnoses (per clinician report) included primarily internalizing (53%) and externalizing (40%) disorders.

Techniques of Interest

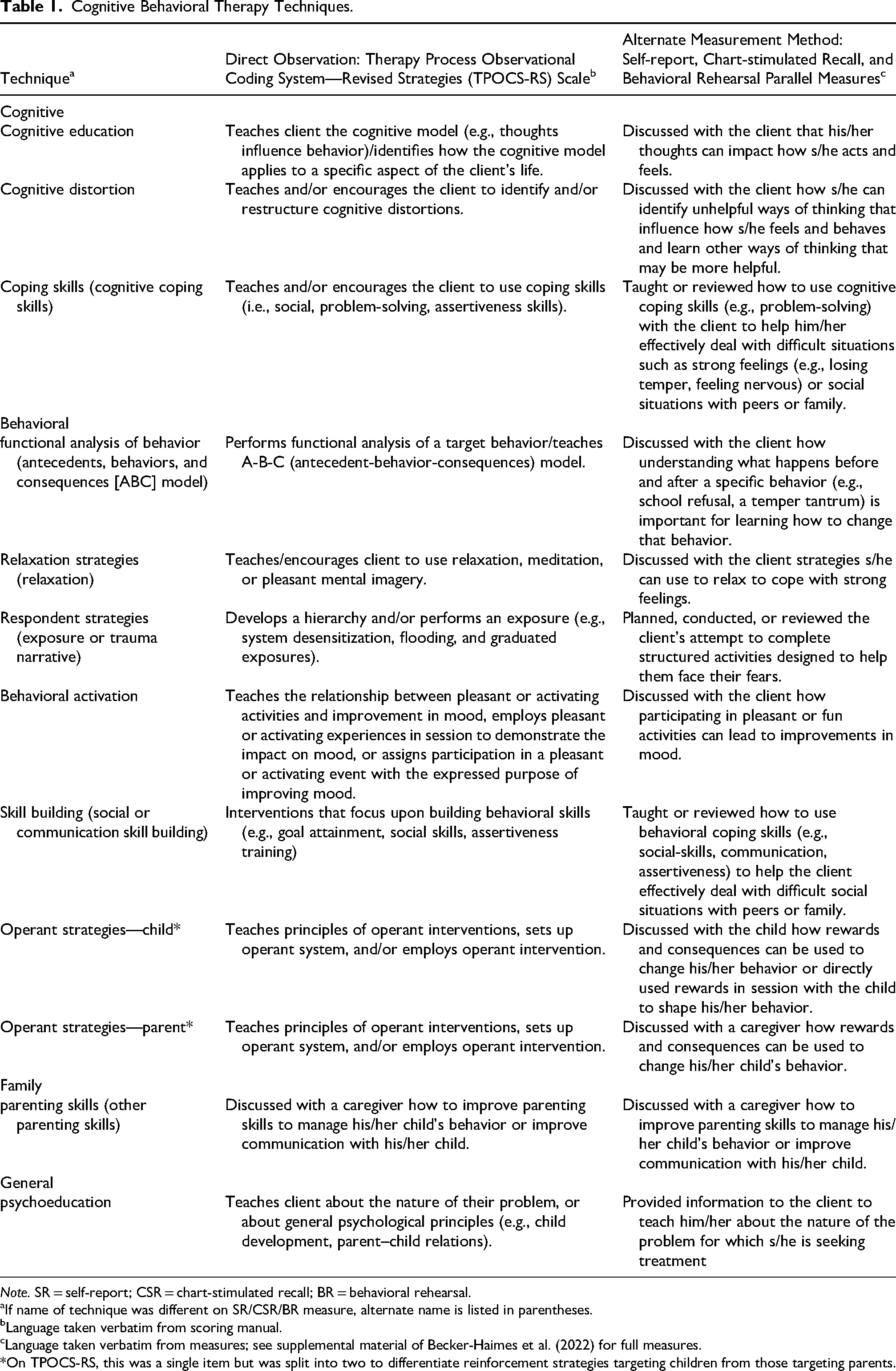

Twelve techniques commonly used in CBT for youth were selected from the Therapy Process Observational Coding System—Revised Strategies (TPOCS-RS) Scale for inclusion in the parent trial (McLeod et al., 2015; described further below). Of these techniques (see Table 1 for definitions), three were drawn from the Cognitive subscale (Cognitive Education, Cognitive Distortion, Coping Skills), six from the Behavioral subscale (one of which was split into two separate techniques, for a total of seven behavioral techniques: Functional Analysis of Behavior, Relaxation Strategies, Respondent Strategies, Behavioral Activation, Skill Building, Operant Child Strategies, Operant Parent Strategies), one from the Family subscale (Parenting Skills), and one from the General subscale (Psychoeducation).

Table 1. Cognitive Behavioral Therapy Techniques.

Note. SR = self-report; CSR = chart-stimulated recall; BR = behavioral rehearsal.

If name of technique was different on SR/CSR/BR measure, alternate name is listed in parentheses.

Language taken verbatim from scoring manual.

Language taken verbatim from measures; see supplemental material of Becker-Haimes et al. (2022) for full measures.

*On TPOCS-RS, this was a single item but was split into two to differentiate reinforcement strategies targeting children from those targeting parents.

Measures

Direct Observation

Directly observed use of CBT techniques was captured using the TPOCS-RS Scale (McLeod & Weisz, 2010; McLeod et al., 2015). This coding system was developed to capture clinician use of 42 child psychotherapy techniques categorized into six subscales (Cognitive, Behavioral, Psychodynamic, Family, Client-Centered, and General). The TPOCS-RS has demonstrated good internal consistency and validity (McLeod et al., 2015; Smith et al., 2017). Ratings on the TPOCS-RS use a 7-point Likert-type scale ranging from 1 (not at all) to 7 (extensively). Ratings are given for extensiveness, which comprises thoroughness (the “depth” with which the technique is delivered) and frequency (how often the clinician uses the technique within the session).

A team of 11 study staff members coded the 288 audio-recorded sessions. Study staff, including clinical research staff, doctoral students, and doctoral-level staff, underwent extensive training before coding using established procedures (McLeod et al., 2015). Coders met regularly to prevent drift, and nearly half of the sessions were double-coded by a doctoral-level expert coder. Interrater agreement was high on all techniques (item intraclass correlations [ICCs] ranged from .76 to .95).

Alternate Measurement Methods

The three alternate measurement methods were designed to parallel the TPOCS-RS; each method included 12 CBT techniques and used the same rating scale and anchors. To maximize clarity, the name and description of several techniques were altered slightly for use in clinician-facing activities (we use names from the original TPOCS-RS; see Table 1 for alternative names and definitions; see supplemental files from Becker-Haimes et al. (2022) for full self-report, CSR, and BR measures). In the self-report condition, we asked clinicians to complete the measure within 48 hr of the client session. In the BR and CSR conditions, clinicians typically completed measures within one week of the client session. Study staff involved in CSR and BR interviews were the same as those who coded directly observed sessions. Coders met regularly to prevent drift.

Self-Report

In the self-report condition, use of CBT techniques was measured using the TPOCS Self-Reported Therapist Intervention Fidelity for Youth (TPOCS-SeRTIFY; Becker-Haimes et al., 2021). Initial psychometric analysis of this measure suggests robust item performance and concordance with another established self-report measure. To mitigate some of the previously noted limitations of self-report, this measure was codeveloped with community clinicians, included a companion manual with examples of each CBT technique, and was accompanied by a brief clinician training in how to accurately self-rate before use. Prior to recording client sessions, clinicians in the self-report condition met with a study staff member for approximately 30 min in person or by phone for training on how to use the self-report measure. Following their utilization of the self-report measure, clinicians were asked to complete a brief questionnaire regarding their use of the companion manual, including reporting their time spent independently reviewing the manual (e.g., reading operational definitions of each CBT technique, reading sample vignettes of clinician behaviors and how those behaviors should be rated) prior to using the measure; they reported doing so for a mean of 13.5 min (SD = 2.9; range: 0–90).

Chart-Stimulated Recall

The CSR condition consisted of an interview, during which the clinician was prompted to review their clinical session documentation to facilitate accurate reporting of session content; responses were then coded using a scale that paralleled the TPOCS-RS. Interviews were conducted at clinicians’ agencies. Each study staff member was initially trained in the TPOCS-RS and once reliability standards were met, mock interviews were carried out, followed by two supervised interviews in the field. Nearly a quarter of sessions were double-coded for reliability by a doctoral-level staff member with expertise in CBT. Interrater agreement was excellent (item ICCs ranged from .90 to .98).

Behavioral Rehearsal

In the BR condition, clinicians participated in a semistructured roleplay, demonstrating each CBT technique used within the client session, with a study staff member playing the role of the client. This method is commonly used in medicine, and we adapted it for mental health settings (Beidas et al., 2014). Roleplays for each client session were limited to 15 min and were audio-recorded. Study staff later rated recordings for the extensiveness of each technique endorsed by the clinician (i.e., each technique demonstrated in role-play). Study staff raters used a scale that paralleled the TPOCS-RS. Raters were first trained in the TPOCS-RS and, once they reached reliability standards, completed ratings of two mock BRs and one supervised BR before independent administration. A doctoral-level staff member with CBT expertise double-coded 42% of BRs, and interrater agreement was excellent (item ICCs ranged from .84 to 1.00).

Analytic Plan

To better understand patterns in the use of discrete techniques within sessions (including both extensiveness and occurrence), we first visually inspected the DO scores and calculated descriptive statistics for the 12 CBT techniques, including mean extensiveness ratings and the percentage of sessions in which each technique occurred (i.e., technique rated as 2 or higher). Given the data structure, analysis accounted for three levels of nesting (Level 1 = client, Level 2 = clinician, Level 3 = agency) using three-level regression models with random intercepts. To address our primary aim, we used SAS Version 9.4 to calculate the least squares mean paired difference between TPOCS-RS DO scores and the scores from each alternate measurement condition. Consistent with the analytic approach employed in the primary outcomes paper, we used a null hypothesis significance testing approach given that there was no robust basis for specifying a predefined equivalence margin (Becker-Haimes et al., 2022). The primary outcome was the paired difference between the extensiveness score generated by DO and that generated by each alternate measurement condition for each of the 12 CBT techniques. A nonsignificant p-value indicated that the condition did not produce scores that differed significantly from DO scores for that particular technique. We used Cohen's d to interpret the magnitude of the difference score, using recommendations for calculating effect sizes within the context of mixed models (Feingold, 2013). Effect size interpretation followed conventional guidelines; Cohen's d values of .20, .50, and .80 indicated small, medium, and large effects, respectively (Cohen, 2013).

To assess the concordance of each alternate method with DO for cognitive versus behavioral techniques, a mean least squares mean paired difference score was calculated for the three cognitive techniques (i.e., Cognitive Education, Cognitive Distortion, Coping Skills) and the seven behavioral techniques (i.e., Functional Analysis of Behavior, Relaxation Strategies, Respondent Strategies, Behavioral Activation, Skill Building, Operant Child Strategies, Operant Parent Strategies). We used z-tests to compare the mean cognitive and behavioral least squares mean paired difference for each alternate method. An alpha level of .05 was used for all analyses.
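
The cognitive-versus-behavioral comparison can be illustrated with a short sketch. The exact form of the z statistic is not reported here, so the version below assumes the common form for two independent estimates, z = (m_cog - m_beh) / sqrt(SE_cog^2 + SE_beh^2), with a two-tailed p-value from the standard normal distribution. The function name and all input values are hypothetical and are not study data.

```python
import math

def z_test_difference(mean_a, se_a, mean_b, se_b):
    """Two-tailed z-test for the difference between two estimates.

    Assumption: the two estimates are treated as independent, so the
    standard error of their difference is sqrt(se_a**2 + se_b**2).
    """
    z = (mean_a - mean_b) / math.sqrt(se_a ** 2 + se_b ** 2)
    # Two-tailed p-value under the standard normal distribution.
    p = math.erfc(abs(z) / math.sqrt(2))
    return z, p

# Hypothetical mean paired differences; as in the Results, negative values
# mean the alternate method scored higher than DO.
cognitive_mean, cognitive_se = -1.50, 0.20
behavioral_mean, behavioral_se = -0.80, 0.15

z, p = z_test_difference(cognitive_mean, cognitive_se,
                         behavioral_mean, behavioral_se)
print(f"z = {z:.2f}, two-tailed p = {p:.4f}")
```

Under this sign convention, a negative z indicates that the cognitive techniques deviated further from DO (in the direction of overestimation) than the behavioral techniques did.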

Results

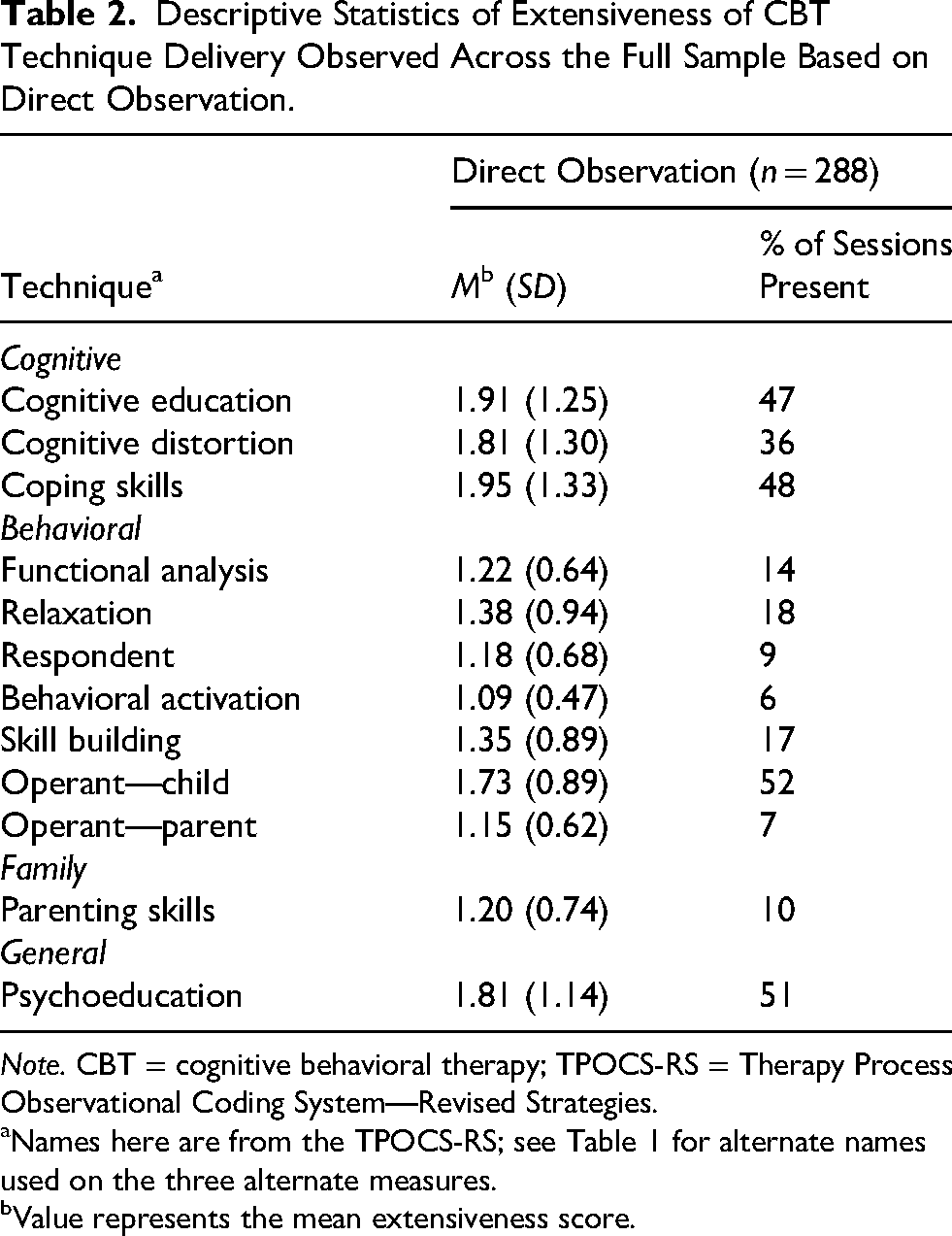

Descriptive Findings: Use of Discrete CBT Techniques

Missing data were rare (0.02%), with no variable missing more than 1.4% of values. Table 2 shows the means and standard deviations of the DO extensiveness scores for the 12 CBT techniques and the percent of the 288 sessions in which each technique was used. The techniques used most extensively were Coping Skills (M = 1.95, SD = 1.33), Cognitive Education (M = 1.91, SD = 1.25), Cognitive Distortion (M = 1.81, SD = 1.30), and Psychoeducation (M = 1.81, SD = 1.14). The techniques used in the greatest number of sessions were Operant Child Strategies (52%), Psychoeducation (51%), and Coping Skills (48%). The techniques used least extensively, which also occurred in the fewest sessions, were Behavioral Activation (M = 1.09, SD = 0.47; 6% of sessions), Operant Parent Strategies (M = 1.15, SD = 0.62; 7% of sessions), and Respondent Strategies (M = 1.18, SD = 0.68; 9% of sessions). Table 3 shows the means, standard deviations, and percent occurrence of the 12 CBT techniques using each alternate measurement method and DO. DO scores were largely consistent across conditions; the slight differences in scores across conditions were neither statistically significant nor meaningful and were consistent with natural variation. Across conditions, mean extensiveness scores were typically higher using alternate methods than DO scores, though there were several exceptions to this in the BR condition. Occurrence ratings (with occurrence defined as an extensiveness score of 2 or higher and nonoccurrence defined as a score of 1, on a scale of 1 to 7) followed a similar pattern, with self-report producing large overestimates of technique frequency compared to DO (e.g., occurrence of functional analysis in 73% vs. 14% of sessions) for many techniques. Figure 1 shows differences in occurrence ratings between DO and each alternate measurement condition.
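
The two descriptive summaries reported here, mean extensiveness and percent occurrence, are derived from the same 1-to-7 ratings. The sketch below is illustrative only: the function name and the ratings are hypothetical, and only the occurrence rule (a score of 2 or higher) is taken from the text.

```python
from statistics import mean, stdev

def summarize_technique(scores):
    """Summarize 1-7 extensiveness ratings for one technique.

    Occurrence follows the rule in the text: a rating of 2 or higher
    counts as the technique having occurred; a rating of 1 does not.
    """
    occurred = [score >= 2 for score in scores]
    return {
        "mean": mean(scores),
        "sd": stdev(scores),  # sample standard deviation
        "pct_occurrence": 100 * sum(occurred) / len(scores),
    }

# Hypothetical ratings for one technique across ten sessions.
ratings = [1, 1, 3, 1, 2, 1, 1, 4, 1, 1]
summary = summarize_technique(ratings)
print(summary)
```

Because most sessions receive a rating of 1 for any given technique, a technique can have a low mean extensiveness score while still occurring in a sizable share of sessions, which is why the two summaries are reported separately.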

Figure 1. Percent of Sessions in which Technique was Rated as Having Occurred Using Alternate Method Versus Direct Observation.

Table 2. Descriptive Statistics of Extensiveness of CBT Technique Delivery Observed Across the Full Sample Based on Direct Observation.

Note. CBT = cognitive behavioral therapy; TPOCS-RS = Therapy Process Observational Coding System—Revised Strategies.

Names here are from the TPOCS-RS; see Table 1 for alternate names used on the three alternate measures.

Value represents the mean extensiveness score.

Table 3. Descriptive Statistics of Technique Use Based on Alternate Measurement Method and Direct Observation.

Note. DO = direct observation; SR = self-report; CSR = chart-stimulated recall; BR = behavioral rehearsal; CBT = cognitive behavioral therapy; TPOCS-RS = Therapy Process Observational Coding System—Revised Strategies.

Names here are from the TPOCS-RS; see Table 1 for alternate names used on the three alternate methods.

Sample sizes varied for this analysis, as it only included sessions in which the CBT technique was scored using both DO and the alternate method.

Value represents the mean extensiveness score.

Percent of sessions in which technique was rated as having occurred (i.e., score of 2 or greater).

Comparison of Alternate Methods and DO for Discrete CBT Techniques

Table 4 shows the least squares mean paired difference between the three alternate methods and DO for the 12 techniques. Figure 2 presents the relative magnitude of these differences. The ICCs examine clustering at the agency and clinician levels, with median ICCs of 0.00 (range: 0.00–0.06) for agencies and 0.38 (range: 0.23–0.60) for clinicians; the variability attributable to clinicians was greater than that attributable to agencies. Specific patterns of findings for each alternate method are described below.
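
As a sketch of how clustering ICCs of this kind are typically obtained, the share of variance at each level can be computed from the variance components of a three-level random-intercept model. The ICC definition used in the analysis is not stated here; the version below assumes each level's variance divided by the total variance. The function name and the variance components are hypothetical, chosen only to resemble the reported medians.

```python
def variance_partition(var_agency, var_clinician, var_residual):
    """Proportion of total variance attributable to each clustering level.

    Assumption: ICC at a level = that level's random-intercept variance
    divided by the sum of all variance components.
    """
    total = var_agency + var_clinician + var_residual
    return {
        "agency": var_agency / total,
        "clinician": var_clinician / total,
    }

# Hypothetical variance components (not study estimates).
icc = variance_partition(var_agency=0.0, var_clinician=0.38, var_residual=0.62)
print(icc)
```

With components like these, nearly all clustering sits at the clinician level, which is the pattern the reported medians describe.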

Figure 2. Least Squares Mean Paired Difference for Extensiveness Ratings Using Alternate Method and Direct Observation.

Table 4. Comparison of Alternate Measurement Methods to Direct Observation.

Note. SR = self-report; CSR = chart-stimulated recall; BR = behavioral rehearsal; DO = direct observation; CBT = cognitive behavioral therapy; TPOCS-RS = Therapy Process Observational Coding System—Revised Strategies.

Names here are from the TPOCS-RS; see Table 1 for alternative names used on the three alternate measures.

Sample sizes varied for this analysis, as it only included sessions in which the CBT technique was scored using both DO and the alternate method.

Value represents the least squares mean paired difference between the alternate method and direct observation.

Cohen's d is calculated as the quotient of the parameter estimate from the least squares model divided by the product of the standard error and the square root of n.

Presents the p-value of the significance of the intercept of the three-level regression model comparing alternate method and direct observation score. A significant p-value indicates that the least squares paired mean difference is not equal to zero.

p > .05, indicating no significant difference in score between alternate measurement condition and direct observation.
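
The effect size definition in the table note can be written out as a short calculation. The sketch below follows that note (the estimate divided by the product of the standard error and the square root of n) and applies the conventional .20, .50, and .80 cutoffs cited in the Analytic Plan. The function names, the label for values under .20, and the input values are hypothetical.

```python
import math

def cohens_d(estimate, std_error, n):
    """Cohen's d as described in the table note: estimate / (SE * sqrt(n))."""
    return estimate / (std_error * math.sqrt(n))

def label_effect(d):
    """Label the magnitude of d using the conventional cutoffs."""
    size = abs(d)
    if size >= 0.80:
        return "large"
    if size >= 0.50:
        return "medium"
    if size >= 0.20:
        return "small"
    return "below small"  # label for d under .20 is an assumption

# Hypothetical inputs: paired difference of -0.50, SE of 0.10, n of 100.
d = cohens_d(estimate=-0.50, std_error=0.10, n=100)
print(f"d = {d:.2f} ({label_effect(d)})")
```

In the simple case where the standard error equals the standard deviation divided by the square root of n, this expression reduces to the estimate divided by the standard deviation.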

Self-Report

When clinicians self-reported their use of CBT techniques, the least squares mean paired difference significantly differed from zero for all but one technique (all ps < .001; Cohen's d ranged from 0.56 to 1.13), all in the direction of overestimating DO scores. Difference scores in this condition were highly variable, with large effect sizes (≥ 0.81) for seven of the 12 techniques. The one technique that did not differ significantly from DO was Operant Child Strategies (Mdiff = −0.30, Cohen's d = 0.18, p = .06), the technique used most frequently across sessions.

Chart-Stimulated Recall

When using CSR, the least squares mean paired difference was significantly different from zero for three techniques: Cognitive Distortion (Mdiff = −0.48, Cohen's d = 0.28, p = .007), Functional Analysis of Behavior (Mdiff = −0.50, Cohen's d = 0.33, p = .001), and Cognitive Education (Mdiff = −0.67, Cohen's d = 0.36, p < .001). For these techniques, CSR overestimated the extensiveness of use compared to DO. However, for the other nine techniques, least squares mean paired difference scores did not significantly differ from zero (all ps ≥ .05). For these nine techniques, the differences from DO were small in magnitude and less variable than those produced using self-report (Cohen's d ranging from 0.01 to 0.21).

Behavioral Rehearsal

The least squares mean paired difference was not significantly different from zero for any of the 12 techniques (all ps ≥ .20). The differences from DO were small in magnitude overall and less variable than those for both self-report and CSR (Cohen's d ranging from 0.01 to 0.13).

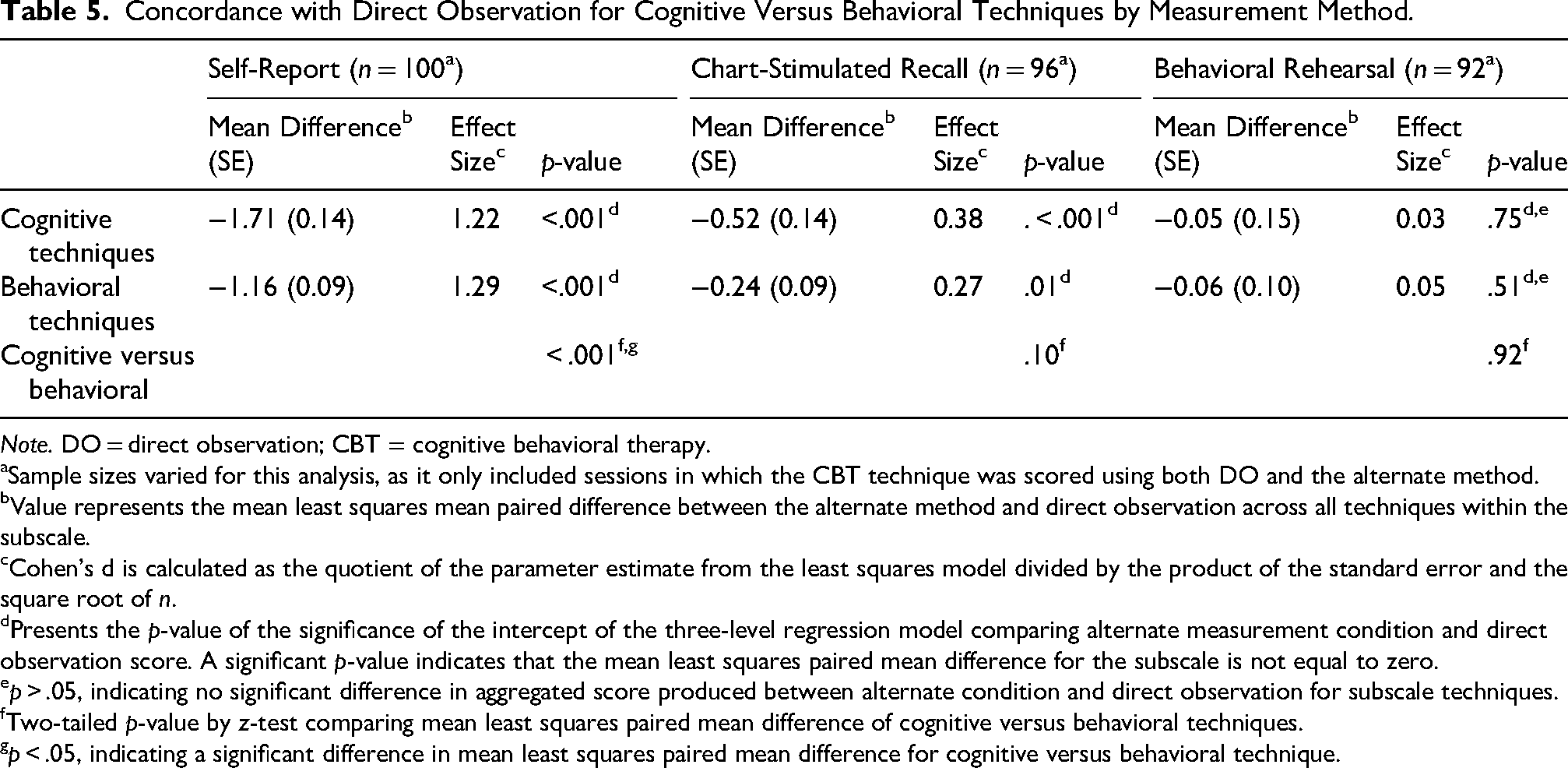

Concordance of Alternate Methods with DO for Cognitive Versus Behavioral Techniques

Table 5 shows the comparison, using z-tests, of the mean least squares mean paired differences for cognitive versus behavioral techniques for each of the three alternate measurement methods. When use was captured using self-report, concordance was significantly greater for behavioral than cognitive techniques (z = −3.29, p < .001). When use was captured using CSR and BR, there was no statistically significant difference in concordance for cognitive versus behavioral techniques (all ps ≥ .10).

Table 5. Concordance with Direct Observation for Cognitive Versus Behavioral Techniques by Measurement Method.

Note. DO = direct observation; CBT = cognitive behavioral therapy.

Sample sizes varied for this analysis, as it only included sessions in which the CBT technique was scored using both DO and the alternate method.

Value represents the mean least squares mean paired difference between the alternate method and direct observation across all techniques within the subscale.

Cohen's d is calculated as the quotient of the parameter estimate from the least squares model divided by the product of the standard error and the square root of n.

Presents the p-value of the significance of the intercept of the three-level regression model comparing alternate measurement condition and direct observation score. A significant p-value indicates that the mean least squares paired mean difference for the subscale is not equal to zero.

p > .05, indicating no significant difference in aggregated score produced between alternate condition and direct observation for subscale techniques.

Two-tailed p-value by z-test comparing mean least squares paired mean difference of cognitive versus behavioral techniques.

p < .05, indicating a significant difference in mean least squares paired mean difference for cognitive versus behavioral technique.

Discussion

We examined the concordance of three alternate measurement methods capturing CBT use with DO across 12 discrete CBT techniques. In contrast to previous findings that used an aggregate CBT score and found that only BR produced scores that did not differ from DO, we found that for most techniques tested, both BR and CSR scores did not differ significantly from DO. In line with our hypothesis that relying on aggregated CBT scores may obscure nuance in how each measurement method performs, these findings suggest that for many CBT techniques, there are two viable alternatives to DO that do not require client consent or use identifiable client information and that may be more feasible within routine practice settings. In line with previous research, self-report overestimated use for all but one technique. In partial support of our second hypothesis, we found greater concordance for behavioral versus cognitive techniques when using self-report, though not when using the other two alternate methods.

Findings have implications for implementation research and clinical care, as researchers and practitioners may have greater flexibility in selecting a measurement method that is feasible, fitting, and cost-effective within their setting. This flexibility may advance the use of these more pragmatic measurement methods within research trials and increase the likelihood that measurement of session content occurs in the community, contributing to improved quality of care. Given that agencies are increasingly asked to demonstrate quality of care for funding and reimbursement purposes, the availability of multiple options for measurement may be particularly beneficial. Additionally, ICC analyses revealed that a greater proportion of the variance in scores was attributable to clinicians compared to agencies (i.e., nearly 40%, rather than close to 0%), providing support for continued use of strategies aimed at clinicians, as was done in the current study.
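
The variance partitioning behind these ICCs can be illustrated with a short sketch. It assumes a three-level random-intercept model whose variance components are agency, clinician, and a residual term; the function name and the variance values are hypothetical, chosen only so that the clinician share is 40% and the agency share is 0%, and are not the study's estimates.

```python
def variance_shares(var_agency: float, var_clinician: float,
                    var_residual: float) -> dict[str, float]:
    """Proportion of total variance attributable to each level.

    Assumes a random-intercept model with agency, clinician, and residual
    variance components (an assumption of this sketch).
    """
    components = {
        "agency": var_agency,
        "clinician": var_clinician,
        "residual": var_residual,
    }
    if any(v < 0 for v in components.values()):
        raise ValueError("variance components must be non-negative")
    total = sum(components.values())
    if total == 0:
        raise ValueError("total variance must be positive")
    return {level: v / total for level, v in components.items()}


# Hypothetical variance components, for illustration only.
shares = variance_shares(var_agency=0.0, var_clinician=0.4, var_residual=0.6)
```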

Consistent with findings based on an aggregate CBT score, self-report overestimated CBT use relative to DO for all but one technique and was the method most discrepant from DO. This finding is consistent with previous literature documenting that self-report produces inflated scores relative to DO (Brosan et al., 2008; Carroll et al., 2007; Hurlburt et al., 2010; Martino et al., 2009). Even with the additional training in self-rating, self-report remained discordant with DO and highly variable for extensiveness and occurrence ratings across almost all measured techniques (see Figure 1), raising concerns about whether self-reported CBT use scores can be used effectively. One potential avenue for improving the concordance of self-report with DO may be understanding the similarities and differences between self-report and CSR. Based on visual inspection, although scores generated through CSR are much closer to DO scores than those generated through self-report, both self-report and CSR scores follow a similar pattern of deviation from DO (see Figure 2). Given that, to some extent, both conditions rely on clinicians reporting the techniques used during the session, it may be that the attributes present only in CSR (e.g., the presence of another person; having access to the client chart) contribute to its greater relative concordance with DO. Future research should investigate whether modifying the self-report condition (e.g., instructing clinicians to consult the chart while self-rating or to complete a computer-assisted self-report interview) can increase its concordance with DO. Alternatively, a hybrid model that integrates CSR and self-report may improve concordance with DO. If this is not the case, the field may need to become more selective about when to employ clinician self-report and more aware of its limitations.
Further, previous research supports greater concordance between self-report and DO when rating evidence-based techniques beyond CBT (i.e., family therapy techniques), suggesting that the concordance between self-report and DO differs across discrete techniques and therapeutic interventions (e.g., Hogue et al., 2015). Future research should seek to better understand the intervention characteristics associated with concordance of self-report and DO (e.g., level of concreteness or complexity).

In addition to investigating the relative concordance of each alternate method with DO for discrete techniques, we were interested in whether these methods were more concordant for items from the behavioral subscale (i.e., concrete, observable techniques) than the cognitive subscale (i.e., conceptual, diffuse techniques), as has been found in previous work (e.g., Hogue et al., 2015). In partial alignment with our hypotheses and consistent with the larger informant disagreement literature (e.g., Comer & Kendall, 2004), concordance was greater for behavioral versus cognitive techniques when using self-report. However, no significant difference was found using the other two alternate methods. It may be that challenges associated with capturing the use of more abstract techniques are mitigated when consulting an objective chart or engaging in a roleplay, which may require a deeper level of engagement from clinicians than filling out a questionnaire, resulting in more accurate reporting. This finding may be useful in routine practice, such as within supervision, where clinicians are often asked to report what occurred in a session. To balance efficiency with accuracy, supervisors may opt to allow for quicker self-reporting of certain techniques (e.g., those that are highly concrete and behavioral) while designating more of their limited supervision time to have clinicians “show” how they deployed other techniques (e.g., more abstract, cognitive techniques). In the current study, we used existing TPOCS-RS subscales to differentiate cognitive from behavioral techniques as a proxy for more abstract and diffuse versus concrete and observable techniques. It may be that differences in concordance between these groups would be more pronounced (and potentially present across all conditions) when distinguishing these techniques using a different coding approach. 
In future research, rather than categorizing a technique as cognitive or behavioral based on the subscale it is drawn from, techniques could be coded for specific characteristics hypothesized to be associated with greater concordance with DO, such as degree of experientiality. For example, it may be that rather than how cognitive or behavioral a technique is in concept, concordance is driven more by how experiential that technique is within the session. When using self-report, we found relatively low concordance for Behavioral Activation compared to other behavioral techniques. Though highly behavioral when put into practice, Behavioral Activation is typically completed outside of the session. Within the session, delivery is usually didactic (explaining the relationship between activity and mood) or focused on planning and reviewing activities. Contrastingly, Respondent Strategies, for which the concordance of self-report with DO was relatively high, often involve within-session exposures. Future research should examine whether there are additional characteristics of these CBT techniques associated with differential concordance with DO across methods, such as the complexity of techniques or the extent to which clinicians find them difficult to deliver, as has been done in previous work examining family therapy techniques (e.g., Hogue et al., 2015).

Our findings should be interpreted in the context of several limitations. First, as with any study using multiple measurement forms, findings may have been impacted by measurement-related error. Given that some techniques are defined by a relatively narrow set of discrete behaviors (e.g., Respondent Strategies) and others are relatively broad (e.g., Coping Skills; see Table 1), it may be that discrepancies in the breadth of each technique contribute to how effectively clinicians can report use (McLeod et al., 2013, 2023; Schoenwald, 2011). For example, our finding that self-report did not differ from DO for Operant Child Strategies may reflect the broad definition of this technique, which may have produced DO scores that were higher than they would have been had the technique been defined more narrowly, and therefore closer to those generated through self-report. Relatedly, it may be that the slight variations in wording across the measures contributed to discrepancies in scores. Before definitively stating that a particular method is discordant with DO, further work should be conducted to examine whether these results are replicated. Further, although in this study we examined concordance with DO, it should be noted that DO is a proxy for what “actually” occurred in session and that, to date, the empirical link between DO and treatment outcomes is still tenuous (Snider et al., 2021). Second, the overall use of CBT in our sample was very low. DO scores indicate that mean extensiveness of technique use ranged from 1.09 to 1.95 (all over one point below a rating of “somewhat”), and the percentage of sessions in which a given technique was used ranged from 6% to 52%, with seven of 12 techniques used in less than 20% of sessions. It may be that findings would differ in a sample with greater CBT use, and future research should examine these alternate methods in such a sample.
Additionally, our analyses did not account for the frequency with which a technique was used, and it may be that there is a relationship between the extensiveness or occurrence of a technique and its concordance with DO. For example, other factors, such as complexity of an intervention, may have impacted both the frequency with which a technique was used (i.e., only highly skilled clinicians used exposure) and its concordance with DO (i.e., skilled clinicians were better able to report on their use of exposure), resulting in differential levels of concordance across techniques. Third, a limitation of the null hypothesis significance testing approach used in the parent trial and current analyses lies in the interpretability of similarity. Equivalence testing could address this limitation, although without predefined equivalence margins, and given the relatively small magnitude of between-group differences, discernment of meaningful similarities could remain an interpretive challenge (Dixon et al., 2018; Lakens et al., 2018). Finally, although this study took place within the context of CMH agencies, the alternate measurement methods tested were facilitated by study staff members rather than agency staff. As both CSR and BR require the presence of another person, the extent to which these methods could be feasibly implemented and sustained within a resource-limited clinic in a nonresearch capacity is unknown.
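
To illustrate why the equivalence margin matters, the following is a minimal sketch of a two one-sided tests (TOST) procedure using a normal approximation. It is not an analysis performed in this study; the function name, the margin, and the numeric inputs are hypothetical, and the margin in particular would need to be justified before any real application.

```python
import math


def tost_p_value(estimate: float, std_error: float, margin: float) -> float:
    """Two one-sided z-tests for equivalence within (-margin, +margin).

    Returns the larger of the two one-sided p-values; equivalence is
    concluded at level alpha when this value is below alpha.
    Normal approximation is an assumption of this sketch.
    """
    if std_error <= 0 or margin <= 0:
        raise ValueError("std_error and margin must be positive")
    z_lower = (estimate + margin) / std_error  # tests H0: difference <= -margin
    z_upper = (estimate - margin) / std_error  # tests H0: difference >= +margin
    p_lower = 0.5 * math.erfc(z_lower / math.sqrt(2))   # upper-tail area
    p_upper = 0.5 * math.erfc(-z_upper / math.sqrt(2))  # lower-tail area
    return max(p_lower, p_upper)


# Hypothetical paired difference and standard error, for illustration only.
p_wide = tost_p_value(estimate=0.1, std_error=0.1, margin=0.5)
p_narrow = tost_p_value(estimate=0.1, std_error=0.1, margin=0.1)
```

With these illustrative inputs, the same observed difference supports equivalence under the wider margin but not under the narrower one, which is the interpretive challenge noted above.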

Additional research can meaningfully expand upon these findings. First, although the three alternate measurement methods are likely more feasible within routine care than DO, both the CSR and BR conditions involved a trained study staff member and by no means meet all criteria for being pragmatic metrics (Glasgow & Riley, 2013; Lewis et al., 2021). Previous research examining various aspects of pragmatism associated with these methods suggests promise for use within research, though it leaves some unanswered questions regarding their feasibility within usual clinical care. For example, in our recent examination of the costs associated with each of these same three alternate methods, we found that CSR and BR are similar in cost, though both are significantly more expensive than self-report (Beidas et al., under review). This difference in cost was due to three main resources as implemented in our study: use of a computer, additional clinician time, and the need for a trained interviewer (Beidas et al., under review). While these additional costs may not be prohibitive in research, particularly when compared with DO, further research is needed to evaluate their use in routine care. If facilitated by a supervisor in routine supervision (as opposed to a trained interviewer), some of these costs may disappear. Further, previous mixed-methods research investigating clinician and supervisor attitudes, beliefs, and intentions to use CSR and BR found moderately strong intentions to use both methods as well as numerous likes and advantages, though it also highlighted common concerns, including memory lapse and lack of relevant context when engaging in interviews and roleplays (Hoffacker et al., 2022).
Further, informants expressed concern regarding the impact of these methods on clinical supervision, including the possibility of making supervision more rote and less supportive and creating an increased sense of pressure both in reducing clinicians’ sense of autonomy within sessions and increasing their sense of ongoing evaluation (Hoffacker et al., 2022). Future research should further assess the feasibility of integrating these methods within public mental health agencies beyond the scope of research (e.g., using existing staff to facilitate CSR and BR). It should also evaluate these methods’ cost, cost-effectiveness, scalability, and sustainability to better understand their practical use within routine care settings serving diverse populations. Further, while in this study we examined concordance across measurement methods of general use of 12 CBT techniques, future work should examine relative concordance of these alternate methods with DO in capturing adherence to a specific CBT protocol, which could have further implications for measuring fidelity and quality improvement in community settings. Additionally, future work should examine the feasibility and performance of these methods for use within adult populations and for techniques beyond the 12 included here, including non-CBT-based evidence-based techniques. Finally, future research should test implementation strategies to support CSR and BR within usual care settings and investigate whether these methods can feasibly be used within clinical supervision outside the context of a research study.

Our study has several strengths. First, these findings draw from the first large-scale randomized trial demonstrating that BR did not differ from DO and suggest that CSR may also be concordant with DO for many techniques. Second, our work builds upon the limited literature on the measurement of the use of discrete techniques, which has primarily comprised one set of studies (see Hogue et al., 2008). Further, although this work occurred within the Philadelphia tristate area, our sample included a broad set of agencies. Finally, the low rate of missing data bolsters the rigor of our study, as does the use of parallel measurement forms.

Our findings have implications for researchers, payers, and agency leaders facing difficulties with quality improvement in evidence-based practice implementation. Our results suggest that both BR and CSR may be viable options for capturing the use of many CBT techniques. Each method offers attractive benefits for measuring CBT use compared to DO, including requiring fewer resources and better preserving client anonymity. These alternate methods hold promise for feasibly measuring session content at scale, particularly given resource constraints within study budgets, health systems, and agencies. Future research into these alternate methods may pave the way for extending their use, such as within clinical supervision, further contributing to strengthening systems of evidence-based mental health care for youth.

Footnotes

Acknowledgments

We are grateful for the support that the Department of Behavioral Health and Intellectual Disability Services has provided us to conduct this work within their system, for the Evidence Based Practice and Innovation group, and for the partnership provided to us by participating agencies, clinicians, and clients. We would also like to acknowledge, in memoriam, Dr Judy Shea, who contributed to this work and is greatly missed.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Institute of Mental Health (Grant No. R01 MH108551; PI: Beidas).

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: R. S. Beidas is principal at Implementation Science & Practice, LLC. She is currently an appointed member of the National Advisory Mental Health Council and the NASEM study, “Blueprint for a national prevention infrastructure for behavioral health disorders,” and serves on the scientific advisory board for AIM Youth Mental Health Foundation and the Klingenstein Third Generation Foundation. She has received consulting fees from United Behavioral Health and OptumLabs. She previously served on the scientific and advisory board for Optum Behavioral Health and has received royalties from Oxford University Press. All activities are outside of the submitted work. We would like to note that four co-authors of this article are on the Implementation Research and Practice editorial board: one as co-editor-in-chief (S. K. Schoenwald) and three on the broader editorial board (R. S. Beidas, S. Dorsey, and D. S. Mandell). All other authors have no relevant financial or non-financial interests to disclose.

Data Availability

The data that support the findings of this study are available from the senior author upon reasonable request.