Abstract

Background

Mental health is a critical component of wellness. Public policies present an opportunity for large-scale mental health impact, but policy implementation is complex and can vary significantly across contexts, making it crucial to evaluate implementation. The objectives of this study were to (1) identify quantitative measurement tools used to evaluate the implementation of public mental health policies; (2) describe implementation determinants and outcomes assessed in the measures; and (3) assess the pragmatic and psychometric quality of identified measures.

Method

Guided by the Consolidated Framework for Implementation Research, Policy Implementation Determinants Framework, and Implementation Outcomes Framework, we conducted a systematic review of peer-reviewed journal articles published between 1995 and 2020. Data extracted included study characteristics, measure development and testing, implementation determinants and outcomes, and measure quality using the Psychometric and Pragmatic Evidence Rating Scale.

Results

We identified 34 tools from 25 articles, which were designed for mental health policies or used to evaluate constructs that impact implementation. Many measures lacked information regarding measurement development and testing. The most assessed implementation determinants were readiness for implementation, which encompassed training (n = 20, 57%) and other resources (n = 12, 34%), actor relationships/networks (n = 15, 43%), and organizational culture and climate (n = 11, 31%). Fidelity was the most prevalent implementation outcome (n = 9, 26%), followed by penetration (n = 8, 23%) and acceptability (n = 7, 20%). Apart from internal consistency and sample norms, psychometric properties were frequently unreported. Most measures were accessible and brief, though minimal information was provided regarding interpreting scores, handling missing data, or training needed to administer tools.

Conclusions

This work contributes to the nascent field of policy-focused implementation science by providing an overview of existing measurement tools used to evaluate mental health policy implementation and recommendations for measure development and refinement. To advance this field, more valid, reliable, and pragmatic measures are needed to evaluate policy implementation and close the policy-to-practice gap.

Plain Language Summary

Mental health is a critical component of wellness, and public policies present an opportunity to improve mental health on a large scale. Policy implementation is complex because it involves action by multiple entities at several levels of society. Policy implementation is also challenging because it can be impacted by many factors, such as political will, stakeholder relationships, and resources available for implementation. Because of these factors, implementation can vary between locations, such as states or countries. Because evaluating policy implementation is crucial, we conducted a systematic review to identify and evaluate the quality of measurement tools used in mental health policy implementation studies. Our search and screening procedures resulted in 34 measurement tools. We rated their quality to determine if these tools were practical to use and would yield consistent (i.e., reliable) and accurate (i.e., valid) data. These tools most frequently assessed whether implementing organizations complied with policy mandates and whether organizations had the training and other resources required to implement a policy. Though many tools were relatively brief and available at little to no cost, our findings highlight that more reliable, valid, and practical measurement tools are needed to assess and inform mental health policy implementation. Findings from this review can guide future efforts to select or develop policy implementation measures.

Introduction

Mental health is a critical component of wellness at both the individual and population levels. A significant proportion of the population is affected by mental illness, including an estimated 20%–40% of the United States (Kessler et al., 2005; Substance Abuse and Mental Health Services Administration, 2018) and international populations (Chisholm et al., 2007; Wittchen et al., 2011). The prevalence of mental illness should also be considered in light of global current events, such as the COVID-19 pandemic (Auerbach & Miller, 2020; Czeisler et al., 2020; Holmes et al., 2020; United Nations, 2020), splintered political systems (Nayak et al., 2021; Yan et al., 2021), and civil unrest (Labott, 2021; Ni et al., 2020; Strohecker, 2021). Unfortunately, access to mental health services is limited for many, due to barriers such as provider shortages, distance from services, and insufficient funding (Kilbourne et al., 2018; Saxena et al., 2007; Stirman et al., 2016; Williams, 2016). These challenges are often exacerbated in developing countries, where resources and mental health infrastructure (e.g., psychiatric hospitals or residential treatment facilities) are even more limited (Ngui et al., 2010). As a result, policy interventions are needed to improve mental health at the population level.

Public policies present an opportunity to impact mental health education and services on a large scale, reaching, for example, communities, organizations, families, and individuals simultaneously. These “Big P” policies include “laws, regulatory measures, courses of action, and funding priorities concerning a given topic by a governmental entity or its representatives” (Eyler & Brownson, 2016). Within this study, we define “Big P” mental health policies as mandates, guidelines, or recommendations established by governmental entities or legislative bodies for certain practices or services that affect mental health education and/or the provision of mental health services. In the United States, public mental health policies have increased access to evidence-based mental health interventions, encouraged changes to mental health service provision using incentives or disincentives, and created preferred treatment lists (Goldman & Azrin, 2003; Raghavan et al., 2008; Wensing et al., 2020). Public policies also have the potential to improve mental health equity by addressing the social determinants of health (SDOH; i.e., economic stability, built environment, health and healthcare, social context, and education) (Dawes, 2016; Rust et al., 2015), concepts such as stigma and discrimination against those with mental illness, as well as healthcare infrastructure, housing, transportation, and education (Alegría et al., 2003, 2018; Castillo et al., 2018; Hinshaw, 2006; Powell et al., 2016; Purtle et al., 2020).

Despite the potential of public policies to improve health, developing and successfully passing a policy does not guarantee its implementation or sustainment. Policy implementation refers to the process of carrying out a policy mandate (Natesan & Marathe, 2015) and remains understudied compared to earlier stages in the policy process (Bullock et al., 2021). There is an opportunity to apply principles and methods from implementation science, which seeks “to understand not only what is and is not working, but also how and why implementation is going right or wrong, and testing approaches to improve it” (Peters et al., 2013). Despite the potential impact on population health, there is a dearth of implementation science literature directed at the policy sphere, particularly related to mental health, leading to calls for a greater emphasis on policy research (Emmons & Chambers, 2021; Hoagwood et al., 2020; Oh et al., 2021; Purtle et al., 2017, 2016).

Policy implementation is studied by multiple fields, including, but not limited to, implementation science and political science; however, the terminology differs between disciplines. Though both fields emphasize context and its role in helping or hindering implementation, implementation science often distinguishes between internal and external context (commonly called inner setting and outer setting; Damschroder et al., 2009; Nilsen et al., 2013). Additionally, implementation science researchers measure implementation outcomes, frequently citing the Implementation Outcomes Framework (IOF) (Proctor et al., 2011), which encompasses the following: acceptability, adoption, appropriateness, cost, feasibility, fidelity, penetration, and sustainability. Political science, on the other hand, refers to implementation outcomes as outputs and reserves the term outcomes for the policy's overall impact on the identified problem (e.g., changes in health outcomes after implementing a nutrition policy; DeGroff & Cargo, 2009). Finally, fidelity to a policy (i.e., the degree to which it was implemented as intended; Proctor et al., 2011) is conceptualized as compliance within political science (Natesan & Marathe, 2015). This review applies an implementation science lens to policy implementation and therefore uses the following terms throughout: outer setting (see Table 1 for constructs and definitions) and implementation outcomes (see Table 2 for constructs and definitions), which include fidelity/compliance.

Table 1. Mental health policy implementation determinants assessed in included measures (n = 34).a

a Implementation determinants: “Factors believed or empirically shown to influence implementation outcomes” (also called barriers, obstacles, facilitators, etc.) (Nilsen & Bernhardsson, 2019).

b These constructs were derived from Damschroder et al. (2009) but were sub-divided based on findings during full-text screening.

c Definition derived from Damschroder et al. (2009).

d Definition derived from Bullock et al. (2021).

Table 2. Mental health policy implementation outcomes assessed in included measures (n = 34).a

a Implementation outcomes: “Effects of deliberate and purposeful action to implement new treatments, practices” or policies, which can serve as indicators of the implementation process and overall success (Proctor et al., 2011).

Current Work and Gaps in the Literature

Though policies may be developed and passed by government entities at multiple levels, the burden of implementing policies and sustaining related practices often falls on actors beyond the legislative body (e.g., healthcare settings, community organizations). Many of these actors or organizations also face barriers during policy implementation, including limited financial resources, personnel, or infrastructure (Wright, 2017). To enable comparisons of policy implementation across studies and settings, generalize findings, and ultimately address these barriers, a better understanding of policy-focused implementation constructs is needed, along with high-quality measures of these constructs.

Unfortunately, myriad measurement challenges exist within implementation science. First, many measures fail to operationalize the constructs being assessed (Bruns et al., 2019; McHugh et al., 2020; Watson et al., 2018). There is also an overreliance on single-use measures, which often lack testing and/or reporting of psychometrics, and on splicing items from different measures, both of which limit measurement consistency and the replicability of findings (Lewis, Weiner, et al., 2015; Martinez et al., 2014; Proctor et al., 2011). Additionally, there is a limited emphasis on pragmatic measures, which are developed to minimize burden and are typically brief, inexpensive, and easy to score and interpret (Glasgow & Riley, 2013; Martinez et al., 2014; Rabin et al., 2015). Despite the importance of psychometric and pragmatic properties, researchers rarely analyze both when developing and testing a new measure (Martinez et al., 2014; Stanick et al., 2018).

Implementation researchers acknowledge the dearth of measures assessing outer setting constructs (Damschroder et al., 2009), which encompass fiscal and policy factors, as well as external networks that can affect implementation (McHugh et al., 2020). These constructs are often difficult to disentangle and assess, which may explain why they remain understudied compared to inner setting constructs (McHugh et al., 2020). Inner setting constructs include factors within an implementing organization that influence implementation (e.g., organizational structure, implementation climate, leadership support) (Damschroder et al., 2009). Policy implementation can be affected by multi-level contextual factors, including outer and inner setting characteristics, and characteristics of the policy itself (Bullock et al., 2021; Eaton et al., 2011; Goldman & Azrin, 2003; Malekinejad et al., 2018). We refer to these multi-level constructs, also known as barriers, obstacles, enablers, or facilitators (Nilsen & Bernhardsson, 2019), as “policy determinants.” Given the limited emphasis on measurement of outer setting constructs and the effect these determinants can have on policy implementation, a better understanding of these constructs is needed.

Additionally, health equity is receiving increased attention within implementation science, underscoring the impact of the SDOH on mental health outcomes (Allen et al., 2014; Compton & Shim, 2015; Shim & Compton, 2020; World Health Organization & Calouste Gulbenkian Foundation, 2014). There are growing calls for improved integration of equity-related constructs into implementation methods, models, and measures (Baumann & Long, 2021; Brownson et al., 2021; Chinman et al., 2017; Douglas et al., 2019; Emmons & Chambers, 2021; Exworthy, 2008; Loper et al., 2021; Metz et al., 2021; Oh et al., 2021; Woodward et al., 2021). However, little is known about the current state of equity-related constructs in mental health policy implementation.

Lastly, while systematic reviews have examined quantitative measures of implementation across public health topics (Chaudoir et al., 2013; Chor et al., 2015; Clinton-McHarg et al., 2016; Emmons et al., 2011; Kaplan et al., 2010; Weiner et al., 2008), less scholarship has addressed measures of policy implementation. Recent work has examined generalizable measures of health policy implementation (Allen et al., 2020), as well as health policy measures targeting school settings (McLoughlin et al., 2021), healthy food environments (Phulkerd et al., 2016), and chronic disease (Walsh-Bailey et al., 2020). To our knowledge, no such study has been conducted for mental health policies. Measures to evaluate mental health policy implementation are particularly important, as mental health stigma is pervasive and can impact policy development and implementation, as well as mental health outcomes (Conley, 2021; Purtle et al., 2018).

Study Aims

To fill these gaps in the literature, this study sought to (1) identify quantitative measurement tools (i.e., measures, questionnaires, surveys, scales) used to evaluate the implementation of public mental health policies; (2) describe the implementation determinants and outcomes assessed in these measures; and (3) assess the pragmatic and psychometric quality of the identified measures.

Methods

This review builds on a previous review of generalizable implementation measures (i.e., measures transferable across topics and settings) of public policies passed at federal, state/regional, or local levels (Allen et al., 2020). The Allen et al. study identified 70 generalizable measures of health policy implementation and excluded 597 measures, which were policy- or setting-specific (e.g., specific to school settings or mental health policies) (Allen et al., 2020). The current review examined the previously excluded or policy-specific quantitative measurement tools used to evaluate the implementation of governmental mental health policies. We followed the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines (Moher et al., 2009) (see Figure 1 and Appendix A).

Figure 1. Quantitative studies: PRISMA flow diagram.

Search Strategy

Our search strategy was guided by three implementation science frameworks—the Consolidated Framework for Implementation Research (CFIR) (Damschroder et al., 2009), the IOF (Proctor et al., 2011), and a newer, policy-specific framework—the Policy Implementation Determinants Framework (Bullock et al., 2021). The Policy Implementation Determinants Framework and CFIR provide a set of determinants known to impact implementation and were used to create a more thorough representation of policy implementation determinants. While the CFIR is applicable to a variety of implementation levels and settings, the Policy Implementation Determinants Framework contains constructs tailored to policy and the outer setting. The study team operationalized construct definitions from the frameworks to make them applicable to policy (e.g., changed the referent). For example, the IOF describes acceptability of a treatment, service, or innovation; we expanded the definition to include policy or policy-mandated practice. These frameworks guided the identification and selection of implementation determinants and outcomes. Both the CFIR and Policy Implementation Determinants Framework were used to identify determinants of policy implementation, while the IOF was used to identify outcomes of policy implementation.

Allen et al. (2020) conducted the original search, encompassing health policy broadly, in April 2019 in CINAHL Plus, Medline, PsycINFO, ERIC, Worldwide Political, and PAIS databases. The search terms and strategy were constructed with guidance from a research librarian, based on policy and implementation science literature (Bullock et al., 2021; Damschroder et al., 2009; Lewis et al., 2018; Proctor et al., 2011; Rabin et al., 2015; Sabatier & Mazmanian, 1980; Sabatier, 1988; Watson et al., 2018) and expert input. Studies excluded due to policy- or setting-specific wording (e.g., applicable only to mental health policies) were marked in Covidence systematic review software (Covidence Systematic Review Software, 2021).

In the current review, we retrieved studies that Allen et al. (2020) excluded during full-text screening because they used policy- or setting-specific measures (n = 597). We then updated the original search strategy, with guidance from a research librarian, to include mental health-specific terms; a similar approach was used in previous reviews (McLoughlin et al., 2021; Walsh-Bailey et al., 2020). We ran the updated search in January 2021, using search strings related to mental health, public policy, implementation, and measurement (see Appendix B; full search strategy available in Appendices C and D).

Inclusion and Exclusion Criteria

The inclusion criteria were: (1) empirical study (including study protocols) of the implementation of public mental health policies (i.e., “Big P” policies) already passed or approved; (2) use of a quantitative measurement tool (i.e., measure, questionnaire, survey, scale); (3) inclusion of at least one implementation determinant or outcome; and (4) publication in a peer-reviewed journal between January 1995 and December 2020 in the English language. Given the faint line between policy enactment and stages of policy implementation, we included studies that did not explicitly name the policy as the independent variable.

To distinguish between “little p” (i.e., organizational-level mandates or guidelines) and “Big P” policies, we used study authors’ descriptions of governing or legislative bodies and policy decision levels (e.g., local, state, national). When information was limited in articles, one reviewer (MP) conducted hand searches to determine the governing body and level responsible for mandating or recommending the policy. Because our coding required copies of each measurement tool, January 1995 was selected as the beginning of the inclusion period because web-based surveying began around that time (Dillman et al., 2009). The onset of web-based data collection was thought to increase the likelihood that instruments had been maintained and were accessible, as compared to paper-based data collection.

The team conducted hand searches to locate measure development articles, technical documents, etc. that contained verbatim items and described the initial development and psychometric testing of the quantitative instruments used in studies that met all inclusion criteria (Lewis et al., 2018). Measure development articles were not included as part of the review, but these were reviewed by the primary (MP) and secondary (EJ) reviewers for relevant information about the measurement tool properties.

We applied the following exclusion criteria: (1) Articles focused solely on agenda setting, policy development, policy content analysis, or policy evaluation were excluded, as this review centered on the implementation of policies once adopted. (2) Empirical studies reported in theses and books were also excluded, as this study included only peer-reviewed journal articles. (3) Because this review aimed to assess implementation outcomes related to policy, studies which assessed only individual-level outcomes (e.g., health behaviors)—also referred to as client outcomes—or service outcomes (e.g., efficiency, treatment effectiveness) were excluded (Proctor et al., 2011). (4) Other health policies implemented in mental health settings or with mental health service users as the target populations (e.g., chronic disease screenings among individuals in residential treatment facilities; housing policies for adults with serious mental illness) were excluded, as this review focused specifically on policies with direct influence on practices or services that affect mental health education and the provision of mental health services (e.g., Affordable Care Act and Medicaid expansion increasing access to mental health care; state-level mandate for implementation of evidence-based mental health interventions). (5) Studies were excluded if full or partial measurement tools could not be located.

Screening Procedures

De-duplication was conducted using EndNote version X9 and Covidence (Covidence Systematic Review Software, 2021), which was also used to manage references and screen studies. We first reviewed all records excluded by Allen et al. (2020) during full-text screening (n = 597) for policy- and setting-specific measures, then reviewed studies published between April 2019 and December 2020 from the updated search. Two independent reviewers (MP, EJ) screened titles and abstracts to evaluate eligibility. They then independently screened full-text articles to determine inclusion for extraction and resolved disagreements by discussion. When needed, one reviewer (MP) contacted study authors for additional materials or information (e.g., copies of data collection instruments) to clarify eligibility. Three attempts were made to contact each study author for additional materials; those that remained unavailable were excluded.

Data Extraction

Data were extracted from study articles and accompanying materials (e.g., measure development articles, survey instruments) using Microsoft Excel. One reviewer (MP) extracted data for each measure, and a second reviewer (EJ) checked each entry and noted disagreements. The reviewers then resolved disagreements by discussion. A codebook was iteratively developed to aid in extracting and deductively coding study- and measure-related information. For studies using multiple measures, each measure was extracted separately (i.e., reported in separate rows).

Data extraction included information about: (1) general study and policy information; (2) methods used; (3) measure development and testing; (4) implementation determinants and outcomes (see Tables 1 and 2); (5) measure pragmatic and psychometric properties; and (6) policy impact on health equity in included measures. Health equity could be conceptualized as either a policy determinant or outcome, and included references to health disparities, health equity, and the SDOH (U.S. Department of Health and Human Services, 2021). Information about measure development/testing or a measure's pragmatic and psychometric properties was extracted from included empirical articles or, when applicable, from measure development articles.

The Psychometric and Pragmatic Evidence Rating Scale (PAPERS) was used to assess pragmatic and psychometric properties of quantitative measures (see Appendix E) (Lewis et al., 2018, 2021; Powell et al., 2017; Stanick et al., 2019). Psychometric criteria consist of nine categories, each scored on a −1 to 4 scale, with possible total scores of −9 to 36. There are five pragmatic criteria, scored on the same scale, with possible total scores of −5 to 20. Higher values indicate stronger psychometric properties or more pragmatic characteristics. The psychometric properties evaluated include: (1) internal consistency; (2) convergent validity; (3) discriminant validity; (4) known-groups validity; (5) predictive validity; (6) concurrent validity; (7) structural validity; (8) responsiveness; and (9) norms. The pragmatic scale includes five domains: (1) brevity; (2) simplicity of language; (3) cost; (4) training burden; and (5) analysis burden.
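To make the score ranges concrete, the following is a minimal sketch of how PAPERS totals could be tallied, assuming each criterion receives an integer rating from −1 to 4 and the total is a simple sum of the criterion ratings. The function names (`papers_total`, `score_range`) and the example ratings are hypothetical illustrations; they are not taken from the PAPERS materials or from any measure in this review.

```python
# Criterion names follow the lists in the text; everything else is illustrative.
PSYCHOMETRIC_CRITERIA = (
    "internal consistency", "convergent validity", "discriminant validity",
    "known-groups validity", "predictive validity", "concurrent validity",
    "structural validity", "responsiveness", "norms",
)
PRAGMATIC_CRITERIA = (
    "brevity", "simplicity of language", "cost",
    "training burden", "analysis burden",
)
MIN_RATING, MAX_RATING = -1, 4  # per-criterion rating bounds stated in the text


def score_range(criteria):
    """Return the (lowest, highest) possible total for a set of criteria."""
    return (MIN_RATING * len(criteria), MAX_RATING * len(criteria))


def papers_total(ratings, criteria):
    """Sum per-criterion ratings after checking completeness and bounds."""
    missing = [c for c in criteria if c not in ratings]
    if missing:
        raise ValueError(f"missing ratings for: {missing}")
    for c in criteria:
        if not MIN_RATING <= ratings[c] <= MAX_RATING:
            raise ValueError(f"rating for {c!r} is outside -1..4")
    return sum(ratings[c] for c in criteria)


# Hypothetical measure: only norms and internal consistency were reported
# (each rated 3); the remaining criteria are rated 0, assumed here to mean
# "not reported".
example = {c: 0 for c in PSYCHOMETRIC_CRITERIA}
example["norms"] = 3
example["internal consistency"] = 3

print(score_range(PSYCHOMETRIC_CRITERIA))            # (-9, 36)
print(score_range(PRAGMATIC_CRITERIA))               # (-5, 20)
print(papers_total(example, PSYCHOMETRIC_CRITERIA))  # 6
```

Under these assumptions, a measure that reports only two properties can reach at most a modest total, which illustrates how unreported properties pull scores well below the ceiling of 36.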

Results

General Characteristics of Included Studies

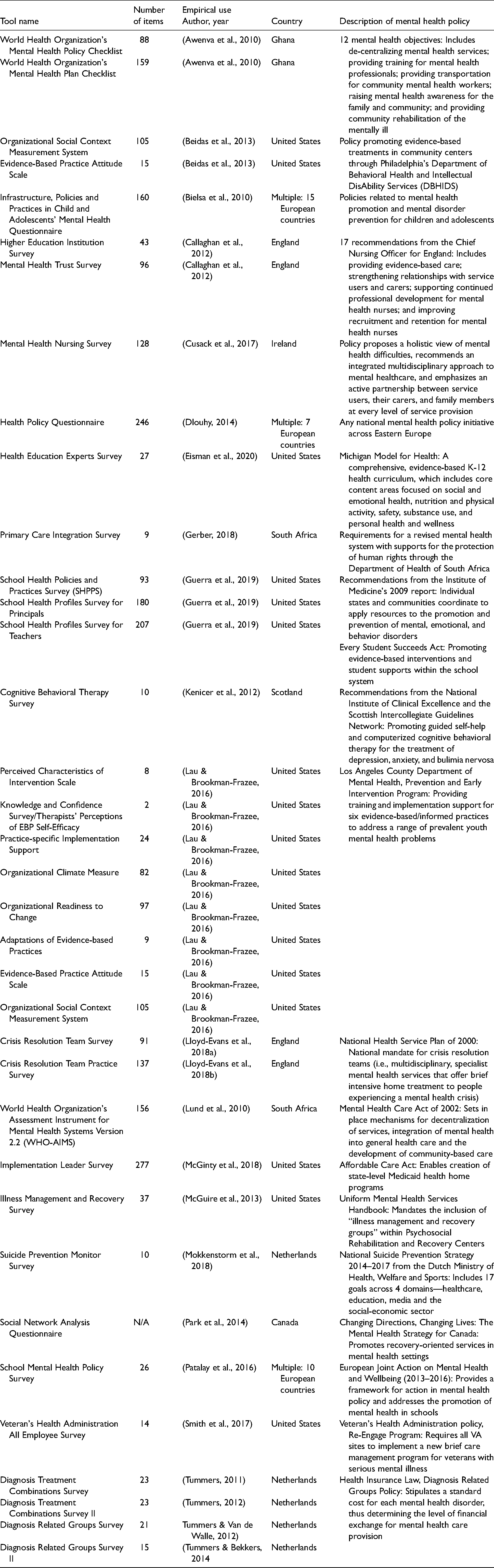

As outlined in Figure 1, the search located 16,015 articles. After removing 4,790 duplicates, we reviewed 11,225 records during title and abstract screening. Two reviewers then screened 105 full-text articles; ultimately, 80 were excluded, leaving 25 articles for inclusion in this review. Within the 25 included studies, there were 34 unique quantitative measures (see Table 3). Many studies administered multiple measures, and two measures—the Evidence-Based Practice Attitude Scale (Aarons, 2004) and the Organizational Social Context Measurement System (Glisson et al., 2008)—were used in two studies.
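The screening counts above can be checked with simple subtraction. The short sketch below uses only the figures reported in this paragraph; the variable names are illustrative.

```python
# Counts reported in the text (see Figure 1).
records_identified = 16_015
duplicates_removed = 4_790
full_text_screened = 105
full_text_excluded = 80

# Derived counts at each stage.
records_screened = records_identified - duplicates_removed
articles_included = full_text_screened - full_text_excluded

print(records_screened)   # 11225
print(articles_included)  # 25
```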

Table 3. Quantitative measures identified in studies of mental health policy implementation (n = 34 unique measures in 25 studies).

The studies were published between 2009 and 2020 and were conducted primarily in the United States (n = 8), the Netherlands (n = 5), multiple European countries (n = 3), England (n = 3), and South Africa (n = 2). One study each was conducted in Scotland, Ireland, Ghana, and Canada. A variety of mental health policies were represented. Some policies impacted mental health support services for children and adolescents, while others addressed health insurance or the associated costs of implementation for both consumers and organizations/systems. While some measurement tools were developed specifically to evaluate the implementation of mental health policies (e.g., the World Health Organization's Mental Health Plan/Policy Checklists), others were used to evaluate constructs that impact implementation (e.g., Evidence-Based Practice Attitude Scale, Organizational Readiness to Change Assessment).

While the application of theories, models, and frameworks varied across studies, implementation frameworks such as the Exploration, Preparation, Implementation, Sustainment framework (Moullin et al., 2015), and the CFIR were used in multiple studies. Additional study characteristics, including study design, quantitative methods, setting, and policy level, are highlighted in Table 4, while Table 5 provides details about the measures used in included studies.

Table 4. Characteristics of included studies with quantitative measures (n = 25).

Table 5. Characteristics of measures utilized in studies of mental health policy implementation (n = 34 unique measures in 25 studies).

Note. IC = implementation climate; RI = readiness to implement. PAPERS pragmatic score range: −5 to 20; PAPERS psychometric score range: −9 to 36.

Measure Development and Testing

Of the 34 unique measurement tools, separate measure development articles were available for 10. The remaining quantitative instruments consisted of a mix of “home-grown” questionnaires, which were developed for use within a single study, and multi-use tools (e.g., the World Health Organization's Mental Health Plan Checklist). Overall, information regarding measure development and testing was limited. Fewer than half of the measures (n = 17, 45%) defined the constructs they assessed (i.e., provided definitions of constructs within the empirical use article, development article or other supporting documents, or the instrument itself). Only five measures (13%) reported that items were generated by experts in the field. Finally, there was limited information regarding pilot testing of measures. Six measures (16%) reported pilot testing with a representative sample. Only three measures (8%) reported validity or reliability tests based on pilot testing, and only two (5%) reported that the measure was refined based on pilot testing.

Implementation Determinants and Outcomes

As outlined in Table 1, only five measures (14%) assessed policy characteristics, such as the complexity or adaptability of a policy. Inner setting characteristics were far more common, with 32 measures (91%) assessing at least one organization-level construct. Outer setting characteristics, on the other hand, were assessed in fewer than half of the included measures (n = 15, 43%).

The most frequently assessed implementation determinant was readiness for implementation (n = 27, 77%), which included the following subcomponents: communication efforts related to the policy, policy awareness and knowledge, leadership support for implementation, training, and non-training resources (see Table 1 for definitions). Each of these subcomponents was assessed in multiple measures, with particular emphasis on training related to policy implementation (n = 20, 57%) and non-training resources (n = 12, 34%). Other commonly measured determinants included actor relationships/networks (n = 15, 43%), organizational culture and climate (n = 11, 31%), and the policy implementation climate (n = 10, 29%).

The most commonly assessed implementation outcomes from the 34 measures were fidelity/compliance to the policy (n = 9, 26%) and penetration of the policy within the designated setting (n = 8, 23%) (see Table 2 for definitions). The acceptability and appropriateness of the policy were each assessed by seven measures (20%). The feasibility of implementing a policy was assessed by six measures (17%), while the cost of implementation was assessed by five measures (14%). Only four measures assessed adoption (11%), and no measures addressed sustainability.

Health Equity

Finally, six measures (17%) included at least one item assessing health equity or SDOH. Some measures inquired about the integration or coordination of a mental health policy with existing policy efforts to target SDOH, such as housing, poverty, or antidiscrimination. Other measures assessed factors affecting access to mental health services, such as geographic proximity to providers or availability of transportation.

Psychometric and Pragmatic Evidence Rating Scale (PAPERS) Criteria

As described in Table 5, higher scores on the PAPERS criteria are preferable, indicating that the measures possess better psychometric properties (psychometric score) or are easier to use (pragmatic score). For the 34 unique measures, limited information was available regarding psychometric properties. Possible psychometric scores range from −9 to 36 across nine categories of reliability and validity. PAPERS psychometric scores for included measures ranged from −1 to 17, with a median score of 5. The majority of studies reported only norms, an indication of sample size and distribution (n = 33, 97%), with a median PAPERS score of 3 (good). Eleven studies reported internal consistency, with Cronbach's alpha scores ranging from 0.49 to 0.97 (median PAPERS score = 3, good). Seven measures reported structural validity with a median PAPERS score of 3 (good). Lastly, convergent and discriminant validity were reported for two measures, with median PAPERS scores of 4 (excellent) and 2 (adequate), respectively. No information was found regarding known-groups construct validity, predictive validity, concurrent criterion validity, or responsiveness within the 34 included measures.

All available measures but one—the Organizational Social Context Measurement System—were free and accessible to the public either through a web search or contacting the study team (median score = 4). While the measures varied considerably in length, ranging from 2 to 277 items, 11 measures contained 11 to 50 items (median score = 3). Most measures were accessible at an 8th–12th grade reading level (median score = 3). Instructions regarding scoring and interpreting the measures were lacking overall (median score = 2); minimal information was included to aid in interpreting score ranges, identifying clear cut-off scores, and handling missing data. Similarly, information on training requirements or availability of self-training manuals for those administering the measure was often not reported (median score = 0, Not reported). Overall, the included measures received a median total score of 12, out of a possible 20, indicating that certain pragmatic criteria were underreported in this sample.
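
Per-criterion medians and a median total of the kind reported above could be computed as in the following sketch. It assumes five pragmatic criteria (cost, length, reading level, interpretability, and training) that sum to a maximum of 20. The criterion keys and the three example measures are hypothetical and are not data from this review. Only rating labels that appear in the text are included.

```python
from statistics import median

# Assumed criterion keys; the text describes cost, length, reading level,
# scoring/interpretation guidance, and training requirements.
PRAGMATIC_CRITERIA = ("cost", "length", "reading_level", "interpretability", "training")

# Labels that appear in the text; other ratings are left unlabeled in this sketch.
RATING_LABELS = {0: "not reported", 2: "adequate", 3: "good", 4: "excellent"}


def criterion_medians(measures):
    """Median rating per criterion across measures (each measure is a dict of ratings)."""
    return {c: median(m[c] for m in measures) for c in PRAGMATIC_CRITERIA}


def pragmatic_total(measure):
    """Sum of the five criterion ratings for one measure (maximum 20)."""
    return sum(measure[c] for c in PRAGMATIC_CRITERIA)


# Three hypothetical measures, not drawn from the review.
measures = [
    {"cost": 4, "length": 3, "reading_level": 3, "interpretability": 2, "training": 0},
    {"cost": 4, "length": 4, "reading_level": 3, "interpretability": 1, "training": 0},
    {"cost": 0, "length": 2, "reading_level": 4, "interpretability": 2, "training": 3},
]

medians = criterion_medians(measures)
totals = [pragmatic_total(m) for m in measures]

for criterion, value in medians.items():
    print(criterion, value, RATING_LABELS.get(value, "unlabeled in this sketch"))
print("median total:", median(totals))
```

Reporting the median per criterion alongside the median total makes it visible when a reasonable overall score conceals a criterion, such as training, that is typically not reported.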

Discussion

This study examined quantitative measurement tools used to evaluate the implementation of mental health policies affecting mental health education and services and assessed measure development, testing (i.e., the extent to which measures are psychometrically tested), and application (i.e., the pragmatic nature of measures). Though there are best practices for developing and testing measures (Boateng et al., 2018), many measures lacked information regarding gold-standard criteria for measurement development and testing. Additionally, a number of “home-grown,” single-use measures were identified in this sample, a pattern also reported in previous measure reviews (Dorsey et al., 2021; Mettert et al., 2020; Proctor et al., 2011; Stanick et al., 2021). Unfortunately, the measure development process and psychometric validation of such surveys were rarely reported, presenting a measurement challenge within implementation science and an area for further improvement (Lewis, Weiner, et al., 2015; Martinez et al., 2014; Proctor et al., 2011).

Our findings also highlight the need for a more critical examination of policy implementation determinants and outcomes. Within the included measures, some constructs, such as readiness for implementation, acceptability, or fidelity/compliance, were represented repeatedly. Others were underrepresented (e.g., political will for policy implementation) or absent (e.g., sustainability), despite their known significance within implementation science (Glasgow & Chambers, 2012). Outer setting implementation determinants were assessed less frequently than inner setting determinants, a pattern also reported in previous studies (Chaudoir et al., 2013; Chor et al., 2015; Clinton-McHarg et al., 2016; McHugh et al., 2020; Powell et al., 2021; Weiner et al., 2020). The limited attention to outer setting implementation determinants is problematic, as factors like political will can impact mental health outcomes through policy development and resource allocation (Corrigan & Watson, 2003; Shera & Ramon, 2013; Weinberg et al., 2012). Additionally, the overemphasis on compliance as a means of studying implementation has been a critique of policy studies in the past (Thomann & Zhelyazkova, 2017).

The imbalance of constructs within quantitative measures may stem from the length of time that these constructs have received attention. Historically, a large emphasis has been placed on acceptability, while sustainability may appear less often due to its relative “newness” within the implementation literature (Lewis, Fischer, et al., 2015). The absence of sustainability in these studies is perhaps unsurprising, as researchers report similar findings with other assessment models, such as the Reach, Effectiveness, Adoption, Implementation, and Maintenance (RE-AIM) Framework. For example, a 2013 review assessing the use and reporting of RE-AIM in 71 articles found that, of all five RE-AIM dimensions, maintenance (sustainment) was the least frequently reported at both the individual and setting levels (Gaglio et al., 2013). Many of these constructs are nonetheless theoretically grounded and have a significant impact on policy implementation. Assessing these understudied constructs in future studies may provide researchers with a more complete picture of the processes, contexts, and outcomes of implementation.

Notably, health equity was assessed in few quantitative measures of mental health policy implementation, a finding consistent with previous studies of policy measures (Allen et al., 2020, 2021). Moving forward, it will be vital to engage policymakers in this pursuit of equity, as they have considerable potential to impact health, for example by developing appropriate policies and allocating resources to them (Douglas et al., 2019). Connecting with community members and other stakeholders to identify and address health equity issues during implementation might also prove useful (Douglas et al., 2019). Additional work is needed to bridge health equity research and implementation science, both theoretically (e.g., within frameworks) and practically (e.g., pragmatic equity measures) (Brownson et al., 2021; Emmons & Chambers, 2021; Pollack Porter et al., 2018; Woodward et al., 2021). This presents many opportunities for further evaluation of the presence of equity within policy implementation.

Psychometric and pragmatic qualities of measures were also assessed in this study. With the exception of internal consistency and sample norms, psychometric properties were frequently unreported, as found in previous implementation measures reviews (Allen et al., 2020; Clinton-McHarg et al., 2016; McHugh et al., 2020; Powell et al., 2021; Weiner et al., 2020). For example, information about structural validity was available for seven measures, and convergent and discriminant validity were reported for two measures. However, none of the measures in this sample contained information about known-groups validity, predictive validity, concurrent validity, or responsiveness. These findings suggest that there is significant room for improvement in developing new measures and refining existing ones to make them more psychometrically sound.

Overall, the observed pragmatic qualities aligned with previous findings (Allen et al., 2020; McLoughlin et al., 2021; Powell et al., 2021; Weiner et al., 2020). Most included measures were freely available (i.e., accessible through supplemental materials with the article or by contacting authors directly), though one was proprietary. Additionally, though some measures were quite lengthy, most were relatively brief (fewer than 50 items). There is a substantial need for pragmatic measures to assess implementation (Glasgow & Riley, 2013; Powell et al., 2017; Stanick et al., 2018, 2019). The findings of this work indicate that while practical, accessible measures of policy implementation are being developed and used, there is still considerable room for improving the availability of brief, user-friendly measures. The development and use of such measures would help organizations evaluate implementation without placing undue burden on practitioners.

Policy implementation research remains an understudied but growing area of implementation science (Emmons & Chambers, 2021; Hoagwood et al., 2020; McGinty et al., 2022; Oh et al., 2021; Purtle et al., 2016, 2017). Policy implementation is complex and can vary significantly across contexts (Berenson et al., 2017), making it crucial to evaluate implementation determinants, processes, and outcomes. Policy implementation also spans multiple levels of government and geopolitical units of epidemiological analyses, which adds to the complexity of studying it (Schnake-Mahl et al., 2022). There are particular challenges in measuring policy implementation across levels of government. For example, policy surveillance systems are often more developed at the national level than at the local level. The nexus between political science and implementation science offers benefits for measurement efforts. In particular, political science has a rich history of measuring the outer context of policy implementation (the policy and political environments) (Emmons et al., 2021). Valid, reliable, and pragmatic measures are needed to evaluate policy implementation and close the policy-to-practice gap (Martin et al., 2019; Oh et al., 2021). As a subset of the need for valid and reliable measures, there is growing attention to the need for metrics to track indicators of equity (e.g., SDOH, structural racism) (Brownson et al., 2022). Ultimately, this work contributes to the nascent study of policy-focused implementation science by providing an overview of existing measures used to assess mental health policy implementation, as well as guidance and recommendations for future measure development and refinement.

Limitations

While this study fills gaps in the literature, there are several limitations. First, there were likely relevant measures not captured in this study given our search strategy and inclusion criteria. Our search strategy included only studies and accompanying measures that were published in English-language peer-reviewed journals, so measures found exclusively in grey literature, books, or theses were not included. Second, because we used verbatim instrument items in data extraction (e.g., implementation determinants and outcomes), studies were excluded if we could not obtain the measures. Though we reviewed articles and supplemental materials, hand-searched peer-reviewed and grey literature, and contacted authors directly, we ultimately excluded 18 studies because measures were inaccessible, introducing the possibility of sampling bias. The missing tools were commonly from older articles (1995–2010) that did not report rigorous measure development and psychometric testing. Third, though we examined the prevalence of implementation determinants and outcomes in the included measures, it is possible that these constructs were assessed qualitatively in the included studies. Finally, based on the study's definition of “Big P” policies, the included studies encompassed both mandated changes in practices or services and the voluntary adoption and implementation of practices or services based on recommendations or guidelines from governmental entities. Despite these limitations, this review contributes to the policy implementation literature by assessing the state of measure development and quality in mental health policy studies.

Future Directions

Based on the findings of this study, there are several areas for further study. First, there is significant room for improvement in developing new measures and refining existing ones to make them more pragmatic and psychometrically sound, as well as in increasing the reporting of pragmatic and psychometric properties. Second, there is a need for greater transparency and availability of measurement instruments; we recommend that authors make measures available as supplemental materials to publications or add them to web-based measures repositories. Third, this study echoes previous calls for a greater focus on health equity as both a determinant and outcome of policy implementation (Brownson et al., 2021; Emmons & Chambers, 2021; Woodward et al., 2021). Finally, while this project focused on the implementation of mental health policies once adopted, additional work is needed to explore factors involved with policy enactment and the decision-making processes during adoption and implementation.

Supplemental Material

Supplemental material for Quantitative measures used in empirical evaluations of mental health policy implementation: A systematic review by Meagan Pilar, Eliot Jost, Callie Walsh-Bailey, Byron J. Powell, Stephanie Mazzucca, Amy Eyler, Jonathan Purtle, Peg Allen and Ross C. Brownson in Implementation Research and Practice:

sj-docx-1-irp-10.1177_26334895221141116

sj-docx-2-irp-10.1177_26334895221141116

sj-docx-3-irp-10.1177_26334895221141116

sj-docx-4-irp-10.1177_26334895221141116

sj-docx-5-irp-10.1177_26334895221141116

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by the National Institute of Diabetes and Digestive and Kidney Diseases (number P30DK092950), the National Institute of Allergy and Infectious Diseases (number K24AI134413), the National Cancer Institute (number P50CA244431), the National Institute of Mental Health (number K01MH113806; R21MH125261; P50MH11366), the National Center for Advancing Translational Sciences (number UL1 TR002345), the Centers for Disease Control and Prevention (number U48DP006395), and the Foundation for Barnes-Jewish Hospital. The findings and conclusions in this paper are those of the authors and do not necessarily represent the official positions of the National Institutes of Health or the Centers for Disease Control and Prevention.

Supplemental Material

Supplemental material for this article is available online.

References
