Abstract

Background

Qualitative methods are essential for providing an in-depth understanding of “why” and “how” evidence-based interventions are successfully implemented, a key area of implementation science (IS) research. A systematic synthesis of the applications of qualitative methods is critical for understanding how qualitative methods have been used to date and identifying areas of innovation and optimization. This scoping review explores which qualitative data collection and analytic methods are used in IS research, which frameworks and theories are leveraged with qualitative methods and how, and which implementation issues are explored with qualitative implementation research.

Method

We conducted a systematic scoping review of articles in MEDLINE and Embase using qualitative methods in IS health research. We systematically extracted information including study design, data collection method(s), analytic method(s), implementation outcomes, and other domains.

Results

Our search yielded a final dataset of 867 articles from 76 countries. Qualitative study designs were predominantly single elicitation (67.7%) and longitudinal (20.3%). In-depth interviews were the most common data collection method (84.3%), followed by focus group discussions (FGDs) (34.5%), and nearly 25% used both. Sample sizes were, on average, 40 in-depth interviews (range: 1–1,131) and nine FGDs (range: 1–46). The most common analytic approaches were thematic analysis (45.3%) and content analysis (18.5%), with substantial variation in analytic conceptualization. Nearly one-quarter (23.2%) of articles used one or more theories, models, or frameworks (TMFs) to conceptualize the study, and less than half (40.9%) of articles used a TMF to guide both data collection and analysis.

Conclusions

We highlight variation in how qualitative methods were used, as well as detailed examples of data collection and analysis descriptions. By reviewing how qualitative methods have been used in well-described and innovative ways, and identifying important gaps, we highlight opportunities for strengthening their use to optimize IS research.

Registration

The protocol can be found at DOI: 10.11124/JBIES-20-00120.

Plain Language Summary

Qualitative methods are useful in implementation science (IS) research because they help us understand why and how efforts to deliver evidence-based interventions succeed or fail. Knowing how qualitative methods have been used in past IS research is important for two main reasons. First, it can give researchers ideas about different qualitative methods they could use and help them choose the ones that may be most useful and appropriate for their studies. Second, it can highlight new ways qualitative methods are being used and identify important gaps. We reviewed 867 articles that used qualitative methods in IS research. Most used a mixed methods approach that included both quantitative and qualitative methods. The most common ways researchers collected qualitative data were through interviews and focus group discussions at one point in time. Sample sizes varied widely across studies, and researchers often did not explain why they chose certain sampling techniques. There was also wide variation in how the same data analysis method was described and why it was chosen. Finally, we point out ways to improve qualitative methods for future IS research.

Introduction

There is increasing recognition of the benefits of using qualitative methods in implementation science (IS) research (Palinkas, 2014). In 2019, the U.S. National Cancer Institute at the National Institutes of Health published a white paper on the use of qualitative methods in implementation research, identifying a range of uses, from eliciting stakeholder-centered perspectives and informing the design and implementation of interventions to documenting and reflecting on implementation processes and generating insights into the effectiveness of implementation (National Cancer Institute, 2019). In recent years, the World Health Organization (WHO), research institutions, professional societies, scientific journals, and a growing number of IS researchers have highlighted qualitative research as a critical component of implementation research (Hamilton & Finley, 2019; National Cancer Institute, 2019; Peters et al., 2013; World Health Organization, 2016). Qualitative research can explore complex questions, such as why and how evidence-based practices were (or were not) implemented successfully, because it seeks to understand the context and process of implementation, which quantitative methods are less suited to capturing (Hamilton & Finley, 2019; Holtrop et al., 2018). Qualitative research is especially useful for identifying continuities and deviant cases in implementation contexts through inductive methods (Strauss, 1987). Major funders of IS research are now encouraging researchers to incorporate qualitative methods into their implementation research studies (U.S. Department of Health and Human Services, 2022). Despite the high value placed on qualitative research in the field of IS, previous research describing how qualitative methods are used in implementation research is limited.

Current literature provides overviews of qualitative research in many areas of health research, such as health policy and systems (Fisher & Hamer, 2020), health informatics (Hussain et al., 2021), and specific health topics such as health education and health behavior (Raskind et al., 2019), anesthesiology (Gisselbaek et al., 2021), and diabetes (Hennink et al., 2017). Other reviews have discussed methodological techniques such as rapid analysis (Gale et al., 2019), the use of ethnographic approaches in implementation research (Gertner et al., 2021), or the application of specific IS frameworks, such as Reach Effectiveness Adoption Implementation Maintenance (RE-AIM), in qualitative research (Gaglio et al., 2013). Other papers have broadly discussed the value of qualitative methods in IS research and provided methodological considerations in the context of IS research (Clark et al., 2021; Hamilton & Finley, 2019; National Cancer Institute, 2019). To the best of our knowledge, however, no reviews, published or pending, seek to assess how the array of qualitative methods has been used in implementation research.

Since the journal, Implementation Science, was launched in 2006, the field has experienced tremendous growth, both in the United States and globally (Beidas et al., 2022). IS researchers are now reflecting on progress in the field thus far, putting forward assessments of the field, and identifying opportunities for enhancing the future of implementation research (Beidas et al., 2022). It is therefore an opportune time to review how qualitative research methods have been used over the past 15 years and elevate qualitative research methods in the IS field on the path forward. In this scoping review, we aimed to explore four main questions: (a) Which qualitative data collection methods are used in IS research? (b) Which qualitative analytic methods are used in IS research? (c) Which IS theories, models, and/or frameworks are applied in IS research using qualitative methods? and (d) When qualitative methods are used in IS research, which implementation issues are explored? Documentation of the numerous ways that qualitative methods have been used in IS research can inspire researchers to consider a wider array when deciding which methods may be best suited for exploring various IS research questions and most appropriate in a specific target population and setting and given available resources, capacity, and time. The findings of this review could also help identify needs for strengthening qualitative research methods, as well as point to opportunities for innovation as the field of IS advances.

Methods

Search Strategy

Development, details, and justifications for the search strategy are described in detail in our published review protocol (Hagaman et al., 2021), and the full search strategy and data dictionary are available in the supplementary materials.

Article Selection

Articles were included in the systematic scoping review if they met five criteria: (a) the article was published in 2006 or later (Hagaman et al., 2021), (b) the article was published in English, (c) the article utilized IS research (Eccles & Mittman, 2006), (d) the article utilized a theoretical approach that includes a theory, model, or framework (TMF) (considered best practice in IS research) (Nilsen, 2015), and (e) the article utilized qualitative methods (Kegler et al., 2019). To be considered IS research, the article must have presented research concerned with the systematic uptake of evidence-based intervention(s)/policy into routine health care and public health settings, as defined by Eccles and Mittman (2006). Procedures to ensure screening and data extraction consistency and quality are outlined in the published protocol (Hagaman et al., 2021).

Data Extraction

Our data extraction process was organized into six domains: (a) general article information, (b) focus of the article, (c) study design, (d) data collection method, (e) analysis and findings, and (f) additional study design information (Table 1). Each domain and its definitions and relevant extraction details can be found in the Data Dictionary (Supplemental File).

Data Extraction Domains and Topics.

Descriptive analysis was used to summarize the findings, including count and proportion for categorical variables and mean and standard deviation for continuous variables. We conducted all quantitative analyses in SPSS (IBM Corp.). As we extracted the data, our team noted particularly well-described articles that illustrated ranges of methods, uses, and innovations to answer their research questions in varied cultures and contexts. We focused on sharing examples that were methodologically illuminative for the field while maintaining diversity in topic and geographic location.
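As a minimal sketch of the descriptive summaries described above, the following Python snippet computes counts and proportions for a categorical variable and the mean and standard deviation for a continuous one. The analyses in this review were run in SPSS; the Python code, the function names, and the toy records below are illustrative assumptions and are not the extraction dataset.

```python
from collections import Counter
from statistics import mean, stdev

# Hypothetical extraction records (illustrative only, not review data).
records = [
    {"design": "single elicitation", "n_interviews": 12},
    {"design": "single elicitation", "n_interviews": 30},
    {"design": "longitudinal", "n_interviews": 48},
    {"design": "single elicitation", "n_interviews": 20},
]


def summarize_categorical(rows, field):
    """Return {category: (count, percent of rows)} for one field."""
    counts = Counter(row[field] for row in rows)
    total = len(rows)
    return {k: (n, round(100 * n / total, 1)) for k, n in counts.items()}


def summarize_continuous(rows, field):
    """Return (mean, sample standard deviation) for one numeric field."""
    values = [row[field] for row in rows]
    return mean(values), stdev(values)


design_summary = summarize_categorical(records, "design")
interview_mean, interview_sd = summarize_continuous(records, "n_interviews")
```

The sample standard deviation (n - 1 denominator) is used here as a common default; the source does not state which variant was reported.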

Results

Overview of Included Articles

Our search yielded 9,888 records. Of the unique articles, 7,482 were excluded during title and abstract screening, and 2,406 proceeded to full-text review. During full-text review, we excluded 1,539 articles that did not meet the inclusion criteria, resulting in 867 articles in the final sample (Figure 1). Almost half of the included articles were published between 2017 and 2020 (Figure 2).
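The screening flow reported above can be checked with simple arithmetic. The short Python sketch below treats the 9,888 records as the set entering title and abstract screening, an assumption that the reported counts support; the variable names are ours.

```python
# Counts reported in the text above.
records_screened = 9_888
excluded_title_abstract = 7_482
excluded_full_text = 1_539

# Articles that proceeded to full-text review.
full_text_reviewed = records_screened - excluded_title_abstract

# Articles remaining after full-text exclusions.
final_sample = full_text_reviewed - excluded_full_text

print(full_text_reviewed)  # 2406
print(final_sample)        # 867
```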

PRISMA Diagram.

Count of Articles Included by Year.

Table 2 displays the setting and health topics of the included articles. Studies were set in 76 unique countries, and 30 (3.5%) were conducted in multiple countries. Based on WHO regions, 42.6% of articles were conducted in the Region of the Americas, 21.5% in the European Region, and 20.5% in the African Region (WHO, 2024). Most articles (69.6%) presented research that was conducted in high-income countries, while 20.1% were conducted in middle-income countries, 9.0% in low-income countries, and 1.4% in multiple countries across different income levels (The World Bank, 2024). The most common health topic was health systems (n = 238; 27.5%), followed by mental health (n = 157; 18.1%), chronic disease (n = 141; 16.3%), and HIV (n = 138; 15.9%).

Setting and Health Topics of Included Articles.

Percentages exceed 100% because multiple health topics could be selected for each article.

Other health topics include: environmental health, geriatrics/aging, sexual and reproductive health, critical care, physical therapy, domestic/sexual violence, housing insecurity, surgery, occupational health, and oral health.

Which Qualitative Data Collection Methods Are Used in IS Research?

Our review captured the breadth and frequency of various aspects of qualitative data collection, including qualitative study design, sample population, data collection methods, and sample size of each data collection method (Table 3). Study designs were predominantly single elicitation (67.7%), with 20.3% using a longitudinal design. While many longitudinal studies collected data once at baseline and once post-implementation, some collected data at more than two timepoints. For example, in a multi-country process evaluation of implementing urinary continence care recommendations, Rycroft-Malone et al. conducted detailed structured observations and qualitative interviews at 24 nursing home sites across four European countries at baseline and 6, 12, 18, and 24 months post initiation to assess adoption and fidelity of care recommendations (Rycroft-Malone et al., 2018).

Qualitative Data Collection Methods of Included Articles.

Percentages exceed 100% because studies may have used more than one sample type, data collection method, and other data collection elements. All applicable methods were flagged for each study.

Other samples included: retailers, science communication specialists, senior staff, head cooks, catering staff, domestic staff, members of the study team, members of the PMTCT Implementation Science Alliance, principal investigators, project coordinators, government program officers, mHealth experts, administrative staff, support staff, teachers, restaurant owners, information technology (IT) specialists, private health insurers, government health insurers, computer application coordinators, donors, laboratory personnel, transporters (fire brigade, ambulance), hospital epidemiologists, patient advocates, and volunteers.

Other observation included: field notes, contextual observation, theory-based observation, participant observation, object-, activity-, and person-oriented observation, and use of two observation methods (e.g., structured and unstructured).

Other data sources included: workshops/training sessions, working group meetings, reflection forms/periodic reflections, task groups, stakeholder meetings, questionnaires, informal phone calls, steering committee meetings, implementation team meetings.

Among 684 studies that reported.

Among 256 studies that reported.

Among 694 studies that reported.

Nearly 12% of articles presented a case study design, although how research teams defined a “case” varied across articles. For example, Abou-Malham et al. used an embedded case design in which the overall case was defined as the midwifery profession, and three embedded cases were defined as the educational, professional, and sociocultural systems of the midwifery profession (Abou-Malham et al., 2015).

Many articles presented mixed methods data (although 40% did not explicitly identify the mixed method design used), with sequential explanatory designs most common (20.3%).

Most articles collected qualitative data from health care workers (75.7%), followed by organizational stakeholders (53.6%). Examples of organizational stakeholders included executive-level, management-level, and operation-level personnel, such as directors, managers, project specialists, and administrative members. Qualitative data were less commonly collected from community members (10%) and policy stakeholders (9.7%). A small proportion of articles sampled family or social contacts of health consumers (7.7%).

Table 3 summarizes data collection methods presented in the articles. In-depth interviews were most common (84.3%). Of these, 34.8% included their interview guide.

Interviews infrequently used tools other than a semi-structured interview guide with verbal questions to elicit information, such as video demonstrations, photographs, free lists, and vignettes, and these techniques were seldom described in detail. FGDs were used in 34.5% of the articles.

Nearly a quarter of the articles presented data collected using both in-depth interviews and FGDs. There are some well-described examples of how these two methods were used to strengthen and capitalize on the unique types of data these techniques can elicit. For example, Vedel et al. used a mixed methods approach to understand the passive dissemination of recommendations for care of older adults with Alzheimer's disease and related dementia in primary care in Quebec, Canada. Data were collected through (a) a retrospective chart review; (b) in-depth interviews with clinicians; and (c) FGDs in which the data from the chart review were presented to the staff to triangulate the findings.

Observational data were presented in 17.1% of the articles and varied greatly in methodological description and technique. Moreover, 49 articles (5.7%) used individual in-depth interviews, FGDs, and observations to collect data. For example, Elsey et al. used a longitudinal, mixed-methods design to explore the implementation of a tobacco cessation intervention in Nepal. The researchers held FGDs with health workers and individual interviews with patients in the pre-implementation phase to inform the intervention. During implementation, the team conducted unstructured observations in clinics and individual interviews with participants, which involved providing cameras to female participants to take photos to facilitate discussion of a sensitive topic (Elsey et al., 2016).

Text extractions were another common tool (18.3%) and utilized in a variety of ways. For example, to evaluate the compatibility, effectiveness, and fidelity of implementing a psychological coping strategy for people facing cancer, Belkora et al. combined qualitative interviews and extracted text from case notes, meeting notes, and emails from staff involved in the implementation of the strategy (Belkora et al., 2013). Other text-extraction techniques reported were related to discussions during meetings and workshops that were recorded and subsequently analyzed.

While not classified as a standalone data collection method, we highlight that some authors chose to describe additional data collection elements, including field notes (33.2%), reflexivity (8.7%), and copies of their interview guide tool (34.8%). While we do not exhaustively describe how these elements were used, a few interesting innovations are highlighted. For example, Tarrant et al. supplemented audio-recorded and written fieldnotes with recordings of research team debrief meetings in their ethnographic research of the Sepsis Six clinical care bundle in Scotland (Tarrant et al., 2016). One example of incorporating reflexivity can be found in Irimu et al.'s 2014 participatory action research about health workers’ performance in managing severely ill children in Kenya. The authors describe their team's assumptions about the implementation site and the models they used to theorize their study. They describe the relationship of the study team observer to the context, their role (e.g., pediatrician and expert trainer in the community), and the trust they built with staff and community within the implementation site. Lastly, participants were asked to reflect on emerging findings and discuss their interpretation of data analysis with external social scientists not directly involved in their research. The authors highlighted that this reflexive process strengthened data trustworthiness and ensured that their analysis was grounded in a broader context (Irimu et al., 2014).

Table 3 and Figures 3 and 4 illustrate the range of sample sizes by data collection method. On average, studies conducted 40 in-depth interviews, though sample sizes ranged widely from one to 1,131 interviews completed. On one end, Fils-Aimé et al. conducted one in-depth interview with the lead community health worker to understand patient recruitment, follow-up challenges, and perceptions of mental healthcare provision in rural Haiti. The data from the in-depth interview identified barriers to mental healthcare that supplemented a quality improvement questionnaire and clinical patient data (Fils-Aimé et al., 2018). On the other end, Asiimwe et al. researched the use of malaria rapid diagnostic tests in which a total of 1,131 in-depth interviews were completed: 1,068 exit interviews with patients and 102 interviews with health workers in Uganda (Asiimwe et al., 2012). Data were collected at 21 health centers using an interview guide that consisted of closed and open-ended questions. It was not clear how semi-structured the exit interviews were, how much qualitative data were elicited from each interview, if the interviews were audio recorded, or how they were analyzed beyond basic textual coding. On average, studies conducted nine FGDs, ranging from 1 to 46.

Histogram of Interview Sample Size among 684 Studies That Reported Utilizing Qualitative Interviews.

Histogram of Focus Group Discussion Sample Size Among 256 Studies That Reported the Sample Number.

Among the 80% of articles that reported the number of sites from which data were collected, the average was 9.4 sites. We explored how articles described their sampling process, noting which articles described in detail how they achieved data saturation or why they chose their sample size. Overall, 10.3% of articles described how saturation was assessed. For example, Kotte et al. researched school mental health treatment in the United States and described how they evaluated saturation using a “saturation grid,” along with the steps taken to achieve representativeness from all the study sites (Kotte et al., 2016). Saturation was discussed more often in articles that included data from individual interviews compared with observations, text extractions, and FGDs (Figure 5). Jones et al. offered a well-described justification for a large sample size anticipated to reach saturation. The researchers interviewed 195 veterans to evaluate their satisfaction with the Veterans Choice Program (VCP). Their qualitative sample was built from a matrix categorizing sample targets among those who had used, never tried, and sought out but did not use VCP (Jones et al., 2019). Unable to assess the homogeneity of their potential interviewees, they departed from the standard recommendation of 25 interviews for “homogenous” populations (Sandelowski, 1995) and chose a conservative target sample size per matrix cell. As such, the authors targeted 45–50 veterans in subcategories of use and barriers to VCP, thus targeting 180–200 interviews. A large sample size helped the authors achieve thematic saturation without knowing the homogeneity of their sample (Jones et al., 2019).
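The sampling-matrix logic in the Jones et al. example can be illustrated with a small Python sketch. The per-cell target of 45 to 50 interviews comes from the text; the number of matrix cells (four) is our inference from the reported overall target of 180 to 200 and is not stated in the passage above. The function name is hypothetical.

```python
def target_range(n_cells, per_cell_low, per_cell_high):
    """Overall (low, high) interview target for a sampling matrix."""
    return n_cells * per_cell_low, n_cells * per_cell_high


# Assumption: four matrix cells, inferred from the 180-200 overall target.
N_CELLS = 4
low, high = target_range(N_CELLS, per_cell_low=45, per_cell_high=50)

# The 195 completed interviews reported in the text fall within this range.
completed = 195
within_target = low <= completed <= high
```

Setting a target per cell rather than one overall number is what lets a team plan for saturation within each subgroup when the homogeneity of the sample is unknown.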

Proportion of Studies That Discussed Saturation by Data Collection Method.

Which Qualitative Analytic Methods Are Used in IS Research?

Table 4 presents the qualitative analytic methods of the included articles. The most common analytic approach was thematic analysis (n = 393; 45.3%), followed by content analysis (n = 160; 18.5%), framework analysis (n = 78; 9.0%), and grounded theory (n = 76; 8.8%). Thematic content analysis was used in 2.4% of articles, and rapid analysis was used in 0.9% of articles. About 13% of articles did not explicitly define their analytic approach.

Qualitative Analytic Methods of Included Articles.

Percentages exceed 100% because multiple analytic methods could be selected for each article.

Other analytic approaches included: within-case analysis, thematic framework analysis, immersion-crystallization approach, text condensation, synthetic analysis, realist evaluation, phenomenographic analysis, negative case analysis, matrix analysis, magnitude coding, lexical analysis, layered analysis, hermeneutic approach, ethnographic approach, configural frequency analysis, constructivist approach, concept mapping, Comskil coding system, computer-assisted linguistic analysis, and comparative case analysis.

Percentages are calculated based only on studies that reported the number of coders.

Other software included: QDA Miner, Microsoft Word, EZ-Text, HyperResearch, hand coding without software, use of more than one software package (e.g., NVivo and MAXQDA), and hand coding combined with a software package (e.g., hand coding and NVivo).

Most articles (n = 562; 64.8%) presented one analytic approach, 241 (27.8%) used two analytic approaches, and 18 (5.3%) used three or more analytic approaches. Six (0.7%) articles presented thematic analysis and framework analysis, 13 (1.5%) used both thematic analysis and content analysis, and 23 (2.7%) used both thematic analysis and grounded theory. For example, Katz et al. completed research to understand the factors that influence HPV vaccine acceptability among adolescents and their caregivers in Soweto, South Africa (Katz et al., 2013). The authors described how they used an “inductive approach based upon grounded theory” to identify four broad themes related to HPV vaccine decision-making, acceptance, and uptake.

Most of the included articles (n = 788; 90.9%) engaged coders to analyze data. Among those that reported the number of coders, 66 (11.8%) had one coder and 495 (88.2%) reported two or more coders. Of those that reported multiple coders and reported consistency, 390 (99.0%) reported checking consistency among coders. Assessing and reporting coding consistency, while not always necessary for rigor and itself a subject of epistemological debate in qualitative research, can help implementation scientists employ analytic consistency across larger research teams, improve transparency, and support team science (Levitt et al., 2018; Ramanadhan et al., 2021; Tobin & Begley, 2004). Most articles (n = 521; 60.1%) reported the qualitative software they used in data analysis, with NVivo (n = 289; 33.3%) most commonly reported. That said, coding software is not always needed for qualitative analysis. For example, O’Hara et al. used the transcripts and field notes to develop analytic memos, which led to a thematic matrix summarizing challenges and adaptations to a Peer-based Group Lifestyle Balance intervention for individuals with serious mental illness in the United States (O'Hara et al., 2017).
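The percentages in this paragraph use different denominators: some are out of all 867 included articles, while the coder counts are out of only those articles that reported the number of coders. The Python sketch below makes the denominators explicit. The subgroup denominator of 561 is inferred as the sum of the two reported coder counts, and the helper name `pct` is ours.

```python
def pct(count, denominator):
    """Percentage rounded to one decimal place."""
    return round(100 * count / denominator, 1)


TOTAL_ARTICLES = 867

# Coder counts reported in the text; their sum is the inferred
# number of articles that reported how many coders were used.
one_coder, multiple_coders = 66, 495
reported_coder_count = one_coder + multiple_coders

engaged_coders_pct = pct(788, TOTAL_ARTICLES)
one_coder_pct = pct(one_coder, reported_coder_count)
multiple_coders_pct = pct(multiple_coders, reported_coder_count)
nvivo_pct = pct(289, TOTAL_ARTICLES)
```

Under these assumptions the computed values match the reported 90.9%, 11.8%, 88.2%, and 33.3%.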

About 10% (n = 89) of articles reported that they used participant checking (e.g., member checking), one of many strategies used to enhance the “trustworthiness” and credibility of results. This method employs multiple ways of bringing elements of the data and their interpretation back to participants to assess the findings’ salience and resonance with their lived experiences. About two-thirds of articles used visuals to present results. These visuals varied greatly from conceptual models to diagrams, theme trees, and photos.

Which IS Frameworks Are Applied in IS Research Using Qualitative Methods?

Table 5 presents the TMFs used by included articles and their application. Nearly one-quarter (23.2%) of articles used one or more TMF to conceptualize the study. A small proportion of articles (5.4%) used a TMF to guide data collection only, while one-third of articles used a TMF to guide data analysis only. Less than half (40.9%) of articles used a TMF to guide both data collection and analysis.

Theories, Models, and Frameworks Used by Included Articles.

Percentages exceed 100% because multiple theories, models, and frameworks could be selected for each article.

Other TMFs included: Behavior Change Wheel; COM-B Model for Behavior Change; Organizational Readiness Theory; Organization theory of implementation innovations; QUERI; Social Capital Theories; Social Cognitive Theory; Social Learning Theory; Theory of Planned Behavior; Actor Network Theory; Unified Theory of Acceptance and Use of Technology; Theory of Change; Health Belief Model; Implementation Model by Grol et al. (2004); Ottawa Model; Technology Acceptance/Adoption Model; Ecological Validity Model; Logic Model; Kirkpatrick Model for Evaluation Training Courses; Transtheoretical Model for Behavior Change; Behavior Change Techniques Taxonomy; Conceptual Framework for Implementation Fidelity; EPIS Model; Interactive Systems Framework; Knowledge to Action; PRECEDE-PROCEED; Risk Explanatory Framework; Social Ecological Framework; Cultural-Historical Activity Theory; Assessment-Decision-Administration-Production-Topical experts-Integration-Training-Testing (ADAPT-ITT) Model; Information, Motivation, and Behavioral Skills Model; Analyze, Design, Develop, Implement, and Evaluate (ADDIE) Model; PRISM; SEA-Change Model; CDC Frameworks; Center for Public Health Systems Sciences Program Sustainability Framework; Dynamic Sustainability Framework; Levesque et al. Conceptual Framework on Access to Healthcare; Medical Research Council Process Evaluation Framework; Health Policy Triangle.

CFIR = Consolidated Framework for Implementation Research.

RE-AIM framework = Reach, Effectiveness, Adoption, Implementation, and Maintenance framework.

PARIHS = Promoting Action on Research Implementation in Health Services.

Articles that investigated implementation outcomes specified in Proctor’s Implementation Outcomes.

There was variation in the TMFs used in the articles. CFIR was used most frequently (10.7%), followed by the Theoretical Domains Framework (4.8%), Normalization Process Theory (4.6%), Diffusion of Innovations (3.8%), RE-AIM (3.6%), and PARIHS (2.9%). Most articles (65.4%) used other theories (e.g., Organizational Readiness Theory, Theory of Planned Behavior, Actor Network Theory), models (e.g., Exploration, Preparation, Implementation, Sustainment (EPIS) Model, Transtheoretical Model for Behavior Change, PRECEDE-PROCEED), and frameworks (e.g., Conceptual Framework for Implementation Fidelity, Social Ecological Framework), each of which was less commonly used across the articles included in this review.

A small proportion of articles (6.9%) applied a TMF developed by the authors. For example, Woodward et al. created and tested the feasibility of the Health Equity Implementation Framework by exploring inequities in hepatitis C virus (HCV) treatment among Black older adult VA patients in the United States (Woodward et al., 2019). The Health Equity Implementation Framework combines modified concepts from the i-PARIHS Framework (Harvey & Kitson, 2016) and the Health Care Disparities Framework (Kilbourne et al., 2006) to address the lack of implementation frameworks that are structured around health equity and its determinants.

When Qualitative Methods Are Used in IS Research, Which Implementation Issues Are Explored?

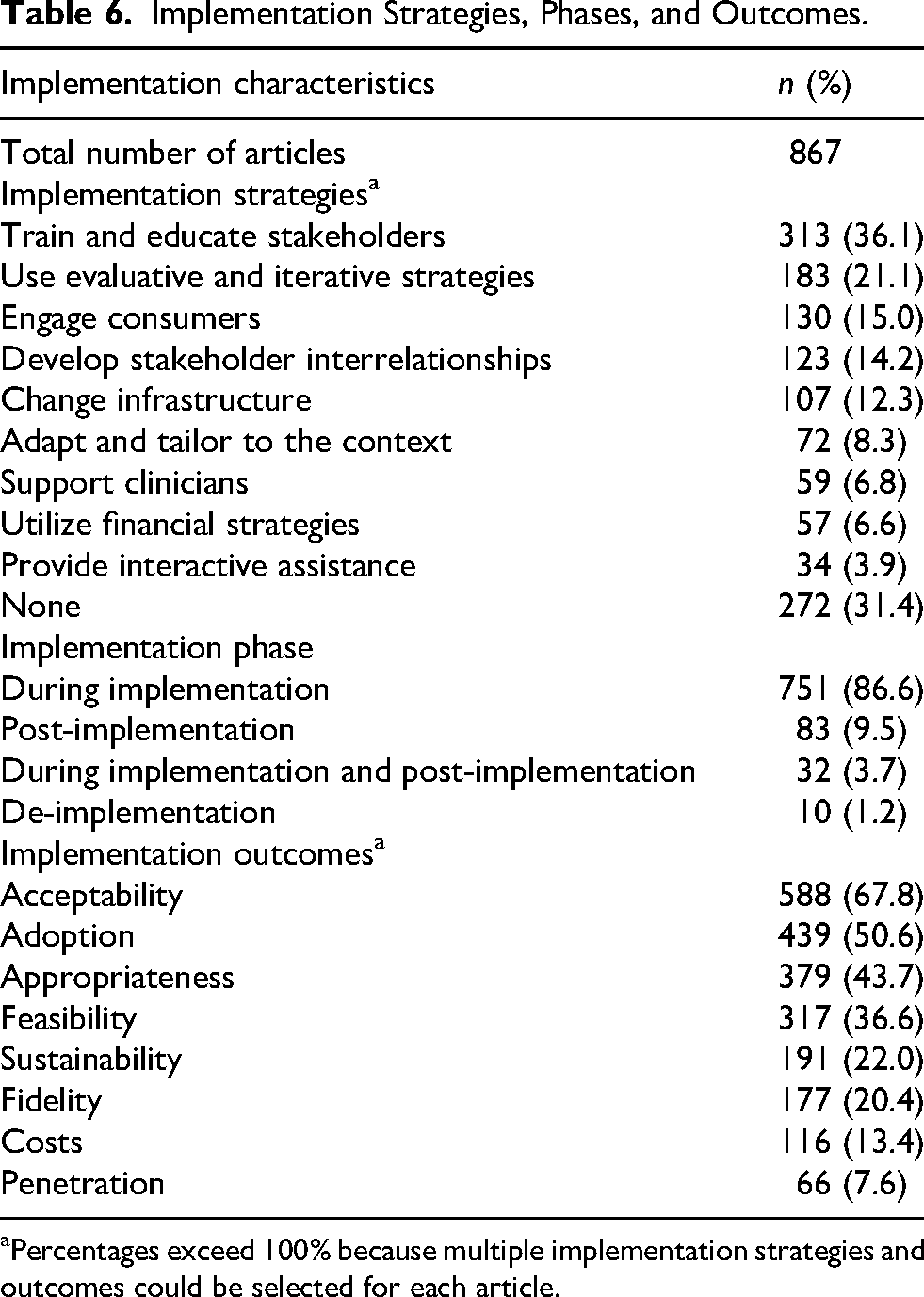

Table 6 presents the implementation strategies, phases, and outcomes of focus in each article. Among the articles that discussed an implementation strategy (68.8%), over a third focused on training and educating stakeholders (36.1%), followed by using evaluative and iterative strategies (21.1%), engaging consumers (15.0%), developing stakeholder interrelationships (14.2%), changing infrastructure (12.3%), adapting and tailoring to the context (8.3%), supporting clinicians (6.8%), utilizing financial strategies (6.6%), and providing interactive assistance (3.9%). Most articles presented data collected during implementation (86.6%), with smaller proportions presenting data collected in the post-implementation phase (9.5%) or during both implementation and post-implementation (3.7%).

Implementation Strategies, Phases, and Outcomes.

Percentages exceed 100% because multiple implementation strategies and outcomes could be selected for each article.

A very small proportion of articles (1.2%) discussed de-implementation. Drawing on Proctor's implementation outcomes framework, over two-thirds of articles presented data on acceptability (67.8%) and about half assessed adoption (50.6%), followed by appropriateness (43.7%), feasibility (36.6%), sustainability (22.0%), fidelity (20.4%), costs (13.4%), and penetration (7.6%) (E. Proctor et al., 2011).

Discussion

Our review found that qualitative IS articles primarily reported research conducted in the Americas and in high-income contexts and focused on systems-level health questions and mental health. Most articles used a cross-sectional, single elicitation design, commonly within a broader mixed methods approach. Semi-structured qualitative approaches in the form of interviews and FGDs comprised most data collection techniques, with fewer articles eliciting qualitative data through other forms. Sample sizes varied widely for both semi-structured interviews and FGDs, and sampling justifications were less commonly reported. Below, we offer three key domains of discussion on the review findings and identify opportunities for enhancing and extending the use of qualitative methods in the field of IS to further strengthen its contribution to population health improvements.

What Can We Learn From What Qualitative Methods Are Used?

Most articles used individual in-depth interviews or FGDs, data collection methods that are valuable for obtaining rich information but also have known limitations. These include the influence of the interviewer's and interviewee's experiences and the power dynamic between the two, limited skills of expression among interviewees, and the interviewer's skills (Affleck et al., 2013; Coleman, 2019; Riessman, 1987). Interviews and FGDs are also subject to problems of recall, because they rely on individuals sharing information that is consciously known and expressing perspectives through spoken word. Interviews and FGDs alone may therefore not be able to fully capture complex system-level determinants of implementation, particularly in decentralized and fractured healthcare systems around the world. Some scholars have addressed these limitations through the use of community participatory research, looking critically at the context in which the interview takes place (Bayeck, 2021; Call-Cummings & Hook, 2015). To address some of the limitations of interviews and FGDs in IS, researchers have recommended combining data collection methods and using multiple data sources to triangulate findings (Hamilton & Finley, 2019). We echo this call. Observations, role plays, and non-verbal elicitations such as photographs, free lists, and pile sorts may offer opportunities to explore implementation issues within interviews and FGDs or in addition to them (Beidas et al., 2014; Davis et al., 2019; Hine, 2000; Kohrt et al., 2015). Notably, this review did not yield many articles that described non-verbal strategies to elicit feedback. Other forms of expression and communication, such as drawings, videos, photography, and vignettes, or other mediums of qualitative data, such as text extracted from electronic medical records or from patient/healthcare worker messages, may be useful. This is particularly so in contexts where hierarchies and power structures affect how barriers to implementation are expressed, and among populations with lower levels of education, people living with disabilities, and multilingual or multicultural groups. As many implementation science efforts center their focus on amplifying and optimizing equity in healthcare, designing elicitation techniques that best capture the perspectives of a diversity of individuals is essential.

Many articles presented qualitative data collected at one point in time, which is efficient compared with longitudinal elicitation. While qualitative methods can allow for storytelling and perceptions that capture the dynamics of systems and interventions over time, there may be better approaches to capturing system dynamics (and individual behavior change) over time than reflective questioning (what worked well, what changed, what went better) in standard in-depth interviews. As IS research continues to become more iterative (Glasgow et al., 2020) and useful for documenting adaptations and their impacts in real-world settings (Kirk et al., 2020), elicitation techniques like periodic reflections and template-based analytic approaches (Brooks et al., 2015) may offer efficient ways of processing multiple elicitations over time.

IS research, given its focus on spread and scale, must be primed to qualitatively assess organizations and health facilities across many contexts. IS demands that some of the previously relied upon notions of “sample size” (Guest et al., 2006) be disrupted. A focus on “saturation” as “hearing it all” and “understanding it all” (Hennink et al., 2017) should remain, but given the necessity to integrate context, history, and broader policy for IS, we need ways to account for all qualitative data, not just the interview or FGD-generated data. Furthermore, the depth of understanding needed may vary depending on the goal of the project. Finally, the participants who comprise the qualitative sample are deeply important for identifying and selecting salient determinants and strategies. In our review, 75.1% of articles reported data collection from healthcare workers and 53.7% reported data collection from organizational stakeholders, while 36.1% reported data collection from individual consumers. This may be because the research questions focused specifically on understanding healthcare workers’ decisions, perceptions, and behaviors regarding care delivery. There may be opportunities for qualitative research questions to broaden to allow for further contextualization and triangulation among consumers, ultimately furthering health equity (Woodward et al., 2021). Strategies for incorporating the full range of stakeholders (pediatric patients, caregivers/families, multiple sub-cultures, and those less represented within health systems) are essential given that these stakeholders intimately shape the “implementation climate.” Our review highlights various strategies that have successfully accomplished this through ethnographic approaches, community-engaged and participatory research, and other approaches. Guidance on how saturation may differ for IS research is warranted, given the multiple “sub-samples” with which it may be necessary to engage (Hagaman & Wutich, 2017).

Hallmarks of qualitative data “trustworthiness” (e.g., reflexivity and triangulation) were not frequently described. Importantly, qualitative methods typically rely on dynamic exchange between the data collector and the informant. Thus, outlining who is doing the data collection and their positionality is deeply important, particularly in social work environments where leadership, power, and occupational security are entwined in everyday work. Employing community members and those with lived experience, as in Palinkas et al.'s embedded ethnography/rapid clinical ethnography that trained doctors as ethnographers of their own health system (Palinkas et al., 2008), may be a compelling strategy for collecting data that can strengthen the epistemic rigor of the project by including participants with more diverse experiences and points of view. However, this must be married with considerations about roles and embedded system hierarchies (e.g., senior personnel vs. beginner medical assistants), overworked and overburdened healthcare staff, and the need for supportive training and funds to engage individuals who are not trained in the traditional research practices lauded by the academic and funding landscapes. As the field progresses, a focus on rigorous and pragmatic approaches is key.

What Can We Learn From How Qualitative Methods Are Analyzed?

A striking finding of this review was the inconsistency in how qualitative researchers define and employ analytic methods. For example, content analysis (the second most reported method) was described in many ways across articles, though this is also characteristic of qualitative methodological reporting (Hsieh & Shannon, 2005). To illustrate this point, one study stated it drew on content analysis, but did not cite which content analytic approach it employed (there are several), nor did it give a theoretical justification for why content analysis was indicated for its research question (Powell-Jackson et al., 2009). Furthermore, some articles combined qualitative analytic methods, some of which have distinct epistemological and ontological orientations. Many articles reported multiple qualitative methods (e.g., thematic analysis and content analysis) and others reported “thematic content analysis” as a single approach. As is necessary in rigorous qualitative research, analytic approaches should follow the epistemological and methodological orientation that the researchers and their research questions assume. We believe some of this inconsistency stems from the “black box” of what happens with qualitative data during its analytic process and an academic need to “fit” an approach into a largely “known” qualitative analytic method (e.g., grounded theory). It may be that the pragmatic and rapid needs of IS researchers and implementers are not well aligned with how grounded theorists or phenomenologists describe their work, though this certainly does not mean they are incongruent. Qualitative researchers need to demystify the analytic process. We call for more consistency and convening about analytic methods and clearer naming of analytic approaches across the field. We believe this will give the field a broader, shared understanding of how and why a particular approach was taken.

A tension in IS qualitative work is the need to aggregate implementation determinants and surrounding climates across multiple distinct sites. Some IS qualitative studies have generated hundreds of interviews that must be processed and analyzed quickly to inform implementation. Researchers have leveraged matrix and template methods from qualitative research and thoughtfully integrated mixed methods, bolstering strategies to handle this amount of information. For example, content analytic approaches that quantify qualitative data have emerged in popular CFIR analytic methods (Damschroder & Lowery, 2013) and in Kim et al.'s matrixed multiple case study approach (Kim et al., 2020). Consistent language and more transparent applications of these pragmatic approaches to analysis may help large implementation studies effectively engage qualitative methods. Given the deep methodological contributions qualitative research has already made to healthcare systems, there remains a need for transparency and common language that can help provide clarity on what researchers need to do to answer their research questions.

What Can We Learn From How and What Frameworks Are Applied in Qualitative IS Research?

Less than half of articles used a TMF to guide both data collection and analysis. This finding is consistent with previous analyses of IS studies that highlighted the suboptimal use of frameworks, wherein frameworks are not operationalized or integrated throughout the research process from study design to data collection and analysis (Kirk et al., 2016; Moullin et al., 2019). To bolster the use of TMFs, guidance on the application of individual TMFs like the TDF (Atkins et al., 2017), EPIS (Moullin et al., 2019), CFIR (Keith et al., 2017), and RE-AIM (Glasgow & Estabrooks, 2018) has recently been published (Moullin et al., 2020). In 2020, Moullin et al. put forward ten recommendations for using implementation frameworks in research and practice, emphasizing that frameworks are ideally applied throughout an implementation research study (Moullin et al., 2020). Holtrop et al. provided specific guidance and examples of how qualitative methods can be used to evaluate each of the five RE-AIM dimensions, concluding with the hope that in the future there will be more such uses of RE-AIM to conduct reviews and create more guidelines for their use (Holtrop et al., 2018). The findings of our review indicate that there is a broader need for guidelines on how to use TMFs throughout IS qualitative research. It would be beneficial for such guidelines to cover uses of TMFs in qualitative research studies employing different study designs, for example, how to optimize the recurrent use of evaluation frameworks, such as RE-AIM, to inform mid-course adjustments (Maw et al., 2022).

A positive development is the growing number of framework-informed tools to facilitate qualitative data collection and analysis that include standardized codes and definitions (CFIR Research Team-Center for Clinical Management Research, 2024; Ritchie et al., 2022). These tools may support the use of frameworks in IS qualitative research, as well as promote standardization in coding and analysis that could improve our ability to synthesize findings across studies, thereby enabling the advancement of generalizable knowledge (Ritchie et al., 2022). At the same time, we caution against over-reliance on such tools without careful attention to their appropriateness for a given context. Furthermore, to take full advantage of the value of qualitative research, we encourage researchers to consider inductive strategies for developing codes in order to capture issues that emerge in the data but are not captured by the deductive codes available in pre-developed codebooks.

Strengths and Limitations

This scoping review has several key strengths. We included articles that used a wide range of qualitative methods. As such, our findings extend knowledge from previous reviews that focused on understanding the use of specific qualitative methods in IS research such as ethnographic methods (Gertner et al., 2021). Furthermore, we categorized numerous elements of qualitative research methods, from data collection and analysis to the use of TMFs and methods for enhancing the “trustworthiness” of findings such as member checking. To the best of our knowledge, our findings provide one of the most comprehensive reviews of the use of qualitative research methods to date.

This review has limitations. First, while we recognize that there is a vast IS literature published in numerous venues (Wensing et al., 2021), the search was limited to articles published in specific journals to ensure feasibility. We selected journals based on their focus on publishing implementation and dissemination research, with the goal of reviewing articles published in journals that only or frequently publish IS research, to efficiently and effectively capture a broad range of IS articles using qualitative methods. Second, determining whether an article was an IS article was not without challenges, given that there are other related fields of science, such as quality improvement, improvement science, and delivery science, which have similar purposes and overlapping methods (Bauer & Kirchner, 2020; Lieu & Madvig, 2019; Nilsen et al., 2022). Clarifying such distinctions may continue to be a challenge but remains necessary when reviewing the IS literature. Third, by having the inclusion criterion that the article must have used a TMF, relevant articles were likely missed since previous reviews have found that some IS studies do not use a TMF (Birken et al., 2017). Fourth, in some instances, data collection and analytic methods were not well described in the articles, making it challenging to categorize them. To help prevent miscategorization of methods, we held frequent team discussions and conducted ongoing quality checks. Once data extraction was complete, we conducted additional quality checks. In line with this limitation, we did not systematically extract epistemological or ontological justifications, and future research may benefit from engaging additional methodological context. The review is also limited by the year cutoff for included articles. Future reviews can use this review as a comparator to anchor changes over time. Finally, we conducted our searches in English only, which possibly excluded pertinent information published in languages other than English. Results and recommendations should be interpreted in light of these strengths and limitations.

Conclusion

This scoping review identifies the breadth of how qualitative methods have been used in IS research, as well as gaps and opportunities to strengthen the use of qualitative methods in IS. It is our hope that this review will inspire future methodological research that builds on our findings and advances the use of qualitative research in IS.

Supplemental Material

Supplemental material for How Are Qualitative Methods Used in Implementation Science Research? Results From a Systematic Scoping Review by Ashley Hagaman, Elizabeth C. Rhodes, Carlin F. Aloe, Rachel Hennein, Mary L. Peng, Maryann Deyling, Michael Georgescu, Kate Nyhan, Anna Schwartz, Kristal Zhou, Marina Katague, Emilie Egger and Donna Spiegelman in Implementation Research and Practice:

sj-docx-1-irp-10.1177_26334895251367470

sj-docx-2-irp-10.1177_26334895251367470

sj-docx-3-irp-10.1177_26334895251367470

sj-pdf-4-irp-10.1177_26334895251367470

Footnotes

Acknowledgments

The authors acknowledge the institutional support of the Center for Methods in Implementation and Prevention Science as well as the Harvey Cushing/John Hay Whitney Medical Library at Yale University for their resources and staff support. We are deeply grateful to Dr. Richa Attre and Aishwarya Bhattacharya for their support in screening articles. We are thankful for the scholars and their thoughtful contributions to science that populated this review.

Authors’ Contributions

AH conceptualized the study. KN, AH, and ER designed the search strategy. AH and ER developed the data extraction tool and data analysis plan. AH, ER, CA, RH, MP, MD, MG, AS, KZ, and MK conducted article screening and data extraction. RH conducted quantitative data analysis. MP, CA, and RH prepared figures and tables. All authors drafted and revised the manuscript. All authors read and gave final approval of the version of the manuscript submitted for publication.

Availability of Data and Materials

Data are available by request.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethics Approval and Consent to Participate

Not applicable.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: AH is funded by an NIMH Career Development award [K01MH125142] that supported time and training to lead this review. The Center for Methods in Implementation and Prevention Science at the Yale School of Public Health, led by DS, also provided funds to support this project.

Competing Interests

The authors declare that they have no competing interests.

Consent for Publication

Not applicable.

Supplemental material

Supplemental material for this article is available online.

References
