Abstract

Background

This study assessed the feasibility and acceptability of a multilevel, multi-component implementation strategy for measurement-based care (MBC) called Feedback and Outcomes for Clinically Useful Student Services (FOCUSS). FOCUSS includes six components selected in our prior work with a national sample of school mental health stakeholders. This is among the first demonstrations of MBC with school-employed clinicians. We explored proof of concept by observing MBC adoption rates achieved by the end of the school year and other related implementation outcome data.

Method

A mixed-method, single-arm pilot study was conducted during one academic year with 10 school-employed mental health clinicians in two K-12 public school districts in Connecticut. Clinician adoption was assessed by monthly fidelity monitoring of measures clinicians entered in the feedback system. Clinician self-reported practices, attitudes, feasibility, acceptability, and appropriateness of using MBC with K-12 students were assessed by pre-training, 3-, 6-, and 9-month surveys. School year-end qualitative interviews explored clinician implementation experiences using MBC and FOCUSS implementation supports to inform future changes to FOCUSS in a district-wide trial.

Results

Clinicians were asked to implement MBC with five students; 60% of the clinicians achieved or exceeded this target, and MBC was adopted with 65 students. Other implementation outcomes were comparable to related studies. Qualitative feedback indicated that MBC is clinically valuable in schools by providing consistency and structure to sessions, is compatible with school mental health, and is well regarded by students and parents. FOCUSS implementation supports were regarded as helpful, and individual performance feedback emails appeared to be a necessary component of FOCUSS to boost post-training implementation.

Conclusion

This is among the first studies of MBC implementation with school-employed mental health professionals in the United States. Results demonstrate proof of concept for MBC implementation with school social workers, psychologists and counselors and support subsequent district-wide use of FOCUSS to install MBC in schools.

Introduction

Schools are the primary setting where children and families in the United States receive mental health treatment (Duong et al., 2021). School mental health care is heterogeneous in terms of student diversity (Wilk et al., 2022), provider disciplines (social work, psychology, counseling), staffing ratios, and clinical practices (Reaves et al., 2022; Zabek et al., 2022). School clinicians have large caseloads and are responsible for services spanning prevention to intervention, crisis response, and non-clinical duties (Zabek et al., 2022). Fortunately, there is growing interest in supporting school mental health professionals’ skills and capacity to deliver evidence-based mental health services (American Academy of Pediatrics, 2021; Zabek et al., 2022). Flexible, evidence-based strategies applicable to a wide range of students, such as measurement-based care (MBC), are needed to improve school mental health treatment quality.

MBC is the routine use of patient-reported progress measures to inform collaborative treatment adjustments in real time (Scott & Lewis, 2015). MBC core components include “Collect” (i.e., providing a clear, client-centered rationale for MBC and selecting and administering a progress measure aligned with treatment targets), “Share” (i.e., providing feedback and discussing scores in real time), and “Act” (i.e., adjusting individual sessions and/or overall treatment planning) (Barber & Resnick, 2023). MBC has evidence of effectiveness with children in traditional mental health care settings, but MBC implementation and effectiveness in schools are not well known (Parikh et al., 2020). In theory, MBC is highly compatible with school settings (Bohnenkamp et al., 2015; Connors et al., 2022; Lyon et al., 2022). MBC has the potential to promote consistent structure, student- and family-centered communication, and thus equity in the way students receive school-based interventions with progress monitoring and feedback. MBC-type practices are included in ethical standards and core competencies of national school professional organizations in the U.S. (American School Counselor Association, 2022, 2023; National Association of School Psychologists, 2020; National Association of Social Workers, 2012). Furthermore, since progress monitoring for academic subject areas is routine, especially in special education, school clinicians are expected to use progress monitoring (Connors et al., 2015).

MBC Implementation in Schools

To date, MBC has not been incorporated into education, but national and large-scale implementation efforts exist in healthcare systems (Interdepartmental Serious Mental Illness Coordinating Committee, 2023; Kaiser Permanente Institute for Health Policy, 2023). Yet, only 20% of providers in traditional mental health care settings report routine progress monitoring and feedback in their practice (Jensen-Doss et al., 2018), and MBC implementation among school clinicians is likely much lower (Cho et al., 2023).

There are various challenges to MBC implementation by school clinicians (Kelly, 2011; Parke, 2012). These include lack of access to measures, inadequate data systems or infrastructure, difficulty reaching caregivers, and lack of ongoing consultation or clinical supervision to support MBC practices (Connors et al., 2015). Beyond the school context, general barriers to MBC implementation include patient, practitioner, organizational, and system factors (Lewis et al., 2019). Implementation strategies to promote MBC uptake and implementation also vary widely by determinants in specific care settings (Boyd et al., 2018; Childs & Connors, 2022), with emphasis on clinician training as a standard strategy (Kassab et al., 2022), follow-up training, consultation, or supervision (Bovendeerd et al., 2019; Lyon et al., 2022), and tailoring strategies to site-based determinants (Lewis et al., 2022).

Despite these challenges, notable exceptions of MBC implementation in schools are emerging in several states, Canada, Northern Ireland, and Norway (Connors & Hoover, 2025). Domestically, MBC research in schools has been conducted in school-based health centers and with community-employed school mental health professionals (Borntrager & Lyon, 2015; Connors, Prout et al., 2021; Lyon et al., 2017; Sichel & Connors, 2022). Although these community-employed, school-based professionals expand school capacity to meet student mental health needs, school-employed mental health professionals are the foundation and “front line” for student mental health (Hoover et al., 2019). School psychologists, social workers, and counselors may face more challenges implementing evidence-based practices than their community-employed, school-based counterparts (Connors, Prout, et al., 2021; Langley et al., 2010). This is due to expanded roles and responsibilities that limit their time for clinical intervention, such as assessment, consultation, crisis management, whole-school mental health programming and non-clinical, unrelated duties including clerical and administrative work (Brown et al., 2006; Zabek et al., 2022). The largest U.S. study we are aware of with MBC implementation among 75 school-employed clinicians found significant effects in standardized and individualized assessment use following a brief online training and post-training consultation, but no significant effect on treatment modification (Lyon et al., 2022). Learning how to effectively support MBC among school-employed clinicians offers an opportunity for district-wide scaling of MBC.

Present Study

The goal of this study is to understand MBC acceptability and feasibility among school-employed clinicians with a multi-component implementation strategy package developed for this innovation and context. Feedback and Outcomes for Clinically Useful Student Services (FOCUSS) is an empirically derived implementation strategy package to promote school clinician MBC practices (Connors et al., 2022). FOCUSS strategies include: (1) assess clinician readiness; (2) identify and prepare champions; (3) develop a district implementation plan; (4) provide initial MBC training; (5) provide access to and training on measures; and (6) provide post-training group consultation (see Table 1). Performance feedback (PF) emails were added as a seventh strategy during this pilot (see Results for further explanation).

Table 1. FOCUSS Implementation Strategies.

Note. Continuing education units were offered for initial MBC training and post-training group consultation, which could be considered a separate implementation strategy. Identify and Prepare Champions was the only strategy that we were not able to carry out as defined (see “Limitations” section). FOCUSS = Feedback and Outcomes for Clinically Useful Student Services; MBC = measurement-based care; PCOMS = Partners for Change Outcome Management System.

This study was a small-scale, proof of concept to explore MBC acceptability and feasibility, based primarily on observed adoption of progress measures in one school year. We also observed how FOCUSS operates under typical conditions with school-employed clinicians as MBC implementers in K-12 public schools. Mixed-methods data on implementation outcomes and contextual factors answered the following research questions:

1. To what extent do clinicians adopt MBC using FOCUSS with a subset of their caseload in one school year?
2. How acceptable, feasible, and appropriate is MBC for school clinicians participating in FOCUSS?
3. How well does MBC fit in the school mental health context and for school-employed clinicians?
4. How acceptable, feasible, and usable are the selected patient-reported outcome measures and measurement feedback system for FOCUSS clinicians?

This study was designed to inform adaptations to MBC and/or FOCUSS implementation strategies for a larger-scale trial. We also sought to identify what facilitates MBC implementation in schools when school-employed clinicians adopt this practice during the first school year of training and support. Finally, we explored optimal research procedures and methods for a larger-scale trial (e.g., clinician recruitment, enrollment, retention, and study measure completion).

Method

Using a sequential mixed-methods design, we collected quantitative data throughout the school year, followed by qualitative data to expand our findings for complementarity (Palinkas et al., 2011). Data were merged after all analyses and conceptually were of equal weight. Adoption and implementation rates were observed to understand how likely this practice is to be used in this context by these providers. Clinician self-reported acceptability and feasibility, together with clinician attendance at training and consultation, added context to observed adoption and implementation. Because this was the first pilot of FOCUSS with a small sample, quantitative implementation outcomes are not intended for population inference to school clinicians. Instead, the quantitative findings were interpreted in reference to larger-scale implementation efforts and as a foundational data source expanded by qualitative data from implementers.

Participants

We partnered with two public school districts in Connecticut in the 2021–2022 school year to provide initial MBC training to all student services staff and recruit participants. Twelve clinicians voluntarily consented to and enrolled in the pilot, but two withdrew in early October, for a final sample of 10 clinician participants. Four were from one district and six from the other. All were female. Most (n = 7, 70%) identified as White/Caucasian, one (10%) identified as Black or African American, one (10%) as Hispanic, and one (10%) preferred not to answer. Six (60%) reported being trained in social work, three (30%) in school psychology, and one (10%) in professional counseling. Roles varied (i.e., five school social workers, two school psychologists, one social work intern, one school psychology intern, and one school counselor). On average, participants worked in mental health for 11.6 years (range = 0–29, Mdn = 10) and schools for 11.9 years (range = 5–26, Mdn = 9.5). All clinicians were licensed and credentialed to provide mental health interventions independently in schools except for one social work trainee.

Procedures

We assessed clinician readiness, developed a district implementation plan in partnership with district leaders, and provided initial MBC training (see Table 1). District-wide training was provided during a professional development day. Across two districts, 64 student services personnel were invited, and 36 attended. The district with lower attendance invited student services personnel broadly, including some not appropriate as MBC implementers (e.g., speech language pathologists).

In accordance with Yale University IRB-approved procedures, district-wide training was followed by clinician consent to access the measurement feedback system (MFS), receive MFS training, attend monthly group consultation calls, and complete study measures. With grant funds, we purchased the Better Outcomes Now MFS, which includes the Partners for Change Outcome Management System (PCOMS). PCOMS includes two 4-item measures rated on an analogue scale of 1–10, the Outcome Rating Scale (ORS) and the Session Rating Scale (SRS) (Duncan, 2012). The ORS tracks psychotherapy outcomes using four dimensions: individual well-being or distress, interpersonal or relational well-being, social relationships including school and work, and overall general well-being. The SRS tracks alliance and satisfaction with services. PCOMS was selected for its evidence base in school systems, primarily outside of the United States, and its application to a wide range of student concerns and intervention goals. A live, virtual tutorial of the online system for the PCOMS measures (Child ORS, SRS) was provided by the study team, recorded, and disseminated, with individual follow-up supports made available in consultation with measure developers. Monthly post-training group consultation was delivered by the first and fourth authors and offered at two times each month to accommodate clinician schedules. Continuing Education Units were offered for training and consultation attendance. Individual performance feedback emails were added 3 months post-training to boost initial implementation (see Table 1).

Online surveys were collected at baseline, 3-, 6-, and 9-month intervals. Clinicians were invited at the end of the year to complete an optional “implementation experience interview” on Zoom. Clinicians received $25 Amazon gift cards for each survey completion and interview. This and the continuing education units were the only compensation participants received because training, post-training consultation, and MBC practices were approved by their employer as regular duties during work hours. Due to the small scale of this pilot, members of the study team supported implementation, collected study measures, and conducted and coded interviews. Both primary coders (EHC, SS) were female; one was a Clinical-Community Psychologist with a PhD and almost two decades of experience conducting qualitative research, and the other was a BA-level Research Assistant trained and supervised to conduct interviews and qualitative coding. This overlap of roles introduces possible response bias but also offered the strength of previously established relationships. Participants were told the reason for the interviews was to gather their honest experience with MBC to inform future district-wide implementation. Zoom interviews were video recorded in private offices of clinicians and researchers, field notes were taken, and interviews lasted 20–40 min.

Measures

To answer research question one, adoption was collected by researchers in the MFS and clinician self-reported MBC practices were collected using baseline, 3-, 6-, and 9-month surveys. To answer research question two, clinician self-reported acceptability, feasibility, and appropriateness of MBC as a practice as well as clinician attitudes toward standardized assessment were collected using four surveys. Research questions three and four were answered by qualitative clinician interviews and survey write-in responses. Mixed-methods inquiry of implementation outcomes and innovation-context fit was used to expand and contextualize observed adoption rates as the primary outcome.

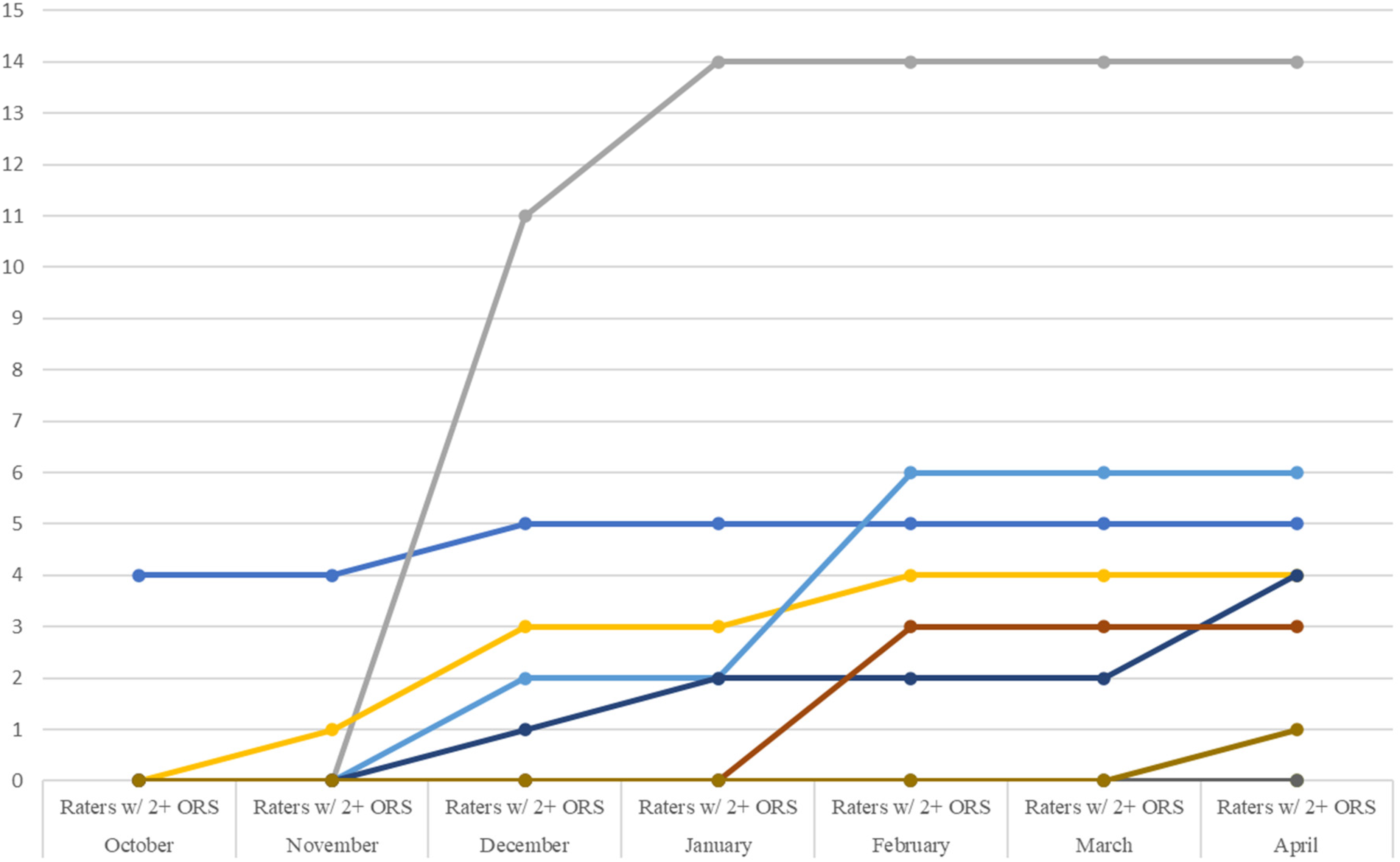

Adoption and Implementation of MBC were assessed via observational tracking of clinician ORS measures entered in the MFS each month. Although the PCOMS includes the ORS and SRS, and clinicians were trained on both, we focused on the ORS as the primary indicator of adoption and initial implementation, as it is the child outcome measure used to assess treatment progress. Through review of data trends over time and team consensus we defined “adoption” as ≥1 ORS measure collected for ≥1 case, at the clinician level, and “implementation” as ≥2 ORS measures collected for ≥1 case (see Figures 1 and 2). Clinician self-reported practices with MBC were also assessed via the Current Assessment Practice Evaluation—Revised (CAPER). This seven-item measure asks clinicians to self-report the percentage of their clients in the past month/week with whom they administered and used (i.e., provided feedback about/discussed, changed the overall treatment plan, changed activities for a single session) standardized and individualized measures. The CAPER has demonstrated good internal consistency (α = .72) and has been replicated with a sample of 95 clinicians (Connors et al., 2021; Lyon et al., 2019). CAPER scoring was adapted in consultation with developer Dr. Aaron Lyon from a Likert scale (which produces truncated ranges) to a more detailed continuous range of 0%–100% of a clinician's caseload for each item; in prior studies, it is reported on a 4-point scale (1 = “None [0%],” 2 = “Some [1–39%],” 3 = “Half [40–60%],” 4 = “Most [61–100%]”), which still offers points of comparison to our data.
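The clinician-level definitions above, and the correspondence between the continuous CAPER percentage and the 4-point scale used in prior studies, can be illustrated with a minimal Python sketch. This is not the study's scoring procedure: the function names, the input format (a mapping from a hypothetical case ID to the number of ORS measures entered), and the handling of fractional percentages that fall between the published bands (e.g., 39.5%) are assumptions made for illustration only.

```python
def classify_clinician(ors_counts_by_case):
    """Return the highest level a clinician reached under the stated definitions.

    ors_counts_by_case: dict mapping a (hypothetical) case ID to the number
    of ORS measures entered for that case.
    "implementation" = >=2 ORS measures for >=1 case
    "adoption"       = >=1 ORS measure for >=1 case
    """
    max_count = max(ors_counts_by_case.values(), default=0)
    if max_count >= 2:
        return "implementation"  # implies adoption was also reached
    if max_count >= 1:
        return "adoption"
    return "none"


def caper_band(percent):
    """Map a 0-100 caseload percentage onto the 4-point CAPER scale.

    Bands follow the text: 1 = None (0%), 2 = Some (1-39%),
    3 = Half (40-60%), 4 = Most (61-100%).
    Assumption: fractional values below 40 count as "Some" and
    values above 60 count as "Most".
    """
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    if percent == 0:
        return 1
    if percent < 40:
        return 2
    if percent <= 60:
        return 3
    return 4


# Illustrative (made-up) inputs, not study data.
print(classify_clinician({"case_a": 3, "case_b": 1}))
print(caper_band(32))
```

Returning only the highest level keeps the two definitions nested: any clinician classified at "implementation" has necessarily met the "adoption" threshold as well.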

Figure 1. MBC Adoption by Clinician: Cases With One or More Outcome Rating Scales.

Figure 2. MBC Implementation by Clinician: Cases With Two or More Outcome Rating Scales.

Acceptability, Feasibility, and Appropriateness of MBC as a clinical practice were assessed using clinician self-report on the Acceptability of Intervention Measure (AIM), Feasibility of Implementation Measure (FIM), and Intervention Appropriateness Measure (IAM) (Weiner et al., 2017). Structural validity, test–retest reliability, and sensitivity to change have been established (Weiner et al., 2017). We also tracked clinician attendance at monthly post-training consultation calls and requests for individual consultation as another indicator of acceptability and feasibility of FOCUSS supports.

Attitudes were measured via the Attitudes Toward Standardized Assessment Scale—Monitoring and Feedback (ASA-MF) which has three subscales (clinical utility, treatment planning, and practicality) and acceptable internal consistency (α = .81–.85) (Connors et al., 2021; Jensen-Doss et al., 2018).

Usability of PCOMS was assessed via the Usage Rating Profile—Assessment (URP-A; 3 months, 6 months, 9 months). This 28-item measure is part of a suite of measures developed specifically to assess intervention usage in schools (Miller et al., 2014). Items ask about acceptability, understanding, home–school collaboration, feasibility, system climate, and system support for any assessment tool inserted into the item stems (ORS and SRS from the PCOMS, in this case). The six factors have good internal consistency (α = .62–.90) (Miller et al., 2014).

Clinician implementation experiences were assessed using qualitative data collected at the end of the implementation period in open-ended survey response items and individual interviews. Clinicians were asked about their experiences (1) as an interventionist, using MBC with students; and (2) as an intervention recipient, receiving FOCUSS implementation supports to do MBC. These experiences informed our understanding of the innovation-context fit of MBC as a practice in schools (RQ3), and the acceptability, feasibility, appropriateness, and usability of selected measures and measurement system in schools (RQ4). Specifically, the open-ended survey responses asked clinicians to write in comments, questions, or feedback about FOCUSS, what they liked about FOCUSS, what has been valuable to them and their students, and what advice they would have to improve the project. The semi-structured interview asked about motivations to consent to participation, implementation experiences with FOCUSS (with prompts about barriers experienced, comfort levels with MBC, student and parent response to MBC), MBC fit and value with school mental health services, barriers anticipated for their colleagues who didn’t consent, and advice for future MBC implementation in schools.

Analyses

Quantitative clinician self-reported survey data were downloaded from Qualtrics to SPSS Version 28.0, cleaned for usable variable names and descriptions, and inspected for errors. New variables were computed for scale and total scores per guidance from measure developers, normality and distributions were examined, and coded values were checked for accuracy across time points. Due to the small sample size, primary analyses were descriptive statistics displayed over time, visually inspected for trends, and compared to published means and standard deviations for each measure.
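The descriptive approach described above (summary statistics per time point, then visual inspection) can be sketched in a few lines. The study used SPSS; the Python below is a swapped-in illustration only, and the function names, the `(timepoint, score)` record format, and the example values are hypothetical rather than taken from the study data.

```python
from statistics import mean, median, stdev


def describe(scores):
    """Return n, mean, sample SD, and median for a list of scale scores."""
    return {
        "n": len(scores),
        "mean": round(mean(scores), 2),
        # Sample SD is undefined for a single observation.
        "sd": round(stdev(scores), 2) if len(scores) > 1 else None,
        "median": median(scores),
    }


def describe_by_timepoint(records):
    """Group (timepoint, score) pairs and summarize each time point.

    Records with a missing score (None) are skipped, so each summary
    reports the n actually available at that time point.
    """
    grouped = {}
    for timepoint, score in records:
        if score is None:
            continue
        grouped.setdefault(timepoint, []).append(score)
    return {tp: describe(vals) for tp, vals in grouped.items()}


# Made-up scores for illustration only.
example = [
    ("baseline", 3.5), ("baseline", 4.0), ("baseline", None),
    ("3-month", 4.25), ("3-month", 4.5),
]
for timepoint, summary in describe_by_timepoint(example).items():
    print(timepoint, summary)
```

Reporting n alongside each mean matters in a sample this small, because a single missing survey changes the denominator noticeably.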

Qualitative content analysis was completed using grounded theory as a second stage (Charmaz, 2014; Elo & Kyngäs, 2008; Hsieh & Shannon, 2005). Because existing theory and research on MBC in schools is in its infancy, we were guided by an inductive approach to understand clinician experiences and contextual factors influencing observed implementation outcomes (Damschroder et al., 2022a; Hsieh & Shannon, 2005). However, our interpretation and categorization of final focus codes were guided by established theories of implementation outcomes. For example, the Implementation Outcomes Framework (Proctor et al., 2011) and the Consolidated Framework for Implementation Research, or CFIR 2.0 (Damschroder et al., 2022b) naming conventions for constructs were used to promote transportability to other research on this innovation (MBC) and context (schools).

First, two coders (EHC, SS) independently reviewed and open-coded all qualitative survey responses and detailed notes from all six interview transcripts. Next, open codes and memos for each segment of text were compared and discussed. Open codes were revised through iterative discussion and comparison with the data to develop focus codes with operational definitions. Survey responses were coded to consensus, and interview transcripts were divided for final focus coding. Sorting focus codes into categories or themes (referred to as re-contextualization) was mostly descriptive and somewhat interpretive, based on memos, contextual information, and elaboration when available (Lindgren et al., 2020). Final focus codes were also interpreted and categorized through consideration of implementation outcomes or determinants, and comparison to the CFIR Codebook Template (CFIR Research Team—Center for Clinical Management Research, 2024). The resulting theoretical and focus codes reflect a combination of terminology from predetermined determinants and outcomes frameworks and any innovation and/or context-specific language where applicable.

Results

MBC Adoption and Practices (RQ1)

Nine of 10 clinicians adopted MBC, using it with 65 students and producing 210 sessions with measures (M = 21.2, SD = 19.5, Mdn = 20.5, range = 1–49 sessions per clinician). One clinician never adopted MBC (see Figure 1). Clinicians were asked to implement MBC with five students, but 60% used MBC with more than five (range = 0–18). Baseline CAPER data indicate this is a notable practice change; 90% reported not completing or giving feedback about standardized progress measures prior to FOCUSS. Clinicians reported relatively more common baseline practices of tracking attendance or Individualized Education Program (IEP) goals, consistent with our prior findings (REDACTED). Although caseload-wide implementation was not a stated expectation or goal in the pilot, clinicians self-reported using measures with an average of up to 32% of their caseload (see Table 2), which is similar to rates reported in a national sample of 75 school mental health clinicians (Lyon et al., 2022).

Table 2. Secondary Implementation Outcomes (Means and Standard Deviations).

Note. MBC = measurement-based care; PCOMS = Partners For Change Outcome Management System, including the Outcome Rating Scale and Session Rating Scale which clinicians used as the measures to track progress; CAPER = Current Assessment Practice Evaluation—Revised.

As shown in Figures 1–3, ORS measure adoption and initial implementation notably increased between November and December for nearly half the sample. We attribute this to the introduction of individual performance feedback (PF) emails in late November. Despite promising feasibility, acceptability, and signals of adequate MBC adoption at baseline, only 50% of clinicians had adopted MBC (≥1 student with ≥1 progress measure) by November 1 of the school year. Thus, PF emails were initiated to boost implementation and test augmentation of FOCUSS with a strategy rated in the “borderline” range in the FOCUSS development study (i.e., “monitor implementation progress and provide feedback”; Connors, Lyon et al., 2021). As of April 2022, 90% of clinicians had adopted MBC (≥1 student with ≥1 ORS progress measure) with all six core FOCUSS implementation strategies plus PF.

Figure 3. MBC Adoption by Clinician: Total Outcome Rating Scales.

Feasibility, Acceptability, Appropriateness of MBC (RQ2)

Table 2 shows mostly encouraging results for clinician attitudes toward the use of MBC; clinicians’ views of MBC acceptability, feasibility, and appropriateness; and the usability of the PCOMS measurement system. Specifically, acceptability, feasibility, and appropriateness of MBC scores were high overall and higher than observed in a prior study of 80 clinicians implementing MBC in public children's mental health services (Sichel & Connors, 2022). Over time, mean scores appeared to increase from baseline to 3 months and then were relatively stable through 6- and 9-month follow-up. Overall usability was half a standard deviation higher than reported for other school professional samples (Miller et al., 2014), but after an initial increase from baseline to 3 months, decreased below baseline levels at 9 months. The home–school collaboration domain was lower than the other subscales in our sample, which matches existing literature (Miller et al., 2014). Attitudes toward MBC were high overall and slightly higher than a prior study of standardized assessment implementation with 95 community-employed, school-based mental health providers (Connors, Lawson et al., 2021). Finally, all clinicians attended the district-wide MBC practice training, and 90% attended group post-training consultation. Consultation call attendance was very good (M = 3.2 calls per clinician, SD = 1.75; range = 0–5) for the five scheduled calls after one snow day cancellation. Half of the clinicians attended four or five calls and several requested individual consultation.

School Clinician Implementation Experiences (RQ3 and RQ4)

Qualitative categories and codes about school clinician implementation experiences are described below and displayed with illustrative quotes in Table 3.

Table 3. Qualitative Categories and Codes From School Clinician Implementation Experiences.

Note. MBC = measurement-based care; IEPs = Individualized Education Programs; FOCUSS = Feedback and Outcomes for Clinically Useful Student Services.

Adoption was mixed; these qualitative codes refer to the likelihood that clinicians will continue MBC implementation after the pilot.

Clinical Value of MBC in School Mental Health

Across qualitative survey data and interviews, all six clinicians commented on at least one way MBC brought clinical value to school mental health practice. Students reportedly engaged in self-reflection because the measures are “black and white” and more specific than standard “open-ended” questions about functioning or progress. Two clinicians noted the opportunity to expand emotional self-awareness and vocabulary, as well as build “life skills” with MBC. Four clinicians reported that MBC offered consistency and structure in sessions (i.e., “consistency of the check-ins”; “consistency during counseling practice”; “the structure and the data the questions provide to both myself and the students”). We understand “consistency” in this context to refer to a planned, repeated practice that provides a routine for clinicians and all students in their sessions. One clinician noted that MBC helped adjust and “simple tweak” sessions. Another commented that MBC provides “great information and it's something that we haven’t had as social workers, and we definitely needed. We just don’t have these things.”

MBC With Subgroups of Students

School clinicians are generalists with diverse caseloads. Qualitative responses suggest that MBC may not be as meaningful for students on the autism spectrum and/or with lower cognitive functioning and may be most clinically useful for high schoolers or students with internalizing conditions. One clinician intentionally piloted MBC with students of Arabic, African American, White American, and Hispanic/Latino backgrounds to “test” its cultural relevance among her caseload. She noted that MBC was complicated with her immigrant families (especially undocumented families) due to the student's and family's legal considerations and concerns around privacy, despite her efforts to clarify that no student identifying information is entered into the feedback system or student record.

Compatibility With School Mental Health

All clinicians have students on their caseload in special education with IEPs. Integration of MBC with the IEP goals and objectives was noted as something that would improve MBC compatibility with school clinician workflows. In some cases, clinicians were able to achieve this integration. For example, report card conferences, 504 Plan meetings and IEP meetings were identified as times to share student MBC data and collect and discuss caregiver-reported data. One clinician noted the value of having “objective” data from “a structured tool to provide information” when she presents student progress in meetings. Four clinicians recommended starting school with an organized MBC process, especially with new students before “your numbers [caseload] increase and all the other sort of things that pop up.” This includes letting caregivers know at the beginning of the school year that MBC will be part of the student intervention and how important their participation is. One clinician noted this proactive approach is challenging in practice because “everything is chaotic in the beginning of the year so nobody's thinking about doing that.”

Caregiver and Student Engagement

In a handful of cases, caregiver data collection and discussion occurred in person, and “the majority were over the phone.” However, clinicians generally reported challenges with caregiver engagement in MBC. Clinicians expressed that families are sometimes overwhelmed with other life stressors. One clinician emphasized that it is important not to “generalize” but to “keep in mind that is because the population we work with. It's people that really struggle in many aspects of their lives. I think the intervention and data will be collected very differently if I worked with other kinds of kids, but that's not my job.” One clinician noted the impact of COVID on caregiver relationships during this 2021–2022 school year: “with COVID I feel like it's just been overwhelming, like, we can’t get parents to even do the rating scales for the evaluations we’re completing so it's difficult.” However, one clinician reported that the lack of caregiver ratings was due to her own time limitations.

Four clinicians spoke about student engagement and enjoyment with MBC. Students reportedly “like rating themselves and their sessions” including “providing feedback about [the clinician's] performance” on the SRS as well as their own progress on the ORS. Challenges with student engagement pertained to the logistics of collecting data routinely, such as clinicians not remembering, as well as figuring out whether the clinician's computer, the student's Chromebook, or paper and pencil was the most efficient method for the pace of individual and group sessions.

Individual Implementer and Recipient Experiences

The focus code in this category that came up most frequently was individual clinician characteristics, including clinicians’ style, preferences, or attributes that influenced their implementation experience. One clinician talked about her interest in any professional development. Others reported a natural interest in having data as a part of their practice. Years of experience came up as well; more experienced clinicians were more likely to forget to do MBC because they have an established routine. Another clinician newer to the field said, “it kind of gave me a routine that was helpful especially starting out in this field.” Personal comfort with technology was also raised as a determinant. The next most frequent focus code in this category was inner setting (school) barriers such as having limited time due to competing job demands, managing student crises, and staff turnover. Another frequent code that came up for clinicians and students (based on clinician report) was that this practice involved a “learning curve,” referring to the initial time and effort to learn MBC (e.g., “PCOMS is easy to use once you learn how to navigate the system”). Less-frequently coded topics included compatibility with workflow (“how can I make this flawlessly fit into everything”) and forgetting to do MBC (“there were certainly times where we’d be ten minutes in and I’d say, ‘oh goodness, we forgot to check in’”).

FOCUSS Implementation Supports

Clinician participants’ qualitative responses in surveys and interviews that pertained to implementation supports were coded using five focus codes. The most frequent code was “consultation is helpful or liked,” referring to the monthly group consultation calls that were held. The next most frequent codes in this category were (1) liked training support teams, (2) liked training on/access to measures, and (3) preference for more/different supports. Recommendations ranged from every-other-month consultation to weekly, 20-minute group sessions because of “crisis moments” and “obstacles” that get in the way of attending consultation. One clinician said that consulting with peers was helpful, and another wished she had another social worker in her building to support implementation.

The Innovation (Measures, Feedback System, and MBC)

Feasibility and usability, specifically in terms of the logistics and procedures of collecting measures, was the most frequent code about the PCOMS measures, the feedback system, or MBC as a specific clinical practice. Some clinicians started with paper and pencil and one clinician never transitioned to using the online system, but those who used the MFS reported that it was easier once they learned it, and students enjoyed it more than doing paper measures. Most parent ratings were collected over the phone. Several clinicians reported measures were acceptable, for example: “I really enjoyed having a way to monitor progress that was consistent and quick.”

Acceptability of MBC and PCOMS measures was coded in qualitative data from approximately half of the clinicians. This included appreciation of “having an actual program to collect data!,” “seeing progress over time,” and liking the graphs produced in the feedback system. A few clinicians also noted liking the PCOMS measures themselves (“the students like rating themselves and their sessions”; “the CORS [Child Outcome Rating Scale] and the fact that it is age appropriate”). The SRS was particularly useful for a clinician to talk with an elementary school student about the purpose of school counseling, “cause you know I have that student who wants us to play games every time.”

Adoption was contextualized in the qualitative data by codes on general feedback about MBC and/or FOCUSS implementation supports, and the intended future use of MBC. Clinicians reported that MBC is “definitely needed” or “necessary” and “fitting” for their students and the school mental health context, that it “keeps me accountable,” and that “the kids like it.” Most clinicians reported that they intended to use the PCOMS measures or “that sort of system” in future school years. Future use was expected to be facilitated by “already knowing how to navigate it.” At the same time, clinicians reported that due to the numerous practical and logistical challenges to MBC implementation in schools, their intentions for future use would include “starting fresh” to adjust their workflow based on what they learned.

Discussion

The purpose of this pilot was to explore initial MBC acceptability and feasibility among a school-employed mental health clinician sample, based primarily on observed adoption of progress measures, to inform a larger-scale trial. MBC adoption rates by the end of the school year (i.e., 60% of clinicians adopted MBC with five or more students; 90% adopted MBC with at least one student) serve as an initial proof of concept that MBC was acceptable to and feasible for a small sample of school-employed clinicians with FOCUSS implementation supports. Despite lack of prior experience with standardized progress measures, clinician ratings of acceptability, feasibility, appropriateness, and attitudes toward standardized assessment were positive in this sample. Furthermore, qualitative feedback suggests that MBC delivers on clinical value and is compatible with school mental health care delivery. These findings serve as a basis for future, district-wide implementation of FOCUSS to promote spread of MBC among entire student services departments.

Limitations

MBC adoption measures observed in the feedback system captured only one part of MBC fidelity, namely data collection. This is a common limitation in MBC implementation research that warrants mention as an area of future research. Additionally, these results should be interpreted as a proof of concept among a small sample of willing implementers. Relatedly, we may not have achieved saturation of qualitative themes for all school clinicians in this sample, and these two Connecticut districts may not be representative of districts more broadly, so a future multisite trial is needed to assess variation in school district size, region, and other inner and outer setting characteristics. We also focused on clinician-level implementation and thus did not track or report degree of implementation at the student (case) level. Due to the need for rapid year-end data collection before summer break, and rapid data synthesis to inform implementation in the subsequent year, we did not pilot test the interview guide, conduct repeat interviews, or return transcripts or results to participants for comment. However, a summary of interview findings was shared with district leadership to inform ongoing planning and involvement of clinicians as champions in the upcoming year.

The increase in adoption from 50% post-training to 90% at the end of the school year was notable, perhaps in part due to performance feedback emails. However, the effect of this strategy beyond the multilevel implementation support package was not isolated or empirically tested, nor did we have a sample or design to do so. Clinicians were only asked to adopt MBC with a subset of cases, but a future direction for understanding full implementation would be to assess adoption and implementation as a percentage of total cases or sessions. Furthermore, implementation mechanisms and outcomes were not assessed for all FOCUSS implementation strategies. Of the strategies, “identify and prepare champions” was attempted prior to the training but was challenging due to summer planning with districts. We expect that with a district-wide MBC implementation effort, it would be especially important to identify and prepare champions to promote buy-in and peer learning.

Future Directions

Several considerations and adjustments to FOCUSS may be necessary when going to scale as a quality improvement initiative. These include training early in the school year and explaining the initial learning curve of MBC to achieve workflow integration. Additionally, despite promising innovation-context fit in the current study, our findings underscore the importance of tailoring MBC implementation to schools (Lyon & Bruns, 2019). Conveying the value of MBC in a contextually relevant manner and ensuring MBC is integrated within workflows is imperative. Unique considerations around caregiver involvement, using MBC data in school team meetings, and making MBC part of routine practice also surfaced in our results.

Overall, FOCUSS appeared to promote adoption and initial implementation of MBC in this sample. Future research such as a Hybrid Type 1 trial with district-wide FOCUSS could offer opportunities to assess the effectiveness of MBC in schools and understand multilevel implementation determinants, strategies and outcomes with a larger sample. An empirical examination to optimize FOCUSS would also be useful, for example, in a Hybrid Type 2 trial. Sequential, multiple assignment, randomized trial designs could be used in the future to answer questions about relative effectiveness of implementation strategies in a multi-component package as well as optimal sequencing and dosage (Almirall et al., 2014; Lei et al., 2012).

Footnotes

Acknowledgments

We appreciate the dedicated partnership of all school mental health clinicians involved in this work as well as Stamford Public Schools and West Haven Public Schools.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was generously supported by the National Institute of Mental Health (NIMH K08MH116119; PI: Connors).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.