Abstract

Background

Dissemination and implementation frameworks provide the scaffolding to explore the effectiveness of evidence-based practices (EBPs) targeting process of care and organizational outcomes. Few instruments, such as the stages of implementation completion (SIC), examine implementation fidelity to EBP adoption and how organizations differ in their approach to implementation. Instruments to measure organizational competency in the utilization of implementation strategies are lacking.

Method

An iterative process was utilized to adapt the SIC to the NIATx implementation strategies. The new instrument, NIATx-SIC, was applied in a randomized controlled trial involving 53 addiction treatment agencies in Washington state to improve agency co-occurring capacity. NIATx-SIC data were reported by state staff and external facilitators and through participating agency documentation. Proportion and duration scores for each stage and phase of the NIATx-SIC were calculated for each agency. Competency was assessed using the NIATx fidelity tool. Comparisons of proportion, duration, and NIATx activities completed were determined using independent sample

Results

The NIATx-SIC distinguished between agencies achieving competency (

Conclusions

Organizational participation in dissemination and implementation research requires a significant investment of staff resources. The inability of an organization to achieve competency when utilizing a set of implementation strategies wastes an opportunity to institutionalize knowledge of how to apply implementation strategies to future change efforts. The NIATx-SIC provides evidence that competency is not an attribute of the organization but rather a result of the application of the NIATx implementation strategies to improve agency co-occurring capacity.

Trial Registration

ClinicalTrials.gov, NCT03007940. Registered January 2, 2017, https://clinicaltrials.gov/ct2/show/NCT03007940

Plain Language Summary

Access to integrated services for persons with co-occurring substance use and mental health disorders is a long-standing behavioral health problem. Evidence-based practices (EBPs) that focus on patient needs are effective in improving care for persons with co-occurring disorders. The stages of implementation completion (SIC) is a measure that assesses the process that organizations go through when implementing a new EBP and can be used to compare differences between organizations in their fidelity to recommended processes. To implement an EBP, organizations use specified strategies to integrate it into the care process. These strategies require a significant investment of staff resources. When organizations struggle to achieve competency with a set of implementation strategies, resources are wasted, which limits the ability to use the strategies in future change efforts. As such, it is critical to measure organizational efforts to achieve competency, but instruments to do so are lacking. The SIC was adapted for a proven implementation strategy, NIATx, to address this gap. The NIATx strategy provides outside support and coaching to facilitate the implementation of a new EBP. Results from this study indicated that the NIATx-SIC could distinguish between addiction treatment agencies that applied NIATx implementation strategies with competency and those that did not, in the context of a multilevel randomized control trial. Study results provide evidence for the utility of adapting the SIC to specific implementation strategies and the benefit that the NIATx-SIC could provide for similar studies involving the use of NIATx to implement EBPs.

Introduction

Dissemination and implementation frameworks provide the scaffolding to frame proposed research and explore the effectiveness of the proposed interventions. The utilization of multiple implementation strategies is a proven and effective approach to support organizational champions or change teams to implement evidence-based practice (EBP; Caton et al., 2021; Garner et al., 2020; Perry et al., 2019). NIATx is a multicomponent set of implementation strategies developed to guide organizational champions and change teams to improve processes of care and adoption of EBPs in substance abuse treatment agencies (Ford et al., 2017; Gustafson et al., 2013; Hoffman et al., 2008; McCarty et al., 2007; Molfenter et al., 2019; Roosa et al., 2011). Although recent efforts link implementation strategies to frameworks (Waltz et al., 2019; Weir et al., 2021), few instruments exist that allow for a closer look into the “black box” of implementation to examine fidelity to the implementation process in the adoption of an EBP and how organizations differ in their approach to implementation. One exception is the stages of implementation completion (SIC) instrument (Chamberlain et al., 2011; Saldana, 2014).

The SIC is an eight-stage assessment tool designed to measure and compare implementation strategies for scaling up proven interventions (Brown et al., 2014). Stages range from Engagement (Stage 1) with the EBP developers/purveyors, to achievement of program delivery with Competency (Stage 8). Each stage maps onto one of three implementation phases: Preimplementation, Implementation, and Sustainment (Saldana et al., 2020). SIC data include a log of the activities that operationalize the implementation process necessary to move toward successful program start-up, competency, and sustainment, together with their completion dates. Two scores are calculated for each SIC stage and phase. The proportion score is the proportion of key activities completed within a stage. The duration score is the time elapsed from the date of the first scored activity completed through the date of the final scored activity completed. The duration score can account for activities not completed sequentially and for being in multiple stages at a given time. A third score, the final stage score, indicates the final stage achieved in the implementation process (Stage 1–8). The SIC is psychometrically valid and reliable (Chamberlain et al., 2011, 2012; Saldana, 2014; Saldana & Chamberlain, 2012; Saldana et al., 2015).
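The scoring rules described above can be illustrated with a minimal sketch. This is not the SIC scoring software: the `Activity` structure, the function names, and the example dates are hypothetical, and the rule used for the final stage score (the highest stage with at least one completed activity) is an assumption made for illustration only.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Activity:
    """One logged activity (hypothetical structure, for illustration only)."""
    stage: int                     # SIC stage, 1 through 8
    completed_on: Optional[date]   # None if the activity was never completed


def proportion_score(activities: list[Activity], stage: int) -> float:
    """Proportion of a stage's activities that were completed."""
    in_stage = [a for a in activities if a.stage == stage]
    if not in_stage:
        raise ValueError(f"no activities defined for stage {stage}")
    done = sum(1 for a in in_stage if a.completed_on is not None)
    return done / len(in_stage)


def duration_score(activities: list[Activity], stage: int) -> Optional[int]:
    """Days from the first to the last completed activity in a stage.

    Taking min and max over dates means the order in which activities
    were completed does not matter. Returns None if nothing was completed.
    """
    dates = [a.completed_on for a in activities
             if a.stage == stage and a.completed_on is not None]
    if not dates:
        return None
    return (max(dates) - min(dates)).days


def final_stage(activities: list[Activity]) -> Optional[int]:
    """Highest stage with a completed activity (assumed rule, see lead-in)."""
    stages = [a.stage for a in activities if a.completed_on is not None]
    return max(stages) if stages else None


# Hypothetical log for one agency; the dates are invented for the example.
log = [
    Activity(1, date(2017, 1, 10)),
    Activity(1, date(2017, 1, 24)),
    Activity(2, date(2017, 2, 20)),   # completed out of sequence
    Activity(2, None),                # never completed
    Activity(2, date(2017, 2, 6)),
    Activity(3, None),
]

for s in (1, 2, 3):
    print(s, proportion_score(log, s), duration_score(log, s))
print("final stage:", final_stage(log))
```

In this invented log, Stage 2 has two of three activities completed and spans 14 days even though its activities were logged out of order, which mirrors the point that the duration score does not require sequential completion.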

The SIC has been adapted or customized for multiple EBPs through a clearly defined adaptation process (Dubowitz et al., 2020; Nadeem et al., 2018; Saldana et al., 2012, 2020; Sterrett-Hong et al., 2021; Watson et al., 2020). Several applications of the SIC have been or are being evaluated in system settings focused on improving child or youth services (Aalsma et al., 2019; Brown et al., 2014; Palinkas et al., 2017). In addition, the SIC has been utilized to assess the cost associated with implementation (Saldana et al., 2014) and to develop interview guides to obtain a greater understanding of the implementation process within an organization (Palinkas et al., 2018). However, the SIC has not been utilized to explore organizational participation in and adherence to specific implementation strategies.

Within the context of a multisite randomized control trial, 53 community addiction treatment agencies located in the State of Washington were trained to use NIATx, a proven evidence-based implementation strategy (Gustafson et al., 2013; Hoffman et al., 2012; McCarty et al., 2007), to improve their dual capacity to provide integrated care for individuals with co-occurring addiction and psychiatric disorders (Ford, Osborne, et al., 2018; Ford, Stumbo, et al., 2018; Ford, Zehner, et al., 2018). These agencies successfully utilized NIATx implementation strategies to improve their dual diagnosis capability to provide integrated care (Assefa et al., 2019; Chokron Garneau et al., 2022) and increase access to medications (Ford et al., 2021, 2022). In this manuscript, we report on the adaptation of the SIC and the development of the NIATx-SIC to track each organization's fidelity to NIATx implementation strategies.

Method

Design and Setting

A cluster randomized waitlist control group design was used to examine the extent to which the NIATx implementation strategies improved agency co-occurring capacity, including access to medication-related treatment for individuals with a co-occurring disorder (COD). Community addiction treatment agencies throughout Washington state were eligible to participate. Eligibility criteria included tax-exempt status; provision of outpatient (or intensive outpatient) services; status as a governmental agency or an agency with 50% or greater public funding; and no prior participation in NIATx research. Recruitment letters were sent to 468 eligible addiction treatment agencies. Fifty-three agencies (11.3%) agreed to participate and four withdrew prior to randomization, resulting in a final sample of 49 agencies.

Agencies were randomized to Cohort 1 (NIATx implementation strategies,

NIATx Implementation Strategies

Adaptation of the SIC (NIATx-SIC)

The process for reviewing and adapting the SIC to measure process of the NIATx implementation strategies occurred iteratively. The initial step was a review of the planned NIATx implementation strategies (JHF and MEZ). The NIATx team then met virtually with the SIC team for a series of adaptation meetings. The SIC team interviewed the NIATx team to understand the various paths of how the NIATx implementation strategies could unfold in a participating community addiction treatment agency. When adapting the SIC tool, our team had to account for the change in focus of the SIC from implementing an EBP to implementing a multicomponent set of NIATx implementation strategies (Ford et al., 2016). Specifically, we addressed questions such as:

- How do you measure implementation process when the implementation is under a fixed time period versus an open-ended implementation or intervention process?
- Given that each organization may implement variable numbers of change projects, and have varying levels of contact with their implementation coach, are these variations important to capture for thoroughness of completion?
- How does the process differ when the number of events (e.g., change projects) is not fixed but depends on the efforts of the organization?
- Given the NIATx model, would it be appropriate to consider the start and end dates of all change cycles as an activity in the implementation process or is it better to focus on the start and end dates associated with various change projects?

Operationalizing the NIATx implementation process demanded some unique variations from the standard SIC. For example, it was necessary to determine how best to capture participation in coaching calls or to document the number of change projects when these activities could vary by participating agency. In addition, it was necessary to build in variables to account for the delayed start in Stage 3 for Cohort 2 agencies. However, the most significant challenge for the adaptation was defining competency of sites (Stage 8). The NIATx fidelity instrument, developed for this study, became the basis for determining competency. The instrument utilized a 5-point scale to assess agency adherence to the NIATx principles across 24 items in seven different domains (Supplemental File 1). Using interviews and project documentation (e.g., change project forms, walkthrough reports) supplied by the agency or coach, NIATx fidelity was determined through independent ratings by two external evaluators (JHF and MEZ): item scores were averaged within each of the seven domains, and the domain averages were then summed and divided by seven (Ford, Osborne, et al., 2018; Ford, Stumbo, et al., 2018; Ford, Zehner, et al., 2018). Although individual item interclass correlation coefficients ranged from 0.25 to 0.98, the overall individual item correlation between the two evaluators was highly positive (

Data for the final NIATx-SIC (Supplemental File 2) were collected from information tracked by State of Washington staff, coaching interactions with NIATx experts, and agency documentation. NIATx-SIC data were entered into the standard online SIC reporting system. Following standard SIC scoring procedure, the proportion and duration scores for each stage and phase of the NIATx-SIC were calculated for each agency and averaged across each respective cohort. The NIATx-SIC also calculates a third score, the final stage score, which indicates the final stage achieved in the implementation process (Stage 1–8). In addition, the NIATx-SIC monitors the standard key SIC implementation milestone outcomes: agency achievement of (a) program start-up and (b) competency. Important for this study, the NIATx-SIC includes an assessment of baseline organizational characteristics such as primary funding source, population served, and geographic size.
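The fidelity scoring rule described earlier in this section (item scores averaged within each of the seven domains, then the domain averages summed and divided by seven) can be sketched as follows. This is an illustrative sketch only, not the study's scoring code: the domain names, the split of the 24 items across domains, and the item ratings are all hypothetical, and the way the two evaluators' ratings were combined is not modeled.

```python
from __future__ import annotations

from statistics import mean


def fidelity_score(domain_items: dict[str, list[int]]) -> float:
    """Average the items within each domain, then average the domain means.

    Every domain carries equal weight regardless of how many items it has.
    """
    if len(domain_items) != 7:
        raise ValueError("expected ratings for exactly seven domains")
    if any(len(items) == 0 for items in domain_items.values()):
        raise ValueError("every domain needs at least one rated item")
    domain_means = [mean(items) for items in domain_items.values()]
    return sum(domain_means) / 7


# Hypothetical ratings for one agency: 24 items spread over seven domains.
# Domain names and the 4/4/4/3/3/3/3 item split are invented for the example.
ratings = {
    "domain_1": [4, 4, 4, 4],
    "domain_2": [3, 3, 3, 3],
    "domain_3": [5, 5, 5, 5],
    "domain_4": [2, 2, 2],
    "domain_5": [4, 4, 4],
    "domain_6": [3, 3, 3],
    "domain_7": [5, 4, 3],
}

score = fidelity_score(ratings)
print(round(score, 2))  # 3.57

# For contrast: a flat mean over all 24 items weights larger domains more.
all_items = [r for items in ratings.values() for r in items]
flat_mean = sum(all_items) / len(all_items)
print(flat_mean > score)  # True
```

The contrast at the end shows why the two-step rule matters: because domains contain different numbers of items, averaging within domains first gives each domain equal influence on the final score, whereas a flat mean over all items would not.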

Analysis

Descriptive statistics on the proportion and duration of activities within each phase and agency attributes were calculated. Differences between agency cohort assignment were evaluated. Independent

Results

Figure 1 shows the Extended CONSORT Diagram (Campbell et al., 2012) for study recruitment. Agencies assigned to Cohort 1 (

Extended CONSORT Diagram

Agency Attributes by Cohort and Competency

Number of agencies in Cohort 1 = 25 and in Cohort 2 = 24.

Number of agencies achieving competency: yes = 23 and no = 26. Of the agencies achieving competency, 13 were in Cohort 1 and 10 were in Cohort 2.

Table 3 provides descriptive statistics associated with the proportion of activities completed and the duration of time associated with each phase and stage depending on competency status. Of the 53 agencies that agreed to participate in this study, 23 agencies ultimately achieved competency, while 30 agencies discontinued their implementations. However, 41 agencies reached program start-up, indicating that, of those that discontinued, the majority did so during the active implementation phase. As shown in Table 3, although there was no significant variation in the amount of time spent on implementation processes, agencies that achieved competency completed a significantly greater proportion of activities along the implementation process from feasibility (Stage 2) to ongoing service delivery and monitoring (Stage 7), suggesting a stronger implementation process from start to finish. Supplemental File 3 shows similar descriptive information by assigned cohort.

Proportion of Events Completed and Duration by Competency Achievement

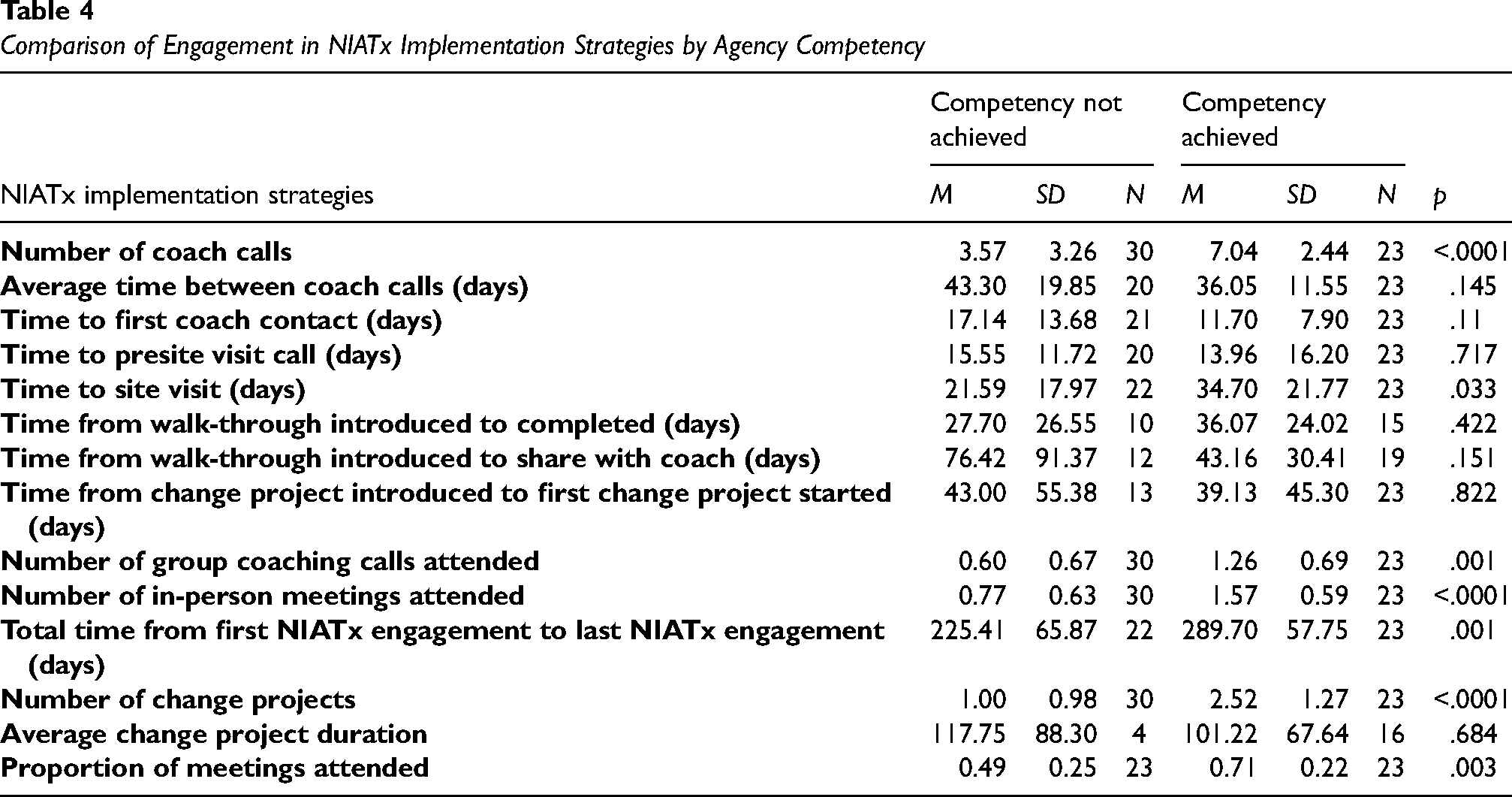

NIATx-SIC Stage 7 activities focused on the use of NIATx implementation strategies to drive change in each agency. Table 4 identifies specific NIATx implementation strategies that were completed more thoroughly by agencies achieving competency versus those that discontinued. Agencies that achieved competency showed greater participation in activities related to coaching calls, site visits, and change projects. Specifically, agencies that achieved competency participated in three more coach calls and were more actively engaged in the group coaching calls and in-person meetings than those that did not achieve competency. In addition, these agencies implemented two more change projects than noncompetent agencies (

Comparison of Engagement in NIATx Implementation Strategies by Agency Competency

Discussion

Dissemination and implementation (D&I) research involves the introduction of specific implementation strategies related to an EBP or organizational support offered through external facilitation (Powell et al., 2015; Waltz et al., 2015, 2019). Organizational participation requires a significant investment of staff resources to engage with the different implementation strategies. For example, staff may need to invest significant time to receive training in an EBP (e.g., motivational interviewing) or set aside time for external coaching calls and/or internal change team meetings to design, implement, and evaluate the impact of Plan-Do-Study-Act change cycles. The inability to sustain a particular practice (e.g., motivational interviewing) represents a direct waste of invested resources, has costs associated with missed opportunities, and may affect an organization's ability to sustain future changes (Ford, Osborne, et al., 2018; Ford, Stumbo, et al., 2018; Ford, Zehner, et al., 2018). A similar argument can be made for organizations that do not achieve competency when utilizing a set of implementation strategies. By not achieving competency, the staff within an organization waste the opportunity to institutionalize the knowledge of how to apply specific implementation strategies to future change efforts. As such, the ability to measure and assess organizational competency for implementation strategies represents an important advancement in D&I research.

Our results provide evidence that the SIC can be adapted for use with a specific set of implementation strategies. Specifically, the NIATx-SIC provides a tool to measure agency progression through the Preimplementation, Implementation, and Initiation to Sustainment phases when utilizing the NIATx implementation strategies—designed to improve and sustain access to care across multiple healthcare settings—to introduce change within an organization (Belenko et al., 2017; Gustafson et al., 2013; Hoffman et al., 2008, 2011; McCarty et al., 2007; Pankow et al., 2018; Quanbeck et al., 2011). This was the first adaptation of the SIC to measure change cycles, including the possibility for variable numbers of iterations between agencies, and time spent in each cycle. This application of the NIATx-SIC was able to distinguish between agencies that achieved competency in their application of the NIATx implementation strategies versus those agencies determined not to have achieved competency. This finding is important because these agencies did not differ in terms of organizational attributes except for nonprofit status. Thus, competency is not an attribute of the organization but rather a result of the application of the NIATx implementation strategies to improve agency co-occurring capacity. The differences in the proportion of activities completed during the implementation phase and the duration (i.e., time spent) in engaging in Stage 7 activities such as individual coaching calls, group coaching calls, in-person meetings, or implementation of change projects provide evidence that implementation strategy competency is a meaningful construct in D&I research.

Limitations

The study had several limitations. Information collection efforts for the implementation phase relied on self-reporting from the coach, in particular recall of when certain events occurred, and the availability of information (e.g., change project forms or walkthrough reports from the agency). As such, the dates associated with these activities could not be recorded for the SIC. These data gaps may have impacted proportion and duration scores. In addition, both the delay in defining competency in the NIATx-SIC and missing agency information to support agency self-report (e.g., change project forms) impacted the independent assessment of fidelity to the NIATx implementation strategies. As a result, the final determination of agency competency may have differed. Finally, agency attributes such as climate, leadership support, or staff commitment to the change process were not assessed as a part of this project. It is possible that one or more of these attributes may be associated with whether an agency achieved competency as measured by the NIATx-SIC instrument.

Conclusions

The development of instruments to assess organizational competency in the adoption and utilization of implementation strategies to drive change represents a significant advancement in dissemination and implementation research. Organizations that can apply implementation strategies with competence move one step closer to institutionalizing this knowledge and to fulfilling the sentiments of the adage that if you “teach a man to fish, you feed him for a lifetime.” Within D&I research, you are teaching an organization how to successfully implement and, hopefully, sustain change so that it has the knowledge and skills to navigate current and future challenges.

Supplemental Material

sj-docx-1-irp-10.1177_26334895231200379 - Supplemental material for Adapting the stages of implementation completion to an evidence-based implementation strategy: The development of the NIATx stages of implementation completion

Supplemental material, sj-docx-1-irp-10.1177_26334895231200379 for Adapting the stages of implementation completion to an evidence-based implementation strategy: The development of the NIATx stages of implementation completion by James H. Ford, Mark E. Zehner, Holle Schaper and Lisa Saldana in Implementation Research and Practice

Supplemental Material

sj-docx-2-irp-10.1177_26334895231200379 - Supplemental material for Adapting the stages of implementation completion to an evidence-based implementation strategy: The development of the NIATx stages of implementation completion

Supplemental material, sj-docx-2-irp-10.1177_26334895231200379 for Adapting the stages of implementation completion to an evidence-based implementation strategy: The development of the NIATx stages of implementation completion by James H. Ford, Mark E. Zehner, Holle Schaper and Lisa Saldana in Implementation Research and Practice

Footnotes

Authors’ Note

Holle Schaper and Lisa Saldana's current affiliation is Chestnut Health Systems, Lighthouse Institute – Oregon Group, USA.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This manuscript was supported by funding from NIDA-funded research (R01DA037222 and R01DA052975-01A1, Drs. Ford and McGovern, principal investigators) and by grant R01DA044745 from NIDA (Dr. Saldana, principal investigator).

Supplemental Material

Supplemental material for this article is available online.

References
