Abstract

Background

Adolescents increasingly access mental health services in schools. School mental health professionals (SPs; school counselors, social workers, etc.) can offer evidence-based mental health practices (EBPs) in schools, which may address access gaps and improve clinical outcomes. Although some studies have assessed factors associated with EBP adoption in schools, additional research focusing on SP- and school-level factors is warranted to support EBP implementation as SPs’ mental health delivery grows.

Methods

Baseline data were collected from SPs at Michigan high schools participating in a statewide trial to implement SP-delivered cognitive behavioral therapy (CBT) to students. Models examined factors associated with attitudes about EBPs, implementation climate, and implementation leadership, and their associations with CBT knowledge, training attendance, and pre-training CBT delivery.

Results

One hundred ninety-eight SPs at 107 schools (87%) completed a baseline survey. The mean Evidence-Based Practice Attitude Scale (EBPAS) total score was 2.9, and school-aggregated mean scores of the Implementation Climate Scale (ICS) and Implementation Leadership Scale (ILS) were 1.83 and 1.77, respectively, all on a scale ranging from 0 (low) to 4 (high). ICS and ILS scores were lower than typically reported in clinical settings, while EBPAS scores were higher. School characteristics were not significantly associated with EBPAS, ICS, or ILS scores, but scores did differ by SP role. Higher EBPAS scores were associated with more CBT knowledge (average marginal effect for 1 SD change [AME] = 0.15 points) and a higher probability of training completion (AME = 8 percentage points). Higher ICS scores were associated with a higher probability of pre-training CBT delivery (AME = 6 percentage points), and higher ILS scores were associated with higher probability of training completion (AME = 10 percentage points).

Conclusions

Our findings suggest that SPs’ attitudes toward EBPs and organizational support were positively associated with early signs of implementation success. As schools increasingly fill the adolescent mental healthcare access gap, efforts to strengthen both provider attitudes toward EBP and strategic organizational factors supporting EBP delivery will be key to encouraging EBP uptake in schools.

Plain Language Summary

Schools are an important setting in which adolescents receive mental healthcare. We need to better understand how to implement evidence-based practices (EBPs) in this setting to improve student mental health. This study examined the attitudes and perceptions of school professionals (SPs) as key contributors to the implementation of a particular EBP, the delivery of cognitive behavioral therapy (CBT) in schools. The study found that implementation climate and leadership scores in participating schools were lower than scores typically reported in clinical settings, while scores for SP attitudes about EBP adoption were higher than typical scores in clinical settings. Results further suggest that SPs with more positive attitudes toward EBPs are more knowledgeable of CBT and more likely to complete a 1-day CBT training. We also found that higher implementation climate scores were associated with SPs reporting pre-training CBT delivery (although this association was not statistically significant), and more implementation leadership was associated with SPs completing the CBT training. These findings suggest that SP attitudes toward EBPs and organizational support in schools are positively associated with early signs of implementation success. Early, low-intensity efforts to (1) improve SP attitudes about mental health EBPs, and (2) increase schools’ support for implementation may scaffold more intensive implementation efforts in schools down the road.

Introduction

As adolescent mental illness rates rise, schools are increasingly tasked with addressing gaps in access to effective mental healthcare (Kern et al., 2017; Stempel et al., 2019; Twenge et al., 2019). Only 40%–45% of youth with mental illness have accessed mental healthcare (Costello et al., 2014; SAMHSA, 2021); those who do access mental health support most commonly receive it through school-based mental health services (Ali et al., 2019; Duong et al., 2020). However, the implementation of mental health evidence-based practices (EBPs) is limited in school settings (Langley et al., 2010; Mychailyszyn et al., 2011; O’Reilly et al., 2018). This study explored provider- and organizational-level conditions that may help to promote EBP implementation in schools.

School-Based Mental Health Services for Adolescents

Schools are well suited to deliver effective mental healthcare to students. Schools circumvent many barriers youth experience when seeking mental healthcare, including stigma, confidentiality concerns, cost/lack of insurance coverage, transportation challenges, and limited provider availability (Radez et al., 2021; Reardon et al., 2017). School-based mental health services are provided locally using currently employed school professionals (SPs; e.g., school counselors, school social workers, school psychologists, and school nurses); this is advantageous given the limited availability of providers for youth mental healthcare, especially in rural areas (Findling & Stepanova, 2018; Larson et al., 2016). Moreover, SPs have pre-established relationships with students, which can encourage service utilization for students seeking help (Herzig-Anderson et al., 2012; Masia Warner et al., 2016).

Studies, however, have pointed to various reasons limiting mental health EBP implementation in schools. Identified barriers include a lack of accessible and affordable training in mental health EBPs for SPs, along with SPs’ competing responsibilities and administrative demands (Bruns et al., 2016; Corteselli et al., 2020; Hoover & Bostic, 2021; Kern et al., 2017; Ringle et al., 2015). Studies have suggested various strategies to promote implementation, including garnering school administrator support (Forman & Barakat, 2011; Langley et al., 2010), providing SP support via training and consultation for EBP delivery (Beidas et al., 2012; Eiraldi et al., 2020; Koschmann et al., 2019; Nadeem et al., 2013), allocating protected time for SPs (Zhang et al., 2022), and adapting EBPs to fit the school environment (Lyon & Bruns, 2019; Mychailyszyn et al., 2011). For a more rigorous understanding, increased attention to implementation science can help highlight the factors that are most suited to encouraging SPs’ delivery of EBPs in schools (Kilbourne et al., 2018; Lyon & Bruns, 2019; Owens et al., 2014).

Inner-Context Factors to Facilitate Adoption of Evidence-Based Practices

Of the various conditions for EBP adoption studied in implementation science, one topic that requires further study in school settings is “inner-context” implementation factors (Locke, Lawson, et al., 2019; Lyon & Bruns, 2019), as described in various implementation frameworks, including the Exploration, Preparation, Implementation, Sustainment (EPIS) framework (Aarons et al., 2011). Inner-context factors of organizations implementing EBPs, including provider attitudes and organizational strategic context, have been shown to be influential for EBP implementation (Aarons et al., 2009; Moullin et al., 2019; Sijercic et al., 2020). While these factors have been studied frequently in clinical healthcare settings, more studies are needed to understand their mechanisms when scaled out to non-specialty settings like schools (Aarons, Sklar, et al., 2017; Wolk et al., 2022). Moreover, focusing on the inner context is particularly salient in schools as inner-context factors are relatively malleable and modifiable, may be less constrained than outer-context factors like district-level leadership and policies, and may have more direct impacts on teachers and school staff (Forman & Barakat, 2011; Melgarejo et al., 2020). Recent studies have increasingly examined these inner-context factors in schools, including (as discussed below) developing and validating measurements for schools based on those originally developed in clinical settings.

Provider Attitudes About Evidence-Based Practices

Provider attitudes about EBPs (e.g., willingness to adopt EBPs and openness to change) can be predictive of their decision to use EBPs and can be an important mechanism for behavioral change (Aarons, 2004; Lyon et al., 2019; Sanetti et al., 2014). Studies have shown that school counselors’ involvement with pre-implementation strategies that aim to improve their EBP attitudes and perceptions, grounded in the Theory of Planned Behavior (Ajzen, 1991) and the Health Action Process Approach (Schwarzer, 2008), could lead to increased engagement in the early stages of the implementation process (Larson et al., 2021; Locke, Lawson, et al., 2019; Lyon et al., 2019).

Evidence linking provider attitudes to EBP uptake has been strong in healthcare settings (Aarons et al., 2009; Becker-Haimes et al., 2017; Beidas et al., 2014; Farahnak et al., 2020), but mixed in school settings (Locke, Lawson, et al., 2019; Odom et al., 2022; Suhrheinrich et al., 2020). In the context of special education teachers and staff serving elementary-aged students with autism spectrum disorder, for example, one study found that positive attitudes toward EBPs were significantly associated with the intensity of use for only one of three EBPs examined (Locke, Lawson, et al., 2019). Another study found that positive attitudes were associated with EBP training satisfaction, but found no significant relationships with intervention fidelity or daily use (Suhrheinrich et al., 2020). These studies often used the Evidence-Based Practice Attitude Scale (EBPAS) to measure provider attitudes, which was developed for mental health providers (Aarons, 2004; Aarons et al., 2012) and psychometrically validated with providers in other domains, including school-based behavioral health consultants (Cook et al., 2018).

In terms of factors associated with EBPAS, studies with mental healthcare providers have found that more positive attitudes are associated with provider characteristics (e.g., higher educational attainment, fewer years of experience, and training in social work compared to psychology), and organizational characteristics, including private (vs. public) organizations/programs and constructive (vs. defensive) culture (Aarons, 2004; Aarons et al., 2009, 2010; Aarons & Sawitzky, 2006). In school settings, less-experienced school counselors and those using cognitive/behavioral theoretical orientation showed more positive attitudes (Mullen et al., 2018).

Strategic Organizational Factors to Implement Evidence-Based Practices

Strategic organizational context, including implementation climate and implementation leadership, is another inner-context factor thought to be important for EBP uptake (Aarons et al., 2014, 2015; Aarons, Ehrhart, et al., 2017; Ehrhart et al., 2014). Implementation climate captures the shared perception of EBP adoption within the organization (Ehrhart et al., 2014; Jacobs et al., 2014; Weiner et al., 2011), which is often measured with the Implementation Climate Scale (ICS). The ICS was originally developed and validated in community mental health contexts (Ehrhart et al., 2014); initial validation work in education sectors suggests overall applicability, but one of its constructs, related to financial incentives and rewards for staff using EBPs, may not translate to schools (Locke, Lee, et al., 2019; Lyon et al., 2018). More recently, a school version of the ICS, validated with teachers implementing a universal prevention program, suggested a seven-dimension structure (Thayer et al., 2022). Implementation leadership focuses on strategic behaviors of first-level leaders (e.g., direct supervisors) to support and facilitate EBP implementation within their organizations (Aarons et al., 2014; Gifford et al., 2017; Guerrero et al., 2020). It is often measured using the Implementation Leadership Scale (ILS), which was originally developed and validated in the clinical setting (Aarons et al., 2014) and was shown to be applicable in schools with minor changes (Lyon et al., 2018). More recently, a seven-factor school version of the ILS was validated with elementary school teachers (Lyon et al., 2022).

Little is known about predictors of better or worse implementation climate or leadership in schools: some studies suggest that school demographics (such as racial composition, percentage of special education students, and school size) have a negligible effect on schools’ ability to support EBP implementation (Lyon et al., 2022; Thayer et al., 2022), while others suggest potential differences in the inner context due to school location or size (Moore et al., 2021; Suhrheinrich et al., 2021). In terms of linkages between strategic organizational factors and EBP implementation, studies have found mixed support in clinical settings (Meza et al., 2021; Powell et al., 2021) as well as in schools. Studies found no significant effect of these organizational factors on teachers’ decision to use EBPs for autism spectrum disorder (Locke, Lawson, et al., 2019; Melgarejo et al., 2020), while higher ICS was associated with teachers’ perceived feasibility of implementing a universal EBP for social, emotional, and behavioral skill building (Corbin et al., 2022). Given the complexity of EBP implementation in schools, as well as the broad range of SPs supporting students with mental health concerns, more studies are needed to explore how SP and school characteristics are associated with inner-context factors, and if (and how) these factors may help stimulate EBP implementation in schools (Locke, Lawson, et al., 2019; Lyon et al., 2018; Wolk et al., 2022).

Research Questions

This paper examined inner-context factors associated with SPs’ early signs of EBP implementation in schools in the “Preparation” phase of EPIS. It used the data collected during a 3-month, pre-randomization “run-in” phase of a type III hybrid implementation-effectiveness trial testing implementation strategies to support implementation of school-based cognitive behavioral therapy (CBT) in Michigan. Specifically, this paper aimed to answer the following research questions:

(1) How did SPs rate their attitudes about EBP adoption, as well as their schools’ implementation climate and implementation leadership? (2) What school- and SP-level characteristics were associated with higher versus lower provider attitudes about EBPs and/or implementation climate/leadership? (3) Was higher SP support for EBPs or school implementation climate/leadership associated with early signs of EBP implementation, such as higher CBT knowledge, increased completion of training, or more pre-training CBT delivery?

This study furthers understanding of inner-context factors for EBP implementation in schools to improve youth mental health access.

Methods

The Evidence-Based Practice and Implementers

CBT is one mental health EBP with well-established evidence showing positive effects on youth with anxiety and depression (Haugland et al., 2020; Higa-McMillan et al., 2016; Spirito et al., 2011), across racial groups (Ginsburg & Drake, 2002; Rosselló & Bernal, 1999) and through modular or components-based programs (Becker et al., 2012; Chiu et al., 2013). Studies have shown that CBT can be effectively delivered by SPs in school settings (Ginsburg et al., 2020; Koschmann et al., 2019; Masia Warner et al., 2016; Sanchez et al., 2018). Core components of CBT include psychoeducation, relaxation, mindfulness, cognitive restructuring, behavioral activation, and exposure (Chorpita & Daleiden, 2009; Chorpita et al., 2005).

This study partnered with the Transforming Research into Action to Improve the Lives of Students program (TRAILS; https://trailstowellness.org). TRAILS aims to increase equitable student access to effective mental healthcare. It does this by providing training, resources, and implementation support to school staff who then offer mental health EBPs in schools. In the present study, TRAILS provided CBT manuals adapted for SP needs, CBT trainings, and all implementation support to schools.

Data Source, Participants, and Procedures

This paper used data collected during the 3-month Preparation phase of the Adaptive School-based Implementation of CBT (ASIC) trial (Kilbourne et al., 2018). Figure 1 shows the data collection timeline for this period. The trial was approved by the University of Michigan Institutional Review Board and full study details and primary aim results are published elsewhere (Kilbourne et al., 2018; Smith et al., 2022). Further details on study recruitment and participant eligibility criteria are provided in Appendix A. Once consented, SPs began receiving support for implementing CBT via an implementation strategy informed by the Replicating Effective Programs (REP) framework (Kegeles et al., 2000; Kilbourne et al., 2007). This support, detailed in other publications (Kilbourne et al., 2018; Smith et al., 2022), included access to TRAILS web-based CBT resources (e.g., CBT manuals, worksheets, and other student resources), on-demand technical support from a clinical psychologist, and a 1-day didactic CBT training.

Data Collection Timeline During Preparation Phase

SPs were asked to complete an SP baseline survey for a $10 incentive any time prior to training; the survey included questions about demographics, attitudes toward EBP adoption, implementation climate and leadership, and CBT knowledge. SPs were also asked to begin using the study data collection “dashboard” to report (or “practice reporting”) weekly CBT delivery (Smith et al., 2022). SPs received a $3 incentive per weekly report and could provide up to 10 reports prior to the first training if registered at the earliest date.

The current paper used data from SPs who completed the SP baseline survey. Of the 227 SPs at 115 schools who initially consented, N = 198 SPs (87%) from k = 107 schools completed the survey (Appendix Figure A.1). Twenty-six schools (24%) had three SPs complete the survey, 39 (36%) had two SPs and 42 (39%) had one SP. Table 1 summarizes the basic characteristics of SPs and their schools; the majority of SPs had no graduate training or prior professional development in CBT.

Participant Characteristics

Note. SD = standard deviation; SP = school professional; CBT = cognitive behavioral therapy.

Other reported roles include school psychologist (n = 7), behavioral intervention specialist (n = 4), special education teacher (n = 4), general education teacher (n = 4), school success worker (n = 4), administrator (n = 5), school nurse (n = 1), wellness therapist/coordinator (n = 1), and other (n = 5).

Measures

Individual-Level Factors

Attitudes About Evidence-Based Practices

The 36-item EBPAS (Rye et al., 2017) was used to measure SP attitudes about EBPs. It captures the original four attitude domains (Requirement, Appeal, Openness, and Divergence; Aarons, 2004) and eight additional domains (Limitations, Fit, Monitoring, Balance, Burden, Job security, Organizational support, and Feedback). All items were rated on a 5-point Likert scale ranging from 0 (not at all) to 4 (very great extent); the scale showed adequate to excellent internal consistency (Cronbach's α for subscales and total: .60–.91; Rye et al., 2017).

SP Characteristics

SPs provided information on their current professional position, years of tenure in current position, prior CBT training, as well as gender and race.

Organizational-Level Factors

Implementation Climate

The 18-item ICS was used to measure implementation climate (Ehrhart et al., 2014). The original validated ICS includes six subscales (Focus, Educational Support, Recognition, Rewards, Selection for EBP, and Selection for Openness); items are rated on a 5-point Likert scale ranging from 0 (not at all) to 4 (very great extent). In school settings, the exclusion of ICS-Rewards was recommended due to the rarity of school-level financial incentives and promotions for EBP use; the five remaining subscales showed good to excellent internal consistency (Cronbach's α: .88–.97; Lyon et al., 2018). Accordingly, we computed the total ICS score excluding ICS-Rewards, and SP scores were aggregated to the school level to reflect that implementation climate is an organizational-level construct.
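The scoring described above can be illustrated with a minimal Python sketch. It is not the study's code (the analyses were run in Stata), and it makes several assumptions: the subscale keys, the example responses, and the function names are hypothetical, and the total is taken as the mean of the five retained subscale means. With 18 items across six subscales, each subscale has three items if items are distributed evenly, in which case the mean of subscale means equals the mean of the 15 retained items.

```python
from collections import defaultdict
from statistics import mean

# Subscales retained for the school-setting total (ICS-Rewards is excluded).
# The key names are hypothetical labels chosen for this sketch.
ICS_SUBSCALES = (
    "focus",
    "educational_support",
    "recognition",
    "selection_for_ebp",
    "selection_for_openness",
)


def ics_total(subscale_means):
    """Average the five retained subscale means for one SP (0-4 scale)."""
    return mean(subscale_means[name] for name in ICS_SUBSCALES)


def school_aggregated_ics(responses):
    """Average SP-level ICS totals within each school.

    `responses` is a list of (school_id, subscale_means) pairs.
    """
    by_school = defaultdict(list)
    for school_id, subscale_means in responses:
        by_school[school_id].append(ics_total(subscale_means))
    return {school: mean(totals) for school, totals in by_school.items()}


# Hypothetical responses: two SPs at school "A", one SP at school "B".
example = [
    ("A", {"focus": 3.0, "educational_support": 2.0, "recognition": 1.0,
           "rewards": 0.0, "selection_for_ebp": 2.0,
           "selection_for_openness": 2.0}),
    ("A", {"focus": 2.0, "educational_support": 1.0, "recognition": 1.0,
           "rewards": 0.0, "selection_for_ebp": 1.0,
           "selection_for_openness": 0.0}),
    ("B", {"focus": 2.0, "educational_support": 2.0, "recognition": 2.0,
           "rewards": 4.0, "selection_for_ebp": 2.0,
           "selection_for_openness": 2.0}),
]

scores = school_aggregated_ics(example)
print(scores)
```

Note that the high Rewards rating for the SP at school "B" does not affect that school's total, because the subscale is dropped before averaging.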

Implementation Leadership

The 12-item ILS was used for implementation leadership (Aarons et al., 2014). It includes four subscales: Proactive, Knowledgeable, Supportive, and Perseverant leadership; items are rated on the same 5-point Likert scale as the ICS. The ILS was also validated in school settings, with excellent internal consistency (Cronbach's α: .94–.97; Lyon et al., 2018). SP scores were again aggregated to school level for implementation leadership.

School Characteristics

School characteristics, including school size, location (non-rural vs. rural), and free/reduced lunch rate, were derived from a state data source (e.g., MI School Data; https://www.mischooldata.org/collected).

Outcomes

To examine how the inner-context factors work as determinants of EBP implementation, we chose three variables capturing early signs of successful EBP implementation collected during the Preparation phase. While these variables are undoubtedly proximal, and success in even all three is not necessarily a harbinger of successful downstream implementation, they could be early indicators of mechanisms likely to spur successful implementation: EBP knowledge (objective CBT knowledge), engagement in the implementation process (completion of TRAILS' 1-day training), and any early use of the practice, or the intention or initial decision to employ an EBP (Proctor et al., 2011; pre-training CBT delivery). They are also likely correlated with other commonly documented early implementation outcomes, like acceptability, appropriateness, and/or feasibility (Proctor et al., 2011; Wolk et al., 2022).

Objective CBT Knowledge

The baseline survey included a battery of CBT objective knowledge questions developed by clinical experts on the TRAILS team (Rodriguez-Quintana et al., 2021). One open-ended item asked SPs to identify components of the CBT model, and 22 multiple-choice questions were asked to identify appropriate CBT components or CBT-based treatments based on six vignettes about youth experiencing depression and/or anxiety. We assessed the number of correct answers out of 23.

Completion of Training

SPs were asked to complete a daylong didactic CBT training to be deemed eligible for inclusion in the trial. Training was offered by TRAILS at no cost at various locations across Michigan. SPs were coded as completing the training or not.

Pre-Training CBT Delivery

Weekly CBT reports were used to construct a binary measure indicating whether SPs reported any versus no CBT delivery during the Preparation (pre-training) phase.

Data Analysis

First, SPs’ ICS and ILS scores were averaged within a school to compute school-aggregated scores, after assessing SP within-group agreement using an interrater agreement index (Brown & Hauenstein, 2005). Univariate analyses examined the distribution of SP-reported EBPAS and school-aggregated ICS and ILS scores; the correlations between these measures were assessed using Pearson correlation coefficients. Second, ordinary least squares regressions with clustered robust standard errors were conducted to explore whether each of these inner-context factors (EBPAS, ICS, and ILS) was associated with school- and SP-level characteristics: school size (>500 vs. ≤500 students), school location (non-rural vs. rural), free/reduced lunch rate (≥50% vs. <50% students eligible), SP role (school social worker, school counselor, or other), race (White vs. non-White), sex (female vs. male), tenure in years, and prior CBT training (formal, informal, or none). Third, linear and logistic regression models were used to examine whether and how each of EBPAS and school-aggregated ICS and ILS was associated with SPs’ early signs of implementation: CBT knowledge (range: 0–23), training completion (yes vs. no), and pre-training CBT delivery (yes vs. no). These models used robust standard errors to account for clustering within schools and included school- and SP-level characteristics discussed above as control variables. Average marginal effects (AMEs) were computed to show the average change in the outcomes (i.e., the predicted values for the continuous outcome and predicted probability for the binary outcome) for a given change in the independent variable (e.g., EBPAS, ICS, and ILS). Stata 16 was used for all analyses.
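To make the AME definition concrete, the following Python sketch computes a derivative-based average marginal effect for one continuous predictor in a logistic model. It is an illustration only: the study used Stata 16, and the coefficients, data rows, and function names below are hypothetical. It also assumes that the "1 SD change" is obtained by standardizing the predictor before fitting, which the text does not spell out.

```python
import math


def predicted_probability(intercept, coefs, row):
    """Logistic predicted probability for one observation."""
    linear = intercept + sum(b * x for b, x in zip(coefs, row))
    return 1.0 / (1.0 + math.exp(-linear))


def average_marginal_effect(intercept, coefs, rows, index):
    """AME of the continuous predictor at position `index`.

    For each observation, the derivative of the predicted probability
    with respect to that predictor is b * p * (1 - p). The AME is the
    mean of these derivatives across the sample.
    """
    effects = []
    for row in rows:
        p = predicted_probability(intercept, coefs, row)
        effects.append(coefs[index] * p * (1.0 - p))
    return sum(effects) / len(effects)


# Hypothetical fitted model: first predictor is a standardized
# inner-context score, second is a 0/1 control variable.
intercept = 1.2
coefs = [0.6, -0.3]
rows = [[-1.0, 0.0], [0.0, 1.0], [0.5, 0.0], [1.5, 1.0]]

ame = average_marginal_effect(intercept, coefs, rows, index=0)
print(f"AME per 1 SD (probability scale): {ame:.3f}")
```

Because the predictor is standardized in this sketch, the result reads directly as the average change in predicted probability per one-SD increase; multiplying by 100 gives percentage points.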

Results

Distribution of Inner-Context Factors

SP-Reported Attitudes About EBPs

The average SP-level EBPAS total score was 2.90 (SD = 0.39), on a scale ranging from 0 (not favorable) to 4 (highly favorable attitudes about EBP adoption; Figure 2). Cronbach's α for EBPAS total and subscales ranged from .52 to .97, with two subscales below .70 (Balance: .52, Divergence: .66; see Appendix Table B.1 for details of subscales). EBPAS subscales for Limitations (reverse-coded), Fit, and Appeal had the highest scores, at 3.58, 3.45, and 3.31, respectively; the lowest subscale score was for Job Security (1.48).

Distribution of EBPAS, ICS, and ILS

School-Aggregated Implementation Climate and Implementation Leadership Scores

For ICS and ILS total scores, school-aggregated scores were computed by averaging across SPs. The within-group agreement (awg(J)) was >.90 for subscale and total scores (with 1–3 raters). For the schools with three SPs (k = 26 schools), awg(J) was >.65 for ICS and >.70 for ILS. Mean scores of school-aggregated ICS and ILS were 1.83 (SD = 0.60) and 1.77 (SD = 0.76), respectively, on a scale ranging from 0 (not supportive) to 4 (very supportive); internal consistency was excellent (Table 2). ICS and ILS scores were also highly correlated (r = .77), and showed weak positive correlations with EBPAS (Table 2). In terms of ICS subscales, Focus on EBP (2.41) and Selection for Openness (2.38) had the highest scores. As expected, ICS-Rewards had the lowest score by a wide margin, at 0.23; this subscale was excluded when computing the ICS total. Within the ILS, the Supportive Leadership subscale had the highest score (2.36) and Proactive Leadership the lowest score (1.30; Appendix Table B.1).
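For readers unfamiliar with the agreement index, the sketch below shows one way to compute it in Python, following the awg formulation in Brown and Hauenstein (2005): agreement on a single item is one minus twice the observed sample variance divided by the maximum possible variance given the observed mean, and the multi-item index is the average across items. The function names are hypothetical, and returning `None` when the index is undefined (fewer than two raters, or a mean at a scale endpoint) is a choice made for this sketch rather than something stated in the text.

```python
from statistics import mean, variance


def awg1(ratings, low=0, high=4):
    """Single-item agreement for one group of raters.

    Returns None when the index is undefined: fewer than two raters,
    or a group mean at a scale endpoint (zero maximum variance).
    """
    k = len(ratings)
    if k < 2:
        return None
    m = mean(ratings)
    # Maximum possible sample variance given the observed mean.
    max_var = ((high + low) * m - m ** 2 - high * low) * k / (k - 1)
    if max_var <= 0:
        return None
    return 1.0 - 2.0 * variance(ratings) / max_var


def awg_j(item_ratings, low=0, high=4):
    """Multi-item agreement: the mean of defined single-item values."""
    values = [awg1(ratings, low, high) for ratings in item_ratings]
    defined = [v for v in values if v is not None]
    return mean(defined) if defined else None


# Hypothetical school with three SPs rating two items on the 0-4 scale.
print(awg_j([[2, 2, 3], [1, 2, 2]]))
```

Values run from −1 (maximum disagreement, for example two raters choosing 0 and 4) to 1 (identical ratings).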

EBPAS, ICS, and ILS Total Score Bivariate Correlations and Summary Statistics

Note. EBPAS = Evidence-based Practice Attitudes Scale; ICS = school-aggregated Implementation Climate Scale; ILS = school-aggregated Implementation Leadership Scale; M = Mean; SD = standard deviation.

***p < .001. *p < .05.

School- and SP-Level Characteristics and Inner-Context Factors

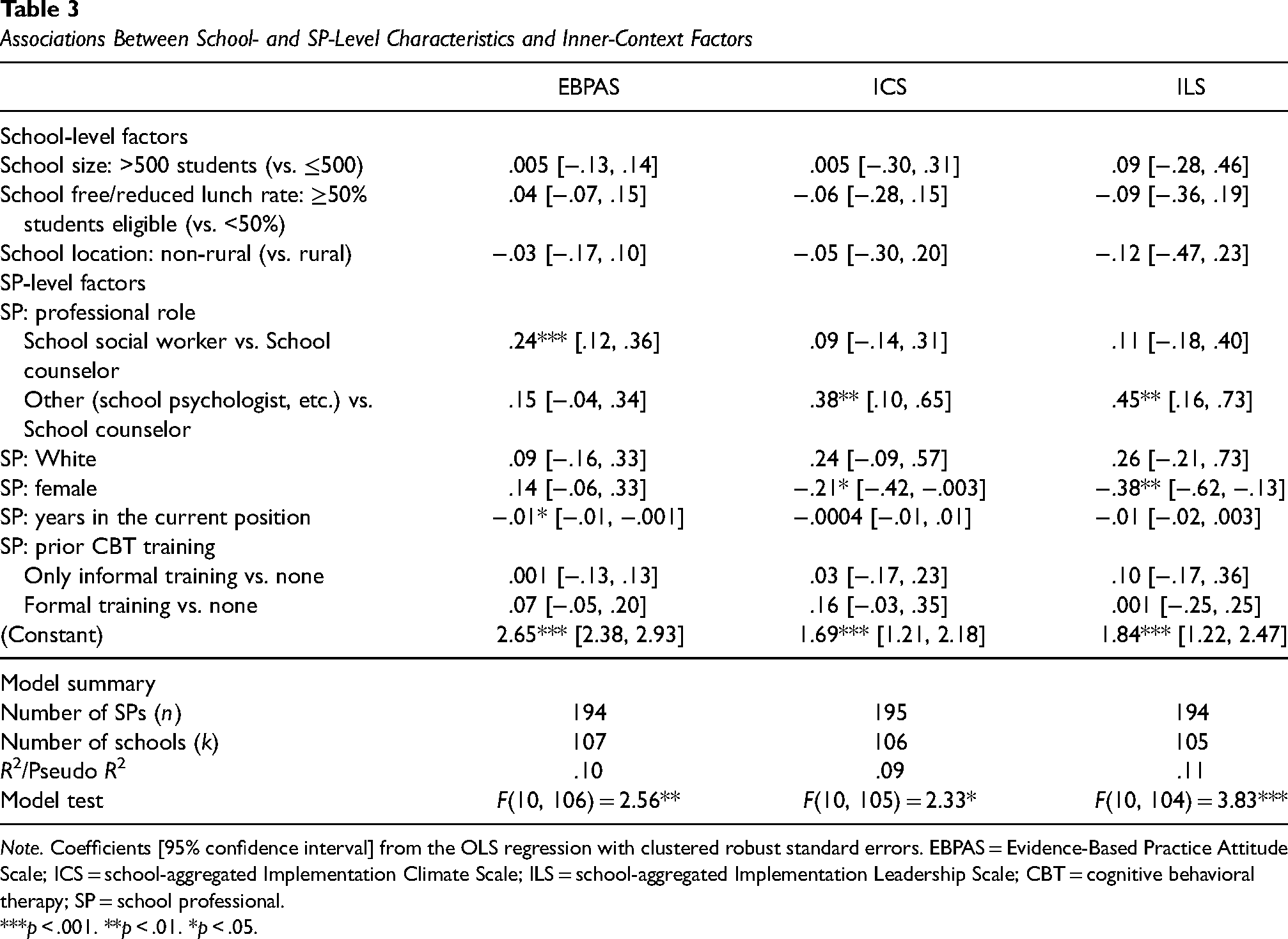

The second research question explored if school- and SP-level characteristics were associated with each of inner-context factors. Results are shown in Table 3. Little support was found for associations between school characteristics (size, free/reduced lunch rate, or location) and any SP-reported EBPAS or school-aggregated ICS or ILS scores.

Associations Between School- and SP-Level Characteristics and Inner-Context Factors

Note. Coefficients [95% confidence interval] from the OLS regression with clustered robust standard errors. EBPAS = Evidence-Based Practice Attitude Scale; ICS = school-aggregated Implementation Climate Scale; ILS = school-aggregated Implementation Leadership Scale; CBT = cognitive behavioral therapy; SP = school professional.

***p < .001. **p < .01. *p < .05.

EBPAS total scores, however, varied significantly by SP role and tenure. Specifically, school social workers reported higher EBPAS scores compared to school counselors, by 0.24 points on average (95% CI = [0.12, 0.36]; p < .001), all else equal. Newer SPs had higher EBPAS scores: scores decreased by 0.01 per additional year of experience (95% CI = [−0.01, −0.001]; p = .034), all else equal.

School-aggregated ICS and ILS total scores were associated with SP gender and professional role. ICS scores were 0.21 points lower for female SPs than for male SPs (95% CI = [−0.42, −0.003]; p = .047) and 0.38 points higher among SPs who identified as working in “other” positions (e.g., school psychologists) than among school counselors (95% CI = [0.10, 0.65]; p = .008), all else equal. Similar relationships were found for ILS, with scores 0.38 points lower for female SPs than for male SPs (95% CI = [−0.62, −0.13]; p = .003) and 0.45 points higher among SPs in “other” positions than among school counselors (95% CI = [0.16, 0.73]; p = .003), all else equal.

Inner-Context Factors and Early Signs of EBP Implementation

Finally, we examined whether any inner-context factors were associated with outcomes reflecting early indication of successful implementation: CBT knowledge, training completion, and pre-training CBT delivery. These results are summarized below for each outcome (see Appendix Tables C.1–C.3 for full model results). Figures 3–5 present the average marginal effects of each inner-context factor on each outcome of interest.

Average Marginal Effects of EBPAS on Early Implementation Outcomes

Predicted Probability of Early Implementation Indicators Over the ICS Total Scores

Predicted Probability of Early Implementation Indicators Over the ILS Total Scores

Outcomes

SPs correctly answered a mean of 12 (of 23; 52%) CBT knowledge questions (SD = 4.21; Mdn = 12). For analyses, we standardized this variable to have a mean of zero and unit variance to improve interpretability. Of N = 198 SPs, 85% (n = 168) completed TRAILS' 1-day CBT training. Thirty-three percent (n = 65) reported delivering at least one individual or group CBT session during the Preparation phase; remaining SPs either reported no CBT delivery (60%; n = 120) or did not report (7%; n = 13) during this phase.

Individual Attitudes About EBPs and Outcomes

Higher scores on EBPAS were associated with more correct CBT knowledge answers and higher probability of completing training; they were not associated with pre-training CBT delivery. On average, a one-SD increase in (centered) EBPAS scores (approximately 0.39 points) was associated with a 0.15 SD increase in CBT knowledge scores (about 0.6 questions) [95% CI: 0.003, 0.29] and an 8 percentage point increase in the probability of completing training [95% CI: .03, .14] (Figure 3).
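The conversion from SD units to raw questions follows from how the knowledge score was standardized. The short Python sketch below shows both steps; the function names are hypothetical, and the use of the sample standard deviation is an assumption, since the text reports only SD = 4.21.

```python
from statistics import mean, stdev


def standardize(values):
    """Rescale values to mean zero and unit (sample) variance."""
    m = mean(values)
    s = stdev(values)
    return [(v - m) / s for v in values]


def sd_units_to_raw(effect_in_sd, outcome_sd):
    """Convert an effect on a standardized outcome to raw units."""
    return effect_in_sd * outcome_sd


# Reported values: an effect of 0.15 SD, knowledge score SD of 4.21.
print(round(sd_units_to_raw(0.15, 4.21), 2))  # 0.63 questions
```

This matches the "about 0.6 questions" reported for a one-SD difference in EBPAS scores.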

Organizational Implementation Climate/Leadership and Outcomes

Higher ILS scores were associated with a higher probability of training completion, and higher ICS scores showed a positive but not statistically significant association with pre-training CBT delivery. On average, a one-SD increase in ICS (approximately 0.60 points) was associated with a 6 percentage point higher probability of reporting pre-training CBT delivery, although the confidence interval included zero [95% CI: −.002, .12] (Figure 4). A one-SD increase in ILS (approximately 0.76 points) was associated, on average, with a 10 percentage point higher probability of training completion [95% CI: .05, .15] (Figure 5).

Discussion

This observational study used pre-randomization data from an implementation trial to explore the inner-context factors identified by frameworks like EPIS and their associations with both school- and SP-level factors and early signs of implementation success for an SP-delivered CBT program in Michigan high schools. We explored: (1) the distribution of inner-context factors for EBP implementation (EBPAS, ICS, and ILS); (2) the relationship between school- and SP-level characteristics and these inner-context factors; and (3) the associations between inner-context factors and early signs of implementation success. SP EBPAS scores were higher, and school-aggregated ICS and ILS total scores lower, than those typically reported in clinical settings. School characteristics were not significantly associated with EBPAS, ICS, or ILS scores, but scores did differ by SP role. With regard to outcome variables, EBPAS scores were positively associated with CBT knowledge and training completion, whereas school-aggregated ILS scores were positively associated with training completion. ICS scores were positively associated with pre-training CBT delivery, but this association was not statistically significant.

Inner-Context Factors for EBP Implementation in Schools

SPs reported higher average scores on EBPAS than those found in traditional clinical settings (Appendix Figure B.1 presents the distribution relative to other clinical domains), consistent with prior findings (Cook et al., 2018; Locke, Lawson, et al., 2019). With the exception of EBPAS-Job Security, the EBPAS subscale scores were generally high, indicating that SPs view EBP adoption positively, see EBPs as useful for their students, and are willing to learn and use them, although they may not see EBP use as contributing to their job security.

It is perhaps not surprising that SPs who chose to enroll in our study reported positive attitudes about adopting EBPs. However, echoing prior findings in education sectors (Appendix Figure B.1) (Locke, Lawson, et al., 2019; Lyon et al., 2018), our SPs rated organizational support for EBP implementation, via the ICS and ILS, lower than in clinical settings. This may reflect challenges in integrating mental health EBPs into schools, as SPs navigate competing priorities (Hoover & Bostic, 2021). Alternatively, it may be because some dimensions of implementation climate and leadership work differently in schools than in clinical settings due to, for example, differences in organizational structures between these settings (Locke, Lee, et al., 2019). Distribution of the subscales provides additional insights: some aspects of organizational support for EBPs may be more prevalent than other constructs in schools (Lyon et al., 2018). Relatively higher scores on ILS-Supportive, compared to ILS-Proactive, may suggest that school administrators and principals provide general support for implementation efforts, but do not necessarily anticipate barriers and solve problems in the implementation process. Relatively lower scores on ICS-Selection for EBPs and ICS-Rewards may reflect that schools rarely have much discretion in selecting/recognizing those who use EBPs or that SPs often are not clearly informed about the hiring process (Locke, Lee, et al., 2019; Lyon et al., 2018).

School- and SP-Level Characteristics and Inner-Context Factors

None of our school characteristics, including size, geography, or free/reduced lunch rate, were significantly associated with inner-context factors (Table 3). They also did not show significant relationships with early signs of EBP implementation (Appendix C). This may be encouraging for implementation scientists and practitioners, as it suggests that, even in the face of challenging, harder-to-change structural factors, implementation efforts focused on inner context can still be impactful. Furthermore, implementation scientists and practitioners should be careful not to make assumptions about schools’ readiness for implementing EBPs based on their external characteristics. Future studies should further explore whether school characteristics moderate how inner-context factors affect implementation.

At the SP level, EBPAS scores did vary across SP roles and tenure. Consistent with prior studies (Aarons et al., 2010), SPs newer to their professional position showed higher EBPAS scores; school social workers reported higher EBPAS scores compared to school counselors and other roles. It is unknown whether and how professional disciplines may affect provider attitudes (Aarons et al., 2010), but our findings suggest that school social workers show more flexibility and willingness to get feedback and try new practices that fit with their students’ needs.

School-aggregated ICS and ILS scores did vary by SP gender (female SPs had lower scores, on average) and role (school counselors reported the lowest scores, on average), all else equal. Interestingly, we also found that school social workers (vs. counselors) had higher CBT knowledge, holding ICS scores constant; female SPs had higher CBT knowledge, holding ILS scores constant. Future studies should explore how SPs’ role or previous trainings and/or experiences may affect their perceptions about organizational implementation support and downstream implementation success.

Inner-Context Factors and Early Signs of EBP Implementation in Schools

The third research question asked if SP support for EBPs or school implementation climate/leadership was associated with early indicators of implementation success, including CBT knowledge, training completion, and reported CBT delivery during the Preparation phase. Our findings partially support that both individual attitudes toward EBPs and organizational support for EBPs are positively associated with these early indicators. Other studies have similarly found mixed results when examining associations between inner-context factors and implementation outcomes (Locke, Lawson, et al., 2019; Odom et al., 2022).

Our results show that only SP attitudes toward EBPs, and not organizational support, had a significant association with CBT knowledge. Relative to other outcomes, however, this may not be surprising, as SPs with an interest in CBT can likely build knowledge on their own, even without organizational support, time, or resources. It may also reflect a reverse-directional pathway: SPs with more CBT knowledge may exhibit more supportive attitudes toward EBPs. This finding is also consistent with prior research in clinical settings showing no significant association between ILS/ICS and CBT knowledge (Powell et al., 2017).

With respect to training completion, all else equal, SPs with more positive attitudes toward EBPs were also more likely to complete training, as were SPs from schools with leadership that was more aligned with EBP implementation. SP attendance at training did impose some burden. Although Continuing Education Units were offered and trainings were held in five locations across the state (and one virtual option), attendance was a full-day commitment that required travel and presumably administrative approval.

We did not find strong evidence to support linkages between inner-context factors and pre-training CBT delivery. ICS/ILS scores were positively associated with pre-training CBT delivery, but average increases were not statistically significant. Nonetheless, these findings may suggest that SPs in schools that were viewed as more supportive of EBP implementation took initiative to use CBT materials earlier on. However, it may also be the case that SPs reported more positive organizational support if they were already delivering some form of CBT. Future analyses will leverage the experimental aspects of this study to further investigate the role that implementation climate and leadership may play in spurring EBP implementation in schools.

Implications for Research and Practice

Overall, these findings suggest that, at least among those who participated in our implementation trial, SPs generally have supportive attitudes about EBP adoption, but lack clear organizational support, by way of leadership and climate, for spending the necessary time and resources to learn and deliver these practices. Again, this may reflect a selection effect of study participation, as perhaps SPs with strong organizational support may not have felt it necessary to enroll in a trial to receive further implementation support. Nonetheless, these findings speak to the gap between SPs and schools in terms of their support for and interest in EBPs, suggesting that there remains work to be done to address implementation climate and leadership barriers to EBP implementation in schools. They also provide further evidence that provider-level EBP support and organizational support are distinct “inner-context” features and that the latter are not epiphenomenal, but they also raise important questions for the field as to how these concepts (co-)develop and interrelate, and how their importance might differ depending on intervention or implementation effort, particularly in school settings. Potential mechanisms and relationships among these inner-context factors need to be considered in future studies; for example, implementation leadership may contribute to developing implementation climate, which in turn influences provider attitudes and their decision to use EBPs (Williams et al., 2020, 2022). Moreover, although implementation leadership and climate are generally accepted as both predictive of successful implementation and modifiable, our findings suggest that identifying their correlates may help to inform pathways for modification and successful downstream implementation.

Limitations

This study had several limitations. First, the data used in these analyses were largely cross-sectional, collected at baseline of a multi-year study; therefore, no causal relationships can be inferred. Future studies using longitudinal data are needed to understand how organizational- and individual-level factors may influence EBP uptake, directly or indirectly. Second, SPs in this study agreed to participate in a trial to implement CBT; as such, they may not reflect the broader population of SPs/schools with respect to attitudes about EBPs or their relationship with early indicators of implementation success. Third, the ICS/ILS scores were school-level average ratings from only the SPs enrolled in the study. In school buildings, teachers, staff, and administrators may have different perceptions about organizational support of EBPs, which need to be further studied to fully understand the role of implementation climate and leadership in schools. Future studies could also use more recent versions of the EBPAS/ICS/ILS measures validated in schools, which were not yet available at the time of data collection (Lyon et al., 2022; Merle, 2021; Thayer et al., 2022). Fourth, our selected outcomes related to early indicators of implementation success are supported by theory and prior literature in implementation science broadly, but limited research has examined the extent to which they are indicative of successful SP implementation in school settings. They also reflect some dimensions of implementation (e.g., acceptability and feasibility) but not others (e.g., fidelity), which several scholars have noted are key to mental health delivery in schools (Locke, Lawson, et al., 2019; Lyon et al., 2018). Future analyses from this study will evaluate fidelity of delivery and its association with inner-context factors.

Conclusion

This paper sought to understand inner-context factors, including attitudes about EBPs, implementation climate, and implementation leadership, associated with SPs’ school mental health EBP delivery. Our results highlight that SPs rated their schools’ ILS and ICS lower than providers in clinical settings, but their own attitudes, measured via the EBPAS, were higher. These inner-context factors were partially connected to our outcomes, which were selected to reflect early indications of implementation success. These findings highlight a need for further research on and efforts to strengthen individual and organizational support for EBP adoption in school settings as important mediators for improved EBP implementation. At the same time, our analyses did not find school size, location, or free/reduced lunch rate to be associated with higher EBPAS, ILS, or ICS scores, which highlights the malleability of these inner-context factors. More work is needed to understand how existing systems and networks can strengthen these individual and organizational attitudes toward, and ultimately implementation of, SP mental health delivery in schools.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work is supported by the National Institute of Mental Health (R01MH114203). The Transforming Research into Action to Improve the Lives of Students (TRAILS) program is supported by funds from the Centers for Medicare and Medicaid Services through the Michigan Department of Health and Human Services, the Michigan Health Endowment Fund, the Ethel and James Flinn Foundation, and other Michigan-based foundations.

Previous Presentation

This work has been presented at the AcademyHealth 2019 Annual Research Meeting, Washington, DC, 6/2019; the American Public Health Association 2019 Annual Meeting & Expo, Philadelphia, PA, 11/2019; and the 12th Annual Conference on the Science of Dissemination and Implementation in Health, Washington, DC, 12/2019.