Abstract

Background:

Developing pragmatic assessment tools to measure clinician use of evidence-based practices is critical to advancing implementation of evidence-based practices in mental health. This case study details our community-partnered process of developing the Therapy Process Observation Coding Scale-Self-Reported Therapist Intervention Fidelity for Youth (TPOCS-SeRTIFY), a pragmatic, clinician-report instrument to measure cognitive behavioral therapy (CBT) delivery.

Approach:

We describe a five-step community-partnered development process. Initial goals were to create a self-report instrument that paralleled an existing direct observation measure of clinician CBT delivery to facilitate later assessment of measure performance. Cognitive interviews with community clinicians (n = 6) and consultation with CBT experts (n = 6) were used to enhance interpretability and usability as part of an iterative refinement process. The instrument was administered to 247 community clinicians along with an established self-report measure of clinician delivery of CBT and other treatments to assess preliminary psychometric performance. Preliminary psychometric results were promising.

Conclusion:

Our community-partnered development process was promising and can guide future development of pragmatic implementation measures, both to facilitate measurement of ongoing implementation efforts and to support future research aimed at building learning mental health systems.

Plain language summary

Developing brief, user-friendly, and accurate tools to measure how therapists deliver cognitive behavioral therapy (CBT) in routine practice is important for advancing the reach of CBT into community settings. To date, developing such “pragmatic” measures has been difficult. Little is known about how researchers can best develop these types of assessment tools so that they (1) are easy for clinicians in practice to use and (2) provide valid and useful information about implementation outcomes. As a result, few well-validated measures of therapist CBT use are feasible for use in community practice. This paper contributes to the literature through a descriptive case study of our community-partnered process for developing a measure of therapist use of CBT (Therapy Process Observation Coding Scale-Self-Reported Therapist Intervention Fidelity for Youth; TPOCS-SeRTIFY). This case study can serve as a guide for others aiming to develop pragmatic implementation measures. In addition, the TPOCS-SeRTIFY is a pragmatic measure of clinician use of CBT that holds promise for use by both researchers and clinicians to measure the success of CBT implementation efforts.

Implementation research is hampered by measurement limitations (Lewis et al., 2018). This is particularly so for measuring the delivery of evidence-based treatments by mental health clinicians in routine clinical care settings, which is a key implementation outcome (Bond & Drake, 2019; Proctor et al., 2011). Observational methods are the current gold-standard approach to ascertaining clinician use of evidence-based therapy techniques (Rodriguez-Quintana & Lewis, 2018; Schoenwald et al., 2011), but there are significant feasibility challenges for community settings in using these methods (Beidas et al., 2016). Despite requests for pragmatic measures that are feasible for ongoing use in the community to measure implementation efforts (Glasgow & Riley, 2013; Powell et al., 2017), there is little guidance for how to develop them. Retrofitting existing measures for use in clinician-report format may not be sufficient to create a pragmatic and clinically credible tool for everyday practice. This short report offers our collaborative experience designing an instrument with community partners as a case study of pragmatic measurement development in implementation science and behavioral health.

We describe our five-step process for developing a brief, user-friendly, self-report instrument for indexing clinician cognitive behavioral therapy (CBT) delivery in publicly funded mental health settings: the TPOCS-SeRTIFY (Beidas et al., 2016). The TPOCS-SeRTIFY is a self-report instrument that measures clinician CBT delivery for youth and parallels the CBT intervention items on the TPOCS-Revised Strategies (TPOCS-RS), a gold-standard observational coding system for clinician behavior (McLeod et al., 2015) that is often used to index community CBT use (e.g., Smith et al., 2017). Development goals were to design items that parallel the TPOCS-RS, allowing construct validation against direct observation, while creating an instrument that would be comprehensible, appropriate, brief, and easy to use within a community mental health setting (i.e., responsive to both an implementation gap and a stakeholder need). We use CBT for youth mental health as the exemplar because it has strong empirical support (Dorsey et al., 2017; Higa-McMillan et al., 2016; Hofmann et al., 2012; McCart & Sheidow, 2016) and because there have been nationwide implementation efforts designed to increase CBT use for youth (e.g., Bruns et al., 2008; Dorsey et al., 2016; Powell et al., 2016). Widespread CBT implementation will not be possible without companion self-report instruments that can monitor CBT delivery integrity and guide adjustments to it, to maximize outcomes across clinical settings.

Our process was informed by a conceptual model for conducting community-partnered research that emphasizes bidirectional communication with community stakeholders throughout the research process (Pellecchia et al., 2018). Our pragmatic development steps were to (1) develop an initial instrument draft in collaboration with content experts, building on prior discussions in which community clinicians identified the need for a brief, self-report instrument of clinician CBT use; (2) refine the instrument with community stakeholder and independent expert input; (3) collect preliminary feasibility and psychometric data; (4) conduct formal psychometric evaluation; and (5) identify next steps for instrument refinement, dissemination, and implementation, in collaboration with stakeholders. Because the processes for conducting Steps 4 and 5 are better articulated in the literature (Lewis et al., 2018; Martin & Savage-McGlynn, 2013), we focus on Steps 1 through 3.

Approach

Step 1: initial measure development

Based on community partner feedback that existing clinician-report instruments measuring clinician CBT use are lengthy and contain too much jargon, the initial draft emphasized brevity and non-technical language and contained two versions: one to index CBT use at a session level and one across sessions representing overall treatment with a client. Twelve cognitive behavioral intervention items derived from the TPOCS-RS (e.g., cognitive distortion, parenting skills) were drafted. Items were reworded to remove technical language (e.g., changing “respondent strategies” to “exposure strategies”) and provide brief definitions for each item’s meaning. An exemplar was written for each intervention to illustrate what a clinician delivering this intervention might say (e.g., Psychoeducation: “It seems like you’ve been lashing out at the people you care about a lot lately. That is a common thing we see with depression, where it can make you more irritable.”). This initial draft was sent to three experts (authors SKS, SD, and AH) in CBT and measurement development, and a TPOCS-RS expert (author BDM) for review. Based on feedback, we refined item wording to further reduce technical language and make instructions more user-friendly (e.g., bolding relevant aspects of the directions). One expert recommended trying to reduce the instrument to one page; a second version was drafted that contained briefer definitions to fit onto a single page.

Step 2: instrument refinement with stakeholders and independent experts

We conducted cognitive interviews with six racially diverse, female clinicians who worked across four community mental health agencies. Given that the TPOCS-SeRTIFY is intended to monitor CBT use in the context of implementation efforts in publicly funded settings, we purposively interviewed stakeholders who held a primary clinician or supervisor role. Four clinicians were members of a Community Advisory Board formed by our team that provides consultation and feedback on the relevance, feasibility, and public health impact of research projects, consistent with our conceptual model for a community-partnered approach (Pellecchia et al., 2018). Cognitive interviewing is an interview procedure used in survey development to evaluate potential sources of response error (Willis, 2004). We combined “think aloud” and verbal probing approaches to cognitive interviewing. Clinicians completed the instrument in the presence of a trained interviewer while verbalizing the thought process by which they arrived at each answer. The interviewer verbally probed for additional information to understand interviewees’ comprehension and interpretation of each item. The instrument was iteratively revised following each interview, prioritizing items that were confusing or interpreted differently by participants. Example changes included refining instructions to clarify that each item represented a distinct CBT intervention; clarifying item descriptions; changing the item layout so that conceptually similar items were grouped together, helping respondents differentiate between them; renaming items to make them more interpretable to the target user population (e.g., changing “functional analysis of behavior” to “Antecedents, Behavior, and Consequences [ABC] model”); and refining examples to make them more realistic.
The research team reached consensus on needed changes through weekly team meetings; in the rare instances when consensus was not reached, the interview guide was amended to include specific queries to our community partners to resolve the disagreement. Saturation was reached after the fifth interview; no changes were made after the sixth interview.

We explicitly asked stakeholders which of the short and long forms of the instrument they preferred by showing them both versions, counterbalancing which version they saw first. As feedback was equivocal, we turned to our measurement experts for guidance; they recommended proceeding with the long form to enhance item interpretability.

Finally, to ensure scientific rigor and maximize concordance between the self-report TPOCS-SeRTIFY and the observational TPOCS-RS to allow for later comparison, the instrument was sent to two independent CBT experts alongside the original TPOCS-RS item descriptions. Experts rated how similar each TPOCS-SeRTIFY item was to the corresponding TPOCS-RS item on a seven-point scale (1 = Not At All Similar to 7 = Extremely Similar). Mean similarity was 5.69, with reviewers within 1 point of each other on 68% of intervention items, suggesting overall comparability between TPOCS-RS and TPOCS-SeRTIFY items. However, one rater rated two items (Coping Skills; Exposure or Trauma Narrative) 4 out of 7. These items were discussed with the measurement and CBT experts who had previously reviewed the instrument and with the TPOCS-RS developer. They were then revised to map more explicitly onto the TPOCS-RS constructs (i.e., changing coping skills to cognitive coping skills, more directly linking use of the coping skill to coping with strong feelings, and revising the wording of the exposure or trauma narrative item to state more explicitly that exposures refer to structured practice). Revised items were sent back to the CBT experts who had provided the initial similarity ratings; each revised item received an average similarity rating of 6.5. Table 1 shows the final items.
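The agreement summary reported above (mean similarity pooled across raters and items, and the share of items on which the two raters were within 1 point) can be computed as in the minimal Python sketch below. The function names and the four example items and ratings are hypothetical illustrations, not the study's data; the flag threshold of 4 simply mirrors the rating that prompted revisions here.

```python
from statistics import mean


def similarity_summary(rater_a, rater_b, tolerance=1):
    """Summarize two raters' 1-7 similarity ratings of the same items.

    Returns the mean rating pooled across both raters and all items, and the
    proportion of items on which the raters differ by at most `tolerance`.
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must rate the same, non-empty set of items")
    pooled_mean = mean(list(rater_a) + list(rater_b))
    within = sum(abs(a - b) <= tolerance for a, b in zip(rater_a, rater_b))
    return pooled_mean, within / len(rater_a)


def flag_low_items(names, rater_a, rater_b, threshold=4):
    """Return items that either rater scored at or below `threshold`."""
    return [name for name, a, b in zip(names, rater_a, rater_b)
            if min(a, b) <= threshold]


# Hypothetical ratings for four illustrative items (not the study's data).
items = ["Item A", "Item B", "Item C", "Item D"]
expert_1 = [6, 5, 7, 4]
expert_2 = [6, 7, 6, 6]

pooled, agreement = similarity_summary(expert_1, expert_2)
print(f"mean similarity = {pooled:.3f}, within 1 point = {agreement:.0%}")
print("items to revisit:", flag_low_items(items, expert_1, expert_2))
```

Because both raters rate every item, the pooled mean equals the average of per-item means, so either framing of "mean similarity" gives the same number.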

Table 1. Final TPOCS-SeRTIFY items and item descriptions.

Step 3: collect preliminary psychometric data

We administered the final TPOCS-SeRTIFY to 247 mental health clinicians in a publicly funded mental health system to examine item performance and preliminary construct validity relative to a longer but commonly used self-report measure: the Therapy Procedures Checklist-Family Revised (TPC-FR; Weersing et al., 2002). The TPC-FR is a well-validated self-report instrument (Kolko et al., 2009) that produces three subscales: use of CBT, family therapy, and psychodynamic techniques. All three subscales showed excellent internal consistency in this sample (α range = .89–.92). Clinicians were asked to select a representative client on their caseload and, on the TPC-FR, to indicate the extent to which they used various techniques with this client (see Beidas et al., 2013; Supplemental File 1). The TPOCS-SeRTIFY asked respondents to report the extent to which they used each of the 12 listed CBT interventions with the same client.
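The α values reported for the TPC-FR subscales are presumably Cronbach's alpha, α = k/(k − 1) × (1 − Σσ²ᵢ / σ²ₓ), where k is the number of items, σ²ᵢ is the variance of item i, and σ²ₓ is the variance of respondents' total scores. The Python sketch below implements that formula; the function name and the small response matrix are hypothetical, and the actual TPC-FR item structure is not described in this article.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of k item scores."""
    k = len(responses[0])
    if k < 2 or any(len(row) != k for row in responses):
        raise ValueError("need at least two items and equal-length rows")
    # Sample variance of each item (column) across respondents.
    item_vars = [variance([row[i] for row in responses]) for i in range(k)]
    # Sample variance of each respondent's total score.
    total_var = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Hypothetical 0-4 ratings from five respondents on a three-item subscale.
subscale = [
    [0, 1, 1],
    [2, 2, 3],
    [3, 3, 4],
    [1, 1, 1],
    [4, 3, 4],
]
print(round(cronbach_alpha(subscale), 2))  # 0.97
```

Using sample variances for both the items and the total is a deliberate choice: the n − 1 denominators cancel in the ratio, so population variances would give the same α.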

Both measures were administered during a one-time, 2-hr meeting as part of a larger study (Beidas et al., 2013); six clinicians did not complete the TPOCS-SeRTIFY, yielding a final sample of 241. All procedures were approved by the University of Pennsylvania and City of Philadelphia Institutional Review Boards, and all clinicians provided informed consent before participating. Participants were representative of the mental health workforce: largely female (n = 189, 78.4%) and master’s level (n = 184, 76.3%). In terms of race, 43.6% (n = 105) identified as White, 25.7% (n = 62) as Black or African American, 7.9% (n = 19) as Asian, and 18.7% (n = 45) as “Other”; 4.1% did not disclose their race. In addition, 18.7% (n = 45) identified as Hispanic or Latinx. Clinicians averaged 37.5 years of age (SD = 11.6) and 8.4 years of full-time clinical experience (SD = 8.7).
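The reported percentages are simple proportions of the analytic sample (247 enrolled minus 6 non-completers = 241). The Python sketch below reproduces them from the reported counts. The number of clinicians who did not disclose their race is not reported, so the value used here is inferred as the remainder (241 − 231 = 10) and should be treated as an assumption.

```python
ENROLLED = 247
NON_COMPLETERS = 6
N = ENROLLED - NON_COMPLETERS  # analytic sample for the TPOCS-SeRTIFY analyses

race_counts = {"White": 105, "Black or African American": 62, "Asian": 19, "Other": 45}
# Assumption: the undisclosed-race count is inferred as whatever remains of N.
race_counts["Not disclosed"] = N - sum(race_counts.values())

other_counts = {"Female": 189, "Master's level": 184, "Hispanic or Latinx": 45}


def percent(count, total=N):
    """Percentage of the analytic sample, rounded to one decimal place."""
    return round(100 * count / total, 1)


for label, count in {**race_counts, **other_counts}.items():
    print(f"{label}: n = {count}, {percent(count)}%")
```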

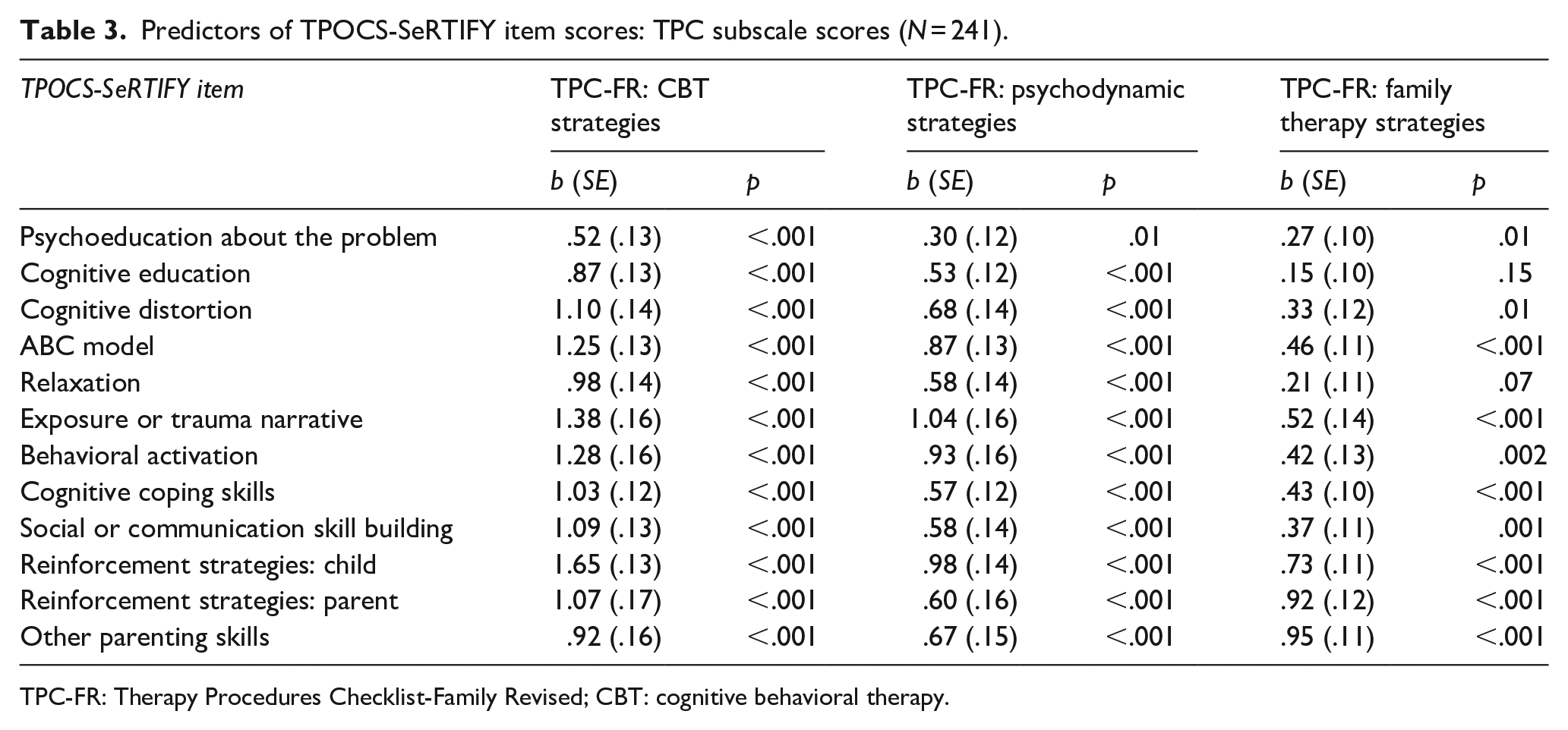

Because this instrument is designed to assess CBT use broadly across youth presenting concerns, rather than the use of CBT interventions prescribed for a particular disorder or problem area, we did not derive a scale score by averaging items into an overall CBT score, nor did we conduct item reduction analyses (e.g., factor analysis). Instead, we examined general item performance and preliminary score validity by estimating the association of each TPOCS-SeRTIFY CBT item with each TPC-FR subscale score (CBT, family, and psychodynamic strategies), using mixed-effects models with random intercepts to account for the nested nature of the data. Because the three TPC-FR subscales are highly correlated with one another (rs range from .58 to .68), consistent with the broad range of techniques used in community-based treatment (Garland et al., 2010), we hypothesized that TPOCS-SeRTIFY item scores would be positively associated with all three subscales. However, we expected TPOCS-SeRTIFY items to be more strongly associated with the TPC-FR CBT Strategies subscale than with the family and psychodynamic subscales.
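The modeling strategy described above (one TPOCS-SeRTIFY item regressed on a TPC-FR subscale score, with a random intercept for the clustering unit) can be sketched in Python with the `mixedlm` formula interface in statsmodels. The text does not name the clustering unit, so this sketch assumes clinicians nested within agencies. The column names, the simulated data, and the choice to enter one subscale per model rather than all three jointly are assumptions for illustration, not the authors' specification.

```python
import numpy as np
import pandas as pd
import statsmodels.formula.api as smf


def simulate_clinicians(n_agencies=20, per_agency=12, true_slope=0.9, seed=0):
    """Simulate hypothetical clinicians nested in agencies (illustration only)."""
    rng = np.random.default_rng(seed)
    agency = np.repeat(np.arange(n_agencies), per_agency)
    agency_shift = rng.normal(0.0, 0.5, n_agencies)[agency]  # random intercepts
    tpc_cbt = rng.normal(0.0, 1.0, agency.size)              # hypothetical TPC-FR CBT score
    noise = rng.normal(0.0, 0.5, agency.size)
    item = 2.0 + true_slope * tpc_cbt + agency_shift + noise  # hypothetical item score
    return pd.DataFrame({"agency": agency, "tpc_cbt": tpc_cbt, "item": item})


def fit_item(df, item="item", predictor="tpc_cbt", group="agency"):
    """Fit item ~ predictor with a random intercept per group; return (b, p)."""
    model = smf.mixedlm(f"{item} ~ {predictor}", data=df, groups=df[group])
    result = model.fit(reml=True)
    return float(result.params[predictor]), float(result.pvalues[predictor])


df = simulate_clinicians()
slope, p_value = fit_item(df)
print(f"unstandardized b = {slope:.2f}, p = {p_value:.3g}")
```

In a real analysis, a function like `fit_item` would be called once per TPOCS-SeRTIFY item and TPC-FR subscale (12 × 3 models), and the unstandardized coefficients would then be compared across subscales to test the expected ordering.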

TPOCS-SeRTIFY item performance

Little’s (1988) MCAR test was nonsignificant (χ2 = 181.5, df = 248, p > .05), consistent with TPOCS-SeRTIFY data being missing completely at random, and no item was missing more than 6% of its values, supporting measure feasibility. Skewness and kurtosis values indicated that all TPOCS-SeRTIFY items were approximately normally distributed (absolute skewness < 1 and absolute kurtosis < 1.5, well within recommended parameters; West et al., 1995). Table 2 shows descriptive statistics for TPOCS-SeRTIFY items in this sample. Clinicians reported using a moderate level of CBT with their representative clients across all 12 interventions.
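The item-level screen described above can be approximated with moment-based skewness and excess kurtosis, alongside each item's missing-data rate. The Python sketch below is illustrative only: the function name and the data are hypothetical; statistical packages often apply small-sample bias corrections that this simple version omits; and the |skewness| < 2 and |kurtosis| < 7 cutoffs are the values commonly attributed to West et al. (1995), used here as an assumption. Little's MCAR test is multivariate and is not sketched.

```python
def item_screen(values, skew_cut=2.0, kurt_cut=7.0):
    """Missing-data rate, skewness, and excess kurtosis for one item.

    `values` may contain None for missing responses. Skewness and kurtosis use
    simple (uncorrected) moment estimators on the observed values only.
    """
    observed = [v for v in values if v is not None]
    n = len(observed)
    if n < 2:
        raise ValueError("need at least two observed values")
    mu = sum(observed) / n
    m2 = sum((x - mu) ** 2 for x in observed) / n
    if m2 == 0:
        raise ValueError("item has no variance")
    m3 = sum((x - mu) ** 3 for x in observed) / n
    m4 = sum((x - mu) ** 4 for x in observed) / n
    skew = m3 / m2 ** 1.5
    excess_kurtosis = m4 / m2 ** 2 - 3.0
    return {
        "missing_rate": (len(values) - n) / len(values),
        "skewness": skew,
        "kurtosis": excess_kurtosis,
        # Assumed cutoffs (see lead-in); both must hold for the item to pass.
        "within_cutoffs": abs(skew) < skew_cut and abs(excess_kurtosis) < kurt_cut,
    }


# Hypothetical responses to one item, with one missing value.
print(item_screen([0, 1, 2, 2, 3, 4, None, 2, 1, 3]))
```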

Table 2. TPOCS-SeRTIFY descriptive statistics.

Preliminary construct validity

Table 3 shows that all TPOCS-SeRTIFY items were positively related to the TPC-FR CBT Strategies subscale (unstandardized coefficients ranged from .52 to 1.65, all ps < .001). Consistent with hypotheses, associations between TPOCS-SeRTIFY items and the TPC-FR psychodynamic and family strategies subscales were smaller in magnitude than associations with the CBT Strategies subscale. Consistent with established patterns of community practice, in which clinicians employ techniques from multiple treatment families, all TPOCS-SeRTIFY items were also associated with the TPC-FR psychodynamic and family strategies subscales, except for relaxation and cognitive education, which were unrelated to the family strategies subscale.

Table 3. Predictors of TPOCS-SeRTIFY item scores: TPC-FR subscale scores (N = 241).

TPC-FR: Therapy Procedures Checklist-Family Revised; CBT: cognitive behavioral therapy.

Discussion

Pragmatic measurement development addresses implementation science instrumentation challenges (Glasgow & Riley, 2013). This case study describes our five-step approach to developing a pragmatic, clinician-report instrument to measure CBT delivery, which offers a model for others hoping to develop pragmatic implementation measures. We highlight two critical aspects. First, our emphasis on involving community partners at all stages of research allowed us to make early measure changes that enhanced its feasibility and perceived utility. Second, collecting preliminary feasibility and psychometric data prior to full-scale evaluation allowed us to confirm that the measure was feasible to implement and showed concordance with another, similar instrument before formal evaluation. Based on conversations with our community partners, the instrument has since been adapted to be primarily a session-level instrument. Work is ongoing to validate the TPOCS-SeRTIFY against direct observation of client sessions (Step 4), and results will be shared with community partners to inform future research (Step 5).

Our specific measure, the TPOCS-SeRTIFY, may also fill an important youth mental health measurement gap. Although primarily designed as a tool to monitor CBT, such measures can also contribute to practice-based learning efforts and to future research aimed at building learning mental health systems, an effort that requires feasible ways to index implementation outcomes (Beidas & Wiltsey Stirman, 2020). Developing the TPOCS-SeRTIFY is among the first efforts (see also Hogue et al., 2014, 2015) to improve the accuracy and clinical utility of self-report measures that (1) can be applied transdiagnostically, regardless of the specific manual or protocol a clinician may be using, and (2) parallel an existing direct observational measure. This latter feature will facilitate future work examining strategies for improving the accuracy of clinicians’ self-ratings to optimize the TPOCS-SeRTIFY’s utility. Relatedly, examining how systematic clinician biases shape self-report will be important for refining the TPOCS-SeRTIFY to improve the accuracy of clinicians’ self-ratings.

This study had several strengths, including its rigorous, stepped, community-partnered development process. Although the data provide only preliminary information regarding the TPOCS-SeRTIFY’s psychometric performance, results are promising and suggest the value of additional work in this area. Measures designed collaboratively with community stakeholders may also offer advantages over researcher-developed gold-standard implementation instruments, which are often developed in samples that do not include members of historically excluded groups. The primary limitations are the lack of direct observation against which to compare clinician ratings on the TPOCS-SeRTIFY and our reliance on comparison with an existing self-report measure that also has not been tested against direct observation. An additional limitation is the lack of gender diversity in our stakeholder sample.

A self-report instrument that maps directly onto a gold-standard coding system, provides clearly operationalized clinician behaviors of interest, and is designed to facilitate clinician self-ratings fills an important measurement gap for youth mental health service implementation research and practice. This is a critical step in a growing body of research (Hogue et al., 2015) aimed at enhancing accurate and feasible self-report measurement of clinician practice use. Our process can serve as a guide for developing pragmatic measures across implementation science.

Supplemental Material

sj-pdf-1-irp-10.1177_2633489521992553 – Supplemental material for The TPOCS-self-reported Therapist Intervention Fidelity for Youth (TPOCS-SeRTIFY): A case study of pragmatic measure development

Supplemental material, sj-pdf-1-irp-10.1177_2633489521992553 for The TPOCS-self-reported Therapist Intervention Fidelity for Youth (TPOCS-SeRTIFY): A case study of pragmatic measure development by Emily M Becker-Haimes, Melanie R Klein, Bryce D McLeod, Sonja K Schoenwald, Shannon Dorsey, Aaron Hogue, Perrin B Fugo, Mary L Phan, Carlin Hoffacker and Rinad S Beidas in Implementation Research and Practice

Footnotes

Acknowledgements

The authors gratefully acknowledge Judy Shea, David Mandell, Steven Marcus, Jessica Fishman, Catherine Maclean, and Michael French for their contributions to the larger clinical trial and Courtney Gregor, Kelly Zentgraf, and Adina Lieberman for their coordination support. The authors are grateful for the support that the Department of Behavioral Health and Intellectual Disability Services has provided for conducting this work within its system, for the Evidence-Based Practice and Innovation (EPIC) group, and for the partnership provided by participating agencies, therapists, and clients.

Authors’ note

This study was registered with ClinicalTrials.gov (Identifier NCT02820623).

Declaration of conflicting interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Dr. Beidas discloses that she is an Associate Editor at Implementation Research and Practice. She also receives royalties from Oxford University Press and has consulted for Camden Coalition of Healthcare Providers. Dr. Beidas provides consultation to United Behavioral Health. Dr. Beidas serves on the Clinical and Scientific Advisory Board for Optum Behavioral Health. Dr. Schoenwald is co-founding editor of Implementation Research and Practice. All other authors have no conflicts of interest to disclose.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was funded by Grant R01 MH108551 (PI: Beidas) and Grant K23 MH099179 (PI: Beidas) from NIMH.

Supplemental material

Supplemental material for this article is available online.

References
