Abstract

Background:

Despite promising findings regarding the safety, fidelity, and effectiveness of peer-delivered behavioral health programs, there are training-related challenges to the integration of peers on health care teams. Specifically, there is a need to understand the elements of training and consultation that may be unique to peer-delivered interventions.

Methods:

As part of a pilot effectiveness-implementation study of an abbreviated version of Skills Training in Affective and Interpersonal Regulation (STAIR) for posttraumatic stress disorder (PTSD), we conducted a mixed-methods process evaluation utilizing multiple data sources (questionnaires and field notes) to characterize our approach to consultation and explore relations between fidelity, treatment outcome, and client satisfaction.

Results:

Peer interventionists exhibited high fidelity, defined by adherence (M = 93.7%, SD = 12.3%) and competence (M = 3.7 “competent,” SD = 0.5). Adherence, β = .69, t(1) = 3.69, p < .01, and competence, β = .585, t(1) = 2.88, p < .05, were each associated with trial participants’ satisfaction, but not with clinical outcomes. Our synthesis of fidelity-monitoring data and consultation field notes suggests that peer interventionists possess strengths in interpersonal effectiveness, such as rapport building, empathy, and appropriate self-disclosure. Peer interventionists evidenced minor challenges with key features of directive approaches, such as pacing, time efficiency, and providing strong theoretical rationale for homework and tracking.

Conclusion:

Given the promise of peers in expanding the behavioral health workforce and engaging individuals otherwise missed by the medical model, the current study aimed to characterize unique aspects of training and consultation. We found that peer interventionists demonstrated high fidelity, supported through dynamic training and consultation with feedback. Research is needed to examine the impact of consultation approach on implementation and treatment outcomes.

Plain Language Summary:

Peers, paraprofessionals who use their lived experiences to engage and support the populations they serve, have been increasingly integrated into health care settings in the United States. Training peers to deliver interventions may provide cost savings by improving the efficient utilization of professional services. Despite promising findings regarding the safety, intervention fidelity, and effectiveness of peer delivery, there are important challenges that need to be addressed if peers are to be more broadly integrated into the health care system as interventionists. These include challenges associated with highly variable training, inadequate supervision, and poor delineation of peers’ roles within the broader spectrum of care. Thus, there is a need to understand the unique components of training and consultation for peers. We report key findings from an evaluation of a pilot study of an abbreviated version of Skills Training in Affective and Interpersonal Regulation (STAIR) for posttraumatic stress disorder (PTSD), adapted for peer delivery. We characterize our approach to consultation with feedback and explore relations between fidelity, treatment outcome, and client satisfaction. Our study extends the small yet growing literature on training and consultation approaches to support fidelity (adherence and competence) among peer interventionists. Organizations hoping to integrate peers on health care teams could utilize our fidelity-monitoring approach to set benchmarks to ensure peer-delivered interventions are safe and effective.

As part of a larger shift toward team-based approaches in health care, peers, paraprofessionals who use their lived experiences to engage and support the populations they serve, have been increasingly integrated into health care settings in the United States (Lehmann & Sanders, 2007; Myrick & del Vecchio, 2016). Nearly a quarter of behavioral health treatment facilities offer peer services, and 35 states provide Medicaid reimbursement (Videka et al., 2019). Peers go by many titles, including peer specialists, certified peer specialists, peer support specialists, peer navigators, community health workers, and recovery coaches. Some peers work in tandem with the medicalized health care system by acting as a “bridge” between community members and professional care, whereas other peers view themselves as a separate system that is an alternative to professional treatment (Adame & Leitner, 2008). Common services provided by peers include outreach, case management, transportation, general emotional support, health promotion education, and skills-based support groups (Jiménez-Solomon et al., 2016; Salzer et al., 2010).

Peers and the people they support describe how shared lived experience helps to build trust and reduce shame by normalizing experiences, improving rapport, and instilling hope (Cabral et al., 2013; Gates & Akabas, 2007; Gidugu et al., 2014; Mowbray et al., 1996). Peers have also been shown to promote trust and rapport with professional care (Chinman et al., 2008). Given that stigma and medical mistrust remain significant treatment barriers (Mojtabai et al., 2011), peers may be uniquely positioned to address these barriers. Peers also report personal benefits to engaging in peer support work (Moran et al., 2011; Mowbray et al., 1996).

Further, training peers to deliver some interventions may also provide cost savings by way of improving efficient utilization of professional services through reductions in emergency care, increases in preventive care, and increases in the overall availability of behavioral health services in resource-constrained settings. A randomized controlled trial (RCT) by Sledge and colleagues (2011) found that peer support led to significantly fewer psychiatric rehospitalizations among high-utilizing individuals compared to professional contact alone.

A growing body of research supports the feasibility and effectiveness of peer-delivered manualized individual and group behavioral interventions in the United States. For example, an RCT that compared professionally led treatment as usual to a peer-delivered self-management intervention, wellness recovery action planning (WRAP), found that peers attained high fidelity, and participants evidenced significant reductions in anxiety and depression symptoms (Cook et al., 2012). Similarly, Crisanti and colleagues (2019) found that peer delivery of an intervention for posttraumatic stress disorder (PTSD) and substance use (Seeking Safety; Najavits, 2003) was non-inferior to professional delivery in terms of effectiveness.

Despite promising findings regarding the safety, fidelity, and effectiveness of peer delivery, there are important challenges that need to be addressed if peers are to be more broadly integrated into the health care system as interventionists. These include challenges associated with highly variable training, inadequate supervision, and poor delineation of peers’ roles within the broader spectrum of care (Chinman et al., 2008; Gates & Akabas, 2007; Mowbray et al., 1996; Salzer et al., 2010; Silver & Nemec, 2016). The majority of states have certifications for peers; however, required hours of formal didactic training (30 to 100+ hr) and work experience (0 to 500+ hr) for certification vary widely (Chapman et al., 2015). Some peers embedded in professional care models have called for greater standardization of training, including more training in the mental health recovery process and therapeutic techniques (Gates & Akabas, 2007; Moran et al., 2013). As one example, the national Veterans Health Administration (VHA) has standardized peer behavioral health training, which covers topics such as confidentiality, group facilitation, and navigating boundaries, and includes experiential components and a minimum of 15 hr of yearly continuing education (Chinman et al., 2013). However, some professional organizations have raised concerns about peers working outside of their training and about patient safety (Chinman et al., 2008; Gates & Akabas, 2007). There is a need to develop models of training, supervision, and safety monitoring and to clearly define the role of peers embedded in safety net health care settings, which may differ from models developed in VHA settings in terms of trauma type (e.g., community violence) and culture (e.g., immigrant and refugee; high proportion of ethno-racially diverse consumers).

Various training and consultation models have been developed to ensure that behavioral health interventions are delivered with high fidelity. For instance, Harned and colleagues (2013) promote a fidelity-oriented approach that emphasizes the role of consultation. As an extension of this, Stirman and colleagues (2017) delineate a continuous improvement approach that acknowledges the need for interventions, as well as training and consultation approaches, to be responsive to the needs of the local setting and providers. These approaches help to prevent a drop in fidelity that can occur after initial training (Bein et al., 2000; Marques et al., 2019; Weisz et al., 2013). Recently, Fortuna and colleagues (2018) applied a fidelity-oriented approach to a peer-delivered smartphone-based illness management intervention; their consultation approach included multi-level supervision, fidelity monitoring through smartphone engagement, and audit and feedback of sessions.

The current study focuses on describing our approach to consultation with feedback to support fidelity among peer interventionists as part of a recent hybrid effectiveness-implementation pilot study of an abbreviated version of Skills Training in Affective and Interpersonal Regulation (STAIR) for PTSD adapted for peer delivery. Main outcomes regarding the feasibility, acceptability, and initial effectiveness of the intervention are published elsewhere (Smith et al., 2020), and indicate that the intervention was effective in reducing PTSD, general stress, anxiety, and depressive symptoms at post-treatment. In this article, we report on data gathered during our implementation process evaluation to characterize (a) relations between fidelity, treatment outcome, and client satisfaction; (b) our approach to consultation with feedback; and (c) the training and consultation needs unique to peer interventionists.

Methods

Participants and procedures

During the initial stages of study planning, our research team formed a partnership with a peer-led service organization overseen by the department of psychiatry at a large safety net hospital in the Northeastern United States. We assembled a community working group (CWG; N = 6), which included the full executive team of the peer-led organization and comprised five peer supervisors (all employed in supervisory roles) and one hospital administrator with a background in psychiatric nursing. The CWG and researchers were in regular contact (every 3–6 months) and met in person to discuss all stages of the study, including intervention selection and tailoring, nomination of peer interventionists, oversight of the study, and interpretation of findings.

The peer-led service organization oversees per diem peer specialists who are hospital employees. Peer specialists within this organization fulfill a variety of roles, including outreach, one-to-one support (in-person and by phone), group facilitation, and activity organization. All peers, by the organization’s definition, have lived experiences with mental health or substance use problems, but the formal training they have received varies. Some peer specialists have completed formal coursework and a written exam to gain certification as peer specialists through the Department of Mental Health’s (DMH) statewide Certified Peer Specialist training program (e.g., The Transformation Center, 2020). Peer specialists have additional opportunities to receive training in skills-based courses, such as WRAP (Copeland, 2002).

Intervention selection and tailoring

Prior to submitting for funding, we presented several possible evidence-based PTSD interventions to the CWG. From these, an abbreviated five-session version of STAIR (Jain et al., 2020) was selected by consensus of CWG members. STAIR has demonstrated effectiveness (Cloitre et al., 2002, 2010) when delivered in safety net settings (Trappler & Newville, 2007) and by non-specialists (MacIntosh et al., 2016). Centered on the three-channel cognitive behavioral conceptualization of emotion regulation, abbreviated STAIR provides a “toolbox” of skills for emotion regulation (cognitive reframing, behavioral activation, mindfulness, and distress tolerance) and interpersonal effectiveness that clients select to learn based on their individual needs and preferences. Unlike other PTSD treatments, abbreviated STAIR does not require exposure or reprocessing of the trauma memory (Charuvastra & Cloitre, 2008).

The peer-adapted intervention was tailored in collaboration with the CWG, the research team, and intervention developers prior to the trial. This tailoring focused on integrating some tenets of peer models (e.g., mutuality, self-disclosure) into traditional cognitive behavioral approaches. One major contrast between the approaches is that the peer model is non-directive, whereas cognitive behavioral interventions are directive; our approach was admittedly more directive than is typical in a peer approach, but was responsive to the expressed needs of the organization. Although tailoring included changes to style of delivery and a reduction in clinical language, core content was not changed (per intervention developer vetting). For more detail on our adaptation, which involved multiple stakeholders (CWG, peer interventionists, and trial participants) and stages (pre- and post-trial), see Ametaj et al., 2021.

Peer specialist selection process, training, and consultation

CWG members were trained in the intervention prior to specifying nomination criteria for peer interventionists, so they would have a good sense of the content and necessary skills. It is important to note that CWG members identify as peers themselves, directly supervise peers, and have intimate knowledge of an individual peer’s relative strengths and challenges. As such, we found that CWG members were best able to identify peer specialists who may be a good fit for the demands of the project. Together, we set eligibility criteria as peers who (1) had completed a peer specialist certification program, (2) reported lived experience of trauma, and (3) were in current stable behavioral health functioning. CWG members approached potentially eligible peer specialists and invited them to participate. Those who were interested were connected with the study team. Peer specialist interventionists were not known to researchers prior to nomination. All research procedures were approved by the Boston Medical Center and Boston University Medical Campus Institutional Review Board (Approval No. H-37338). Written informed consent was obtained from peer interventionists and participants.

Our overall approach combined multiple expert-recommended implementation strategies (see Powell et al., 2015), including making training dynamic and providing ongoing consultation with feedback to support protocol adherence and competence (fidelity). We hosted a 5-hr in-person training that included both didactic and experiential components and was attended by all of the nominated certified peer specialists (n = 6) and all members of the CWG. Four peer specialists opted to continue as study interventionists (one declined due to difficulties in traveling to the main study site on a regular basis; one declined due to health concerns).

Peer interventionists were mostly White men (75.0%) and had a mean age of 64.25 (SD = 8.06) years. Two peer interventionists had graduate-level education and the other two attended some college but did not receive a degree; none were practicing therapists. Peer specialists were required to see a minimum of three trial participants (training cases).

Data sources

We utilized multiple data sources (questionnaires and field notes) collected as part of our larger process evaluation. Together these sources helped to characterize (a) relations between fidelity (adherence and competence), clinical outcomes, and client satisfaction; (b) fidelity-oriented support in consultation; and (c) the consultation needs unique to peer specialists.

Qualitative data for this project are observational in nature and rely on the analysis of researcher field notes gathered during fidelity monitoring and consultation provided during the pilot study. Questionnaires were administered through REDCap, a HIPAA-compliant, user-access-restricted, and password-protected database (Harris et al., 2009, 2019). As part of a larger set of questionnaires, clinical effectiveness outcomes were measured by the PTSD Checklist for the DSM-5 (PCL-5; Weathers et al., 2017) and the 21-item Depression, Anxiety, and Stress Scales (DASS-21; Lovibond & Lovibond, 1995) at pre- and post-treatment and at each session, and trial participant satisfaction was measured by the Client Satisfaction Questionnaire-8 (CSQ-8; Larsen et al., 1979) at post-treatment.

Fidelity monitoring

We assessed two aspects of fidelity: adherence to the protocol and competence (skill level). Of the 87 total sessions, 77 were rated for fidelity (excluding 3 sessions delivered by [SEV] due to last-minute peer specialist transportation issues and sick days, and 7 sessions with missing audio recordings [8% missing data]). Adherence and competence were double-rated by two members of the study staff until the average interrater agreement was above 80% (approximately 16 sessions; 20.8% of all rated sessions were double-rated); the remaining sessions were then evaluated by a single rater. The team continued to meet with the supervising study principal investigator [SEV] during the independent rating phase to discuss coding, resolve any uncertainty, and ensure consensus. We examined associations between fidelity ratings (adherence and competence), clinical outcomes, and client satisfaction.
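The double-rating rule above can be illustrated with a small sketch. The text does not state which agreement statistic was used, so simple percent agreement is an assumption here, and the function names (`percent_agreement`, `ready_for_single_rating`) are hypothetical.

```python
def percent_agreement(rater_a, rater_b):
    """Percentage of items on which two raters gave the same rating.

    Assumption: simple item-level percent agreement; the study may have
    used a different agreement index.
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return 100.0 * matches / len(rater_a)


def ready_for_single_rating(session_agreements, threshold=80.0):
    """True once mean agreement across double-rated sessions exceeds the threshold."""
    if not session_agreements:
        return False
    return sum(session_agreements) / len(session_agreements) > threshold
```

Under these assumptions, two double-rated sessions with 75% and 90% agreement average 82.5%, which would clear the 80% threshold described in the text.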

Adherence

In consultation with the treatment developer, we adapted the original abbreviated STAIR fidelity checklist for this study. Adherence was calculated as a percentage by dividing the number of items completed by the total number of items on the session checklist (100% = highest adherence). We also calculated average adherence by session and by hours of consultation to examine whether adherence varied over time.
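As a minimal sketch of the adherence calculation described above, the following computes a per-session percentage and a mean across sessions. The function names and the example checklist counts are hypothetical and are not taken from the study data.

```python
from statistics import mean


def adherence_percent(items_completed, items_total):
    """Adherence as the percentage of checklist items completed in a session."""
    if items_total <= 0:
        raise ValueError("checklist must contain at least one item")
    if not 0 <= items_completed <= items_total:
        raise ValueError("items_completed must be between 0 and items_total")
    return 100.0 * items_completed / items_total


def mean_adherence(sessions):
    """Mean adherence over (items_completed, items_total) pairs."""
    return mean(adherence_percent(done, total) for done, total in sessions)


# Hypothetical example: three sessions rated against a 10-item checklist.
example_sessions = [(10, 10), (9, 10), (8, 10)]
example_mean = mean_adherence(example_sessions)
```

Grouping the same pairs by session number or by hours of consultation received would give the over-time averages mentioned in the text.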

Competence

We adapted the Cognitive Therapy Scale–Revised (CTS-R; Blackburn et al., 2001) to assess the peer specialists’ competence. CTS-R ratings were completed by trained study staff (under the supervision of study principal investigator) based on audio review of sessions. The CTS-R includes 12 items, each scored on a Likert-type scale from 0 (poor) to 6 (excellent), with a score of 3 indicating adequate competence. Some items represent general clinical skills (e.g., collaboration, pacing and use of time, interpersonal effectiveness, eliciting appropriate emotional expression), while others represent cognitive behavioral therapy-specific skills (e.g., agenda setting, eliciting key cognitions and planning behaviors, homework setting).

The CTS-R overlaps well with the abbreviated STAIR competencies. For instance, interventionists were expected to set an agenda at the start of every session (Item 1: Agenda Setting and Adherence) and to guide participants to be more aware of how their emotions, thoughts, and behaviors influence each other (Item 10: Guided Discovery). We made minor adjustments to the scale to fit the abbreviated STAIR, including omitting Item 7: Eliciting Key Cognitions in sessions that did not include cognitive work, omitting Item 12: Homework Setting in the final session, and collapsing Item 2: Feedback and Item 11: Application of Change Methods into a single rating (i.e., in abbreviated STAIR, feedback is applied/elicited in the context of applying change methods). Because we omitted items, we reported the mean rating for each item and did not report or interpret total scores. For each item, we used Blackburn and colleagues (2001) competency standard of 3 as our benchmark (see Table 1).
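The item-level scoring described above, with some items omitted in certain sessions, can be sketched as follows. The data layout (one dictionary per session, with `None` marking an omitted item) and the function names are illustrative assumptions; only the benchmark of 3 comes from the text.

```python
from statistics import mean

BENCHMARK = 3  # competence standard from Blackburn et al. (2001), as cited in the text


def item_means(session_ratings):
    """Mean rating per CTS-R item, skipping sessions where the item was omitted.

    session_ratings: list of dicts mapping item name -> rating, or None if
    the item was not rated in that session (hypothetical layout).
    """
    scores_by_item = {}
    for ratings in session_ratings:
        for item, score in ratings.items():
            if score is not None:
                scores_by_item.setdefault(item, []).append(score)
    return {item: mean(scores) for item, scores in scores_by_item.items()}


def below_benchmark(means, benchmark=BENCHMARK):
    """Items whose mean rating falls below the competence benchmark."""
    return sorted(item for item, m in means.items() if m < benchmark)
```

Because items are averaged separately, omitting an item in some sessions changes only that item's denominator, which is consistent with reporting item means rather than total scores.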

Table 1. Peer interventionist average competence and adherence descriptive statistics.

SD: standard deviation; CTS-R: Cognitive Therapy Scale–Revised.

Note: CTS-R scores range from 0 to 6, with a minimum score of 3 considered adequate competence.

Item 2: feedback criteria were subsumed under Item 11: application of change methods.

We also examined average competence across CTS-R items by session and by hours of consultation received to understand the peer interventionist competence over time.

Consultation process (feedback)

Peer interventionists received 1 hr of in-person weekly group consultation and written individual feedback based on audio review of therapy sessions for the previous week.

Group consultation

Consultation was held approximately once a week over the course of 9 months for a total of 37 1-hr sessions. The number of hours of consultation peer interventionists had received at the time of delivering their first session ranged from 5 to 14 hr (M = 7.5, SD = 4.4, Median = 5.5). The number of hours of consultation peer interventionists had received at the time of delivering their final session ranged from 21 to 37 hr (M = 28.3, SD = 6.7, Median = 27.5).

The principal investigator (SEV; a woman clinical psychologist with specialized training in PTSD treatment and implementation science) led structured cognitive behavioral therapy-style group consultation, offering clinical practice recommendations and encouraging peer interventionists to integrate selective self-disclosure as appropriate. Field notes were taken during consultation meetings by the study research assistant to capture common themes and topics covered in group consultation, including psychoeducation, care delivery, and unique client-level challenges. Our coding scheme is presented in Table 3. These topics indicated areas where peer interventionists may require additional education, training, and support.

Written individual feedback

Structured feedback sheets were organized into categories based on CTS-R items. Feedback sheets outlined areas of strength and areas for improvement, and specific recommendations for the next session. The research team compiled feedback for all 77 rated sessions to characterize general strengths and specify training and technical supports needed for peer interventionists.

Resource utilization

Resource utilization was tracked by study staff to estimate the time spent on supportive technical and clinical tasks to ensure fidelity.

Data analysis

Fidelity monitoring

Descriptive statistics for each peer specialist’s adherence, overall competence, competence per specific CTS-R skill item, and hours of consultation received were calculated. Data were analyzed using SPSS version 24 (IBM Corporation, 2016).

We conducted non-parametric analyses to account for our small sample size of peer interventionists (N = 4) and the lack of normality of the adherence and competence data. We assessed whether fidelity (adherence and competence) was related to the number of sessions (experience) or hours of consultation (training) received using chi-square tests of independence. Adherence data were dichotomized (100% adherence vs. <100% adherence [“non-adherent”]). Linear regressions were conducted to model the effect of interventionists’ average adherence and competence on treatment outcome and client satisfaction.
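The dichotomization and chi-square test of independence described above can be sketched in plain Python. This is an illustration under stated assumptions, not the authors' SPSS procedure: the function names and the example counts are hypothetical, and the sketch returns only the test statistic and degrees of freedom.

```python
def dichotomize(adherence_percents):
    """Label each session as fully adherent (100%) or non-adherent (<100%)."""
    return ["adherent" if p == 100.0 else "non-adherent" for p in adherence_percents]


def chi_square_independence(table):
    """Pearson chi-square statistic and degrees of freedom for a contingency table.

    table: list of rows of observed counts, e.g. rows = session number,
    columns = adherent / non-adherent. Assumes every row and column total
    is non-zero so that all expected counts are positive.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            statistic += (observed - expected) ** 2 / expected
    df = (len(row_totals) - 1) * (len(col_totals) - 1)
    return statistic, df
```

With five session numbers crossed with two adherence categories, for example, the table has (5 - 1) x (2 - 1) = 4 degrees of freedom. With small expected cell counts, an exact test may be preferable to the chi-square approximation.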

Consultation (feedback) data

We applied a rapid coding procedure (Neal et al., 2015) to directed content analysis (Hsieh & Shannon, 2005) based on CTS-R items and magnitude codes (Saldaña, 2013; that is, frequency across interventionists) to organize the initial coding scheme. This coding scheme was applied to observational data from written feedback sheets (N = 79) and consultation meeting field notes (N = 32) to (a) summarize general clinical strengths and challenges of peer interventionists (based on CTS-R) and (b) identify key training gaps that needed to be addressed through consultation. The initial coding was completed in conjunction with CTS-R-based competence coding and utilized the same coding team and consensus approach. Key themes were collated and sorted based on whether they (1) exemplified a strength or a limitation, (2) were demonstrated by all or individual peer specialist interventionists (general or infrequent), and (3) were notable in terms of intervention content (i.e., specific to individual components of the intervention). Although we attempted to summarize the breadth of the codes, we highlight the challenges that posed the greatest threat to intervention integrity. The goal of the qualitative analysis was to use observational data to expand on key issues that emerged during consultation and feedback (Palinkas et al., 2010).

To estimate the resource burden of supporting this approach to consultation, we calculated the average time per week study staff spent on supporting the trial (including both support staff and clinician investigator).

Results

Fidelity monitoring

Adherence and competence

Overall, peer interventionists exhibited high adherence (M = 93.7%, SD = 12.3%) as measured by session checklists and adequate competence (M = 3.7, SD = 0.5) as measured by the CTS-R. It is important to note that only 22.1% (n = 17) of reviewed sessions did not attain 100% adherence.

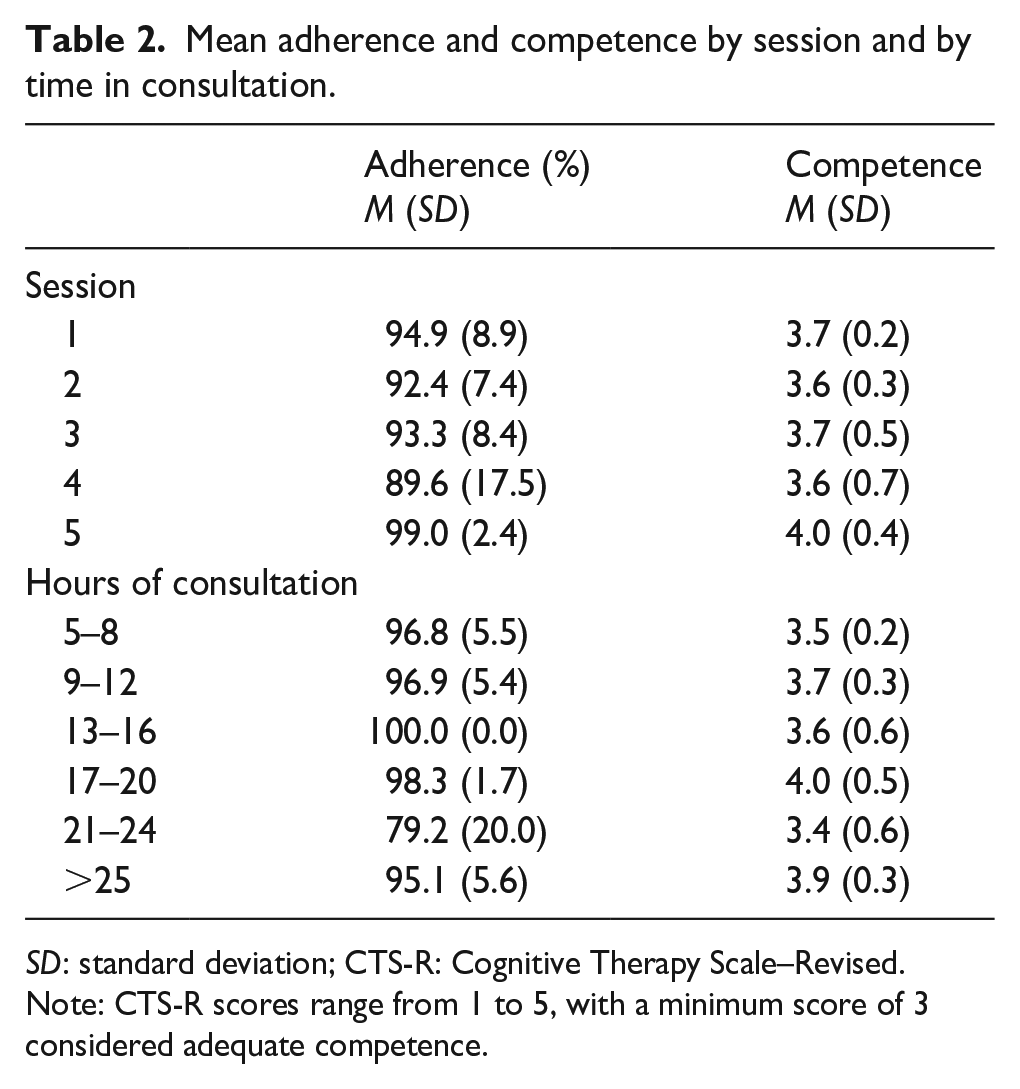

On average, peer interventionists scored the highest on interpersonal effectiveness (M = 4.4, SD = 0.7) and collaboration (M = 4.0, SD = 0.8) and lowest on eliciting key cognitions (M = 3.2, SD = 0.5) and homework setting (M = 3.3, SD = 1.0) items of the CTS-R (see Table 1). Average adherence and competence were consistently high both by session (ranging from 89.6 to 99.0 and 3.6 to 4.0, respectively) and by number of hours of consultation (ranging from 79.2 to 100 and 3.5 to 4.0, respectively; see Table 2).

Table 2. Mean adherence and competence by session and by time in consultation.

SD: standard deviation; CTS-R: Cognitive Therapy Scale–Revised.

Note: CTS-R scores range from 0 to 6, with a minimum score of 3 considered adequate competence.

Chi-square analyses for adherence by session, χ²(4) = 4.17, p = .384, and by consultation month, χ²(5) = 10.67, p = .058, were not significant. Similarly, chi-square analyses for competence by session, χ²(12) = 13.89, p = .308, and by month of consultation, χ²(12) = 15.58, p = .410, were not significant.

Association with treatment outcome

Linear regression models suggest that adherence and competence were not associated with PTSD treatment outcomes. Adherence did not predict PCL-5 score at post-intervention, β = .20, t(1) = .79, p = .439, or PCL-5 score change, β = .32, t(1) = 1.34, p = .201. Average competence did not predict PCL-5 score at post-intervention, β = .11, t(1) = .45, p = .657, or PCL-5 score change, β = .15, t(1) = .60, p = .555.

Association with client satisfaction

Linear regressions showed that both peer interventionist adherence, β = .69, t(1) = 3.69, p < .01, and competence, β = .585, t(1) = 2.88, p < .05, significantly predicted participants’ satisfaction with the intervention.

Consultation

Clinical strengths and challenges

A summary of clinical strengths and challenges is presented in Table 3. Peer interventionists demonstrated general strengths in interpersonal effectiveness, such as rapport building, empathy, and appropriate self-disclosure. They also evidenced strength in the application of focused breathing, and relational and compassion-focused exercises. By comparison, peer interventionists evidenced challenges with pacing, time efficiency, and providing strong rationale for skills practice and behavioral tracking. Although the peer interventionists were competent in managing affect in the sessions, they asked for additional training in grounding skills during consultation. Although not frequent, peers experienced some difficulties in teaching cognitive skills relative to emotion, body, and behavioral skills. Training gaps addressed through consultation included (a) psychoeducation on PTSD (as a diagnosis, CBT theory, and other evidence-based approaches) and avoidance, (b) training on the use of the PCL-5 in session, (c) application of grounding techniques, (d) flexible delivery to meet the intellectual and emotional needs of participants, and (e) generalizing content to mitigate social determinants (e.g., homelessness, unemployment) and co-occurring problem behaviors (substance use, disordered eating, and poor medical adherence).

Table 3. Relative clinical strengths and challenges of peer interventionists.

Resource utilization (time)

Technical support tasks included session audio review to flag for clinical review, creation of feedback sheets, and notation of consultation minutes. At an average rate of three client participant sessions per week, staff reported spending 6–7 hr reviewing session audio and 1–2 hr creating feedback sheets and taking consultation notes, for a total of 7–9 hr weekly. Clinician tasks included review of audio segments flagged for clinical review, reviewing feedback sheets, and providing group and individual consultation. With an average of three sessions per week, clinician tasks took an average of 0.5–1 hr of audio and feedback sheet review, 1 hr of group consultation, and 1–3 hr of individual consultation, for a total of 2–5 hr weekly.

Discussion

This article reports on findings from the process evaluation of an effectiveness-implementation pilot study of a peer-delivered intervention for PTSD (abbreviated STAIR). We aimed to characterize the training and consultation needs of peers serving as primary interventionists and to describe our approach to addressing these needs over the course of a pilot study. We also examined relations between training and consultation, fidelity, treatment outcome, and client satisfaction. Fidelity was attained quickly and remained consistently high over time. This may be one reason why fidelity was positively associated with client satisfaction, but not associated with PTSD outcomes. Our finding reflects the complex and likely nuanced relations between fidelity and treatment outcome, as the association between the two in the extant literature is equivocal (Perepletchikova, 2011; Perepletchikova & Kazdin, 2005). Although ensuring fidelity in the context of effectiveness and efficacy studies remains central to verifying that interventions are delivered as intended, the importance of fidelity-oriented approaches to training and consultation needs further study.

We deployed a consultation and feedback approach, which entailed near real-time fidelity assessments to support continuous improvement. Written feedback was provided individually, and verbal feedback was provided through weekly group consultation. Near real-time monitoring allowed us to understand and respond to unique challenges that peers were experiencing as they were delivering the intervention. For example, we found that peer interventionists may need more support with pacing and being more directive in therapist interaction, providing theoretical rationale for tools and homework, and applying cognitive restructuring tools. This is not surprising, or necessarily problematic, given that peers are often trained to be non-directive in their typical supportive roles. Our intervention is a hybrid professional-peer approach, in which some tenets of the peer model were embedded in a typically directive cognitive behavioral approach. Consultation and training were dynamic and responsive to expected knowledge gaps in PTSD assessment, diagnosis, and treatment models and approaches. It is important to acknowledge that professional providers also struggle with these challenges (Foa et al., 2013) and that they are not unique to peer interventionists. Symptom tracking also allowed us to ensure safety; however, no issues arose in this regard.

Post hoc analyses indicated that “dosage” of consultation (hours received) was not related to improvements in fidelity over time. We think this may have been due to our process of selecting peer interventionists. For example, the CWG nominated peers who had prior training in manualized treatments (e.g., WRAP; Copeland, 2002) and had gleaned general counseling skills from facilitating support groups within the organization. We found that competency benchmarks were attained by all peer interventionists within the first month of the project. One implication may be that intensity of review and feedback could have been decreased for the duration of the project. However, we suspect that if our interventionists had not been selected through supervisor nomination, some interventionists may have been slower to meet competency benchmarks and may have benefited from our more intensive approach.

Despite not finding an association between consultation dosage and fidelity, there is evidence that indicates providing consultation improves fidelity in professional interventionists (Bein et al., 2000; Bellg et al., 2004; Fixsen et al., 2005; Harned et al., 2013; Stirman et al., 2012). The field has recently started to shift toward strategies that support ongoing consultation to improve and maintain treatment fidelity, and ensure that behavioral health treatments are responsive to the needs of consumers (Haine-Schlagel et al., 2013; Stirman et al., 2017). Future research utilizing peer interventionists with more variable training histories and skill sets may help us better understand the impact of our approach to consultation on intervention effectiveness and sustainability in settings where peers serve.

Limitations

The current study is limited in terms of the generalizability of our findings to implementation in other peer-led organizations or with other interventions due to the small sample size of peer interventionists and participants. We presume that our peer interventionists represent a select group that may be most likely to succeed in delivering manualized treatments and is likely not representative of the average peer worker. Our findings may also not generalize to other behavioral health interventions. However, it is promising that the skills presented in abbreviated STAIR are very similar to those included in other cognitive behavioral therapies, including skills focused on behavioral activation, cognitive restructuring, mindfulness and relaxation, and distress tolerance (Fenn & Byrne, 2013; Gaudiano, 2008). We suspect that our findings may not generalize to exposure-based therapies for PTSD, which entail direct engagement with trauma memory and reminders and require more specialized therapeutic skills, but this is worth exploring in future research.

To our knowledge, we are the first to use the CTS-R to evaluate competency in peer interventionists. There may be a need for further adaptation of this tool to better capture competencies of a hybrid approach and honor some of the strengths of the peer model that were not incorporated into the competency assessments (e.g., reducing stigma). Using the CTS-R, we held peers to the professional standard, which was the aim of this project, but perhaps not the aim for use of this intervention in real-world settings.

Implications

Although our approach to training and consultation was successful during this trial, we identified some challenges to implementation in real-world peer settings. For example, most peer organizations do not have clear structures or resources to provide this level of consultation. Time and clinical support limitations also pose challenges to providing clinical consultation within peer-based organizations. Most importantly, feedback from peer interventionists indicated that having professional interventionists come into peer spaces may not be acceptable or appropriate based on previous negative experiences or direct harms from professional care systems. Thus, researchers and organizations integrating peer workers must address both the logistical challenges and ethical implications of professional-level consultation within peer spaces. These challenges may be less problematic where peers are embedded within professional care teams.

Because peers are so heterogeneous in terms of experience and health, serve a wide range of functions, and have different sets of strengths, we do not suggest that all peers could or should deliver manualized interventions. However, those with interest and ability could help expand the behavioral health workforce (Alegría et al., 2007; Dinwiddie et al., 2013; Lê Cook et al., 2012) and may have a place in our health care system. To do so, organizations and researchers must develop best practices for hiring and selecting peer specialists as interventionists. We acknowledge that the nomination process we applied may be more challenging for initial hiring and within organizations without formalized supervision structures that allow for leadership to so intimately understand the strengths and weaknesses of each peer.

Future studies are warranted to develop tools for monitoring competence in real time, which include intervention-specific and general therapeutic skills relevant to quality care. Importantly, the general therapeutic skills assessed should include those promoted in the peer model (e.g., “mutuality”), as the measures of competence utilized in the current study and in other studies of peer delivery are often developed for professional interventionists within medical settings (Cronise et al., 2016; Gomez, 2013). These measures may not be representative of peer specialist competence or the skills that are particularly valued by those seeking peer services, including those who may have had negative or stigmatizing experiences with professional medical treatment. Recent developments in peer-specific competence measures are promising (Crisanti et al., 2019; Hunt & Byrne, 2019). This follows national trends, as many states using peer-led programs are looking to identify core proficiencies (Daniels et al., 2009).

Our ongoing assessment of fidelity required significant administrative and clinical time, as the research team reviewed and rated almost every session delivered. While this approach allowed us to characterize our consultation-with-feedback approach in the current trial, it would not be feasible in a scaled-up trial. Thus, it is important to balance effectiveness and efficiency in measuring fidelity (Schoenwald et al., 2011). Competence benchmarking approaches have demonstrated effectiveness in ensuring that fidelity is sufficient for intervention effectiveness (Breitenstein et al., 2010; Cross & West, 2011; Ringerman et al., 2006). It may not be necessary to review and rate every session, although this may be helpful until a certain threshold of competence is reached, after which periodic fidelity checks can be used to reduce drift. For example, in a study of a transdiagnostic treatment, researchers provided 6 months of weekly consultation, routine audio recording and submissions, and written feedback on two sessions (Creed et al., 2016). Monson and colleagues (2018) explored a consultation model for cognitive processing therapy for PTSD in which selected audio segments of sessions were reviewed during 6 months of weekly consultation meetings, and full fidelity assessment and feedback were provided after consultation. Finally, the need for intensive review and feedback may change over time. Therefore, approaches to training need to be dynamic and responsive to the unique training gaps of interventionists, especially peer interventionists who may have understandable knowledge gaps at the onset of training.

Conclusion

Due to the potential of peer specialists to expand the behavioral health workforce and to engage individuals otherwise missed by the medical/professional model, the current study aimed to characterize the training and consultation needs of peer interventionists delivering a cognitive behavioral intervention for PTSD. We found that peer interventionists demonstrated high fidelity to the intervention. Adherence and competence were associated with client satisfaction, suggesting that fidelity to the intervention may be key to developing and adapting acceptable interventions. Further study is warranted to examine the impact of consultation with feedback on fidelity improvement and maintenance, and to identify more effective and efficient ways to implement the real-time fidelity assessments needed for continuous improvement.

Footnotes

Acknowledgements

The authors thank the leadership of the Metro Boston Recovery Learning Community and peer specialists Idony Lisle, Michael Kanter, Brian Shea, and Gary Bromley for their support as partners and interventionists over the course of the project. They also thank Dr Christie Jackson, who provided training to study interventionists and advised on the consultation process.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was funded by the Boston University Clinical and Translational Science Institute (BU-CTSI) Integrated Pilot Grant Program and the National Institutes of Health (1UL1TR001430). Dr Valentine’s time was supported by the National Institute of Mental Health (K23MH117221).