Abstract

Background:

Most evidence-based practices in mental health are complex psychosocial interventions, but little research has focused on assessing and addressing the characteristics of these interventions, such as design quality and packaging, that serve as intra-intervention determinants (i.e., barriers and facilitators) of implementation outcomes. Usability—the extent to which a product can be used by specified users to achieve specified goals with effectiveness, efficiency, and satisfaction—is a key indicator of design quality. Drawing from the field of human-centered design, this article presents a novel methodology for evaluating the usability of complex psychosocial interventions and describes an example “use case” application to an exposure protocol for the treatment of anxiety disorders with one user group.

Method:

The Usability Evaluation for Evidence-Based Psychosocial Interventions (USE-EBPI) methodology comprises four steps: (1) identify users for testing; (2) define and prioritize EBPI components (i.e., tasks and packaging); (3) plan and conduct the evaluation; and (4) organize and prioritize usability issues. In the example, clinicians were selected for testing from among the identified user groups of the exposure protocol (e.g., clients, system administrators). Clinicians with differing levels of experience with exposure therapies (novice,

Results:

Average IUS ratings (80.5;

Conclusion:

Findings from the current study suggested the USE-EBPI is useful for evaluating the usability of complex psychosocial interventions and informing subsequent intervention redesign (in the context of broader development frameworks) to enhance implementation. Future research goals are discussed, which include applying USE-EBPI with a broader range of interventions and user groups (e.g., clients).

Plain language abstract:

Characteristics of evidence-based psychosocial interventions (EBPIs) that impact the extent to which they can be implemented in real world mental health service settings have received far less attention than the characteristics of individuals (e.g., clinicians) or settings (e.g., community mental health centers), where EBPI implementation occurs. No methods exist to evaluate the usability of EBPIs, which can be a critical barrier or facilitator of implementation success. The current article describes a new method, the Usability Evaluation for Evidence-Based Psychosocial Interventions (USE-EBPI), which uses techniques drawn from the field of human-centered design to evaluate EBPI usability. An example application to an intervention protocol for anxiety problems among adults is included to illustrate the value of the new approach.

Background

Complex interventions (i.e., those with several interacting components) are common in contemporary health care (Craig et al., 2013). In mental health, the majority of evidence-based practices are complex

A wealth of research has focused on identifying multilevel determinants (i.e., barriers and facilitators) of implementation (Krause et al., 2014), most often specifying factors at the individual and organization/inner setting levels. Much less frequently targeted are intervention-level determinants (i.e., characteristics of EBPIs themselves; Dopp et al., 2019). This is surprising given long-standing recognition that intervention-level determinants are critical to successful implementation (Schloemer & Schroder-Back, 2018). Classic frameworks such as Rogers’ (1962)

Evaluation of intervention-level determinants

Attention to intervention-level determinants is most prominently reflected in research on intervention modification (Chambers & Norton, 2016). Extant frameworks tend to

Human-centered design and EBPI usability

We draw on methods from the field of human-centered design (HCD; also known as user-centered design). Most EBPIs have been developed independent from the HCD field, which has sought to clearly operationalize the concepts and metrics that reflect good design. As a result, EBPIs often have not been designed for typical end users and contexts of use, exacerbating the need for adaptations. As discussed later, EBPI users (i.e., the individuals who interact with a product) are often diverse, but primary users typically include both service providers and service recipients. HCD is focused on developing compelling and intuitive products, grounded in knowledge about the people and contexts where an innovation will be deployed (Courage & Baxter, 2005). Although the application of HCD methods has typically been limited to digital technologies, their potential for broader applications in health care is increasingly recognized (Roberts et al., 2016). Lyon and Koerner (2016) applied HCD principles to the tasks of EBPI development and redesign, suggesting that EBPI designs should demonstrate high learnability, efficiency, memorability, error reduction, a good reputation, low cognitive load, and should exploit natural constraints (i.e., incorporate or explicitly address the static properties of an intended destination context that limit the ways a product can be used). Collectively, these design goals reflect key drivers of EBPI

Evaluation of usability is increasingly routine in digital health (National Cancer Institute [NCI], 2007), but systematic usability assessment procedures have never been applied to EBPIs. This is problematic given that EBPI usability strongly influences implementation outcomes that, in turn, drive clinical outcomes (Lyon & Bruns, 2019). Usability testing of EBPIs is critical because such assessments (1) allow for evaluation of intervention characteristics likely to be predictive of adoption (Rogers, 2010) and (2) uncover critical usability issues that could subsequently be addressed via prospective adaptation (i.e., redesign; Chambers et al., 2013). This information is relevant across multiple stages of intervention development, testing, and implementation by driving initial design, modification, and selection (e.g., of the most usable interventions) for research and practice applications. Presently, no methodologies exist to accomplish these goals.

Current aims

This article presents (1) a novel methodology for identifying, organizing, and prioritizing usability issues for psychosocial interventions and (2) an example application to an exposure procedure for anxiety disorders. Exposure is among the most effective interventions for disorders, such as obsessive compulsive disorder (Tryon, 2005). During exposure, clinicians support clients to approach fear-producing stimuli (exposure) while preventing fear-reducing behaviors, such as compulsions or other avoidance strategies (Himle & Franklin, 2009). Although the example used is specific to mental health and, for simplicity, incorporates only one user group (clinicians), the methodology is intended to be generalizable. Furthermore, while the feasibility of the usability testing techniques varies across settings (see Step 3, below), the methodology is intended for use by a range of professionals, including intervention developers, implementation researchers, implementation practitioners, and organizations interested in adopting EBPIs.

Methods

The

Steps of USE-EBPI methodology. A figure depicting the inputs, techniques, and outputs used across all phases of the method.

Step 1: identify users/participants

Explicit identification of representative end users is a basic tenet of HCD (Cooper et al., 2007). Product developers tend to underestimate user diversity and base designs on people like themselves (Cooper, 1999; Kujala & Mantyla, 2000), but explicit user identification produces more usable systems (Kujala & Kauppinen, 2004).

The USE-EBPI framework proposes a systematic user identification process (Table 1) drawn from the larger testing literature (Hackos & Redish, 1998; Kujala & Kauppinen, 2004). As indicated by the funnel shape for Step 1 (Figure 1), each stage of identification narrows the potential participant pool. The first sub-step is brainstorming an overly inclusive, preliminary list of potential users (e.g., clinicians, clients, system administrators). For the exposure protocol, potential users included all behavioral health clinicians who treat exposure-relevant anxiety, the clients who experience it, and the supervisors who support those clinicians. Other potential user groups (e.g., implementation intermediaries, service system administrators) were considered but determined to be too distal to the study aims.

EBPI usability test user/participant identification process (Step 1).

EBPI: evidence-based psychosocial intervention.

Second, the most relevant subset of user characteristics is articulated, which may include personal characteristics (e.g., prior EBPI training or attitudes toward EBPIs [clinician]; expectations or prior treatment experiences [client]), task-related characteristics (e.g., experience with the specific EBPI, frequency of usage), and setting characteristics (e.g., intervention setting). User characteristics most relevant to the test of the exposure protocol included experience delivering or supervising exposure interventions (clinicians, supervisors) and anxiety severity (clients).

Third, primary user groups (i.e., the core group[s] expected to use a product) are described and prioritized, with potential adjunctive input from secondary users (Cooper et al., 2007). Primary EBPI users often include clinicians and clients, while secondary users may include caregivers (for interventions that do not target them directly), system administrators (who often make adoption decisions), implementation intermediaries (who work to enhance EBPI adoption), and paraprofessionals (who may direct clients to interventions; Lyon & Koerner, 2016). Explicitly articulated negative users or nonusers may be deprioritized. In our example, clinicians and clients were primary users. However, only clinicians were selected for testing (Table 5) given the modest goals of the USE-EBPI pilot and because the exposure protocol materials were designed to be primarily clinician facing. Clinicians interested in exposure interventions were prioritized, and uninterested clinicians were identified as nonusers.

Fourth, typical and representative users are selected for testing. For tests involving more than a small number of users (e.g.,

Step 2: define and prioritize the EBPI’s components

Because it is rarely feasible to conduct a usability test of the entirety of an EBPI’s features, it is essential to constrain the scope of components included. The USE-EBPI framework delineates four types of EBPI components for testing, organized into two different categories, tasks and packaging (Table 2).

EBPI tasks and packaging components (Step 2).

EBPI: evidence-based psychosocial intervention; SUDS: subjective units of distress.

EBPI tasks

EBPIs include critical tasks that must be accomplished to have their intended effects. First,

Second,

EBPI packaging

EBPI packaging refers to the static properties of how tasks are organized, communicated, or otherwise supported. Packaging includes both EBPI artifacts and parameters (Table 2).

EBPI

Prioritizing EBPI components

Tasks and packaging can be prioritized for usability testing based on whether they represent core intervention components and whether there are known or suspected usability issues that may impact implementation. The actual exposure procedures in the example protocol above met both of these criteria. Although packaging is more likely to be the “adaptable periphery” of the EBPI, rather than a “core component” (Damschroder et al., 2009), key artifacts or parameters of an EBPI’s packaging that are critical to effectiveness (e.g., brief exposure guide) also are likely to be core components. Core components may be identified based on (1) theory or logic models that specify causal pathways, (2) empirical unpacking studies that test the necessity of components, or (3) research evaluating the mechanisms through which interventions impact outcomes (Kazdin, 2007). Known or suspected usability problems with an EBPI’s component tasks and packaging may also be prioritized (e.g., information from the literature about underuse of exposure procedures among community clinicians). Step 2 of USE-EBPI should result in a prioritized list of components with which users most need to interact to achieve an EBPI’s desired outcomes.
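The two prioritization criteria described above can be illustrated with a short sketch. This is not part of the published method: the `Component` class, the `prioritize_components` function, and the ordering rule (rank by the number of criteria met) are assumptions introduced for illustration, and the flags assigned to the fear hierarchy examples are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Component:
    name: str
    category: str          # "task" or "packaging"
    is_core: bool          # core intervention component?
    suspected_issue: bool  # known or suspected usability issue?


def prioritize_components(components):
    """Order components by how many of the two criteria they meet (most first).

    Assumption: counting criteria is one simple ordering rule; the source
    does not prescribe a scoring formula.
    """
    return sorted(
        components,
        key=lambda c: int(c.is_core) + int(c.suspected_issue),
        reverse=True,
    )


# Illustrative input; the flags for the last entry are hypothetical.
candidates = [
    Component("fear hierarchy examples", "packaging", False, False),
    Component("brief exposure guide", "packaging", True, False),
    Component("exposure procedures", "task", True, True),
]

for component in prioritize_components(candidates):
    print(component.name)
```

A design team would likely weigh additional information (e.g., feasibility of testing a component) rather than rely on a count alone.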

Step 3: plan and conduct the tests

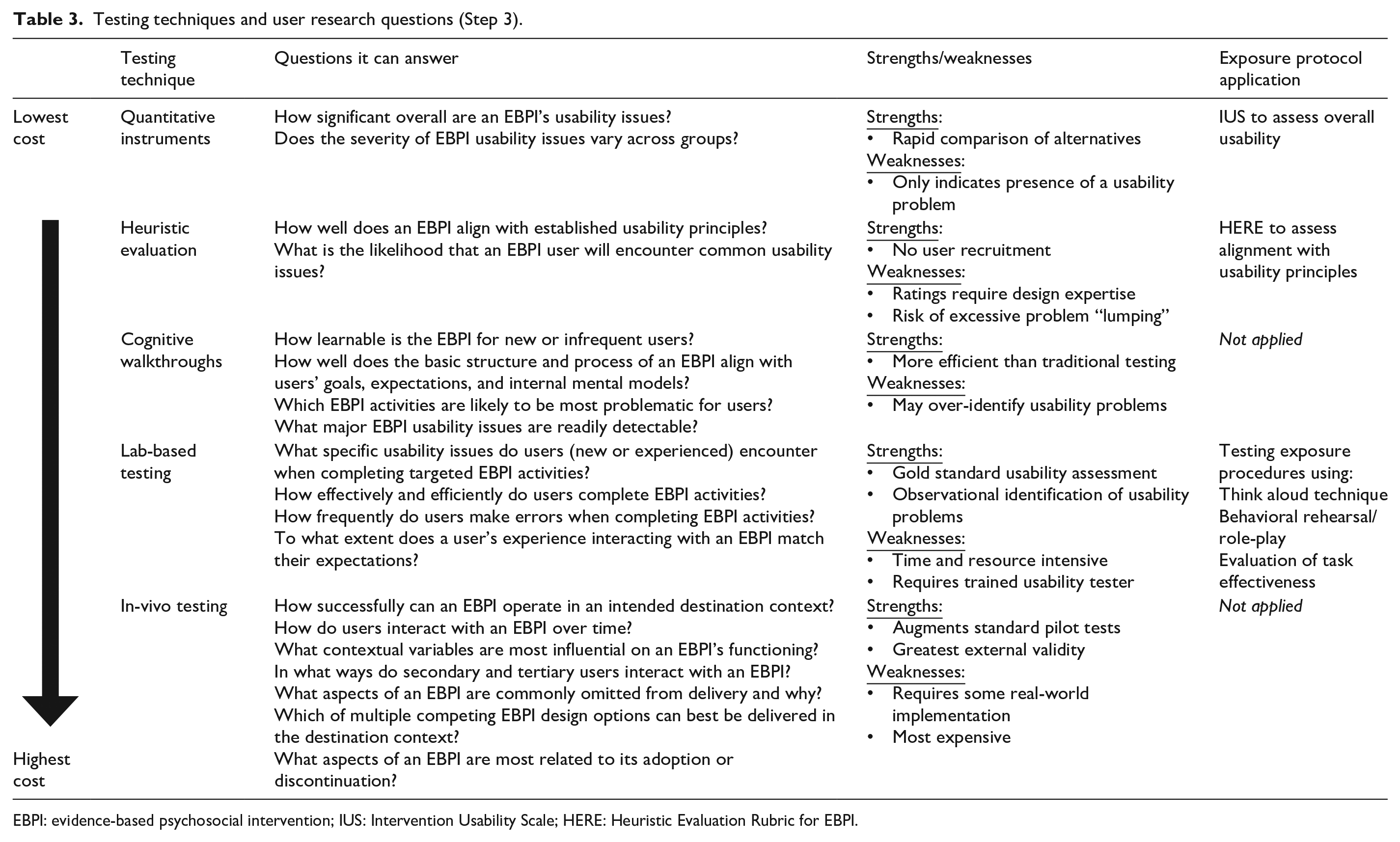

Usability tests should systematically document usability problems, confirming those already suspected (e.g., derived from the literature) and eliciting new issues. USE-EBPI provides a standard set of user research questions to drive selection of testing techniques (Table 3). Categories of testing techniques include (a) quantitative instruments; (b) heuristic evaluation; (c) cognitive walkthroughs; (d) lab-based testing; and (e) in vivo testing. Only a subset will be relevant to any particular EBPI testing process. In USE-EBPI, we suggest triangulation using complementary methods (e.g., quantitative and lab-based).

Testing techniques and user research questions (Step 3).

EBPI: evidence-based psychosocial intervention; IUS: Intervention Usability Scale; HERE: Heuristic Evaluation Rubric for EBPI.

User research questions that drove the example application of USE-EBPI included: (1) What is the overall level of usability for components of the exposure protocol and related materials for more experienced and less experienced users? (2) To what extent does the protocol align with established usability principles? (3) How effectively can users complete an exposure task? and (4) What specific usability issues do users experience when interacting with the protocol? Drawing from Table 3, testing methods selected to address these questions included the use of a quantitative instrument, a heuristic evaluation checklist, and lab-based usability testing (Table 4). All five USE-EBPI testing techniques are presented below to provide a comprehensive description of the USE-EBPI method.

Adapted User Action Framework (UAF) for organizing EBPI usability issues (Step 4).

EBPI: evidence-based psychosocial intervention.

Demographics of participants.

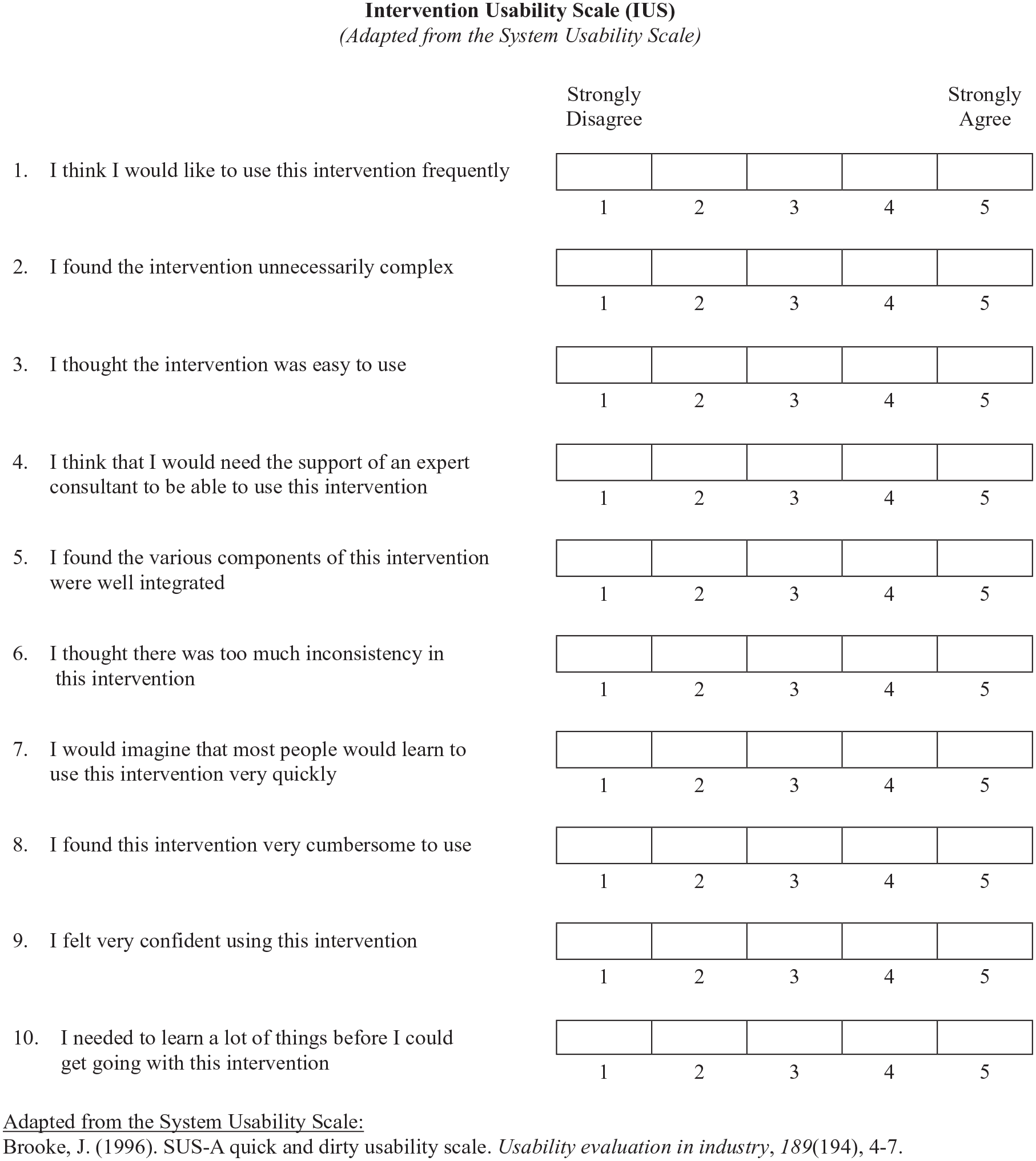

Quantitative instruments

A wide variety of quantitative instruments exist to identify usability problems. Tools, such as the robust 10-item System Usability Scale (SUS [Brooke, 1996; Sauro, 2011]) are completed directly by users. Our research team has created an adapted version of the SUS for EBPIs (i.e., IUS—Figure 2). Nevertheless, USE-EBPI de-emphasizes quantitative measures as a first line approach. They efficiently identify the

The IUS, as applied in the current project.
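For readers unfamiliar with how SUS-type instruments produce a 0–100 score, the sketch below implements the standard SUS scoring rule (Brooke, 1996). It assumes that the IUS keeps the SUS structure of 10 items rated 1 to 5, with odd-numbered items worded positively and even-numbered items worded negatively; that assumption is not confirmed in the text here, and the function name is hypothetical.

```python
def sus_style_score(responses):
    """Convert ten 1-5 item responses to a 0-100 score using standard SUS scoring.

    Assumption: the IUS follows the SUS item order and scoring rule.
    """
    if len(responses) != 10:
        raise ValueError("expected 10 item responses")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("responses must be between 1 and 5")

    total = 0
    for index, response in enumerate(responses):
        if index % 2 == 0:
            # Items 1, 3, 5, 7, 9 (positively worded): contribution = response - 1
            total += response - 1
        else:
            # Items 2, 4, 6, 8, 10 (negatively worded): contribution = 5 - response
            total += 5 - response
    # Each item contributes 0-4, so the sum (0-40) is scaled to 0-100.
    return total * 2.5


# A respondent who answers the midpoint on every item scores 50.
print(sus_style_score([3] * 10))
```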

Heuristic evaluation

Heuristic evaluation involves expert review of a system or interface while applying a set of guidelines that reflect good design principles (Nielsen, 1994). Within USE-EBPI, heuristic evaluation involves ratings from multiple individuals with expertise in EBPI design who independently review all relevant task and packaging components. Although these heuristics should be selected or adjusted according to the specific needs of the evaluation, the design goals articulated by Lyon and Koerner (2016) reflect USE-EBPI’s default set (i.e., learnability, efficiency, memorability, error reduction, low cognitive load, and exploit natural constraints), with the exception of reputation (see Heuristic Evaluation Rubric for EBPIs [HERE], Figure 3). Evaluation is inherently mixed methods, with quantitative ratings as well as qualitative justification of those ratings for data complementarity and expansion (Palinkas et al., 2011). While an evaluator may spend multiple hours reviewing an EBPI manual and all associated materials, heuristic evaluation remains relatively efficient. Nevertheless, drawbacks include a risk of “lumping” different usability problems together, thus creating a list of problems with suboptimal specificity (Keenan et al., 1999; Khajouei et al., 2018). Heuristic analysis is also best applied by experts in design principles, the content area, or both (Nielsen, 1994), expertise that might not be available to all research teams.

The HERE, as applied in the current project.

HERE was selected to evaluate the exposure protocol in part because multiple members of the study team had adequate expertise in HCD. Three experts conducted independent HERE evaluations of all available artifacts (i.e., a how-to manual for the exposure protocol, a brief exposure guide, a core fear map, and fear hierarchy examples). Raters reviewed all materials twice, once to understand the overall scope of the protocol and materials, and again to rate and log specific usability issues.

Cognitive walkthroughs

Cognitive walkthroughs are more resource intensive than heuristic analyses largely because they require representative users. Although there are multiple approaches, walkthroughs generally focus on leading individual users or groups of users through key aspects of a product to identify the extent to which the product aligns with their expectations or internal cognitive models (Mahatody et al., 2010). Drawing from existing walkthrough procedures (Bligård & Osvalder, 2013), USE-EBPI presents users with common use scenarios and, using a sequential, mixed-methods data collection approach (Palinkas et al., 2011), asks them to rate whether they will be able to perform the correct actions (ranging from “A very good chance of success [5]” to “No/a very small chance of success [1]”) and then to provide justifications. Average success ratings identify qualitative responses that require more in-depth review (e.g., via systematic content analysis [Hsieh & Shannon, 2005]). Despite their efficiency, cognitive walkthroughs tend to over-identify potential usability problems (Health and Human Services, n.d.). Although they were not applied in the exposure protocol example, walkthroughs were considered as a lower-cost alternative to more intensive lab-based user testing.
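The use of average success ratings to target qualitative review can be sketched as follows. The review threshold of 3.0, the function name, and the scenario labels and ratings are all hypothetical; the source specifies only the 1 to 5 rating scale and that averages guide which responses receive closer review.

```python
from statistics import mean


def flag_scenarios(ratings_by_scenario, threshold=3.0):
    """Return (scenario, mean rating) pairs with a mean below the threshold.

    Results are sorted from lowest to highest mean so that the scenarios
    users felt least able to complete are reviewed first.
    Assumption: the threshold is illustrative, not part of USE-EBPI.
    """
    averages = {
        scenario: mean(ratings)
        for scenario, ratings in ratings_by_scenario.items()
    }
    flagged = [(s, avg) for s, avg in averages.items() if avg < threshold]
    return sorted(flagged, key=lambda pair: pair[1])


# Hypothetical ratings (1 = no/very small chance of success, 5 = very good chance).
example = {
    "build fear hierarchy": [5, 4, 5],
    "respond to reassurance seeking": [2, 3, 1],
    "select exposure target": [3, 2, 3],
}

for scenario, average in flag_scenarios(example):
    print(scenario, round(average, 2))
```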

Lab-based user testing

Individual, task-based user testing with observation is a hallmark of HCD because it captures direct interactions between users and features of a product. Typically, testing involves presenting a series of scenarios and observing how successfully and efficiently users complete a set of discrete tasks. EBPI usability tests build on established behavioral rehearsal methods (e.g., Beidas et al., 2014), but with the novel objective of evaluating the intervention instead of assessing user competence. First, participants are often instructed “

In our example application, 10 representative users were recruited from an existing clinical practice network. Institutional review board approval was obtained by the second author from the Behavioral Health Research Collective. Clinicians were invited via recruiting emails. Interested participants (

Following testing, the notes from each session were analyzed using an inductive qualitative content analysis procedure (Bradley et al., 2007; Hsieh & Shannon, 2005). Two members of the research team reviewed all notes independently, generated codes for identified usability problems, rated task completion success (i.e., effectiveness; coded “failure,” “partial success” [with 1+ errors], or “successful”), and then met to compare their coding and reach consensus judgments (Hill et al., 2005). Usability issues were defined as aspects of the intervention or its components, and/or demands on the user, that make it unpleasant, inefficient, onerous, or impossible for the user to achieve their goals in typical usage situations (Lavery et al., 1997).

In vivo user testing

Unlike lab-based testing, in vivo testing involves more extensive applications of an EBPI in a destination context over longer periods of time, which allows for evaluation of the ways in which it interacts with contextual constraints. In vivo testing has the potential to expand the traditional acceptability and feasibility goals of pilot studies (Westlund & Stuart, 2017) with usability evaluation objectives. To be completed successfully, in vivo testing inherently requires some degree of intervention implementation and, as a result, is the most expensive (and also the most externally valid) method of evaluating usability. If feasible to collect, real-world adherence data may be conceptualized as an indicator of EBPI task effectiveness. A/B testing, in which two designs are implemented simultaneously (e.g., an original design and a novel, alternative design) to determine whether one is superior (Albert & Tullis, 2013), is particularly useful during in vivo testing. Due to time and resource constraints, it was not feasible for the example application of USE-EBPI to conduct in vivo user testing. Tradeoffs between the costs of time, money, and expertise and the quality of information obtained require care in selecting and balancing usability techniques.

Step 4: organize and prioritize identified usability issues

Regardless of the techniques used, USE-EBPI classifies and prioritizes the identified usability problems with a structured method. Usability taxonomies provide a means for the consistent and accurate classification, comparison, reporting, analysis, and prioritization of usability issues (Jeffries, 1994; Keenan et al., 1999). To organize and prioritize usability issues, USE-EBPI adapts an existing taxonomy for categorizing usability problems, the User Action Framework (UAF; Khajouei et al., 2011). The UAF was selected because it is theoretically driven and has demonstrated reliability among experts for categorizing usability problems (Andre et al., 2001).

Organize

The augmented version of the UAF is based on a theory of the interaction cycle (Norman, 1986) and states that, in any interaction, users begin with goals and intentions, and engage in (1)

Prioritize

Finally, most usability evaluation approaches include a process for determining the potential impact of each identified problem (Medlock et al., 2002; NCI, 2007). In the UAF, prioritizing based on severity and impact focuses redesign efforts on those problems that severely hinder key interactions, ensuring that only essential elements that need fixing receive attention. USE-EBPI employs revised UAF ratings in which priority (ranging from “low priority” [1] to “high priority” [3]) is assigned to each identified problem by two or more independent team members based on its (1) impact on users, (2) likelihood of a user experiencing it, and (3) criticality for an EBPI’s putative change mechanisms. Ratings are averaged across reviewers. In the example application, two research team members independently rated each usability problem, and mean scores were calculated (see the section “Results”). Although ratings inform prioritization, all decisions about which problems to address first in an EBPI redesign effort should be made by the design team when considering all available information.
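The averaging step can be sketched in a few lines. The function name and the example problems and ratings are hypothetical; the 1 to 3 scale and the requirement of two or more raters come from the description above, and the 2.5 cutoff for top priority issues is the one reported in the Discussion.

```python
from statistics import mean


def prioritize_problems(ratings, top_cutoff=2.5):
    """Average 1-3 priority ratings across raters and rank problems.

    ratings: dict mapping a problem label to a list of ratings, one per rater.
    Returns (problem, mean priority, is_top_priority) tuples, highest mean first.
    """
    ranked = []
    for problem, scores in ratings.items():
        if len(scores) < 2:
            raise ValueError(f"{problem!r} needs ratings from two or more raters")
        if any(score < 1 or score > 3 for score in scores):
            raise ValueError(f"{problem!r} has a rating outside the 1-3 scale")
        average = mean(scores)
        ranked.append((problem, average, average >= top_cutoff))
    return sorted(ranked, key=lambda item: item[1], reverse=True)


# Hypothetical problems and ratings from two raters.
example = {
    "unclear labeling in brief guide": [3, 2],
    "jargon in processing section": [1, 2],
    "no warning about reassurance": [3, 3],
}

for problem, average, is_top in prioritize_problems(example):
    print(problem, average, is_top)
```

The ranked list is an input to the design team's discussion rather than a decision rule, consistent with the caveat above.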

Results

Quantitative ratings

IUS ratings (scale: 0–100) ranged from 65 to 85, with a mean of 80.5 (

Heuristic evaluation

HERE ratings (scale: 1–10) indicated a mean overall assessment rating of 7.33 (

HERE evaluation ratings.

HERE: Heuristic Evaluation Rubric for EBPI; EBPI: evidence-based psychosocial intervention.

Lab-based testing

Task effectiveness

Task completion during the behavioral rehearsal was coded for nine of the 10 participants (one participant did not attempt the task). Two novice participants (66%) and one intermediate participant (25%) failed the exposure task. No advanced participants failed the task. Reasons for failure included engaging in contraindicated behaviors, such as providing reassurance to the client during the exposure and unilaterally selecting the easiest trigger from a fear hierarchy (rather than collaboratively choosing something mid-range). No participants were coded as achieving partial success.

Usability problem prioritization

Consensus coding yielded 13 distinct usability problems. In Table 7, usability problems are organized based on priority scores, as these account for both likelihood of occurrence and anticipated impact. Usability problem priority scores from the UAF across the two raters were correlated at

Prioritization and categorization of usability problems (Steps 3 and 4 results).

UAF: User Action Framework.

Clinician experience level (1 circle = 1 participant; darkened if impacted; gray if not impacted): novice, intermediate, advanced.

Usability problem organization

Application of the UAF interaction cycle to the usability issues indicated that most impacted more than one step of that cycle (Table 7). Seven of the usability issues interfered with the

Discussion

Complex psychosocial interventions are common in contemporary health care services. Their usability is a critical, but understudied, determinant of implementation outcomes. Evaluation of usability provides insights to drive adoption decisions as well as proactive adaptation to improve intervention implementability (Lyon et al., 2019). USE-EBPI is the first method developed to directly assess the usability of complex psychosocial interventions.

USE-EBPI application to exposure protocol components

IUS results indicated that overall clinician-rated usability of the exposure protocol components tested was good, based on established SUS norms. For comparison, mean ratings were comparable with SUS ratings of the iPhone, but lower than a typical microwave oven (Kortum & Bangor, 2013). This indicates that, while the materials could be improved, the current state is likely acceptable for many users. Nevertheless, differences in IUS ratings by clinician experience level illustrate the value in stratifying by experience. Advanced clinicians viewed the materials near the “excellent” range, whereas practitioners less experienced with exposure were more impacted by usability issues. IUS ratings were largely consistent with HERE rubric ratings by experts, which independently suggested moderate to good usability. However, HERE evaluation also yielded unique information about the protocol’s difficulty exploiting natural constraints, which provides potential direction for subsequent intervention adaptations.

Lab-based testing revealed additional detail about the specific usability problems and further underscored the utility of including users with varying experience levels. Interestingly, novice users attempted and correctly performed many aspects of the exposure, but were not able to rapidly identify and avoid

Implications for adaptation and redesign

Although it is beyond the scope of this article to detail the full EBPI adaptation or redesign processes that may result from the assessment described, results suggested how the intervention’s implementability may be enhanced. While usability testing is necessarily problem-focused, redesign decisions should be sure to retain known strengths and positive aspects of the intervention. Focusing redesign on the highest priority problems is intended to help avoid excessive adaptations that may not be critical to ensuring implementability.

Although any adaptation must ultimately be codified in the written intervention protocol (an artifact), adaptations informed by USE-EBPI might include those made to any aspects of the intervention (e.g., content, structures). As indicated above, the most critical usability issues concerned the need for clearer and more understandable signaling about behaviors that undermine the purported active mechanism of exposure (e.g., excessive reassurance). The exposure protocol may benefit from the following proactive adaptations. First, overall usability of the intervention might be improved by clearer labeling in the brief exposure guide. Second, the intervention could provide strategic and timely delivery of instruction to clinicians on how to use the guide before, during, and after a session for self-supervision. Third, more novice-friendly idioms and additional supports (e.g., example scripts) in ambiguous areas (e.g., “exposure processing”) would reduce confusion, especially for less experienced clinicians. Fourth, clearer visual grouping of content presented in select artifacts (e.g., the brief exposure guide) may enhance ease of comprehension. In addition, the assignment of UAF interaction cycle steps to each usability problem further facilitates redesign decisions. Top priority issues (i.e., those receiving a 2.5 or above) were least likely to involve the planning step, suggesting that appropriate adaptations might be focused less on communicating concepts in understandable ways, and more on their applications.

Limitations

The current application of USE-EBPI has a number of limitations. First, the method was only applied with one intervention. Future research should broaden its applications to a wider range of evidence-based programs and practices. Second, the study applied the method to only one of the identified primary user groups (clinicians). As described previously, USE-EBPI is intended to be applicable to a wide range of primary and secondary users. Future research should examine the extent to which EBPI usability testing with clinicians and clients surfaces unique problems to be considered during redesign. Finally, we did not collect explicit information about the extent to which the various USE-EBPI techniques were feasible for use by different stakeholders. Nevertheless, we expect that lower-cost approaches (e.g., quantitative instruments) may be readily applied by community-based stakeholders, whereas more detailed and time-intensive techniques will require expert support and/or more resources (see Table 3).

Conclusion

Intervention-level determinants of successful implementation are understudied in contemporary implementation research and few methods exist to identify EBPI components for prospective adaptation (Lyon & Bruns, 2019). The USE-EBPI methodology allows for evaluation of a critical intra-intervention determinant—intervention usability—for complex psychosocial interventions in health care. The current study provides preliminary evidence for its utility in generating information about the implementability of specific interventions as well as informing subsequent intervention redesign.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was supported, in part, by grants K08MH095939, R34MH109605, and P50MH115837, awarded by the National Institute of Mental Health.