Abstract

Background

In pathology and other specialties of diagnostic medicine, longitudinal studies and competency assessments often involve physicians interpreting the same images multiple times. In these designs, a washout period is used to reduce the chances that later interpretations are influenced by prior exposure.

Objective/s

The present study examines whether a washout period of 9 to 39 months is sufficient to prevent three effects of prior exposure when pathologists review digital breast tissue biopsies and render diagnostic decisions: shorter case review durations, higher confidence, and lower perceived difficulty.

Methods

In a longitudinal breast pathology study, 48 resident pathologists reviewed a mix of five novel and five repeated digital whole slide images during Phase 2, occurring 9–39 months after an initial Phase 1 review. Importantly, cases that were repeated for some participants in Phase 2 were novel for other participants in Phase 2. We statistically tested for differences in participants’ case review duration, self-reported confidence, and self-reported difficulty in Phase 2 based on whether the case was novel or repeated.

Results

We found no statistically significant differences in review time, confidence, or difficulty as a function of whether the case was repeated or novel in a Phase 2 review occurring 9–39 months after initial viewing; the same result was found in a subset of participants with a shorter (9–14 months) washout.

Conclusion

These results provide evidence to support the efficacy of at least a 9-month washout period in the design of longitudinal medical imaging and informatics studies to ensure no detectable effect of initial exposure on participants’ subsequent case review.

Keywords

Background

Longitudinal study designs involve repeated measurements of individuals over periods of time, often with follow-up measurements occurring years or even decades later. 1 Compared to cross-sectional study designs, longitudinal studies provide higher statistical power and stronger evidence about causal relationships. 2 In medical education research, longitudinal designs can reveal patterns of competency development as trainees progress toward independent clinical practice. 3 The advent of digital pathology and whole slide imaging (WSI) has made it possible to quantify resident pathologists’ behavior during biopsy review using web-based platforms, including gathering data on patterns of biopsy case navigation behavior4,5 and eye movements over critical case regions.6,7 These methods provide new quantitative metrics of clinical competency development, complementing traditional methods such as written exams and global rating forms used by program directors and mentors.8,9

Ideally, longitudinal studies examining proficiency in reviewing clinical cases will involve participants interpreting some of the same biopsies over the course of several years. 10 This design affords assessment of intra-participant reproducibility (in a research setting) and/or performance (in a training setting). However, the advantages of longitudinal designs all hinge on the assumption that participants do not remember cases seen previously in a study. In general, there are two memory systems likely involved in remembering images: explicit (declarative) and implicit (non-declarative) memory. 11 Explicit memory is intentional and conscious, whereas implicit memory influences behavior without conscious awareness or intentional recollection.12,13 While explicit memory for digital whole slide pathology images is very unlikely to exist after a 9-to-39-month washout period,14–17 it is unknown whether implicit memory processes have any practical influence on their review and diagnostic interpretation in the context of longitudinal pathology studies.

As noted by guidelines published by the College of American Pathologists (CAP),18,19 researchers are often concerned about how long case recognition persists.13,15,20,21 One study examining this question showed that after a 4-week washout period, pathologists can explicitly recognize nearly a third of the images they reviewed previously, 20 perhaps owing to their highly specialized domain expertise 22 and the presence of salient case features. 23 No study examining memory for medical images has examined lengthier washout periods, and this report is intended to fill this gap in the literature.

For this report, we analyze data from Phase 2 of a longitudinal study of resident pathologists. For each study participant, Phases 1 and 2 were separated by a 9-to-39-month washout period. We investigated whether resident pathologists demonstrate evidence of implicit memory for cases that were repeated (previously viewed) compared to cases that were novel (not previously viewed) at Phase 2. Implicit memory was tested in two ways. First, we examined case review durations (i.e., how long it took participants to review each case); if participants had an implicit memory of the case, we expected faster reviews in Phase 2 for repeated versus novel cases. Second, we examined subjective ratings of difficulty and diagnostic confidence for each case. If participants had an implicit memory of the case, we expected that during Phase 2 they would give repeated cases a lower difficulty rating and report higher confidence in their diagnosis compared to ratings on novel cases.

Methods

We analyzed data from a large, longitudinal study examining resident pathologists’ medical decision-making and diagnostic behavior during training.

Participants

Forty-eight (48) participants interpreted digital whole slide breast biopsy images (hereafter referred to as cases) at two time points (hereafter referred to as Phase 1 and Phase 2) separated by at least a 9-month washout period. Participants varied in experience, with 13 post-graduate year-2 (PGY2), 20 PGY3, and 15 ≥ PGY4 resident pathologists at Phase 2. Twenty-four (24) participants were female, 23 male, and one undisclosed, with an overall mean age of 35.5 years (SD 4.3 years). Three of the participants identified as Hispanic or Latino; thirty-nine (39) participants were White, two Black or African American, six Asian, and one American Indian/Alaska Native/Native Hawaiian/Other Pacific Islander.

Design and procedures

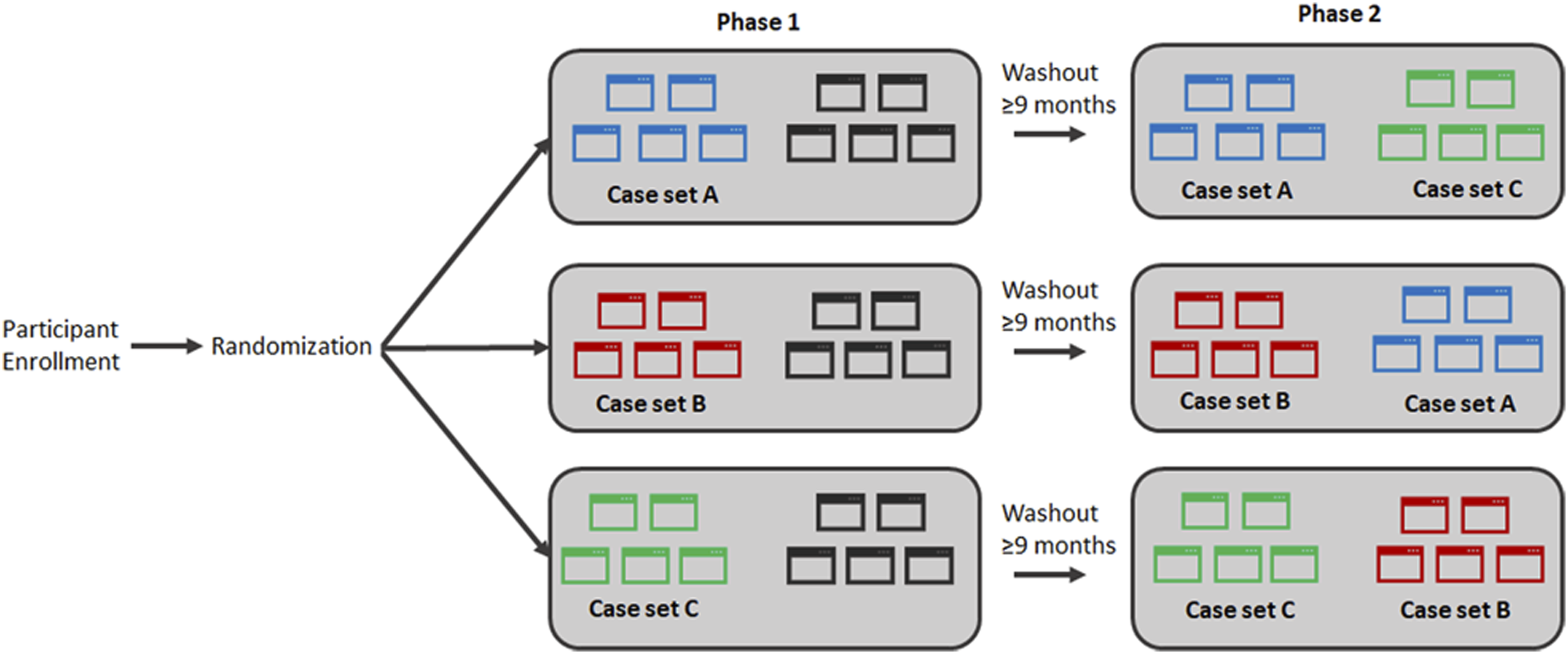

Each participant was randomized into one of three study arms (Figure 1). Our study design used three sets of five cases, with each set containing one case from each of five diagnostic categories: benign, atypia, low-grade and high-grade

24

ductal carcinoma in situ (lg-DCIS, hg-DCIS), and invasive carcinoma.

1

Critically, each participant reviewed two case sets in Phase 2, one set of five novel cases and one set of five repeated cases. Moreover, each case in Phase 2 was novel for some participants but repeated for other participants. This design feature allowed us to examine the same case in Phase 2 and compare participants who interpreted the case as novel or as repeated. Study design. Participants were randomized to one of three study arms. In Phase 1, each participant was shown either case set A, case set B, or case set C (as well as five additional cases) For Phase 2, each participant was shown 5 cases that were repeated from Phase 1 and also 5 novel cases, as illustrated. Analyses were performed on Phase 2 data only, leveraging the fact that each case in sets A, B, and C was viewed for the first time (novel) by some participants and for the second time (repeated) by other participants.
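The counterbalancing logic can be illustrated with a minimal sketch. The specific rotation used here (arm 1 repeats set A and sees set B as novel, and so on) is an assumption for illustration only; the actual pairing of repeated and novel sets per arm is the one shown in Figure 1. The function name `phase2_assignment` is hypothetical.

```python
CASE_SETS = ("A", "B", "C")


def phase2_assignment(arm):
    """Return (repeated_set, novel_set) for a study arm numbered 0, 1, or 2.

    Assumed rotation, not taken from the study: the repeated set is the
    arm's Phase 1 set, and the novel set is the next set in A -> B -> C -> A.
    """
    repeated = CASE_SETS[arm]
    novel = CASE_SETS[(arm + 1) % len(CASE_SETS)]
    return repeated, novel


# Build the assignment for all three arms.
assignments = {arm: phase2_assignment(arm) for arm in range(3)}

# Under this rotation, every case set is repeated for one arm
# and novel for a different arm, which is what the analysis relies on.
repeated_sets = sorted(rep for rep, _ in assignments.values())
novel_sets = sorted(nov for _, nov in assignments.values())
```

Any rotation with this property lets the same case be compared between participants who saw it for the first time and participants who saw it for the second time.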

During data collection, participants viewed and navigated (zoomed, panned) one digital whole slide image for each case on a computer monitor. All images were stained with hematoxylin & eosin (no other stains were used on any images) and averaged approximately 3155 cm2 in area. An experimenter was present to provide instructions and monitor progress during data collection sessions. Custom image display and navigation (zoom, pan) software tracked the duration of each image interpretation, which was logged automatically to a local SQL database as system timestamps for the case opening and case closing commands. After reviewing each case, participants completed a histology form to document their diagnosis, and they also reported their perception of case difficulty (“Please rate on the following scale your opinion of the level of diagnostic difficulty of this case”; six-point Likert scale from very easy to very challenging) and confidence in their diagnosis (“Please rate on the following scale your confidence in your assessment”; six-point Likert scale from not at all confident to very confident).
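As an illustration of how a review duration could be derived from the logged open and close timestamps, a minimal sketch follows. The timestamp format, the function name `review_duration_seconds`, and the example values are assumptions; the study's actual database schema is not described here.

```python
from datetime import datetime

# Assumed timestamp format; the real log format is not specified in the text.
TIMESTAMP_FORMAT = "%Y-%m-%d %H:%M:%S"


def review_duration_seconds(opened_at, closed_at):
    """Return the seconds elapsed between case-open and case-close timestamps."""
    opened = datetime.strptime(opened_at, TIMESTAMP_FORMAT)
    closed = datetime.strptime(closed_at, TIMESTAMP_FORMAT)
    if closed < opened:
        raise ValueError("case was closed before it was opened")
    return (closed - opened).total_seconds()


# Hypothetical log entry: a case opened at 09:00:00 and closed at 09:02:30.
example_duration = review_duration_seconds(
    "2020-01-15 09:00:00", "2020-01-15 09:02:30"
)
```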

Statistical analysis

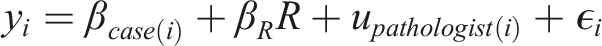

As described above, our analyses exclusively used Phase 2 case interpretation data. The premise of our analysis was that if participants explicitly or implicitly remembered cases that were repeated, review duration would be shorter, self-reported case difficulty would be lower, and diagnostic confidence would be higher compared to cases that were novel. We used linear mixed-effects models 25 for statistical analysis. The predictor of interest in each analytic model was a binary variable R indicating that the case was repeated (vs novel) for the participant. In order to control for any systematic differences between cases, models included fixed effects for each case, which is equivalent to allowing a different model intercept for each case. Random effects for participant are represented by the u_pathologist terms and ε_i are the error terms:
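One way to write a model matching this description is sketched below. The symbol α for the case-specific intercepts, the indexing functions case(i) and pathologist(i), and the normal distributions for the random terms are assumed notation for illustration, not quoted from the original formula.

$$
y_i = \alpha_{\text{case}(i)} + \beta_R R_i + u_{\text{pathologist}(i)} + \varepsilon_i,
\qquad u_{\text{pathologist}} \sim \mathcal{N}(0, \sigma_u^2),
\quad \varepsilon_i \sim \mathcal{N}(0, \sigma^2)
$$

Here i indexes a single Phase 2 case review, R_i equals 1 when the case was repeated for the reviewing participant and 0 when it was novel, and β_R is the average difference in the outcome for repeated versus novel reviews of the same case.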

We fit separate models for each response variable y_i: case review duration, self-reported case difficulty, and self-reported diagnostic confidence. We report confidence intervals and p-values for the parameter of interest β_R.

Primary analyses used data from all participants, regardless of the length of the washout period. In secondary analyses, we were interested in ensuring our results were similar when restricting to a shorter washout period; in those analyses, we restricted to the subset of participants with a washout period ranging from 9 to 14 months.
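The idea of comparing repeated and novel reviews within the same case, optionally restricted to a washout window, can be sketched descriptively as follows. This is not the linear mixed-effects model used in the study: it ignores the participant random effect and simply averages per-case differences. The function name, the record fields, and all data values are synthetic and hypothetical.

```python
from collections import defaultdict
from statistics import mean


def within_case_difference(reviews, min_months=None, max_months=None):
    """Average, over cases, of (mean repeated outcome - mean novel outcome).

    Reviews outside the optional washout window are dropped first. Cases
    lacking either a repeated or a novel review after filtering are skipped.
    """
    by_case = defaultdict(lambda: {"repeated": [], "novel": []})
    for review in reviews:
        months = review["washout_months"]
        if min_months is not None and months < min_months:
            continue
        if max_months is not None and months > max_months:
            continue
        condition = "repeated" if review["repeated"] else "novel"
        by_case[review["case"]][condition].append(review["outcome"])

    differences = [
        mean(groups["repeated"]) - mean(groups["novel"])
        for groups in by_case.values()
        if groups["repeated"] and groups["novel"]
    ]
    if not differences:
        raise ValueError("no case has both repeated and novel reviews")
    return mean(differences)


# Synthetic review durations in seconds (illustrative values only).
reviews = [
    {"case": "A1", "repeated": True, "washout_months": 10, "outcome": 120.0},
    {"case": "A1", "repeated": False, "washout_months": 12, "outcome": 118.0},
    {"case": "A1", "repeated": False, "washout_months": 30, "outcome": 122.0},
    {"case": "B1", "repeated": True, "washout_months": 13, "outcome": 200.0},
    {"case": "B1", "repeated": True, "washout_months": 30, "outcome": 196.0},
    {"case": "B1", "repeated": False, "washout_months": 11, "outcome": 204.0},
]

all_washouts = within_case_difference(reviews)
short_washouts = within_case_difference(reviews, min_months=9, max_months=14)
```

Differencing within each case plays the role that the case fixed effects play in the model: systematic differences in how long or how hard a given case is cancel out before the repeated-versus-novel contrast is averaged.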

Results

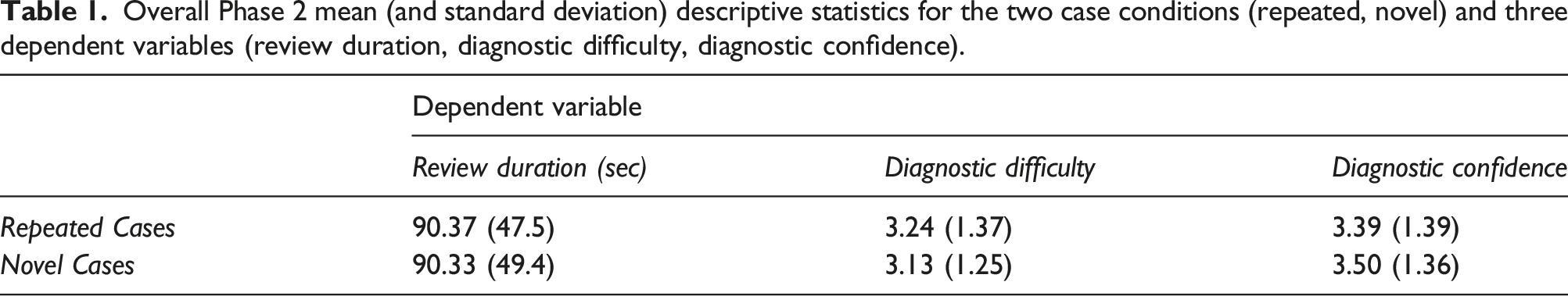

Table 1. Overall Phase 2 mean (and standard deviation) descriptive statistics for the two case conditions (repeated, novel) and three dependent variables (review duration, diagnostic difficulty, diagnostic confidence).

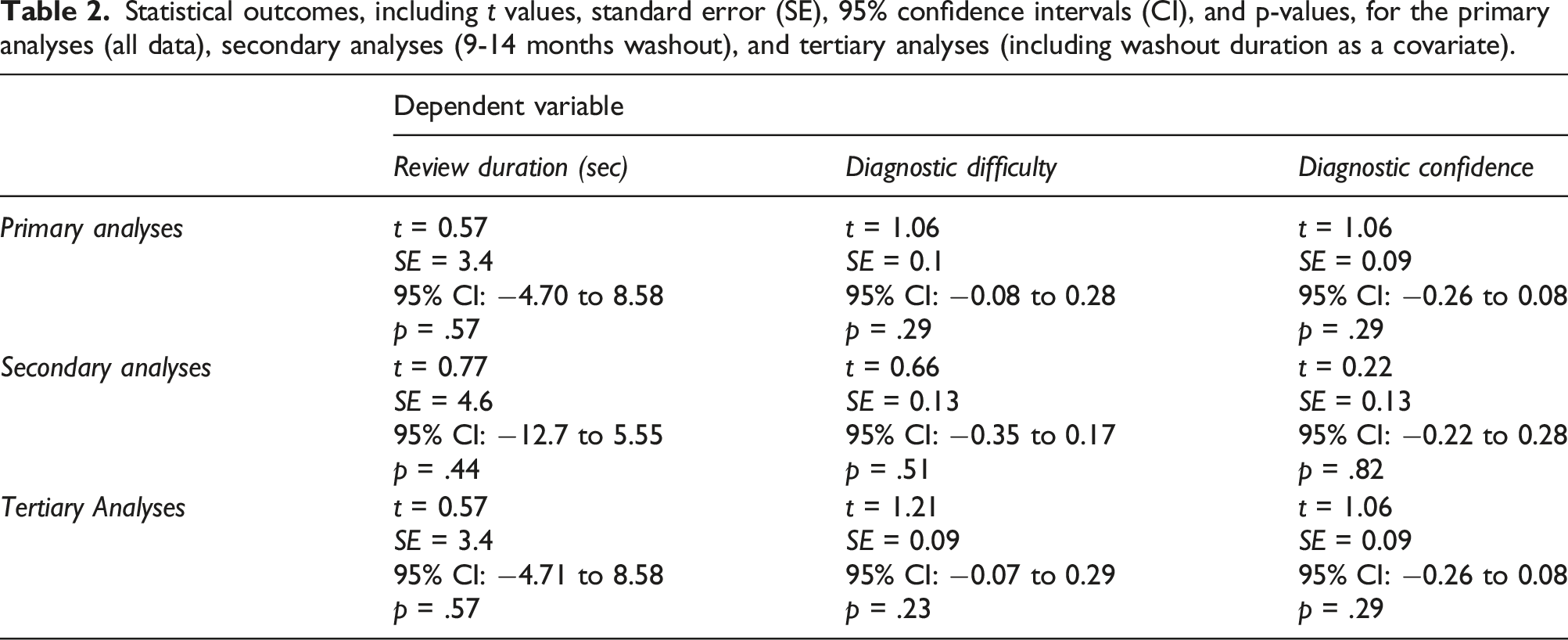

Table 2. Statistical outcomes, including t values, standard error (SE), 95% confidence intervals (CI), and p-values, for the primary analyses (all data), secondary analyses (9–14 months washout), and tertiary analyses (including washout duration as a covariate).

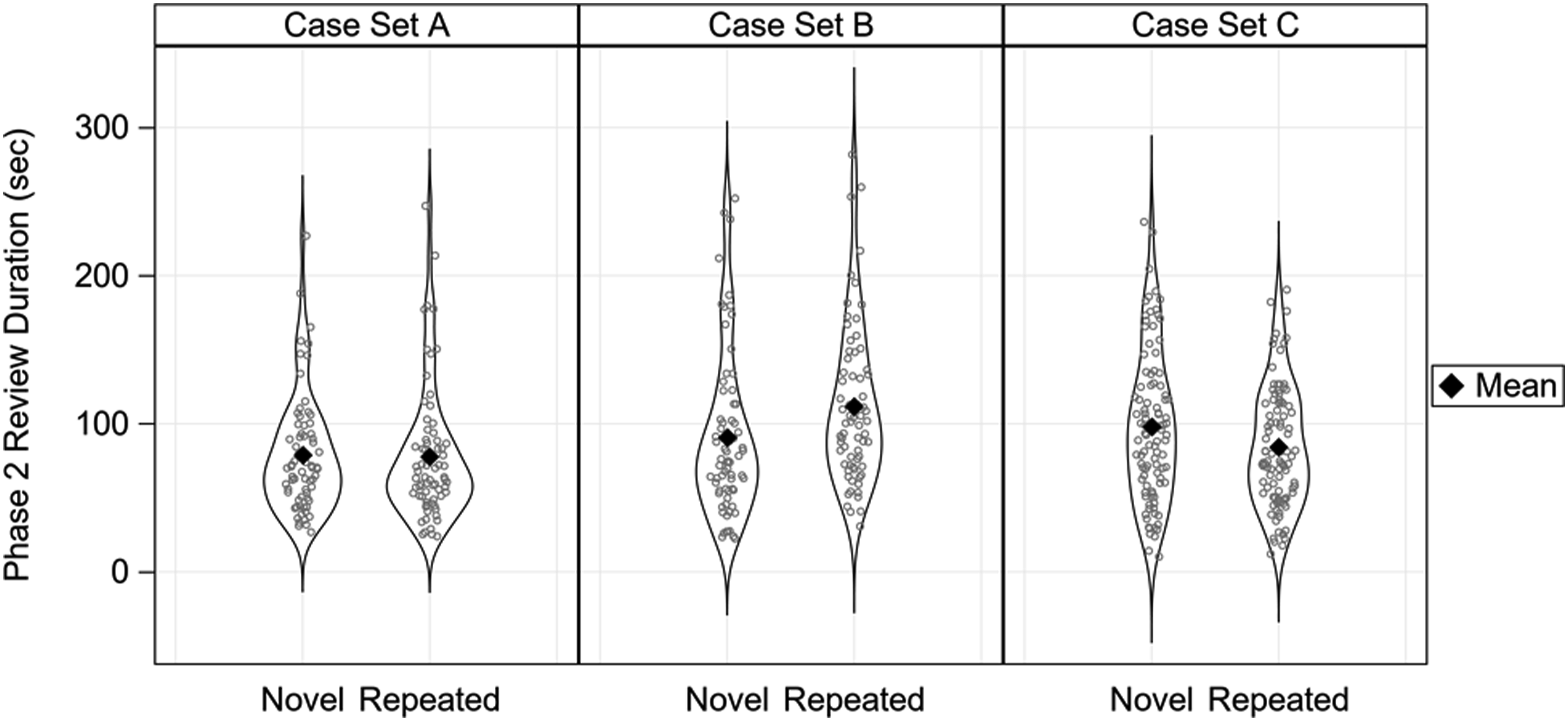

Primary analyses demonstrated no evidence that participants reviewed repeated cases more quickly than the novel cases (Figure 2): on average, a participant took 1.9 s longer to review a repeated case compared to another participant who reviewed the same case as a novel case [p = .57]. Similarly, we observed a small and statistically non-significant difference in self-reported diagnostic confidence or case difficulty for repeated versus novel cases: participants’ confidence averaged 0.09 points lower on the six-point Likert scale [p = .29] for repeated versus novel cases, and their assessment of case difficulty averaged 0.09 points higher on the six-point Likert scale for repeated versus novel cases [p = .29]. Case review durations. Violin plots depicting Phase 2 case review duration (in seconds) for all participants as a function of Case Set (A, B, C) and whether the case was novel or repeated. Each ring indicates a single case review time. Mean is indicated by a black diamond.

In secondary analyses restricted to participants (N = 24) with a shorter (9–14 months) washout period, results for difficulty [p = .51] and confidence [p = .82] were similar to those of the primary analysis and non-significant. For the secondary analysis of review duration, resident pathologists averaged 3.6 s faster when reviewing repeated versus novel cases, but this difference was statistically non-significant [p = .44].

In response to a reviewer’s request, we conducted tertiary analyses that included washout duration (in days) as a covariate in the primary analyses. None of the primary result patterns changed, as detailed in Table 2; in fact, all the statistical outcomes were strikingly similar between the primary and tertiary analyses.

Discussion

In this analysis, we did not detect evidence of implicit memory when resident pathologists reviewed the same cases following a 9-to-39-month washout period, and we also provided evidence that a 9-to-14-month washout period is sufficient. Review times for repeated cases were no faster than for the novel cases, and there was no evidence that resident pathologists were more confident or thought cases were easier when they were repeated compared to novel. The lack of a significant change in these three measures is evidence that participants did not implicitly remember the content of the digital whole slide images they had previously interpreted.

This is an important methodological outcome for three primary reasons. First, longitudinal study designs provide higher statistical power than cross-sectional designs for detecting outcome differences that emerge over time and with experience.1,2 Longitudinal designs using entirely novel cases at each assessment may unnecessarily increase the variability of behavior due to the inherently different histopathological features of biopsy cases. In research contexts, repeating a subset of interpreted cases at each assessment translates to higher statistical power when attempting to isolate the causal effects of educational (e.g., residency training) or experimental (e.g., curriculum redesign) interventions. Our results suggest that a 9-to-14-month washout period is sufficient for this design.

Second, multidimensional measurements of competency development during residency training are critical for identifying gaps in medical knowledge and guiding curriculum development and practice-based training. 26 Digital whole slide imaging provides novel opportunities for the quantitative assessment of competency development throughout the course of postgraduate training, 27 and progress in accurately interpreting histopathological features and rendering diagnoses is a potential metric of success tightly tied to the Accreditation Council for Graduate Medical Education’s (ACGME) medical knowledge competency. Our results suggest that in training settings, reassessing clinical competence with the same and novel cases is a viable methodology and does not unintentionally alter the interpretation of data derived from longitudinal competency assessments. Given these results, the American Society for Clinical Pathology (ASCP) Resident In-Service Examination (RISE) 28 might benefit from repeating some of the same test cases over the course of residency training, rather than using only novel cases each assessment year; repeating cases can provide higher statistical power for detecting longitudinal trends in individual trainee performance, a key element of RISE data interpretation. 29

Strengths of our experimental design include direct comparison of Phase 2 performance when the same images were novel versus repeated, a larger sample size than previous research on this topic, and examining a diagnostic medical task of real-world relevance and importance. However, the results we describe for breast pathology might not generalize to other pathology tissues (e.g., dermatopathology) or other areas of medical image diagnostics (e.g., radiology). It is also worth pointing out that human memory is highly complex, and while we consider one important aspect of memory for previously seen cases (implicit priming), there are other forms of memory that could influence case interpretation behavior. For example, participants could explicitly recognize a uniquely shaped biopsy (e.g., “I remember this case because its shape resembled a unicorn!”), or implicitly remember a sequence of image navigation movements initiated by a particular biopsy (i.e., implicit procedural memory). While we do not believe these other forms of memory necessarily played a role herein, they are exciting directions for future research. Biopsies are also frequently reviewed in the context of electronic medical records, which can include rich clinical history that might cue pathologists to remember the associated case; future research should consider how secondary information available within the clinical workflow might influence memory for cases. The current study also only examined a 9-to-39-month washout period, and it is possible that a shorter washout period (e.g., 6 months) could be similarly sufficient. Finally, we only included resident pathologists in training, who do not have the same expertise as more experienced pathologists, and it is possible that more experienced pathologists would show a different pattern of results.

Conclusions

In conclusion, we provide evidence that a 9-to-14-month washout period is effective at mitigating implicit memory for cases in longitudinal pathology studies. We did not find substantial or statistically significant differences in review duration, self-reported confidence, or self-reported difficulty when a repeated case was reviewed after the washout period compared to a novel case. We take these results to suggest that pathologists are not remembering previously seen cases. We believe these results make a compelling case for the value of longitudinal assessments in both research and educational settings, leveraging the power of whole slide images and repeated assessments to reveal meaningful changes in the diagnostic decision-making process through postgraduate training.

Footnotes

Acknowledgements

We wish to thank Ventana Medical Systems, Inc., a member of the Roche Group, for use of iScan Coreo Au™ whole slide imaging system, and HD View SL for the source code used to build our digital viewer. For a full description of HD View SL please see ![]() . We also wish to thank the staff, faculty, and trainees at the various pathology training programs across the U.S. for their participation and assistance in this study.


Authors’ contributions

JGE, DLW, TTB, KFK, and TD conceived and designed this study. TTB and TD collected the data. HS and KS managed pathologist recruitment and scheduling, and provided feedback and suggestions on manuscript drafts. CEK and JW processed the data and performed statistical analyses, and TTB drafted the manuscript. All authors reviewed the final manuscript and provided feedback and suggestions.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Research reported in this publication was supported by the National Cancer Institute of the National Institutes of Health under award numbers R01 CA225585, R01 CA172343, and R01 CA140560. The content is solely the responsibility of the authors and does not necessarily represent the views of the National Cancer Institute or the National Institutes of Health. Researchers are independent from funders, and the funding agency played no role in study design, conduct, analysis, or interpretation.

Ethical statement

Data Availability Statement

Due to the highly specialized nature of participant expertise and therefore increasing risk of identifiable ![]() , we have decided not to make our data available in a repository. In the interest of minimizing the risk of participant identification, we will distribute study data on a case-by-case basis. Interested parties may contact Hannah Shucard at the University of Washington with data requests:
