Abstract

Large language models (LLMs) offer emerging opportunities for psychological and behavioral research, but methodological guidance is lacking. In this article, I develop a framework for using LLMs as psychological simulators across two primary applications: simulating roles and personas to explore diverse contexts, and serving as computational models to investigate cognitive processes. For simulation, the framework includes (a) an implementation-confound checklist distinguishing essential from context-dependent methodological checks, (b) methods for developing psychologically grounded personas that move beyond demographic categories, and (c) a three-tier validation framework (direct, indirect, and generative) tailored to data availability. A diagnostic decision framework guides researchers through establishing performance validity, identifying implementation artifacts, and interpreting LLM-human discrepancies. For cognitive modeling, I synthesize (a) emerging approaches for probing internal representations, (b) methodological advances in causal interventions, and (c) strategies for relating model behavior to human cognition. The framework addresses overarching challenges, including prompt sensitivity, temporal limitations from training-data cutoffs, and ethical considerations that extend beyond traditional human-subjects review. Open-weight models are the default for reproducibility. Together, this framework integrates emerging empirical evidence about LLM performance—including systematic biases, cultural limitations, and prompt brittleness—to help researchers wrangle these challenges and leverage the unique capabilities of LLMs in psychological research.

Keywords

Large language models (LLMs) have rapidly emerged as versatile tools in psychological research. But beyond their utility for writing assistance (Lin, 2025c), programming (Guo, 2023), and text analysis (Feuerriegel et al., 2025), how can LLMs contribute to understanding psychological phenomena and behavior, and how might they do so? Although these systems offer unprecedented opportunities for psychological research (Demszky et al., 2023; Ke et al., 2025; Sartori & Orrù, 2023), their rapid adoption has outpaced methodological development, creating risks of invalid inferences and irreproducible findings. The field lacks both conceptual clarity about their distinct applications and methodological guidance for their implementation. To address these gaps, I frame LLMs as psychological simulators, providing a methodological guide for their two primary applications: simulating human roles and personas, and serving as models of cognitive processes.

The use of computational systems to simulate human behavior and cognition has deep roots in both psychology and artificial intelligence (AI). Computational modeling of human thought traces back to mid-20th-century efforts, such as Newell et al.’s (1958) general problem solver—one of the first implementations of the information-processing paradigm—and to early agent-based frameworks, such as Schelling’s (1971) segregation models. The cognitive-modeling tradition evolved from symbol-manipulation architectures through parallel-distributed-processing models (Rumelhart et al., 1986) to today’s large-scale deep networks. LLMs both continue this trajectory—operationalizing psychological constructs in code and data—and depart from it. Earlier neural networks faced criticism for opacity, but LLMs introduce qualitatively different interpretability challenges: Their vast parameter counts, training on uncontrolled internet corpora, and capabilities that emerge unpredictably with scale collectively create a kind of opacity that is fundamentally distinct from the handcrafted, theoretically motivated architectures of earlier cognitive models.

These novel challenges have prompted theoretical examination of fundamental questions: Can LLMs replace human participants (Lin, 2025b)? What are the implications of integrating AI with psychological science (van Rooij & Guest, 2025)? How do their limitations constrain the understanding of cognition (Cuskley et al., 2024; Shah & Varma, 2025), and does their use threaten or enhance the generalizability of psychological science (Crockett & Messeri, 2025; Lin, in press)? Yet even as researchers examine these fundamental questions, empirical applications proliferate rapidly—for example, using LLMs to simulate cross-cultural personality differences (Niszczota et al., 2025), probe theory of mind capabilities (Strachan et al., 2024), generate psycholinguistic norms (Trott, 2024a), and forecast human behavior (Schoenegger et al., 2024). Commercial services now offer AI participants for market research, and academic proposals suggest LLMs could substitute human participants (Grossmann et al., 2023; Sarstedt et al., 2024).

This disconnect between theoretical caution and empirical enthusiasm risks producing invalid inferences and irreproducible findings. Without rigorous methodological standards, researchers may mistake statistical artifacts for genuine psychological phenomena—a validity crisis driven by neglecting psychometric and causal-inference principles (Lin, 2025a). This has led to warnings against “GPTology”—the uncritical application of LLMs that overlooks the complexities of human psychology and risks producing low-quality research (Abdurahman et al., 2024). Building on emerging work that has begun to establish best practices (Abdurahman et al., 2025; Hussain et al., 2024; Lin, 2025d; Lu et al., 2024), in this article, I aim to provide systematic guidance to bridge theoretical potentials and applications.

The methodological gap reflects a fundamental shift in simulation architecture. Unlike traditional agent-based models (Bonabeau, 2002; Fagiolo et al., 2007) or cognitive models (Laird et al., 1987; Ritter et al., 2019) that embody explicit behavioral rules and theoretical commitments, LLMs learn behavioral patterns implicitly from vast corpora. They produce remarkably human-like outputs through mechanisms that—unlike their handcrafted predecessors—remain partially opaque (e.g., Lin, 2023). This shift from theory-driven to data-driven simulation demands new validation strategies, ethical frameworks, and interpretive approaches (Argyle et al., 2025).

Recent empirical work has begun mapping both the promise and perils of LLM-based simulation. On one hand, models can capture certain aspects of human psychology remarkably well—from replicating cultural differences in personality traits (Niszczota et al., 2025) and human-like error patterns in cognitive tasks (Sartori & Orrù, 2023) to predicting sensory judgments across multiple modalities (Marjieh et al., 2024). On the other hand, they exhibit systematic biases and oversensitivity to prompt variations, reflecting fundamental differences from human cognition that researchers must carefully navigate (Binz & Schulz, 2023b; Tjuatja et al., 2024). Models often fail to capture the diversity found in real human responses (P. S. Park et al., 2024; A. Wang et al., 2025), showing more extreme, less nuanced preference distributions in moral domains compared with human participants (Zaim bin Ahmad & Takemoto, 2025).

Below, I provide practical guidelines organized around two primary research applications. First, I examine how LLMs can simulate roles and personas to explore diverse perspectives and behaviors—extending the agent-based-modeling tradition with systems that generate linguistically rich, contextually sensitive responses. Second, I synthesize methodological approaches for using LLMs as cognitive models—building on the neural-network tradition to probe how these systems process information and whether their mechanisms illuminate human cognition. For each application, concrete methodological recommendations are provided, grounded in emerging empirical evidence.

This framework acknowledges the temporal, cultural, and representational constraints inherent in current LLMs (Ziems et al., 2024). They are trained on historical data with specific cutoff dates, predominantly reflect WEIRD (Western, educated, industrialized, rich, democratic) perspectives. Many also undergo posttraining modifications that further alter their psychological profiles, particularly around socially sensitive topics such as race and gender (Cui et al., 2025). These limitations do not negate their research value but rather define the contexts within which they can be productively employed. By making these constraints explicit and providing strategies to work within them, the framework enables researchers to harness LLM capabilities while avoiding common pitfalls.

The article proceeds as follows. I first examine role and persona simulation, providing guidelines for prompt design, response validation, and appropriate use cases—from simulating rare populations to prototyping survey instruments. I then analyze cognitive-modeling applications, reviewing methodological approaches for probing internal representations, synthesizing advances in causal interventions, and examining strategies for relating findings to human cognition. Finally, I address ethical considerations that extend beyond traditional human-subjects protections. Throughout this framework, I emphasize that LLM-based methods should supplement rather than substitute for traditional approaches, offering unique advantages while requiring careful validation against human data. A glossary of specialized AI terms follows this introduction (see Box 1).

Glossary

Using Language Models to Simulate Roles and Personas

Given their extensive training data, LLMs show particular promise in their capacity to adopt different personas and simulate diverse perspectives. To effectively leverage this capability, it is essential to consider both theoretical foundations and practical strategies.

Conceptual foundations and model capabilities

Understanding LLMs as language simulators that can role-play various personas requires first recognizing what these systems can and cannot do (Shanahan et al., 2023). Although LLMs process text without genuine cognition or consciousness, the text they process embodies rich psychological and social information accumulated from vast training corpora. This characteristic uniquely positions them as tools for exploring how language both encodes and expresses psychological phenomena and behavior.

Responding to persona-based prompts, LLMs draw on statistical patterns linking linguistic expressions to social roles and psychological states—reflecting human dynamics embedded in language. Because language is the primary medium for expressing beliefs and intentions, LLMs can fluidly shift perspectives to generate diverse viewpoints often inaccessible through traditional recruitment (Tseng et al., 2024). This capacity arguably surpasses human role-playing—constrained by limited perspective-taking and idiosyncratic biases (Pronin et al., 2001)—by leveraging aggregate statistical patterns elusive to individuals.

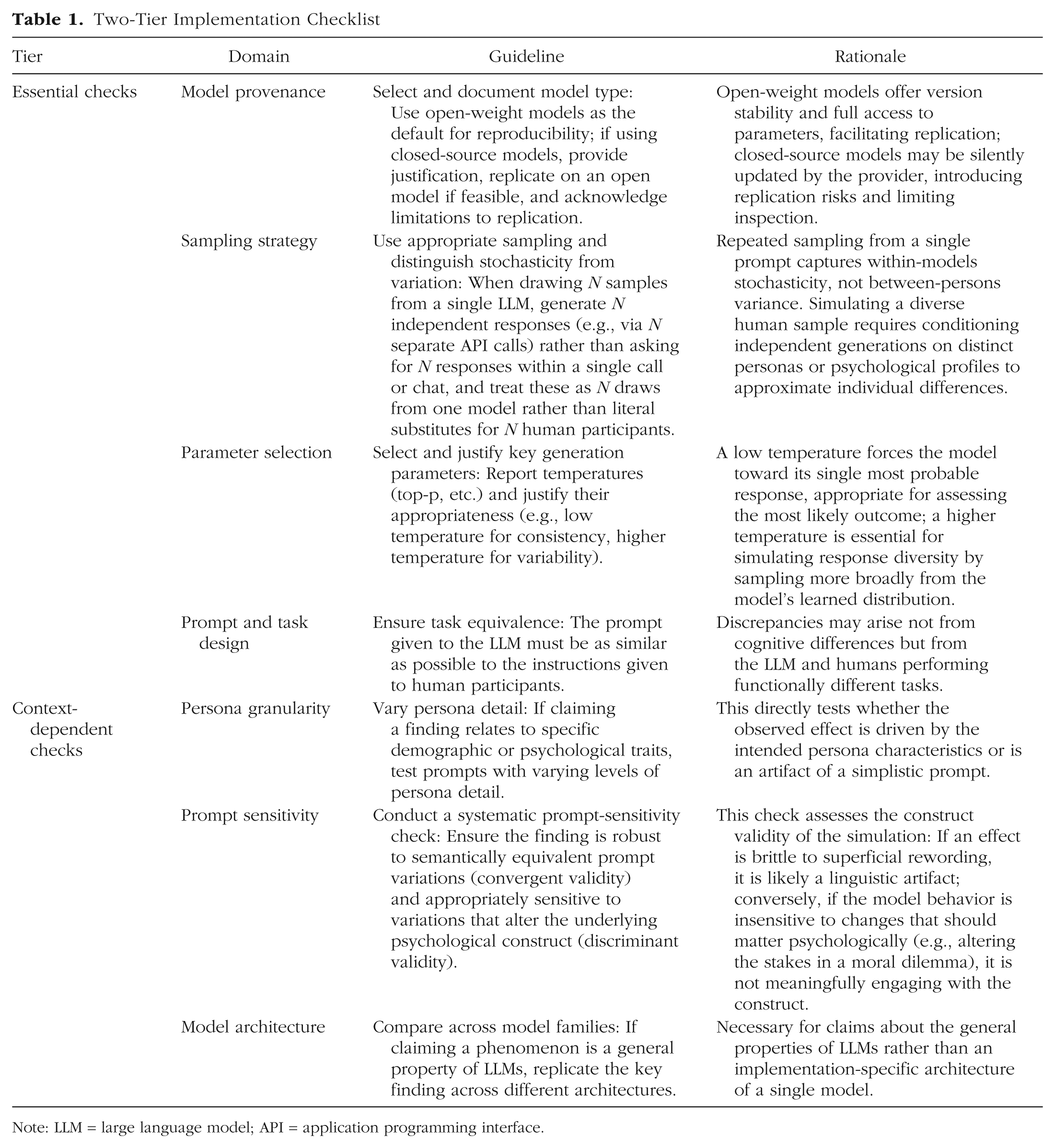

Recent empirical work has begun to establish the conditions under which LLM simulations can meaningfully capture human psychological patterns. Niszczota et al. (2025) provided an instructive demonstration, using GPT-3.5 and GPT-4 to simulate cross-cultural personality differences. Their experiment compared Big Five personality traits between simulated U.S. and South Korean personas, prompting models to “play the role of an adult from [the United States/South Korea].” Although GPT-3.5 failed to produce meaningful cultural patterns, GPT-4 successfully replicated established cross-cultural differences in personality traits. In other words, different model versions can produce dramatically different results, necessitating careful model selection and comparison (see Table 1, model provenance and model architecture).

Two-Tier Implementation Checklist

Note: LLM = large language model; API = application programming interface.

Similarly encouraging results have emerged from studies examining the ability of LLMs to generate psycholinguistic norms. For instance, Trott (2024a) demonstrated that GPT-4 effectively captures human judgments of psycholinguistic properties—including word concreteness, semantic similarity, sensorimotor associations, and iconicity—with correlations matching or even surpassing average interannotator agreement. Moreover, substituting LLM-generated norms for small human samples in regression analyses preserves the direction and magnitude of effects, highlighting the utility of LLMs in approximating the “wisdom of small crowds” in psycholinguistic research (Trott, 2024b). LLMs thus offer rapid, cost-effective methods for generating initial approximations of psychological phenomena, particularly when these phenomena have strong linguistic components.

Conditioning models on specific psychological data sets can further improve alignment with human responses. Chuang et al. (2024), for example, integrated empirically derived human-belief networks—estimated via factor analysis on a 64-item controversial-beliefs survey—into LLM agent construction. By seeding role-playing agents with a single belief on a representative topic alongside demographic information and applying both in-context learning and supervised fine-tuning, they achieved substantially better alignment with human opinions on related test topics than when using demographics alone. Likewise, Moon et al. (2024) used detailed “backstories” rather than surface demographics to improve matching to human-response distributions by up to 18% and consistency metrics by 27% across three nationally representative surveys. Nuanced persona development can yield more reliable and representative simulations (see Table 1, persona granularity).

Fine-tuning on representative corpora can also improve validity. Chen et al. (2024) developed contextualized construct representation, converting psychological questionnaires into classical Chinese and fine-tuning models on historical texts. Their approach outperformed both generic models and simple prompting on culture-specific constructs, such as collectivism and traditionalism. Thus, domain expertise combined with technical customization can enhance validity for specific research contexts.

However, LLMs occupy a peculiar temporal state: They possess vast historical knowledge yet remain frozen at their training cutoff. They cannot reflect the postcutoff events or cultural shifts that shape human psychology and behavior (Kozlowski & Evans, 2024). A model trained before a major social movement cannot capture the transformations it engendered; ongoing societal learning remains beyond its horizon.

This temporal gap is further compounded by biases in the training data. Online text tends to overrepresent more recent periods; perspectives from the immediate past may crowd out those from more distant historical eras. Such skew can distort any attempt to study psychological change over time because the model’s understanding of the past is filtered through what was digitized and included in its corpus—introducing selection biases that must be explicitly acknowledged, and when possible, corrected (Ziems et al., 2024).

Methodological framework for implementation

To operationalize these capabilities into rigorous research practices, Table 1 presents a two-tier framework. Essential checks apply to most LLM-simulation research. Context-dependent checks become necessary when making specific theoretical claims—for instance, about demographic effects or general LLM properties.

The importance of these guidelines becomes apparent when examining how methodological choices shape research outcomes. That GPT-4 replicated cultural patterns when GPT-3.5 could not (Niszczota et al., 2025), for example, illustrates why the check for model architecture (see Table 1) is necessary when making claims about general LLM properties: What holds for one model version may not generalize to others.

The choice between base and instruction-tuned models also matters. Reinforcement learning from human feedback (RLHF) introduces systematic biases: RLHF-tuned models skew toward liberal, higher-income, educated perspectives relative to their base counterparts (Santurkar et al., 2023; Tao et al., 2024). Moreover, although instruction tuning improves simulation of topics with strong consensus, it degrades performance on pluralistic topics with diverse opinions (Hu et al., 2025). When studying implicit biases or authentic response patterns, researchers should therefore compare base and fine-tuned versions (Gao et al., 2025).

In general, open-weight models should serve as the default: Unlike closed-source alternatives, they provide version stability, parameter access, and transparency—necessary for replication. Such stability proves particularly critical for complex, longitudinal simulations (J. S. Park et al., 2023) in which silent proprietary updates could invalidate findings across multistage research programs. Access to internal workings (token probabilities, attention patterns, model parameters) further enables mechanistic analysis that closed systems cannot support. When researchers must employ closed-source models—for instance, to establish whether findings generalize across the current spectrum of systems or to access state-of-the-art capabilities unavailable in open alternatives—they should explicitly justify this choice, attempt replication with open-weight models when feasible, and clearly acknowledge the resulting constraints on verification and reproducibility.

Prompt-sensitivity analysis addresses empirical findings of extreme sensitivity to wording variations. Tjuatja et al. (2024) found that RLHF-tuned models showed high sensitivity to seemingly trivial changes, such as typos in survey questions—variations that human respondents would typically ignore. This brittleness necessitates systematic testing of prompt variations to distinguish robust psychological patterns from artifacts of specific phrasings. Effective prompts balance specificity with ecological validity, providing enough context to elicit coherent responses while avoiding overly constraining scenarios that might limit generalizability (Lin, 2024a).

Applications in psychology and behavior research

Building on these methodological foundations, I now examine concrete applications in which LLM-based role simulation demonstrates particular promise. These use cases span multiple domains of psychological inquiry, progressing from simple substitution to complex multiagent systems.

Studying inaccessible populations

Perhaps most compelling is the ability to study populations that remain inaccessible through traditional recruitment methods. Executives, political leaders, historical figures, and members of isolated communities have long presented challenges for psychological research. LLMs trained on relevant textual data can simulate responses from these populations, enabling exploratory studies that would otherwise remain impossible. Chen et al. (2024), for example, used LLMs trained on historical texts to investigate psychological patterns in past populations while validating findings against historical records and acknowledging the interpretive constraints imposed by their methods (see also Varnum et al., 2024). For historical populations, temporal distance is inherent to the inquiry; for contemporary inaccessible populations—executives, political leaders, isolated communities—such displacement becomes a validity threat that requires contemporaneous validation.

Addressing ethical constraints

Ethical and practical constraints often limit the ability to study extreme situations or sensitive topics with human participants. Here, LLM simulation offers unique alternatives. R. Wang et al. (2024), for example, used LLMs to simulate patients via diverse cognitive models informed by cognitive-behavioral therapy (CBT), creating interactive training scenarios for mental-health trainees that would be difficult to construct with real patients. Their PATIENT-Ψ system generates case formulations grounded in CBT principles, allowing trainees to practice cognitive-model formulation and therapeutic interviewing in a risk-free environment. The system’s effectiveness was validated through measures of trainee skill acquisition and confidence and expert evaluations.

Rapid prototyping and cross-cultural research

The rapid-prototyping capabilities of LLMs prove particularly valuable in survey and experimental design. At minimal cost and time, researchers can iterate on instrument wording, flag ambiguous items, and identify questions that might produce floor or ceiling effects. Although this simulation does not replace the need for a human pilot study—an anomalous result could reflect a flawed item but also a simulation artifact—its purpose is to refine the instrument for a more targeted and efficient human pilot. An unexpected LLM response prompts the researcher to critically examine the item, making the validation process more deliberate. This application proves especially useful in cross-cultural research, in which subtle linguistic or conceptual differences can invalidate measures across populations. By simulating responses from different cultural contexts using appropriately varied prompts, researchers can identify problematic items before beginning expensive international data-collection efforts (Sarstedt et al., 2024; Tao et al., 2024).

Complex social systems

Complex social phenomena involving multiple actors and emergent dynamics represent another frontier for LLM-powered simulations. J. S. Park et al. (2023) introduced generative agents—LLM-driven entities that store natural-language “memories,” synthesize them into higher-level reflections, and plan actions in an open-world sandbox. When prompted to “throw a Valentine’s Day party,” 25 agents autonomously spread invitations, forged relationships, coordinated the event, and diffused information across their social network. A systematic ablation of the memory, reflection, and planning modules underscored each component’s necessity for producing believable individual and collective behaviors. Horton (2023) extended this approach to economics, using LLMs to simulate labor-market dynamics and test how minimum-wage policies affect realized wages and labor substitution. LLMs can thus serve as laboratories for studying complex social systems that would be difficult to manipulate experimentally with human participants.

A three-tier validation framework

These diverse applications require rigorous validation, which must adapt to human-data availability. Figure 1 presents a decision framework distinguishing three scenarios—direct, indirect, and generative validation—each requiring different methodological approaches.

A decision framework for validation and interpretation in LLM-based psychological simulation. The framework proceeds through four stages. Stage 1 establishes performance validity through direct validation when comparable human benchmarks exist, indirect validation when only partial data are available (e.g., testing constituent processes or convergent measures), or generative validation when human data cannot be ethically or practically obtained (evaluating theoretical coherence, emergent properties, and expert assessment of plausibility). Stage 2 identifies implementation confounds that may artificially inflate or deflate LLM-human differences, including sampling strategy, prompt design, and parameter selection. Stage 3 guides interpretation of observed discrepancies by considering how training-data artifacts (temporal displacement, representational biases) and fundamental architectural differences (lack of embodiment, statistical vs. experiential learning) may differentially shape or limit conclusions. Stage 4 emphasizes transparent reporting that matches empirical claims to validation strength and documents all implementation choices. LLM = large language model.

Direct validation (when comparable human benchmarks exist)

Much of current research falls into this category, in which established human data sets permit direct comparison. Validation involves comparing LLM responses against established human findings. Methodological equivalence is critical—identical or comparable tasks and instructions ensure that observed differences reflect genuine psychological divergences rather than task artifacts (essential checks in Table 1).

Response-distribution analysis provides another crucial validation tool. Human populations exhibit natural variability, and credible simulations must capture not just average tendencies but also distributional properties. Mei et al. (2024), for example, compared GPT-3.5-Turbo and GPT-4 with tens of thousands of human participants across six canonical economic games, examining not only mean choices but also full response distributions, dynamic consistency (e.g., tit-for-tat behavior in a repeated prisoner’s dilemma), and sensitivity to framing and context. Although GPT-4 often falls within the human-response range and passes a behavioral Turing test in several games, it diverges notably in the prisoner’s dilemma and as the investor in the trust game.

More concerning, P. S. Park et al. (2024) found that GPT-3.5 (text-davinci-003) produced near-zero variation in six of 14 study replications (Many Labs 2)—a “correct answer” effect in which responses homogenize into a single modal answer rather than reflecting human-like diversity. Such granular distributional analysis—evaluating how and why LLMs differ, not just whether they match human means—establishes clear boundaries for meaningful approximation of human decision-making.

Validation extends beyond comparing final outcomes to examining how models arrive at those outcomes. Process-level validation assesses whether models follow psychologically plausible strategies rather than producing correct end states through artificial means. J. S. Park et al. (2023) exemplified this approach through systematic ablation studies: Removing memory, reflection, or planning modules from generative agents degraded performance in psychologically meaningful ways. Without memory, agents failed to maintain consistent relationships across interactions; without reflection, they could not synthesize experiences into higher-level generalizations; and without planning, they showed reduced believability and multistep coherence. These degradations mirror how human capabilities depend on intact cognitive systems, validating process-level psychological realism rather than mere endpoint fitting.

Sequential decision-making tasks offer particularly diagnostic evidence. Do models exhibit systematic search strategies, backtracking after dead ends, or refinement through iteration—or do they arrive at solutions through direct retrieval bypassing intermediate steps? Response-latency patterns, when reflecting actual computational demands, provide another signal: Humans show longer response times for difficult problems or conflicting information, and thus uniformly quick responding reveals fundamentally different processing. Strategy consistency across related tasks offers additional evidence: Simulations showing correlated patterns across judgment tasks—especially when common thinking dispositions are involved (Toplak et al., 2011)—suggest captured cognitive approaches rather than task-specific pattern matching.

Indirect validation (when only partial human data are available)

Many research questions lack perfectly matched human-comparison data but can draw on related empirical findings. Indirect validation proceeds through three complementary strategies: validating constituent processes, seeking convergent evidence across related measures, and stress-testing simulation limits.

Constituent process validation

Individual simulation components often permit validation against established findings from simpler paradigms. A complex multiagent simulation of organizational change might validate individual agent decision-making against choice architecture research, social-influence mechanisms against persuasion studies, and memory-based reasoning against recall findings. This piecewise approach builds confidence by confirming that components operate as psychological theory predicts.

R. Wang et al. (2024) employed this strategy in developing PATIENT-Ψ to simulate CBT patients for training mental-health professionals. Without public data sets of realistic CBT cognitive models, they validated constituent processes—how agents represent beliefs and emotional responses—against CBT principles and expert clinical judgments and then evaluated the system’s fidelity and training usefulness with experts and trainees. This component-level validation supports psychologically plausible mechanisms even when the full interactive system resists direct benchmark validation.

Convergent evidence across partial anchors

When multiple partial empirical anchors exist, convergence strengthens confidence. Researchers validating simulations of political-attitude formation might triangulate across cross-sectional surveys, longitudinal studies, and experimental findings on social influence. Divergence patterns prove equally informative: If a simulation matches cross-sectional distributions but fails to capture longitudinal change trajectories, this reveals specific limitations—the model may capture stable individual differences but miss dynamic attitude-formation processes. Such targeted divergences guide refinement more effectively than wholesale success or failure.

Stress-testing simulation limits

Systematic parameter manipulation provides diagnostic evidence of whether performance degrades in psychologically plausible ways. Does response quality decline gradually with task complexity, showing signatures of limited cognitive resources, or does the model maintain perfect performance until suddenly producing incoherent output? When encountering ambiguity, do models show increased variability and heuristic reliance, or do they confidently generate responses regardless of domain—revealing a lack of metacognitive awareness (Bowers et al., 2025)?

This discriminant-validity approach defines conditions under which simulation findings warrant psychological interpretation. Transparent reporting of limits—what the simulation can and cannot do, how it fails, cases in which inferences remain valid—is as essential as documenting successes.

Generative validation (when human data cannot be obtained)

Complex agentic simulations often resist direct validation. Consider simulating discrimination emergence across a multiagent social network: No existing data set provides equivalent temporal resolution, behavioral granularity, and experimental control, and creating one might require ethically problematic manipulations. Yet such simulations offer precisely the value justifying their development—exploring processes elusive to direct empirical investigation. Validation proceeds through three complementary strategies: assessing theoretical coherence, examining emergent phenomena, and soliciting expert evaluation.

Theoretical coherence

Does agent behavior align with established psychological mechanisms? A discrimination simulation should exhibit patterns consistent with social-identity theory, contact hypothesis, or stereotype-formation research—reduced bias following positive intergroup contact, increased bias under intergroup competition, or stereotype persistence despite disconfirming evidence. Theoretical incoherence (e.g., bias emerging without perceived threat, competition, or in-group identification) signals simulation failure rather than novel discovery. The simulation’s value lies in integrating known mechanisms to explore their interaction over time, not in producing theoretically arbitrary outcomes.

Emergent phenomena

Do expected higher-order patterns arise from lower-level interactions without explicit programming? In-group favoritism should emerge from individual biases and selective interaction, attitude polarization should emerge from homophily and confirmation bias, and social-norm formation should emerge from observation and conformity pressures. J. S. Park et al. (2023) demonstrated this: 25 agents autonomously spread party invitations, forged relationships, and coordinated events through emergent social dynamics rather than scripted behaviors. The presence of such emergent properties provides indirect validation that the simulation captures relevant psychological dynamics.

Expert evaluation

Domain specialists can assess qualitative plausibility—whether agent behaviors, interaction patterns, and developmental trajectories match theoretical expectations and ethnographic knowledge. Experts can identify failures of verisimilitude: discrimination emerging instantaneously, social networks forming without homophily constraints, or attitude change occurring without exposure to alternative views.

Positive and negative controls

Across all three validation scenarios, researchers should establish validation boundaries through systematic control conditions. Positive controls are conditions in which an effect is expected. In this context, they comprise tasks in which LLMs should perform competently given their training, such as making grammatical acceptability judgments or demonstrating basic textual reasoning. Success on these tasks confirms that the experimental setup (prompts, parameters) is sound and can detect a known capability. Failure, conversely, suggests an implementation confound—such as a poorly specified prompt or an inappropriate sampling strategy—rather than a fundamental model limitation.

Conversely, negative controls are conditions in which no effect is expected. For LLM simulations, these are tasks that tap into capabilities models architecturally lack, such as making proprioceptive judgments or describing phenomenal experiences. An LLM should fail these controls. Unexpected success signals that the task was ill-posed—solvable through text-based reasoning alone—or that the model has learned to mimic competence through superficial linguistic patterns without capturing the underlying mechanism (Liu & Ding, 2025).

Systematic application of control conditions calibrates validation expectations (Fig. 1), preventing both overinterpretation (mistaking superficial alignment for deep equivalence) and underinterpretation (mistaking implementation failures for fundamental limitations).

Interpreting LLM-human discrepancies

When LLM-human differences emerge, a diagnostic framework is needed to distinguish implementation confounds (Fig. 1, Stage 2) from training-data artifacts and architectural differences (Fig. 1, Stage 3).

Implementation confounds

Methodological choices can artificially inflate or deflate observed similarities. Sampling strategy shapes variance structure. Generating 1,000 responses from a single LLM captures within-models stochasticity, not the between-persons variance of human populations. Consequently, even well-calibrated simulations exhibit compressed variance unless researchers explicitly model individual differences through diverse psychological profiles (Chuang et al., 2024) or full agentic frameworks (J. S. Park et al., 2023).

Prompt choices create additional confounds. Simple demographic prompts (“You are a 35-year-old woman from Chicago”) may elicit stereotypical responses not because models cannot capture human psychology but because prompts fail to specify the psychological richness guiding actual human responses (Chuang et al., 2024; Moon et al., 2024). Elicitation format also matters: Forcing constrained numerical outputs (Likert ratings, numerical scales) may compress variance and regress to the mean, whereas eliciting free-text responses and projecting them onto rating scales via semantic similarity may recover more faithful distributions (Maier et al., 2025). This reflects a general principle: Probe LLMs through their linguistic capabilities rather than imposing response formats designed for human participants.

Temperature settings and other parameters similarly constrain interpretations: Low temperature measures the model’s most probable response, and higher temperature explores its learned distribution. Comparing low-temperature LLM outputs with human data conflates the modal response with distributional coverage.

Training-data artifacts

Temporal displacement represents a critical consideration. Models trained on historical data cannot reflect recent societal changes, emerging social movements, or evolving attitudes. For stable psychological phenomena (basic cognitive processes, fundamental emotional responses), temporal displacement may be negligible; for rapidly changing domains (social attitudes, technology adoption, political opinions), this gap fundamentally limits validity. Researchers should document model-training dates, implement contemporaneous validation for temporally sensitive domains, and consider temporal displacement when interpreting unexpected results. Representational biases in the training corpus (WEIRD overrepresentation, demographic skews) may also account for systematic deviations.

Architectural differences

Some discrepancies stem from fundamental constraints: lack of embodiment and sensorimotor grounding, and the gap between statistical pattern learning and experiential knowledge acquisition (Pezzulo et al., 2024). These limitations become particularly salient when phenomena require physical sensation, emotional arousal, or lived experience.

Conversely, the absence of LLM-human differences requires scrutiny. Superficial alignment—matching human means but not distributions or succeeding on simple tasks but failing on diagnostic variants—can mask deeper divergences, such as in response variance (P. S. Park et al., 2024). Robust validation demands convergent evidence: alignment across multiple metrics (means, variances, sequential dependencies), sensitivity to theoretically relevant manipulations, and stability across implementation and temporal contexts.

This diagnostic stance treats LLM simulations as scientific instruments requiring calibration. Implementation choices are parameters to be systematically varied, with result patterns revealing which phenomena are robust properties of learned representations versus artifacts of probing methods. Transparent reporting (Fig. 1, Stage 4) demands documenting implementation choices and confound checks while matching empirical claims to validation tier achieved.

Boundaries and limitations

Despite these advances, LLM role simulations face inherent limitations. The absence of embodied experience creates challenges for phenomena tied to physical sensation, emotional arousal, or lived experience. Although humans describe pain, emotion, and trauma through language—making these experiences partially accessible to text-based modeling—current LLMs lack the sensorimotor grounding that shapes how humans represent and reason about such experiences. More critically, validation becomes increasingly difficult as phenomena shift from those with clear behavioral or linguistic signatures (beliefs, stated preferences) to those rooted in subjective experience (qualia, proprioception, affective intensity). This limitation reflects not an absolute boundary but a gradient of tractability, with experientially grounded phenomena presenting steeper validation challenges.

The statistical nature of LLM responses enables capturing population-level patterns but may miss outliers, unique individual perspectives, or responses that deviate from typical training-data patterns (P. S. Park et al., 2024; A. Wang et al., 2025; Zaim bin Ahmad & Takemoto, 2025). LLMs excel at simulating modal responses but may fail to capture the full range of human psychological diversity—particularly problematic when studying individual differences, personality extremes, or rare psychological phenomena.

Cultural and demographic biases in training data create additional constraints. Overrepresentation of WEIRD populations and publicly expressive individuals means that simulations of marginalized groups or non-Western cultures require particular caution (Tao et al., 2024). For example, ChatGPT achieves higher simulation accuracy for male, White, older, highly educated, and upper-class personas and underperforms for others (Qu & Wang, 2024); it consistently portrays racial minorities as more homogeneous than White Americans (Lee et al., 2024); and it pervasively represents women as younger than men—a statistical bias that can diverge from workforce demographics (Guilbeault et al., 2025). These biases can amplify through statistical optimization (Z. Wang et al., 2024), compressing diverse experiences into narrow characterizations. Furthermore, LLMs show bias against null findings and inflate effect sizes: When replicating studies finding no significant effect, LLMs produced significant results in the vast majority of cases; for known effects, LLMs consistently generated larger effects than human participants (Cui et al., 2025).

These limitations underscore treating LLM simulations as hypothesis-generating tools requiring validation with human samples. Successful role simulation requires moving beyond simple demographic prompting to psychologically grounded approaches that acknowledge both model capabilities and fundamental constraints. Whether studying rare populations, prototyping interventions, or exploring complex social dynamics, researchers should maintain clear boundaries between simulation and substitution—using LLMs as tools for discovery rather than endpoints for inference.

Using Language Models to Model Cognitive Processes

Beyond simulating human roles and personas, LLMs offer a second avenue for psychological research: investigating cognitive mechanisms through their internal workings. This approach shifts the question from whether LLMs produce human-like outputs to what their computational processes reveal about cognition itself.

Theoretical foundations for cognitive modeling

Computational models for understanding cognition trace back to early AI and cognitive architectures (McGrath et al., 2024; Simon, 1983; van Rooij et al., 2024). LLMs are particularly compelling as cognitive models because they acquire complex linguistic knowledge through learning processes that, although distinct from human development, produce representations that often aligns with human cognitive structures.

First, these models develop internal representations corresponding to meaningful linguistic and conceptual categories without explicit programming. Different layers capture different levels of linguistic abstraction, from surface-level syntactic features in early layers to complex semantic relationships in later ones (Manning et al., 2020; Tenney et al., 2019). This hierarchical organization mirrors theories of language processing, suggesting computational principles transcending specific implementations (Liu et al., 2025). Fine-tuning a language model on human behavioral data both improves choice prediction and aligns internal representations with human neural activity without exposure to neural data during training (Binz et al., 2025).

Second, LLMs exhibit emergent behaviors arising from simple learning rules interacting with complex data (Wei et al., 2022)—paralleling how human cognitive abilities develop from basic neural mechanisms interacting with rich environmental input. Although specific learning algorithms differ (backpropagation in LLMs vs. the constellation of mechanisms supporting human learning), both systems demonstrate how sophisticated capabilities can emerge from simple foundations when exposed to structured information (Binz & Schulz, 2023a; Frank, 2023; Shah & Varma, 2025).

Third, alignment between model representations and neural activity follows a scaling law: As models grow larger, their internal representations—specifically, attention patterns—become better predictors of human brain activity and eye movements during naturalistic reading (Gao et al., 2025). Critically, this improvement stems from scale itself rather than instruction tuning: Base models of increasing size (7 billion to 65 billion parameters) show monotonically improving alignment with human functional-MRI data and regressive saccade patterns, whereas instruction-tuned variants of identical size show no advantage. The effect generalizes across languages (English and Chinese) and modalities (reading and listening), suggesting that scaling produces representations that are computationally more analogous to those underlying human language processing.

Fourth, the success of LLMs in capturing human-like performance suggests that they may have discovered computational solutions to problems that biological systems also face (Buckner, 2023). These convergent solutions manifest across multiple domains: unsupervised or self-supervised learning during pretraining (Manning et al., 2020), in-context learning, domain-general computations, and human-level performance on challenging tasks. When LLMs learn to track long-distance dependencies or resolve ambiguous pronouns, they develop mechanisms for maintaining and manipulating information over time—challenges that human cognitive systems also confront (Ambridge & Blything, 2024; Blank, 2023; Millière, 2024). Studying how LLMs solve these problems provides insights into computational requirements of cognition and potential mechanisms for meeting them (Lindsay, 2024).

Importantly, the investigation of LLMs as cognitive models depends on the transparency afforded by open-weight models (Zhang et al., 2022), allowing researchers to directly probe, intervene, observe, and measure model behaviors (Frank, 2023; McGrath et al., 2024). Measures fall into two categories—correlational and causal, detailed below—enabling researchers to understand how neural networks process information.

Correlational approaches to probing model cognition

Correlational methods comprise two major classes: internal probing and output analysis.

Internal probing

Probing involves training auxiliary classifiers on model internal states to predict specific properties of interest (Belinkov, 2022). For example, a classifier trained on activation patterns from a particular layer can determine whether that layer encodes syntactic information (e.g., part-of-speech tags) or semantic information (e.g., animacy). Probe performance reveals what information is represented at different processing stages, mapping information flow through the network (Manning et al., 2020; Tenney et al., 2019).

However, probing has important limitations. High probe accuracy does not necessarily mean the model uses that information functionally—the probe might detect incidental correlations rather than causally relevant features. Conversely, low probe accuracy does not prove information absence; it might simply be encoded in a format the probe cannot detect. These limitations have motivated more sophisticated approaches that combine probing with causal intervention (discussed next).

Behavioral analysis

Behavioral analysis focuses on systematic patterns in model outputs rather than internal representations. By carefully designing stimulus sets that isolate specific cognitive phenomena, researchers can test whether models exhibit human-like processing signatures: semantic priming (Jumelet et al., 2024), garden-path effects (Amouyal et al., 2025), and structural biases in ambiguity resolution. Liu and Ding (2025) developed a one-shot word-deletion task in which the deletion rule was deliberately ambiguous; both humans and LLMs consistently inferred rules based on syntactic structure, preferring to delete whole constituents rather than arbitrary word strings—revealing not just passive structural representation but also its active deployment in resolving uncertainty.

Behavioral analysis reveals functional similarities and differences between human and model cognition. When models show human-like patterns, it suggests they have discovered similar computational solutions despite different implementations. When they diverge, this highlights either model limitations or interesting differences in how artificial and biological systems process information. This comparative approach proves particularly valuable for understanding syntactic processing, semantic comprehension, and pragmatic inference.

Causal intervention and mechanistic understanding

Whereas probing and behavioral analyses reveal correlational patterns, establishing mechanistic understanding requires causal-intervention techniques. Inspired by lesion studies in neuroscience, these methods enable researchers to directly link model components to behaviors through targeted manipulations—selectively modifying or disabling model parts to observe resulting changes. Unlike biological systems, LLMs permit precise, reversible interventions impossible or unethical in human studies.

Model editing

Meng et al. (2022) investigated factual knowledge storage in GPT-like LLMs. Performing causal-mediation interventions on hidden-state activations across model components, they identified middle-layer feed-forward modules as the critical locus of factual associations. They then demonstrated that individual facts—such as updating “The Space Needle is in Seattle” to “The Space Needle is in Paris”—can be reliably edited by applying targeted rank-one updates to corresponding feed-forward weights (rank-one model editing). This mechanistic insight reveals how models store factual information and illuminates the computational structure underlying knowledge representation.

Activation patching

Building on causal-intervention techniques, activation patching (causal tracing) offers a more precise tool for understanding information flow through networks (Heimersheim & Nanda, 2024). Researchers can take activation patterns from one context—say, when the model correctly answers “The capital of France is Paris”—and surgically insert them into another context in which the model processes a different question. By systematically swapping these activation patterns at different locations in the network, researchers can trace exactly which pathways carry specific information.

This technique has yielded insights into how models learn from examples. When given a prompt such as “Cat→Gato, Dog→Perro, House→?,” models infer that they should translate to Spanish and respond “Casa.” Activation patching revealed that specific components—called “induction heads”—detect these pattern mappings and copy relevant behavior, implementing a learned “find-and-apply-pattern” operation (Olsson et al., 2022). This shows how models adapt their behavior based on context (in-context learning), a capability that emerges from training despite never being explicitly programmed.

These causal techniques offer unique advantages for cognitive modeling. Precise reversible interventions enable strong causal inferences about the relationship between representations and behaviors. Combined with behavioral analyses showing human-like performance patterns, these techniques provide converging evidence for shared computational principles.

Learning dynamics and developmental analogies

One of the most intriguing applications of LLMs as cognitive models involves studying how cognitive abilities emerge through learning. By analyzing models at different training stages or comparing models trained on different data, researchers can investigate how exposure to linguistic information shapes cognitive capabilities. This developmental perspective offers insights into the relationship between experience and cognitive structure.

Inspired by comparative psychology, the controlled-rearing approach trains models on carefully constructed data sets to test specific hypotheses about learning. Just as manipulating newborn chicks’ visual experiences (e.g., slow or fast object motion) reveals core learning algorithms supporting object perception, manipulating inputs in language models can test which specific input types are necessary for learning.

Misra and Mahowald (2024) showcased this approach in their study of syntactic generalization. They trained transformer language models on systematically manipulated corpora: a default corpus, one with all AANN (Article + Adjective + Numeral + Noun; e.g., “a beautiful five days”) sentences removed, and others in which AANNs were replaced by perturbed variants (ANAN, NAAN). Models trained without any AANN examples but still exposed to related constructions (e.g., “a few days”) generalized to novel AANN instances at well above chance levels, and those trained on corrupted variants did not. This finding demonstrates that language models can learn rare grammatical phenomena by bootstrapping from more frequent, related structures, providing computational support for theories of language acquisition.

This approach extends naturally to cross-linguistic and cross-cultural investigations. By training models on corpora from different languages or cultural contexts, researchers can explore how linguistic and cultural environment shapes cognitive representations. Multilingual models show that internal representations systematically vary by language pair—transfer is strongest between typologically similar languages—and that models develop language-specific and shared circuits for syntax and semantics (Muller et al., 2021; Pires et al., 2019).

The temporal dynamics of learning in LLMs also provide insights into cognitive development. Early in training, models rely on simple, surface-level heuristics (n-gram-like predictions) before gradually forming deeper, hierarchical representations—phases resembling developmental trajectories in children (e.g., progression from lexical to syntactic competence; Choshen et al., 2022; Evanson et al., 2023). Although LLM training unfolds on vastly different timescales and with different mechanisms, these parallels suggest general principles about how complex cognitive abilities emerge from simpler foundations through interaction with structured input.

Multimodal extensions and embodied cognition

Although language models have proven valuable for understanding linguistic phenomena, their utility extends beyond language processing. When combined with vision systems—as in vision-language models—they serve as models for visual perception, memory, and other cognitive processes, complementing traditional artificial neural networks in modeling the mind and brain (Kanwisher et al., 2023; Wicke & Wachowiak, 2024). This expansion addresses a fundamental question in cognitive science: How do cognitive systems integrate information across multiple modalities?

Multimodal models processing both language and vision provide new opportunities to explore this integration. Models such as CLIP (contrastive language-image pretraining) and its successors learn to align representations across modalities by training on image-caption pairs, creating unified embeddings that capture both visual and linguistic information.

The cognitive relevance of these multimodal representations becomes apparent when examining their predictive power. A. Y. Wang et al. (2023) found that CLIP’s joint vision-language representations explained up to 79% of variance in high-level visual cortex, outperforming vision-only models (ImageNet-trained ResNet50) and text-only models (BERT)—especially in regions linked to scene and human-object interactions (parahippocampal place area, extrastriate body area, temporoparietal junction). Likewise, Shoham et al. (2024) showed that CLIP embeddings predict human similarity judgments in pairwise rating tasks—when participants rated the visual similarity of familiar faces and objects presented as images (perception) or reconstructed from names (recall)—significantly better than purely visual (VGG-16) or purely semantic (SGPT) models.

These findings suggest that joint training on images and natural language produces embeddings that better approximate how biological systems integrate multimodal information, highlighting how computational models with natural language supervision can reveal principles of cognitive organization difficult to uncover with traditional approaches.

Limitations and interpretive challenges

Fundamental differences between artificial and biological systems create both opportunities and constraints for cognitive modeling. Understanding these limitations is crucial for drawing appropriate inferences from model studies (Cuskley et al., 2024; Lin, 2025b; Shah & Varma, 2025; van Rooij & Guest, 2025).

Scale disparity

LLMs are exposed to far more linguistic data than any human encounters, potentially discovering statistical patterns that play no role in human cognition. This raises questions about whether model mechanisms reflect human-like solutions or alternative strategies enabled by massive data exposure. Researchers must carefully consider whether observed mechanisms could plausibly operate given human-scale learning constraints.

Architectural differences and circular inference

The core challenge stems from inductive biases: Architectural priors; training objectives (e.g., next-token prediction), and statistical regularities in data can jointly produce behaviors that mimic cognitive phenomena without sharing underlying mechanisms. This creates a risk of circular inference in which a model appears to validate a theory simply because its design reflects similar assumptions—producing theory-consistent behaviors through mechanisms that differ from those proposed for human cognition. Researchers can mitigate this risk by testing whether effects replicate across different model families or using mechanistic interventions to distinguish genuine cognitive alignment from learned statistical shortcuts. However, such strategies are often constrained by the current landscape, in which many state-of-the-art models share similar architectures (e.g., transformers), objectives, and training regimes, limiting the extent to which they can adjudicate between competing cognitive theories. Yet this limitation frames a potential advantage: By manipulating these architectures, researchers can dissociate implementation from function, isolating the necessary principles of intelligence.

Lack of grounding and embodiment

This represents perhaps the most fundamental limitation for cognitive modeling. Human cognition develops through interaction with the physical and social world, shaping representations that purely linguistic exposure cannot replicate. This limitation particularly affects spatial reasoning, social cognition, and affective processing, in which embodied experience plays crucial roles. Even multimodal models, such as CLIP—lacking sensorimotor interaction, temporal continuity, and physical grounding—cannot capture cognitive phenomena rooted in bodily experience (e.g., real-world spatial reasoning, tool use, affective dynamics), suggesting a major frontier for future work in grounded, multimodal cognitive modeling.

Future directions and methodological recommendations

The next phase of LLM-based cognitive modeling requires greater transparency and deeper integration. Open-weight architectures and conceptual frameworks that connect internal representations (activations or attention patterns) to cognitive constructs will be essential. These tools can catalyze collaboration with cognitive neuroscience: Model activations can generate testable hypotheses about neural computation, and empirical data can guide model refinement.

Systematic comparisons across architectures, training regimes, and model scales will help disentangle general computational principles from implementation-specific artifacts. This comparative approach echoes strategies in cognitive neuroscience, in which convergent evidence across methods strengthens theoretical claims.

Hybrid modeling approaches hold great promise. By combining LLMs with reinforcement-learning agents, embodied simulations, or complementary cognitive frameworks, researchers can better capture cognitive processes rooted in perception, action, and decision-making—domains in which purely linguistic models fall short. This could involve pairing large models with deliberately minimalist frameworks designed for mechanistic transparency. For example, “tiny” recurrent neural networks can be trained on behavioral data to discover underlying cognitive algorithms, which are then rendered interpretable through dynamical-systems analysis (Ji-An et al., 2025).

Methodologically, researchers should clearly articulate theoretical commitments, specifying which cognitive phenomena they aim to model and why LLMs are suitable tools. A multimethod strategy—probing analyses, behavioral-style assays, and causal interventions—can build convergent validity, and transparent reporting of scope and limitations can mitigate overinterpretation.

Ultimately, the field will progress not by asking whether LLMs are “like” human minds but by treating them as experimental systems: manipulable platforms for uncovering the algorithmic principles underlying intelligent behavior. In this role, LLMs can complement traditional approaches and illuminate new dimensions of mind and cognition.

Ethical Considerations Beyond Traditional Institutional Review Boards

The use of LLMs in psychological research raises ethical questions that extend beyond traditional human-subjects protections. Although LLMs are not sentient beings requiring protection from harm, their use in simulating human responses creates novel ethical challenges.

The representation problem

LLMs are trained on vast corpora scraped from the internet, transforming individual expressions into statistical patterns for research use—a purpose far removed from their original context (Longpre et al., 2024). This raises questions about representation and consent that traditional institutional-review-board (IRB) frameworks cannot address.

When one prompts an LLM to simulate responses from specific populations, one implicitly claims the model represents that group. Yet training data inevitably contain biases: Marginalized communities may be underrepresented, their perspectives filtered through others’ descriptions rather than direct expression. This creates risks of epistemic injustice whereby already marginalized voices are further silenced through computational mediation (A. Wang et al., 2025).

The challenge is compounded by bias amplification. LLMs not only reflect training-data biases but can also systematically amplify them through statistical optimization (Z. Wang et al., 2024). As noted earlier, models often achieve higher simulation accuracy for dominant demographic groups while compressing diverse human experiences into narrow, homogeneous characterizations (Lee et al., 2024; Qu & Wang, 2024).

Simulation also risks indirect harm by misrepresenting vulnerable groups, such as children, individuals with mental-health conditions, and trauma survivors (Y. Wang et al., 2024). Inaccurate portrayals might perpetuate stigma, and simulating trauma responses without survivor input can trivialize their experiences. Even careful simulations may feel appropriative to communities who have long struggled for direct representation.

Practical ethical guidelines

Given these challenges, researchers using LLMs for psychological simulation should adopt specific ethical practices.

Transparency requirements

Document key aspects of LLM use: model versions, prompts, parameters, and validation procedures (Lin, 2024b). Clearly indicate when findings derive from simulations and acknowledge limitations prominently—especially for closed-source models (Hussain et al., 2024). Monitor model updates because they can silently alter behaviors, potentially invalidating previous results.

Representation auditing

Before simulating any population, critically examine whether the model can credibly represent that group. Actively identify and mitigate biases through testing for stereotypical responses, examining response diversity, and validating against contemporary community data. For marginalized or vulnerable populations, collaborate with community members to design prompts, interpret outputs, and determine appropriate-use boundaries.

Appropriate-use boundaries

Clearly establish when LLM simulation is appropriate. Simulation can be justified for initial exploration or hypothesis generation when participant recruitment is challenging, but subsequent validation with actual community members is essential. Efficiency gained through simulation must never justify bypassing communities whose experiences researchers seek to understand.

Community engagement

Involve community members in research design and validation, ensuring their perspectives shape methodological choices and help identify potential harms.

Institutional responsibilities

Traditional IRB frameworks focus on protecting individual human subjects from direct harm. LLM research raises additional ethical considerations—concerning collective representation, potential indirect harms through misrepresentation, and implications of substituting computational models for human voices—that extend beyond standard IRB purview but require institutional attention.

Rather than expanding IRB scope, institutions should foster ethical research through complementary mechanisms: developing methodological best practices for LLM simulation research, facilitating access to open-weight models and computational resources to enhance transparency and reproducibility, and establishing peer-review standards that evaluate appropriate use of simulation versus human participation. When LLM research does involve human subjects—whether through validation studies or when training data contain identifiable information—traditional IRB review applies as usual, focusing on informed consent, privacy protection, and minimizing foreseeable harm to participants.

Concluding Remarks

As new tools for psychological science, LLMs are complex systems demanding new methodological vigilance. They are best understood as among the most complex, manipulable, and potentially insightful scientific instruments yet devised for exploring human thought and behavior. In this article, I have provided guidelines grounded in two distinct applications. Using LLMs for persona simulation requires researchers to grapple with temporal displacement and ethical representation, moving past surface-level prompting to psychologically grounded validation. Treating LLMs as cognitive models compels a shift from observing behavioral mimicry to probing mechanistic understanding through causal interventions that test the architecture of learned abilities.

The path forward requires a dual commitment. Psychologists must develop fluency with the technical particulars of model architecture, training, and validation, and the AI community must foster deeper engagement with the complexities of human cognition and the nuances of empirical research. Open-weight models and transparent reporting are essential for this cross-disciplinary work. The unique strengths of LLMs in scalability, experimental control, and counterfactual reasoning promise to accelerate discovery. But the rigor of the science researchers build with them will depend on the skill and care with which they learn to use them.

Footnotes

Acknowledgements

I thank Gati Aher, Michael Bernstein, Danica Dillion, Nancy Fulda, Nicholas Laskowski, Paweł Niszczota, Philipp Schoenegger, Lindia Tjuatja, Lukasz Walasek, and David Wingate for comments on early drafts. I used Claude Sonnet 4.5 and Gemini 2.5 Pro for proofreading the article, following the prompts described in Lin (2025c).

Transparency

Action Editor: David A. Sbarra

Editor: David A. Sbarra

Author Contributions