Abstract

In 2013, the Center for Open Science proposed that journal articles be awarded “badges” for engaging in open-science practices, including preregistration. In 2015, the Transparency and Openness Promotion (TOP) guidelines (TOP 2015) promoted preregistration of studies and analysis plans. Since then, the term “preregistration” has been used to describe different research outputs created at different times—sometimes, but not always, including study registration. Following a review of evidence about TOP 2015 implementation, including evidence that adherence could not be rated reliably, the TOP Guidelines Advisory Board updated these guidelines (TOP 2025). The TOP 2025 guidelines no longer use the term “preregistration.” Instead, TOP 2025 disambiguates specific research outputs, such as registrations, study protocols, analysis plans, code, and other research materials. TOP 2025 also explains that researchers should describe the time at which outputs are created and shared in relation to key study activities. In this article, we explain why adopting the terminology used in TOP 2025 and describing the times at which specific research outputs are created and shared will enhance understanding and support better implementation and reporting of open science.

Preregistration has been proposed as a solution to concerns about the credibility of psychological research (Nosek et al., 2018), but researchers do not consistently describe the specific output that constitutes a preregistration or its timing. In 2013, the Center for Open Science proposed that journal articles be awarded “badges” for engaging in open-science practices, including preregistration (Center for Open Science, 2013; Eich, 2014). Subsequently, the Transparency and Openness Promotion (TOP) guidelines (TOP 2015) promoted “preregistration of studies” and “preregistration of analysis plans” (Nosek et al., 2015). Since then, the term “preregistration” has been used to describe various outputs, including study designs entered in databases, analysis plans entered in databases, study protocols and analysis plans published in journal articles, and analytic code shared on websites. The term “preregistration” has also been used to describe sharing these outputs at different times, such as before data have been collected, before data have been observed, before data have been analyzed, and even after analyses have been conducted.

We argue that jingle-jangle—using the same terms to denote different practices (Thorndike, 1904) and using different terms to denote the same practices (Kelley, 1927)—creates confusion and hinders efforts to implement open science, report research transparently, and conduct metaresearch (Rice & Moher, 2019). In addition to confusion among social and behavioral scientists, jingle-jangle might produce confusion in multidisciplinary teams that use terms differently, and jingle-jangle might lead to misunderstandings when social and behavioral scientists submit manuscripts to multidisciplinary journals. Notably, the updated TOP guidelines (TOP 2025) no longer use the term “preregistration.” The TOP Guidelines Advisory Board reviewed evidence about implementation of TOP 2015 (Grant et al., 2023; Naaman et al., 2023; The TOP Statement Working Group, 2024), approaches to rating adherence to TOP 2015 (Mayo-Wilson et al., 2021), and evidence that adherence to some TOP 2015 standards could not be rated reliably (Kianersi et al., 2023; Toomey et al., 2024). The board determined that more precise guidance would facilitate better implementation and reporting. Consequently, TOP 2025 disambiguates multiple research outputs, and it calls on researchers to explain whether and when each output was created and shared in relation to other study activities (Grant et al., 2025). Reducing complexity and increasing consistency might promote better understanding and implementation of open science.

In this article, we identify three problems and make four recommendations. The problems are that the term “preregistration” is used to describe a variety of research outputs, the term “preregistration” is used to describe research practices occurring at many different times, and the related term “Registered Report” conflates multiple research outputs and publication processes. To address these problems, we recommend that researchers use the term “registration” to describe entering information in a register, disambiguate specific research outputs, describe when specific outputs are created and shared in relation to key study activities, and disambiguate peer-review and publication processes from research outputs. We explain why the term “preregistration” does not always describe specific outputs (e.g., registrations, study protocols, and statistical-analysis plans [SAPs]) adequately (Box 1). Throughout the article, we provide examples of how timing can be described in relation to different activities in different types of studies. We explain that researchers have different reasons for engaging in open-science practices, so they should not assume that other researchers will infer their reasons for engaging in open science correctly, and researchers should avoid using ambiguous terms that might not be interpreted as intended. Instead, researchers should use precise terms that describe specific research outputs and times.

Box 1: Definitions of Key Terms

These items might be updated before or after a study commences to reflect changes to study plans. For guidance about linking these outputs and suggestions for documenting the timing of their creation and modification, see the recommendations below.

Problem 1: The Term “Preregistration” Is Used to Describe a Variety of Research Outputs

Researchers have described a variety of outputs as preregistrations. Some researchers define a preregistration as entering specified information in a “repository” (Center for Open Science, 2013). Others agree about the importance of sharing information publicly; however, they do not always explain where preregistrations must be shared (van ‘t Veer & Giner-Sorolla, 2016). Some researchers define publishing a journal article as a form of preregistration (Stewart et al., 2023). Disagreements about the research output that constitutes a preregistration relate to differences across study types, different concerns about research, and different goals.

By comparison, the term “registration” is relatively unambiguous across study designs and disciplines. For example, ClinicalTrials.gov, PROSPERO, and OSF Registries vary in content and structure, but records in these databases are commonly described as registrations. Because they focus on different study designs, their minimum data requirements differ. ClinicalTrials.gov has requirements that are appropriate for trials, and PROSPERO has requirements for systematic reviews. OSF Registries offers a variety of templates for different types of research (see Appendix 1 in the Supplemental Material available online). Yet they are all searchable databases in which researchers can register studies and search records free of charge.

Researchers disagree about whether preregistration applies to only hypothesis-testing research or many types of research. Researchers interested in hypothesis-testing research have emphasized the importance of knowing whether hypotheses preceded or followed a study (Nosek et al., 2018). They have argued that the purpose of preregistration is “to allow others to transparently evaluate the capacity of a test to falsify a prediction” (Lakens, 2019, p. 222). Although nonhypothesis-testing research might also be preregistered, they have argued that disagreements about the purpose and definition of “preregistration” have arisen because researchers fail to “distinguish positive externalities of preregistration from the goals of preregistration” (Lakens, 2019, p. 223). Researchers who use the term “preregistration” for many study designs (Nosek et al., 2018), including qualitative research (Haven et al., 2020), do not necessarily view other goals and benefits as “externalities.” If one takes the extreme view that the only purpose of preregistration is to limit (or identify) multiple hypothesis testing, then one might argue that preregistration is not important for randomized clinical trials designed for estimation rather than hypothesis testing (Hernan & Greenland, 2024). A more balanced position might be that stating hypotheses is important for some research and that researchers can disclose the (planned) existence of diverse study designs with diverse purposes by entering information in registers, which can be described unambiguously and consistently as “registration.”

Researchers disagree about whether preregistration requires that study details be entered in a register, and they disagree about whether entering study details in a register is sufficient to constitute preregistration. Some researchers have equated trial registration (e.g., in ClinicalTrials.gov) with preregistration (Krypotos et al., 2022; Munafò et al., 2017), whereas others have argued that additional practices are needed to constitute preregistration, such as documenting research processes through open-lab notebooks (Lakens, 2019) or including analysis plans (Lakens et al., 2024). The relationship between SAPs and statistical code further complicates definitions. Advances in Methods and Practices in Psychological Science (AMPPS) asks authors whether they have preregistered “data analysis scripts/code,” which conflates entering study information in a register with sharing code, a practice often performed on websites, such as GitHub. Even when authors agree that preregistration denotes information in a register, they do not agree which registries are acceptable. For example, some researchers recommend the website AsPredicted.org. By many common definitions, AsPredicted is not a register. It is a website managed by a single research group that lacks features that many researchers and organizations consider essential, such as governance and permanence (Box 2).

Box 2: Minimum Features of Registrations and Registries for Health Research

Source: Adapted from the World Health Organization (2024b) Registry Criteria.

Researchers sometimes disagree because they define preregistration by the objectives they hope to achieve rather than as a precise action or research object. This causes confusion because different research outputs might or might not achieve the same goal. For example, if one’s goal is to evaluate claims in a single journal article, then it might not matter whether those claims can be checked against a registry entry or against a study protocol published as a preprint or as a journal article before the start of data collection. Any time-stamped record might allow a peer reviewer to check whether the planned outcomes are reported in a manuscript undergoing review. By contrast, a researcher conducting a meta-analysis to inform clinical guidelines might find structured information in a registry most useful for identifying completed and ongoing studies.

Finally, inconsistent definitions contribute to confusion about which practices have been done. For example, Nosek et al. (2018) described “preregistration” on ClinicalTrials.gov as “usually accomplished by posting the analysis plan.” In fact, only 6% of all records posted by July 12, 2024, included a SAP (31,009 of 501,160), which was usually posted after study completion.

Problem 2: The Term “Preregistration” Is Used to Describe Research Practices Occurring at Many Different Times

The term “preregistration” implies that registration occurs before some event, but there is no single event that preregistration could precede across all study designs. Furthermore, heuristics lack the precision needed for scientific communication and policies. In practice, researchers use the term “preregistration” to describe different times for different types of studies and to describe different times for the same type of studies. AMPPS’s submission guidelines acknowledge this ambiguity by asking authors, “When did you complete the preregistration?”

The term “preregistration” is problematic because not all study designs can be registered at the same time in relation to key study events. Heuristics about when preregistration should occur, such as “before the start of the study,” are difficult to apply consistently. For example, some definitions allow for preregistering systematic reviews and meta-analyses (Quintana, 2015). When is the start of a meta-analysis that includes studies the authors read before deciding to conduct the meta-analysis? Other researchers define preregistration as occurring before accessing the data that will be analyzed (Krypotos et al., 2022; Lakens et al., 2024). The Peer Community in Registered Reports (n.d.) framework describes levels of bias that are defined, in part, by whether data exist before in-principle acceptance. Perhaps the most restrictive definition is that “when data already exist, preregistration is only possible when access to the data are restricted” (Lakens et al., 2024, p. 3).

Beyond being limited to hypothesis-testing research, frameworks for describing when all research should preregister tend to oversimplify the ways research can be conducted. They make assumptions about the relationship between accessing data and knowledge of data that are not always true. For example, researchers who use large, publicly available data sets have often read previous analyses of those data, so naive “prediction” is impossible. Researchers using the National Health and Nutrition Examination Survey (NHANES) are likely to have read previous NHANES studies, so it would be misleading to imply that study design preceded knowledge of the data simply because the researchers had never accessed the data themselves. Even when data do not exist, it might be misleading to suggest researchers are “predicting” what they will observe. For example, a researcher could conduct multiple analyses using Medicare claims collected in 2025 and then create a “preregistration” describing plans to conduct, using the claims to be collected in 2026, only those analyses that yielded statistically significant results in the 2025 claims. Because the 2026 data will be generated much like the 2025 data, many relationships present in 2025 could be “predicted” to appear in 2026. On the other hand, data access can be insufficient to allow researchers to test hypotheses. For example, some studies link data from multiple databases. Before linkage, data access alone might be insufficient for data analysis.

Even for a single-study design, preregistration might refer to different times. Authors who focus on hypothesis-testing research have distinguished “prediction” from “postdiction” (Nosek et al., 2018). To minimize bias, they encourage researchers to disclose plans as early as possible, and they have developed simplified frameworks to describe risk of bias associated with registering studies at different times (Peer Community in Registered Reports, n.d.). Because randomized clinical trials collect new data and begin following participants before interventions and outcomes occur, it might appear easy to define when trials should “preregister.” Yet reasonable definitions of “preregistration” might include before beginning recruitment, before enrollment of the first participant, before random assignment of the first participant, or before delivery of the first intervention. If focused only on risk of bias associated with multiple hypothesis testing, then preregistering before analysis and interpretation might be acceptable; thus, preregistration might be defined as occurring after delivering interventions but before any outcomes have occurred, after outcomes have occurred but before they have been recorded, before recorded outcomes have been shared with the investigators, before masked analyses have been done, or before unmasking the analyses.

Precise descriptions are needed to develop and to apply policies and rules (Mayo-Wilson et al., 2018, 2022). For example, the U.S. Department of Health and Human Services (HHS) requires that many trials of regulated products (e.g., drugs, biologics) and trials funded by the National Institutes of Health be registered within 21 days of starting recruitment (HHS, 2016; Hudson et al., 2016; Zarin et al., 2016). By contrast, the International Committee of Medical Journal Editors (ICMJE) and the Declaration of Helsinki require that trials be registered before enrollment of the first participant (De Angelis et al., 2004; ICMJE, 2024; World Medical Association, 2025). Trials that comply with HHS registration requirements are not necessarily eligible for publication in ICMJE journals, so it is important that trialists understand the specific timings required by these different policies.
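The practical difference between the two timing rules can be illustrated with a short Python sketch. The function names, the dates, and the way each rule is encoded are illustrative assumptions based only on the descriptions in the preceding paragraph; the actual policies contain additional conditions, so this is not an implementation of either one.

```python
from datetime import date, timedelta


def meets_hhs_timing(registered: date, recruitment_start: date) -> bool:
    """Simplified reading of the HHS rule as described in the text:
    registered no later than 21 days after recruitment started."""
    return registered <= recruitment_start + timedelta(days=21)


def meets_icmje_timing(registered: date, first_enrollment: date) -> bool:
    """Simplified reading of the ICMJE rule as described in the text:
    registered before the first participant was enrolled.
    Assumption of this sketch: a same-day registration does not count as 'before'."""
    return registered < first_enrollment


# Hypothetical trial: all three dates are invented for illustration.
recruitment_start = date(2024, 3, 1)
first_enrollment = date(2024, 3, 5)
registered = date(2024, 3, 10)

hhs_ok = meets_hhs_timing(registered, recruitment_start)
icmje_ok = meets_icmje_timing(registered, first_enrollment)

print(f"Within 21 days of starting recruitment: {hhs_ok}")
print(f"Before enrollment of the first participant: {icmje_ok}")
```

In this hypothetical case, the same registration date satisfies one rule and not the other, which is why a statement such as "the trial was preregistered" cannot substitute for reporting the registration date in relation to named study events.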

In practice, the term “preregistration” contributes to misunderstandings about when research was registered. Nosek et al. (2018) described all studies registered on ClinicalTrials.gov as “preregistrations”; however, many studies were not registered before they were conducted (Al-Durra et al., 2020). Of the 36,620 records posted to ClinicalTrials.gov in 2023 with a start date, 56% were first submitted after the start date (20,395 of 36,620), including 60% (10,871 of 18,074) of randomized trials. Of all studies registered on OSF Registries before July 18, 2024, most used the “OSF Preregistration” template. These “preregistered” studies described registration before the creation of data (62%; 39,843 of 64,393), before any human observation of the data (6%; 4,099 of 64,393), before accessing the data (9%; 5,793 of 64,393), before analysis of the data (18%; 11,358 of 64,393), and following analysis of the data (5%; 3,300 of 64,393).
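Readers who wish to check the proportions in the preceding paragraph against their counts could use a short Python sketch such as the following. The counts are those reported in the text; the `pct` helper, the variable names, and the category labels are introduced here only for illustration.

```python
from fractions import Fraction


def pct(numerator: int, denominator: int) -> int:
    """Return numerator/denominator as a percentage rounded to the nearest integer."""
    return round(Fraction(100 * numerator, denominator))


# ClinicalTrials.gov records posted in 2023 with a start date:
# (records first submitted after the start date, all records in the group).
ctgov = {
    "all records": (20_395, 36_620),
    "randomized trials": (10_871, 18_074),
}

# OSF Registries entries using the "OSF Preregistration" template,
# grouped by the timing that the registrants described.
osf_total = 64_393
osf = {
    "before creation of data": 39_843,
    "before human observation of data": 4_099,
    "before accessing data": 5_793,
    "before analysis of data": 11_358,
    "following analysis of data": 3_300,
}

# The five timing categories should account for every entry.
assert sum(osf.values()) == osf_total

for label, (late, total) in ctgov.items():
    print(f"{label}: {pct(late, total)}% first submitted after the start date")

for label, count in osf.items():
    print(f"{label}: {pct(count, osf_total)}%")
```

Exact rational arithmetic (`Fraction`) is used so that rounding is applied once, to the final percentage, rather than to an intermediate floating-point value.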

Rather than use the term “preregistration” to refer to different times for different studies and study types, we propose authors describe specific research outputs, such as registrations, and the times at which those outputs were created and shared in relation to other relevant study events.

Problem 3: The Term “Registered Reports” Conflates Research Outputs and Publication Processes

The term “Registered Reports” is problematic because it is used to describe a two-stage peer-review process that might or might not include entering study information in a register. As others have noted, common uses of the term also conflate study protocols and SAPs with peer-review processes (Rice & Moher, 2019). Furthermore, many studies are registered that are not Registered Reports.

A Registered Report is an empirical journal article that undergoes two-stage peer review with in-principle acceptance after Stage 1. It has also been described as “reviewed pre-registration” (van ‘t Veer & Giner-Sorolla, 2016). Before a study is conducted, researchers submit a Stage 1 manuscript with an introduction, methods, and proposed analyses. If the researchers receive an in-principle acceptance following peer review, the journal commits to publishing a “Stage 2” report with results, provided the authors follow the planned methods.

So far, most Registered Reports have included study protocols and SAPs, and they have been entered in registries; however, some Registered Reports are not registered, some journals do not make Stage 1 manuscripts publicly available, and some journals that accept Registered Reports do not have public policies about studies that are withdrawn (Chambers & Tzavella, 2022; Hardwicke & Ioannidis, 2018; Montoya et al., 2021; Soderberg et al., 2021). Just as many published protocols do not lead to results publications (Vorland et al., 2024), there is no guarantee that authors of Stage 1 manuscripts will publish their results. Proponents argue that best practice is to incorporate registration as part of this process, but registration is achieved only if authors (or journals) enter minimum information in a register.

A related term, “Registered Revisions,” has been proposed to describe a process that would occur during peer review (Haber et al., 2024). The proposed process would begin when peer reviewers request additional data or analysis. Authors would make a “revision plan” that would undergo additional peer review; if the plan were approved by the editors and then completed, the results would be published. It is unclear whether and how such revisions would be entered in registries (i.e., “registered”).

As described subsequently, researchers should describe research outputs unambiguously, and peer-review and publication processes should be defined separately.

Recommendation 1: Use the Term “Registration” to Describe Entering Information in a Register

For clarity and consistency, we suggest researchers follow definitions used by the World Health Organization (WHO), which distinguishes the act of study “registration,” the databases containing registrations (“registers”), and the organizations (“registries”) that manage registers (WHO, 2024a).

Registration (Box 1) is the act of entering a record of a study in a register. A registration is a record in a database that identifies the existence of a study and includes a permanent identifier (e.g., record number, DOI). A record typically includes answers to several questions completed during the registration process. For example, the WHO minimum data set includes 24 questions (WHO, n.d.). Registrations might be updated to reflect changes to study plans.

Registers are databases that have minimum data requirements, which vary by study type and discipline (see Appendix 1 in the Supplemental Material). For example, WHO requires that recognized registers make time-stamped records identifiable, accessible, permanent, and searchable from the time of registration (Box 2). Likewise, changes should be time-stamped, transparent, and current.

Registries are independent organizations that maintain registers of scientific studies. Most registries do not charge for access or for completing registrations. Some registries conduct administrative checks to ensure that elements of registrations are complete and consistent. Some registries also prompt users for regular updates.

Recommendation 2: Disambiguate Specific Research Outputs (Registrations, Study Protocols, Analysis Plans, Code, and Research Materials)

To avoid ambiguity and to promote better implementation, we recommend that researchers describe specific research outputs separately. Whereas registrations are records in databases, the terms “study protocol” and “statistical analysis plan” can be used to describe research outputs that might appear in various places, such as preprint servers, journal articles, or attachments to other study records. Study protocols and SAPs might or might not be included in registrations. For example, authors might complete detailed registration templates (e.g., on OSF Registries) or attach additional documents to registrations (e.g., on ClinicalTrials.gov or another register of health research). Wherever they appear, study protocols and SAPs should follow reporting guidelines that describe the minimum information to include in these documents (Chan et al., 2025; Gamble et al., 2017; Moher et al., 2015).

Just as minimum registration requirements differ across studies, the specific contents of study protocols and SAPs vary by study design and context. Broadly, a study protocol is a document that describes the rationale for a planned study and the methods. Study protocols are typically written before or soon after beginning study activities. For example, study protocols are often written before assigning participants to interventions in randomized clinical trials. A study protocol might include a summary or more detailed explanation of statistical methods. A statistical analysis plan is a narrative document that describes specific analyses to be conducted. A SAP might also describe the rationale for statistical analyses, processes that investigators will use to make decisions that depend on study results, and other details, such as criteria for making inferences or drawing conclusions. In fields such as psychology and economics, the term “pre-analysis plan” is used sometimes; like preregistration, the term “pre-analysis plan” might contribute to misunderstandings about the timing of analysis plans.

Together or separately, a study protocol and SAP should include sufficient information for other investigators to evaluate a study’s validity and generalizability and ideally enough information to replicate the research methods and analyses. Study protocols and SAPs can communicate key details to readers who might not understand statistical software or coding languages, but they might lack the precision found in analytic code. In some cases, sharing analytic code before conducting a study can achieve many of the same goals as sharing a SAP, and sharing analytic code might have unique benefits. On the other hand, SAPs for complex studies might anticipate multiple possible scenarios for which it would be impractical to write code before collecting data (Box 3). Finally, it might be easier for some readers to compare the consistency of results reports (e.g., journal articles) with narrative SAPs rather than code. In some studies, SAPs might be written before collecting data, and code might be written after data collection is underway. When both exist, sharing a narrative SAP and associated code might be most useful to the greatest number of readers.

Box 3: Examples of Sharing Research Outputs at Different Times

It is best practice to link registrations, study protocols, SAPs, and other outputs to one another. For example, investigators might attach study protocols, SAPs, and analytic code to registrations, or they might include links and trial identifiers (e.g., registration numbers) in objects arising from a study (e.g., in abstracts of articles). If permanent and immutable versions of research outputs are not available elsewhere, attaching them to registrations can provide persistent access. Changes to registrations should be time-stamped, so attaching study protocols, SAPs, and other outputs to registrations can also help researchers clarify their timing. For example, protocols and SAPs are sometimes published as journal articles (Vorland et al., 2024) for which the timing of initial submission and subsequent modifications might be unclear. Attaching other objects to registrations can establish clear timelines; for example, the time at which a SAP is added to a registration might allow users to assess whether the SAP preceded data collection and the extent to which the SAP limited opportunities for reporting biases.

Recommendation 3: Describe the Time at Which Research Outputs Are Created and Shared in Relation to Key Study Activities

The appropriate time to create and share different research outputs might vary across study designs and across settings and contexts. In all cases, researchers can and should state the time at which research outputs are created and shared publicly in relation to critical study events, such as the start or conclusion of data collection, data access, analysis of results, and unmasking of group labels. The labels “prospective” and “retrospective” are used in some contexts, and a recent article introduced the unnecessary portmanteau “proregistration” (Malmsiø et al., 2025). Because these terms are used in relation to different events across studies and disciplines, we encourage researchers to describe the specific events that have and have not occurred at the time of registration or sharing research outputs.
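One way to put this recommendation into practice is to record the dates of key study events and to generate a timing statement for each output from those dates. The Python sketch below shows the idea under stated assumptions: the `StudyEvent` class, the `describe_timing` function, the event names, and all dates are hypothetical and are not part of TOP 2025 or of any registry's interface.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass(frozen=True)
class StudyEvent:
    name: str
    occurred_on: Optional[date]  # None means the event has not occurred


def describe_timing(output: str, shared_on: date, events: List[StudyEvent]) -> str:
    """State which study events had and had not occurred when an output was shared.

    Assumption of this sketch: an event dated on the sharing day is treated as
    having already occurred, which is the more conservative description.
    """
    occurred, not_yet = [], []
    for event in events:
        if event.occurred_on is not None and event.occurred_on <= shared_on:
            occurred.append(event.name)
        else:
            not_yet.append(event.name)

    parts = []
    if occurred:
        parts.append("after " + ", ".join(occurred))
    if not_yet:
        parts.append("before " + ", ".join(not_yet))
    return f"{output} shared on {shared_on.isoformat()}: " + "; ".join(parts)


# Hypothetical study timeline.
events = [
    StudyEvent("start of data collection", date(2024, 2, 1)),
    StudyEvent("end of data collection", date(2024, 8, 30)),
    StudyEvent("unmasking of group labels", None),
    StudyEvent("analysis of results", None),
]

statement = describe_timing("Statistical analysis plan", date(2024, 6, 15), events)
print(statement)
```

A statement generated this way names the specific events that had and had not occurred, so readers do not need to infer what "pre" or "prospective" means for a particular study design.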

Sharing research outputs might have different purposes and benefits at different times. In studies in which primary outcomes are assessed immediately (i.e., within days or weeks of commencing the study), registration, study-protocol sharing, and SAP sharing are often done at the same time. For studies in which primary outcomes are assessed distantly (i.e., months or years after commencement), registrations, study protocols, and SAPs often have more distinct purposes and content, and they are often created at different times by different people. For example, it might be necessary to register a clinical trial before enrolling participants for both scientific and legal reasons. By comparison, a SAP might be completed and shared after study activities are underway with little potential for biasing the results (Box 3).

In addition to preventing and detecting bias, sharing research outputs can achieve other benefits after research is underway or completed. For example, registering completed studies might help other researchers locate those studies and link multiple reports using common identifiers. Registrations might be the only public records that some studies occurred. Sharing study protocols and SAPs for completed studies can help readers interpret their results and apply their methods in future studies. Sharing manuals for delivering interventions can help clinicians implement those interventions in practice (Cuijpers et al., 2024).

Recommendation 4: Disambiguate Research Outputs From Peer-Review and Publication Processes

Processes used by peer reviewers and journals should be described unambiguously, without conflating journal peer-review and publication processes with research outputs created by authors.

Proponents have used the term “Registered Reports” to describe bundles comprising multiple research outputs and also peer-review and publication processes. Most frequently, the term “Registered Reports” has been used to describe a two-stage peer-review process with in-principle acceptance (Chambers & Tzavella, 2022), an approach that has been tried before and described differently (Hardwicke & Ioannidis, 2018). We recommend that researchers and publishers describe such studies as “registered” by the investigators and managed by journals using a “two-stage peer-review process with in-principle acceptance” because this is clearer than using “Registered Report” as a shorthand. If researchers and publishers continue to use the term “Registered Report,” they should ensure that studies described this way are entered in registers.

Other approaches to peer review could also reduce incentives for selective nonreporting and spin. For example, accepting or rejecting studies without knowledge of the results has been described as “results-masked review” (Box 1). When these studies are entered in registers, they should be described as “registered” by the investigators and handled using “results-masked review” by journals.

The word “registered” should not be used to describe studies that are not entered in registers.

Conclusions

Social and behavioral researchers use the term “preregistration” to describe multiple research outputs created and shared at different times. Disagreements about what does or should constitute preregistration reflect different concerns about the causes and consequences of problems in scientific research and different beliefs about what should be done to improve research. Some definitions of “preregistration” devalue sharing research outputs for diverse research designs at various times in the research life cycle (e.g., sharing materials after the research has been completed). The related term, “Registered Report,” can be misleading because it conflates research outputs and publication processes (implying that studies have been entered in registers when that might not be true and implying that other studies are not registered).

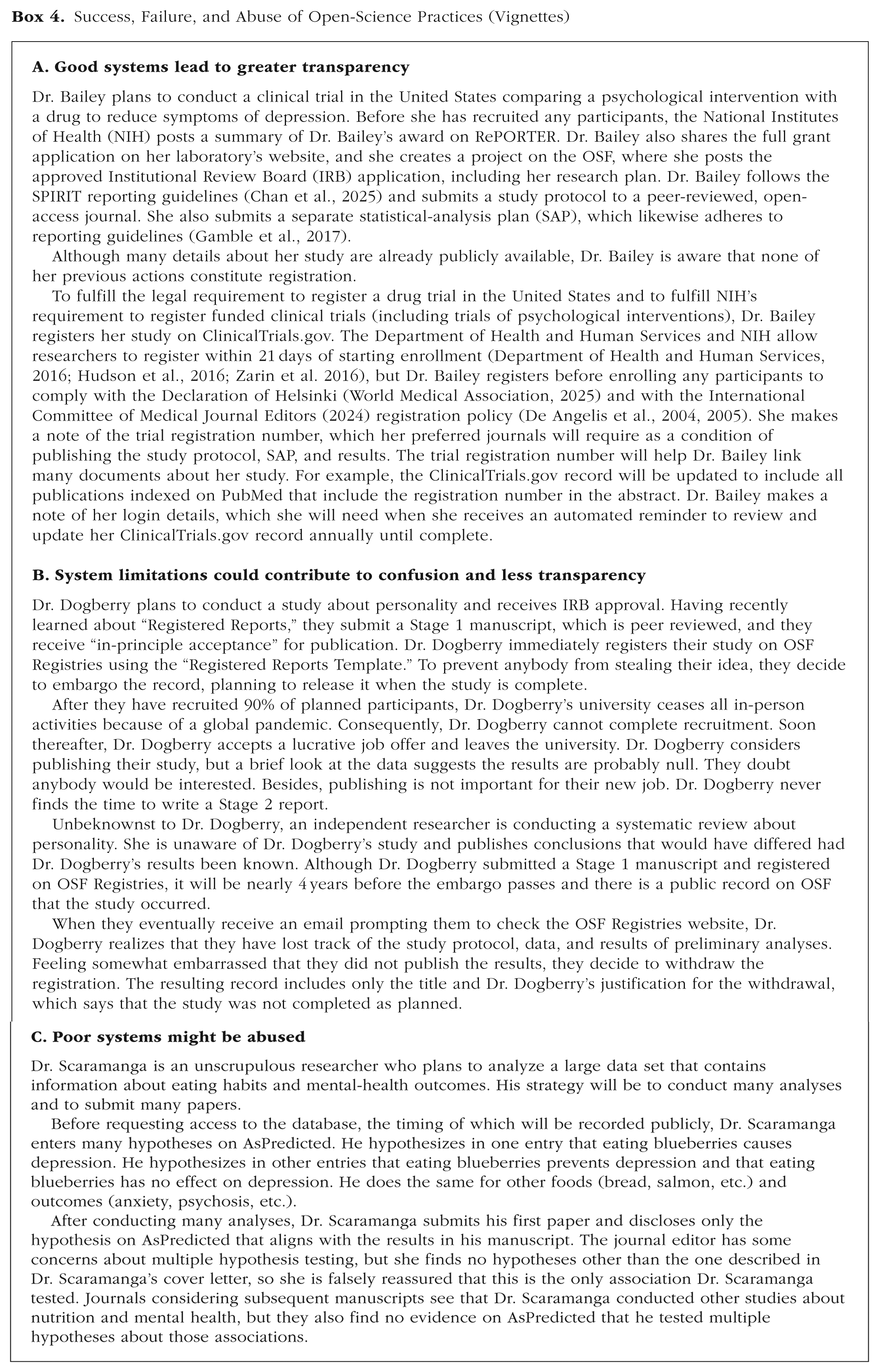

Researchers cannot be expected to engage consistently and correctly in open science unless they understand and agree on best practices (Box 4). Furthermore, researchers might not interpret information correctly if specific research outputs and the times at which those outputs are created and shared are described ambiguously. We do not propose uniform practices across study designs and disciplines; instead, we argue that precise and consistent definitions of what researchers are expected to do and when they are expected to do it will be required to develop best-practice recommendations and policies related to open science.

Box 4: Success, Failure, and Abuse of Open-Science Practices (Vignettes)

We encourage researchers to disambiguate specific research outputs, describe the exact time at which research outputs are created and shared, and disambiguate research outputs from peer-review and publication processes.

Supplemental Material

Supplemental material, sj-docx-1-amp-10.1177_25152459251375445 for Consistent and Precise Description of Research Outputs Could Improve Implementation of Open Science by Evan Mayo-Wilson, Sean Grant, Katherine S. Corker and David Moher in Advances in Methods and Practices in Psychological Science

Footnotes

Acknowledgements

We thank Xiangji Ying for calculating the proportion of records posted on ClinicalTrials.gov in 2023 that were submitted after the start date, and we thank Hao Ye, Julia Bottesini, and Daniël Lakens for their assistance in scraping the OSF API to assess entries in the OSF Registries.

Transparency

Action Editor: Michelle N. Meyer

Editor: David A. Sbarra
