Abstract

Journalists are often maligned for covering sensational or desirable research results at the expense of studies with stronger methods. In the present study, we aimed to test how journalists’ preferences shift when studies are selected based on their methods rather than results (results-blind selection). Practicing journalists and editors, journalism faculty, and journalism graduate students (N = 413) read summaries of real social-psychology studies and rated their interest in reporting on them. Participants were randomly assigned to read either “traditional” summaries that included the results or “results-blind” summaries that excluded the results. Summaries varied on three within-subjects dimensions: replication status, preregistration status, and belief consistency. Participants expressed more interest in replicable (vs. not replicable) and preregistered (vs. nonpreregistered) studies regardless of whether they learned the results, suggesting that these studies have features that are valued by journalists. Meanwhile, results-blind selection showed potential for reducing confirmation bias, suggesting it may be worth further exploration if feasibility challenges can be addressed.

In October 2024, the Pew Research Center asked Americans what they thought about scientists and their role in policymaking (Tyson & Kennedy, 2024). Respondents largely thought of scientists as “intelligent” (89%) and “focused on solving real world problems” (65%; Tyson & Kennedy, 2024). Fewer than half, however, said that scientists were “good communicators” (45%), suggesting that the public thinks scientific communication has room for improvement (Tyson & Kennedy, 2024). Typically, the public hears about scientific developments through the media. Scholars have documented an increasingly tight relationship between science and the media such that the latter plays a major role in disseminating, popularizing, and legitimizing scientific research (Väliverronen, 2021; Weingart, 1998). Perhaps, then, changes to media coverage could provide one pathway to regaining public trust in science (Scheufele, 2014).

Some strategies for increasing public trust in science rely on rhetoric or persuasion (Dahlstrom, 2014; Goodwin & Dahlstrom, 2014). However, a more direct way to tackle the root of this problem is to address valid reasons for mistrust, such as bias (Scheufele & Krause, 2019). If, for example, journalists are more likely to report on sensationalistic findings over those supported by strong evidence, this could undermine the scientific record’s value as a source of public guidance. Furthermore, if journalists are more likely to report findings that reinforce their own views on politicized scientific issues, this could deepen public distrust in science.

In this project, we test a possible tool for reducing biased reporting of psychology research: Journalists¹ could select research based on methods rather than results (results-blind selection). The idea that results-blind selection could reduce bias and improve the quality of featured research is the foundation for the registered reports academic-article format, first introduced at Cortex in 2013 (Chambers, 2013). With registered reports, editors and reviewers evaluate scientific projects based on their methods before the results are known (Chambers, 2019). Results-blind selection is promising because it prompts one to consider not whether one likes a finding but whether one thinks the scientific process of arriving at that finding was sound.

A similar approach, then, might improve the trustworthiness of the psychological findings that are communicated to the public. If journalists make reporting decisions while blinded to studies’ results, they may place greater emphasis on methodological rigor and less emphasis on whether the results tell an appealing story. In this project, we examined this possibility by asking the following: Does journalists’ use of results-blind selection improve the trustworthiness of reported psychology research?

Results-Blind Selection and Research Quality

Journalists share a set of professional norms and routines that govern story selection, sourcing, information gathering, writing, and editing (Singer, 2007; Tandoc & Duffy, 2019). However, market pressures often push journalists to report on research that is novel and surprising (Fitzpatrick, 1999; Galtung & Ruge, 1965; Munger, 2020; Myers, 1996; Siravuri & Alhoori, 2017). Ruhrmann’s (1989, 1997) work noted the importance of unexpectedness, controversy, and novelty and expressed concern that a focus on spectacular discoveries could cause distortion of the public’s understanding of science.

These journalistic incentives might not be concerning if all the novel and unexpected findings were trustworthy. However, in psychology, efforts to replicate published findings have often yielded disappointing success rates (Camerer et al., 2018; Ebersole et al., 2016, 2020; Klein et al., 2018; Open Science Collaboration, 2012). Many psychological scientists express concern about the rate of false positives in the literature; 95% of one sample said that it is “somewhat” or “much” higher than it should be in psychology (Miranda et al., 2022).

It is possible that the research seen as most newsworthy also tends to be high quality. Journalists may be skeptical of findings that seem implausible, examining the methods with greater scrutiny rather than taking shocking findings at face value. If the most eye-catching reports have outsize appeal for journalists, however, perceived newsworthiness could be negatively related to quality. Consistent with this possibility, previous work has shown that risky or counterintuitive hypotheses tend to have lower statistical power (Fraley & Vazire, 2014) and be less likely to replicate (Camerer et al., 2016; Dreber et al., 2015). More broadly, it is possible that highly surprising findings tend to rest on shakier methodological foundations (Chambers, 2019).

Recent work examined how journalists evaluated fictitious scientific findings: When all else was equal, journalists favored larger samples and were not swayed by the prestige of authors’ institutions (Bottesini et al., 2023). However, no data that we know of have examined journalists’ interest in reporting on real psychological findings and whether this changes when they are blinded to the results.

Results-Blind Selection and Confirmation Bias

Although journalists are encouraged to report on unexpected discoveries, they also have incentives to affirm the political ideology of their newsrooms. Many popular media outlets are associated with a detectable political slant (Gentzkow & Shapiro, 2005; Stroud, 2011). For instance, laypeople, academics, and think tanks tend to agree that The New York Times leans left and that The Wall Street Journal leans right (AllSides, 2021; Groseclose & Milyo, 2005). A news organization’s partisan slant is reflected in both the stories it chooses to cover and the ways in which it covers them (Hassell et al., 2022). Although there are some strengths to this variability—the fact that diverse perspectives are represented, for instance, or that individual outlets are not held to a misleading standard of “balance” (Boykoff & Boykoff, 2004; Brüggemann & Engesser, 2017)—journalists may end up selectively covering the findings that align with their own beliefs and ignoring those that do not (Carvalho, 2007; Elsasser & Dunlap, 2013).

If journalists exhibit a confirmation bias—a tendency to preferentially report findings that support their preexisting beliefs—this would be a legitimate cause for distrust (Kunda, 1990; Nickerson, 1998). Indeed, conservatives tend to be less trusting of the media and scientists compared with liberals, in part because of perceptions of bias (Brenan, 2024; Hmielowski et al., 2014; Tyson & Kennedy, 2024; Weingart & Guenther, 2016). If media outlets ignore compelling data that tell an unpopular story, long-term exposure could cultivate false—but ostensibly “evidence-based”—beliefs among broad segments of the public (Potter, 2014; Scheufele & Krause, 2019). In the current project, we examined whether such a bias exists and whether it can be reduced by results-blind selection.

Overview

We asked journalists about their interest in reporting on real psychology studies that varied along three key dimensions: (a) whether the findings had been successfully replicated, (b) whether the studies were preregistered, and (c) whether the findings were consistent with journalists’ preexisting attitudes. Half of the journalists were blind to the results, and the other half were not. We chose to use real psychology studies, as opposed to fabricated studies, because we were interested in how journalists perceive the actual psychology literature. By taking this approach, our design gets closer to telling us what might happen if journalists were to adopt results-blind selection. For example, would we see a decrease in coverage of replicable studies or studies that have been preregistered? This would not be possible with an experiment that manipulates specific features via fabricated study descriptions—as valuable as such a contribution would be—because it would remain unknown how manipulated features are combined in real research.

Of course, natural experiments also have notable limitations. Using real studies introduces the possibility that our independent variables could be confounded with other study features. Our study is motivated by the suspicion that these confounds are naturally present in the literature and could result in different patterns of evaluation depending on whether the results are known. It seems useful to document these patterns—which have immediate real-world implications—even if the current project cannot yet elucidate the specific features driving journalists’ decisions.

To make the rationale for this investigation more concrete, consider the possibility that replicable studies tend to have more rigorous methods and less surprising findings than studies that fail to replicate. If journalists evaluate studies based on methods alone, they may be impressed by the rigor and show a preference for replicable over unreplicable studies. In contrast, if journalists are exposed to the results, the surprising results may sway their preference in favor of unreplicable studies. In sum, our hypotheses reflect the assumption that replicability and preregistration tend to be confounded with factors that make methods more impressive and results less impressive.

Based on this rationale, we aimed to answer three research questions:

Research Question 1: Do journalists express more interest in reporting on replicable research when they select studies in a results-blind (vs. traditional) fashion?

Research Question 2: Do journalists express more interest in reporting on preregistered studies when they select studies in a results-blind (vs. traditional) fashion?

Research Question 3: Do journalists exhibit less confirmation bias when they select studies in a results-blind (vs. traditional) fashion?

For Research Questions 1 and 2, we anticipated that the most “newsworthy” results would be associated with shakier methods. Thus, we hypothesized that unreplicable and nonpreregistered findings would have an advantage in the traditional condition compared with the results-blind condition. For Research Question 3, we expected that journalists would show a greater tendency to select belief-consistent studies in the traditional condition compared with the results-blind condition.

Disclosures

All aspects of this project are available on the main OSF page (https://doi.org/10.17605/OSF.IO/W9JG5), which links to our preregistration, ethical-approval forms, study materials, data, analysis scripts, and supplemental materials containing additional details about the power analysis, participant demographics, and alternative analyses.

Preregistration

The hypotheses, methods, and analysis plan were preregistered before data collection (https://osf.io/jurgv/overview).

Reporting

We report how we determined our sample size, all data exclusions, all manipulations, and all measures in the study.

Ethical approval

This research received approval from a local ethics board (Protocol No. 23-01-6253) and meets the ethical guidelines set forth in the World Medical Association Declaration of Helsinki.

Method

Design

Participants read a series of social-psychology-study summaries and rated their interest in reporting on them. Summary type (results-blind vs. traditional) was manipulated as a between-subjects variable. Replication status (replicated vs. did not replicate), preregistration status (preregistered vs. not preregistered), and belief alignment (belief-consistent vs. belief-inconsistent) were manipulated as within-subjects predictor variables.

Materials

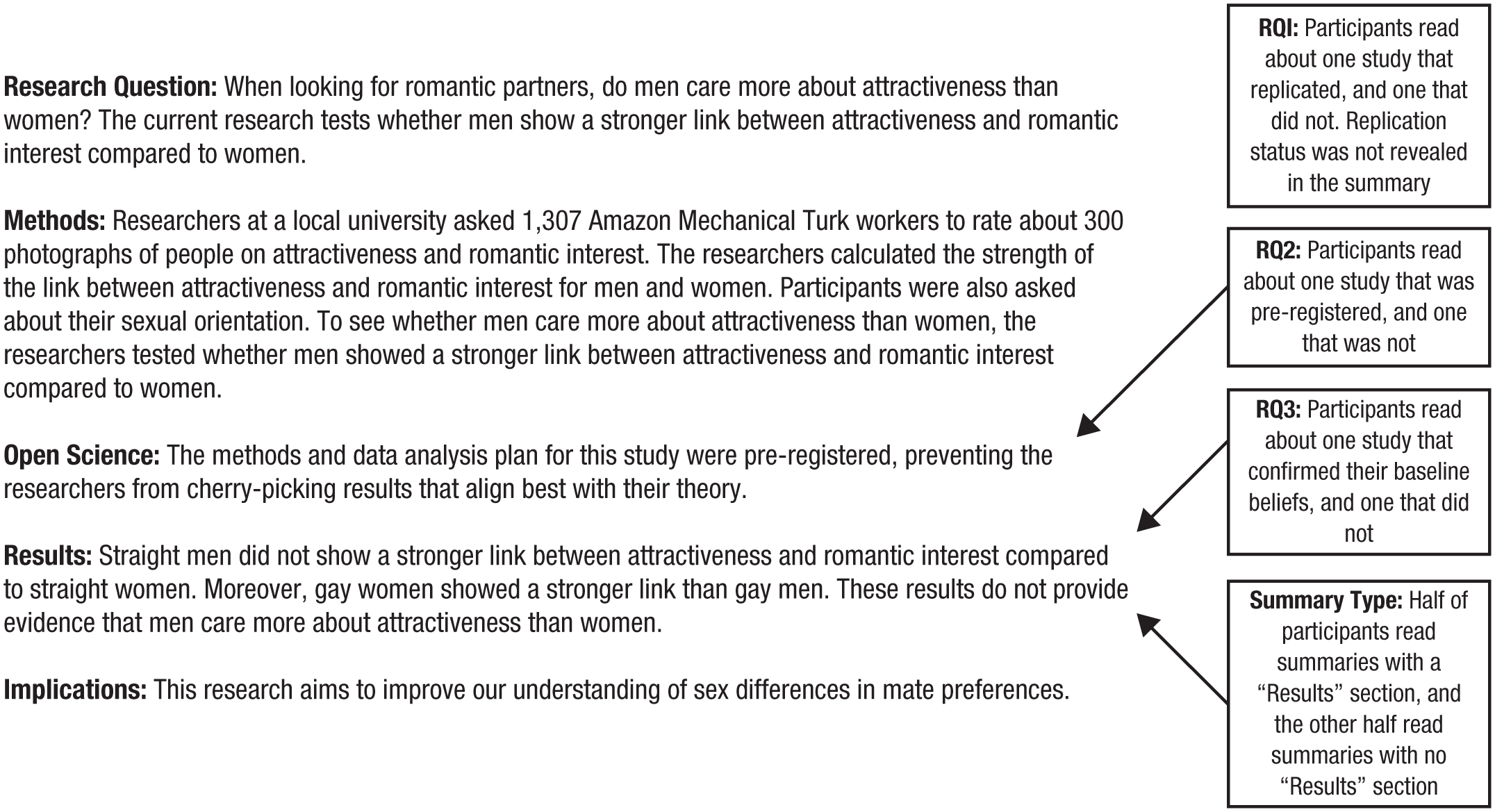

We selected three sets of psychology studies, one for each research question, and created a results-blind and traditional summary of each one (summaries: N = 162, i.e., two summaries for each of the 21 + 36 + 24 = 81 studies). These were designed to provide similar information to that included in a press release. Results-blind summaries included four sections: the primary research question, a summary of the methods, a brief list of open-science practices (if any), and a statement about the work’s implications. Traditional summaries were identical to the results-blind summaries except they included a fifth section describing the results (Fig. 1). Participants in the results-blind condition were asked to evaluate the study based on the way it was designed and conducted. This was done to reduce confusion about the lack of results, but this may also have increased the salience of the methods.

Fig. 1. Example study summary from Set 2. Text in boxes explains how summaries were selected to address our research questions.

Summary Set 1: replication status

To address Research Question 1, we created results-blind and traditional summaries for each of the 21 findings that were targets of replication attempts in the Social Sciences Replication Project (SSRP; Camerer et al., 2018). Of the 21 findings, 13 replicated (e.g., people saw more positive emotion in the images of the bodies of winners vs. losers; Aviezer et al., 2012), and eight did not replicate (e.g., people rated job candidates as more suitable when they were holding a heavy clipboard compared with a lighter one; Ackerman et al., 2010). Studies in this set allowed us to examine how results-blind selection might influence journalists’ interest in reporting on replicable research (note that the summaries did not state the replication outcome). Because these studies vary on many dimensions aside from replication status, we can draw conclusions about journalistic preference for the kinds of psychology studies that do (vs. do not) replicate without being able to isolate the features that factor most heavily in participants’ decisions. One advantage of relying on SSRP is that all studies were published in Nature or Science, two primary sources relied on by science journalists (Franzen, 2012).

Summary Set 2: preregistration status

To address Research Question 2, we created results-blind and traditional summaries for 18 registered reports² (e.g., people in a community-service-learning program did not show boosts in well-being compared with people on a waitlist; Whillans et al., 2017) and 18 journal-based comparison articles (e.g., person-university fit was linked to a higher sense of belonging, which was, in turn, related to a more positive university experience; Suhlmann et al., 2018) in Soderberg et al.’s (2021) investigation. For registered reports, summaries mentioned preregistration in the “Open Science” section. Registered reports and comparison articles were matched on several factors by Soderberg et al.; they addressed the same topic and often had the same first author and were published in the same journal. Nevertheless, like the summaries in Set 1, they still vary on dimensions besides preregistration status. In fact, many of these dimensions were identified in Soderberg et al.’s work, which showed that registered reports were evaluated as having more rigorous methods, posing higher quality questions, and having more to teach than comparison studies. Studies in this set allowed us to examine how results-blind selection might influence journalists’ interest in reporting on preregistered research.

Summary Set 3: belief alignment

To address Research Question 3, we created results-blind and traditional summaries for 24 findings—eight for each of three topics. We selected topics that have a large body of both confirmatory and disconfirmatory results in the literature. The first eight summaries examined whether people are more likely to shoot unarmed Black versus White targets when acting as a police officer in a simulated environment (“shooter bias”; selected from meta-analyses by Cesario & Carrillo, 2024, and Mekawi & Bresin, 2015). Another eight examined whether teenage girls do worse on math, science, and spatial-skills tests when negative stereotypes about girls’ math performance are activated (“stereotype threat”; selected from the meta-analysis by Flore & Wicherts, 2015). The last eight examined whether women have stronger preferences for stereotypical masculinity in potential mates during the fertile versus nonfertile periods of their menstrual cycles (selected from the meta-analysis by Wood et al., 2014). For each set of eight, we selected four studies that provided evidence for the phenomenon and another four that provided evidence challenging it. The studies in the “evidence for” versus “evidence against” categories were closely matched in that they satisfied the same meta-analytic inclusion criteria. Studies in this set allowed us to examine how results-blind selection might reduce journalists’ confirmation bias when choosing what to report.

Participants

Practicing journalists and journalism graduate students were invited to complete our Qualtrics study via personal email and paid $20, commensurate with the average hourly wage for journalists (Tice, 2019). Email addresses of eligible participants were obtained from university journalism-program and media-outlet websites. A power analysis indicated that 400 participants would provide 92% power to detect an interaction of f = .06 for Research Question 1 (ANOVA_power, n.d.; for details, see the Supplemental Material available online). We anticipated that this would be the analysis requiring the most power, and thus, other analyses would be powered at 92% or higher.

Four hundred sixty-eight participants began our study. Of those, five were excluded for completing the study in fewer than 4 min, and 50 were excluded for not completing the dependent variables. This left 413 participants that we included in analyses (see Deviations from Preregistration below). Another six participants indicated neutral baseline attitudes for all three topics in Summary Set 3, preventing us from computing belief-consistent and belief-inconsistent scores. These participants were excluded from analyses for Research Question 3.
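To illustrate why a neutral baseline attitude prevents the computation of belief-consistent and belief-inconsistent scores, the coding can be sketched as follows. This is a minimal sketch under an assumed rule (ratings above the midpoint of the 5-point baseline scale indicate belief in the phenomenon, ratings below it indicate disbelief); the function name and the rule are illustrative assumptions and are not taken from the analysis scripts.

```python
from typing import Optional

NEUTRAL = 3  # midpoint of the 5-point baseline belief scale ("could go either way")


def belief_alignment(baseline_belief: int, evidence_for: bool) -> Optional[str]:
    """Classify one Set 3 summary relative to a participant's baseline belief.

    Assumed rule (hypothetical, for illustration only): a rating above the
    midpoint means the participant believes the phenomenon, a rating below
    it means disbelief, and a midpoint rating cannot be classified.
    """
    if not 1 <= baseline_belief <= 5:
        raise ValueError("baseline belief must be on the 1-5 scale")
    if baseline_belief == NEUTRAL:
        return None  # neutral attitude: alignment is undefined
    believes = baseline_belief > NEUTRAL
    # A summary is belief-consistent when its direction matches the belief.
    return "belief-consistent" if believes == evidence_for else "belief-inconsistent"
```

Under this rule, a participant who rated a phenomenon 3 yields no alignment score for either summary on that topic, which is consistent with the exclusion described above.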

Procedure

Participants began by indicating their baseline belief in each of the three phenomena addressed in the Set 3 summaries (shooter bias, stereotype threat, and menstrual-cycle effects) using a 5-point scale (1 = almost certainly false, 3 = could go either way, 5 = almost certainly true). Half of participants were randomly assigned to read only results-blind summaries. The other half read only traditional summaries. All participants read two summaries randomly selected from Set 1 with the condition that one replicated and the other did not. Participants were not told the replication status of the studies. Participants also read two summaries randomly selected from Set 2 with the condition that one was preregistered and the other was not. Finally, participants read two summaries from Set 3 with the condition that one provided evidence for a phenomenon (e.g., demonstrating shooter bias) and the other provided evidence challenging the same phenomenon (e.g., failing to demonstrate shooter bias). The order of the six summaries was randomized.
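The assignment procedure described above can be sketched in code. This is an illustrative sketch only: the summary identifiers are hypothetical placeholders, the set sizes follow the Materials section, and two details are assumptions because the text does not specify them (a simple coin flip for condition assignment and a random draw of the Set 3 topic). The survey itself was run in Qualtrics, not with this code.

```python
import random

# Hypothetical identifiers; counts follow the Materials section.
SET1 = {"replicated": [f"s1_rep_{i}" for i in range(13)],
        "not_replicated": [f"s1_non_{i}" for i in range(8)]}
SET2 = {"preregistered": [f"s2_rr_{i}" for i in range(18)],
        "not_preregistered": [f"s2_cmp_{i}" for i in range(18)]}
SET3 = {topic: {"for": [f"{topic}_for_{i}" for i in range(4)],
                "against": [f"{topic}_against_{i}" for i in range(4)]}
        for topic in ("shooter_bias", "stereotype_threat", "cycle_effects")}


def assign_participant(rng: random.Random) -> dict:
    """Draw one participant's condition and six summaries."""
    # Assumption: simple randomization to one of the two summary types.
    condition = rng.choice(["results-blind", "traditional"])
    # Assumption: the Set 3 topic is drawn at random; both Set 3 summaries
    # address the same phenomenon, one supporting and one challenging it.
    topic = rng.choice(sorted(SET3))
    summaries = [
        rng.choice(SET1["replicated"]),
        rng.choice(SET1["not_replicated"]),
        rng.choice(SET2["preregistered"]),
        rng.choice(SET2["not_preregistered"]),
        rng.choice(SET3[topic]["for"]),
        rng.choice(SET3[topic]["against"]),
    ]
    rng.shuffle(summaries)  # presentation order of the six summaries is randomized
    return {"condition": condition, "summaries": summaries}
```

Each call returns one summary per level of each within-subjects factor, so every participant contributes a complete pair of ratings to each of the three analyses.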

To assess interest in reporting, participants used a 7-point scale (1 = strongly disagree, 7 = strongly agree) to respond to three questions (“I would be interested in reporting on this research,” “I think readers would be interested in hearing about this study,” and “I think this research conveys an important message”).

Exploratory measures

For each summary, participants used a 7-point scale (1 = strongly disagree, 7 = strongly agree) to indicate skepticism (“I am skeptical of this research”). At the end of the study, participants answered questions about the potential value and feasibility of results-blind reporting. They also answered questions about what they look for in research they want to report on and what they think their audience wants to read about. We included two attention checks, one at the beginning of the study that directed people to check specific boxes and one at the end that asked people explicitly whether they took the time they needed with their responses. Finally, participants indicated their professional role, media-outlet type (if applicable), frequency of reporting on scientific research, ethnicity, race, gender, education level, and political orientation (for a breakdown of participant demographics, see the Supplemental Material).

Deviations from preregistration

First, our preregistration said that we would exclude participants who indicated that they were not a practicing journalist, journalism graduate student, faculty member in a journalism department, or editor. Because response rates to our emails were low (8%) and our recruitment method was targeted to eligible participants, we dropped this exclusion criterion after data access but before results were known. We conducted all analyses using our preregistered exclusion criterion and found no change in the significance of the main effects or interactions for our three research questions (see the Supplemental Material). This deviation could have a small impact on readers’ interpretations because the alternative analyses yield slightly different effect sizes.

Second, we preregistered a stopping rule of 400 participants but ended up with 413 participants who had usable data. This discrepancy reflects the fact that we could not continuously monitor all exclusion criteria and thus did not stop data collection at the exact point we reached our goal N. This deviation occurred after data access but before results were known. This deviation could have a small impact on readers’ interpretations because changes in sample affect effect sizes. Because this is not a data-dependent deviation, it is unlikely to increase the risk of bias (Hardwicke & Wagenmakers, 2023; Willroth & Atherton, 2024).

Unregistered steps

Our preregistration did not say that we would exclude participants who failed to complete the dependent variables. Because these variables are necessary for our preregistered analyses, we added this exclusion criterion after data access but before results were known. This deviation has little impact on readers’ interpretations because there is no clear alternative analysis.

Results

Descriptive statistics and psychometric properties

To evaluate the reliability of the three items used to measure interest in reporting, we calculated Cronbach’s alphas for each of the six summaries viewed by participants. These ranged from α = .885 to α = .917, indicating high internal consistency. To test for acquiescence bias, we computed correlations between interest in reporting and the exploratory skepticism item for each of the six summaries viewed by participants. These ranged from r = −.40 to r = −.51, suggesting that skepticism was negatively related to interest in reporting and that it was unlikely that participants were agreeing indiscriminately to all items. For means, standard deviations, and ranges for the baseline belief and interest-in-reporting ratings, see Table S2 in the Supplemental Material.
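For readers unfamiliar with the reliability coefficient reported above, Cronbach’s alpha for k items is k/(k − 1) × (1 − sum of item variances / variance of the summed scale). The following sketch computes it with the Python standard library; the ratings are invented toy values, not study data, and the function name is our own.

```python
from statistics import variance


def cronbach_alpha(items: list[list[float]]) -> float:
    """Cronbach's alpha for k items rated by the same respondents.

    `items` holds one list of ratings per item, all in the same
    respondent order.
    """
    k = len(items)
    if k < 2:
        raise ValueError("at least two items are required")
    n = len(items[0])
    if any(len(col) != n for col in items):
        raise ValueError("all items need the same number of respondents")
    totals = [sum(ratings) for ratings in zip(*items)]  # summed scale per respondent
    item_variance_sum = sum(variance(col) for col in items)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


# Toy ratings (invented) for three interest items from five respondents.
toy_items = [[5, 6, 3, 2, 7], [4, 6, 3, 3, 7], [5, 7, 2, 2, 6]]
alpha = cronbach_alpha(toy_items)
```

Values close to 1 indicate that the items rise and fall together across respondents, which is the pattern the coefficients reported above reflect.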

Research Question 1

To determine whether journalists express more interest in reporting on replicable research when they select studies in a results-blind (vs. traditional) fashion, we used responses to Set 1 summaries to conduct a 2 (summary type: results-blind vs. traditional) × 2 (replication status: replicated vs. did not replicate) mixed analysis of variance (ANOVA) with interest in reporting as the dependent variable. This analysis revealed a significant main effect of replication status, F(1, 411) = 54.89, p < .001, $\eta_p^2$ = .12, such that participants expressed more interest in studies that replicated (M = 4.39, SD = 1.53) than those that did not (M = 3.77, SD = 1.66). The main effect of summary type was not significant, F(1, 411) = 3.86, p = .05, $\eta_p^2$ = .01; participants expressed similar levels of interest when they read traditional summaries (M = 4.21, SD = 1.49) and results-blind summaries (M = 3.95, SD = 1.68). The interaction between replication status and summary type was not significant, F(1, 411) = .004, p = .95, $\eta_p^2$ = .00 (Fig. 2a).
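The interaction term in a 2 × 2 mixed design of this kind has a simple interpretation: it is equivalent to an independent-samples t test comparing the two groups on within-person difference scores (here, the rating for the replicated study minus the rating for the study that did not replicate), with F = t². The sketch below illustrates this equivalence using the standard library and invented toy numbers; it is not the analysis script, and the function name is hypothetical.

```python
from math import sqrt
from statistics import mean, variance


def interaction_f(diffs_group_a: list[float], diffs_group_b: list[float]) -> tuple[float, int]:
    """F statistic and error df for the interaction in a 2 (between) x 2 (within) design.

    Each input holds one difference score per participant
    (level 1 minus level 2 of the within-subjects factor).
    """
    n_a, n_b = len(diffs_group_a), len(diffs_group_b)
    df_error = n_a + n_b - 2
    # Pooled variance of the difference scores across the two groups.
    pooled = ((n_a - 1) * variance(diffs_group_a)
              + (n_b - 1) * variance(diffs_group_b)) / df_error
    t = (mean(diffs_group_a) - mean(diffs_group_b)) / sqrt(pooled * (1 / n_a + 1 / n_b))
    return t ** 2, df_error


# Toy difference scores (invented) for two small groups.
f_value, df_error = interaction_f([1, 2, 3], [0, 1, 2])
```

With 413 participants split across two conditions, the error degrees of freedom are 413 − 2 = 411, matching the values reported for Research Questions 1 and 2.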

Fig. 2. Interest in reporting as a function of summary type (results-blind vs. traditional) and (a) replication status (replicated vs. did not replicate), (b) preregistration status (preregistered vs. not preregistered), and (c) belief alignment (belief consistent vs. belief inconsistent). Error bars depict standard errors.

These findings do not provide support for the idea that results-blind (vs. traditional) selection leads to greater favoring of replicable research. Overall, we observed a medium-sized main effect ($\eta_p^2$ = .12; a 0.62 difference on a 7-point scale) showing that journalists were more interested in studies that replicated than those that did not despite not knowing the replication status of each study. This suggests that there may be features of replicable studies that appeal to journalists regardless of whether they learn the results.
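The partial eta-squared values reported in this section can be recovered from the F statistics and their degrees of freedom using the standard identity: partial eta squared = (F × df_effect) / (F × df_effect + df_error). The following short sketch applies that identity to F values reported in the text; the function name is our own.

```python
def partial_eta_squared(f_value: float, df_effect: int, df_error: int) -> float:
    """Partial eta squared computed from an F statistic and its degrees of freedom."""
    numerator = f_value * df_effect
    return numerator / (numerator + df_error)


# F values and degrees of freedom as reported in the Results section.
rq1_replication = partial_eta_squared(54.89, 1, 411)
rq2_preregistration = partial_eta_squared(7.42, 1, 411)
rq3_interaction = partial_eta_squared(4.66, 1, 405)
```

Rounded to two decimals, these reproduce the reported effect sizes of .12, .02, and .01, respectively.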

Research Question 2

To determine whether journalists express more interest in reporting on preregistered research when they select studies in a results-blind (vs. traditional) fashion, we used responses to Set 2 summaries to conduct a 2 (summary type: results-blind vs. traditional) × 2 (preregistration status: preregistered vs. not preregistered) mixed ANOVA with interest in reporting as the dependent variable. This analysis revealed a significant main effect of preregistration status, F(1, 411) = 7.42, p = .007, $\eta_p^2$ = .02, such that participants expressed more interest in preregistered studies (M = 4.82, SD = 1.42) than those that were not preregistered (M = 4.57, SD = 1.50). The main effect of summary type was not significant, F(1, 411) = 0.05, p = .82, $\eta_p^2$ = .00; participants expressed similar levels of interest when they read traditional summaries (M = 4.68, SD = 1.39) and results-blind summaries (M = 4.71, SD = 1.53). The interaction between preregistration status and summary type was not significant, F(1, 411) = .01, p = .91, $\eta_p^2$ = .00 (Fig. 2b).

These findings do not provide support for the idea that results-blind (vs. traditional) selection leads to greater favoring of preregistered research. Overall, we observed a small main effect ($\eta_p^2$ = .02; a 0.25 difference on a 7-point scale) showing that journalists were more interested in preregistered versus nonpreregistered research. Journalists, then, were responsive to the use of this open-science practice regardless of whether they learned the results.

Research Question 3

To determine whether journalists exhibit less confirmation bias when they select studies in a results-blind (vs. traditional) fashion, we used responses to Set 3 summaries to conduct a 2 (summary type: results-blind vs. traditional) × 2 (belief alignment: belief-consistent vs. belief-inconsistent) mixed ANOVA with interest in reporting as the dependent variable. This analysis did not reveal a significant main effect of belief alignment, F(1, 405) = .22, p = .64, $\eta_p^2$ = .00; participants expressed similar interest in reporting on belief-consistent (M = 4.97, SD = 1.52) and belief-inconsistent (M = 4.94, SD = 1.47) studies. The main effect of summary type was not significant, F(1, 405) = 1.32, p = .25, $\eta_p^2$ = .00; participants expressed similar levels of interest when they read traditional summaries (M = 5.32, SD = 1.39) and results-blind summaries (M = 4.88, SD = 1.58). The interaction between belief alignment and summary type was significant, F(1, 405) = 4.66, p = .03, $\eta_p^2$ = .01, such that the preference for belief-consistent (vs. belief-inconsistent) findings was larger in the traditional condition (difference: M = .18, SE = .10, p = .06) compared with the results-blind condition (difference: M = −.11, SE = .09, p = .23; Fig. 2c).

These findings do provide support for the idea that results-blind (vs. traditional) selection leads to a reduction in confirmation bias. Put another way, journalists showed a reduced preference for studies confirming their preexisting beliefs when they evaluated studies without the results. Although the interaction was significant, the effect was small ($\eta_p^2$ = .01), and the simple effects were not significant; thus, it would be inaccurate to say that journalists were biased toward attitude-consistent studies in the traditional condition.

Discussion

Overall, the results tentatively supported one of our three hypotheses. We did not observe that results-blind (vs. traditional) selection led to greater favoring of replicable research or preregistered research. We did, however, find that participants’ preference for attitude-consistent findings was reduced in the results-blind condition compared with the traditional condition.

For Research Question 1, we saw that regardless of condition, journalists expressed more interest in reporting on studies that replicated compared with those that did not. This is particularly notable given that participants were not told the replication status of the studies and therefore were not simply favoring studies with the “replicated” stamp of approval. Presumably, then, journalists were picking up on features associated with the likelihood of successful replication, much the way that researchers have done in prediction-market studies (Gordon et al., 2021). Although we cannot isolate the features that journalists considered most heavily, one possibility is that they showed a preference for studies that had open data or materials because this was more common among studies that replicated (6/13) than those that did not replicate (2/8). Another possibility is that journalists were skeptical about topics that seemed implausible (e.g., social priming), contradicting the notion that the most counterintuitive ideas would be seen as most newsworthy. Consistent with this account, exploratory analyses revealed that journalists were more skeptical of studies that did not replicate compared with those that did replicate (see the Supplemental Material).

For Research Question 2, participants showed a preference for reporting on studies that were preregistered (vs. not) regardless of whether they knew the results. The use of this open-science practice, then, may give studies an advantage when it is explicitly communicated to journalists. On the other hand, it may be features linked with but separate from preregistration that are driving the main effect we observed. Past work (e.g., Soderberg et al., 2021) has demonstrated that preregistered studies tend to have characteristics—such as rigorous methods and strong research questions—that could make them more appealing than their nonpreregistered counterparts. More specifically, one feature that could have affected our results is sample size; across our summaries, preregistered studies had a median sample size of 280, compared with 205 for nonpreregistered studies. The idea that journalists notice and prefer larger samples has precedent in previous work (Bottesini et al., 2023). Another difference between our summary sets was that the frequency of significant results was lower among the preregistered (5/18) versus nonpreregistered (15/18) studies, reflecting a broader trend in the literature (Scheel et al., 2021). If we assume that significant results generally elicit more interest than null results, this difference would have worked against our findings, possibly reducing the preference journalists showed for preregistered studies.

Our results tentatively supported our hypothesis for Research Question 3: We observed a significant interaction such that journalists who selected studies using results-blind selection showed a reduced preference for belief-consistent findings compared with journalists who used traditional selection. This finding should be considered in light of two qualifications. First, this effect was small ($\eta_p^2 = .01$) and might be overwhelmed by competing considerations in a real science-journalism context. Second, we did not observe a significant preference for belief-consistent (vs. belief-inconsistent) findings in the traditional condition. An experiment that used fabricated studies specifically manipulating belief consistency would be better equipped to identify confirmation bias, if it is indeed operating, in traditional settings.
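For readers who think in terms of Cohen's f rather than partial eta squared, the reported effect size of .01 can be converted with the standard relation f = sqrt(η²p / (1 − η²p)). The sketch below is a hedged illustration: the function name is hypothetical, and the conversion was not part of the reported analyses.

```python
import math


def cohens_f(partial_eta_sq):
    """Convert partial eta squared to Cohen's f (standard textbook relation)."""
    if not 0 <= partial_eta_sq < 1:
        raise ValueError("partial eta squared must lie in [0, 1)")
    return math.sqrt(partial_eta_sq / (1 - partial_eta_sq))


# Effect size for the interaction, as reported in the text above.
f_value = cohens_f(0.01)
print(f"Cohen's f = {f_value:.2f}")
```

The resulting value of roughly 0.10 sits at Cohen's conventional benchmark for a small effect, which is consistent with the caution expressed above.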

At first glance, it might seem inevitable that results-blind selection should reduce confirmation bias: journalists can be biased by results only when they are privy to them. Nevertheless, our results are inconsistent with two alternative outcomes. First, we could have found that journalists’ preferences were unaffected by the type of selection. This is what we would have observed if journalists’ decisions were determined by the quality of the methods and not influenced by the direction of the results. Second, we could have found that journalists in the results-blind condition were able to intuit which studies would confirm their beliefs and to favor those studies even without the results. This possibility appears unlikely given that in the results-blind condition, interest in belief-inconsistent findings was (nonsignificantly) higher than interest in belief-consistent findings. The support we observed for this research question is unlikely to be explained by confounds because the interaction cannot be accounted for by an underlying difference across conditions.

If results-blind selection could have beneficial effects, it is important to consider how this could work in practice. For studies covered in this fashion, researchers would first need to finalize the methods of their study before data collection and make these methods publicly available. This currently happens with registered reports, some big-team-science projects, grant press releases, and clinical trials. Journalists could then make a decision about whether to report on the study conditional on, say, the researchers following the methods faithfully and the study passing peer review. Then, once the researchers complete and publish the study, the journalists would report on the results. At this stage, the methods and results could be reported together in the usual fashion so long as there was a time-stamped record (similar to a preregistration) of the results-blind decision.

Despite the possible benefits of results-blind reporting, there are constraints in journalistic workflows and audience expectations that could limit its feasibility as a widespread practice. First, the fast pace of many newsrooms might leave little room for a two-step process like the one required by results-blind reporting. Moreover, audiences may lack the scientific literacy or level of interest required to engage with methodological minutiae. Although it seems unlikely (and undesirable) that results-blind reporting would ever replace traditional science reporting, this practice could bolster credibility in covering methodologically strong research that seeks to answer questions of high importance to the public.

Normative constraints on journalism, including the ideal of what makes for a good science story, are mutable strategies that largely respond to or take advantage of dynamic market conditions (Reese & Shoemaker, 2018; Schudson, 1998). False balance in climate-change reporting provides a good example. In the past, it was common practice to include quotes on “both sides” of the climate-change issue to balance views on what was once a controversial topic (Brüggemann & Engesser, 2017). But reporting norms changed between 2010 and 2015, and many prominent journalists, including CNN’s Christiane Amanpour, made a concerted effort to change these practices. The change quickly became common practice in the field. This normative shift illustrates that it is at least possible that journalists will adopt results-blind reporting practices if they perceive that such a shift will be well received by their audiences and if the idea receives a “signal boost” from influential figures. The questions of whether these conditions will materialize are beyond the scope of the current study, and future research could design studies to better understand how audiences would receive the idea of results-blind reporting or how to persuade prominent journalists to adopt results-blind reporting practices.

Limitations

One limitation of this work was that we conducted a “natural experiment” in the sense that we used real psychology studies. We made this decision for two reasons. First, it bolstered external validity across all three of our research questions. Second, drawing on the actual published record was necessary for testing Research Questions 1 and 2 because these are questions about how journalists respond to the existing literature. A limitation of this approach is that we cannot identify the specific features that account for journalists’ preferences. Fabricated summaries could be useful in taking this next step because they could be used to manipulate specific characteristics, such as sample size or statistical significance.

Although we made external validity a high priority, we made some methodological decisions that deviate from a real-world reporting context. First, to reduce participant burden, we did not provide participants with the full articles corresponding to each summary and therefore cannot be sure how a more comprehensive review process might have shifted their responses. Second, our summaries explicitly mentioned open-science practices (e.g., preregistration) and provided brief explanations to ensure that our participants knew what they were. Because open-science practices are not typically featured in press releases (American Psychological Association, 2025), they were likely more salient than usual. Finally, participants in the results-blind condition were told that because they would not see the results, they should evaluate the study based on the way it was designed and conducted. Again, because results-blind selection is not currently practiced in science-reporting settings, these instructions lack ecological validity.

Interpretations of our results should also bear in mind limitations of our sample. More than 70% of our participants were academics, and faculty members in journalism departments made up the largest subgroup (n = 206). Thus, our sample may reflect different perspectives on “newsworthiness” and different standards for methodological rigor than one comprising solely journalists and editors without an academic background. Encouragingly, many journalism faculty have professional experience that could provide insight into journalistic decision-making. A further limitation of our sample is that we recruited participants from media outlets and schools based in the United States and Canada, making it difficult to generalize our findings beyond these two countries.

Conclusion

Probing journalists’ reactions to real psychological studies yielded some encouraging—and unanticipated—findings. For example, journalists showed a preference for findings that replicated over those that did not and for studies that were preregistered over those that were not. These results challenge the idea that the most “newsworthy” studies—as determined by journalists themselves—are those resting on shaky methodological foundations. We also observed that when journalists used results-blind reporting practices, they showed less favoritism toward findings that confirmed their preexisting beliefs. Results-blind selection, then, may be worth further investigation as a tool for bolstering the trustworthiness of the psychological science that gets communicated to the public.

Supplemental Material

sj-docx-1-amp-10.1177_25152459261434559 – Supplemental material for Can Results-Blind Selection Improve Science Communication?

Supplemental material, sj-docx-1-amp-10.1177_25152459261434559 for Can Results-Blind Selection Improve Science Communication? by Alexa M. Tullett, Savannah C. Lewis, Nell Lambdin, Joshua Baker and Matthew Barnidge in Advances in Methods and Practices in Psychological Science

Footnotes

Transparency

Action Editor: David A. Sbarra

Editor: David A. Sbarra
