Abstract

In studies using the increasingly popular experience-sampling method (ESM), design decisions are often guided by theoretical or practical considerations. Yet limited empirical evidence exists on how these choices affect data quantity (e.g., response probabilities), data quality (e.g., response latency), and potential biases in study outcomes (e.g., characteristics of study variables). In a preregistered, 4-week study (N = 395), we experimentally manipulated two key characteristics of ESM protocols for sending ESM surveys: timing (fixed vs. varying times) and contingency (directly vs. indirectly after the smartphone is unlocked). We evaluated the four ESM protocols resulting from the combination of these two characteristics against different criteria. As hypothesized for contingency, indirect protocols resulted in higher response probabilities (increased data quantity); however, they also led to higher response latencies (reduced data quality). Contrary to our expectations, the interaction of contingency and timing did not significantly influence response probability. We also did not observe other effects of timing or contingency on data quality. In exploratory follow-up analyses, we found that timing significantly affected response probability and smartphone-usage behaviors, as measured by screen logs; however, these effects were likely attributable to time-of-day effects. Self-reported states did not differ by ESM protocol, and similar patterns emerged when primary outcomes were correlated with external criteria, such as trait affect and well-being. On the basis of these findings, we discuss the trade-offs that researchers should consider when choosing ESM protocols to optimize data quantity and data quality and to minimize biases in study outcomes.

Keywords

In recent years, the experience-sampling method (ESM) has seen a major upsurge in various psychological disciplines (Wrzus & Neubauer, 2023). ESM has several advantages over traditional data-collection methods. Because it repeatedly assesses feelings and behaviors in everyday life, it reduces recall bias (Lucas et al., 2021; Scharbert et al., 2025), covers real-life situations that are difficult or unethical to induce in the laboratory (Reis, 2012), and enables the study of within-person phenomena (Hamaker, 2012).

However, these various advantages of ESM come at a price. Study designs are much more complex and require researchers to make many decisions. One of the most fundamental decisions is the definition of the ESM protocol, that is, when and how the ESM surveys are sent over the course of the study. ESM protocols are often selected on the basis of theoretical considerations, such as how frequently the psychological states or behaviors of interest occur or change in daily life. Occasionally, they may also result from pragmatic considerations, such as the technical capabilities of the ESM application (app) or tool used. However, with a few exceptions (e.g., van Berkel et al., 2017; van Berkel, Goncalves, Lovén, et al., 2019), a comprehensive methodological investigation of the potential side effects that when and how ESM surveys are sent may have on ESM study parameters, including the quantity and quality of the collected ESM data and biases in the resulting study findings, is still lacking. With this study, we aim to help fill this gap.

Overview of the Characteristics of ESM Protocols

Previous studies have used different ESM protocols depending on the research question (Stone & Shiffman, 2002). Traditionally, these protocols were categorized as interval-, signal-, or event-contingent (Wheeler & Reis, 1991). Early ESM studies often used paper-and-pencil questionnaires completed at set intervals, when signaled by devices such as pagers, or after certain events (Larsen & Kasimatis, 1991; Moskowitz & Coté, 1995; Wong & Csikszentmihalyi, 1991). With smartphones now commonly used for ESM, participants are typically notified via email or app (e.g., Scharbert et al., 2024; Stieger et al., 2021), blurring the line between interval- and signal-contingent protocols (Horstmann, 2021). Based on the current literature, we therefore categorize ESM protocols by two main characteristics, timing and contingency, as well as their combination (see Table 1).

Table 1. Overview of ESM Protocol Characteristics

Note: ESM surveys can be scheduled for exactly the same time every study day (fixed timing) or pseudorandomly (varying timing). In addition, participants can be notified about ESM surveys at the exact time they were scheduled (direct contingency) or the very next time they use their smartphone after the scheduled time (indirect contingency). Timing and contingency conditions can also be combined (see cells). ESM = experience-sampling method.

“Timing” describes when ESM surveys are scheduled and distinguishes between fixed and varying timing. Fixed timing means that ESM surveys are scheduled at exactly the same times every study day (e.g., Gloster et al., 2017; Tripathi et al., 2020). Varying timing means that ESM surveys are scheduled either at random times throughout the day or pseudorandomly, that is, at random times within fixed intervals (e.g., in the morning, afternoon, and evening; for examples, see Neubauer et al., 2020; Verhagen et al., 2019).

“Contingency” describes how ESM surveys are triggered. Direct contingency means that participants are notified about a scheduled ESM survey at the exact time it is scheduled (e.g., Fullagar & Kelloway, 2009; Hartmann et al., 2015). Indirect contingency means that participants receive ESM surveys only once they actively use their smartphone after the scheduled time (van Berkel, Goncalves, Lovén, et al., 2019), for example, when turning on the screen or after answering a call (e.g., Ghosh et al., 2019; Reiter & Schoedel, 2024; Schoedel et al., 2023). Despite a growing number of software frameworks and of methodological and applied studies on, and using, context- or interruptibility-aware ESM designs (Bachmann et al., 2015; Fischer et al., 2011; Schoedel et al., 2023; van Berkel, Goncalves, Koval, et al., 2019; Wen et al., 2017), direct ESM protocols still clearly dominate the current ESM-research landscape. This is likely because indirect protocols require at least some form of passive sensing and thus tend to require more technical effort to implement.

Side Effects of ESM-Protocol Choice on ESM-Study Parameters

From a methodological standpoint, ESM researchers should consider that ESM-protocol characteristics may affect a variety of ESM-study parameters, including the quantity and quality of ESM data and the introduction of bias to study results.

ESM data quantity

One important aspect of ESM studies is the quantity of ESM data, often referred to as “response probability” or participants’ “compliance” (Eisele et al., 2022; Hasselhorn et al., 2022). Previous meta-analyses and systematic reviews have examined the factors underlying response probability. These include participants’ sociodemographic characteristics, the clinical status of the population under study, and study-design elements, such as study duration, the number of items, and the provision of incentives (Davanzo et al., 2023; Jones et al., 2019; Wen et al., 2017; Wrzus & Neubauer, 2023). However, many of the primary studies included in these reviews were observational (e.g., Courvoisier et al., 2012; Silvia et al., 2013; Sokolovsky et al., 2014). With few exceptions (e.g., Businelle et al., 2024), studies that experimentally manipulated ESM-study characteristics focused on very specific design elements, such as the number of items per ESM survey or the number of prompts per day (e.g., Eisele et al., 2022; Hasselhorn et al., 2022; Ottenstein & Werner, 2022).

Regarding the timing and contingency of ESM surveys and their combination, however, there is only preliminary evidence from one rather small study (N = 20) in the field of computer science that investigated whether participants’ response probability may depend on these ESM-protocol characteristics (van Berkel, Goncalves, Lovén, et al., 2019). We take this pioneering study as a starting point and test on a larger scale whether the design of the ESM protocol is related to response probabilities in ESM studies (Research Question 1).

Specifically, we assume that participants are more likely to answer ESM surveys if they are notified the next time they use their smartphone (i.e., indirect mode) than if they are notified directly at the time the ESM survey was scheduled (i.e., direct mode; Hypothesis 1). We reason that ESM surveys in the indirect mode are more likely to be noticed because they are sent only during active phone use, whereas participants in the direct mode may miss ESM surveys if they are not on their phones at the scheduled time.

Contingency can also be considered in combination with timing (see Table 1). In the direct mode, we expect participants’ response probability to be higher with fixed than with varying timing. If ESM surveys are scheduled for exactly the same times each day, participants can anticipate when they will occur (Myin-Germeys et al., 2018). This may increase the likelihood of habitually answering ESM surveys compared with the varying-direct mode, in which ESM surveys are sent at slightly different times each day. In contrast, in the indirect mode, we do not expect response probabilities to differ between fixed and varying timing. Because participants receive ESM surveys only upon actively using their smartphones, they cannot anticipate the timing of subsequent surveys, regardless of whether the timing is fixed or varying. Therefore, we hypothesize that the increase in response probability under fixed- versus varying-timing protocols is larger when participants are notified directly at the time the ESM survey is scheduled (direct mode) than when they are notified the next time they use their smartphone (indirect mode; Hypothesis 2).

ESM data quality

Another important aspect of ESM studies is the quality of ESM data, that is, whether ESM surveys are answered carefully enough to pass certain quality controls and thus be usable by researchers (for a detailed discussion of careless responding in surveys, see Meade & Craig, 2012). Defining such control criteria, however, is not straightforward because it can involve a variety of data characteristics (DeSimone & Harms, 2018).

To the best of our knowledge, little is known about how ESM-protocol characteristics affect data-quality indicators. We therefore proceed in a purely exploratory way, adapting the best practices recently recommended by Ward and Meade (2023) for detecting careless responding in online surveys to ESM surveys. In particular, we investigate whether, depending on the ESM protocol, participants (a) speed through the items or do not take enough time for them, (b) select contradictory statements, or (c) always choose the same answer option, all of which could be considered indicators of low ESM data quality (Research Question 2).

Bias in resulting study findings

A final aspect of ESM studies is whether the resulting findings depend on, or are biased by, the characteristics of the ESM protocol used. One reason for such bias can be measurement reactivity, which refers to the instrument or procedure itself systematically distorting the validity of the outcomes collected (Barta et al., 2012). The degree of bias resulting from measurement reactivity might vary with specific ESM-protocol characteristics. For example, participants may be more annoyed by ESM surveys sent at varying times than by those sent at fixed times because they are less able to adjust to the timing, yet they may still try to answer as many ESM surveys as possible to receive the compensation. Consequently, they might systematically rate their mood somewhat worse in the varying mode than in the fixed mode.

Another reason for such bias can be selective sampling. For example, previous research has shown that participants reported more social interactions in event-contingent ESM protocols than in signal-contingent ESM protocols (Himmelstein et al., 2019). Previous research has also shown that participants’ physical context affects whether they respond to ESM surveys; for example, people are more likely to answer surveys when they are at home (Reiter & Schoedel, 2024). In addition, people might use their phones more at certain locations, such as at home. Because ESM surveys in the indirect mode are sent only when participants are using their smartphones, responses might, in turn, be concentrated in specific locations, such as home. This selective sampling of participants’ mood may produce biased estimates that systematically differ from the true target quantity or estimand (e.g., participants’ intradaily mood fluctuations). By contrast, in the direct mode, surveys are sent independently of smartphone use, making responses less dependent on participants’ location and thus potentially more representative. This could lead to more accurate estimates.

Although we will not be able to empirically identify the specific reasons behind biased study findings, we aim to investigate such bias as an overall phenomenon and examine how it might depend on ESM-protocol characteristics. Accordingly, we explore whether the ESM responses themselves and the association patterns of ESM responses with external constructs (e.g., traits collected via questionnaires) are biased depending on the ESM protocol used (Research Question 3a).

Because ESM data are increasingly combined with data passively logged on smartphones (e.g., Ebner-Priemer & Santangelo, 2024; Harari et al., 2016; Wright & Zimmermann, 2019), we think it is important to also include measures derived from smartphone sensing in the investigation of the side effects of ESM protocols. “Smartphone sensing” refers to the continuous background collection of different types of data (e.g., screen or app logs, GPS) at high resolution (i.e., several thousand logs per day) to derive objective data on daily behaviors, such as mobility, physical activity, or sleep (Harari et al., 2016; Miller, 2012; Schoedel & Mehl, 2024). The combination of ESM and sensing is often considered an important new step in psychological research because the two methods can benefit greatly from each other, enabling new research designs and opportunities (Ebner-Priemer & Santangelo, 2024). However, it also turns the smartphone from a mere tool for ESM data collection into a research object in its own right. To illustrate, smartphone screen time is increasingly studied as a variable of interest (Christensen et al., 2016; Liebherr et al., 2020). For example, researchers investigate associations between objectively assessed smartphone use and psychological well-being (große Deters & Schoedel, 2024; Przybylski & Weinstein, 2017). Conversely, sending ESM surveys to assess psychological states can artificially provoke smartphone use. Thus, ESM notifications could trigger an unintended cascade of smartphone-usage behaviors, such as quickly checking the weather or briefly replying to a message, that would not have occurred without the initial ESM notification. Ideally, however, ESM surveys should not elicit measurement reactivity, interrupt participants’ naturally occurring behavior, or (actively) provoke smartphone usage. To this end, van Berkel, Goncalves, Lovén, et al. (2019) proposed sending ESM notifications only upon the next naturally occurring smartphone use after the time the ESM survey was originally scheduled. We take this approach as a starting point and explore whether the type of ESM protocol has side effects on smartphone-usage indicators themselves and on their patterns of association with external constructs (Research Question 3b).

Method

The data for this study were collected as part of the Coping With Corona project conducted by the University of Münster, the University of Osnabrück, and LMU Munich in Germany from March to July 2023. The project was approved by the Ethics Committee of LMU Munich under the study title “Coping With Corona (CoCo): Understanding Individual Differences in Well-Being During the COVID-19 Pandemic.” This study was preregistered; the preregistration can be accessed via the project’s OSF repository (https://osf.io/a5bg4/), which also contains the online supplemental material (OSM), the data-preprocessing and analysis code, and an anonymized, preprocessed data set. Raw sensing data cannot be shared publicly because of privacy concerns and data-protection legislation.

Procedures

A diverse set of recruitment strategies was employed to obtain a heterogeneous sample comprising psychology students and individuals from the general public. To be eligible, participants had to be 18 years or older and, for technical reasons, use a smartphone running the Android operating system (Version 7 or higher).

Participants were asked to install the PhoneStudy research app (https://phonestudy.org/en/) on their private smartphones for 4 weeks. The app provided them with up to four ESM surveys per day. The exact ESM protocol according to which the surveys were sent was subject to experimental manipulation and is described in the next section. In addition to the daytime ESM surveys, participants received a daily evening survey, and the app continuously collected various types of log data in the background of the smartphone. At the start and the end of the study, participants completed an online pre- and postquestionnaire. Participants received up to €75 in compensation, depending on the study parts completed (i.e., a base compensation for completing the pre- and postquestionnaires and a compliance-related bonus for answering more than 50% of ESM surveys; for further details, see Appendix A in the OSM).

ESM protocols

We manipulated the ESM protocols with respect to two characteristics: timing and contingency. Timing was operationalized in two distinct forms. First, with fixed timing, the ESM surveys were scheduled for the same times each day: 7 a.m., 10 a.m., 1 p.m., and 4 p.m. Second, with varying timing, the ESM surveys were scheduled pseudorandomly, with one survey in each of the intervals 7 a.m. to 10 a.m., 10 a.m. to 1 p.m., 1 p.m. to 4 p.m., and 4 p.m. to 7 p.m. To ensure sufficient time between consecutive ESM surveys, a minimum gap of 60 min between them was enforced.
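A minimal Python sketch of the two timing schemes follows. It assumes that times are represented as minutes after midnight and that the 60-min minimum gap is enforced by redrawing a day's whole schedule until the constraint holds (rejection sampling). The PhoneStudy app's actual scheduling logic is not described here, so the constants, function names, and redraw strategy are hypothetical.

```python
import random

# Hypothetical sketch: times are minutes after midnight on one study day.
FIXED_TIMES = [7 * 60, 10 * 60, 13 * 60, 16 * 60]        # 7 a.m., 10 a.m., 1 p.m., 4 p.m.
INTERVALS = [(7 * 60, 10 * 60), (10 * 60, 13 * 60),      # 7-10 a.m., 10 a.m.-1 p.m.
             (13 * 60, 16 * 60), (16 * 60, 19 * 60)]     # 1-4 p.m., 4-7 p.m.
MIN_GAP = 60                                              # minimum minutes between surveys


def fixed_schedule():
    """Fixed timing: the same four survey times on every study day."""
    return list(FIXED_TIMES)


def varying_schedule(rng, max_tries=1000):
    """Varying timing: draw one random minute within each interval and redraw
    the whole day until consecutive surveys are at least MIN_GAP apart
    (rejection sampling; an assumption, not necessarily the app's method)."""
    for _ in range(max_tries):
        times = [rng.randrange(start, end) for start, end in INTERVALS]
        if all(later - earlier >= MIN_GAP for earlier, later in zip(times, times[1:])):
            return times
    raise RuntimeError("no schedule satisfied the minimum gap")


def fmt(minutes):
    """Format minutes after midnight as HH:MM."""
    return f"{minutes // 60:02d}:{minutes % 60:02d}"


if __name__ == "__main__":
    rng = random.Random(42)
    print("fixed:  ", [fmt(t) for t in fixed_schedule()])
    print("varying:", [fmt(t) for t in varying_schedule(rng)])
```

Redrawing the entire day, rather than only the offending survey, keeps each accepted schedule uniformly distributed over all valid schedules; with 3-hr intervals and a 60-min gap, most draws are accepted on the first try.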

Contingency was operationalized in two distinct ways. First, “direct contingency” refers to participants being notified about a scheduled ESM survey at the exact time it was scheduled. Second, “indirect contingency” refers to participants receiving the ESM survey as soon as, and only if, they actively used their smartphone after the scheduled time (e.g., turned on the screen, answered a call). If participants did not use their phone before the time of the next scheduled ESM survey, the corresponding survey was skipped in favor of the next scheduled survey. Consequently, participants could go uninformed about a scheduled ESM survey if they did not use their phone within the requisite time window.
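The skip rule for indirect contingency can be sketched as follows. This is a hypothetical Python illustration, not the PhoneStudy implementation. It assumes times in minutes after midnight and treats any logged usage event (e.g., a screen-on or call event) as a trigger. It also uses an assumed end-of-day cut-off (`day_end`) for the last survey of the day, which the text does not specify.

```python
from bisect import bisect_left
from typing import List, Optional


def deliver(scheduled: List[int], usage_events: List[int], contingency: str,
            day_end: int = 24 * 60) -> List[Optional[int]]:
    """Return the delivery time (minutes after midnight) of each scheduled survey,
    or None if an indirect survey was skipped.

    direct:   notify exactly at the scheduled time.
    indirect: notify at the first usage event at or after the scheduled time,
              but only if it occurs before the next scheduled survey
              (day_end is an assumed cut-off for the day's last survey).
    """
    if contingency == "direct":
        return list(scheduled)
    if contingency != "indirect":
        raise ValueError("contingency must be 'direct' or 'indirect'")
    events = sorted(usage_events)
    deliveries: List[Optional[int]] = []
    for k, t in enumerate(scheduled):
        window_end = scheduled[k + 1] if k + 1 < len(scheduled) else day_end
        j = bisect_left(events, t)                  # first usage event at or after t
        if j < len(events) and events[j] < window_end:
            deliveries.append(events[j])
        else:
            deliveries.append(None)                 # skipped in favor of the next survey
    return deliveries
```

For example, with surveys scheduled at 7 a.m., 10 a.m., 1 p.m., and 4 p.m. and usage events at 7:30 a.m., 1:20 p.m., and 4:40 p.m., the indirect mode delivers the first, third, and fourth surveys at those usage times and skips the 10 a.m. survey, because no usage occurs between 10 a.m. and 1 p.m.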

The combination of these two characteristics resulted in the 2 × 2 experimental conditions presented in Table 1. Each participant was exposed to all four experimentally manipulated ESM-protocol conditions, each lasting for 7 days. In this within-subjects design, the order of the four conditions was randomized for each participant during app setup, right after the participant installed the app on their smartphone. The four conditions were drawn without replacement, resulting in 4! = 24 possible orders. We informed participants in advance that the timing of the ESM surveys would vary and that they might receive a different number of ESM surveys per day over the course of the study. We did this because, during pilot testing, participants mistook the switch to another ESM protocol for a technical problem and were worried about it. In each of the four experimental conditions, ESM surveys timed out (i.e., the notification disappeared) 15 min after their initial appearance on the smartphone. After starting an ESM survey, participants had to complete it within another 15 min.
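Drawing the four conditions without replacement amounts to a random permutation, which is why there are 4! = 24 possible orders. The following hypothetical Python sketch uses condition labels and function names of our own. It assumes study days are numbered 1 to 28 and that each condition occupies 7 consecutive days.

```python
import itertools
import random

CONDITIONS = ["fixed-direct", "fixed-indirect", "varying-direct", "varying-indirect"]

# Every possible condition order: 4! = 24 permutations.
ALL_ORDERS = list(itertools.permutations(CONDITIONS))


def draw_order(rng):
    """Draw all four conditions without replacement, i.e., a random permutation."""
    return rng.sample(CONDITIONS, k=len(CONDITIONS))


def condition_for_day(order, study_day):
    """Map study day 1..28 to its condition; each condition lasts 7 days."""
    if not 1 <= study_day <= 28:
        raise ValueError("study_day must be between 1 and 28")
    return order[(study_day - 1) // 7]


if __name__ == "__main__":
    order = draw_order(random.Random(7))
    print(len(ALL_ORDERS), order, condition_for_day(order, 10))
```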

Participants

In total, 510 participants answered the prequestionnaire and installed the app. After applying our preregistered exclusion criteria (for further details, see Appendix A in the OSM), the final sample comprised 395 participants, of whom 67.6% identified as female, 31.7% as male, and 0.8% as neither male nor female. On average, participants were 27.8 years old (range = 18–72). Of the sample, 1.2% had graduated from lower secondary school, 6.3% had graduated from higher secondary school, 57% had finished A-levels, 33.1% had graduated from university, and 2.3% held a PhD. We preregistered an a priori simulation-based sensitivity power analysis for generalized linear mixed models (following Pargent et al., 2024), which was conducted after data collection but before data analysis. We took a conservative approach to estimating power, assuming small effect sizes and drawing on previous ESM literature (Wrzus & Neubauer, 2023). For an alpha level of 5%, our achieved sample size (N = 395) and average number of observations per person (n = 104) allowed us to detect small effects with a power of 80% to 100%.

Measures

ESM data quantity

As preregistered (see Hypotheses 1 and 2), we defined participants’ response probability as the proportion of ESM surveys answered out of all ESM surveys sent to a given participant. We considered only those sent ESM surveys for which we could ensure that participants had been notified. In other words, we excluded cases in which participants had switched off their smartphone at the scheduled time of the ESM survey, had set their device to “do not disturb,” or had intentionally not been notified. The latter scenario pertains to the indirect-contingency protocols in instances in which participants did not use their smartphone before the scheduled time of the subsequent ESM survey, so that the initial survey was “replaced” by the next one. We counted an ESM survey as answered if all of its items were answered within 30 min after participants received the survey notification (i.e., participants started the survey within 15 min after notification and finished it within 15 min after starting). We use the term “response rate” in a descriptive sense and the term “response probability” to refer to the probability of answering a specific ESM survey as predicted by the specified model (see section Data Analysis). As an additional indicator, we descriptively examined dropout counts. To this end, we identified each participant’s last completed ESM survey during the study period. If it occurred more than 3 days before the end of the study, we classified it as an indicator of “silent” study dropout, that is, of participants who did not officially inform us of their withdrawal from the study but stopped answering ESM surveys.
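The answered criterion and the silent-dropout rule can be expressed as simple predicates. The following hypothetical Python sketch assumes that timestamps are available for notification, survey start, and survey completion, along with a flag indicating whether all items were answered. Treating participants who never completed any survey as silent dropouts is our own assumption and is not stated in the text.

```python
from datetime import datetime, timedelta
from typing import Iterable, Optional

NOTIFICATION_WINDOW = timedelta(minutes=15)  # survey must be started within 15 min of notification
COMPLETION_WINDOW = timedelta(minutes=15)    # and finished within 15 min of starting
DROPOUT_THRESHOLD = timedelta(days=3)


def is_answered(notified: datetime, started: Optional[datetime],
                finished: Optional[datetime], all_items_answered: bool) -> bool:
    """True if the survey counts as answered (at most 30 min after notification in total)."""
    if started is None or finished is None or not all_items_answered:
        return False
    return (timedelta(0) <= started - notified <= NOTIFICATION_WINDOW
            and timedelta(0) <= finished - started <= COMPLETION_WINDOW)


def response_rate(answered: Iterable[bool]) -> float:
    """Descriptive response rate: answered surveys divided by sent (notified) surveys."""
    flags = list(answered)
    return sum(flags) / len(flags) if flags else float("nan")


def is_silent_dropout(last_completed: Optional[datetime], study_end: datetime) -> bool:
    """Last completed ESM survey lies more than 3 days before the end of the study."""
    if last_completed is None:
        return True  # assumption: never answering is treated as dropout
    return study_end - last_completed > DROPOUT_THRESHOLD
```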

ESM data quality

We used three different indicators representing common approaches to detecting low-quality data arising from careless responding in survey research (Gibson & Bowling, 2019; Huang et al., 2012; Scharbert et al., 2023): (a) response duration of single ESM surveys, defined as the time difference between opening and finishing an ESM survey; (b) contradictory response patterns (binary), defined by whether participants selected the response options “agree” or “strongly agree” for both items of a semantic-antonym pair within a single ESM survey (i.e., the item pairs feeling “happy” vs. “sad” and “stressed” vs. “relaxed”; see Ward & Meade, 2023); and (c) repetitive response styles (binary), defined by whether the same response option was selected for all 11 consecutively presented state-affect items in a single ESM survey. As an additional data-quality indicator that was not preregistered, we examined response latency, defined as the difference between the originally scheduled time of an ESM survey and the time at which participants actually began to respond to it. Besides these items, the ESM surveys also asked about the partners and conversational topics of preceding social interactions, corumination, and further mood-related states. For a full list of the assessed items and further details on the study procedures, see Appendix A in the OSM.
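The per-survey data-quality indicators can be computed as follows. This is a hypothetical Python sketch. It assumes that the 6-point scale is coded 1 (strongly disagree) to 6 (strongly agree), so that codes 5 and 6 correspond to “agree” and “strongly agree,” and that item responses are stored in a dictionary keyed by item label. Neither coding is given in the text.

```python
from datetime import datetime
from typing import Dict, Sequence

AGREE_CODES = {5, 6}  # assumed codes for "agree" and "strongly agree"
ANTONYM_PAIRS = [("happy", "sad"), ("stressed", "relaxed")]
N_AFFECT_ITEMS = 11


def response_duration(opened: datetime, finished: datetime) -> float:
    """(a) Seconds between opening and finishing a survey."""
    return (finished - opened).total_seconds()


def contradictory(responses: Dict[str, int]) -> bool:
    """(b) Agreement with both items of any antonym pair within one survey."""
    return any(responses[a] in AGREE_CODES and responses[b] in AGREE_CODES
               for a, b in ANTONYM_PAIRS)


def repetitive(affect_items: Sequence[int]) -> bool:
    """(c) Same response option on all 11 consecutive state-affect items."""
    if len(affect_items) != N_AFFECT_ITEMS:
        raise ValueError("expected 11 state-affect responses")
    return len(set(affect_items)) == 1


def response_latency(scheduled: datetime, started: datetime) -> float:
    """Exploratory: seconds between the scheduled time and the start of responding."""
    return (started - scheduled).total_seconds()
```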

Bias in resulting study findings

We examined ESM responses and smartphone-usage indicators as primary study outcomes and their respective patterns of association with external constructs assessed by self-report questionnaires as secondary study outcomes. In doing so, we expanded our preregistered analysis plan: Our exploratory analyses additionally included further primary study outcomes (besides smartphone usage) and their associations with external constructs.

The ESM-based primary study outcomes were state positive affect and state negative affect, each computed as the average score of three items (positive affect: “happy,” “excited,” “relaxed”; negative affect: “angry,” “anxious,” “sad”) from the PANAS-X (Watson & Clark, 1994). Items were rated on a 6-point Likert scale ranging from strongly disagree to strongly agree. Because we were interested in both the absolute affect states and the deviation of people’s current affect from their personal mean, we additionally centered the affect-state scores on each person’s mean across the study.
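Person-mean centering subtracts each participant’s mean across the study from each of that participant’s scores. The analyses themselves were run in R. The following minimal, standard-library-only Python sketch is a language-neutral illustration with hypothetical function names.

```python
from collections import defaultdict
from statistics import mean
from typing import Hashable, List, Sequence


def scale_score(item_ratings: Sequence[float]) -> float:
    """State affect score: mean of the three items (e.g., happy, excited, relaxed)."""
    return mean(item_ratings)


def person_mean_center(person_ids: Sequence[Hashable], scores: Sequence[float]) -> List[float]:
    """Deviation of each score from that person's mean across all of their surveys."""
    by_person = defaultdict(list)
    for pid, score in zip(person_ids, scores):
        by_person[pid].append(score)
    person_means = {pid: mean(values) for pid, values in by_person.items()}
    return [score - person_means[pid] for pid, score in zip(person_ids, scores)]
```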

As additional primary study outcomes, we explored smartphone-usage measures derived from the smartphone-sensing logs. Specifically, we extracted participants’ total smartphone-usage time and total number of unlock events in the hour surrounding each scheduled ESM survey (i.e., ±30 min; for further details, see Reiter & Schoedel, 2024). Because we were interested in whether, depending on the ESM protocol, participants used their smartphones more (i.e., longer and more frequently) than usual (i.e., when not receiving ESM surveys), we additionally centered the extracted smartphone measures on the respective person’s daytime-specific mean. That is, for each participant, we calculated person-average smartphone-usage measures for each daytime interval across the entire study period (i.e., hourly averages for early mornings: 7 a.m.–10 a.m.; late mornings: 10 a.m.–1 p.m.; afternoons: 1 p.m.–4 p.m.; early evenings: 4 p.m.–7 p.m.). We then compared the smartphone measures of the 1-hr interval surrounding each scheduled ESM survey with these person averages to obtain the deviation of participants’ ESM-related behavior from their average smartphone-usage behavior in terms of total usage time and total number of unlocks.
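The window extraction and daytime-specific centering can be sketched as follows. This hypothetical Python sketch makes several assumptions. Usage is logged as (start, end) sessions and unlock timestamps in minutes after midnight within a single day. The person average is the total usage in a daytime interval divided by the number of hours observed across all of a person’s study days. The deviation is a simple difference. The actual preprocessing is described by Reiter and Schoedel (2024) and in the OSM and may differ.

```python
from typing import List, Sequence, Tuple

Day = Tuple[List[Tuple[int, int]], List[int]]  # (usage sessions, unlock times), minutes after midnight

INTERVALS = [(7 * 60, 10 * 60), (10 * 60, 13 * 60), (13 * 60, 16 * 60), (16 * 60, 19 * 60)]


def overlap(a0: int, a1: int, b0: int, b1: int) -> int:
    """Minutes shared by the intervals [a0, a1) and [b0, b1)."""
    return max(0, min(a1, b1) - max(a0, b0))


def usage_in_window(day: Day, lo: int, hi: int) -> Tuple[int, int]:
    """Total usage minutes and number of unlocks within [lo, hi)."""
    sessions, unlocks = day
    minutes = sum(overlap(start, end, lo, hi) for start, end in sessions)
    count = sum(1 for u in unlocks if lo <= u < hi)
    return minutes, count


def interval_of(t: int) -> int:
    """Index of the daytime interval containing time t."""
    for k, (lo, hi) in enumerate(INTERVALS):
        if lo <= t < hi:
            return k
    raise ValueError("time outside the 7 a.m.-7 p.m. sampling window")


def hourly_person_average(days: Sequence[Day], k: int) -> Tuple[float, float]:
    """Person's average usage minutes and unlocks per hour in interval k over all days."""
    lo, hi = INTERVALS[k]
    hours = (hi - lo) / 60 * len(days)
    totals = [usage_in_window(day, lo, hi) for day in days]
    return sum(m for m, _ in totals) / hours, sum(c for _, c in totals) / hours


def esm_deviation(day: Day, all_days: Sequence[Day], scheduled: int) -> Tuple[float, float]:
    """Usage in the hour around a scheduled survey minus the person's hourly average."""
    minutes, unlocks = usage_in_window(day, scheduled - 30, scheduled + 30)
    avg_minutes, avg_unlocks = hourly_person_average(all_days, interval_of(scheduled))
    return minutes - avg_minutes, unlocks - avg_unlocks
```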

Finally, as exemplary secondary study outcomes, we used different well-being measures assessed during the pre- and postquestionnaires that participants answered before and after the experience-sampling period: (a) a subset of the PANAS (Watson et al., 1988) to assess trait positive and negative affect (positive affect: α = .70,

Data analysis

All analyses were conducted using the statistical software R (Version 4.4.2; R Core Team, 2024). Generalized linear mixed-effects regression models were estimated using the packages lme4 (Bates et al., 2015) and lmerTest (Kuznetsova et al., 2017). Predicted average response probabilities were obtained using the marginaleffects package (Arel-Bundock et al., 2024). For reproducibility, we used the renv package (Ushey & Wickham, 2024) and uploaded the lock file to the project’s OSF repository.

Confirmatory data analysis

For the confirmatory analysis (Research Question 1), we used multilevel logistic regression models estimating within-person effects. That is, for each participant, we repeatedly observed whether a scheduled ESM survey was answered (binary outcome variable) depending on the ESM-protocol characteristics timing (dummy-coded: 0 = fixed [f], 1 = varying [v]) and contingency (dummy-coded: 0 = direct [d], 1 = indirect [i]) and their interaction. To this end, we specified random-intercept fixed-slope models. To test our hypotheses, we calculated the average predicted response probabilities for the four ESM protocols resulting from the combination of timing and contingency and inspected their contrasts. Specifically, we tested the following hypotheses:
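The model can be written out formally. The following minimal sketch assumes the standard parameterization of a random-intercept logistic regression; the symbols $Y_{tj}$, $\beta_0$ to $\beta_3$, and $u_{0j}$ are our own notation and are not taken from the preregistration. Here, $Y_{tj} = 1$ if survey $t$ of participant $j$ was answered, Timing is coded 0 = f and 1 = v, and Contingency is coded 0 = d and 1 = i:

$$
\operatorname{logit} P(Y_{tj} = 1) = \beta_0 + u_{0j} + \beta_1\,\mathrm{Timing}_{tj} + \beta_2\,\mathrm{Contingency}_{tj} + \beta_3\,\mathrm{Timing}_{tj}\,\mathrm{Contingency}_{tj}, \qquad u_{0j} \sim \mathcal{N}(0, \sigma_u^2)
$$

Under this coding, the fixed-effects linear predictor is $\beta_0$ for fixed-direct, $\beta_0 + \beta_2$ for fixed-indirect, $\beta_0 + \beta_1$ for varying-direct, and $\beta_0 + \beta_1 + \beta_2 + \beta_3$ for varying-indirect. Because the hypotheses concern average predicted probabilities rather than log-odds, a contrast on the probability scale need not map one-to-one onto $\beta_3$ or the other coefficients.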

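Hypothesis 1: $RP_{i} > RP_{d}$

Hypothesis 1a: $RP_{f,i} > RP_{f,d}$

Hypothesis 1b: $RP_{v,i} > RP_{v,d}$

Here, $RP_{t,c}$ denotes the average predicted response probability for timing $t \in \{f, v\}$ and contingency $c \in \{d, i\}$; $RP_{i}$ and $RP_{d}$ average over both timing modes.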

That is, we assume that participants are more likely to answer ESM surveys if they are notified about the ESM survey the next time they use their smartphone (indirect mode) than if they are notified directly at the time the ESM survey was scheduled (direct mode; for both fixed [Hypothesis 1a] and varying timing [Hypothesis 1b] protocols).

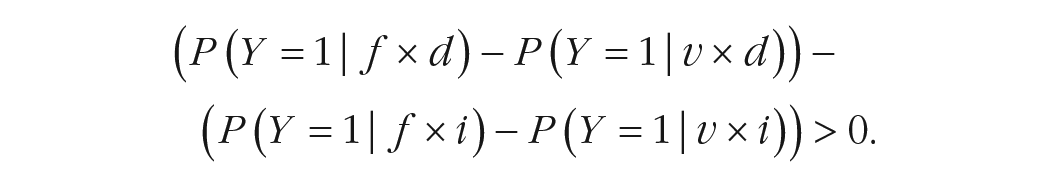

Hypothesis 2: $RP_{f,d} - RP_{v,d} > RP_{f,i} - RP_{v,i}$

That is, we assume that the increase in ESM-survey response probability under fixed (vs. varying) timing is larger when participants are notified directly at the time the ESM survey was scheduled (direct mode) than when they are notified the next time they use their smartphone (indirect mode).
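To make the contrasts concrete, the following sketch computes average predicted response probabilities for the four protocols from a random-intercept logistic model and derives the contrasts for Hypotheses 1a, 1b, and 2. It is a hypothetical Python illustration, not the authors’ R code, and the function names are ours. It assumes the coefficient order (intercept, timing, contingency, interaction) and averages predictions over a supplied set of person-level random intercepts. This is one common way to obtain average predictions, and it may differ from the exact averaging performed by the marginaleffects package in the original analysis.

```python
import math
from statistics import mean
from typing import Sequence, Tuple

Beta = Tuple[float, float, float, float]  # (intercept, timing, contingency, timing x contingency)


def expit(x: float) -> float:
    """Inverse logit."""
    return 1.0 / (1.0 + math.exp(-x))


def avg_predicted(beta: Beta, intercepts: Sequence[float], timing: int, contingency: int) -> float:
    """Average predicted response probability for one protocol, averaged over persons."""
    b0, b1, b2, b3 = beta
    eta = b0 + b1 * timing + b2 * contingency + b3 * timing * contingency
    return mean(expit(eta + u) for u in intercepts)


def contrasts(beta: Beta, intercepts: Sequence[float]):
    """Average predicted probabilities per protocol and the hypothesis contrasts.
    Keys are (timing, contingency): timing 0 = fixed, 1 = varying;
    contingency 0 = direct, 1 = indirect."""
    rp = {(t, c): avg_predicted(beta, intercepts, t, c) for t in (0, 1) for c in (0, 1)}
    h1a = rp[(0, 1)] - rp[(0, 0)]                                # fixed: indirect - direct
    h1b = rp[(1, 1)] - rp[(1, 0)]                                # varying: indirect - direct
    h2 = (rp[(0, 0)] - rp[(1, 0)]) - (rp[(0, 1)] - rp[(1, 1)])  # fixed-vs-varying gap: direct minus indirect
    return rp, {"H1a": h1a, "H1b": h1b, "H2": h2}
```

For example, `contrasts((-0.5, -0.4, 1.0, 0.4), [0.0])` yields a positive H2 contrast, because the timing penalty in the direct mode is fully offset in the indirect mode. These coefficient values are arbitrary and are not the study’s estimates.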

Exploratory data analysis

On an exploratory level, we compared the number of study dropouts across ESM protocols and study weeks. Given that the observed numbers were generally rather small, we present a descriptive account and refrain from conducting any statistical tests.

For the exploratory ESM-data-quality outcomes (i.e., response duration, contradictory and repetitive response styles, response latency) and primary study outcomes (i.e., absolute levels of, and deviations from the person average of, state positive affect and state negative affect; deviations from the person average of smartphone-usage duration and number of unlocks), we used generalized linear mixed-effects models (random-intercept fixed-slope) with timing, contingency, and their interaction as predictors. We standardized the numeric outcome variables.

In addition, we explored whether the use of different ESM protocols was related to differential association patterns between our primary study variables (i.e., state affect and smartphone use) and external constructs (i.e., trait affect, depression, satisfaction with life, and psychological well-being). For these analyses, we used the absolute levels of state positive affect, state negative affect, smartphone-usage duration, and smartphone unlocks, each as the outcome variable. We fitted separate mixed-effects models per ESM protocol with trait affect, depression, satisfaction with life, and psychological well-being as predictors, resulting in 16 models in total, one for each combination of the four ESM-level primary study outcomes and the four ESM protocols.

Robustness checks

Finally, we conducted different robustness checks. First, we repeated the confirmatory and exploratory analyses separately for the student subsample (n = 112; surveys: n = 11,661) and the subsample recruited from the general public (n = 283; surveys: n = 29,575). For detailed results and the full sample results, see Appendix B in our OSM. We give brief summaries of the additional analyses in the results section.

Second, in our preregistration, we defined the number of sent ESM surveys as the baseline for calculating response probabilities (see section Measures). Alternatively, we could have defined the number of scheduled ESM surveys as the baseline. We therefore conducted a sensitivity analysis using this plausible alternative to calculate response probabilities. Because the results did not differ with respect to our preregistered hypothesis tests, we do not present them extensively in the article. However, we report the rationale, procedure, and results of the sensitivity analysis in detail in Appendix C of the OSM.
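The difference between the two baselines can be illustrated with a short Python sketch. The counts are hypothetical; they show why, for indirect protocols, surveys that were scheduled but never sent (because the phone was not used) lower the response probability only when scheduled surveys serve as the baseline.

```python
def response_probability(n_answered, n_baseline):
    """Share of surveys answered relative to a chosen baseline count."""
    if n_baseline <= 0:
        raise ValueError("baseline must be positive")
    return n_answered / n_baseline


# Hypothetical counts for one participant in an indirect protocol: not every
# scheduled survey is sent, because sending requires phone use after the scheduled time.
scheduled, sent, answered = 30, 26, 14

rp_sent = response_probability(answered, sent)            # preregistered baseline
rp_scheduled = response_probability(answered, scheduled)  # alternative baseline
print(round(rp_sent, 3), round(rp_scheduled, 3))  # 0.538 0.467
```

For direct protocols, every scheduled survey is sent, so the two baselines coincide.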

Results

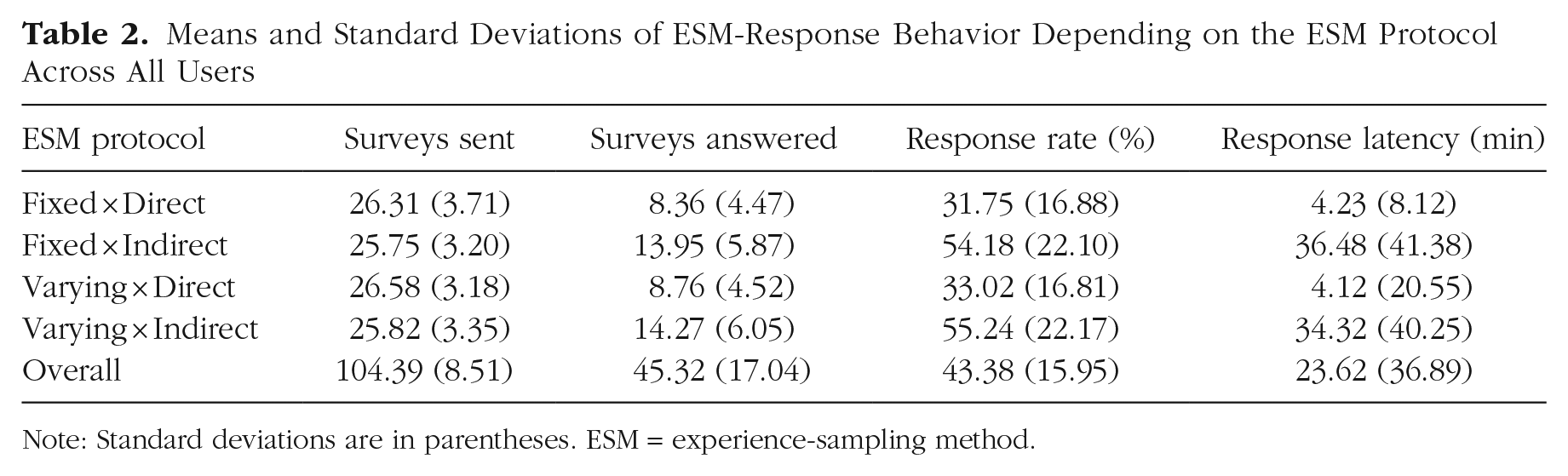

A total of 41,236 ESM surveys were sent in our study, with an average of 104.39 ESM surveys per participant over 4 weeks. On average, participants answered 45.32 ESM surveys across all ESM protocols. As a plausibility check, we found that the average number of ESM surveys sent was slightly lower in the indirect modes. This was expected because ESM surveys in the indirect mode were sent only if participants used their smartphones in the time window after the scheduled ESM survey. For detailed figures on ESM surveys sent and their distribution across the various ESM protocols, see Table 2. For a detailed presentation of all results, see Appendix B of our OSM.
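The plausibility check follows from how the two contingency modes decide whether and when a survey is sent. The Python sketch below encodes that logic under stated assumptions: the length of the post-schedule window in which an unlock can still trigger an indirect survey (`TRIGGER_WINDOW`) is a hypothetical value, not the study's setting, and unlock times are assumed to be available from screen logs.

```python
from datetime import datetime, timedelta

# Hypothetical parameter: how long after the scheduled time an unlock can still
# trigger an indirect survey. The study's actual window is not stated here.
TRIGGER_WINDOW = timedelta(minutes=60)


def send_time(scheduled, unlocks, contingency):
    """Return when a survey is sent, or None if it is never sent.

    direct:   sent at the scheduled time, regardless of phone use.
    indirect: sent at the first unlock at or after the scheduled time,
              provided that unlock falls within TRIGGER_WINDOW.
    """
    if contingency == "direct":
        return scheduled
    if contingency == "indirect":
        later = [u for u in sorted(unlocks) if scheduled <= u <= scheduled + TRIGGER_WINDOW]
        return later[0] if later else None
    raise ValueError(f"unknown contingency: {contingency}")


day = datetime(2024, 5, 6)
scheduled = day.replace(hour=12)
unlocks = [day.replace(hour=11, minute=50), day.replace(hour=12, minute=25)]
print(send_time(scheduled, unlocks, "direct"))    # 2024-05-06 12:00:00
print(send_time(scheduled, unlocks, "indirect"))  # 2024-05-06 12:25:00
print(send_time(scheduled, [], "indirect"))       # None (no phone use, nothing sent)
```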

Table 2. Means and Standard Deviations of ESM-Response Behavior Depending on the ESM Protocol Across All Users

Note: Standard deviations are in parentheses. ESM = experience-sampling method.

ESM data quantity (Research Question 1)

Table 2 shows that the number of ESM surveys answered was, on average, about 1.7 times higher for the indirect (Rows 2 and 4) than for the direct (Rows 1 and 3) ESM protocols. That is, participants had, on average, a significantly higher probability of responding to a sent ESM survey in the indirect protocols (fixed-indirect: 54.2%; varying-indirect: 55.2%) than in the direct protocols (fixed-direct: 31.8%; varying-direct: 33.0%; see Fig. 1a). Accordingly, and in line with Hypothesis 1, differences in the response probabilities between the two contingency variants (i.e., indirect vs. direct) were significant across both timing modes (fixed, Hypothesis 1a: ΔResponse probability [RP] = 24.6%, p < .001; varying, Hypothesis 1b:

Figure 1. Visualization of ESM data-quantity indicators depending on ESM protocols. (a) Participants’ response probabilities across ESM protocols. (b) Response rates across time intervals and ESM protocols. (c) Dropout counts across study weeks and ESM protocols. ESM = experience-sampling method.

We did not find the expected effect for the combination of timing and contingency (Hypothesis 2). That is, the increase in response probability from using fixed rather than varying ESM protocols was not significantly larger in the direct protocols than in the indirect protocols (Hypothesis 2:

In a first supplemental analysis, we explored the main effect of timing across contingency modes and found that participants’ response probability was significantly higher in the varying mode compared with the fixed mode (odds ratio [OR] = 1.06, p = .048). The heat map of response rates in Figure 1b highlights this finding by showing that overall, varying protocols had higher response rates than fixed protocols in both contingency modes (indirect: 55.2% vs. 54.2%; direct: 33.0% vs. 31.8%). However, a closer look at the response rates individually per daytime interval reveals that the difference between varying and fixed protocols was greatest in the early morning interval (indirect: 53.6% vs. 49.6%; direct: 21.3% vs. 15.2%). We therefore excluded all ESM surveys sent in the early morning interval and reran the analysis. The main effect for timing then disappeared (OR = 0.98, p = .664). These findings indicate that the observed main effect for timing may be attributed to a methodological artifact. In the fixed modes, the first survey of the day was by design scheduled for 7 a.m., whereas in the varying modes, surveys were scheduled at random times between 7 a.m. and 10 a.m. As a result, participants in the fixed mode may have been more likely to miss the ESM survey because they could still have been asleep or engaged in their morning routines at that earlier time.
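The descriptive part of this supplemental analysis (response rates per protocol and daytime interval, with and without the early morning interval) can be sketched in Python. The data are a toy example constructed to reproduce the pattern described above, a timing difference confined to the early morning interval; the interval labels and record layout are assumptions, and the inferential test in the study used a mixed-effects model rather than these raw rates.

```python
from collections import defaultdict


def response_rates(surveys, exclude_intervals=()):
    """Response rate per (protocol, interval) cell, i.e., the cells of a heat map.

    surveys: iterable of (protocol, interval, answered) tuples (hypothetical layout).
    exclude_intervals: interval labels to drop before aggregating.
    """
    counts = defaultdict(lambda: [0, 0])  # cell -> [answered, sent]
    for protocol, interval, answered in surveys:
        if interval in exclude_intervals:
            continue
        counts[(protocol, interval)][0] += int(answered)
        counts[(protocol, interval)][1] += 1
    return {cell: n_answered / n_sent for cell, (n_answered, n_sent) in counts.items()}


def overall_rate(surveys, protocol, exclude_intervals=()):
    """Response rate of one protocol across all retained intervals."""
    kept = [s for s in surveys if s[0] == protocol and s[1] not in exclude_intervals]
    return sum(answered for _, _, answered in kept) / len(kept)


# Toy data: the two timing modes differ only in the early morning interval.
toy = (
    [("fixed_direct", "early_morning", True)] * 1
    + [("fixed_direct", "early_morning", False)] * 4
    + [("varying_direct", "early_morning", True)] * 2
    + [("varying_direct", "early_morning", False)] * 3
    + [("fixed_direct", "afternoon", True)] * 2
    + [("fixed_direct", "afternoon", False)] * 2
    + [("varying_direct", "afternoon", True)] * 2
    + [("varying_direct", "afternoon", False)] * 2
)

print(overall_rate(toy, "fixed_direct"), overall_rate(toy, "varying_direct"))
drop = ("early_morning",)
print(overall_rate(toy, "fixed_direct", drop), overall_rate(toy, "varying_direct", drop))  # 0.5 0.5
```

With all intervals included, the varying mode looks better; once the early morning interval is dropped, the toy difference vanishes, mirroring the logic of the rerun analysis.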

In a second supplemental analysis, we aimed to gain a better understanding of participation behavior over the course of the study. As might be expected, participants’ response probabilities decreased over the course of the study. We tested this by including (a) the number of the study day and (b) the number of the ESM survey as covariates in the model. We found small but significant negative effects of both indicators of study progress on response probability (number of study days: OR = 0.984, p < .001; number of ESM surveys: OR = 0.997, p < .001).
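Because a per-unit odds ratio applies multiplicatively on the odds scale, even a small effect can accumulate over the study period. A minimal Python sketch, assuming a linear trend on the logit scale and study days numbered 1 to 28 (27 steps), shows the implied change from the first to the last day; the same calculation is applied separately to the survey-number effect, assuming 104 surveys (103 steps), close to the reported per-participant average.

```python
import math


def cumulative_odds_ratio(or_per_step, steps):
    """Odds ratio implied over `steps` units when the per-unit OR is constant."""
    return or_per_step ** steps


# Day 1 -> day 28 of a 4-week study: 27 steps of the per-day OR.
day_effect = cumulative_odds_ratio(0.984, 27)
# Survey 1 -> survey 104 (close to the per-participant average): 103 steps.
survey_effect = cumulative_odds_ratio(0.997, 103)

# Same quantity on the log-odds scale: exp(steps * log(OR)).
assert math.isclose(day_effect, math.exp(27 * math.log(0.984)))
print(round(day_effect, 2), round(survey_effect, 2))  # 0.65 0.73
```

Under these assumptions, the odds of responding on the last study day would be roughly two thirds of those on the first day.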

As a complementary approach, we also explored participants’ dropout during the study (see Fig. 1c). On a purely descriptive basis, across all ESM protocols, most participants (n = 42) dropped out during the first week of the study. Dropout counts fell in Week 2 (n = 16) and rose again slightly in Weeks 3 (n = 24) and 4 (n = 25). Across all study weeks, dropout counts were descriptively higher for each of the two direct protocols (n = 33 each) than for the indirect protocols (fixed-indirect: n = 24; varying-indirect: n = 17), with the varying-indirect protocol showing the fewest dropouts.
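The reported dropout counts can be cross-checked with simple arithmetic. The Python snippet below treats the direct-protocol count of 33 as applying to each of the two direct protocols (our reading of the text) and confirms that the weekly and per-protocol counts then sum to the same total.

```python
# Dropout counts as reported above. Reading "n = 33" as the count for each of
# the two direct protocols is an interpretation, not a separately reported figure.
by_week = {1: 42, 2: 16, 3: 24, 4: 25}
by_protocol = {
    "fixed_direct": 33,
    "varying_direct": 33,
    "fixed_indirect": 24,
    "varying_indirect": 17,
}

print(sum(by_week.values()), sum(by_protocol.values()))  # 107 107
```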

ESM data quality (Research Question 2)

The time it took participants to complete the ESM surveys hardly differed between the various ESM protocols (between 1.52 min and 1.58 min). Accordingly, we found no significant effect of timing (β = −0.03, p = .198), contingency (β = −0.04, p = .065), or their interaction (β = 0.05, p = .088) on participants’ response duration. This was also the case when the student and the general-public subsamples were analyzed separately.

We found 58 happy-sad and 83 stressed-relaxed semantic-antonym cases and 225 repetitive-answer-style cases in all completed ESM surveys and among all participants. For none of these indicators did we find significant effects for timing (OR range = 1.10–3.10, p > .05), contingency (OR range = 0.75–1.98, p > .05), or their interaction (OR range = 0.47–1.25, p > .05). This was also the case when the student and the general-public subsamples were analyzed separately.
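For readers unfamiliar with these indicators, the following Python sketch shows one way such flags can be defined. The rules and the cut-off (`HIGH` on an assumed 1 to 5 scale) are hypothetical illustrations; the study's exact definitions are given in its Measures section and may differ.

```python
HIGH = 4  # hypothetical cut-off on an assumed 1-5 rating scale


def semantic_antonym_flag(rating_a, rating_b, high=HIGH):
    """Flag a contradictory answer: both poles of an antonym pair (e.g., happy and sad) rated high."""
    return rating_a >= high and rating_b >= high


def repetitive_flag(ratings):
    """Flag a survey in which all items received the identical rating (straightlining)."""
    return len(ratings) > 1 and len(set(ratings)) == 1


print(semantic_antonym_flag(5, 4))    # True
print(semantic_antonym_flag(5, 1))    # False
print(repetitive_flag([3, 3, 3, 3]))  # True
print(repetitive_flag([3, 4, 3, 2]))  # False
```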

Finally, Table 2 shows that the time between the scheduled time of an ESM survey and the participant’s response was, on average, about 8.5 times longer for the indirect ESM protocols (Rows 2 and 4) than for the direct ESM protocols (Rows 1 and 3). Accordingly, response latency was significantly higher for the indirect than for the direct ESM protocols (β = 0.86, p < .001). The significant interaction effect (β = −0.06, p = .040) shows that the magnitude of the contingency effect varied by timing mode (i.e., the difference in response latency between direct and indirect protocols was larger in the fixed mode than in the varying mode; see Table 2). We replicated the contingency effect in both subsamples. However, the interaction effect of timing and contingency on response latency was replicated only in the student subsample, not in the subsample recruited from the general public.
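Response latency, as defined above, is the time between a survey's scheduled time and the participant's response. A minimal Python sketch with hypothetical timestamps shows why indirect protocols inflate this measure: the survey can only be answered after it has been triggered by the next unlock.

```python
from datetime import datetime


def response_latency_minutes(scheduled, responded):
    """Minutes between a survey's scheduled time and the participant's response."""
    return (responded - scheduled).total_seconds() / 60


scheduled = datetime(2024, 5, 6, 12, 0)
# Direct (hypothetical): notification at 12:00, answered at 12:03.
direct = response_latency_minutes(scheduled, datetime(2024, 5, 6, 12, 3))
# Indirect (hypothetical): survey triggered by the next unlock at 12:25, answered at 12:28.
indirect = response_latency_minutes(scheduled, datetime(2024, 5, 6, 12, 28))
print(direct, indirect)  # 3.0 28.0
```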

Bias in resulting study findings (Research Question 3)

Primary study outcomes

We found no significant effects of timing, contingency, or their interaction in predicting absolute levels of state positive and negative affect (βs between −0.02 and 0.03, p > .05). We found the same when predicting individuals’ deviation in state positive and negative affect from their person average (all βs between −0.03 and 0.04, p > .05).

This was also the case when the student and the general-public subsamples were analyzed separately, except that in the student subsample, there were positive main effects of timing (varying > fixed) and contingency (indirect > direct) on participants’ self-reported absolute levels of positive affect and on its deviation from their person average. In addition, there was a negative interaction effect of timing and contingency for both outcomes, meaning that the effect of timing differed by contingency mode.

In contrast to the self-reported states, smartphone-usage behavior showed a significant main effect of timing but not of contingency. That is, when they were in the varying rather than the fixed timing conditions, participants used their smartphones longer (by 1.1 min; β = 0.07, p < .001) and more frequently (by 0.40 unlocks; β = 0.09, p < .001) than was usual for them personally at the relevant time of day (see also Figs. 2a and 2b). However, the main effect of timing disappeared after excluding the surveys from the early morning intervals (usage duration: β = 0.005, p = .747; usage frequency: β = 0.024, p = .141). This may point to the same measurement artifact as observed for response probability (see Research Question 1).

Figure 2. Participants’ smartphone-usage-behavior deviation depending on ESM protocols. (a) Deviation of individual usage duration from personal time-of-day average. (b) Deviation of individual number of unlocks from personal time-of-day average. Dashed line indicates the overall median of all deviation values across all participants. ESM = experience-sampling method.

Regarding the deviation in usage duration, we replicated the timing effect in the general-public subsample but not in the student subsample. In addition, we found interaction effects of timing and contingency in both subsamples, with opposite directions in the two subsamples, but not in the full sample. For the deviation in the number of unlocks, we replicated the timing effect in both subsamples and additionally found a contingency effect (direct > indirect) in the student subsample.

Associations of primary study outcomes with external constructs

Figure 3 displays the point estimates and 95% confidence intervals for the fixed effects of trait positive and negative affect, depression, satisfaction with life, and psychological well-being on our four selected primary study outcomes as a function of the ESM protocols used (shown in different colors). In summary, we found divergent association patterns between ESM protocols for six out of 20 associations (see gray boxes in Fig. 3). In other words, at a significance level of α = .05, researchers would have reached different conclusions about the significance of six associations depending on the applied ESM protocol. All six cases involved associations with the smartphone-sensing outcomes. When using a Bonferroni-corrected significance level of α = .05/20 = .0025 to address multiple testing, however, we did not find diverging effects for any of the 20 associations.
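The divergence criterion and the Bonferroni correction can be expressed in a few lines of Python. The p-values below are hypothetical and serve only to show how a single association can look protocol-dependent at α = .05 but not at the corrected level.

```python
import math

ALPHA = 0.05
N_ASSOCIATIONS = 20  # 4 outcomes x 5 external constructs
BONFERRONI_ALPHA = ALPHA / N_ASSOCIATIONS


def diverges(p_by_protocol, alpha):
    """True if an association is significant under some protocols but not under others."""
    decisions = {p < alpha for p in p_by_protocol.values()}
    return len(decisions) > 1


# Hypothetical p-values for one outcome-construct pair across the four protocols.
p_values = {"fixed_direct": 0.03, "fixed_indirect": 0.20,
            "varying_direct": 0.01, "varying_indirect": 0.08}
print(math.isclose(BONFERRONI_ALPHA, 0.0025))  # True
print(diverges(p_values, ALPHA))               # True
print(diverges(p_values, BONFERRONI_ALPHA))    # False
```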

Figure 3. Comparison of association patterns between primary study outcomes and external constructs depending on ESM protocols. Gray boxes indicate differences in significance for the covariate-target combination across ESM protocols at the significance level α = .05. Factor scores were computed for covariates. All covariates were assessed during the prequestionnaire or postquestionnaire and were grand-mean centered. No transformation was applied to the four outcome variables. Points and horizontal bars represent the beta coefficient estimates and their corresponding 95% confidence intervals, respectively. ESM = experience-sampling method.

Discussion

Using a within-subjects design in which ESM surveys with different timing and contingency were sent over 4 weeks, we examined whether the choice of ESM protocol has side effects on study parameters.

As expected regarding data quantity, response probabilities were significantly higher in indirect protocols (triggered by smartphone unlocking) than in direct protocols (proactive notifications), regardless of timing (Hypothesis 1). The hypothesized combined effect of contingency and timing on response probability (Hypothesis 2) was not found. In addition, though only on a purely descriptive basis, fewer participants dropped out in the indirect protocols. The response-probability results were robust to using scheduled instead of sent ESM surveys as the baseline, as explored in an additional sensitivity analysis (see Appendix C in the OSM).

Regarding data quality, we found that participants had longer response latencies in indirect protocols. No other data-quality indicators were affected by contingency or timing.

Regarding biases in study results, we found that participants used their smartphones more frequently and for longer in the varying protocols compared with the fixed protocols, but neither timing nor contingency affected self-reported primary study outcomes. Although the association patterns between the ESM-based primary study outcomes and a diverse set of well-being measures as external criteria demonstrated convergence between the different ESM protocols, this was not the case for the associations with smartphone-based primary study outcomes. These discrepancies disappeared after correcting for multiple testing.

Finally, the findings suggest that side effects of timing and contingency may depend on sample composition, as we discuss in more detail below. In conclusion, choosing an ESM protocol should involve carefully considering its potential side effects on a diverse set of study parameters. The next sections therefore highlight key findings to guide ESM-protocol design in future studies.

Contingency side effects on efficiency and ecological validity of ESM data collection

Our results show that ESM data were collected more efficiently when surveys were triggered upon smartphone unlock (indirect mode) than when surveys were proactively sent (direct mode), as evidenced by higher response probabilities in the indirect mode. The design of the indirect protocol makes it unlikely that participants accidentally fail to complete surveys (van Berkel, Goncalves, Lovén, et al., 2019). If they do not respond, this is presumably a deliberate choice (Reiter & Schoedel, 2024). In addition, the greater efficiency of the indirect mode is also reflected in the lower number of participants who withdrew from the ESM study. Note, however, that we explored dropout counts only on a descriptive level, so conclusions should be drawn cautiously, and future research is needed to substantiate them.

The higher response probabilities and (descriptively) lower dropout rates may indicate that ESM notifications are less intrusive in the indirect mode (Spathis et al., 2019). Because these notifications are triggered by self-initiated smartphone use, participants may find them less disruptive and integrate them more easily into their routines (Lathia et al., 2013). This interpretation is further supported by the fact that indirect protocols did not increase overall smartphone use, suggesting that the surveys may have felt like a by-product of other tasks (van Berkel et al., 2017). Thus, indirect ESM aligns with the call for more interruption-sensitive ESM studies (Mehrotra et al., 2015).

The findings also show that the efficiency gains of indirect ESM protocols did not impair the other data-quality indicators or bias the primary study outcomes. The only drawback identified in this study is the significantly longer response latency (around 30 min more than with direct protocols), which arises because notifications are sent only when participants next use their smartphones and which may increase recall bias (Eisele et al., 2021). Although ESM is generally valued for its high ecological validity because self-reports are collected in natural environments (Fuller-Tyszkiewicz et al., 2017), not all ESM protocols meet this standard equally (Ram et al., 2017). Indirect protocols, which send ESM surveys only when the smartphone is actively used, can compromise the principle of random sampling. Especially for ESM studies on rapidly changing psychological states, however, random sampling is a key design element. Consequently, indirect protocols may yield biased data limited to certain experiences and contexts (van Berkel & Kostakos, 2021), thus threatening ecological validity.

In addition, from an applied perspective, designing an ESM study with indirect protocols requires greater implementation effort because ESM has to be combined with passive sensing. This may be the main reason why the large majority of studies in the current ESM-research landscape rely on direct contingency protocols.

To summarize, indirect ESM protocols lead to higher response probabilities but come at the cost of ecological validity and greater implementation effort. Researchers should weigh this trade-off against their specific research question when choosing an ESM protocol.

Timing side effects and time-of-day effects

We found significant effects of timing on participants’ response probabilities and smartphone-usage outcomes. That is, in the varying mode compared with the fixed mode, participants responded with higher probabilities and used their smartphones more frequently (+0.4 unlocks per hour) and for longer (+1.1 min per hour) than their personal average.

This timing effect may arise because participants in the varying mode find it more difficult than participants in the fixed mode to anticipate when ESM surveys will arrive (Myin-Germeys et al., 2018). As a result, they may check their phones more frequently, which simultaneously increases the likelihood of responding to ESM surveys when they arrive. This finding suggests that researchers who combine ESM with passive data-collection methods, such as smartphone sensing, should be aware of potential interactions between the data-collection modes. Although specific timing protocols may be necessary for certain research topics (Wrzus & Mehl, 2015), they can lead to reactive effects (Eisele et al., 2023), such as participants checking their phones more frequently to avoid missing surveys. This reactive behavior, in turn, can alter natural smartphone use, potentially increasing response probability while biasing results.

Alternatively, the timing effects could simply be methodological artifacts caused by the time of day at which the ESM surveys were sent. Consistent with this explanation, they disappeared when the ESM surveys sent in the early morning interval were excluded from the analysis. As an illustration, in the fixed mode, smartphone usage was aggregated from 6:30 a.m. to 7:30 a.m. because the first ESM survey was scheduled at 7 a.m. each morning. In the varying mode, in contrast, the vast majority of morning ESM surveys were scheduled later (at random times between 7 a.m. and 10 a.m.), shifting the aggregation window of smartphone use. Although we controlled for time-of-day-specific variations in smartphone usage, the intervals used for doing so were quite large. Because participants used their smartphones more the later it was within the morning interval, the timing effect might therefore be overestimated. This could, in turn, also explain the longer usage durations and higher numbers of unlocks in the varying mode.
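The artifact argument can be made concrete with a toy calculation. In the Python sketch below, a hypothetical participant's screen time rises over the morning, the time-of-day baseline is the average over the whole 7 a.m. to 10 a.m. interval, and usage is read from the clock hour containing the survey (a simplification of the aggregation window described above). Fixed-mode surveys always fall at 7 a.m., whereas varying-mode surveys are assumed to be spread evenly over the morning hours. The participant's behavior is identical in both modes, yet the varying mode shows a higher average deviation.

```python
from statistics import mean

# Hypothetical screen time (minutes) in each morning hour for one participant;
# keys are the hours starting at 7, 8, and 9 a.m.
MORNING_USAGE = {7: 2.0, 8: 6.0, 9: 9.0}

# Coarse time-of-day baseline: the average over the whole 7-10 a.m. interval.
BASELINE = mean(MORNING_USAGE.values())


def deviation(survey_hour):
    """Usage in the hour containing the survey minus the coarse morning baseline."""
    return MORNING_USAGE[survey_hour] - BASELINE


fixed_dev = deviation(7)  # fixed mode: first survey always at 7 a.m.
varying_dev = mean(deviation(h) for h in MORNING_USAGE)  # varying mode: spread over the morning

print(round(fixed_dev, 2), round(varying_dev, 2))
```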

Ultimately, we cannot assert with complete certainty that the timing effects were solely a methodological artifact because excluding the morning ESM surveys also reduced the number of observations and thereby the statistical power of our analyses. Nonetheless, this finding is a valuable reminder that ESM studies need to be designed with care: Depending on the characteristics of the ESM approach, interactions with time-of-day effects can arise, as illustrated in our case. This further highlights the need for rigorous testing before implementing complex ESM studies (Ebner-Priemer & Santangelo, 2024).

Side effects of ESM protocols and their dependence on sample characteristics

We also conducted the analyses separately for the student sample and the general-public sample. Given the modest subsample sizes (student subsample: n = 112; general-public subsample: n = 283) and the exploratory nature of the analysis, we do not aim to interpret all results in detail. However, our comparison suggests that the side effects of ESM-protocol design may partially depend on sample characteristics (Eisele et al., 2021; Hasselhorn et al., 2022). For example, in the student sample, even though it was the smaller subsample, timing, contingency, and their interaction significantly influenced participants’ reported positive-affect levels and their deviations from their personal average. This might be due to factors such as students being younger, more motivated by compensation, more experienced with studies, or having less structured daily routines. Our findings offer only preliminary evidence that the side effects of ESM protocols may vary by sample, and future studies should investigate these sample characteristics systematically and in greater depth (Wrzus & Neubauer, 2023).

Limitations and outlook

Our study has some limitations that may serve as starting points for future research. First, our study focused on timing and contingency as two important design characteristics of ESM protocols. At least for contingency, we only scratched the surface, distinguishing only between direct and indirect protocols with smartphone use as the trigger. Yet as the combination of ESM and smartphone sensing grows, triggers may become more complex. For example, initial studies have used specific activities, such as sitting (based on accelerometer data; Giurgiu et al., 2020) or listening to music (based on music logs; Sust & Schoedel, 2024), to trigger ESM surveys. This interplay of sensing methods and active assessment has already been identified as having great potential for psychological research (Ebner-Priemer & Santangelo, 2024). Thus, our study is only a first step in investigating the side effects of ESM protocols on ESM-study parameters.

Second, we examined only a small subset of possible ESM-study outcomes. For the primary study outcomes, we limited our focus to affective states and basic indicators of smartphone use. The side effects of ESM protocols may differ for other momentary behaviors, such as social interactions, or cognitive states, such as rumination or fatigue, that are captured by ESM surveys (Eisele et al., 2023). They might also differ for other indicators derived from smartphone sensing, such as app usage or physical activity. Again, our study is only a first step, and future studies should investigate generalizability to a larger space of ESM-survey parameters.

Third, we compared smartphone-usage behavior in the periods around the respective ESM surveys with person- and time-of-day-specific behavior without consideration of ESM surveys. Accordingly, our person-average smartphone measures do not represent a counterfactual assessment of participants’ usual smartphone-usage behavior. Participants knew that they were taking part in an ESM study in which they were asked to complete as many surveys as possible, which may have introduced bias through measurement reactivity. To address this, future research should include a baseline week in which only smartphone use is assessed and no ESM surveys are sent.

Finally, the present study had a rather low overall response rate (i.e., 43.4%) compared with other (psychological) ESM studies, which often have response rates between 70% and 80% (Wen et al., 2017; Wrzus & Neubauer, 2023). Even though we rigorously tested the research app with multiple pilot participants using different Android versions, technical errors cannot be entirely ruled out. Still, for several reasons, we believe that technical issues were not the sole or primary cause of the low compliance rates. We used the same app in previous studies with higher response rates (e.g., Reiter & Schoedel, 2024). Moreover, we applied very conservative inclusion criteria from a technical perspective: As preregistered and as a plausibility check, we included only participants for whom we could ensure that at least 70 ESM surveys were sent out and that ESM surveys were generally triggered correctly.

Rather, we think that the low overall response rate is related to some of the decisions we made regarding the design of the ESM study. First, it may be due to the high participant burden of the present study. In contrast to other ESM studies, the study included smartphone sensing and lasted 4 weeks, more than twice as long as the average psychological ESM study (i.e., 12.4 days, as reported in Wrzus & Neubauer, 2023). In line with this, our additional analysis showed that response probabilities decreased over the course of the study.

Second, the low overall response rate may also be related to our decision to start the ESM intervals as early as 7 a.m. On a descriptive level, the morning intervals from 7 a.m. to 10 a.m. showed the lowest response rates in each ESM protocol. Particularly for the direct protocols, we observed exceptionally low response rates in the morning intervals (i.e., 21.3% and 15.2% for the varying and fixed modes, respectively) compared with the later time-of-day intervals. This was further exacerbated in the fixed-direct ESM protocol because, in all morning intervals, ESM surveys were scheduled for 7 a.m. and, because of the study procedures, were available for only 15 min after they had been sent. This early timing of ESM surveys might not have fit well with the daily routines of many of our participants, especially because more than a quarter of our sample (n = 112 of 395) were students who might still have been asleep at that time.
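The scheduling constraint behind the low fixed-direct morning response rates can be sketched in Python. The 7 a.m. fixed slot, the 7 a.m. to 10 a.m. range for varying slots, and the 15-min availability come from the text; the uniform minute-level draw, the function names, and the hypothetical 7:30 a.m. first phone check are assumptions for illustration.

```python
import random
from datetime import datetime, timedelta

AVAILABLE_FOR = timedelta(minutes=15)  # availability after sending, as described above


def morning_survey_time(day, timing, rng):
    """Scheduled time of the first survey of a day.

    fixed:   always 7:00 a.m.
    varying: a random minute from 7:00 to 9:59 a.m. (uniform draw is an assumption).
    """
    start = day.replace(hour=7, minute=0, second=0, microsecond=0)
    if timing == "fixed":
        return start
    if timing == "varying":
        return start + timedelta(minutes=rng.randrange(180))
    raise ValueError(f"unknown timing: {timing}")


def is_available(sent, now):
    """A directly sent survey can be answered only within AVAILABLE_FOR of sending."""
    return sent <= now <= sent + AVAILABLE_FOR


rng = random.Random(42)
day = datetime(2024, 5, 6)
fixed = morning_survey_time(day, "fixed", rng)
varying = morning_survey_time(day, "varying", rng)
first_look = day.replace(hour=7, minute=30)  # hypothetical first phone check of the day
print(is_available(fixed, first_look))  # False: the 7:00 survey expired at 7:15
```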

Third, from a compliance-related perspective, the inclusion criteria for the present study were rather liberal. Participants had to answer only 10 ESM surveys to be included. Consequently, our sample also included low-compliance participants, which might have further contributed to overall low and rather pessimistic response rates. In addition, the study’s compensation strategy may also not have been conducive to participants’ compliance. They were required to answer 50% of the ESM surveys to receive full compensation.

Conclusion

In the present study, we systematically investigated potential side effects of different ESM protocols at scale, taking into account a variety of study-outcome parameters. Our findings highlight the importance of carefully balancing data quantity, data quality, and potential bias in primary and secondary study outcomes when selecting an ESM protocol. Overall, the study demonstrates that although indirect ESM protocols can boost compliance and data-collection efficiency, they do so at the potential cost of longer response latencies and reduced ecological validity. In addition, we have shown that new challenges for ESM-protocol selection arise when ESM data are combined with passive sensing data and that ESM protocols may interact with time-of-day effects. Finally, the side effects of ESM protocols on study parameters differed slightly between samples with different characteristics. Accordingly, we recommend that researchers carefully weigh these trade-offs against their research goals and study populations of interest before adopting a specific ESM design.

Footnotes

Acknowledgements

Approval was obtained from the ethics committee of LMU Munich. The procedures used in this study adhere to the tenets of the Declaration of Helsinki. Informed consent was obtained from all individual participants included in the study. When writing the manuscript, we used ChatGPT (https://chat.openai.com) and DeepL to ensure grammatical accuracy and to reword passages to improve the comprehensibility of the text. After using these tools, we reviewed and edited the content where necessary and take full responsibility for the content of the publication.


Transparency

Action Editor: Pamela Davis-Kean

Editor: David A. Sbarra

Author Contributions