Abstract

In what aspects do replication studies differ from their primary studies? This question is central to understanding why psychological effects fail to replicate. So far, research on potential explanations for the nonreplicability of effects has mainly focused on publication bias and on methodological challenges related to measurement error or statistical inference. The recently developed causal-replication framework directs attention toward controlling for differences in study characteristics, including variations in treatment conditions, outcome measures, recruitment, causal estimates, time, location, population, and setting. To contribute to this aim, we conducted a systematic literature review of the design practices of current replication studies. We preregistered the assessment of study characteristics in a detailed review protocol and investigated the available information and the intended or unintended variations between primary and replication studies. To do this, we compiled a database of studies that aimed to replicate a causal effect of a clearly stated primary study and that were published in impactful social- and cognitive-psychological journals between January 2017 and August 2022. Our results show that, relative to the primary study, authors of replication studies predominantly focus on controlling specific study characteristics (i.e., methods, procedures, analysis) while often neglecting others, such as population or setting. Furthermore, in most replication studies, multiple study characteristics are varied relative to the primary study or are insufficiently reported. Accordingly, we discuss prevalent variations, reporting standards, and strategies for planning future replication studies.


In the last decade, the scientific community has recognized the importance of replication research for better understanding the reasons for effect heterogeneity (e.g., Nosek et al., 2022). To this end, it must be stated explicitly in which ways a replication resembles its primary study and in which ways it differs. Only when all sources of effect heterogeneity are considered can clear conclusions about replicability be drawn and the conditions under which study results replicate be described. Suggestions for a systematic design of replication studies have traditionally focused on repeating the procedures of a primary study as closely as possible (Schmidt, 2009). However, prominent large-scale replication projects, such as the Open Science Collaboration (OSC; 2015) and the Many Labs Replication Project (Klein et al., 2014), have revealed significant heterogeneity in various psychological effects despite careful control of the materials and implementation procedures between a primary study and its replication.

These results sparked further discussions about the factors contributing to low replicability in psychological research. In addition to methodological challenges concerning measurement error and statistical inference (e.g., Fiedler & Prager, 2018; Lewandowsky & Oberauer, 2020; Loken & Gelman, 2017), unintended differences between the characteristics of primary and replication studies offer possible explanations (e.g., Nosek & Errington, 2017; Van Bavel et al., 2016). Even with the greatest effort, an existing study cannot be replicated exactly because, for example, the time, the population considered, the sampling strategy, or the laboratory in which the study is conducted can differ. All these differences can cause effect heterogeneity, as is well known from causal-inference theory, in which they are treated as factors that threaten the external validity of effect estimates (Cronbach & Shapiro, 1982; Shadish et al., 2002).

Recently, the causal-replication framework (CRF; Steiner et al., 2019; Wong & Steiner, 2018) provided definitions and assumptions that formally specify all potentially relevant study characteristics for replicability across studies. We apply the CRF to examine which study characteristics are reported and which study characteristics are typically varied or held constant in studies that focus on the replication of causal effects. For this, we compiled a database of recently published replication studies and their primary studies in two psychological fields (i.e., cognitive and social psychology) that play pivotal roles in discussions surrounding the replication crisis (e.g., OSC, 2015). Using all available information sources (i.e., the replication and primary studies and supplemental materials), we assessed the availability of information on the different study characteristics and their intended or unintended variation according to the preregistered criteria.

Our results describe the current reporting standards for study characteristics and the prevalent differences between primary and replication studies. In addition, we provide comparisons between the two psychological disciplines and across other structural indicators of the replication studies (including author overlap between the replication and primary study, preregistration of the replication, type of replication, and time gap to the primary study). Finally, we discuss different practices and systematic design approaches for current and future replication studies.

The CRF

The CRF (Steiner et al., 2019; Wong & Steiner, 2018) provides a general theoretical basis, which we use to specify relevant study characteristics. Grounded in causal theory (i.e., the potential-outcomes framework; Rubin, 1974, 2005), the CRF comprises five assumptions (two replication assumptions and three individual-study assumptions) for the replication of a well-defined causal effect, in the sense of a treatment-control contrast, across two or more studies (for descriptions of all assumptions and examples of causes of effect heterogeneity, see Table 1). Effect heterogeneity can occur when one or more of the CRF assumptions are violated.

Assumptions of the Causal-Replication Framework (Steiner et al., 2019)

The individual study assumptions define requirements for causal inference in a single study, including the correct identification, estimation, and reporting of the effect of interest. These assumptions can be addressed by using designs and analyses for causal-effect estimation in (quasi)experiments (see e.g., Shadish et al., 2002). Explanations for effect heterogeneity relating to these assumptions refer to researchers’ degrees of freedom in the treatment assignment and analysis methods in each study. For instance, variation of treatment conditions within or between participants, the selection of control variables and the specification of modeling assumptions, or methodological issues, such as measurement error, can affect the individual study results and their replicability (see e.g., Stanley & Spence, 2014). Open-science principles and preregistration aim to improve the transparency and reproducibility of the experimental design, data, and analysis in a study (National Academies of Sciences, Engineering, and Medicine, 2018).

Although causal inference in each study, as specified by the individual study assumptions, is a prerequisite for comparing effects between studies, additional variations can occur between studies that make the effects differ. The CRF therefore defines replication assumptions that describe the necessary conditions for reproducing the same effect across studies. The first replication assumption addresses the stability of both the treatment and the outcome across studies. This corresponds to a traditional understanding of replication (e.g., Schmidt, 2009): closely following the procedures of an original study and using identical materials. Large-scale replication projects (e.g., Klein et al., 2014; OSC, 2015) often address this assumption by collaborating with the original authors to ensure high fidelity to the materials and procedures of the original studies. The second assumption pertains to the equivalence of the causal estimand (i.e., the effect of interest for the specific treatment-control contrast, given the population and setting under investigation). In particular, intervention research highlights that the characteristics of the recruited participants and the setting and timing of a study can result in different effect estimands (e.g., see the description of the external validity of effects in Shadish et al., 2002). These study characteristics have also received increasing attention in current standards for conducting replication research (see e.g., Brandt et al., 2014; LeBel et al., 2018). For instance, in their replication recipe, Brandt et al. (2014) proposed specifying differences in the setting, remuneration, and participant populations in addition to the procedures and materials of a study.

Reporting and Variation of Study Characteristics

The CRF offers guidance on factors that potentially cause effect heterogeneity. To better understand the conditions under which effects replicate, all of these aspects should be reported, so that it can be described in which ways a replication study resembles the primary study and in which ways it differs. Variations of study characteristics are not inherently a problem and can be intended, depending on the aim of a replication study. Direct replications require study characteristics equivalent to those of the respective primary study, so that the same effect can be recovered with new data and evidence for its existence can accumulate (e.g., LeBel et al., 2017; Schmidt, 2009). In contrast, conceptual replications vary study characteristics to inform about the generalizability and boundary conditions of an effect (Borsboom et al., 2021; LeBel et al., 2017). Examples of generalizability questions are whether a theory holds for all people or only for people in Western countries, or whether an intervention works only in in-person settings or also in online settings.

Contemporary debates about the replicability of psychological findings highlight that the generalization of study results is often limited by restricted samples and by the specific conditions under which effects were found (Bauer, 2023; Yarkoni, 2022). Although this underscores the relevance of the replication assumptions for describing sources of effect heterogeneity, the CRF further highlights the benefit of systematically varying such study characteristics to explain effect heterogeneity when two studies are compared (Steiner et al., 2019; Wong et al., 2022). Specifically, when only a single replication assumption is relaxed while all other assumptions are met, strong causal inference about the impact of the varied study characteristic on effect heterogeneity is possible. For example, if two studies on reducing prejudice were conducted at the same time, with the same procedures, using the same outcome measures, recruiting participants with the same characteristics, and focusing on the same causal quantity, but with different instructions for the intervention group, then differences in effect estimates between the two studies could be attributed to the altered instructions. In practice, varying one study characteristic (i.e., relaxing one assumption) is easy, but controlling the impact of the other study characteristics, that is, satisfying all other assumptions by ensuring that no unintended variations occur, is challenging. For example, when evaluating the impact of the setting (laboratory vs. online) in a study on facial recognition, usually not only the setting varies but also the recruited participants and the electronic equipment (e.g., the characteristics of the monitors used).
Thus, if differences in effect estimates between a primary study and a replication study are found, one cannot tell whether they are due to the setting, the participants, the electronic equipment, or a combination of these. Each variation can amplify or reduce effect differences between studies, and the variations may also interact. Consequently, when two studies (i.e., a replication study and its primary study) are compared, a joint variation of multiple study characteristics provides only limited insight into the reasons for effect heterogeneity. Most recently, Wong et al. (2022) used the CRF to design conceptual replication studies that control unintended variations between studies by design, in order to evaluate the effect heterogeneity of a teacher training in educational research. We now apply the CRF to other disciplines to study current replication practices.
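The attribution logic described in the two paragraphs above can be made concrete in a short sketch. It assumes, purely for illustration, that each of the eight study characteristics coded in this review has already been rated as equivalent, varied, or lacking sufficient information, and it ignores the individual-study assumptions. The function name `attributable_source` and the rating labels are hypothetical and not part of the CRF itself.

```python
# Hypothetical sketch of CRF-style reasoning: an effect difference can be
# attributed to a study characteristic only if it is the single varied one.
CHARACTERISTICS = (
    "treatment conditions", "outcome", "recruitment", "causal quantity",
    "timing", "location", "population", "setting",
)


def attributable_source(ratings):
    """Return the single varied characteristic, or None if attribution fails.

    `ratings` maps every characteristic to "equivalent", "varied", or
    "no sufficient information". Attribution fails when no characteristic or
    more than one characteristic varied (the sources are confounded) or when
    any characteristic cannot be judged for lack of information.
    """
    missing = [c for c in CHARACTERISTICS if c not in ratings]
    if missing:
        raise ValueError(f"missing ratings for: {missing}")
    if any(ratings[c] == "no sufficient information" for c in CHARACTERISTICS):
        return None
    varied = [c for c in CHARACTERISTICS if ratings[c] == "varied"]
    return varied[0] if len(varied) == 1 else None


# Prejudice example: only the intervention instructions (treatment) differ.
prejudice = {c: "equivalent" for c in CHARACTERISTICS}
prejudice["treatment conditions"] = "varied"
print(attributable_source(prejudice))  # prints: treatment conditions

# Facial-recognition example: setting and recruited participants vary jointly.
faces = {c: "equivalent" for c in CHARACTERISTICS}
faces["setting"] = faces["population"] = "varied"
print(attributable_source(faces))  # prints: None
```

Returning None instead of guessing mirrors the point that a joint variation of several characteristics offers only limited insight into the reasons for effect heterogeneity.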

Besides the discipline and the type of replication (i.e., direct or conceptual), other structural indicators may be relevant for the reporting and variation of study characteristics in replication research. For instance, preregistration was introduced as a central tool for improving the reporting and design of empirical studies, including replications (e.g., Hardwicke & Wagenmakers, 2023; Nosek et al., 2018). Furthermore, author overlap between primary and replication studies is associated with more successful replications (e.g., Lemons et al., 2016; Makel et al., 2012; Makel & Plucker, 2014), possibly because study characteristics can be more readily kept constant. However, alternative explanations are also possible; for example, the time gap between the replication and primary study may affect how much information on the primary study is available. A more comprehensive examination of study characteristics is warranted as a first step toward understanding the prevalent variations in replication research and their possible associations with disciplinary or other structural indicators.

Research Questions

We aimed to investigate practices for designing replication studies in a systematic literature review. Several literature reviews on replication studies have been conducted that focused on publication and success rates of replication studies but did not assess differences in study characteristics between primary and replication studies (e.g., Cook et al., 2016; Lemons et al., 2016; Makel et al., 2012; Perry et al., 2022). Our review extends the current knowledge on replication research by providing first insights into (a) how well different study characteristics are reported and (b) which study characteristics are typically varied or held constant.

We focus on replication studies that aim to replicate a causal effect reported in a specific primary study and that have been published in recent years—after the first large-scale replication studies were conducted (Klein et al., 2014; OSC, 2015), after suggestions for systematic replications were proposed (Brandt et al., 2014; Schmidt, 2009), and after unintended differences in direct replications were highlighted (Nosek & Errington, 2017; Van Bavel et al., 2016). We include two psychological disciplines (i.e., cognitive and social psychology) that received much attention following “failed” replication research (e.g., Brandt et al., 2014; OSC, 2015; Schmidt, 2009). We deemed it possible that the reporting and variation of study characteristics may differ between the disciplines. In addition, we provide comparisons for author overlap between studies, preregistration of the replication, type of the replication, and time gap to the primary study.

Disclosures

Preregistration, data, and materials

The methods of this literature review were preregistered on the OSF (https://osf.io/yxgc8). We followed this preregistration and report deviations when necessary. Furthermore, the data, analysis script to reproduce the results, and additional material are available at https://osf.io/yv5ns/.

Method

Transparency and reporting

The present research conforms to the PRISMA guidelines for reporting systematic reviews (Page et al., 2021). In line with the descriptive focus of the review, we report on information sources, eligibility criteria, search strategy, selection process, data items, and data-collection process. The data management, selection process, and data-collection process were managed using CADIMA (Version 2.2.3; Kohl et al., 2018), a free web tool for conducting literature reviews. We performed data preparation, analyses, and visualization in R (Version 4.3.0; R Core Team, 2023) using the packages ggplot2 (Version 3.4.2; Wickham, 2016) and readxl (Version 1.4.2; Wickham & Bryan, 2023).

Database

Information sources

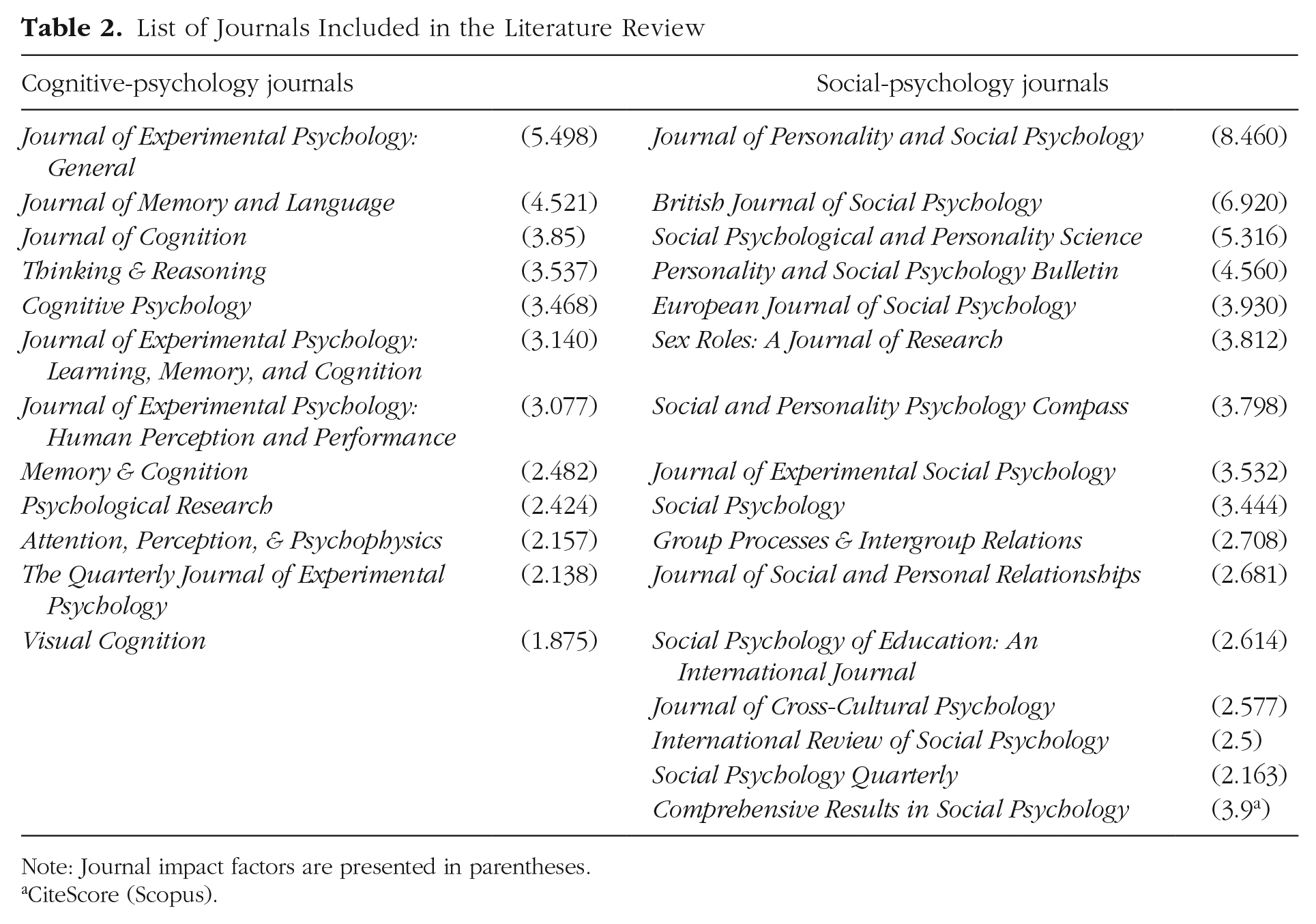

As sources for the replication studies, we preregistered a list of impactful, peer-reviewed journals for cognitive- and social-psychological research from the Scimago Journal & Country Ranking. Ultimately, we selected 12 journals to represent cognitive-psychological research and 16 journals to represent social-psychological research. For a list of the selected journals, see Table 2. We based the selection on an examination of the descriptions and author guidelines of potential journals to assess whether they were representative of the respective fields and ensured that they did not explicitly exclude replication studies from publication. A more detailed description of the journal-selection process is presented in the preregistered review protocol.

List of Journals Included in the Literature Review

Note: Journal impact factors are presented in parentheses.

CiteScore (Scopus).

Eligibility criteria

We preregistered eight eligibility criteria to specify the range of studies we aimed to include in the literature review: (a) The article was published in one of the selected journals; (b) the article was written in English; (c) the article was published between January 2017 and August 2022; (d) the article was an empirical article with primary data; (e) the article included a replication study as one of the main topics of the article; (f) the replication study was an experiment or quasiexperiment with a treatment-control comparison for applying the CRF; (g) the replication study did not employ functional imaging methods, such as PET or functional MRI, or other techniques measuring brain activity, such as EEG; and (h) the replication study focused on human research.

To summarize, we focused on current studies in one of the two psychological fields that aimed to conduct a replication of a specific primary study with new data. Criteria f through h above enhance the comparability of the studies according to characteristics that can affect the replicability of the research results. Moreover, we considered (quasi)experimental research with causal effects and excluded other research traditions that are prevalent in neurocognitive studies and animal research.
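As an illustration only, criteria (a) through (h) can be read as a conjunction that every record must satisfy. The sketch below assumes a hypothetical `Record` structure whose fields stand for the raters' screening judgments; `SELECTED_JOURNALS` is a placeholder for the 28 journals in Table 2, and the set of brain-activity methods is an illustrative, non-exhaustive stand-in for criterion (g).

```python
from dataclasses import dataclass, replace
from datetime import date

# Placeholder for the 28 journals listed in Table 2 (hypothetical names).
SELECTED_JOURNALS = {"Journal A", "Journal B"}
# Illustrative, non-exhaustive examples of excluded brain-activity methods.
BRAIN_ACTIVITY_METHODS = {"PET", "fMRI", "EEG"}


@dataclass(frozen=True)
class Record:
    journal: str
    language: str
    published: date
    empirical_primary_data: bool
    replication_is_main_topic: bool
    design: str          # e.g., "experiment", "quasiexperiment", "survey"
    methods: frozenset   # measurement techniques used in the study
    human_participants: bool


def is_eligible(r: Record) -> bool:
    """Apply eligibility criteria (a) to (h) as a single conjunction."""
    return (
        r.journal in SELECTED_JOURNALS                                       # (a)
        and r.language == "English"                                          # (b)
        and (2017, 1) <= (r.published.year, r.published.month) <= (2022, 8)  # (c)
        and r.empirical_primary_data                                         # (d)
        and r.replication_is_main_topic                                      # (e)
        and r.design in {"experiment", "quasiexperiment"}                    # (f)
        and not (r.methods & BRAIN_ACTIVITY_METHODS)                         # (g)
        and r.human_participants                                             # (h)
    )


example = Record("Journal A", "English", date(2019, 5, 1), True, True,
                 "experiment", frozenset(), True)
print(is_eligible(example))                                       # True
print(is_eligible(replace(example, methods=frozenset({"EEG"}))))  # False
```

Comparing (year, month) tuples keeps criterion (c) at the month granularity stated in the text instead of assuming a specific cutoff day.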

Search strategy

We employed a similar search strategy as previous literature reviews on replication studies (e.g., Lemons et al., 2016; Makel et al., 2012). Specifically, we used APA PsycInfo as the primary database to identify potentially relevant records from the journals listed in Table 2 between January 2017 and August 2022. One journal (i.e., Comprehensive Results in Social Psychology) was not covered by PsycInfo, so we used the advanced search function on the journal’s website. Our literature search focused on articles that included the term “replicat*” in their title, abstract, or keywords.
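The truncated search term "replicat*" can be mimicked with a regular expression, assuming it matches any word that begins with "replicat", regardless of case. This is a hypothetical sketch of the matching rule only; it does not reproduce PsycInfo's query syntax or field handling.

```python
import re

# Assumed semantics of the truncation "replicat*": any word that starts with
# "replicat", case-insensitively (replicate, replicated, replication, ...).
# Words such as "replicable" do not start with "replicat" and are not matched.
TRUNCATED_TERM = re.compile(r"\breplicat\w*", re.IGNORECASE)


def matches_search(title: str, abstract: str, keywords: list) -> bool:
    """True if the term occurs in the title, the abstract, or any keyword."""
    return any(TRUNCATED_TERM.search(field)
               for field in [title, abstract, *keywords])


print(matches_search("A Replication of the Stroop Effect", "", []))           # True
print(matches_search("Effects of Priming", "The effect is replicable.", []))  # False
```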

Selection process

In the first step, we screened the title and abstract of each identified record against three criteria: publication language (Criterion b), inclusion of an empirical study with original data (Criterion d), and inclusion of a replication study (Criterion e). To this end, we distributed the records among four independent raters (J. Hoffmann, M.-A. Sengewald, and two trained student assistants). Records that met all specified criteria were eligible for the next screening step. In the second step, we screened the full-text articles against all eligibility criteria. Two independent raters (J. Hoffmann and a trained student assistant) evaluated the articles, and 20% of the reports were screened independently by both raters to determine interrater agreement. Articles that satisfied all eligibility criteria were considered eligible for data extraction. Because of limited research personnel, we set a limit of 60 articles (i.e., 30 articles for cognitive psychology and 30 articles for social psychology) for the comprehensive data collection. We identified these articles by random sampling from all eligible articles. As a form of quality control, the selected articles underwent an expert rating to assess their fit to the research fields.
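The random selection of 30 articles per field amounts to stratified sampling without replacement. The following sketch assumes the eligible articles are available as (article ID, field) pairs; the function name and the seed value are hypothetical and chosen only to make the draw reproducible, not taken from the review.

```python
import random


def sample_per_field(eligible, n_per_field=30, seed=12345):
    """Draw n_per_field articles at random, separately within each field.

    `eligible` is a list of (article_id, field) pairs. The seed is an
    arbitrary, hypothetical value that only makes the draw reproducible.
    random.sample raises ValueError if a field has fewer than n_per_field
    articles.
    """
    rng = random.Random(seed)
    by_field = {}
    for article_id, field in eligible:
        by_field.setdefault(field, []).append(article_id)
    # Iterate over fields in a fixed (sorted) order so the draw is repeatable.
    return {
        field: sorted(rng.sample(ids, n_per_field))
        for field, ids in sorted(by_field.items())
    }


# Synthetic pool of eligible articles (not the review's actual records).
pool = [(i, "cognitive") for i in range(50)] + [(i, "social") for i in range(50, 110)]
selection = sample_per_field(pool)
print({field: len(ids) for field, ids in selection.items()})
```

Sampling within each field, instead of from the pooled list, guarantees the fixed 30/30 split regardless of how many eligible articles each field contributes.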

Data collection and analysis

As the first step in the data-collection process, we collected structural indicators from the selected articles. The structural indicators included the publication year of the primary article and replication article, overlap in authorship (i.e., at least one author worked on both articles), research field as indicated by the journal (i.e., cognitive psychology or social psychology), the type of replication as reported by the authors (i.e., direct or conceptual), and whether the replication study had been preregistered. We used these data to describe the replication studies in our database and to conduct additional analyses on the availability of information and equivalence of study characteristics.

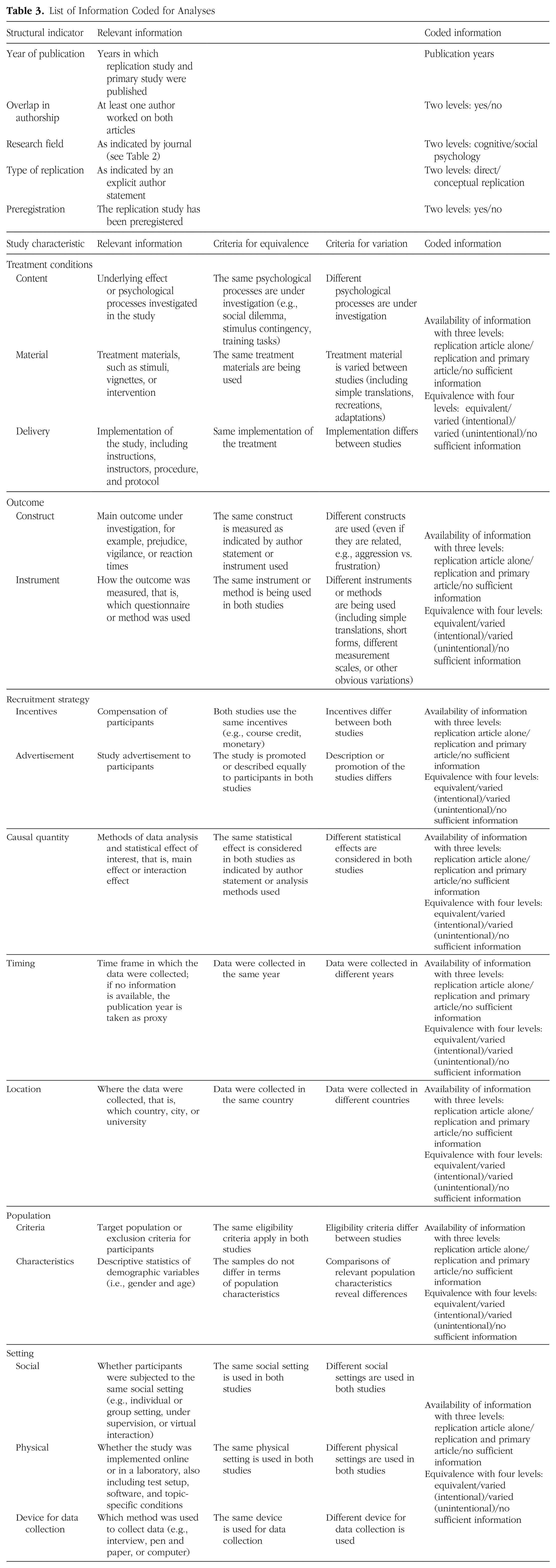

We had preregistered the concrete information that we wanted to collect regarding the availability of information and the equivalence of study characteristics. For this, we identified eight study characteristics and more detailed subcharacteristics that potentially affect replicability in psychological research according to the replication assumptions of the CRF (Steiner et al., 2019). For a comprehensive list of the coded information, see Table 3. To specify the stability of the treatment and outcome across studies, we assessed information on the treatment conditions, outcome measures, and recruitment strategy. As relevant information for the equivalence of the causal estimand across studies, we considered the specification of the effect of interest (i.e., causal quantity) and characteristics for the timing, location, population, and setting under which the studies were conducted.

List of Information Coded for Analyses

We collected all data manually, first inspecting the method section of the respective replication study. If necessary and applicable, we used information from the complete article, preregistrations, and supplementary material. As an additional source, we consulted the primary study's article whenever necessary to compare methods and procedures directly between the replication and its primary study. When multiple replication studies were reported in a single journal article, we included only one replication per primary study to control for similarities in the design of replication series by the same authors on the same primary study. Specifically, we included only the replication study that was reported first in the article for a given primary study. Because of the extensive amount of information, all records were single-coded by J. Hoffmann, except for a small subset of articles (i.e., two articles per psychological field) that were coded by M.-A. Sengewald to verify the data-collection process.
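The rule of keeping only the first-reported replication of each primary study within an article can be sketched as a simple deduplication. The dictionary keys "article", "order", and "primary" are hypothetical stand-ins for however the coded data are actually stored.

```python
def first_replication_per_primary(studies):
    """Keep only the first-reported replication of each primary study per article.

    `studies` is a list of dicts with the hypothetical keys "article",
    "order" (position of the study within its article), and "primary".
    Replications of the same primary study in different articles are all kept.
    """
    kept = {}
    for study in sorted(studies, key=lambda s: (s["article"], s["order"])):
        # setdefault keeps the first study seen for each (article, primary) pair.
        kept.setdefault((study["article"], study["primary"]), study)
    return list(kept.values())


studies = [
    {"article": "A", "order": 2, "primary": "P1"},
    {"article": "A", "order": 1, "primary": "P1"},
    {"article": "A", "order": 3, "primary": "P2"},
    {"article": "B", "order": 1, "primary": "P1"},
]
for study in first_replication_per_primary(studies):
    print(study)  # keeps A/1/P1, A/3/P2, and B/1/P1
```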

Availability of information

We coded the availability of information for each subcharacteristic into three categories indicating where we had to look to obtain the necessary information: (a) replication article alone (including preregistration and supplemental material), (b) replication article and primary article, and (c) no sufficient information. We assigned replication article alone if the authors of the replication study described how a subcharacteristic was implemented in both the primary study and the replication study or if they claimed equivalence or variation of subcharacteristics in the article or supplementary material (including preregistrations). If the authors indicated equivalence in the replication article or supplementary material, we additionally checked the primary article for contrary evidence. If the equivalence of a subcharacteristic could not be determined from the replication article alone, we used the primary article as an additional source of information; in that case, we rated the availability of information as replication article and primary article. We assigned no sufficient information only if we could not determine the equivalence of a subcharacteristic from any of the available information sources.

For the formal details of the analysis of the availability ratings for the different study characteristics, see Appendix A. We first calculated the proportion of studies that provided sufficient information on each subcharacteristic. Then, we calculated an average score for each study characteristic by averaging the proportions of its subcharacteristics. From these average scores, we determined an availability index for each study characteristic, which can take on values between 0 and 1. An availability index of 1 indicates that all subcharacteristics of a study characteristic were sufficiently reported in all replication studies, based on all available information sources. A value of 0 indicates that sufficient information was available for none of the subcharacteristics in any replication study.
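A minimal sketch of these two steps, assuming (since Appendix A is not reproduced here) that the availability index equals the unweighted mean of the subcharacteristic proportions. The rating strings follow the three categories above; the function name and the toy data are hypothetical.

```python
from statistics import mean

NO_INFO = "no sufficient information"


def availability_index(ratings_by_sub):
    """Mean over subcharacteristics of the share of sufficiently reported studies.

    `ratings_by_sub` maps each subcharacteristic of one study characteristic
    to its list of availability ratings, one rating per replication study.
    Both "replication article alone" and "replication article and primary
    article" count as sufficient information.
    """
    shares = [
        sum(rating != NO_INFO for rating in ratings) / len(ratings)
        for ratings in ratings_by_sub.values()
    ]
    return mean(shares)


# Toy example with four hypothetical studies and two subcharacteristics.
toy = {
    "target population": ["replication article alone",
                          "replication article and primary article",
                          NO_INFO, NO_INFO],                        # 2 of 4
    "sample characteristics": [NO_INFO, NO_INFO, NO_INFO,
                               "replication article alone"],     # 1 of 4
}
print(availability_index(toy))  # 0.375
```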

In addition to investigating the available information across all replication studies in our sample, we classified the studies into subgroups based on the structural indicators (i.e., cognitive vs. social psychology, author overlap vs. no author overlap, preregistration vs. no preregistration, conceptual vs. direct replication, and small vs. large time gap between primary study and replication study). To compare the results between subgroups, we calculated log odds ratios (log ORs) for each study characteristic. We considered absolute log ORs greater than 0.36 to indicate substantial differences (Sánchez-Meca et al., 2003). We report no log OR when the availability index of a study characteristic was 1 or 0 in at least one subgroup; in this case, the availability ratings had no variance, and the log OR could not be calculated.
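The subgroup comparison can be sketched as a difference of logits between two subgroup indices. This assumes the log OR is computed directly from the index values as proportions, which is consistent with the statement that no log OR exists when an index is 0 or 1, but the exact formula is in Appendix A. The 0.36 threshold is consistent with converting a small standardized mean difference (d = 0.2) via log OR = d·π/√3 ≈ 0.363; that this is its rationale is our assumption. Function names are hypothetical.

```python
import math

THRESHOLD = 0.36  # absolute log OR treated as a substantial difference


def log_odds_ratio(p1, p2):
    """Log OR between two subgroup indices; None if either index is 0 or 1."""
    if p1 in (0, 1) or p2 in (0, 1):
        return None  # logit undefined: no variance in at least one subgroup
    return math.log(p1 / (1 - p1)) - math.log(p2 / (1 - p2))


def is_substantial(log_or):
    """True if a log OR exists and exceeds the threshold in absolute value."""
    return log_or is not None and abs(log_or) > THRESHOLD


print(log_odds_ratio(0.75, 0.5))  # approximately 1.0986 (= log 3)
print(log_odds_ratio(1.0, 0.6))   # None
```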

Equivalence of study characteristics

We coded the equivalence between the replication study and the primary study for each subcharacteristic, using the preregistered list of criteria to assign one of four categories: equivalent, varied (intended), varied (unintended), or no sufficient information. An intended variation had to be explicitly reported in the article; otherwise, we rated the subcharacteristic as unintentionally varied. Table 3 summarizes the criteria for coding equivalence and highlights slight modifications from the preregistration. These modifications consisted of slightly altered wording of some criteria to clarify their meaning and less strict criteria for two characteristics (i.e., timing of data collection and population characteristics).

The analysis of the equivalence ratings was similar to the availability ratings; for the formal details, see Appendix A. We first determined how many study characteristics were equivalent or varied or lacked sufficient information per study, for which we used the equivalence ratings of the subcharacteristics. Only study characteristics for which all subcharacteristics were rated equivalent were considered equivalent. If at least one subcharacteristic was rated varied, we considered the respective study characteristic to be varied. Otherwise, we rated the study characteristic as no sufficient information. Second, we calculated the proportions of the equivalent ratings for all subcharacteristics given that sufficient information was available. Then, we obtained an average score for each study characteristic. Third, we calculated the proportion of intended variations among the varied subcharacteristics and study characteristics.
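The aggregation rule and the share of intended variations described above can be sketched as follows, using the four rating categories from the coding scheme. The function names are hypothetical; the precedence of the rules (all equivalent, then any varied, otherwise no sufficient information) follows the text.

```python
def aggregate_characteristic(sub_ratings):
    """Collapse subcharacteristic ratings into one study-characteristic rating."""
    if not sub_ratings:
        raise ValueError("at least one subcharacteristic rating is required")
    if all(r == "equivalent" for r in sub_ratings):
        return "equivalent"
    # Intended and unintended variations both make the characteristic varied.
    if any(r.startswith("varied") for r in sub_ratings):
        return "varied"
    return "no sufficient information"


def share_intended(sub_ratings):
    """Proportion of intended variations among varied ratings (None if none varied)."""
    varied = [r for r in sub_ratings if r.startswith("varied")]
    if not varied:
        return None
    return sum(r == "varied (intended)" for r in varied) / len(varied)


print(aggregate_characteristic(["equivalent", "no sufficient information"]))
# no sufficient information
print(aggregate_characteristic(["equivalent", "varied (unintended)"]))
# varied
```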

We also calculated an equivalence index for every study characteristic. The equivalence index describes the probability that a study characteristic is equivalent to that of the respective primary study, given that sufficient information is available. It can take on values between 0 and 1: An equivalence index of 1 indicates that a study characteristic was rated as equivalent in all subcharacteristics and all studies that provided sufficient information; a value of 0 indicates that it was rated as varied in all subcharacteristics and all studies that provided sufficient information. In addition to investigating equivalence across all replication studies in our sample, we compared the results between the subgroups defined by the structural indicators. As in the analysis of the availability ratings, we calculated and interpreted the log OR for each study characteristic.
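A minimal sketch of the equivalence index as a conditional proportion, assuming it is the unweighted mean over subcharacteristics of the share of equivalent ratings among sufficiently informative ratings. Skipping subcharacteristics that were never sufficiently reported is our assumption; Appendix A gives the authoritative definition. The function name and data are hypothetical.

```python
from statistics import mean

NO_INFO = "no sufficient information"


def equivalence_index(ratings_by_sub):
    """Mean over subcharacteristics of P(equivalent | sufficient information).

    Returns None if no subcharacteristic has any informative rating.
    """
    shares = []
    for ratings in ratings_by_sub.values():
        informative = [r for r in ratings if r != NO_INFO]
        if informative:  # assumption: skip subcharacteristics never reported
            shares.append(
                sum(r == "equivalent" for r in informative) / len(informative))
    return mean(shares) if shares else None


toy = {
    "sub A": ["equivalent", "varied (unintended)", NO_INFO],          # 1 of 2
    "sub B": ["equivalent", "equivalent", "varied (intended)",
              "equivalent"],                                         # 3 of 4
    "sub C": [NO_INFO, NO_INFO],                                     # skipped
}
print(equivalence_index(toy))  # 0.625
```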

Results

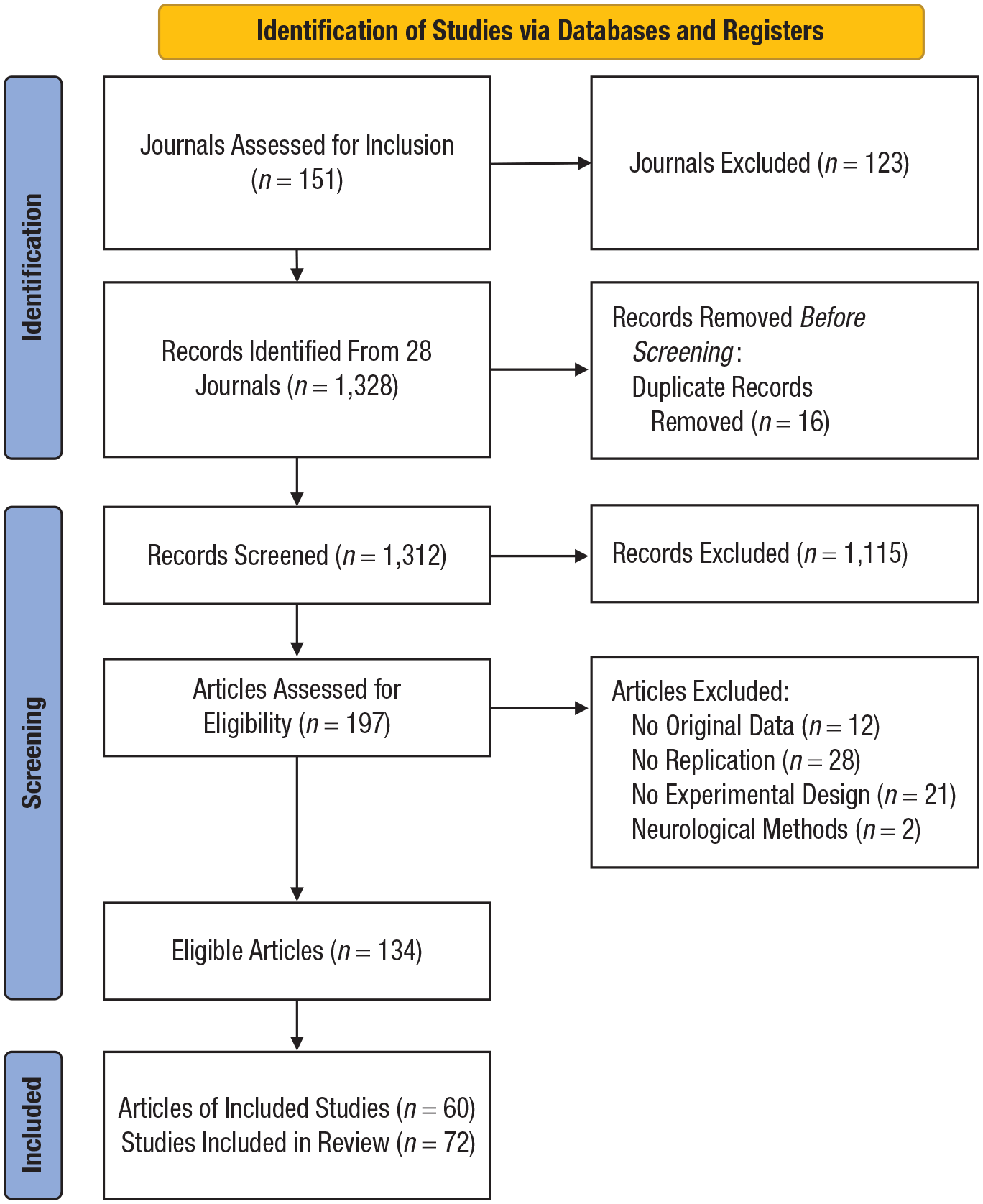

Literature search

The literature search was conducted on August 11, 2022. For a flow chart of the selection process in accordance with the PRISMA guidelines (Page et al., 2021), see Figure 1. The literature search identified 1,328 records. The extracted records were screened for duplicates, and the identified duplicates were excluded manually, leaving 1,312 records that were assessed for eligibility. In the first step of the selection process, we excluded 1,115 records that did not fit our criteria, leaving 197 records for full-text screening of the corresponding articles. Of the 197 articles retrieved for full-text screening, 40 were screened independently by two raters. The overall agreement on whether to include an article was 77.5%, with disagreements on the criteria original data (97.5% agreement), replication study (82.5% agreement), and study design (90% agreement). All other criteria had perfect agreement. One hundred thirty-four articles passed the entire screening process. From these, we randomly selected 60 articles (i.e., 30 articles for cognitive psychology and 30 articles for social psychology).
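The counts in this paragraph can be checked with simple arithmetic, and the agreement figures are percentages of matching decisions. The sketch assumes that agreement means simple percent agreement (the text does not mention a chance-corrected index such as Cohen's kappa); the rater decisions below are synthetic and only chosen to reproduce the reported 77.5%.

```python
# Counts reported in the text for the selection process (Figure 1).
identified = 1328
after_duplicate_removal = 1312
excluded_at_title_abstract = 1115
duplicates = identified - after_duplicate_removal
full_text = after_duplicate_removal - excluded_at_title_abstract
print(duplicates, full_text)           # 16 197
print(round(100 * 40 / full_text, 1))  # 20.3, i.e., the "20%" double-screened


def percent_agreement(rater_a, rater_b):
    """Share (in %) of items on which two raters made the same decision."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    return 100 * sum(a == b for a, b in zip(rater_a, rater_b)) / len(rater_a)


# Synthetic include/exclude decisions for 40 double-screened articles that
# agree on 31 of them; this reproduces 77.5% but is not the actual data.
a = ["include"] * 40
b = ["include"] * 31 + ["exclude"] * 9
print(percent_agreement(a, b))  # 77.5
```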

Flow diagram of literature search and selection process. Template retrieved from Page et al. (2021).

Descriptive statistics

The sample of 60 articles included 77 replication studies in total. We excluded five replication studies because the primary article included multiple studies, so that no unique primary study could be identified. Of the remaining 72 replication studies, 32 (44.4%) were published in cognitive-psychology journals, and 40 (55.6%) were published in social-psychology journals. In total, 10 (13.9%) replication studies had an overlap in authorship, and 35 (48.6%) were preregistered. Forty (55.6%) replication studies self-classified as a direct replication, 10 (13.9%) self-classified as a conceptual replication, and 22 (30.6%) did not explicitly state the replication type. The replication studies were conducted between 1 and 46 years after the respective primary study; the median time difference was 7.5 years.
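The percentages above all refer to the 72 analyzed replication studies; the short computation below reproduces them from the reported counts. The labels are ours; the numbers are taken from the paragraph.

```python
# Counts reported in the text; percentages use the 72 analyzed studies.
n_total = 77 - 5  # 5 studies excluded (no unique primary study)
counts = {
    "cognitive-psychology journals": 32,
    "social-psychology journals": 40,
    "author overlap": 10,
    "preregistered": 35,
    "self-classified direct": 40,
    "self-classified conceptual": 10,
    "replication type not stated": 22,
}
for label, k in counts.items():
    print(f"{label}: {k}/{n_total} = {100 * k / n_total:.1f}%")
```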

Availability of information

For a summary of the proportions of studies providing sufficient information, see Table 4. For the detailed proportions of availability ratings for every study and subcharacteristic, see Appendix B (Table B1). The study characteristics with the most available information were timing (100%), causal quantity (98.6%), outcome (97.9%), and treatment conditions (97.2%). The other study characteristics were sufficiently reported less often: location in 59.7% of studies, population in 52.8%, setting in 54.2%, and recruitment in 27.8%. These results are in line with the traditional practice of conducting replication studies, which focuses on repeating the materials and analysis methods and on reporting them. The available information can also vary between subcharacteristics of the same study characteristic. For example, for population, the target population was reported in 73.6% of studies, whereas specific sample characteristics (i.e., age and gender) were reported in 31.9% of studies. Regarding setting, sufficient information on the physical setting (68.1%) and the device for data collection (77.8%) was usually reported, whereas sufficient information on the social setting was reported in only 16.7% of studies.
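Assuming, as described in the Method section, that each characteristic-level value is the unweighted mean of its subcharacteristic proportions, the reported figures for population and setting can be cross-checked. The tolerance of 0.1 percentage points allows for each input and each total having been rounded to one decimal place; the helper name is hypothetical.

```python
from statistics import mean

# Subcharacteristic percentages and characteristic totals reported above.
reported = {
    "population": ([73.6, 31.9], 52.8),        # target population, sample characteristics
    "setting": ([68.1, 77.8, 16.7], 54.2),     # physical, device, social setting
}


def consistent_with_average(sub_percentages, reported_total, tol=0.1):
    """True if the reported total matches the mean of its parts within tol."""
    return abs(mean(sub_percentages) - reported_total) <= tol


for name, (parts, total) in reported.items():
    print(name, round(mean(parts), 2), total, consistent_with_average(parts, total))
```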

Descriptive Statistics on Availability of Information and Equivalence Ratings

Note. We summarized the ratings across all studies in our sample.

Proportion of equivalent ratings given that sufficient information was available.

Proportion of intended variation given that variation occurred.

We investigated differences in the availability index between subgroups (i.e., cognitive psychology vs. social psychology, author overlap vs. no author overlap, preregistration vs. no preregistration, conceptual replication vs. direct replication, and a small vs. large time gap between primary study and replication study) and found several substantial differences in terms of odds ratios (ORs).

Odds Ratios of Availability Ratings by Study Characteristic and Subgroup Comparison

Note. Logarithmic

Substantial difference with
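The subgroup comparisons reported above are expressed as logarithmic odds ratios. This section does not describe how they were estimated, so the following is only a minimal sketch for a single comparison, assuming a plain 2 × 2 table of rating counts (subgroup × sufficient vs. insufficient information), a Woolf standard error, and a Haldane-Anscombe correction of 0.5 when a cell is zero. The function name `log_odds_ratio` and the counts are hypothetical; the authors may have used a different model (e.g., logistic regression), and flagging a confidence interval that excludes zero is an illustrative rule, not their criterion for a substantial difference.

```python
import math

# Two-sided 95% critical value of the standard normal distribution
Z_95 = 1.959963984540054


def log_odds_ratio(a, b, c, d, correction=0.5):
    """Return the log odds ratio and its Woolf 95% confidence interval.

    a, b: subgroup 1 counts of ratings with sufficient / insufficient information
    c, d: subgroup 2 counts of ratings with sufficient / insufficient information
    If any cell is zero, `correction` is added to every cell (Haldane-Anscombe);
    this zero-cell rule is an assumption of the sketch.
    """
    if 0 in (a, b, c, d):
        a, b, c, d = (x + correction for x in (a, b, c, d))
    log_or = math.log((a * d) / (b * c))
    se = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)  # Woolf standard error
    return log_or, (log_or - Z_95 * se, log_or + Z_95 * se)


# Hypothetical counts: preregistered vs. not preregistered replication studies
log_or, (lower, upper) = log_odds_ratio(20, 10, 10, 20)
print(f"log OR = {log_or:.2f}, 95% CI [{lower:.2f}, {upper:.2f}]")
# Illustrative (assumed) rule: flag the difference if the CI excludes zero
print("CI excludes zero:", lower > 0 or upper < 0)
```

A positive log OR means the first subgroup more often provided sufficient information; swapping the subgroups only flips the sign.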

Equivalence of study characteristics

Counting the equivalent study characteristics per study, we found that between 0 and 5 of the eight characteristics were equivalent.

For summarized information on the equivalence and intended variation of study characteristics, see Table 4. For the detailed proportions of equivalence ratings for every study and subcharacteristic, see Appendix B (Table B2). Given sufficient information, the study characteristics with the highest proportions of equivalence were outcome (92.2%), causal quantity (87.3%), and treatment conditions (74.8%), closely followed by setting (70.9%). The lowest proportions of equivalence were observed for location (48.8%), recruitment (42.5%), population (35.5%), and timing (0%). We also assessed the methods used to keep study characteristics equivalent, such as translation and back-translation to obtain equivalent materials, a comparable incentive scheme, or the recreation of the original setting conditions. However, only a minority of studies reported such methods (for more information on these results, see the OSF material). Some of the variations in replication studies were intended because the authors investigated the generalizability of the effect under investigation. Among study characteristics that varied, we recorded intended variations for location (40.9%), treatment conditions (22.6%), population (4.1%), setting (2.9%), and timing (1.4%). However, we judged most of the observed variations to be unintended. Figure 2 summarizes the equivalence indices for all replication studies and all subgroup comparisons in relation to the available information.
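Table 4 reports two conditional proportions: equivalence given that sufficient information was available, and intended variation given that variation occurred. A minimal sketch of how such proportions can be computed from study-level codes, assuming a hypothetical `Rating` record per study and characteristic (the schema and the example ratings are illustrative, not the review's coding sheet):

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Rating:
    """One coded study characteristic of one replication study (hypothetical schema)."""
    sufficient: bool                   # enough information to judge equivalence?
    equivalent: Optional[bool] = None  # coded only if sufficient
    intended: Optional[bool] = None    # coded only if the characteristic varied


def summarize(ratings):
    """Return (availability, equivalence given sufficient, intended given varied)."""
    nan = float("nan")
    informative = [r for r in ratings if r.sufficient]
    varied = [r for r in informative if r.equivalent is False]
    availability = len(informative) / len(ratings) if ratings else nan
    equivalence = (
        sum(r.equivalent is True for r in informative) / len(informative)
        if informative else nan
    )
    intended = sum(r.intended is True for r in varied) / len(varied) if varied else nan
    return availability, equivalence, intended


# Hypothetical ratings of one characteristic (e.g., location) across four studies
ratings = [
    Rating(sufficient=True, equivalent=True),
    Rating(sufficient=True, equivalent=False, intended=True),
    Rating(sufficient=True, equivalent=False, intended=False),
    Rating(sufficient=False),
]
print(summarize(ratings))  # (0.75, 0.3333333333333333, 0.5)
```

Because each proportion is conditioned on the preceding stage, each has its own denominator, which is why a characteristic such as timing can be fully reported yet never equivalent.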

Equivalence index by study characteristic and subgroup. The number of studies in each group is given in parentheses. Cogn. psy. = cognitive psychology; soc. psy. = social psychology; overlap = studies with overlap in authorship; no overlap = studies without overlap in authorship; prereg. = preregistered replication studies; no prereg. = replication studies that were not preregistered; concept. repl. = conceptual replications; direct repl. = direct replications; small gap = small time difference between primary and replication study; large gap = large time difference between primary and replication study.

We observed several substantial group differences in terms of ORs for predicting the equivalence of study characteristics (for detailed results, see Table 6). In the comparison of the research fields, setting (

Odds Ratios of Equivalence Ratings by Study Characteristic and Subgroup Comparison

Note. Logarithmic

Substantial difference with

Discussion

The ongoing discourse surrounding the replicability of psychological effects can be enriched by investigating differences in study characteristics between primary studies and replication studies (including the treatment conditions, outcome measures, recruitment, causal estimates, time, population, location, and setting) that may affect replicability. With our literature review, we pursued this goal by assessing current practices in designing replication studies, describing how study characteristics vary between primary and replication studies, and examining how researchers report on them.

We identified eight study characteristics and various subcharacteristics that could potentially cause heterogeneity in an effect of interest by applying the CRF (Steiner et al., 2019; Wong & Steiner, 2018). In doing so, we translated the theoretical assumptions under which replication success can be expected into concrete, assessable study characteristics. We preregistered the review, including our search, screening, and coding criteria, in a review protocol on OSF. We applied the review protocol to replication research in two psychological fields. To this end, we identified 12 impactful journals in cognitive psychology and 16 impactful journals in social psychology. Among the 1,312 research articles published in these journals from January 2017 to August 2022, 134 (10.21%) contained a replication study according to our criteria (i.e., an explicitly stated replication purpose for a previously published and clearly referenced study). We assessed the availability of information on the different study characteristics and their equivalence to the primary study in a random sample of 60 articles (i.e., 30 from each field).

Insights about current replication designs

Our results show that replication studies typically follow the traditional approach of repeating the procedures of a primary study as closely as possible (Schmidt, 2009). When sufficient information was available, outcome, analysis methods, and treatment conditions were the characteristics most frequently kept equivalent across studies, closely followed by setting. All these characteristics were rated equivalent in more than 70% of replication studies, compared with fewer than 50% for the other study characteristics (i.e., location, recruitment, population, and timing). However, subcharacteristics varied considerably in their equivalence to the primary study. For example, although all replication studies investigated the same underlying processes as the primary studies, in more than a third of studies, the authors used different study materials and changed procedural details. Furthermore, although the target population, as defined by inclusion criteria, was equivalent in almost half of the replication studies, fewer than 10% of studies were equivalent in terms of the age and gender of the sample.

One important caveat to these results is that they are based on studies that provided sufficient information on the equivalence of study characteristics. In terms of reporting transparency, we observed large differences between study characteristics. Although information on the timing of data collection, analysis methods, outcome, and treatment conditions was sufficient to judge equivalence in virtually all studies, this was the case for the location of data collection, setting, and population in only slightly more than half of the studies and for the recruitment strategy in only about a quarter of the studies. As with equivalence, the availability of information varied among subcharacteristics. For example, we found information on the equivalence of the target population in about 75% of studies, whereas for age and gender, we found information in only about one third of studies. Setting is another example: The equivalence of the physical setting and of the device used for data collection was reported in the majority of studies, whereas the equivalence of the social setting was reported in just one out of six studies.

The results further reveal differences between replication studies in social psychology and cognitive psychology that match disciplinary differences. Specifically, more information was available on all assessed study characteristics in social psychology, the field in which guidelines for replication research have been prominently proposed (e.g., Brandt et al., 2014). Accordingly, the treatment conditions, outcome, and analysis method were also more often equivalent in social-psychological studies. In contrast, the equivalence of setting characteristics (i.e., the device for data collection and the physical and social settings) received more attention in replication studies in cognitive psychology. This seems plausible because cognitive psychology draws more on basic cognitive processes, which may often require a distraction-free environment and thus create a stronger motivation to control the setting than in social psychology. Examinations of other structural indicators revealed that author overlap and preregistration were associated with more complete reporting of study characteristics; however, this did not translate into greater equivalence of study characteristics.

Note that all replication studies varied or did not sufficiently report multiple study characteristics simultaneously. When study characteristics are not reported, this may indicate that they are considered theoretically irrelevant for a specific effect of interest. For instance, there might be a consensus that fundamental cognitive processes, as typically studied in cognitive psychology, are independent of certain study characteristics, such as population characteristics, which would make it unnecessary to consider them. However, it can also indicate blind spots in the design of replication studies that need further consideration to understand what causes effect heterogeneity. Especially when different causes of effect heterogeneity vary simultaneously, it becomes impossible to draw strong inferences about which study characteristic affects replication success or failure when comparing two studies (e.g., Coyne et al., 2016; Hedges & Schauer, 2019; Steiner et al., 2019; Wong & Steiner, 2018). A systematic variation of study characteristics that specifies intended differences between studies and rules out alternative explanations for effect heterogeneity would provide strong evidence about the reasons for replication success or failure.

Implications for planning replication studies

A more detailed assessment and reporting of study characteristics that potentially cause effect heterogeneity across studies would be very helpful for describing the conditions under which certain effects replicate. In this review, we used global study characteristics and provided a general overview of the different assumptions of the CRF. These assumptions can enrich the scientific discourse by offering a structured approach to potential sources of effect heterogeneity and provide a foundation for developing reporting standards that can guide future research (see also the PICO framework in medicine; Icahn School of Medicine at Mount Sinai, n.d.). However, such standards require concrete consideration of all relevant study characteristics for a specific effect of interest. Making all potential sources of variability explicit would offer more comprehensive insights into the replicability of study results and facilitate systematic comparisons across studies. In addition, such information can be used in meta-analyses to investigate predictors of the heterogeneity of a specific effect across multiple studies (see, e.g., Hedges & Olkin, 1985; Hedges & Schauer, 2019). Furthermore, documenting variations between studies directly supports open-science principles (National Academies of Sciences, Engineering, and Medicine, 2018).
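To make the meta-analytic use concrete, the sketch below tests whether a coded study characteristic explains between-study heterogeneity, using a standard fixed-effect subgroup analysis that partitions Cochran's Q into between- and within-group parts. It assumes that each study contributes an effect size and its sampling variance and that the moderator is binary (e.g., whether the population was equivalent to the primary study); the function names and data are hypothetical, and in practice a random-effects meta-regression would usually be preferred.

```python
import math


def fixed_effect(effects, variances):
    """Inverse-variance weighted mean, Cochran's Q, and I^2 under a fixed-effect model."""
    weights = [1 / v for v in variances]
    mean = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    q = sum(w * (y - mean) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    i2 = max(0.0, (q - df) / q) if q > 0 else 0.0
    return mean, q, i2


def q_between(effects, variances, moderator):
    """Q explained by a binary moderator (Q_total minus summed Q_within) and its p-value."""
    levels = sorted(set(moderator))
    if len(levels) != 2:
        raise ValueError("this sketch handles exactly two moderator levels")
    _, q_total, _ = fixed_effect(effects, variances)
    q_within = 0.0
    for level in levels:
        idx = [i for i, m in enumerate(moderator) if m == level]
        _, q_level, _ = fixed_effect([effects[i] for i in idx],
                                     [variances[i] for i in idx])
        q_within += q_level
    q_b = max(0.0, q_total - q_within)  # guard against tiny negative rounding error
    # Upper-tail p-value of a chi-square with 1 df: P(chi2 > q) = erfc(sqrt(q / 2))
    p = math.erfc(math.sqrt(q_b / 2))
    return q_b, p


# Hypothetical effect sizes (e.g., Cohen's d), sampling variances, and moderator codes
effects = [0.50, 0.45, 0.12, 0.08]
variances = [0.04, 0.05, 0.04, 0.03]
population = ["equivalent", "equivalent", "varied", "varied"]

mean, q, i2 = fixed_effect(effects, variances)
q_b, p = q_between(effects, variances, population)
print(f"pooled d = {mean:.2f}, Q = {q:.2f}, I^2 = {i2:.0%}")
print(f"Q_between = {q_b:.2f}, p = {p:.3f}")
```

Such a test is only interpretable if the moderator is the sole systematic difference between the subgroups; with several characteristics varying together, Q_between captures their compound effect.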

In addition, if all replication studies follow a systematic design, specific variations between studies can be ruled out as possible explanations for effect heterogeneity, thus offering insights into the impact of specific variations on effect heterogeneity. Along these lines, Holzmeister et al. (2024) used various large-scale replication attempts to investigate population, design, and analytic heterogeneity as causes of effect heterogeneity (see also Olsson-Collentine et al., 2020). They drew on preregistered many-lab studies (e.g., Klein et al., 2014, 2018) to assess population heterogeneity because holding procedures constant across all sites controlled for other sources of effect heterogeneity. Likewise, many-condition studies or metastudy approaches (e.g., Baribault et al., 2018; DeKay et al., 2022; Huber et al., 2023) randomly assign participants to different variations of an experiment so that design heterogeneity can be investigated. By intentionally and systematically introducing variations between studies, these replication designs substantially improve the interpretability of how such variations affect effect heterogeneity. Because study characteristics are varied systematically across the series of replication studies, multiple variations can be introduced at once, and interactions between specific variations can be investigated.
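Many-condition designs rely on randomly assigning participants to the variations under study. A minimal sketch, assuming a fully crossed design with two hypothetical factors (`stimulus_set`, `response_format`) and blocked randomization so that cell sizes stay balanced; the cited many-condition and metastudy projects may use other assignment schemes:

```python
import itertools
import random
from collections import Counter


def assign_conditions(participant_ids, factors, seed=None):
    """Randomly assign participants to the cells of a full factorial design.

    Cells are drawn from freshly shuffled blocks that contain every cell once,
    so cell sizes never differ by more than one participant.
    """
    names = list(factors)
    cells = list(itertools.product(*(factors[n] for n in names)))
    rng = random.Random(seed)
    block = []
    assignment = {}
    for pid in participant_ids:
        if not block:  # start a new block once the previous one is used up
            block = cells[:]
            rng.shuffle(block)
        assignment[pid] = dict(zip(names, block.pop()))
    return assignment


# Hypothetical design variations for one experiment
factors = {"stimulus_set": ["original", "updated"], "response_format": ["slider", "likert"]}
assignment = assign_conditions(range(10), factors, seed=1)
print(Counter(tuple(cell.values()) for cell in assignment.values()))
```

Shuffling complete blocks rather than drawing each cell independently keeps the design balanced at every sample size, which matters when design variations are later compared with one another.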

On a similar note, other methodological developments, such as the CRF (e.g., Steiner et al., 2019), provide the formal background for distinguishing among causes of effect heterogeneity and for designs that vary study differences systematically (Wong et al., 2022). For concrete applications, subject-matter theory is required for detailed insights into the study characteristics that may cause heterogeneity of the effect of interest, such as person characteristics beyond demographics and more details on the specific setting and location. When planning post hoc replication studies to investigate the replicability of existing results, it is challenging to control all study characteristics. For example, in our database, the time of data collection varied in every replication study, and differences in setting or person characteristics are typically difficult to avoid as well. These variations can then be investigated only jointly because the impact of individual study characteristics on effect heterogeneity cannot be disentangled when several vary at once. As an alternative, the CRF suggests prospective replication designs in which primary studies and replication studies are planned together to control unintended variations between studies (e.g., Wong et al., 2022; Wong & Steiner, 2018).

Limitations

Our results provide detailed insights into the reporting and design of replication research and relate the current practices to systematic replication attempts that can enhance the understanding of reasons for effect heterogeneity. However, for concrete applications of our results, several limitations need to be considered.

First, the considered study characteristics and subcharacteristics are relatively broad. We specified and operationalized them so that we could apply them to a variety of replication studies investigating different effects and phenomena in social psychology and cognitive psychology. This breadth may miss study characteristics that are relevant for a specific effect of interest. For example, we operationalized the location of data collection as the country in which the data were collected. For some effects, such as an intergroup effect involving specific minorities, this operationalization may be too broad because effects could also depend on local differences within a country (e.g., an urban vs. rural environment). Thus, when planning a replication study, one may need to adapt our criteria to the moderators relevant for the specific effect of interest. Our global criteria can be considered an initial set of easily accessible characteristics that already revealed substantial differences in the reporting and design of replication studies.

Second, we focused on specific replication studies based on our preregistered inclusion criteria. Thus, we described replication research that explicitly states the respective primary study and investigates a causal effect in a (quasi-)experiment. Following these criteria, we found a prevalence of replication studies of 10.2% in the considered journals. Because experimental studies are common in cognitive psychology and social psychology, only 10.7% of replication studies were excluded because of a nonexperimental study design. The inclusion criteria allowed us to consider all available information for a study comparison (i.e., the replication study and primary study plus all supplemental materials) and facilitated the investigation of the equivalence of study characteristics according to the CRF. Thus, our results reflect relatively controlled research conditions and do not represent replication practices for other study types, such as observational research.

Third, we limited ourselves to coding 60 research articles (i.e., 30 from social-psychology journals and 30 from cognitive-psychology journals). This limit was specified in our preregistered review protocol and was based on our resources for the extensive manual-coding process. In total, we examined 72 replication studies and 72 primary studies and coded information on the reporting and the equivalence of eight study characteristics, each with one to three subcharacteristics. We are confident that these replication studies illustrate the overall pattern of reporting and design practices because they were randomly selected from the 134 identified articles that included replication studies in the considered journals. To support further investigations of these replication studies, we provide the documented database on OSF.

Conclusion

In this literature review, we used a comprehensive list of study characteristics to evaluate to what extent these were varied, kept constant, or not reported in replication studies. Our results suggest that in current replication studies, researchers mainly focus on specific study characteristics, such as the treatment, outcome, and analysis methods. These characteristics are generally well documented and kept constant. Other study characteristics, such as population, setting, and location, are less well documented, and these, together with the timing of data collection, are more often varied in replication studies. For more insights into the reasons for effect heterogeneity, we suggest careful reporting and systematic variation or control of relevant study differences. Transparent documentation of the different sources of effect heterogeneity and systematic designs that control for some variations in replication studies can substantially strengthen the evidence on the replicability of effects in different fields of psychology.

Footnotes

Appendix A

Appendix B

Table B1 and Table B2 include descriptive information on the proportions of availability ratings and equivalence ratings, respectively. The information was coded in every replication study for each subcharacteristic. The values for each study characteristic are the mean values of its respective subcharacteristics.
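As a consistency check of this aggregation rule, averaging the subcharacteristic availability proportions reported in the Results reproduces the characteristic-level values for population (52.8%) and setting (54.2%). The sketch below uses those reported percentages; the dictionary labels and the helper `characteristic_value` are our own illustrative names.

```python
from statistics import mean


def characteristic_value(sub_proportions):
    """Aggregate a study characteristic as the mean of its subcharacteristic values."""
    return mean(sub_proportions.values())


# Availability proportions (%) reported for subcharacteristics in the Results
population = {"target population": 73.6, "sample age and gender": 31.9}
setting = {"physical setting": 68.1, "device for data collection": 77.8, "social setting": 16.7}

print(f"population: {characteristic_value(population):.2f}")  # 52.75, reported as 52.8%
print(f"setting: {characteristic_value(setting):.2f}")        # 54.20, reported as 54.2%
```

An unweighted mean treats every subcharacteristic as equally important, so a characteristic with one poorly reported subcharacteristic (such as the social setting) can still reach a moderate overall value.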

Transparency

Action Editor: Pamela Davis-Kean

Editor: David A. Sbarra

Author Contributions