Abstract

The prototype approach provides a theoretically supported basis for novel research, detailing “typical” cognitive representations of targets in question (e.g., groups, experiences). Fairly recently, in social and cognitive psychology, this approach has emerged to understand how people categorize and conceptualize everyday phenomena. Although this approach has previously been used to study everyday concepts, it has predominantly been overlooked in cross-cultural research. Prototype analyses are flexible enough to allow for the identification of both universal and culture-specific elements, offering a more comprehensive and nuanced understanding of the concept in question. We highlight theoretical, empirical, and practical reasons why prototype analyses offer an important tool in cross-cultural and interdisciplinary research while also addressing the potential for reducing construct bias in research that spans multiple cultural contexts. The advantages and risks of conducting prototype analyses are discussed in detail along with novel ways of integrating computational approaches with traditional prototype-analyses methods to assist in their implementation.

Concepts often differ in meaning between individuals, groups, and cultures (see De Oliveira & Nisbett, 2017; Kitayama & Salvador, 2023). For example, when we mention the term “hero,” what comes to mind? Posing this question to a thousand individuals would likely yield a variety of themes in the responses. By analyzing these patterns, we can gain insights into everyday understandings of the concept of a hero. Such analysis aids in developing a common understanding of heroes, facilitating easier communication and research. The prototype approach, pioneered within the field of cognitive psychology by Rosch (1975), plays a vital role in describing how individuals conceptualize a concept and revealing its properties.

Prototypes are laypeople’s understanding of the meaning of concepts, serving as a mental summary that reflects the most representative examples or attributes commonly associated with a particular category (Fehr, 1988). According to Rosch (1973), categories have a “core meaning” made up of the clearest cases or best examples, known as “prototypes.” Members of a category vary in how closely they resemble these prototypes, meaning not all members are equal and can be ranked by how representative they are of the category. The most representative exemplars (i.e., characteristics) share the most attributes with other category members. Less typical exemplars share fewer attributes with the prototypes and have more in common with neighboring categories. In addition, prototype analyses identify a set of features perceived as representative of the concept, allowing for a nuanced understanding of complex phenomena (Rosch, 1975, 1977). For the purposes of illustration, consider again the multifaceted concept of a hero. By employing a data-driven, bottom-up approach, this method allows participants to indicate their conceptualizations of a hero free from the constraints of preexisting theories or opinions. For instance, participants from Western cultures tend to perceive heroes as embodying bravery (Kinsella et al., 2015), whereas participants from Eastern cultures may focus more on heroes’ contributions to the nation or homeland (Sun et al., 2024).

The debate over whether the influences of cultures on psychological phenomena should be viewed through an indigenous, qualitative, emic lens or a universalist, quantitative, etic lens has been well discussed (Boehnke et al., 2014). The emic approach (i.e., the culture-specific approach seeking to understand a culture from the “inside”) focuses on indigenous constructs and methodologies in their own terms (Cheung, 2012; Kim et al., 2006). The etic approach (i.e., the culture-general approach seeking to identify universal principles or behaviors that apply across cultures) assumes the universality and cross-cultural invariance of existing concepts and models (Cheung, 2012). Using etic approaches in cross-cultural research can overlook crucial aspects of a concept, resulting in an incomplete understanding of human behavior (Thalmayer et al., 2022). Cheung (2012) recommended a combined emic–etic approach to bridge global and local human experiences in psychological science and practice. We argue that prototype analyses contain both emic and etic processes and have the ability to examine the suitability of an instrument for use across two or more cultures (Fischer & Karl, 2019; Mallah, 2022; van de Vijver & Tanzer, 2004). Specifically, the qualitative (emic) aspect of prototype analyses allows researchers to delve into culture-specific elements of a phenomenon, whereas the quantitative (etic) aspect facilitates the measurement and comparison of these phenomena across different cultures. This dual approach ensures that the analysis is both deep (capturing the essence of the phenomenon within each culture) and broad (allowing for comparisons across cultures).

Prototype analyses include both qualitative and quantitative aspects. They begin with a qualitative approach, collecting and categorizing responses from laypeople to identify defining features. Subsequently, these features are quantitatively analyzed to determine their centrality and relevance within each culture. We argue that this combined approach facilitates cross-cultural understanding by uncovering the nuances and variations in how different cultures conceptualize the same phenomena or concepts. By comparing these conceptualizations across different cultures, researchers can uncover unique cultural nuances and similarities in the understanding of the concept. This methodological perspective, in which participants become active contributors in defining concepts, is particularly potent in cross-cultural paradigms. It allows for the exploration of how different cultures interpret, experience, and express universal concepts, such as honor, nostalgia, or heroism (Cross et al., 2014; Hepper et al., 2014; Sun et al., 2024). Thus, prototype analyses offer the flexibility to identify not only the culturally specific aspects of a concept but also the core elements of a construct universally recognized across cultures. Furthermore, the indigenous approach (see Allwood & Berry, 2006; Hwang, 2005) seeks to develop theories, methodologies, and practices that are rooted in local culture, traditions, and experiences rather than imposing frameworks and concepts from other cultures, often Western. The goal of prototype analyses is not only to identify which aspects are universal and which are culture-specific but also to overcome the issues stemming from theories or concepts constructed primarily in Western societies. This approach offers a way to examine specific concepts or phenomena within a particular cultural context or to build a theory grounded in non-Western societies and then test whether it can be applied to Western societies.

We delve into the steps of prototype analyses, drawing on earlier research to lay a foundational understanding. As we explore these established methods, we critically examine the methods employed in previously published studies that have used prototype theory and methods, aiming to understand how these established methods can be effectively adapted and applied in the context of cross-cultural research. We also examine the advantages and risks associated with employing prototype analyses in the study of cross-cultural phenomena, providing a comprehensive perspective on its application and potential impact.

Overview of Prototype Theory and Prototype Analyses

Philosophers and cognitive scientists often engage in debates about the nature of concepts, their structure, and the process of their acquisition (see Laurence & Margolis, 1999). Classical theory posits that concepts have definitional structures, and categorization is based on whether an item satisfies the features essential to a concept (see Fodor, 1998). The classical view assumes clear-cut criteria for category membership and equal representativeness of all members. For example, if something has a seat, a back, and legs, it would be judged to fall under the concept of a chair. However, this classical view of concepts was challenged by Ludwig Wittgenstein’s (1958) notion of family resemblance. Wittgenstein argued against the idea that all members of a category share a set of essential features. Instead, he suggested that categories, much like members of a family, are connected by a series of overlapping similarities in which no single feature is common to all (Wittgenstein, 1958). This implies that concepts do not have strict definitions but are fluid and can be understood through their similarities and differences with other members of the same category (Nyström, 2005). For instance, although chairs typically have a seat, a back, and legs, a large bean bag, although quite different in structure, can also be categorized as a chair because of its functional similarity. Thus, family resemblance allows for a more nuanced and flexible approach to understanding categorization, acknowledging the complexity and diversity inherent in conceptual understanding.

Prototype theory, introduced by Rosch (1975, 1977), offers another perspective on categorization. Specifically, a prototype is known as a collection of the most representative features of a concept. If a concept is prototypically structured, it has an internal structure in which some of its features are more strongly connected with the concept than others. For example, in the context of the chair category, a standard four-legged chair might be considered the prototype. Other objects, such as bean bags or stools, are also considered chairs but are seen as less typical compared with the prototype. Thus, whereas family resemblance allows for the inclusion of a variety of instances under a broad category without strict defining features, prototype theory refines this by introducing the idea of centrality within categories. This highlights the cognitive processes involved in categorization, in which certain exemplars within a category hold a more central, representative role in one’s conceptual understanding.

Two conditions are needed for a concept or a construct to show a prototype structure. First, people need to be able to name features associated with the concept and then reliably rate the centrality of these features. Second, the centrality rating of each feature should have implications for how people think and process the concept or construct (Weiser et al., 2014). By systematically analyzing the centrality of features, one can determine which features are deemed the most relevant or representative of the concept (Mallah, 2022; Rosch, 1975) and understand how these constructs manifest in everyday life. Thus, researchers have suggested that prototype analyses are warranted when researchers try to explore “What is it?” and when there is a lack of consensus on the definition of a concept (Birnie-Porter & Lydon, 2013; Harasymchuk & Fehr, 2013; Luo et al., 2022).

Empirical Applications and Insights From Prototype Analyses

Prototype analyses have already been demonstrated to offer insights into understanding everyday concepts and provide fruitful understandings of individual experiences (see the Supplemental Material available at https://osf.io/tka2p/?view_only=cbc57ee3d5054041bfd194feaaa33439). For example, Kearns and Fincham (2004) suggested that employing a prototype approach to investigate lay conceptions can help understand a multidimensional construct that includes cognitive, affective, and behavioral components and advance the scientific study of forgiveness. They found that there was an overlap between experts’ and laypeople’s perceptions of forgiveness; there were also substantial differences. Le et al. (2008) conducted prototype analyses of “romantic missing.” They pointed out that the strengths of this research include the use of multiple methods, such as the collection and rating of open-ended data, social-cognitive techniques, and vignettes. Furthermore, prototype analyses enable researchers to understand the breadth of a concept, such as the affective, behavioral, and cognitive aspects of the experience of missing a partner. Prototype analyses not only reveal the many facets of the concept but also facilitate the conduct of applied research (Gregg et al., 2008; Harasymchuk & Fehr, 2013). For example, Hepper et al. (2012) argued the methodological advantages of prototype analyses of nostalgia would be accrued by enabling future studies to measure or manipulate nostalgia by using prototypical features, such as the design of therapeutic interventions by tailoring interventions to focus on the most helpful elements of nostalgia. Seuntjens et al. (2015) noted that prototype analyses provide information about a concept’s lay perception, helping researchers create a working definition of a concept and further empirical study of a concept, such as scale construction. 
Overall, based on previous studies, prototype analyses offer numerous advantages in research by providing a comprehensive understanding of complex, multidimensional constructs. They facilitate the exploration of cognitive, affective, and behavioral components of concepts, revealing both the commonalities and differences between expert and lay perceptions. This approach not only uncovers the many facets of a concept but also aids in applied research, such as designing interventions that focus on the most relevant features and creating working definitions of concepts, which are essential for further empirical studies and scale construction.

We argue that prototype analyses can further yield numerous important insights into cross-cultural psychology. Cross-cultural psychologists have long emphasized the need for a more coherent approach to studying culture and the importance of examining concrete, specific, and local cultural processes that shape psychological outcomes (Karasz & Singelis, 2009; Spector et al., 2015). The prototype approach, in this context, becomes crucial because it allows for the identification and examination of specific cultural prototypes that inform and shape concepts or constructs within particular cultural settings or across cultural settings. This method provides a more detailed and context-sensitive approach. Researchers can examine whether the importance of perceived features of a concept differs across cultures by analyzing the speed of processing/reaction time, priming, and the logic of natural language use of category terms (Kearns & Fincham, 2004) and further explore why there are differences in prototypical features. This exploration further extends understanding by revealing how differences in perception can provide deeper insights into the cultural values cherished by various cultures and the psychological diversity that goes beyond the scope of the current East–West comparative literature (Kitayama & Salvador, 2023). In addition, if there are cultural differences in the understanding of a concept, future scale validation based on the concept should take these differences into account. The emic–etic approach of prototype analyses also meets the requirement of using mixed methods for scale development and validation (see Zhou, 2019). Based on these, prototype approaches hold the potential to effectively address common challenges in cross-cultural research, including issues of bias and equivalence (see Davidov et al., 2014; Poortinga, 1989).

Prototype Analyses in Cross-Cultural Research

Culture, which is regarded as an umbrella concept encompassing a host of characteristics, shapes individuals’ perceptions and interpretations of the world, influencing their cognitive processes and behaviors (see D’Andrade, 1995; van de Vijver & Leung, 2021). Moreover, the tool-kit theory of culture posits that individuals possess a repertoire of cultural resources—habits, skills, and styles—that they use to navigate different situations (see Swidler, 1986). This theory suggests that culture is not a fixed blueprint dictating behavior but a flexible set of tools that people draw on to solve problems and achieve goals. In line with this idea, when individuals are asked to think about features associated with a concept, it reflects the ease with which features of a concept spontaneously come to mind (i.e., Step 1 of prototype analyses: feature generation). When examining the importance of these features (i.e., Step 2 of prototype analyses: centrality rating) to oneself, this focus on more deliberative and reflective aspects may influence individuals’ responses by the cultural values they hold, thus illustrating cultural differences in the understanding of a concept. For example, although participants from different cultural groups might identify some common features of a concept, the centrality ratings of those features could vary across cultures (see Joo et al., 2019; Sun et al., 2024). Prototype analyses thus offer a novel method for identifying the most central and peripheral features of concepts within different cultures. This approach further provides a nuanced understanding of how cultural values influence specific features, yielding insights into both universal and culture-specific aspects of constructs. As D’Andrade (1995) noted, although the level at which prototypes form is relatively universal, their specific content reflects typicality and availability in a given location.
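To make the comparison of centrality ratings across cultures concrete, the following minimal Python sketch computes a Spearman rank correlation between the mean centrality ratings of features generated in both of two cultural groups, along with the per-feature gaps. The feature names and rating values are hypothetical placeholders and are not data from any cited study; the choice of a rank correlation as the agreement index is our illustrative assumption rather than a procedure prescribed by the prototype literature.

```python
from statistics import mean

def average_ranks(values):
    """Rank values from 1 (smallest) to n, giving tied values their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        tied_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = tied_rank
        i = j + 1
    return ranks

def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5

def spearman(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))

# Hypothetical mean centrality ratings (1-8 scale); illustrative values only.
culture_a = {"brave": 7.1, "selfless": 6.8, "honest": 6.0, "strong": 4.2, "famous": 2.9}
culture_b = {"brave": 6.2, "selfless": 7.0, "honest": 6.4, "strong": 3.8, "famous": 3.1}

# Only features generated in both groups can be compared directly.
shared = sorted(set(culture_a) & set(culture_b))
rho = spearman([culture_a[f] for f in shared], [culture_b[f] for f in shared])
gaps = {f: round(culture_a[f] - culture_b[f], 2) for f in shared}

print(f"Spearman rho across shared features: {rho:.2f}")
print("Largest centrality gap:", max(gaps, key=lambda f: abs(gaps[f])))
```

A high rank correlation would suggest that the two groups order the shared features similarly, whereas large gaps on individual features flag candidates for follow-up. Features generated in only one group are excluded from this index and would need to be examined separately.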

Furthermore, originally developed in the context of network analyses, centrality indices reveal the relative importance of nodes within a network structure (see Bringmann et al., 2019). However, there is little research on using centrality in cross-cultural research and even less focus on the centrality of a concept across different cultures. Understanding centrality is essential because it reveals how different cultures prioritize and interpret aspects of a concept, shedding light on both universal and culture-specific elements. For instance, why do participants from a certain culture perceive one feature as the most important but not perceive another feature as participants from other cultures do? What mechanisms can explain this phenomenon? We argue that this would not only inspire future cross-cultural research to investigate the mechanisms behind cultural differences (i.e., unpack the cultural differences) but also has the potential to address construct bias.

Minimizing bias in cross-cultural research

Culture-comparative research is becoming increasingly widespread in the fields of psychology and other social sciences because of its ability to highlight the diversity and complexity of human behavior across cultural contexts and promote a broader and deeper understanding of human behavior (Boer et al., 2018; Matsumoto & van de Vijver, 2012). This type of research is often underpinned by assumptions of differences, with study designs specifically targeting the detection of differences in responses across participants from different cultures. However, it raises problems, such as item bias and noninvariance, when making comparisons (Fischer & Karl, 2019). Specifically, bias is known as systematic errors in measurement that affect the validity of cross-cultural research (van de Vijver & Leung, 2001; van de Vijver & Tanzer, 2004). Researchers have argued that without a robust methodology to ensure that biases do not influence the intended cross-cultural variations, there are concerns about the validity of any conclusions drawn regarding cultural differences and similarities (Boer et al., 2018).

Three types of bias have been identified (see Boer et al., 2018; van de Vijver & Tanzer, 2004). Specifically, construct bias can be caused by the underlying construct measured not being the same across cultures (He & van de Vijver, 2012). It can occur if a concept is differently defined or has an incomplete overlap across cultural groups. For instance, Uchida et al. (2004) found that happiness tends to be defined differently between Western and East Asian cultures. In East Asian cultures, happiness is defined in terms of interpersonal connectedness, and people engaging in these cultures are motivated to maintain a balance between positive and negative affect. In Western cultures, happiness is defined in terms of individual achievement, and people engaging in these cultures are motivated to maximize the experience of positive affect. Method bias includes three subtypes, depending on whether the bias comes from the sample, administration, or the instrument. Sample bias refers to the incomparability of participants in terms of cross-cultural variations in characteristics, such as different populations (e.g., students vs. the general population) or educational levels. Administration bias involves differences in the procedures used to administer an instrument, such as ambiguous instructions and communication problems (e.g., language differences). Instrument bias concerns systematic cultural differences in the meaning of instruments that confound cross-cultural comparisons. That is, cultural contexts may influence how instruments are interpreted and answered, potentially causing scientific inaccuracies, errors, and misleading conclusions from findings. Item bias, also known as differential item functioning, occurs when an item has a different meaning across cultures.
For example, one item of a scale might be understood and responded to differently by individuals from different cultural backgrounds not because of actual differences in their attitudes or behaviors but because of poor translation or words with ambiguous connotations (van de Vijver & Leung, 2021). The consequences of bias in cross-cultural research are significant and manifold, potentially undermining the integrity and validity of research findings. When biases are not adequately addressed, they may lead to systematic errors that distort the understanding of cultural differences and similarities (Luong & Flake, 2023; van de Vijver & Leung, 2001).

Prototype analyses and construct bias

The concept of equivalence is critical for ensuring comparability across cultures (van de Vijver & Leung, 2001; van de Vijver & Tanzer, 2004). Four levels of equivalence have been identified, each with its own importance and application within cross-cultural research (see details in Boer et al., 2018; Fontaine, 2005): functional equivalence, structural equivalence, measurement-unit equivalence, and full-score equivalence. Researchers have developed methodologies for assessing whether the psychometric properties of a scale are consistent (i.e., measurement equivalence) across different groups (see Luong & Flake, 2023). Note, however, that there is ongoing debate about establishing measurement invariance (MI) in cross-cultural psychology. Despite growing awareness of the need for cross-cultural equivalence in psychological scales, tension exists between proponents of MI and practitioners who may find recommended standards overly strict and impractical (see Funder & Gardiner, 2024).

Researchers have demonstrated that construct bias can be investigated using factor analyses to detect underlying structures for concepts. However, this method may be insufficient alone, and three further approaches have been described. First, if constructs differ across cultures, local surveys can gather descriptions of the construct and characteristic behaviors, providing a more comprehensive understanding of potential biases (Lim et al., 2002; van de Vijver & Leung, 2021). Second, the convergence approach involves researchers from different cultures designing their own instruments, which are then administered across all cultures. Consistent findings across these instruments indicate the validity of observed cross-cultural differences, whereas discrepancies may reveal sources of bias. This method helps validate cultural comparisons by highlighting both convergences and potential biases (van de Vijver & Leung, 2021; Yang & Bond, 1990). The third approach combines emic (culture-specific) and etic (universal) aspects (van de Vijver & Leung, 2021). This method integrates both perspectives, acknowledging that psychological phenomena have universal and culture-specific features. It involves using both emic and etic measurements and mixed methods. The goal is to identify which aspects are universal and which are culture-specific, moving beyond the debate to integrate both viewpoints effectively (Cheung, 2012). Following this approach, we propose using the prototype method to examine construct bias across different cultures. The prototype approach not only identifies the features of a concept from each culture but also conveys meaningful information about these features (i.e., central or peripheral features of concepts). One can then investigate how cultural values influence these features and uncover the underlying mechanisms. This preliminary step is vital for establishing a baseline understanding before delving into comparisons of cultural differences in the concept or phenomenon in question.

Prototype analyses and unpacking cultural differences

Moreover, in cross-cultural research, one of the objectives is to identify and explain cultural differences by examining relevant cultural dimensions. According to van de Vijver and Leung (2021), these differences can be categorized as either level-oriented or structure-oriented. Specifically, level-oriented cultural differences refer to differences in the means of a construct across cultural groups (van de Vijver, 2009). These differences can initially be identified using methods such as analysis of variance or regression analysis. However, simple mean comparisons are often open to multiple interpretations, so it is important to unpack these differences to enhance their interpretability (see Ng et al., 2019). This is commonly achieved through mediation analysis, which examines how a predictor (cultural group) influences an outcome variable through a mediator that explains the cultural difference. In this model, a significant indirect effect suggests that the mediator helps clarify the observed cultural difference (Ng et al., 2019). Structure-oriented cultural differences focus on how relationships between psychological constructs vary across cultures (van de Vijver, 2009). Moderation analysis is commonly used to identify these differences by comparing the effect of a predictor on an outcome across cultural groups. Researchers can also use mediated moderation analysis to unpack these differences further (see empirical illustration in Ng et al., 2019).
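The mediation logic described above can be illustrated with a minimal Python sketch. It estimates the indirect effect as the product of two regression coefficients (the path from cultural group to the mediator, and the path from the mediator to the outcome controlling for group) and attaches a percentile bootstrap interval. The data are simulated, the variable names are hypothetical, and the product-of-coefficients estimator with a percentile bootstrap is our assumption for illustration; it is not necessarily the procedure used by Ng et al. (2019).

```python
import random
from statistics import mean

def slope(x, y):
    """OLS slope of y regressed on a single predictor x (path a)."""
    mx, my = mean(x), mean(y)
    return (sum((a - mx) * (b - my) for a, b in zip(x, y))
            / sum((a - mx) ** 2 for a in x))

def partial_slope(x, m, y):
    """OLS coefficient of m when y is regressed on both x and m (path b)."""
    mx, mm, my = mean(x), mean(m), mean(y)
    sxx = sum((a - mx) ** 2 for a in x)
    smm = sum((a - mm) ** 2 for a in m)
    sxm = sum((a - mx) * (b - mm) for a, b in zip(x, m))
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    smy = sum((a - mm) * (b - my) for a, b in zip(m, y))
    return (sxx * smy - sxm * sxy) / (sxx * smm - sxm ** 2)

def indirect_effect(x, m, y):
    """Product-of-coefficients estimate (a * b) of the indirect effect."""
    return slope(x, m) * partial_slope(x, m, y)

def bootstrap_ci(x, m, y, n_boot=500, seed=0):
    """95% percentile bootstrap interval for the indirect effect."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample cases with replacement
        estimates.append(indirect_effect([x[i] for i in idx],
                                         [m[i] for i in idx],
                                         [y[i] for i in idx]))
    estimates.sort()
    return estimates[n_boot * 25 // 1000], estimates[n_boot * 975 // 1000 - 1]

# Simulated data: group is a 0/1 cultural-group code, mediator is a
# hypothetical cultural-value score, outcome is a hypothetical construct score.
rng = random.Random(1)
group = [i % 2 for i in range(200)]
mediator = [0.8 * g + rng.gauss(0, 1) for g in group]
outcome = [0.1 * g + 0.5 * v + rng.gauss(0, 1) for g, v in zip(group, mediator)]

ab = indirect_effect(group, mediator, outcome)
ci_low, ci_high = bootstrap_ci(group, mediator, outcome)
print(f"indirect effect = {ab:.3f}, 95% bootstrap CI [{ci_low:.3f}, {ci_high:.3f}]")
```

An interval that excludes zero would be read as evidence that the mediator accounts for part of the group difference. In applied work, a dedicated statistical package and a check of measurement equivalence for both the mediator and the outcome would be advisable before interpreting such an estimate.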

This is the case for which prototype analyses offer clarification. Prototype analyses capture the psychological processes relevant to culture, identifying the specific aspects of concepts that are emphasized by a particular cultural group. These insights can then be used to inform what to test in mediation, moderation, or their combination. In particular, ensuring unit equivalence (i.e., the construct has the same meaning across groups) is crucial for both the independent and outcome variables when comparing relationships between them across cultures. By integrating prototype analyses with quantitative methods, such as mediated moderation, researchers can gain deeper insights into the mechanisms underlying cross-cultural differences.

We chart a new course by proposing prototype analyses as a foundational step for examining construct or concept equivalence, specifically tailored to address construct bias (see Fig. 1). For instance, Sun et al. (2024) found that patriotism was the most important feature of heroes among Chinese participants. However, this feature was not observed among Western participants (Kinsella et al., 2015). Why does it exist only among Chinese participants, and can this reflect the influence of cultural values on perception? If researchers find such differences from centrality ratings, the next step could be to unpack the mechanism (see van de Vijver & Leung, 2021) of why there are cultural differences. Such differences could also call measurement invariance into question, indicating that the construct is understood differently across cultures. Researchers can use the results of the prototype analyses to create cross-cultural instruments that consider culturally sensitive features of the concept. By incorporating the central features identified in each culture, researchers can develop scales that more accurately reflect the cultural nuances of the construct, ensuring that the measurement is both valid and reliable across different cultural contexts.

Fig. 1. Prototype approach in cross-cultural research. We argue that prototype analyses can serve as an initial and fundamental step in discovering cultural differences in the understanding of concepts or phenomena. By using centrality ratings, researchers can identify which exemplars participants consider most important and apply various methods in cross-cultural research to further explore these cultural differences (i.e., unpacking cultural differences).

Prototype analyses have been applied in several cross-cultural studies. For example, Sun et al. (2024) investigated the cultural differences in prototypical features of heroes among Chinese and American participants. Morgan et al. (2022) investigated whether perceptions and experiences of gratitude differ across children, adolescents, and adults from Australia and the UK. Joo et al. (2019) conducted prototype analyses to examine conceptions of forgiveness among American and Japanese participants. Hepper et al. (2014) studied prototypical features of nostalgia among individuals in 18 countries across five continents. Cross et al. (2014) examined the prototypes and dimensions of honor among Turkish and American participants. Indeed, prototype analyses offer significant benefits in cross-cultural research, enhancing the understanding of cultural phenomena. The bottom-up methodology enables researchers to uncover distinct interpretations and understandings of the same concept across different cultures (Sun et al., 2024). Such an approach leads to a nuanced and comprehensive view of cultural diversity, revealing variations not only in behaviors and attitudes but also in the underlying cognitive processes. For example, it sheds light on the implicit norms, values, and beliefs that underlie these perceptions, which is crucial for understanding how cultural nuances influence individuals’ thoughts, feelings, and behaviors (Cross et al., 2014; Le et al., 2008). Prototype analyses enhance construct validity in cross-cultural studies by ensuring that the constructs being measured are both relevant and accurately represented in various contexts. In essence, prototype analyses enrich cross-cultural research by providing a more detailed, context-aware, and culturally sensitive perspective to examine conceptualizations.

Note that cultural-consensus theory (CCT) and prototype analyses are both methodologies used to understand shared beliefs and knowledge within cultural groups, yet they differ in their approaches and applications. CCT focuses on identifying and measuring the extent of agreement among group members regarding specific cultural domains. It operates under the assumption that a cultural consensus exists and uses formal models to derive shared beliefs and knowledge from individual responses to various types of questions, such as true/false, Likert scales, and continuous responses (Anders et al., 2014; Batchelder & Romney, 1988). CCT is particularly useful for domains in which there is no scientifically verifiable correct answer but a presumed cultural consensus (Heshmati et al., 2019). Prototype analyses delve into identifying the most representative examples or prototypes of a concept. This method goes beyond measuring agreement to understanding the nuances of how individuals conceptualize cultural constructs, which can provide richer, more detailed insights (Fehr, 1988).

Steps for prototype analyses in cross-cultural comparison

Prototype analyses typically include “standard procedures” followed by many studies (see Kinsella et al., 2015; Luo et al., 2022). First, the prototypical features of the concept are identified by asking participants to generate exemplars they consider important to describe the concept. Next, independent coders categorize those exemplars into features representing the concept. Then, the centrality of the features is assessed, and the features are categorized into central and peripheral features. Finally, the validity of the prototypical features is tested through tasks such as reaction time and recall in autobiographical situations. We critically reflect on each step and demonstrate new methods to facilitate the conduct of prototype analyses (see Fig. 2). We demonstrate the approaches in the supplementary materials (available at https://osf.io/tka2p/?view_only=cbc57ee3d5054041bfd194feaaa33439) using published data from Sun et al. (2024).

Fig. 2. Guide for conducting prototype analyses in cross-cultural research. This guide illustrates the key stages of prototype analyses in cross-cultural research. The process begins with feature generation, in which features of a concept are identified through either traditional approaches or computational methods. Next, centrality ratings are used to determine the importance of these features. The validity of prototypical features is then assessed using tasks such as group membership determination, reaction times, or recall tasks. Finally, applying these prototypical features in diverse contexts allows researchers to explore their relevance across various settings and cultural contexts.
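The centrality step can be illustrated with a minimal Python sketch. It assumes that features are split into central and peripheral sets with a median split on their mean centrality ratings, which is a common choice in prototype studies but is not specified in the text above. The function name, the feature labels, and the ratings are hypothetical.

```python
from statistics import mean, median


def split_by_centrality(ratings):
    """Split features into central and peripheral sets.

    ratings: dict mapping a feature label to a list of participant ratings.
    Assumption: a median split on the mean ratings defines the two sets;
    features at or above the median are treated as central.
    """
    means = {feature: mean(values) for feature, values in ratings.items()}
    cutoff = median(means.values())
    central = sorted(f for f, m in means.items() if m >= cutoff)
    peripheral = sorted(f for f, m in means.items() if m < cutoff)
    return central, peripheral, cutoff


# Hypothetical centrality ratings (e.g., on a 1-8 scale) from three raters.
example_ratings = {
    "brave": [8, 7, 8],
    "selfless": [7, 7, 6],
    "strong": [5, 4, 5],
    "famous": [3, 2, 4],
}

central, peripheral, cutoff = split_by_centrality(example_ratings)
```

In a cross-cultural design, the same function could be applied separately to each cultural group so that the central sets can be compared.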

Feature generation and centrality ratings

Traditionally, within the prototype approach, laypeople are asked to generate exemplars they consider relevant to the concept under examination. These exemplars are then evaluated and categorized into larger sets of features by independent coders (see Fig. 3).

Fig. 3. An example of traditional approaches for coding exemplars into features. Reflecting the traditional process of feature generation through human coding, exemplars are categorized into three stages: (1) grouping identical exemplars, (2) grouping semantically related exemplars, and (3) grouping meaning-related exemplars into broader categories.

For instance, in the study by Sun et al. (2024), four independent coders (two Irish and two Chinese), who were unaware of the study’s purpose, were tasked with organizing the original exemplars into superordinate categories. This was done by grouping identical exemplars, semantically related exemplars, and exemplars with related meanings, following a procedure used in previous studies (Kinsella et al., 2015). Likewise, Joo et al. (2019) involved American and Japanese coders to sort exemplars into different attribute categories, adhering to guidelines provided by Fehr (1988). This process included categorizing different grammatical forms of the same word into a single feature category as the first step. The second step involved grouping linguistic units modified by adverbs or adjectives into one category. Then, units judged by coders as having the same meaning were also categorized together. In both studies by Sun et al. and Joo et al., any discrepancies were resolved through discussion. Although including coders from various cultural backgrounds may help mitigate cultural biases during the coding procedure (Sun et al., 2024), it could be argued that these steps might still introduce human bias. We propose several methods (see Fig. 4) to not only minimize human bias as much as possible but also reduce the labor-intensive and time-consuming aspects of the process.

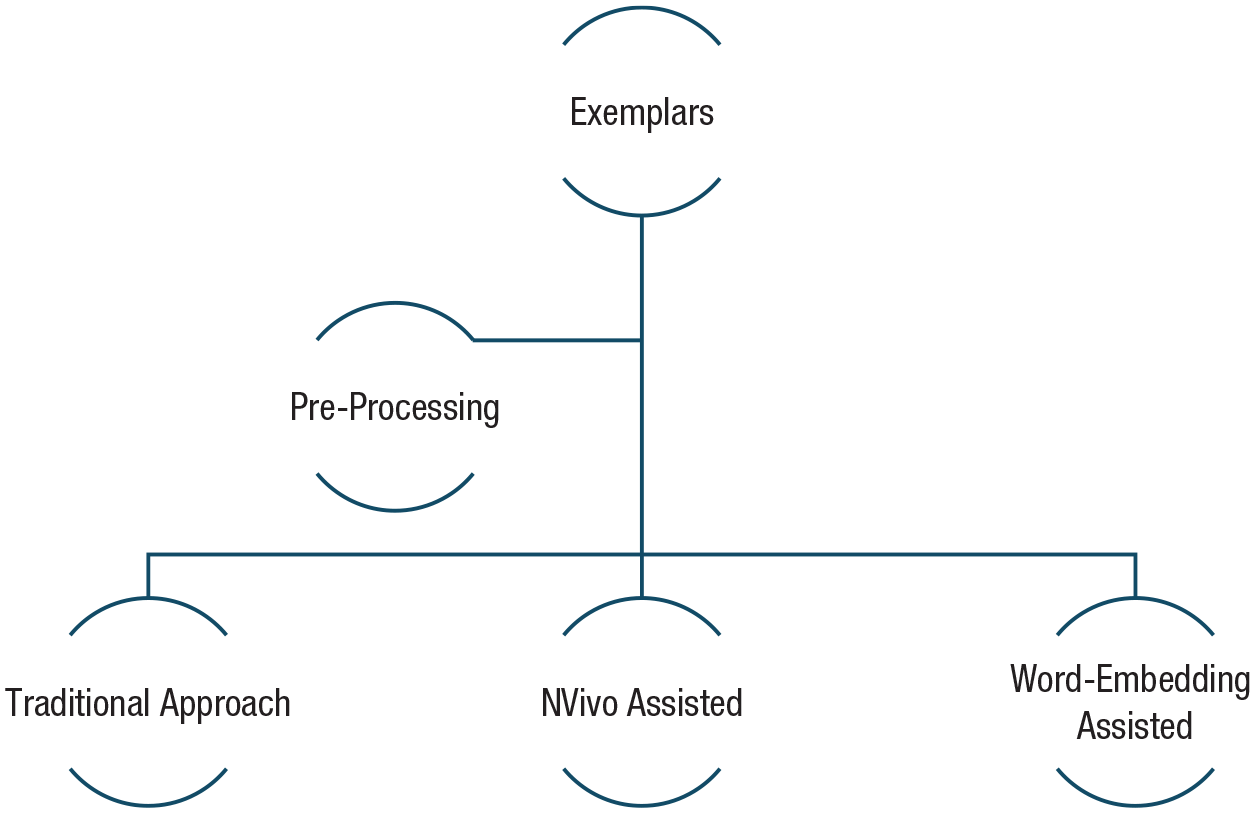

Fig. 4. Steps for coding exemplars into features. This outlines several methods designed to minimize human bias and reduce the labor-intensive and time-consuming aspects of the coding process. After exemplars undergo preprocessing, they can be coded into features using one of three approaches: the traditional approach, NVivo-assisted coding, or word-embedding-assisted coding. Each method offers varying levels of automation and computational support to streamline the coding process.

Preprocessing

We recommend preprocessing the exemplars before coding. This involves two steps: eliminating duplicates and organizing the exemplars into separate files based on word count (one word, two words, and more than two words). To eliminate duplicates, use R to read the data set containing the exemplars and remove any duplicate entries; then sort the remaining exemplars into separate files according to the number of words they contain. This simplifies the coding process, reduces the overall workload for human coders, and ensures that they do not repeatedly code the same exemplar. The R script (see supplementary materials on OSF) demonstrates the process on a data set named “exemplars_df”: loading the necessary library, reading the data set, removing duplicates, adding a word-count column, separating the data based on word count, and finally saving these subsets into separate CSV files.
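For readers who do not work in R, the same preprocessing logic can be sketched in Python. This is not the authors' script; it is a minimal illustration that assumes exemplars arrive as a list of strings and that duplicates are matched after trimming whitespace and ignoring case. The function names and output file names are hypothetical.

```python
import csv


def preprocess(exemplars):
    """Remove duplicate exemplars and bucket the rest by word count.

    Assumption: two exemplars count as duplicates when they match after
    collapsing whitespace and ignoring case; the first spelling is kept.
    """
    seen = set()
    buckets = {"one_word": [], "two_words": [], "more_than_two_words": []}
    for raw in exemplars:
        text = " ".join(raw.split())  # trim and collapse whitespace
        key = text.lower()
        if not key or key in seen:
            continue  # skip empty entries and duplicates
        seen.add(key)
        n_words = len(text.split())
        if n_words == 1:
            buckets["one_word"].append(text)
        elif n_words == 2:
            buckets["two_words"].append(text)
        else:
            buckets["more_than_two_words"].append(text)
    return buckets


def save_buckets(buckets, prefix="exemplars"):
    """Write each bucket to its own CSV file with a word-count column."""
    for name, items in buckets.items():
        path = f"{prefix}_{name}.csv"
        with open(path, "w", newline="", encoding="utf-8") as handle:
            writer = csv.writer(handle)
            writer.writerow(["exemplar", "word_count"])
            for item in items:
                writer.writerow([item, len(item.split())])
```

Calling `preprocess` on the raw exemplar list and then `save_buckets` on the result produces three files that can be distributed to coders.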

Furthermore, if the research entails translating materials from their original language to the language in which they will be published, it is crucial to employ rigorous translation methods to ensure accuracy and cultural relevance. We recommend the use of translation and back-translation (Brislin, 1970), which involves retranslating translated content back into the original language and then comparing it with the original version. This process helps ensure linguistic equivalence in instructions, questionnaires, and exemplars, as demonstrated in Study 1 of Joo et al. (2019) and Sun et al. (2024). Back-translation may be seen as controversial because of its potential for introducing error variance in the meaning of specific features (Behr, 2017), and translation continues to be a significant challenge in cross-cultural research. We therefore suggest combining online translation tools, such as Google Translate, with traditional translation methods. Specifically, as demonstrated in Goyal et al. (2021), the process would begin with online translation, followed by traditional proofreading and editing. This hybrid method allows the translation to be performed swiftly while human proofreading supports its accuracy and validity.

NVivo assisted (see supplementary materials on OSF)

Maher et al. (2020) uploaded exemplars into NVivo Plus for textual analysis, conducted a word-frequency analysis, and grouped synonyms using NVivo’s internal dictionary. This process categorized words and their synonyms, assigning a weighted percentage that represented their frequency in relation to the total word count analyzed. Specifically, only categories with a weighted percentage above 0.5% were initially considered significant for feature formation. Subsequently, categories unrelated to the concept (e.g., “something” and “someone”), categories lacking specific concept relevance, and categories that directly named the concept (e.g., “disillusioned” and “disillusionment”) were excluded. To ensure comprehensive representation, the excluded categories were reevaluated, and those describing the same feature as the retained categories were added back at a lower threshold of 0.1%. This step was crucial to adjust the weighted frequencies and refine the representativeness of each feature. The NVivo analysis thus provided a weighted percentage for each feature, offering an initial assessment of their representativeness and indicating how readily individuals associate different features with the concept (see Fig. 5).

Fig. 5. Guide for coding exemplars into features using NVivo. This outlines the step-by-step process for coding exemplars into features using NVivo, ensuring a systematic and data-driven approach to feature identification.
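The logic of the frequency thresholds can be approximated outside NVivo. The following Python sketch is only an approximation: it computes each word category's share of the total word count and applies the 0.5% cutoff, and it does not reproduce NVivo's internal dictionary or its exact weighting. The synonym map, the exclusion list, and the function names are hypothetical.

```python
from collections import Counter


def weighted_percentages(exemplars, synonym_map=None):
    """Return each word category's percentage of all words analyzed.

    synonym_map is a hypothetical stand-in for a synonym dictionary:
    it maps a word to the category label it should be counted under.
    """
    synonym_map = synonym_map or {}
    words = [
        synonym_map.get(word, word)
        for exemplar in exemplars
        for word in exemplar.lower().split()
    ]
    counts = Counter(words)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {word: 100.0 * count / total for word, count in counts.items()}


def candidate_categories(percentages, threshold=0.5, excluded=()):
    """Keep categories above the threshold that are not explicitly excluded."""
    return {
        word: pct
        for word, pct in percentages.items()
        if pct > threshold and word not in excluded
    }


# Toy data: "courageous" is counted under "brave"; "someone" is excluded
# because it is unrelated to the concept.
toy_exemplars = ["brave", "courageous", "someone brave", "kind"]
toy_percentages = weighted_percentages(toy_exemplars, {"courageous": "brave"})
toy_candidates = candidate_categories(toy_percentages, excluded=("someone",))
```

The reevaluation step at the 0.1% threshold could reuse `candidate_categories` with `threshold=0.1` on the categories that coders judge to describe an already retained feature.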

Word-embeddings assisted (see supplementary materials on OSF)

In addition, we provide a way of using word embeddings to sort exemplars into features. Word embeddings are a type of word representation in natural language processing that maps words or phrases from a vocabulary to vectors of real numbers, representing them in a continuous vector space (Kozlowski et al., 2019; Pennington et al., 2014). Each word is represented by an ordered vector, known as a word embedding. The closer two words are in this high-dimensional space (i.e., the more similar their vector embeddings are), the more similar they are expected to be in meaning (Kozlowski et al., 2019; Pennington et al., 2014). Word embeddings are typically obtained through models such as Word2Vec, GloVe, and FastText, trained on large text corpora. This representation captures complex relationships between words and allows for a nuanced understanding of language, facilitating tasks such as text classification, sentiment analysis, and machine translation (see Arseniev-Koehler, 2024; Charlesworth et al., 2023).
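Closeness in the embedding space is commonly quantified with cosine similarity. The short Python sketch below illustrates the idea with made-up three-dimensional vectors; real embeddings from models such as Word2Vec or GloVe have hundreds of dimensions, and the values here are purely hypothetical.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors (1 = same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Toy vectors, invented for illustration only.
toy_vectors = {
    "brave": [0.9, 0.1, 0.0],
    "courageous": [0.8, 0.2, 0.1],
    "wealthy": [0.0, 0.1, 0.9],
}

similar = cosine_similarity(toy_vectors["brave"], toy_vectors["courageous"])
dissimilar = cosine_similarity(toy_vectors["brave"], toy_vectors["wealthy"])
```

With these toy values, “brave” lies much closer to “courageous” than to “wealthy,” which is the property that embedding-assisted coding relies on.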

In prototype analyses, it is crucial to precisely understand the semantic similarity between exemplars for accurate feature coding. Word embeddings, which are vector representations of words derived from their contextual relationships, present a powerful tool for this purpose. By employing techniques such as vector scoring for coding exemplars and using methods such as k-means clustering, word embeddings can help organize and interpret data more efficiently. This approach can potentially reduce bias in the analyses by relying on more objective, data-driven methods. We provide an example of using word embeddings to code exemplars into features in Figure 6. Note that using word embeddings requires careful consideration, especially regarding data-set size. As highlighted by Wang et al. (2022), the effectiveness of these models can diminish in specialized domains in which language use diverges significantly from everyday discourse. Despite this limitation, these methods can facilitate the coding process in prototype analyses. Other guides have demonstrated the use of natural language processing for coding (see Kjell et al., 2023; Wang et al., 2022). Researchers should therefore choose methods for the coding procedure that are appropriate for their specific data.

Fig. 6. Guide for using word embeddings to code exemplars into features. This outlines an example of using word embeddings to code exemplars. Although word embeddings and k-means clustering for prototype analysis can provide insights into feature groupings based on semantic similarity, researchers should ensure that the results adequately represent all exemplars. Some exemplars may not be well captured through automated clustering because of nuances in meaning or context.
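To make the clustering step concrete, the following Python sketch implements a bare-bones k-means on toy two-dimensional vectors. It is an illustration under simplifying assumptions, not the procedure in the supplementary materials: the vectors are invented, the initial centroids are simply the first k points, and a real analysis would use pretrained embeddings and an established clustering library.

```python
def squared_distance(u, v):
    """Squared Euclidean distance between two vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def assign(points, centroids):
    """Label each point with the index of its nearest centroid."""
    labels = []
    for point in points:
        distances = [squared_distance(point, c) for c in centroids]
        labels.append(distances.index(min(distances)))
    return labels


def kmeans(points, k, n_iter=20):
    """Plain k-means. Assumption: the first k points seed the centroids."""
    centroids = [list(p) for p in points[:k]]
    for _ in range(n_iter):
        labels = assign(points, centroids)
        updated = []
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                updated.append([sum(dim) / len(members) for dim in zip(*members)])
            else:
                updated.append(centroids[c])  # keep an empty cluster's centroid
        if updated == centroids:
            break  # converged
        centroids = updated
    return assign(points, centroids), centroids


# Toy exemplars with invented 2-D "embeddings".
toy_exemplars = ["brave", "kind", "courageous", "fearless", "caring", "compassionate"]
toy_points = [[0.9, 0.1], [0.1, 0.9], [0.8, 0.2], [0.85, 0.15], [0.2, 0.8], [0.15, 0.85]]

toy_labels, toy_centroids = kmeans(toy_points, k=2)
clusters = {}
for exemplar, label in zip(toy_exemplars, toy_labels):
    clusters.setdefault(label, []).append(exemplar)
```

Each resulting cluster is only a candidate feature; as the caption above notes, human coders should still check that the groupings represent all exemplars adequately.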

Ecological validity of prototypical features

After the centrality ratings of the features are obtained, the intraclass correlation coefficient (ICC) is computed, treating the features as cases and the participants as items, to assess the reliability of the ratings (Maher et al., 2020; Sun et al., 2024). In addition, Cross et al. (2014) investigated the overlap in the features identified by American and Turkish participants by using the index of interprototype similarity. This index reflects the ratio of shared to unique attributes in pairs of feature lists. For example, the researchers reported a ratio of .14, which reflects a low level of similarity between the two lists, and concluded that there were substantial differences in the features generated by the two groups of participants.
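Both indices can be sketched in a few lines of Python. Two assumptions are made here that the text does not settle: the ICC is computed as the two-way, consistency, average-measures form (often written ICC(3,k)), and the interprototype similarity index is taken as the number of shared features divided by the number of features that appear in only one list. The cited studies may have used different variants, and the function names and data are hypothetical.

```python
def icc_consistency_average(matrix):
    """ICC(3,k): rows are features (cases), columns are participants.

    Assumption: the two-way consistency, average-measures form is wanted.
    """
    n = len(matrix)      # number of features
    k = len(matrix[0])   # number of participants
    grand = sum(sum(row) for row in matrix) / (n * k)
    row_means = [sum(row) / k for row in matrix]
    col_means = [sum(col) / n for col in zip(*matrix)]
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in matrix for x in row)
    ss_error = ss_total - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / ms_rows


def interprototype_similarity(features_a, features_b):
    """Ratio of shared features to features unique to either list.

    Assumption: "unique" counts features that appear in only one list.
    """
    set_a, set_b = set(features_a), set(features_b)
    shared = len(set_a & set_b)
    unique = len(set_a ^ set_b)
    return float("inf") if unique == 0 else shared / unique


# Toy data: two raters who differ only by a constant are perfectly consistent.
toy_ratings = [[1, 2], [3, 4], [5, 6]]
toy_icc = icc_consistency_average(toy_ratings)

toy_similarity = interprototype_similarity(
    ["honest", "respected", "brave", "loyal"],
    ["honest", "wealthy", "proud", "famous"],
)
```

In the toy example, one shared feature against six unique features gives a ratio of about .17, a value in the same low range as the .14 discussed above.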

Furthermore, researchers typically validate the centrality of the features in subsequent studies, for example, by showing that central features are more salient in memory and elicit shorter response times than peripheral features (Fehr, 1988; Kearns & Fincham, 2004; Kinsella et al., 2015). For example, Fehr et al. (1982) examined the prototypicality of emotions and found that central members of the concept were identified more quickly than peripheral members. This difference was attributed to the greater amount of deliberation required during the recognition of peripheral features. In Gulliford et al.’s (2021) study of prototypical features of virtue, participants were presented with 16 character descriptions. In the central condition, four characters (e.g., Person A, Person B, Person C, and Person D) displayed central features. In the other three conditions, the characters displayed peripheral, marginal, and remote features. Participants were asked to rate “how virtuous is this person,” and it was expected that characters displaying central features would be rated as more virtuous than characters displaying less central features (e.g., peripheral, marginal, or remote features). Furthermore, Gulliford et al. conducted a recognition-memory experiment, an implicit task, to investigate whether feature centrality influenced recognition memory of features. Specifically, participants were presented with 40 words (10 central and 10 peripheral features that had been presented in the previous person-description task and 10 central and 10 peripheral features that had not been presented in the previous task) and asked to decide whether each word had been presented in the character descriptions. Gulliford et al. argued that central features of virtue that had not been presented would be falsely recognized (i.e., inaccurately recognized as having been presented in the previous task) more frequently than peripheral features that had not been presented in the previous task. Seuntjens et al. (2015) examined the ecological validity of the prototype of greed. Participants were required to recall a real-life situation in which they felt greedy. The researchers argued that if central features are more strongly associated with greed than peripheral features, then autobiographical events should be better described by central features than by peripheral features. Moreover, central features should discriminate well between greedy and everyday events. Fehr and Sprecher (2009) verified the prototype structure of compassionate love by investigating category-based inferences and found that when compassionate love is activated, prototypical (not nonprototypical) features of compassionate love were reflected in the kinds of logical judgments that individuals make. Hepper et al. (2012) employed both central and peripheral features in a novel manipulation of nostalgia and examined whether events triggered using those features alone would provide the psychological benefits of nostalgia.
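The false-recognition prediction in the recognition-memory task can be scored with a simple tally. The Python sketch below is a hypothetical illustration: the trial format and field names are assumptions, not the data structure used in the cited studies.

```python
def false_recognition_rates(trials):
    """Proportion of non-presented words judged as presented, by centrality.

    Each trial is a dict with hypothetical keys:
      "centrality": "central" or "peripheral"
      "presented": True if the word appeared in the earlier task
      "said_presented": True if the participant judged it as presented
    """
    totals = {}
    false_alarms = {}
    for trial in trials:
        if trial["presented"]:
            continue  # only new words can be falsely recognized
        level = trial["centrality"]
        totals[level] = totals.get(level, 0) + 1
        false_alarms[level] = false_alarms.get(level, 0) + int(trial["said_presented"])
    return {level: false_alarms[level] / totals[level] for level in totals}


# Toy trials, invented for illustration.
toy_trials = [
    {"centrality": "central", "presented": False, "said_presented": True},
    {"centrality": "central", "presented": False, "said_presented": True},
    {"centrality": "central", "presented": False, "said_presented": False},
    {"centrality": "central", "presented": True, "said_presented": True},
    {"centrality": "peripheral", "presented": False, "said_presented": False},
    {"centrality": "peripheral", "presented": False, "said_presented": True},
    {"centrality": "peripheral", "presented": False, "said_presented": False},
    {"centrality": "peripheral", "presented": False, "said_presented": False},
]

toy_rates = false_recognition_rates(toy_trials)
```

A higher false-recognition rate for central than for peripheral words, tested inferentially across participants, would be consistent with the prototype structure.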

In cross-cultural research, a modified prototype approach is often employed, typically involving only the first two steps of the prototype approach, namely, feature generation and centrality rating. However, examining the validity of prototypical features of a concept remains crucial. For instance, Cross et al. (2014) investigated the cultural differences and similarities in centrality ratings of features and their underlying latent structures. They were particularly interested in exploring whether there were differences or similarities in the dimensions underlying these ratings between the two groups. Their findings indicated that the concept of honor in Turkey is more complex and differentiated than in the United States. In addition, the researchers assessed the validity of the dimensions of honor in relation to theoretically related constructs, such as social approval and virtue.

To enhance the examination of the validity of prototype features generated by participants from different cultures, we propose the implementation of discriminant function analysis (DFA; see supplementary materials on OSF). DFA extracts a linear combination of variables that maximizes the differences between natural group means and identifies the variables that predict group membership from a set of predictors (Ramos & Liow, 2012). Hence, DFA can demonstrate how distinct the categories are from one another based on the entire set of themes or features. By using DFA, researchers can determine whether group membership (e.g., Chinese vs. American) can be reliably predicted by the ratings of the features and which features best distinguish the two groups (see Sun et al., 2024). For example, DFA has been conducted to investigate whether the self-reported models of parenting of mothers from different cultures predicted the mothers’ cultural membership (Keller et al., 2006) and to examine whether the results of collectivism and contextualism scales could effectively differentiate between males and females across five cultures (Kashima et al., 1995). The DFA typically consists of two steps. First, Wilks’s lambda is used to assess whether the discriminant model is significant. Second, if the model is significant, features of the concept are assessed to determine which differ significantly by group, and group membership is classified. Figure 7 demonstrates the specific steps for conducting DFA in prototype analyses.

Fig. 7. Guide for conducting discriminant function analysis (DFA) for prototype analyses in cross-cultural research. The process begins with testing for equality of group means to determine whether there are statistically significant differences. Then, DFA is performed by using features as predictor variables and group membership as the criterion variable. The model is assessed by evaluating eigenvalues and the structure matrix to determine feature contributions, followed by model validation using classification matrices. Finally, the results are interpreted to identify which features most effectively discriminate between groups.
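The core of a two-group DFA can be illustrated with Fisher's linear discriminant. The Python sketch below is a simplified illustration and not the analysis in the supplementary materials: it assumes two groups, equal prior probabilities, and a pooled covariance matrix, and it omits the significance test (Wilks's lambda) and the structure matrix. Group labels, function names, and ratings are hypothetical.

```python
def mean_vector(rows):
    """Column means of a list of equal-length rating vectors."""
    return [sum(col) / len(rows) for col in zip(*rows)]


def scatter_matrix(rows, centre):
    """Sum of outer products of deviations from the group mean."""
    p = len(centre)
    scatter = [[0.0] * p for _ in range(p)]
    for row in rows:
        dev = [x - m for x, m in zip(row, centre)]
        for i in range(p):
            for j in range(p):
                scatter[i][j] += dev[i] * dev[j]
    return scatter


def solve(a, b):
    """Solve a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b_i] for row, b_i in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("pooled covariance matrix is singular")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_two_group_dfa(group_a, group_b):
    """Return discriminant weights and a cutoff (equal priors assumed)."""
    mean_a, mean_b = mean_vector(group_a), mean_vector(group_b)
    scatter_a = scatter_matrix(group_a, mean_a)
    scatter_b = scatter_matrix(group_b, mean_b)
    dof = len(group_a) + len(group_b) - 2
    pooled = [[(x + y) / dof for x, y in zip(ra, rb)]
              for ra, rb in zip(scatter_a, scatter_b)]
    weights = solve(pooled, [a - b for a, b in zip(mean_a, mean_b)])
    midpoint = [(a + b) / 2 for a, b in zip(mean_a, mean_b)]
    cutoff = sum(w * m for w, m in zip(weights, midpoint))
    return weights, cutoff


def classify(row, weights, cutoff):
    """Assign a participant to group "A" or "B" from the feature ratings."""
    score = sum(w * x for w, x in zip(weights, row))
    return "A" if score > cutoff else "B"


# Toy centrality ratings on two features for two hypothetical groups.
group_a = [[7, 3], [8, 2], [6, 4], [7, 2]]
group_b = [[3, 6], [2, 7], [4, 6], [3, 8]]
weights, cutoff = fit_two_group_dfa(group_a, group_b)
predicted_a = [classify(row, weights, cutoff) for row in group_a]
predicted_b = [classify(row, weights, cutoff) for row in group_b]
```

The sign and size of each weight indicate how a feature contributes to separating the groups, and comparing predicted with actual membership yields the classification matrix mentioned in the caption. Classification accuracy computed on the same data used for fitting is optimistic, so cross-validation would be advisable in practice.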

Risks of conducting prototype analyses

Note that prototype analyses have their own limitations. Researchers have criticized prototype theories, pointing out that some concepts may lack a prototypical structure because individuals do not hold clear opinions about the central tendencies of the corresponding categories (Laurence & Margolis, 1999). It is also possible that the prototype of a concept may not remain constant across various situations. For instance, Kearns and Fincham (2004) noted limitations in the prototype analyses of forgiveness, such as how the prototype may differ across different types of transgressions and how future research should examine the stability of the prototype across various types of situations. Furthermore, prototype analyses have been criticized for being potentially limited by the time at which they are conducted (Birnie-Porter & Lydon, 2013). However, we argue that by carefully designing the different steps of prototype analyses, it is possible to ensure that the identified feature prototypes remain relevant and resilient to changes over time. For example, in centrality ratings, people might value features aligned with their cultural values as more important than others even if the features of the concept change over time. Such features, deeply rooted in cultural values, are likely to retain their significance even as other aspects evolve. Moreover, human bias in the coding process of prototype analyses can be another limitation. We have previously outlined recommended steps for employing computational methods to aid in coding (see other steps in Wang et al., 2022). In addition, newer large language models based on transformer technology, such as the generative pretrained transformer (GPT), have the potential to either match or assist human performance in coding exemplars into features. We demonstrated this in the supplementary materials (available on OSF). For instance, Hamilton et al. (2023) conducted a comparison between human-generated qualitative analyses and those produced by ChatGPT. Their findings suggest that ChatGPT can effectively complement complex human-centered tasks, such as qualitative-research analyses, helping to identify oversights, alternative frames, and personal biases. We recommend that human coding be used as a crucial step for validation and refinement, complementing the computational methods we have discussed. This integrated approach ensures a more robust and comprehensive analysis.

Discussion

We outlined the potential for prototype analyses in understanding and comparing concepts across different cultural contexts. Prototype analyses offer several distinct advantages in cross-cultural research in the field of psychology. First, they allow for a more nuanced and culturally sensitive exploration of concepts because they enable the examination of how different cultures understand and prioritize various features of a concept. Second, prototype analyses facilitate the identification of both unique and shared elements across cultures, thereby highlighting universal patterns in human cognition and behavior while also appreciating cultural specificity. In addition, by allowing participants to define concepts in their own cultural terms, prototype analyses minimize the risk of construct bias and enhance the ecological validity of research findings. These advantages make prototype analyses especially adept at uncovering the complexities and rich variations inherent in cross-cultural studies.

Empirical psychologists face the challenge of adequately defining everyday social phenomena from a theoretical perspective (Gregg et al., 2008). This challenge underscores the necessity for scientific concepts to be validated, to resonate with the everyday understandings from which they originate, and to be applicable across cultures. We noted that the prototype approach empowers individuals from diverse cultures to define a concept in their own terms. This enables researchers to investigate the variations in the perceived importance of certain features of a concept across different cultures and to delve into the reasons behind these variations in prototypical features. The prototype approach also holds significant potential in addressing the common challenges of cross-cultural research, a field particularly susceptible to bias. For example, some psychological constructs may hold validity in one cultural context but may not be interpreted or defined similarly in others (van de Vijver & Leung, 2021). Thus, it is essential to investigate a construct deeply rooted in a specific cultural context within that context to obtain meaningful insights and simultaneously assess its universal relevance. Researchers have outlined methods to assess measurement equivalence in cross-cultural studies, ensuring the avoidance of bias (Davidov et al., 2014; Luong & Flake, 2023). For example, to avoid construct bias, researchers have shown that participants from different cultures can provide insights into the perception of certain concepts in their societies by completing sentences with culturally relevant phrases (see van de Vijver & Tanzer, 2004). Focus groups can also be an effective tool for identifying cross-cultural differences (Harding, 2018; Johnson, 1998). We suggest that conducting prototype analyses on concepts or constructs of interest in various cultures could also potentially help avoid construct bias.

Prototype analyses focus on identifying the internal features of concepts and may also offer a unique solution to the replication crisis in cross-cultural psychology. This crisis, characterized by difficulties in replicating research findings across various cultural contexts, often arises from biases and issues of equivalence in manipulations and procedures. It also concerns whether measurements obtained in different cultural groups are comparable (Milfont & Klein, 2018). Milfont and Klein (2018) provided comprehensive recommendations for implementing cross-cultural replications, covering various stages of the research process. These suggestions span the design phase, implementation phase, and reporting phase and extend to considerations for the postpublication phase. Their guidance aims to enhance the validity and reliability of research across different cultural contexts, addressing each phase with specific, tailored advice. We further recommend the use of prototype analyses as a method to examine concepts across cultures. By focusing on culturally specific prototypes, researchers can gain a deeper understanding of how different cultures perceive and categorize various concepts. This method enhances the cultural sensitivity of research designs and interpretation, leading to more accurate and replicable findings in cross-cultural studies. Prototype analyses, therefore, complement the stages outlined by Milfont and Klein, offering an additional, robust tool for addressing the unique challenges of cross-cultural psychology research.

In conclusion, we highlight the significant potential of prototype analyses in the field of cross-cultural research. This analytical approach offers a unique perspective on how people from different cultures perceive and categorize various phenomena, thus contributing to a more profound and nuanced understanding of cognitive representations across diverse cultural contexts. As demonstrated, the implementation of prototype analyses in cross-cultural studies can lead to more robust and culturally sensitive research findings. The insights and methodologies presented here are not merely conceptual contributions but also practical tools for researchers, paving the way for more effective, unbiased, and culturally informed psychological research.

Supplemental Material

Supplemental material, sj-docx-1-amp-10.1177_25152459241296401 for A Guide to Prototype Analyses in Cross-Cultural Research: Purpose, Advantages, and Risks by Yuning Sun, Elaine L. Kinsella and Eric R. Igou in Advances in Methods and Practices in Psychological Science

Footnotes

Transparency

Action Editor: David A. Sbarra

Editor: David A. Sbarra
