Abstract

Research synthesis is based on the assumption that when the same association between constructs is observed repeatedly in a field, the relationship is probably real, even if its exact magnitude can be debated. Yet the probability that the relationship is real is a function not only of recurring results, but also of the quality and consistency of the empirical procedures that produced those results and that any meta-analysis necessarily inherits. Standardized protocols in data collection, analysis, and interpretation are foundations of empiricism and a healthy sign of a discipline’s maturity. I propose that meta-analysis as typically applied in psychology will benefit from complementing aggregation of observed effect sizes with systematic examination of the standardization of the methodology that deterministically produced them. I describe potential units of analysis and offer two examples illustrating the benefits of such efforts. Ideally, this synergetic approach will advance theory by improving the quality of meta-analytic inferences.

For the past 8 years, psychologists have undergone a phase of intense self-examination regarding standards and practices in the production, dissemination, and evaluation of knowledge. This critical scrutiny has covered integral aspects of psychological science’s entire enterprise, including the maintenance of psychological theories (Ferguson & Heene, 2012); the procedures by which given hypotheses are confirmed or disconfirmed and by which new research questions are generated and explored (Wagenmakers, Wetzels, Borsboom, van der Maas, & Kievit, 2012); prevailing norms in the collection (Lakens & Evers, 2014), analysis (Wagenmakers, Wetzels, Borsboom, & van der Maas, 2011), reporting (Nuijten, Hartgerink, van Assen, Epskamp, & Wicherts, 2016), and sharing (Wicherts, Bakker, & Molenaar, 2011) of data; the consistency and accuracy of interpretations of observed effect sizes in various subfields (e.g., industrial-organizational psychology and management—Wiernik, Kostal, Wilmot, Dilchert, & Ones, 2017; personality and individual differences—Gignac & Szodorai, 2016; language learning—Plonsky & Oswald, 2014; cognitive neuroscience—Szucs & Ioannidis, 2017); publication practices (Nosek & Bar-Anan, 2012), peer review, and quality management (Wicherts, Kievit, Bakker, & Borsboom, 2012); the reproducibility and replicability of research findings (Open Science Collaboration, 2015); the communication of these findings to the public (Sumner et al., 2014); and the contingent incentive structures under which academics operate (Nosek, Spies, & Motyl, 2012).

Psychological scientists have admitted that they frequently use questionable research practices (John, Loewenstein, & Prelec, 2012) that improve their odds of publishing their research given the discipline’s rigid preference for statistically significant results (Kühberger, Fritz, & Scherndl, 2014). This admission and an increasing number of failures to replicate empirical findings (Hagger et al., 2016; Open Science Collaboration, 2015; Wagenmakers et al., 2016) have worked in concert to erode trust in psychological science. Introductory textbooks, meta-analyses, literature reviews, and policy statements are all built on decades of (purportedly) cumulative research, but how reliable is this knowledge?

Methodological Flexibility and Meta-Analyses: Known Knowns and Known Unknowns

Doubts about the reliability of psychological research have emerged, in particular, with regard to opaque reporting of data-collection rules, flexible use of methods and measurement, and arbitrary analytic and computational procedures. This class of behaviors, which collectively facilitate inflated probabilities of obtaining and subsequently publishing statistically significant results, in concert with the omission of analyses yielding (potentially conflicting) nonsignificant results, is colloquially known as p-hacking (Simonsohn, Nelson, & Simmons, 2014a). We know that these strategies can be quite effective, alone or in conjunction (see, e.g., Schönbrodt, 2016), and we know that psychologists use these strategies frequently (John et al., 2012; LeBel et al., 2013).

Given that p-hacking, by definition, has resulted in relevant information being omitted from the public record, identifying whether questionable research practices have shaped the results in published research reports (and if so, which practices have been involved) is an intricate process. Outcome switching, optional stopping of data collection, and the use of multiple computation techniques for the same target variable are all known types of methodological flexibility, yet which of these behaviors and procedures were appropriated to produce inflated proportions of statistically significant results in any particular case is usually unknown. This problem is exacerbated when individual studies are combined in an attempt to synthesize a body of research (LeBel, McCarthy, Earp, Elson, & Vanpaemel, 2018).

Effective meta-analyses in psychology inform researchers about (a) the mean and variance of underlying population effects, (b) variability in effects across studies, and (c) potential moderator variables (Field & Gillett, 2010). They do so by identifying a relevant population of research reports and selecting those meeting predefined inclusion criteria, such as adherence to methodological gold standards.
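To make points (a) and (b) concrete, the following is a minimal sketch of a random-effects summary using the DerSimonian-Laird estimator, which is one common choice among several and is not prescribed by the sources cited above. The function name `random_effects_summary` and the effect sizes and sampling variances in the usage lines are hypothetical and serve only as illustration.

```python
import math


def random_effects_summary(effects, variances):
    """DerSimonian-Laird random-effects summary of study effect sizes.

    effects:   observed effect sizes, one per study
    variances: sampling variances of those effect sizes
    """
    k = len(effects)
    w = [1.0 / v for v in variances]  # inverse-variance (fixed-effect) weights
    fixed_mean = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)

    # Cochran's Q: weighted squared deviations from the fixed-effect mean
    q = sum(wi * (yi - fixed_mean) ** 2 for wi, yi in zip(w, effects))
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)

    # Between-study variance, truncated at zero
    tau2 = max(0.0, (q - (k - 1)) / c)

    # Random-effects weights add tau2 to each study's sampling variance
    w_star = [1.0 / (v + tau2) for v in variances]
    mean = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    se = math.sqrt(1.0 / sum(w_star))
    return {"mean": mean, "se": se, "tau2": tau2, "q": q}


# Hypothetical effect sizes and sampling variances, for illustration only
summary = random_effects_summary([0.10, 0.35, 0.60, 0.20], [0.02, 0.03, 0.05, 0.01])
print(summary)
```

The estimate `tau2` quantifies variability across studies, but it cannot indicate whether that variability reflects moderators, methodological flexibility, or differences in what the studies actually measured.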

As p-hacking and the file-drawer problem (i.e., greater difficulty publishing statistically nonsignificant results than publishing statistically significant results) typically work in conjunction with each other, any attempt at (quantitative) research synthesis becomes quite cumbersome. Certainly, there is an abundance of techniques for estimating and correcting bias (Schmidt & Hunter, 2015), such as the test of insufficient variance (Schimmack, 2014), the p-uniform method (van Assen, van Aert, & Wicherts, 2015), the p-curve method (Simonsohn et al., 2014a, 2014b), trim-and-fill-based and funnel-plot-based methods (Duval & Tweedie, 2000), the precision-effect test and the use of precision-effect estimates with standard error (known as PET-PEESE; Stanley & Doucouliagos, 2014), and various selection models (McShane, Böckenholt, & Hansen, 2016). These techniques may reveal that some unknown bias is present in a population of statistical results. Yet when a body of empirical research is meta-analyzed, researchers might have trouble determining the extent to which an obtained effect-size estimate is affected specifically by publication bias, methodological flexibility, or an interaction of the two. Furthermore, although such statistical estimation techniques are helpful in demonstrating that a body of research exhibits signs of bias or questionable research practices, they might be less effective in identifying the specific type of bias or practice a literature is affected by. Two recent simulation studies (Carter, Schönbrodt, Gervais, & Hilgard, 2019; Renkewitz & Keiner, 2018) compared bias-estimation techniques (in several models of publication bias and p-hacking) and found that (a) no method consistently outperformed the others, (b) the methods’ performance was poor under specific assumptions (that may be applicable to psychological research domains), and (c) researchers usually do not have the information required for selecting the best technique in a given case.

Generally, research synthesis (meta-analytic or otherwise) is based on the assumption that when the same association between constructs is observed repeatedly in a field, the relationship is probably real, even if its exact magnitude can be debated (e.g., Ellis, 2010). Yet the probability that the relationship is real is a function not only of recurring patterns of results on the construct level, but also of the quality and consistency of data collection, measurement, transformation of the data, computation, and reporting. Any meta-analysis necessarily inherits the properties of those empirical practices. With increasing diversity, the certainty that methods used in studies purportedly assessing the same constructs actually provide evidence about the same relationship decreases. Without a gold standard of assessment agreed upon by a scientific community, it can be quite difficult to estimate the consequences of inconsistent methodological approaches. And the more the consistency of research outcomes in a given field depends on flexibility in methods and measures, the greater the probability that the real association approaches zero.

Meta-Method Analysis

Meta-analyses might therefore benefit if meta-analysts complement the aggregation of observed effect sizes with systematic examination of the standardization of the methodologies that produced them. Standardized protocols in data collection, analysis, and interpretation are foundations of empirical research and a healthy sign of a discipline’s maturity. Further, agreement on the implementation of scientific procedures (i.e., standardization) and documentation of those procedures in instruction manuals and handbooks (so that the “how” is prescribed and the influence of “who,” “where,” and “when” on data quality is reduced) are fundamental to the objectivity of research because they guide scientists and facilitate the independence of psychological experiments or tests from basic boundary conditions. The extent to which researchers in a given field have been able to agree (either implicitly or explicitly) on methodological prescriptions is key to the degree to which meaningful inferences can be extracted from meta-analytic effect-size estimates.

In this article, I propose a set of steps for evaluating the standardization of methodology in order to facilitate research synthesis. These steps do not constitute a wholly new meta-analytic procedure. Rather, they should be considered a specific type of literature review procedure that is typically overlooked in psychological meta-analyses, which are largely concerned with estimating the true distribution of effect sizes observed in primary research, and less concerned with systematically identifying, coding, and evaluating study methodology. Guidelines for systematic reviews in medicine (Higgins & Green, 2011) and social sciences (The Campbell Collaboration, 2019) require giving substantial attention to critically evaluating the methodologies used in a literature and the limits these place on conclusions, recognizing that searching for and evaluating studies is separate from meta-analytic modeling of study outcomes. Reporting guidelines for systematic reviews, such as the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) statement (Moher, Liberati, Tetzlaff, & Altman, 2009) and psychology’s Journal Article Reporting Standards (JARS; Appelbaum et al., 2018), demand that meta-analysts describe how they assessed bias in both the design and the outcomes of individual studies, and how this assessment informed the synthesis itself. Therefore, the present article should be understood as an attempt at reintroducing these critical steps into the process of synthesizing psychological research.

Does this mean that the suggestions made here are simply rephrasings of the purpose and procedures of the “systematic review” part of meta-analyses? Although there certainly might be some redundancy when these steps are incorporated into the meta-analysis routine, particularly with regard to the systematic search for and screening of primary studies, it may be valuable to use the term meta-method analysis to refer specifically to the critical evaluation of the designs and analytic choices in a literature. Further, to echo Puljak (2019), methodological studies evaluating evidence are not necessarily systematic reviews. Whereas the purpose of the latter is to identify and pool all available empirical evidence (whose relevance is determined through predefined criteria) to answer a substantive research question, the aim of the former is to explore research practices and answer methodological questions without necessarily collating all empirical studies.

Aims and purpose

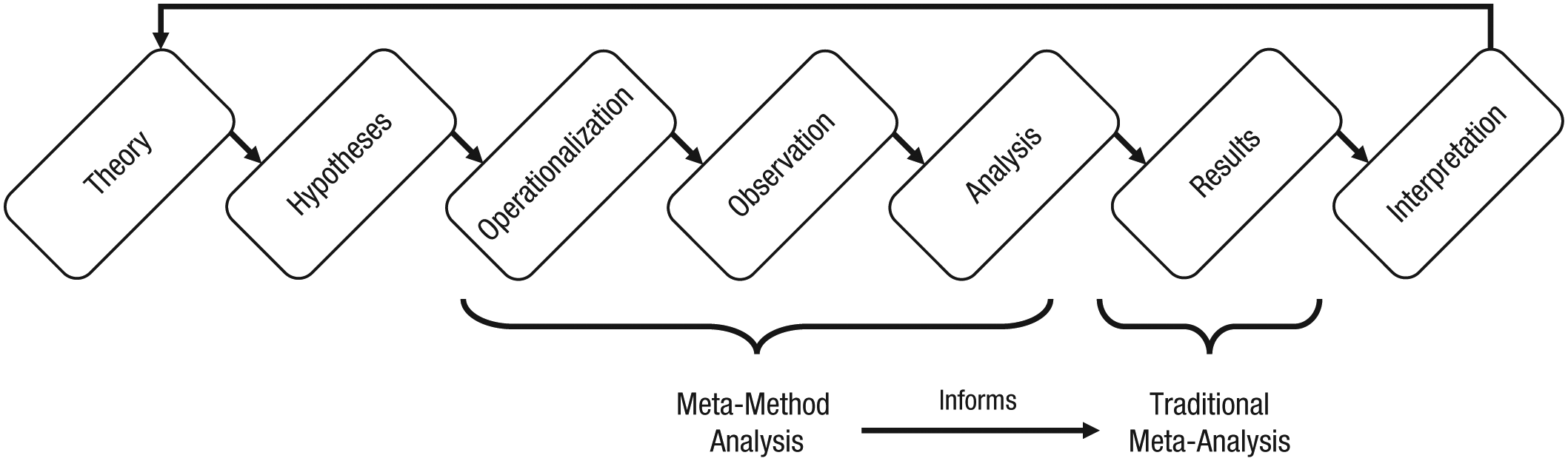

Examining the degree of standardization is valuable for contextualizing and evaluating observed meta-analytic effect sizes. In fact, standardization is, to some degree, a prerequisite of any meaningful synthesis of a research literature. It informs researchers about the psychometric objectivity of results, the agreed definitions of constructs and how they should be measured, the existence or lack of prevailing norms regarding what should be considered robust evidence, and the latent meaning of previous observations. Whereas meta-analyses in psychology are typically concerned with the synthesis of outcomes of studies (e.g., effect sizes), meta-method analyses dissect procedural decisions that deterministically lead to study outcomes (see Fig. 1). A side-by-side comparison of procedural characteristics or analytic strategies of studies on the same research question, ideally using the same empirical paradigm, may reveal idiosyncratic flexibility and peculiar deviations of individual studies in areas with consistent disciplinary norms (for an example, see Simonsohn, 2013) or endemic flexibility in areas where there is no standardization at all.

Fig. 1. The empirical cycle, which involves iteratively generating or modifying theory, deriving hypotheses, conducting studies, and interpreting their outcomes. Traditionally, meta-analysis of psychological studies has involved synthesizing effect sizes from a set of systematically selected studies. Meta-method analysis informs this synthesis with an examination of the study corpus’s operationalization of constructs, observation and measurement choices, and analytic methodologies.

Units of analysis

Any procedural routine, such as operationalization, data collection, computation, analysis, or interpretation, may be the subject of a systematic meta-method analysis. The extent to which a comprehensive meta-method analysis indicates the robustness and replicability of the body of research and informs the results of a meta-analysis naturally depends on the research question. Whereas, for example, diverse samples and sampling methods might be considered healthy in the literature on predictors of psychological well-being in the general population, substantial constraints on sampling methods would be expected in studies on the effectiveness of psychotherapy. The consistent application of narrowly defined inclusion criteria could even increase trust in the robustness of the psychotherapy literature.

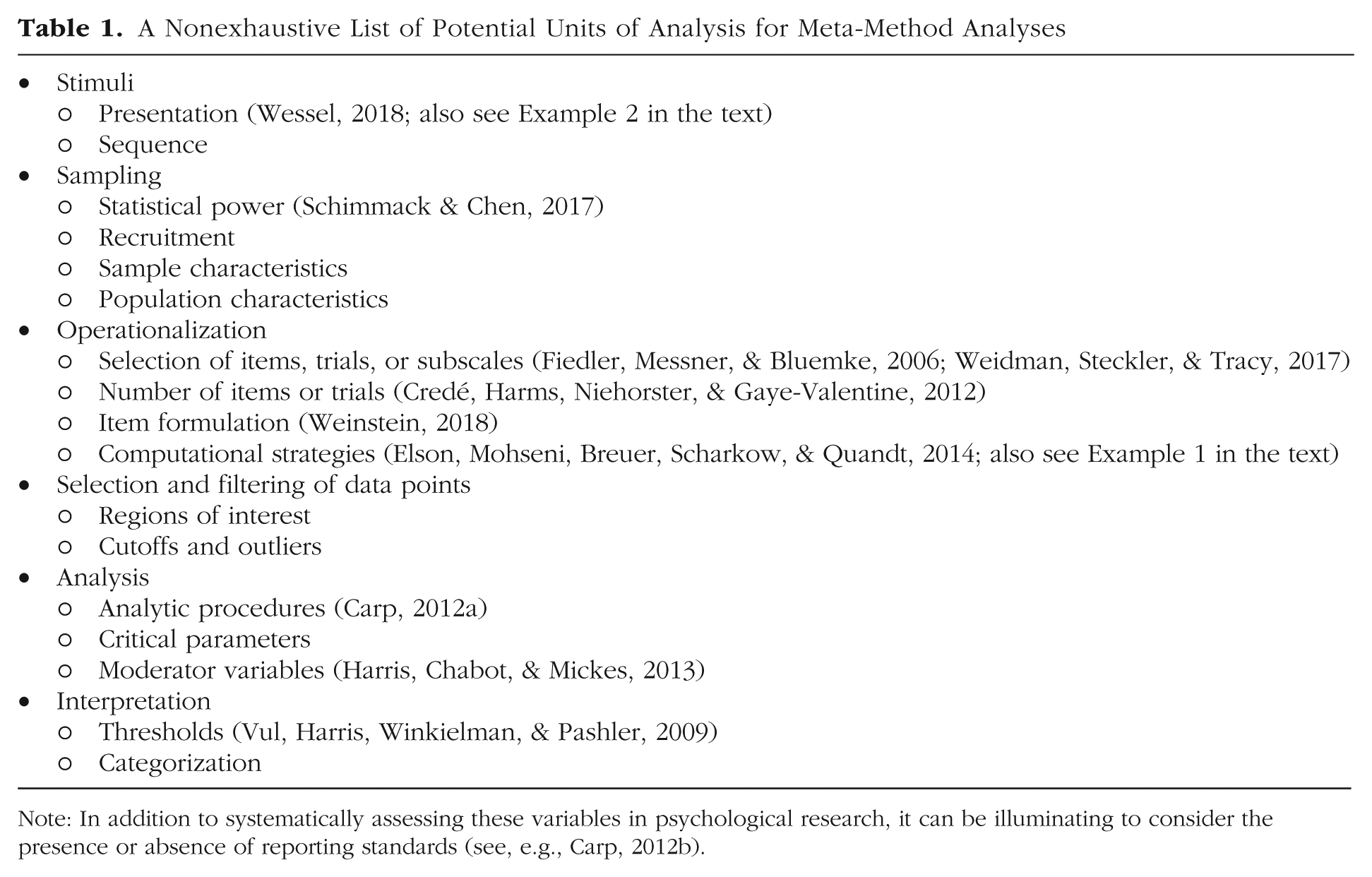

Consequently, and ironically, there may not be a standardized way of conducting a meta-method analysis, as procedures and paradigms differ substantially between research domains, and a given unit of analysis may be relevant to some meta-analytic research questions but not others. Table 1 lists potential units of analysis, citing example publications (when available) reporting procedural examinations that may inform meta-analyses. In the next two sections, I provide detailed examples illustrating the usefulness of such efforts.

Table 1. A Nonexhaustive List of Potential Units of Analysis for Meta-Method Analyses

Note: In addition to systematically assessing these variables in psychological research, it can be illuminating to consider the presence or absence of reporting standards (see, e.g., Carp, 2012b).

Disclosures

All data from Example 1 are available at the Open Science Framework, at https://osf.io/4pdvg. Jan Wessel has given permission to make all data used in Example 2 available at the Open Science Framework, at https://osf.io/xytch.

Example 1: Aggressive Behavior

The causes of aggressive behavior hypothesized by psychologists range from drugs, alcohol, and hot temperatures to ostracism, frustration, and violent media (Sturmey, 2017). One of the most commonly used laboratory measures of aggressive behavior is the competitive reaction time task (CRTT), sometimes also called the Taylor aggression paradigm. In the CRTT, participants play a computerized reaction time game against another participant (actually a preprogrammed algorithm). At the beginning of each round, both players set the intensity (by setting the volume, the duration, or both) of a noise blast, after which they are prompted to react to a stimulus as quickly as possible by pressing a button. The slower player is then punished with a noise blast at the intensity set by the other player at the beginning of the round. The intensity settings are used as the measure of aggressive behavior.

Flexibility of the CRTT

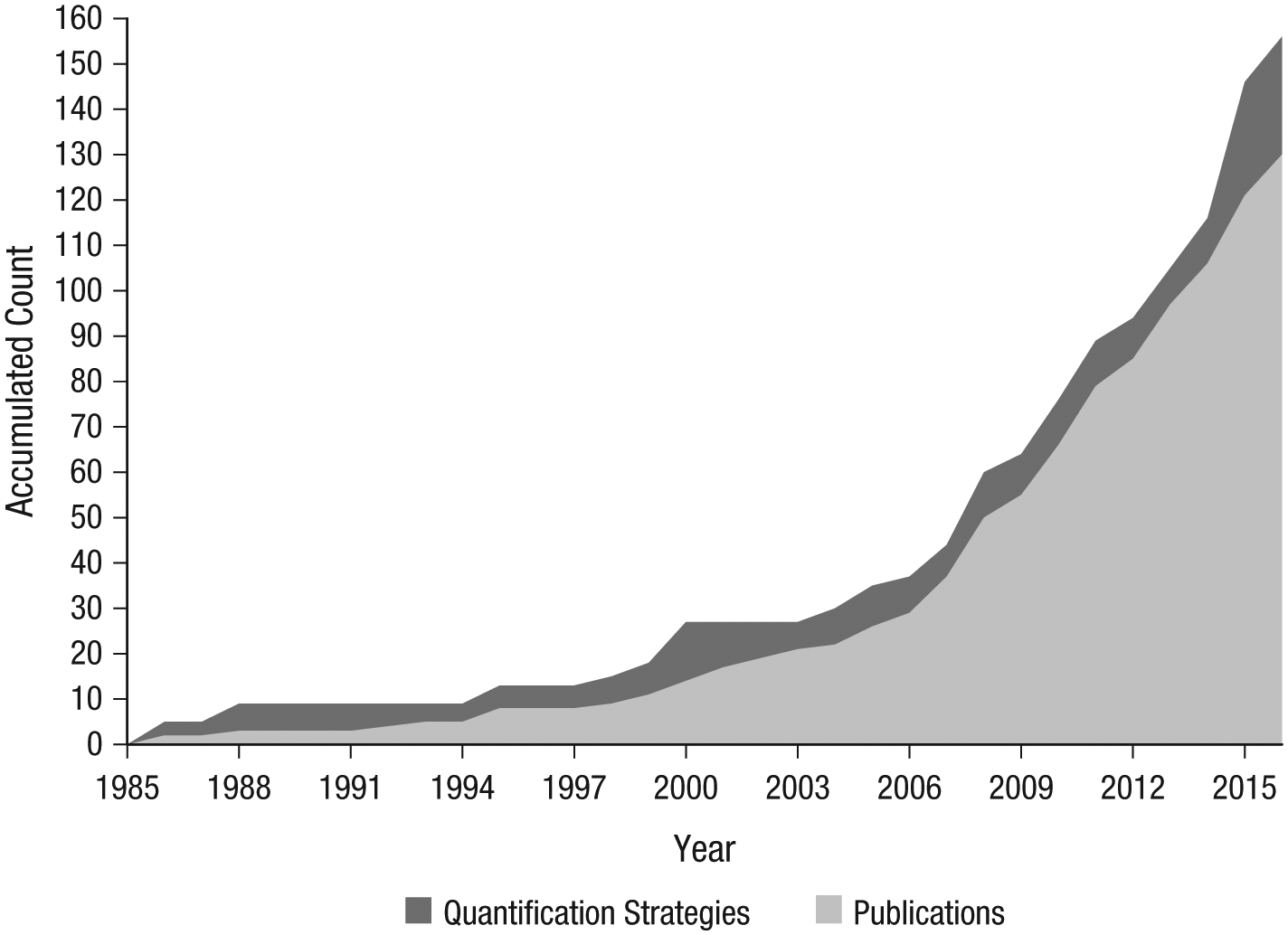

The CRTT ostensibly measures how much unpleasant, or even harmful, noise a participant is willing to administer to a (nonexistent) opponent, but an examination of published studies in which the CRTT was used reveals that researchers have followed many different strategies for extracting aggression scores from the raw data. Using a strict definition of what constituted a distinct strategy, I identified more than 150 quantification strategies in 130 studies (Elson, 2016; see also Elson, Mohseni, Breuer, Scharkow, & Quandt, 2014).

Not all of these quantification strategies were substantially different (e.g., average volume over 25 trials vs. average volume over 30 trials), and thus, choosing one over the other would not be expected to change a study’s results dramatically. In other cases, however, the differences were more substantial (e.g., log of the product of volume and duration on the first trial vs. highest volume across 30 trials), and only occasionally did the researchers justify their specific choice in their publication.
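The kinds of strategies named above can be sketched in a few lines. The block below is an illustration under stated assumptions, not a reconstruction of any published scoring procedure: the trial-level data are invented, the function names are hypothetical, and the threshold of 8 for a "high" volume setting follows the 10-point convention mentioned in the note to Table 2.

```python
import math

# Hypothetical trial-level data for one participant: (volume, duration)
# settings on 1-10 scales, one tuple per trial. Values are invented.
trials = [(4, 3), (6, 5), (9, 2), (5, 5), (8, 7), (3, 4)]


def mean_volume(trials, n_trials):
    """Average volume setting over the first n_trials trials."""
    volumes = [volume for volume, _ in trials[:n_trials]]
    return sum(volumes) / len(volumes)


def log_product_first_trial(trials):
    """Natural log of volume x duration on the first trial only."""
    volume, duration = trials[0]
    return math.log(volume * duration)


def max_volume(trials):
    """Highest volume setting across all trials."""
    return max(volume for volume, _ in trials)


def count_high_volume(trials, threshold=8):
    """Number of trials with a volume setting at or above the threshold."""
    return sum(1 for volume, _ in trials if volume >= threshold)


# The same raw data yield a different "aggression score" under each strategy
scores = {
    "mean_volume_all": mean_volume(trials, len(trials)),
    "mean_volume_first3": mean_volume(trials, 3),
    "log_product_first_trial": log_product_first_trial(trials),
    "max_volume": max_volume(trials),
    "count_high_volume": count_high_volume(trials),
}
for name, value in scores.items():
    print(f"{name}: {value:.2f}")
```

Because each function maps the same raw settings onto a different scale and emphasizes different trials, scores from different strategies are not interchangeable without evidence that they converge.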

Most of the quantification strategies (more than 100) were used just once, in a single research report, and never reoccurred in the literature; 3 strategies were used in more than 10 publications. Similarly, many studies reportedly relied on 1 or 2 quantification strategies, although others included up to 10. One potential explanation of these differences across studies is that because there is no documentation of a standardized CRTT procedure, researchers run the task with their own idiosyncratic choices. However, variability was also observed within—not just between—individuals and labs. In fact, a relatively small number of researchers generated the majority of the quantification strategies over time. A further cause for concern is that the number of CRTT studies and the number of different quantification strategies appear to be increasing almost linearly with time (see Fig. 2).

Fig. 2. Accumulated number of published studies using the competitive reaction time task and of strategies for calculating aggression scores on this task (Elson, 2016). Studies were identified through a Google Scholar search for the term “competitive reaction time task” and snowball sampling from reference lists.

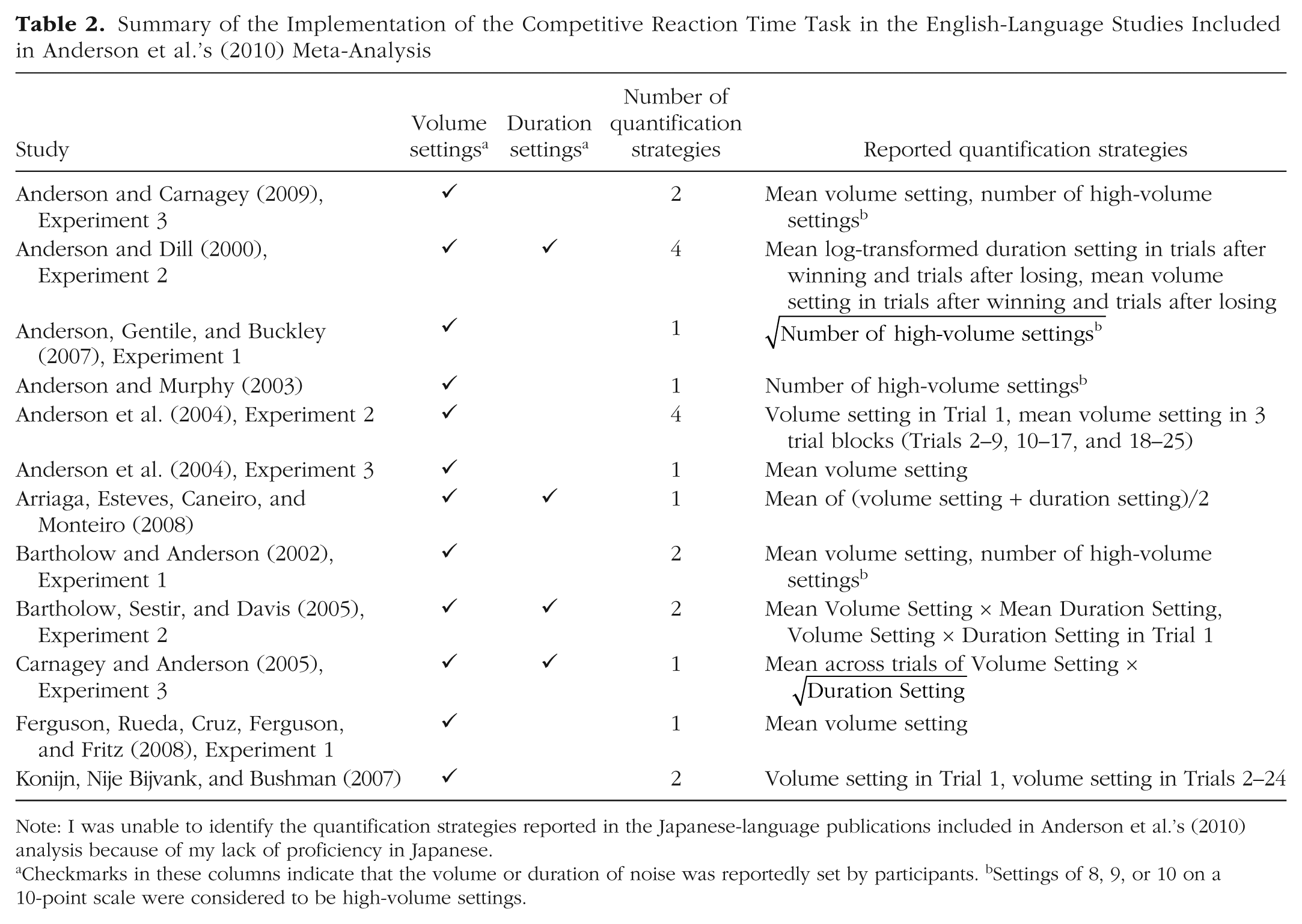

Although arguably relevant, these considerations have hardly informed the meta-analyses that have included laboratory aggression studies. For example, in their meta-analysis on the link between playing violent video games and aggression, Anderson et al. (2010) highlighted the CRTT’s prominence and validity as a behavioral measure. However, only a few, if any, of the CRTT studies Anderson et al. included in their analyses were identical with regard to their reported computational operationalization of aggression (see Table 2).

Table 2. Summary of the Implementation of the Competitive Reaction Time Task in the English-Language Studies Included in Anderson et al.’s (2010) Meta-Analysis

Note: I was unable to identify the quantification strategies reported in the Japanese-language publications included in Anderson et al.’s (2010) analysis because of my lack of proficiency in Japanese.

a. Checkmarks in these columns indicate that the volume or duration of noise was reportedly set by participants.
b. Settings of 8, 9, or 10 on a 10-point scale were considered to be high-volume settings.

Implications for meta-analyses

Given that little is known about the validity of any reported CRTT quantification strategy, it is debatable whether a meaningful meta-analytic synthesis of this literature can actually be achieved. There might simply be too much uncertainty regarding both the convergence of the computational strategies on the underlying construct and the amount of bias introduced by this apparent flexibility. This observation, of course, has substantial implications for the informational value of meta-analyses in the domain of aggression research, where versions of the CRTT are routinely used (e.g., Anderson et al., 2010; Denson, O’Dean, Blake, & Beames, 2018).

Arguably, the magnitude of the problem corresponds to the degree to which the multiple CRTT quantification strategies fail to converge. Switching between or omitting quantification strategies would not affect the outcomes of statistical analyses (or inferences from them) if the strategies were all perfectly correlated. However, if that were the case, it would be even more puzzling why so many different computational strategies have been documented in the literature. Although publication bias typically occurs through selective reporting of studies with statistically significant results, it is also possible that it results from selective reporting (and omission) of quantification strategies, particularly if different quantification strategies applied to the same data do not produce converging results. In a recent study, Hyatt, Chester, Zeichner, and Miller (2019) examined bivariate correlations of various personality traits with 10 different quantifications of the same CRTT data and found directionally consistent relations and moderate heterogeneity in effect sizes. Further validation studies will be required to enable aggression researchers to make sense of the available literature.
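Whether strategies converge can be checked directly once trial-level data are available. The sketch below computes pairwise Pearson correlations between scores produced by three strategies applied to the same data. The participants, their volume settings, and the strategy labels are all hypothetical, and this is not the analysis reported by Hyatt et al. (2019).

```python
import math
from itertools import combinations


def pearson(x, y):
    """Pearson correlation between two equal-length lists of numbers."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical volume settings (1-10) for five participants over four trials
volumes = {
    "p1": [2, 3, 2, 4],
    "p2": [9, 4, 5, 3],
    "p3": [5, 5, 6, 5],
    "p4": [1, 8, 9, 10],
    "p5": [7, 6, 2, 3],
}

# Three illustrative quantification strategies applied to the same raw data
strategies = {
    "mean_volume": lambda v: sum(v) / len(v),
    "first_trial_volume": lambda v: v[0],
    "max_volume": max,
}

# One score per participant under each strategy
scores = {
    name: [score(v) for v in volumes.values()]
    for name, score in strategies.items()
}

# Pairwise convergence between strategies
convergence = {
    (a, b): pearson(scores[a], scores[b]) for a, b in combinations(scores, 2)
}
for pair, r in convergence.items():
    print(pair, round(r, 2))
```

Correlations near 1 would suggest that the choice of strategy is inconsequential for inference. Low or negative correlations would mean that which strategy is reported can change a study's conclusions.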

Sharing of trial-level CRTT data may ensure that studies gain new utility when the research community finally agrees on some standardized approach. I recommend that researchers archive their CRTT data on public repositories such as the Open Science Framework (www.osf.io) so that meta-analysts can perform checks on the data to identify the most appropriate meta-analytic modeling independently. Furthermore, the extracted computational operationalizations may inspire multiverse analyses (Steegen, Tuerlinckx, Gelman, & Vanpaemel, 2016) of CRTT studies.

Example 2: Inhibitory Control

Whereas Example 1 focused on a lack of standardization in analytic strategies, this second example focuses on procedural differences in data collection that pose a potential concern for measurement validity.

Inhibitory control enables individuals to rapidly cancel motor activity even after its initiation. It is a cognitive process and an important human capacity that permits an individual to inhibit impulses or dominant behavioral responses to stimuli in order to select other (ideally more appropriate) responses. Inhibitory control is involved in everyday decision making, such as stopping while crossing the street when a car approaches or suppressing the urge to eat a high-calorie snack when one is trying to lose weight.

One of the most commonly used laboratory paradigms for studying inhibitory control is the go/no-go task. This task consists of a number of trials on which the stimulus presented indicates either “go” (i.e., the participant should respond, e.g., by pressing a button) or “no go” (i.e., the participant should do nothing). Response accuracy on no-go trials is used as a measure of inhibitory control. The most critical component in designing this task is to ensure that prepotent motor activity is initially elicited on each trial, so that no-go trials truly test inhibitory control. To achieve this, two parameters of the experimental design are important: First, no-go trials should be less frequent than go trials, so that it is strategically beneficial to initiate a go response on every trial. Second, trials should be presented at a rapid pace, so that responses need to be made quickly.

Flexibility of the go/no-go task

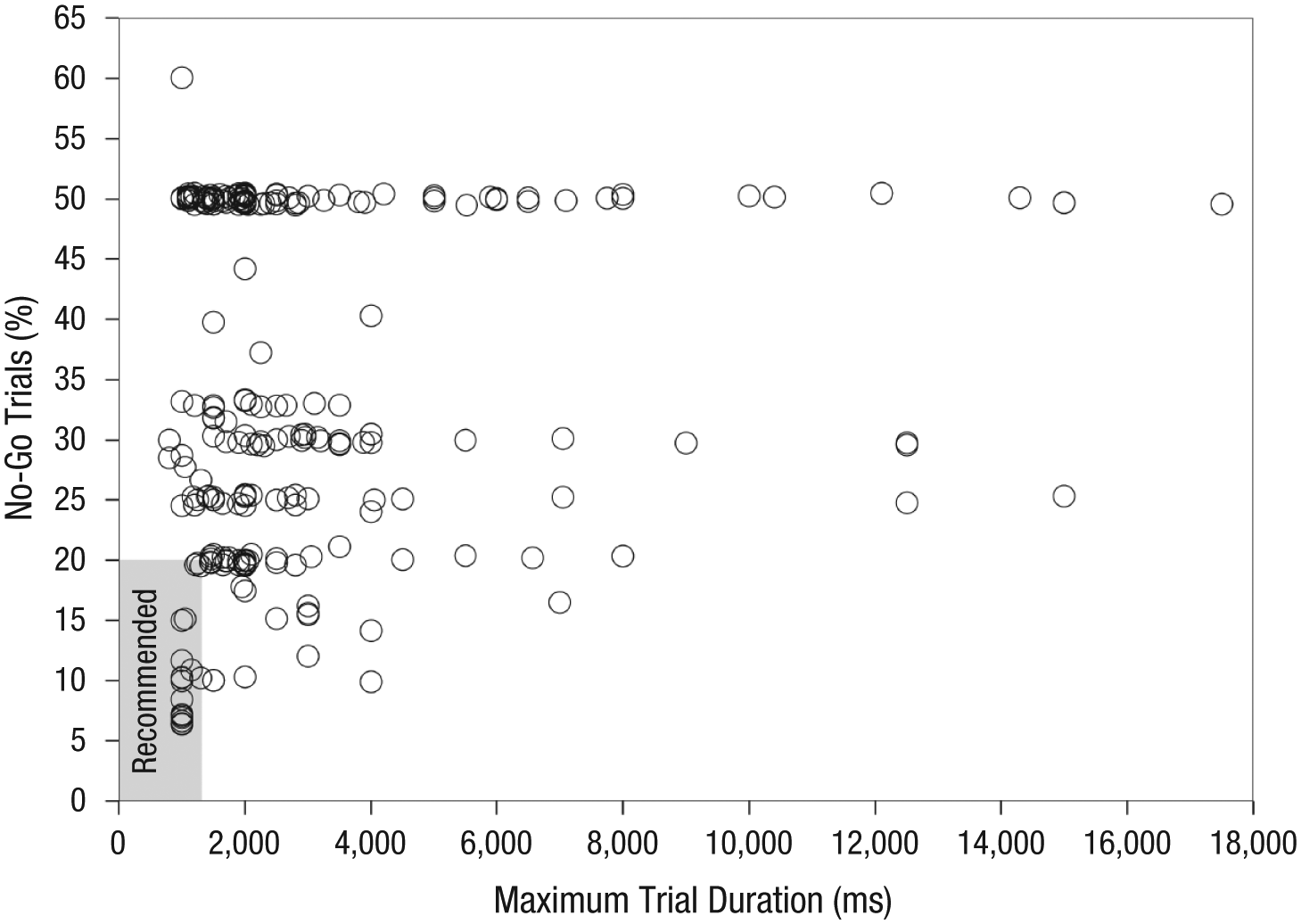

Wessel (2018) conducted a meta-method analysis of these two well-known requirements in 241 published studies using the go/no-go task and found that the percentage of no-go trials ranged from 6 to 60% and the maximum trial duration ranged from 800 to 17,500 ms (see Fig. 3). Go and no-go trials were equiprobable in about 40% of the go/no-go experiments. Additionally, although stimuli were presented at a relatively rapid pace in most of the studies, almost 20% used maximum trial durations greater than 4,000 ms, which allowed a deliberate response to the stimulus instead of a prepotent response or its immediate inhibition. Most of the studies did not meet the empirically validated recommendations for (a) fewer than 20% no-go trials and (b) varying trial durations with a maximum of less than 1,500 ms (Elson, 2017; Wessel, 2018).

Fig. 3. Scatterplot showing the percentage of no-go trials as a function of the maximum trial duration in the 241 go/no-go studies identified by Wessel (2018). Each circle represents one study. Slight jitter has been added to increase visibility.
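Coding studies against the two design recommendations described above is straightforward once the relevant parameters have been extracted. The following sketch assumes a hypothetical `GoNoGoStudy` record and invented parameter values. It checks only the two numeric thresholds (fewer than 20% no-go trials, maximum trial duration below 1,500 ms) and does not check whether trial durations varied.

```python
from dataclasses import dataclass


@dataclass
class GoNoGoStudy:
    label: str
    percent_nogo: float   # percentage of trials that were no-go trials
    max_trial_ms: float   # maximum trial duration in milliseconds


def meets_recommendations(study, max_percent_nogo=20.0, max_duration_ms=1500.0):
    """True if the study falls below both recommended thresholds."""
    return (
        study.percent_nogo < max_percent_nogo
        and study.max_trial_ms < max_duration_ms
    )


# Hypothetical coded studies, for illustration only
studies = [
    GoNoGoStudy("Study A", percent_nogo=10.0, max_trial_ms=1000.0),
    GoNoGoStudy("Study B", percent_nogo=50.0, max_trial_ms=1200.0),
    GoNoGoStudy("Study C", percent_nogo=15.0, max_trial_ms=4500.0),
]

for study in studies:
    status = "meets" if meets_recommendations(study) else "does not meet"
    print(f"{study.label} {status} both recommendations")
```

Keeping the thresholds as parameters makes it easy to report how the number of eligible studies changes under stricter or more lenient criteria.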

Implications for meta-analyses

Studies using the go/no-go task need to be carefully designed to ensure that inhibitory control is actually required to perform correctly on no-go trials. Otherwise, any differential effects of no-go trials cannot be interpreted as effects of inhibitory-control activity. It is unclear whether slow-paced or equiprobable go/no-go tasks elicit prepotent motor activity—and hence, whether they are valid measures of inhibitory control. Consequently, go/no-go studies with widely different parameters may not assess a consistent, underlying effect or test the same theoretical prediction coherently, as only a subset of the studies may have been designed appropriately to capture inhibitory control. If a meta-analysis were conducted without using a meta-method analysis to select studies for inclusion, the best-case scenario would be that the precision of the meta-analytic effect-size estimates would be reduced to some extent; in the worst case, the estimates would not be meaningfully interpretable at all because the included studies actually operationalized different constructs.

Conducting a Meta-Method Analysis

Psychologists seeking to inform a meta-analysis by means of a meta-method analysis must ask themselves which of the potentially infinite number of units of analysis their meta-method analysis should focus on. The principled, yet somewhat unsatisfying, answer is that this focus should be consequentially determined by the meta-analytic research question. Several taxonomies could be employed to guide this decision making, and one useful approach involves framing the meta-method analysis on the continuum from confirmation to exploration.

Planning a meta-method analysis

The choice of the unit of analysis is perhaps most obvious when the meta-analysis concerns a body of work in which empirical procedures are prescribed, either through highly documented assessment batteries (e.g., intelligence tests) or, ideally, through methodological specifications derived from formalized theories (which are, admittedly, currently quite rare in psychological science). In such a case, researchers may conduct a meta-method analysis to verify the (reported) conformity to those prescriptions and to determine whether any deviations occurred systematically.

In the absence of such clear norms, researchers may adopt a more pragmatic approach and conduct a meta-method analysis with two questions in mind. First, which empirical procedures should be standardized across studies in order to allow a meaningful meta-analytic inference? If the target literature shows a high degree of standardization of those procedures, this would indicate agreement on best practices, whereas a low degree of standardization might raise doubts about the replicability, generalizability, and synthesizability of observations. A meta-method analysis based on this question would provide researchers with information concerning whether a meta-analysis could yield meaningful conclusions and would also lay the foundation for establishing gold standards. Second, which empirical procedures might be prone to abuse of degrees of freedom to produce consistent results? Researchers may already suspect that flexibility is more common in some research practices than in others (e.g., because of the community’s perception of what is acceptable) and may systematically search for confirmatory evidence of that flexibility. A meta-method analysis may instead uncover a high degree of consistency in those procedures, which would be a sign of transparency and reliability, or it may reveal the expected low degree of consistency, which could indicate that the body of literature is affected by questionable research practices.

Finally, researchers may conduct a meta-method analysis on specific procedures out of genuine curiosity. The goal may not be to confirm that studies conform to prescriptions, but rather to assess the degree to which implicit agreement or field-specific traditions have already been established and shape studies’ methodologies.

General implications for meta-analyses

Whether meta-method analysis is used as a quantitative or qualitative technique for research synthesis depends on the meta-analytic research question it is applied to. However, there are important considerations to be taken into account if the plan is to incorporate meta-method-analytic results into meta-analytic statistics (e.g., in a moderator analysis): First, the greater the observed procedural differences across individual studies, the fewer studies per procedure are available, and consequently the lower the statistical power of a moderator test. Second, studies that have used different procedures may not assess the same variables and relationships between them, and therefore cannot be meaningfully included in the same analysis, even when the intent is to quantify the effect of methodological flexibility.

Conversely, a meta-method analysis may reveal psychometric artifacts that can be controlled for statistically with appropriate meta-analytic correction techniques. For example, range restriction (Sackett & Yang, 2000) and dichotomization of continuous variables (Hunter & Schmidt, 1990) have predictable effects on study results, and a psychometric meta-analysis can adjust for the variability they introduce in meta-analytic effect-size estimates (Schmidt & Hunter, 2015). Thus, depending on the specific findings of a meta-method analysis and the availability of appropriate adjustment techniques, it may be neither efficient nor appropriate to exclude studies from a meta-analysis, to use ratings of studies’ “methodological rigor” as a moderator in a meta-analysis, or to conclude that a meta-analysis cannot be conducted at all because of inconsistent methodologies. Researchers need to distinguish methodological artifacts that are correctable (accounting for all assumptions and limitations of the correction techniques themselves) and those that may be disqualifying factors.
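As an illustration of what "predictable effects" means here, the sketch below implements two textbook corrections for a single observed correlation: the classical correction for direct range restriction (often called Thorndike's Case II) and the attenuation factor for dichotomizing a normally distributed variable. The function names are hypothetical, and both corrections rest on assumptions (direct selection on the restricted variable; bivariate normality) that may not hold in a given literature. Direct restriction is only one of the scenarios treated in the range-restriction literature.

```python
import math
from statistics import NormalDist


def correct_direct_range_restriction(r, sd_unrestricted, sd_restricted):
    """Correct a correlation for direct range restriction on one variable."""
    u = sd_unrestricted / sd_restricted
    return (r * u) / math.sqrt(1.0 + r ** 2 * (u ** 2 - 1.0))


def dichotomization_attenuation(p):
    """Attenuation factor when a normal variable is split so that a
    proportion p of cases falls in one group."""
    z = NormalDist().inv_cdf(p)  # cut point on the standard normal scale
    return NormalDist().pdf(z) / math.sqrt(p * (1.0 - p))


def correct_dichotomization(r, p):
    """Correct a correlation for dichotomization of one continuous variable."""
    return r / dichotomization_attenuation(p)


# Hypothetical observed correlation of .30
print(correct_direct_range_restriction(0.30, sd_unrestricted=2.0, sd_restricted=1.0))
print(dichotomization_attenuation(0.5))   # median split
print(correct_dichotomization(0.30, 0.5))
```

A meta-method analysis supplies the inputs these corrections need, such as which studies used a median split or a restricted sample, so that correctable artifacts can be separated from disqualifying ones.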

The most important implication of meta-method analyses is that they provide context required to meaningfully interpret observed meta-analytic effect-size estimates. In psychology, meta-analysis is occasionally treated rather uncritically as the ultimate tool to produce final answers to a research question (Schmidt & Oh, 2016). For example, Huesmann (2010) argued that Anderson et al.’s (2010) meta-analysis (see Example 1, earlier in this article), although based partially on the documented, inconsistent use of a primary measure, “[nailed] the coffin shut on doubts that violent video games stimulate aggression” (p. 179). A meta-method analysis could inform that interpretation of the consistency of study outcomes and—given the context of unexplained flexibility in data collection—might suggest that the coffin lid is actually still somewhat ajar.

Meta-method analyses could also improve the usefulness of meta-analyses in nascent fields where the number of studies is probably too small for a meaningful collation of the available evidence. Instead of attempting to (prematurely) obtain conclusive answers to research questions, researchers could systematically examine methodological standards and norms so as to cautiously “take stock” of the available knowledge and its ability to provide meaningful conclusions. Such analyses could be done on a small number of pioneer studies, steering fields in productive directions before deficient empirical practices proliferate.

Beyond standardization

I have framed meta-method analysis somewhat narrowly as an approach to document and evaluate standardization, which is critical for maximizing psychometric objectivity, across studies. However, it is entirely conceivable that a measure is used consistently in a literature even though there is little available evidence for its construct or criterion validity, or that common design choices (e.g., a relative overabundance of simple cross-sectional studies) limit the meta-analytic conclusions that can be drawn about causality and the robustness of the relationship between variables. Certainly, in such cases, a meta-method analysis concerned solely with issues of standardization would yield different—that is to say, possibly less relevant—conclusions than would one with broader considerations.

There is no reason in principle why design and analytic choices in a literature cannot also be systematically, critically assessed with regard to their implications for psychometric reliability or validity (and for the validity of meta-analytic conclusions). The scope of this article is not meant to discourage researchers from extending the general concept of meta-method analysis to higher-level psychometric properties beyond consistency. Rather, my intent is to emphasize the importance of psychometric objectivity as a criterion of quality and a deterministic prerequisite to reliability and validity within classical test theory. Thus, this article is meant to (re)introduce the basic concept of meta-method analysis to psychological research synthesis while reaffirming the foundational value of psychometric objectivity specifically, and the importance of standardized protocols for meaningful cumulative research more generally.

General Implications for Primary Research

Both the conceptualization and the outcomes of meta-method analyses have potential benefits for empirical studies. A relatively obvious one is that they can offer systematic documentation of proven and tested empirical procedures and instructions for implementation in future studies (e.g., stimulus materials to use and to avoid, a decision tree for analytic strategies). This documentation could, in turn, cumulatively increase the informational value of research synthesis.

As much as meta-method analyses benefit from clear, detailed descriptions of empirical studies, they may also shape standards for transparent reporting as researchers seek to facilitate their studies’ accessibility. Current reporting standards, such as the American Psychological Association’s JARS (Appelbaum et al., 2018), already demand that procedures be described in great detail. However, researchers (e.g., those outside the United States) may not be aware of these guidelines, or if they are, they may find it difficult to apply broad recommendations to their specific research areas. Surely, no researchers believe they are doing a bad job describing their study protocols, but they may disagree about which information is relevant or should be made available, and where emphasis should be put. Although JARS specifically mentions the importance of transparent reporting so that scientific claims can be understood and evaluated, it may not be obvious which information is critical to meaningful research synthesis specifically.

Further, journals do not routinely enforce compliance with these guidelines, nor is compliance systematically assessed. Thus, meta-method analyses may, at least in part, document the extent to which reporting practices in a given field already correspond to these standards, and point researchers to areas where they might be improved.

Conclusion

Meta-analysis is an accessible, systematic way of synthesizing and quantifying a body of research reports to answer overarching research questions. The effectiveness of meta-analyses is constrained by a range of challenges, such as biases (e.g., publication bias), methodological issues (e.g., appropriateness of search and selection procedures), and metamethodological concerns (e.g., discipline-specific norms for empirical work). As meta-analyses necessarily inherit the quality of the included evidence, studies must meet a number of basic requirements in order to allow meaningful meta-analytic inferences. Empirical research benefits not only from agreement on terminology and fundamental theoretical constructs, but also from rigorous best practices and gold standards. Fields in which researchers have not yet been able to agree on and develop useful procedural protocols might simply not be mature enough for a sophisticated synthesis. Although tradition might be a weak form of protocol, it can still offer guidance. When there is no protocol for the procedures that many people are using, though, to what extent can individual studies be compared, combined, synthesized, and meta-analyzed? Researchers forming a community of practice need to figure out such protocols collectively before a meta-analysis is potentially used to present research results as more conclusive than the underlying research standards could possibly allow and to perpetuate empirical practice that may be detrimental to meaningful research synthesis and truth seeking more generally.

Conversely, the robustness and trustworthiness of meta-analyses in research domains in which emphasis is put on standardization and documentation would be further corroborated by meta-method analyses. Evidence of rigor across studies may increase confidence in the replicability of individual studies and the precision of meta-analytic effect-size estimates, offer guidance to researchers entering a field as they look for tried-and-tested procedures, and inspire systematic methodological research.

This article has laid out an approach to research synthesis that can contextualize meta-analytic effect-size estimates conventionally reported in psychological research syntheses: systematic examination of reported procedures that deterministically generate empirical outcomes. This approach is conservative in nature because it relies on what has been reported and does not involve inferring hidden causes (e.g., an inaccessible file drawer of studies) or biases occurring at a conceptual stage (Fiedler, 2011), which may not even be considered researcher degrees of freedom. This synergetic approach emphasizes the role of methods in advancing theory (Greenwald, 2012) by improving the quality of meta-analytic inferences. And as the quality of meta-analyses increases through these techniques, so will empirical standards in individual studies.

Supplemental Material

Supplemental material, Elson_Rev_Open_Practices_Disclosure for Examining Psychological Science Through Systematic Meta-Method Analysis: A Call for Research by Malte Elson in Advances in Methods and Practices in Psychological Science

Footnotes

Acknowledgements

I sincerely thank Brenton Wiernik for his very thoughtful signed review of this manuscript, which substantially improved it. I also thank Nick Brown, Julia Eberle, James Ivory, Richard Morey, Julia Rohrer, and Tim van der Zee for comments on earlier versions of this manuscript.

Action Editor

Frederick L. Oswald served as action editor for this article.

Author Contributions

M. Elson is the sole author of this article and is responsible for its content.

Declaration of Conflicting Interests

The author(s) declared that there were no conflicts of interest with respect to the authorship or the publication of this article.

Funding

This research is supported by the Digital Society research program funded by the Ministry of Culture and Science of North Rhine-Westphalia, Germany.

Open Practices

Open Data: https://osf.io/4pdvg

Open Materials: not applicable

Preregistration: not applicable

All data have been made publicly available via the Open Science Framework and can be accessed at https://osf.io/4pdvg (Example 1) and https://osf.io/xytch (Example 2). The complete Open Practices Disclosure for this article can be found at http://journals.sagepub.com/doi/suppl/10.1177/2515245919863296. This article has received the badge for Open Data.
