Abstract

In this commentary, we examine the implications of the failed replication reported by Vaidis et al., which represents the largest multilab attempt to replicate the induced-compliance paradigm in cognitive-dissonance theory. We respond to commentaries on this study and discuss potential explanations for the null findings, including issues with the perceived choice manipulation and various post hoc explanations. Our commentary includes an assessment of the broader landscape of cognitive-dissonance research, revealing pervasive methodological limitations, such as underpowered studies and a lack of open-science practices. We conclude that our replication study and our examination of the literature raise substantial concerns about the reliability of the induced-compliance paradigm and highlight the need for more rigorous research practices in the field of cognitive dissonance.

We conducted a multilab constructive replication of a seminal paradigm from the cognitive-dissonance literature—the induced-compliance paradigm through an essay task. According to cognitive-dissonance theory, participants who write a counterattitudinal essay under high choice should experience more dissonance and subsequently change their attitude more to reduce this dissonance than participants under low choice. Using a design based on Croyle and Cooper (1983), researchers from 39 labs recruited 4,898 participants to test this hypothesis, resulting in the largest cognitive-dissonance study conducted to date. Despite this effort, we did not find support for the hypothesis. No significant difference in attitude was observed between the high-choice and low-choice conditions. Nonetheless, we did observe that participants who wrote a counterattitudinal essay (high or low choice) changed their attitude, on average reporting a less negative attitude compared with participants who wrote a neutral essay.

We are grateful for the commentaries on this project and for the opportunity to discuss our findings and conclusions. Rather than discussing each commentary in turn, we focus on several topics that were brought up. Our aim is to respond to criticisms that were raised, discuss new findings that were revealed by a reanalysis of the data, and elaborate on what we think are the most important implications of our project.

What to Think After Our (Mostly) Failed Replication?

The commentary of Cyrus-Lai et al. (2024; CTU) centers around what should be concluded from a failed replication. We think this is an important first point to reflect on because there are several possible conclusions one can draw from the results we obtained.

Did we disprove cognitive-dissonance theory?

Some may argue that our failed replication does not disprove the theory of cognitive dissonance. We agree. We did not set out to prove or disprove the theory. Instead, our goal was to examine the replicability of one major paradigm. We know that many decisions go into setting up a study such as this, and we therefore anticipated disagreements with certain aspects of the design. We aimed to minimize concerns by having experts vet the design as part of the Registered Report procedure, but no matter which study we had conducted, there would have been limitations that prevented us from refuting cognitive-dissonance theory. In addition, by testing only a single paradigm, we left other paradigms as potentially powerful sources of evidence for the theory. Our ambition was merely to see whether we could reliably replicate a single widely used paradigm.

Is our replication failure a false negative?

Large-scale multilab studies such as ours are impressive in some ways but less impressive in others. For one, the same study is run across multiple labs, so a limitation of the design applies to every lab and could undermine the implications of the results.

Did we successfully manipulate perceived choice?

We implemented a widely used manipulation check to determine whether our choice manipulation affected the participants’ level of perceived choice. We asked participants how much choice they felt they had over writing the essay and found that our manipulation influenced self-reported free choice.

Harmon-Jones and Harmon-Jones (2024; HH) observed that the mean of perceived choice for the low-choice condition was at the midpoint of the scale, indicating that on average, participants in this condition felt they had moderately high freedom of choice. They argued that the absence of low perceived choice could explain the failed replication because moderate perceived choice could have caused participants in the low-choice condition to experience dissonance-related discomfort, motivating them to change their attitude. If these participants had perceived themselves as having low-choice freedom, they would not have changed their attitudes. Likewise, Pauer et al. (2024; PLE) commented on the assumption that choice perceptions are low unless participants are reminded of their choice freedom. They argued that perceived choice may be higher because of disclosures of voluntary participation in scientific studies and the experiences of choice in everyday life, such as when people accept browser cookies.

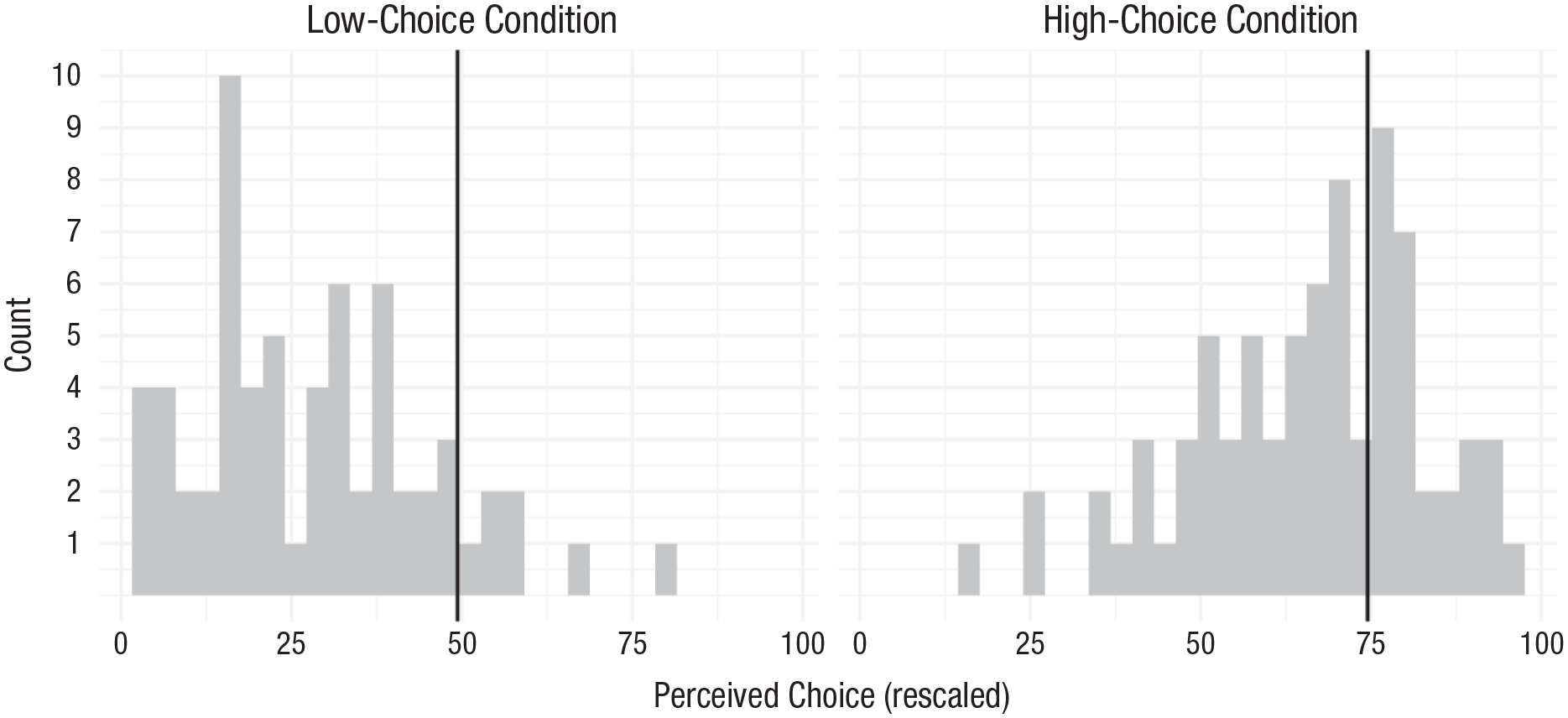

HH compared the average perceived-choice ratings from our study with those from a selection of published studies. They found that the average perceived-choice ratings from our study were higher than those from previous studies. We applied their approach to a larger selection of studies that reported perceived choice (k = 61) based on a systematic search of the induced-compliance literature (see State of Cognitive Dissonance Research section). The results are shown in Figure 1.

Figure 1. Rescaled perceived-choice ratings across studies. The solid vertical lines reflect the perceived choice observed in Vaidis et al. (2024). The vertical line in the right plot is the average perceived choice across both high-choice conditions.
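The text does not state how ratings from different response scales were rescaled. As a minimal sketch, assuming a simple min-max rescaling of each study's mean rating onto a common 0 to 1 range, the comparison across studies could be prepared as follows. The function name and the example scale endpoints are hypothetical and are not taken from any study in the sample.

```python
def rescale_rating(mean_rating: float, scale_min: float, scale_max: float) -> float:
    """Map a mean rating from its original response scale onto [0, 1].

    Assumption: min-max rescaling; the rescaling actually used for the
    figure is not described in the text.
    """
    if scale_max <= scale_min:
        raise ValueError("scale_max must be greater than scale_min")
    if not scale_min <= mean_rating <= scale_max:
        raise ValueError("mean_rating must lie within the response scale")
    return (mean_rating - scale_min) / (scale_max - scale_min)


# Hypothetical illustration: the midpoint of a 1-7 scale and the midpoint
# of a 1-31 scale land on the same rescaled value, so they become comparable.
midpoint_7 = rescale_rating(4, 1, 7)
midpoint_31 = rescale_rating(16, 1, 31)
print(midpoint_7, midpoint_31)
```

Under this assumption, a rescaled value of 0.5 corresponds to the scale midpoint regardless of how many response options the original scale had.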

As HH observed, the level of perceived choice in our low-choice condition was indeed higher than in other studies. Nonetheless, nine studies (6.34%) from our sample reported a higher level of perceived choice in the low-choice condition than we observed in our low-choice condition. Crucially, results of these studies were interpreted as supporting cognitive-dissonance theory. This means that relatively high levels of perceived choice appear not to undermine the possibility of finding statistically significant evidence.

The idea that perceived choice must reach a certain absolute level to prevent dissonance reduction is not an established fact. Some authors have considered a linear relationship between attitude change and commitment (i.e., including choice; e.g., Brock, 1968; Freedman, 1963; Kiesler, 1971), whereas others have argued for an all-or-nothing relationship (e.g., Beauvois & Joule, 1996; Brehm & Cohen, 1962). A debate on this topic gave rise to the literature on the role of commitment (Kiesler, 1971). However, no consensus has emerged on the exact relationship between commitment and dissonance reduction, perhaps because more complex research designs with more than two choice conditions are needed to adequately capture the nature of the relationship.

Relatedly, PLE conducted a reanalysis that tested the interaction between perceived choice and condition on postessay attitude. They found a positive relationship between perceived choice and attitude change in the counterattitudinal-essay conditions and a negative relationship in the neutral-essay condition. They concluded that these findings are consistent with the theoretical underpinnings of the induced-compliance paradigm.

Note that the reanalysis by PLE is inconsistent with the view that choice and attitude change have an all-or-nothing relationship. Their analysis rests on a linear relationship between perceived choice and attitude change. This further demonstrates that the exact type of relationship between perceived choice and attitude change is not agreed on.

We advise caution in interpreting PLE’s findings. First, as they themselves mentioned, their analysis was not preregistered and is therefore necessarily exploratory in nature. Second, this is, in essence, a correlational finding, and thus, it is subject to third-variable explanations. Participants’ responses to the manipulation checks may have been influenced by variables other than the manipulation. For example, strong attitudes are those that are relatively durable and impactful (Krosnick & Petty, 1995). In our study, participants who had strong preexisting attitudes may have both been less likely to change and felt less free in writing an essay that disagreed with their preexisting view. Alternatively, people who generally feel they have more control over their lives (i.e., individuals with a general tendency to report high perceived choice) might also feel less restricted in changing their opinions and attitudes irrespective of dissonance. If so, we might also expect the interaction results observed by PLE, although this would not suggest that the attitude change among high-choice participants was a result of cognitive dissonance. Finally, even if the positive relationship between perceived choice and attitude change in the counterattitudinal-essay conditions aligns with cognitive-dissonance theory, as claimed by PLE, it remains unclear how this theory accounts for the negative relationship between perceived choice and attitude change in the neutral-essay condition.

We pose these counterarguments not to dismiss the dissonance interpretation provided by PLE but to emphasize that it is not the only possible interpretation of the correlational finding. We are sympathetic to the possibility that the experimental context may have changed over the last decades, resulting in different effects on participants’ perceived choice freedom when participating in studies of this type. Since the 1980s, there have been significant changes in laboratory settings. Ethical standards have evolved, and current consent forms emphasize freedom and autonomy more than in the past, as noted by PLE, possibly explaining the failed replication. However, induced-compliance studies with similar procedures have continued to be published in recent years (e.g., Cancino-Montecinos et al., 2018; Cooper & Feldman, 2019; Martinie et al., 2017; see also Table 1).

Table 1. Sample Sizes and Open-Science Practices in Previous Induced-Compliance Studies

Note: Sample sizes are the numbers used by the authors for the analyses, not necessarily the total number of recruited participants. Sample size per condition is an average computed as the sample size divided by the number of conditions.

To summarize, skepticism about our results is warranted given the findings related to perceived choice. Yet because of the absence of theoretical consensus on this issue and the potential for alternative explanations, we cannot assert that the failed replication is solely caused by this aspect of the design.

Post hoc explanations

In response to our findings, several post hoc explanations have been offered to explain the failed replication. How should one respond to these explanations? On the one hand, post hoc explanations must be taken seriously because they could undercut some of the implications of a failed replication. Perhaps the induced-compliance paradigm does reliably replicate as long as one or more post hoc explanations are taken care of. On the other hand, post hoc explanations differ substantially in their evidential basis. Some post hoc explanations are supported by published studies, whereas others are not. Explanations of the latter type may be interesting but are not persuasive without evidence supporting their plausibility. This might also apply to post hoc explanations of the first category. Citing a single study or even a handful of studies to show that a factor is important in conducting dissonance studies is not necessarily strong evidence for that factor having substantial and reliable causal effects. For example, the claim that we should have used shorter essays because several studies found them to be more effective is not well supported. Those studies had much smaller numbers of participants in each condition and thus likely yielded inaccurate effect-size estimates. Also note that we decided to replicate a study that for all intents and purposes has a strong evidential basis in the first place, yet we believed it was warranted to try to replicate that study.

In addition, post hoc explanations also risk making a theory unfalsifiable, particularly in the case of an underspecified theory, such as cognitive-dissonance theory. To illustrate, consistent with the theory, there are often multiple ways for dissonance to be reduced. Aversive arousal caused by dissonance may be misattributed to a different cause, thereby preventing dissonance reduction (Zanna & Cooper, 1974), or other, unmeasured means of dissonance reduction could have occurred, such as trivialization (Simon et al., 1995). This underspecification risks making cognitive dissonance a nonfalsifiable theory, as has been argued by others (e.g., Griffin, 2006). We should therefore be hesitant to develop additional ways for the theory to be unfalsifiable.

We also note the possibility that some of the post hoc explanations may be influenced by status-quo-based reasoning. Suppose that we had successfully replicated the classic effect. Would the objections about the study taking place during COVID-19 have been raised? Would the issue of essay length have been raised as a problem?

Our response here may be perceived as us dismissing the post hoc explanations too easily. Yet as CTU noted, typical responses to failed replications (e.g., context-sensitivity defense, expertise defense) are not strongly supported by empirical data. CTU pointed out that meta-analytic evidence shows little cross-site heterogeneity in overall replication failures, contradicting claims of context sensitivity. Likewise, indicators of scientific eminence, such as publication records, do not predict replication results, undermining the expertise defense. Given this accumulating evidence against common rebuttals to failed replications, it seems justified to not engage with specific defenses and instead focus on the broader implications of our findings for the field.

Ultimately, post hoc explanations are possible conjectures, and we hope they stimulate theoretical and empirical efforts that improve the procedures and the understanding of the required conditions for observing dissonance effects, but they do little to change how we view the evidentiary value of our study.

So, what do we think we should conclude?

We conducted a Registered Report on the induced-compliance paradigm. We had experts in the field collaborate on this project and had our design and analyses approved by several more experts before data collection. Yet we did not obtain the expected pattern of results. We believe that this is cause for significant concern.

HH raised the question of whether the induced-compliance effect is weak. After all, if various factors such as a brief filler task before the essay task can prevent the effect, then perhaps the effect is indeed weak. We think so. It is not sufficient to argue that it is possible to show the induced-compliance effect. Dissonance theory as we read and interpret it would predict an effect in our study. Our failed replication shows at a minimum that the effect is not reliably observed despite a large sample size, the participation of many different labs, and a vetted process in which the design and analyses were approved by experts. If there are contextual or methodological considerations that constrain when and where the induced-compliance paradigm will produce attitude change, then the theory needs to be more specific about articulating these constraints.

It is not just our findings that provide reasons for concern. We would be less concerned (and question our study much more) if we knew that our results go against the results of other preregistered and large-sample studies. However, an examination of the cognitive-dissonance literature reveals a scarcity of such studies.

The State of Cognitive-Dissonance Research

In 1964, Chapanis and Chapanis wrote,

It is rare to find in this area a study that has been adequately designed and analyzed. In fact, it is almost as though dissonance theorists have a bias against neat, factorial designs with adequate Ns, capable of thorough analysis . . . (p. 18)

HH claimed that the concerns of Chapanis and Chapanis (1964) have been addressed, citing Wicklund and Brehm (1976). Wicklund and Brehm, however, focused mainly on theoretical objections to cognitive-dissonance theory, such as alternative explanations and post hoc explanations. They indeed concluded that the cognitive-dissonance literature is fine; they wrote, for example, that the literature “is not of the post hoc variety” (p. 319). We are not so sure. Wicklund and Brehm did not focus on methodological limitations we now know to be vital for the reliability and validity of psychological findings, such as adequate sample sizes, data sharing, and preregistration. Our impression of the dissonance literature is that it is severely lacking in these practices.

To demonstrate the prevalence of methodological limitations, we used the PsycINFO database to find studies employing the induced-compliance paradigm. Our initial search resulted in 440 published peer-reviewed empirical articles (see https://osf.io/4mz2j/). We identified articles using the compliance paradigm in which perceived choice was manipulated and the counterattitudinal-essay task was used. We similarly selected studies from Kenworthy et al.’s (2011) meta-analysis and included them in the analysis. The final sample consisted of 104 studies, for which we reported the overall sample size, number of conditions, average per condition, and whether several open-science practices were performed, including preregistration, data accessibility, and whether it was a Registered Report (Table 1).

The induced-compliance literature using the counterattitudinal-essay task spans more than 50 years. In these years, excluding our study, the (average) sample size per condition was 16.66 (SD = 10.13) and ranged from 4.25 to 70 with a median of 13.50 (95th percentile = 33.60; 99th percentile = 54.49). Just two other studies shared their data publicly. None of the studies were preregistered or part of a Registered Report. Of course, we cannot expect these practices to be common in this old literature, but that does not matter. What matters is whether the literature presents a strong evidential basis for its claims. Looking at Table 1, we get the same impression that Chapanis and Chapanis had of the literature in 1964.

About the Necessity of Open Science in Cognitive-Dissonance Research

HH wrote that the literature has provided much evidence consistent with predictions from cognitive-dissonance theory, but the replication crisis has demonstrated that hundreds of experiments may not necessarily mean strong evidence (e.g., Hagger et al., 2016; McCarthy et al., 2018; O’Donnell et al., 2018). There is evidence that multiple phenomena in psychology, each ostensibly supported by dozens if not hundreds of studies, may have been Type 1 errors (also see CTU’s commentary). Is the field of cognitive dissonance full of Type 1 errors? It might very well be. Without open-science practices and preregistration in particular, it is possible for Type 1 errors to become prevalent. In fact, assuming an already inflated effect size in social psychology of d = 0.43 (Richard et al., 2003), a two-sample t test requires a sample size of 86 participants per cell for 80% power and an alpha of 5%. No study from Table 1 reaches this number except ours. Unless effect sizes in this literature are much higher, this implies that the majority of, if not all, induced-compliance studies are severely underpowered and that there is a file drawer of studies with nonsignificant results.
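The sample-size figure above can be checked with a short calculation. The following is a minimal sketch, not the procedure used for the article: it assumes a two-sided test and uses a normal approximation to the two-sample t test with a common small-sample correction term, rather than an exact noncentral-t computation. The function names are hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal distribution


def n_per_group(d: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate per-group n for a two-sided, two-sample t test.

    Assumption: normal approximation plus a z_alpha^2 / 4 correction term;
    exact power software may differ slightly for other inputs.
    """
    z_alpha = _STD_NORMAL.inv_cdf(1 - alpha / 2)
    z_power = _STD_NORMAL.inv_cdf(power)
    n = 2 * (z_alpha + z_power) ** 2 / d ** 2 + z_alpha ** 2 / 4
    return ceil(n)


def approx_power(d: float, n: float, alpha: float = 0.05) -> float:
    """Rough power of the same test with n participants per group.

    Assumption: normal approximation that ignores the negligible
    probability of rejecting in the wrong direction.
    """
    z_alpha = _STD_NORMAL.inv_cdf(1 - alpha / 2)
    return _STD_NORMAL.cdf(d * sqrt(n / 2) - z_alpha)


# Values taken from the text: d = 0.43, and the mean per-condition
# sample size of 16.66 reported for the earlier literature.
required = n_per_group(0.43)
power_at_mean_n = approx_power(0.43, 16.66)
print(required, round(power_at_mean_n, 2))
```

Under these assumptions, `required` reproduces the 86 participants per cell stated above, and the approximate power at the literature's mean per-condition sample size falls far below the conventional 80%.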

We agree with CTU that the strong signals provided by superior multisite samples and more rigorous analyses are and should continue to be the primary goal of replication. It seems clear to us that the cognitive-dissonance literature needs testing of its ideas using the most rigorous experimental designs and statistical techniques. The literature is rife with hypothesis skepticism, focused on the possibility of alternative explanations explaining data patterns, but statistical tests of these ideas have not, in our perception, met the same standards. Greater statistical skepticism is warranted.

Although HH are concerned about the fact that “failures to replicate have the potential to unfairly discredit the field of psychology,” we think that the opposite is also true. Failures to refute can also discredit a field (Fanelli, 2010; Scheel et al., 2021). We assume that the goal of science is to achieve a better understanding of the world, not to preserve a discipline’s prestige.

The field of cognitive dissonance has a long and rich history, marked by numerous theoretical disagreements that have fueled intense scholarly debates. The field now needs to focus on improving its research methods. We argue for wider use of open-science practices in cognitive-dissonance studies to match the field’s dedication to resolving theoretical disputes. This means preregistering studies, using larger sample sizes, and openly sharing data and materials. Without adopting these open-science practices, the field risks being filled with Type 1 errors.

Footnotes

Transparency

Action Editor: David A. Sbarra

Editor: David A. Sbarra

Author Contributions

W. W. A. Sleegers and D. C. Vaidis share first authorship.