Abstract

The Transparency and Openness Promotion (TOP) Guidelines describe modular standards that journals can adopt to promote open science. The TOP Factor quantifies the extent to which journals adopt TOP in their policies, but there is no validated instrument to assess TOP implementation. Moreover, raters might assess the same policies differently. Instruments with objective questions are needed to assess TOP implementation reliably. In this study, we examined the interrater reliability and agreement of three new instruments for assessing TOP implementation in journal policies (instructions to authors), procedures (manuscript-submission systems), and practices (journal articles). Independent raters used these instruments to assess 339 journals from the behavioral, social, and health sciences. We calculated interrater agreement (IRA) and interrater reliability (IRR) for each of 10 TOP standards and for each question in our instruments (13 policy questions, 26 procedure questions, 14 practice questions). IRA was high for each standard in TOP; however, IRA might have been high by chance because most standards were not implemented by most journals. No standard had “excellent” IRR. Three standards had “good,” one had “moderate,” and six had “poor” IRR. Likewise, IRA was high for most instrument questions, and IRR was moderate or worse for 62%, 54%, and 43% of policy, procedure, and practice questions, respectively. Although results might be explained by limitations in our process, instruments, and team, we are unaware of better methods for assessing TOP implementation. Clarifying distinctions among different levels of implementation for each TOP standard might improve its implementation and assessment (study protocol: https://doi.org/10.1186/s41073-021-00112-8).

Because the decision to publish research findings is often related to their magnitude and statistical significance, an undesirable proportion of published findings are likely to be false. For example, the ability to replicate results is sometimes used as a proxy for whether they are true (Goodman et al., 2016), and scientists have been concerned for decades about the lack of replication in the literature (Greenwald, 1976; Nosek et al., 2022; Open Science Collaboration, 2015; Rosenthal, 1979; Sterling, 1959). There is increasing recognition that transparent and open research practices can increase trust in empirical science and increase the likelihood that published results are true (Miguel et al., 2014). Scientists endorse positive norms related to transparency and openness, and social changes may be contributing to greater uptake of technologies and rules to promote better scientific practices (Agnoli et al., 2021; Christensen et al., 2020; Lindsay, 2017; Spellman, 2015; Tenopir et al., 2015).

Academic publishers and journal editors can facilitate greater transparency and openness in the published literature by implementing policies in their instructions to authors that promote open-science standards (Mayo-Wilson et al., 2021). In 2015, scientists representing multiple disciplines developed the Transparency and Openness Promotion (TOP) Guidelines, which provide standards on open-science policies for scientific journals (Nosek et al., 2015). TOP includes eight modular standards on transparency (design and analysis reporting guidelines), reproducibility (data, code, and materials sharing), prospective registration (study and analysis plan preregistration), and rewarding researchers for engaging in open science (conducting replications and citing data, code, and materials). The TOP Factor—a measure of journal implementation of TOP—includes two additional standards related to (a) publication bias and (b) “open science badges” that acknowledge open research practices.

Journal policies might operationalize TOP standards at “levels” that differ in the stringency of requirements for journals, peer reviewers, and authors (Nosek et al., 2015). Level 1 policies promote open research practices, typically by requiring authors to disclose whether they used an open research practice. Level 2 policies involve stronger expectations for authors without added requirements for editors and reviewers, typically by requiring that authors use open research practices. Level 3 policies require that journals also invest resources to verify that authors used open research practices. Journal policies that do not implement TOP are assigned Level 0. Using data sharing as an example, journals could require authors to disclose whether data are publicly accessible (Level 1), require authors to archive data in trusted repositories (Level 2), verify that reported analyses can be reproduced independently using the publicly accessible data (Level 3), or say nothing about data sharing (Level 0). The TOP Factor is calculated as the sum of the levels of implementation across all 10 standards.
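The summation described above can be sketched in a few lines of Python. This is only an illustration: the standard labels and the function name `top_factor` are assumptions made for the sketch, every standard is assumed to be scored from 0 to 3, and a standard that a policy does not mention is treated as Level 0.

```python
# Illustrative labels for the 10 standards scored in the TOP Factor.
# The exact names are assumptions made for this sketch.
TOP_FACTOR_STANDARDS = (
    "data_citation",
    "data_transparency",
    "analysis_code_transparency",
    "materials_transparency",
    "design_and_analysis_reporting_guidelines",
    "study_preregistration",
    "analysis_plan_preregistration",
    "replication",
    "publication_bias",
    "open_science_badges",
)


def top_factor(levels):
    """Sum implementation levels (0-3) across the 10 standards.

    `levels` maps a standard label to its level. Standards that are
    missing from `levels` count as Level 0 (policy says nothing).
    """
    unknown = set(levels) - set(TOP_FACTOR_STANDARDS)
    if unknown:
        raise ValueError(f"unknown standards: {sorted(unknown)}")
    total = 0
    for standard in TOP_FACTOR_STANDARDS:
        level = levels.get(standard, 0)
        if level not in (0, 1, 2, 3):
            raise ValueError(f"{standard}: level must be 0, 1, 2, or 3")
        total += level
    return total


# Hypothetical journal: requires data archiving (Level 2), asks authors to
# disclose preregistration (Level 1), and offers badges (Level 1).
example_policy = {
    "data_transparency": 2,
    "study_preregistration": 1,
    "open_science_badges": 1,
}
print(top_factor(example_policy))  # 4
```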

Journal procedures and practices should be aligned with journal policies. Here, we define “procedures” to include the manuscript-submission systems used to submit articles and related information about those articles and their authors. We define journal “practices” as information reported in journal articles (Mayo-Wilson et al., 2021). Journal procedures that complement stringent policies could enable uniform policy adherence and facilitate systematic monitoring of standards related to transparency and openness (Aalbersberg et al., 2018). For example, journals could implement procedures that require authors to complete certain tasks during manuscript submission, such as entering structured data elements into submission systems (e.g., entering a URL in a field for the location of a data set). In addition, journals might create templates for empirical articles that facilitate disclosing information in journal articles. For all manuscripts that are potentially eligible for open-science badges, Advances in Methods and Practices in Psychological Science requires that manuscripts include a dedicated “Disclosures” section in the main text on preregistration, transparent reporting, and data, code, and materials sharing. By contrast, stringent policies that are not complemented by equally stringent procedures and practices might be less effective and more difficult to monitor. For example, many journal policies require that authors register clinical trials prospectively, yet a study of high-impact clinical-psychology journals found that two of the three journals that required trial registration published unregistered trials (Cybulski et al., 2016).

Reliable assessment of journal implementation is essential to TOP’s goals of increasing transparency and openness. Designed as a complement to the journal impact factor, the TOP Factor is a quantifiable metric for journal quality that focuses on the degree to which journals promote scholarly norms of transparency and openness. Yet because TOP involves a complex scoring system, raters might differ in their assessments of the same journals. Without standardized instruments, guidance, and training, the TOP Factor has questionable credibility and utility. For example, groups that have assessed the extent to which journals implement TOP have identified difficulties in understanding the distinctions among different levels of implementation and difficulties in how language used by journals corresponds to TOP (Cashin et al., 2021; Hansford et al., 2021; Spitschan et al., 2020). Crowdsourcing efforts to rate journals have used bespoke methods or subjective rater judgments that are not methodologically reproducible. Although the interrater reliability (IRR) of TOP ratings is unknown, anecdotal evidence suggests that differences in the interpretation and rating of journal policies are common. Given the growing use of TOP as a framework to change journal behaviors, reliable instruments with objective and clear questions are needed.

Objectives

In this study, we systematically assessed the interrater agreement (IRA) and the IRR of three instruments for assessing implementation of the TOP standards in journal policies (instructions to authors), procedures (manuscript-submission systems), and practices (journal articles). Two related articles reported results from other parts of the overall study. One describes the level of TOP uptake at the journals included in our study (Grant et al., 2022). The other describes a survey of journal editors that aimed to identify facilitators and barriers to uptake (Naaman et al., 2023).

Disclosures

We published the protocol for this study in a peer-reviewed journal (Mayo-Wilson et al., 2021). Readers can access the code and documentation (Kianersi et al., 2022b), materials (Kianersi et al., 2022a), deidentified data (Mayo-Wilson, Grant, Kianersi, & Naaman, 2022; Naaman et al., 2023), and other resources at https://osf.io/txyr3/. In the Supplemental Material available online, we summarized descriptive findings on journal publishers and submission systems, our methods for interpreting the magnitude of IRR estimates, comparisons of our journal ratings with those posted on the Center for Open Science website, and our journal-rating timelines. We reported how we determined our sample size, all data exclusions, all manipulations, and all measures in the study. This study was reviewed by the Institutional Review Board (IRB) at Indiana University and determined to be exempt human-subjects research (IRB No. 10201).

Method

Modeling best practices in systematic reviewing (Higgins et al., 2019), we developed a structured process and instruments for rating TOP implementation in journal policies, procedures, and practices (Mayo-Wilson et al., 2021). This report follows the Guidelines for Reporting Reliability and Agreement Studies (GRRAS; Kottner et al., 2011).

Eligible journals

We included journals that have published empirical evaluations on the effectiveness of social and psychological interventions. To identify eligible journals, we first searched for federal evidence clearinghouses in a previous study (Mayo-Wilson, Grant, & Supplee, 2022). Federal clearinghouses rate the quality of published empirical evaluations on intervention effects to distinguish and disseminate information about “evidence-based” interventions for public policy and local decision-making. In the current study, we included all journals that published at least one report of an evaluation used by a federal clearinghouse to support the highest rating possible for an intervention (i.e., a “top tier” evidence designation). We did not restrict reports by date when identifying eligible journals. We included journals that have changed publisher or changed name since publishing an eligible report. We excluded journals that have ceased operation entirely.

We initially identified eight clearinghouses, from which we identified the 339 eligible journals in our sample (Grant et al., 2022; Mayo-Wilson, Grant, & Supplee, 2022). Two clearinghouses (Pathways to Work and Prevention Services Clearinghouse) became active during our project after we had generated the list of eligible journals (Mayo-Wilson, Grant, & Supplee, 2022), and we did not search the new clearinghouses for additional journals.

TRUST policy, procedure, and practice rating instruments

For each rating instrument, the principal investigators (PIs; S. P. Grant and E. Mayo-Wilson) developed concise questions with detailed instructions for each TOP standard (Center for Open Science, 2014; Kepes et al., 2020; Nosek et al., 2015). To facilitate reliability, these questions are intended to be objective, single-barreled (i.e., asking about only one aspect of a standard), consistent in structure, and limited to “yes-or-no” responses (Polanin et al., 2019). Each “yes” response indicated that a policy, procedure, or practice implemented a particular aspect of a TOP standard.

The policy rating instrument included 41 yes-no questions and two multiple-choice questions. The procedure rating instrument included 60 yes-no questions and no multiple-choice questions. Both instruments were divided into 10 sections (with two to eight questions in each section). Each section evaluated one TOP standard and concluded with an open text-box field in which raters were instructed to copy and paste relevant text from eligible journal documents. The practice rating instrument included 26 yes-no questions and one multiple-choice question, with seven open text-box fields. Although instructions assumed some knowledge of quantitative research methods and publication processes, they also included examples of common scenarios to facilitate reliable ratings.

We programmed the instruments for journal policies and procedures into Research Electronic Data Capture (REDCap; Harris et al., 2009, 2019). We programmed the instrument for journal practices in EPPI-Reviewer (Thomas et al., 2020). To promote efficiency and to ensure consistency of the data, the instruments were rated online using display logic. That is, some questions were always displayed, and other questions were displayed conditionally on each rater’s previous responses. Our rating instruments to assess journal policies (TRUST Team, 2019a), procedures (TRUST Team, 2019b), and practices (TRUST Team, 2021) are freely available on OSF (https://osf.io/txyr3/).

To assess IRR and IRA, we analyzed the questions in each rating instrument that were answered for all journals by all research assistants (RAs). These included 13 policy questions, 26 procedure questions, and 14 practice questions. We excluded questions that appeared depending on responses to previous questions because of display logic.

Most policy, procedure, and practice questions (50 of 53) included in the analyses of the current study were dichotomous. One policy question had four options that could be either “checked” or “unchecked.” Two questions about practices had three possible options: “no,” “yes study was not registered,” and “yes study was registered”; we combined the two “yes” responses for analysis.
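The two preparation steps described above (keeping only questions that every rater answered for every journal, and collapsing the two “yes” options into one) might look roughly as follows. This is a minimal sketch under stated assumptions: the record layout, the question identifiers, and both function names are hypothetical and are not taken from the study's notebooks.

```python
def always_answered_questions(records):
    """Return the questions answered in every rating form.

    `records` is a list of dicts (one per journal-rater form) that map a
    question id to its answer. Questions hidden by display logic are
    assumed to be absent or stored as None.
    """
    kept = None
    for record in records:
        answered = {q for q, answer in record.items() if answer is not None}
        kept = answered if kept is None else kept & answered
    return sorted(kept or [])


# The three response options quoted in the text, collapsed to yes/no.
REGISTRATION_RECODE = {
    "no": "no",
    "yes study was not registered": "yes",
    "yes study was registered": "yes",
}


def recode_registration(response):
    """Combine the two 'yes' options into a single 'yes'."""
    return REGISTRATION_RECODE[response]


# Hypothetical forms: q2 was hidden by display logic on the second form.
forms = [
    {"q1": "yes", "q2": "no", "q3": "no"},
    {"q1": "no", "q2": None, "q3": "yes"},
]
print(always_answered_questions(forms))  # ['q1', 'q3']
print(recode_registration("yes study was not registered"))  # yes
```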

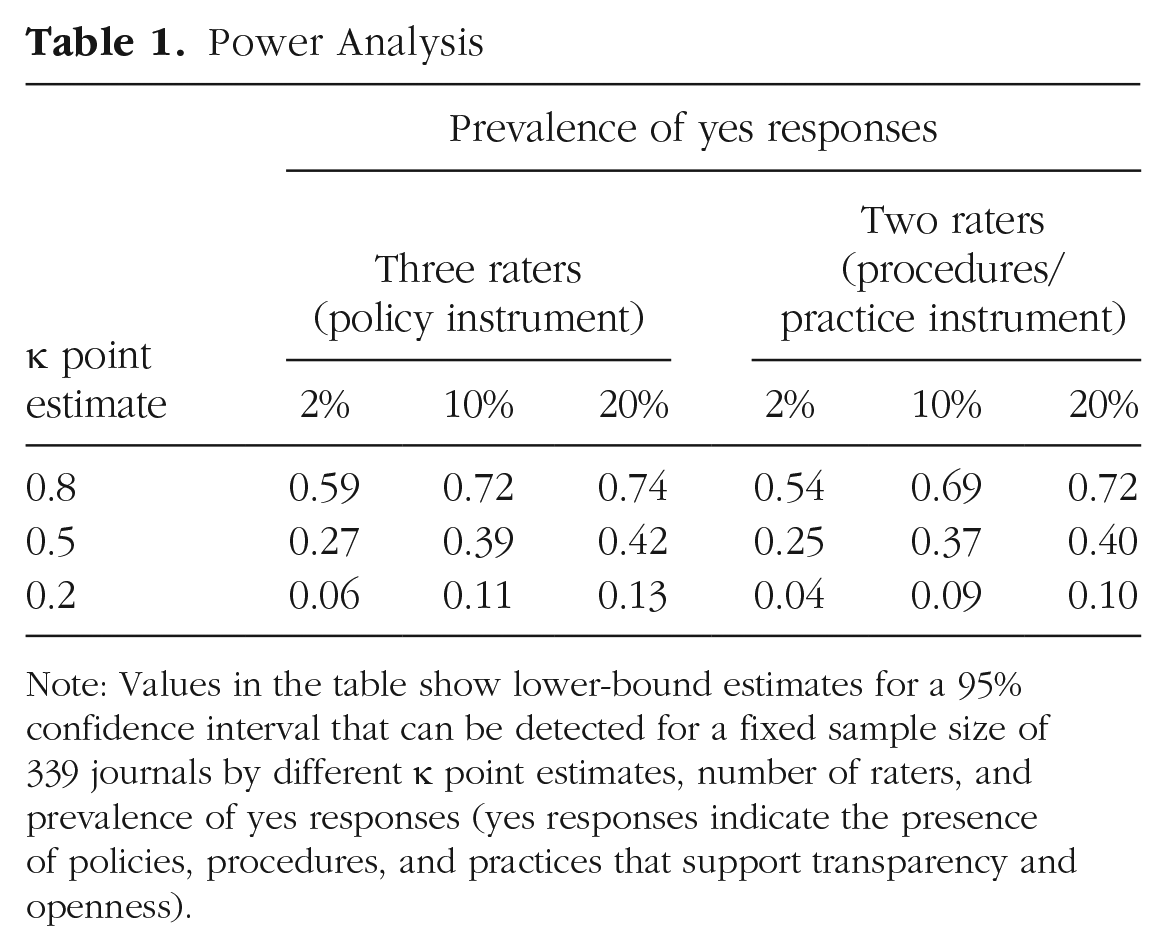

Statistical power

After identifying 339 eligible journals, we calculated the lowest expected value for κ (lower-bound estimate for a 95% confidence interval [CI]) under a range of scenarios for the fixed sample size (Table 1). We calculated precision in RStudio (RStudio Team, 2021) using the kappaSize package (Version 1.2; Rotondi, 2018). Depending on the prevalence of yes responses, our sample size was large enough to capture κ point estimates as small as 0.2 and lower-bound estimates as small as 0.04.

Power Analysis

Note: Values in the table show lower-bound estimates for a 95% confidence interval that can be detected for a fixed sample size of 339 journals by different κ point estimates, number of raters, and prevalence of yes responses (yes responses indicate the presence of policies, procedures, and practices that support transparency and openness).

Raters

Study PIs sent targeted emails to graduate students and their supervisors to recruit paid RAs to rate journal policies, procedures, and practices. The PIs trained the recruited RAs by introducing the project aims, answering general questions about transparency and openness, and discussing each question on the instruments. We anticipated that journal policies would be more subjective and more difficult to rate than journal procedures and practices. For this reason, we assigned two RAs to evaluate each procedure and practice, and we assigned three RAs to rate each journal policy.

We pilot tested preliminary versions of the instruments with RAs by assessing a small number of journals. During pilot testing, we held weekly group meetings to solicit feedback and to assess and promote agreement among RAs. Questions in the instruments with high disagreement were identified for further discussion and potential revision. When we identified questions with disagreements attributable to wording, structure, and/or instructions, we revised the questions to improve clarity and to promote future agreement. In the current study, we report the IRR and IRA of the revised versions of the TRUST policy, procedure, and practice rating instruments.

Identifying policy, procedure, and practice documents

To assess policies, two independent RAs identified eligible policy documents for each journal, specifically “instructions to authors” and related explanatory documents concerning manuscript submissions. RAs independently searched each journal’s website, downloaded a PDF version of each document, dated each document, and stored documents in a folder on Google Drive. RAs then met and reconciled any discrepancies in the eligible documents. Unresolved discrepancies were discussed and resolved during weekly meetings with the PIs.

To assess procedures, RAs located each journal’s online submission system and, when possible, initiated manuscript submissions. RAs simulated manuscript submission steps, took screenshots of each step, and saved those screenshots on Google Drive for rating. Because manuscript-submission systems might ask questions related to transparency and openness that depend on answers to previous questions (display logic), the RAs answered questions such that all relevant questions and fields would appear. A few journals required manuscript submissions by email. For these journals, RAs downloaded the journals’ submission instructions as PDF files. In weekly group meetings, RAs discussed issues about procedure identification with the PIs.

To evaluate practices, we used methods similar to article-identification and data-extraction procedures used in systematic reviews. We included journal articles reporting quantitative evaluations of interventions that were intended to modify processes and systems that are social and behavioral in nature and that are hypothesized to improve health or social outcomes (Grant et al., 2018). Because we aimed to describe journal practices (i.e., the proportion of journals that were transparent and open) rather than author practices (e.g., the proportion of articles in each journal that were transparent and open), we did not sample multiple articles from each journal. Instead, two RAs independently hand-searched each eligible journal by screening titles and abstracts. When they identified potentially eligible articles, they entered citation information (i.e., volume number, issue number, first page number, and DOI) using a REDCap form. RAs could identify more than one article for each journal (Mdn = 7). Articles identified by RAs were retrieved for full-text review by one of the PIs, who reviewed the articles in reverse chronological order (i.e., starting with the most recent). Because we anticipated that transparent and open journals might use templates or other procedures to achieve consistent practices, a PI identified one eligible article per journal for rating. Questions about inclusion were resolved through discussion with the other PI.

Rating setting

All ratings were conducted independently online, and RAs were aware that ratings would be compared. RAs were not masked to journal names. The RAs met with the PIs weekly. In these meetings, PIs answered RAs’ questions about the instruments and any problems that they faced during the rating of a specific journal question. After the start of the rating process, we updated the policy rating instrument and added six questions that we also completed for journals rated previously. Four of the added questions asked whether a policy applied to all studies or only certain kinds of studies, and two questions concerned items included in the TOP Factor that were not part of the TOP Guidelines. We initially conducted the weekly meetings in person, and we moved to online Zoom meetings in 2020 because of the COVID-19 pandemic.

Reconciliation

After RAs completed their ratings, one RA identified disagreements in the data set. The PIs reviewed all disagreements and selected the reconciled ratings to be used for analysis.

Scoring policies

To determine the TOP levels for all standards in a journal policy, we used algorithms based on a published rubric for the TOP Factor (Center for Open Science, 2016a). The algorithms are included in our protocol (Mayo-Wilson et al., 2021) and are freely available online (TRUST Team, 2019a). We did not calculate levels for journal procedures and practices because levels of implementation apply specifically to journal policies only.

Consistency check for journal procedures

In the reconciled procedure-ratings data set, we checked whether journals with the same publisher and submission system had the same procedure ratings. For instance, we expected that journals published by Wiley and accepting manuscripts through the ScholarOne submission system would use similar procedures and thus have similar ratings. To examine the reliability of our ratings and to improve the quality of our data, one author (S. Kianersi) used Python to identify journals from the same publisher and submission system that were rated differently. A second author (K. Naaman) reviewed inconsistent ratings, verified whether the procedures were actually the same or different, and recommended changes to improve consistency and accuracy. One PI (S. P. Grant) then reviewed and finalized the ratings used for analysis.
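A check of this kind can be sketched as a simple grouping step. The code below is not the authors' script (their annotated notebooks are on OSF); the row layout, field names, and journal names are assumptions made for illustration.

```python
from collections import defaultdict


def find_inconsistent_questions(rows):
    """Flag questions rated differently within a publisher-system pair.

    `rows` is an iterable of dicts with the (assumed) keys "publisher",
    "submission_system", and "ratings", where "ratings" maps a question
    id to the reconciled answer for one journal.
    Returns {(publisher, system): [question ids with more than one answer]}.
    """
    answers = defaultdict(lambda: defaultdict(set))
    for row in rows:
        pair = (row["publisher"], row["submission_system"])
        for question, answer in row["ratings"].items():
            answers[pair][question].add(answer)

    flagged = {}
    for pair, by_question in answers.items():
        inconsistent = sorted(q for q, seen in by_question.items() if len(seen) > 1)
        if inconsistent:
            flagged[pair] = inconsistent
    return flagged


# Hypothetical reconciled ratings for three journals.
rows = [
    {"journal": "Journal A", "publisher": "Wiley", "submission_system": "ScholarOne",
     "ratings": {"data_field": "yes", "code_field": "no"}},
    {"journal": "Journal B", "publisher": "Wiley", "submission_system": "ScholarOne",
     "ratings": {"data_field": "no", "code_field": "no"}},
    {"journal": "Journal C", "publisher": "Other", "submission_system": "Email",
     "ratings": {"data_field": "no", "code_field": "no"}},
]
print(find_inconsistent_questions(rows))
```

Flagged pairs are only candidates for review: as the text notes, a second author still had to verify whether the procedures truly differed or the ratings were in error.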

Comparison with external data sources

To further assess the reliability of our reconciled ratings, we compared the TOP Factor scores we calculated with TOP Factor scores published elsewhere. We found no overlapping journals with the results from three previous reports (Cashin et al., 2021; Hansford et al., 2021; Spitschan et al., 2020). We identified some overlapping journals rated on OSF by April 8, 2022. OSF ratings were completed at different times (which were not recorded in the data set) by staff at Center for Open Science and volunteers at hackathon-style events or by following journal and publisher submissions (personal communication). To rate journals, Center for Open Science refers raters to the published rubric that we used for our study (Center for Open Science, 2016a). To our knowledge, ratings published by Center for Open Science were not done in duplicate, and they were not completed using structured instruments. We considered ratings on OSF to be the best available data set for comparison, but these ratings were not considered a “gold standard” for determining the transparency and openness of journals.

Statistical analysis

Descriptive analyses on raters

We calculated the number of journals and the number of questions that each RA rated, and we calculated the proportion of times that each RA was in agreement with the reconciled rating (i.e., the rating agreed by all RAs or the rating after reconciliation by a PI). For policy questions, we also calculated the proportion of times that each RA was in the minority (i.e., rated a question differently from the other two policy RAs); we did not calculate this proportion for procedure or practice ratings data because there were only two raters.
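These rater-level summaries can be sketched as follows. The data layout and the function name `rater_summary` are hypothetical; the sketch assumes that each rated item carries the individual answers and the reconciled answer, and it reports the minority proportion only over items rated by exactly three raters.

```python
from collections import defaultdict


def rater_summary(items):
    """Summarize each rater's agreement with the reconciled rating.

    `items` is a list of (ratings, reconciled) tuples, where `ratings`
    maps a rater id to that rater's answer for one journal question.
    """
    n_rated = defaultdict(int)
    n_agree = defaultdict(int)
    n_three = defaultdict(int)      # items with exactly three raters
    n_minority = defaultdict(int)   # rater differed from two agreeing raters

    for ratings, reconciled in items:
        for rater, answer in ratings.items():
            n_rated[rater] += 1
            if answer == reconciled:
                n_agree[rater] += 1
            others = [a for r, a in ratings.items() if r != rater]
            if len(others) == 2:
                n_three[rater] += 1
                if others[0] == others[1] and others[0] != answer:
                    n_minority[rater] += 1

    summary = {}
    for rater in n_rated:
        summary[rater] = {
            "n_rated": n_rated[rater],
            "agree_with_reconciled": n_agree[rater] / n_rated[rater],
            # None mirrors "not applicable" when there were only two raters.
            "in_minority": (n_minority[rater] / n_three[rater]
                            if n_three[rater] else None),
        }
    return summary


# Hypothetical policy ratings for two journal questions.
items = [
    ({"A": "yes", "B": "yes", "C": "no"}, "yes"),
    ({"A": "no", "B": "no", "C": "no"}, "no"),
]
print(rater_summary(items)["C"])
```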

IRR analysis

To estimate reliability, we used Fleiss’s κ and the intraclass correlation coefficient (ICC; Koo & Li, 2016; Kozlowski & Hattrup, 1992). We used Fleiss’s κ statistic when evaluating the IRR for each journal policy, procedure, and practice question (Fleiss, 1971). Because level of implementing each TOP standard is on an ordinal scale (range = 0–3), we used the ICC when assessing the IRR for TOP standard implementation (Kottner et al., 2011; Shrout & Fleiss, 1979). We used the two-way random-effects ICC model in our analysis, treating both journals and RAs as random effects (McGraw & Wong, 1996; Shrout & Fleiss, 1979). We used the “absolute agreement” definition and implemented the “single rater” type in our ICC analysis (Koo & Li, 2016). We categorized the κ statistic and ICC values using published guidelines (Koo & Li, 2016; Landis & Koch, 1977).
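For readers who want to see the two statistics spelled out, the following is a minimal, dependency-free Python sketch of Fleiss's κ and of the two-way random-effects, absolute-agreement, single-rater ICC, often written ICC(2,1). It is not the code used in this study, which relied on the irr package in R; the function names are assumptions, and the sketch returns point estimates only, without the confidence intervals used for interpretation here.

```python
from collections import Counter


def fleiss_kappa(ratings):
    """Fleiss's kappa for a list of items, each a list of category labels.

    Every item must be rated by the same number of raters. Returns None
    when chance agreement equals 1 (e.g., no "yes" responses at all).
    """
    n_items = len(ratings)
    n_raters = len(ratings[0])
    if any(len(item) != n_raters for item in ratings):
        raise ValueError("every item needs the same number of raters")

    category_totals = Counter()
    observed = 0.0
    for item in ratings:
        counts = Counter(item)
        category_totals.update(counts)
        agreeing_pairs = sum(c * (c - 1) for c in counts.values())
        observed += agreeing_pairs / (n_raters * (n_raters - 1))
    observed /= n_items

    total = n_items * n_raters
    chance = sum((c / total) ** 2 for c in category_totals.values())
    if chance == 1.0:
        return None
    return (observed - chance) / (1.0 - chance)


def icc_2_1(scores):
    """ICC(2,1): two-way random effects, absolute agreement, single rater.

    `scores` is a list of rows (one per journal) of numeric ratings, with
    one column per rater.
    """
    n, k = len(scores), len(scores[0])
    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]

    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = (ss_total - ss_rows - ss_cols) / ((n - 1) * (k - 1))

    denominator = ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    if denominator == 0:
        return None
    return (ms_rows - ms_error) / denominator


# Hypothetical yes/no ratings from two raters on four journals.
print(fleiss_kappa([["yes", "yes"], ["no", "no"], ["yes", "no"], ["no", "no"]]))

# Worked example data from Shrout and Fleiss (1979): 6 targets, 4 judges.
shrout_fleiss = [
    [9, 2, 5, 8],
    [6, 1, 3, 2],
    [8, 4, 6, 8],
    [7, 1, 2, 6],
    [10, 5, 6, 9],
    [6, 2, 4, 7],
]
print(round(icc_2_1(shrout_fleiss), 2))
```

On the Shrout and Fleiss (1979) example data, the sketch gives approximately 0.29, the value those authors report for ICC(2,1). Returning None when chance agreement equals 1 corresponds to the situation reported later in this article, in which κ could not be calculated for questions with no yes responses.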

IRA analyses

For all IRA analyses, we evaluated the overall and specific agreement proportions (Cicchetti & Feinstein, 1990; de Vet et al., 2017; Kottner et al., 2011; Kozlowski & Hattrup, 1992). Overall agreement proportion was defined as the number of cases in which raters agreed exactly relative to the total number of ratings. Specific agreement proportion was the observed agreement relative to each of the yes or no rating categories (for Equations 1–3, see Table 2). We reported overall agreement for each TOP standard. In addition, in a sensitivity analysis for evaluating IRR for each TOP standard, we also reported the information-based measure of disagreement and its 95% CI (Costa-Santos et al., 2010; Henriques, Antunes, Bernardes, et al., 2013; see Table S1 in the Supplemental Material).
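As an illustration of these definitions, the sketch below computes overall and specific agreement for the two-rater, yes-or-no case using the commonly cited formulation (Cicchetti & Feinstein, 1990). It is an assumption that this matches the equations in Table 2, which are not reproduced here; the three-rater policy ratings would need a generalization such as the one described by de Vet et al. (2017). The function name is hypothetical.

```python
def agreement_proportions(pairs, positive="yes"):
    """Overall and specific agreement for two raters and binary ratings.

    `pairs` is a list of (rater_1_answer, rater_2_answer) tuples.
    Proportions with a zero denominator are returned as None.
    """
    both_yes = both_no = disagree = 0
    for first, second in pairs:
        if first == positive and second == positive:
            both_yes += 1
        elif first != positive and second != positive:
            both_no += 1
        else:
            disagree += 1

    def ratio(numerator, denominator):
        return numerator / denominator if denominator else None

    total = both_yes + both_no + disagree
    return {
        "overall": ratio(both_yes + both_no, total),
        # Agreement on "yes" relative to all "yes" ratings given.
        "specific_yes": ratio(2 * both_yes, 2 * both_yes + disagree),
        # Agreement on "no" relative to all "no" ratings given.
        "specific_no": ratio(2 * both_no, 2 * both_no + disagree),
    }


# Hypothetical ratings of one question for four journals.
pairs = [("yes", "yes"), ("no", "no"), ("yes", "no"), ("no", "no")]
print(agreement_proportions(pairs))
```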

Abbreviations and Definitions

Software

Data processing, management, visualization, and part of descriptive analyses were done in Python (Van Rossum & Drake, 2009). We conducted the IRA and IRR analyses in R using RStudio (R Development Core Team, 2021; RStudio Team, 2021). We used the obs.agree package in R for IRA analysis and the irr package for IRR analysis (Gamer et al., 2019; Henriques, Antunes, & Costa-Santos, 2013). Annotated data processing and analysis-code notebooks are available at OSF (https://osf.io/xtdb6/). As recommended in GRRAS, we used the lower bound of the 95% CI to interpret the IRA and ICC measures.

Results

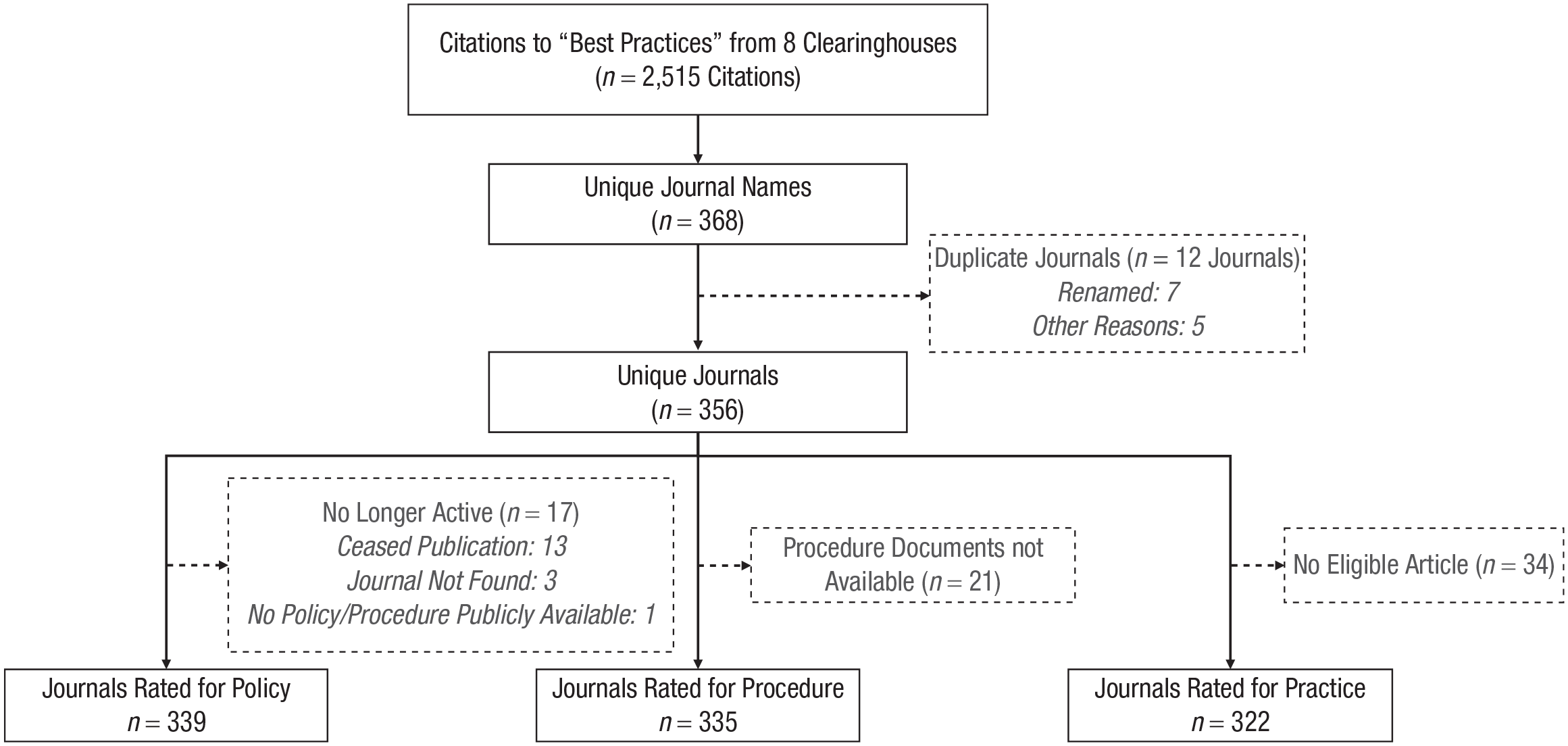

We rated 339 journals using the policy instrument, 335 journals using the procedure instrument, and 322 journals using the practice instrument (Fig. 1). Fifteen RAs were recruited and started rating policy, procedure, and practice documents in December 2019, May 2020, and November 2020, respectively (see Fig. S1 in the Supplemental Material). RAs completed all ratings by January 2021 and performed comparably with each other. The percentage agreement with the reconciled rating used for analysis was 95% to 100% across all RAs and instruments (Table 3). Moreover, the percentage of times each RA disagreed with the other two RAs on questions in the policy instrument was low, between 1% and 3%.

Journal flow diagram.

Rater Agreement on Journal Policy, Procedure, and Practice Ratings

Note: TOP = Transparency and Openness Promotion; NA = not applicable.

The original TOP Guidelines questions (n = 11) were rated by seven raters at Indiana University-Bloomington campus, and additional TOP Factor questions (n = 2) were rated by four raters at the Indiana University-Indianapolis campus.

We did not calculate the proportion of times that a rater’s rating was in the minority for procedure or practice ratings data because there were only two raters.

Journal policies (instructions to authors)

For journal policies, we present estimates for agreement and interrater reliability of the 13 individual questions in our instrument, agreement and IRR of the scores for the 10 standards in the TOP Factor, and agreement and reliability of our final ratings compared with an external data source (Center for Open Science, 2016b).

Agreement and reliability of individual questions

For all 13 policy questions, overall agreement and specific agreement on the no responses exceeded 90%. The prevalence of yes responses was low (Table 4), ranging from 1% to 23% (Mdn = 2%). Specific agreement on yes responses was inconsistent; there was lower specific agreement for questions with few yes responses. Fleiss’s κ values ranged from −0.008 to 0.903 (M = 0.507, SD = 0.371) and were statistically significantly different from 0 for most policy questions (10/13; 77%). Whereas the IRR statistics were “almost perfect” for four questions (4/13; 31%) and “substantial” for one question (1/13; 8%), IRR values were “moderate” or worse for most questions (8/13; 62%).
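A small worked example may help show how overall agreement can be high while specific agreement on yes responses and κ are low when yes responses are rare. The counts are hypothetical and are not taken from our data: suppose two raters assess 100 journals, both answer yes for 1 journal, both answer no for 95 journals, and they disagree on 4 journals. The specific-agreement formulas used here are the usual two-rater forms.

$$
\begin{aligned}
P_{\text{overall}} &= \frac{1 + 95}{100} = 0.96, \\
P_{\text{yes}} &= \frac{2(1)}{2(1) + 4} \approx 0.33, \qquad
P_{\text{no}} = \frac{2(95)}{2(95) + 4} \approx 0.98, \\
p_{\text{yes}} &= \frac{2(1) + 4}{200} = 0.03, \qquad
P_e = 0.03^2 + 0.97^2 = 0.9418, \\
\kappa &= \frac{P_{\text{overall}} - P_e}{1 - P_e} = \frac{0.96 - 0.9418}{1 - 0.9418} \approx 0.31 .
\end{aligned}
$$

In this hypothetical case, 96% overall agreement corresponds to only “fair” reliability on the Landis and Koch (1977) scale, because agreement expected by chance is already about 94%.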

Interrater Agreement and Interrater Reliability for Questions in the Policy Rating Instrument Among the Three Raters

Note: Order of questions might not match that in the protocol study because the policy instrument was modified after publication of the protocol study. IRA = interrater agreement; IRR = interrater reliability; PE = point estimate; CI = confidence interval.

There was no skip logic for the questions. Hence, all raters responded (yes or no) to these questions for all journals.

Interpretation based on the following: < 0.00 = poor; 0.00–0.20 = slight; 0.21–0.40 = fair; 0.41–0.60 = moderate; 0.61–0.80 = substantial; 0.81–1.00 = almost perfect (Landis & Koch, 1977).

Additional TOP Factor Question 2 had four possible responses (no, “Preregistration” badge, “Open Data” badge, and “Open Materials” badge). We evaluated the IRA/IRR for the last three responses. Here, the number of yes responses column shows the number of times that raters checked the box for each of the possible responses.
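The interpretation bands for κ listed in the table notes can be expressed as a small lookup. This is a sketch only: the function name is hypothetical, and assigning values that fall between the printed bands (e.g., 0.205) to the higher band's lower neighbor is an assumption about how the boundaries are handled.

```python
def landis_koch_label(kappa):
    """Map a kappa estimate to the Landis and Koch (1977) labels."""
    if kappa is None:
        return "not applicable"  # kappa could not be calculated
    if kappa < 0.0:
        return "poor"
    # Upper bounds are inclusive; gaps between printed bands (assumption)
    # fall into the next band up.
    bands = (
        (0.20, "slight"),
        (0.40, "fair"),
        (0.60, "moderate"),
        (0.80, "substantial"),
        (1.00, "almost perfect"),
    )
    for upper, label in bands:
        if kappa <= upper:
            return label
    raise ValueError("kappa cannot exceed 1")


for value in (-0.008, 0.507, 0.903):
    print(value, landis_koch_label(value))
```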

Agreement and reliability for TOP standards

Agreement on assessments for journal level of implementation was high for all standards (Table 5), ranging from 79% for “data transparency” to 99% for “open science badges.” However, as with individual questions, IRR varied across the standards. The ICC ranged from −0.004 for “registration of analysis plan” to 0.883 for “open science badges” (M = 0.479, SD = 0.303). In addition, no standard had excellent reliability: three standards had “good” (3/10; 30%), one “moderate” (1/10; 10%), and six “poor” IRR (6/10; 60%).

Interrater Reliability and Interrater Agreement for TOP Standard Levels of Implementation

Note: IRA = interrater agreement; IRR = interrater reliability; TOP = Transparency and Openness Promotion; CI = confidence interval; ICC = intraclass correlation coefficient.

Interpretation is based on the lower bound of the 95% CI (< 0.50 = poor; 0.50–0.75 = moderate; 0.75–0.90 = good; >0.90 = excellent reliability; Koo & Li, 2016).

The TOP rubric refers to “data and materials.” We assessed Standards for citing data and statistical code (rows in italics) separately, and we assigned the higher of those two values to citation Standards.

Typically, a journal would get a Level 2 for a Standard if it requires that authors actually use an open-science practice. The exceptions are the Standards for preregistering a study, including its analysis plan, and the replication standard. In those cases, Level 2 states that the journal verifies compliance with the preregistered plan or its analysis plan or reviews replication studies blinded to their results.

Agreement with an external data source

In April 2022, we identified ratings on OSF for 1,575 journal policies, including TOP Factor scores for individual standards and for the total TOP Factor (Center for Open Science, 2016b). Of the 339 journal policies rated in our study, 134 (40%) were also rated on OSF (Fig. 2). Total TOP Factor scores were the same for 56 (42%) journals. Scores on OSF were higher for 49 (37%) journals, and scores in our study were higher for 29 (22%) journals. The mean absolute difference between the scores was 2, and ICC was 0.602 (95% CI = [0.426, 0.734]), suggesting a “moderate” reliability between the two scores (see Tables S2 and S3 in the Supplemental Material).
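The descriptive part of this comparison (overlap, direction of differences, and mean absolute difference) can be sketched as below. The journal names and scores are invented for illustration, and the function name is hypothetical; the ICC reported above would be computed separately on the paired scores.

```python
def compare_total_scores(ours, external):
    """Compare total TOP Factor scores for journals rated in both sources.

    `ours` and `external` map a journal name to its total score.
    """
    shared = sorted(set(ours) & set(external))
    if not shared:
        raise ValueError("no journals were rated in both sources")
    differences = [ours[j] - external[j] for j in shared]
    return {
        "n_shared": len(shared),
        "same": sum(d == 0 for d in differences),
        "external_higher": sum(d < 0 for d in differences),
        "ours_higher": sum(d > 0 for d in differences),
        "mean_absolute_difference": sum(abs(d) for d in differences) / len(shared),
    }


# Hypothetical scores; only journals A, B, and C appear in both sources.
ours = {"Journal A": 3, "Journal B": 0, "Journal C": 5, "Journal D": 2}
external = {"Journal A": 3, "Journal B": 2, "Journal C": 4, "Journal E": 9}
print(compare_total_scores(ours, external))
```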

Overlap between the OSF and TRUST total Transparency and Openness Promotion Factor scores (134 journals). We added noise to overlapping circles, which appear as darker areas in the plot.

Journal procedures (manuscript-submission systems)

For the 26 procedure questions, overall agreement and specific agreement on the no responses exceeded 90% for 23 and 24 questions, respectively. The prevalence of yes responses was low (Table 6), ranging from 0% to 29% (Mdn = 3%). As with journal policies, the specific agreement on yes responses was inconsistent such that there was lower specific agreement for questions with few yes responses. Fleiss’s κ values ranged from −0.017 to 1.000 (M = 0.457, SD = 0.390) and were statistically significantly different from 0 for most procedure questions (18/26; 69%). IRR values were moderate or worse for most questions (14/26; 54%), although some were almost perfect (8/26; 31%) and substantial (4/26; 15%).

Interrater Agreement and Interrater Reliability for Questions in the Procedure Rating Instrument Between the Two Raters

Note: IRA = interrater agreement; IRR = interrater reliability; PE = point estimate; CI = confidence interval; NA = not applicable.

There was no skip logic for the questions. Hence, all raters responded (yes or no) to these questions for all journals.

Interpretation based on the following: poor < 0.00; slight = 0.00–0.20; fair = 0.21–0.40; moderate = 0.41–0.60; substantial = 0.61–0.80; almost perfect = 0.81–1.00 (Landis & Koch, 1977).
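The interpretation bands in this note can be written as a small lookup. This is a sketch only: the function name `interpret_kappa` is hypothetical, and because the bands are stated to two decimals, we assume each upper bound is inclusive (so a value such as 0.205 would be labeled "fair").

```python
def interpret_kappa(kappa):
    """Map a kappa estimate to the Landis and Koch (1977) labels used in the tables."""
    if kappa < 0:
        return "poor"
    # (inclusive upper bound, label); the inclusive bounds are an assumption.
    bands = [
        (0.20, "slight"),
        (0.40, "fair"),
        (0.60, "moderate"),
        (0.80, "substantial"),
        (1.00, "almost perfect"),
    ]
    for upper, label in bands:
        if kappa <= upper:
            return label
    raise ValueError("kappa cannot exceed 1")


# The smallest and the mean kappa reported for the procedure questions.
print(interpret_kappa(-0.017), interpret_kappa(0.457))
```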

Consistency check for journal procedures

To identify potential errors in our ratings of journal procedures, we checked for consistency across journals with the same publisher and submission system (“publisher–submission system pairs”). Three questions did not have any inconsistency across the publisher–submission system pairs (3/26; 12%; Table 7). The largest number of inconsistent reconciled ratings was for the question regarding whether the submission process included a field for indicating data availability (16/26; 62%). After removing inconsistencies because of errors in our ratings, our ratings improved for nine of 26 questions (35%). Ratings did not change for 14 questions because procedures truly varied across journals with both the same publisher and submission system (14/26; 54%).
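A consistency check of this kind can be sketched as a grouping step. The function name `find_inconsistencies`, the tuple layout, and the publisher, system, and question labels below are all hypothetical and do not describe the study's actual data files.

```python
from collections import defaultdict


def find_inconsistencies(ratings):
    """Return (publisher, system, question) groups that received more than one answer.

    `ratings` is an iterable of (publisher, system, journal, question, answer)
    tuples; this layout is an assumption made for illustration.
    """
    answers = defaultdict(set)
    for publisher, system, _journal, question, answer in ratings:
        answers[(publisher, system, question)].add(answer)
    return sorted(key for key, seen in answers.items() if len(seen) > 1)


# Hypothetical reconciled ratings for three journals.
rows = [
    ("Publisher A", "System X", "Journal 1", "Q1", "yes"),
    ("Publisher A", "System X", "Journal 2", "Q1", "no"),
    ("Publisher A", "System X", "Journal 1", "Q2", "no"),
    ("Publisher A", "System X", "Journal 2", "Q2", "no"),
    ("Publisher B", "System X", "Journal 3", "Q1", "yes"),
]
print(find_inconsistencies(rows))
```

A flagged group is only a prompt for manual review. As reported above, some inconsistencies reflected rating errors, whereas others reflected procedures that truly varied within a publisher–submission system pair.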

Inconsistency of Procedures Within Publication-Submission Systems

There was no skip logic for the questions. Hence, all raters responded (yes or no) to these questions for all journals.

This is the number of times there was an inconsistent case (different ratings for the journals within the same publication-submission system) for a question.

Total number (denominator in proportion) of publisher–submission system combinations was 108.

Journal practices (journal articles)

Overall agreement was above 86% for questions on journal practices. The prevalence of yes responses for questions in the practice instrument was low (Table 8), ranging from 0% to 16% (Mdn = 5%). As with journal policies and procedures, specific agreement on yes responses was lower than agreement on the no responses, and it was notably low for questions on journal practices with few yes responses. However, the practice instrument was comparatively more reliable than the policy and procedure instruments. Fleiss’s κ estimates ranged from 0.242 to 1.000 (M = 0.612, SD = 0.211) and were statistically significantly different from 0 for most practice questions (12/14; 86%). IRR statistics were almost perfect for two questions (2/14; 14%), substantial for four questions (4/14; 29%), and moderate for five questions (5/14; 36%), the largest single category. IRR values were not poor for any question. We could not calculate Fleiss’s κ for two questions because there were no yes responses.
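The reason κ cannot be calculated when a question has no yes responses follows from the standard definition of Fleiss's κ for two response categories. The notation below is ours and is not taken from the study: \bar{P} is observed agreement, \bar{P}_e is chance agreement, and p_yes and p_no are the pooled proportions of yes and no responses.

```latex
\[
\kappa = \frac{\bar{P} - \bar{P}_e}{1 - \bar{P}_e},
\qquad
\bar{P}_e = p_{\text{yes}}^{2} + p_{\text{no}}^{2}.
\]
% With no yes responses, p_yes = 0 and p_no = 1, and the raters agree on every item:
\[
\bar{P}_e = 0^{2} + 1^{2} = 1, \quad \bar{P} = 1
\quad\Longrightarrow\quad
\kappa = \frac{1 - 1}{1 - 1} = \frac{0}{0} \quad \text{(undefined)}.
\]
```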

Interrater Agreement and Interrater Reliability for Questions in the Practice Rating Instrument Between the Two Raters

Note: IRA = interrater agreement; IRR = interrater reliability; PE = point estimate; CI = confidence interval; NA = specific agreement on yes responses and Fleiss’s κ cannot be calculated because there were 0 yes responses.

There was no skip logic for the questions. Hence, all raters responded (yes or no) to these questions for all journal articles.

Interpretation based on the following: poor < 0.00; slight = 0.00–0.20; fair = 0.21–0.40; moderate = 0.41–0.60; substantial = 0.61–0.80; almost perfect = 0.81–1.00 (Landis & Koch, 1977).

Response options for Questions 4a and 5a were “no”; “yes, study was not registered”; and “yes, study was registered.” The latter two options were combined in analyses.
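Combining the two yes options amounts to a simple recoding before analysis. The following sketch assumes responses are stored as the text labels quoted in this note; the function name `collapse_response` is hypothetical.

```python
YES_OPTIONS = {
    "yes, study was not registered",
    "yes, study was registered",
}


def collapse_response(response):
    """Recode a three-option response for Questions 4a and 5a as 'yes' or 'no'."""
    normalized = response.strip().lower()
    if normalized == "no":
        return "no"
    if normalized in YES_OPTIONS:
        return "yes"
    raise ValueError(f"unexpected response option: {response!r}")


print(collapse_response("Yes, study was registered"))
```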

Discussion

This study demonstrated the feasibility of using a structured process and instruments to assess journal implementation of the TOP Guidelines. Trained graduate RAs rated instructions to authors, manuscript-submission systems, and articles published in a large cohort of journals. They performed comparably with each other and had high interrater agreement on level of implementation in journal policies (i.e., TOP Level 0, 1, 2, or 3). In addition, we found high overall interrater agreement on the questions about specific aspects of TOP in all three of our instruments. Agreement was particularly high when journals did not implement TOP standards in their instructions to authors, submission systems, and journal articles. Although raters generally agreed when journals had not implemented a standard, they found it difficult to identify the level at which each TOP standard had been implemented. That is, specific agreement was low when journals had language related to TOP. Most questions had moderate or worse IRR, and we did not have excellent reliability in rating the level of implementation of any TOP standard. For some items, such as analysis plan, differences in reliability suggest that raters might be better able to evaluate the transparency and openness of journal articles compared with policies and procedures. Finally, we found that our ratings sometimes differed from TOP Factor scores rated by others, but we were unable to determine the timing of those ratings, and it is possible that some journals updated their policies during the time between the two ratings.

Our study highlights several obstacles to monitoring journal implementation of standards to promote open research. In particular, it was time-consuming to train RAs, identify relevant documents, and rate those documents. The rigorous processes used in our study would be expensive to scale and to sustain. In addition, it might be challenging to keep journal ratings up to date if journals change their policies, procedures, and practices without public notice. Although some TOP standards could be monitored automatically, our study suggests that automated surveillance would be challenging. Most manuscript-submission systems do not request structured information about the items needed to assess TOP implementation (e.g., study registration number, link to data set). We also found that TOP implementation varies across journals with both the same publisher and submission system. Because information needed to assess transparency and openness does not appear consistently and because reports often lack explanations and metadata needed to evaluate supplemental materials, it might not be possible to design a simple automated program to assess TOP implementation. Machine learning could address some of these challenges in theory; however, the small number of examples of implementation at Levels 1 to 3 would make it difficult to train a model. More examples of stringent policies, procedures, and practices may be needed for machines to recognize and differentiate among more stringent practices.

Revising the TOP Guidelines

Our study shows that TOP, like other guidelines (Logullo et al., 2020), does not translate easily into a measure for research on journal quality. As noted by others, TOP standards and their corresponding levels of implementation are difficult to assess because of their complex requirements described in multiple clauses (Cashin et al., 2021; Hansford et al., 2021; Spitschan et al., 2020). We found it challenging to parse compound requirements into clear and concise questions, and it was challenging to identify those facts that would allow us to determine objectively whether each standard had been implemented. Conversely, some constructs that are separated in TOP could be difficult to distinguish consistently in practice, such as registering a protocol and registering an analysis plan.

We sometimes struggled to distinguish different levels of TOP implementation because journals provided information on some but not all requirements. For example, it was unclear whether journals met Level 2 for data transparency when instructions to authors stated that data sharing in a trusted digital repository was required for publication but the instructions to authors did not state whether data sets must include all variables described in the manuscript. It was also difficult to rate TOP implementation when journals had multiple policies that applied to different study designs. For example, it was unclear whether journals met Level 2 for methods transparency when they required systematic reviews to adhere to the PRISMA Guidelines (Page et al., 2021) but did not require adherence to reporting guidelines for other study designs.

Rating TOP implementation assumes that journals have a single coherent set of policies, but we also found contradictions in the information journals provide to authors. That is, instructions to authors for a single journal might appear across multiple web pages and include hyperlinks to pages with additional hyperlinks. Dispersed instructions are difficult to interpret and follow, and multiple pages provide opportunities for policies to become internally inconsistent when pages are updated by different journal staff at different times. Furthermore, instructions to authors sometimes had ambiguous and confusing language. We particularly struggled with varying use of modal verbs in both journal documents and current TOP guidance, for example, using “should” instead of “must” to describe policies that appear to require an open research practice (see Box 1).

Box 1.

Examples of Ambiguous Language in Policy Documents

1. “Data and programs should be archived in the [repository].”

2. “Citations should include the type of material submitted.”

3. “[Journal] strongly encourages that all datasets on which the conclusions of the paper rely should be available to readers.”

4. “A CONSORT Statement includes recommendations, a checklist of items that should be included in a comprehensive report, and a participant flow diagram.”

To support future research about TOP implementation, the Center for Open Science and the broader scientific community could adopt a standard process and instruments, including training materials, that explain how to use TOP to assess journal quality. Currently, TOP is described across several websites, guidance documents, and rubrics that are subtly but meaningfully different. For example, citation standards are sometimes described as applying to data sets only, and they are sometimes described as applying to data sets, code, and research materials. Moreover, these resources provide information for journals, including examples of language that journals could incorporate in their instructions to authors, but they do not provide tools to evaluate policy language written by others.

We anticipate that our process and instruments could help in the development of these resources to improve TOP and its implementation. We translated TOP into factual questions, display logic, and scoring algorithms. Like checklists included in guidelines such as CONSORT and PRISMA, operationalizing TOP in this way might facilitate understanding and communication with editors and authors. By providing a structured interpretation of the guidelines, our instruments and algorithms could also help readers identify areas of agreement and disagreement in their interpretations of TOP. For example, if the differences we identified between Level 1 and Level 2 for a given standard are not what the developers intended, then TOP could be revised to clarify how the levels should be distinguished. Finally, current resources address journal policies only. Our process and instruments could help in the development of official guidance on implementing TOP standards in journal procedures (i.e., manuscript-submission systems) and practices (i.e., information reported in articles).

Limitations

In addition to the issues identified above, limited reliability in our study could be explained by limitations in our process, instruments, and team. To minimize these sources of error, the two PIs, who have contributed to the development of TOP standards, led the design of the process and instruments, trained RAs in their use, and provided close supervision throughout the study. We deconstructed criteria for TOP standards into factual questions with detailed instructions, and we programmed the instruments using software with validity checks. We also checked for consistency across publisher–submission system pairs, which we hypothesized would have similar ratings. These methods improved on previous approaches that asked raters to consider all criteria simultaneously and to record the level of implementation for each standard in spreadsheets. For these reasons, we suspect that different raters or methods would not produce more reliable ratings. It is also a limitation that journals in our study publish research for which some but not all TOP standards are relevant. We focused specifically on applicable study designs by including randomized experiments and other quantitative evaluations of intervention effectiveness. Finally, we included only one article per journal to rate practices. If some positive practices we identified were not representative of all articles in the included journals, then our results might overestimate TOP practices.

Conclusions

TOP aims to align scientific ideals with research practice. Unfortunately, it has not been implemented widely by journals in the behavioral, social, and health sciences (Grant et al., 2022; Mayo-Wilson, Grant, & Supplee, 2022) despite journal endorsement and widespread community support (Naaman et al., 2023). We conclude that revising TOP might improve its interpretability and use. Although we found high agreement among raters using our instruments, our experiences throughout this study indicate that limited reliability might arise from ambiguities in TOP and associated instructions. Standardized processes and instruments for assessing TOP implementation, accompanied by training materials, could advance efforts to implement, assess, and monitor open research policies, procedures, and practices. Monitoring transparency practices would be easier if journals were to collect structured data and metadata about research reports and associated elements such as registrations, data sets, and code.

Supplemental Material

Supplemental material, sj-docx-1-amp-10.1177_25152459221149735 for Evaluating Implementation of the Transparency and Openness Promotion Guidelines: Reliability of Instruments to Assess Journal Policies, Procedures, and Practices by Sina Kianersi, Sean Patrick Grant, Kevin Naaman, Beate Henschel, David Mellor, Shruti Apte, Jessica E. Deyoe, Paul Eze, Cuiqiong Huo, Bethany L. Lavender, Nicha Taschanchai, Xinlu Zhang and Evan Mayo-Wilson in Advances in Methods and Practices in Psychological Science

Footnotes

Acknowledgements

Additional TRUST collaborators include Lauren Supplee (Child Trends, Bethesda, Maryland), Emily Fortier (Indiana University, Indianapolis, Indiana), Madison Haralovich (Indiana University School of Dentistry, Indianapolis, Indiana), and Nick Liu (Indiana University Network Sciences Institute, Bloomington, Indiana).

Transparency

Action Editor: David A. Sbarra

Editor: David A. Sbarra
