Abstract

Advances in mobile and wireless technologies offer tremendous opportunities for extending the reach and impact of psychological interventions and for adapting interventions to the unique and changing needs of individuals. However, insufficient engagement remains a critical barrier to the effectiveness of digital interventions. Human delivery of interventions (e.g., by clinical staff) can be more engaging but potentially more expensive and burdensome. Hence, the integration of digital and human-delivered components is critical to building effective and scalable psychological interventions. Existing experimental designs can be used to answer questions either about human-delivered components that are typically sequenced and adapted at relatively slow timescales (e.g., monthly) or about digital components that are typically sequenced and adapted at much faster timescales (e.g., daily). However, these methodologies do not accommodate sequencing and adaptation of components at multiple timescales and hence cannot be used to empirically inform the joint sequencing and adaptation of human-delivered and digital components. Here, we introduce the hybrid experimental design (HED)—a new experimental approach that can be used to answer scientific questions about building psychological interventions in which human-delivered and digital components are integrated and adapted at multiple timescales. We describe the key characteristics of HEDs (i.e., what they are), explain their scientific rationale (i.e., why they are needed), and provide guidelines for their design and corresponding data analysis (i.e., how can data arising from HEDs be used to inform effective and scalable psychological interventions).

Advances in digital technologies (e.g., mobile and wearable devices) have created unprecedented opportunities to extend the reach and impact of psychological interventions. Interventions that leverage automated software tools are relatively inexpensive and can deliver in-the-moment support (Goldstein et al., 2017; Huh et al., 2020). However, insufficient engagement (i.e., the investment of energy in a focal task or stimulus) remains a critical barrier to the effectiveness of digital interventions (Nahum-Shani, Shaw, et al., 2022). Human delivery of interventions (e.g., by clinical staff) can be more engaging (Ritterband et al., 2009; Schueller et al., 2017) but potentially more expensive and burdensome. Hence, integrating digital- and human-delivered intervention components requires balancing effectiveness against scalability and sustainability (Schueller et al., 2017; Wentzel et al., 2016).

The capacity to sequence and adapt intervention delivery to the changing needs of individuals is viewed as an important innovation in psychological science (Kitayama, 2021; Koch et al., 2021; Lattie et al., 2022). Interventions are considered “adaptive” if they use time-varying (i.e., dynamic) information about the individual (e.g., location, emotions) to modify the type or intensity of interventions over time. Human-delivered intervention components are typically adapted at relatively slow timescales (e.g., weekly, monthly). For example, the adaptive drug court program for drug-using offenders (Marlowe et al., 2012) starts with standard substance use counseling and then every month uses information about the participant’s response status to decide whether to enhance the intensity of the counseling sessions. Here, the adaptation occurs monthly. Digital-intervention components, on the other hand, are typically adapted at a much faster timescale (e.g., every minute, daily). For example, Sense2Stop (Battalio et al., 2021) is a mobile intervention that uses information about the participant’s stress, which is collected every minute via a wearable device, to decide whether to deliver a push notification suggesting a brief stress-management exercise. Here, the adaptation occurs every minute.

Existing experimental designs and related data-analytic methods can be used to answer questions either about how to best employ components that are sequenced and adapted at relatively slow timescales or about how to best employ components that are sequenced and adapted at much faster timescales. However, these methodologies do not accommodate sequencing and adaptation of components at multiple timescales and hence cannot be used to answer scientific questions about how to best integrate human-delivered and digital components. To close this gap, we introduce the hybrid experimental design (HED)—a new experimental approach that can be used to answer scientific questions about the construction of psychological interventions in which human-delivered and digital components are integrated and adapted at multiple timescales. HEDs enable researchers to sequentially randomly assign study participants at multiple timescales, thereby providing data that can answer questions about how to best combine components that are sequenced and adapted at multiple timescales.

The goal of this article is to explain why HEDs are needed, what their key characteristics are, and how they can inform psychological interventions. To achieve this goal, we first explain how standard adaptive interventions (ADIs) typically guide the adaptation of intervention components at relatively slow timescales. We then describe how the sequential, multiple-assignment, randomized trial (SMART), an experimental design involving sequential randomizations at relatively slow timescales, can be used to answer scientific questions about constructing ADIs. Next, we explain how just-in-time adaptive interventions (JITAIs) typically guide the adaptation of intervention components at relatively fast timescales. We then describe how the microrandomized trial (MRT), an experimental design involving sequential random assignments at relatively fast timescales, can be used to answer scientific questions about constructing JITAIs. Building on this work, we define multimodality adaptive interventions (MADIs) as an intervention-delivery framework in which human-delivered and digital components are integrated and adapted at multiple timescales, slow and fast. Finally, we define and describe key features of the HED, an experimental design involving sequential randomizations at multiple timescales, and explain how HEDs can be used to answer scientific questions about constructing MADIs. Throughout, we use an example that is based on existing research but modified for illustrative purposes. Key terms and definitions are summarized in Table 1.

Table 1. Definitions of Key Terms

ADIs

An ADI is an intervention-delivery framework that typically guides the adaptation of human-delivered components at timescales of weeks or months (Collins, 2018; Murphy et al., 2007). These interventions are becoming increasingly popular across various domains of psychological science, including health (Czajkowski & Hunter, 2021; Spring, 2019), clinical (Patrick et al., 2021; Pelham et al., 2016), educational (Chow & Hampton, 2022; Majeika et al., 2020), and organizational (Eden, 2017; Howard & Jacobs, 2016) psychology. In practice, an ADI is a protocol that specifies how tailoring variables (i.e., time-varying information about the individual’s progress and status) should be used by practitioners (e.g., therapists, teachers, coaches) to decide whether and how to modify intervention components at each of a few decision points (i.e., points in time at which intervention decisions should be made) during the course of treatment. ADIs are designed to achieve a distal outcome (i.e., a long-term goal) by achieving proximal outcomes, which are the short-term goals the adaptation is intended to achieve. The proximal outcomes are mechanisms of change (i.e., mediators) through which the adaptation can help achieve the distal outcome (Nahum-Shani & Almirall, 2019).

The example in Figure 1 is a hypothetical ADI to prevent substance use and violent behavior among adolescents visiting the emergency department (ED). This example is based on an existing research project (Bernstein et al., 2022) but modified for illustrative purposes. In this example, all participants are provided a single human-delivered session during an ED visit, focused on reducing risks and increasing resilience, plus a digital intervention that includes daily messages (e.g., protective behavioral strategies, alternative leisure activities). The tailoring variable, response status, is assessed 4 weeks after discharge, and youths classified as early nonresponders (i.e., individuals reporting substance use or physical aggression) are offered human-delivered (remote) coaching in addition to the digital intervention. Individuals classified as early responders (i.e., youths reporting no substance use and no physical aggression) continue with the digital intervention. This intervention is “adaptive” because it uses time-varying information (here, about the individual’s response status) to decide whether and how to intervene subsequently (here, whether to add human coaching). This intervention includes decision points at Weeks 0 (ED visit) and 4 because the goal is to address conditions (i.e., early signs of nonresponse) that tend to unfold at a relatively slow timescale (here, over several weeks).

Fig. 1. An adaptive intervention (ADI) to prevent substance use and violent behavior among adolescents visiting the emergency department (ED).
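
The Week 4 decision rule of this hypothetical ADI can be written down compactly. The following is a minimal Python sketch of that rule as described in the text; the function name, argument names, and option labels are hypothetical and chosen only for illustration.

```python
def adi_week4_option(reported_substance_use: bool, reported_aggression: bool) -> str:
    """Return the intervention option offered at the Week 4 decision point.

    Tailoring variable: response status. A youth is an early nonresponder
    if they report substance use OR physical aggression.
    """
    is_nonresponder = reported_substance_use or reported_aggression
    if is_nonresponder:
        # Nonresponders: human-delivered (remote) coaching is added.
        return "digital intervention + coaching"
    # Responders: continue with the digital intervention only.
    return "digital intervention"


# Illustrative calls (inputs are made up).
option_for_responder = adi_week4_option(False, False)
option_for_nonresponder = adi_week4_option(True, False)
```

The rule has a single tailoring variable and a single threshold, which reflects the slow timescale of this ADI: after the ED visit at Week 0, a decision is made only once, at Week 4.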

The SMART

Investigators interested in developing ADIs often have scientific questions about how to best construct these interventions. As an example, suppose the goal is to determine (a) whether it is better (e.g., in terms of reduction in substance use by Week 16) to augment the single session provided in the ED with a digital intervention alone or with both a digital intervention and (human-delivered) coaching and (b) whether it is better to step up the intensity of the initial intervention for participants who show signs of nonresponse by Week 4.

The SMART (Lavori & Dawson, 2000; Murphy, 2005) is an experimental design that is being used extensively in psychological research (for a review of studies, see Ghosh et al., 2020) to inform the development of ADIs. At each ADI decision point, participants are randomly assigned among a set of intervention options. For example, the hypothetical SMART in Figure 2 can be used to answer the questions outlined above. This design involves two stages of random assignment that correspond to two decision points in the ADI of interest. First, youths in the ED are randomly assigned (.5 probability) to have the single session augmented with either a digital intervention alone or a digital intervention combined with coaching. Second, at Week 4, nonresponders (i.e., youths reporting substance use or physical aggression) are randomly assigned again (.5 probability) to either continue with the initial intervention or step up to a more intense intervention; responders (i.e., youths reporting no substance use and no physical aggression) continue with the initial intervention. Suppose the primary distal outcome is the number of substance use (e.g., alcohol, marijuana use) days measured at Week 16. Note that the design in Figure 2 is considered a “prototypical SMART” (Nahum-Shani et al., 2020); that is, the SMART includes two stages of random assignment, each stage involves random assignment to two ADI options, early response status is determined at a single point in time, and only nonresponders get randomly assigned again to subsequent options (i.e., second-stage random assignment is restricted to nonresponders).

Fig. 2. An example sequential, multiple-assignment, randomized trial to empirically develop an adaptive intervention (ADI) for preventing substance use and violent behavior among adolescents visiting the emergency department (ED).
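
The two stages of random assignment in this prototypical SMART can be sketched in a few lines of Python. This is an illustration under stated assumptions, not trial software: the function names and option labels are hypothetical, and response status is passed in as an argument, whereas in a real trial it would be observed at Week 4.

```python
import random

FIRST_STAGE_OPTIONS = ("digital alone", "digital + coaching")
SECOND_STAGE_OPTIONS = ("continue", "step up")


def randomize_first_stage(rng: random.Random) -> str:
    """Week 0: each initial option is assigned with probability .5."""
    return rng.choice(FIRST_STAGE_OPTIONS)


def randomize_second_stage(is_nonresponder: bool, rng: random.Random) -> str:
    """Week 4: only nonresponders are randomly assigned again (probability .5)."""
    if is_nonresponder:
        return rng.choice(SECOND_STAGE_OPTIONS)
    # Responders are not re-randomized; they continue the initial intervention.
    return "continue"


rng = random.Random(2023)  # fixed seed so the illustration is reproducible
first = randomize_first_stage(rng)
second = randomize_second_stage(is_nonresponder=True, rng=rng)
```

Keeping the two stages as separate functions mirrors the temporal order of the design: the second-stage assignment cannot be made until response status has been assessed.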

The analyses for addressing the two scientific questions above leverage outcome information across multiple experimental conditions (Collins et al., 2014; Nahum-Shani et al., 2012a). Specifically, the first question (whether it is better to augment the single ED session with a digital intervention alone or with both a digital intervention and human-delivered coaching) can be answered by comparing the mean outcome across all the conditions in which participants were offered the digital intervention alone initially (Fig. 2, A–C) to the mean outcome across all the conditions in which participants were offered the digital intervention combined with coaching initially (Fig. 2, D–F). Note that this comparison would involve using outcome data from the entire sample to estimate the effect, which can be viewed as the main effect of the initial components averaging over the subsequent components for responders and nonresponders (Nahum-Shani, Dziak, & Wetter, 2022; Nahum-Shani et al., 2020). The second question (whether it is better to step up the intensity of the initial intervention for participants who show signs of nonresponse by Week 4) can be answered by comparing the mean outcome across the two experimental conditions in which nonresponders were offered the step-up subsequently (Fig. 2, B and E) to the mean outcome across the two conditions in which nonresponders continued with the initial intervention (Fig. 2, C and F). Note that this comparison would involve using outcome data from the entire sample of nonresponders to estimate the effect, which can be viewed as the main effect of the subsequent components among nonresponders averaging over the initial components (Nahum-Shani, Dziak, & Wetter, 2022; Nahum-Shani et al., 2020).

The multiple, sequential, random assignments in this SMART yield four “embedded” ADIs (Table 2). One of these ADIs, represented by Cells A and B, is described in Figure 1. Data from SMARTs can be used to compare embedded ADIs and to answer a wide variety of scientific questions beyond the main effects of the first- and second-stage components (e.g., Nahum-Shani et al., 2012b, 2020). The extant literature highlights the efficiency of SMARTs in achieving statistical power for addressing scientific questions about building ADIs (Collins et al., 2014; Murphy, 2005; Nahum-Shani et al., 2012a).

Table 2. Four ADIs Embedded in the SMART in Figure 2

Note: ADI = adaptive intervention; ED = emergency department.
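
The count of four embedded ADIs follows from crossing the two first-stage options with the two second-stage options for nonresponders, because responders always continue. The short Python sketch below enumerates them; the option labels and dictionary keys are hypothetical names used only for illustration.

```python
from itertools import product

FIRST_STAGE_OPTIONS = ("digital alone", "digital + coaching")
NONRESPONDER_OPTIONS = ("step up", "continue")

# Each embedded ADI is a full decision rule: an initial option, what
# responders receive (always "continue"), and what nonresponders receive.
embedded_adis = [
    {"initial": initial, "responders": "continue", "nonresponders": nonresponder_option}
    for initial, nonresponder_option in product(FIRST_STAGE_OPTIONS, NONRESPONDER_OPTIONS)
]

for adi in embedded_adis:
    print(adi)
```

The ADI described in Figure 1 corresponds to the entry that starts with the digital intervention alone and steps up nonresponders.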

JITAIs

JITAIs typically guide the sequencing and adaptation of digital-intervention components. These interventions are becoming increasingly popular across various domains of psychological sciences, including health (Conroy et al., 2020; Nahum-Shani, Rabbi, et al., 2021), clinical (Comer et al., 2019; Coppersmith, 2022), educational (Cook et al., 2018), and organizational (Valle et al., 2020) psychology. Leveraging powerful digital technologies, a JITAI is a protocol that specifies how rapidly changing information about the individual’s internal state (e.g., mood, substance use) and context (e.g., geographical location, presence of other people) should be used in practice to decide whether and how to deliver intervention content (e.g., feedback, motivational messages, behavioral or cognitive strategies) in real time in the individual’s natural environment (Nahum-Shani et al., 2015, 2018). For example, suppose the delivery of messages in the digital intervention described above follows the JITAI in Figure 3. Specifically, the mobile device prompts participants to provide information such as their stress and loneliness via ecological momentary assessments (EMAs) and tracks their physical position via GPS. If this combined information indicates high risk for substance use during the day, then a message would be sent in the evening recommending a protective behavioral strategy. Otherwise, no message would be delivered. This intervention is adaptive because it uses time-varying information (here, about the individual’s risk status) to decide whether and how to intervene subsequently (here, whether to deliver a message). This intervention includes decision points every day because the goal is to address conditions (i.e., short-term risk for substance use) that unfold at a relatively fast timescale (here, daily).

Fig. 3. Just-in-time adaptive intervention (JITAI) to guide the delivery of daily messages for adolescents visiting the emergency department.

The MRT

Investigators interested in developing JITAIs often have scientific questions about how to best construct these interventions. As an example, suppose the goal is to determine (a) whether it is beneficial (e.g., in reducing next-day substance use) on average to deliver a daily message and (b) under what conditions (e.g., level of risk) providing a daily message would be beneficial in reducing next-day substance use.

The MRT (Qian et al., 2022) is an experimental design to inform the development of JITAIs. MRTs are experiencing rapid uptake in psychological research despite being relatively new (Figueroa et al., 2021; Valle et al., 2020). At each JITAI decision point, participants are randomly assigned among a set of intervention options. The MRT is conceptually related to the SMART because it also includes sequential random assignments. However, the MRT is designed to provide the empirical basis for constructing JITAIs, which involve adaptation at relatively fast timescales. Hence, an MRT involves sequential random assignments at a relatively fast timescale—participants are randomly assigned to different intervention options hundreds or even thousands of times over the course of the experiment.

For example, the hypothetical MRT in Figure 4 (which is based on Coughlin et al., 2021) can be used to answer the two questions outlined above. This MRT employs random assignments daily over 16 weeks (i.e., 112 random assignments): Participants are randomly assigned every day (in the evening) with .5 probability to either a message delivery or no message delivery. Before the random assignments, individuals are prompted on their mobile device to provide information such as their stress, mood, and daily substance use via EMAs. In addition, the mobile device tracks their physical position via GPS, and data are collected about the individual’s response to prior messages. Suppose the primary proximal outcome is the number of drinks on the next day.

Fig. 4. An example microrandomized trial to empirically develop a digital just-in-time adaptive intervention for preventing substance use and violent behavior among adolescents visiting the emergency department. EMA = ecological momentary assessment; R = random assignment.

Similar to the SMART, the MRT makes extremely efficient use of study participants to answer questions about building a JITAI. This efficiency is facilitated by capitalizing on both between-subjects and within-subjects contrasts in the proximal outcome (Qian et al., 2022). For example, consider the first question above, in which the proximal outcome is the number of drinks on the next day. This proximal outcome is assessed following each random assignment. Hence, this question can be answered by comparing two means: (a) the average number of next-day drinks when a message was delivered on a given day and (b) the average number of next-day drinks when a message was not delivered on a given day. This difference can be estimated by pooling data across all study participants and also across all decision points in the trial (Qian et al., 2022). This is an estimate of the (causal) main effect of delivering (vs. not delivering) a daily message, in terms of the proximal outcome.
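
To make the pooling concrete, the Python sketch below computes the difference between the two means over all person-days. It is a deliberately simplified illustration and not the estimator described by Qian et al. (2022): it assumes a constant .5 randomization probability, ignores covariates, and does not compute standard errors (which would need to account for repeated observations within participants). The record layout and the toy data are made up.

```python
from statistics import mean


def pooled_proximal_effect(records):
    """Difference in mean next-day drinks: message days minus no-message days.

    Each record is (participant_id, day, message_delivered, next_day_drinks),
    and all person-days from all participants are pooled together.
    """
    with_message = [y for (_pid, _day, a, y) in records if a == 1]
    without_message = [y for (_pid, _day, a, y) in records if a == 0]
    return mean(with_message) - mean(without_message)


# Toy data for two participants and two days each (values are invented).
toy_records = [
    ("p1", 1, 1, 0), ("p1", 2, 0, 2),
    ("p2", 1, 0, 3), ("p2", 2, 1, 1),
]
effect = pooled_proximal_effect(toy_records)  # 0.5 - 2.5 = -2.0
```

A negative value indicates fewer next-day drinks, on average, after days on which a message was delivered.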

The second question can be answered by investigating whether the difference between the two means described above varies depending on self-reported or sensor-based information collected before random assignment. For example, the data can be used to investigate whether the level of risk before random assignment moderates the causal effect of delivering (vs. not delivering) a message on next-day number of drinks. As before, this analysis would use data across all study participants and across all decision points in the trial (Qian et al., 2022). Estimates of the difference in next-day number of drinks between delivering versus not delivering a message at different levels of risk (e.g., low, moderate, high) can be used to further identify the specific level or levels at which delivering (vs. not delivering) a message would be most beneficial.

MADIs

Suppose an investigator would like to integrate the ADI in Figure 1 with the JITAI in Figure 3. Specifically, the goal is to employ the JITAI in Figure 3 as part of the digital-intervention component mentioned in Figure 1. Figure 5 provides an example in which, at program entry, all participants are provided a single (human-delivered) session and a digital intervention that delivers a daily message only if momentary risk is high. Response status is assessed 4 weeks after discharge on the basis of self-reported substance use and physical aggression. Human-delivered coaching is added for individuals classified as nonresponders at Week 4, whereas individuals classified as responders continue with the initial intervention. The example in Figure 5 is a MADI, an intervention in which human-delivered and digital components are integrated and adapted at multiple timescales. Although this is the first article to formally define MADIs, there is growing interest in developing psychological interventions that involve adaptation of human-delivered and digital components at multiple timescales (e.g., Belzer et al., 2018; Czyz et al., 2021; Patrick et al., 2021).

Fig. 5. A multimodality adaptive intervention to guide the delivery of human coaching and digital messages for adolescents visiting the emergency department (ED).

The construction of MADIs requires knowledge of how best to combine human-delivered and digital components that are adapted at multiple timescales. These questions are critical given that each decision regarding the integration between digital and human-delivered components (e.g., what, when, how much, for whom) represents a trade-off between benefits and drawbacks (Mohr et al., 2011; Schueller et al., 2017). For example, adding (human) coaching for nonresponders may increase engagement with the digital messages but may increase participant burden and intervention cost; intensifying the delivery of digital messages may help address sudden shifts in risk between coaching sessions but may increase habituation to the messages. Hence, an important step in building an effective and scalable integration between human-delivered and digital components is to ensure they are designed synergistically so that the effect of the intervention as a whole is expected to be greater than the sum of effects of the individual components.

Existing experimental approaches address scientific questions about building ADIs (e.g., the SMART) or JITAIs (e.g., the MRT) but not about their integration. Investigators interested in developing interventions that adapt to the changing needs of individuals at multiple timescales must currently settle for addressing questions about the sequencing and adaptation of components at each timescale separately. This compartmentalization represents a major barrier to the effective integration of human-delivered and digital components. Hence, we introduce a new experimental approach that will enable investigators to answer questions about the interplay between intervention components that are sequenced and adapted at multiple timescales.

The HED

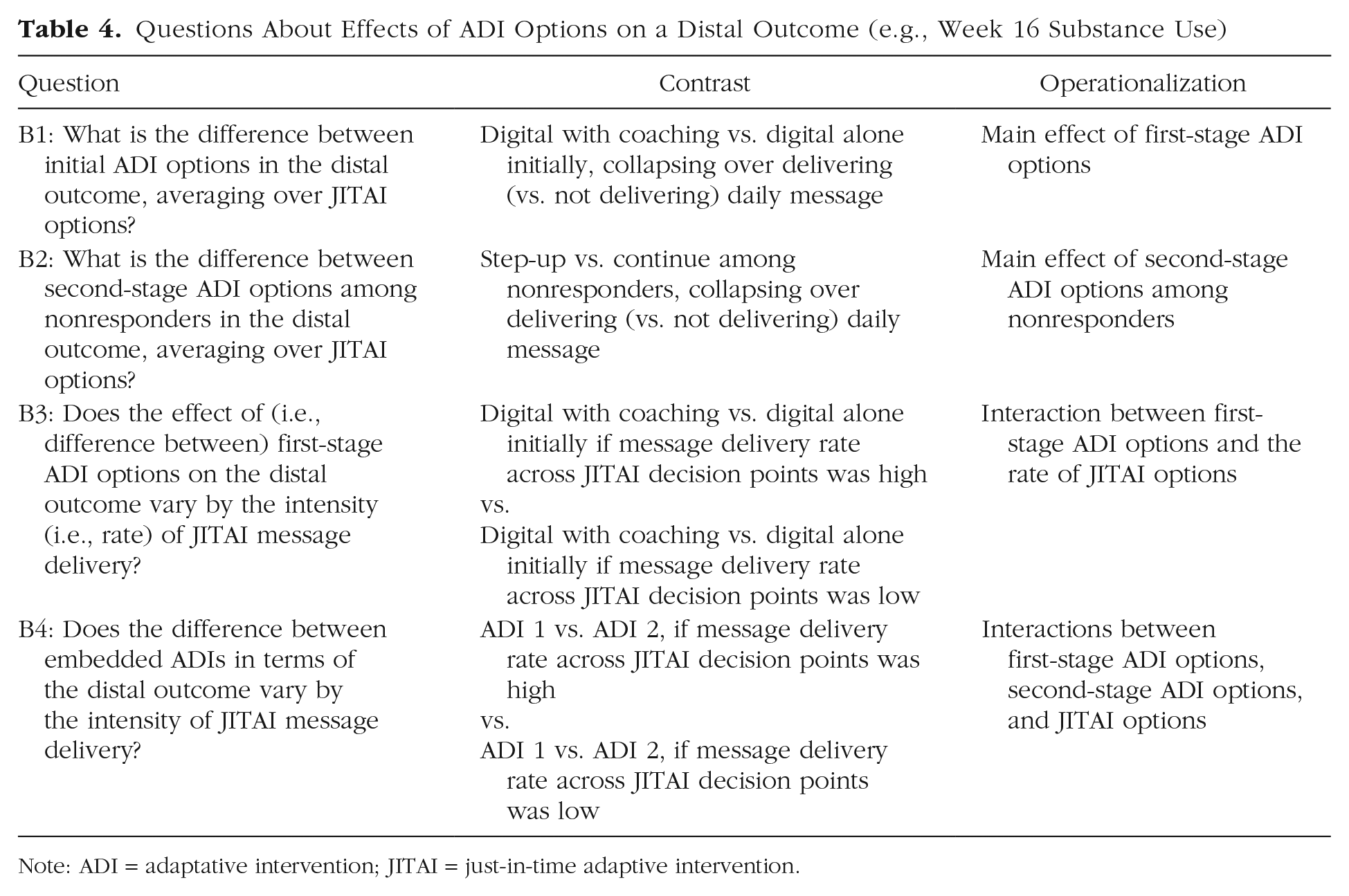

The HED is a type of experimental design that can aid in the development of MADIs. It is a flexible random-assignment trial in which participants can be sequentially randomly assigned to ADI options and to JITAI options, each at its own appropriate timescale. Suppose investigators would like to answer the following questions about the development of the MADI in Figure 5. First, does the proximal impact of daily (digital) messages on next-day number of drinks vary between starting with the digital intervention alone versus the digital intervention combined with (human) coaching (Table 3, A2)? Second, does the impact of starting with coaching (vs. digital alone) on substance use reduction at Week 16 vary by the intensity (i.e., the rate) of digital messages delivered (Table 4, B3)? These questions concern synergies between human-delivered (coaching) and digital (messages) components that are sequenced and adapted at different timescales (after 4 weeks vs. daily).

Table 3. Questions About Effects of JITAI Options on a Proximal Outcome (e.g., Next-Day Number of Drinks)

Note: JITAI = just-in-time adaptive intervention; ADI = adaptive intervention.

Table 4. Questions About Effects of ADI Options on a Distal Outcome (e.g., Week 16 Substance Use)

Note: ADI = adaptive intervention; JITAI = just-in-time adaptive intervention.

The experimental design in Figure 6 can provide data for addressing such questions. This design integrates the SMART in Figure 2 with the MRT in Figure 4. Specifically, participants are randomly assigned at two ADI decision points (here, at Week 0 and Week 4) to ADI options. These sequential randomizations yield four embedded ADIs (Table 2), similar to the SMART in Figure 2. In addition, all participants are microrandomly assigned at JITAI decision points (here, every day) during the 16 weeks to either a message delivery or no message delivery. That is, microrandom assignments are embedded in Cells A through F in Figure 6 and hence in each embedded ADI. Tables 3 and 4 provide examples of scientific questions about building a MADI that can be answered with data from the HED in Figure 6. Appendix A in the Supplemental Material available online provides regression models for analyzing the data to answer each question.

Fig. 6. An example hybrid experimental design to empirically develop a multimodality adaptive intervention for preventing substance use and violent behaviors among adolescents visiting the emergency department (ED). EMA = ecological momentary assessment; R = random assignment.
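
The way this HED layers random assignments at two timescales can be sketched for a single participant as follows. This Python example is a simplified illustration, not trial software: the function name, option labels, and returned dictionary keys are hypothetical, and Week 4 response status is supplied as an argument, whereas in a real trial it would be observed.

```python
import random

N_DAYS = 16 * 7  # 112 daily JITAI decision points over 16 weeks


def simulate_hed_assignments(nonresponder_at_week4: bool, seed=None) -> dict:
    """Generate one participant's assignments at both timescales."""
    rng = random.Random(seed)

    # Slow timescale, ADI decision point 1 (Week 0): probability .5 each.
    first_stage = rng.choice(("digital alone", "digital + coaching"))

    # Slow timescale, ADI decision point 2 (Week 4): nonresponders only.
    if nonresponder_at_week4:
        second_stage = rng.choice(("continue", "step up"))
    else:
        second_stage = "continue"

    # Fast timescale: a microrandomization every day, message with probability .5,
    # regardless of which ADI options the participant was assigned.
    daily_message = [rng.random() < 0.5 for _ in range(N_DAYS)]

    return {
        "first_stage": first_stage,
        "second_stage": second_stage,
        "daily_message": daily_message,
    }


example = simulate_hed_assignments(nonresponder_at_week4=True, seed=7)
```

Because the daily assignments are generated for every participant irrespective of first- and second-stage options, the microrandomizations are embedded in every cell of the design.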

Similar to the SMART and the MRT, the HED makes efficient use of study participants to answer questions about building MADIs. For example, consider Question A1 (Table 3), which concerns the proximal impact (on next-day number of drinks) of delivering (vs. not delivering) a daily message averaging over ADI options. This question can be answered by estimating the causal effect of delivering (vs. not delivering) a daily message in terms of next-day number of drinks on average across all study participants, across all JITAI decision points, and across first- and second-stage ADI options. This effect can be viewed as the proximal “main effect” of delivering (vs. not delivering) a message, averaging over ADI options.

Now consider Question A2 (Table 3), which concerns whether the proximal impact on next-day number of drinks of delivering (vs. not delivering) a daily message varies depending on whether coaching was offered initially (vs. the digital intervention alone). This question can be answered by comparing the causal effect of delivering (vs. not delivering) a message on next-day number of drinks between the two initial ADI options. This corresponds to the interaction between the JITAI options and the first-stage ADI options in terms of the proximal outcome. As before, this interaction would be estimated by using data across all study participants and across all JITAI decision points.
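
The interaction in Question A2 is a difference of differences: the proximal effect of a message among participants who started with coaching, minus the same effect among participants who started with the digital intervention alone. The Python sketch below illustrates the arithmetic on invented data. It is a simplification under the assumption of a constant .5 randomization probability, and it is not the regression approach given in the article's supplemental appendix; the record layout and labels are hypothetical.

```python
from statistics import mean


def proximal_effect(records, first_stage):
    """Message vs. no-message difference in mean next-day drinks within one first-stage arm.

    Each record is (first_stage_option, message_delivered, next_day_drinks).
    """
    with_message = [y for (s, a, y) in records if s == first_stage and a == 1]
    without_message = [y for (s, a, y) in records if s == first_stage and a == 0]
    return mean(with_message) - mean(without_message)


def a2_interaction(records):
    """Effect of a message under coaching minus its effect under digital alone."""
    return (proximal_effect(records, "digital + coaching")
            - proximal_effect(records, "digital alone"))


# Toy person-day records (values are invented).
toy_records = [
    ("digital alone", 1, 2), ("digital alone", 0, 3),            # effect: -1
    ("digital + coaching", 1, 1), ("digital + coaching", 0, 4),  # effect: -3
]
interaction = a2_interaction(toy_records)  # -3 - (-1) = -2
```

In this toy example the negative interaction would mean that messages reduce next-day drinking more when coaching is offered initially.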

Next, consider Question B1 (Table 4), which concerns the distal impact (on Week 16 substance use) of starting with coaching (vs. digital intervention alone), averaging over JITAI options. This question can be answered by using Week 16 outcome information from all study participants. Specifically, this would involve comparing substance use at Week 16 between participants who started with coaching and participants who started with the digital intervention alone, collapsing over the delivery (vs. no delivery) of a daily message at JITAI decision points. This can be viewed as the main effect of the first-stage ADI options on the distal outcome, provided that the daily messages are delivered with .5 probability.

Finally, consider Question B3 (Table 4), which concerns whether the distal impact (on Week 16 substance use) of starting with coaching (vs. digital alone) varies by the intensity (i.e., rate) of digital messages delivered. Note that the random assignments to JITAI options (message vs. no message) with .5 probability at each JITAI decision point generate a distribution across participants in the number of messages delivered in practice over the course of the study. Although the values of this distribution are tightly clustered around the mean (because of the central limit theorem), investigators might still be interested in using these data to explore whether the causal effect of starting with coaching (vs. digital alone) on Week 16 substance use varies between different rates of messages delivered across the 16-week study. This corresponds to an interaction between the first-stage ADI options and the average JITAI options in terms of the distal outcome. As before, this interaction would be estimated by using data across all study participants and across all JITAI decision points.

Appendix B in the Supplemental Material describes the results of Monte Carlo simulation studies showing that with reasonable sample sizes (e.g., 100–200 participants), adequate statistical power can be achieved for addressing Questions A1 through A4 (Table 3) and B1 and B2 (Table 4) with data from a HED such as the one in Figure 6. As should be expected, power was much lower for questions that concern the rate of JITAI options (Table 4, B3 and B4) because the values of this variable are tightly clustered around the mean. Satisfactory power for questions involving the rate of JITAI options would require a different HED in which the random-assignment probabilities vary systematically between participants. For example, some participants might be assigned to have daily messages delivered with .4 probability and others with .6 probability, thus creating an added initial ADI factor that influences the JITAI component delivery. Nonetheless, data from the HED in Figure 6 can still provide some useful information about the intensity of message delivery on an exploratory basis that can inform hypothesis generation.
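
A short calculation illustrates why the message rate varies so little under a fixed .5 probability and how between-participant probabilities widen it. The Python sketch below uses the law of total variance and assumes independent daily assignments over 112 days and equal allocation of participants to each probability; the function name is hypothetical, and the calculation is an illustration rather than a reproduction of the simulations in Appendix B.

```python
from math import sqrt

N_DAYS = 16 * 7  # 112 daily random assignments


def sd_of_message_rate(probabilities, n_days=N_DAYS):
    """SD across participants of the proportion of days with a message.

    Participants are assumed to be allocated in equal shares to each value
    in `probabilities`, with independent daily assignments thereafter.
    """
    k = len(probabilities)
    grand_mean = sum(probabilities) / k
    # Within-group variance of a binomial proportion: p(1 - p) / n.
    within = sum(p * (1 - p) / n_days for p in probabilities) / k
    # Between-group variance of the assigned probabilities.
    between = sum((p - grand_mean) ** 2 for p in probabilities) / k
    return sqrt(within + between)


sd_fixed = sd_of_message_rate([0.5])        # about 0.047
sd_varied = sd_of_message_rate([0.4, 0.6])  # about 0.110
```

Under these assumptions, the number of messages per participant in the fixed design has a mean of 56 and a standard deviation of about 5.3, whereas assigning participants to .4 or .6 more than doubles the spread of the message rate.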

Practical Steps for Planning a HED

In this section, we suggest pragmatic steps and available resources that researchers can use to implement the HED approach in their own research.

Step 1: develop a working model

This step involves compiling existing empirical evidence and practical considerations into a working model that describes how the candidate MADI being developed may operate. Both the ADI elements and the JITAI elements of the hybrid intervention need to be considered.

The ADI elements include the decision points, intervention options, and tailoring variables. It will also be necessary to decide the thresholds or levels of the tailoring variables that differentiate between conditions under which different ADI options should be delivered; this includes planning for situations in which information about the tailoring variable is not available in practice (see Murphy & McKay, 2004; Roberts et al., 2021). These decisions can be informed by existing literature and empirical evidence and by consideration of standard clinical practice, expert opinion, or experience. Of course, some important questions will remain unanswered, hence the need for the random-assignment trial (see Step 2). Recommended reading includes literature that describes the motivation for ADIs, their key elements, and guidelines for their design (e.g., Collins et al., 2004; Nahum-Shani & Almirall, 2019).

Similar elements must be considered for the JITAI, including the JITAI decision points, intervention options, tailoring variables, and the thresholds/levels of the tailoring variables that differentiate between conditions in which different JITAI options should be delivered. Recommended reading includes literature that describes the motivation for JITAIs, their key elements, and guidelines for their design (Nahum-Shani et al., 2015, 2018).

Step 2: specify scientific questions

Scientific questions that motivate a HED can be expressed in terms of the proximal and/or distal effects of different intervention options. Questions about proximal effects of JITAI options, such as the difference between JITAI options in terms of a proximal outcome (e.g., next-day number of drinks), may concern either their main effects, averaging over ADI options (e.g., Table 3, A1), or else their interactions with ADI options (e.g., Table 3, A2–A4). Likewise, the distal effects of ADI options (i.e., the difference between ADI options in terms of a distal outcome, e.g., Week 16 substance use) may concern either their main effects, averaging over JITAI options (e.g., Table 4, B1 and B2), or their interactions with JITAI options (e.g., Table 4, B3 and B4). The selected outcomes should measure changes at timescales (e.g., triannually or daily, respectively) that are suitable given the ADI and JITAI decision points (monthly or daily, respectively).

Sometimes multiple questions may be identified, perhaps too many to answer in a single study. In this case, a useful strategy might be to rank-order the questions in terms of both their scientific impact and novelty. On the basis of these scientific questions, investigators should formulate a single, simple, and clear main hypothesis accompanied by a limited number of secondary hypotheses and exploratory propositions.

Step 3: plan random assignments

The timing of random assignments and assignment probabilities should be guided by the scientific questions of interest while also addressing practical considerations. For example, if the JITAI under investigation is designed to address conditions that change every few hours (rather than every day), then random assignments to JITAI options should occur every few hours (e.g., Nahum-Shani, Potter, et al., 2021). If, because of considerations relating to burden or risk of habituation, individuals should not receive more than a certain number of JITAI options over a certain period of time (e.g., no more than two messages per day), then higher random-assignment probabilities can be given to JITAI options that are less burdensome and/or that can mitigate habituation (e.g., a higher probability of not delivering than of delivering a message; see discussion in Qian et al., 2022). If the ADI under investigation includes a dynamic tailoring variable (i.e., repeated assessments of response status used to transition individuals to a more intense intervention as soon as they show early signs of nonresponse), then the HED should randomly assign nonresponders again to subsequent ADI options at different time points (rather than at a single point in time, such as Week 4 in Fig. 6) depending on when they show early signs of nonresponse (see example in Patrick et al., 2021).

Note that the example in Figure 6 involves random-assignment probabilities that are constant. However, certain scientific questions and practical considerations would require changing the random-assignment probabilities systematically during the trial. For example, consider the following scientific question: For nonresponders at Week 4, is it better (in terms of Week 16 substance use) to add coaching or to enhance the intensity of message delivery, averaging over JITAI options? This question can be answered by designing a HED in which nonresponders at Week 4 are randomly assigned again to two subsequent ADI options: (a) add coaching or (b) increase the probability of delivering a message (vs. no message) in subsequent microrandom assignments (e.g., from 0.5 to 0.7). Furthermore, suppose that the goal is to investigate whether it is more beneficial (in terms of next-day number of drinks) to deliver a message when the individual experiences high risk versus low risk, averaging over ADI options. To ensure that adequate numbers of messages are delivered both at high-risk times (which may be relatively rare) and at low-risk times, the HED can be designed to use a higher random-assignment probability of delivering (vs. not delivering) a message when individuals are classified as high risk and a lower probability when they are classified as low risk. In this example, different random-assignment probabilities to JITAI options are used for different individuals at different time points depending on a time-varying variable (see detailed discussion in Dempsey et al., 2020).
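As a rough illustration of random-assignment probabilities that depend on a time-varying variable, consider the Python sketch below. The probabilities in `P_DELIVER`, the 20% chance of a high-risk classification, the 14-day window, and the function name `microrandomize` are all hypothetical; the article does not specify values for this example. The sketch records the probability used at each decision point next to the assignment, on the assumption that later analyses will need to know which probability applied when.

```python
import random

# Hypothetical probabilities of delivering a message, by current risk classification
P_DELIVER = {"high": 0.8, "low": 0.4}

def microrandomize(risk, rng):
    """Randomly assign one decision point; return (delivered, probability used)."""
    p = P_DELIVER[risk]
    return rng.random() < p, p

rng = random.Random(7)
log = []
for day in range(1, 15):
    # Simulated risk classification; in a trial this would come from sensors or self-report
    risk = "high" if rng.random() < 0.2 else "low"
    delivered, p = microrandomize(risk, rng)
    # Store the probability alongside the assignment for later analysis
    log.append({"day": day, "risk": risk, "delivered": delivered, "prob": p})

for entry in log[:3]:
    print(entry)
```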

Furthermore, any conditions that restrict the random assignments (i.e., determine whether an individual should be randomly assigned to specific intervention options) must be specified on the basis of scientific questions and substantive knowledge. For example, if at any time it is inappropriate to deliver JITAI options (e.g., a message) because of scientific, ethical, feasibility, or burden considerations, then random assignments to JITAI options should be restricted to those conditions in which their delivery is appropriate (e.g., microrandom assignment of an individual to JITAI options only when not driving a car: Battalio et al., 2021; or only if the individual completed a specific task such as self-monitoring: Nahum-Shani, Rabbi, et al., 2021). Similar considerations should be used when selecting tailoring variables such as response status to restrict the random assignments to subsequent ADI options.
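A restriction of this kind can be sketched in Python as a check that runs before each microrandom assignment. The function name `assign_jitai_option`, the option labels, the probability of 0.5, and the availability flags are assumptions made for illustration only.

```python
import random
from typing import Optional

def assign_jitai_option(available: bool, p_message: float,
                        rng: random.Random) -> Optional[str]:
    """Microrandomize one decision point, or return None if delivery is inappropriate."""
    if not available:
        # No random assignment takes place at this decision point
        return None
    return "message" if rng.random() < p_message else "no_message"

rng = random.Random(11)
# Hypothetical availability flags for five decision points (e.g., False while driving)
availability = [True, False, True, True, False]
assignments = [assign_jitai_option(a, 0.5, rng) for a in availability]
print(assignments)
```

Returning `None` rather than `"no_message"` keeps decision points at which no random assignment occurred distinct from those at which the individual was randomly assigned to receive no message.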

In the current example (Fig. 6), random assignment to stepping up to a more intense intervention at Week 4 is restricted to nonresponders only. This ADI option is not considered for responders to avoid delivering unnecessary treatment to individuals who are responsive to the initial intervention. In fact, because this motivating example did not include scientific questions about responders, this subgroup is not randomly assigned to any subsequent ADI options at Week 4 and instead continues with the initial intervention. If there are scientific questions about subsequent ADI options for responders (e.g., whether it is better to continue or step down the intensity of the initial intervention), then the HED could involve randomly assigning responders again as well as nonresponders at the second stage. In this case, both responders and nonresponders will be randomly assigned again, but the random assignment will be restricted to different subsequent ADI options (see an example in Nahum-Shani et al., 2017).
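The two second-stage variants described above can be sketched in Python as follows. The option labels (`"step_up"`, `"continue_initial"`, `"step_down"`), the equal assignment probabilities, and the function name `assign_second_stage` are hypothetical and stand in for whatever options a given HED specifies.

```python
import random

def assign_second_stage(is_responder, rng, randomize_responders=False):
    """Return the subsequent ADI option for one participant at the second stage."""
    if not is_responder:
        # Nonresponders are randomly assigned again (equal probabilities assumed)
        return rng.choice(["step_up", "continue_initial"])
    if randomize_responders:
        # Variant for trials with scientific questions about responders
        return rng.choice(["continue_initial", "step_down"])
    # Default: responders are not randomly assigned again
    return "continue_initial"

rng = random.Random(3)
print(assign_second_stage(is_responder=False, rng=rng))
print(assign_second_stage(is_responder=True, rng=rng))
print(assign_second_stage(is_responder=True, rng=rng, randomize_responders=True))
```

In both variants, response status restricts which options a participant can receive, so stepping up is never offered to responders.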

Recommended reading to support this step includes literature that provides guidelines for planning the random assignments in SMARTs (e.g., Nahum-Shani & Almirall, 2019; Nahum-Shani et al., 2012a) and MRTs (e.g., Qian et al., 2022; Walton et al., 2018) in relation to motivating scientific questions and substantive knowledge about building ADIs and JITAIs, respectively.

Step 4: plan sample size

As in other experiments, the required sample size for a HED is typically affected by the effect size, the chosen Type I error rate, and the chosen statistical power. At this point, work to develop closed-form formulas for planning sample size for HEDs is still ongoing. However, we developed a simulation-based approach to plan sample size. Annotated code for implementing this approach is available online at https://github.com/dziakj1/Hybrid_Designs_Simulation. A brief user guide for applying this code is provided in Appendix C in the Supplemental Material.
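The general logic of simulation-based sample-size planning can be conveyed with a deliberately simplified Python sketch. It is not the code in the repository cited above and does not model a HED: it assumes a plain two-group comparison of a normally distributed outcome, a large-sample z-test, and hypothetical function names (`simulated_power`, `smallest_adequate_n`). The idea it illustrates is only that data are generated repeatedly under an assumed effect size, the planned test is applied to each data set, and power is estimated as the proportion of rejections.

```python
import random
from statistics import NormalDist

def mean_and_variance(xs):
    """Return the sample mean and the unbiased sample variance."""
    m = sum(xs) / len(xs)
    return m, sum((x - m) ** 2 for x in xs) / (len(xs) - 1)

def simulated_power(n_per_group, effect_size, alpha=0.05, n_sims=2000, seed=1):
    """Estimate the power of a two-sided, two-group z-test by Monte Carlo simulation."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    rejections = 0
    for _ in range(n_sims):
        a = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
        b = [rng.gauss(effect_size, 1.0) for _ in range(n_per_group)]
        mean_a, var_a = mean_and_variance(a)
        mean_b, var_b = mean_and_variance(b)
        se = ((var_a + var_b) / n_per_group) ** 0.5
        if abs(mean_b - mean_a) / se > z_crit:
            rejections += 1
    return rejections / n_sims

def smallest_adequate_n(effect_size, target_power=0.80, candidates=range(20, 201, 20)):
    """Return the first candidate group size whose estimated power meets the target."""
    for n in candidates:
        if simulated_power(n, effect_size) >= target_power:
            return n
    return None  # no candidate reached the target

print(simulated_power(n_per_group=64, effect_size=0.5))
```

A planning exercise for an actual HED would replace the data-generating step and the test with ones that match the design and the primary scientific question.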

Step 5: develop software for random assignment and delivery of digital-intervention components

Recall that the rapid random assignments in the HED are intended to empirically inform the delivery of JITAI components that address conditions that change rapidly in the person’s natural environment. Hence, these options are typically delivered via mobile devices (e.g., smartphones, wearables) that can facilitate timely delivery of interventions in daily life. Because the type of technology used to deliver the interventions has implications for the type of data that can be collected, researchers should work closely with software developers to ensure appropriate implementation and data collection. Recommended reading includes literature that provides guidelines for data collection and management in MRTs (e.g., Seewald et al., 2019). Careful attention should also be given to beta testing study procedures to ensure that they operate as intended. Such testing can be especially useful for anticipating and mitigating, before the study begins, the various scenarios that can result in missing data.

Other considerations

As with other studies, a detailed study protocol and manual of operations should be developed for the HED, including procedures to ensure and measure fidelity of implementation. The HED should be registered in an open-science framework before the trial is conducted. We recommend specifying the planned analyses in the preregistration, including the list of control variables for inclusion in the models. Appendix A in the Supplemental Material provides example models that can be used to analyze the data, and Appendix D in the Supplemental Material provides annotated code to implement the analyses.

Conclusion

This article introduces HEDs, new experimental designs that can aid in the development of psychological interventions that integrate components that are adapted at multiple timescales. We describe some of the questions that HEDs can address and practical steps to guide the design of these trials. Although effectively integrating human-delivered and digital-intervention components is a key motivation for this design, the main difference between the HED and other experimental approaches is the multiple timescales at which study participants can be randomly assigned during the course of the trial. Hence, this design can potentially be used to inform the development of interventions that are not necessarily multimodality but nonetheless involve adaptation at multiple timescales (e.g., a fully digital intervention with multiple components that adapt to slow- and fast-changing conditions). For simplicity, this article focuses on one type of HED that integrates a prototypical SMART with a relatively simple MRT. However, HEDs can take on various forms depending on the scientific questions motivating the investigation. Additional research is needed to develop methods for analyzing data from different forms of HEDs and to find more convenient methods for planning sample size to address various types of scientific questions.

Supplemental Material

sj-docx-1-amp-10.1177_25152459221114279 – Supplemental material for Hybrid Experimental Designs for Intervention Development: What, Why, and How

Supplemental material, sj-docx-1-amp-10.1177_25152459221114279 for Hybrid Experimental Designs for Intervention Development: What, Why, and How by Inbal Nahum-Shani, John J. Dziak, Maureen A. Walton and Walter Dempsey in Advances in Methods and Practices in Psychological Science

sj-docx-2-amp-10.1177_25152459221114279 – Supplemental material for Hybrid Experimental Designs for Intervention Development: What, Why, and How

sj-docx-3-amp-10.1177_25152459221114279 – Supplemental material for Hybrid Experimental Designs for Intervention Development: What, Why, and How

sj-docx-4-amp-10.1177_25152459221114279 – Supplemental material for Hybrid Experimental Designs for Intervention Development: What, Why, and How

Footnotes

Transparency

Action Editor: David A. Sbarra

Editor: David A. Sbarra

Author Contributions

I. Nahum-Shani led the conceptualization, methodology development, funding acquisition, and writing–original draft; J. J. Dziak led the analysis and contributed to conceptualization, methodology development, and writing–original draft; M. A. Walton contributed to conceptualization, funding acquisition, and writing–review and editing; W. Dempsey contributed to conceptualization, methodology development, funding acquisition, and writing–review and editing. All of the authors approved the final manuscript for submission.
