Abstract

To investigate whether a variable tends to be larger in one population than in another, the t test is the standard procedure. In some situations, the parametric t test is inappropriate, and a nonparametric procedure should be used instead. The default nonparametric procedure is Mann-Whitney’s U test. Despite being a nonparametric test, Mann-Whitney’s test is associated with a strong assumption, known as exchangeability. I demonstrate that if exchangeability is violated, Mann-Whitney’s test can lead to wrong statistical inferences even for large samples. In addition, I argue that in psychology, exchangeability is typically not met. As a remedy, I introduce Brunner-Munzel’s test and demonstrate that it provides good Type I error rate control even if exchangeability is not met and that it has similar power as Mann-Whitney’s test. Consequently, I recommend using Brunner-Munzel’s test by default. To facilitate this, I provide advice on how to perform and report on Brunner-Munzel’s test.

Keywords

In psychology, a common task is to investigate whether a variable tends to be larger in one population than in another. The most used statistical technique for this research question is the parametric

However, in some situations, applying the

Relative Frequencies of the Level of Improvement for the Control Group and the Treatment Group

A test that is insensitive to the coding scheme and is thus more appropriate for ordinal data is the nonparametric Mann-Whitney

Unfortunately, the means by which the Mann-Whitney test is applied in psychology is often flawed. To reveal this, I need to clarify which hypothesis the Mann-Whitney test assesses. The Mann-Whitney test can be used to test many different hypotheses (Fay & Proschan, 2010). In psychology, it is typically presented as either a test of equality of the two populations regarding all aspects, which is often called equality of distributions (Howell, 2012) or equality of population medians (Divine et al., 2018). In the statistical literature, it has been argued that the Mann-Whitney test actually tests stochastic equality (Chung & Romano, 2016; Divine et al., 2018). If outcome scores are denoted by

The first problem is that the Mann-Whitney test is associated with strong assumptions for all three hypotheses (Chung & Romano, 2016; Fay & Proschan, 2010), which are rarely met in psychology. For example, for the equality of medians hypothesis, the assumption of equal variances across populations must be met, which is rarely the case in psychology (see Delacre et al., 2017).

The second problem is that if the assumptions are not met, this can completely invalidate the Mann-Whitney test (Chung & Romano, 2016). More specifically, the Type I error rates of the Mann-Whitney test can be seriously inflated even for large sample sizes. Thus, the Mann-Whitney test is generally not even asymptotically robust to violations of its assumptions. This is in contrast with the parametric counterpart of the Mann-Whitney test, Welch’s

This observation calls for alternatives to the Mann-Whitney test. Because the Mann-Whitney test can be used for testing different hypotheses, a substitute for each hypothesis is needed. In this article, I concentrate on the stochastic equality hypothesis because first, it is the most appropriate nonparametric operationalization of the intuitive hypothesis that a variable tends to be of equal size across two populations (see Cliff, 1993; Neuhäuser & Ruxton, 2009; or the explanation presented in the next section), and second, it has repeatedly been argued to be the most appropriate hypothesis for the Mann-Whitney test (Chung & Romano, 2016; Divine et al., 2018).

Fortunately, multiple alternatives to the Mann-Whitney test exist that are asymptotically robust tests of stochastic equality. These can be categorized into “classical” procedures and “resampling procedures.” Whereas the classical procedures employ theoretical sampling distributions, the resampling procedures estimate the sampling distribution empirically. The classical procedures include the Fligner-Policello (Fligner & Policello, 1981), Cliff’s (1993), and the Bunner-Munzel (Brunner & Munzel, 2000) tests. The resampling procedures include the permutation version of the Brunner-Munzel test (Neubert & Brunner, 2007), the Reiczigel approach (Reiczigel et al., 2005), and the Ruscio approach (Ruscio & Mullen, 2012).

The performance of the classical procedures is similar, and no classical approach is generally superior (Delaney & Vargha, 2002). The resampling procedures have not yet been compared extensively with each other. A first small comparison (Neubert & Brunner, 2007) suggests that the Reiczigel test and the permutation Brunner-Munzel test behave similarly to each other as well as to the classical Brunner-Munzel test. However, the Reiczigel test was too conservative, and the classical Brunner-Munzel test was too liberal, problems that did not occur for the permutation Brunner-Munzel test. Although the results of Ruscio and Mullen (2012) suggest that the Ruscio approach outperforms the classical approaches for confidence interval generation, its utility as a hypothesis test is questioned by its inventors (Ruscio & Mullen, 2012). In summary, the current evidence seems to favor the permutation Brunner-Munzel test weakly. In addition, the permutation Brunner-Munzel test has the advantage that it is close to the Mann-Whitney test, which is also a permutation test. Therefore, I focus on the permutation Brunner-Munzel test in this article.

It has not been investigated how the Brunner-Munzel test and the Mann-Whitney test compare in terms of power, particularly when the assumptions are met. This is important because typically a modification of a test to make it more robust reduces its power. To address this question, I perform a power comparison. Unexpectedly, the Brunner-Munzel test was almost always equally as powerful as the Mann-Whitney test or even more powerful. The only exception was skewed data together with unequal sample sizes, for which the Mann-Whitney test had a small power advantage.

Despite the advantages of the Brunner-Munzel test, the Mann-Whitney test is still widespread in psychology, whereas the Brunner-Munzel test is mostly unknown. A Google Scholar search for articles published between 2015 and 2020 in journals that contain psychology in their name and Brunner-Munzel in their text led to two results, whereas 3,350 results contained Mann-Whitney. 3 One reason for the continuing popularity of the Mann-Whitney test in psychology might be that whereas the flaws of the Mann-Whitney test and the advantages of the Brunner-Munzel test are well known in the statistical literature, those articles tend to be too technical to be accessible to applied psychologists or even teachers of psychological methods. Furthermore, the arguments that I summarized so far are spread throughout multiple statistical articles. Moreover, the articles describing the Brunner-Munzel test (Brunner & Munzel, 2000; Neubert & Brunner, 2007) introduce the Brunner-Munzel test in a rather technical manner, disconnected from the Mann-Whitney test. Finally, practical advice on how to apply and report on the Brunner-Munzel test is missing.

I thus begin this article by reviewing and demonstrating the flaws of the Mann-Whitney test in more detail. Then, I introduce the Brunner-Munzel test in a nontechnical manner, as a straightforward modification of the Mann-Whitney test. I continue with a simulation study, comparing the power of the Mann-Whitney and Brunner-Munzel tests. After that, I provide practical advice for applied researchers, in particular, which software to use for the Brunner-Munzel test and how to report on and interpret its results.

Flaws of the Mann-Whitney Test

Notation

A variable has been observed across two groups. For Group 1, the observations are denoted as x1,…,

Multiple perspectives on the Mann-Whitney test

For analyzing ordinal data, the Mann-Whitney test is a valid test under at least three different perspectives (Fay & Proschan, 2010). A perspective is defined by a combination of null hypothesis, alternative hypothesis, and assumptions. A test is called valid for a certain perspective if its Type I error rate is always below the desired significance level

Below I list the null hypothesis, alternative hypothesis, and assumptions for all three perspectives. Note that all perspectives additionally assume independence, that is, all observations are assumed to be independent of each other.

Equality of distributions - Null hypothesis: Population distributions are equal in all aspects; - Alternative hypothesis: Population distributions differ in any aspect; - Assumptions: None.

Equality of medians - Null hypothesis: Population medians are equal; - Alternative hypothesis: Population medians are unequal; - Assumptions: If the null hypothesis is true (no differences in medians), the population distributions are identical (

Stochastic equality - Null hypothesis: - Alternative hypothesis: - Assumptions: If the null hypothesis is true, the population distributions are identical (

The assumptions for the different perspectives are all a special case of the Mann-Whitney test’s core assumption, exchangeability. In the Mann-Whitney test setting, exchangeability reduces to if the null hypothesis is true, the two population distributions must be identical.

Note that the equality of distributions null hypothesis is wrong if the population distributions differ in any aspect. It encompasses the other two null hypotheses, in the sense that if any of those is wrong, then the equality of distributions null hypothesis is wrong.

Applying the three perspectives to the depression therapy example from the introduction leads to answers to the following different and, in principle, equally valid research questions. I assume now that the numbers displayed in Table 1 represent the population values. For equality of distributions, the relative frequencies across the two groups are different, so the distributions are not equal. For equality of medians, the median improvement level in both groups is slight improvement. Thus, regarding the median improvement level, the therapy and the control groups do not differ. For stochastic equality, the probability that a random person from the treatment population has a larger increase than a random person from the control population [

Requirements for a reasonable test

To demonstrate that the Mann-Whitney test is not a reasonable test for any of those perspectives, I concentrate on two requirements. Essentially, they formalize asymptotical robustness of a test to its assumptions and ensure that a test still works reasonably if its assumptions are not met.

The first requirement is asymptotic validity under realistic assumptions. Again, a test is valid if its Type I error rate is always lower than the desired significance level

The second requirement is consistency under realistic assumptions and relates to power. Asymptotic validity alone is easy to obtain. For example, a test that randomly rejects the null hypothesis with probability

Problems with asymptotic validity

First, I argue that the assumptions associated with the equality of medians and stochastic equality perspectives are not realistic in psychology. The assumptions for both perspectives are similar. If there is no difference between the two populations concerning the tested aspect (equality of medians or stochastic equality), there may not be a difference at all. This situation is unrealistic in psychology. For example, there are often variance differences between two populations despite there not being mean differences (Delacre et al., 2017).

This observation calls for investigating the performance of the Mann-Whitney test under more realistic assumptions. I use the assumption of the two populations’ variances being finite because the parametric Welch

For the equal medians perspective, the distribution

For the stochastic equality perspective, both populations were normally distributed with a zero mean. The only difference was that for Population 1, the variance was 1, and for Population 2, it was 4. Note that the hypothesis of stochastic equality is met.

I estimated the Type I error rates for increasing sample sizes. The sample sizes for Group 1 were

In Figure 1, I display the estimated Type I error rates of the Mann-Whitney test under the equal median and stochastic equality perspectives. Clearly, the Mann-Whitney test is not asymptotically valid. For the equal median perspective, the Type I error rate increased with sample size and even converged to 1. The Type I error rate for the stochastic equality perspective seems to be stable at around

Estimated Type I error rates of the Mann-Whitney test. The horizontal line displays

Problems with consistency

For the difference in distributions perspective, the Mann-Whitney test is asymptotically valid under the finite variances assumptions. The only assumption needed for the validity of the Mann-Whitney test for the difference in distribution perspective is independence (Fay & Proschan, 2010). Consequently, it is also asymptotically valid. However, the Mann-Whitney test is also not reasonable under this perspective because it is not consistent.

The simulation study I performed for showing that the Mann-Whitney test is not asymptotically valid for the stochastic equality perspective showcases this. Note that in this scenario, the population distribution differed by their variances. Thus, the null hypothesis of equal distributions was false. However, as is visible in Figure 1b, the power of the Mann-Whitney test remained relatively constant around

Brunner-Munzel Test

Importance of the stochastic equality perspective

The observations from the previous section call for alternatives to the Mann-Whitney test for each perspective. In this article, I focus on the stochastic equality perspective because more often than not, this is the perspective psychologists should take when applying the Mann-Whitney test. To unpack this statement, I discuss the different perspectives and when they should be chosen.

The equality of distributions perspective stands out because it does not answer a directional question (Schlag, 2015), in the sense that it does not investigate whether a variable tends to be larger in one population than in another. Note that the equality of distributions null hypothesis is wrong if the population distributions differ in any aspect.

However, psychologists typically are interested in making a directional statement (Schlag, 2015). This is especially the case when the Mann-Whitney test is applied because it is understood to be the nonparametric equivalent of the

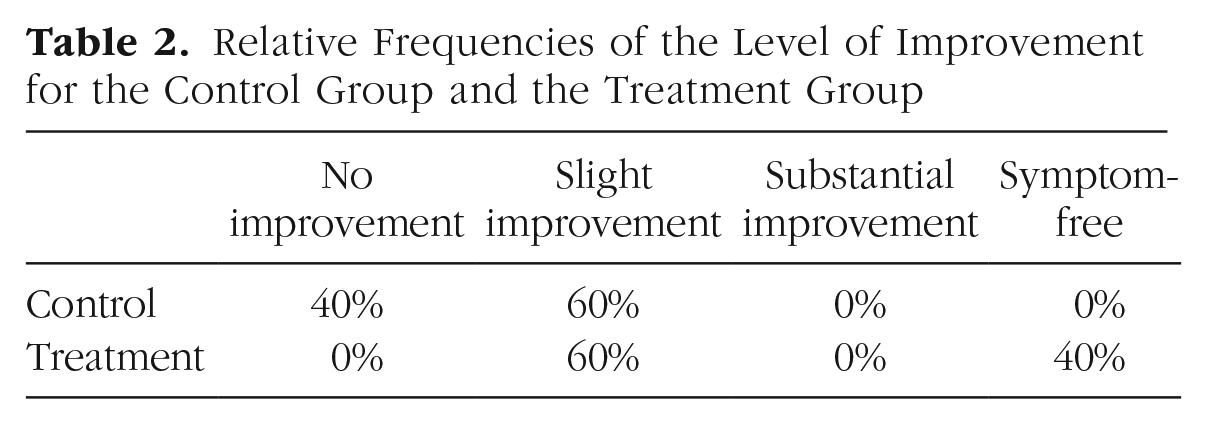

Which directional perspective is the most appropriate to take should be determined for each research project individually. However, the stochastic equality perspective is closer to the individual person, and thus often to the substantive research question, than the equality of medians perspective. For the equality of medians perspectives, all individuals are summarized by one value, the median. The medians are then compared across the groups. This strategy, summarizing first and then comparing, leads to a lot of information loss. The stochastic equality perspective, in contrast, compares all individuals with each other directly, and thus all available information is considered. I illustrate with the therapy example. Consider that the population frequencies are as displayed in Table 2. Thus, no person in the treatment group had worse improvement than any person in the control group, and many persons in the treatment group had substantially better improvements than all persons in the control group. Thus, clearly, the therapy works. However, under the equality of medians perspective, the therapy and the control treatment are equally effective because for both, the median is slight improvement. In contrast, the stochastic equality perspective correctly captures the difference between the two groups because it compares the individual improvement levels directly: P(improvement treatment > improvement control) = 64% > 0 = P(improvement treatment < improvement control), that is, the probability that the therapy leads to a bigger improvement than the control treatment is

Relative Frequencies of the Level of Improvement for the Control Group and the Treatment Group

Mann-Whitney

statistic

Because the Brunner-Munzel test modifies the Mann-Whitney test, I first introduce the computational details of the latter. For the definition of the Mann-Whitney

The Mann-Whitney U statistic is then the sum of this function over all possible Group 1, Group 2 pairings

Stochastic superiority statistic

The Brunner-Munzel test builds on an equivalent version of the Mann-Whitney test, which uses an easier to interpret modification of the U statistic. Instead of using the sum over all possible pairings, the average value is calculated. The resulting test statistic is

The test statistic

The stochastic superiority estimate

From stochastic superiority statistic

to P value

For the Mann-Whitney test, multiple techniques exist to translate the stochastic superiority statistic

Essentially, the permutation approach is a generally applicable technique to translate a test statistic into a p value. It comes with one core assumption, exchangeability, and thus is the reasion why exchangeability is also the core assumptions of the Mann-Whitney test. In addition, it is well known that the permutation test is not asymptotically valid when exchangeability is violated (Chung & Romano, 2013). This explains why the Mann-Whitney test is not asymptotically valid under the equality of medians and stochastic equality perspectives if the corresponding assumptions are violated.

However, statisticians have invented a general technique, called studentization, which makes a permutation test asymptotically valid even if exchangeability is violated (Chung & Romano, 2013; Janssen, 1997). Neubert and Brunner (2007) applied this technique to the Mann-Whitney test to make it asymptotically valid. The resulting test is the Brunner-Munzel test.

Studentizing the stochastic superiority statistic

The core idea behind studentization is surprisingly simple. The test statistic is transformed such that it has approximately a standard normal distribution under the null hypothesis. However, the required derivations are quite technical. The interested reader is referred to Neubert and Brunner (2007) and Brunner et al. (2018). Here, I will present only the result.

The modified test statistic is the studentized stochastic superiority statistic

with the pooled standard deviation

with

As for the Mann-Whitney test, optimally, a permutation approach is employed to translate the studentized stochastic superiority statistic

However, this rather small difference leads to substantially different properties. The Mann-Whitney test is not asymptotically valid for the stochastic equality perspective. Thus, if the assumption of exchangeability is not met, the Type I error rate can be substantially higher than the significance level, even for large samples. I demonstrated this in the preceding section (see Fig. 1). In contrast, Brunner and Munzel (2000) proved that the Brunner-Munzel test is asymptotically valid under the rather general and reasonable assumption that the variances of both population distributions are finite. Note that this is exactly the same condition under which the parametric Welch t test is asymptotically valid.

Simulation Study

The fact that the Brunner-Munzel test is asymptotically valid does not guarantee that the Type I error rates are satisfactory for sample sizes as they occur in psychology. In addition, the theoretical results do not quantify how the Brunner-Munzel test compares with the Mann-Whitney test in terms of power. In particular, if the assumptions of the Mann-Whitney test are met, it could be that the Mann-Whitney test has higher power and thus should be preferred in this case. The appropriate tool to investigate these questions is a simulation study.

Multiple simulation studies have already investigated under which conditions the Type I error rate of the Brunner-Munzel test is sufficiently close to the significance level α (Brunner & Munzel, 2000; Delaney & Vargha, 2002; Neubert & Brunner, 2007; Neuhäuser, 2010; Neuhäuser & Ruxton, 2009). In general, the results are reassuring. Even for small samples, the Type I error rate was sufficiently close to the significance level α. As one example, in the simulation study performed by Brunner and Munzel (2000), the Type I error rates were between 4.6% and 5.7% for a significance level of

Rather surprisingly, to my knowledge, no previous study compared the power of the Brunner-Munzel and Mann-Whitney tests. Thus, this is the focus of this simulation study. I also report Type I error rates because no previous study used a design specifically tailored to mirror the factors as they occur in psychology and to keep this article self-contained.

The full details of the simulation study can be found in the Supplemental Material available online. Here, I focus on summarizing the results.

Concerning Type I error rates, the results are in line with the results from previous simulation studies. The estimated Type I error rate of the Brunner-Munzel test was never higher than 6%. For sample size

For power, I consider only the conditions in which exchangeability was met. Only in these conditions is it guaranteed that both tests have the same Type I error rate, which is required for a meaningful power comparison. Overall, the power of both tests was similar. The mean of the absolute power differences was 1.38%, and the median was 0.50% (see also Table S2 in the Supplemental Material).

However, for the symmetric distributions, the Brunner-Munzel test had more power than the Mann-Whitney test. 4 The power advantage ranged from 4.05%.

For the skewed distribution, the opposite pattern emerged. Although the Mann-Whitney test was not more powerful in all conditions, it was more powerful in the majority of the conditions. The Mann-Whitney test’s maximum power advantage was 10.33%.

In summary, the Type I error rate of the Brunner-Munzel test converged very quickly to the significance level of

Brunner-Munzel Test in Practice

Example data set

In this section, I discuss practical considerations by applying the Brunner-Munzel test to an example data set. The example data come from the Eurobarometer 73.2 (European Commission, 2012). The data are open and available at http://doi.org/10.4232/1.11429. As part of the Europarameter 73.2, participants were asked the question, “How often during the past 4 weeks have you felt downhearted and depressed?,” which had the ordinal answer options all the time, most of the time, sometimes, rarely, and never and the noninformative don’t know. The research question is whether either men or women feel depressed more often. Because the data are ordinal and the research question is directional, the appropriate formalization of this question is to test for stochastic equality.

Computational considerations

The first practical challenge is computational. After removing all missing values, including the don’t know responses, the data set consisted of responses from 14,430 women and 12,199 men. Calculating the test statistic for all possible permutations of the data, as required by the permutation Brunner-Munzel test, is computationally infeasible. The number of permutations needed would be

This computational infeasibility of the Brunner-Munzel test for moderately sized samples is shared by all permutation tests, thus also by the Mann-Whitney test. In general, there are two approaches to solve this problem. The first uses a random number of permutations instead of all permutations (this is sometimes called approximate or Monte Carlo approach). The second uses a parametric distribution to approximate the permutation distribution (this is called the asymptotic approach).

For the Brunner-Munzel test, there seems to be no consensus yet which approach is better. However, only for the asymptotic approach, detailed evidence that it is reasonably accurate for moderately large samples is available (Brunner & Munzel, 2000). In addition, in this article’s simulation study, I used the asymptotic approach, confirming those results. Thus, for now, I recommend using the asymptotic approach if the exact approach is computationally infeasible.

The difference between the exact permutation approach and the asymptotic approach is most pronounced for very small samples, in which the exact permutation approach is computationally feasible. In particular, the permutation approach has better Type I error control in very small samples (

Reporting results

Before reporting the results, I introduce guidelines for reporting on the Brunner-Munzel test. I follow the American Psychological Association principles for reporting on statistical tests as closely as possible. Note that my recommendations are different from the standard recommendations for the Mann-Whitney test (Field, 2017, p. 296). This is not because the Brunner-Munzel test is conceptually different from the Mann-Whitney test but, rather, is because of the misconception in the literature that the Mann-Whitney test is a reasonable test for median differences.

The appropriate test statistic to report is the studentized stochastic superiority estimate

As a measure of effect size, the raw stochastic superiority estimate

Applying those guidelines to the example data set leads to the following results: Women and men were not stochastically equal in their reporting of how often they felt depressed,

Interpretation of stochastic superiority effect size

To guide the interpretation of the stochastic superiority effect size, multiple approaches have been proposed (Cliff, 1993; Divine et al., 2018). I discuss the most prominent here to help readers understand the stochastic superiority effect size better and give readers a tool set to explain the results of applying the Brunner-Munzel test to their readers.

It helps to interpret the stochastic superiority effect size

The stochastic equality effect size can be visualized as a bubble plot (see Fig. 2). In this plot, points above the diagonal represent Group 1, Group 2 pairs in which the Group 2 observation was bigger. For points below the diagonal, the Group 1 observation was bigger, and for points on the diagonal, both observations were equal. The stochastic equality effect size summarizes this plot by computing the proportion of points above the diagonal, including half of the points on the diagonal.

Bubble plot for the example data set.

O’Brien and Castelloe (2006) suggested to transform the stochastic superiority effect size

Another transformation is Cliff’s

For this example, one can reveal the causes of the significant stochastic superiority effect size by directly comparing the distributions. In Table 3, the relative frequencies are compared across the two groups. Note that 38.74% of the men but only 31.03% of the women reported that they never felt depressive symptoms. The option rarely was chosen essentially equally often by both genders. In contrast, for sometimes, most of the time, and all of the time, the frequencies are all higher for the women. Together with the lower frequency of women in the never category, this explains the significant stochastic superiority effect size.

Relative Frequencies of the Frequency of Depressive Symptoms for Men and Women

Confidence intervals

It is often recommended to report confidence intervals additionally to or instead of the outcome of a hypothesis test. For the example data set, accurate confidence intervals for the stochastic superiority can be obtained from the asymptotic Brunner-Munzel test and confidence intervals for the other effect sizes by transforming the obtained confidence interval for the stochastic superiority (see Appendix). The resulting confidence intervals are [0.44, 0.45] for stochastic superiority, [0.78, 0.83] for the WMWodds, and [0.09, 0.12] for Cliff’s

For small samples or if the population stochastic superiority is close to 0 or 1, the confidence interval obtained in this fashion should be treated with caution. There is still no consensus on which approach to use in this case. Potential remedies include a bootstrap approach (Ruscio & Mullen, 2012), inverting the permutation Brunner-Munzel test (Pauly et al., 2016), and multiple other approaches (see Brunner et al., 2018, Section 3.7.2). Implementations of some of these approaches can be found in the R packages rankFD, nparcomp, and RProbSup.

Code

In the Appendix, I provide the R code used for the example analysis discussed here.

Discussion

Summary

I have demonstrated that the Mann-Whitney test is not a reasonable test of equality of medians, distributions, or stochastic equality. This observation motivated identifying a reasonable test for each perspective. Here, I focused on the stochastic equality perspective because it is the most appropriate formalization of the intuitive hypothesis that one population tends to have larger values than another. To this end, I introduced the Brunner-Munzel test. I demonstrated that it is a reasonable test for the stochastic equality perspective with often equal or even higher power than the Mann-Whitney test. Only for skewed data and unequal sample sizes was the Mann-Whitney test more powerful. As a consequence, I recommend that psychologists should use the Brunner-Munzel test as the default nonparametric test instead of the Mann-Whitney test. They should use the Mann-Whitney test only if they are confident that their data meet the assumptions of exchangeability and are skewed, which will rarely be the case. Note that contrary to common practice, preliminary assumption checks, whether based on formal hypothesis tests or visualization techniques, cannot be recommended to establish exchangeability or skewness because preliminary assumption checks are generally flawed. Among other problems, they distort the Type I and II error rates of the actual test of interest (Wells & Hintze, 2007). Valid alternative approaches to establishing assumptions are theory and reason (Wells & Hintze, 2007). To enable researchers to apply the Brunner-Munzel test, I provided practical guidance.

Nonparametric or parametric testing

I did not yet address the question regarding when researchers should adopt the parametric Welch t test over the nonparametric Brunner-Munzel test. This question has already received a lot of attention (Delaney & Vargha, 2002; Rietveld & van Hout, 2015; Ruxton, 2006; Ruxton & Neuhäuser, 2019), but there still seems to be no consensus. For now, my recommendation is as follows.

The Welch t test is the recommended procedure for testing equality of means (Delacre et al., 2017), whereas I recommend the Brunner-Munzel test for testing stochastic equality. Thus, the choice between the two tests should first and foremost be guided by which hypothesis a researcher intends to test. Because each hypothesis is associated with a different research question, the choice is essentially determined by which research question a researcher aims to answer. Note that this is contrary to the common practice of choosing between tests on the basis of pretesting of assumptions, which, again, should be avoided (Wells & Hintze, 2007).

For ratio and interval data, I illustrate using the running depression therapy example. However, now I assume that the improvement was measured using an interval variable indicating the extent of the improvement, with high values indicating a large improvement. In this example, the equality of means hypothesis is equivalent to the research question of whether the therapy, on average, leads to more improvement than the control treatment. In contrast, the stochastic equality hypothesis is equivalent to whether for a random patient, the therapy has a higher chance to lead to a bigger improvement than the control treatment. I illustrate that those are profoundly different questions using a concrete example. In the therapy group, 90% of the patients did not improve, whereas 10% improved by 20, which is considered a substantial improvement. In the control group, all patients improved by 1, which is a slight improvement. Using a comparison of means, one would conclude that the therapy works given that the mean improvement was bigger (M = 2) in the therapy group than in the control group (M = 1). In contrast, using stochastic equality, one would conclude that the therapy does not work given that for two random patients from both groups, there is a 90% chance that the patient who was in the control group had a bigger improvement.

Again, which research question to ask should be decided individually by each researcher. However, psychological researchers should consider testing the stochastic equality hypothesis more often than is currently done. For example, for an individual patient, identifying the treatment with a higher chance of leading to a bigger improvement seems more relevant than identifying the therapy with the larger average improvement. At the very least, testing for stochastic equality and reporting the associated effect sizes provides useful additional information.

For ordinal data, testing for stochastic equality should be the default strategy because a comparison of means requires at least an interval scale (Delaney & Vargha, 2002), and the stochastic equality hypothesis and its associated effect sizes are also meaningful for ordinal data. This advice seems to conflict with the common practice of treating ordinal data as continuous, especially if the number of levels is sufficiently large, enabling a comparison of means. However, note that this practice implicitly assumes that the data are not ordinal but, rather, discrete data on an interval scale because it implies that the numerical distance between each pair of subsequent categories is equal. Consequently, whether this practice is appropriate is not related to the numbers of categories but, rather, whether this central assumption of an interval scale is fulfilled.

In certain situations, the hypothesis of equal means and stochastic equality are equivalent, most importantly, if the distributions of the two populations are symmetric. Only in this situation should the choice between the tests be guided by their statistical properties. To make an informed choice between the two tests, a detailed comparison of the power of Welch’s t test and the Brunner-Munzel test is needed, which has not been performed. However, the practical value of such a comparison can be questioned because one virtually never knows with certainty that the population distributions are symmetric. Consequently, by default, one should assume that they are not and select between the two tests on the basis of the different hypotheses they test.

Testing median differences and equality of distributions

I have not yet discussed how to best test for median differences or equality of distributions. Testing for equality of distributions is generally a tough problem. A test appropriate for general use must be able to detect all the numerous ways two distributions can differ. This is probably one reason why no consensus has been reached about how to best test for equality of distributions and also casts doubt on whether this will ever be the case. However, I agree with Chung and Romano (2016) that omnibus tests such as the Kolmogorov-Smirnov and the Cramér–von Mises tests, which capture the differences of the entire distributions as opposed to only testing for stochastic equality, should be preferred over the Mann-Whitney test. An overview and comparison of available classical procedures can be found in Thas (2010), whereas Wasserman (2012) provided an overview of some modern alternatives.

For testing median differences, the classically recommended procedure is Mood’s median test (Brown & Mood, 1951). However, many modern alternatives and supposed improvements have been proposed (Bonett & Price, 2002; Chung & Romano, 2013; DiCiccio & Efron, 1996; Schlag, 2015; Wilcox, 2006). A detailed independent comparison of those tests is missing, and thus no recommendation can be given yet. Consequently, a comparison of available tests for median differences is recommended for future work.

Conclusion

In conclusion, when investigating directional research questions, psychologists should test for stochastic equality more often. However, instead of the Mann-Whitney test, they should use the Brunner-Munzel test by default.

Supplemental Material

sj-pdf-1-amp-10.1177_2515245921999602 – Supplemental material for Psychologists Should Use Brunner-Munzel’s Instead of Mann-Whitney’s U Test as the Default Nonparametric Procedure

Supplemental material, sj-pdf-1-amp-10.1177_2515245921999602 for Psychologists Should Use Brunner-Munzel’s Instead of Mann-Whitney’s U Test as the Default Nonparametric Procedure by Julian D. Karch in Advances in Methods and Practices in Psychological Science

Footnotes

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.