Abstract

Replication studies that contradict prior findings may facilitate scientific self-correction by triggering a reappraisal of the original studies; however, the research community’s response to replication results has not been studied systematically. One approach for gauging responses to replication results is to examine how they affect citations to original studies. In this study, we explored postreplication citation patterns in the context of four prominent multilaboratory replication attempts published in the field of psychology that strongly contradicted and outweighed prior findings. Generally, we observed a small postreplication decline in the number of favorable citations and a small increase in unfavorable citations. This indicates only modest corrective effects and implies considerable perpetuation of belief in the original findings. Replication results that strongly contradict an original finding do not necessarily nullify its credibility; however, one might at least expect the replication results to be acknowledged and explicitly debated in subsequent literature. By contrast, we found substantial citation bias: The majority of articles citing the original studies neglected to cite relevant replication results. Of those articles that did cite the replication but continued to cite the original study favorably, approximately half offered an explicit defense of the original study. Our findings suggest that even replication results that strongly contradict original findings do not necessarily prompt a corrective response from the research community.

It is often assumed that science is a self-correcting enterprise: The veracity of scientific knowledge should progressively improve as inaccurate claims are abandoned and accurate claims are reinforced (Vazire & Holcombe, 2020). Replication studies are considered to be a key driver of this process because they may indicate that prior results are exaggerated or erroneous (Ioannidis, 2012; Zwaan et al., 2018). Although interpreting the outcome of replication studies is not necessarily straightforward (Collins, 1985; Earp & Trafimow, 2015; Maxwell et al., 2015), one might expect a replication result that strongly contradicts and outweighs the results of a prior (“original”) study to affect how that study is cited in subsequent academic literature. For example, if a replication undermines belief in the credibility of an original finding, one might expect to see a change in the frequency and valence (favorability) of citations to the original study, that is, a decrease in favorable citations accompanied by an increase in unfavorable citations. However, as discussed below, a variety of interesting patterns could emerge depending on how the research community responds to a replication result. The goal of the present study was to empirically explore and describe postreplication citation patterns in the context of four prominent multilaboratory replication attempts published in the field of psychology that strongly contradicted and outweighed the findings of prior studies.

Table 1 outlines several citation patterns that might follow a contradictory replication result, each reflecting different types of response by the research community. We have tentatively categorized these response patterns as being “progressive” or “regressive,” depending on their expected impact on the accumulation of scientific knowledge. The first set of patterns, belief correction/perpetuation, refers to what is often considered a primary functional role of (contradictory) replication studies: to change belief in the credibility of exaggerated or erroneous original findings (Ioannidis, 2012; Vazire & Holcombe, 2020; Zwaan et al., 2018). In the absence of an explicit defense of an original study that convincingly explains a strongly contradictory replication result (see explicit defense explanation below), a progressive response might involve a decrease in favorable citations and an increase in unfavorable citations, reflecting updated beliefs about the credibility of the original finding. Conversely, a regressive response might involve maintenance of (or even increase in) favorable citations and relatively few unfavorable citations, which suggests a perpetuation of belief in the credibility of the original finding despite the contradictory replication result. Prior research has documented how favorable citations to observational epidemiology studies can persist despite the claims of those studies being strongly contradicted in subsequent randomized trials (Tatsioni et al., 2007). Likewise, it has been reported that even when articles are retracted, they can continue to receive favorable citations (Budd et al., 1998; Fernández & Vadillo, 2020). Thus, there is evidence that belief in the credibility of original findings can persist even when subsequent events cast doubt on their credibility; however, we are unaware of similar evidence in the context of studies that were explicitly designed to test the replicability of prior findings.

Table 1. Progressive or Regressive Responses to Strongly Contradictory Replication Results and Their Expected Impact on Citation Patterns for Original Studies

The second set of patterns in Table 1, citation balance/bias, generally refers to whether positive (supportive) evidence is preferentially cited relative to negative (nonsupportive) evidence (Bastiaansen et al., 2015; Greenberg, 2009). Citation bias has previously been documented in research on inclusion body myositis: A citation content analysis showed that the accumulating literature heavily cited studies supporting the theory that beta amyloid is involved in the disease while ignoring multiple studies that contradicted this theory (Greenberg, 2009). In the present study, these patterns specifically refer to whether articles citing an original study also cite the subsequent contradictory replication study. A progressive response would be to cite both studies (citation balance) because this involves considering and reporting highly relevant evidence (even if the implications of the replication are disputed; see explicit defense explanation below). By contrast, citation bias could occur if articles citing an original study neglect to cite a relevant replication study. Regardless of whether this occurs through lack of awareness or deliberate omission, it can be considered a regressive response pattern because highly relevant evidence is not being reported or considered.

The third set of patterns in Table 1, explicit/absent defense, refers to whether researchers who continue to favorably cite the original finding despite the strongly contradictory replication result offer a concrete defense of the original study. As implied above, even when the results of a replication study strongly contradict the results of an original study, this does not necessarily nullify the credibility of the original findings; the same criticisms that one might apply to an original study to infer that its findings are erroneous or exaggerated may also be applied to replication studies (Collins, 1985; Earp & Trafimow, 2015; Maxwell et al., 2015). Thus, if proponents of the original claim mount an explicit defense that counters the implications of the replication results, this might still be considered a progressive response (although obviously one could disagree with the arguments that are presented). By contrast, if favorable citations to the original study are not accompanied by explicit argumentation about the replication result (an absent defense), this might be considered a regressive response because the replication result is apparently discounted without providing any rationale.

In the present study, we explored the postreplication citation patterns described above in the context of four case studies in the field of psychology in which the findings of a replication study strongly contradicted and outweighed the findings of an original study (Table 2). In two of the cases, the replication studies were part of a single Many Labs project (Klein et al., 2014) and addressed the “flag priming effect” (T. J. Carter et al., 2011) and “money priming effect” (Caruso et al., 2013), respectively. The other two cases involved Registered Replication Reports (Simons et al., 2014) that examined influential demonstrations of the “facial feedback effect” (cf. Strack et al., 1988; Wagenmakers et al., 2016) and the “ego-depletion effect” (cf. Baumeister et al., 1998; Hagger et al., 2016; Sripada et al., 2014), respectively. For methodological reasons (see Hagger et al., 2016), the ego-depletion replication was aimed at a classic study in the field (Baumeister et al., 1998) but actually employed a modified computer-based version of the original paradigm (Sripada et al., 2014). For this particular case study, we examined citation patterns to both of these original studies.

Table 2. Sample Sizes and Effect Sizes for Replication Studies and Original Studies

Note: Publication dates are earliest available (i.e., “online first” if relevant). d = Cohen’s d; MD = mean difference; k = number of data-collection sites; N = total number of participants; CI = confidence interval.

Total citations to the original study between the publication date and December 31, 2019.

For methodological reasons (see Hagger et al., 2016), the ego-depletion replication was aimed at a classic study in the field (Baumeister et al., 1998) but actually employed a modified computer-based version of the original paradigm (Sripada et al., 2014). We examined postreplication citation patterns for both studies.

We adopted a case-study approach to develop a “narrow and deep” understanding of the topic, as opposed to a “broad and shallow” approach, which would have required a deliberate representative sampling strategy. We chose these particular case studies because they involved prominent preregistered multilaboratory replication attempts with sample sizes 23 to 211 times larger than those of the original studies, thus providing highly visible and highly credible evidence that strongly contradicted and outweighed earlier findings. This choice makes it clearer which citation patterns one should expect to observe.

The present study was exploratory in nature and intended to provide descriptive observations rather than test hypotheses. The three sets of expected citation patterns outlined in Table 1 were used to guide our study design and interpretation, but we do not claim that this is a comprehensive typology of the postreplication patterns that may occur. Such patterns may be more complex and idiosyncratic in other topic domains. To examine belief correction/perpetuation patterns, we downloaded citation histories (a list of citing articles) for each original study and classified the valence (favorable, equivocal, or unfavorable) of a set of prereplication and postreplication citations. To examine citation balance/bias patterns, we manually checked whether postreplication citations of the original study were accompanied by citations to the replication study. Finally, to examine explicit/absent defense patterns, we extracted and categorized any counterarguments offered in articles that cited the replication.

Method

The study protocol (rationale, methods, and analysis plan) was preregistered on April 7, 2018 (https://osf.io/eh5qd/). An amended protocol was registered partway through data collection on May 1, 2019, primarily because we extended the sampling frame to cover additional months (https://osf.io/pdvb5/). All deviations from these protocols are explicitly acknowledged in Supplementary Information A in the Supplemental Material available online. We report how we determined our sample size, all data exclusions, all manipulations, and all measures in the study.

Design

This was a retrospective observational study consisting of four case studies. Primary outcome variables were annual citation counts for original studies, citation valence (favorable, equivocal, unfavorable), co-citation of original and replication studies, and frequency/type of counterarguments.

Sample

We examined four case studies in which a prominent preregistered and multilaboratory replication study strongly contradicted and outweighed the findings of an original study (Table 2). As shown, all the original studies found moderately large to very large effects, and all of them were relatively small studies (and thus would have been underpowered to detect small effects). Conversely, all the replication efforts had very large samples and were therefore well powered to detect even small effects; nevertheless, they all obtained null results.

Procedure

Annual citation counts

Citation histories (i.e., bibliographic records for all articles that cite the original study) from the publication date of each original study through December 31, 2019, were downloaded from Clarivate Analytics Web of Science Core Collection, accessed via the Charité – Universitätsmedizin Berlin on August 12, 2020. We also obtained citation histories for a reference class—all articles published in the same journal and the same year as each original study—from the same source. For example, for Baumeister et al. (1998), the reference class was all articles published in 1998 in the Journal of Personality and Social Psychology. Citation counts were standardized in each case study by setting the citation count in the replication year to the standardized value of 100 and then adjusting the counts in other years according to the same transformation ratio. For example, if the raw citation count in the replication year was 1,000, citation counts in each year would be standardized by dividing by 10. This computation was performed separately for citations to the reference class and citations to the original article.
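
The standardization described above multiplies every year's count by the same ratio, 100 divided by the replication-year count. A minimal Python sketch follows; the function name and the example counts are hypothetical and simply mirror the worked example of 1,000 citations in the replication year.

```python
def standardize_counts(counts: dict[int, int], replication_year: int) -> dict[int, float]:
    """Rescale annual citation counts so the replication year equals 100.

    Every year is multiplied by the same ratio (100 / replication-year count),
    so the shape of the citation trajectory is preserved.
    """
    base = counts[replication_year]
    if base == 0:
        raise ValueError("cannot standardize: zero citations in the replication year")
    # 100 * n / base keeps exact results for whole-number outcomes
    return {year: 100 * n / base for year, n in counts.items()}


# Hypothetical counts: 1,000 citations in the replication year, so every year is divided by 10
print(standardize_counts({2015: 900, 2016: 1000, 2017: 950}, 2016))
# → {2015: 90.0, 2016: 100.0, 2017: 95.0}
```

The same function would be applied separately to the original article's counts and to the reference-class counts, as the text specifies.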

Qualitative assessment

Qualitative assessment of citation patterns was limited to a time period starting 1 year before the year of publication of the replication study until December 31, 2019, excluding the year in which the replication was published. We excluded the replication year because it may be unreasonable to expect citing articles already in the publication pipeline to cite the replication study. For the Baumeister case, the qualitative analysis was based on a random sample of 40% of citing articles from the prereplication period and postreplication period because of the large number of citations to the original study (n = 1,974; for details, see Supplementary Information B in the Supplemental Material).
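
The assessment window and the 40% subsample can be sketched as below. This is a minimal illustration under stated assumptions: the function names are hypothetical, the sample is assumed to be a simple random draw without replacement taken separately within each period, and the rounding rule and seed are arbitrary choices of ours (the actual sampling details are in Supplementary Information B).

```python
import random
from typing import Optional

STUDY_END_YEAR = 2019  # qualitative assessment runs to December 31, 2019


def assessment_period(pub_year: int, replication_year: int) -> Optional[str]:
    """Assign a citing article's publication year to an assessment period.

    "pre" is the single year before the replication, "post" covers the years
    after it up to the study end, and None marks years outside the window,
    including the replication year itself, which is excluded.
    """
    if pub_year == replication_year - 1:
        return "pre"
    if replication_year < pub_year <= STUDY_END_YEAR:
        return "post"
    return None


def sample_fraction(articles: list[str], fraction: float = 0.4, seed: int = 1) -> list[str]:
    """Simple random sample without replacement of round(fraction * n) articles.

    Assumption: the seed only makes the draw reproducible; its value is arbitrary.
    """
    k = round(len(articles) * fraction)
    return random.Random(seed).sample(articles, k)


# Hypothetical example: a replication published in 2016
print([assessment_period(y, 2016) for y in (2014, 2015, 2016, 2017, 2019)])
# → [None, 'pre', None, 'post', 'post']
```

In a Baumeister-style case, `sample_fraction` would be called once on the prereplication articles and once on the postreplication articles.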

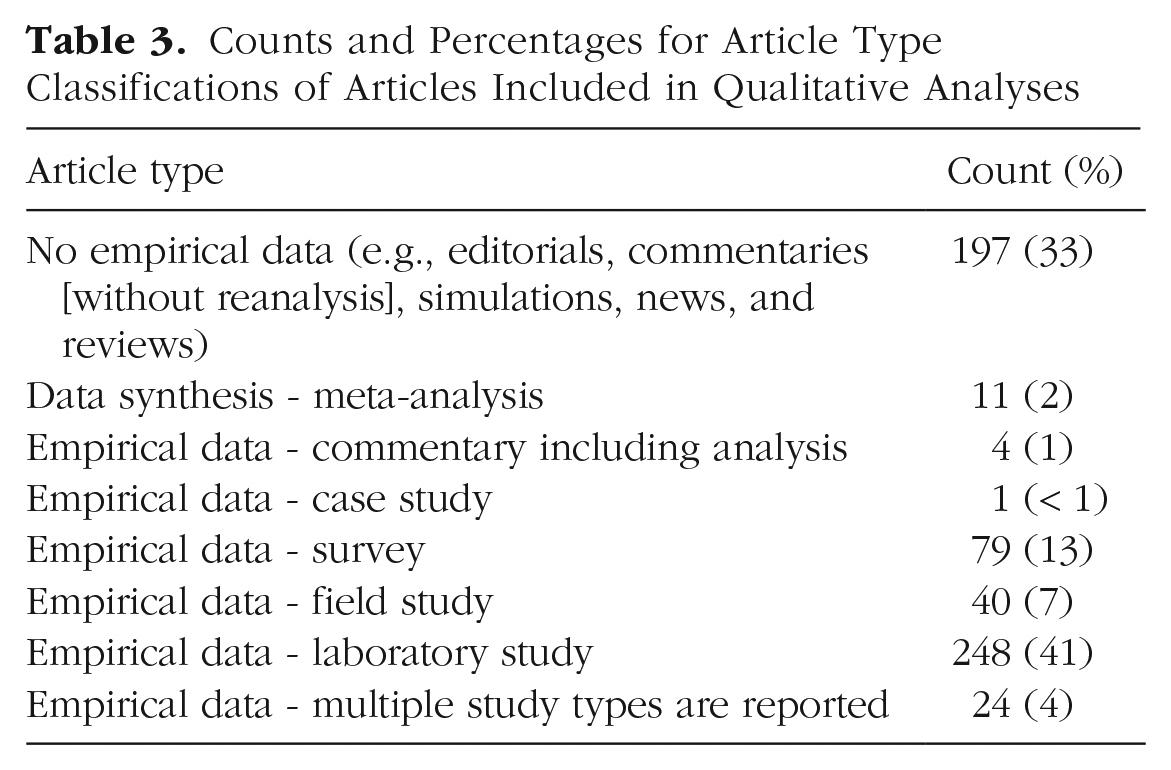

For each citing article undergoing qualitative assessment, we attempted to retrieve the full text via the following methods, in order: (a) a search of at least two of our affiliated institutional libraries; (b) a general Internet search for the article title, using the Google and Google Scholar search engines and ResearchGate; (c) email requests to the corresponding author; and (d) an interlibrary loan request. Articles that remained inaccessible after all of these methods had been exhausted were excluded. Articles written in a language other than English were translated by one of the authors or by using Google Translate (see Supplementary Information D in the Supplemental Material). For articles whose full text we could obtain, we classified the research design according to the categories in Table 3 and recorded, after manual inspection of the reference section, whether the replication study was cited (see Table 1: citation balance/bias).

Table 3. Counts and Percentages for Article Type Classifications of Articles Included in Qualitative Analyses

To examine the belief correction/perpetuation pattern (Table 1), one of six primary coders (T. E. Hardwicke, D. Szűcs, R. T. Thibault, S. Crüwell, O. R. van den Akker, and M. B. Nuijten) manually extracted the “citation context” of the original study and the replication study (i.e., all relevant verbatim text surrounding each in-text citation). The primary coder then classified the citation valence as “favorable,” “equivocal,” “unfavorable,” or “unclassifiable.” Favorable citations were those used to support a positive claim about the phenomenon of interest, whereas unfavorable citations were used to support a negative claim about the phenomenon of interest. Citations were considered equivocal if the authors did not take a predominantly favorable or unfavorable position. Citations that did not endorse or oppose the phenomenon of interest (e.g., simply referring to the procedures of the original study) were designated as unclassifiable. Because this process was inherently subjective, the citation contexts and classifications were also examined by one of six secondary coders (T. E. Hardwicke, D. Szűcs, R. T. Thibault, S. Crüwell, O. R. van den Akker, and M. B. Nuijten). Disagreements were resolved through discussion, and a third coder arbitrated when necessary. Valence classifications by the primary coder were modified after discussion with the secondary coder in 31 (5%) cases.
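
Once each citation context carries a valence code, per-period proportions and the number of codes revised after discussion are simple tallies. The sketch below is illustrative only: the function names are hypothetical, and using all coded contexts (including unclassifiable ones) as the denominator is our assumption rather than a documented choice.

```python
from collections import Counter

VALENCES = ("favorable", "equivocal", "unfavorable", "unclassifiable")


def valence_proportions(codes: list[str]) -> dict[str, float]:
    """Share of each valence category among the coded citations of one period.

    Assumption: the denominator is every coded citation, unclassifiable included.
    """
    if not codes:
        raise ValueError("no coded citations")
    unknown = set(codes) - set(VALENCES)
    if unknown:
        raise ValueError(f"unexpected valence codes: {sorted(unknown)}")
    counts = Counter(codes)
    return {v: counts[v] / len(codes) for v in VALENCES}


def count_revisions(primary: list[str], final: list[str]) -> int:
    """Number of citations whose primary-coder valence changed after discussion."""
    if len(primary) != len(final):
        raise ValueError("primary and final codes must be aligned")
    return sum(p != f for p, f in zip(primary, final))


codes = ["favorable"] * 8 + ["unfavorable", "unclassifiable"]  # hypothetical period
print(valence_proportions(codes))
# → {'favorable': 0.8, 'equivocal': 0.0, 'unfavorable': 0.1, 'unclassifiable': 0.1}
```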

To examine the explicit/absent defense pattern (Table 1), the primary coder flagged articles that co-cited the original and replication studies and also contained any explicit defense of the original study. Subsequently, two team members (O. R. van den Akker and S. Crüwell) reexamined all of the flagged cases, extracted verbatim counterarguments, and developed a post hoc categorization scheme that summarized them as concisely and informatively as possible. Coding disagreements were resolved through discussion, and a third coder (T. E. Hardwicke) arbitrated when necessary.

In additional exploratory (not preregistered) analyses, we examined overlap of authorship for articles that provided counterarguments with (a) any of the authors of the original studies and (b) any prior collaborators of the first authors of the original studies. These analyses are complicated by the fact that author names in bibliographic records do not always follow the same formatting conventions (for example, whether forenames are initialized or middle names are included), so it is not straightforward to isolate individual authors within bibliographic databases. To identify prior collaborators of the first authors of the original studies, we downloaded bibliographic records (on February 2, 2021) for all articles published by each of the original study first authors according to their author record in the Web of Science Core Collection. These author records are automatically generated by an algorithm that attempts to identify all documents likely published by an individual author using several variations of their name (e.g., “Hardwicke, Tom E.,” “Hardwicke, Tom,” “Hardwicke, T. E.”), but errors can still occur, and incomplete database coverage means that this method likely misses some of the authors’ prior publications and consequently some of their collaborators. Nevertheless, the method supports a reasonable lower-bound estimate of authorship overlap with articles providing counterarguments. To identify authorship overlap, we used string manipulation tools in R to extract only author surnames from bibliographic records and then used string matching to automatically detect the presence of original author or collaborator surnames among the surnames of authors of articles that provided counterarguments. When a match was detected, it was verified by manual examination of the authors’ full names.
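
The surname-matching step can be illustrated as follows. The analysis itself was done in R; this Python version is a swapped-in sketch, and it assumes names are stored as "Surname, Forename(s)" so that the surname is everything before the first comma. The function names and the "Smith"/"Doe" records are hypothetical.

```python
def surname(author: str) -> str:
    """Normalized surname from a 'Surname, Forename(s)' bibliographic name.

    casefold() makes the comparison case-insensitive and also handles
    non-ASCII surnames consistently.
    """
    return author.split(",", 1)[0].strip().casefold()


def surname_matches(article_authors: list[str], reference_authors: list[str]) -> set[str]:
    """Surnames shared by an article's authors and a reference list
    (original authors or prior collaborators).

    A shared surname is only a candidate: different people can share a
    surname, so each hit still needs manual checking against full names.
    """
    return {surname(a) for a in article_authors} & {surname(a) for a in reference_authors}


print(surname_matches(["Smith, J. A.", "Doe, Jane"], ["SMITH, John", "Hardwicke, T. E."]))
# → {'smith'}
```

Matching on surnames alone deliberately over-detects, which is why the manual verification step in the text is still needed.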

Results

In total, 2,829 articles cited one of the original studies, of which 632 articles (after taking a 40% random sample in the Baumeister case) fell within the time period designated for qualitative assessment. Of these 632 articles, we excluded 28 from the qualitative analysis because (a) we could not access the full text (n = 22), (b) they included a citation to the original study in the reference section but not in the main text (n = 5), or (c) manual inspection indicated that they did not actually appear to cite the original study at all (n = 1). Article type classifications for the remaining 604 articles included in the qualitative analysis are shown in Table 3.

Annual citation counts and citation valence

Figure 1 shows standardized annual citation counts for each original study and the respective reference class (citations to all articles published in the same year and same journal as the original study) and classifications of citation valence (favorable, equivocal, unfavorable, unclassifiable, or excluded). The data can also be viewed in tabular format in Supplementary Table C1 in the Supplemental Material. All counts (n) reported in the text and table are raw counts (i.e., not standardized).

Figure 1. Standardized annual citation counts (solid line) for the five original studies with citation valence (favorable, equivocal, unfavorable, unclassifiable) illustrated by colored areas in prereplication and postreplication assessment periods. The dashed line depicts citations to the reference class (all articles published in the same journal and same year as the target article). Annual citation counts are standardized against the year in which the replication was published (citation counts in the replication year, indicated by a black arrow, are set at the standardized value of 100). Citation valence classifications for the Baumeister case are extrapolated to all articles in the assessment period according to a 40% random sample.

After the replication was published, citations to the reference classes continued their existing trend of plateauing (Baumeister case) or increasing (other cases). By contrast, citations to the original study appeared to undergo a modest decline in the Strack case (decreasing from 56 to 41 between 2015 and 2019) and a small decline followed by a small increase in the Baumeister case (increasing from 191 to 199 between 2015 and 2019). In the other cases (Sripada, Carter, Caruso), the total citation counts were much lower, and there was considerable variability in the postreplication citation patterns; nevertheless, there was no substantial change in annual citations from prereplication to postreplication in these three cases (the maximum difference was +8 citations).

Before the replication, most citations were favorable for all five articles (range = 67%–100%). In most cases (Strack, Sripada, Carter, and Caruso), there was a small postreplication increase in unfavorable citations and a small decrease in favorable citations, indicating a modest active correction pattern. However, the overall number of unfavorable citations was very low, and there was still a substantial majority of favorable citations. For example, in the Strack case, unfavorable citations increased from 0% in the prereplication period (2015) to 7% in the postreplication period, whereas favorable citations decreased from 88% to 78%. In the Baumeister case, the proportion of favorable citations remained stable from prereplication (79%) to postreplication (77%), a pattern consistent with belief perpetuation. The very small number of unfavorable citations (2017: n = 7, 7%; 2018: n = 2, 2%; 2019: n = 2, 4%) suggests that this is largely an unchallenged belief perpetuation pattern (see Table 1).

Citation balance and citation bias

Figure 2 shows the proportion of citing articles that also cited or did not cite the replication study after it was published (excluding the publication year itself). The data can also be viewed in tabular format in Supplementary Table C1 in the Supplemental Material. In the Strack and Baumeister cases, a considerable majority of articles citing the original study did not cite the replication study, which indicates substantial citation bias. In the Baumeister case, the proportion of articles citing the replication study remained stable (20% in 2017, 18% in 2019). In the Strack case, the proportion increased from 13% to 41%. In the Carter and Caruso cases, the proportion never exceeded 50%, also consistent with substantial citation bias. In the Sripada case, it was much more common for the replication study to be cited (> 88%), which reflects a balanced citation pattern.

Figure 2. Standardized annual citation counts (solid line) for the five original studies with citation balance/bias (i.e., whether the replication is cited) illustrated by colored areas in the postreplication assessment period. The dashed line depicts citations to the reference class (all articles published in the same journal and same year as the target article). Annual citation counts are standardized against the year in which the replication was published (citation counts in the replication year, indicated by a black arrow, are set at the standardized value of 100). Replication citation proportions for the Baumeister case are extrapolated to all articles in the assessment period according to a 40% random sample.

Explicit defense and absent defense

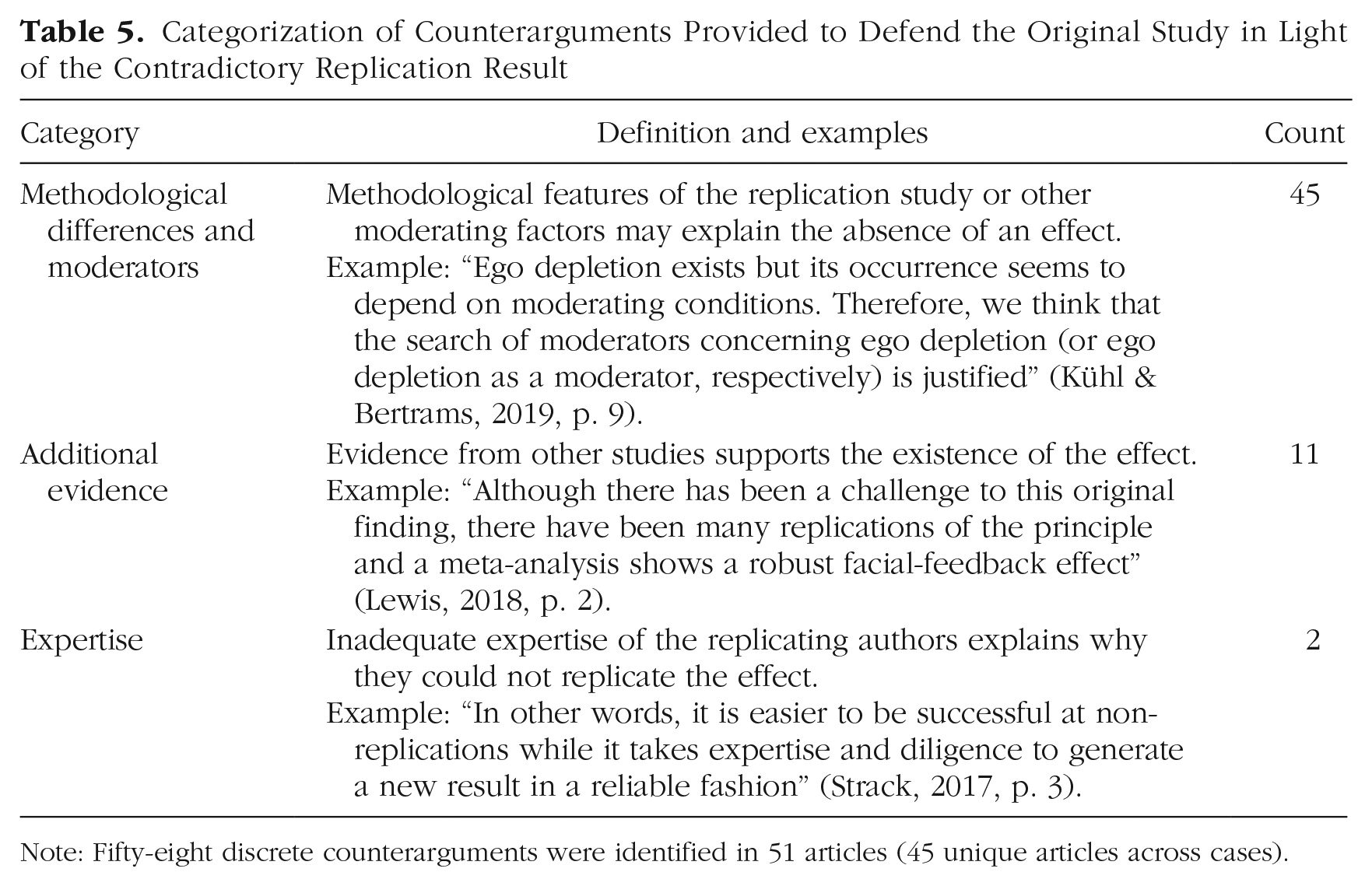

Table 4 shows whether articles that cited the original study and replication study (“co-citing articles”) and the subset of co-citing articles that cited the original study favorably provided any explicit counterarguments to defend the credibility of the original finding (an explicit defense) or not (an absent defense). Overall, fewer than half of the 127 co-citing articles provided any counterarguments. Of the 60 co-citing articles that cited the original study favorably, around half provided counterarguments. We identified 58 discrete counterarguments in 51 citing articles (45 unique articles, because six articles appeared in two of the case studies) and allocated each counterargument to one of three categories (Table 5).

Table 4. Counts and Percentages for Whether Articles That Cited Both the Original Study and Replication Study Provided Any Explicit Argumentation to Defend the Original Study

Note: Data are displayed for co-citing articles with any citation valence classification and the subset of co-citing articles with favorable citation valence classifications.

Table 5. Categorization of Counterarguments Provided to Defend the Original Study in Light of the Contradictory Replication Result

Note: Fifty-eight discrete counterarguments were identified in 51 articles (45 unique articles across cases).

In additional exploratory analyses (not preregistered), we examined other characteristics of the 45 unique articles that contained counterarguments. The articles were published in 34 different journals; Frontiers in Psychology published seven of the articles, Social Psychology published four, and every other journal published only one or two. Seventeen of the articles did not involve empirical data, three involved reanalysis or meta-analysis of existing data, and 25 involved collection of novel data. The articles had 112 distinct authors, all of whom contributed to a single article except for nine individuals who authored or coauthored two articles. Three articles were authored or coauthored by one of the original authors, and nine articles were authored or coauthored by at least one prior collaborator of one of the first authors of the original articles. Seven of these articles did not involve empirical data, and five involved novel data collection.

Citations to replication studies and co-citation of original studies

A reviewer requested that we examine citation counts for replication studies and check whether citing articles also co-cited the relevant original study. To obtain the data, we downloaded bibliographic records from the Clarivate Analytics Web of Science Core Collection, accessed via the University of Amsterdam on April 16, 2021, for articles that cited each replication study (up to the study endpoint—December 2019) and cross-checked them with our sample of articles that cited the original study. As shown in Table 6, the replication studies also have a life of their own, and they are often cited independently of the specific original study. Often this is easy to explain. For example, Klein et al. replicated 13 original studies, not just the two that were of interest in our analysis. These studies are also likely to have accrued citations by virtue of being among the first highly prominent examples of preregistered multilaboratory replications in psychology.
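
The cross-check between replication-citing and original-citing articles amounts to a set intersection on a shared record identifier. The sketch below assumes each bibliographic record carries a DOI (records without one would need another key, such as the Web of Science accession number); the function names and the DOIs in the example are hypothetical.

```python
from typing import Iterable


def normalize_doi(doi: str) -> str:
    """DOIs are case-insensitive, so compare them in lower case."""
    return doi.strip().lower()


def co_citation_counts(replication_citers: Iterable[str], original_citers: Iterable[str]) -> dict[str, int]:
    """Count articles citing a replication and how many also cite the original."""
    rep = {normalize_doi(d) for d in replication_citers}
    orig = {normalize_doi(d) for d in original_citers}
    return {
        "cite_replication": len(rep),
        "also_cite_original": len(rep & orig),
        "replication_only": len(rep - orig),
    }


print(co_citation_counts(["10.1000/A", "10.1000/b", "10.1000/c"], ["10.1000/a", "10.1000/d"]))
# → {'cite_replication': 3, 'also_cite_original': 1, 'replication_only': 2}
```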

Table 6. Citation Counts for Replication Studies and Co-Citation Counts for Relevant Original Studies

Discussion

It has been proposed that replication studies can facilitate scientific self-correction by modifying scientists’ belief in the credibility of published findings (Ioannidis, 2012; Vazire & Holcombe, 2020; Zwaan et al., 2018); however, the extent to which this occurs in practice is unclear. In this study, we investigated how the research community responded to four strongly contradictory replications in the field of psychology by examining postreplication citation patterns for original studies. We observed some progressive response patterns in the form of modest active correction (a small decline in favorable citations and a small increase in unfavorable citations) and even more prominent regressive response patterns in the form of unchallenged belief perpetuation (sustained levels of favorable citations and few unfavorable citations, particularly in the Baumeister case) and considerable citation bias (neglecting to cite the replication study; in all cases aside from Sripada). When authors cited the original study favorably despite the replication result, only about half of the articles provided any explicit counterarguments in defense of the original study (an explicit defense). Overall, these findings are consistent with prior observations that favorable citation patterns appear relatively unperturbed by the subsequent publication of contradictory results from studies with lower risk of bias (Tatsioni et al., 2007) or even by full retraction (Budd et al., 1998; Fernández & Vadillo, 2020) and that, once a theory becomes entrenched, positive (supportive) evidence is preferentially cited relative to negative (nonsupportive) evidence, even when the evidence against the theory is overwhelming (Bastiaansen et al., 2015; Greenberg, 2009).

It is reasonable to question whether the replication results in these case studies should (rationally) have instigated belief change in the research community and thus triggered a more sizable decline in favorable citations than we observed. Note that the case studies we selected were deliberately chosen because the replication results were superior to the original results in terms of both credibility and evidential value. The replications were preregistered, multilaboratory studies with large sample sizes. By contrast, the original studies had much smaller sample sizes and arguably had a much higher risk of bias given that they were not preregistered, were performed by single teams, and arose in domains affected by publication bias and other questionable research practices (E. C. Carter et al., 2015; Coles et al., 2019; Vadillo, 2019; Vadillo et al., 2016). Thus, it seems reasonable to suggest that the compelling replication results should have reduced belief in the credibility of the original results. However, one could also contend that if there were some flaw in the replication study that undermined its validity (Brandt et al., 2014; Fabrigar et al., 2020; Vazire et al., 2021), then this may provide justification to continue favorably citing the original study. Indeed, in these particular case studies, the validity of the replication results has been challenged by proponents of the original findings (Table 5; Baumeister & Vohs, 2016; Strack, 2016). Because this debate remains far from settled (Coles et al., 2019; Vadillo, 2019), ideally any favorable citation of the original studies should at a minimum be accompanied by co-citation of the replication results and some discussion of the discrepant findings.

The clear evidence of citation bias that our study documents may stem from two main factors: (a) a lack of awareness of the replication results and/or (b) a decision to ignore the replication results. The practical issue of awareness is not necessarily straightforward to address. Individual scientists can find it difficult to keep up to date with the voluminous literature that is relevant to their research. Recently, the reference manager Zotero introduced a feature that alerts users when an article in their library has been retracted (Zotero, 2019). One could imagine a similar feature being introduced for replication studies, perhaps based on databases that explicitly identify replication studies. However, defining and identifying a relevant replication study is much less straightforward (Neuliep & Crandall, 1993), and users would need to be made aware that such alerts involve scientific judgment rather than simple article metadata. Another solution could be to encourage researchers to focus less on individual studies and more on up-to-date evidence summaries (i.e., reviews and meta-analyses) in which relevant evidence is systematically identified and collated. This would require that high-quality and contemporary evidence summaries are available; however, in psychology, systematic reviews and meta-analyses can be of low quality, and their results may still be inflated and nonreproducible (Kvarven et al., 2020; Maassen et al., 2020; Polanin et al., 2020). Moreover, empirical studies are often not included in any form of evidence synthesis (Hardwicke et al., 2021).

The second factor, authors ignoring highly relevant replications, seems undesirable and implies a biased appraisal or presentation of the evidence. Contradictory replication results do not necessarily nullify the credibility of an original study (Collins, 1985; Earp & Trafimow, 2015; Maxwell et al., 2015), but we would still expect highly relevant replication results to be cited and explicitly debated. Yet we found that when authors continued to cite original studies favorably despite the replication results, around half did not provide explicit counterarguments in defense of the original study. Although our study did not examine researchers’ individual beliefs, one recent study reported that when research psychologists were confronted with replication evidence, they often did update their (self-reported) beliefs, albeit modestly (McDiarmid et al., 2021). However, there are several reasons to be uncertain whether the results from this artificial setting would generalize to real-world settings, including potential participant reactivity (i.e., participants behaving differently because they are under observation and/or responding to the perceived expectations of the research team) and the possibility that individuals behave differently in settings in which they have substantial personal investment and may be publicly scrutinized. In addition, cognitive psychology studies have obtained some evidence of a “continued influence effect,” wherein an individual’s beliefs and behavior can continue to be influenced by false or misleading information despite subsequent efforts to correct it (Lewandowsky et al., 2012). It is plausible that various cognitive biases, such as confirmation bias (preferentially seeking out and processing evidence that supports preexisting beliefs) or motivated reasoning (constructing and evaluating arguments according to what is desirable rather than what is rationally justifiable), may partly explain researchers’ tendency to ignore or dismiss the replication evidence (Bishop, 2019; Kunda, 1990; Nickerson, 1998).

We observed that when counterarguments were raised, most attempted to dismiss the contradictory replication by claiming that the original and replication studies differed in important ways that moderated the presence or absence of the effect under investigation. In some cases, authors pointed to evidence from other studies as a rationale for their continued belief in the effect. In a minority of cases, the authors challenged the competence of the replicators. We found that articles presenting counterarguments were published in a variety of journals (rather than clustered in a few journals) and involved collection of new data in around half of the cases. They were also published by a sizable group of investigators, only a minority of whom were one of the original authors or had previously collaborated with one of the original first authors. This suggests that the explicit defense of the original study came from a variety of sources rather than being confined to a small number of investigators. However, note that this analysis may underestimate authorship overlap because of difficulties in isolating individual researcher identities (see Method section) and because articles published in the same year as the replication were not included.

The findings presented here are inherently limited by the observational nature of the study design, which complicates straightforward conclusions about the causal impact of the replications. Although the use of a reference class enables us to detect the influence of exogenous factors to some extent, we cannot rule out their contribution. For example, the modest decline in favorable citations observed in most cases could be attributable, at least in part, to a more general awareness in the research community about methodological issues (e.g., that the sample sizes of the original studies may not have provided adequate statistical power). We have also focused only on the replication study and the original study in each case study without considering the impact of other potentially relevant events. Note that metaresearch studies have detected signatures of publication bias and other questionable research practices in the fields to which these case studies belong (E. C. Carter et al., 2015; Coles et al., 2019; Vadillo, 2019; Vadillo et al., 2016), and other relevant replication studies contesting prior findings have been published (e.g., Rohrer et al., 2015).

We have been able to gauge reactions to replications only to the extent that they are reflected in relatively short-term citation patterns. A potential explanation for the apparently cursory treatment of the replication studies could be that researchers became aware of them only after their own research projects had begun or had even been completed. If one of the original studies had been a key motivator for one’s own study, then it may be difficult to accommodate the strongly contradictory replication results. It may even be tempting to ignore them or give them only superficial treatment. Moreover, researchers who are convinced that the replication study has squarely refuted the original may no longer be interested in doing research on a topic for which they see no future potential. In addition, examination of citation patterns would not detect whether there had been a correction effect among individuals who would not typically cite the original study, such as students, members of the public, or researchers working in other fields. A contested study may continue to be cited favorably by proponents who remain in the field. This alone may suffice to create belief perpetuation in the published literature even though other scientists may simply no longer be interested in getting involved with such a strongly contested research topic. Relatedly, we did not examine citation patterns beyond 3 to 5 years after replication. Some perspectives envision scientific self-correction unfolding over a much longer time scale (Laudan, 1981; Peterson & Panofsky, 2020) and suggest that the process is characterized less by the impact of individual study results and more by the gradual accumulation of converging evidence, gradual revision of theoretical understanding, and/or informal sociological processes (e.g., researchers choosing alternative topics to study). Thus, although the current findings may contradict the expectations of a more direct and expedited view of the corrective impact of replication studies (Ioannidis, 2012; Vazire & Holcombe, 2020), they are not necessarily inconsistent with a slower and more indirect process of self-correction. Future research could employ longitudinal designs or older historical case studies to evaluate citation patterns unfolding over a longer time scale. Finally, because we examined only citation patterns, this study could not capture other potential responses to replication results, such as changes in research practices.

Generalization beyond these four case studies requires caution. Note that the replication studies examined here were some of the first large-scale, multilaboratory replication attempts conducted in the field of psychology. This gave them particular prominence and initiated considerable debate, which had broader ramifications beyond the research community that typically studies the topics under scrutiny (Nelson et al., 2018). Also note that we deliberately selected case studies in which the replication studies were high-profile and had yielded high-credibility evidence that strongly contradicted and outweighed the original findings. A correction effect may be less expected in cases in which replication results are more ambiguous, less consequential, or less well known. For example, in a situation in which two high-credibility studies with similar evidential value yield contradictory results, it would be premature to lose confidence in one of the studies before further investigation has probed the cause of the discrepancy. Pursuit of potential moderating factors may be entirely rational in such circumstances (Gershman, 2019).

We also note that particular aspects of our study were inherently subjective, specifically, the identification of citation context, the classification of citation valence, and the identification, extraction, and categorization of counterarguments. To minimize subjectivity, a team of six investigators performed coding in duplicate, with a third investigator arbitrating if necessary. Because disagreements between primary and secondary coders were infrequent, we are confident that the classifications are meaningful, but there may be some edge cases in which an independent observer might reasonably disagree with our classifications.

In conclusion, postreplication citation patterns in four case studies indicated that the anticipated corrective impact of strongly contradictory replication results did not materialize to any substantive degree. A lack of awareness of replications and/or a decision to discount or omit them appears to have played a significant role. This highlights potential practical problems with the discoverability of replication studies and psychological or sociological issues related to belief change. The findings also indicate that scientific self-correction may not be as rapid or straightforward as one might hope (Ioannidis, 2012), which adds further impetus to efforts to improve the quality of the academic literature (Hardwicke et al., 2020; Nelson et al., 2018).

Supplemental Material

sj-docx-1-amp-10.1177_25152459211040837 – Supplemental material for Citation Patterns Following a Strongly Contradictory Replication Result: Four Case Studies From Psychology

Supplemental material, sj-docx-1-amp-10.1177_25152459211040837 for Citation Patterns Following a Strongly Contradictory Replication Result: Four Case Studies From Psychology by Tom E. Hardwicke, Dénes Szűcs, Robert T. Thibault, Sophia Crüwell, Olmo R. van den Akker, Michèle B. Nuijten and John P. A. Ioannidis in Advances in Methods and Practices in Psychological Science

Footnotes

Transparency

Action Editor: Julia Strand

Editor: Daniel J. Simons

Author Contributions

T. E. Hardwicke, D. Szűcs, and J. P. A. Ioannidis designed the study. T. E. Hardwicke, D. Szűcs, R. T. Thibault, S. Crüwell, O. R. van den Akker, and M. B. Nuijten performed the data extraction and coding. T. E. Hardwicke and S. Crüwell performed the data analysis. T. E. Hardwicke wrote the manuscript. All of the authors provided feedback and approved the final manuscript for submission.

Notes

References
