Abstract

Entrepreneurial education (EE) is plagued by multiple vexing issues, such as unfocused impact studies, few pedagogical practices that work, infrequent scaling of good practice, and passive, single-case-reliant communities. These issues have a stagnating effect on EE. What if they all have a single root cause? What if our efforts to see what works in EE have been hampered by limitations in established scientific methods? Designed action sampling is a new scientific method that combines action research, design science, experience sampling and critical realism. Teachers co-design action-oriented, step-wise experiments that are then carried out by other teachers, who afterwards reflect in writing upon the effects they see. Originally conceived in EE, the method has been used mostly outside EE, by around 4,000 teachers and 36,000 students, to form large communities around school development, vocational education and teaching in segregated areas. We investigate which problems in EE can be solved through this innovative design-based research method. Teacher communities in EE could adopt the new method to build more active communities that co-design, share, replicate and evaluate classroom-level practices. This could reverse the stagnating effect of the vexing issues in EE. Achieving this through research method innovation has not previously been proposed.

“It is time for us to break out of our closed loop. It is time for us to matter.” Presidential address to the Academy of Management, Donald C. Hambrick (1994, p. 13)

Introduction

Entrepreneurial education (EE) is plagued by multiple vexing issues. Impact assessment is hampered by many difficulties, such as assessing the impact of something that has not even been properly defined (Draycott & Rae, 2011; Jones & Iredale, 2010). Studying “what works” in a sufficiently rigorous way is difficult when complexity and divisiveness abound (Brentnall et al., 2018; Hägg & Gabrielsson, 2019). Scaling of entrepreneurial pedagogy is largely unheard of, apart from “McDonaldized” standard approaches such as pitching, business plan writing and mini-company creation (Fletcher, 2018; Hytti, 2018). Spreading good practice is hindered by classroom practices largely remaining a well-kept secret, a black box we seldom get to look into (Nabi et al., 2017; Neck & Corbett, 2018). Strong communities of practice are rare in EE, leaving most educators isolated in marginalized teaching practices (Michels et al., 2018). For example, the ECSB 3E community meets once a year, with each research team reporting its findings in isolation, but it has so far largely failed to engage in larger-scale, co-creative pedagogical development and assessment efforts that matter (cf. Hambrick, 1994, p. 13).

What if all these vexing issues have a single root cause in common? What if our collective efforts to see what works in EE have been hampered by fundamental limitations in the established set of scientific tools and methods we have employed so far? Our main way of seeing what goes on in EE is through surveys and interviews grounded in quantitative positivism and in biased single-case descriptions (Blenker et al., 2014; Lindahl Thomassen et al., 2020; Maritz, 2017). Such mono-method approaches closely mirror how our parent field, entrepreneurship, has been studied (McDonald et al., 2015). We rarely look beyond our own course or programme to study others’ practice in any depth. And when we do, we usually gaze through the fuzzy lens of large-scale surveys that rarely expose what really happens in other teachers’ classrooms. This leaves our field stuck in Schön’s (1995, p. 28) dilemma of theoretical rigour versus practical relevance: “Shall [we] remain on the high ground where [we] can solve relatively unimportant problems according to [our] standards of rigor, or shall [we] descend to the swamp of important problems where [we] cannot be rigorous in any way [we know] how to describe?” EE suffers from this fundamental theory-practice gap (Neck et al., 2014, p. 7).

In this article, we posit that one way out of this dilemma is to engage in architectural method innovation, defined by Lê and Schmid (2022, p. 311) as “novel ways of combining new and well-established research approaches”. Such innovation efforts can help us “see in new ways” (Bansal et al., 2018, p. 1189) what works, thus potentially triggering significant theoretical developments and new ways to tackle wicked problems (Lê & Schmid, 2022; Wiles et al., 2011). The purpose of this article is therefore to outline a novel combination of research methods, here labelled designed action sampling, which has evolved over a decade of efforts to innovate research methods. It consists of teachers or educational developers co-designing action-oriented, step-wise experiments that are then carried out by other teachers with their students and reflected upon individually and collectively. This method was originally conceived in the field of EE (Lackéus, 2020a), but has over the years been applied mostly in other fields such as general education, social work, medicine and organisational learning. We therefore explore the following homecoming questions: How could designed action sampling be applied in its domain of origin, EE? What problems could it potentially alleviate or solve? Could it be used to better identify, create, discuss, evaluate and diffuse good practices?

Designed action sampling (DAS) is an amalgam and innovative development of four different research traditions, all relatively uncommon in EE. First and foremost, the focus has been on taking action together with practitioners, aiming to help them in their daily efforts to create value for their beneficiaries, such as students, colleagues or customers. This is grounded in a Clinical Action Research (CAR) tradition (Coghlan, 2009; Schein, 1993). Second, such help has been given through careful co-design and written specification of practical activities or “tasks” that are then tested in social experiments by participants such as teachers, students and colleagues. This is grounded in a Design Science Research (DSR) tradition (Dresch et al., 2015; Romme, 2003). Third, data in all of the co-designed experimental interventions has been collected by asking all participants to quantify and reflect in writing upon any effects they saw (or did not see), immediately after they carried out each step in the social experiment. This is grounded in an Experience Sampling Method (ESM) tradition (Hektner et al., 2007; Stone et al., 2003). Fourth, the overarching ambition has been to study causal mechanisms on a micro level in a context-sensitive way, aiming to uncover “what might work, for whom and why” (Brentnall et al., 2018, p. 406) rather than to arrive at macro-level universal laws for what “works” for all. This is grounded in a Critical Realism (CR) tradition (Little, 1991; Sayer, 2010).

The combined CAR-DSR-ESM-CR approach initially met with a somewhat lukewarm reception, but through a decade of experimentation and continuous improvement together with some 4,000 teachers and their 36,000 students, it has been increasingly well received, also engaging scholars across Northern Europe (e.g., Derre, 2023; Ericsson, 2022; Grigg, 2020; Lindahl Thomassen & Ramsgaard, 2022; McCabe & Phillips, 2022; Ruskovaara & Pihkala, 2016; Tjulin & Klockmo, 2023; Westerberg, 2022). Issues studied include how to improve teaching in segregated areas, how to integrate newly arrived refugees, how to improve pupils’ reading skills, how to embed programming in schools, how to capture children’s learning in preschools, how to assess apprentices, how to drive change in hospitals, and many more. We have been able to confirm claims made in the literature that novel ways to collect, analyse and present research data can result in new perspectives, deeper understandings, theoretical contributions and practical solutions to wicked problems (Lê & Schmid, 2022; Wiles et al., 2011). Many challenges have also been confirmed, such as sceptical colleagues inside academia, slow uptake of novel research methods and a conservative distrust of innovations viewed as packaged, marketed and over-claimed fads (Wiles et al., 2013).

To investigate how DAS could benefit the EE community, the article proceeds as follows. First, four vexing issues in EE are briefly outlined. This is followed by a review of four research traditions that might provide remedy, relating them to extant work in EE and to the four vexing issues. Then comes a detailed description of DAS as a concerted approach to address the four vexing issues. Empirical examples are given. The new method is then discussed in relation to Wizard of Oz as an illuminating and revelatory metaphor, followed by implications and conclusions.

Four Vexing Issues in EE: Impact, Sharing, Community and Scaling

There are many ways to problematise a field such as EE, ranging from consensus-based attempts to develop a field, through attempts to generate alternative ways to perceive it, to dissensus-based attempts to disrupt and unsettle the entire field as a whole (Fletcher & Seldon, 2016). The selection of vexing issues below is anchored in both a consensus and a dissensus approach. While the main aim here is to explore, through a hopeful appreciation of EE, how we can better identify, create, discuss and diffuse good practices, we also need to realise that EE faces some vexing issues that cannot be complacently ignored.

Impact Assessment: Does EE “Work”?

Investigating whether EE “works” has been the topic of countless impact studies and many PhD theses. Half of the 25 most cited articles in EE focus on impact assessment, making it the most dominant theme in EE research (Tiberius & Weyland, 2022). This reflects an anxiety about the question: “Can entrepreneurship be taught?” At issue here is not whether it is possible to teach facts and knowledge about entrepreneurship, e.g. financial statements or incorporation procedures, but rather whether the transversal skills and mindset needed for entrepreneurship as practice can be taught (Lautenschläger & Haase, 2011). Despite many decades of scholarly effort, only a weak consensus has been established that it does seem to work, sometimes (Nabi et al., 2017). Three main shortcomings in impact assessment are methodological weaknesses, a neglect of pedagogical variation and a short-term focus that fails to capture longer-term effects (Longva, 2019, p. 29). A further inherent assessment challenge is the transversal, interdisciplinary and horizontal nature of entrepreneurial competencies, which by definition transcend disciplinary concepts that are easier to assess (Janssen et al., 2007). As a way forward, scholars have started to question the prevailing yes/no focus on whether entrepreneurship can be taught, and instead recommend a more pluralistic and micro-oriented investigation into which classroom pedagogical approaches lead to which outcomes in which contexts (Hägg & Gabrielsson, 2019; Longva & Foss, 2018). This could even be “the only way for us to understand entrepreneurship education in an incremental and meaningful way” (Nabi et al., 2017, p. 292).

Good Practice Sharing: What Works in the EE Classroom?

One of the best kept secrets in EE is what happens in the classroom and how that works for the students (Neck & Corbett, 2018). Classroom practices are more complex, more advanced and more diverse than most impact studies in EE lead us to assume (Lans et al., 2017). Little is known about how even the most basic pedagogical tools such as Effectuation, Lean Startup or Business Model Canvas work in the classroom for the students (Günzel-Jensen & Robinson, 2017; Lans et al., 2017; Mansoori et al., 2019). To unpack this, there is a need to “acknowledge the nuances of EE offered across the world, at different education levels and with quite diverse pedagogics” (Longva & Foss, 2018, p. 369).

One potential source of good practice is the many EE projects funded by the European Union. Some of these projects specify pedagogical tools in considerable detail, such as how to let students interact with the outside world (Seeber, 2021). Many projects try to establish a digital repository of good EE practice; see for example EntreAssess, Nemesis, EntreCompEdu, Intrinsic, DOIT Toolbox, ETC Toolkit and EE-HUB. However, most of these repositories end up largely inactive after the project ends. Also lacking are details on the effectiveness of each tool in various contexts. This constant project merry-go-round yields a growing graveyard of deserted good practices of unknown use or usefulness. Sustainable sharing of good practice seems to require something that EU projects in EE have so far been unable to deliver – an active networked community that works together long-term, and that rigorously measures the effects of various improvement efforts (cf. LeMahieu et al., 2015).

Community Building: How to Co-Create EE Practice that Works?

Many EE teachers are embedded in rather traditional educational institutions, making them largely isolated in their attempts to innovate pedagogical approaches (Michels et al., 2018). In such hostile environments, being part of an active community of EE teachers can be quite helpful. There are a few active communities in EE, if we assume that meeting once a year to exchange pedagogical ideas is defined as being active rather than being a “minimal impact” phenomenon or “an incestuous, closed loop” (cf. Hambrick, 1994, p. 13). Most of these communities revolve around yearly academic conferences, primarily the 3E conference in Europe, the USASBE conference in the USA and the ISBE conference in the UK, engaging around 1500 people in total (Hägg & Kurczewska, 2022; Landström et al., 2022). Research articles and workshops constitute the main formats for disseminating good EE practice at these conferences, often in collaboration with academic journals such as JSBM, IJEBR and EE&P. There are also a few practitioner-led communities, the largest being Junior Achievement (JA) that organises around 500,000 teachers and volunteers in more than 100 countries (Brentnall et al., 2023). An example of a national practitioner-led and member-based community is EEUK with around 1400 associates and a yearly conference IEEC (Michels et al., 2018). An international community of around 1200 people is also emerging around EntreComp, the EU framework for entrepreneurial competencies (Bacigalupo et al., 2016; van Gelderen, 2020). There are also a few small international communities around pedagogical approaches such as the full-venture creation approach (Smith et al., 2022), the Team Academy approach (Urzelai & Vettraino, 2022), and the Babson approach (Tresierra et al., 2021). Business coach communities represent a distinct type of EE, focused on giving advice to entrepreneurs, see for example SCORE Foundation and Kauffman Foundation’s FastTrac program. 
Finally, there is the Entrepreneurship Division, a large community of around 3000 entrepreneurship scholars who meet at the Academy of Management Annual Meeting. However, its focus is not on EE but on entrepreneurship as a scholarly field.

Communities of practice in EE are a much under-researched topic (Landström et al., 2022). Most attempts to establish EE communities fail due to funding issues (Michels et al., 2018). Most communities are active only for short periods, such as during a yearly conference or while a project is funded. Most digital repositories of good EE practice are passive, under-utilised or outright abandoned. How much interorganisational collaboration around micro-level pedagogical practice occurs is largely unknown, but it can be assumed to be marginal. A fundamental challenge is how to feasibly organise the co-creation, co-improvement and co-evaluation of EE pedagogy for teachers who are isolated and resource-constrained in their hostile home institutions.

Organisational Scaling: How to Spread Good EE Practice?

Scholars agree that EE has grown tremendously as a phenomenon in recent decades, but less is known about the diffusion of different pedagogical practices. A few popular practices have indeed spread globally, such as business plan writing, idea pitching, business competitions, mini-company creation, lean startup, design thinking and effectuation (Günzel-Jensen & Robinson, 2017; Hytti, 2018). But since the EE classroom remains a well-kept secret or a black box that we cannot see inside (Baggen et al., 2021; Maritz & Brown, 2013), very few micro-level approaches to such practices have spread across schools, campuses, institutions or borders. For each single-case description diffused through books, conferences and journals, the pedagogical arrangement described is in many cases used only by the teacher team who developed it. Such an arrangement could be, for example, a lecture plan, a workshop exercise, an assessment technique, a pedagogical perspective or an entire course plan. And even if a micro-level approach is adopted elsewhere, we have no simple method in EE for spreading it or for measuring its impact in a context different from the one where it was developed. Such replication is seldom even discussed. A remarkable exception to the lack of scaling is Junior Achievement, which has been immensely successful in scaling its mini-company approach. JA does, however, have other problems that call into question whether this represents good practice, such as ideological clouding of its capitalist heritage, aggressive populist evidencing through complacent evaluations and a habit of taking credit for the success of winners in JA competitions (Brentnall et al., 2023).

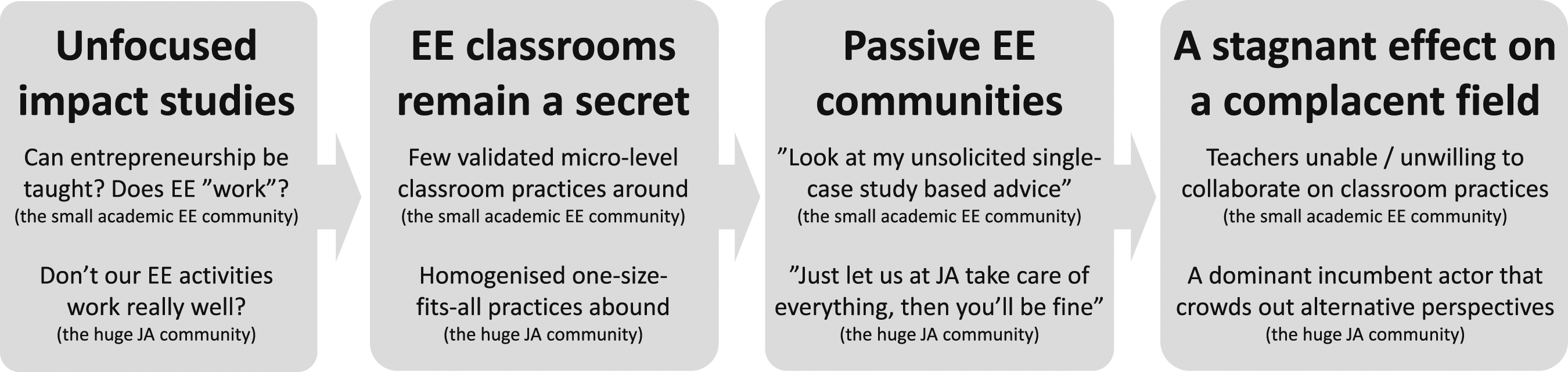

With little insight into what “works” in EE, little insight into which classroom approaches are effective, passive and single-case-reliant communities of practice that seldom measure replication effects on a micro level, and a lack of diffusion and evaluation mechanisms apart from Junior Achievement, we cannot expect EE to become a cumulative field of increasing insight anytime soon. Instead, we must realise that, apart from occasional pockets of excellence (Elmore, 1996), EE can be described as being in a state of complacency and escalating stagnation (Hytti, 2018; Katz, 2003; Zhang, 2017). Zhang (2017, p. 5) writes that EE “has remained stagnant partially due to insufficient fine-grained qualitative research on the impact of entrepreneurship education”. Katz (2003, p. 296) writes that a “big problem [for EE] is avoiding stagnation [through] getting ‘complacent with success’”. Hytti (2018, pp. 230–231) describes EE as being in a homogenised state of “McDonaldization”, where “one-size-fits-all” activities are run in a similar way across the globe and are adopted from “Junior Achievement models”. Given that the JA community is more than 100 times larger than the major academic EE communities in the world, Hytti’s remark seems sad but true. The drama of EE is summarized in Figure 1.

Figure 1. The drama of escalating stagnation in EE.

Vexing issues in EE have been extensively discussed before. Various ways forward have been articulated, such as drawing more on educational research and philosophy (Fayolle, 2013; Hannon, 2006), increasing the rigour of impact studies through pre- and post-measurements and control groups (Martin et al., 2013), and paying more attention to pedagogical details (Nabi et al., 2017) and to contextual factors (Lindahl Thomassen et al., 2020). This article takes a rather different approach, exploring whether uncommon research methods can counter stagnation and complacency in EE.

To the Rescue: Four Research Methods Uncommon in EE

We will now outline four research traditions that are rarely used in EE but that may counter stagnation. For each research tradition, we articulate some initial ideas on how it could be put to use in EE. There could certainly be other rarely used research traditions of value to EE, but these four emerged from a decade of innovating research methods together with around 40,000 teachers and students within and outside the field of EE.

Clinical Action Research in General, in EE and in the Future

Action research is a broad family of research approaches where researchers enter a setting with the dual purpose of helping practitioners with their real-world problems while at the same time collecting data that may contribute to furthering social science (Coghlan & Shani, 2014). Action research rests on the basic assumption that only by trying to change a social system can one fully understand it (Lewin, 1947). Hidden mechanisms become visible that would not have revealed themselves to a mere observer at a distance. Action research is often undertaken in action-and-reflection cycles, comprising plan-act-observe-reflect-revise, allowing theory and practice to inform each other (Altrichter et al., 2002). This, however, entails many challenges, such as how to generalise beyond the immediate setting, how to publish generated insights in scientific outlets, and how to avoid vague and fuzzy working procedures (Wedekind, 1997). Therefore, an action research tradition may well benefit from being combined with other research traditions.

One such complementary tradition is the adoption of a clinical posture. Medical doctors are not alone in doing clinical work. Social workers, lawyers, consultants, coaches, managers and certainly teachers also engage in clinical work, if by clinical we mean a situation where someone is asked to help another human being – a client – in a relational way (Schein, 1993). What differentiates clinical action research from regular action research is that “the researcher comes into the situation in response to the needs of the client, not his or her own needs to gather data” (Schein, 1993, p. 703). This significantly changes the psychological contract for teachers when they are asked to participate. A regular research-oriented purpose can in fact deter teachers from wanting to take part at all, leading them to dismiss outsiders’ attempts to help them develop their teaching as unsolicited advice (Farber, 1999, p. 226): “What practice is subject to more unsolicited advice and criticism than teaching? The ceaseless promotion of ‘new ideas’ to improve what teachers do is a fact of life in the field.”

Despite its potential to bring together and empower a community of EE teachers (Winkler et al., 2018), action research is quite rare in EE. Only a few examples have been identified. Winkler et al. (2018) studied an extracurricular co-working space for student entrepreneurs at a US college. Action research has also recently been used to develop an EE model in Australia (Maritz, 2017). In general education, especially on pre-university levels, action research is quite common among teachers (Bryk et al., 2015; Kemmis et al., 2014; Schildkamp et al., 2016; Timperley, 2015).

In the future, CAR could inspire EE teachers to build more active and relational communities. EE teachers could try helping teachers at other institutions in their classrooms, but also dare to open up their own classrooms to clinical advice from outsiders on what might work better for their own students. Learning from each other in clinical and action-based ways would be a welcome addition to the current emphasis in EE on presenting one’s own (unsolicited) pedagogical ideas and practices in articles and workshops. Teachers could also involve their students in such studies. CAR also represents a rather different way to cross institutional and national borders in EE research than the common teacher or student survey approach. Further, assessment and scaling of EE could leverage CAR as a way to study and spread micro-level actions that might work in the classroom.

Design Science Research in General, in EE and in the Future

DSR is a new and fast-growing research tradition with roots in work by Nobel laureate Herbert Simon (Dresch et al., 2015). In his famous book The Sciences of the Artificial, Simon (1969) argues that a different kind of science – a design science – is needed in professions where the emphasis is on the creation of artificial objects and situations, that is, artifacts created by humans rather than naturefacts existing in nature (Hilpinen, 2011). When teachers, engineers, architects and other professionals create things and situations that did not previously exist, they are in fact conducting design work. Simon contrasts the design of what ought to be with the natural sciences’ description and analysis of what is. DSR thus represents a more prescriptive and problem-solving research approach of “changing existing situations into desired ones” (Romme, 2003, p. 562). Compared to action research, DSR adds a focus on novel artifacts that researchers co-create together with practitioners in attempts to solve their practical problems (Holmström et al., 2009). Such artifacts can help bridge the problematic theory-practice gap so prevalent in EE and elsewhere (Neck et al., 2014; Van de Ven & Johnson, 2006).

In DSR, it is mainly the so-called design principles that help bridge between theory and practice. They do this by offering a tentative written-down prescriptive template for how to solve a practical problem of a certain type (Denyer et al., 2008). A design principle is often articulated according to a CIMO-logic, describing Context, Intervention, Mechanism and Outcome. The aim is to specify “what to do, in which situations, to produce what effect and offer some understanding of why this happens” (Denyer et al., 2008, p. 396). CIMOs can be developed from literature, from practice, or as a combination. They always need to be validated through field tests in practice. Since design work is often done in a complex organisational setting, it usually results in multiple closely related design principles (Romme & Endenburg, 2006). A collection of carefully developed and tested design principles represents an immaterial artifact that practitioners can use and share more broadly (Dimov et al., 2022). We end up with a reification – something abstract having been made more concrete, in this case, a piece of codified, transferable and pragmatically validated actionable knowledge about “what might work, for whom and why” (cf. Brentnall et al., 2018, p. 406).

DSR has been positioned as a powerful method to open the elusive black box of EE (Baggen et al., 2021, p. 350; Derre, 2023, p. 396). This could be important, since more and more EE scholars call for studies that isolate the efficiency of specific pedagogical interventions (e.g. Fayolle, 2013, p. 696; Kozlinska, 2016, p. 38). DSR in EE is, however, in a nascent phase. In our search for articles that develop design principles for EE, we could find only two research articles (Baggen et al., 2021; de Castro Krakauer et al., 2017) and one doctoral thesis (Derre, 2023).

In the future, DSR could help EE teachers and scholars to put their own and others’ classroom interventions into words. This represents a much-needed addition to the current emphasis on the O in CIMO – the outcome in terms of entrepreneurial competencies and other desired learning outcomes. DSR contains procedures and tools that can help EE teachers articulate and describe in writing the interventions they design in their classrooms, the contexts these are embedded in and the mechanisms that explain how and why outcomes of interest are produced (Dresch et al., 2015, pp. 71–93). This facilitates replication of pedagogical practices in other teachers’ classrooms and increases clarity about what is to be assessed in various impact studies.

Experience Sampling Methodology in General, in EE and in the Future

ESM is a method to collect an immediate and momentary detailed portrait – a sample – of people’s thoughts and feelings as they take action in their natural environment. Participants are asked to provide written self-reports by completing a very short questionnaire multiple times, typically over a week or two (Larson & Csikszentmihalyi, 1983). An ESM questionnaire can consist of questions such as “What were you doing right now?”, “How do you feel about it?” and “Why do you feel like this?”. Some questions are scale-based and others are open text-based, resulting in a mixed dataset consisting of both text and numbers. Questionnaires are completed either at random times with the help of mobile technology, at certain time intervals such as daily, or immediately after certain events, such as after an interpersonal conflict (Reis et al., 2014). While potentially burdensome for participants, ESM offers much higher data validity and situational precision than after-the-fact interviews or surveys, which often suffer from recall bias (Stone et al., 2003).

The only use of ESM in EE we could find in the literature was the method innovation work drawn upon here (Lackéus, 2014; 2020b). No other scholars seem to have used ESM to study EE. Commentators have described the ESM approach to EE as novel, ambitious and promising (Gartner & Teague, 2020; Neergaard et al., 2020). In fields adjacent to EE, such as entrepreneurship and education, a few more studies have been done (Uy et al., 2010; Zirkel et al., 2015).

In the future, ESM could help EE teachers and scholars to better see the M in CIMO – the mechanisms that are responsible for producing various outcomes in EE, both desired and unintended ones (cf. Bandera et al., 2021). This could empower students as well as teachers to reflect more deeply upon the momentary emotional highs and lows they experience in EE, which in turn could build up a collective pool of highly detailed datasets for various EE interventions. Even sceptical teachers could be invited to momentarily quantify and reflect upon pedagogical ideas they apply but are not so fond of. Maybe we could then see more clearly how various types of EE affect not only winners but also losers, dropouts, and poorly resourced, discriminated, conscripted and non-starter students (Brentnall, 2021, 2023). It could give us a more fine-grained picture and a deeper understanding of various known mechanisms in EE. It could also uncover hidden mechanisms not previously discussed in EE.

Critical Realism in General, in EE and in the Future

CR is a philosophical position that bridges two extremes – rigid objectivism (a search for universal truths) and fuzzy subjectivism (each individual has to find her own truth). Such bridging is not achieved by taking a diluted middle-ground position, but by taking a multi-paradigm stance in which two or more incompatible positions are maintained at the same time (Patomäki & Wight, 2000). According to CR, there is indeed a reality out there, independent of the observer. Still, this reality is not easily measurable or knowable, since it is partly socially constructed and also affected by humans with their varying desires and emotions (Easton, 2010). What social scientists then need to do is to try to identify weak regularities on a micro level (Danermark et al., 2002). Such regularities are context-dependent and need to be studied case by case (Bhaskar & Danermark, 2006). In CR, these regularities are termed causal mechanisms. Ylikoski (2019, p. 16) writes that “a mechanism-based explanation tells us how the cause produces its effects by describing the process by which this happens”.

Causal mechanisms are unpredictable in that they are “sometimes active, sometimes dormant”, and there are often multiple counteracting mechanisms at play (Danermark et al., 2002, p. 199). Social scientists therefore need to be critical of macro-level descriptions of what things are like, and instead drill deep down inside the black box of society’s internal machinery, digging out the real causal mechanisms that explain why things are the way they are in each particular case (Elster, 1989). This is why it is labelled critical realism – reality is indeed out there, but it needs to be painstakingly dug out and explained through a broad variety of available means. One approach of particular relevance here is to stage social experiments where “the researcher consciously provokes a situation in order to study how people handle it” (Danermark et al., 2002, p. 103). Teachers are well positioned to perform such experiments (Pring, 2010, p. 122). CR also recommends a reliance on mixed methods (Danermark et al., 2002, pp. 150–176).

Application of CR in EE scholarship is rare but promising. Some fundamental taken-for-granted practices have been questioned, such as students writing business plans (Jones, 2010), participating in business competitions (Brentnall, 2021, 2023) and doing creativity exercises in the classroom (Lackéus, 2020b). In all these cases, CR has been used to see beyond the macro-level assumptions in EE and instead uncover hidden mechanisms that produce a variety of outcomes, desirable or not.

In the future, CR could help bridge the two camps in EE: quantitative questionnaire scholars on one side and qualitative single-case study scholars on the other. This could give us more mixed methods studies in EE. CR could also trigger methodological innovation that brings us new and more powerful ways to study and explain the varying contexts that EE activities are embedded in, such as differing disciplines, regions and cultures (Lindahl Thomassen et al., 2020). This could move the EE community from the current impasse of studying whether EE works and what “works” for all, to instead studying when, how and why EE works in various contexts.

Sixteen Small Steps Forward for EE Teachers

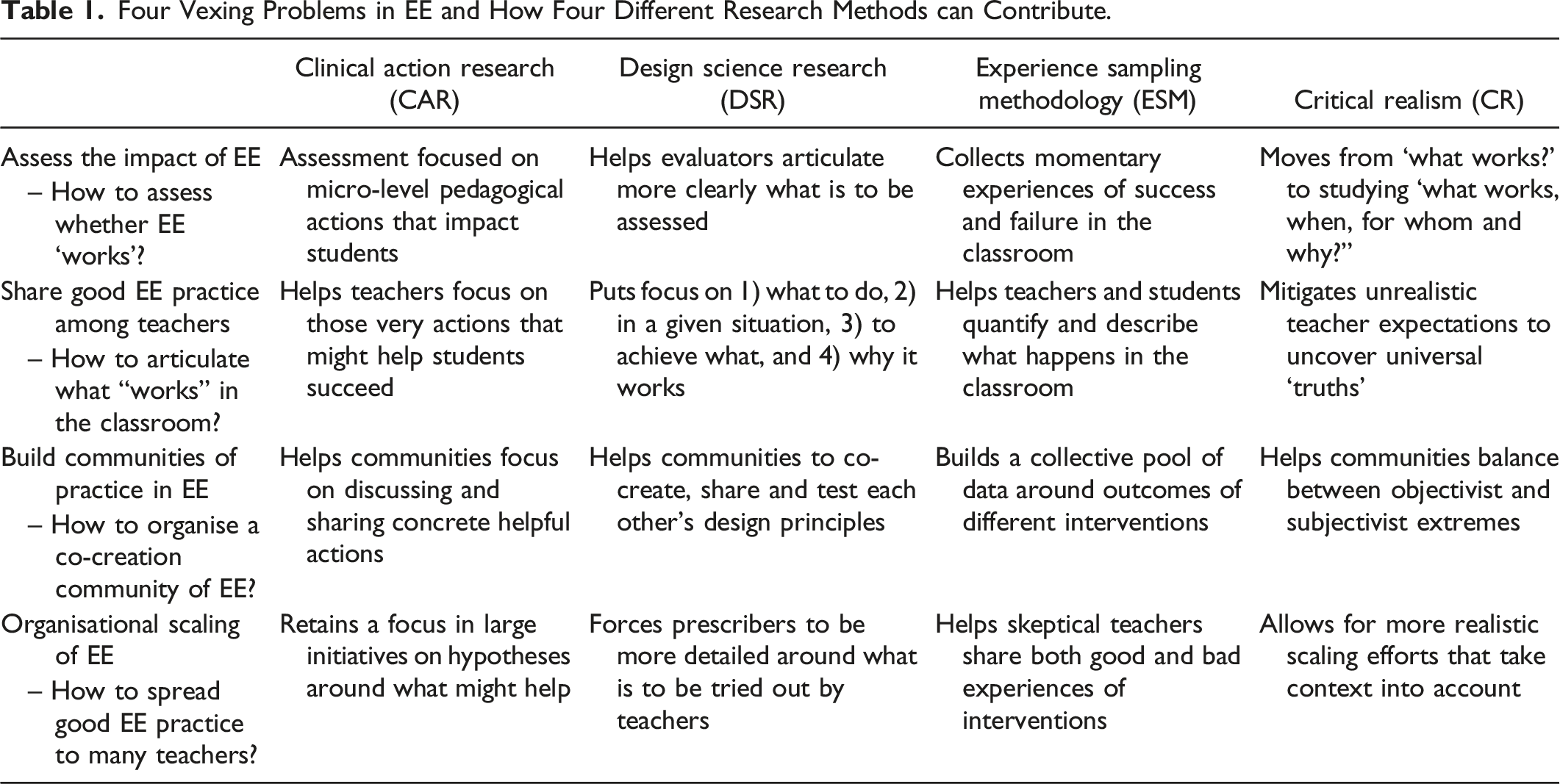

Four Vexing Problems in EE and How Four Different Research Methods Can Contribute.

A More Concerted Rescue Attempt: Designed Action Sampling

DAS took a decade to reach maturity (see Lackéus, 2020a). Our endeavour to develop this new research methodology started in 2012, when university students were asked to reflect longitudinally upon their experiences in an entrepreneurship programme in an event-triggered ESM manner (Lackéus, 2014). The events they were asked to quantify and reflect upon were emotionally charged events connected to the programme. The data collected and the resulting insights were interesting enough to attract attention from the general school sector, where people asked for more studies to be conducted with the same method, now focused on younger students aged 7–19. These studies in turn drew the authors into a wide variety of studies, primarily outside EE.

To facilitate the practical collection of data, a digital tool was developed, see https://www.loopme.io/. This tool plays a key role in making a rather complex and data-intensive method simple and manageable in practice for the often quite large number of participants. However, we will not focus on the digital tool here, since it has been described and analysed extensively elsewhere (Lackéus, 2020a). We will instead focus on the generic combinatory methodology that emerged, which can be facilitated by any digital tool that fulfils certain functional requirements. For a longer version of the brief description given here, see a book written in Swedish (Lackéus, 2021). An English translation is available upon request to the author.

Designed Action Sampling in Five Steps

DAS consists of the following five steps:

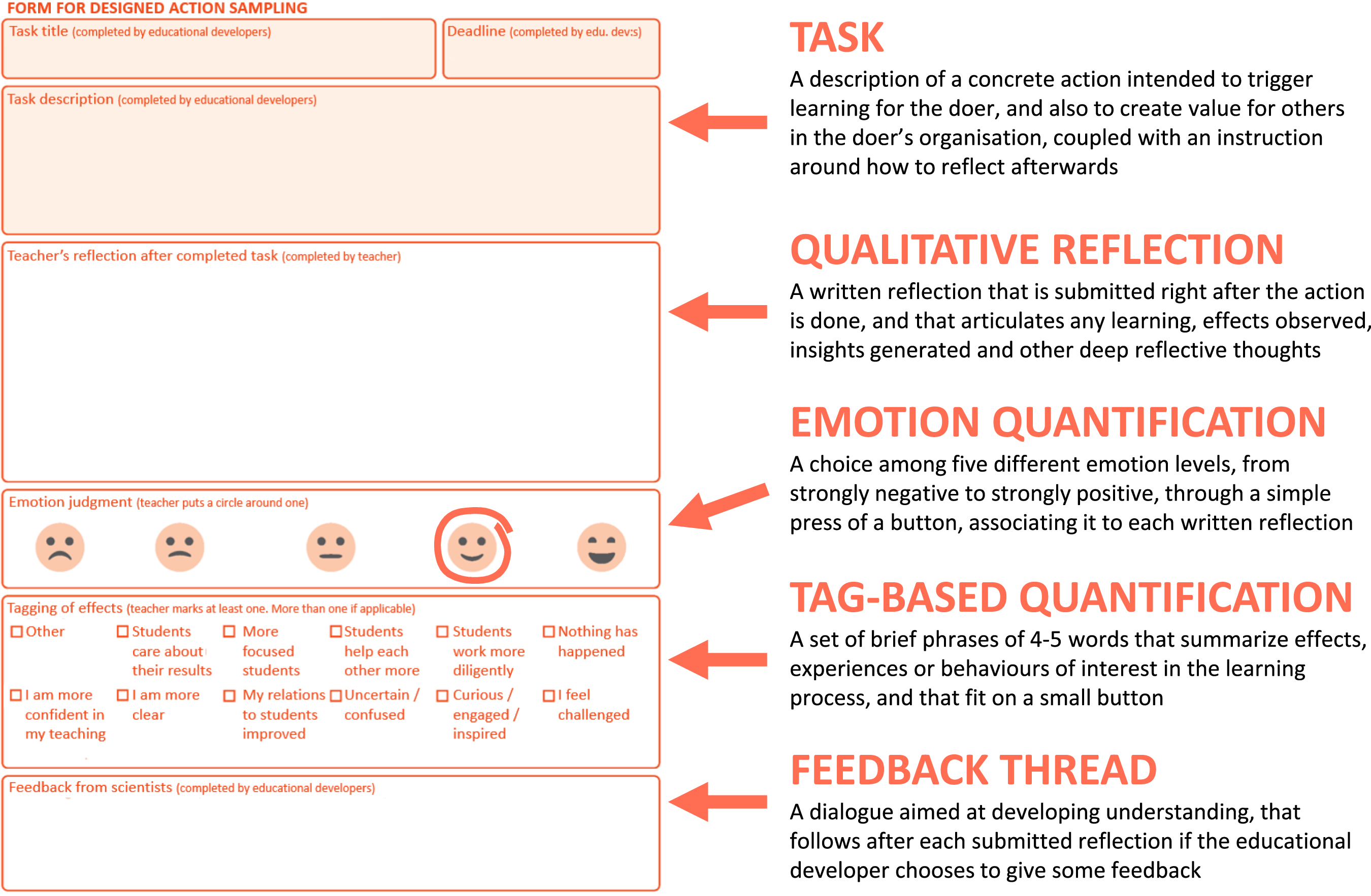

In the design step, a team of educational developers articulates the actions that a larger group of people is later invited to try out in their own context in step two. Inspiration can be taken from theory, from practice or from both. A DSR-inspired technique has been developed that supports the written articulation of a content package, a collection of usually five to ten action-reflection tasks. Each task should be designed so that it is likely to create value for others, which in the teachers’ case means their students. Each task must be phrased so that participating teachers understand what to do, how to do it and how to reflect deeply afterwards. Each task comprises an action-oriented title (i.e., one that includes a verb) and a task description of three to five sentences. This is then inserted into a digital version of the form in Figure 2, and one such form is given to each participant for each task. A collection of tags is also designed at the outset, which participants use to quickly indicate effects and experiences of interest in the study, see Figure 2. The design step is well aligned with DSR: tasks represent prescriptive design principles (CIMOs), content packages represent artifacts that trigger desired situations, and the design procedures align with the DSR literature.

Form for designed action sampling.
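To make the vocabulary of the design and action steps more concrete, the following Python sketch models a content package, its tags and the per-task forms handed out to teachers. It is a hypothetical in-memory representation written only for illustration, not the data model of LoopMe or any other DAS tool; the class and function names, the optional numeric emotion field and the sentence-counting heuristic are all assumptions. It checks only the two design guidelines above that are easy to count: five to ten tasks per package, and three to five sentences per task description.

```python
from __future__ import annotations

import re
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Task:
    """One action-reflection task (hypothetical model)."""
    title: str        # action-oriented, i.e. containing a verb
    description: str  # three to five sentences: what to do, how, how to reflect

    def sentence_count(self) -> int:
        # Crude heuristic: count runs of text that end in '.', '!' or '?'.
        return len(re.findall(r"[^.!?]+[.!?]", self.description))


@dataclass
class ContentPackage:
    """A collection of tasks plus the tags participants use to flag effects."""
    name: str
    tasks: list[Task]
    tags: list[str] = field(default_factory=list)

    def guideline_issues(self) -> list[str]:
        """List deviations from the countable design guidelines."""
        issues = []
        if not 5 <= len(self.tasks) <= 10:
            issues.append(f"{len(self.tasks)} tasks (expected 5-10)")
        for task in self.tasks:
            n = task.sentence_count()
            if not 3 <= n <= 5:
                issues.append(f"'{task.title}': {n} sentences (expected 3-5)")
        return issues


@dataclass
class Form:
    """One form per participant per task, completed after trying the task."""
    participant: str
    task: Task
    emotion_level: Optional[int] = None  # assumed numeric scale, hypothetical
    tags: list[str] = field(default_factory=list)
    reflection: str = ""

    @property
    def submitted(self) -> bool:
        # Treat a form as submitted once it carries a non-empty reflection.
        return bool(self.reflection.strip())


def distribute(package: ContentPackage, teachers: list[str]) -> list[Form]:
    """Give every teacher one blank form per task in the package."""
    return [Form(participant=t, task=task) for t in teachers for task in package.tasks]


if __name__ == "__main__":
    demo = ContentPackage(
        "Demo package",
        [Task(f"Try action {i}", "Do X. Observe Y. Reflect on Z.") for i in range(5)],
        tags=["Self-confidence", "Perseverance"],
    )
    forms = distribute(demo, [f"teacher{i}" for i in range(10)])
    print(demo.guideline_issues())  # []
    print(len(forms))               # 50
```

With ten teachers and a five-task package, this yields 50 forms, the lower end of the typical range of 50–500 forms per study. Keeping one form per participant per task mirrors the text's description; a real tool would also need timestamps, reminders and the feedback chat.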

In the action step, around 10–50 teachers receive five to ten forms each, with one action-reflection task on each form. A total of 50–500 forms are thus typically administered to a group of participants. Participants are asked to complete and submit a form as soon as they have tried to do what it specifies. Some forms will have a deadline, others will not, depending on study design. It is not always easy to say exactly when a certain task can be done, since this depends on each teacher’s context. The tasks should be seen as hypotheses used to conduct a CR-inspired social experiment, and the observed effects on students should be documented in real time in an ESM manner. The action step is well aligned with CAR: the forms are there to help teachers with their teaching, they facilitate opening up classrooms for clinical advice from outsiders, and the resulting data helps a boundary-crossing community reach deeper understanding.

In the sampling step, quantifications and reflections are received digitally from participants through completed ESM forms. This step takes anything from a few days to months, depending on study design. The data received is causal in nature: the cause is the action tried out, and the effect is documented in a mixed methods way through quantifications and reflections. Some teachers will not complete all or even any of the forms, even if they have agreed to participate. Reminders through emails or push notifications increase the completion rate significantly, often to around 50–70%. The sampling step is well aligned with ESM: the form is completed soon after key events in the participant’s natural environment, it focuses on participants’ thoughts and feelings, and it results in a mixed dataset.

In the discussion step, each submitted form is discussed individually with the participant through a chat. As soon as the educational developers receive a form, they must provide brief feedback of a couple of sentences in the chat associated with that form. Such instant feedback requires a digital tool adapted for DAS. The exchange typically results in a short comment thread between the participant who submitted the form and the educational developers. Such feedback is more important than one might assume: completion rate and depth of reflection increase sharply as soon as participants realise that someone is paying attention to their experiences. The discussion step is well aligned with CR: it emphasises drilling deep inside the black box of classrooms’ internal machinery, it helps identify weak regularities through outcome-based discussions, and it allows for context-sensitive interpretations of events on a case-by-case basis.

In the analysis step, the educational developers first prepare a summary consisting of descriptive statistics, a thematic analysis of all reflections and comments, and a few key insights. For each theme identified, five to ten illustrative quotes from participant reflections are shown in anonymised form, underpinning a key insight. Such a summary typically consists of around ten slides or a five-page document. Participants are invited to a meeting where they read the summary and then discuss and reflect upon the outcome of the study, first individually, then in teams and lastly as a collective. The analysis meeting finishes with time set aside for another individual written reflection. All data, including the data collected at the analysis meeting, are used to produce a final result that can be communicated in a suitable form to all: a report, a slide deck, a video presentation or some other format.

In the above five-step description, the teachers are the ones who carry out the action-reflection tasks and then reflect upon effects they see on their students. However, we have also seen that DAS can be used together with students, inviting them to do action-oriented tasks and then reflect upon how it worked for them and what they learned.

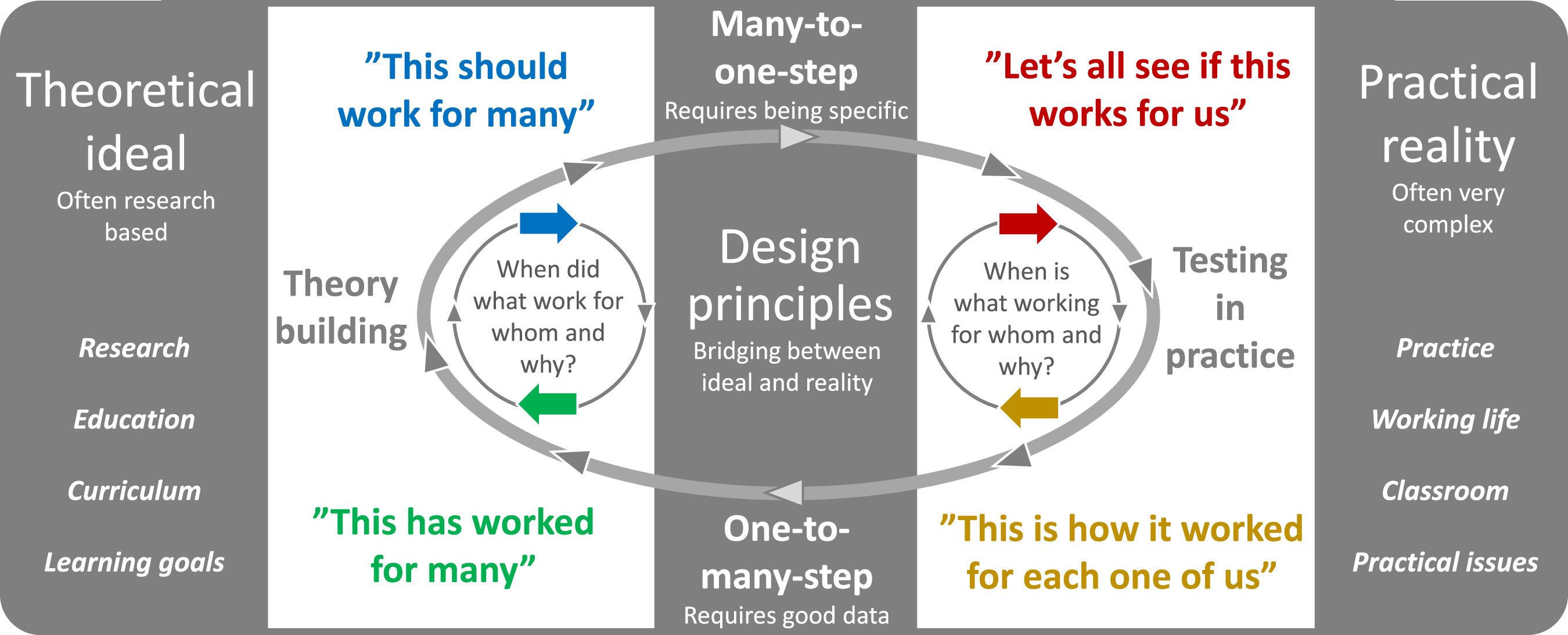

A Pragmatism-Based Circular Workflow that Never Ends

The fifth step, analysis, is not the end of a linear workflow. Instead, its result is fed into another cycle that starts again from step one, with a re-design of the entire content package in light of what has been learned. DAS can thus be described as pragmatism-based abduction: a constant move back and forth between theoretical ideals and a complex reality, see Figure 3. The collective understanding deepens with each iteration. If a single content package is tested in multiple contexts, in the manner of a replication, analysing the resulting large dataset will generate a deeper understanding of how mechanisms and outcomes vary with context. In practice, modified versions of a content package are often created to better suit a context different from the one in which it was conceived. The purpose is thus not to arrive at a single final version of any given content package. We need to let go of unrealistic ambitions to arrive at simplistic “rules for educational action” (Biesta & Burbules, 2003, p. 110). Each group of practitioners must instead revise a content package to suit their unique context. To facilitate such re-design, a library of content packages is often needed where practitioners can browse, access, modify and add their own content packages, see library.loopme.io. In DSR terms, such a library constitutes a collection of design principles that can help bridge theory and practice, in both directions. Most people find it difficult to craft design principles from scratch, but modifying an existing content package, a template to start from, is much easier. This does not mean that such a library is a one-stop repository of best practice. There is no such thing as best practice in DSR-based educational development, only more or less intelligent ideas about what can be tried out in people’s own complex practice through pragmatic experiments (Biesta & Burbules, 2003; Romme, 2003).
Designed action sampling is characterised by abduction - a repeated move between theoretical ideals and a complex reality.

Use of the New Research Method in Practice

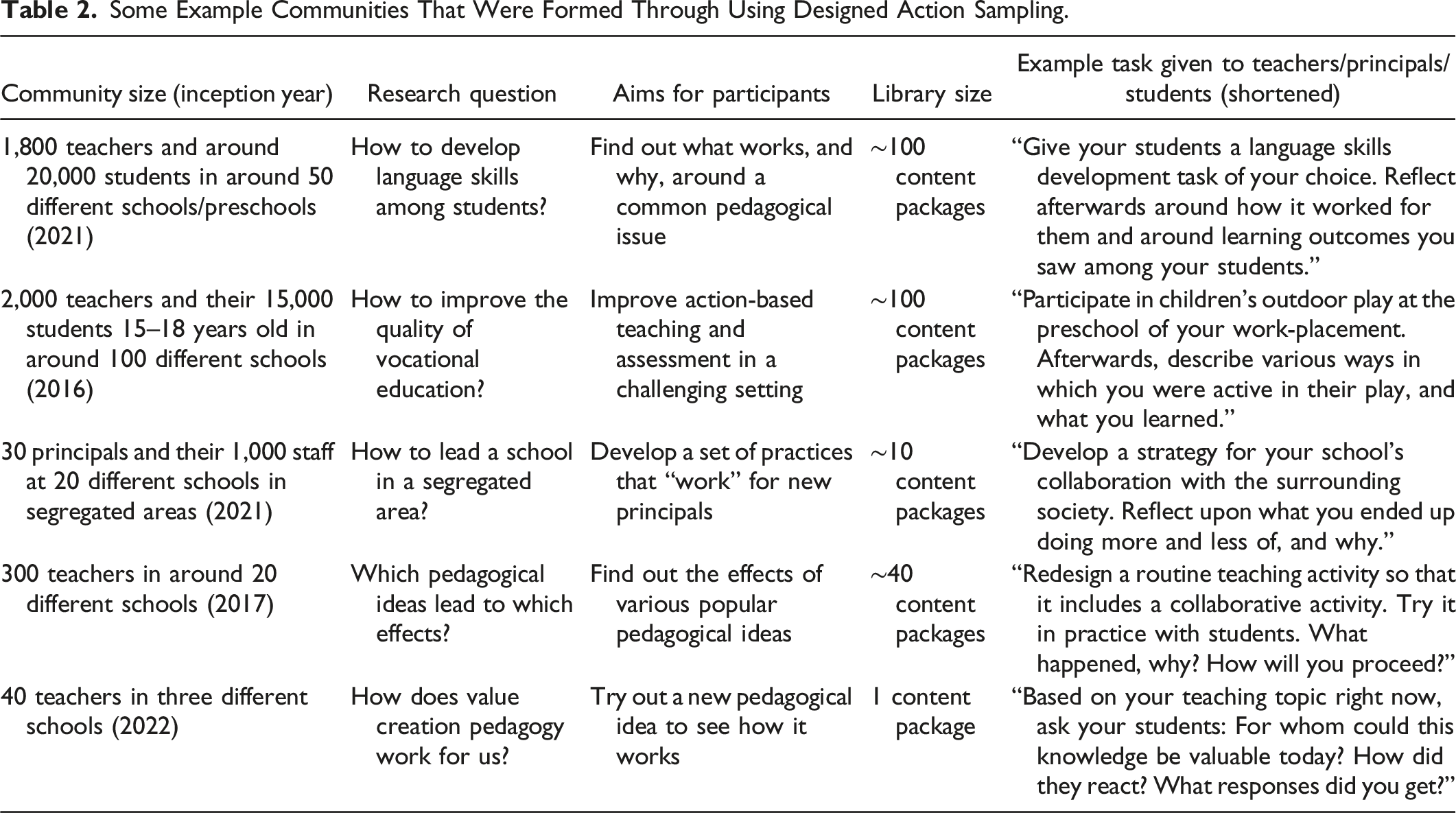

Some Example Communities That Were Formed Through Using Designed Action Sampling.

The largest community started in 2016 in vocational education, with support from Uddevalla municipality and the Swedish National Agency for Education. It currently consists of around 2,000 vocational teachers and is growing each year. These teachers have taken DAS to their hearts mainly because it allows them to see what their students learn in a way that was not possible before. They appreciate the practice of creating, revising, evaluating and sharing tasks and tags as content packages. DAS has contributed to increased quality in vocational education, primarily through strengthened collaboration between teachers, students and workplace supervisors. DAS has also resulted in stronger bridging between education and working life, more efficient and fair assessment of students, and a common language around educational development (Lackéus & Sävetun, 2021). Similar effects may be seen in EE: when EE teachers get to co-design action-based learning in detail and then also see in detail what their students learn, they too might appreciate it. DAS is thus not merely a new research method; it also represents a new instructional design and assessment method in experiential education (Lackéus & Williams Middleton, 2018).

Since around 99% of DAS users are currently outside the field of EE, only a few examples from EE practice can be provided here. EE teachers at the University of Huddersfield in England have used DAS in their Enterprise Placement Year programme to deepen students’ reflective skills, to make their teaching more interactive, and to use the resulting data to improve their programme. EE teachers at University College Dublin in Ireland have used DAS to track what students feel they learn in their Creativity & Innovation in Education programme. EE teachers at HOGENT University of Applied Sciences in Belgium have used DAS to establish a data-driven design science approach to developing more effective EE.

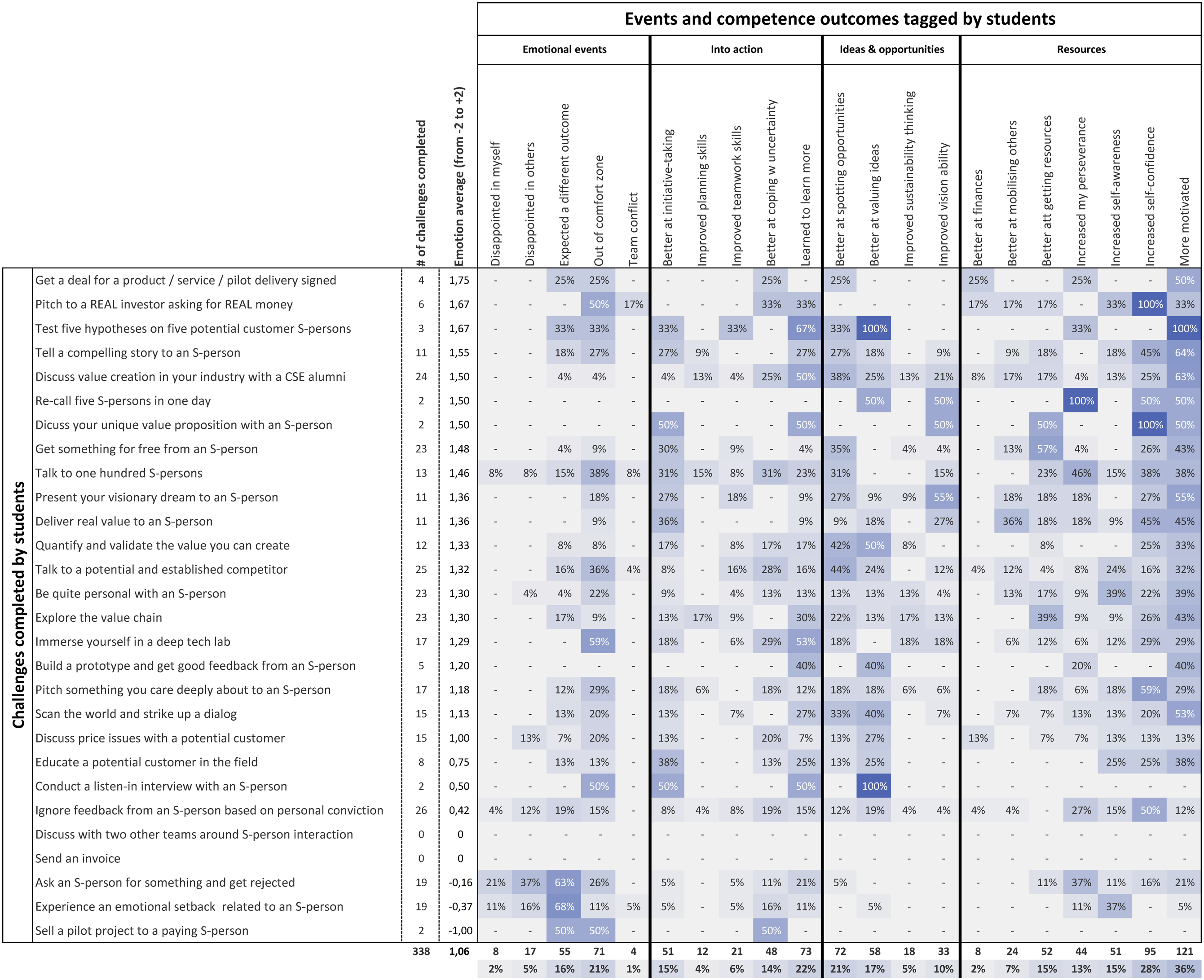

The authors of this article have used DAS at Chalmers University of Technology to develop action-based EE in their full-venture creation programme (cf. Smith et al., 2022). Around 40 students spend one academic year running either a real-world deep-tech start-up venture based on university research or a real-world corporate venturing project inside an established organisation. Each year, these students are given 28 interaction challenges that help them become skilled initiators of explorative conversations with experts, potential partners and potential customers, so-called S-persons (significant stakeholders relevant to their venture). Each student picks ten S-person challenges of their choice. After completing a challenge, they reflect upon what they learned and tag their experience according to EntreComp competencies and emotional events. From September 2022 to May 2023, 338 reflections comprising 80,000 words and 1,313 tags were produced, see Figure 4. This mixed dataset was thematically analysed and afterwards presented to the students in aggregate form, inviting them to become co-researchers with their teacher around the question “What did we learn from each S-person interaction challenge?” In a three-hour analysis meeting, students were provided with selected anonymised quotes for each challenge as well as a printed version of Figure 4. A key insight that emerged was that the more difficult challenges developed a number of hard-to-teach entrepreneurial competencies, such as self-confidence and an increased ability to persevere, to cope with uncertainty and to mobilise others. The quantitative data in Figure 4 supported this insight, and qualitative written reflections submitted towards the end of the analysis meeting uncovered reasons why. Students linked difficulty to time, to uncertainty and to perseverance: “It’s only when you daily push yourself against your norm that you have developed the less tangible skills of perseverance, and self confidence. (…) They do not come with a simple task and it is hard to pinpoint a specific moment that helped develop this more vaguely defined skills and attitudes.” “the difficult challenges were easier to accomplish later in the project since we gained more confidence in ourselves” “Because the task is harder I would need more perseverance to reach my goal and that will also bring more uncertainty”

A task-tag matrix showing how many tasks were completed, the average emotion level indicated by participants, and which tags participants picked for which tasks.
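The task-tag matrix in Figure 4 is, in essence, a cross-tabulation of submitted reflections. The Python sketch below shows one way such a matrix could be computed, assuming each submitted reflection has been reduced to a triple of task title, an optional numeric emotion level and a list of tags. The record format, the emotion scale, the function names and the example tag names (loosely modelled on the competencies mentioned above) are all hypothetical; this is not the export format or analysis routine of any existing DAS tool.

```python
from collections import Counter, defaultdict
from statistics import mean
from typing import Iterable, List, Optional, Tuple

# Hypothetical record for one submitted reflection: (task, emotion level or None, tags).
Submission = Tuple[str, Optional[int], List[str]]


def task_tag_matrix(submissions: Iterable[Submission]):
    """Cross-tabulate submissions into (tag columns, rows).

    Each row is (task, times completed, average emotion level or None,
    count per tag), with tag columns in alphabetical order.
    """
    completed = Counter()
    emotions = defaultdict(list)
    tag_counts = defaultdict(Counter)
    for task, emotion, tags in submissions:
        completed[task] += 1
        if emotion is not None:
            emotions[task].append(emotion)
        tag_counts[task].update(tags)

    all_tags = sorted({tag for counts in tag_counts.values() for tag in counts})
    rows = []
    for task in sorted(completed):
        avg = round(mean(emotions[task]), 2) if emotions[task] else None
        rows.append((task, completed[task], avg, [tag_counts[task][t] for t in all_tags]))
    return all_tags, rows


def print_matrix(tags, rows):
    """Print the matrix as a fixed-width text table."""
    print(f"{'task':<18}{'n':>3}{'emo':>6}" + "".join(f"{t[:12]:>14}" for t in tags))
    for task, n, avg, counts in rows:
        emo = "-" if avg is None else f"{avg:.1f}"
        print(f"{task[:18]:<18}{n:>3}{emo:>6}" + "".join(f"{c:>14}" for c in counts))


if __name__ == "__main__":
    demo = [
        ("Call an expert", 4, ["Perseverance", "Self-confidence"]),
        ("Call an expert", 5, ["Perseverance"]),
        ("Email a customer", 2, ["Mobilising others"]),
    ]
    print_matrix(*task_tag_matrix(demo))
```

Counting per task rather than per student mirrors the aggregate form in which the data was presented back to the students; the counts only become meaningful alongside the thematic analysis of the written reflections.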

This example illustrates how DAS can make fuzzy learning processes in EE more visible and help identify weak regularities on a micro level. Teachers can then return to the original Humboldtian ideal of students and teachers as co-creators of knowledge (Shumar & Robinson, 2018). Applied to a community of EE teachers, it could also help them develop empirically grounded pedagogical models, e.g., on how to teach students an entrepreneurial mindset. The full task and tag design is available at library.loopme.io.

DAS has not yet been used in EE to let a group of teachers or coaches reflect upon the effects they observe. One potential application of such a set-up would be for an existing EE community to use DAS to collect data on some of its pedagogical recommendations. Advice given in popular prescriptive books could be evaluated in different contexts through DAS (e.g., Aulet, 2013; Blank & Dorf, 2012; Ries, 2011; Wickman, 2012). A particularly interesting example would be to evaluate the Babson approach (Neck et al., 2014). Babson’s train-the-trainer programme is one of the largest in EE, yet so far seems to lack a scientific evaluation of its impact on different teachers and students (Neck et al., 2021). Another potential application could be to let business coach communities use DAS to reflect in a structured way on the observed effectiveness of different pieces of advice given to different types of entrepreneurs.

Discussion

Returning to the four vexing issues in EE, we note that DAS has helped people in a number of different communities to see, in new ways, the impact of various pedagogical approaches at the micro level of classroom practice. The design of CIMOs in the form of content packages has emerged as a useful way to articulate, share, replicate, evaluate and co-develop pedagogical practices across organisational and national borders. This has in turn allowed new and quite large communities of practice to emerge and grow, empowered by the new research methodology. Good practices have spread broadly across an entire country, from teacher to teacher. Large amounts of causal data have been collected and analysed by the practitioners themselves, allowing them to evaluate their impact. Given these observed effects, and assuming that they also transfer to EE, we find it plausible that all four vexing issues in EE could be addressed to a certain extent through DAS. We will focus here on what we believe is the most interesting effect: building active collaborative communities that share good practices and rigorously evaluate their impact.

To help readers grasp and make sense of DAS as an unfamiliar and novel concept, we will draw on the children’s novel The Wonderful Wizard of Oz (Baum, 1900) and its 1939 film adaptation as a metaphorical tool. The aim of this is to “elucidate properties in an illustrative and illuminating way”, and to “describe something unfamiliar by referring to … something familiar” (Danermark et al., 2002, pp. 122–123). For readers who are also unfamiliar with the story, a brief summary, following the film version, is offered here. A young girl, Dorothy Gale, and her dog Toto are caught up in a tornado that sweeps them away from their farm in Kansas to the magical land of Oz. To get back home, they must follow the Yellow Brick Road to Emerald City, where the powerful Wizard of Oz can help them. On their way, they team up with Scarecrow, who wants a brain, Tin Man, who wants a heart, and Lion, who wants courage. The Wizard of Oz offers to help them all, but demands the broomstick of the dangerous Wicked Witch of the West in return. They eventually succeed in obtaining it, but as they return with the broomstick, Toto exposes the Wizard of Oz as a humbug, a populist illusionist making Emerald City look fancier than it is. In the end, Dorothy instead gets help from Glinda, the Good Witch of the North, who tells her how to use her magical Ruby Slippers to get back home to Kansas.

Goodbye Illusory EE Outcomes, Hello Clinical EE Community

So goodbye yellow brick road, / Where the dogs of society howl / You can’t plant me in your penthouse, / I’m going back to my plough. Lyrics by Bernie Taupin in Elton John’s song “Goodbye Yellow Brick Road”

If a growing community of hundreds or thousands of teachers and researchers in EE were to start co-designing, sharing, trying out and evaluating each other’s CIMO-based content packages broadly, using hundreds of such packages in an ESM way to collect data about their impact, this could lead to the establishment of a very active clinical EE community. Meaningful exchanges would occur every week instead of during one single week per year. The main focus would then become the micro-level impact we see on students as they act out their entrepreneurial spirits in our classrooms, instead of the fancy macro-level outcomes we hypothesise that a black box of EE will generate in a more distant, Emerald City-like glowing future.

In the spirit of Elton John’s famous song Goodbye Yellow Brick Road, this could represent a move away from the illusory and elusive entrepreneurial competencies our community loves to “howl” about and design impact studies around, towards a focus on the design and impact of various down-to-earth entrepreneurial “ploughing” activities that students undertake as they experience various kinds of EE. This represents a move away from EntreComp to something we could call EntreAct: an action- and process-oriented behavioural focus (cf. Derre, 2023, pp. 95–107). Still, outcomes are also part of the CIMO acronym in DSR terms, so we should be careful not to throw EntreComp and related frameworks out with the bathwater, but instead assign them a more balanced role in a design-oriented EE community. To return to the song, this would let our dear EE communities abandon the Yellow Brick Road to a glossy humbug Emerald City of fancy words and vanity metrics (Creativity! Resilience! Growth! Intentions! Employment!) and instead come home to a grey Kansas where we focus on designing, sharing and evaluating more mundane, farming- and ploughing-like pedagogical EE activities.

In such a perhaps less fancy EE community, measuring impact would be done by the teachers themselves, supported by researchers when possible, rather than by an external research team coming in from the side. Teachers are already doing this in many other communities around the world. Researchers at the Carnegie Foundation for the Advancement of Teaching, a leader in one such community, have remarked: “we cannot improve at scale what we cannot measure” (LeMahieu et al., 2015, p. 447). However, in order to measure something, we first need to define it properly (Pring, 2010). The CIMO approach helps us articulate and define (cf. Draycott & Rae, 2011, p. 674) more precisely than before what is to be tested in a social experiment, and then measure outcomes in a micro-level cause-effect way through ESM. DAS thus provides a new and concerted approach to design, define, diffuse, test, measure and discuss the impact of pedagogical interventions in EE in a way that traditional interviews and surveys have been unable to accomplish. Using DAS to establish a clinical and scientific community of practice in EE could eventually be how the vexing issue of impact assessment in EE is finally resolved, through a rigorous evaluation method that can follow and make visible the complexity of EE practice while also helping teachers with what they find most relevant: their daily practice of teaching and assessment.

An Active EE Community that Requires Heart, Courage and Brain

Dorothy: “How can you talk if you haven’t got a brain?” Scarecrow: “I don’t know. But some people without brains do an awful lot of talking, don’t they?” Script excerpt from movie “The Wizard of Oz” by Metro-Goldwyn-Mayer 1939

As attractive as a clinical EE community might seem, we should not underestimate the heart, courage and brains needed to establish such a community. DAS is an inherently relational method, relying on clinical practice where people genuinely help each other on a weekly basis, even becoming each other’s clients. This is why reflections are not submitted anonymously to the educational developers. EE teachers will need Tin Man’s big heart in order to take precious time away from their own practice to help others succeed in distant organisations and countries. Teachers will need Lion’s courage to go against the traditions of secrecy and open up their own classrooms for scrutiny by distant EE colleagues, who are invited to browse through their own and their students’ reflection data and articulate judgments about what seems to “work” or not. Here, it is the quality of relationships that determines how honest teachers will be with each other, and how far they dare to become reflective practitioners (cf. Schön, 1983). Teachers will also need Scarecrow’s Doctor of Thinkology (ThD) brain to sift through the big data generated by the ESM part of DAS. In our work with communities outside EE, the difficulty of analysing large ESM datasets often comes up as a key challenge, even though digital tools have made it easier. Generative AI such as ChatGPT could also be useful here.

A Microscope that Looks Inside Any Classroom

“But isn’t everything here green?” asked Dorothy. “No more than in any other city,” replied Oz, “when you wear green spectacles, why of course everything you see looks green to you. (…) My people have worn green glasses on their eyes so long that most of them think it really is an Emerald City” From the book The Wonderful Wizard of Oz by L. Frank Baum (1900), pp. 187–188

Aside from community building, it is also important to acknowledge the stand-alone value of a new methodology that allows us to gaze inside the black box of classroom practices. This is useful not only for EE but for any pedagogical practice. We might thus finally be presented with a solution to Schön’s (1995, p. 28) dilemma of not being able to study the “swamp of important problems” in a rigorous enough way. Now we can finally take off those “green spectacles” of questionnaire-based research that have been “all locked fast with the key” on us all, strapped “night and day … [for us] to be blinded” from seeing things as they really are (Baum, 1900, pp. 117–118). What DAS offers instead could be likened to a microscope that permits us to study complex and diverse classroom practices at a bacterial micro level, “allow[ing] scholars [and teachers!] to surface new insights and enable new ways of seeing” (Bansal et al., 2018, p. 1194). What makes this complexity manageable and simple from a teacher’s perspective is the form in Figure 2. It can be completed in around three minutes, which is a requirement for getting stressed teachers to participate broadly (Bryk et al., 2015). The form also yields a highly structured, mixed dataset that allows for swift causal analysis of complex issues.

Is Designed Action Sampling in EE Worth the Effort?

Scarecrow: “You humbug!” Lion: “Yeah!” Wizard of Oz: “Yes-s-s -- that...that’s exactly so. I’m a humbug!” Dorothy: “Oh, you’re a very bad man!” Wizard: “Oh, no, my dear -- I’m -- I’m a very good man. I’m just a very bad Wizard.” Script excerpt from movie “The Wizard of Oz” by Metro-Goldwyn-Mayer 1939

Vast economic resources in EE are consumed by JA and its global community. What JA does in terms of impact assessment therefore has a decisive influence on the field. It has invested heavily in trying to see if what it does in EE “works”, using impact studies to argue for receiving more resources and responsibilities (Brentnall et al., 2023). Much of its evaluation effort has been devoted to questionnaire-based approaches such as ASTEE, OctoSkills and Entrepreneurial Skills Pass (Moberg, 2019). JA has considered DAS but has not yet deemed it worthwhile. Maybe JA has preferred to keep tight control over its own research methodologies, its own “green spectacles” that show Emerald City in its full splendour? Or is such a humbug accusation of JA’s work with impact studies unfair? Maybe so, but JA has not used its power position and vast resources to invest in research method innovation in EE. Positioning JA as the incumbent Wizard of Oz in EE, supported by the big business Witch of the East (cf. Taylor, 2005, p. 421), is then perhaps not too unfair?

In the EntreComp community, DAS has been tried out tentatively in the EntreCompEdu project (Grigg, 2020). However, when funding ceased and it was time to renew efforts in a new project, the method was no longer included because of resource constraints in the EU project economy. In a related community, the Swedish National Agency for Education (SNAE) funds a large number of EE-related school projects each year. Some schools have asked for funds to use DAS to study the effects of their efforts. However, such requests are routinely declined by SNAE, since costs related to scientific evaluation of effects fall outside its assigned remit of funding projects in EE. Only the activities can be funded, not an investigation of their effects.

These examples are not included here to suggest that existing community leaders are bad, as Dorothy accused the humbug Wizard of Oz of being. Community leaders do their best to deliver good practice, and also have a pressing need to put up a good emerald façade. However, if innovative research methodology can be labelled a kind of wizardry, they are perhaps not as good wizards as they are well-intentioned EE practitioners. If community leaders in EE can acquire their much-needed funding without such wizardry, who are we to blame them for it? Maybe it is rather the funders of EE who need to improve their knowledge of wizardry-like research methodologies, and pay more attention to innovation in research methodology for evaluating EE and other pedagogical practices. Related to this, more research is needed on how to secure long-term funding for clinical and scientific EE communities that transcend short-term project funding.

Is This New Way to Empower EE Communities Too Good to be True?

Witch Glinda: “Now, those magic slippers will take you home in 2 seconds!” Dorothy: “Oh, dear -- that’s too wonderful to be true!” (…) Witch Glinda: “Close your eyes, and tap your heels together three times and think to yourself – There’s no place like home” Script excerpt from movie “The Wizard of Oz” by Metro-Goldwyn-Mayer 1939

All Dorothy Gale had to do to get home to Kansas was to close her eyes, tap the heels of her magical ruby slippers together three times and think about Kansas. Could it be just as easy for the EE community to create an active clinical community focused on designing and assessing classroom practices? Is DAS a magic pair of ruby slippers that can take EE home to Kansas in just 2 seconds? No, not at all. From what we have seen when establishing other communities, it takes years of resilience, leadership, vision, skills, collaboration and committed work (cf. Chaskin, 2001; Foster-Fishman et al., 2001). It also requires infrastructure, tools and funding for teachers interested in learning how to use a new scientific toolbox. We have also encountered many sceptical academic colleagues who distrust and denigrate such an unfamiliar methodological innovation, especially when it is supported by a digital tool (cf. Wiles et al., 2013, p. 29). Many power centres in society have been passively or openly hostile towards this novel way of building active communities. Even so, we do not believe that the vision articulated here is too good to come true one day.

Conclusions

Designed action sampling contributes to more focused impact studies in EE through its emphasis on detailed articulations of interventions at the classroom level (inspired by DSR), and through its mixed data collection strategy, which allows for fine-grained causal data analysis (inspired by ESM). It also offers a rigorous yet context-sensitive way for educational developers such as teachers or researchers to study what “works” in EE. This is achieved through an emphasis on design principles that specify context, intervention, mechanisms and outcomes for each social experiment conducted (inspired by CR). Such experiments are carried out by teachers themselves in their classrooms (inspired by CAR), rather than by outsiders coming in with their questionnaires to teachers or students. This facilitates a much-needed scaling of EE without causing pedagogical homogenisation. It also helps form active clinical EE communities that rigorously build cumulative insight, allowing more and more teachers to become better at making a real impact.

For this to happen, some key developments are needed in relation to money and power. Funding needs to be secured that does not disappear when a project ends. Community leaders, in particular JA, need to lead the work on this new opportunity instead of fighting it. Ironically, we may need to learn a lesson from JA, which achieved global scale by standardising its pedagogy. This time, we may try standardising the research methodology instead of the content and the pedagogical methods. Unless many EE teachers apply DAS in a concerted effort to rigorously try out and assess many different pedagogical ideas, the positive effects observed in other communities will not be achieved in EE. We may also need to negotiate a truce between quantitative and qualitative EE researchers, so that we can instead traverse a mixed methods pathway together. Training is also needed in the new research methodology, together with train-the-trainer efforts around a growing collection of CIMOs that EE teachers co-design.

We conclude with a call for more entrepreneurial EE communities that more often take their own medicine through a clinical helping posture towards other teachers. Entrepreneurship is, after all, about creating value for others. We therefore paraphrase Hambrick’s (1993, p. 13) presidential address to the Academy of Management: Colleagues, if we believe highly in what we do, if we believe in the significance of advanced thinking and research on entrepreneurial education, then it is time we showed it. We must recognize that our responsibility is not to ourselves, but rather to the teachers around the world who are in dire need of improved pedagogy. It is time for us to break out of our closed loop. It is time for us to matter.


Acknowledgements

The authors thank the expert reviewers for insightful comments on our study.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.