Abstract

Over the last 20 years, there has been growing interest among scholars in conducting experiments in entrepreneurship education. In this paper, we first discuss how experiments as a research method have moved from the natural sciences into the social sciences and how the social sciences, including the educational sciences, have helped to address the challenges of using experiments to study human behavior. Through the lens of the methodological advances made by the social sciences regarding experiments, we systematically review the entrepreneurship education literature that has used experimental designs. This review provides an overview of what has and has not yet been studied using experimental designs and which types of experimental designs have been used most commonly. Next, we critically evaluate current practices, both good and bad. Based on this critical assessment of the use of experimental designs in entrepreneurship education research, we provide a future research agenda and call for experiments that (1) are more theory-driven, (2) answer more ambitious research questions, and (3) use more robust designs; we also provide several paths forward for experimentalists interested in entrepreneurship education to do so.

“[We are] committed to the experiment: as the only means for settling disputes regarding educational practice, as the only way of verifying educational improvements, and as the only way of establishing a cumulative tradition in which improvements can be introduced without the danger of a faddish discard of old wisdom in favor of inferior novelties” (Campbell & Stanley, 1966, p. 2).

Introduction

The field of entrepreneurship education research studies how entrepreneurship is taught and seeks to help educators to improve their methods of teaching entrepreneurship (Nabi et al., 2017). Many of these studies take either a cross-sectional or longitudinal approach (Blenker et al., 2014; Nabi et al., 2017). Although such research designs can yield rich descriptive data and can suggest which educational methods may work better than others, they cannot shed light on causal mechanisms (Shadish et al., 2002). They cannot tell us which methods, or specific elements of an educational approach, cause a particular educational result. Experimental designs provide a powerful lens to isolate variables and determine causality (Colquitt, 2008); they have been called “the most ‘rigorous’ of all research designs” (Trochim, 2001, p. 191).

For more than a century now, educational scientists have not only applied experimental methods to better understand the causes behind differences in the effectiveness of educational methods, but have also actively developed the experimental method itself (Campbell & Stanley, 1963; 1966). It is therefore surprising that relatively few experiments have been conducted in entrepreneurship education research (Blenker et al., 2014).

We argue that the field of entrepreneurship education research would benefit from more experiments for at least two reasons. First, as several researchers have observed, the field of entrepreneurship education suffers from a lack of methodological rigor (Fayolle et al., 2016; Greene et al., 2004; Honig, 2004; Rideout & Gray, 2013). Although conducting more experiments will not by itself solve the problem of rigor in the field, well-structured experimental studies will improve rigor and enable more confidence in conclusions about causality. Well-designed and well-executed experiments permit experimentalists to be confident that (1) they have activated the intended theoretical construct, (2) the variable(s) of interest have been isolated, and (3) the variable(s) of interest caused the observed outcome(s).

Second, experimental designs give scholars of entrepreneurship education a research tool to study the causal links between the educational methods used in the classroom and the outcomes attributable to those methods rather than to other factors. They allow scholars to better understand the effects not only of macro-level educational elements, such as full programs and courses, but also of micro-level educational elements, such as specific assignments or team formation strategies. By better understanding the causal mechanisms underlying what works well and what does not, educators can make empirically informed choices about the design of educational programs instead of basing decisions on philosophical debates or individual preferences.

Although historically there have been relatively few experimental studies in entrepreneurship education, the use of experimental and quasi-experimental designs has increased dramatically over the last decade in the educational sciences in general (Gopalan et al., 2020) and in entrepreneurship education specifically (Longva & Foss, 2018). Longva and Foss (2018) reviewed the then state of the art in the use of experimental designs in entrepreneurship education. Although their review of 17 studies provides important paths forward, the use of experimental designs in the field has increased significantly since its publication. In line with this special issue on “Experimental designs to address current challenges in entrepreneurship education research,” we argue that it is therefore important and timely to review the current use of experimental designs in entrepreneurship education and to build a research agenda for their future use in this field.

The goals of this literature review are threefold. First, we give a historical overview of how the educational sciences have helped to shape experiments as a research method in the social sciences. We review the past to gain a better understanding of (1) why the experimental method has gained, lost and then regained importance in the wider field of educational science, and (2) how educational scientists have addressed the challenges of using the experimental method to study human behavior. This will help us better understand the current state of the field and provide an agenda for future research.

Second, we will review the current use of experimental designs in entrepreneurship education research. This review will show how experimental designs have contributed to theoretical development in the field and how rigorously experimental studies have been executed to date.

Third, we will discuss an agenda for future research using experimental designs in entrepreneurship education research. Although not all research questions can or should be addressed using experimental designs, many research questions where causal inferences are needed would benefit from the use of experiments. We will provide readers with several suggestions for future research using experimental designs to address important theoretical questions left unanswered in the field. Additionally, we will provide suggestions for experimentalists in this field to develop both more ambitious and more rigorous studies. However, before we address these goals, we will first address the basics of experimental and quasi-experimental research designs.

Experimental Design Basics

It is important to begin with some basic concepts of what is, and is not, an experiment. In the following, we describe the critical features of experiments and explain how those features affect internal and external validity. At the same time, we acknowledge that for many important research questions in many important research settings, strict adherence to the demands of experimental designs is not always possible. Thus, we also describe quasi-experimental designs and discuss how they contrast with “true” experiments. Finally, we discuss other design choices, such as field experiments, natural experiments and factorial experiments, because many of the entrepreneurship education studies in our review used such designs.

Experiments

According to the American Psychological Association Dictionary of Psychology, an experiment is defined as: “a series of observations conducted under controlled conditions to study a relationship with the purpose of drawing causal inferences about that relationship. An experiment involves the manipulation of an independent variable, the measurement of a dependent variable, and the exposure of various participants to one or more of the conditions being studied. Random selection of participants and their random assignment to conditions also are necessary in experiments.” (American Psychological Association, n.d.-a). The key features of an experiment include control, causal inference, manipulation of an independent variable, measurement of a dependent variable, and random selection and assignment (Cook & Shadish, 1994). The manipulation of one variable (the independent variable) followed by the measurement of another (the dependent variable) is self-explanatory. We therefore begin by discussing randomization, an important and often neglected aspect of experiments, especially in research conducted in field settings such as entrepreneurship education research. Here we need to distinguish between random selection of participants from the population and random assignment of participants to experimental conditions.

It is not uncommon to see otherwise rigorously conducted experiments in which the initial selection of participants (sampling) was not random. Consider, for example, the very long history of basic psychological (and other) studies conducted with student samples that were randomly assigned to experimental conditions but not randomly selected from the population as a whole. Even so, thousands of such studies have been published in highly reputable journals. Non-random selection may, to some degree, limit the external validity of the findings, in particular the ability to generalize them to the population as a whole (Highhouse, 2009). One might argue, however, that as findings are replicated over time, a field’s confidence in their external validity and generalizability grows. Nonetheless, non-random sampling is a shortcoming of a vast number of published experimental studies.

Randomly assigning participants to experimental conditions, by contrast, is arguably a “blackletter” requirement of true experiments. Without random assignment, the experimenter cannot be sure that the experimental groups (treatment group(s) v. control group) are initially equivalent. Random assignment “ensures that alternative causes are not confounded with a unit’s treatment condition” and “it reduces the plausibility of threats to validity by distributing them randomly over conditions” (Shadish et al., 2002, p. 248). Without assurance of group equivalence, the researcher cannot conclude that the independent variable, and the independent variable alone, is responsible for the differences observed between treatment and control groups. Thus, a further criterion for a study to be considered an experiment is not met, and the researcher cannot make a clear causal statement. The non-equivalence of groups essentially introduces another variable into the experiment: differences between treatment and control groups that are known to be possible but whose nature is unknown. This introduces a “confound” into the experiment and greatly impairs the internal validity of the study, because the confound makes it impossible for the experimenter to isolate the effects of the independent variable from those of the confound. Confounds are, in essence, alternative explanations of the research findings.

The foundational concept of random assignment to conditions was developed by R. A. Fisher (1925, 1935). In the context of agricultural research, he defined random assignment as “using means which shall ensure that each variety has an equal chance of being tested on any particular plot of ground” (Fisher, 1935, p. 56). Ever since Fisher’s early work, random assignment to conditions has been regarded as best practice in experimental design and causal inference (Shadish et al., 2002). Matching has been suggested as an alternative way to equate experimental conditions (Shadish et al., 2008). However, whereas matching can ensure equivalence only on the variables that were measured, random assignment balances conditions, in expectation, on all variables: known and unknown, measured and unmeasured (Shadish et al., 2002).
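To make the mechanics of random assignment concrete, the following Python sketch (our illustration, not a procedure taken from the reviewed studies) shuffles participants with a seeded pseudo-random generator and then deals them in turn to the conditions, so that group sizes differ by at most one. The function name `randomly_assign`, the seed value and the student identifiers are hypothetical.

```python
import random

def randomly_assign(participants, conditions, seed=None):
    """Complete random assignment (illustrative sketch).

    Shuffles the participants into a pseudo-random order and deals them
    in turn to the conditions, so group sizes differ by at most one.
    """
    rng = random.Random(seed)      # seeded so the assignment can be audited later
    shuffled = list(participants)
    rng.shuffle(shuffled)          # chance, not the researcher, decides the order
    groups = {condition: [] for condition in conditions}
    for position, person in enumerate(shuffled):
        groups[conditions[position % len(conditions)]].append(person)
    return groups

# Hypothetical class of 20 students split into a treatment and a control section.
students = [f"student_{i:02d}" for i in range(1, 21)]
groups = randomly_assign(students, ["treatment", "control"], seed=42)
for condition, members in groups.items():
    print(condition, len(members))
```

Recording the seed is a design choice that lets others reproduce and verify the assignment; matching, by contrast, would require choosing in advance which variables to equate.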

Although random assignment balances conditions on all variables in expectation, it is not flawless (Goldberg, 2019). Randomization remains a chance-based process, so in any particular study the conditions may still differ on a specific variable by chance. This has led researchers in some fields (e.g., economics and medicine) to conduct balance tests, which test for baseline differences between the groups. When randomization has been mismanaged, balance tests can help determine whether the data should be regarded as experimental or observational (Mutz et al., 2018). Although balance tests have become commonplace in several fields, they have also been extensively criticized (e.g., Altman, 1985; Assmann et al., 2000; Roberts & Torgerson, 1999; Sedgwick, 2014; Senn, 1994). Specific criticisms include that balance tests are conceptually problematic (Altman, 1985), that the statistical significance of a group difference matters less than the imbalanced variable’s correlation with the dependent variable (Altman, 1985), that researchers may use these tests improperly (Bruhn & McKenzie, 2009), and that their use “…can destroy the basis on which scientific conclusions are formed, and can lead to erroneous and even fraudulent conclusions” (Mutz et al., 2018, p. 32).
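The chance element of randomization can be illustrated with a small simulation. The Python sketch below is an illustration under stated assumptions, not a recommended balance test: it repeatedly randomizes a hypothetical class of 30 students, 10 of whom have prior start-up experience, into two groups of 15, and records the difference between the groups in the share of experienced students. The class composition, the 20-percentage-point threshold and all names are assumptions.

```python
import random
import statistics

def covariate_gap(covariate, rng):
    """Randomly split participants into two equal halves and return the
    treatment-minus-control difference in the covariate's mean."""
    order = list(range(len(covariate)))
    rng.shuffle(order)
    half = len(order) // 2
    treatment = [covariate[i] for i in order[:half]]
    control = [covariate[i] for i in order[half:]]
    return sum(treatment) / len(treatment) - sum(control) / len(control)

rng = random.Random(2024)
# Hypothetical class of 30 students: 1 = prior start-up experience, 0 = none.
prior_experience = [1] * 10 + [0] * 20
gaps = [covariate_gap(prior_experience, rng) for _ in range(10_000)]

# Averaged over many randomizations, the gap is close to zero...
print(f"mean gap: {statistics.mean(gaps):+.3f}")
# ...but a single randomization can still leave the groups noticeably unbalanced.
large = sum(abs(gap) >= 0.2 for gap in gaps) / len(gaps)
print(f"share of randomizations with a gap of 20 points or more: {large:.1%}")
```

The simulation shows balance "in expectation" rather than in every draw; it deliberately reports descriptive gaps rather than significance tests, in keeping with the criticisms cited above.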

Although non-random selection of the research sample limits generalizability, it does not affect the internal validity of the experiment. With random assignment of respondents to experimental conditions, the researcher can therefore conclude with confidence that the differences observed in the dependent variable reflect a true, reliable effect of the independent variable. The experimenter is limited, however, in that any such effect may hold only for the non-random sample selected for the study. Non-random assignment of respondents to treatment v. control conditions, in contrast, introduces confounds that do affect internal validity. Such a study therefore fails a fundamental requirement of an experiment: to allow the unambiguous conclusion that the manipulation of the independent variable caused the differences observed between the treatment and control groups on the dependent variable.

Experiments have been labeled “the ‘gold standard’ against which all other designs are judged” (Trochim, 2001, p. 191) because in “true” experiments all possible influencing factors are controlled and can be kept constant by the researcher (Cook, 2018). Despite the value of experiments in applied settings such as education (Whitehurst, 2012), researchers cannot always control all the factors that may influence a study, for a variety of reasons (Cook, 2002; Cook & Campbell, 1979; Schanzenbach, 2012). In the context of entrepreneurship education, researchers may face ethical concerns when they would like to randomly assign students to different classes, courses or educational programs. They also may not always be in full control of the treatment and therefore may be unable to manipulate the variable(s) of interest. For example, program accreditations may impose specific boundaries, courses may have strict learning goals, and students may expect a certain workload; such constraints may limit experimentalists’ ability to manipulate conditions. In such circumstances, researchers need to make trade-offs (Grégoire et al., 2019). Therefore, in the following section, we extend our focus to experimental designs for situations in which researchers (1) cannot assign participants randomly to conditions and/or (2) cannot control the manipulation of the independent variables. In such situations, researchers may use quasi-experimental designs.

Quasi-Experiments

As defined by the APA, a quasi-experiment is “an experimental design in which assignment of participants to an experimental group or to a control group cannot be made at random for either practical or ethical reasons; this is usually the case in field research. Assignment of participants to conditions is usually based on self-selection (e.g., employees who have chosen to work at a particular plant) or selection by an administrator (e.g., children are assigned to particular classrooms by a superintendent of schools)” (American Psychological Association, n.d.-e). The term “quasi-experiment” refers to a research design that resembles an experiment in most respects except that the assignment of participants to treatment v. control groups is not random. Quasi-experiments are therefore subject to the internal validity concerns expressed earlier. A commonly used method to address group non-equivalence is to measure one or more variables that the researcher deems theoretically important as potential determinants of the effect of the treatment on the dependent variable and then to “match” treatment and control groups on those variables (Kim & Steiner, 2016). However, because matching equates groups only on the variables that were measured, alternative explanations rooted in non-equivalence on other variables remain. As noted by Shadish et al. (2002, p. 14), “In quasi-experiments, the researcher has to enumerate alternative explanations one by one, decide which are plausible, and then use logic, design, and measurement to assess whether each one is operating in a way that might explain any observed effect.”
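A minimal Python sketch of one common form of matching, greedy one-to-one nearest-neighbor matching on a single pretest score, is shown below. It is an illustration under assumptions, not the procedure used in any reviewed study: the function name `match_on_pretest`, the participant identifiers and the scores are hypothetical, and applied work typically matches on several covariates or on a propensity score.

```python
def match_on_pretest(treated, pool):
    """Greedy 1:1 nearest-neighbour matching without replacement (sketch).

    treated, pool: dicts mapping participant id -> pretest score.
    Returns a list of (treated_id, control_id) pairs.
    """
    available = dict(pool)  # copy so the caller's pool is not modified
    pairs = []
    # Process treated participants in order of pretest score for reproducibility.
    for t_id, t_score in sorted(treated.items(), key=lambda item: item[1]):
        if not available:
            break  # no untreated participants left to match
        c_id = min(available, key=lambda c: abs(available[c] - t_score))
        pairs.append((t_id, c_id))
        del available[c_id]  # each control is used at most once
    return pairs

# Hypothetical pretest scores for self-selected course takers and non-takers.
treated = {"T1": 3.2, "T2": 4.8, "T3": 5.5}
pool = {"C1": 2.9, "C2": 4.0, "C3": 4.9, "C4": 5.6, "C5": 6.3}
pairs = match_on_pretest(treated, pool)
print(pairs)  # → [('T1', 'C1'), ('T2', 'C3'), ('T3', 'C4')]
```

The matched pairs are similar only on the score used for matching; nothing in the procedure balances variables that were not measured, which is why the alternative explanations discussed above remain.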

In the following sub-sections, we discuss two commonly used quasi-experimental designs, namely the one-group pretest-posttest design and the pretest-posttest control-group design.

One-Group Pretest-Posttest Design

Pretest-posttest designs without a control group (one-group pretest-posttest designs) are sometimes used (and published). However, as Campbell and Stanley (1966, p. 7) note, these designs are “pre-experimental” (i.e., not true experimental designs). The primary reason is that the experimentalist cannot rule out several alternative explanations: any difference between the pretest and posttest may have been caused by the mere repetition of the measurement (testing), by extraneous events (history), or by changes in the study participants (maturation) that occurred between the pretest and posttest measures.

Pretest-Posttest Control-Group Design

A pretest-posttest control-group design addresses the alternative explanations enumerated above because the control group is also exposed to testing, history and maturation; differences between the groups on the posttest measure can therefore more confidently be attributed to the independent variable. Ideally, the pretest-posttest control-group design is used with random assignment of participants to conditions. In a quasi-experimental design, however, the pretest is most valuable when it is used to match the groups. Because the dependent variable is measured before treatment, treatment and control groups can be matched on that variable as closely as possible before the treatment begins. Although this is not as desirable as random assignment, it may be the best that can be done given the constraints of the field setting.
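The logic of the control group can be illustrated with a small worked example in Python, using hypothetical pretest and posttest scores on an assumed 1–7 self-efficacy scale. A one-group design would attribute the treatment group’s entire average gain to the treatment; subtracting the control group’s average gain removes the part of the change that both groups share, such as testing, history and maturation effects. The sketch assumes that, without treatment, both groups would have changed by the same amount, an assumption that random assignment supports but that quasi-experimental designs can only argue for.

```python
from statistics import mean

def mean_gain(pre, post):
    """Average posttest-minus-pretest change for one group."""
    return mean(after - before for before, after in zip(pre, post))

def gain_difference(treat_pre, treat_post, ctrl_pre, ctrl_post):
    """Treatment group's mean gain minus the control group's mean gain.

    Influences that affect both groups alike (testing, history, maturation)
    raise both gains and therefore drop out of the difference."""
    return mean_gain(treat_pre, treat_post) - mean_gain(ctrl_pre, ctrl_post)

# Hypothetical scores, for illustration only.
treat_pre, treat_post = [4.0, 3.5, 5.0, 4.5], [5.0, 4.5, 5.5, 5.5]
ctrl_pre, ctrl_post = [4.0, 4.5, 3.5, 5.0], [4.5, 4.5, 4.0, 5.5]

print(mean_gain(treat_pre, treat_post))  # one-group estimate: 0.875
print(gain_difference(treat_pre, treat_post, ctrl_pre, ctrl_post))  # 0.5
```

In this made-up example, the control group also improved by 0.375 points, so the one-group design would overstate the treatment effect.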

Other Choices Regarding Experimental Designs

So far, we have distinguished between experimental and quasi-experimental designs according to whether research participants were assigned to experimental conditions at random. We also briefly discussed the pretest, which is the measurement of the dependent variable before the experimental treatment. However, experimental designs can differ in aspects other than how participants are assigned to conditions and whether a pretest is included. Below, we discuss field and natural experiments, which take place outside the laboratory, and factorial designs, which include more than one manipulated variable, each with at least two discrete levels.

The American Psychological Association distinguishes between field and natural experiments, although the two are not mutually exclusive and overlap somewhat as types of experiments (or quasi-experiments). The APA defines a field experiment as “a study that is conducted outside the laboratory in a ‘real-world’ setting. Participants are exposed to one of two or more levels of an independent variable and observed for their reactions; they are likely to be unaware of the research. Such research often is conducted without random selection or random assignment of participants to conditions and without deliberate experimental manipulation of the independent variable by the researcher” (American Psychological Association, n.d.-c). Because they often lack random assignment, many field experiments are in fact quasi-experiments. In a field experiment, the independent variable may be manipulated by the experimentalist, although this is not a requirement (Shadish, 2002).

Some manipulations are completely outside the researcher’s control. A recent example is the outbreak of the COVID-19 pandemic, which caused many educational institutions to move from in-class to online teaching. Neither the pandemic itself nor most of its effects were under the control of researchers, who could simply observe and compare conditions before and after its outbreak. Such situations are called natural experiments. The APA defines a natural experiment as “the study of a naturally occurring situation as it unfolds in the real world. The researcher does not exert any influence over the situation but rather simply observes individuals and circumstances, comparing the current condition to some other condition” (American Psychological Association, n.d.-d). Like field experiments, natural experiments take place in the “real world” rather than in the laboratory.

Although “true” experiments may be the ne plus ultra of rigorous research methods, entrepreneurship education research is fundamentally aimed at understanding what happens in the context of an educational program, and this alone may place an important constraint on researchers. As in many disciplines that focus on understanding human behavior, we argue that a multi-method approach is often the best strategy, particularly when research moves between the ideal control available to the experimentalist in an in vitro (laboratory) setting and the in vivo (field) setting that we seek to understand.

Factorial designs manipulate two or more independent variables simultaneously. In its simplest version (i.e., the two-by-two factorial design), the factorial design includes two independent variables that each have two levels, so the experiment has four treatment conditions. If all combinations of levels are part of the experiment, it is called a full factorial or fully crossed design; if only a subset of the combinations is included, it is called a fractional factorial design. More complicated factorial designs can include more independent variables with more levels each. For example, a four-by-three-by-two factorial design includes three independent variables: one with four levels, one with three levels, and one with two levels. Such designs can provide more fine-grained insights into the effects of different values of the independent variables and their interactions. At the same time, they become harder to implement. The aforementioned four-by-three-by-two design, for example, contains 4 × 3 × 2 = 24 treatment conditions, and each condition needs enough participants to provide adequate statistical power for detecting differences between conditions.
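The growth in the number of conditions can be checked with a short Python sketch that enumerates a full factorial design as the Cartesian product of each factor’s levels. The factor names and levels are hypothetical, and the figure of 30 participants per condition is an arbitrary placeholder rather than the result of a power analysis.

```python
from itertools import product

# Hypothetical factors for a 4 x 3 x 2 design; names and levels are illustrative.
factors = {
    "teaching_method": ["lecture", "case study", "simulation", "venture project"],
    "team_formation": ["self-selected", "instructor-assigned", "random"],
    "feedback_source": ["peer", "instructor"],
}

# Full factorial: every combination of levels is one treatment condition.
conditions = list(product(*factors.values()))
print(len(conditions))  # 24
print(conditions[0])    # ('lecture', 'self-selected', 'peer')

# Total sample if every condition needs n_per_cell participants
# (30 is a placeholder, not the outcome of a power analysis).
n_per_cell = 30
print(len(conditions) * n_per_cell)  # 720
```

A fractional factorial design would retain only a chosen subset of the tuples in `conditions`, trading information about some interactions for a smaller required sample.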

In this section, we have provided a basic overview of the terminology of the experimental method and of the basic experimental designs. This overview is, however, by no means exhaustive. Readers interested in further discussion of the above-mentioned topics or of other types of experimental designs, such as within-subjects designs, in which each participant is exposed to both treatment and control conditions and thus serves as their own control (Hsu et al., 2017; Shadish et al., 2002), or regression discontinuity designs (Shadish et al., 2002; Thistlethwaite & Campbell, 1960), are referred to Shadish et al.’s (2002) “Experimental and quasi-experimental designs for generalized causal inference.” In the next section, we provide a brief overview of the development of the experimental method in the social sciences in general and in the educational sciences specifically, to show that the experimental method has a long history in the educational sciences.

A Brief Historical Overview of the Use and Development of Experimental Designs in the Social Sciences

At the beginning of the 20th century, social scientists began bringing experimental methods from the natural sciences into the social sciences. “Experimentation in the biological sciences is obviously more difficult than in the non-biological ones, simply because of the difficulty of controlling living things. Psychology, which is a branch of biology since it endeavours to bring within experimental control the behaviour of human beings, is the most difficult of all the sciences.” (Sandiford, 1928, p. viii). Apples, balls and rocks, for example, do not notice when they are put through an experiment time and time again, whereas human subjects get tired after long or repeated testing. Social scientists quickly learned that the experimental designs used in the natural sciences often provided inadequate controls and were not sensitive to the reactivity of research participants. New methods were therefore developed to deal with extraneous and biasing influences such as differences between subjects across conditions (Armitage, 2003; Fisher, 1926), respondent fatigue (Thorndike, 1899; 1900), and experimenter expectations (Rivers & Webber, 1907), among others. Researchers from agriculture, psychology and the educational sciences developed methods such as control groups (Coover & Angell, 1907; Dehue, 2000; Solomon, 1949), blinding researchers (Rivers & Webber, 1907), and randomization over conditions (Fisher, 1925; 1935) to deal with such potential confounds and biases. Over time, more theoretical and empirical experience was gained in different settings and topics, which led to the identification of additional sources of bias. These newly identified biases, in turn, led to the development of further methods to eliminate the biasing conditions (e.g., Dehue, 2000).
The goal has always been to eliminate alternative (and spurious) explanations for the findings and thereby to identify true causal relationships between the independent (manipulated) variables and the dependent (measured) variables of interest. A wave of enthusiasm for experimentation dominated the educational sciences (e.g., Sandiford, 1928), perhaps reaching its apex in the 1920s and 1930s (Campbell & Stanley, 1966; Shadish et al., 2002).

This wave of enthusiasm was followed by a wave of disillusionment, apathy and rejection of experiments beginning around 1935 (Good & Scates, 1954). There were several reasons for this. First, expectations about the rate and degree of progress that experimental designs would deliver were overly optimistic, and the enthusiasm for experiments was accompanied by an unjustified denigration of non-experimental studies (Campbell & Stanley, 1963). Initially, advocates of experimental designs argued that progress had been slow because the scientific method had been used too little and applied inadequately. Moreover, traditional educational practices were deemed incompetent merely because their effectiveness was not supported by the results of experiments (Campbell & Stanley, 1963).

Second, at the individual level, personal avoidance conditioning could explain the disillusionment (Campbell & Stanley, 1963). Non-confirmation of valued theories and their hypotheses is painful to researchers, who are left with either rejecting their treasured theories or rejecting the method used to gather the data. This appears to have resulted in a general avoidance or rejection of the experimental method rather than the rejection of theories not supported by the data. New psychological perspectives that are not amenable to experimental investigation, such as Gestalt psychology and psychoanalysis, gained popularity (Campbell & Stanley, 1963), and researchers trained in the experimental tradition frequently converted to these perspectives (Campbell & Stanley, 1963).

This wave of pessimism was followed by a wave of renewed enthusiasm. After the Second World War, fields such as psychology and the educational sciences blossomed. Reviewing the lessons learned from the initial wave of enthusiasm, leading experimentalists Campbell and Stanley (1963, 1966) argued that experimentation should be chosen “not as a panacea, but rather as the only available route to cumulative progress” (1966, p. 3). Experimentalists should expect disappointment and a lack of results, but make cumulative progress through persistence. More specifically, Campbell and Stanley argued that “multiple experimentation is more typical of science than once-and-for-all definitive experiments” (Campbell & Stanley, 1963). Experiments should be replicated and cross-validated at different times and under different conditions. They warned that we should not expect that “… crucial experiments which pit opposing theories will be likely to have clear-cut outcomes” (Campbell & Stanley, 1963). Instead, they called for multiple, less complex experiments, arguing that over time such systematic, incremental and partial replication of simple experimental designs will improve reliability and confidence in findings.

In addition, the statistical procedures for analyzing experiments had improved significantly. Researchers in the 1920s and 1930s were only able to conduct univariate, one-variable-at-a-time research. Advances in statistics and mathematics and the introduction of the computer led to statistical tools that helped experimentalists to analyze models with two or more experimental variables and their interactions (Raudenbush & Schwartz, 2020). Beyond multiple independent variables, researchers also gained the ability to analyze models with more than one dependent variable, and then models with both multiple independent and multiple dependent variables. This enabled experimentalists to ask more interesting research questions and find more nuance in their results (Cook & Shadish, 1994).

In the following decades, many interesting field experiments were conducted, but they were often time-consuming and expensive. Unfortunately, they were also neither as definitive nor as useful as had been hoped. For example, researchers had problems recruiting enough participants, some participants did not accept random assignment, random assignment was poorly implemented, and by the time researchers were finally able to complete and report on the experiments, the experiments answered old questions in which (policy-shaping) stakeholders were often no longer interested (Shadish & Cook, 2009). Another wave of pessimism followed when researchers and practitioners raised important questions about whether field experiments were viable and valuable to both science and policy (e.g., Cronbach, 1982; Cronbach et al., 1980). Alternative methods, such as nonexperimental econometric methods and qualitative and anthropological case studies, therefore became popular in the 1970s and 1980s, and researchers started to ask research questions that were non-causal in nature. Although several fields, such as medicine and public health, remained interested in the use of field experiments, “[e]ntire fields, particularly education but also some parts of economics and sociology, largely rejected experimentation in favor of these and other alternatives” (Shadish & Cook, 2009, p. 609). Despite efforts to improve the quality of educational intervention research (e.g., Levin, 1994; Pressley & Harris, 1994) and critical analyses of the reasons educational researchers have given for not using experimental designs (e.g., Cook, 2002), the educational sciences have seen a decrease in articles reporting on randomized experiments (Hsieh et al., 2005).

Over the last few decades, however, interest in the experimental method has revived. Evidence-based practice became important in policy and encouraged policymakers to adopt interventions whose effectiveness had been demonstrated empirically. “In fields where ideology and recent practice had rejected experimentation, most notably education, the turn of the 21st century saw federal mandates for the use both of experiments and of interventions with experimental support, and funding priorities underwent a large shift to back this mandate” (Shadish & Cook, 2009, p. 610). Renewed interest also came from economists and statisticians who found that their econometric models could not reproduce experimental results.

In summary, a century of research using experimental designs in the social sciences in general, and in the educational sciences specifically, has produced many important tools that have become standard procedures and guidance for designing rigorous experiments. It has also made it possible to analyze more complex experimental designs, and therefore to test more ambitious research questions. Although entrepreneurship has been taught for centuries (Wadhwani & Viebig, 2021), and modern entrepreneurship education began with the first entrepreneurship course, taught at Harvard Business School in 1947 (Katz, 2003), systematic research on entrepreneurship only began in the 1970s and 1980s (Landström & Benner, 2010). Research on entrepreneurship education started to emerge around that time (Béchard & Grégoire, 2005), beginning with the pioneering work of Vesper (1974, 1982). Hence, the field emerged at a time when experimental research in education was out of fashion. Recently, however, the fields of entrepreneurship (e.g., Acs et al., 2010; Williams et al., 2019) and entrepreneurship education (e.g., Longva & Foss, 2018) have developed an interest in using experimental designs. It is against this background that we review the empirical literature in entrepreneurship education that has used experimental designs.

Method

We conducted a systematic literature review to develop an overview of the use of experimental designs in entrepreneurship education over the past 30 years. Figure 1 shows a graphical overview of the steps taken.

Graphical overview of the steps taken in the systematic literature review.

In searching the literature, we used the Web of Science database and the following search string: (Experiment* OR quasi* OR random* OR manipulat* OR treatment* OR causal*) AND (Entrepreneur*) AND (educa* OR train* OR teach* OR learn* OR course* OR pedagog*)

This resulted in an initial dataset of 1599 articles published from 1991 through 2021. We first scanned all titles and abstracts and excluded papers that clearly did not use an experimental design or were clearly not about entrepreneurship education. If we could not determine from the title and abstract alone whether an article met these criteria, we kept it in the dataset for further analysis. This left 244 articles that appeared to address entrepreneurship education and whose authors may have conducted an experiment, although this was not always clear. For these articles, we reviewed the full text to determine whether an experiment had been conducted and, if so, what type of experiment.

We then read and categorized these 244 articles using the following criteria. An article was included in our final detailed analysis if it reported on a study using an experimental or quasi-experimental design and the topic of the study was related to entrepreneurship education. We excluded 33 articles because they were written in a language other than English (Croatian, Korean, Polish, Russian, or Spanish), even though their titles and abstracts were in English. We also excluded one article that we were unable to obtain. Lastly, we removed 28 articles whose actual research focus was not related to entrepreneurship education, although they used the term “entrepreneurship education.” Of the 182 remaining articles, 86 used a non-experimental design and were therefore removed from the dataset. Our final dataset contained 96 studies that focused on entrepreneurship education and used an experimental design: 34 articles used a randomized experimental design, and 62 used a quasi-experimental design.
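The exclusion steps above are simple subtraction, and checking that they reconcile is a cheap safeguard when reporting a review. A minimal Python sketch using only the counts stated in this paragraph; the helper name `screening_flow` and the stage labels are our own, hypothetical choices:

```python
def screening_flow(start: int, exclusions: dict[str, int]) -> int:
    """Subtract each exclusion from a running total, print each step, and return the remainder."""
    remaining = start
    for reason, n in exclusions.items():
        remaining -= n
        print(f"{reason:<38} -{n:>3} -> {remaining}")
    return remaining


# Counts as reported in the Method section; the labels are illustrative.
after_eligibility = screening_flow(
    244,
    {
        "full text not in English": 33,
        "full text unobtainable": 1,
        "not entrepreneurship education": 28,
    },
)
final = screening_flow(after_eligibility, {"non-experimental design": 86})

randomized, quasi_experimental = 34, 62
print(f"final dataset: {final} ({randomized} randomized + {quasi_experimental} quasi)")
print(f"share of initial 1599 hits: {100 * final / 1599:.1f}%")
```

The same bookkeeping, run at every stage, makes it easy to spot a step whose reported counts do not add up.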

Overview of Entrepreneurship Education Studies Using a Quasi-Experimental Design.

Overview of Entrepreneurship Education Studies Using a True Experimental Design (Randomized Group Assignments).

Results

General Findings

Several interesting findings emerged from our analysis of the articles generated by the search. First, only 96 of the 1599 articles in the initial dataset (6.0%) met the criteria set forth above and could therefore be included in the analysis. The remaining articles, although they used language suggesting that an experiment on entrepreneurship education had been conducted, turned out either not to report an experiment or not to be about entrepreneurship education. In part, this is understandable: any article mentioning “entrepreneurship education” and “experiment” would surface in the search, and in many cases the two terms simply co-occurred. However, some articles that purported to involve an experiment were in fact not experiments at all.

We designed our search terms to be as inclusive as possible, because we wanted to capture every possible experimental study. The price of this inclusiveness was that the search also returned many non-experimental studies, for several reasons. For example, we included “random*” as a search term to capture articles that used ‘randomized assignment to conditions’ to ensure the equivalence of experimental groups (thereby increasing internal validity); however, the results also included non-experimental studies that used ‘random sampling’ to increase external validity by ensuring that the sample was representative of the population of interest. Many articles contained the term “experiment” or “experimenting” but used it in the sense of a “trial” or “tryout” rather than to refer to the research design itself; these were excluded. We also found studies that used the term ‘pretest-posttest design’ to indicate that they measured the independent variables first and the dependent variable at a later point in time, probably to reduce the threat of common method variance. Moreover, several researchers labeled their studies as using an experimental or quasi-experimental design although no independent variable was manipulated, or even studied, by the researchers, and all variables in the study were simply measured post hoc. These findings suggest that researchers may not always be clear on the distinctions between experiments, quasi-experiments, and other related methods of ascribing causality.

Second, we plotted the number of studies using an experimental or quasi-experimental design over time (see Figure 2). Although our search covered the 30-year period 1991–2021, the first study on entrepreneurship education that used an experimental or quasi-experimental design was published in 2003. This is a remarkable finding in and of itself, given the long history of experimental and quasi-experimental designs in education research (Lindquist, 1953; Shadish et al., 2002). Figure 2 shows a dramatic increase in the use of experimental and quasi-experimental designs in entrepreneurship education research over the time frame of our review, particularly in the last 10 years. This finding supports the need for, and timeliness of, this review article. It also shows that in almost every year, we found about twice as many quasi-experiments as randomized experiments.

Entrepreneurship education studies using an experimental or quasi-experimental design published by year.

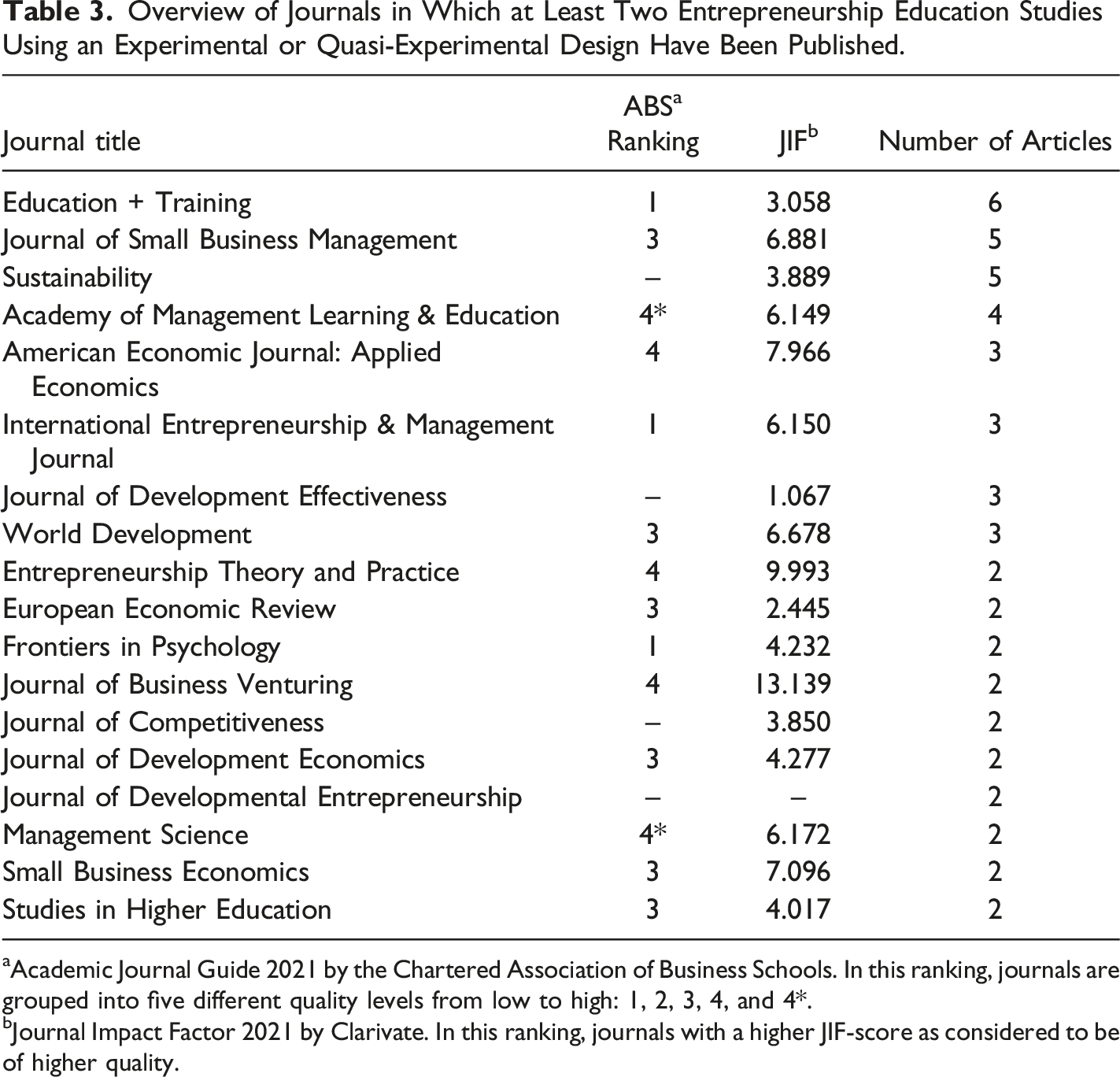

Overview of Journals in Which at Least Two Entrepreneurship Education Studies Using an Experimental or Quasi-Experimental Design Have Been Published.

ᵃ Academic Journal Guide 2021 by the Chartered Association of Business Schools. In this ranking, journals are grouped into five quality levels from low to high: 1, 2, 3, 4, and 4*.

ᵇ Journal Impact Factor 2021 by Clarivate. In this ranking, journals with a higher JIF score are considered to be of higher quality.

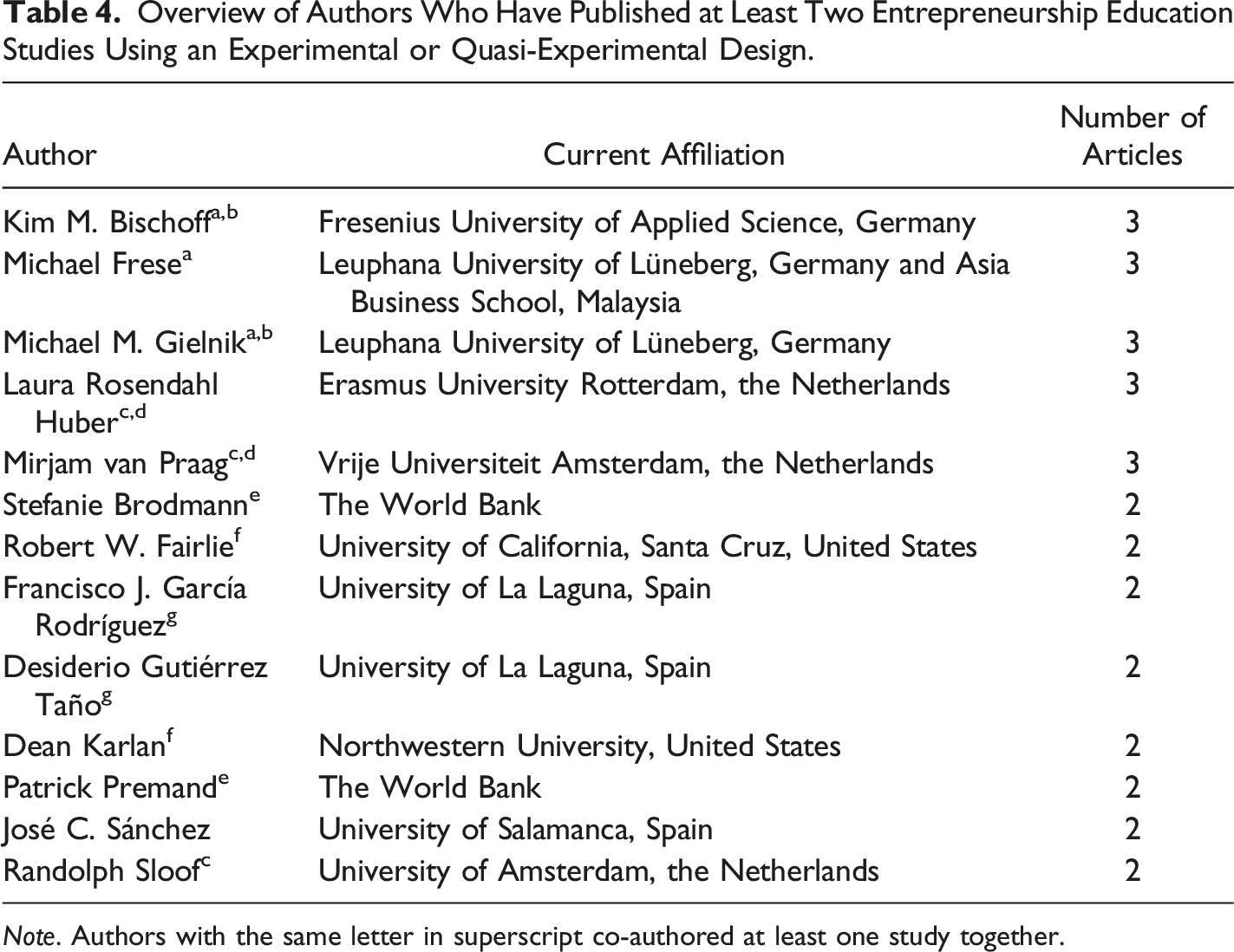

Overview of Authors Who Have Published at Least Two Entrepreneurship Education Studies Using an Experimental or Quasi-Experimental Design.

Note. Authors with the same letter in superscript co-authored at least one study together.

Analysis of Experimental Design Elements

Of the 96 articles in our dataset, only three reported two experimental studies each. The second experiment in these articles was typically a conceptual extension of the first: the authors added an independent variable to create a factorial design, or added a moderating and/or mediating variable to explain the results in finer detail. Follow-up experiments on boundary conditions, or aimed at a more fine-grained understanding of the effects, were missing in 93 (96.9%) of the articles. Because the second experiments closely resembled the first ones in design, and most articles in our review reported a single experiment anyway, we report the results at the level of the article rather than the level of the individual experiment.

We found that 34 (35.4%) articles used a randomized experimental design, and 62 (64.6%) used a quasi-experimental design. Of the 34 articles that reported using a randomized experimental design, 27 randomly assigned subjects to conditions using simple randomization; in six articles, participants were randomly assigned to conditions after stratifying the subjects on specific variables. In the 62 articles reporting the results of quasi-experiments, the researchers used a research design in which respondents were not randomly assigned to experimental conditions. Of these, 26 studies involved the self-selection of participants into experimental conditions. Another 18 articles reported using single-group pretest-posttest or single-group longitudinal designs with multiple measurements over time. Twelve articles reported group assignment in which some variables were used to “match” groups as a means of achieving group equivalence in the absence of random assignment. Four articles reported assigning subjects to groups based on their score on a specific variable. One article reported on the use of a natural experiment. For two quasi-experimental studies, we were unable to determine how subjects were non-randomly assigned to conditions.
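The difference between the two randomization schemes mentioned above lies in a single step. The minimal Python sketch below illustrates it; the function names, the 20 student IDs, and the stratifying variable (prior entrepreneurial experience) are hypothetical and not taken from any reviewed study. Simple randomization draws each participant's condition independently, so chance imbalances on the stratifying variable are possible in small samples; stratified randomization shuffles participants within each stratum and deals them out across conditions, so each stratum is split as evenly as possible.

```python
import random
from collections import Counter, defaultdict


def simple_randomization(participants, conditions, seed=None):
    """Assign each participant to a condition independently and uniformly at random."""
    rng = random.Random(seed)
    return {p: rng.choice(conditions) for p in participants}


def stratified_randomization(participants, stratum_of, conditions, seed=None):
    """Randomize separately within each stratum so that every stratum is split
    across conditions as evenly as possible (group sizes differ by at most one)."""
    rng = random.Random(seed)
    strata = defaultdict(list)
    for p in participants:
        strata[stratum_of[p]].append(p)
    assignment = {}
    for members in strata.values():
        rng.shuffle(members)   # random order of participants within the stratum
        order = list(conditions)
        rng.shuffle(order)     # random order of conditions, so leftovers are not always the same arm
        for i, p in enumerate(members):
            assignment[p] = order[i % len(order)]
    return assignment


# Hypothetical example: 20 students, stratified on prior entrepreneurial experience.
students = [f"s{i:02d}" for i in range(20)]
experience = {s: ("yes" if i < 6 else "no") for i, s in enumerate(students)}
conditions = ["treatment", "control"]

simple = simple_randomization(students, conditions, seed=1)
stratified = stratified_randomization(students, experience, conditions, seed=1)

for level in ("yes", "no"):
    counts = Counter(stratified[s] for s in students if experience[s] == level)
    print(level, dict(counts))
```

Under simple randomization the six experienced students can, by chance, end up mostly in one condition; the stratified version always splits them 3/3 and the other fourteen 7/7.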

We identified 75 articles that used a control group, allowing the results of the experimental group(s) to be compared with those of a comparable group that did not receive the treatment of interest. Twenty-one articles did not report a control group. These studies either used a one-group pretest-posttest design or compared multiple interventions, one of which was defined as the reference intervention to which all others were compared. Of the 75 articles that included a control group, 54 (72.0%) used a no-treatment concurrent control group, meaning that participants in the control group received no intervention. Nineteen articles used an active-treatment concurrent control, meaning that the control group received an existing treatment to which the new treatment was compared. These existing treatments were often entrepreneurship education programs or non-entrepreneurship curricula, and the research focused on the improvement in performance due to the “new treatment.” One article used a placebo concurrent control, in which the control group received a treatment similar to that of the experimental group(s) but with the element capturing the theoretical construct of interest removed. One article, the natural experiment, used a historical control, meaning that the experimental group was compared with a group for which data had been collected in the past. We found no significant differences in the type of control groups used between experiments and quasi-experiments (χ²(4) = 7.94, p = .09).

We also looked at the use of pretest-posttest designs. Studies with a pretest can report not only whether the experimental group(s) and control group differ, but also how large the effect of the intervention is relative to an initial baseline measure. We found 79 articles that used a pretest-posttest design and 17 that did not use a pretest. We found no significant differences in the use of a pretest-posttest design between experiments and quasi-experiments (χ²(1) = 0.68, p = .41).
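As a sanity check on the two tests reported above, the p-values can be recomputed from the reported statistics alone. The Python sketch below uses the standard closed-form chi-square tail probabilities for 1 and 4 degrees of freedom; it is not the authors' analysis code, and the function names are our own. We do not have the underlying cell counts. Reading the 4 degrees of freedom as a 5 × 2 table (for instance, four control-group types plus "no control group," crossed with experiment vs. quasi-experiment) is our assumption, not something the text states.

```python
import math


def chi2_sf_df1(x: float) -> float:
    """P(X >= x) for X ~ chi-square(1): X is a squared standard normal,
    so the tail equals P(|Z| >= sqrt(x)) = erfc(sqrt(x / 2))."""
    return math.erfc(math.sqrt(x / 2))


def chi2_sf_df4(x: float) -> float:
    """P(X >= x) for X ~ chi-square(4); for even df the tail has the closed form
    exp(-x/2) * sum_{j < df/2} (x/2)**j / j!, which here is exp(-x/2) * (1 + x/2)."""
    return math.exp(-x / 2) * (1 + x / 2)


def df_independence(n_rows: int, n_cols: int) -> int:
    """Degrees of freedom of a chi-square test of independence on an r x c table."""
    return (n_rows - 1) * (n_cols - 1)


# Assumption (ours): control-group type has 5 categories, design type has 2.
print(df_independence(5, 2), round(chi2_sf_df4(7.94), 2))  # control-group test
# Pretest used (yes/no) by design type (experiment/quasi-experiment): a 2 x 2 table.
print(df_independence(2, 2), round(chi2_sf_df1(0.68), 2))  # pretest test
```

Both recomputed values round to the reported p = .09 and p = .41, so the reported statistics, degrees of freedom, and p-values are mutually consistent.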

We also looked at the manipulations (or interventions) used in the studies, which led to two important observations. First, in almost all these studies, the researchers seemed to be interested in the effects of one particular empirical phenomenon, i.e., the course or program being studied. These interventions were pre-existing phenomena and most often seemed not to have been developed with a theoretical research question in mind, but rather to have been fitted to a theory a posteriori. Second, the interventions studied often involved full courses or complete educational programs. Such interventions may activate a plethora of intended and unintended theoretical concepts. Only one study in the dataset included a manipulation check to test whether the theoretically intended “manipulated” variable had been successfully activated by the manipulation.

Analysis of Theories Studied

To better understand which theoretical models have been tested in the context of entrepreneurship education, we inventoried the theoretical models used in the studies included in this review. Although the scholarly emphasis on theory and theoretical contributions has been criticized (Hambrick, 2007), “theory is the currency of our scholarly realm” (Corley & Gioia, 2011, p. 12). We therefore find it surprising that 45 (46.9%) studies reported neither a theory being tested, nor a theory on which the intervention was based, nor a theory that may have guided the researchers in selecting the variables included in their study. That almost half of the studies in our review neither build on the accumulated body of knowledge nor contribute to an improved understanding of entrepreneurship education and pedagogy is problematic. Although this problem is widely known and documented in the field (e.g., Fayolle et al., 2016; Nabi et al., 2017; Neck & Corbett, 2018), it is even more problematic for studies using a research method whose core distinguishing feature is its ability to provide evidence for causal relations between theoretical constructs. The lack of theory and theory development limits the field’s legitimacy and its ability to understand how best to teach entrepreneurship, and it hurts our students, who consequently do not receive the best possible learning experiences.

The theory reported most often was the theory of planned behavior (see Ajzen, 1991). In total, 19 studies were based on this theory: twelve focused on this theory alone, whereas seven used it in combination with other theories. Six studies used human capital theory (see Becker, 1964), and five studies focused on action-regulation theory (see Hacker, 1986).

Only a few studies in our review explicitly based their intervention on theory or extended a theory or theoretical model to the setting of entrepreneurship education. Most studies that mention a theory use it merely as an argument for including one or more specific variables, without including the full theoretical model. For example, some studies that include variables such as “perceived behavioral control” or “entrepreneurial intention” note that these variables are derived from the theory of planned behavior, but do not include the other variables that are part of that theoretical model.

Analysis of Variables Studied

To gain a better understanding of what has been studied and what has not yet been studied using experimental designs, we also categorized the variables studied in our dataset into educational variables, economic variables, and psychological variables. Examples of educational variables include the application of different pedagogies as experimental variables or the use of students’ test scores as dependent variables. Examples of economic variables include offering participants a loan or a grant as the experimental variable and differences in sales as the dependent variable. Psychological variables, such as personality variables, were often included as measured independent variables rather than manipulated, experimental variables. Psychological variables were also used as moderating and mediating variables to test, for example, the theory of planned behavior (Ajzen, 1991), but most often were used as dependent variables. Many entrepreneurship education studies using an experimental or quasi-experimental design test the effects of the intervention on psychological variables such as skills, attitudes, and entrepreneurial intentions.

Analysis by Type of Variables

For 81 studies, the independent variable was an educational variable. Out of these 81 studies, 67 tested the effect of an educational program. Seventeen tested the effect of a pedagogical intervention, and four tested the effect of differing educational content. Two studies tested interventions combining educational content and pedagogical effects, and two other studies tested interventions in educational programs combined with pedagogical effects.

One study reported on the effects of an economic intervention and three studies reported on the effects of a psychological intervention. Three studies reported on the effects of both economic and educational variables, two studies on the effects of educational and economic variables and six on the effects of educational and psychological variables.

Seventeen studies included mediating variables in the experimental design. Fourteen of these studies used psychological variables as mediators, two studies used educational variables as mediators, and one study reported on an economic variable as mediator.

Thirteen studies reported on the effects of moderating variables. Ten studies reported on the effects of psychological moderators. Two studies looked at the moderating effects of educational variables and one study reported on the effects of both economic and psychological moderating variables.

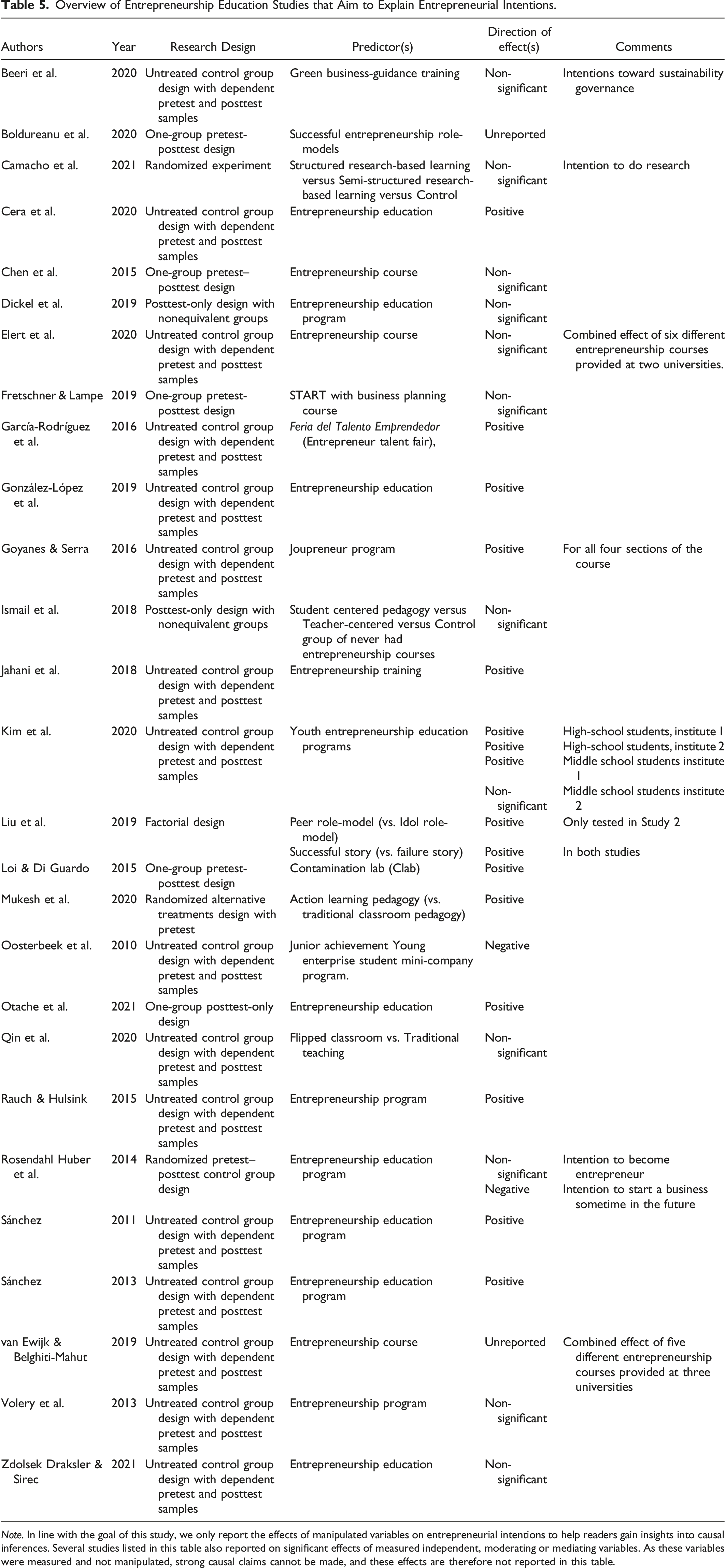

Overview of Entrepreneurship Education Studies that Aim to Explain Entrepreneurial Intentions.

Note. In line with the goal of this study, we only report the effects of manipulated variables on entrepreneurial intentions to help readers gain insights into causal inferences. Several studies listed in this table also reported on significant effects of measured independent, moderating or mediating variables. As these variables were measured and not manipulated, strong causal claims cannot be made, and these effects are therefore not reported in this table.

Analysis by Core Discipline

We found 29 studies that investigated causal effects on economic variables only. These studies looked at causal effects of the intervention on financial variables, such as (differences in) sales, (differences in) profits, and whether the entrepreneur or company had a loan, and if so, the amount of the loan. Alternatively, some studies looked at non-financial, company-level outcomes such as whether a business was created, survival (or failure) of the business and (differences in) number of employees.

Ten studies investigated the effects on educational variables only. These studies investigated the causal effects of the intervention on variables such as knowledge of entrepreneurial concepts, students’ test scores, or the quality of the pitch presentation as a function of training (independent variable).

We also found studies that tested the causal effects of the intervention on a combination of educational, economic, and psychological variables. One study explained both economic and educational variables: that study looked at whether the entrepreneur participated in trainings, attended workshops or seminars, but also at loan applications, sales, and earnings of both business and households. Three studies explained economic and psychological variables. These studies explained both psychological variables such as self-esteem, attitudes, and personal control, but also economic variables such as income and investments. One study explained both educational and psychological variables by testing the effect of attending an entrepreneurship seminar on educational outcomes such as learning skills, and psychological variables such as soft skills and entrepreneurship skills. One study explained educational, economic, and psychological variables: it looked at the causal effects of a youth entrepreneurship education program on psychological measures, such as personality traits, economic measures such as economic advancement and empowerment, and educational measures such as school attendance, college and occupational interest, and knowledge about entrepreneurship.

Discussion

Before we discuss our findings and present our agenda for future research in entrepreneurship education using experimental designs, we would like to emphasize that we do not argue that all future research on entrepreneurship education should use experimental designs. A strength of experimental designs is that they are suited to testing potential causal relationships between variables by “artificially” inducing a treatment (Whitehurst, 2012). However, not all variables of interest can be easily and realistically induced artificially (Schanzenbach, 2012). Moreover, because the independent variable(s) are artificially induced and manipulated, the ecological validity of experiments is often lower than that of other research methods (Aanstoos, 1991; Araújo et al., 2007).

One remarkable finding of this study is that only three of the articles included in our review reported on multiple studies. In many fields, including psychology and economics, there is a trend towards publishing articles that report on multiple experiments. Articles that report on multiple experiments often replicate and extend the initial findings (Morrow, 2018). These replications increase the reliability of the studies’ results, whereas the extensions help to better understand the boundary conditions or help to develop a more fine-grained understanding of the theory being tested. In general, articles reporting on multiple experiments can ask more advanced research questions.

When extending experiments, researchers may include multiple (manipulated) independent variables, mediating variables, and moderating variables. We found few studies that used moderating or mediating variables. Including such variables can help researchers gain a more precise understanding of the relationships under study. The relatively ‘simple’ experiments currently found in the field do move it forward, but more slowly than more fine-grained designs would.

Another remarkable finding is that although many articles in our review did not explicitly state their research question, many were interested in whether a specific educational element (e.g., a lecture, course, or program) had an effect on one or more dependent variables. As entrepreneurial intention was the most often studied dependent variable, we provided an overview of its manipulated predictors (see Table 5). Here we find several studies reporting positive effects, negative effects, and non-significant effects of entrepreneurship education on entrepreneurial intentions. This overall inconclusive evidence is in line with the findings of a meta-analysis of this relationship (Bae et al., 2014). One possible explanation for the lack of consistent evidence is that most experiments in our review used full-fledged programs or courses as the treatment. Such treatments may activate a plethora of intended and unintended variables. In some studies, the effects of different courses were grouped together as one treatment, making it unlikely that all participants received the same treatment. Moreover, the treatments used in these studies were very often not designed to activate a specific theoretical variable of interest, but instead to test whether a specific course or training (as the empirical operationalization of the theoretical construct “entrepreneurship education”) had an effect.

Quasi-experiments have been published about twice as often as randomized experiments. Although several rigorous quasi-experiments have been published in the field (e.g., Costa et al., 2018; González-López et al., 2019; Rauch & Hulsink, 2015), the field would benefit from more randomized experiments to eliminate the possibility that differences between self-selected treatment and control groups could have been caused by unmeasured variables.

We also found that experiments in this field rely heavily on no-treatment concurrent control groups, if they use a control group at all. The strengths of placebo control groups over other forms of control groups are well known in the literature (e.g., Boot et al., 2013), and in the educational sciences, too, calls for placebo control groups have been made (Adair et al., 1990). Where placebo control groups are not feasible, active-treatment control group designs could be used (Looi et al., 2016).

Contributions

Our study provides an overview of what has been studied in entrepreneurship education using experimental or quasi-experimental designs. Previous literature reviews in entrepreneurship education research have reviewed the field as such (e.g., Nabi et al., 2017) or focused on theoretical and/or methodological elements relevant to the field (e.g., Fayolle et al., 2016). Recently, Longva and Foss (2018) provided an excellent overview of the specific use of experimental or quasi-experimental designs in this field. Our study adds to their impressive findings not only by extending the time frame (through 2021 rather than 2017), but also by taking a broader view. Longva and Foss (2018) focused only on “rigorous or strong experimental designs,” which they define as “true experiments or quasi-experiments that make use of a longitudinal design (as opposed to a cross-sectional design) and have control groups for comparison” (Longva & Foss, 2018, p. 359). They operationalize a longitudinal design as one that includes a pretest measure (essentially a pretest-posttest design). This led them to include “11.7% of qualitative impact studies” (p. 365). In our study, we did not apply a methodological-rigor threshold to look only at the “most rigorous” designs; instead, we included all experimental and quasi-experimental studies we could find, to provide a more complete overview of the current use of these designs in entrepreneurship education research. Our study therefore includes more articles and also reports on the occurrence (or absence) of pretests and control groups in the field, which their study, by design, does not. On the other hand, Longva and Foss (2018) examined additional elements that we do not include in our analysis, such as sample characteristics (e.g., primary school students vs. undergraduates) and the timing of posttest measures (e.g., immediately vs. 12 months later).

Limitations

Although our literature review provides new insights on the use of experimental and quasi-experimental designs, it also has limitations. First, we reviewed only literature published from 1991 onward and included only journal articles indexed in Web of Science. Although Web of Science indexes a wide range of journals, we cannot claim to have covered all possible articles. Moreover, experiments published in books, book chapters, or conference proceedings rather than in journals were not included in this review.

Second, this review is based on published articles and, like other literature reviews, may be subject to publication bias (Torgerson, 2006). Experiments that produce statistically significant findings are more easily published than experiments that produce null results (Franco et al., 2014). We cannot assess the extent to which the studies in our review suffer from this bias, a type of “survivor bias” pervasive in reviews of this kind (Ioannidis, 2005). Even allowing for possible publication bias, we are confident that our review gives a solid overview of the current use of experimental and quasi-experimental designs in (published) entrepreneurship education research.

Third, our search terms included “Entrepreneur*” but not “Enterprise.” Some “entrepreneurship education experiments” could have been published as “enterprise education experiments.” For example, Longva and Foss (2018) included “enterprise education” as a search term in their overview. To determine what, if anything, we may have missed, we used Web of Science to search all journals listed in Table 3 with the aforementioned search string, replacing “Entrepreneur*” with “Enterprise” and adding “NOT (Entrepreneur*)” to exclude previously found articles that contained both “Enterprise” and “Entrepreneur*”. This search yielded only one additional relevant article: de Mel et al. (2014). We did not include this article in our analysis because it was not found through our initial systematic literature review, but only through a subsequent search of journals identified as relevant by that review. This search also showed that in the great majority of cases, “Enterprise” appeared together with the term “Entrepreneur*”.

Agenda for Future Research

Based on our analyses, we would like to propose three paths forward for future research on entrepreneurship education using experimental designs. We call for future entrepreneurship education studies using experimental designs to (1) be more theory-driven, (2) answer more ambitious research questions, and (3) have more robust experimental designs.

1. Towards More Theory-Driven Experiments

We observed that many studies currently test the effects of existing educational programs or elements on a dependent variable of interest. When researchers test the effect of existing, “naturally occurring” educational elements, the treatment (e.g., an educational program, a course, or an assignment) is neither chosen nor developed on theoretical grounds (i.e., because it manipulates the theoretical concepts of interest). Instead, researchers take existing educational elements and theorize ex post facto about the theoretical concepts those treatments may have manipulated. Ex post facto theorizing limits the theoretical development of the field. To ensure theoretical progress, the concepts to be studied should be chosen for their scientific relevance, and the treatments should then be developed to fit those concepts, not the other way around. We therefore call for more ex ante theorizing in the design of experimental studies in entrepreneurship education, that is, for experiments that are more theory-driven and less ex post facto (or opportunistic). An example in this regard is the work by Costa et al. (2018), who specifically developed their “Cognitive Entrepreneurial Training in Opportunity Recognition” based on opportunity recognition theory (Baron, 2004; 2006; Baron & Ensley, 2006) and the principles of experiential learning (Corbett, 2007; Kolb, 1984).

A related issue is whether the experimentalist can be confident that the theoretical construct of interest has, in fact, been manipulated. Only one study in our dataset included a manipulation check. All other studies presumed that the theoretical construct of interest had been manipulated and that this successful manipulation caused the observed results. Because most of the studies reviewed here examine the effects of educational variables on behavior relevant to entrepreneurship, it is important to establish, through manipulation checks, that the educational variables have their intended “educational” effects. For example, if the independent variable is expected to increase participants’ knowledge, which in turn is expected to influence their ability to develop a business plan, the researcher should demonstrate that the treatment group has more relevant knowledge than the control group. Only then can the experimentalist conclude that the theoretical construct (knowledge) was the determining factor in the differences observed between the treatment and control groups’ business plans. We therefore call for more experiments in entrepreneurship education research that use manipulation checks to verify that the theoretical constructs of interest have been successfully activated. The only study in our review that used a manipulation check is the work by Bjorvatn et al. (2020). They included a measure of knowledge of the content of the edutainment show to verify that the treated students had actually been more exposed to the show than the students in the control group. In this way, the researchers could show that exposure to the edutainment show differed between groups.
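As a minimal sketch of how a knowledge-based manipulation check like the one described above could be analyzed, the Python snippet below computes Welch’s t statistic for the difference in knowledge scores between a treatment and a control group. The function name `welch_t` and all scores are hypothetical and serve only as an illustration; they are not drawn from any study in our review.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(treated, control):
    """Welch's t statistic for mean(treated) - mean(control).

    Uses sample variances (n - 1 denominator) and does not assume
    equal variances in the two groups.
    """
    se = sqrt(variance(treated) / len(treated) + variance(control) / len(control))
    return (mean(treated) - mean(control)) / se


# Hypothetical knowledge-test scores (0-10) measured after the treatment.
treated_knowledge = [7, 8, 6, 9, 8]
control_knowledge = [5, 6, 4, 6, 5]

t = welch_t(treated_knowledge, control_knowledge)
# A large positive t is consistent with the treatment having raised knowledge.
print(f"Welch t = {t:.2f}")
```

In practice, `scipy.stats.ttest_ind(treated, control, equal_var=False)` returns the same statistic together with a p-value; the point of the check is that the treatment group should score measurably higher on the construct the treatment was meant to change.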

2. Towards More Ambitious Research Questions

Another observation is that articles reporting on experiments in entrepreneurship education usually answer a simple research question. By reporting on a single experiment, which typically involves a single independent variable, these studies test whether an intervention affects a dependent variable compared with a “non-treated” control group. Although such studies can provide important insights, more complex research designs can yield more fine-grained insights and answer more ambitious research questions. More complex designs could include moderators (either measured or manipulated), mediators, multiple independent variables, multiple levels of the independent variables, and multiple dependent variables (cf. Judd et al., 2017). Whereas it is common in fields such as psychology to report multiple experiments in one paper, we found only three articles in our dataset that did so: Bischoff et al. (2020), Elert et al. (2020), and Liu et al. (2019). By designing a series of experiments, researchers can explore more complex relationships among independent variables: they can start with a simpler experiment to test the basic hypothesized relationship and then follow up with more complex designs. By testing the effects of multiple variables, including their mediating (e.g., Roelle et al., 2015) or moderating (e.g., Dinsmore & Alexander, 2015) roles and factorial interaction effects (e.g., Paik & Schraw, 2013), and by reporting multiple studies within an article in which each study builds on the previous one (e.g., Fiorella & Mayer, 2016), authors can make larger theoretical contributions.
This, in turn, could help entrepreneurship education researchers (1) publish their studies in higher-ranked entrepreneurship and (management) education journals that seek studies with larger theoretical contributions, and (2) not only borrow from, but also contribute to, theories in the educational sciences, using entrepreneurship education as the empirical setting.

3. Towards More Robust Experimental Designs

We have observed that many current studies compare the presence of a complex treatment with the absence of any treatment. This approach has two disadvantages, and each suggests a path forward.

First, when complex educational elements (e.g., educational programs) are tested, the treatment does not activate one particular theoretical concept of interest. Instead, a plethora of concepts, both intended and unintended, may be represented in the independent variables (whether manipulated or not). For example, when comparing entrepreneurial intentions between an experimental group that participated in an entrepreneurship course and a control group that did not, the theoretical concept of interest should be made explicit. If a significant difference in entrepreneurial intentions is found between these groups, which theoretical concept caused it? Does the course represent an increase in knowledge of entrepreneurship theories and models? Does it represent an increase in entrepreneurial experience, or perhaps in entrepreneurial self-efficacy? Treatments as complex as educational programs are likely to affect all of these concepts, making it unclear which concept(s) truly caused the difference between the groups on the dependent variable. We therefore call for more experiments on entrepreneurship education that isolate specific variables of theoretical interest and test their effects. An example of such a study is the work by Liu et al. (2019). Instead of testing the effect of an educational program, they used a factorial design to test the effect of an “idol role model” versus a “peer role model” and the effect of a “failure story” versus a “successful story.” Because role models told a story in all conditions, the authors could isolate the effects of idol versus peer role models and of success versus failure stories.
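To illustrate what a 2 × 2 factorial design such as the one described above makes estimable, the sketch below computes the two main effects and the interaction contrast from cell means. The factor labels mirror the conditions in Liu et al. (2019), but the outcome scores are invented for illustration only, and the function name `factorial_effects` is hypothetical.

```python
from statistics import mean

# Hypothetical outcome scores (e.g., entrepreneurial intention, 1-7 scale)
# for each cell of a 2 x 2 design keyed by (role model, story). Illustrative only.
cells = {
    ("idol", "success"): [4, 5, 3],
    ("idol", "failure"): [3, 4, 2],
    ("peer", "success"): [5, 6, 4],
    ("peer", "failure"): [6, 7, 5],
}


def factorial_effects(cells):
    """Return (role-model main effect, story main effect, interaction) from cell means."""
    m = {cell: mean(scores) for cell, scores in cells.items()}
    # Main effect of role model: mean of peer cells minus mean of idol cells.
    role = (m["peer", "success"] + m["peer", "failure"]) / 2 - (
        m["idol", "success"] + m["idol", "failure"]
    ) / 2
    # Main effect of story: mean of success cells minus mean of failure cells.
    story = (m["idol", "success"] + m["peer", "success"]) / 2 - (
        m["idol", "failure"] + m["peer", "failure"]
    ) / 2
    # Interaction: does the success-minus-failure difference depend on role-model type?
    interaction = (m["peer", "success"] - m["peer", "failure"]) - (
        m["idol", "success"] - m["idol", "failure"]
    )
    return role, story, interaction


role, story, interaction = factorial_effects(cells)
print(f"role model: {role:+.2f}, story: {story:+.2f}, interaction: {interaction:+.2f}")
# → role model: +2.00, story: +0.00, interaction: -2.00
```

In these invented numbers the story main effect is zero, yet the interaction is not: a failure story lowers intentions after an idol role model but raises them after a peer role model. A design that varied only one factor would miss this pattern. With real data, inferential tests (e.g., a two-way ANOVA) would be needed before drawing conclusions.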

Second, many experiments used a no-treatment control group, whose respondents did not receive any treatment (e.g., did not participate in a training). In such experiments, the mere fact of attending a training, regardless of what the training was intended to do, may have caused the difference on the dependent variable (Karlsson & Bergmark, 2015; Shadish et al., 2002). To mitigate this problem, researchers should design experiments with active-treatment control groups or placebo control groups (International Conference on Harmonization, 2000). Respondents in active-treatment control groups participate actively in the study but receive either a “treatment” that is unrelated to the research question yet comparable in other respects (e.g., an unrelated training), or the current treatment against which all newly developed treatments are compared (e.g., the existing entrepreneurship education program) (Grégoire et al., 2019; Hsu et al., 2017). An exemplary study in our review is the work by Clingingsmith and Shane (2018), who compared the effects of different types of pitch training with an active-treatment control group that received minimal training about pitching. Respondents in a placebo control group receive a treatment identical to the experimental condition except for one specific element: the variable of interest (Boot et al., 2013). These types of control groups allow researchers to measure the relative effect of receiving a particular treatment compared with another “treatment,” rather than the absolute effect of receiving that treatment compared with no treatment at all.
This is important because it enables researchers to determine whether the effect on the dependent variable is caused by the specific characteristics of this particular treatment (i.e., this particular training) or is (partly) caused by the fact that respondents were treated at all (i.e., the mere fact of being trained) (Karlsson & Bergmark, 2015). A good example of a placebo control group, and the only one in our review, is the work by Lafortune et al. (2018), who studied the impact of role models during a training program for micro-entrepreneurs. By offering all groups of micro-entrepreneurs the same training and having randomly chosen groups receive a visit from a successful alumnus, they were able to isolate the effect of a role-model visit.
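The logic of comparing against a placebo or active-treatment control group can be made concrete with simple arithmetic on group means. In the sketch below, the three-arm setup, the function name `decompose`, and all scores are hypothetical; it only shows how the contrast against a no-treatment group bundles the effect of being treated at all with the effect of the specific element of interest, whereas the contrast against a placebo group isolates the latter.

```python
from statistics import mean

# Hypothetical post-test scores in a three-arm experiment (illustrative values only).
no_treatment = [3, 2, 4]  # received nothing
placebo = [4, 3, 5]       # same training, but without the element of interest
treatment = [6, 5, 7]     # same training, including the element of interest


def decompose(no_treatment, placebo, treatment):
    """Split the treatment vs. no-treatment difference into two contrasts."""
    total = mean(treatment) - mean(no_treatment)  # what a no-treatment design estimates
    generic = mean(placebo) - mean(no_treatment)  # effect of being treated at all
    specific = mean(treatment) - mean(placebo)    # effect of the element of interest
    return total, generic, specific


total, generic, specific = decompose(no_treatment, placebo, treatment)
print(total, generic, specific)  # → 3 1 2
```

By construction, the total difference equals the generic plus the specific contrast; a design with only a no-treatment control observes just the total and cannot tell how much of it stems from the mere fact of being trained.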

Beyond these three main directions in which we think future experimental research in entrepreneurship education should develop, there are many more suggestions to give and best practices to share. We therefore refer researchers who want to become (better) acquainted with designing experiments in entrepreneurship education research to the articles marked with an asterisk in the reference list.

Conclusion

This paper increases our understanding of the prevalence and use of experimental and quasi-experimental designs in entrepreneurship education research. We provided a historical overview of how experimental designs have developed in the social sciences; the methodological improvements made over time formed the basis for our review of the current use of experimental and quasi-experimental designs in entrepreneurship education research. From a theoretical perspective, our study gives the field an overview of which causal relations relevant to entrepreneurship education have thus far been tested using experimental designs. Methodologically, it shows which types of experimental and quasi-experimental designs have been used most often, and which have been applied less frequently.

Our study therefore makes two important contributions. First, we identify the research topics in entrepreneurship education for which some causal empirical evidence has been generated, which helps to identify future research directions focusing on as yet unstudied variables. Second, by showing which experimental designs have and have not been used, we identify possible directions for future research using more rigorous and complex experimental designs. This will help to strengthen the base of empirical evidence to guide entrepreneurship education practices. The results of our literature review make clear that quasi-experimental and true experimental designs remain a minority of the methods used to study the effects of entrepreneurship education methods on entrepreneurial outcomes.

In summary, we hope our study helps move the field forward in the use of experimental designs, which provide an empirical means of making theoretical contributions and of changing entrepreneurship education practices on the basis of identified causal relationships.

Footnotes

Acknowledgments

We thank editor Dr. Tegtmeier and two anonymous reviewers of this journal for their constructive feedback that helped us to substantially improve our manuscript. We thank Elizabeth Chandler, Louis Euverink, Jessica Heiland, Savannah Kelley, and Kayla McCoy for their help with the data collection. An overview of the full dataset is available online. We have no known conflicts of interest to disclose.


Author Contributions

Authors are listed in alphabetical order because they contributed equally to the manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.