Abstract

In this paper, we critique a pervasive discourse about the environmental implications of artificial intelligence as witnessed in news media, public policy analysis and computer science literature. In this discourse, AI is seen through a paradoxical lens: as essential to reducing the damaging effects of the climate crisis and, at the same time, a looming threat to both the climate and broader ecological crises. This seemingly contradictory framing of AI as both ‘remedy’ and ‘poison’ resonates with the concept of pharmakon, a heuristic device used extensively in the philosophy of technology. In this paper we show how the policy discourses of leading actors such as the OECD, Green Software Foundation and Microsoft's data scientists resolve the pharmacological nature of AI's environmental impact by narrowing the scope of its toxic properties and hence the solutions required to enable the technology's continued use and expansion. We argue that these discourses are reducing and oversimplifying the problem at stake to a simple proposition: we need more AI for climate tech applications but less energy thirsty AI. We show how this framing of the problem arose from a particular recent political history of the ‘techlash’, which in turn prompted considerable efforts to quantify AI's carbon footprint. We suggest a different problematisation inspired by science and technology studies scholar Andrew Barry's methodological approach, one that can re-open the problem-space of AI's environmental impact. This approach is sketched through four methodological starting points: unpacking the material entanglements between AI and ecologies; being sensible to geohistory – the specific locally situated nature of data centres and energy grids sustaining AI training, tuning and deployment; envisioning the multiplicity of solutions to the climate crisis (beyond carbon accounting of the AI footprint); and finally, rereading AI (by acknowledging the heterogeneity of actors and interests along AI supply chains).

Keywords

Introduction

“The discussion surrounding Artificial Intelligence (AI) could hardly be more “Artificial intelligence (AI) has […]

This paper critiques this pervasive pharmacological discourse. The framing presented by actors such as OECD or (in a slightly more critical tone by) AlgorithmWatch, that AI has an “impact” or “effect” on the environment that is separate from its creation and from nature, tends to reinforce the rush to quantification. Instead, just like any infrastructure, AI is itself an impact, both physical — it needs materials, computers, chips, data centres, devices — as well as social and relational in producing new expertises and organisational collaborations — between science labs, big tech and start-ups. As infrastructure, AI has particular characteristics. Unlike roads, dams or pipelines, its infrastructural space is highly distributed which makes it challenging to visualise. Moreover it has been obfuscated by various forms of black-boxing and so-called “transparent” design (Kemper, 2022). Despite this, the major response to ameliorating its impact, standardisation, reflects a dominant policy response of rushing to resolve the pharmakon by quantifying harm whilst preserving and facilitating the infrastructure as a positive good. Richer accounts reveal that asymmetries of power shape decisions about what are the main environmental costs, and to whom. These accounts can disrupt the misleading logic of the current dominant framing that leads from calculations of AI's “economic benefits” and calculations of “energy costs” to a conclusion as to what constitutes the optimisation of climate mitigation.

In the first section of the paper, we show how the idea of the pharmakon can illuminate current debates on the ecological impact of AI. We exploit the potential of the pharmakon to analyse environmental policy discourses around AI, rather than developing the theory of the pharmakon in philosophy of technology or media theory. It provides a heuristic device to investigate the effects of contemporary policy discourses around AI's ambivalent relation to ecologies, a relation we describe here as socio-techno-ecologies of life entangled with matter and more-than-humans (Rakova and Dobbe, 2023; Taffel et al., 2022). Our use of the pharmakon allows us to analyse the rise of concern with the ecological impact of AI and the policy solution as a sociological problem (Blumer, 1971), where both problem construction and policy solution require examination. As we will show, the AI as environmental pharmakon is typically resolved in policy discourses by narrowing and reducing the voices and problems to be considered in governing AI development and deployment. This solution legitimates narratives that benefit the dominant tech industry players that already own most AI infrastructure (e.g., Ferrari, 2023). At the same time it blocks the circulation of richer, more plural accounts that could envision more selective uses of AI and ensure its benefits are more widely shared.

The second section shows how the problem and solution of AI as environmental pharmakon emerged within the tech sector itself. This section is based on a non-exhaustive narrative literature review focused on traditional news media, grey literature, scholarly literature databases and online records of tech worker activism up until mid-2024. 1 We show how the environmental damage of AI became problematised as a complex issue within the sector after it was attached to 2019 protests by tech workers and subsequently to social movements that flowed from the “techlash” moment. Partly in response to the techlash movement, practitioner and academic AI developers within the field of computer science became key actors in shaping how to reduce this damage through their efforts to provide methods to quantitatively calculate the carbon cost of machine learning (ML). This ‘solution’ renders AI an homogenous whole, irrespective of its purpose (which may include for example searching for new fossil fuel deposits), or the impact of its infrastructure (including materials extracted and labour used to train data) on ecological wellbeing in different locations (see also Gregg and Strengers, 2024). Critically, it is a solution that does not fundamentally challenge who benefits from contemporary socio-economic arrangements and their attendant inequalities, even though these more fundamental and complex issues had been raised by tech activist campaigns (Kneese, 2023).

In the third section we take the burgeoning interest in calculating the carbon intensity of ML algorithms as a case study and present four alternative methodological starting points for problematising computer science-led resolution of the ecological harms of AI through quantification. These analytical and methodological starting propositions give us a different way to use the heuristic of the pharmakon to generate diverse responses beyond dominant framings. These four starting points are inspired by science and technology studies (STS) scholar Andrew Barry's approach to environmental problems (Barry, 2021) and consist of: unpacking the material entanglements between ML and ecologies (all along the ML supply chain from material extraction to use on device); being sensible to geohistory and the specific locally situated nature of data centres and energy grids sustaining ML training, tuning and deployment; interrogating the lure of quantification in the face of complex entanglements; before turning finally and briefly to rereading AI by acknowledging the heterogeneity of actors and interests along ML supply chains. We conclude that it is necessary to practice the “art of the pharmakon” by acknowledging the ambivalent relationship between AI and ecologies.

AI as an environmental pharmakon: a cure or a poison to climate change?

The pharmakon concept and pharmacological thinking in lay and expert discourse concerning emerging technologies is not new. For centuries now the profound ambivalence of technology, its paradoxical nature in both curing and toxifying, its position as both cause (technology generates problems) and effect (contingent solution, reaction or response to certain kinds of problems), has been noted and questioned (Hughes, 1994: 112; Wyatt, 2008: 174; see also Stiegler, 2013: 2). According to Bernard Stiegler, the French philosopher famous for theorising the topic (2013, 4), people both fear and care for pharmacological technologies. Currently, we humans worry about AI while we suspect that we will not be able to fight climate change without it, since robust climate modelling now requires intensive data tracking, sensors and algorithms (Edwards, 2010; Gabrys, 2016; Lovelock, 2020).

Yet the potentially powerful role of AI in this one important function risks positioning it as necessary to fight the climate crisis. In the process, its promoters conflate technological progress with environmental progress, creating in STS parlance an underpinning ideology of “technological determinism” (Wyatt, 2008, 168). The necessity of developing AI as a technology is legitimated by its specific role in tackling a narrow set of ecological challenges, yet is largely driven by economic imperatives (Wyatt, 2008, 174). In a context where digitisation and computation are defining cultural logics of late capitalism (Franklin, 2015), “Green AI”, geoengineering (Schubert, 2021) and “computationalism” demonstrate ‘belief in the power of computing’ (Golumbia, 2009: 2) to solve the climate crisis.

To make plain the ecological impact of AI requires a close examination of the relationship between the material substance of this technology-as-pharmakon with the political dynamic of both its harmful and therapeutic properties. Our analysis responds to media theorists Scott Wark and Yigit Soncul's timely provocation for an extended focus that captures not only the social impact of contemporary media, but also the distributed, material reality in which it is enrolled (Wark and Soncul, 2021, 11). The materiality of an AI system is found in its distributed nature: from extracted minerals used for hardware chips like GPUs (Graphics Processing Unit, see Rella, 2024), to the diverse stacks of code, platforms, software and end-user devices such as web browsers and smartphones (e.g., Crawford and Joler, 2018; Matzner, 2024). At each of these scales, technologies do things to their surrounding ecologies — water from mines pollutes rivers, software and hardware consume electricity — and engage in pharmacological relations with (non) humans (Turnbull et al., 2023). As one of the authors has argued elsewhere (Cellard & Marquet, 2023), attention to the materialities of the digital is critical. That is, it is urgent to pay attention to the flows of matter (metals, plastics, chemicals, water, fuel, greenhouse gases) and energy (renewable, carbon-based, nuclear) set in motion by digital technologies, to the ways in which these flows are assessed and stabilised, and even how they could overwhelm actors and ecosystems.

This is not to deny that myriad industrial processes have already generated the very real material consequences of the ecological crisis and continue to do so. In the Anthropocene, humans must accept a much greater responsibility for managing ecological systems (Latour, 2017) and the capacity of AI to monitor, communicate and control has taken on particular appeal. Wark and Soncul (2021, 2022) highlight the importance of recognising the positive side of the pharmakon (and by extension AI): the creation of new capacities to sense, think, and act on the environment and the earth (e.g., Gabrys, 2019; Halpern and Mitchell, 2023). Yet, this rhetoric of “smartness”, with its constant attempt to envision how energy and other resources are to be computationally optimised through AI and ML, is a problematic epistemology for governing life and the so called “resilient” planet. AI as an uncontroversial singular figure needs to be troubled (Suchman, 2023) to appreciate all its infrastructural and elemental constituents. As shown by environmental media studies and ethnographies of data centres, there are no cloud systems (hence no AI) without access to electricity (e.g., Ortar et al., 2023) and water (Hogan, 2015; Lehuedé, 2024).

When viewed as pharmakon, AI provides instrumental satisfaction through innovation whilst simultaneously degrading environments. Wark and Soncul explain: “Media's large-scale role as essential infrastructures establishes pharmacological relations between the consumption of new technology [seen as a solution to our desire to augment capacities to act, feel and think], the waste produced by its dynamics of obsolescence, and the sometimes-violent processes of extraction that underlie the production of technology, as we see with minerals like Coltan or Lithium.” (2021)

Political geographer Andrew Barry (2021) provides a methodological approach for the possibilities that accompany this more nuanced approach to using the pharmakon heuristic. Barry's work invites us to suspend our judgment of AI's ecological impact while diversifying the ways AI's ecological implications are currently problematised. Barry's paper “What is an environmental problem?” (2021) provides an approach attuned to the formulation of environmental problems, solutions and the study of their contestations. Barry argues for the adoption of an attitude that is “sceptical of the claims of those who know in advance what the solution to the problem is and of those who know what hidden forces have determined what has happened [to the problem].” (2021, 21). Barry takes a non-normative approach inquiring into where the construction of environmental problems takes place, how they are shaped by various contextual forces, without (too easily) proposing solutions to the problems at stake (see also Bacchi, 2012).

Through Barry's approach rooted in science and technology studies (STS) and political ecology, pharmakon-like narratives can be envisioned not so much as abstract discourses about the dualism of technological development, but as situated, unresolved and contestable constructions of problems and solutions to climate change. These problems and solutions deserve greater empirical attention. Our aim in this paper is to take one modest step along this pathway, to sketch new avenues for problematisation, as we explain further in the third section of the paper. If AI understood as an environmental pharmakon is mainly a discursive construction shaping positions for or against the development of this technology, our call is to get down to the study of precise cases and inquire into the social, technical, political and geographical construction of these positions. To do this, it is important to examine the recent politicisation of the climate impact of AI to trace the pharmacological thinking endemic in the circulation of news media, industry narratives, public policy analysis and computer science literature.

AI as an environmental problem

Problematisation of climate impact of AI by activism within the tech sector

AI was simultaneously proposed as a climate saviour, through initiatives such as Microsoft's AI for Earth (Brain, 2018), at the same time as the ‘techlash’ that first emerged in 2016 positioned digital technology as a social, political and economic problem in the public sphere (Phan et al., 2022, 123). By 2017 to 2018 public animosity towards the large Silicon Valley platform companies and their Chinese equivalents had been fuelled by a series of scandals concerning personal data collection, election interference, discrimination in the treatment of users, abuse of employees and gig worker and misuse of monopoly power, leading conservative financial media such as the Financial Times and The Economist to declare by 2018 that the ‘techlash’ was in full swing (Foroohar, 2018; see also Smith, 2018). The concerns that made up the techlash evolved over time, encountering ecology somewhat later than other political and ethical issues. It was only in 2019 that the ecological impact of digital technology became an issue in public discourse, although there was already a rich history of consideration of the ecological entanglement of digital media and its infrastructures in scholarly work (e.g., Brevini and Murdock, 2017; Cubitt, 2016; Gabrys, 2013, 2016; Kuntsman and Rattle, 2019; Maxwell and Miller, 2012; Mosco, 2017; Parikka et al., 2015).

The public discourse on the climate impact of big tech emerged from the collective action of tech workers and quickly focused on machine learning and AI. Soon after the first election of US President Trump in 2016, tech workers including professional employees such as software engineers, began to collectively organise with radical labour rights groups (such as the Tech Workers Coalition and CoWorker.org) in defiance of their employers’ willingness to provide AI tools to the US federal Government for military applications and securitised immigration control (Tveten, 2019). This became known as the ‘Tech Won’t Build It’ campaign, and involved a spate of petitions, strikes and walk-outs by tech workers (Boag et al., 2022; Tveten, 2019).

By 2019 tech workers applied a similar lens to big tech's complicity in the climate crisis, as global attention was increasingly turning to the urgent need to repair decades of climate inaction in the lead up to the UN Climate Summit in New York on 20 September 2019. In April 2019 nearly 9000 Amazon employees signed an open letter to CEO Jeff Bezos and the Amazon Board of Directors calling for Amazon to take urgent and aggressive action on climate change, and even proposed an (unsuccessful) shareholder resolution at the company's annual general meeting (Amazon Employees for Climate Justice, 2019b; Paul, 2019). 2 By September 2019, the Tech Workers Coalition (TWC) was helping coordinate thousands of tech workers to join the high profile Friday climate strike occurring immediately before the New York climate summit (Baca and Greene, 2019; Tarnoff, 2019). The strikers included nearly 2000 Amazon employees organised by the Amazon Employees for Climate Justice group (Amazon Employees for Climate Justice, 2019a), workers from Google (Google Workers for Action on Climate, 2019), Microsoft (MSWorkers/for.ClimateAction, 2019), Facebook (now Meta) and other tech companies. TWC demanded that their big tech employers make the same kind of climate commitments being increasingly expected of other large corporations, noting that ‘The tech industry cultivates a “green” public image, but it is in fact a major contributor to climate change’ (TWC, 2019).

The tech workers’ demands intertwine social and ecological concern. They evince a broad ranging understanding of big tech companies’ complicity in the climate crisis with a particular focus on machine learning and algorithms, and the intersection of environmental justice with social justice and labour rights (Boag et al., 2022; Kneese, 2023; Tveten, 2019). Figure 1 shows the graphics produced by the TWC to illustrate its four key climate demands: reduction of big tech's carbon footprint (due mainly to the computational infrastructure supporting data storage and machine learning/artificial intelligence) to zero by 2030; cessation of ‘collaboration’ with big oil (provisioning of infrastructure, engineering and AI to aid fossil fuel extraction); termination of the selling of technologies designed to surveill, police and track vulnerable, poor and racialised communities (including climate refugees and others directly impacted by climate crisis); and, fourth, to halt the funding and amplification of climate denialism, through algorithmic recommender systems on major social media platforms (TWC, 2019; see also Donaghy et al., 2020; Tech Won’t Drill It, 2020).

TWC's graphics illustrating its demands in the climate strike. Source: https://techworkerscoalition.org/climate-strike/.

Think tanks and activists concerned with the social impact of AI also raised ecological concerns. In October 2019, a month after the climate strikes, AI Now, a prominent think tank concerned with the social implications of AI, published a think piece, ‘AI and Climate Change: How they’re connected and what we can do about it’, highlighting the tech workers climate strike and its multifaceted demands (Dobbe and Whittaker, 2019). 3 In 2020, Timnit Gebru and Margaret Mitchell were famously fired by Google for co-authoring their ‘Stochastic parrots’ paper raising a series of wide-ranging ethical questions about Google's language processing tools, focused mostly on encoded bias, but also highlighting the energy use of machine learning and asking whether that resource could be more beneficially used elsewhere (Bender et al., 2021; Tiku, 2020). It was this paper and Google's reaction that brought the environmental impact, and specifically the carbon footprint of AI, most firmly to the attention of the broader public discourses about big tech in news media.

By 2020 several academic pieces had been published questioning the ecological impacts of AI specifically (Brevini, 2020, 2021; Dauvergne, 2020; Taffel, 2023). A growing sub field of critical academic and activist works now expound the political ecology of computation and its infrastructure with a particular focus on AI (eg AI Now Institute, 2023b; InfluenceMap, 2021; Jiménez and Oleson, 2022; Nobrega and Varon, 2020; Nost and Colven, 2022; Taffel, 2023; Taffel et al., 2022) and even the investment of big tech's enormous profits in the fossil fuel industry (McKibben, 2022). Another stream is seeking to define and promote ‘sustainable AI’ (Robbins and Van Wynsberghe, 2022), an approach based on conducting lifecycle analyses of AI equipment and software across the whole production, supply and use chain from the extraction of raw materials to produce them, their manufacturing, transport, use and end of life (Ligozat et al., 2022; see also AlgorithmWatch 2022, 2023; Samuel et al., 2022; Van Wynsberghe, 2021).

Yet within the tech industry and computer science literature this contestation around the ecological and specifically climate impact of big tech is interpreted and tamed as both a problem and solution. Their focus is an increasingly widespread project to address the ‘problem’ of red AI, meaning high energy, high emissions machine learning by making AI ‘green’. The “Green AI” project has come to focus on measuring the carbon emissions of machine learning (and other aspects of digital technology more or less close to the practice of AI, eg data storage) and seeking to reduce those emissions. The aim is to slow down the volume of data, number of parameters, the number and energy-hungry cores (parallel computing, CPU and GPU), and running time of a given ML model (e.g., Schwartz et al., 2020). Through the interaction between activists and strategies of industry aimed at preserving their economic and political influence, ecological damage risks being equated with carbon intensity.

Green AI vs Red AI: AI as environmental pharmakon in computer science, industry, news media and policy discourse

Just a few months before the climate strikes, University of Massachusetts academics Strubell et al. (2020) presented a paper at the Association of Computational Linguistics (ACL), a high status conference for both academic and industry researchers in machine learning, for the first time seeking to quantify the financial and carbon cost of running neural network models for natural language processing. They famously calculated that training one model could be responsible for carbon emissions equivalent to the emissions of five fossil fuel guzzling American cars over their entire lifetime including manufacture – an estimation cited by the TWC climate strike call to action (TWC, 2019) and repeated in nearly every discussion of the climate impact of AI/ML over the next few years including the activist works mentioned above (eg Dobbe and Whittaker, 2019). 4

In August 2020, a news feature in the Machine Intelligence imprint of the influential Nature franchise cited the tech workers’ climate strike, growing critical scrutiny by think tanks like AI Now and the pioneering calculations by Strubell et al. (2020) and directed attention to the ‘urgent’ matter of ‘the part that artificial intelligence plays in climate change’ (Dhar, 2020). Noting the imminent arrival of OpenAI's new generative ML models and the worsening climate crisis, the author Payal Dhar crystallised the emerging narrative of the AI as pharmakon-like: “AI seems destined to play a dual role. On the one hand, it can help reduce the effects of the climate crisis, such as in smart grid design, developing low-emission infrastructure, and modelling climate change predictions. On the other hand, AI is itself a significant emitter of carbon.” (Dhar, 2020; citing Vinuesa et al., 2020)

The Nature Machine Intelligence news article presciently marked the genesis and emergence of a field of computer scientists working on the quantification and metrification of the carbon footprint of machine learning (e.g., De Vries, 2023; Kaack et al., 2022; Luccioni and Hernandez-Garcia, 2023; Schwartz et al., 2020). The Green Software Foundation — an industry consortium led by tech companies and world-wide consulting firms — has become the leading industry-based professional group working toward the creation and implementation of a software carbon intensity specification describing how to calculate the carbon intensity of a software application, including AI models (Green Software Foundation, 2023a) and advocating generally for decarbonising software (Green Software Foundation, 2023b). The OECD has convened the OECD Expert Group on Compute and Climate, 6 a multi stakeholder group made up of government and industry representatives, to develop expertise and collaborate with the OECD's multi stakeholder Global Partnership on AI to create a ‘responsible AI strategy for the environment’ (GPAI, 2022). 7 The expert group developed recommendations for measuring the environmental, especially climate (and emerging biodiversity) impact of AI (OECD, 2022), and recommendations for government action (Centre for AI & Climate and Climate Change AI, 2021) predicated on the need to address both sides of the pharmakon, namely to ‘help measure and decrease AI's negative effects while enabling it to accelerate action for the good of the planet’ by establishing measurement standards, expanding data collection and identifying AI-specific impacts. 8

The quest to quantify and make transparent the carbon footprint of AI to tame the pharmakon is now evident in official policy discourse around the European Union Artificial Intelligence Act (EU AI Act), the world's first legislation to comprehensively regulate the development and deployment of AI across industry sectors. The initial draft legislation proposed by the European Commission did not include any requirement to consider the environmental impact of AI (Appelle and Garrett, 2023). However, the 2023 version proposed by the Parliament responded to calls from NGO AlgorithmWatch (AlgorithmWatch, 2023b; Van Wynsberghe, 2021) and Greens members of Parliament (The Greens/EFA in the European Parliament, 2023) 9 by recognising ‘environmental friendliness’ as a fundamental value (European Parliament, 2023: Article 1; Hacker, 2024: 371–4). To operationalise this environmental purpose, the draft proposed that providers of “high risk AI systems” (which include all generative AI and foundation models) should be required to produce environmental (in addition to human rights) risk assessments and make use of relevant technical standards to log energy consumption and to reduce energy use and increase energy efficiency (European Parliament, 2023: Articles 9.2b, 12.2a, 28b.2(d)). This chimes with the developing calls for the creation of a regime of carbon emissions assessment, disclosure and reduction for AI discussed above.

The final version of the EU AI Act has downgraded but retained environmental protection as relevant to the regulation of AI. The primary purpose of the final version of the Act is now expressed as ‘to promote the uptake of human centric and trustworthy artificial intelligence while ensuring a high level of protection of health, safety, [and] fundamental rights’ (European Parliament, 2024, Recital 1).’ This reflects a pharmakon framing in which AI is to be encouraged but its poison remedied. This opening statement of purpose goes on to clarify that ‘democracy, the rule of law and environmental protection’ are incorporated into the consideration of protection for health, safety and fundamental rights.

The final EU AI Act still requires high-risk AI systems to conduct risk assessments and create technical documentation processes to address their impact on health, safety and fundamental rights, which (as indicated above) is defined to include environmental impact (European Parliament 2024, arts 9, 11, Annex IV; see also Hacker, 2024: 371–4). In practice, however, this means the creation of voluntary, technical standards by which providers of AI models can assess and minimise their environmental impact, particularly in regards to energy efficiency. Indeed the European Commission published an official call for the development of a measurement framework for energy efficient and low emission AI soon after the Act was finalised (European Commission, 2024) and other standardisation organisations are also developing similar standards. 10

This focus on transforming how an AI model is made so as to make it less energy hungry renders the question of what an AI system is used for in each particular case, and which actors in the AI system lifecycle are responsible for the impact of how it is used, almost entirely out of scope (cf. Diamantis, 2021). It may overlook, for example, a “Green ML model” used for mapping, targeting or monitoring fossil extraction or gas fracking (see Donaghy et al., 2020). It also fails to question the underlying assumption that more compute allowing more data, larger models and relying on more infrastructure is inherently better (Gregg and Strengers, 2024) in a context where this approach benefits few large tech players who aim at scaling-up their AI models to reach many domains of applications and potentially control the market for AI applications (Ferrari, 2023; Pfotenhauer et al., 2022; Ribes et al., 2019; Whittaker, 2021). Big Tech companies and “Green AI” promoters in and out of these companies do not necessarily have common interests in the outcome of this quantification process of carbon accounting.

Principles for re-opening the problem-space of ML's carbon accounting

Andrew Barry's (2021: 15–17) understanding of environmental problems provides a useful basis to sketch four starting-points for needs to go beyond two dimensional pharmakon thinking about ML's entanglement with ecologies. Barry points out that environmental problems are complex, involving multiple encounters between plural interests and various materialities in specific times and places. It is therefore appropriate to be open to multifarious solutions in different times and places, rather than channelling efforts in one direction.

Barry's principles offer analytical and methodological guidance for complicating the dominant framing concerning AI's relations with the climate crisis. Applied to AI, they involve unfolding the material entanglements between ML and ecologies at every point along the ML supply chain; being sensible to geohistory – the specific locally situated nature of data centres and energy grids sustaining ML training, tuning and deployment; envisioning the multiplicity of solutions to the climate crisis (beyond carbon accounting of the ML footprint); and finally, de-centering AI by acknowledging the heterogeneity of actors and interests along ML supply chains. We discuss each of these below, drawing on the computer science literature on the carbon accounting of ML (e.g., De Vries, 2023; Dodge et al., 2022; Kaack et al., 2022; Luccioni and Hernandez-Garcia, 2023; Schwartz et al., 2020; Strubell et al., 2020).

Unpacking the material encounters along the ML supply chain

For Barry, the difficulty of mapping the conflicting interests of those wrestling with environmental problems is compounded by multiple heterogeneous and incommensurable processes (Barry, 2021: 16). As we have noted in Part 2, there is a long history of scholarly engagement in the complexity of material entanglement between digital media and ecologies (see also, Ensmenger, 2018; Pellow and Park, 2002). The carbon intensity of ML algorithms highlights this challenge. In their attempts to calculate the carbon footprint of ML, communities of computer scientists encounter a diverse set of materialities. These include: a mix of programming code, hardware (GPUs, servers, supercomputers) and software (cloud applications, end-user devices); the electricity grid necessary to make these codes, hardware and software used; water required to cool down servers; new types of logging and reporting interfaces that must be built in order to track emissions, and so on.

Barry reminds us that environmental problems are always encounters between different forms of materiality and disparate systems (Barry, 2021: 16 drawing on Savransky, 2016). Indeed, an AI system can have many different materialities — data sets, code, hardware, software, interfaces (Matzner, 2024) — and its stabilisation is subjected to multiple forms of contestation since actors do not always agree on what is the core of an AI system and where its boundary lies. The OECD noted that the vague boundaries of AI computation and the difficulty of delineating it from other computing elements makes carbon accounting in the context of AI more complex: “It is challenging to distinguish compute used for AI from that for other scientific, mathematical and general-purpose ICT needs. Further efforts should be made by governments, national statistical offices, intergovernmental organisations, the private sector, academia and others to disaggregate ICT infrastructure datasets, estimate the share used by AI and explore relevant proxy measures.” (OECD, 2022: 6)

When ML developers sketch a set of best practices to reduce the direct and material impacts of their activity (Luccioni et al., 2020), frictions and productive encounters occur at every stage of the ML material chain,

11

bedevilling the possibility of creating a robust generalisable method of calculating carbon intensity.

12

For example:

choosing a sustainable cloud provider generates friction between two layers that comprise the necessary software: the ML model as code and its application within a specific cloud infrastructure such as Microsoft Azure (Dodge et al., 2022). Any particular infrastructural configuration will determine where the relevant boundaries lie and hence what is and is not accounted for. Differences between the determination of these boundaries makes difficult to establish a reporting method for all situations and create a considerable standardisation challenge; choosing the most efficient computing hardware so that the graphical processing unit (GPU) architecture is aligned with what the AI model requires. This entails adjustments and negotiation between code specificities, software in the “cloud”, hardware systems such as GPUs and the climate commitments of tech industries;

13

selecting a data centre location with the greenest energy grid is a material encounter between the energy required to run GPUs situated in a specific data centre, located in a particular geographical location and negotiations that are necessary with local-national electricity and water markets. Moreover, rising demand for AI requires updating the copper wiring of massive data centres, which is also energy intensive. While it is possible ML developers could benefit from open source development, reusing pretrained models and other available resources to reduce the computing costs training (eg Chowdery et al., 2022), this too will generate friction in calculations based on template-like ML libraries or packages and the energy needs of a new system that is built with pre trained ML models.

How AI is defined will affect how its material impacts are understood, and which are considered relevant. Regulators, users and practitioners are all striving to capture more helpful discursive ways to represent algorithms (Cellard, 2022). Different understandings of AI lead to different ways to control and regulate it (Benbouzid et al., 2022). If AI is reduced to a set of code (which as the above shows is complex in and of itself), then quantification of the ML model, challenging as it is, is likely to seem enough. But, as argued above AI's ontology is not so neatly captured. A more expansive definition whose ecological impact is tamed through quantification requires conceptualising and creating appropriate data to capture AI as a flow of materials (e.g., minerals and metals used to create GPUs), energy (e.g., water and electricity feeding data centres) and a set of encounters between layers of a large data infrastructure. This creates significant barriers to an holistic ecological regulation of these systems. Yet, this challenge should not mean that AI's ontology should be so tightly defined that the solutions to its ecological impact appear obvious.

This examination of the material in the case of AI, then, has several implications. Firstly, that choices are being made about how to include AI within the broader category of computing (whilst taking account of the increased demands it makes on those technologies) as a lifecycle analysis would suggest. Secondly, whether mapping the material challenges in understanding the impact of AI should be directed at identifying commensurable metrics. Finally, whether the focus should shift to a narrower definition of AI (carbon intensity of codes) to calculate impact. As we noted above, these choices are material, and involve actors engaged in exerting (or expanding) their influence over the direction of AI. This requires an examination of the geography and history of actors and their analysis of AI's computing needs.

Be sensible to the geohistory of AI computing needs

The current pharmakon narrative regarding the environmental impact of AI mainly emerged from the Global North. But the environmental focus included a concern with competitive barriers to access in the Global South. The paper which coined the term “Green AI” (Schwartz et al., 2020) saw the pressure to make ML more efficient and less energy demanding as needed to reduce the costs of training AI models so that AI could be adopted more easily in the Global South (as well as by small businesses). Less expensive AI would facilitate its geographical spread. Hugging Face Climate Lead Sasha Luccioni presented the greening of AI through the reuse of trained models as a way to ‘democratize’ ML development whilst simultaneously promoting its use. When asked by AlgorithmWatch if she would see any “social or economic advantages” in Hugging Face's strategy of offering the option to reuse already trained models, she responded: “With the size of transformer and AI models growing bigger and bigger, the entry barrier for joining the AI community is becoming correspondingly high, especially for countries that don’t have access to the extremely powerful computers being used to create these models. Hugging Face has several offers available for such cases – for example, the ability to query a large language model using an API, so you don’t need to run it on your own computer. This makes such models more accessible.” (AlgorithmWatch, 2023a: 16)

Data centres are an instructive focal point. The material geographies of cloud computing are entangled with local ecologies such as rivers used for hydropower (Dryer, 2023; Lally et al., 2022; Levanda and Mahmoudi, 2019), or low air temperature used to cool computer rooms (e.g., Velkova, 2021). Data centres’ sources of energy have for example been contested by feminist Parisian activists in the case of fuel (Marquet, 2018) or by Canadian scholars in the case of renewable use in the Pacific Northwest (Pasek, 2019). More broadly, the field of environmental media studies has shown that digital technologies have always been “finite media” (Cubitt, 2016) facing planetary limits (Nardi et al., 2018) and causing ecological damages — a striking example is the deforestation caused in Southeast Asia by the massive use of gutta percha sourced from local timber by the telegraph and submarine cable industry in the nineteenth century (Tully, 2009).

Carbon intensity across countries varies but is also generally high. In their attempt to map all scales of the carbon intensity of ML, Dodge et al. (2022) acknowledged that the type of data centre (classical cloud storage vs. supercomputers) and each one's geography would play a crucial role in AI's carbon footprint because of their dependencies on local energy mix, national policies for access to electricity markets and water availability. Furthermore, in a survey of the carbon emissions of 95 ML models across time and performing different tasks in natural language processing and computer vision, Luccioni and Hernandez-Garcia (2023: 5) found that the majority of these models (61/95) used high-carbon energy sources, namely coal and natural gas as their primary energy source. Less than a quarter (34/95) used low-carbon energy sources like hydroelectricity and nuclear energy. They also found that those countries where model training predominated (e.g., the US and China), are on the high end of the carbon spectrum. Countries with the lowest carbon intensity in their sample, such as Canada and Spain, only represent a total of 7/95 models from their sample data.

A focus on data centres highlights how AI's environmental impacts are intertwined with issues of land, real estate and energy infrastructure. Where data centres are located and when they operate influences the impact of the growing energy needs of AI. This means not merely a generalised carbon footprint calculus. Moreover, the timing and scheduling of AI computing tasks can heavily impact computing tasks necessary for other industries in need of computing power within the same data centre and at the same moment, and the electricity supply available to the broader local population (Dodge et al., 2022). This requires more empirical studies about how the geohistory of AI energy needs encounters the temporality of data infrastructures. Adopting a place-based accounting of AI ecological impacts will help to align local energy demands with long-term time horizons and could enhance the capacity building of local communities of practice and create a bridge between local and global sustainability initiatives (Rakova and Dobbe, 2023: 12–13).

Interrogating the lure of quantification in the face of material entanglements

Environmental problems are diffuse, and even when they seem at first to be circumscribed, they do not lead to singular solutions. Further those solutions may themselves cause problems or hide alternative ways forward. Pharmacological relations — the interplay of empowering and damaging effects enacted by AI — are composed and balanced between the curing need of AI, and necessary distance from it. Stiegler warns against fixating on the power of emergent technology such as AI (Stiegler, 2016), because this leads to a frame of reference where AI is seen as essential rather than one that enables intervention to restrict that growth.

To date, though, the narrowing of the frame of reference retains its allure. This allure is evident in the specific genre of computer science texts that are produced to calculate carbon-accounting of ML. They are experimental and aimed at defining appropriate metrics and methods of accounting. Within this field, ethical and political analysis of AI is limited, particularly since the work is devoted to providing more engineering interventions. It is almost as if for AI/ML developers, the climate crisis was simply a crisis of “inefficiency” in how to allocate and optimise resources (Dryer, 2023; Halpern, 2021). Because of this belief in never-ending optimisation, developers might consider their interventions as commitments to an ethical stance towards a more sustainable practice but without reflecting on broader dynamics of their field (cf AI Now Institute, 2023a; Lean ICT Working Group, 2019). To illustrate this, it is instructive to hear again Sasha Luccioni, Climate Lead at Hugging Face responding to questions from Algorithm Watch criticising the race for gigantic models and the risk it creates to ruin all efforts towards computing efficiency: “If we kept the size of our models and the amount of computation needed at a constant level, we would definitely be going in the right direction [to reduce the carbon emissions of ML]. But since both are growing so fast, it's hard to say where we might end up. […] On the other hand, though, the concept of “the bigger the better” in AI modeling is getting out of hand.” (AlgorithmWatch, 2023a: 18) “[when developing their PaLM model

14

] the Google research team claims to have made a breakthrough in terms of training efficiency

15

. This advance has been achieved […] by way of newly developed hardware called Tensor Processing Units (TPUs)

16

, which enable accelerated computation, and through new strategies in parallel computing. Google says it was able to significantly reduce the amount of time it took to train the vast model, thus saving energy. […] But the question remains as to why a far more efficient hardware innovation [TPUs] and new training methods were only deployed to make models even larger, rather than to improve the energy efficiency of smaller, yet still quite substantial models. That isn’t just irresponsible from the perspective of resource conservation. Such vast models also make it more difficult to detect and remove discriminatory, misogynistic and racist content from the data used in training.” (AlgorithmWatch, 2023a: 13)

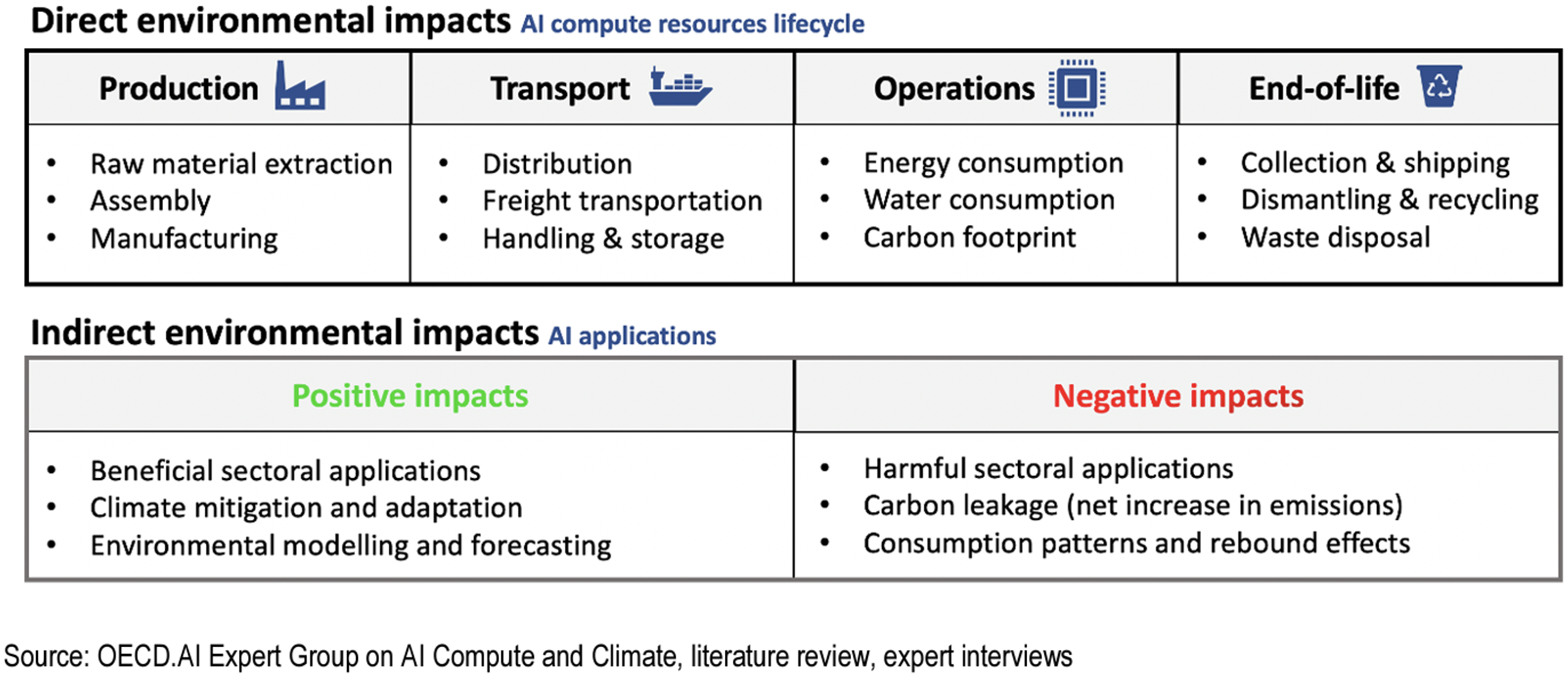

This focus on carbon intensity appears as the ‘best’ solution to reducing environmental impact – one that resolves the dilemma of ensuring regulation does not inhibit the growth of AI together with its potential benefits. Environmental problems with AI became predominantly an energy problem, further an energy problem defined by what could be accounted for, namely what is labelled as the “direct” impacts of energy overconsumption — and more predominantly electricity consumption. This focus on energy and direct impact is not only strongly presented in the computer science literature about the topic but also reflected in the OECD AI policy group of experts’ leading policy report which compiles expert evidence (mainly from computer science and big tech corporations) on the issue (OECD, 2022) (see Figure 2 which summarises the expert group's summary of the direct and indirect positive and negative environmental impacts of AI.

Figure from OECD Report (2022).

Figure 2 highlights the policy focus on direct environmental impacts of AI as those that are ecologically damaging but also those that can (in principle) be measured and hence managed or reduced: extractivist activities, energy consumption, the logistics of materials and different forms of waste. What can potentially be quantified become the elements most worthy of attention in part because they appear amenable to regulation. They are then presented as what one must deal with to solve the problem of AI environmental costs. The restriction of scope directs attention to those areas that can theoretically be measured. Despite this apparent simplicity, however, the material reality of what is proposed remains challenging. It is still very difficult to construct predictive models mapping the trade-offs between accurate-performant AI models and efficient-sustainable ones (Kaddour et al., 2023; Luccioni and Hernandez-Garcia, 2023; Schwartz et al., 2020).

Further, the ‘indirect’ impacts of AI are those that may well have the greater negative impact – such as facilitating fossil fuel extraction and encouraging overconsumption. These “indirect” impacts are more heterogeneous since they either concern the aims of the AI system (beneficial or harmful sectoral applications such as climate monitoring or carbon leakage) or phenomenon difficult to anticipate (rebound effects understood as the increased consumption that results from activities that increase AI efficiency and reduce manufacturing or consumer costs) (see also Brevini, 2021). Yet, robust methods to measure and quantify these impacts do not, and may never, exist. Empirical studies are lacking, for example about the relative proportion of the use of AI in domains such as climate mitigation when compared with its use in areas that damage ecological wellbeing.

Exploring AI as environmental pharmakon presents us with a bundle of dimensions — the global political economy of AI, singular AI applications in specific data infrastructures from data centres to user interfaces as well as local energy markets and regulations — calling for a diverse range of solutions. Despite its lure, it reveals the quantification of carbon intensity as a weak problematisation of the issues at stake and more generally the limits to standardisation, certification and labelling. Together, these constitute a classical engineering response prone to reductionism (what is left out of the metrics?) and the foreclosing of democratic participation in infrastructural development (what experts decide what metrics matters and how to standardise them?). In contrast to democratic participation is the dominance (arguably the “corporate capture”) of what an ethical response to the ecological impact of AI is. “Green AI” is, at this stage, mainly a self-regulatory response to the techlash pressure. Even with the environmental impact of AI entering the jurisdiction of legal and policy instruments such as the EU AI Act, the emphasis is on cost-benefit assessment in risk assessment and management, the reliance on industry standards to determine how to quantify risk assessment and on internal risk management systems means that policy action entrenches self-regulatory responses that may be resistant to outside influence.

Harnessing the potential of “Green AI” then appears as a new seductive rhetoric inside the broader economy of AI ethical virtues (Phan et al., 2022). It requires close scrutiny since ‘green AI’ and the quantification of carbon intensity of machine learning is led by or affiliated with Western energy-thirsty companies such as Google (Patterson et al., 2021, 2022), Microsoft (Dodge et al., 2022), Facebook (Henderson et al., 2022) either alone or in concert with consultancy firms like BCG or Accenture and industry consortiums like the Green Software Foundation. All are prone to regulatory capture.

Despite this lure, a robust calculus of the carbon footprint of AI is likely to remain contested, technically, socially and politically. In a study about the carbon footprint of the Internet, Pasek et al. (2023) proposed six methodological factors that drive this type of impasse: fraught access to industry data; the tradeoffs between using “bottom-up” data measurements vs. “top-down” mathematical modelling; lack of system boundaries (where does an AI start and end?); geographic averaging (inappropriate extension of regional data to represent all parts of a global model); lack of metrics standardisation in ML evaluation; and the unresolved question of the future rate of energy efficiency in global networks. Similarly, existing models for calculating the footprint of video streaming cannot capture its full extent and researchers in this area are advocating for an interdisciplinary approach to study the entanglement of data, computation, and infrastructure (Jancovic and Keilbach, 2023). The difficulties are compounded when environmental impacts beyond greenhouse gas emissions are considered, including the interaction and tensions between them (e.g., tensions between demand for critical minerals for renewable energy and biodiversity impacts of mining and renewable energy infrastructure).

Re-Reading AI with heterogeneous actors’ interests

According to Barry, environmental problems — including those involving AI technologies — are often difficult to formulate and stabilise partly because there is a lack of consensus between actors (e.g., corporations creating AI products, regulators, other government agencies, environmentalists, affected populations) about what the problem is. Without communication and shared understanding, different environmental experts in and out of the AI field may lack a common interest in reducing AI thirst for energy. This ambiguity over problem definition provides the impetus behind the creation of a simplistic polarity between advocates and critics of the use of AI for the mitigation of climate change. At the same time, AI itself can be seen as a distraction from more fundamental questions of necessary priorities in a time of ecological crisis. In this light, the pharmakon takes not the shape of a cure or poison but one of a scapegoat (pharmakos) acting as a lure whilst taking all the blame while hiding the underlying ecosystemic realities. By being too focused on the fact that AI (often understood as a new, context-less and value-less technology) is creating significant environmental problems, the dominant framing of the problem leaves much of corporate actors’ interests unquestioned.

Rereading “AI” means seeing it less as an abstract category to spend more time identifying actors investing time, money and effort to build sustainable AI. To do so suggests there is always an interest (symbolic and pecuniary) in what is presented as “ethical” disinterestedness (a decentring of the self and its environment expressed here by concern for the environment). The construction of environmental AI problems is not solely generated by technologies or technologists but shaped by a rich bundle of actors, causes and misaligned agendas. Instead of making AI the core object of attention, observers can on the contrary follow the traces of the unsaid, the undiscussed, absences, invisible entities or problems, as well as the dimensions that are difficult to articulate, know and share (Bacchi, 2012; Lee, 2022).

A question remains missing from computer science, industry or public policy debates: If AI does not simply generate impacts but is an impact, how is it then producing specific spaces, altering lands, transforming ecologies? What stories can best describe the production of these spaces? What is being obfuscated by the flood of carbon-accounting metrics? Or as policy analyst Theodora Dryer (2024) asks: “Which stories have you unearthed and which stories can you imagine? The world-making difference is between chasing after deadly ambitions of artificial futures and grounding ourselves in a historically informed reality – a reality in which collective healing, repair, and justice are possible.”

Conclusion

This paper questions the way pharmakon-like discourses are used to manage, quantify and narrow down solutions to tame AI's environmental impact problem. The paper has situated carbon accounting of ML as emerging from the attempt to transform a political situation — generated by the techlash — and the representation of an ecological problem (particularly the climate crisis) prone to quantification. Carbon accounting metrics and methods arise as a solution to AI's environmental impact in part because computer scientists, engineers and other types of workers selected one aspect of AI's ecological impacts to which to respond. This quantification endeavour is being further developed through the standardisation of carbon intensity metrics (visible in the work of the Green Software Foundation) and policy advocacy efforts to implement accounting methodologies in regulation (seen in the European Parliament's draft of the EU AI Act and policy solutions being promoted by the OECD). Our analysis suggests that this iteration of the politics of standardisation needs further examination through critique of: the search for a consensus and community-building around key metrics; the lifespan and robustness of a given standard; and the broader negotiation within the standardisation process of different orders of worth (Thevenot, 2022). The latter includes the industrial imperatives of building performant-accurate ML models with billions of parameters, market-oriented agendas, the reputational metrics of sustainable AI and corporate narratives of green capitalism.

Rather than directly intervening in proposing solutions to the carbon-accounting problem of AI, the four methodological principles we sketched in the third section of the paper were positioned as navigational aids for re-problematising the issues at stake. Teasing apart the material encounters between ML-as-code, GPUs, data centres computation capabilities and energy mix allows us to begin to “uncover relations and entanglements” (Wark and Soncul, 2022: 3) that are highly obscured by the AI hype as the saviour or final death knell of environmental damage. Following Wark and Soncul (2022), our alternative problematisation of the pharmacologic starts by questioning technologies through their “substances”. Indeed, when ML material chains are described as made of flows of materials, energies and various layers of a data infrastructure, many promising lines of inquiry emerge from: the problematic computing trends pushing for the scaling of AI (Pfotenhauer et al., 2022); the hegemony of computer science in framing and finding solutions to AI related problems; and the water and electricity availability to local populations (Dryer, 2023; Lehuede, 2024).

By questioning the assumptions and methods of this quantification enterprise a more rich and diverse set of pathways for ecological understanding and for academic, industry and policy interventions can come into view. These pathways raise critical questions about where AI should be used, by whom and for what purpose. In sum, what is needed is greater sensitivity to the choices being made and implications for power and voice. This should guide our thinking about what to do with AI and its ecological impact rather than proposing that one generic solution — whether in the form of industry commitments or government regulation — is unquestionably better than another (Bacchi, 2012: 5).

To navigate the ambivalences and paradoxical effects of AI on ecologies and as a response to the assurance of AI promoters, it helps to master the “art of the pharmakon” (Stengers, 2015: 128). This means crafting analytical and methodological resources that prepare observers “to hesitate, learn doses and preparations, and experiment with practices that can be at once cure or poison and whose effects can’t always be known in advance.” (Duclos and Criado, 2020: 162). A commitment to practice the “art of the pharmakon” is a way of suspending judgmental appreciation in order to: learn what would be the “right dose” of AI; train attention and expertise to — depending on the context — both accept or manage to get out of moments of undecidability regarding when using AI; to formulate how to alternatively care for as well as abolish it; as well as envision how to repair or undo problematic effects of AI on ecologies of living. Analysing complex, materially heterogeneous and located pharmacological relations of AI entanglement with ecologies of living is something to nurture with vigilance and dexterity beyond the framing of AI as poison and remedy.

Following Stiegler, we acknowledge the ambivalent effects of AI — its pharmacological potential — since as many we are unsure how to engage with it. But contrary to the dominant framing, we are not attempting to resolve this paradox through new metrics and quantification methods creating an easy balance between costs and benefits. Instead, we want to be clear eyed about the need to prioritise ecological and social impacts of AI. Moreover, practising the “art of the pharmakon” requires new knowledge to decide when to use AI or not — a point absent from dominant framings —while staying skeptical of the infinite optimisation of our energy grids. The standardisation of accounting metrics can play a role in learning this art and limiting how much damage can be done but, it is always flawed, limited in scope, sometimes given too much credence and takes a life of its own that forecloses other possible ways forward (starting by not using AI in the first place). Because it can be corrupted, quantification and standardisation should only be seen alongside other potential ecological controls of AI – as part of a regulatory web to be designed.

Highlights

The article provides:

A conceptual introduction to the idea of the “pharmakon” as a heuristic device to illuminate current debates on the impact of AI on ecology. Recent historical context to the way AI's damaging effects on the environment emerged as a problem in public policy discourses. Four alternative methodological starting points for problematising computer science-led resolution of the ecological harms of AI through quantification.

Footnotes

Acknowledgements

The authors wish to thank Jake Goldenfein, James Parker and Michael Richardson for comments on an earlier version of this paper.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Australian Research Council, (CE200100005 ARC Centre of Excellence for Automated Decision-Making and Society).