Abstract

In April 2024, over 20 scientists, clinicians, and experts in health equity convened in Denver, Colorado, for an interdisciplinary Summit on Advancing Precision in Health Assessment. The Summit was hosted by the National Academy of Neuropsychology (NAN) with an aim to develop a pathway to identify, assess, and implement alternatives to broad proxies, such as race and sex/gender, in health assessments. Presentations and breakout group discussions focused on re-examining existing clinical algorithms for bias; how big data, artificial intelligence, and machine learning will lead to new algorithms and how to design and use these in ethical ways; and how to assess and successfully implement changes in health care assessment aimed at achieving equity. Race received the most discussion. Participants supported calls to replace race-based decision-making tools that erroneously consider race as a biological variable. When possible, underlying social determinants of health should be included in clinical algorithms. Moving forward, research should include clear definitions and justification for all variables used. Future research paths should assess the utility and fairness of algorithms across groups before and after implementation. Changing the narrative to focus on better health for all people, and on achieving health equity, will require collaboration, partnerships, and openness to innovation.

Introduction

In 2020, the American Medical Association (AMA) and the American College of Physicians (ACP) issued statements acknowledging the harms caused by racism and committing to work to combat racism and bias within health care, medical research, and public health.1,2 The Centers for Disease Control and Prevention (CDC) declared racism a public health threat in 2021 and announced an agency-wide commitment to

Clinical algorithms, screening tools, cognitive assessments, and other instruments are important aids in health care decision-making. Ensuring that such tools work effectively and equitably is a challenge for creators of new tools, and is an aspect of existing tools that has come under increased scrutiny. The availability of ever-growing amounts of data about human variation arising from genomic and other “omics” studies has raised the potential to achieve greater precision in health assessment, potentially enabling personalized, as opposed to population-based, medicine. In particular, the inclusion of race as a factor in a variety of clinical algorithms has inspired re-examination of whether that factor is serving a purpose and whether its inclusion is making those algorithms more or less accurate, and resultant medical outcomes more or less fair.10

Similarly, the use of factors such as sex assigned at birth

The question of the inclusion of race in clinical algorithms was addressed in a meeting in June 2023 sponsored by the Doris Duke Foundation, in partnership with the Gordon and Betty Moore Foundation, the Council of Medical Specialty Societies, and the National Academy of Medicine. They produced a report entitled “Reconsidering Race in Clinical Algorithms: Driving Equity through New Models in Research and Implementation.” Their analysis identified ways in which race in algorithms can be harmful, neutral, or beneficial depending on the algorithm and how race is partitioned.11

For example, one study found that removing race and ethnicity from a cancer recurrence risk model worsened algorithmic fairness in non-Hispanic White groups.12

Also, if race serves as a proxy for

It is within this context that the Advancing Precision in Health Assessment Summit was convened, April 18–19, 2024, in Denver, Colorado. Over 20 participants representing foundations, professional clinical societies, psychological test development companies, and academia came together for an interdisciplinary summit to discuss the inclusion of race, sex/gender, and other proxies in clinical algorithms and health assessments.b

The Summit: Summary of Topics Addressed

Setting the stage

Beth Arredondo, Past-President of NAN, opened the Summit by providing an overview of the Summit structure. The Summit consisted of 45-minute lectures covering the following topics: the history and effects of including race in clinical algorithms; the complexities of using proxies in health care; the promises and challenges of new artificial intelligence (AI) and machine learning (ML)-based health care tools and algorithms; how “big data” and large-scale collaborative projects are working to collect the information needed to achieve personalized, precision medicine for all individuals; and frameworks developed to implement and assess programs to achieve

Open discussion involving all available summit participants followed each presentation. Summit participants then separated into assigned breakout groups to address three challenges: identifying promising alternatives to proxies such as race and sex/gender that could be used for greater precision in health assessment; identifying a research pathway to evaluate promising alternatives; and identifying an implementation pathway to incorporate these alternatives into clinical practice. Each group discussed the same questions and took notes on their recommendations. Two members of the organizing committee collated the group notes and summarized the main themes with assistance from the first author. On the second day of the summit, all available summit participants reviewed the summarized notes and preliminary recommendations. A science writer drafted an initial proceedings paper, and the writing team finalized the draft. All participants and organizing committee members received a copy of the almost-final draft for input and to confirm their participation in the final product. One summit participant who attended as an employee of a federal agency opted to remove their identifying information from the final paper. The results of these presentations and discussions are summarized within this document, which it is hoped will serve as a resource when implementing steps to generate greater precision in health care assessments.

What breathing can teach us about bias

Aaron Baugh, Assistant Professor of Medicine at the University of California, San Francisco School of Medicine, presented a history of how race has long been included as a factor in lung function algorithms, even though this variable does not contribute to algorithm accuracy. In pulmonology, for a long time, results have been analyzed using an algorithm that includes an adjustment factor based on race. 7 However, the algorithm does not consider biracial or multiracial individuals. To exemplify the problem, he started with the case of a patient who presents with the need to assess how much lung dysfunction contributes to their poor quality of life. If the patient has one Black and one White parent, which algorithm should be used to evaluate their lung function? Analyzing such a patient’s test results can result in very different outputs depending on whether the patient is considered Black or White. This patient could very well be found to have normal lung function using Black in the algorithm but be diagnosed with limited function using White in the algorithm. The patient could be rejected or accepted for disability based entirely on race.
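The arithmetic behind this scenario can be sketched in a few lines. The following is a minimal illustration only, not the reference equations used in practice: the measured value, the predicted value, the 0.85 adjustment factor, and the 80% cutoff are all hypothetical numbers chosen to show how a single race-based multiplier can flip the interpretation of the same test result.

```python
# Illustrative sketch only. All numbers below are hypothetical and are NOT
# taken from any published reference equation or from the Summit materials.

def percent_predicted(measured_l: float, predicted_l: float) -> float:
    """Return the measured value as a percentage of the predicted value."""
    if predicted_l <= 0:
        raise ValueError("predicted value must be positive")
    return 100.0 * measured_l / predicted_l


def interpret(measured_l: float, reference_predicted_l: float,
              adjustment: float = 1.0, cutoff_pct: float = 80.0):
    """Classify a result after scaling the predicted value by `adjustment`.

    `adjustment` stands in for a race-based correction factor;
    `cutoff_pct` is an assumed fixed threshold for "normal".
    """
    predicted = reference_predicted_l * adjustment
    pct = percent_predicted(measured_l, predicted)
    label = "normal" if pct >= cutoff_pct else "reduced"
    return pct, label


MEASURED_FVC_L = 3.4             # hypothetical measured vital capacity (liters)
REFERENCE_PREDICTED_L = 4.5      # hypothetical predicted value, no adjustment
HYPOTHETICAL_RACE_FACTOR = 0.85  # hypothetical race-based multiplier

unadjusted = interpret(MEASURED_FVC_L, REFERENCE_PREDICTED_L)
adjusted = interpret(MEASURED_FVC_L, REFERENCE_PREDICTED_L,
                     adjustment=HYPOTHETICAL_RACE_FACTOR)

print(f"without adjustment: {unadjusted[0]:.1f}% predicted -> {unadjusted[1]}")
print(f"with adjustment:    {adjusted[0]:.1f}% predicted -> {adjusted[1]}")
```

Under these assumed numbers, the same measurement is labeled reduced without the adjustment and normal with it, which mirrors the disability-eligibility problem described in the presentation.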

Dr Baugh stated that there is no gold standard for lung function; a person is compared with a healthy population of similar individuals. Historically, the comparison population was comprised of similar people with respect to age, height, sex at birth, and race. These are externally verifiable and objectively measurable variables, except for race. The inclusion of race in the assessment of lung function is due in part to the work of a physician, Albert Damon, who, in addition to original research on the topic, revived studies from the 19th century and concluded that there is a genetic basis for a difference in lung function between Black and White individuals. 17 Subsequent research has referenced Damon’s work, including the study that established correction factors for race based on the assumption that there are anatomical differences between people of different races. 18

Damon’s conclusion that differences in lung function are due primarily to race is one that today appears to be incorrect, and the importance of environmental factors in lung function is now recognized. 7 Dr Baugh proposed that three errors occurred within the field of pulmonology that allowed the influence of environment to be underappreciated for several decades. First, the underlying premise of the hypothesis was not questioned. Second, conclusions were not reconsidered in light of new evidence. Third, contributions from disciplines in areas outside the expertise of pulmonology were not appreciated.

Dr Baugh noted that Damon [incorrectly] proposed that an environmental hypothesis for differences in lung function could be accepted only if two things were true: that there is more lung disease in the Black population, and that disease leads to permanent lung damage and long-term disability. 17 The effects of short-term exposures, or of changes in function in response to changing environment, and the relationship between race and environment, were not considered. As a consequence, the premise that genetics is responsible for differences in lung function associated with race was not interrogated.

Multiple studies demonstrate that environmental factors have a substantial influence on lung function. A study of Japanese Americans, all of whom had four Japanese grandparents, found that their lung function tracked with that of the population in the location where the individual had grown up, whether Japan or the United States. 19 Within one generation, in people with similar racial background, functional differences were observed based on environment. Other work demonstrates the association between environment and lung function by comparing the same population in the same location across generations. The average lung function of the Taiwanese population changed significantly as living conditions and the country’s economy improved, leading to a requirement to update the pulmonary reference equations for this group. 20

More recent work has examined how genetics could influence differences in lung function associated with race. Historically, medical students have been taught that lung capacity depends on body proportions, and that body proportions differ between races. One study carefully examining the basis for this assumption found that anthropometrics accounted for about 24% of observed differences in lung function. 21 Other work has examined the contribution of ancestry. A study found a correlation between African ancestry (based on analysis of genotyping data) and lung function and found that its effects could not be disentangled from socioeconomic differences. 22 Dr Baugh explained that other factors besides genetics are contributing to the observed difference, which could include environmental factors. For too long, the field has not carefully interrogated the bases for focusing on the concept of inborn differences and minimizing the effect of environmental factors.

As new data have emerged, there has also been insufficient reassessment of earlier conclusions. Damon believed differences in the incidence of lung disease and evidence for long-term effects of such disease would support an environmental hypothesis. Studies have since revealed that in the early 20th century, there were racial disparities in mortality due to pneumonia and in rates of childhood pneumonia.23,24 Other studies demonstrate that short-term lung infections can result in long-term effects on lung function.24–26 Dr Baugh indicated these new data support a reassessment of earlier conclusions regarding the relative contributions of genetics and environmental factors.

Other information supporting reassessment of the genetic hypothesis comes from re-analyzing data comparing lung function of White, Black, and mixed-race Civil War veterans. 27 Based on a genetic theory of lung function, one would hypothesize that the lung function of the mixed-race population should be intermediate to those of the Black and White populations. The actual results show an overlap of the function of the Black and mixed-race veterans, supporting an environmental and social hypothesis. Due to the “1-drop” theory of race at the time, the treatment and experience of mixed-race soldiers was identical to those who identified as Black. Taken together, this work suggests ways in which environmental factors contribute to observed race-associated difference in lung function, highlighting the importance of evaluating factors associated with environment in lung function algorithms.

Contributions from fields outside of pulmonology examining the effect of environment and racism on health outcomes have not been appreciated in pulmonology until recently. Race is not a biological variable. As a social construct, race can be associated with health and health outcomes because race correlates with economic and social differences. In the United States, there is a large wealth gap between Black and White households. 28 The gap is sustained in part due to the phenomenon of minority diminished returns. Studies over decades demonstrate a persistent observation that Black applicants with identical resumes are less likely to receive a callback for a position than White applicants. 29 Identical investment in self and career results in lower rates of success due to structural factors. Minority diminished returns are also observed in health care outcomes. 30 Low birth weight is known to correlate with low income, but a study found that Black women living in affluent, predominantly White neighborhoods had worse pregnancy outcomes than those in less affluent but predominantly Black neighborhoods, 31 suggesting the importance of social environment. Work from Dr Baugh’s group found that lung function does not increase with improved adversity-opportunity index (AOI)—which controlled for socioeconomic status but not discrimination—for African Americans as it does for non-Hispanic White persons. 32 Critically, however, the similar lung function in adverse circumstances argued against baseline genetic causation. These studies suggest that Black individuals do not automatically reap the same health benefits of higher socioeconomic status as White individuals, as additional moderating factors may play a role.

The pulmonary medicine community demonstrated a path forward as the Summit participants considered how to remove race from clinical algorithms. In the case of the lung function algorithm, until recently, no one had examined whether the race-adjusted algorithm correlated with health outcomes. Recent work showed that vital capacity and self-reported symptom data better align with a race-neutral algorithm.32,33 Race-neutral algorithms are now being introduced and are replacing the race-based algorithms.

In addition to highlighting the importance of collecting data to evaluate the fairness of the algorithm by assessing outcomes across groups, the pulmonology example demonstrated the importance of whose voices are heard. In 1936, Dr W. Montague Cobb attributed the excellence of Olympic athletes to training environment and “experience and better nurture.” 34 Even further back, W.E.B. DuBois argued in 1906 that the evidence shows that tuberculosis is “not a racial disease, but a social disease” dependent on social and economic conditions. For over 100 years, these voices were not heard.7,35

Dr Baugh concluded with a comparison of breathing and bias. Breathing is normally unconscious, just like bias. When there is a problem, pulmonologists can teach a person to consciously breathe. Similarly, he suggested we can teach people to move toward race-conscious medicine, to think critically, and arrive at a better world.

Open discussion

Summit participants were very interested in the AOI that Dr Baugh developed for his studies, 32 and whether it or something similar could be used across other disciplines. The index combined measures of household income, highest educational attainment, jobs with a high degree of respiratory particulate exposure as a proxy for blue-collar work, access to fresh healthy foods, and history of in utero smoke exposure. 32 A strength of the AOI was that it combined both individual- and neighborhood-level measures of disadvantage, because both have been found to have independent effects in studies of lung disease. 36

However, participants also recognized that not all these data points are commonly collected. The need for a set of standardized definitions and variables when assessing the effects of social determinants of health (SDOH) and environmental variables was a topic that recurred throughout the summit. Participants considered what might underlie the observation that Black patients do not benefit from increasing economic status to the same extent as White patients. Effects the experience of racism can have on the body through the brain-body connection and

Participants also discussed whether and why there have been barriers to the implementation of a race-neutral approach to the study of lung function. In the past year, both the American Thoracic Society (ATS) and the European Respiratory Society have announced a switch to recommending race-neutral algorithms, 7 and there is increasing acceptance of the value of collecting additional collateral information about patients’ backgrounds to help understand and interpret their test results. The Summit participants discussed how this scenario speaks to the importance of educating the workforce about the reasons for implementing new algorithms, in this case, about the data demonstrating more accurate results and better patient outcomes.

Social determinants and the assessment of cognitive health and functioning

Grant L. Iverson, Professor in the Department of Physical Medicine and Rehabilitation at Harvard Medical School, provided an overview of tests used to assess neuropsychological functioning and brain health, and how normative reference values for these tests traditionally have been adjusted for factors including age and sometimes sex and gender, education, and race. The American Academy of Neurology defines brain health as a continuous state of attaining and maintaining the optimal neurological function that best supports one’s physical, mental, and social well-being through every stage of life. 39 Some examples of cognitive markers of brain health include attention and concentration, language, visual-spatial abilities, learning and memory, processing speed, executive functioning, social cognition, and intelligence. Neuropsychologists aim to objectively measure these markers to diagnose cognitive disorders.

Dr Iverson highlighted that cognitive problems throughout the lifespan can be associated with prenatal exposures, learning disabilities, brain injuries, depression, and anxiety. Many medical (e.g., cardiovascular disease) and neurological conditions can contribute to cognitive problems. Genetic influences also impact cognitive health risks, in addition to physical health conditions. 40 Importantly, brain health is not preordained; rather, a diverse set of positive and negative social determinants can have a significant effect. Physical health affects brain health, and exercise, sleep, nutrition, and proactive medical care, as well as socialization and intellectual stimulation, are modifiable factors that influence brain function. 41 There are disparities and inequalities in the ability of people to pursue and optimize these factors, which could result in differences in scores on tests of cognitive functioning. 39

One important application of neuropsychological assessment identified by Dr Iverson is the diagnosis of dementia, including differentiating dementia from normal age-related changes in cognitive function. To identify problems with cognitive function via neuropsychological tests, an individual’s assessment scores are compared with normative samples derived from a “healthy” control normative population made up of similar individuals. Assessing cognitive function in older adults can identify people with mild cognitive impairment (MCI), which may help predict who will progress to dementia. In the DSM-5, neurocognitive disorder is defined by comparing scores with a normative population. 42 Scores 1–2 standard deviations below the population mean are diagnosed as mild neurocognitive disorder, and 2 or more standard deviations below the mean as major neurocognitive disorder. Therefore, comparing people with a normative population that is not similar to them could result in over- or underdiagnosis of a neurocognitive disorder. 43 Early recognition of dementia risk may allow intervention to help prevent or slow the worsening of cognitive function and to treat underlying health problems or conditions that are contributing to worsening function. Cognitive screening in the primary care setting is used to decide when individuals should be sent for specialist neuropsychological assessment for MCI.
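The score-comparison step described above can be illustrated with a short sketch. It assumes a test score is converted to a z-score against a normative mean and standard deviation and then placed in a band using the 1 and 2 standard deviation cutoffs mentioned in the text. The function names and the two normative groups are hypothetical, and an actual diagnosis depends on clinical criteria beyond a single score.

```python
# Minimal sketch of the score-versus-norms comparison only. A real diagnosis
# also relies on clinical judgment and functional criteria not modeled here.

def z_score(raw: float, norm_mean: float, norm_sd: float) -> float:
    """Standardize a raw score against a normative mean and SD."""
    if norm_sd <= 0:
        raise ValueError("normative SD must be positive")
    return (raw - norm_mean) / norm_sd


def score_band(z: float) -> str:
    """Map a z-score to the band implied by the 1 SD and 2 SD cutoffs."""
    if z <= -2.0:
        return "major range (2 or more SD below the mean)"
    if z <= -1.0:
        return "mild range (1-2 SD below the mean)"
    return "within normal limits"


# Hypothetical example: one raw score, two different normative groups.
RAW_SCORE = 38.0
norms = {
    "normative group A": (50.0, 10.0),  # (mean, SD), assumed values
    "normative group B": (45.0, 10.0),  # (mean, SD), assumed values
}

results = {}
for name, (mean, sd) in norms.items():
    z = z_score(RAW_SCORE, mean, sd)
    results[name] = (z, score_band(z))
    print(f"{name}: z = {z:.2f} -> {results[name][1]}")
```

With these assumed values, the same raw score falls in the mild range against one normative group and within normal limits against the other, which is the over- or underdiagnosis risk the paragraph describes.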

Dr Iverson noted that when some tests were developed, it was found that, on average, there were differences in scores between samples of people who identified as African American, as Hispanic or Latino, or as White. Historically, to control for these differences, race was introduced as a proxy in some scoring algorithms. Fully demographically adjusted normative reference values adjust scores for age, sex, education level, and race. These demographic adjustments can reduce the likelihood of misclassifying people as having MCI or dementia when they do not.44–46 Race may be serving as an imprecise proxy for a broad range of negative SDOH variables that might contribute to race-associated disparities in neuropsychological test scores. Removing race and ethnicity from these algorithms presents a conundrum for neuropsychology because, in this case, doing so could lead to diagnosing someone with a neurocognitive disorder when he or she is not experiencing such a condition. Some factors that could contribute to the population differences in scores include health care access, education level and education quality, parental education and family income, language fluency, childhood adversities or exposures, experiences of racism, and discrimination.47–49

SDOH variables may also contribute to race-associated disparities in health care that influence the risk of MCI and dementia, per Dr Iverson. Findings of group differences in dementia burden and in other chronic diseases related to dementia risk do not automatically imply a biological cause.50–58 Rather, growing evidence points to the impacts of environmental and social factors on disease risk. For example, a greater proportion of Black (76–86%) than White (21–23%) individuals experienced lower-quality education as children, 48 due to structural inequities. A potential factor that could replace race as a variable for adjusting neuropsychological test scores is reading ability, which may serve as a measure of the quality (as opposed to level) of education. In one study, adjusting scores for reading ability eliminated much of the race-related difference on neuropsychological tests. 59

Socioeconomic factors are associated with neuropsychological test scores. Assessment in Spanish-speaking adults using the NIH Toolbox Cognition Battery, a set of seven tests assessing multiple cognitive constructs, found that low scores in tests of fluid ability were much more prevalent among people with a family income below $40,000 compared with those with a higher family income. 45

Dr Iverson presented evidence that childhood adversities are associated with worse cognitive function in children and adolescents. Adverse childhood experiences (ACEs) are associated with worse mental health, negative effects on brain development, and lower executive functioning as measured by neuropsychological tests.60,61 The Youth Risk Behavior Survey found that teens report experiencing cognitive impairment (such as trouble concentrating, remembering, or making decisions) more frequently when they also reported experiencing a greater number of psychosocial adversities (such as dating violence or being bullied). Interestingly, more girls than boys reported perceived cognitive impairment across the adversity index. 62 ACEs and negative SDOH were associated with self-reported cognitive difficulties among high school students. 63 Food insecurity in particular is known to be associated with psychological distress and worse mental health in adolescents. 64 It can have a negative and persisting association with academic and cognitive function. 65

Stereotype threat is another factor that can influence some sex-, race-,66–68 and age-associated differences in test performance.68,69 Perceived discrimination has been found to be associated with neuropsychological test scores; Black individuals who reported high levels of perceived discrimination obtained higher scores when tested by an examiner of the same race. 70

In conclusion, Dr Iverson highlighted that neuropsychological test scores might be influenced by many factors, including SDOH, in addition to medical problems and disease. When assessing cognitive performance and diagnosing MCI and dementia, Dr Iverson expressed that the evaluation process and normative reference groups used should be based on the best available data, including sociodemographic factors, for the best precision medicine approach.

Open discussion

Summit participants discussed the possible harms of over- and underdiagnosing MCI, as well as the possible harms of including or excluding race as a proxy in scoring algorithms from the neuropsychological assessments. Overdiagnosis stresses support systems and makes it more difficult for people with a condition to access specialists and other follow-up care. For the individual, an incorrect diagnosis of MCI can be very distressing and lead to fear of conversion to Alzheimer’s disease or of being treated differently due to the diagnosis. People diagnosed with MCI might be subjected to legal proceedings, such as a proceeding relating to testamentary capacity. Their family members might express concern about them managing their finances or driving. Underdiagnosis of MCI could prevent resources from reaching people who would benefit from them. Participants also noted that the availability of supports for people with MCI is environmentally and economically driven, so the issue of early diagnosis is complex.

With the perspective gained from the first presentation of the Summit, participants questioned whether underlying premises or prior conclusions in this field should be re-examined in light of new data. The group discussed whether assessments are measuring their intended parameters. For example, some questionnaires are regularly updated to reflect changes in word usage because word choice can result in differences in responses that are not reflective of the underlying condition being interrogated. Considering how lung function algorithms are being redesigned based on data showing the race-neutral algorithm is associated with better real-world outcomes, participants questioned whether test scores on assessments for MCI are uniquely associated with specific outcomes, such as one’s ability to make decisions and live independently. If not, participants discussed whether the tests should be modified or if new tests are needed for the assessment of cognitive effects of MCI and dementia.

Participants also discussed what has been done to examine what factors underlie the differences observed between groups and whether other factors, or an opportunity/adversity index, might be more appropriate than race. Additionally, there was agreement that moving from a cross-sectional to a longitudinal approach, in which changes in a person’s cognition are tracked over time, would be more valuable when assessing MCI. However, participants acknowledged that longitudinal tracking requires technology, and disparities in access to technology could lead to unintended disparities in health care. Getting high-quality and equitable health care to all communities was a recurring topic of the summit.

Transforming public health data systems for equity and transformation

Gail C. Christopher, Executive Director of the National Collaborative for Health Equity, spoke about approaches developed to implement programs to achieve health equity. She said this Summit was part of an expanding and growing movement about health equity, but also about healing the legacy of our nation founded on a false hierarchy of human value. She supported the concept of moving from race-based medicine to race-conscious medicine but emphasized that a true breakthrough will be achieving “racism-conscious” medicine, because racism is the belief system that must change. How we change beliefs determines how we change society.

Dr Christopher noted she has seen progress throughout her professional career working on this issue but asserted that a great deal of progress is still needed before health equity is achieved. She provided an outline of a current movement, developed with 150 organizations, designed to be appropriate for a diverse country like the United States, which has avoided facing its history. The process, called the Truth, Racial Healing and Transformation™ (TRHT™) Framework, involves three stages: narrative change, racial healing and relationship building, and addressing systemic structures that have been perpetuating inequities. 71

The first Summit presentation by Dr Baugh provided an example of how narrative change enabled scientists to interrogate the false assumptions that underlie the existing narrative and to create new standards and expectations. Dr Christopher endorsed the idea of being a “

Dr Christopher highlighted the book The Slave’s Cause: A History of Abolition by Manisha Sinha, which centers the work of free and enslaved Africans in achieving abolition. In addition to narrative change, the book demonstrated how abolition was an interracial movement grounded in the understanding that we are one human family. The book also demonstrated what was meant by the racial healing and relationship building step of TRHT: bringing people from different backgrounds and disciplines together to work together. She identified the present Summit as another example where solutions were sought through relationship building and open communication, recognizing all have been hoodwinked by the fallacy of a hierarchy of human value based on race and gender.

Racial healing and relationship building lay the groundwork for addressing the three main structures that allow false hierarchies to persist. The first is Separation, which includes racial segregation, forced resettlement, concentrated poverty, and differential neighborhood access to vital resources (e.g., hospitals, food, green spaces). The second is Law, including the civil, criminal, and public systems and policies (e.g., immigration) designed to maintain the hierarchy. The third is the Economy, which includes structured inequality and barriers to opportunity.

Numerous TRHT efforts are underway across the United States, in collaboration with college campuses, libraries, and media, including social media. All TRHT projects begin with the charge to imagine an America without racism, one that has faced and overcome that legacy. Dr Christopher pointed out that this approach differs in that, instead of starting with the pathology, it starts with a vision of where we are going and the belief that it is possible to leave negativity in the past.

TRHT efforts include TRHT campus centers, leadership training, Great Stories Club initiatives at libraries (a literature-based outreach for underserved youth), and TRHT coalitions within local jurisdictions. Implementation of TRHT within local communities and organizations follows five steps: (1) envision a future without racism, (2) analyze the landscape to see where we are and what we need to do, (3) determine who has the power to make the needed interventions and bring in those people or institutions, (4) identify specific actions to take, and (5) determine how to assess the impact of the actions that will be taken, and how to sustain the work. The final goal is to replace the long-held belief in a hierarchy of human value with the genuine belief that people are all equal.

In recent years, people have begun to recognize the effects of the hierarchy in health care. The COVID-19 pandemic in particular revealed inequities. In 2021, Dr Christopher chaired the National Commission to Transform Public Health Data Systems.73–75

The group applied the TRHT framework to their deliberations. Dr Christopher summarized the commission’s recommendations in three statements. The first is to change the narrative to center on health and well-being. The current narrative tends to focus on pathologizing and identifying things that are wrong with people of color. A new narrative should instead center on well-being and achieving optimal health. The second is to prioritize equitable governance and community engagement. Listen to and respect the individuals, and recognize people own their own medical data. The third is to ensure public health measurement captures and addresses

Dr Christopher introduced a new data tool, the Health Opportunity and Equity (HOPE) data index, to measure how different populations are faring on 27 indicators of health and well-being.76,77 HOPE was created to analyze national and state data and now is expanding to work with local communities. The health and well-being indicators represent five domains: health outcomes, socioeconomic factors, community and safety factors, physical environment, and access to health care. The phrasing of each factor tends to be flipped in contrast to how these types of data are usually discussed. For example, rather than documenting food insecurity, food security is measured. Instead of documenting pathology or negative SDOH, the indicators are aspirational measures for setting goals. This aligns with narrative change as described in the TRHT framework.

As part of the approach, HOPE identifies communities that are doing well in a metric such as homeownership or high school graduation rates and sets their achievement as the goal for all communities. Communities then determine and quantify what outcomes would indicate the desired goal or improvement has been achieved. Part of the program involves sharing the datasets so communities can establish aspirational goals, and providing the algorithms communities need to calculate their distance to the goal.

Dr Christopher emphasized that the health effects of racism include the body’s response to adversity, including increased stress hormones and allostatic load. “Racism gets under our skin” literally, as there are physiological effects of stress and being faced with people and systems that deny or minimize your humanity. For this health equity Summit, she asked that participants focus on solutions rather than merely carefully documenting why disease is more prevalent in populations of color.

Medical professions are critical to this work. Dr Christopher commented that APA, ATS, AMA, and AAP have acknowledged the harm they participated in and have made commitments to address consequences and create a new way forward via public statements.1,6–8 She identified recent movement and momentum, and she expressed hope.

Open discussion

Participants discussed how to achieve the political will and the help necessary to implement public health changes. Dr Christopher noted the importance of showing that investment in public health benefits all people, and the importance of convincing politicians of the collective best interest. Much discussion focused on the fact that the transition to more precision in medicine would be aided by some comprehensive changes in the health care system. Participants noted that the United States lacks universal health care and has worse health outcomes than other nations. They discussed leveraging moral responsibility and political will to shift the health care system to focus on population health and well-being in addition to profit, and a change to value-based care. Discussion also centered around how to incentivize keeping people healthy and changing medical reimbursement models from procedure- or volume-based models to quality- and healthy outcomes-based models.

Racial fairness in clinical algorithms

Shyam Visweswaran, Professor of Biomedical Informatics at the University of Pittsburgh, spoke about racial fairness in clinical algorithms developed using standard statistical methods as well as AI and ML. Clinical algorithms are mathematical formulas or computational procedures designed to analyze or interpret data and predict outcomes or guide decisions in health care settings. They are often equations or logistic regression models. Increasingly, AI/ML is used to develop clinical algorithms because these new technologies can examine large numbers of variables and large amounts of data to generate new algorithms. However, the use of AI/ML also introduces new opportunities for bias into clinical algorithms, for example, when algorithms are built on biased data, or when clinicians apply algorithms without a full understanding of what variables are used and how they might impact results.

Dr Visweswaran pointed out that many clinical algorithms in current use are statistical algorithms developed using standard statistical methods to identify patterns and relationships in the data. They have been created by researchers who carefully considered the variables to include and defined the relationships among them. An example of a statistical clinical algorithm is the estimated glomerular filtration rate (eGFR), which adjusts serum creatinine by age, gender, and race. In contrast, AI/ML-based clinical algorithms are developed using computational methods that learn relationships from data without human guidance. AI/ML can be applied to large and complex datasets with many variables and detect complex patterns and relationships that may not be apparent to human researchers. An example is a random forest model being developed at the University of Pittsburgh to predict bleeding risk in patients taking direct anticoagulants, which involves many dozens of variables.

When applying any clinical algorithm, Dr Visweswaran said ethics should be considered. A range of individual and societal harms could result from the misuse or abuse of an algorithm, from a poorly designed algorithm, or from unintended consequences of the application of an algorithm. AI/ML ethics have been proposed as a set of principles, values, and approaches that serve as widely accepted standards to guide moral conduct in the lifecycle of AI/ML algorithms and systems.78 These ethical guidelines can be applied to standard algorithms as well, but are especially important in the context of AI/ML algorithms because they can be developed and deployed rapidly. The United States lags behind the European Union in addressing this issue. In 2023, the Biden administration issued an executive order directing the National Institute of Standards and Technology (NIST) to develop guidelines for AI/ML algorithms.79

Dr Visweswaran identified two ethical principles that are particularly relevant to race: racial health equity and racial justice. Racial health equity is the principle of identifying current health disparities and reducing and ultimately eliminating these disparities across racial groups. Racial justice is the principle of supporting and achieving racial equity through proactive and preventive measures, creating systems correctly from the beginning.78 These ethical principles have been codified in law. Anti-discrimination laws prevent discrimination based on certain demographic attributes in hiring, housing, and lending. There are also laws preventing discrimination based on genetic information. Such protected attributes include race in addition to color, religion, sex, national origin, age, disability, and genetic information, including family medical history. However, there are many historical examples of racism in medicine. Examples include the belief that Black people can endure more pain than White people, or that they require higher X-ray exposures because of “denser” bones.80 There are also examples of racism in medical research, such as the unethical Tuskegee Syphilis Study.

The inclusion of race in the clinical algorithm for eGFR effectively makes a Black person appear healthier than a White person with the same serum creatinine level. One negative consequence is that it takes longer for a Black patient to be classified as having disease or to qualify for kidney transplant. Conversely, a positive consequence is that the apparently better kidney function may qualify a Black patient for certain cancer medications.
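The size of this effect can be illustrated with a minimal sketch. The text does not say which eGFR equation was discussed; the code below assumes the 2009 CKD-EPI creatinine equation, which multiplied the estimate by 1.159 for Black patients. The function name and the example patient are hypothetical and are not drawn from the Summit presentations.

```python
def egfr_ckd_epi_2009(scr_mg_dl: float, age: float, female: bool, black: bool) -> float:
    """Estimated GFR in mL/min/1.73 m^2 (assumed equation: CKD-EPI 2009, creatinine)."""
    kappa = 0.7 if female else 0.9          # sex-specific creatinine threshold
    alpha = -0.329 if female else -0.411    # sex-specific exponent below the threshold
    ratio = scr_mg_dl / kappa
    egfr = 141.0 * min(ratio, 1.0) ** alpha * max(ratio, 1.0) ** -1.209 * 0.993 ** age
    if female:
        egfr *= 1.018
    if black:
        egfr *= 1.159                       # the race coefficient under discussion
    return egfr


# Hypothetical patient: 60-year-old man with serum creatinine of 1.5 mg/dL.
without_race = egfr_ckd_epi_2009(1.5, 60, female=False, black=False)
with_race = egfr_ckd_epi_2009(1.5, 60, female=False, black=True)
print(f"non-Black estimate: {without_race:.1f}")
print(f"Black estimate:     {with_race:.1f}")
```

Under this assumed equation, identical laboratory values yield an estimate about 16% higher when the patient is recorded as Black, which is what delays crossing any fixed disease or transplant threshold.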

Catalogs of clinical algorithms that include race as a factor, of medications with race-based guidelines, and of medical devices with differential racial performance can be found online.81 The US Food and Drug Administration (FDA) has approved 15 medications with race-based indications or use guidelines based on race.81 Some medical devices demonstrate differing performance across racial groups, such as poorer pulse oximeter performance in individuals with darker skin tones and poorer electroencephalogram scalp electrode performance in individuals with thick and curly hair.81,82 Currently, there is intense focus on the fairness of clinical algorithms across racial groups and the validity of including race as an input in such algorithms. A recent New England Journal of Medicine paper started the discussion.4 The impact of race as an input in clinical algorithms is likely to vary and can be detrimental, neutral, or beneficial, depending on the algorithm. This was addressed by the Council of Medical Specialty Societies (CMSS).11

One way to evaluate algorithmic fairness is via group fairness, which requires that if a population is divided into groups based on a protected attribute such as sex or race, the disadvantaged group would be treated similarly to the advantaged group. Group fairness, however, does not guarantee fairness for every individual, and a current area of research is developing ways to assess individual fairness.

Group fairness has been studied in the UTICalc algorithm, a screening algorithm used to predict the risk of urinary tract infection (UTI) in children aged 2 years and younger. The algorithm helps decide which children should be catheterized to obtain a urine sample for testing and bacterial culture. The original algorithm included age, sex, fever, alternate source of fever, and race (Black vs. non-Black) as variables.83 In line with the AAP’s commitment to move away from race-based medicine,16 the UTICalc algorithm was reworked to remove race. Replacing race with duration of fever and prior UTI resulted in similar algorithmic performance,84 but the question arose whether these modifications make the algorithm fairer. To evaluate algorithmic fairness, several fairness metrics can be examined, including demographic parity (i.e., similar proportions in each group should have the predicted outcome), equality of opportunity (each group should have a similar true positive rate), and equalized odds (each group should have similar true and false positive rates). Comparing the two versions of the UTICalc algorithm found that the original algorithm was biased, with different sensitivities for Black children (82%) than non-Black (98%). The reworked algorithm, however, is fairer because the sensitivities for the two groups are similar (94% for Black children vs. 96% for non-Black).85
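The three metrics named above can be computed from each group's confusion matrix. The following is a minimal sketch, not the analysis used in the UTICalc study; the class and function names are hypothetical, and the counts are invented for illustration, with only the two sensitivities chosen to echo the 82% and 98% figures quoted in the text.

```python
from dataclasses import dataclass


@dataclass
class Counts:
    """Confusion-matrix counts for one group."""
    tp: int
    fp: int
    fn: int
    tn: int


def group_rates(c: Counts) -> dict:
    total = c.tp + c.fp + c.fn + c.tn
    return {
        "positive_rate": (c.tp + c.fp) / total,  # compared for demographic parity
        "tpr": c.tp / (c.tp + c.fn),             # compared for equality of opportunity
        "fpr": c.fp / (c.fp + c.tn),             # with tpr, compared for equalized odds
    }


def fairness_gaps(a: Counts, b: Counts) -> dict:
    """Absolute between-group difference on each rate (0 means parity)."""
    ra, rb = group_rates(a), group_rates(b)
    return {name: abs(ra[name] - rb[name]) for name in ra}


# Invented counts: 100 true cases and 200 non-cases per group.
group_a = Counts(tp=82, fp=30, fn=18, tn=170)
group_b = Counts(tp=98, fp=40, fn=2, tn=160)
gaps = fairness_gaps(group_a, group_b)
for name, gap in gaps.items():
    print(f"{name} gap: {gap:.3f}")
```

Equality of opportunity looks only at the `tpr` gap, whereas equalized odds requires both the `tpr` and `fpr` gaps to be small; how small is small enough is a judgment the sketch does not make.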

Bias mitigation requires the ability to measure unfairness and a way to equalize fairness. When evaluating an algorithm, it is not possible to optimize fairness on all metrics simultaneously. For example, parity in sensitivity, specificity, and positive predictive value cannot be achieved simultaneously. Thus, for a given algorithm, it is critical to choose a suitable metric for which fairness is required. For example, in the case of UTICalc, sensitivity is the critical metric because it is a screening algorithm. AI/ML methods, such as those in Microsoft’s Fairlearn toolkit, have been developed to optimize an algorithm on a specified fairness metric across groups. Such toolkits may be suitable for optimizing an algorithm to make it fairer on a metric of choice.
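One reason parity cannot generally hold on all three metrics at once follows from Bayes' rule: positive predictive value depends on prevalence as well as on sensitivity and specificity, so two groups with identical sensitivity and specificity but different disease prevalence will have different positive predictive values. The sketch below illustrates this; the function name and all numbers are hypothetical.

```python
def positive_predictive_value(sensitivity: float, specificity: float, prevalence: float) -> float:
    """PPV from Bayes' rule: P(disease | positive result)."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)


# Same sensitivity and specificity in both groups; only prevalence differs (hypothetical values).
ppv_low_prevalence = positive_predictive_value(0.95, 0.80, prevalence=0.05)
ppv_high_prevalence = positive_predictive_value(0.95, 0.80, prevalence=0.15)
print(f"PPV at 5% prevalence:  {ppv_low_prevalence:.3f}")   # 0.200
print(f"PPV at 15% prevalence: {ppv_high_prevalence:.3f}")  # 0.456
```

The same arithmetic shows why a tool tuned for high sensitivity in screening can have a low positive predictive value when prevalence is low.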

Dr Visweswaran pointed out several potential sources of bias in an algorithm. Data bias refers to bias preexisting in the data used to develop the algorithm. For example, electronic health records or administrative data are not collected under carefully controlled conditions, and any biases existing within these data are likely to make an algorithm derived from them unfair. Interaction bias is the improper use of an algorithm, whether by the clinician or the patient. An example is automation bias, which describes the tendency of a person to accept the decision or recommendation of an algorithm, ignoring information that indicates the recommendation is incorrect. Development bias refers to the poor design of the algorithm. An example is a commercial algorithm that was used for a decade and applied to many millions of people in the United States before its development bias was identified. The algorithm was used in population health management to identify patients who should be directed to additional health resources due to their high-risk status. Though the algorithm did not use race as a variable, when a research group examined it for bias, they found that Black patients assigned the same risk score by the algorithm were much sicker than White patients.86 The algorithm had been trained to predict health care costs, rather than health needs, resulting in lower risk scores for underrepresented groups like Black persons, who had limited access to health care. The analysis identified a race-related gap between needing health care and receiving care and showed that using health care costs as a proxy for health needs was poor algorithmic design.86

Achieving algorithmic fairness in medicine requires a two-pronged approach: not only must existing algorithms be made fair, but new algorithms must be constructed in an unbiased manner. The US FDA has certified over 500 AI/ML algorithms to date, primarily in the field of radiology. 87 A key limitation is that most of these algorithms are proprietary and the product of commercial development, not academic research. Therefore, full performance data about algorithms are not available, and whether an algorithm is biased cannot be readily assessed independently.

To help ensure algorithmic fairness, Dr Visweswaran proposed developing an evaluation framework, similar to what is used for drugs or vaccines, including trials to demonstrate safety (lack of bias) and efficacy, and continuous monitoring after deployment to assess bias and efficacy in the field. Another parallel with drugs is that using clinical algorithms “off-label” entails risks similar to “off-label” use of drugs. An algorithm developed for one purpose, for example, screening, should not be used for a different task, such as diagnosis, without additional validation. Such “off-label” use of an algorithm could cause harm because a screening algorithm is optimized for sensitivity and may have poor positive predictive value, which is the key metric for a diagnostic algorithm. For AI/ML-based algorithms, particular attention should be paid to assessing data representativeness, ensuring that persons of all races are adequately included.

Open discussion

Summit participants discussed how the United States lags behind the European Union in establishing guidelines for algorithms that will be used for human health. They also discussed that the present incentive for algorithm development is not fairness or even accuracy, but speed and efficiency. Algorithms are developed to help ease overburdened clinicians, especially in primary care. The use of algorithms requires education of the clinical workforce; not necessarily to understand the math underlying every algorithm, but to understand what a score from a given algorithm represents and how to include it as one factor in clinical decision-making, similar to laboratory test results. Also, the Summit participants discussed the importance of education regarding limitations of algorithms and the temptation of people to thoughtlessly follow technology, even when told the technology is not 100% accurate.

Precision medicine: Bridges to breakthroughs

Julie Louise Gerberding, President and CEO of the Foundation for the National Institutes of Health (FNIH), spoke about trends accelerating the evolution of precision health and described the challenges of translating big data to individualized health decisions. A move toward precision health will reduce the use of imprecise proxies in clinical health assessment. FNIH is a nonprofit organization chartered to support the mission of the NIH and is exploring how it can apply AI tools to support its three main strategic pillars: (1) designing and managing collaborative public–private-patient precompetitive research partnerships; (2) identifying and supporting promising early- and mid-career scientists; and (3) building trust in science and the value it brings to people. Ultimately, the value of these efforts in creating health impact depends on translating new knowledge and tools developed from studies of populations to the individual patient facing a medical decision. Unfortunately, in clinical practice, achieving this has been easier said than done.

Precision medicine is generally defined as the customization of health care tailored to meet the individual needs of a specific patient, based on genetic, environmental, and lifestyle variables. Dr Gerberding indicated that most professional organizations, including the NIH, NAM, and the AMA, have called for the inclusion of these data in medical decision-making. Initially, the focus was on genomic data with the hope that the increasing availability and affordability of genome-wide association studies (GWAS) would reveal the most important bases of disease causality, identify new treatment targets, and improve the precision of interventions. The ability to obtain so much genetic data excited scientists about the potential to associate diseases with specific genes and truly achieve personalized medicine, 88 and to some extent, particularly in oncology and single-gene heritable diseases, this promise has been fulfilled. However, most diseases are multifactorial in origin, and lifestyle, social, and environmental factors are the major drivers of most chronic diseases. 89 Fortunately, AI tools enable the utilization of the growing data resources and analytic approaches that make precision medicine possible.

However, “big data” presents its own challenges, often characterized by the five Vs: volume, variety, velocity, veracity, and value. 90 It is not only the formidable amount of data but also the many types of data, including environmental and longitudinal data about a patient’s exposures over their lifetime—so-called exposome data—that complicate this approach. Even when available, these data sources are heterogeneous and usually incomplete. Standards have been developed for GWAS, but standards are also needed for environment-wide association studies (EnWAS). Data ownership and governance are often contentious, and data privacy and security are essential but difficult to protect.

Despite these challenges, Dr Gerberding pointed out that creating algorithms from disparate data sources is the greatest opportunity for AI tools to improve the precision of medical decisions. Data interpolation, mapping, and integration are now feasible. Unfortunately, the actual analytic tools to create algorithms operate with algorithm “anonymity.” Few people understand the actual models or methodology being used, which makes it difficult to ask the right questions about bias, accuracy, and relevance. In particular, biased input data will obviously lead to biased outputs. Transparency, peer review, and stakeholder input are required to ensure algorithms are correct and unbiased. A trained workforce is also a critical need, and health care students must be taught about information processing and biomedical informatics. Perhaps most importantly, once an algorithm is created, it must be implemented in a patient-centric way and validated in the real world. Trust on the part of both patients and caretakers is the most significant barrier to uptake, but Dr Gerberding expressed optimism that we can solve these problems for all patients in an equitable and transparent way.

Open discussion

Summit participants discussed the need to have clinicians who understand the anonymity of AI/ML algorithms and are involved in algorithm design. There is a need to develop a workforce with the expertise to understand the clinical and ethical requirements of algorithms in addition to understanding AI/ML. If an algorithm is being constantly updated as new data are acquired, especially if the algorithm is proprietary, how will clinicians know if, when, and how the algorithm has changed to be able to continually assess fairness? Participants also discussed how to regulate algorithms based on the context of use, since an algorithm could do harm if used incorrectly.

Public–private partnerships at the FNIH to advance precision medicine

Joseph P. Menetski, Senior Vice President and Chief Translational Science Officer at the FNIH, described how programs at the FNIH support translational science research that is relevant for precision medicine. Traditional drug development starts by selecting one disease, identifying one pathway important to disease development, and trying to find a target effective at disrupting that pathway. The strategy of the pharmaceutical industry has been to focus on the diseases that affect the most people in order to have the greatest impact on the largest number of people. This has worked well for decades, but it has become clear that there are patients for whom some drugs do not work.

A deeper understanding of disease biology has led to a new approach to drug development. Mechanistically, no disease is an island. Instead, it appears that some less-common diseases are more like archipelagos, appearing separate at the surface but actually connected by common underlying mechanisms. In addition, common diseases have been found to be complexes of different diseases, rather than single monoliths, and these subcategories can be looked at more as rare diseases.

Knowing there may be common underlying mechanisms for multiple diseases has not made drug discovery easier, faster, or cheaper, according to Dr Menetski. Rather, more data and more targets have led to less clarity, longer timelines, and more difficulty judging success. Partnerships can help with these challenges. FNIH manages partnerships between government and other agencies, life sciences companies and academia, and foundations and patient organizations to tackle the most pressing health challenges. The role of FNIH is analogous to a general contractor in house construction, focusing on the big picture and project management. FNIH sets a defined, usable goal at the start and plans for potential problems before the project has started. They work with trusted and respected partners and encourage a big tent, attracting as many collaborators as can help. Their approach is that “a rising tide floats all boats,” and they intend that the information collected by a collaboration will be used by and benefit all participants, and that all results will be made public.

Dr Menetski pointed out that drug development is inefficient; in 2023, over 10,000 active programs resulted in fewer than 200 drugs submitted to the FDA. To shrink the time between target discovery and drug approval, FNIH has four main areas of activity, each addressing one problem area in drug research and development: (1) there are more targets than there is time to investigate, (2) precise populations require precise understanding of biology and tools for decision-making in the clinic, (3) clinical trials for specific therapies do not allow flexibility during the trial, and (4) regulators rely on established clinical outcomes that do not always allow for early decision-making.

To address the first issue, FNIH’s Accelerating Medicines Partnership works to validate targets in a systematic and consistent way to reduce the number of potential targets. Over the last 10 years, almost one billion dollars have been invested in 10 projects. The partnership is developing multi-omic data on hundreds of individuals, including whole genome sequences, metabolomics, proteomics, and bulk RNAseq and single-cell transcriptomic data that can be used in disease association studies. An example is the project to discover Alzheimer’s disease genes. The data are shared on the Agora portal (Agora.adknowledgeportal.org). FNIH has surveyed the partners and knows the project has helped researchers prioritize targets. Specifically, the program has identified new targets that academics are now researching, while industry has deprioritized unlikely targets and is able to focus efforts on those that are most likely to benefit patients. The project has also been used to evaluate genetic associations related to ancestry. 91

To address the need for decision-making tools, FNIH would like to speed up clinical trials by encouraging studies to use biomarkers as outcomes and use the Biomarkers, Endpoints, and other Tools (BEST) resource. Biomarkers are defined as anything that can be measured as an indicator of normal biological processes. The Biomarkers Consortium projects bridge the gap between basic research and practical needs for advancing drug development and regulatory science. All consortium work is precompetitive, and results are released to the public. The consortium includes patients in the development and evaluation of digital measures. 92 Over 15 years, the work has resulted in nine clinical tools in drug development and five FDA guidance documents, and 14 therapeutics have advanced based on tools generated. An example of a biomarker project is the metabolic dysfunction-associated steatohepatitis/nonalcoholic steatohepatitis (MASH/NASH) hepatitis project, Non-Invasive Biomarkers of Metabolic Liver Disease (NIMBLE), which aims to identify noninvasive biomarkers for the diagnosis and staging of NASH. Identifying such a biomarker would reduce the number of liver biopsies and provide a faster means to assess response to interventions. The project is evaluating 10 potential biomarkers and has found some that work better than the current standard blood marker, Fibrosis-4 (FIB-4).

To increase flexibility and precision in clinical trial design, FNIH supports a move from the traditional one-trial–one-drug approach to the design of master protocols in which one protocol can evaluate multiple drugs based on biomarkers. An example is LungMAP, which is evaluating numerous drugs in non–small cell lung cancer (NSCLC) at more than 900 sites across the United States. Every person at every site is treated the same. All tumors are genotyped, and patients receive a drug targeted to their tumor. The large-scale approach allows multiple drugs to be evaluated simultaneously.

Finally, FNIH is working to change the regulatory paradigm to better work with precision drug development. Traditionally, regulators require specific sets of data for every drug. However, FNIH is pursuing whether highly similar drugs, based on the same drug formulation, can use the same nonclinical data in appropriate situations and avoid starting the process from scratch. The Bespoke Gene Therapy Consortium is addressing this. One project is adeno-associated virus (AAV) for gene therapy for rare diseases; the viral vector is the same, but several different gene inserts will be tested. Their goal is to produce a standardized process that can be repeated for other rare diseases. The first version of the regulatory playbook was released earlier this year.

Open discussion

Summit participants discussed what FNIH has learned about building coalitions and getting disparate groups to work together; a consortium needs to come to consensus about how all members will benefit. Discussion also centered around the need for diversity in the pools from which the -omics study data are derived so that results are applicable to the entire population. As more data are accumulated, precision medicine is constantly funneling down to personalized medicine.

Summit Discussions and Recommendations

Vision for the future

Before dividing into breakout groups for more focused discussion, Beth Arredondo led the entire group of Summit participants in a discussion of goals for the breakout groups, what the desired outcome would be, and how to achieve change. The group discussed that a desired outcome would be tools to help with decision-making for every patient, with an understanding that sometimes there may not be one tool that performs equally well in all situations.

Participants discussed the importance of focusing on wellness across the lifespan and of primary care to that goal, including pediatrics. They noted that primary care already does not have sufficient time to carry out all that is required, and there is a need for an increased workforce and recognition of every professional’s role in health care.

Another large-scale change to the health care system that participants discussed is the need for an integrated health system. Some noted that the current system does not always prioritize wellness. For example, information fields collected by the electronic health record are frequently driven by billing rather than information that might be needed for clinical decision-making. Consistent data collection and sharing would be aided by the use of commonly operationalized definitions, particularly when collecting information about SDOH. It was noted that the Centers for Medicare & Medicaid Services (CMS) instituted codes for SDOH in 2023, so a goal could be to ensure these codes are used and that clinicians are aware that SDOH information can be valuable for analyzing a clinical case, and not only a basis for referring a patient to nonmedical services.

Participants noted that patients may not be comfortable talking about factors such as SDOH with clinicians, that trust is essential to this process, and that trust must be earned. Transparency is important to building trust. With respect to clinical algorithms, transparency includes developing and publicizing standards for demonstrating an algorithm actually leads to more fair outcomes. Finally, the group discussed the need for a strategy to evaluate existing and changing models, and a pathway for building models into clinical decision-making and health care education.

Breakout group discussions

Summit participants broke into smaller groups to discuss the topics listed below. Each small group discussed the same topics and took notes of main points.

(1) Identify promising alternative factors that influence health care baseline to use instead of current proxies. Current and future. Common alternatives across health care and idiosyncratic alternatives in specialty areas.

(2) Identify a research pathway to evaluate promising alternative variables. Current and future. Traditional and nontraditional funding sources.

(3) Identify an implementation pathway to incorporate promising alternatives into clinical practice. Current and future. Facilitators and barriers to implementation in different regions.

After their breakout sessions, two members of the organizing committee and the first author collated breakout group notes. On the second day, all available Summit participants reconvened to compare and build on each group’s insights. In a final discussion, participants finalized their recommendations for a path forward to advancing precision in health assessment with the aim of achieving equity in health outcomes. Key takeaways are described below.

Promising alternative factors that influence health care assessment to use instead of current proxies

In a recent report entitled “Reconsidering Race in Clinical Algorithms: Driving Equity through New Models in Research and Implementation,”11 the authors wrote: “The impact of including race in clinical algorithms can vary widely, and can be:

Beneficial, if race is included in an intentional, well-considered effort to reduce inequities, and it represents true biological differences based on clinical evidence; Neutral/have no impact; or Harmful, if the inclusion of race in the algorithm perpetuates race-based medicine that disadvantages historically underserved populations and/or promotes the concept of innate biologic differences between racial groups that do not exist” (from the Executive Summary).

In addition, they wrote: “As a result, the best path to improving health equity will likely differ for each algorithm. The best option for a particular algorithm may be to update the algorithm to exclude race; to use an alternative to race, such as measures of SDOH; to retire the algorithm and replace it with an alternative that promotes equity; or to continue to include race” (from the Executive Summary).

The Summit participants agreed that race should not be included in clinical algorithms, whether statistical or AI/ML, unless it is intentional and beneficial, such as when it can reduce inequities. Where race has been serving as a proxy for social factors, it should be removed from existing clinical algorithms and replaced with more precise factors, resulting in algorithmic fairness and the promotion of racial health equity.

Several factors may make more sense to include than race in health care algorithms or decision-making tools. Some of these potential alternative factors include SDOH such as economic stability, education access and quality, health care access and quality, neighborhood and built environment, experiences of racism, and social and community context. Potential measures for these SDOH discussed by Summit participants are provided in Table 1.

Table 1. Social Determinants of Health and Methods of Measurement

Participants noted that in some assessments or algorithms, poverty may be the most significant influence behind observations previously associated with race.52,93 Participants cautioned about unintended consequences and about substituting one category of inequity for another.

Participants noted that language, particularly English as a second language, may account for some race- or culture-associated differences.45,94 Therefore, regional or culturally specific vocabulary should be considered when designing tests that include a language component.

The participants noted that completely removing race from consideration in the context of delivery of health care and health assessment may not be recommended, and the aim should be race-conscious and racism-conscious rather than race-based or race-blind health care. Collecting information about race is required by some government agencies to help track disparities, allocate resources, and research health-related outcomes. In some cases, it may be important to collect information about ancestry and information about a patient’s history (first- vs. second-generation American, for example) that could provide insight into environmental factors influencing health and disease. When race information is collected, Summit participants agreed there is a need for standardization and clarity in how race is defined for that study. Removing race from any algorithm should always be done with concern for unintended consequences and confirmation that its removal improves health outcomes and does not introduce new inequities. The participants endorsed the idea of equity until equality.

A research pathway to evaluate promising alternatives

When developing new algorithms or replacing race in current algorithms, the algorithms should be assessed for clear evidence that they improve health outcomes and do not introduce or reinforce inequities. Testing algorithmic fairness should always be a part of the research pathway.
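One way such a fairness check might look in practice is sketched below. This is a minimal illustration, not a method endorsed by the Summit: it assumes a binary classifier, compares true-positive and false-positive rates across groups, and reports the largest between-group gap. The function names, the group labels "A" and "B", and the toy records are all hypothetical.

```python
from collections import defaultdict


def rates_by_group(records):
    """Return per-group true-positive and false-positive rates.

    Each record is a (group, y_true, y_pred) tuple with 0/1 labels.
    """
    counts = defaultdict(lambda: {"tp": 0, "fn": 0, "fp": 0, "tn": 0})
    for group, y_true, y_pred in records:
        if y_true == 1:
            key = "tp" if y_pred == 1 else "fn"
        else:
            key = "fp" if y_pred == 1 else "tn"
        counts[group][key] += 1

    rates = {}
    for group, c in counts.items():
        positives = c["tp"] + c["fn"]
        negatives = c["fp"] + c["tn"]
        rates[group] = {
            # A rate is undefined (None) when a group has no such cases.
            "tpr": c["tp"] / positives if positives else None,
            "fpr": c["fp"] / negatives if negatives else None,
        }
    return rates


def max_gap(rates, metric):
    """Largest between-group difference for one metric, skipping undefined values."""
    values = [r[metric] for r in rates.values() if r[metric] is not None]
    return max(values) - min(values) if values else None


# Hypothetical toy data: (group, true outcome, algorithm's prediction).
records = [
    ("A", 1, 1), ("A", 1, 1), ("A", 1, 1), ("A", 1, 0),
    ("A", 0, 1), ("A", 0, 0), ("A", 0, 0), ("A", 0, 0),
    ("B", 1, 1), ("B", 1, 1), ("B", 1, 0), ("B", 1, 0),
    ("B", 0, 1), ("B", 0, 0), ("B", 0, 0), ("B", 0, 0),
]

rates = rates_by_group(records)
tpr_gap = max_gap(rates, "tpr")
fpr_gap = max_gap(rates, "fpr")
```

In this toy example the algorithm misses more true cases in group B than in group A while the false-positive rates match. Which gaps matter, and how large a gap is acceptable, would depend on the clinical context and would need to be rechecked after implementation.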

The participants noted that some new AI/ML algorithms are built on large datasets that are not carefully collected in a scientific manner and that embody historic beliefs in racial hierarchy. Therefore, the results will be affected by any bias present in the datasets. For example, if people from one region, from one socioeconomic group, or from one ethnicity are over- or underrepresented, the algorithm will be biased and potentially not applicable to all people. It is necessary that studies sample from the entire population and integrate populations that are underrepresented in biological research. For studies of biological underpinnings of disease, data from genomics and other omics studies and advances in precision health can help science move away from the illusion of specificity that race has provided.
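The over- and underrepresentation concern can be checked with a simple comparison before a dataset is used for training. The sketch below is illustrative only: it assumes that reference population shares are available for the groups of interest, and the function name, group labels, and numbers are hypothetical.

```python
from collections import Counter


def representation_ratios(sample_groups, population_shares):
    """Compare each group's share of the sample with its reference population share.

    A ratio near 1 suggests proportional representation; values below 1
    suggest underrepresentation and values above 1 overrepresentation.
    """
    counts = Counter(sample_groups)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("sample is empty")

    ratios = {}
    for group, pop_share in population_shares.items():
        if pop_share <= 0:
            raise ValueError(f"population share for {group!r} must be positive")
        sample_share = counts.get(group, 0) / total
        ratios[group] = sample_share / pop_share
    return ratios


# Hypothetical example: a dataset drawn mostly from one region.
sample = ["urban"] * 80 + ["rural"] * 20
population = {"urban": 0.5, "rural": 0.5}  # assumed reference shares
ratios = representation_ratios(sample, population)
```

A check like this only flags imbalance in whichever categories are measured. It does not address bias in how the data were recorded or labeled.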

In addition, the causality of any correlations identified by AI/ML algorithms is unclear. Additional work will be needed to determine whether interventions targeting variables found to be correlated with an outcome can improve wellness.

Summit participants suggested that when developing algorithms, optimal use should be made of common data elements (CDEs) (https://cde.nlm.nih.gov/home) to allow data to be shared and combined. These CDEs should be built into NIH journal guidelines. CDEs offer existing standardized definitions that can be used across disciplines to ensure common definitions and increase consistency between groups and across studies. Algorithms using CDEs may be more easily incorporated into wider use by groups that also use these definitions. The factors chosen can inform longitudinal, population-based research such as the All of Us Research Program. There is a need for comprehensive longitudinal studies and for a focus on multi- and transdisciplinary approaches. The group recommended the development of a robust ADI and AOI as metrics that can be used across different types of research.

Proxies should be avoided, and data elements collected should be limited to those tied to the outcome of interest. For sex and gender, for example, consider whether sex assigned at birth is relevant, or whether hormone levels or genetics are the underlying factor that should be collected. The participants noted that even animal studies are biased, including only male animals, so future studies should include diversity with respect to sex and sex hormones. More research is also needed on the biological and physical changes associated with the experience of stress, discrimination, and with SDOH.

In survey research, the group recommended carefully considering self-reported questions and phrasing them to avoid stigma and stereotypes and to minimize heterogeneity that interferes with data collection. It is necessary to be conscious of stigma that can reduce participation and of cultural considerations in answering personal questions. The group also recommended allowing more granularity for self-report of gender and race and weighing the impact of that granularity on the outcome against potential harm.

AI could be a valuable tool for improving precision medicine, provided that researchers guard against unintended consequences and ensure that any algorithms developed foster positive health changes. Public data are used to “train” AI/ML, so AI/ML producers should be partners investing in the research. The group recommended considering structuring AI/ML research pathways similar to those for biologics, including continued monitoring after implementation. Because large-scale AI/ML research requires bridging subject matter and technology expertise, the group recommended finding ways to foster the development of scientists who can bridge this gap, and also to challenge health care researchers to learn more about AI and diverse tools.

Future research must include consideration of data privacy and security. When collecting large datasets for AI/ML algorithms, privacy and HIPAA limits to data access and federal regulations of data mining must be kept in mind. All projects must plan for the security of all data, including cloud-based protections, and consider the unintended consequences of open data sharing. For example, data must be anonymized or protected against unintended uses by insurance companies, law enforcement, or industry. Maintaining data privacy and security is essential to trust and community participation, and trust and community participation are essential to obtaining unbiased decision-making tools.

In publications, there should be transparency about why specific variables are used or not used, with explanations that can be referred to in future research.11,73,95,96 For example, when race is included, the article should be clear about how race was defined and how information about race was collected. Standardized definitions (e.g., CDEs) can help to ensure consistency of definitions. Similarly, scientists must clearly define what information was collected when using sex or gender as a variable, since these terms have often incorrectly been used interchangeably. In some research scenarios, hormone status may be relevant, while in others, the relevant parameter might be gender identity or gender-based discrimination. The participants agreed that going forward, they should provide these explanations in their own work and serve as role models for how research should be done. See further guidance from the National Academies of Sciences, Engineering, and Medicine, and the AMA.

Recommended future research directions include open science, including citizen science, while keeping in mind the costs of doing so. Leveraging the electronic medical record and taking a

An implementation pathway to incorporate promising alternatives into clinical practice

Implementing promising algorithms into clinical practice will require workforce development and training. Participants noted that though increasing the diversity of the workforce is an important step to addressing trust barriers, it is not possible to assess workplace diversity because the Office of Management and Budget does not track diversity of the health care workforce with granularity. Making opportunities to participate in health care more visible to children could inspire more people from all backgrounds to enter the health care workforce. Also, being mindful of the

Building coalitions, including partnerships with public health and local policymakers, will be key. Clinicians will need to become “solutionists” and, by extension, activists to influence policy. Advocating to change incentive structures in health care from relative value units to wellness outcomes would be an important step. Attracting community involvement requires trust and should include acknowledgment of past wrongs. Improving community health literacy for all is an important goal, including health literacy for children. Concurrently, efforts should be made to improve the communication of science to lay groups and to make sure communication and care are culturally and linguistically relevant. It is recommended that the research narrative change from a focus on identifying the negative influences that impair health to a focus on creating a culture of health and wellness and identifying factors and actions that improve health outcomes.

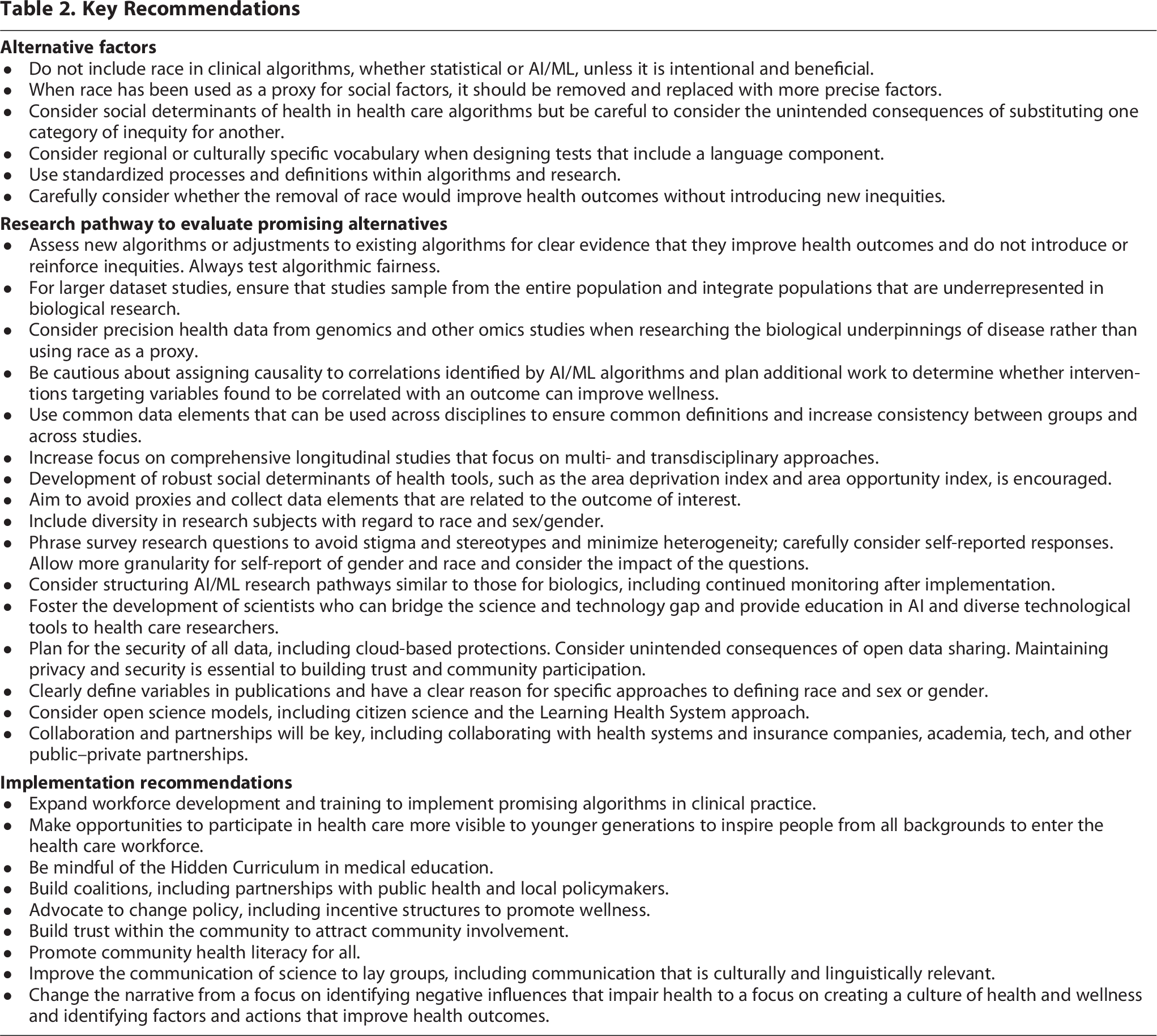

Key takeaways are summarized in Table 2.

Table 2. Key Recommendations

Conclusions

The participants in the Summit on Advancing Precision in Health Assessment arrived at several recommendations for removing proxies from assessments and clinical algorithms with an aim of achieving more personalized medicine and improving fairness and equity in medical care. Participants strongly supported calls to replace race-based decision-making tools that erroneously consider race as a biological variable with race-conscious medicine. Participants acknowledged that it can be important to collect information about race for purposes of studies of fairness and to assess effectiveness of programs aiming to reduce inequity. If race is included as a variable in a study, participants recommend publications make clear the rationale for its inclusion and, when possible, how its inclusion improves accuracy.

In clinical algorithms, race likely serves as a proxy for underlying factors. When replacing race with other variables such as SDOH, participants emphasized the need to define variables consistently, to collect only the minimum information needed for a study, and to collect information in a culturally considerate way.

To research and develop new or updated clinical algorithms, participants expressed optimism that big data and AI/ML tools will help achieve precision medicine and do away with the need to consider constructs such as race, but noted the need for oversight. Participants supported continued investment in basic research, in the development of a workforce that bridges the gap between the clinic and the technology, and in pathways to assess the fairness of algorithms before and after implementation.

To implement new clinical algorithms and achieve greater equity in health care, participants emphasized the need for diversity among medical practitioners and for educating the workforce on the advantages and limitations of AI tools. Implementation will require increased health literacy and partnerships with public health, government, industry, and, most importantly, communities. The basis of community involvement is trust.

Challenging and evolving from the status quo will be difficult, but committing to equity until equality, accepting equity and the work that will be required to achieve it, is the first step.

Glossary of Terms

Allostatic load: The cost of chronic exposure to fluctuating or heightened neural or neuroendocrine responses resulting from repeated or chronic environmental challenges that an individual reacts to as being particularly stressful.38

Ancestry: Ancestry refers to a person’s ethnic origin or descent, “roots,” or heritage, or the place of birth of the person or the person’s parents or ancestors before their arrival in the United States.98

Clinical algorithm: A set of rules or procedures to solve a clinical problem of diagnosis or management.99

Equity: Fairness or justice in the way people are treated, and especially freedom from bias or favoritism.100

Gender: Depending on the context, gender may reference gender identity, gender expression, and/or social gender role, including understandings and expectations culturally tied to people who were assigned male or female at birth.101