Abstract

Behavioral analysis of moving animals relies on a faithful recording and track analysis to extract relevant parameters of movement. To study group behavior and social interactions, simultaneous analyses of individuals are often required. To detect social interactions, for example to identify the leader of a group as opposed to followers, one needs an error-free segmentation of individual tracks throughout time. While automated tracking algorithms exist that are quick and easy to use, errors inevitably occur during tracking. To solve this problem, we introduce a robust algorithm called epiTracker for segmentation and tracking of multiple animals in two-dimensional (2D) videos along with an easy-to-use correction method that allows one to obtain error-free segmentation. We have implemented two graphical user interfaces to allow user-friendly control of the functions. Using five labeled 2D datasets, the effort to obtain accurate labels is quantified and compared to alternative available software solutions. Both the labeled datasets and the software are publicly available.

Introduction

Laboratory animal models are widely used for behavioral studies. Social animals, including many fish species, show specific group behavior with complex interactions. Experimental setups have been established to analyze group behavior with respect to underlying genetics and modulation by drugs. To study these and analyze interactions between individual behavior and group dynamics, the positions of all individuals need to be known. This can be achieved in a comprehensible way by tracking the positions in recorded videos. An easy solution is the tracking of marked individuals, but the physical marking of individuals may affect their behavior.1,2 A burdensome and error-prone possibility is the manual annotation of positions throughout time. Furthermore, for the human eye, individuals are often indistinguishable, which hinders a reliable identification. Also, manual tracking is exhausting, and when tracks cross or touching of individuals results in errors, these are propagated throughout the video.

Automated image processing and object tracking offer the possibility to relieve the workload by using software that automatically condenses video information into trajectories of all moving objects. 3 Depending on the experiment, the individuals need to be identified and tracked as quickly as possible or as accurately as possible.

Both cases require an image analysis that is capable of recognizing individuals within a video time sample (= image) and tracking them throughout time. Tracking of objects in videos can basically be done in two ways: First, single images can be used for segmentation and object detection, and the positions of the objects in the following frames deliver the trajectory. For this purpose, either local image features [e.g., scale-invariant feature transform (SIFT) and histogram of oriented gradients (HOG)] are extracted from single images and bag of visual words representations are generated, on whose basis objects are determined using classifiers (e.g., support vector machines),4–9 or exact models of the objects to be found are compared with the given data.10–13 Feature extraction and classification can also be performed using deep learning models, 14 although these usually require a sufficient amount of training data. Second, the change rate of intensity or colors can be directly used to follow objects (e.g., with a Kalman filter or particle filter).15–20

Besides algorithms for two-dimensional (2D) tracking, there are methods for three-dimensional (3D) tracking of fish,21–26 rodents, 27 dolphins, 28 and flies. 29 While 3D tracking can provide additional insights for behavioral analysis, 2D tracking is often preferred for its simplicity in experiment design and evaluation, and should therefore not be omitted. There is also commercial software like EthoVision, 30 which, however, costs several thousand dollars.

While many of the algorithms work well under simple conditions, they fail if the movement of objects is too small or too large 17 (e.g., individuals stay in their position or perform jerky leaps), or if videos contain shadows or reflections (e.g., from glass boundaries or water surfaces). Furthermore, if more than one individual is to be tracked, object detection suffers from touching and crossing of individuals, and often the segmentation is correct but the assignment of objects to individuals is wrong.

To avoid error propagation throughout the video, idTracker 9 extracts texture features of each animal to identify them during tracking. Recently, the new software package idTracker.ai was presented, 31 which is capable of automatically tracking multiple objects in videos. It was shown that the software works on heterogeneous data like videos of mice, zebrafish, and Drosophila. Most tools, however, cannot be adapted to special domains, as will be shown later, and this would be necessary under more complex recording conditions. This hinders a fully error-free description of the movement trajectories, which is, however, mandatory in behavioral science. For example, if leadership within a fish swarm is to be quantified by the distance from fish to swarm, a single swap in the assignment of objects to fish will invalidate this feature. Thus, manual corrections are required, and repetitions of the tracking algorithms are necessary. There are no frameworks, however, to support this approach in a semiautomatic manner.

We introduce a new method 32 designated for the robust 2D tracking of fishes (here, medaka and zebrafish) under challenging conditions. Since errors in the generated trajectories are unavoidable under these conditions, we further introduce a new and comprehensive tool not offered by any other available tracking software, supporting the user to easily change annotations if errors occur. In doing so, the tool facilitates both error detection and the subsequent correction process via a semiautomatic procedure. This leads to a completely new way of processing the data, consisting of tracking, correcting, and benchmarking, giving users the possibility to repeat, optimize, and compare processing algorithms. Our aim is to provide a software that can be used in behavioral sciences and can be handled without programming skills. Furthermore, we present a new benchmark dataset consisting of five labeled zebrafish and medaka videos, which for the first time allows a comparison of fish-tracking algorithms under differing conditions. Based on this benchmark, we introduce a new criterion to evaluate the manual effort to obtain error-free tracking results and use this criterion to compare and discuss our method with two state-of-the-art tracking algorithms.

Materials and Methods

Concept

Our goal is to track animals in videos recorded with a top-down perspective and to obtain error-free trajectories. We are aiming to process videos with up to 10 individuals and restrict ourselves to videos of fish in tanks (fish recordings, in contrast to those of mice, suffer from more frequent crossings and possible reflections on the water surface; and, when compared to Drosophila flies, fish are bigger in size and show a lower average speed, which is more suitable for standard cameras). The frame rate needs to be at least 30 Hz to avoid tracking errors in fast movements. A resolution of 75 pixels per object is needed to segment reliably without taking too much processing time.

The main focus of the software lies on the robust tracking of various fish species, differing in form, color, or swimming properties. To be comfortable for the user, the tracking needs to take place autonomously without the need for continuous interaction.

If video recording is done with great care, autonomous tracking is feasible; errors, however, are inevitable and occur often. Basically, we face two types of error sources: External influences on the videos, such as waves on the surface, mirroring effects, and inhomogeneous brightness, change the video content (e.g., color distribution and object position); and internal influences (crossing of fish trajectories and jump-like movements) hinder proper object detection and tracking.

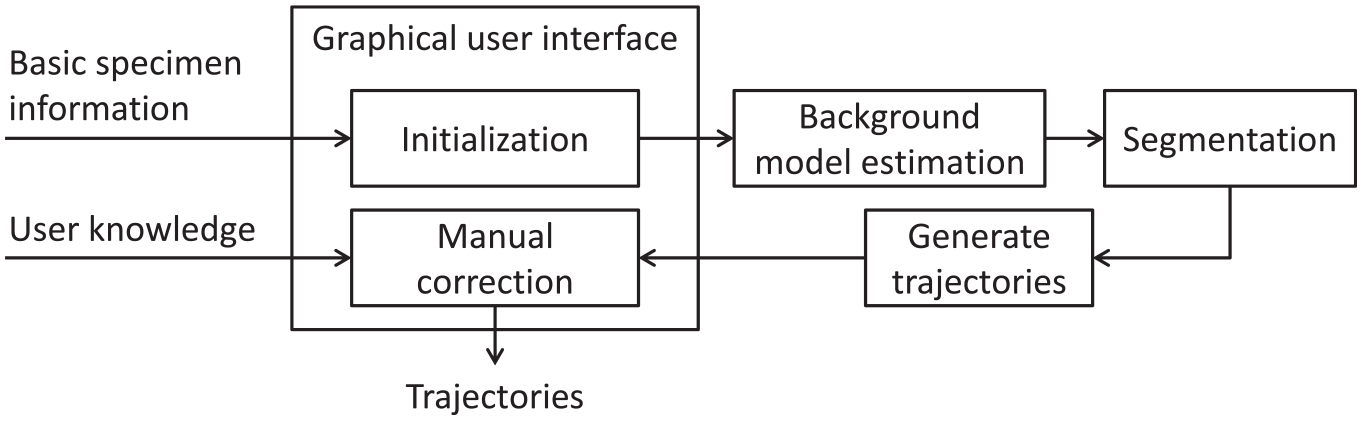

To cope with these effects, we propose the following workflow (see Fig. 1 ): Basic species information and parameters are requested from the user in a graphical user interface (GUI), which initializes all algorithms. The user is guided through the whole tracking process and might specify the processing parameters.

Workflow: The user provides the video and basic information about the fish via the graphical user interface (GUI). After estimation of the background model, segmentation of the individual images follows. The segmentation results are used to incrementally build the trajectories, which are then corrected using the GUI.

A background model is used to suppress external influences, such as inhomogeneous brightness, reflections of illumination sources, and so on. The model is estimated from the pixel-based median of a set of 25 images with a temporal distance of 10 s. Using the median instead of the mean has the advantage of preventing artifacts and ghosting effects caused by fish visible in one of the images.
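The benefit of the median over the mean can be illustrated with a minimal sketch. It assumes grayscale frames stored as nested lists; the function name `estimate_background` and the toy intensities are hypothetical and not taken from the epiTracker code.

```python
from statistics import median

def estimate_background(frames):
    """Pixel-wise median over a list of equally sized grayscale frames.

    Each frame is a list of rows; each row is a list of intensities.
    """
    height, width = len(frames[0]), len(frames[0][0])
    return [
        [median(frame[y][x] for frame in frames) for x in range(width)]
        for y in range(height)
    ]

# Toy data: a dark "fish" (intensity 20) covers the middle pixel in one of five frames.
empty = [[200, 200, 200]]
with_fish = [[200, 20, 200]]
frames = [empty, empty, with_fish, empty, empty]

background = estimate_background(frames)

# For comparison: the mean of the same pixel is pulled toward the fish (ghosting).
mean_pixel = sum(frame[0][1] for frame in frames) / len(frames)

print(background)
print(mean_pixel)
```

In this toy case the median returns the undisturbed tank intensity for the middle pixel, while the mean is darkened by the single frame that contains the fish.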

Segmentation is done for each video frame separately (which allows the recovery of objects not being detected in previous steps, which would otherwise be lost for the rest of the video) with the aim to detect objects and suppress disturbances. Since disturbances can usually be evaluated regarding either the background or the color channel, a combination of two complementing processing steps is used. The first step uses the information of the background model to blend out stationary items with a similar color to the fish. The second step uses the color information specified by the user to blend out shadows and wall reflections caused by the fishes. Details are given in the online supplements. In a subsequent step, individual objects are extracted that represent connected areas of foreground pixels. The objects are then filtered based on their sizes to remove too-small objects. The remaining objects are then transferred to the subsequent tracking step.
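The exact segmentation rules are given in the supplements and are not reproduced here. The following is only a sketch of the general idea under stated assumptions: grayscale intensities, a background threshold `p_bg` applied to the absolute difference from the background model, a color threshold `p_col` applied to the absolute difference from the user-selected fish intensity, and 4-connected components. The function `segment` and all values are hypothetical.

```python
from collections import deque

def segment(frame, background, fish_value, p_bg, p_col, min_size):
    """Return a list of objects, each a list of (row, col) foreground pixels."""
    h, w = len(frame), len(frame[0])
    # A pixel is foreground only if it differs enough from the background model
    # (assumed step 1) and is close enough to the fish color (assumed step 2).
    mask = [
        [
            abs(frame[y][x] - background[y][x]) > p_bg
            and abs(frame[y][x] - fish_value) < p_col
            for x in range(w)
        ]
        for y in range(h)
    ]
    seen = [[False] * w for _ in range(h)]
    objects = []
    for y in range(h):
        for x in range(w):
            if not mask[y][x] or seen[y][x]:
                continue
            # Breadth-first search collects one 4-connected component.
            component, queue = [], deque([(y, x)])
            seen[y][x] = True
            while queue:
                cy, cx = queue.popleft()
                component.append((cy, cx))
                for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                    if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            # Size filter: discard objects that are too small.
            if len(component) >= min_size:
                objects.append(component)
    return objects

# Toy scene: a stationary dark spot at (0, 0), a three-pixel fish,
# a one-pixel noise blob at (0, 3), and a gray shadow at (3, 3).
background = [[30, 200, 200, 200], [200] * 4, [200] * 4, [200] * 4]
frame = [
    [30, 200, 200, 25],
    [200, 25, 25, 200],
    [200, 25, 200, 200],
    [200, 200, 200, 120],
]
objects = segment(frame, background, fish_value=25, p_bg=50, p_col=30, min_size=2)
print(objects)
```

In this toy scene the stationary spot is removed by the background criterion, the shadow by the color criterion, and the isolated pixel by the size filter, so only the fish remains.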

To generate trajectories, the segmentation results of the individual frames are aggregated throughout time. Tracks that represent a chronological sequence of object positions are used for this purpose. At the beginning (t = 0), each segmented object creates a new track. In the next time step (t = 1), the segmented objects of this time step are assigned to the corresponding tracks. The corresponding tracks are found using James Munkres’s variant of the Hungarian algorithm, 33 an established and runtime-optimized method to solve assignment problems. If the cost of an assignment is too high, a new track is created for the object, and the unmatched track is stopped. To improve the quality of the assignment, a Kalman filter 34 is used to predict the position of the tracks for the time step of the assignment. After the whole video is analyzed, the tracks are postprocessed. Tracks that exist for only a short duration are considered as noise and therefore deleted. After that, incomplete tracks are combined by analyzing the time, position, and speed difference between the start and end of the tracks. After the tracking and postprocessing are completed, some tracks can still be incomplete and thus do not extend throughout the whole video duration; because not all fish are always found in the segmentation, a dynamic memory structure is necessary that allows trajectories to start and end at any time.
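The assignment step with a cost limit can be sketched as follows. This is not the Munkres implementation used by the software: for readability, an exhaustive search over permutations stands in for the Hungarian algorithm (it finds the same minimum-cost assignment but scales factorially, so it is only suitable for toy inputs). The Euclidean distance as cost, the padding with dummy entries, and the names `assign` and `max_cost` are assumptions. The `predicted` positions are assumed to come from a motion model such as the Kalman filter.

```python
from itertools import permutations
from math import dist

def assign(predicted, detections, max_cost):
    """Match predicted track positions to detections with minimal total distance.

    Returns (matches, unmatched_tracks, unmatched_detections); matches is a
    list of (track_index, detection_index) pairs.
    """
    n_t, n_d = len(predicted), len(detections)
    size = max(n_t, n_d)

    def cost(i, j):
        if i < n_t and j < n_d:
            return dist(predicted[i], detections[j])
        return max_cost  # dummy row or column pads the problem to a square

    # Exhaustive stand-in for the Hungarian algorithm (toy sizes only).
    best = min(
        permutations(range(size)),
        key=lambda perm: sum(cost(i, j) for i, j in enumerate(perm)),
    )
    # Keep only real pairs whose cost is acceptable.
    matches = [
        (i, j) for i, j in enumerate(best)
        if i < n_t and j < n_d and cost(i, j) <= max_cost
    ]
    matched_t = {i for i, _ in matches}
    matched_d = {j for _, j in matches}
    unmatched_tracks = [i for i in range(n_t) if i not in matched_t]      # tracks to stop
    unmatched_detections = [j for j in range(n_d) if j not in matched_d]  # new tracks
    return matches, unmatched_tracks, unmatched_detections

predicted = [(10, 10), (50, 50)]
detections = [(52, 49), (11, 10), (90, 90)]
result = assign(predicted, detections, max_cost=15)
print(result)
```

In the example, both existing tracks find a nearby detection, and the third detection is left unmatched so that it can start a new track. A polynomial-time solver would replace the exhaustive search in practice.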

With a GUI, the user can easily navigate through the video and directly jump to its key points to do manual corrections. On one hand, these are time points when fish have touched each other, and therefore an exchange of the assignment is possible; on the other hand, these are time points when fishes are lost, and thus tracks end prematurely. These tracks are indicated in the GUI by a different marker color for easy identification. The GUI also supports the user in the process of correcting and merging trajectories by interpolating the positions between annotations and optionally merging trajectories if their positions line up after the correction. This enables a simplified evaluation and faster corrections of trajectories.
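How positions between two manual annotations might be filled in can be sketched with linear interpolation. The source does not state which interpolation scheme the GUI uses, so the linear scheme, the function name `interpolate_track`, and the coordinates below are assumptions.

```python
def interpolate_track(annotations):
    """Fill every frame between manually annotated key frames.

    `annotations` maps frame index -> (x, y). The result covers all frames
    from the first to the last annotated frame.
    """
    frames = sorted(annotations)
    track = {}
    for start, end in zip(frames, frames[1:]):
        (x0, y0), (x1, y1) = annotations[start], annotations[end]
        span = end - start
        for frame in range(start, end):
            t = (frame - start) / span  # 0 at the first key frame, approaching 1 at the next
            track[frame] = (x0 + t * (x1 - x0), y0 + t * (y1 - y0))
    if frames:
        track[frames[-1]] = annotations[frames[-1]]
    return track

# Two annotations four frames apart; the three frames in between are interpolated.
track = interpolate_track({100: (10.0, 20.0), 104: (18.0, 20.0)})
print(track)
```

With such a scheme, the user only has to annotate the frames where the motion changes noticeably, which is consistent with the goal of reducing the manual correction effort.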

Benchmark

Benchmarks are used to objectively compare the performance of object-tracking algorithms. They allow the algorithms to be evaluated on the same data with the same criteria. Since, however, there is no benchmark that is specifically designed for tracking several fishes, a new benchmark is presented here. The labeled dataset is freely available and can be downloaded from https://doi.org/10.5281/zenodo.3885775.

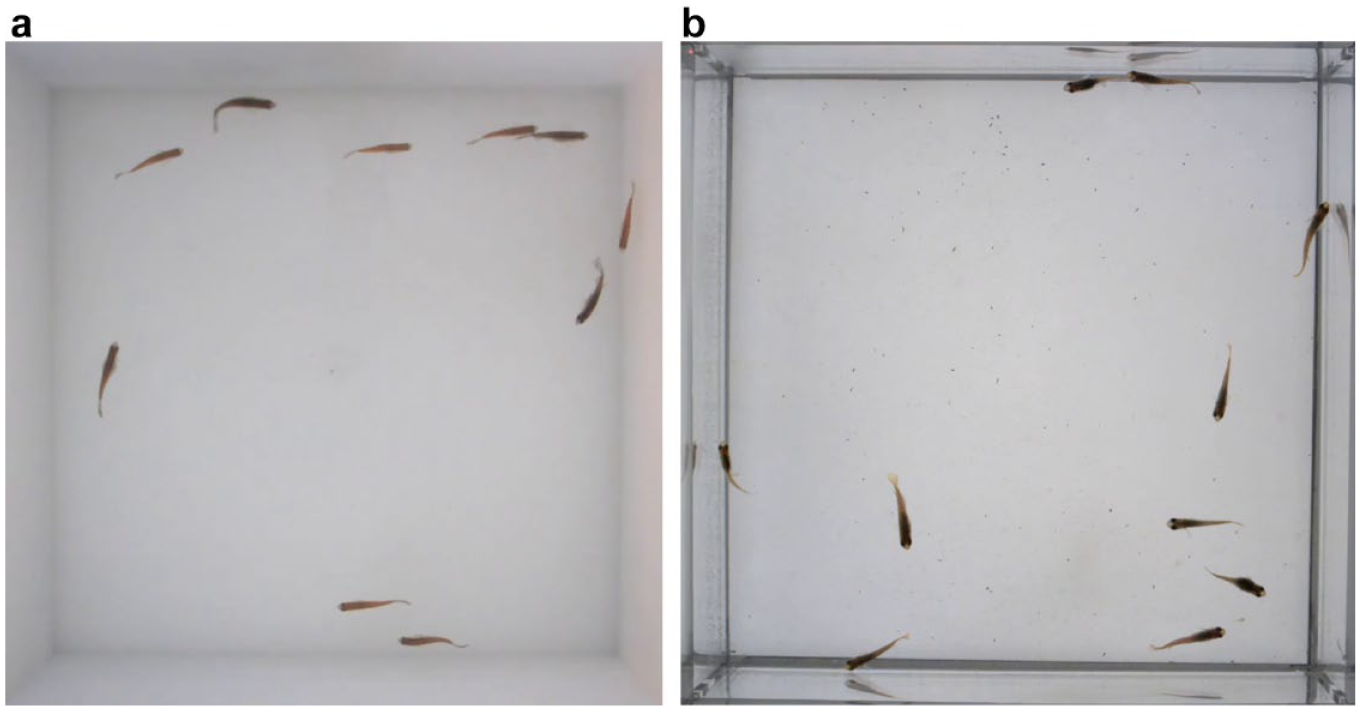

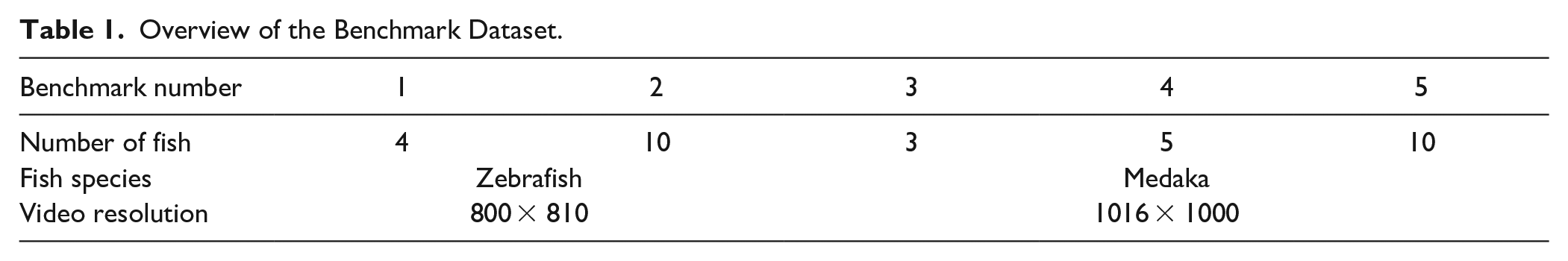

To test algorithms under various conditions as they occur in different laboratory environments, five videos differ in fish species (zebrafish and medaka; see Fig. 2 ), number of individuals (3–10; see Table 1 ), lighting, and type of tank. Each video consists of 10,000 frames, which at a frame rate of 30 FPS (frames per second) corresponds to a length of about 5.5 min.
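The stated video length, and the total size of the benchmark that follows from it, can be checked with a few lines of arithmetic. The variable names are illustrative only.

```python
frames_per_video = 10_000
fps = 30
n_videos = 5

minutes_per_video = frames_per_video / fps / 60
total_minutes = n_videos * minutes_per_video
total_frames = n_videos * frames_per_video

print(f"{minutes_per_video:.2f} min per video")
print(f"{total_minutes:.1f} min and {total_frames} frames in total")
```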

Figure 2. Preview of the recording.

Overview of the Benchmark Dataset.

In the recordings, the zebrafish differ from medaka by a higher average speed and sudden movements. Therefore, crossing and contacts occur more frequently, but mostly during only a short time period like a fraction of a second. Medaka show a lower average speed, and in some cases, they even remain still throughout a longer period of time. Due to the lower speed, there are fewer crossings and touches of the fish, but the crossings can last several seconds.

Since the medaka hardly move after the fish are released into the tank, the start of the recording is delayed until the first fish start to move again. At the first recording, 10 medaka fish were placed in the tank, while for the further recordings, some of the fish were taken out of the tank to obtain the required number of fish for the following recordings. Thus, it is possible that the swimming behavior changes due to the longer dwell time of the fish in the tank.

Furthermore, recordings differ in terms of the recording conditions and therefore the difficulty ( Fig. 2 ): Zebrafish videos were recorded under almost optimal conditions. To prevent reflections of the fishes on the walls, an opaque tank was used. In addition, the scene was illuminated by a diffuse light source mounted above the tank (uniform illumination without dark spots and light reflections on the water’s surface). Medaka videos were taken in a regular tank, in which wall reflections occur; the medaka fishes were recorded under different conditions to also test the algorithms under less favorable conditions, which are sometimes unavoidable due to experimental requirements. Also, the lighting setup was changed. The tank was illuminated from underneath by light-emitting diode (LED) panels, which result in good contrast but also lead to dark areas at the panel junctions that are similar in color to the fish.

All videos are completely labeled and checked for consistency. Parameters are given in Table 1 .

To evaluate the performance of tracking algorithms, we introduce the following measures, which are normalized such that 1 indicates the best possible result, and 0 the worst possible (or an unacceptable) result. Results of these measures are displayed in percentage format, and changes to the original measure are indicated by an asterisk (*).

MOTA: Validity of the trajectories (estimated from false positives, false negatives, and mismatches of the assignment)

MOTP: Precision of the trajectories (the distance between the ground truth objects and the corresponding detected objects)

Mostly tracked: Number of objects being tracked ≥80% of the time

Mostly lost: Number of objects being tracked ≤20% of the time

Fragmentation: Number of fragments comprising a trajectory

TTC: Estimated time for error-free correction using the introduced GUI
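As a rough illustration of how such measures are computed, the sketch below uses the commonly cited multiple object tracking accuracy definition (one minus the summed errors divided by the number of ground truth objects) and simple threshold counts for "mostly tracked" and "mostly lost." The normalized variants used in this article (marked with an asterisk) may differ in detail. The function names and all numbers are hypothetical.

```python
def mota(false_negatives, false_positives, mismatches, ground_truth_objects):
    """Standard MOTA: 1 minus the error rate over all ground truth objects."""
    errors = false_negatives + false_positives + mismatches
    return 1.0 - errors / ground_truth_objects

def mostly_tracked_score(tracked_fractions, threshold=0.8):
    """Share of individuals tracked for at least `threshold` of the time."""
    hits = sum(1 for f in tracked_fractions if f >= threshold)
    return hits / len(tracked_fractions)

def mostly_lost_score(tracked_fractions, threshold=0.2):
    """1 minus the share of individuals tracked for at most `threshold` of the
    time, so that 1 is the best result (no individual is mostly lost)."""
    lost = sum(1 for f in tracked_fractions if f <= threshold)
    return 1.0 - lost / len(tracked_fractions)

# Hypothetical counts summed over all frames of one video.
score = mota(false_negatives=300, false_positives=200, mismatches=100,
             ground_truth_objects=10_000)

# Hypothetical fraction of frames in which each of four fish was tracked.
fractions = [0.95, 0.90, 0.85, 0.50]

print(f"MOTA: {score:.0%}")
print(f"Mostly tracked: {mostly_tracked_score(fractions):.0%}")
print(f"Mostly lost: {mostly_lost_score(fractions):.0%}")
```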

Implementation

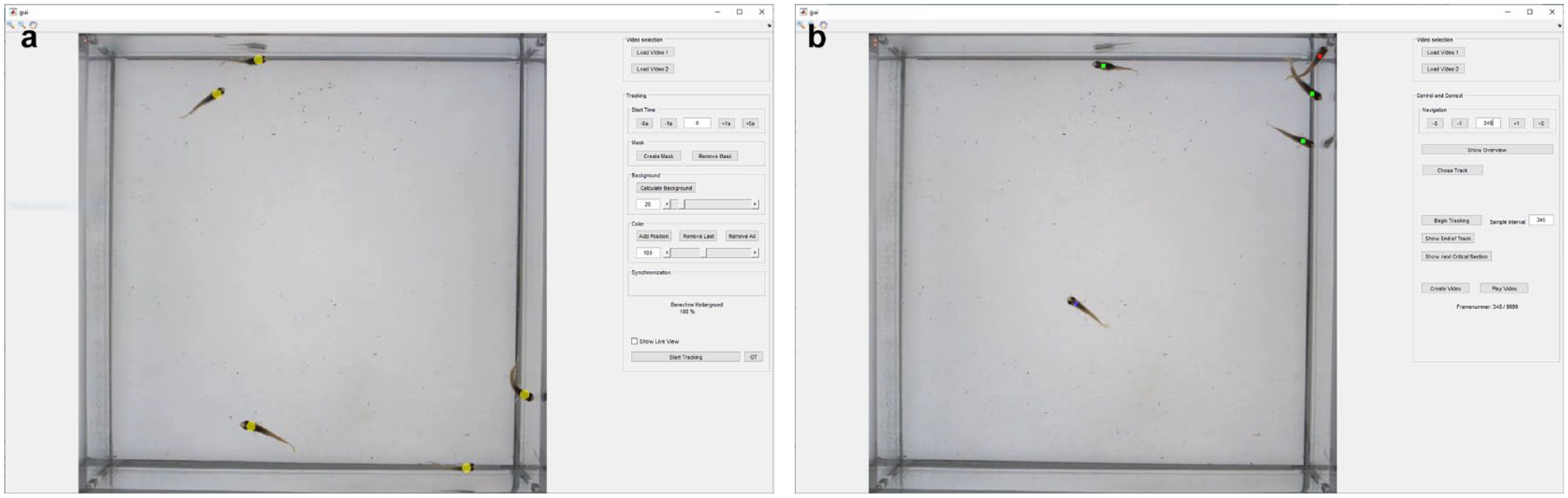

For the operation of the algorithms and the adjustment of the parameters, a GUI was designed (see Fig. 3a ). This enables non-specialized users to operate the algorithms. The interface features two modes: a tracking mode for setting parameters and executing the tracking, and a control and correction mode for optimizing the generated tracks in a semiautomated fashion.

Figure 3. Graphical user interface of the epiTracker.

In tracking mode, the video to be analyzed is selected via a file selection window. The first frame is displayed in the preview, and various setting options are shown. To support the user, the user's inputs are visualized in the preview. The start time, at which the tracking should begin, is specified, and the preview is updated to show the corresponding frame. To mask out uninteresting areas outside of the fish tank, the user is able to specify an area. Masked-out areas will be blacked out in the preview.

Based on the set parameters, the background model is calculated via the button “Calculate Background,” and the user is asked to specify the color of the fish by selecting pixels in the preview with the mouse after pressing “Add Position.”

The segmentation threshold values p_col and p_bg are set via either sliders or text input, and the resulting mask m_ges is displayed in the preview. (Finding the ideal threshold values is not an easy task and often requires several adjustments. By visualizing the mask in combination with the sliders, however, the setting of the threshold values is considerably simplified.) The tracking can be started via a button; the progress is displayed in an info box.

After the tracking is completed, the interface switches to the “Control and Correct” mode (see Fig. 3b ). Here, the results of the tracking can be verified, and corrections can be made. A preview shows the locations of the tracks for a selected frame. A green marker indicates that a track is complete (i.e., it covers the entire video duration). A red marker, in contrast, indicates that a track is incomplete.

To correct erroneous tracking results, the GUI offers possibilities to either extend tracks semiautomatically or combine tracks. This procedure is repeated until all tracks are complete (marked green).

Then, the GUI shows time points at which the fish touched each other, because it is possible that the assignment changed between fish. If an error is detected in the track, its positions can be overwritten by manual annotations. A change of the assignment can be fixed by connecting the corrected track with the following track, as described above.
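One possible way to find such candidate time points is sketched below. The source does not describe how contacts are detected, so the distance threshold, the data layout, and the function name `contact_frames` are assumptions rather than a description of the implemented method.

```python
from itertools import combinations
from math import dist

def contact_frames(tracks, touch_distance):
    """List (frame, id_a, id_b) for every frame in which two tracks come closer
    than `touch_distance`.

    `tracks` maps a track id to a list of (x, y) positions, one per frame;
    all lists are assumed to have the same length.
    """
    n_frames = len(next(iter(tracks.values())))
    events = []
    for frame in range(n_frames):
        for a, b in combinations(sorted(tracks), 2):
            if dist(tracks[a][frame], tracks[b][frame]) < touch_distance:
                events.append((frame, a, b))
    return events

# Two fish swim toward each other and pass closely in frame 1.
tracks = {
    "A": [(0, 0), (5, 0), (10, 0)],
    "B": [(20, 0), (8, 0), (0, 0)],
}
events = contact_frames(tracks, touch_distance=5)
print(events)
```

Frames reported in this way are the ones a user would want to inspect first, because an exchange of the assignment can only happen where two individuals come close to each other.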

Results

In this section, the performance of the epiTracker is evaluated and compared to the performance of the idTracker, Version 2.1, and its successor, the idTracker.ai, Version v1.0.3-alpha.

Due to repeated software crashes, it was not possible to evaluate the recordings of medaka fish with the idTracker.ai. A complete reinstallation of the software, renaming the files and folders, and converting the videos to .mp4 (H.264 encoding) and .avi (motion JPEG encoding) using Matlab did not improve the situation. This might be fixed in newer versions of the software.

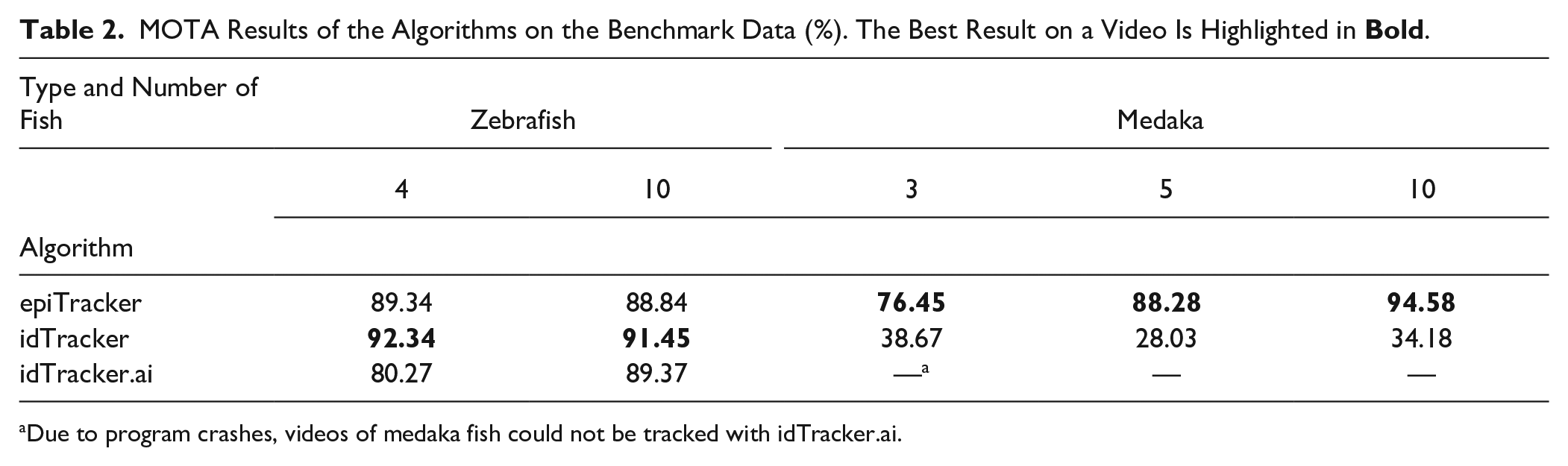

With the MOTA measure, it is possible to gain a quick overview of the performance of the algorithms, because it covers the most important error types: false positives, false negatives, and assignment mismatches. Because it is an important measure, Table 2 shows the achieved MOTA results of the algorithms on the individual videos of the benchmark. Using denoised zebrafish videos, all methods deliver roughly the same outcomes; using the more complex videos of medaka, the new epiTracker shows clear advantages. The recording conditions of the medaka videos greatly increase the previously low probability of false-positive and false-negative predictions, and while the lower swimming speed eases the tracking in normal situations, it also leads to long crossing times that are harder to resolve based on the previous movements.

Table 2. MOTA Results of the Algorithms on the Benchmark Data (%).

Due to program crashes, videos of medaka fish could not be tracked with idTracker.ai.

Regarding the error-free recordings of zebrafish, it can be seen that the values for MOTA are quite similar between the algorithms. The idTracker shows the best results with 92% (four individuals) and 91% (10 individuals), while the results of the epiTracker are about 3% lower. The idTracker.ai scores 89% on the video with 10 zebrafish, while the score of 80% for four individuals is 12% worse than that of the idTracker.

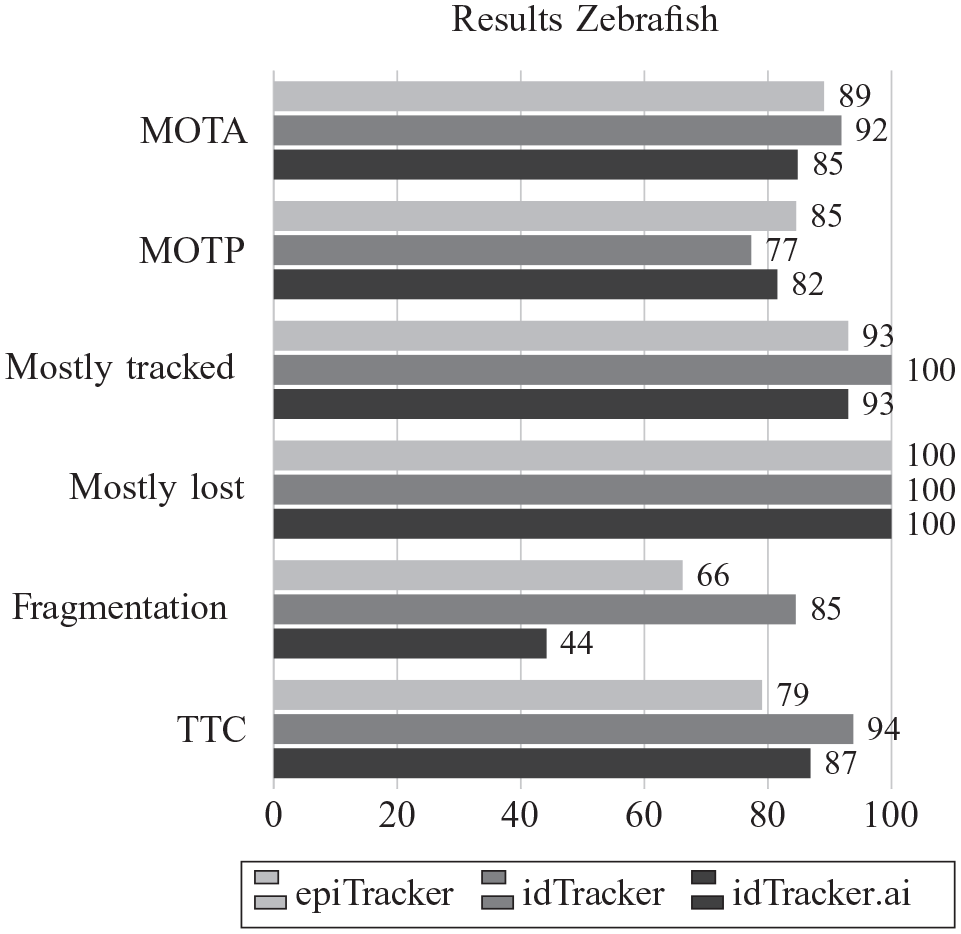

Figure 4 shows further evaluation measures, which represent the averages of all zebrafish recordings. The differences are higher when considering the other evaluation metrics (cf. Fig. 5 ). The best MOTP result is achieved by the epiTracker with a value of 85%, while the worst value of 77% is achieved by the idTracker. The difference among the three algorithms is less than two pixels for both videos.

Averaged results of the epiTracker, idTracker, and idTracker.ai on the benchmark videos of zebrafish.

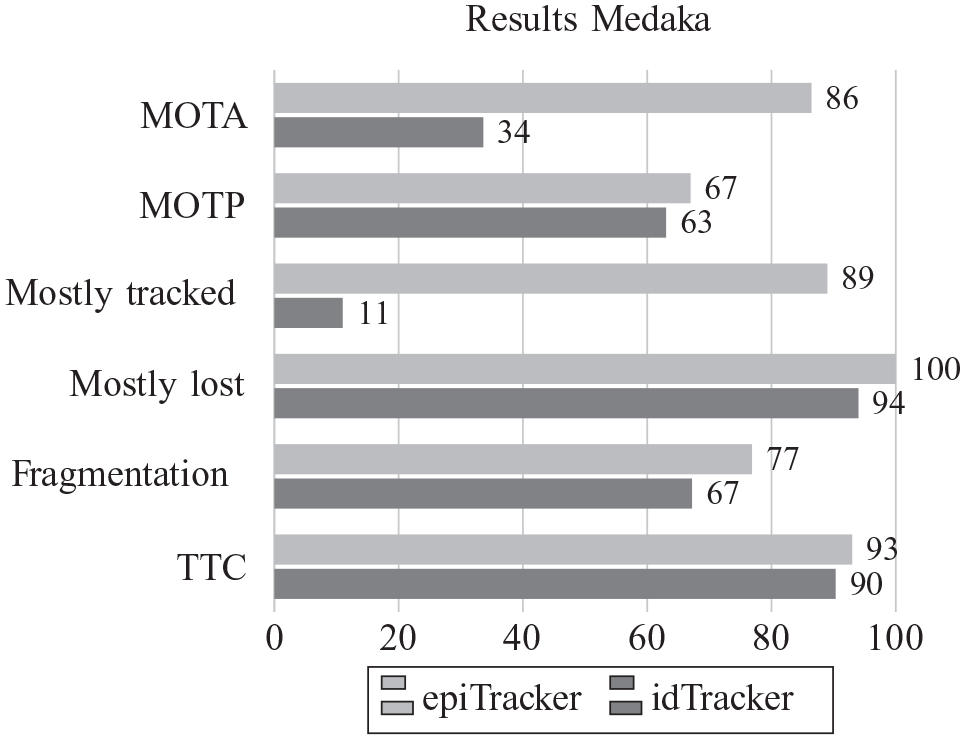

Averaged results of the epiTracker and idTracker on the benchmark videos of medaka.

With the idTracker, all fishes are rated as “mostly tracked,” and therefore a value of 100% is achieved. With the epiTracker and idTracker.ai, one individual is not evaluated as “mostly tracked,” and therefore a value of 93% is reached. Since no fish is counted as “mostly lost,” all algorithms achieve a value of 100% on the measure.

Considering the fragmentation of the tracks, the idTracker shows the least fragmentation (85%), followed by the epiTracker (66%) and idTracker.ai (44%).

Due to the low fragmentation of the trajectories, the idTracker requires the shortest time for correction according to the TTC measure. Four individuals need 57 min, and 10 individuals 166 min. The epiTracker needs the most time for correction (200 and 556 min). The idTracker.ai needs durations of 159 and 314 min to correct the trajectories. It should be noted that the times are based on a correction with the help of the presented GUI and that idTracker offers no possibility to correct predictions in any way, while idTracker.ai offers only a basic routine for the correction of assignment mismatches.

Regarding the noisy and error-prone recordings of medaka, the epiTracker shows the best MOTA results independent of the number of fish. It reaches a value of 76% for three individuals, 88% for five individuals, and 95% for 10 individuals. It is noticeable that the results of the epiTracker improve with increasing numbers of individuals. In comparison, the performance of the idTracker decreases from 39% for three individuals to 34% for 10 individuals (the result with five individuals is 28%). As mentioned, due to program crashes, videos of medaka fish could not be tracked with the idTracker.ai.

According to the MOTP measure, the trajectories of the epiTracker are located closer to the ground truth. With values of 67% (epiTracker) and 63% (idTracker), the precision decreases in comparison to the recordings of the zebrafish.

The large difference between the two algorithms regarding the MOTA measure is also reflected in the number of individuals counted as “mostly tracked.” Here, the epiTracker achieves a value of 89%, while the idTracker reaches a value of only 11%. On the results of the idTracker, one individual is even considered as “mostly lost,” resulting in a value of 94% compared to 100% for the epiTracker.

For the recordings of medaka fish, the fragmentation of the epiTracker’s trajectories is now better than that of the idTracker. On this measure, the algorithms achieve a value of 77% (epiTracker) and 67% (idTracker). This is again reflected in the TTC measure because the number of fragments is included in the calculation. Based on the TTC measure, a time of 138 min is needed to correct the trajectories generated by the epiTracker for the video with 10 individuals. The idTracker requires an additional hour for the correction (200 min).

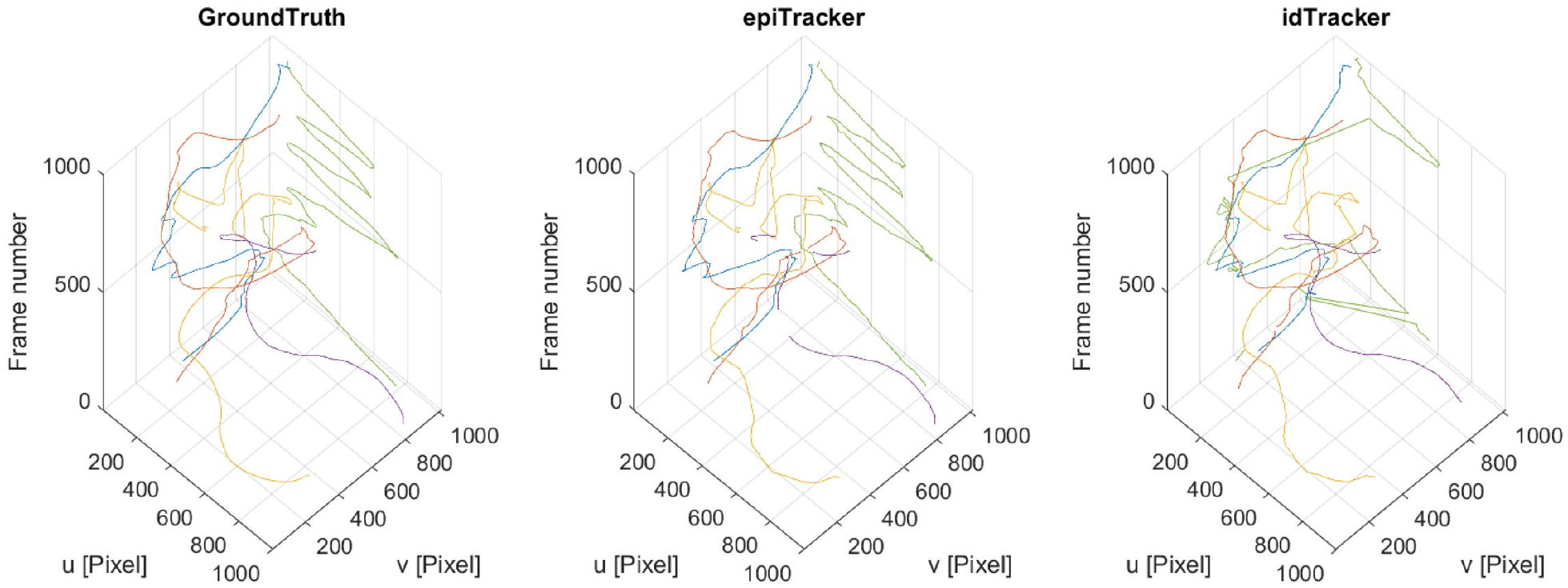

A visualization of the trajectories of the ground truth, the epiTracker, and the idTracker for the first 1000 frames of the recording of five medaka fish can be found in Figure 6 . The trajectories of the idTracker show jumps resulting from incorrect assignments. In addition, the idTracker shows a tracked object for which no ground truth exists. The epiTracker shows some interrupted trajectories.

Visualization of the trajectories of the video with five medaka fish. u and v represent the axes of the image coordinate system, and the z-axis indicates the time. Each trajectory or track fragment is represented by its own color. The green trajectory near the wall is fragmented for the epiTracker. In trajectories of the idTracker, several large jumps are visible in the green and blue trajectories.

Discussion

With its GUI, epiTracker also offers inexperienced users the ability to extract trajectories from recorded videos. In addition to tracking, the GUI enables the user to easily control and correct critical points in a semiautomatic manner, creating 100% error-free trajectories. This is especially important for specific behavioral analysis of individuals in the group, since a single change of the assignment will propagate through the rest of the video, rendering the results invalid.

The newly introduced benchmark offers more than 25 min (30 FPS) of fully annotated video data. With heterogeneous videos differing in aspects such as fish species (and thus swimming behavior), fish count, aquarium (matte or gloss), or the illumination setup, it is ideally suited for discussing the strengths and weaknesses of algorithms and comparing their performance in a quantitative manner.

On the easier recordings of zebrafish, the three algorithms performed nearly the same, with the idTracker showing the best MOTA scores. But on the more difficult medaka recordings, the idTracker showed worse performance than the epiTracker.

The considerably higher “false-positive” values of the idTracker for medaka fish can be explained by the reflecting walls of the tank. Looking at the result videos, it can be seen that the idTracker often tracks the reflections instead of the actual fish in recordings of medaka. These errors do not occur in zebrafish recordings, because reflections are completely prevented by the matte walls of the tank.

The result videos of the idTracker also show that in places where the background is very similar to the fish, the fish are no longer recognized by the idTracker. Due to the different illumination, there are no such places in the videos of zebrafish.

In addition, the idTracker has problems recognizing the textures of the fish due to the light source placed opposite the camera. This information is used to identify the fish and assign them to the trajectories. Thus, the idTracker sometimes detects the fish but cannot assign them to a trajectory. In the trajectory plot of the idTracker ( Fig. 6 ), a jump in the trajectory can be seen, which is the result of an assignment error. There, the assignment swaps with a fish, which is located in the opposite corner of the tank. Also, the fish swimming near the wall is not detected for 700 frames.

In contrast to the idTracker, the epiTracker is more robust against different recording conditions. By combining a background mask with a color mask, the fish can be detected even in the dark areas, and at the same time the detection of disturbances, such as reflections of the fish on the walls, can be avoided. As can be seen in Figure 6 , the idTracker wrongly tracks the reflection of a fish (parallel green and orange lines, top left), while the epiTracker only detects the real fish.

The robustness against reflections is especially important for the analysis of group behavior, since tracked reflections lead to errors in the distances between the fish or in the area of the convex hull formed by the fish.
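The effect of a single tracked reflection on such a group measure can be made concrete with a small sketch. It computes the convex hull with the monotone chain method and its area with the shoelace formula; the coordinates are made up for illustration, and the function names are hypothetical.

```python
def convex_hull(points):
    """Monotone chain convex hull; returns vertices in counterclockwise order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]

def polygon_area(vertices):
    """Shoelace formula for the area of a simple polygon."""
    n = len(vertices)
    twice_area = sum(
        vertices[i][0] * vertices[(i + 1) % n][1]
        - vertices[(i + 1) % n][0] * vertices[i][1]
        for i in range(n)
    )
    return abs(twice_area) / 2

# Five real fish positions (arbitrary units).
fish = [(0, 0), (4, 0), (4, 3), (0, 3), (2, 1)]
# The same frame with one reflection wrongly tracked at the tank wall.
fish_with_reflection = fish + [(10, 0)]

area_true = polygon_area(convex_hull(fish))
area_with_reflection = polygon_area(convex_hull(fish_with_reflection))
print(area_true, area_with_reflection)
```

In this made-up frame, one false detection at the wall increases the hull area from 12 to 21 square units, which shows how strongly a single reflection can distort a group-level feature.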

The fact that the results of the epiTracker in medaka recordings improve with an increasing number of fish may be explained by the order in which the videos were recorded. First, 10 fish were placed in the tank at the same time. Since the medaka fish hardly move after insertion, the start of the recording is delayed until the first fish start to move again. For the further recordings, the corresponding number of fish have been taken out of the tank. Thus, it is possible that the swimming behavior changes due to the longer dwell time of the fish in the tank.

With the idTracker.ai, the small number of “false-positive” detections and “mismatches” is particularly noticeable. Probably the identification of the fish compared to the idTracker is improved by the neural net, and thus wrong allocations are avoided. The higher values for “false-negative” detections, however, suggest that the idTracker.ai will track the fish only if it is very confident in its identification. It should be noted, however, that in all videos, the “Crossing Detector” of the idTracker.ai is not used due to a too-low number of detected crossings and therefore lack of training data.

The idTracker makes slightly fewer mistakes regarding “mismatches.” While the number of errors caused by “mismatches” increases with the epiTracker in the recordings of zebrafish, the number of errors remains approximately the same for the idTracker. The zebrafish show a much unsteadier swimming behavior, which leads to more contacts and crossings than the medaka fish. By identifying the fish based on the texture, the idTracker makes fewer mistakes in the allocation.

Because a trajectory calculated by the idTracker can be assigned to several individuals, it is still problematic to blindly rely on the idTracker to distinguish the fish from each other.

The difference in the accuracy (MOTP) of the two fish species may be related to the different procedure for creating the ground truth. Instead of labeling the individual frames for all fish, the results of the algorithm were reviewed, and the errors corrected. As a result, the accuracy of the ground truth was improved, because the sampling interval was smaller.

In conclusion, the epiTracker is more robust under suboptimal conditions than the idTracker. Averaged among all videos, the epiTracker’s MOTA performance is more than 53% better than that of the idTracker (87.5% to 56.9%).
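The stated improvement is a relative figure, as the short check below shows. It uses only the two averaged MOTA values given above; the variable names are illustrative.

```python
mota_epitracker = 87.5  # averaged MOTA in percent
mota_idtracker = 56.9   # averaged MOTA in percent

absolute_gain = mota_epitracker - mota_idtracker        # percentage points
relative_gain = absolute_gain / mota_idtracker * 100.0  # percent of the idTracker value

print(f"{absolute_gain:.1f} percentage points, {relative_gain:.1f}% relative improvement")
```

The difference therefore amounts to about 30.6 percentage points, which corresponds to a relative improvement of roughly 53.8%.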

Thus, the epiTracker considerably broadens the application spectrum to obtain faithful tracks of several individuals in a group of moving animals. It allows researchers to run pilot experiments in simple setups, precluding the need for special tanks (reflection-free) and illumination arrangements. Because most fish tanks are made of glass or plastic with glossy surfaces, this is an important feature of the epiTracker application. The filter options and possibility to edit automatic tracking and thereby achieve high-fidelity tracking, especially under suboptimal conditions, are key features of the epiTracker. This permits researchers to analyze videos with different tank properties and variations in illumination, such as intensity and direction of light, and thus provides flexibility to test for environmental effects on the movement of animals.

Conclusion

This article presents a robust algorithm for tracking multiple objects in video recordings and a semiautomatic method to easily correct automatically generated tracks and thus achieve 100% error-free trajectories. To support the user and allow nonspecialists to use the algorithms, two graphical user interfaces are provided. Furthermore, a labeled benchmark dataset for the evaluation of tracking algorithms is presented along with a new metric to assess the manual effort needed to obtain error-free trajectories. The benchmark contains more than 25 min of video data, resulting in 50,000 labeled frames showing medaka and zebrafish.

Based on this benchmark, the performance of the proposed tracking algorithm and that of two state-of-the-art algorithms were compared under various conditions.

The benchmark shows that the idTracker is best suited for well-recorded conditions (zebrafish recordings). In contrast, if there are many disturbances like reflections in the videos (medaka recordings), the epiTracker should be used, because it clearly produces better results in this case. This is especially beneficial if an experiment prevents the use of a matte tank or requires special illumination methods.

The results also show that none of the compared algorithms can generate error-free trajectories, which illustrates the importance of the included correction tool of the epiTracker, if the user is in need of error-free trajectories. The tool accelerates the otherwise burdensome manual process of finding and correcting errors by providing a semiautomated procedure.

The results also show that the idTracker.ai cannot outperform its predecessor idTracker on the given data.

The application of this software is not limited to fish tracking, but could also be used in cell-tracking applications or even the tracking of players from a sports team.

In future work, we will investigate the possibility of a 3D tracking option in the software.

Supplemental Material

Supplemental material, sj-pdf-1-jla-10.1177_2472630320977454 for epiTracker: A Framework for Highly Reliable Particle Tracking for the Quantitative Analysis of Fish Movements in Tanks by Roman Bruch, Paul M. Scheikl, Ralf Mikut, Felix Loosli and Markus Reischl in SLAS Technology

Footnotes

Acknowledgements

The authors acknowledge the excellent technical support of Cathrin Herder and fish husbandry of Nadeshda Wolf and Natalja Kusminski. Christian Pylatiuk helped to design and install the recording setup.

Supplemental material is available online with this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

