Abstract

Brain and Neuroscience Advances has grown in tandem with the British Neuroscience Association’s campaign to build Credibility in Neuroscience, which encourages actions and initiatives aimed at improving reproducibility, reliability and openness. This commitment to credibility affects not only what the Journal publishes, but also how it operates. With that in mind, the Editorial Board sought the views of the neuroscience community on the peer review process, and on how the Journal should respond to the Journal Impact Factor that will be assigned to it. In this editorial, we present the results of a survey of neuroscience researchers conducted in the autumn of 2020 and discuss the broader implications of our findings for the Journal and the neuroscience community.

Introduction

Brain and Neuroscience Advances recently celebrated its fourth year as a Society-owned, fully open-access journal. During this time, it has continued to develop as a platform that publishes high-quality neuroscience research, and it is now indexed in PubMed Central. It is a journal for the neuroscience community, and as we continue to evolve, we wish to ensure that it reflects the aims of the British Neuroscience Association (BNA) and the community it represents.

The Journal has also grown in tandem with the BNA’s other activities and initiatives – most notably, the launch of our campaign to build Credibility in Neuroscience, through encouraging actions and initiatives aimed at improving reproducibility, reliability and openness (https://bnacredibility.org.uk/). The BNA is committed to improving the research culture in neuroscience, by putting these values into practice across the range of its activities, which naturally includes this Journal. The publication of our first Registered Report last year is one example of the type of credible practice we want to encourage through our role as a publisher (Henson et al., 2020).

Our commitment to credibility, however, affects not only what the Journal publishes but also how it operates. With that in mind, the Editorial Board sought the views of the neuroscience community on the peer review process, and on how the Journal should respond to the Journal Impact Factor (JIF) that will be assigned to Brain and Neuroscience Advances. In this editorial, we present the results of a survey of neuroscience researchers conducted in the autumn of 2020 and discuss the broader implications of our findings for the Journal and the neuroscience community.

Background

Factor friction

The BNA signed the San Francisco Declaration on Research Assessment (DORA) in 2019. DORA’s overarching recommendation is a commitment by signatories not to use journal-based metrics, such as JIF, as a surrogate measure of the quality of individual research articles, or to assess an individual scientist’s contributions, or in hiring, promotion, or funding decisions (https://sfdora.org/read/). This reflects JIF’s status as a crude, outdated metric whose use has gone far beyond the purpose intended at its inception.

In addition to this general recommendation, being a signatory to DORA also requires commitments specifically for publishers: either to stop promoting JIF altogether, or to present it only alongside other journal-based metrics (DORA includes editorial and publication times, h-index and Eigenfactor as examples) to provide ‘a richer view of journal performance’. We hope that JIF will eventually lose its pervasive influence on research assessment, and that the quality of research will be assessed on the research itself, not the journal in which it appears.

There are encouraging signs, not least through the growth in signatories to DORA since it was developed in 2012, and other initiatives that have emerged since. The Leiden Manifesto, which encourages best practice in metric-based research assessment, highlighted an alarming ‘impact factor obsession’ and the danger of gaming the system (Hicks et al., 2015).

How far does that obsession remain for the neuroscience community, and how in particular should this Journal manage JIF? The Journal will inevitably have an impact factor assigned to it. Some publishers actively try to increase their JIF as part of their publishing strategy to encourage authors to publish in their journal, through means such as avoiding low-citation topics and encouraging citation of papers in the same journal.

While wanting to eliminate JIF from the equation, we are aware that this may place our new journal-on-the-block at a disadvantage. For example, ignoring JIF altogether could cause friction with potential authors, given the importance that JIF still holds in the wider research environment. Indeed, some surveys of academic staff highlight that JIF still ranks highly as a determinant of where to publish (Taylor & Francis Group, 2019), and is still considered one of the most important factors in institutional promotion and tenure decisions (Niles et al., 2020). A recent survey by Vitae on behalf of UK Research and Innovation (UKRI) also highlighted that two-fifths of researchers who responded still report a negative impact on research integrity from JIF and other metrics, in relation to the perceived value of publishing in high-impact-factor journals in order to secure funding, be hired and/or be promoted (Vitae, UK Research Integrity Office, UK Reproducibility Network, 2020). We suspect that these real-world consequences of JIF are likely to be most serious for early career researchers (ECRs), for whom the next salary is typically less certain.

There is, therefore, inevitable friction between the troubling impact that JIF is having and the hesitancy of individuals to ignore it while it still holds prominence. We sought to investigate this within the neuroscience community that we serve: does the ‘impact factor obsession’ remain, and do attitudes vary depending on career stage?

Peer review

A second issue for publishing more credible neuroscience concerns peer review. This Journal has a single-blind peer review process in which the reviewers’ names are withheld from the author. This remains the most widely adopted peer review model, with Wiley, for example, highlighting that this is used by 72.5% of its health sciences journals and 90% of its life science journals (https://authorservices.wiley.com/Reviewers/journal-reviewers/what-is-peer-review/types-of-peer-review.html). Arguments against this model include that granting anonymity only to the reviewer limits the reviewer’s accountability for the recommendations they make (Etkin et al., 2017), and that knowing the identity of the author can bias reviewers’ decisions about publication (Tomkins et al., 2017).

Alternative models for peer review have emerged that seek to address some of the criticisms of traditional single-blind review by providing greater fairness and transparency. Double-blind review, which removes the names of authors in addition to those of reviewers, goes some way towards providing anonymity to both sides. However, the degree to which it is effective in ensuring anonymity in practice can vary, with reviewers in some highly specialised fields still able to establish the author and institution from the information in the manuscript they review (O’Connor et al., 2017). Nonetheless, we suspect that even though reviewers might often have a good idea who the authors are, the fact that they will rarely be 100% certain may have important psychological consequences for how they approach their review.

Other models seek to provide greater transparency. Open peer review, whereby both reviewer and author identities are known, has proved popular in some surveys (Ross-Hellauer et al., 2017). Other research on review invitation acceptance rates suggests that support for open peer review differs across age groups, with stronger support from younger reviewers (Publons, 2018). There are other models for greater transparency in which reviews are published along with the original article, often with reviewers given the option of whether they are identified. This has been offered by journals such as eLife, F1000Research and EMBO Press, with calls for other journals to follow suit (Polka et al., 2018).

A more open scientific environment underpins the BNA’s Credibility campaign to improve neuroscience overall. Society journals such as Brain and Neuroscience Advances can play an important role in facilitating open science by being fully open access, publishing null results and Registered Reports, and using Contributor Roles Taxonomy (CRediT) and Transparency and Openness Promotion (TOP) badges. These are all features that help the publishing process enable reproducibility, replicability and reliability in science. Reconsidering how we peer review has therefore become a key priority for the Editorial Board.

Engaging our neuroscience community

A society journal is well placed to proactively lead on such improvements in scientific publication, but it also needs to consider the changing views of its members and the wider neuroscience community that it serves. To this end, the BNA conducted a short survey of neuroscience researchers to canvass views on JIF and different options for peer review that the Journal could introduce.

The survey included a mix of BNA members and non-members involved in neuroscience research, and was conducted via SurveyMonkey between 23 September and 23 November 2020, launched within Peer Review Week 2020. The survey was completed by 263 UK-based respondents, with a further 49 respondents from outside the United Kingdom (including 28 members). Respondents were weighted towards academia: 35 (9.0%) were undergraduates, 74 (23.7%) were postgraduates and 74 (23.7%) were ECRs. This is the first time that the BNA has canvassed opinion from neuroscientists on either JIF or peer review, and as far as we are aware, this is the first survey to gather data on the views of neuroscientists on both issues.

Findings

JIF is still viewed as important across all career stages

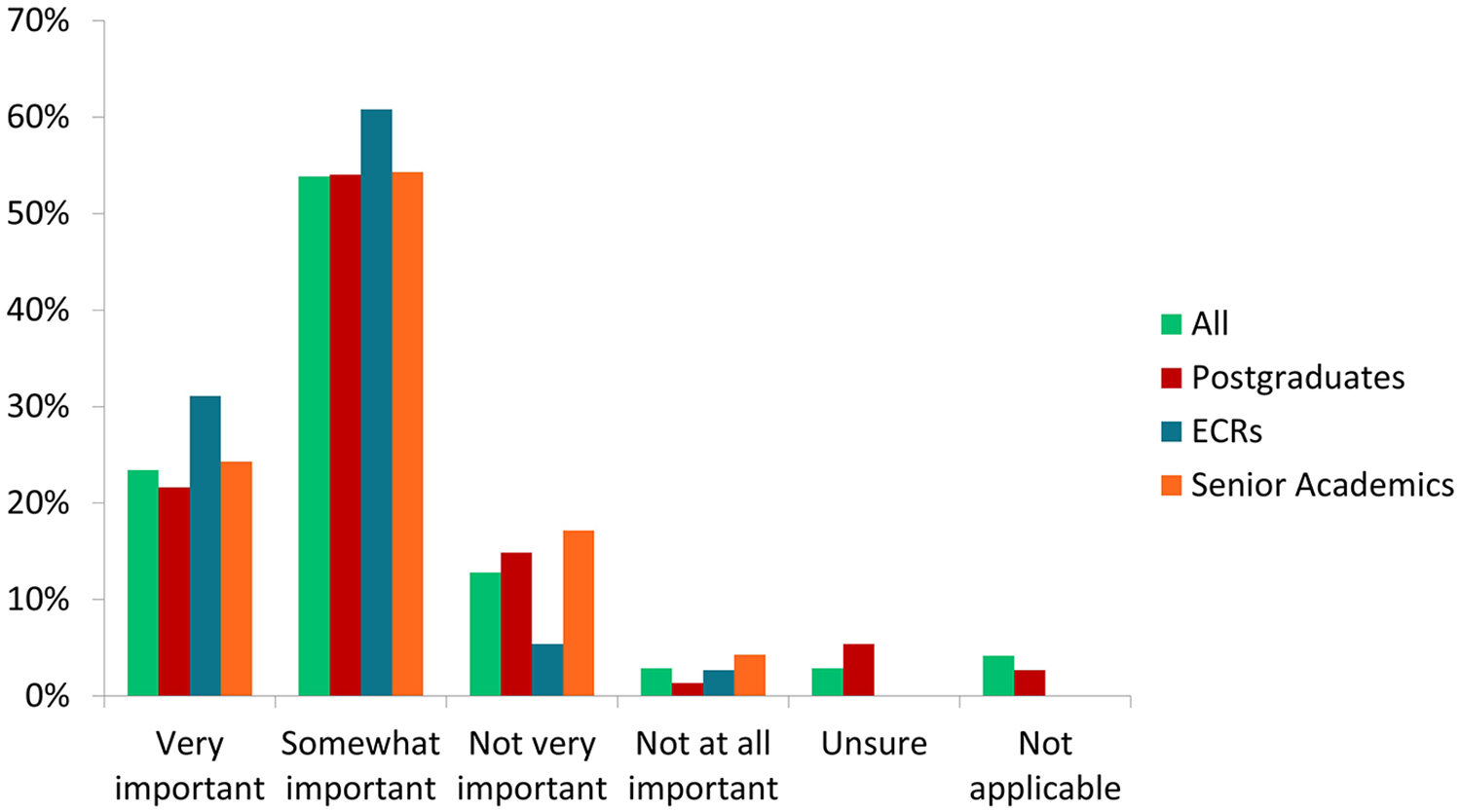

When considering where to submit an article, JIF remains important for a substantial majority of the neuroscience researchers who took part in our survey, with 241 of the 312 respondents indicating this was somewhat important (53.9%) or very important (23.4%). This perceived importance was reflected across all career stages of respondents (see Figure 1), with 56 of the 74 postgraduates (75.7%) and 55 of the 70 senior academic researchers (78.6%) indicating it was either somewhat or very important. The highest proportion was seen among ECRs, with 68 of the 74 ECRs (91.9%) viewing JIF as either somewhat or very important to their choice of journal. While a substantial majority of senior academics view JIF as an important consideration, this was also the group in which the greatest proportion considered JIF to be not very important or not at all important (21.4%).

Figure 1. Importance of JIF when considering where to submit an article for publication.
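The percentages in this section follow directly from the counts quoted in the text. As a minimal sketch of that arithmetic, the snippet below recomputes them from the reported counts; the dictionary layout, the helper name `percentage` and the treatment of the remaining senior academics as a single group are illustrative assumptions, not part of the survey analysis itself.

```python
# Counts quoted in the text:
# (respondents rating JIF somewhat or very important, group size).
reported = {
    "All respondents": (241, 312),
    "Postgraduates": (56, 74),
    "ECRs": (68, 74),
    "Senior academic researchers": (55, 70),
}


def percentage(count, total):
    """Return count as a percentage of total, rounded to one decimal place."""
    return round(100 * count / total, 1)


# Share of each group rating JIF as somewhat or very important.
shares = {
    group: percentage(count, total)
    for group, (count, total) in reported.items()
}

# Assumption: every remaining senior academic answered "not very" or
# "not at all" important, so the remainder is a single group.
senior_count, senior_total = reported["Senior academic researchers"]
senior_remainder = percentage(senior_total - senior_count, senior_total)

for group, share in shares.items():
    print(f"{group}: {share}%")
print(f"Senior academics not rating JIF as important: {senior_remainder}%")
```

Run as written, this should reproduce the career-stage percentages quoted above, including the 21.4% of senior academics who did not rate JIF as important.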

Using JIF as a promotional tool divided respondents, while strategically increasing JIF was clearly opposed

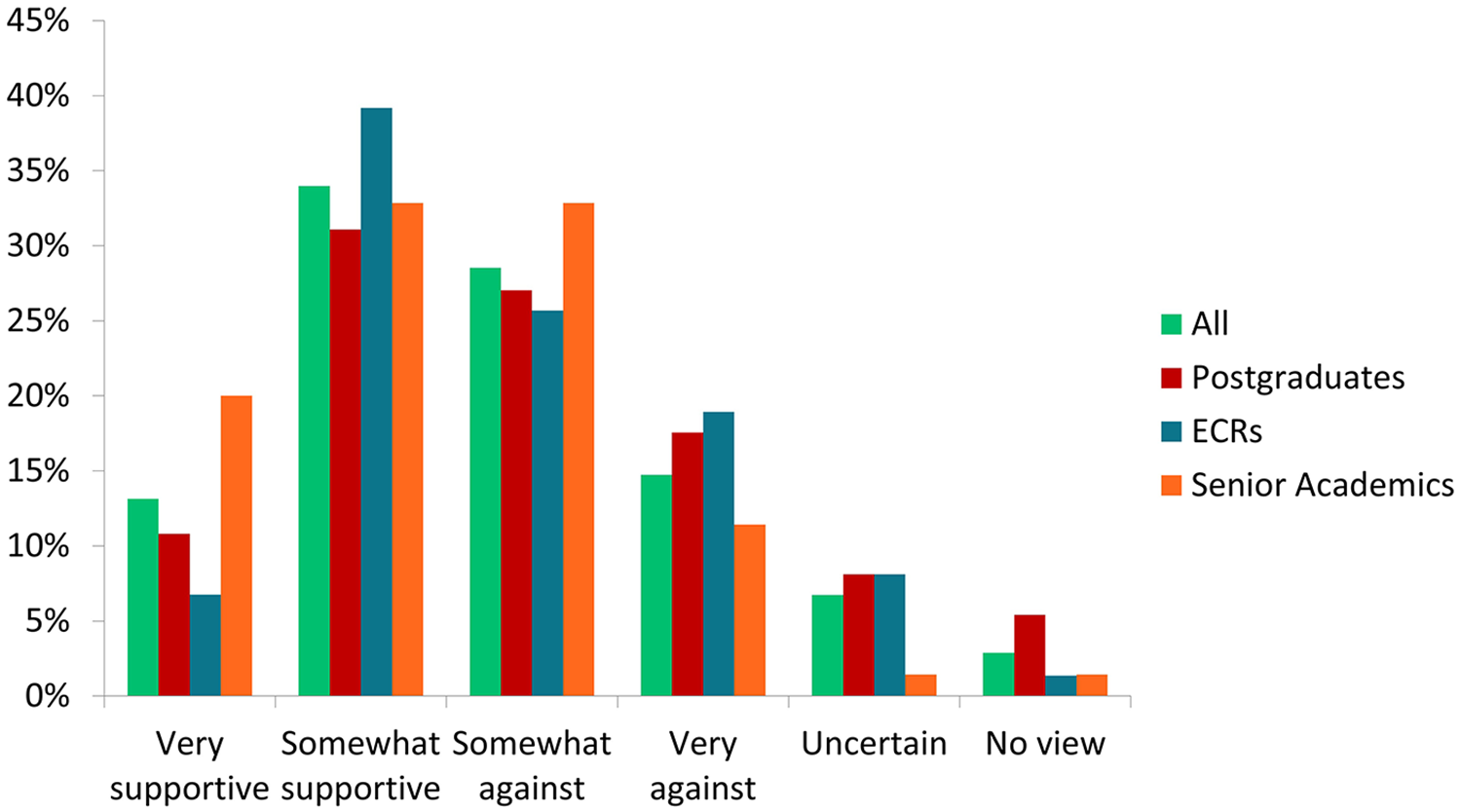

Respondents were asked how they would feel about Brain and Neuroscience Advances actively promoting its JIF as a measure of its quality. Before answering this question, they were made aware that the BNA is a DORA signatory.

The response was mixed, although marginally more were ‘for’ than ‘against’, with 147 (47.1%) respondents supporting the suggestion compared to 135 (43.3%) opposing it. There were again some differences according to career stage (see Figure 2): postgraduates were slightly more opposed than supportive (33 against vs 31 supportive), ECRs were almost evenly split (34 supportive vs 33 against), and senior academics showed the largest margin in favour (37 supportive vs 31 against).

Figure 2. Views on this journal actively promoting its JIF as a measure of its quality.

In contrast to the mixed response on JIF promotion, a clear majority of respondents opposed the idea of Brain and Neuroscience Advances taking strategic steps to increase its JIF (see Figure 3). Respondents were again first informed that the BNA is a DORA signatory, and were given examples of how a journal might do this. Overall, 226 (72.4%) were either somewhat or very against this, compared to 61 (19.6%) supportive. Postgraduates were noticeably less supportive of this (see Figure 4), with just 11/74 (14.9%) in favour, while senior academic researchers were the most against, with 52/70 (74.3%) opposed to this suggestion.

Figure 3. Views on whether this journal should promote or strategically seek to increase JIF.

Figure 4. Views on this journal strategically taking steps to increase its JIF.

Strong support for changes to peer review

In the survey, three mutually inclusive options were put to respondents in separate questions, alongside a limited description of each, to determine support for possible replacements of the Journal’s current single-blind peer review model. These were:

Double blind (identities of reviewer/author anonymised);

Open review (identities of reviewer/author known, and reviews published) and

A form of transparent review that includes a Peer Review Process File alongside a published paper (containing reviewers’ anonymised comments and editors’/authors’ correspondence).

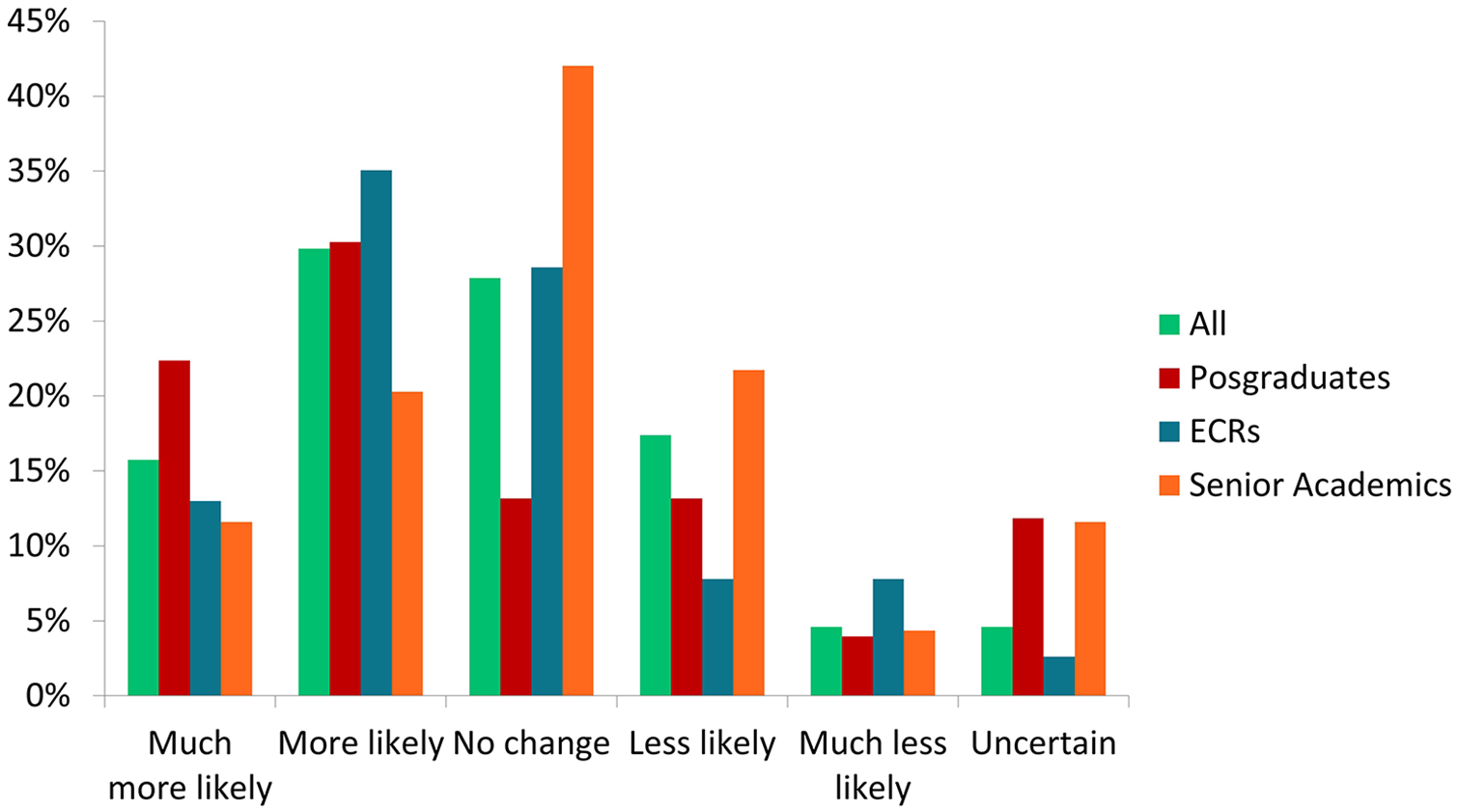

The transparent review model using a Peer Review Process File was the most popular of the three: 175/305 (57.4%) said it would make them more likely to submit to the Journal, compared to 150/305 (49.2%) for double-blind review and 139/305 (45.6%) for open review. Double-blind review was the option respondents considered most likely to have no impact on their decision whether to submit to the Journal, with 131/305 (43.0%) indicating this. It was also the option least likely to discourage respondents from submitting, with only 12/305 (3.9%) stating they would be less likely or much less likely to submit an article with double-blind review in place (see Figure 5).

Figure 5. How the nature of the peer review process impacts authors’ decision to submit articles to this journal.
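The comparison between the three peer review options can be laid out in the same way. The sketch below ranks the options by the share of the 305 respondents who said each would make them more likely to submit, and breaks down the three double-blind responses quoted in the text; the variable names and the `share` helper are illustrative assumptions, and only counts stated in the text are used.

```python
RESPONDENTS = 305  # respondents to the peer review questions, as quoted

# Respondents who said each model would make them more likely to submit.
more_likely = {
    "Transparent review (Peer Review Process File)": 175,
    "Double-blind review": 150,
    "Open review": 139,
}

# Double-blind responses quoted in the text.
double_blind = {"more likely": 150, "no impact": 131, "less likely": 12}


def share(count, total=RESPONDENTS):
    """Return count as a percentage of total, rounded to one decimal place."""
    return round(100 * count / total, 1)


# Rank the three models from most to least encouraging.
ranked = sorted(more_likely.items(), key=lambda item: item[1], reverse=True)
for model, count in ranked:
    print(f"{model}: {count}/{RESPONDENTS} ({share(count)}%)")

double_blind_shares = {
    answer: share(count) for answer, count in double_blind.items()
}
print(double_blind_shares)
```

The ranking makes the ordering explicit (transparent review, then double-blind, then open review), while the double-blind breakdown shows that it combines a large ‘no impact’ group with very few respondents who would be discouraged.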

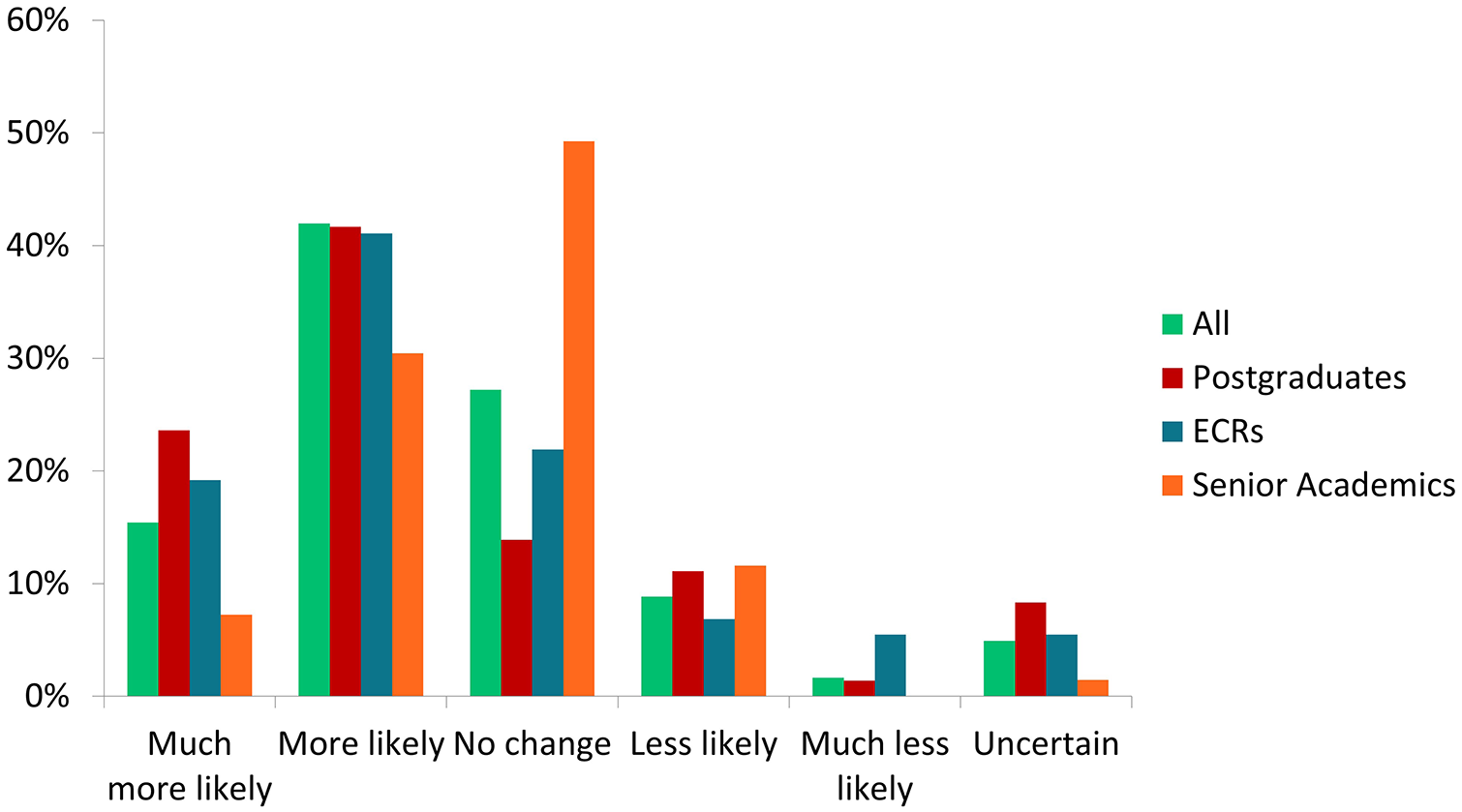

There were some differences between career stages when considering open review (see Figure 6). This was popular among postgraduates, with 40/76 (52.7%) more or much more likely to submit under this model compared to 13/76 (17.1%) who would be less or much less likely. However, this was the option about which senior academics had the most concerns, with 18/69 (26.1%) indicating they would be less or much less likely to submit articles under open review. The most common answer senior academics gave to the suggestions of both open review and transparent review (see Figure 7) was that it would not change their likelihood of submitting an article to the journal.

Figure 6. Likelihood of submitting an article to this journal under a model of open review.

Figure 7. Likelihood of submitting an article to this journal under a model that publishes a Peer Review Process File alongside it.

Key implications and next steps for the Journal

Despite the growth in support for initiatives such as DORA, to which the BNA remains fully committed, JIF clearly lingers as an important consideration for a substantial majority of the neuroscientists in our community. This survey was not intended as a deep dive into the reasons behind neuroscientists’ choices. Rather, we present these findings as a sense-check on attitudes towards the topics we looked at, and hope they will be of interest to neuroscientists, publishers and policymakers.

DORA’s guidance on JIF for promotional purposes is that, if not completely stopping JIF promotion, publishers should only present it alongside other journal-based metrics to provide ‘a richer view of journal performance’ (https://sfdora.org/read/). While the neuroscientists we surveyed consider JIF important to their publishing considerations, to the extent that nearly half would be happy for the Journal to use JIF as a promotional tool, it is encouraging that they do not want the Journal to use JIF to ‘game the system’ (e.g. discourage less popular topics or encourage self-citations), which perpetuates the problems that DORA was designed to address.

The Metric Tide, which in 2015 looked at the role of metrics in research assessment and management, recommended as part of a drive to improve research culture that publishers reduce their emphasis on JIF, look at broader measures of journal performance and encourage a shift towards assessment based on the academic quality of an article rather than JIF (Wilsdon, 2015). There are, as Wouters et al. (2019) have noted, better ways than JIF to judge a journal, using more responsible metrics. Alternative analytic metrics seeking to supplant JIF have also emerged (Bornmann and Marx, 2016), although so far these have been used to complement rather than replace it (Scotti et al., 2020). Society-led journals such as ours have an important part to play in helping to drive change, and we will look at how we can incorporate other responsible metrics in the future, alongside providing additional information to help clarify how JIF and other metrics are calculated.

On the subject of peer review, the support from the neuroscience community for improved models that move away from the single-blind review currently in use is reassuring. Similarly heartening is the message that, overall, these alternatives are unlikely to have a negative impact on decisions about whether to publish in Brain and Neuroscience Advances. This consensus will allow the Editorial Board to make the necessary changes to the peer review process with the backing of the BNA membership. While all alternatives presented were considered by respondents as preferable to our current single-blind review, the double-blind model and a form of transparent review that includes a Peer Review Process File were the most popular, with slightly less appetite for fully open peer review. The Editorial Board will in due course consider adopting these measures as we seek to modernise and reform the Journal and its peer review process more generally.

Supplemental Material

Supplemental material, sj-docx-1-bna-10.1177_23982128211006574 for Lifting the lid on impact and peer review by Joseph Clift, Anne Cooke, Anthony R. Isles, Jeffrey W. Dalley and Richard N. Henson in Brain and Neuroscience Advances

Supplemental material, sj-pdf-1-bna-10.1177_23982128211006574 for Lifting the lid on impact and peer review by Joseph Clift, Anne Cooke, Anthony R. Isles, Jeffrey W. Dalley and Richard N. Henson in Brain and Neuroscience Advances

Footnotes

Acknowledgements

J.C. and A.C. are employed by the British Neuroscience Association (BNA) and its Credibility in Neuroscience programme, supported by the Gatsby Charitable Foundation (GAT3674), and played a key role in the design, conceptualisation, data collection, analysis and preparation of this editorial. The authors thank the respondents who took the time to participate in this survey.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.

References
