Abstract

Neurophysiological recordings in behaving rodents demonstrate neuronal response properties that may code space and time for episodic memory and goal-directed behaviour. Here, we review recordings from the hippocampus, entorhinal cortex, and retrosplenial cortex to address how neurons encode multiple overlapping spatiotemporal trajectories and disambiguate them for accurate memory-guided behaviour. The solution could involve neurons in the entorhinal cortex and hippocampus that show mixed selectivity, coding both time and location. Some grid cells and place cells that code space also respond selectively as time cells, allowing differentiation of time intervals when a rat runs in the same location during a delay period. Cells in these regions also develop new representations that differentially code the context of prior or future behaviour, allowing disambiguation of overlapping trajectories. Spiking activity is also modulated by running speed and head direction, supporting the coding of episodic memory not as a series of snapshots but as a trajectory that can also be distinguished on the basis of speed and direction. Recent data also address the mechanisms by which sensory input could distinguish different spatial locations. Changes in firing rate reflect running speed on long but not short time intervals, and few cells code movement direction, arguing against path integration for coding location. Instead, new evidence for neural coding of environmental boundaries in egocentric coordinates fits with a modelling framework in which egocentric coding of barriers, combined with head direction, generates distinct allocentric coding of location. The egocentric input can be used both for coding the location of spatiotemporal trajectories and for retrieving specific viewpoints of the environment.
Overall, these different patterns of neural activity can be used for encoding and disambiguation of prior episodic spatiotemporal trajectories or for planning of future goal-directed spatiotemporal trajectories.

Introduction

Memory-guided behaviour depends on circuit mechanisms within and between multiple structures, including the hippocampus, entorhinal cortex, and retrosplenial cortex (RSC, Figure 1(a)), and on their interactions with subcortical regions, such as the striatum and the septum. This review will describe neurophysiological data on the coding of space and time in these regions, with a specific focus on the mechanisms for encoding and disambiguating the spatiotemporal trajectories that guide goal-directed behaviour dependent on episodic memory. Goal-directed behaviour includes traditional spatial memory tasks that require episodic memory to disambiguate specific spatiotemporal trajectories, but it can also involve more abstract, non-spatial trajectories used to solve reasoning problems based on recent examples, as in Raven’s Progressive Matrices.

(a) Schematic of rodent brain anatomy showing the location of the RSC on the posterior dorsal midline, with bidirectional connections to the entorhinal cortex, which in turn has bidirectional connections with the dentate gyrus (DG) and subfields CA3 and CA1 of the hippocampus. The medial septum provides cholinergic, GABAergic, and glutamatergic innervation of the entorhinal cortex and of the hippocampus through the fornix. (b) Performance of the delayed spatial alternation task is impaired by lesions of the hippocampus and medial septum, and the task has been used for recording time cells, place cells, and context-dependent cells. The task uses a T-maze with a stem, two reward arms (left and right), and two return arms. During the delay period, the animal either waits at the base of the stem or runs on a treadmill. After the delay period, the animal turns into the left or right reward arm, with the correct choice being the arm opposite to the one chosen on the previous trial. The animal then returns along the return arm for another delay period in the stem (adapted from Kraus et al., 2013).

Episodic memory is defined as memory for events that occur at a specific place and time (Eichenbaum et al., 1999; Tulving, 1984). Thus, by definition, space and time are essential to forming the neural representations for episodic memory. Episodic memory includes the concept of mental time travel, in which episodic memory ‘produces many snapshots whose orderly succession can create the mnemonic illusion of the flow of past time’ (Tulving, 1984). Later models of the full spatiotemporal trajectory of an episodic memory (Hasselmo, 2009, 2012) propose that episodic memory must contain more than a sequence of snapshots. Those papers argued that a full account of episodic memory should also include coding of the viewpoint or heading direction of the person perceiving a particular event (Conway, 2009), the speed of movement of that agent (Hasselmo, 2009, 2012), the movement of objects in the event (Hasselmo et al., 2010), and the context needed to disambiguate memories (Hasselmo, 2009; Hasselmo and Eichenbaum, 2005). Consider an individual person or animal moving freely through an environment and encountering other agents or objects. Remembering such an episode requires coding not only where the agent was and the relative time at which an event occurred, but also more detailed information about all elements of the episode, such as the direction the agent was facing, its speed of movement, the locations of objects or barriers relative to the agent, and the context of its prior or future actions.

We will first review the neurophysiological data showing neurons that code time and space in the hippocampus, entorhinal cortex, and RSC, both separately and in combined representations. We will then address the range of other factors involved in coding a spatiotemporal trajectory and disambiguating it from other trajectories, such as the direction and speed of movement and the context of prior or future responses. Finally, we will address the egocentric coding of environmental boundaries and how this coding could help disambiguate the coding of agent location.

Coding of time (time cells)

Neurophysiological data in the hippocampus and associated cortical structures reveal neurons that could be useful for coding both time and space (Kraus et al., 2013, 2015; MacDonald et al., 2011; Pastalkova et al., 2008). Many neurophysiological studies use tasks that place a strong demand on the encoding and retrieval of spatiotemporal trajectories for episodic memory, by requiring behavioural responses that depend on retrieval of a prior event occurring at a specific place and time. For example, lesions of the hippocampus impair performance in the delayed spatial alternation task or delayed non-match to position tasks (Aggleton et al., 1986, 1995; Ainge et al., 2007; Hallock et al., 2013). In each trial of these tasks, animals experience a delay period on the stem of a T-maze, then run to the end of the stem (the choice point) and make a left or right turn into one of the reward arms. After another delay period, they must turn into the opposite arm on the next trial (Figure 1(b)) to be rewarded. This requires memory of both the spatial location and the time of the prior trial, to discriminate (disambiguate) it from earlier trials. Behaviour in these tasks is also impaired by lesions of the subcortical input to the hippocampus from the medial septum (Aggleton et al., 1992, 1995; Givens and Olton, 1990; Markowska et al., 1989; Numan and Quaranta, 1990; Wang et al., 2015) or by lesions of the entorhinal cortex, which provides cortical input to the hippocampus (Bannerman et al., 2001).

Neurophysiological data gathered in these types of tasks demonstrate neurons that code time intervals relative to task events, such as the onset of the delay period. These cells are referred to as ‘time cells’ because they respond at specific relative time points during a period in which the spatial location of the animal is held constant (Figure 2(a)). For example, the animal can be kept in a single location by requiring it to run in a running wheel or on a treadmill during the delay period. Using this technique, neurophysiological recordings revealed hippocampal neurons that fire at specific intervals relative to the onset of running in a running wheel during the delay period of a delayed spatial alternation task (Pastalkova et al., 2008). Subsequent work showed similar time cell responses in a delayed non-match to object task (MacDonald et al., 2011), including when the animal was restricted to a single location by running on a treadmill during a delay period (Kraus et al., 2013; Mau et al., 2018) or by head fixation (MacDonald et al., 2013; Figure 2).
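Ordering cells by the time of their peak delay activity, as in population plots of time cell sequences (Figure 2(b)), can be sketched in a few lines. This is a minimal illustration, assuming that trial-averaged activity has already been binned relative to delay onset; the function names, cell labels, and activity values are hypothetical and do not reproduce any published analysis pipeline.

```python
from typing import Dict, List


def peak_bin(activity: List[float]) -> int:
    """Index of the time bin with the highest trial-averaged activity."""
    return max(range(len(activity)), key=lambda i: activity[i])


def order_by_peak(cells: Dict[str, List[float]]) -> List[str]:
    """Cell IDs sorted by peak time bin, earliest first (ties keep input order)."""
    return sorted(cells, key=lambda cell_id: peak_bin(cells[cell_id]))


# Hypothetical trial-averaged activity in 1-s bins over an 8-s treadmill delay.
cells = {
    "cell_A": [0.1, 0.2, 0.3, 0.4, 2.5, 3.0, 1.0, 0.2],  # late field (peak in 5-6 s bin)
    "cell_B": [0.2, 2.8, 1.1, 0.3, 0.1, 0.1, 0.1, 0.1],  # early field (peak in 1-2 s bin)
    "cell_C": [0.1, 0.1, 0.2, 1.9, 0.6, 0.2, 0.1, 0.1],  # middle field (peak in 3-4 s bin)
}

print(order_by_peak(cells))  # ['cell_B', 'cell_C', 'cell_A']
```

Stacking each cell's activity in this order produces the diagonal band typical of a time cell sequence; reusing the Day 1 ordering on later days is one way to visualise the drift described for Figure 2(b).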

Time cell responses in hippocampal region CA1. During running on the treadmill, different time cells respond at different time intervals relative to the start of the delay period, shown here using calcium imaging (Mau et al., 2018). (a) For example, one cell might respond after 2 s of running on all trials (left neuron), whereas another might respond after 4–7 s of running on all trials (right neuron). (b) Delay activity across a full population of time cells over 4 days. Neurons are ordered by the time bin of their peak firing on Day 1. Degradation of the temporal code from Day 1 to Day 4 indicates that time cells changed their engagement in temporal sequences over time (adapted from Mau et al., 2018).

In this review, we focus on neural dynamics related to the encoding of spatiotemporal trajectories and accordingly refer to sequential activation of hippocampal cells during delay periods as ‘time cell’ activity (Eichenbaum, 2014; Howard and Eichenbaum, 2013; Kraus et al., 2013; MacDonald et al., 2011). However, it is important to consider that sequential time cell firing is likely a manifestation of a more general hippocampal mechanism of sequence generation (Buzsaki and Tingley, 2018). Numerous reports have shown that hippocampal ensembles sequentially tile task-relevant events spanning multiple domains (space, time, sensory, etc.) (Aronov et al., 2017; O’Keefe and Recce, 1993; Pastalkova et al., 2008; Skaggs et al., 1996). Accordingly, some argue that hippocampal ensembles are flexible with regard to the content of their inputs and, consequently, will ordinally map any feature space (although dense innervation of the hippocampus from regions carrying head direction (HD) information may bias the system towards the spatial domain in rodents). An important question is whether any continuous feature of the task space would not be reliably mapped by hippocampal sequences (Ahmed et al., 2020; Aronov et al., 2017).

Time cell responses have been shown in a wide range of structures, including hippocampal region CA1 (Kraus et al., 2013; Mau et al., 2018; Pastalkova et al., 2008), hippocampal region CA3 (Salz et al., 2016), and the entorhinal cortex (Heys and Dombeck, 2018; Kraus et al., 2015; Tsao et al., 2018). Recordings in posterior parietal cortex (PPC) and RSC during navigation of real or virtual linear tracks show distributions of peak neural activity over space similar to those observed for time cells (Alexander and Nitz, 2015, 2017; Harvey et al., 2012; Runyan et al., 2017). Whereas hippocampal neurons are typically active in a small range of spatial or temporal bins with little out-of-field background firing, neocortical ensemble sequences typically consist of uniformly distributed activation peaks across neurons, with non-zero activation at non-peak positions due to the higher spontaneous background activity of neocortical neurons (Alexander and Nitz, 2015; Harvey et al., 2012; Runyan et al., 2017). Accordingly, parietal or retrosplenial ensembles may generate sequential activation patterns that can be used to decode progression through a spatiotemporal feature space, but likely at lower resolution than in the hippocampus. Despite showing activity distributed over all positions in these tasks, neither the PPC nor the RSC has been explicitly tested for time cell responses during delay periods with rodents fixed in real or virtual space, using techniques comparable to those in studies of hippocampal or entorhinal time cells (Kraus et al., 2013, 2015).

The presence of time cells in multiple cortical regions associated with the hippocampus raises the question of their role in coding memory on different time scales. Recent data suggest that the representation of time can indeed span multiple time scales. Calcium imaging of the same population of hippocampal neurons over several days (Mau et al., 2018) shows that neurons drop out of (or are added to) the time cell sequence slowly over time (Figure 2(b)), changing the correlation across the population on slow time scales of minutes or days (Mau et al., 2018). Slow drift in ensemble membership could provide a feasible mechanism for differentially representing temporally proximal memories through overlapping populations (Cai et al., 2016; Howard et al., 2014; Kinsky et al., 2020; Levy et al., 2020; Mankin et al., 2015; Rubin et al., 2015; Rule et al., 2019). Changes in the coding of place cells over time scales of minutes or days were also shown previously (Mankin et al., 2015; Rubin et al., 2019; Ziv et al., 2013), and similar drift in population representations of task-relevant variables has been observed in neocortex (Driscoll et al., 2017; Rokni et al., 2007). Models show that changes in the correlation of a population of cells on multiple longer time scales are essential for the capacity to differentiate episodic memories occurring at different time points on the scale of minutes, hours, or days (Howard et al., 2014; Liu et al., 2019). Future work should explore whether the rate of ensemble drift differs among the regions composing the broader mnemonic processing network and, if so, how unstable and distributed population correlations can remain anchored to the initial memory conditions in which they were instantiated.
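How slow turnover in ensemble membership lowers population correlations with increasing lag can be illustrated with a toy model. The sketch below assumes a fixed-size, binary ensemble whose membership shifts by a constant number of cells each day. The parameters and function names are hypothetical, and the model is not the analysis used by Mau et al. (2018).

```python
import math
from typing import List


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation between two population vectors."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def drifting_ensemble(n_pool: int, n_active: int, shift: int, n_days: int) -> List[List[float]]:
    """Binary population vectors (1 = cell participates in the sequence), one per day.

    Each day, `shift` cells drop out of the active ensemble and `shift` new cells join.
    """
    if (n_days - 1) * shift + n_active > n_pool:
        raise ValueError("cell pool too small for the requested drift")
    days = []
    for day in range(n_days):
        first = day * shift
        days.append([1.0 if first <= i < first + n_active else 0.0 for i in range(n_pool)])
    return days


days = drifting_ensemble(n_pool=40, n_active=10, shift=2, n_days=5)
# Correlation of each day's population vector with the first day's vector.
similarity = [round(pearson(days[0], d), 3) for d in days]
print(similarity)  # [1.0, 0.733, 0.467, 0.2, -0.067]
```

In this toy model the decline is driven entirely by the shrinking overlap between ensembles, so the correlation between two sessions carries information about how far apart in time they were.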

One experiment dissociated elapsed time from running distance by recording neurons while a rat ran on a treadmill at different speeds during different delay periods (Kraus et al., 2013). This experiment showed that neurons could respond on the basis of either time or running distance during the delay (Kraus et al., 2013), as predicted by previous models (Burgess et al., 2007; Hasselmo, 2008). As alluded to above, neurons that fire as time cells may also code other dimensions related to episodic memory, consistent with evidence of mixed selectivity in other regions (Rigotti et al., 2013). A cell that fires as a time cell during running on the treadmill might also fire as a place cell during running off the treadmill on the return arms (Kraus et al., 2013; Mau et al., 2018), indicating that these cells do not code time alone. Coding of both space and time was also shown for single grid cells in the entorhinal cortex (Kraus et al., 2015). These grid cells not only fired in an array of spatial locations when animals foraged in a two-dimensional (2D) environment but also fired as time cells at different time points during a 16-s delay as the rat ran in a single location on the treadmill. However, recent work has reported that space-encoding neurons and neurons coding time during immobility in the entorhinal cortex form anatomically distinct subpopulations (Heys and Dombeck, 2018; Heys et al., 2014). Whether mixed selectivity manifests within individual neurons or through circuit interactions, the dual mapping of space and time within these structures could provide a basis for coding of spatiotemporal trajectories.
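The logic of the treadmill-speed manipulation can be sketched as a toy classifier: a time cell should peak at the same elapsed time at every speed, whereas a distance cell should peak at the same distance (speed multiplied by time), and therefore at shorter times when the treadmill runs faster. The published analyses were more involved; this simplified heuristic is not the method used by Kraus et al. (2013), and the function name, speeds, and peak times are hypothetical.

```python
from statistics import mean, pstdev
from typing import List


def classify_delay_cell(speeds_cm_s: List[float], peak_times_s: List[float]) -> str:
    """Label a treadmill-delay cell as 'time' or 'distance' coding (toy heuristic).

    Takes one peak firing time per treadmill speed. The coefficient of variation
    (SD / mean) makes peak times (s) and peak distances (cm) comparable; the
    quantity that stays more constant across speeds is taken as the coded variable.
    """
    peak_distances = [v * t for v, t in zip(speeds_cm_s, peak_times_s)]
    cv_time = pstdev(peak_times_s) / mean(peak_times_s)
    cv_distance = pstdev(peak_distances) / mean(peak_distances)
    return "time" if cv_time < cv_distance else "distance"


speeds = [20.0, 30.0, 40.0]                           # hypothetical treadmill speeds
print(classify_delay_cell(speeds, [4.0, 4.0, 4.0]))   # time: peak fixed at 4 s
print(classify_delay_cell(speeds, [6.0, 4.0, 3.0]))   # distance: peak fixed at 120 cm
```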

Time cells code time not only by overall firing rate but also by the phase of firing relative to network theta rhythm oscillations. Time cells show theta phase precession within their firing fields (Pastalkova et al., 2008; Terada et al., 2017). For example, a neuron firing during the period 4–5 s after the start of running starts out firing at late phases of theta and then progresses to earlier phases. This resembles the phase precession of a place cell as an animal runs through its place field. A potentially important role of theta rhythm in this firing is indicated by the finding that inactivation of the medial septum abolishes the temporal specificity of time cell firing (Wang et al., 2015) while also reducing the magnitude of hippocampal theta rhythm (Brandon et al., 2014; Rawlins et al., 1979). Notably, the same inactivation of the medial septum also removes the spatial specificity of grid cells, as described below (Brandon et al., 2011; Koenig et al., 2011). Theta phase coding has the advantage that it could allow a single neuron to code a continuous dimension of time or space, which might enable a broader range of transformations at the level of single neurons that would be difficult to implement across a full population.
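The relationship described above, in which spike phase moves from late to early as a time field (or place field) is traversed, can be written as a simple mapping together with its inverse. The inverse shows how the phase of a single neuron's spikes could, in principle, report position within a continuous dimension. This is a sketch under stated assumptions: precession is idealised as strictly linear and spanning one full theta cycle (360 to 0 degrees). Real phase precession is noisier than this, so the default phase values are illustrative only.

```python
def precession_phase(t: float, field_start: float, field_end: float,
                     entry_phase: float = 360.0, exit_phase: float = 0.0) -> float:
    """Theta phase (degrees) of spiking at time or position t inside a firing field.

    Idealised linear precession: late phases at field entry, early phases at exit.
    """
    if not field_start <= t <= field_end:
        raise ValueError("t lies outside the firing field")
    fraction = (t - field_start) / (field_end - field_start)
    return entry_phase + fraction * (exit_phase - entry_phase)


def decode_from_phase(phase: float, field_start: float, field_end: float,
                      entry_phase: float = 360.0, exit_phase: float = 0.0) -> float:
    """Invert the linear model: recover time or position within the field from spike phase."""
    fraction = (phase - entry_phase) / (exit_phase - entry_phase)
    return field_start + fraction * (field_end - field_start)


# Hypothetical time cell with a firing field 4-5 s after running onset.
for t in (4.0, 4.5, 5.0):
    print(t, precession_phase(t, 4.0, 5.0))   # phases 360.0, 180.0, 0.0
print(decode_from_phase(180.0, 4.0, 5.0))     # 4.5
```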

Coding of spatial location (place cells and grid cells)

A range of studies show that damage to the hippocampus also impairs 2D spatial navigation in tasks such as the Morris water maze, in which the animal learns a specific goal platform location but must then navigate to that location from a range of different starting locations (Eichenbaum, 2017; Eichenbaum et al., 1990; Morris et al., 1982). This task requires the planning and disambiguation of spatiotemporal trajectories to generate the correct trajectory to the goal from a new start location, and models have addressed the potential role of different neuronal subtypes in performing this task (Erdem and Hasselmo, 2012, 2014; Redish and Touretzky, 1998). Early models focused on the role of place cells and HD cells (Redish and Touretzky, 1998), but later models addressed the additional role of grid cells and speed cells in planning trajectories to a goal location (Erdem and Hasselmo, 2012, 2014). Impairments of goal finding in the Morris water maze are also observed after lesions of the entorhinal cortex (Steffenach et al., 2005) or the dorsal presubiculum (Taube et al., 1992), and after pharmacological inactivation (Brioni et al., 1990) or lesions of the medial septum (Marston et al., 1993). Performance in this task is also impaired after lesions of the RSC (Czajkowski et al., 2014), and learning of the task is impaired after parietal lesions (Hoh et al., 2003). As described in this section, these behavioural data relate to physiological data on the 2D coding of spatial location by place cells in the hippocampus and grid cells in the entorhinal cortex, and to the coding of other spatial representations relevant to goal-directed behaviour.

Place cells in hippocampus

Neurophysiological recording in the hippocampus during exploration of both one-dimensional (1D) and 2D mazes reveals place cells that respond selectively on the basis of spatial location (O’Keefe, 1976; O’Keefe and Dostrovsky, 1971). A typical place cell might fire in a region about 20 cm in diameter, but the size of firing fields varies, and cells can have more than one firing field (Fenton et al., 2008). Place cells have been observed during foraging in an open field environment (Muller et al., 1987), during behaviour guided by episodic memory in the eight-arm radial maze (McNaughton et al., 1983), and on the return arms of a spatial alternation task (Ainge et al., 2007; Kinsky et al., 2020; Wood et al., 2000). The position of an animal can be effectively decoded from the firing activity of hippocampal place cells (Brown et al., 1998), supporting their role in guiding behaviour in spatial memory tasks.
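Decoding position from place cell firing can be illustrated with a simple Bayesian-style decoder. The sketch below is a generic maximum-likelihood decoder that assumes independent Poisson spiking, Gaussian place fields on a 1D track, and a flat prior over positions. Brown et al. (1998) used a more elaborate statistical framework, so this should not be read as their method. All field parameters are hypothetical, chosen so that each field spans roughly 20 cm.

```python
import math
from typing import List


def place_field_rate(x: float, centre: float, width: float = 10.0,
                     peak_hz: float = 15.0, baseline_hz: float = 0.5) -> float:
    """Hypothetical Gaussian place field: firing rate (Hz) at track position x (cm)."""
    return baseline_hz + peak_hz * math.exp(-0.5 * ((x - centre) / width) ** 2)


def decode_position(counts: List[float], centres: List[float],
                    candidates: List[float], dt: float = 1.0) -> float:
    """Maximum-likelihood position under independent Poisson spiking (flat prior)."""
    best_x, best_ll = candidates[0], -math.inf
    for x in candidates:
        ll = 0.0
        for n, c in zip(counts, centres):
            expected = place_field_rate(x, c) * dt
            ll += n * math.log(expected) - expected  # log(n!) is constant across x
        if ll > best_ll:
            best_x, best_ll = x, ll
    return best_x


centres = [float(c) for c in range(0, 101, 10)]   # 11 cells tiling a 100-cm track
track = [float(x) for x in range(0, 101)]         # 1-cm candidate positions
true_x = 42.0
# Noise-free "spike counts": each cell's expected count at the true position (dt = 1 s).
counts = [place_field_rate(true_x, c) for c in centres]
print(decode_position(counts, centres, track))    # 42.0
```

With real, noisy integer counts the estimate would scatter around the true position; the noise-free counts used here recover it exactly, because the Poisson likelihood is maximised where expected and observed counts match.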

The discovery of place cells led to the important demonstration of theta phase precession (O’Keefe and Recce, 1993), which strongly supports phase coding by mammalian neurons. Place cells show theta phase precession as an animal runs through the cell's firing field: as the animal enters the field, spiking at first occurs at late phases of theta, and as it runs through and exits the field, spiking shifts to progressively earlier phases (Maurer et al., 2006; O’Keefe and Recce, 1993; Schmidt et al., 2009; Skaggs et al., 1996; Zugaro et al., 2005). Recordings of multiple hippocampal neurons show that theta phase precession can be associated with sequential spiking of neurons coding successive places, termed theta sequences (Foster and Wilson, 2007); however, phase precession appears on the first trial on a novel linear track, whereas theta sequences appear only on later trials (Feng et al., 2015). This suggests that theta sequences may arise from the encoding of new episodic associations on a given day. Theta sequences could play an important role in guiding behavioural responses, as discussed further in the section on context-dependent activity.

The broad range of studies on place cells cannot be reviewed comprehensively here. Instead, we will focus on data relevant to different theoretical mechanisms for the generation of spatial responses: the contrast between path integration and egocentric to allocentric transformation (Figure 3). Path integration refers to the integration of a self-motion signal, including direction and speed, to compute current location (McNaughton et al., 1996, 2006). Egocentric to allocentric transformation refers to the computation of allocentric spatial location from the egocentric angle of sensory cues, such as the position of barriers or objects in the environment (Byrne et al., 2007; O’Keefe and Burgess, 1996).
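The two candidate mechanisms can be contrasted in a minimal 2D sketch. Path integration sums self-motion (speed along the movement direction) over time. The egocentric to allocentric route instead combines the egocentric angle and distance of a cue with head direction to recover location. The sketch assumes noise-free signals, a single point cue at a known allocentric position, and angles measured counterclockwise from east. The function names are hypothetical, and the code is not a model from the cited studies.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def path_integrate(start: Point, speeds: List[float], directions_deg: List[float],
                   dt: float) -> Point:
    """Path integration: accumulate velocity (speed along allocentric movement direction)."""
    x, y = start
    for v, direction in zip(speeds, directions_deg):
        x += v * math.cos(math.radians(direction)) * dt
        y += v * math.sin(math.radians(direction)) * dt
    return x, y


def location_from_cue(cue: Point, ego_bearing_deg: float, distance: float,
                      head_direction_deg: float) -> Point:
    """Egocentric to allocentric transformation using a cue at a known position.

    ego_bearing_deg is the cue's angle relative to the head (0 = straight ahead,
    positive = counterclockwise, i.e. to the left). Adding head direction gives the
    cue's allocentric bearing; the animal lies `distance` back from the cue along it.
    """
    bearing = math.radians(head_direction_deg + ego_bearing_deg)
    return cue[0] - distance * math.cos(bearing), cue[1] - distance * math.sin(bearing)


# Hypothetical run: 3 s east, then 4 s north, at 10 cm/s, sampled in 1-s steps.
pi_estimate = path_integrate((0.0, 0.0), [10.0] * 7, [0.0] * 3 + [90.0] * 4, dt=1.0)

# The animal now faces north (head direction 90 deg); a landmark at (0, 40)
# lies 30 cm away, 90 deg to its left in egocentric coordinates.
cue_estimate = location_from_cue((0.0, 40.0), ego_bearing_deg=90.0, distance=30.0,
                                 head_direction_deg=90.0)

print([round(c, 6) for c in pi_estimate], [round(c, 6) for c in cue_estimate])
# [30.0, 40.0] [30.0, 40.0]
```

The two estimates agree here only because the signals are noise-free. Errors in integrated speed or direction accumulate with every step of path integration, whereas the cue-based estimate depends on the accuracy of the head direction signal at a single moment, which is one reason the two mechanisms make different experimental predictions.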

Different theoretical neural mechanisms for tracking the self-location of an animal in an environment. (a) Computation of location can be determined from a velocity signal based on the animal’s self-motion (including both speed and movement direction). Integration of velocity will provide an update of the current location (McNaughton et al., 2006). (b) Alternatively, location can be determined by computations based on the allocentric angle and distance to visual features (Bicanski and Burgess, 2018; Byrne et al., 2007; Raudies and Hasselmo, 2015). (c) Computation of location from visual features requires initial coding of egocentric angle and distance to environmental cues, such as boundaries, followed by transformation into allocentric coordinates.

Previous studies have supported the potential role of either path integration (Figure 3(a)) or sensory transformation (Figure 3(b)) for generation of place cell responses. In one study, the distance from a starting box location to a goal location on a 1D track was shifted after the animal reached the goal (Gothard et al., 1996a, 1996b), and the firing of some place cells depended on the distance from the start location (supporting path integration), whereas others responded based on the visible box location (supporting spatial coding from sensory landmarks). Another study tested the role of self-motion by recording place cells during active running, compared to riding on a train (Terrazas et al., 2005), and showed that place firing was more accurate during active running, suggesting an important role of the integration of self-motion (Figure 3(a)). In addition, testing in darkness showed that place cells can maintain their firing fields in the dark when a rat starts out in light conditions in the maze (Quirk et al., 1990).

However, a number of studies show the importance of distal sensory cues (Figure 3(b)) in the firing of place cells. For example, rotation of a visual cue on the wall of a circular environment causes rotation of the position of place cell firing (Muller and Kubie, 1987), and placing an animal into the maze in darkness results in firing fields that differ from those when it enters in light (Quirk et al., 1990). Hippocampal neurons, including place cells, can also respond to a variety of other sensory cues, such as odours, reward, or tactile surface boundaries (Anderson and Jeffery, 2003; Wiener et al., 1989). Recent studies also show that hippocampal neurons can map other sensory dimensions, such as auditory frequency space (Aronov et al., 2017). The role of path integration versus distal sensory cues will be discussed further in a later section.

Grid cells in entorhinal cortex

Neurophysiological recording in the entorhinal cortex demonstrates different types of coding of spatial dimensions. The most striking involves entorhinal grid cells, which fire when a foraging animal visits any of an array of spatial locations arranged in a hexagonal pattern across the environment (Hafting et al., 2005). Different grid cells have different field sizes and spacings between firing fields, allowing a population of grid cells to code a single location (Barry et al., 2007; Heys et al., 2014; Sargolini et al., 2006; Stensola et al., 2012). Many grid cells code both the animal’s location and its current HD (Sargolini et al., 2006).
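The hexagonal firing map of an idealised grid cell is often described as the sum of three plane waves (cosine gratings) oriented 60 degrees apart. The sketch below uses that common idealisation purely to describe the shape of the firing map; it is not a mechanism for generating grid cells. The spacing, orientation, and offset parameters are hypothetical.

```python
import math
from typing import Tuple


def grid_rate(x: float, y: float, spacing: float = 50.0, orientation_deg: float = 0.0,
              offset: Tuple[float, float] = (0.0, 0.0)) -> float:
    """Idealised grid-cell firing map, rescaled to [0, 1] (1 = field centre).

    spacing is the distance (cm) between neighbouring firing fields; the wave number
    k = 4*pi / (sqrt(3) * spacing) places fields on a hexagonal lattice with that spacing.
    """
    k = 4 * math.pi / (math.sqrt(3) * spacing)
    total = 0.0
    for i in range(3):
        theta = math.radians(orientation_deg + 60 * i)
        projection = (x - offset[0]) * math.cos(theta) + (y - offset[1]) * math.sin(theta)
        total += math.cos(k * projection)
    # The sum of these three cosines ranges from -1.5 to 3; map it linearly onto [0, 1].
    return (total + 1.5) / 4.5


print(round(grid_rate(0.0, 0.0), 3))    # field centre: 1.0
print(round(grid_rate(0.0, 50.0), 3))   # neighbouring field 50 cm away: 1.0
print(round(grid_rate(25.0, 0.0), 3))   # between fields: close to 0
```

Cells with different `spacing` values and offsets produce different maps, so a location that is ambiguous for one cell can be disambiguated by the joint pattern across cells of different scales, in line with the population coding described above.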

Entorhinal grid cells also exhibit phase coding in the form of theta phase precession as an animal runs on a linear track (Hafting et al., 2008) or forages in two dimensions in an open field (Climer et al., 2013; Jeewajee et al., 2014). Consistent with this, the intrinsic rhythmicity of entorhinal neurons differs with spatial scale (Jeewajee et al., 2008) and shifts with running speed (Hinman et al., 2016; Jeewajee et al., 2008). The potential role of theta rhythm in generating grid cell responses is supported by the finding that inactivation of the medial septum causes both a dramatic reduction of theta rhythm in the entorhinal cortex (Jeffery et al., 1995; Mitchell et al., 1982) and a loss of the spatial selectivity of grid cell firing (Brandon et al., 2011; Koenig et al., 2011). The specific population of medial septal neurons involved in regulating grid cell firing has not yet been identified. However, recent work shows that inactivation of glutamatergic neurons in the medial septum reduces the specificity of grid cell firing, whereas inactivation of GABAergic neurons results in the loss of grid cell spatial firing along with a reduction in theta rhythm oscillations (Robinson et al., 2019).

Similar to place cells, the mechanism of grid cell firing could depend on both path integration of self-motion (Figure 3(a)) and transformation of sensory input (Figure 3(b)). In some experiments, grid cells retain their spatially periodic firing pattern in darkness, suggesting a potential role of path integration of self-motion in the absence of visual cues (Dannenberg et al., 2020; Hafting et al., 2005). However, other experiments support the role of sensory input by showing rotation of grid cell firing fields with rotation of visual cues in a circular environment (Hafting et al., 2005) and by showing a loss of accurate spatial coding by grid cells when the absence of visual cues is accompanied by initial disorientation and removal of other cues, such as auditory and olfactory input (Chen et al., 2016; Pérez-Escobar et al., 2016). Shifting from an open field environment to a zig-zag maze (Derdikman et al., 2009) or a spatial alternation task (Gupta et al., 2014) causes a shift from the 2D hexagonal array of grid cell firing fields to a different 1D distribution of fields that does not perfectly match the 2D distribution. Grid cells show more accurate coding near environmental boundaries than at a distance from boundaries (Hardcastle et al., 2015), consistent with an influence of sensory cues near boundaries, and they show reduced accuracy in darkness even when other cues remain (Dannenberg et al., 2020). The role of sensory input is further supported by evidence that grid cells lose spatial coding during inactivation of regions providing HD input (Winter et al., 2015).

Spatial coding in RSC and PPC

Spatial correlates of neurons within the RSC and PPC association cortices are likely dependent on mixtures of sensory, self-motion, and spatial information (Minderer et al., 2019; Voigts and Harnett, 2020). Despite indications from human neuroimaging experiments (Doeller et al., 2010), there is currently no evidence of grid cell activation patterns in RSC or PPC, although grid-like patterns were recorded in the cingulate bundle (Alexander et al., 2020). Instead, recent work demonstrated complex but spatially reliable activation patterns in RSC neurons as animals freely explored 2D environments (Alexander et al., 2020). There is less evidence of allocentrically anchored spatial location correlates of PPC neurons under similar free foraging conditions (Whitlock et al., 2012). In contrast to the allocentric spatial representations in entorhinal cortex, parietal neurons predominantly respond to the organisation of actions (Whitlock et al., 2012).

There have been limited reports of place-like responses in PPC and RSC (Harvey et al., 2012; Krumin et al., 2018; Mao et al., 2017, 2018), and it is important to note that nearly all such observations have come from calcium imaging experiments in head-fixed animals navigating virtual environments. In freely moving animals navigating linearised environments, RSC and PPC neurons typically exhibit reliable spatial activation patterns that are continuously modulated across spatial positions and primarily non-zero in rate. This suggests that the sparser spatial coding observed in these areas during virtual navigation may arise from reduced self-motion information and, in particular, impoverished vestibular signals. Recent methodological developments that enable head rotations during two-photon calcium imaging should yield important insights into how idiothetic, sensory, and spatial variables are integrated in the activation patterns of RSC and PPC neurons (Voigts and Harnett, 2020).

A critical, yet often overlooked, component of navigation and of spatiotemporal trajectory encoding in most animals is the mapping of known routes. Reliable activation of RSC and PPC ensembles during track running provides information about the animal’s progression through a route (Alexander and Nitz, 2015, 2017; Nitz, 2006, 2009, 2012; Whitlock et al., 2012). As mentioned above, PPC and RSC neurons exhibit continuously modulated firing rate patterns that are reliable from trial to trial during running on a labyrinthine maze. Ensembles of neurons with these firing properties produce a distinct population rate vector for each position within the route, which can be decoded to accurately estimate the animal’s position between the start and end of the trajectory and its distance from any location within the route (Alexander and Nitz, 2017; Nitz, 2006). Furthermore, this form of activation is sensitive to the geometric properties of routes. Recent work has shown that RSC neurons exhibit spatial periodicity anchored to local features within routes possessing repeating elements, and that the frequency of this periodicity changes in predictable ways when route geometry is altered (e.g. moving from a route with square features to a circularly shaped trajectory; Alexander et al., 2017). Accordingly, the RSC ensemble may extract a set of spatial basis functions that could be used to map the relative relationships among all positions within a known trajectory.
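Decoding position along a route from continuously modulated, non-zero population rate vectors can be sketched as template matching. The current population vector is compared with the mean vector recorded at each route position, and the best-matching position is chosen. This sketch assumes correlation-based matching and uses hypothetical firing rates for four neurons at five route positions. The cited studies may have used different decoders.

```python
import math
from typing import Dict, List


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation between two population rate vectors."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def decode_route_position(observed: List[float], templates: Dict[int, List[float]]) -> int:
    """Route position whose template population vector best correlates with `observed`."""
    return max(templates, key=lambda pos: pearson(observed, templates[pos]))


# Hypothetical mean rates (Hz) of four neurons at five positions along a route.
# Every neuron fires at every position, but each position has a distinct rate pattern.
templates = {
    0: [5.0, 9.0, 2.0, 4.0],
    1: [6.0, 7.0, 3.0, 8.0],
    2: [8.0, 4.0, 5.0, 6.0],
    3: [9.0, 2.0, 7.0, 3.0],
    4: [4.0, 3.0, 9.0, 5.0],
}
observed = [8.5, 4.2, 4.6, 6.3]                     # a noisy sample taken at position 2
print(decode_route_position(observed, templates))  # 2
```

Because the information lies in the pattern of rates across the ensemble rather than in which cells are silent, this kind of decoder does not require sparse, place-cell-like fields.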

Route-related firing rate profiles cannot be explained purely by self-motion, as neurons commonly exhibit differential activation for repeated actions within the route. PPC correlates are invariant to the position of the route in the broader allocentric environment: firing patterns are preserved when the track is moved to a different position and/or rotated relative to visible distal cues. In contrast, RSC route representations are conjunctively sensitive to the position of the trajectory within the broader allocentric space and form new representations when routes are moved. Recent work has demonstrated that these route-referenced activation patterns develop in RSC with learning of an environment, suggesting that the route representation may emerge after route locations are associated with multimodal information, such as actions, goals, or sensory landmarks (Miller et al., 2019; Vedder et al., 2016). Similar correlates have recently been reported in other cortical regions, indicating that route-referenced activity is distributed across multiple regions (Kaefer et al., 2020; Rubin et al., 2019).

Coding of prior context

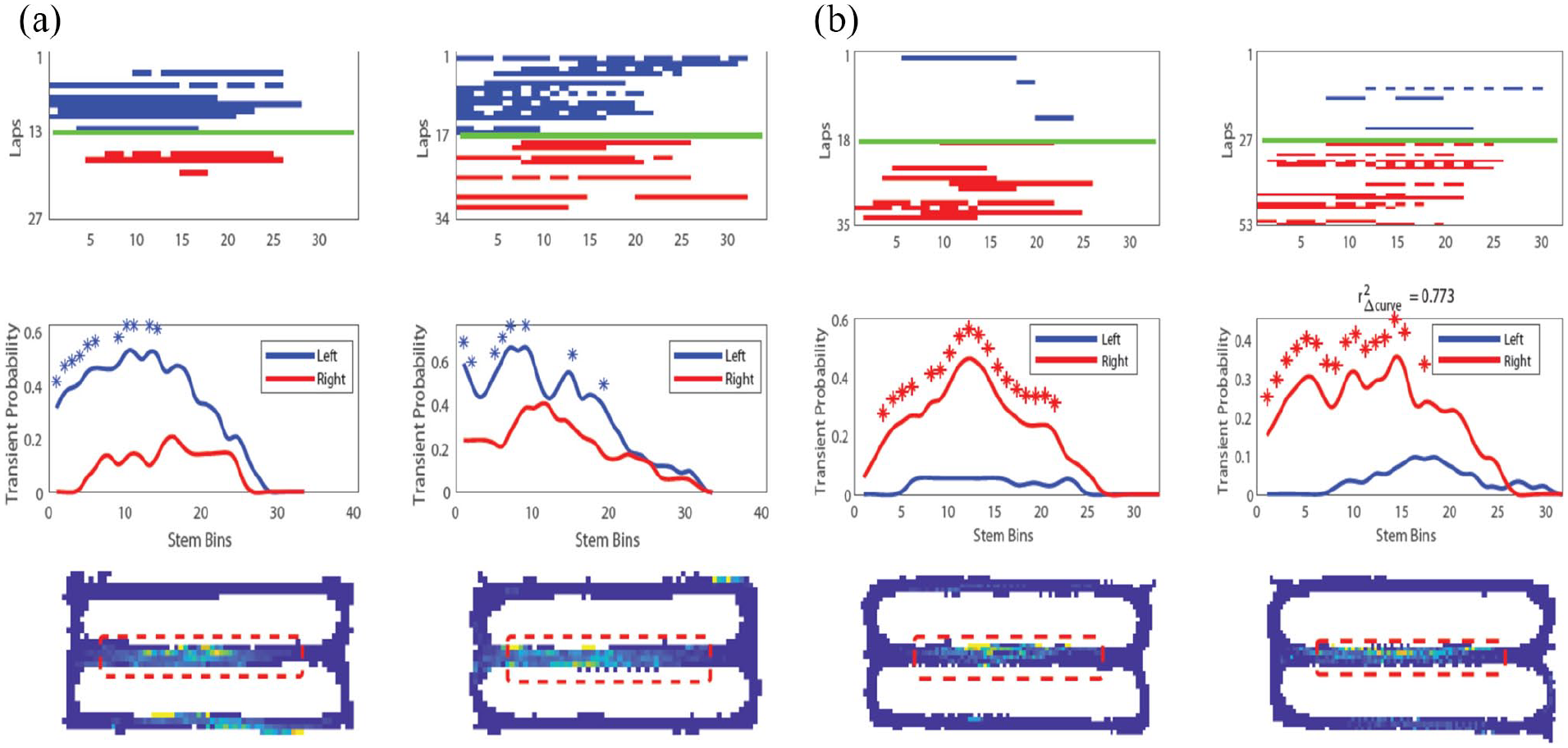

The performance of tasks such as delayed spatial alternation or delayed non-match to position requires the capacity to distinguish (disambiguate) the same spatial location on different trials, as described in previous modelling work (Hasselmo, 2009; Hasselmo and Eichenbaum, 2005; Levy, 1996). Because animals run up the same central stem of the task on every trial, their current location cannot determine the correct response; instead, the response must be based on memory of the prior location. Neurophysiological data in these tasks show context-dependent activity appropriate for this memory-based behavioural disambiguation. For example, when a rat runs on the stem of a spatial alternation task, individual neurons fire selectively depending on the past or future turning response (Figure 4). These ‘splitter’ neurons have been observed in the hippocampus (Ferbinteanu and Shapiro, 2003; Kinsky et al., 2020; Levy et al., 2020; Wood et al., 2000) and entorhinal cortex (Frank et al., 2000; Lipton et al., 2007; O’Neill et al., 2017).

Figure 4. Examples of two context-dependent ‘splitter’ neuron responses recorded with calcium imaging on the central stem of the spatial alternation task. These splitter neurons remain stable across days and could disambiguate left and right trials to guide task performance, as supported by the correlation between the discriminability of neuronal responses and behaviour. (a) Example splitter neuron (left) and the same neuron recorded 7 days later (right). Top: calcium event raster along the stem; blue = left trials, red = right trials. Middle: average calcium event probability across all trials, showing a greater likelihood of events on left trials. Bottom: occupancy-normalised event rate map showing firing on the central stem. (b) Same as (a) but for a different neuron, with imaging sessions 9 days apart (adapted from Kinsky et al., 2020).

These neuronal responses can emerge at specific times during training and are more stable than place cell responses in the task, possibly because splitter responses are more important for accurate task performance (Kinsky et al., 2020). The left–right discriminability of splitter cell responses (Figure 4) correlates significantly with accurate behavioural performance (Kinsky et al., 2020). Context-dependent activity can also distinguish sample from test trials in delayed non-match to position (Griffin et al., 2007; Levy et al., 2020), and over the course of learning, neuronal coding shifts gradually from conjunctive coding of both turn direction and task phase towards more coding of turn direction or task phase alone (Levy et al., 2020).
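Left–right discriminability can be summarised in many ways. As a hypothetical illustration (not the measure used by Kinsky et al., 2020), the sketch below averages a normalised left–right rate difference over the active bins of the central stem; across sessions, such an index could then be correlated with behavioural accuracy, for example with `np.corrcoef`. The function name `splitter_index` is an assumption.

```python
import numpy as np


def splitter_index(rate_left, rate_right):
    """Mean left/right selectivity over active stem bins (0 = identical, 1 = fully selective).

    rate_left, rate_right: per-bin event rates along the central stem, averaged
    separately over left-turn and right-turn trials. Returns nan if no bin is active.
    """
    rl = np.asarray(rate_left, dtype=float)
    rr = np.asarray(rate_right, dtype=float)
    total = rl + rr
    active = total > 0
    if not np.any(active):
        return float("nan")
    return float(np.mean(np.abs(rl[active] - rr[active]) / total[active]))


print(splitter_index([0.4, 0.6, 0.2], [0.0, 0.0, 0.0]))  # fires only on left trials → 1.0
print(splitter_index([0.3, 0.3], [0.3, 0.3]))            # no trial-type preference → 0.0
```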

Both the guidance of behaviour and the learning-dependent shift in context-dependent representations may depend on mechanisms of sequence retrieval during the theta rhythm. Theta sequences appear to reflect planning of future trajectories, as they appear at choice points (Johnson and Redish, 2007; Kay et al., 2020) and their length increases with the distance to future goals (Wikenheiser and Redish, 2015). The phase of firing relative to the theta rhythm also appears to shift with the novelty of individual cues (Manns et al., 2007) or of the environment (Douchamps et al., 2013; Wells et al., 2013), consistent with proposals that encoding and retrieval occur on different phases of theta rhythm cycles (Hasselmo, 2006; Hasselmo et al., 2002).

The replay of place cell sequences during sharp-wave ripple events also appears to reflect the direction of future goal locations (Olafsdottir et al., 2015; Pfeiffer and Foster, 2013), which could guide the selection of a spatial response after a delay period. In some cases, replay also appears to be coordinated between place cell sequences and grid cell sequences (Olafsdottir et al., 2016). Specific context-dependent time cell sequences can also play out during the delay period, depending on the rat’s future trajectory (Pastalkova et al., 2008). Together, these data indicate that spatial representations are not coded in isolation but are combined with many other features relevant to the performance of a behavioural task. The spatiotemporal trajectory coded for episodic memory can therefore contain many dimensions, depending on task demands.

Coding of trajectory speed and direction

As noted above, the coding and disambiguation of spatiotemporal trajectories for episodic memory appears to include dimensions beyond spatial location and time interval. For example, context-dependent activity can reflect the influence of prior behaviour on the current spatiotemporal trajectory. Similarly, one can remember one’s point of view when having a conversation in a familiar room (Conway, 2009), or the speed at which one rode a bicycle through town. Thus, direction and speed are important elements of episodic memory and memory-guided behaviour. In addition, as discussed previously, the coding of spatial location by place cells and grid cells could depend on the integration of the speed and direction of self-motion (Figure 3(a)). However, the neurophysiological data on coding of speed and direction do not seem consistent with the requirements of path integration.

Coding of speed

The running speed of animals is coded by neurons in the hippocampus (McNaughton et al., 1983; O’Keefe et al., 1998) and by several functional classes of neurons in the medial entorhinal cortex (Buetfering et al., 2014; Hinman et al., 2016; Kropff et al., 2015; Sargolini et al., 2006; Wills et al., 2012). Interneurons in the entorhinal cortex code running speed (Kropff et al., 2015), and many grid cells and HD cells also show clear speed coding (Buetfering et al., 2014; Hinman et al., 2016; Jeewajee et al., 2008; Sargolini et al., 2006; Wills et al., 2012). Speed-related changes in firing rate have also been shown in RSC and PPC (Alexander et al., 2020; Clancy et al., 2019; McNaughton et al., 1994), and the magnitude of sensory responses changes with running speed in areas including visual cortex (Niell and Stryker, 2010) and auditory cortex (Nelson and Mooney, 2016). Indeed, it is unclear whether any brain region lacks a subset of neurons with linear speed correlates.

Rate-based models of path integration require a firing rate that varies linearly with running speed (Dannenberg et al., 2019; Hinman et al., 2016). Although a subset of medial entorhinal cells have the linear speed tuning curves employed by these computational models, the majority of speed-modulated cells have non-linear tuning curves (Figure 5(a)), with firing rate saturating once the animal reaches a moderate running speed. This non-linearity causes problems for path integration models. A second problem concerns the time scale of speed coding. Neurons appear to represent speed accurately by firing rate over periods of several seconds (Figure 5(b)), but over periods shorter than a second, the accuracy of speed coding falls to levels that would prevent accurate path integration (Dannenberg et al., 2019).
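The consequence of saturating speed tuning for a rate-based path integrator can be shown with a toy calculation. The sketch assumes a saturating-exponential tuning curve with made-up parameters and a downstream readout that treats the rate as linear in speed, using the curve’s initial slope; it illustrates the argument and is not a model of recorded data.

```python
import numpy as np

# Hypothetical saturating-exponential speed tuning (illustrative values, not fitted data)
R0, A, TAU = 5.0, 20.0, 10.0  # baseline (Hz), modulation depth (Hz), speed constant (cm/s)


def saturating_rate(speed):
    """Firing rate (Hz) for running speed in cm/s; close to linear only when speed << TAU."""
    return R0 + A * (1.0 - np.exp(-speed / TAU))


def linear_speed_readout(rate):
    """Decode speed assuming a linear code with the tuning curve's initial slope A / TAU."""
    return (rate - R0) * TAU / A


def distance_ratio(speed, dt=0.02):
    """Path-integrated distance from the linear readout, divided by the true distance."""
    true_distance = np.sum(speed) * dt
    estimated_distance = np.sum(linear_speed_readout(saturating_rate(speed))) * dt
    return estimated_distance / true_distance


slow = np.full(500, 2.0)    # 10 s at 2 cm/s
fast = np.full(500, 40.0)   # 10 s at 40 cm/s
print(round(distance_ratio(slow), 2), round(distance_ratio(fast), 2))  # → 0.91 0.25
```

At low speed the linear readout recovers about 90% of the distance travelled, but at 40 cm/s it recovers only about a quarter. Inverting the saturating curve exactly would not fully solve the problem, because near saturation a small fluctuation in rate maps onto a large change in decoded speed.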

Figure 5. Speed tuning as a function of time scale, showing inaccurate tuning at short time scales. (a) Conventional speed tuning curve of a speed-modulated neuron recorded with tetrodes in mouse medial entorhinal cortex, computed from firing rate and running speed data across all time scales. Black dots show speed-binned data; the grey line shows the saturating exponential curve, fitted by least squares, that best fits the data. (b) Speed tuning curves of the same neuron computed from firing rate and running speed data filtered at time scales of 1–2, 2–4, and 4–8 s. Filtering at each time scale was done by first computing two boxcar-smoothed signals, the first smoothed with a window equal to the lower bound of the time scale and the second with a window equal to the upper bound. The upper bound-smoothed signal was then subtracted from the lower bound-smoothed signal to obtain the time scale-filtered signal. Finally, filtered signals were recentred around the mean of the raw signal. Note that the slope of a neuron’s speed tuning curve depends on the time scale over which firing rate and running speed are integrated. Black dots show speed-binned data; grey lines show least-squares linear fits (adapted from Dannenberg et al., 2019).
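The time-scale filtering described in this caption can be sketched as follows. This is a minimal reconstruction assuming evenly sampled traces with window lengths given in samples; the function names, the 50 Hz sampling rate, the synthetic speed trace, and the choice to pad edges by repeating end values are assumptions, not details of the original analysis.

```python
import numpy as np


def boxcar_smooth(signal, width):
    """Moving average over `width` samples; edges padded by repeating the end values."""
    kernel = np.ones(width) / width
    padded = np.pad(signal, (width // 2, width - 1 - width // 2), mode="edge")
    return np.convolve(padded, kernel, mode="valid")  # same length as `signal`


def timescale_filter(signal, lower, upper):
    """Band between `lower` and `upper` samples, following the caption's recipe:
    lower-bound-smoothed minus upper-bound-smoothed, recentred on the raw mean."""
    signal = np.asarray(signal, dtype=float)
    return boxcar_smooth(signal, lower) - boxcar_smooth(signal, upper) + signal.mean()


# Example: at an assumed 50 Hz sampling rate, the 1-2 s band uses 50- and 100-sample windows
fs = 50
speed = np.random.default_rng(1).gamma(2.0, 5.0, size=3000)  # hypothetical speed trace (cm/s)
speed_1_2s = timescale_filter(speed, lower=1 * fs, upper=2 * fs)
```

The same filter would be applied to the firing rate trace before binning by speed and fitting a line, so that both variables reflect fluctuations on the same time scale.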

Coding of direction

Path integration for location would require a representation of movement direction, which has not been shown to be independently represented in the hippocampus or associated cortical structures (Raudies et al., 2015), although many place cells show direction sensitivity (Huxter et al., 2003; McNaughton et al., 1983). Instead, extensive neurophysiological data have demonstrated neurons that respond to the current HD of the animal, usually measured with two or more LEDs mounted on the head (Taube et al., 1990). HD cells respond selectively to the current allocentric direction of the animal’s head, regardless of the animal’s location in the environment and the relative position of individual landmarks. HD cells were initially discovered in the dorsal presubiculum (Taube et al., 1990) and were subsequently demonstrated in the anterior thalamus (Taube, 1995). Later studies showed HD cells in entorhinal cortex, where they often have broad tuning (Brandon et al., 2011, 2013; Giocomo et al., 2014; Sargolini et al., 2006). Many HD cells do not show theta rhythmicity, but a subset of entorhinal HD cells with partially overlapping tuning curves fire rhythmically on alternate cycles of the theta rhythm, a pattern referred to as theta cycle skipping (Brandon et al., 2013). This theta cycle skipping of HD cells is consistent with the readout of trajectories on alternate theta cycles in the hippocampus (Kay et al., 2020).
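HD tuning curves of the kind described above are typically built by dividing spike counts by the time spent in each HD bin. The sketch below does this and summarises tuning with the mean resultant vector (its length and angle); the bin count, input format, synthetic data, and function name `hd_tuning` are illustrative assumptions.

```python
import numpy as np


def hd_tuning(hd_deg, spike_counts, dt, n_bins=60):
    """Occupancy-normalised head direction tuning curve and mean resultant vector.

    hd_deg: head direction per tracking sample (degrees); spike_counts: spikes per sample;
    dt: sample duration (s). Returns bin centres (deg), rates (Hz), vector length, preferred HD (deg).
    """
    edges = np.linspace(0.0, 360.0, n_bins + 1)
    hd = np.mod(hd_deg, 360.0)
    occupancy, _ = np.histogram(hd, bins=edges)
    spikes, _ = np.histogram(hd, bins=edges, weights=spike_counts)
    seconds = occupancy * dt
    rate = np.divide(spikes, seconds, out=np.zeros(n_bins), where=seconds > 0)
    centres = edges[:-1] + 180.0 / n_bins
    if rate.sum() == 0:  # silent cell: tuning undefined
        return centres, rate, float("nan"), float("nan")
    vector = np.sum(rate * np.exp(1j * np.deg2rad(centres)))
    length = float(np.abs(vector) / rate.sum())  # 0 = untuned, 1 = single direction
    preferred = float(np.rad2deg(np.angle(vector)) % 360.0)
    return centres, rate, length, preferred


# Synthetic check: uniform HD sampling, spikes only within about 26 degrees of 90 degrees
hd_trace = np.arange(36000) * 0.1
counts = (np.cos(np.deg2rad(hd_trace - 90.0)) > 0.9).astype(int)
centres, rate, length, preferred = hd_tuning(hd_trace, counts, dt=0.02)
```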

In contrast to the absence of place cells or grid cells in RSC and PPC, small numbers of allocentric HD cells have been observed in both regions (Alexander and Nitz, 2015, 2017; Chen et al., 1994; Cho and Sharp, 2001; Jacob et al., 2017; Shine et al., 2016; Wilber et al., 2014). A subpopulation of HD cells in RSC is co-modulated by the vertical tilt of the animal’s head (Angelaki et al., 2020), which could be important for landmark processing. Other RSC HD neurons exhibit tuning anchored to local visual landmarks (Jacob et al., 2017). The conditions sufficient to produce this form of directional tuning are still being explored (Zhang and Jeffery, 2019), but locally anchored HD tuning could prove important for anchoring the broader HD network to the constellation of distal cues that define space in the external world (Bicanski and Burgess, 2016; Clark et al., 2010; Vantomme et al., 2020). HD cells comprise roughly 10% of the population in either PPC or RSC, although this varies across studies. A subset of RSC neurons (approximately 25%) is reliably tuned to angular velocity, and HD cells within the region can exhibit anticipatory firing that depends on whether the animal turns clockwise or counterclockwise into the preferred heading (Alexander et al., 2020; Cho and Sharp, 2001). Reported percentages of angular velocity-tuned cells and HD cells in RSC may vary with the rostrocaudal location, subregion, and cortical layer in which recordings were made (Lozano et al., 2017).

Contrast with movement direction

As noted above, path integration of self-motion to determine spatial location requires neural coding not of HD but of movement direction. Many models assume that movement direction could be extracted from a moving average of HD, but data show that mean HD does not provide a sufficient movement direction signal (Raudies et al., 2015). Analysis of medial entorhinal data restricted to periods when HD differed from movement direction by more than 30° showed many neurons that responded to HD, but only two neurons that significantly coded both movement direction and HD (Raudies et al., 2015). Given the lack of evidence for a rate code correlating with movement direction, an intriguing alternative hypothesis is that the required movement direction signal is inherently coded in theta phase sequences of discrete grid cell ensembles, though this remains to be explicitly tested (Zutshi et al., 2017). Based on current data, HD cells, which do not code movement direction, may be more important for coding sensory feature angle than for self-motion (Raudies et al., 2015).
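Isolating periods when HD and movement direction diverge can be sketched as below. The sketch assumes both angles share one convention (degrees, counterclockwise from the +x axis) and estimates movement direction from successive position samples; in practice positions would be smoothed and stationary periods excluded, and the exact criteria used by Raudies et al. (2015) may differ. All function names are hypothetical.

```python
import numpy as np


def movement_direction_deg(x, y):
    """Direction of travel between successive position samples (degrees, counterclockwise from +x)."""
    return np.mod(np.rad2deg(np.arctan2(np.diff(y), np.diff(x))), 360.0)


def circular_difference_deg(a, b):
    """Signed angular difference a - b, wrapped to [-180, 180)."""
    return np.mod(np.asarray(a, dtype=float) - np.asarray(b, dtype=float) + 180.0, 360.0) - 180.0


def hd_md_divergent(hd_deg, x, y, threshold_deg=30.0):
    """Mask over the first n-1 samples: True where HD and movement direction differ by > threshold."""
    md = movement_direction_deg(np.asarray(x, dtype=float), np.asarray(y, dtype=float))
    return np.abs(circular_difference_deg(np.asarray(hd_deg)[:-1], md)) > threshold_deg


# Example: an animal moving steadily east while turning its head
hd = np.array([0.0, 45.0, 350.0, 90.0, 0.0])
mask = hd_md_divergent(hd, x=np.arange(5.0), y=np.zeros(5))  # mask is [False, True, False, True]
```

Wrapping the difference matters: a head at 350° while moving at 0° is only 10° off, not 350°.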

HD cells could instead be vital for accurately transforming egocentric coordinates into allocentric coordinates, since allocentric HD is needed to convert the egocentric angle of a sensory feature into an allocentric location (Bicanski and Burgess, 2018; Byrne et al., 2007; Touretzky and Redish, 1996). As a simple example, imagine that, from a boat, you see a lighthouse about 1 mile away directly in front of you. If a compass tells you that your head is pointing directly north, then you know you are located 1 mile south of the lighthouse. However, the same egocentric observation implies a completely different location if the compass indicates that your head is pointing directly east (you are then 1 mile west of the lighthouse). Consistent with this, lesions of the dorsal presubiculum or anterior thalamic nucleus, both of which provide HD input to cortex, destabilise hippocampal place cells (Goodridge and Taube, 1997) and entorhinal grid cells (Winter et al., 2015).
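The lighthouse example amounts to rotating an egocentric observation by the current HD and subtracting the result from the landmark’s known position. A minimal sketch, assuming x points east, y points north, headings are compass angles (0° = north, clockwise), and egocentric bearings are measured clockwise from straight ahead; the function name `observer_position` is hypothetical.

```python
import math


def observer_position(landmark_xy, egocentric_bearing_deg, distance, heading_deg):
    """Allocentric (x = east, y = north) position of an observer viewing a known landmark.

    egocentric_bearing_deg: landmark angle relative to straight ahead (clockwise positive)
    heading_deg: compass heading of the head (0 = north, 90 = east)
    """
    allocentric_bearing = math.radians(heading_deg + egocentric_bearing_deg)
    # Observer-to-landmark vector, rotated from head-centred into world coordinates
    dx = distance * math.sin(allocentric_bearing)
    dy = distance * math.cos(allocentric_bearing)
    return landmark_xy[0] - dx, landmark_xy[1] - dy


lighthouse = (0.0, 0.0)
print(observer_position(lighthouse, 0.0, 1.0, heading_deg=0.0))   # facing north: (0.0, -1.0), 1 mile south
print(observer_position(lighthouse, 0.0, 1.0, heading_deg=90.0))  # facing east: about (-1.0, 0.0), 1 mile west
```

The egocentric inputs are identical in both calls; only the HD term changes, and with it the inferred location.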

Coding based on egocentric sensory input angle

The evidence above indicates that path integration of self-motion cannot be the only mechanism for generating spatial responses (Figure 3(a)). Although the persistence of spatial representations for periods of time in darkness, or in relation to a start location, supports a role for path integration, other data support the influence of sensory feature angle (Figure 3(b) and (c)). This role is supported by the rotation of place cell (Muller and Kubie, 1987) and grid cell (Hafting et al., 2005) responses that follows rotation of visual cues. In addition, path integration mechanisms appear inconsistent with the non-linearity of speed tuning, the inaccuracy of speed coding over short time intervals (Dannenberg et al., 2019), and the apparent absence of movement direction coding (Raudies et al., 2015).

Thus, sensory input clearly plays a role in spatial coding. However, this raises the important question of how the raw sensory input to receptors in the eye can be transformed from egocentric visual feature angle to allocentric spatial coding. The following sections will review data relevant to this topic.

Allocentric boundary cells

One striking set of data demonstrated the influence of sensory cues by showing that place cell firing depends on the position of the walls of the environment (O’Keefe and Burgess, 1996). This led to the proposal of a then-undiscovered class of neurons, termed ‘boundary vector cells’, that code the position of an animal relative to boundaries (Burgess et al., 2000; Hartley et al., 2000, 2014; Savelli et al., 2008). This novel prediction was later supported by data showing boundary vector cells that respond at a specific distance and allocentric angle from boundaries (Barry et al., 2006; Lever et al., 2009; Savelli et al., 2008; Solstad et al., 2008). These boundary responses can occur at a substantial distance from the boundary (Lever et al., 2009), indicating an important role for visual cues, but they can also be evoked both by wall barriers and by the drop-off at the edge of a table (Lever et al., 2009), indicating a more general coding of boundaries beyond simple visual features. A related but distinct subpopulation of neurons in medial entorhinal cortex, termed object vector cells, was recently discovered; these cells respond when the animal occupies specific allocentric angles and distances from non-boundary landmarks (Hoydal et al., 2019), similar to some neurons in lateral entorhinal cortex (Deshmukh and Knierim, 2011) and hippocampus (Deshmukh and Knierim, 2013). Alterations of visual boundaries also influence the location of grid cell firing fields: the spacing of grid fields is compressed or expanded when the environment walls are moved (Barry et al., 2007; Munn et al., 2020; Stensola et al., 2012), and the coding of velocity also changes with wall movement (Munn et al., 2020).
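Boundary vector cell models describe each cell’s receptive field as a product of Gaussian tuning to boundary distance and to allocentric boundary direction, with the radial width growing with preferred distance. The sketch below evaluates such a field in a square arena by casting rays to the walls; the Gaussian-product form follows the general shape of these models, but the parameter values (`sigma0`, `beta`, `sigma_ang`) and helper names are illustrative assumptions.

```python
import numpy as np


def wall_distance(x, y, angle, size):
    """Distance from (x, y) to the first wall of a square arena [0, size]^2 along `angle` (rad)."""
    ux, uy = np.cos(angle), np.sin(angle)
    with np.errstate(divide="ignore", invalid="ignore"):
        tx = np.where(ux > 0, (size - x) / ux, np.where(ux < 0, -x / ux, np.inf))
        ty = np.where(uy > 0, (size - y) / uy, np.where(uy < 0, -y / uy, np.inf))
    return np.minimum(tx, ty)


def bvc_rate(x, y, pref_dist, pref_angle, size, sigma0=8.0, beta=100.0, sigma_ang=0.2, n_rays=360):
    """Toy boundary vector cell response at (x, y): Gaussian-product receptive field summed over rays.

    pref_angle is allocentric (rad, counterclockwise from +x); distances in cm.
    sigma0, beta and sigma_ang are illustrative values, not fitted parameters.
    """
    thetas = np.linspace(0.0, 2 * np.pi, n_rays, endpoint=False)
    r = wall_distance(x, y, thetas, size)
    sigma_rad = (pref_dist / beta + 1.0) * sigma0           # radial width grows with preferred distance
    d_theta = np.angle(np.exp(1j * (thetas - pref_angle)))  # angular offset wrapped to (-pi, pi]
    radial = np.exp(-((r - pref_dist) ** 2) / (2 * sigma_rad**2)) / np.sqrt(2 * np.pi * sigma_rad**2)
    angular = np.exp(-(d_theta**2) / (2 * sigma_ang**2)) / np.sqrt(2 * np.pi * sigma_ang**2)
    return float(np.sum(radial * angular) * (2 * np.pi / n_rays))


# A cell preferring a boundary 10 cm to the east fires near the east wall, not the west wall
near_east_wall = bvc_rate(90.0, 50.0, pref_dist=10.0, pref_angle=0.0, size=100.0)
near_west_wall = bvc_rate(10.0, 50.0, pref_dist=10.0, pref_angle=0.0, size=100.0)
```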

Computational modelling shows how egocentric visual input about boundaries could be transformed into a code for allocentric spatial location (Bicanski and Burgess, 2018; Byrne et al., 2007). The response of grid cells to barrier movement has also been modelled based on selective influences of the angle and optic flow of visual cues from different parts of the visual field (Raudies and Hasselmo, 2015; Sherrill et al., 2015). In addition to predicting allocentric boundary vector cells (Burgess et al., 2000; Hartley et al., 2000), models proposed that allocentric boundary responses could be generated by combining HD input with egocentric coding of environmental boundaries (Bicanski and Burgess, 2018; Byrne et al., 2007), which implied the existence of egocentric boundary cells.

Egocentric boundary cells

Recent data have demonstrated the existence of egocentric boundary cells in a number of structures (Figure 6), as originally predicted by the models described above. An early study demonstrated these neurons in the dorsomedial striatum (Hinman et al., 2019), in the striatal region that receives input from the RSC and medial entorhinal cortex. These neurons showed a clear spiking response as animals foraged near barriers at the edge of an open field environment (Hinman et al., 2019). The position of the barriers was plotted in egocentric coordinates for each spike and summed over all spikes, showing that neurons were tuned to specific egocentric positions of barriers (Figure 6(a)). Neurons with this form of receptive field are active whenever any environmental boundary occupies a specific range of angles and distances relative to the animal itself, and different neurons had peak responses at different angles relative to the front of the animal and at different distances from it (Figure 6(b)). In the dorsomedial striatum, egocentric boundary cells had bearing preferences that were primarily lateral to the animal’s nose (though some coded positions directly in front of or behind the animal) and preferred distances that were concentrated within specific ranges (Figure 6(c); approximately 6, 13, and 26 cm). This concentration of preferred distances has not been observed in other structures and is especially interesting given the known role of dorsal striatum in goal-directed action. Specifically, the clustering of preferred distances among striatal egocentric boundary cells could indicate the spatial resolution at which behaviourally relevant goals (e.g. reward sites or shelter) could be associated with the execution of specific action sequences. Dorsal striatal egocentric boundary vector responses are invariant to the appearance of environmental boundaries, indicating that the response property is not driven by high-level visual features (Hinman et al., 2019). Another early report described neurons with this response property in the lateral entorhinal cortex, where they responded at particular egocentric bearings to boundaries, objects, and goal locations (Wang et al., 2018). This and other evidence for egocentric coding of environments has been reviewed by Wang et al. (2020).

Figure 6. (a) Egocentric boundary cell (EBC) responses recorded with tetrodes in RSC: schematic for the construction of ratemaps depicting firing rate as a function of the egocentric position of boundaries relative to the animal (egocentric boundary ratemaps, EBR). Left and middle panels: an example spike is mapped with respect to egocentric boundary locations in polar coordinates. a1: the HD of the animal is determined for each spike (vector with arrow), and the distance to wall intersections is determined for all 360° (subsample shown for clarity). a2: boundaries within 62.5 cm are referenced to the current HD of the animal for a single spike. a3: example boundary positions for three spikes. a4: example EBR constructed after repeating steps (a1)–(a3) for all spikes and normalising by occupancy. Colour axis indicates zero (blue) to peak firing (yellow) for this neuron (MATLAB Parula colourmap). (b) Example EBC recorded in the dorsomedial striatum. Left plot: 2D ratemap depicting firing rate as a function of animal position in a 1.25 m² square environment (blue is no firing, red is peak firing rate, indicated above the plot; MATLAB Jet colourmap). Middle plot: trajectory plot depicting all positions of the animal within the environment (grey), with spike positions indicated by coloured circles; spike colour indicates the animal’s HD at the time of the spike. Right plot: EBR for this neuron, with an egocentric boundary vector receptive field on the left side of the animal. (c) The probability density function across all dorsomedial striatal EBCs is concentrated at approximately three preferred distances. Mean preferred distance values for individual animals over sessions are marked with coloured dashed lines. (d) Example EBC recorded in the RSC. Plots are the same as in (b) but use a colour axis equivalent to (a). (e) Left: polar histograms and probability density estimates of the preferred boundary bearing of all RSC EBCs; yellow and blue bars correspond to EBCs recorded in the left and right hemisphere, respectively. Right: probability distribution of the preferred distance from boundary of all RSC EBC receptive fields (adapted from Alexander et al., 2020; Hinman et al., 2019).
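The ratemap construction outlined in panel (a) of this caption can be sketched as follows: for every tracking sample, rays are cast at all egocentric angles relative to the current HD, the distance to the first wall along each ray is binned (up to 62.5 cm), and spike counts are divided by time spent. This is a minimal reconstruction assuming a square arena, positions in cm, HD in radians, and egocentric angle 0 = straight ahead with π/2 to the left; the bin sizes, synthetic cell, and helper names are assumptions, not the original analysis code.

```python
import numpy as np


def wall_distance(x, y, angle, size):
    """Distance from (x, y) to the first wall of a square arena [0, size]^2 along `angle` (rad)."""
    ux, uy = np.cos(angle), np.sin(angle)
    with np.errstate(divide="ignore", invalid="ignore"):
        tx = np.where(ux > 0, (size - x) / ux, np.where(ux < 0, -x / ux, np.inf))
        ty = np.where(uy > 0, (size - y) / uy, np.where(uy < 0, -y / uy, np.inf))
    return np.minimum(tx, ty)


def egocentric_boundary_ratemap(x, y, hd, spikes, size, dt, n_angles=36, max_dist=62.5, n_dist=10):
    """Firing rate (Hz) as a function of egocentric boundary bearing (rows) and distance (columns)."""
    ego = np.linspace(0.0, 2 * np.pi, n_angles, endpoint=False)
    edges = np.linspace(0.0, max_dist, n_dist + 1)
    occupancy = np.zeros((n_angles, n_dist))
    spike_map = np.zeros((n_angles, n_dist))
    rows = np.arange(n_angles)
    for xi, yi, hi, si in zip(x, y, hd, spikes):
        dist = wall_distance(xi, yi, hi + ego, size)        # rays referenced to the current HD
        cols = np.digitize(dist, edges) - 1
        keep = (cols >= 0) & (cols < n_dist)                # ignore walls beyond max_dist
        occupancy[rows[keep], cols[keep]] += dt
        spike_map[rows[keep], cols[keep]] += si
    rate = np.divide(spike_map, occupancy, out=np.zeros_like(spike_map), where=occupancy > 0)
    return ego, edges, rate


# Hypothetical EBC that fires whenever a wall lies within 12.5 cm directly to the animal's left
rng = np.random.default_rng(0)
n = 5000
x, y = rng.uniform(1.0, 99.0, n), rng.uniform(1.0, 99.0, n)
hd = rng.uniform(0.0, 2 * np.pi, n)
spikes = (wall_distance(x, y, hd + np.pi / 2, 100.0) < 12.5).astype(int)
ego, edges, rate = egocentric_boundary_ratemap(x, y, hd, spikes, size=100.0, dt=0.02)
```

Normalising by occupancy is what separates genuine egocentric tuning from the fact that some egocentric wall configurations are simply visited more often.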

Egocentric boundary cells have also been demonstrated in a number of cortical regions. Models of the transformation from egocentric to allocentric coding of boundaries (Byrne et al., 2007) initially proposed that the transformation would occur in the RSC. Consistent with this model, egocentric boundary cells have been demonstrated in the RSC (Alexander et al., 2020). In RSC, preferred egocentric bearing tends to be contralateral to the hemisphere in which a cell is recorded (Figure 6(e); e.g. an egocentric boundary cell recorded in the left RSC is likely to have a preferred bearing to the right of the animal), which suggests that some component of the signal may arise from thalamocortical projections. The neurons are tuned to barriers at a range of distances, including distances well beyond whisker reach, and at a range of angles, including positions behind the animal (Figure 6(e)). RSC egocentric boundary cells with preferred distances close to the animal are more likely to be disrupted when boundaries are changed to drop-offs, suggesting that subsets of neurons with this response property are driven by different sensory features (Alexander et al., 2020). Future studies should investigate the roles of different idiothetic and sensory signals in the construction and maintenance of egocentric boundary responses across brain regions.

Egocentric boundary cells have also been shown in several other cortical regions, including PPC, secondary motor cortex, and postrhinal cortex (Alexander et al., 2020; Gofman et al., 2019; LaChance et al., 2019). In the postrhinal cortex, egocentric boundary responses persist in darkness (LaChance et al., 2019), supporting the computational proposal that they can be generated by a path integration mechanism based on prior contact with the barrier. In the postrhinal cortex, egocentric bearing was also found to be anchored to the centre of the environment rather than to the boundaries (LaChance et al., 2019). The exact relationship between centre-anchored and boundary-anchored egocentric vector tuning remains ambiguous. The centre of an environment has a fixed, complementary relationship to all points along the boundaries, which complicates any statistical comparison of the strength of egocentric bearing tuning to boundaries versus the arena centre (e.g. for centre bearing, spiking is assigned to a single location, whereas for boundary bearing, spiking is assigned to multiple discrete locations along the walls). Accordingly, it is difficult to determine from the bearing component alone whether egocentric vector tuning is anchored to the centre of the environment or to the boundaries, especially in environments with internal symmetry. When environmental boundaries were expanded, the preferred distance component of the vector remained fixed relative to the animal, indicating that the vector was responding to the boundaries rather than to the centre of the arena (Alexander et al., 2020; Hinman et al., 2019). This conclusion relies on the assumption that the bearing and distance components anchor to the same environmental feature, which has not yet been investigated. That said, there may be no functionally distinct populations encoding bearing to the arena centre versus the boundaries; the apparent distinction may instead reflect slight methodological differences in detecting cells with these receptive fields. It is also important to note that detecting the centre of an environment may be difficult without knowing the relative relationships among boundaries.

Based on anatomical connectivity, models of the transformation from egocentric to allocentric coding of boundaries (Byrne et al., 2007) initially proposed that egocentric boundary cells would be observed in the PPC, with the transformation between coordinate systems occurring in the RSC. Indeed, egocentric boundary cells have now also been demonstrated in PPC (Alexander et al., 2020; Gofman et al., 2019). The relationship between parietal egocentric boundary cells and the subset of parietal neurons that are sensitive to the egocentric direction of a visual cue (Wilber et al., 2014) should be explored in greater detail. Future studies should also address the origin of the egocentric representation of boundaries, including whether it reflects higher-order sensory integration. Many egocentric boundary cells fire when the boundary is out of whisker range, but whisker input could contribute to some of the closer egocentric firing fields, as suggested by the contralateral bearing preference. In addition, memory of prior somatosensory interaction with the boundary could influence more distant firing. Future work should address how somatosensory and visual input are integrated to drive this egocentric coding.

The robust evidence for theta phase coding of location in the hippocampus and entorhinal cortex reviewed above raises the question of whether theta phase coding also contributes to egocentric and allocentric representations in other structures, such as RSC. The RSC displays robust theta rhythmicity that correlates with hippocampal theta rhythmicity (Alexander et al., 2018), and a subset of RSC egocentric boundary cells exhibit theta phase locking (Alexander et al., 2020). This is of interest for spatiotemporal coding, as recent modelling work used periodic modulation of top-down connection weights, akin to the theta oscillation, to enable an agent to compare perceived and memorised scenes (Bicanski and Burgess, 2018), similar to the proposal of theta phase separation of encoding and retrieval in the hippocampus (Hasselmo et al., 2002). Consistent with this, allocentric boundary cells in the subiculum fire on different theta phases during direct experience of boundaries than during trace responses to boundaries that are no longer present (Poulter et al., 2019). Egocentric sensory information must be temporally synchronised with movement through space and time to form contextually rich episodic memories. Future studies should analyse theta phase coding of other spatial dimensions in structures such as the RSC, as a potential mechanism for accurately integrating egocentric information into the coding of spatiotemporal trajectories.
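Theta phase locking of the kind reported for RSC egocentric boundary cells is commonly quantified with circular statistics. The sketch assumes spike phases (radians) have already been extracted, for example from a theta-filtered LFP, and returns the mean phase, the mean resultant length, and a commonly used large-sample approximation to the Rayleigh test p-value; it is not the specific pipeline of Alexander et al. (2020), and the function name is hypothetical.

```python
import numpy as np


def phase_locking(spike_phases):
    """Mean phase (rad), mean resultant length, and approximate Rayleigh-test p-value."""
    phases = np.asarray(spike_phases, dtype=float)
    n = phases.size
    resultant = np.sum(np.exp(1j * phases))
    r = np.abs(resultant) / n                  # 0 = no locking, 1 = all spikes at one phase
    big_r = n * r
    # Commonly used large-sample approximation to the Rayleigh p-value
    p = np.exp(np.sqrt(1.0 + 4.0 * n + 4.0 * (n**2 - big_r**2)) - (1.0 + 2.0 * n))
    return float(np.angle(resultant)), float(r), float(min(p, 1.0))


# Hypothetical spike phases: strongly locked near pi/2 versus evenly spread around the cycle
locked = np.random.default_rng(2).vonmises(np.pi / 2, 4.0, size=200)
print(phase_locking(locked))
print(phase_locking(np.linspace(0.0, 2 * np.pi, 100, endpoint=False)))
```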

Other evidence for transformations

Association cortices possess reciprocal connectivity with sensory, motor, and spatial cortices. Computations within these areas are implicated in sensorimotor coordination and in the reference frame transformations critical for navigation-relevant processes (Figure 7(a); Byrne et al., 2007). Transformation between egocentric and allocentric coordinate systems may be facilitated by networks of neurons with conjunctive spatial representations that likely arise from these connectivity patterns. Conjunctive sensitivity often manifests as gain modulation, wherein the magnitude of a neuron’s firing rate in response to one spatial variable (e.g. the egocentric position of a wall or cue) is increased or decreased (i.e. gain-modulated) as a function of a second spatial variable (e.g. the animal’s gaze direction; Andersen et al., 1985).
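Gain modulation can be made concrete with a toy neuron whose egocentric tuning curve is multiplied by a factor that depends on a second variable, here allocentric heading. In this sketch, with hypothetical von Mises tuning and parameter values, the preferred egocentric angle stays fixed while the response amplitude halves between the preferred and opposite headings.

```python
import numpy as np


def gain_modulated_rate(ego_angle, heading, ego_pref=0.0, heading_pref=0.0,
                        r_max=20.0, kappa=2.0, gain_depth=0.5):
    """Hypothetical gain-field neuron: egocentric tuning scaled by a heading-dependent gain.

    The gain runs from (1 - gain_depth) at the anti-preferred heading to 1 at the preferred heading.
    """
    tuning = np.exp(kappa * (np.cos(ego_angle - ego_pref) - 1.0))  # von Mises shape, peak value 1
    gain = 1.0 - gain_depth + gain_depth * (1.0 + np.cos(heading - heading_pref)) / 2.0
    return r_max * tuning * gain


ego = np.linspace(-np.pi, np.pi, 361)
at_pref = gain_modulated_rate(ego, heading=0.0)
at_anti = gain_modulated_rate(ego, heading=np.pi)
print(ego[np.argmax(at_pref)] == ego[np.argmax(at_anti)], at_pref.max(), at_anti.max())  # → True 20.0 10.0
```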

Figure 7. Conjunctive spatial representations in a possible circuit for egocentric–allocentric coordinate system transformations. (a) Schematic of a model circuit for interrelating egocentric (sensory) and allocentric (spatial) information for spatial memory (adapted from Bicanski and Burgess, 2018). Top: sensory spatial information in PPC, allocentric spatial information arising in medial temporal regions, and HD signals (HDCs) from thalamic, rhinal, and subicular regions converge on RSC to produce conjunctive egocentric–allocentric representations that can facilitate coordinate system transformations. (b) Activation of RSC neurons while animals perform a virtual navigation task with repeating, identical visual landmarks (from Mao et al., 2020). Left panel: visual scene with visual landmarks. Right panel: sorted activity of neurons with landmark-related responses. Repeating activation in each row indicates responses to each visual landmark, and the differing intensity of activation supports conjunctive modulation of visual (egocentric) responses as a function of distance through the virtual track (allocentric/route-centric). (c) Mean linearised firing rate of an RSC neuron exhibiting right-turn sensitivity on traversals of a ‘W’-shaped track (from Alexander et al., 2015). This neuron consistently shows greater activation for the first right turn than for the second, a form of gain modulation that concurrently represents egocentric action state and position within the route, akin to the position modulation of visual responses in (b). Bottom: schematic of the track with turn sites labelled. (d) An RSC egocentric boundary cell with conjunctive HD modulation (from Alexander et al., 2020). Left plot: trajectory plot with spikes colour coded by HD. Middle plot: egocentric boundary ratemap showing a preference for boundaries positioned to the left of and slightly behind the animal. Right: polar plot of firing rate as a function of allocentric HD, showing greater activation of this EBC when the animal is heading southwest (corresponding to green spikes in the trajectory plot). (e) A postrhinal cortex egocentric boundary cell with conjunctive HD modulation. Plots are similar to (d), but the trajectory plot is not colour coded by current HD (from Gofman et al., 2019).

Conjunctive egocentric–allocentric receptive fields are observed in multiple regions within an interconnected network important for spatial mapping, navigation, and memory. A population of PPC neurons simultaneously encodes the egocentric angle to behaviourally relevant visual landmarks and the animal’s allocentric heading (Wilber et al., 2014). Retrosplenial neurons also exhibit activation related to visual landmarks during virtual navigation (Fischer et al., 2020; Mao et al., 2020; Powell et al., 2020). The responses of some retrosplenial neurons differentiated repeated exposures to a visual landmark at different locations along a virtual corridor (Figure 7(b); Mao et al., 2020). Thus, retrosplenial neurons provide a conjunctive code for the presence of a visual cue and its relative location within a route. This conjunctive code parallels the rate codes observed for repeated local features during route running (Figure 7(c); Nitz, 2012; Alexander and Nitz, 2015, 2017). Similar route modulation of both egocentric signals and higher-order cognitive variables, such as choice availability, has recently been reported in secondary motor cortex (Olson et al., 2020).

Finally, subsets of egocentric boundary cells in lateral entorhinal cortex, postrhinal cortex, and RSC exhibit co-modulation by allocentric HD (Alexander et al., 2020; Gofman et al., 2019; Jacob et al., 2017; LaChance et al., 2019), supporting the mechanisms proposed in network models for translation between coordinate systems (Figure 7(d) and (e)) (Bicanski and Burgess, 2018; Byrne et al., 2007). Linear combinations of the responses of neurons with conjunctive reference frame response fields have been used to approximate non-linear reference frame transformations efficiently (Bicanski and Burgess, 2018; Pouget and Sejnowski, 1997; Pouget and Snyder, 2000). An important next step would be to test the causal relationship between conjunctive egocentric–allocentric representations and coordinate system transformations, potentially by artificially creating or ‘clamping’ gain modulation with behaviourally triggered closed-loop optogenetics and examining allocentric spatial representations in downstream structures.
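The claim that a linear combination of conjunctive units can implement a non-linear coordinate transformation can be illustrated with a toy case: computing a boundary's allocentric bearing as head direction plus egocentric bearing. This is a minimal sketch, not the Bicanski and Burgess (2018) or Pouget models. The von Mises tuning, its width (`KAPPA`), the 16 preferred angles per dimension, and the convention that angles increase counterclockwise are all assumptions. Each hypothetical unit fires as the product of an egocentric-bearing tuning curve and an HD tuning curve (a multiplicative gain field), and a fixed linear readout assigns each unit a vote for the sum of its two preferred angles.

```python
import cmath
import math

N_PREF = 16   # assumed number of preferred angles per dimension
KAPPA = 2.0   # assumed von Mises concentration (tuning width)
PREFS = [2 * math.pi * k / N_PREF for k in range(N_PREF)]


def von_mises(x, mu, kappa=KAPPA):
    """Unnormalised von Mises tuning curve with peak value 1 at x == mu."""
    return math.exp(kappa * (math.cos(x - mu) - 1.0))


def conjunctive_rates(ego_bearing, head_direction):
    """Rates of units jointly tuned to egocentric bearing and HD (radians).

    Each unit's egocentric response is multiplicatively gain-modulated by HD.
    """
    return {
        (e, h): von_mises(ego_bearing, e) * von_mises(head_direction, h)
        for e in PREFS
        for h in PREFS
    }


def decode_allocentric(rates):
    """Fixed linear readout: each unit votes for the angle e + h; returns degrees."""
    total = sum(r * cmath.exp(1j * (e + h)) for (e, h), r in rates.items())
    return math.degrees(cmath.phase(total)) % 360.0


ego = math.radians(90.0)   # boundary at +90 deg egocentric bearing
hd = math.radians(200.0)   # allocentric heading of 200 deg
print(round(decode_allocentric(conjunctive_rates(ego, hd)), 3))
```

Because each unit's rate factorises into an egocentric term and an HD term, the weighted sum factorises into two population vectors whose phases add, which is why one fixed set of linear weights suffices in this toy; narrower tuning or fewer units would introduce small aliasing errors.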

Conclusion

This review provides an overview of the representations in the hippocampus and associated structures that could be useful for the encoding and retrieval of episodic memory to guide future behaviour. These data address the problem of how episodic memory flexibly disambiguates complex overlapping spatiotemporal trajectories (Hasselmo, 2009, 2012). Neurophysiological data suggest a solution: episodic memories can be disambiguated on the basis of multiple features, including the coding of both space and time by mixed selectivity across neurons in the hippocampus and entorhinal cortex (Kraus et al., 2013, 2015), the coding of the context of prior or future trajectory segments (Frank et al., 2000; Griffin et al., 2007; Kinsky et al., 2020; Levy et al., 2020; O’Neill et al., 2017; Wood et al., 2000), the coding of speed (Dannenberg et al., 2019; Hinman et al., 2016; Kropff et al., 2015), the coding of HD (Taube et al., 1990), and the coding of egocentric spatial views (Alexander et al., 2020; Gofman et al., 2019; Hinman et al., 2019; LaChance et al., 2019; Wang et al., 2018). These features could explain the capacity of episodic memory to encode and retrieve not only the location and time of events (Tulving, 1984) but also self-motion properties, such as speed and directional viewpoint (Conway, 2009; Hasselmo, 2012). Discrimination of spatial location appears to depend on both path integration and the transformation of sensory input cues from egocentric coordinates (Alexander et al., 2020; Gofman et al., 2019; Hinman et al., 2019; LaChance et al., 2019; Wang et al., 2018) into allocentric spatial responses to barriers and to spatial location (Lever et al., 2009; O’Keefe and Burgess, 1996; Solstad et al., 2008). 
Thus, egocentric viewpoints could be transformed into allocentric representations for the coding of the spatiotemporal trajectories, and in addition, the spatiotemporal trajectories could trigger retrieval of specific egocentric viewpoints at particular positions along a trajectory (Bicanski and Burgess, 2018; Byrne et al., 2007; Wang et al., 2020).
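The idea that time cells can distinguish moments while the animal stays in one place, for example while running on a treadmill, can be sketched as population decoding of elapsed time. In this minimal sketch, the delay length, the number of cells, Gaussian time fields whose widths grow with peak time, the baseline and peak rates, and independent Poisson spiking are all hypothetical choices, and decoding is by maximum likelihood over a grid of candidate times. Because position is held fixed, any information about when the animal is in the delay has to come from the temporal tuning.

```python
import math
import random

# Hypothetical parameters, chosen only for illustration.
DELAY_S = 10.0    # treadmill delay length
N_CELLS = 20      # number of simulated time cells
BASE_HZ = 0.5     # background firing rate
PEAK_HZ = 15.0    # additional rate at the centre of a time field
WINDOW_S = 0.5    # spike-count window used for decoding

# Time fields tile the delay; later fields are assumed to be broader.
PEAKS = [DELAY_S * (i + 0.5) / N_CELLS for i in range(N_CELLS)]
WIDTHS = [0.3 + 0.1 * p for p in PEAKS]


def rates_at(t):
    """Expected firing rate (Hz) of each cell at elapsed time t (s); position is fixed."""
    return [BASE_HZ + PEAK_HZ * math.exp(-0.5 * ((t - p) / w) ** 2)
            for p, w in zip(PEAKS, WIDTHS)]


def sample_counts(t, rng):
    """Draw independent Poisson spike counts for one window (Knuth's method)."""
    counts = []
    for lam in rates_at(t):
        limit, k, prod = math.exp(-lam * WINDOW_S), 0, rng.random()
        while prod > limit:
            k += 1
            prod *= rng.random()
        counts.append(k)
    return counts


def decode_time(counts, step_s=0.05):
    """Maximum-likelihood elapsed time, assuming independent Poisson cells."""
    best_t, best_ll = 0.0, -math.inf
    for s in range(int(round(DELAY_S / step_s)) + 1):
        t = s * step_s
        ll = sum(n * math.log(lam * WINDOW_S) - lam * WINDOW_S
                 for n, lam in zip(counts, rates_at(t)))
        if ll > best_ll:
            best_t, best_ll = t, ll
    return best_t


rng = random.Random(0)
for true_t in (1.0, 4.0, 8.0):
    est = decode_time(sample_counts(true_t, rng))
    print(f"true {true_t:.1f} s -> decoded {est:.2f} s")
```

Adding a location-tuned factor to each cell's rate would turn this into a mixed-selectivity population, but the time-only version is enough to show how elapsed time can be read out while location is constant.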

The existing data and models of this transformation raise important questions concerning the nature of the ongoing interaction of egocentric and allocentric representations in lateral versus medial entorhinal cortex, and concerning how egocentric representations of multiple objects or of moving objects are coded (Wang et al., 2020). Additional experiments could address the nature of the sensory input driving egocentric boundary cells, including the multisensory integration of somatosensory input from whisker contact with barriers and the representation of boundaries by visual features and optic flow (Bicanski and Burgess, 2018; Byrne et al., 2007; Raudies and Hasselmo, 2012, 2015; Raudies et al., 2016). The evidence for phase coding of location in hippocampus and entorhinal cortex suggests that it would be worth exploring phase coding of location, or of egocentric distance and angle, in RSC (Alexander et al., 2018, 2020). These data move beyond the question of the behavioural function of these regions to address the question of how neural circuits can represent complex trajectories and the transformation between different coordinate systems.
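Exploring phase coding in RSC would require quantifying how the theta phase of spikes covaries with position, or with egocentric distance or angle. One common approach, sketched minimally here, is a circular-linear fit: search over candidate slopes and keep the one that maximises the mean resultant length of the residual phases. The slope grid, the normalisation of the coded variable to a 0 to 1 range, and the synthetic precessing spikes in the usage lines are assumptions for illustration. A real analysis would also need each spike's theta phase, typically from a filtered local field potential, and a significance test.

```python
import cmath
import math
import random


def circ_lin_fit(positions, phases, slopes=None):
    """Circular-linear fit of spike phase (rad) against a normalised variable (0-1).

    Returns (slope in cycles per unit, phase offset in rad,
    mean resultant length R of the residual phases). The slope is chosen
    by grid search to maximise R; the offset is the mean residual phase.
    """
    if slopes is None:
        slopes = [k / 100 for k in range(-100, 101)]  # -1 to +1 cycles
    n = len(phases)
    best = None
    for a in slopes:
        z = sum(cmath.exp(1j * (ph - 2 * math.pi * a * x))
                for x, ph in zip(positions, phases)) / n
        if best is None or abs(z) > best[2]:
            best = (a, cmath.phase(z) % (2 * math.pi), abs(z))
    return best


# Synthetic "precessing" spikes: phase falls by half a cycle across the field.
rng = random.Random(1)
xs = [rng.random() for _ in range(200)]
phases = [(math.pi - math.pi * x + rng.gauss(0.0, 0.3)) % (2 * math.pi) for x in xs]
slope, offset, r = circ_lin_fit(xs, phases)
print(f"slope {slope:+.2f} cycles/field, offset {math.degrees(offset):.0f} deg, R {r:.2f}")
```

The same function could take normalised egocentric distance or angle to a boundary in place of position, which is the kind of RSC phase-coding analysis suggested above.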

A number of other important experimental questions remain to be answered. For example, the techniques used to analyse the development of context-dependent responses (Kinsky et al., 2020; Levy et al., 2020) could be used to address the development of time cell responses during treadmill running in hippocampus (Kraus et al., 2013; Mau et al., 2018) and entorhinal cortex (Kraus et al., 2015). In addition, the mechanism for the reset of neural activity at the start of treadmill running could be analysed, as this may involve activation of modulatory systems, such as the cholinergic system (Hasselmo, 2006; Hasselmo and Stern, 2006). Furthermore, the role of replay activity during the delay period or intertrial interval (O’Neill et al., 2017; Olafsdottir et al., 2015, 2016) could be evaluated for its influence on the appearance and stability of context-dependent responses to turn or phase in spatial alternation and delayed match to sample (Kinsky et al., 2020; Levy et al., 2020). For example, the inclusion of a particular neuron in replay of a specific sequence (e.g. left versus right) could be associated with the appearance of, or a change in the stability of, that neuron’s representation.
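Testing whether replay participation predicts the appearance or stability of context-dependent responses requires per-neuron measures of both properties. The sketch below assumes two simple kinds of measure: a splitter index contrasting mean stem firing on left-turn versus right-turn trials, and across-session stability as the Pearson correlation between a neuron's rate maps on two days. These formulas are one reasonable choice among several, and the neuron records, including the replay flags and rate values, are made-up placeholders rather than data.

```python
import math
from statistics import mean


def pearson(a, b):
    """Pearson correlation between two equal-length rate maps."""
    ma, mb = mean(a), mean(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    sa = math.sqrt(sum((x - ma) ** 2 for x in a))
    sb = math.sqrt(sum((y - mb) ** 2 for y in b))
    return cov / (sa * sb) if sa > 0 and sb > 0 else float("nan")


def splitter_index(stem_left_hz, stem_right_hz):
    """(L - R) / (L + R): +1 fires on the stem only before left turns, -1 only before right."""
    left, right = mean(stem_left_hz), mean(stem_right_hz)
    return (left - right) / (left + right) if left + right > 0 else 0.0


# Hypothetical placeholder records, not data: per-trial stem rates (Hz),
# binned rate maps from two sessions, and whether the neuron joined replay.
NEURONS = {
    "cell_a": {"in_replay": True,
               "stem_left": [8.0, 9.5, 7.5], "stem_right": [1.0, 0.5, 1.5],
               "day1": [0, 2, 8, 3, 0], "day2": [0, 3, 7, 2, 0]},
    "cell_b": {"in_replay": False,
               "stem_left": [3.0, 2.5, 3.5], "stem_right": [2.5, 3.0, 3.0],
               "day1": [0, 2, 8, 3, 0], "day2": [5, 1, 0, 2, 6]},
}


def summarise(neurons):
    """Mean day1-day2 stability for neurons inside versus outside replay."""
    groups = {True: [], False: []}
    for rec in neurons.values():
        groups[rec["in_replay"]].append(pearson(rec["day1"], rec["day2"]))
    return {k: mean(v) for k, v in groups.items() if v}


for name, rec in NEURONS.items():
    si = splitter_index(rec["stem_left"], rec["stem_right"])
    r = pearson(rec["day1"], rec["day2"])
    print(f"{name}: splitter index {si:+.2f}, day1-day2 r = {r:+.2f}")
print(summarise(NEURONS))
```

With real recordings, the group comparison would need many neurons and an appropriate statistical test; the two placeholder cells only show how the measures fit together.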

The flexible generation of spatiotemporal trajectories can also be used as part of mechanisms for planning and disambiguating potential trajectories to goal locations in the environment (Chrastil et al., 2015, 2016; Erdem and Hasselmo, 2012, 2014; Kubie and Fenton, 2012), and even for generating rule-guided trajectories to evaluate candidate solutions to rule-based reasoning problems, such as the Raven’s progressive matrices task (Raudies and Hasselmo, 2017). Thus, the combination of multiple codes for time, space, context, speed, direction, and egocentric and allocentric representation of boundaries could play an important role in planning and generating behaviour based on rapid encoding and disambiguation of episodic representations of configurations of the real world or of a problem space.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Institutes of Health (Grant Nos R01 MH120073, R01 MH60013, R01 MH052090, F32 NS101836 (to A.S.A.), F32 AG067640 (to W.M.), K99NS116129 (to H.D.)) and the Office of Naval Research (Grant Nos MURI N00014-16-1-2832, MURI N00014-19-1-2571, and DURIP N00014-17-1-2304).