Abstract

While automation replaces human labour in structured and repetitive tasks, augmentation enhances human capabilities through artificial intelligence (AI) collaboration. This article develops a typology of human–AI value cocreation, distinguishing between automation and augmentation capabilities across varying levels of task complexity. The proposed framework is structured along two dimensions: mode of technology engagement (automation vs augmentation) and complexity of task-in-context. The four resulting models of value creation are analyzed in terms of their temporal, spatial and hierarchical complexity, which shapes human–AI interaction in performing a given task. Temporal complexity relates to sequencing, timing and feedback loops; spatial complexity refers to physical environments and the integration of dispersed resources; and hierarchical complexity captures interdependence and interaction across micro- and macro-level structures. By introducing this structured analytical lens, the article contributes to a more holistic understanding of AI-enabled value creation. The findings may also inform a research agenda for future inquiry.

Introduction

With 72% of companies adopting artificial intelligence (AI) into their business functions (McKinsey & Company, 2024), a third of current full-time occupations is expected to be reimagined as augmented services involving human–AI collaboration within the next decade (van Doorn et al., 2023), making workplaces more agile as generative AI displaces tedious and repetitive tasks (Bate, 2025). Human interaction with technology has long been central to firms’ efforts to cocreate value (Cenamor et al., 2017), but AI is likely to profoundly disrupt economies and reshape value cocreation processes, with major implications for labour markets (Lu et al., 2020; McKinsey, 2023).

AI is defined as algorithmically driven applications capable of performing cognitive tasks previously requiring human intelligence (Anthony et al., 2023), with the ability to learn, adapt and respond to challenges (Nguyen & Elbanna, 2025; Nilsson, 1971). A crucial distinction in understanding AI’s transformative role is between automation, where AI executes tasks autonomously and replaces human labour, and augmentation, where AI supports and enhances human capabilities and capacities (Nguyen & Elbanna, 2025; Raisch & Krakowski, 2021). These represent different modes of engagement with AI, the former replacing certain tasks in the ‘job to be done’ (Anthony et al., 2023), and the latter fostering collaboration for improved outcomes (Siemon, 2022).

While automation is efficient for repetitive, rule-based tasks (Lu et al., 2020; van Doorn et al., 2023), many low-skilled service roles remain resistant to automation due to their reliance on contextual understanding and spontaneous interactions (Autor & Dorn, 2013; Huang & Rust, 2018). These tasks often involve improvisation, creativity and judgement—features of contextual complexity better addressed by humans (van Doorn et al., 2023). Augmentation enables a divergence from the traditional machines-as-tools perspective by highlighting interdependencies and complexity within workflows (Le et al., 2023, 2024). Effective deployment requires systems that adapt in real time and exhibit situational awareness, rather than relying solely on predefined automation logic (Fountaine et al., 2019; Makarius et al., 2020).

This accentuates the importance of contextual complexity in AI configuration (Edvardsson et al., 2018; Ng et al., 2012). We conceptualize this inherent property as the complexity of task-in-context, determined by the required variety and interconnectivity of heterogeneous resources within a given situation (Heylighen, 1999). This complexity manifests across temporal, spatial and hierarchical dimensions, shaping task execution (Holland, 2006). Following Ashby’s (1958) law of requisite variety, the complexity of a given context must be matched by the complexity of any system that seeks to control it or function within it. This implies tailoring human–AI configurations to the complexity and dynamics of their task environment.

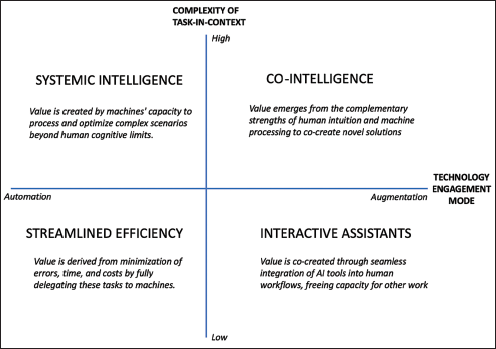

Based on these foundations, we propose a typology of human–AI value cocreation structured along two dimensions: (a) mode of engagement (automation vs augmentation) and (b) complexity of task-in-context (low to high). Each quadrant represents a distinct configuration of human–AI interaction, shaped by the emergent properties of the task and context. The typology offers a holistic but nuanced perspective on value cocreation and helps identify key research questions for future inquiry.

Conceptual Foundations

Amid scarce organizational human resources and increasing pressure for efficiency, the replacement of human labour with AI is a growing trend across domains, including service research (McLeay et al., 2020; Wirtz et al., 2018; Xiao & Kumar, 2021). Recent frameworks have sought to classify human–AI collaboration along different dimensions. Raisch and Krakowski (2021) proposed the automation–augmentation paradox, emphasizing how organizations must balance conflicting goals such as control versus creativity. Wilson and Daugherty (2018) introduced collaborative intelligence, emphasizing human capabilities that complement AI. Others focus on the locus of control in human-in-the-loop (HITL) systems (Holzinger, 2016) or define emerging team roles for AI agents (Siemon, 2022). Our typology builds on this literature by extending it along a second axis, task-in-context complexity, offering a situated and systemic view of human–AI value cocreation.

In line with service-dominant logic, value is cocreated through the interaction of human and non-human actors within a given context (Peters, 2016; Vargo & Lusch, 2016). While augmentation typically involves direct, synchronous human–AI collaboration, automation can also be interpreted as cocreation, though more asymmetric and indirect. Automated systems do not operate in isolation; they are designed, configured and governed by human actors. In this view, value is cocreated not only at the point of execution, but also upstream, through the human-led shaping of capabilities, and downstream, through use, interpretation and feedback. This expanded interpretation allows the framework to account for both collaborative and delegated forms of human–AI cocreation of value.

While this article references technologies such as robotics and augmented reality (AR), we treat these as AI-enabled systems that incorporate autonomous capabilities. Our analytical focus is on the cognitive functions performed by these systems, consistent with AI’s role in performing tasks that involve learning, adaptation and decision-making (Anthony et al., 2023; Nguyen & Elbanna, 2025).

Modes of Engagement

AI increasingly enables machines to perform cognitive functions once reserved for humans (Anthony et al., 2023)—including learning, interaction and problem-solving (Makarius et al., 2020). Historically, the primary goal was to automate routine tasks, replacing human labour in standardized processes to optimize efficiency (Davenport & Kirby, 2016a, 2016b; Fügener et al., 2022; Nilsson, 1971; Wilson & Daugherty, 2018).

Advances in computational power and generative AI allow the automation of more complex tasks, including managerial decision-making and strategic analysis (Brynjolfsson & McAfee, 2017). These developments also reinforce augmentation as a complementary approach in which AI enhances rather than replaces human capabilities. Far from diminishing human agency, augmentation fosters dynamic partnerships in which humans and machines pursue shared goals through interactive collaboration (Brynjolfsson & McAfee, 2017). Concepts such as hybrid intelligence, human–AI symbiosis and HITL all support the view that combining human and machine strengths yields superior outcomes (Siemon, 2022).

Accordingly, AI-enabled value creation should be understood as a continuum, not a binary, where the balance between automation and augmentation is shaped by task complexity, technological advances and the socio-organizational context (Isaza & Cepa, 2024; Raisch & Krakowski, 2021). Viewing these modes as fixed or mutually exclusive oversimplifies the adaptive nature of AI integration (Raisch & Krakowski, 2021). The optimal balance is dynamic, evolving with shifting task demands, technological progress and structural change (Isaza & Cepa, 2024). Tasks vary in complexity and require differing degrees of structure and flexibility; as such, organizations must regularly reassess their AI integration strategies to remain aligned with strategic objectives. To move beyond binary distinctions, scholars have adopted a paradox perspective (Smith & Lewis, 2011) that foregrounds the innate contradictions and interdependencies of human–AI collaboration and emphasizes complementarity over substitution (Raisch & Krakowski, 2021).

Complexity of Task-in-context

Context is viewed as a ‘set of unique actors with unique reciprocal links among them’ (Chandler & Vargo, 2011, p. 40), emphasizing that tasks unfold within socio-organizational systems. A solved task is an outcome valued by another actor (Vargo & Lusch, 2016), realized through resource integration within a given context (Chandler & Vargo, 2011). Task-in-context complexity refers to the extent to which a task requires diverse resource integration and reciprocal interaction. As complexity increases, so does the need for adaptive coordination mechanisms to align these interactions with evolving demands.

According to Ashby’s (1958) law of requisite variety, an (eco)system must align its structural and functional complexity with that of its environment; that is, its capacity for adaptation and regulation must reflect the complexity of the task-in-context it seeks to address. Similarly, the diversity and interrelatedness of human and AI resources should correspond to the task’s inherent complexity. Effective value creation depends on this alignment between systemic capabilities and contextual contingencies to ensure adaptability.
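One common formal statement of the law of requisite variety may help to make this alignment concrete. The expression below is an illustrative reading rather than part of the framework itself; the symbols and their mapping onto the terms used in this article are assumptions introduced here. Let $V_D$ denote the variety (number of distinguishable states) of the disturbances arising from the task-in-context, $V_R$ the variety of responses available to the regulating human–AI configuration, and $V_O$ the variety of resulting outcomes:

$$
V_O \;\geq\; \frac{V_D}{V_R}
\qquad\Longleftrightarrow\qquad
\log V_O \;\geq\; \log V_D - \log V_R
$$

Read this way, outcomes can be held within a narrow acceptable range (small $V_O$) only if the repertoire of the human–AI configuration ($V_R$) grows in step with the variety of the task-in-context ($V_D$).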

As a nested hierarchy of interrelated systems, the service ecosystem’s macrosystem shapes and constrains the internal structure of its microsystems (Braathen, 2025). Complexity manifests along three dimensions: temporal, spatial and hierarchical (Heylighen, 1999). Temporal complexity refers to sequencing, timing, feedback loops and process synchronization. Misalignments such as delays, bottlenecks or low adaptability can amplify complexity, with cascading effects across systems. Spatial complexity arises from variation in physical or digital environments, including geographic dispersion and relatedness of disparate resources. In digital contexts, complexity may arise from the merging of data sources or the need for distributed collaboration. Finally, hierarchical complexity pertains to the interdependence of activities at different levels of the nested structure, and the interplay between them (Holland, 2006). It encompasses how local processes are embedded in broader systems, each with distinct coordination demands (Heylighen, 1999).

In summary, human–AI value cocreation can be conceptualized through two interrelated dimensions: (a) the mode of engagement, ranging from automation to augmentation, and (b) the complexity of task-in-context, ranging from low to high. Together, these axes define a four-quadrant typology in which each quadrant represents a distinct value cocreation model, highlighting how tasks are distributed between humans and machines.

Types of Value Cocreation

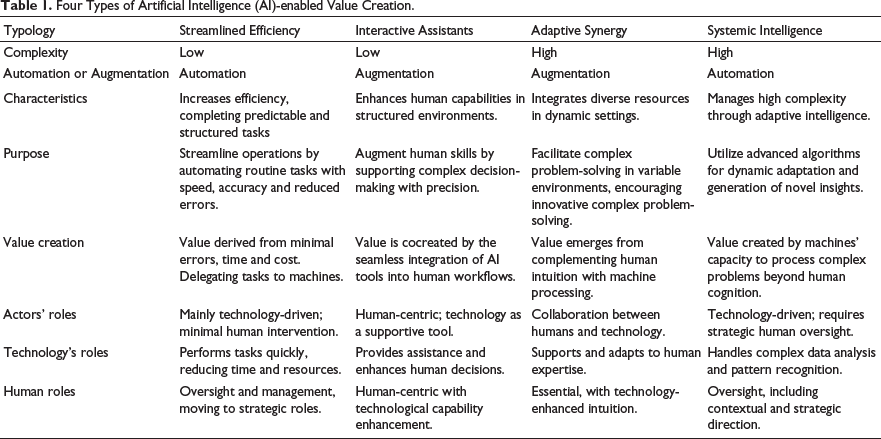

Theoretical typologies offer a structured means of integrating and clarifying theoretical assumptions about empirical phenomena (Helkkula et al., 2018). The framework proposed here identifies four models of human–AI value cocreation: streamlined efficiency, interactive assistance, adaptive synergy and systemic intelligence (see Figure 1 and Table 1). These represent distinct configurations of human–AI interaction, shaped by varying levels of task complexity.

Each type is analyzed from three perspectives: temporal (sequencing, timing and synchronization), spatial (dispersion and integration of resources) and hierarchical (scaling across micro- and macro-level structures in nested systems). In practice, factors such as task repetitiveness, subjectivity in inputs and outputs and effort–value trade-offs complement the framework. While not formal criteria, these considerations function as decision boundaries in real-world scenarios, shaping the appropriate design and performance of human–AI cocreation. Unlike typologies focused solely on control or role allocation, our framework integrates environmental complexity to better capture the systemic contingencies of value cocreation.

Figure 1. Typology of Artificial Intelligence (AI)-enabled Value Creation Models.
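The two-dimensional structure of the typology can be sketched as a simple lookup. This is a minimal illustration only: the names `Mode`, `Complexity`, `TYPOLOGY` and `cocreation_type` are hypothetical, and treating both dimensions as binary is a simplifying assumption, since the article describes the balance between automation and augmentation as a continuum.

```python
from enum import Enum


class Mode(Enum):
    """Mode of engagement (first dimension of the typology)."""
    AUTOMATION = "automation"
    AUGMENTATION = "augmentation"


class Complexity(Enum):
    """Complexity of task-in-context (second dimension), simplified to two levels."""
    LOW = "low"
    HIGH = "high"


# Quadrant labels follow the four types named in this section.
TYPOLOGY = {
    (Mode.AUTOMATION, Complexity.LOW): "streamlined efficiency",
    (Mode.AUGMENTATION, Complexity.LOW): "interactive assistance",
    (Mode.AUTOMATION, Complexity.HIGH): "systemic intelligence",
    (Mode.AUGMENTATION, Complexity.HIGH): "adaptive synergy",
}


def cocreation_type(mode: Mode, complexity: Complexity) -> str:
    """Return the value cocreation type for a given quadrant."""
    return TYPOLOGY[(mode, complexity)]


if __name__ == "__main__":
    # Print all four quadrants of the typology.
    for (mode, complexity), label in TYPOLOGY.items():
        print(f"{mode.value:12s} x {complexity.value:4s} -> {label}")
```

A dictionary keyed on the pair of dimensions keeps the mapping explicit and makes it easy to extend if intermediate levels of complexity were to be added.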

Streamlined Efficiency

Streamlined efficiency refers to contexts with low task complexity and high automation potential. Tasks in this quadrant are typically routine, structured and repetitive, making them ideal for automation and easy to standardize (Isaza & Cepa, 2024). Operations can often be delegated to AI-driven systems with minimal human input, promoting rationalization, accuracy and efficiency (Davenport & Kirby, 2016a, 2016b). Because these processes are linear, follow predefined steps, and can be codified into explicit rules and algorithms, AI often outperforms humans in speed, cost and precision (Lu et al., 2020).

A salient example is the automation of routine accounting functions. Tasks such as invoice generation, expense tracking and tax calculation can now be handled by AI-integrated software, reducing human involvement in error-prone operations. As a result, accountants are transitioning toward advisory roles, such as providing strategic financial guidance and ensuring compliance with complex regulatory frameworks. Accounting is especially suited to automation, given the low subjectivity of its inputs and outputs, which are largely numerical and rule-based. Moreover, the cost of deploying AI in these settings is typically offset by efficiency gains, making the value trade-off favourable.

In temporal terms, automation minimizes variability by executing tasks within fixed timeframes, enhancing predictability and efficiency. Digitization reduces spatial complexity by enabling seamless interaction unconstrained by geography or physical media. The hierarchical dimension pertains to the organization of data and processes within layered structures that guide decision-making and execution. By defining relationships and enforcing consistency through predefined rules, hierarchical frameworks ensure that tasks and interactions remain predictable and linear.

Streamlined efficiency also supports scalability and knowledge replication. Codified procedures can be reproduced across contexts without proportionate resource increases. However, as spatial, temporal, or hierarchical complexity grows, these systems often struggle to adapt (van Doorn et al., 2023), since rigid structures lack the flexibility needed in dynamic or unstructured environments.

Research Agenda: Streamlined Efficiency

Future research could examine how automation affects task design, employee roles and service quality in transactional domains like insurance, banking or logistics. For instance, comparative case studies using institutional theory could explore how automation reshapes accountability and role expectations across industries.

From a complexity standpoint, automation excels in stable environments but may falter where contextual variability is high. Organizations must therefore consider redesigning jobs and training programmes that incorporate humans-in-the-loop. Research could also investigate worker perceptions of fairness, autonomy and the ethical challenges of algorithmic control. The key research questions include:

- How do automation technologies in low-complexity tasks reshape employee roles and perceptions of autonomy in standardized service operations?
- What institutional factors influence the adoption of automation in domains with high spatial or hierarchical complexity (e.g., hospitality, retail), and what adaptations are needed?
- How does the removal of human discretion in automated systems affect service quality and customer-perceived accountability?

Interactive Assistants

For complex, ambiguous or creative tasks, AI often enhances rather than replaces human judgement (Brynjolfsson & McAfee, 2014). By blending computational power with human intuition, contextual reasoning and adaptive decision-making (Wilson & Daugherty, 2018), augmentation refines human capabilities and supports value cocreation. Here, AI functions as a cognitive enhancer that promotes more efficient and accurate problem-solving while preserving human oversight.

It is important here to distinguish between the complexity of the task and the associated contextual complexity. While the task itself may be structured and predictable, the surrounding context may introduce some degree of variability and unpredictability. In spatially complex environments, technology can extend and augment the human capacity to operate safely and effectively, for example by using remote-controlled robots with AI capabilities in ‘master-slave’ configurations to operate, adapt and learn in hazardous environments (Buttolo et al., 1994). Equally, AI-enabled systems can enhance delicate medical procedures that require extreme accuracy, such as eye surgery. In such cases, technology acts as a force multiplier that improves outcomes by extending human capabilities and reducing physical strain.

Similarly, mechanics today rely on AI-powered diagnostics in vehicles, which analyze sensor data to identify likely malfunctions. These tasks are moderately structured but involve interpretive judgement, making them less suitable for full automation. In such cases, augmentation improves decision accuracy while maintaining flexibility. The cost and effort of fully automating these roles would often exceed the marginal benefits due to context-specific variability, for example, when a mechanic must replace a damaged hose under non-standard conditions.

In these examples, augmentation offers a more efficient trade-off between investment and value, as it enhances human performance in tasks that, despite their low complexity, resist full automation because of interpretive variability or unpredictable physical environments. Temporally, it speeds up workflows and decision-making. Spatially, it limits the need for direct physical presence in hazardous or remote areas. Hierarchically, AI helps coordinate complex tasks across multiple levels of aggregation while leaving strategic oversight to humans.

Research Agenda: Interactive Assistants

AI-driven tools offer real-time guidance, enabling faster, more accurate task completion and supporting on-the-job learning, particularly for less experienced employees. However, poor design may increase cognitive load, highlighting the importance of effective system integration (Isaza & Cepa, 2024). Studies could explore how augmentation changes human expertise development, especially in low to moderately complex tasks (e.g., diagnostics in healthcare, technical troubleshooting). Economically, augmentation is often more cost-effective than full automation because it requires lower upfront investment, leverages existing human resources and allows gradual scaling without rigid frameworks.

By reshaping roles, augmentation shifts focus toward higher-order tasks like problem-solving, supervision and system optimization, where humans maintain oversight in dynamic contexts that demand adaptability and contextual reasoning. Key research questions include:

- How and under what conditions does augmentation affect the speed, accuracy and cognitive workload of frontline workers performing structured but variable tasks?
- What role do augmented decision-support systems play in skill acquisition and de-skilling over time among junior and experienced professionals (e.g., mechanics, technicians)?
- Under what contextual conditions do organizations choose augmentation over automation for repetitive-yet-subjective tasks, and how are these choices justified economically?

Systemic Intelligence

Systemic intelligence refers to a form of automation suited for high-complexity environments by offering capabilities that extend beyond the rigidly predefined processes of traditional automation. Enabled by generative AI, a technology that produces ‘previously unseen synthetic content, in any form and to support any task’ (Peñalvo & Ingelmo, 2023, p. 14), these systems adapt dynamically to complexity and generate novel insights by integrating vast amounts of structured and unstructured data. Unlike rule-based systems, generative AI employs probabilistic learning and adaptive mechanisms to automate cognitive processes that were once exclusively human. In light of their ability to understand, respond and anticipate, these technologies come close to what Huang and Rust (2018) refer to as ‘analytical intelligence’—the ability to solve and learn from problems by deploying information processing, logical reasoning, and mathematical skills that previously required specialized training and expertise.

Generative AI excels at managing temporal complexity by identifying intricate patterns in sequential data, enabling accurate prediction and real-time adaptation to changing conditions. These tasks are typically data-intensive but low in physical interaction, producing outputs ranging from analytical insights to generated content. While the development and training of such systems require significant upfront investment, the value produced can be substantial in domains where high-volume decision-making at scale yields long-term strategic or financial benefits. The ability to handle both short-term, time-sensitive decisions and long-term forecasting is likely to be especially valuable in such domains as financial forecasting, supply chain optimization and predictive maintenance.

The strengths of generative AI again become apparent in domains characterized by hierarchical complexity, where tasks may involve synthesizing micro-level data (e.g., individual consumer behaviour) to produce macro-level strategies (e.g., market predictions). To navigate such nested systems, generative AI can identify patterns within layers of complexity. In the pharmaceutical sector, for instance, this might involve integrating molecular-level data and broader clinical trial results to predict drug efficacy and streamline the research and development pipeline.

Spatial complexity remains challenging for generative AI, which must rely on digital representations of the physical world in the absence of sensory capabilities. Unlike humans, who interact directly with the world and constantly adapt to it, generative AI struggles to navigate dynamic environments characterized by variability, physical interactions and emergent phenomena. This limitation underscores the importance of human oversight in tasks involving physical or social complexity. Additionally, in areas like creative writing or market analysis, the subjectivity of outputs makes full substitution contentious. In such cases, generative AI may complement human input by proposing alternatives, yet still requires human judgement for final decisions. The value of automation increases as models demonstrate higher accuracy and generalizability across varied contexts, making the effort-to-benefit ratio increasingly favourable in data-rich sectors.

Research Agenda: Systemic Intelligence

Whereas traditional automation focuses on precision and control, systemic intelligence thrives on complexity, uncertainty and change. It demonstrates temporal intelligence by recognizing dynamic patterns, enabling both real-time adaptation and long-term strategic planning. It also addresses hierarchical complexity by synthesizing micro-level data to form macro-level insights, but it lacks embodied perception and continues to face challenges in spatial domains. Systemic intelligence invites rich inquiry into organizational decision-making under uncertainty. Researchers could adopt complex adaptive systems theory to study how generative AI enables emergent strategy formation in domains like finance, supply chains or public health. Future research questions include:

- In deploying generative AI for decision-making tasks that traditionally require human expertise, what are the salient capabilities and ethical considerations?
- How do organizations manage the trade-off between transparency and performance when deploying generative AI for strategic decision-making in high-stakes domains (e.g., finance, logistics)?
- How do different organizational designs affect the interpretability, trust and use of systemic AI in multi-level decision hierarchies?

Adaptive Synergy

In high-complexity environments, technological augmentation enhances human creativity, adaptability and resource integration—particularly for tasks characterized by low repetitiveness and high subjectivity, such as design, diagnostics or field-based decision-making.

Temporal complexity reveals how augmentation can accelerate human decision-making and learning. In healthcare, for instance, augmented AI systems analyze medical histories, patient conditions and treatment outcomes to support diagnostics in real time. This alignment with real-time human judgement supports faster and more informed decision-making. These environments often involve context-specific and ambiguous data, where full automation is infeasible due to ethical constraints or interpretive variability. In such cases, augmentation improves human performance while mitigating complexity.

The deployment of AI in these contexts is often justified not only by efficiency gains but also by its contributions to decision support, safety or innovation. The augmentation route is frequently chosen precisely because it maximizes the value of human intuition while managing the burden of environmental complexity.

Hierarchically, augmented solutions operate across nested systems, bridging micro-level tasks and macro-level objectives. At the micro level, tools like DeepMind’s AlphaFold have revolutionized our ability to understand processes like protein folding, bypassing years of experimental trials by predicting protein structures with remarkable accuracy. These micro-level advances cascade into macro-level effects, such as accelerating drug discovery and transforming healthcare delivery systems.

Research Agenda: Adaptive Synergy

Future research could examine how humans and AI co-evolve expertise in creative, improvisational or field-based tasks, such as social work, repair or design. Practice theory or embodied cognition may serve as useful lenses to understand how augmentation interacts with human sensibility, tacit knowledge and material contexts. Another promising area is participatory design, where AI tools are developed in collaboration with end users. Such cocreation approaches may yield augmentation tools better aligned with the needs of workers operating in unpredictable or socially sensitive settings. Research questions might include:

- How do workers in craft- or context-intensive occupations (e.g., carpentry, nursing and design) integrate AI augmentation tools into their embodied practice and decision-making?
- What are the affordances and constraints of AI tools in helping humans to navigate spatial, temporal and social complexity in real-time environments?
- How do cocreative design processes involving end users and developers shape the development of augmentation tools that support improvisational and adaptive tasks?

Table 1 presents an overview of the proposed typology.

Table 1. Four Types of Artificial Intelligence (AI)-enabled Value Creation.

Conclusion

Van Doorn et al. (2023) emphasize the need to analyze human–machine interactions in frontline contexts where dynamic environments increase task complexity. The framework proposed here offers a structured approach to examine how humans and AI cocreate value by categorizing tasks according to contextual complexity and mode of technological engagement (automation–augmentation). It also provides a foundation for analyzing the evolving interaction between task complexity and technological capabilities.

As value cocreation is inherently contextual, neither automation nor augmentation alone can address all forms of complexity. Effective integration depends on understanding the complexity of task-in-context and aligning human–AI configurations accordingly. By bridging theory and practice, the typology contributes to a more nuanced perspective for studying AI implementation and task orchestration. This includes both direct, interactive forms of collaboration and more asymmetrical forms where value is cocreated through the design, governance and use of autonomous systems.

Importantly, deploying AI to enhance well-being, such as by automating hazardous or physically taxing tasks, need not undermine human worth. On the contrary, it can reinforce the centrality of human agency in value cocreation. To overcome cultural and organizational barriers to AI adoption and to ensure that these technologies augment human dignity and participation rather than undermining them, we must aim to pursue a human-centric approach (Findsrud & Tronvoll, 2024; Fountaine et al., 2019; Krakowski, 2025).

For practitioners, the typology provides a decision matrix for assessing the suitability of AI applications across varied task environments. Managers should automate tasks with high volume, standardization and low cognitive load (e.g., invoice processing, scheduling); augment, with the support of AI insights, tasks that require human judgement, contextual interpretation or empathy (e.g., diagnostic support, legal analysis); and avoid AI in tasks involving tacit knowledge, ethical ambiguity or strong cultural resistance. By aligning engagement mode and task complexity, organizations can prioritize AI investments, guide job redesign and target upskilling efforts for sustainable impact.
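The managerial guidance above could be expressed as a simple screening rule. The sketch below is illustrative only: the `TaskProfile` fields, the `recommend` function and, in particular, the order in which the checks are applied (avoidance first, then augmentation, then automation) are assumptions made for the example and are not specified by the typology.

```python
from dataclasses import dataclass


@dataclass
class TaskProfile:
    """Hypothetical yes/no screening of a task against the criteria in the text."""
    high_volume: bool
    standardized: bool
    low_cognitive_load: bool
    needs_judgement_or_empathy: bool
    tacit_ethical_or_cultural_barrier: bool


def recommend(task: TaskProfile) -> str:
    """Suggest an engagement mode; the precedence of checks is an assumption."""
    # Tacit knowledge, ethical ambiguity or strong cultural resistance: avoid AI.
    if task.tacit_ethical_or_cultural_barrier:
        return "avoid AI"
    # Human judgement, contextual interpretation or empathy: augment.
    if task.needs_judgement_or_empathy:
        return "augment"
    # High volume, standardization and low cognitive load: automate.
    if task.high_volume and task.standardized and task.low_cognitive_load:
        return "automate"
    # Anything else falls outside the stated guidance.
    return "review case by case"


if __name__ == "__main__":
    invoice_processing = TaskProfile(True, True, True, False, False)
    diagnostic_support = TaskProfile(False, False, False, True, False)
    print(recommend(invoice_processing))
    print(recommend(diagnostic_support))
```

In practice such a rule would serve only as a first screening step, since the criteria are matters of degree rather than yes/no properties.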

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.