Abstract

Background

Defensible standard setting is a cornerstone of competency-based medical education. However, many institutions still use arbitrary pass marks such as 50% without empirical justification. This study aimed to apply the Nedelsky method—a defensible, criterion-referenced standard setting approach—to determine the minimal passing score for the final examination of the Obstetrics and Gynecology (OBGYN) course at Pham Ngoc Thach University of Medicine, Vietnam, and to compare it with the conventional Cohen method.

Method

A four-step Nedelsky procedure was implemented to establish a defensible passing standard for a 50-item multiple-choice examination administered to fourth-year medical students on December 3, 2022. Three trained assessors independently estimated the number of distractors that a minimally competent candidate (MCC) could eliminate for each question, from which the Minimal Passing Level (MPL) was calculated. Subsequently, a cross-sectional analysis was conducted on 808 students to determine the pass and fail rates using both the Nedelsky and Cohen methods. Statistical comparison was performed using McNemar’s test for paired categorical data.

Results

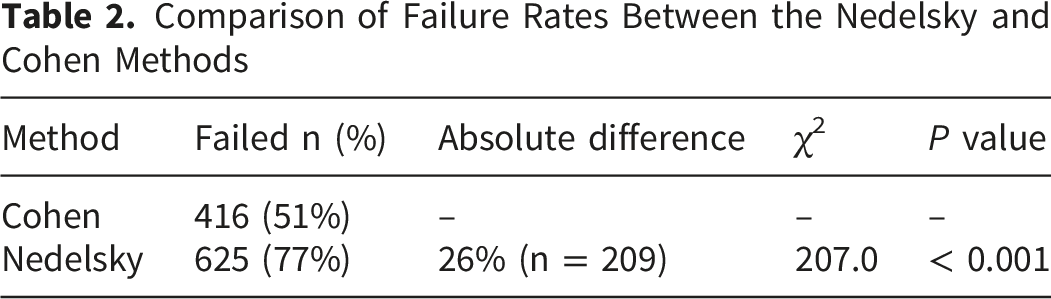

The mean MPL derived from three assessors was 65%, corresponding to 33 correct answers as the passing score. According to this standard, 183 of 808 students (23%) passed the examination, while 625 (77%) failed. In contrast, using the Cohen method (cut-off = 55%), 416 students (51%) failed. McNemar’s test showed a statistically significant difference between the two methods (χ² = 207.0, p < 0.001), with moderate agreement (Cohen’s κ = 0.47).

Conclusion

The relative Cohen method underestimated the minimal competency threshold, resulting in a substantial “failure to fail” phenomenon. The Nedelsky method provided a more defensible, transparent, and evidence-based approach for setting pass standards in resource-limited medical education contexts. Adoption of defensible standard setting methods such as Nedelsky can enhance fairness, validity, and accountability in competency-based assessments.

Keywords

Introduction

Competency-based medical education (CBME) emphasizes that assessment outcomes must meaningfully distinguish between learners who are competent to practice safely and those who are not. Within this framework, standard setting—the process of determining a defensible passing score—plays a pivotal role in ensuring the validity, fairness, and accountability of summative assessments.1-4 An appropriately established pass mark should allow competent candidates to pass while reliably identifying those who have not yet met the minimum required level of competence.

Despite its importance, standard setting in medical education is frequently reduced to arbitrary practices, such as adopting a fixed cut-off score (e.g., 50% or 60%) without consideration of test difficulty, item characteristics, or the expected performance of minimally competent candidates. Evidence from the literature has consistently shown that such fixed cut scores are not defensible and may undermine the fundamental goals of competency-based assessment.2,5 Importantly, there is no single “correct” passing score, no gold standard, and no fully objective method that can eliminate human judgment from the standard-setting process. 6

Norm-referenced approaches further complicate pass–fail decisions by anchoring standards to cohort performance rather than to explicit competency expectations. The Cohen method, for example, determines the cut score based on the expected score from random guessing and the performance of high-achieving students. 7 Although practical and easy to implement, such relative methods risk allowing candidates to pass simply because they perform better than their peers rather than because they meet a predefined minimum level of competence. This practice contributes to the well-described phenomenon of “failure to fail,” whereby learners who have not yet reached acceptable competence are insufficiently identified in summative assessments.1,8

To address these limitations, criterion-referenced standard setting methods have been developed to link pass–fail decisions directly to competency expectations. Among these, the Nedelsky method is a test-centered, absolute standard-setting approach originally designed for multiple-choice examinations. 9 The method requires judges to estimate how many distractors a minimally competent candidate (MCC) can reasonably eliminate for each item, thereby estimating the probability that such a candidate would answer the item correctly. Aggregating these probabilities across all items yields a minimal passing level grounded in expert judgment rather than cohort performance. 10

Comparative studies in medical education have shown that the Nedelsky method produces passing scores that are generally higher and more defensible than those generated by norm-referenced or compromise methods, while remaining feasible for classroom-based assessments. 6 Although methods such as Angoff or Ebel are often regarded as best practice, their implementation typically requires large panels of subject matter experts with substantial psychometric expertise.5,10 In many medical schools, particularly in resource-limited settings, such expertise may not be readily available. In this context, the Nedelsky method offers a pragmatic yet defensible alternative for establishing absolute passing standards in routine course examinations. 6

In Vietnam, as in many low- and middle-income countries, summative assessments in undergraduate medical education continue to rely heavily on norm-referenced or arbitrary cut scores. Empirical evidence comparing defensible, criterion-referenced standard-setting methods with conventional approaches in this context remains limited. Therefore, this study aimed to apply the Nedelsky standard-setting method to determine the passing score for the final examination of an undergraduate Obstetrics and Gynecology course and to compare the resulting pass–fail outcomes with those obtained using the commonly applied Cohen method.

Methods

Study Design

This study employed an observational, cross-sectional design to apply a defensible standard-setting procedure and to compare pass–fail outcomes derived from two different standard-setting methods, consistent with prior comparative research in medical education assessment. 6

Study Context and Participants

The study was conducted at Pham Ngoc Thach University of Medicine in Ho Chi Minh City, Vietnam. Undergraduate medical education in Vietnam typically follows a six-year program with centralized national admission and relatively large cohort sizes compared with many Western medical schools. At our institution, annual cohorts commonly exceed 600 students.

Participants included all fourth-year undergraduate medical students enrolled in the Obstetrics and Gynecology (OBGYN) course during the 2022–2023 academic year who sat for the final summative written examination on December 3, 2022. A total of 808 students participated in the examination and were included in the analysis.

Students were excluded if they were absent from the examination or had incomplete examination records.

The sample size was not predetermined because the study analyzed the complete cohort of students who sat for the examination during the study period. Therefore, all available examination records were included in the analysis.

Assessment Instrument

The summative assessment consisted of a 50-item multiple-choice question (MCQ) examination covering core theoretical knowledge in Obstetrics and Gynecology. Each item had four response options, including one correct answer and three distractors. The examination was developed and reviewed by the OBGYN department following institutional assessment guidelines and was used as the official end-of-course examination.

Standard Setting Procedure Using the Nedelsky Method

The passing score was established using the Nedelsky standard-setting method, a criterion-referenced, test-centered approach originally described for objective tests. 9 This method estimates the probability that a minimally competent candidate would answer each item correctly based on the number of distractors that can be reasonably eliminated. 10

The standard-setting process consisted of four structured steps.

Step 1: Definition of assessed competency

The assessed competency was defined as foundational theoretical knowledge in Obstetrics and Gynecology required of a minimally competent fourth-year medical student who is safe to progress in the undergraduate medical curriculum. This definition aligns with established concepts of minimal competence in medical education assessment. 2

Step 2: Formation of the assessor panel

A panel of three assessors was convened to conduct the standard-setting process. All assessors were faculty members in the Department of Obstetrics and Gynecology with senior clinical qualifications and prior training in medical education assessment and standard-setting methodologies. The use of trained faculty assessors rather than psychometric experts is consistent with recommended practice for classroom-based standard setting. 6

Step 3: Conceptualization of the minimally competent candidate

Before conducting item-level judgments, assessors participated in a structured discussion to establish a shared understanding of the minimally competent candidate (MCC). The MCC was defined as a learner who demonstrates sufficient knowledge and judgment to practice safely but may lack the consistency, efficiency, or depth of higher-performing peers. 2

Step 4: Independent item-level judgments

Each assessor independently reviewed all 50 MCQs and estimated the number of distractors that an MCC could reasonably eliminate for each item. These judgments were used to calculate the probability of a correct response for each item. Item-level probabilities were summed to estimate the minimal passing level (MPL), and the final passing score was calculated as the mean MPL across the three assessors, following established Nedelsky procedures.6,9
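The item-level calculation in Step 4 can be sketched as follows. This is a minimal illustration, not the authors' analysis script. It assumes the usual Nedelsky rule that an MCC guesses at random among the options left after eliminating distractors, so each item contributes 1 / (options remaining). The function names and the five-item judgments are hypothetical, and rounding the mean MPL up to the next whole item is an assumption that is consistent with the reported 65% corresponding to 33 of 50 items.

```python
import math

N_OPTIONS = 4  # one key plus three distractors, as described for this examination


def nedelsky_mpl(eliminated, n_options=N_OPTIONS):
    """Sum of per-item probabilities that an MCC answers correctly.

    `eliminated` holds, for each item, how many distractors one assessor
    judged the MCC could rule out (0 to n_options - 1).
    """
    total = 0.0
    for k in eliminated:
        if not 0 <= k <= n_options - 1:
            raise ValueError(f"invalid distractor count: {k}")
        # The MCC is assumed to guess at random among the remaining options.
        total += 1.0 / (n_options - k)
    return total


def passing_score(mpls):
    """Mean MPL across assessors, rounded up to whole items (assumed rule)."""
    mean_mpl = sum(mpls) / len(mpls)
    return math.ceil(mean_mpl - 1e-9)  # small tolerance guards against float noise


# Hypothetical judgments from three assessors on a five-item test.
judgments = [
    [2, 1, 3, 0, 2],
    [2, 2, 3, 1, 1],
    [1, 1, 3, 0, 2],
]
mpls = [nedelsky_mpl(j) for j in judgments]
cut = passing_score(mpls)
print(f"MPL per assessor: {[round(m, 2) for m in mpls]}, passing score: {cut} items")
```

If the mean MPL were exactly 32.5 items (65% of 50), `passing_score([32.5])` would return 33 under the assumed round-up rule.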

Application of the Cohen Method

For comparison, a passing score was also calculated using the Cohen method, a norm-referenced standard-setting approach that determines the cut score based on the expected score from random guessing and the performance of high-achieving candidates. 7
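As a worked illustration, the Cohen cut score can be written as the guessing score plus a fixed fraction of the distance between the guessing score and the 95th-percentile score. The sketch below assumes a fraction of 0.6, which is not stated in this article but reproduces the reported 55% cut-off from the reported 95th percentile of 75% and four-option items. The function and parameter names are hypothetical.

```python
def cohen_cut_score(p95, n_options=4, fraction=0.6):
    """Cut score as a proportion correct, corrected for random guessing.

    p95       -- 95th-percentile score of the cohort, as a proportion
    n_options -- response options per item (guessing score = 1 / n_options)
    fraction  -- share of the above-guessing range required to pass (assumed 0.6)
    """
    guess = 1.0 / n_options
    return guess + fraction * (p95 - guess)


# Values reported in this study: 95th percentile of 75%, four options per item.
cut = cohen_cut_score(0.75)
print(f"Cohen cut score: {cut:.0%}")
```

Because the cut score moves with the 95th percentile, a cohort with a lower upper tail yields a lower pass mark under this formula.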

Outcome Measures

Primary outcomes included the passing scores determined by the Nedelsky and Cohen methods, the corresponding pass and fail rates, and the difference in failure rates between the two methods.

Statistical Analysis

Descriptive statistics were used to summarize examination performance. Because pass–fail classifications under the Cohen and Nedelsky methods were derived from the same group of students, comparisons between the two methods were performed using McNemar’s test for paired categorical data. Agreement between the two standard-setting methods was further evaluated using Cohen’s kappa coefficient. Statistical significance was defined as a two-sided p value < 0.05. All analyses were conducted using R statistical software.

To minimize potential sources of bias, the study analyzed the complete cohort of students who sat for the examination during the study period, thereby reducing selection bias. Examination scores were obtained from official academic records, ensuring objective measurement of student performance.

Ethical Considerations

The study used anonymized secondary data derived from routine examination records collected as part of the university’s standard academic assessment process. No questionnaires or additional data were collected, and students were not contacted for the purposes of this research. All identifiable information was removed prior to analysis. Because the study involved retrospective analysis of de-identified educational records and posed minimal risk to participants, formal ethical approval and informed consent were not required according to institutional policies governing educational research.

Results

Characteristics of Examination Performance

A total of 808 fourth-year medical students sat for the final Obstetrics and Gynecology examination, which consisted of 50 multiple-choice questions. The distribution of examination scores was non-normal, with the highest frequency observed around a score of 60%. Most student scores clustered above 40%, and the 95th percentile of examination performance was 75%.

The overall distribution of examination scores is illustrated in Figure 1, showing a concentration of scores between 50% and 70%, with a long tail toward lower scores.

Figure 1. Distribution of examination scores among fourth-year medical students. Histogram showing the distribution of percentage-correct scores for the 50-item Obstetrics and Gynecology examination (n = 808). Scores were not normally distributed, with a peak around 60% and the majority of students scoring above 40%. The 95th percentile of performance was 75%, which was used as a reference point in calculating the passing score using the Cohen method.

Passing Score Determined by the Nedelsky Method

Independent judgments from three assessors were obtained for all 50 examination items. Based on the estimated number of distractors that a minimally competent candidate could reasonably eliminate, item-level probabilities of correct responses were calculated and summed to generate the minimal passing level (MPL) for each assessor.

The mean MPL across the three assessors was 65%, corresponding to 33 correct answers out of 50 as the passing score.

Pass and Fail Rates Using the Nedelsky and Cohen Methods

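Applying the Nedelsky-derived passing score of 65%, 183 of 808 students (23%) passed the examination, while 625 students (77%) failed. Applying the Cohen-derived passing score of 55%, 392 students (49%) passed, while 416 students (51%) failed.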

Passing Scores and Pass–Fail Outcomes Determined by the Nedelsky and Cohen Methods

Comparison of Failure Rates Between Standard-Setting Methods

The failure rate determined by the Nedelsky method (77%) was substantially higher than that determined by the Cohen method (51%). The absolute difference in failure rates between the two methods was 26 percentage points, corresponding to 209 students who would have passed under the Cohen method but failed under the Nedelsky method.
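The paired comparison can be reconstructed from the counts reported in this study. The sketch below is written in Python rather than the R software used for the analysis, and it rests on two assumptions: that no student can pass at the 65% cut-off while failing at the 55% cut-off, so all 209 discordant students fall in a single cell, and that McNemar's test was applied with a continuity correction. Variable names are illustrative.

```python
import math

n_total = 808
fail_nedelsky = 625
fail_cohen = 416

# Paired 2x2 table (assumption: failing at 55% implies failing at 65%).
both_fail = fail_cohen
only_nedelsky_fail = fail_nedelsky - fail_cohen  # passed Cohen, failed Nedelsky
only_cohen_fail = 0
both_pass = n_total - fail_nedelsky

# McNemar's test with continuity correction uses only the discordant cells.
b, c = only_nedelsky_fail, only_cohen_fail
chi2 = (abs(b - c) - 1) ** 2 / (b + c)
p_value = math.erfc(math.sqrt(chi2 / 2))  # chi-square upper tail, 1 degree of freedom

# Cohen's kappa: observed agreement corrected for chance agreement.
p_observed = (both_fail + both_pass) / n_total
p_expected = (
    (fail_nedelsky / n_total) * (fail_cohen / n_total)
    + ((n_total - fail_nedelsky) / n_total) * ((n_total - fail_cohen) / n_total)
)
kappa = (p_observed - p_expected) / (1 - p_expected)

print(f"discordant = {b}, chi2 = {chi2:.1f}, kappa = {kappa:.2f}")
```

Under these assumptions the sketch gives χ² of about 207.0 and κ of about 0.47, in line with the values reported in the abstract.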

Comparison of Failure Rates Between the Nedelsky and Cohen Methods

Discussion

This study applied a defensible, criterion-referenced standard-setting method to a routine undergraduate medical examination and demonstrated substantial differences in pass–fail outcomes when compared with a commonly used norm-referenced approach. Using the Nedelsky method, the passing score was set at 65%, resulting in a failure rate of 77%, whereas the Cohen method yielded a lower cut score of 55% and a failure rate of 51%. The absolute difference of 26 percentage points in failure rates underscores the profound impact that the choice of standard-setting method can have on summative assessment decisions.

The relatively high failure rates observed in both standard-setting approaches should be interpreted in the context of the assessment design. The examination evaluated theoretical knowledge using a criterion-referenced framework aimed at identifying the minimal competence required for safe progression in the curriculum rather than for graduation-level certification. In addition, large cohort sizes and heterogeneous preparation levels are common features of undergraduate medical education in Vietnam, which may contribute to wider score distributions in summative examinations.

Comparison With Existing Literature

Our findings are consistent with previous comparative studies of standard-setting methods in medical education. Downing et al demonstrated that the Nedelsky method typically produces higher and more conservative passing scores than compromise or norm-referenced approaches such as Hofstee, particularly in classroom-based assessments. 6 In their study, the Nedelsky method served as a defensible baseline against which other methods were evaluated, supporting its continued relevance in undergraduate medical education.

Similarly, studies examining criterion-referenced methods have emphasized that absolute standards anchored to minimally competent performance are more aligned with the goals of competency-based medical education than relative standards based on cohort performance.1,8,10 The higher failure rate observed with the Nedelsky method in our study should therefore not be interpreted as excessive stringency, but rather as a reflection of a more explicit and defensible definition of minimum competence.

Defensibility and the “Failure to Fail” Phenomenon

A key implication of our findings relates to the well-recognized phenomenon of “failure to fail,” in which learners who have not yet achieved adequate competence are allowed to progress due to lenient or poorly justified assessment standards. 8 In our cohort, 209 students who would have passed under the Cohen method failed when the Nedelsky-derived standard was applied. This discrepancy highlights how norm-referenced methods may underestimate the level of performance required for safe progression, particularly when overall cohort performance is modest.

Fixed or relative cut scores, such as those generated by the Cohen method, do not explicitly define what a minimally competent candidate should know or be able to do. 7 In contrast, the Nedelsky method requires assessors to engage directly with item content and to make judgments about the plausibility of distractors for a minimally competent candidate. This process enhances transparency and provides a clearer rationale for pass–fail decisions, thereby strengthening the defensibility of the assessment.9,10

The moderate agreement between the two methods (κ = 0.47) further suggests that they are not interchangeable for pass–fail decision-making.

Feasibility in Resource-Limited Educational Settings

Although methods such as Angoff or Ebel are often regarded as best practice, their implementation typically requires large panels of subject matter experts and substantial psychometric expertise.5,10 In many medical schools, particularly in low- and middle-income countries, these resources are not readily available. Our study demonstrates that the Nedelsky method can be feasibly implemented by a small panel of trained faculty assessors within the context of routine course assessment, consistent with prior observations in classroom-based settings. 6

The use of the Nedelsky method in this context offers a pragmatic balance between methodological rigor and feasibility. While it may not capture the full complexity of clinical competence, it provides a defensible and transparent approach for setting standards in written examinations when more resource-intensive methods are impractical.

Interpretation of Score Distribution

The histogram of examination scores revealed a non-normal distribution with a concentration of scores between 50% and 70% and a 95th percentile of 75%. This distribution helps explain the divergence between the two standard-setting methods. Because the Cohen method relies on high-performing students as a reference point, it is sensitive to the overall score distribution and may produce lower cut scores when the upper tail of performance is limited. 7 In contrast, the Nedelsky method is independent of cohort performance, reinforcing its suitability for criterion-referenced decision-making.

Limitations

Several limitations should be acknowledged. First, this study was conducted in a single institution and focused on a single written examination in Obstetrics and Gynecology, which may limit generalizability to other disciplines or assessment formats. Second, a formal sample size calculation was not performed because the study analyzed the complete cohort of students who sat for the examination during the study period; the sample size was therefore determined by the available examination data rather than by statistical considerations. Third, the Nedelsky method is inherently restricted to multiple-choice questions and simplifies performance judgments into discrete probabilities based on distractor elimination. This may not fully reflect the cognitive processes involved in answering complex clinical questions. 10 Finally, although assessors were trained in assessment methodology, formal psychometric analyses such as inter-rater reliability were not the primary focus of this study.

Implications for Practice and Future Research

Despite these limitations, our findings provide empirical support for the adoption of defensible, criterion-referenced standard-setting methods in undergraduate medical education, particularly in resource-limited contexts. Future research should explore the application of the Nedelsky method across multiple courses and institutions, as well as comparisons with other criterion-referenced approaches such as Angoff or Direct Borderline methods. Integrating standard-setting decisions with broader validity evidence, including reliability and educational impact, may further strengthen assessment practices.

Conclusion

In this study, the application of the Nedelsky standard-setting method resulted in a substantially higher and more defensible passing score than that produced by the commonly used Cohen method for a routine undergraduate medical examination. By anchoring pass–fail decisions to explicit judgments about minimal competence rather than cohort performance, the Nedelsky method provided a transparent and criterion-referenced approach to standard setting. These findings highlight the potential risks of relying on norm-referenced or arbitrary cut scores in competency-based medical education and underscore the value of adopting defensible standard-setting methods, particularly in resource-limited educational settings.

Footnotes

Acknowledgements

The authors would like to express their sincere gratitude to Professor Hoi Ho and Professor Tuyen Kim Ho for their valuable academic guidance and insightful comments during the development of this manuscript. Their expertise and constructive feedback greatly contributed to strengthening the methodological rigor and clarity of this study.

Author Contributions

Conceptualization: HAB, VTTT, HTN; Methodology: HAB, VXN, TKNH; Investigation: HAB, VXN; Formal analysis: HAB, VXN; Writing – original draft: HAB, VXN; Writing – review & editing: VTTT, TKNH, HTN; Supervision: TKNH, HTN. All authors read and approved the final manuscript.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data that support the findings of this study are available from the corresponding author upon reasonable request, subject to institutional regulations.