Abstract

Introduction

Simulation is commonly used in health education, but its role in competency assessment remains underrecognized. This study examined the experiences and perceptions of medical students and evaluators regarding the use of simulators during an objective structured clinical exam (OSCE) to assess clinical competency.

Methods

This qualitative study employed a conventional content analysis approach. Forty-three medical students and 2 evaluators from the Shahid Beheshti University of Medical Sciences in Tehran, Iran, were recruited through purposive sampling in 2024 to 2025. Data were collected through 3 focus group interviews (first: 15 students; second: 14 students; third: 14 students), 2 semistructured individual interviews, and field notes, and collection continued until saturation was achieved. Data were analyzed using the method developed by Graneheim and Lundman.

Results

Six main categories were identified: (1) change management: resistance to change, stress from utilizing simulators, and positive adaptation to change; (2) facilitative role of the simulator: simulators overcome real-world limitations and act as facilitators rather than replacements for a clinical setting; (3) role of the human agent: the evaluator as a facilitator/interventionist, blended scoring, and feedback; (4) integration/coordination as the missing link: the simulator as a factor in vertical and horizontal integration; (5) challenges in utilizing a virtual patient simulator (VPS): lack of mastery learning before exams, insufficient time to use the simulator, absence of a holistic approach, low fidelity, and technical issues with VPS; and (6) progress toward enhancement: comparisons with past experiences, progress made, and the infrastructure and prerequisites of virtual OSCE.

Conclusion

Although the use of simulators in OSCEs is a suitable method for clinical assessment, there are drawbacks in their implementation and training that require special attention. It is recommended that simulation-based assessment be more widely incorporated into the medical education curriculum.

Introduction

In recent years, medical education has undergone many transformations in response to the changing needs of the population and to scientific and technological advances, 1 and there has been a global trend toward competency-based medical education. 2 Competency means “having sufficient knowledge, judgment, skill, or power for a particular task.” 3 Competency is the ability to perform an activity and consists of knowledge (coded applied knowledge and implicit knowledge based on experience), skill (technical and cognitive), and cognitive ability. This ability is habitual, stable, task-dependent, observable, measurable, independent, contextual, and knowledge-based, and is considered the main component of the profession.4,5 The evidence indicates that there are gaps in the valid and reliable assessment of the key competencies of students in some disciplines. 2 Current evaluation methods need to be improved to benefit educational programs, students, and patients. 6 One such method is the objective structured clinical exam (OSCE), a comprehensive, consistent, and standardized method for evaluating students’ clinical learning experience.7-9 The OSCE was established in the 1970s and quickly emerged as an international benchmark for evaluating clinical knowledge, physical examination, communication, and clinical reasoning abilities. 10 Compared with traditional evaluation methods, the OSCE increases learners’ self-confidence and satisfaction,7,9 clinical judgment, and knowledge acquisition. 9 The OSCE also gives learners opportunities to learn and to improve their communication, coping, and problem-solving abilities. 7 Nevertheless, the implementation of the OSCE is not without challenges. 
For example, the lack of a clinical skills center, high costs, long preparation time, the standardization of stations and equipment, the short time spent at each station, inflexible checklists, pervasive short (“snapshot”) encounters with the hypothetical patient, the lack of trained evaluators, high stress, the lack of standardized patients (SPs), and the impossibility of simulating some skills may make it difficult to use the OSCE for assessment.7,9,11,12 Also, differences in learners’ abilities, the strictness of some evaluators, differences in patient profiles, and the difficulty of stations can cause errors. 8 In addition, made-up OSCE scenarios may not reflect real-life scenarios. These problems, along with limited resources and a lack of experience, may make the implementation of the OSCE more difficult in developing countries than in developed countries. 12 The degree of simulation fidelity and the difficulty of correctly recreating the real world are among the issues raised in OSCE evaluations. 9 Indeed, one of the most fundamental aspects of establishing the OSCE's credibility is the degree of authenticity, and simulation may provide an opportunity to address these gaps. 2 Simulation provides new opportunities for teaching scenarios to students, as well as critical reasoning, feedback on practice, and insight into lived experiences. 9 Simulation aims to replicate realistic patient care scenarios, allowing learners to demonstrate competency through problem-solving and decision-making.3,13,14 Simulation allows students to be taught through guided experiences in safe contexts and facilitates adequate learning and standardized assessment of the skills necessary to face a changing world.1,15 Simulation is cost-effective for training, patient safety, and reducing medical errors and patient harm, and provides a return on investment for hospitals.13,16,17 Simulation is widely used for education in healthcare professions. 
However, its value as a tool for assessing learners’ competency is not fully recognized. 3 Assessing competencies with simulation is complicated because no method can perfectly mimic clinical practice. 2 Simulation-based tests improve the ability to assess medical students’ competencies. 18 Simulators are the best tool to assess the performance of comprehensive clinical skills. They provide clear feedback about a learner's competency in facing artificial clinical scenarios. 13 Since the 1970s, simulation-based evaluation methods, clinical performance evaluation, and decision-making have been operationalized with the development of OSCE. 3 Simulation-based OSCE is used in some medical specialties in the certification process. Programs that have used simulators with high fidelity to simulate real emergencies can evaluate medical knowledge, clinical assessment, procedural, communication, and management skills of learners. 16 Computer-based case simulations, often referred to as virtual patients (VPs), have played an increasing role in education and assessment over the past decade. 3

One of the main problems of using simulators is their high cost. The initial capital expenditure and the ongoing maintenance cost of simulators are high. However, since simulation-based medical training has the potential to reduce medical errors, high training costs may lead to a reduction in overall national costs in the long term.

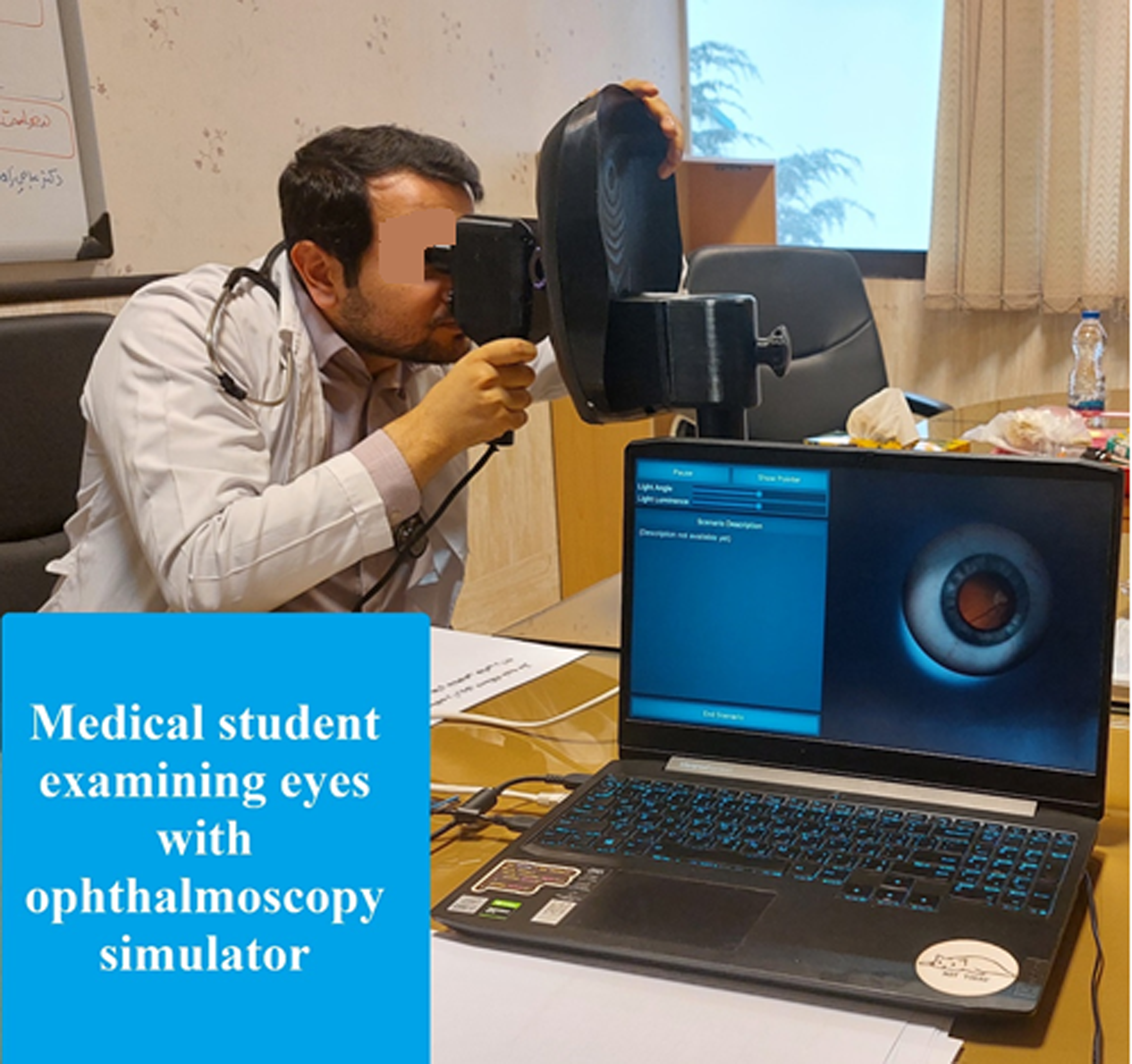

In the last few decades, Iranian universities of medical sciences have used the OSCE. Despite the efforts of the trustees and the recent need of the universities to make it one of the formats of the clinical competency exam in medical sciences, its development has not met expectations. In Iran, medical students are required to pass the “Practical Examination of Clinical Competency (PECC)” at the end of the course after 6 months of the internship period, and success in this examination is required to graduate. 19 Therefore, in recent years, Iranian universities have conducted the OSCE. Recently, the Shahid Beheshti University of Medical Sciences (SBMU) used a “computer-based clinical simulator,” or “screen-based simulator,” to evaluate medical students’ skills in examining a VP with cardiopulmonary disease (Figure 1). It also used a “high-tech simulator,” an ophthalmoscopy simulator (OS), to evaluate students’ skills at the eye examination station in the PECC (Figure 2). Explaining students’ experiences and insights helps to provide a comprehensive assessment of the OSCE. Moreover, better decision-making and better educational policies and practices require consensus, participation, and an understanding of the experiences of evaluators and educational experts. Evaluators play an important role in the OSCE. They are responsible for identifying exam focus areas, designing stations, implementing the OSCE, and providing feedback to students. Therefore, it is important to clarify the understanding and experience of not only students but also evaluators to improve the quality of the OSCE. This study is an attempt to understand the experiences and views of students and of professors serving as evaluators. Some studies have explained the experiences of students regarding the OSCE. For example, Saeed et al explained students’ experiences of simulation-based learning and its effect on their performance in the OSCE in Pakistan. 
Content analysis of their data revealed 3 key categories: learning through simulation, the role of the facilitator, and the way forward for personalized learning. 20 Although there are some reports about students’ experiences of participating in the OSCE, according to the review conducted by the researchers, no study was found that explained the experience and perceived challenges of the students regarding the use of high-tech simulators and virtual patient simulators (VPSs) in the OSCE in the Iranian context. While feasibility and validity are critical considerations in simulation-based assessment, the current study focuses on students’ experiences, which provide essential insight into how such assessment innovations are perceived, adopted, and interpreted in practice. Therefore, this study aimed to explain the experiences and perceptions of students and evaluators regarding the use of VPSs and OSs in the OSCE.

Figure 1. A medical student examining a virtual patient with chronic obstructive pulmonary disease.

Figure 2. A medical student examining eyes with an ophthalmoscopy simulator.

Materials and Methods

Design and Setting

This qualitative study was conducted with the qualitative content analysis approach in 2024 to 2025 at the SBMU. The study was grounded in an interpretivist research paradigm, which seeks to understand how individuals construct meaning from their experiences within specific social and educational contexts. Qualitative content analysis was chosen as it provides a systematic and flexible method for analyzing textual data, enabling the identification of codes, categories, and themes derived inductively from participants’ narratives. This approach was particularly suitable for exploring the experiences of medical students and evaluators with simulator use in OSCEs, as it allows for both descriptive and interpretive analysis of perceptions related to assessment and simulation. Although this study was not explicitly guided by a single qualitative theory, it was conceptually informed by frameworks of simulation-based medical education and experiential learning, which emphasize authenticity, learner engagement, and reflective practice in clinical skills assessment—consistent with the use of immersive simulation to facilitate concrete experiences, reflective observation, concept formation, and active experimentation in simulation curricula. 21 The authors adhered to the Standards for Reporting Qualitative Research (SRQR) 22 Guideline (Supplemental 1).

OSCE in the Study Setting

The OSCE was conducted to assess students’ clinical competencies in a standardized and objective manner in a medical school in 1 day. The examination included 1 quarantine station, 3 rest stations, and 12 assessment stations. The VPS and OS stations were not conducted consecutively. To reduce potential carryover effects and response bias, the stations were separated by other OSCE stations assessing unrelated clinical competencies. The sequence of stations was standardized for all students, and equal transition times were provided between stations.

A trained evaluator at each station (n = 12) scored students using checklists. At the VPS and OS stations, the evaluators had prior experience with OSCEs and simulators, and a technician familiar with the simulator was also present. The quarantine station served as a holding area to ensure that students had no prior exposure to the assessment scenarios and to protect the integrity of the exam.

Each assessment station presented a clinical scenario designed to assess specific competencies. Some stations used SPs, whereas others used simulators or mannequins. To ensure confidentiality, checklists and scenarios were designed by faculty members who did not serve as evaluators at the stations. A coordinator oversaw the examination process, and a designated staff member rang a bell to signal the start and end of each station, ensuring accurate timekeeping. Scores from all active stations were combined to assess overall performance in the OSCE.

Several weeks before the OSCE, the simulator technicians trained evaluators and students to use the OS device and the VPS software. A VPS demo was also made available to the students. At the VPS and OS stations, blended scoring was used to capture both objective performance and holistic clinical competence. These stations were scored using structured checklists complemented by evaluators’ ratings. They also generated automated performance metrics, which were reviewed and contextualized by evaluators. Final station scores were derived by combining checklist and system scores. This approach was intended to balance standardization with expert judgment in a high-stakes assessment context. The inclusion of the VPS and OS stations within the OSCE was designed primarily as a pilot evaluation of the performance and feasibility of these simulation tools. The objective was to determine whether these simulators could be effectively integrated into high-stakes assessments and, if successful, inform potential nationwide implementation in subsequent years. Although students were scored by both evaluators and the simulators at these stations, these scores were not factored into the final OSCE grade, as the primary aim of this study was to investigate participants’ experiences, perceptions, and interactions with the simulation tools within the assessment context, rather than to evaluate summative performance outcomes.

Simulation-based Assessment Systems

Two commercially available simulation systems were used in this study: a VPS and an OS.

Virtual Patient Simulator

At a station, a VPS (Arina Interactive Patient Simulator, Model A-100, Arina Mehr, Iran) was used to assess clinical reasoning, history-taking, and decision-making skills. The VPS is a commercially available, artificial intelligence (AI)-driven platform that delivers interactive and lifelike patient encounters through dynamic clinical scenarios. The system allows learners to engage in simulated patient interviews and clinical decision-making through predefined and adaptive case pathways. The simulator features realistic AI-powered patient responses, adaptive scenarios tailored to learner performance, and an extensive database of patient histories and multisystem pathologies, enabling consistent and standardized assessment across examinees. Integrated performance monitoring and analytics allow evaluators to review learner interactions and decision-making processes. The simulator was used exclusively for educational assessment purposes, without involvement of real patients.23,24

Ophthalmoscopy Simulator

The ophthalmic OSCE station employed a MechEye OS (Arina Mehr, Iran), a commercially developed virtual reality (VR)-based simulator designed for training and assessment of direct ophthalmoscopy. The MechEye simulator provides a computer-based immersive environment that replicates fundoscopic examination using high-fidelity digital retinal visualizations. The system includes a retinal pathology library encompassing normal and abnormal fundus conditions, allowing standardized exposure to predefined ophthalmic findings. Key features include adaptive difficulty levels, AI-assisted feedback, VR-supported examination environments, and performance tracking, enabling objective evaluation of ophthalmoscopy-related competencies during the OSCE. 23

Participants and Sampling

Forty-three students and 2 evaluators were included in the study through a census. Participant selection occurred before the test. Inclusion criteria for medical students were: (1) willingness to participate in the study and (2) prior experience participating in the OSCE with OSs and VPSs. Evaluators’ inclusion criteria were: (1) willingness to participate in the study, (2) teaching experience with medical students, and (3) evaluation experience at stations with OSs and VPSs. The evaluator sample was limited to a small number of faculty members with direct experience in VPS-based OSCE implementation at the study setting. Two evaluators were recruited purposively as information-rich cases capable of providing in-depth insights into assessment processes.

Data Gathering

Data were collected through 3 focus group discussions (first: 15 students; second: 14 students; third: 14 students), 2 semistructured interviews with open questions, and field notes. These methods were chosen because they allow for the exchange of thoughts, questions, and answers, as well as reflective thinking among the participants. The students did not know the test results when the interviews were conducted. For the focus group discussions, participants were invited to discuss and exchange their opinions and experiences about the subject in one of the halls of the Medical Faculty of the SBMU. In the focus group meetings, in addition to the main researcher, a psychiatrist who was proficient in group and individual interviews was present as a facilitator. In addition, semistructured, in-depth individual interviews with the evaluators were conducted by phone, with prior coordination, in a quiet setting. The time of the individual interviews was chosen at the request of the participants. After the researchers were introduced, the purpose and method of the study were explained. To gain trust and establish rapport with the participants, the interview started with general questions. The questions focused on the use of simulators in the OSCE, beginning with a general opening question: “Please talk about your experience of using simulators in the OSCE.” Then, based on the experiences expressed by the participants, more specific, in-depth, and probing questions were asked to clarify the details. An interview guide was used in this study (Box 1). Its qualitative content validity was confirmed by 5 researchers proficient in qualitative research. Focus group discussions and individual interviews were audio-recorded with the consent of the participants. All students and evaluators participated in the group and individual interviews, respectively, and data collection continued until saturation was reached in the students’ subgroup. 
All digital files, including audio recordings and transcripts, were stored on password-protected computers accessible only to the first author. Backup copies were securely maintained on encrypted storage devices.

Box 1. Interview Guide.

- Please talk about your experience utilizing simulators in the clinical competency exam.
- Which simulator station did you feel most comfortable in? How? What were the features that made you have this experience?
- Have you worked with simulators before this exam? Where? Please explain more. How was the experience different from this exam?
- What do you think are the advantages of using simulators in the process of assessing students in the clinical competency exam?
- What do you think are the disadvantages of using simulators in the process of assessing students in the clinical competency exam?
- What challenges did you face while administering the exam?
- What suggestions do you have to improve the quality of future exams?
- If you have anything else to add that is important to you, please let me know.

All interviews were conducted in Persian. After coding and analyzing the data, parts of the interviews (quotations) were translated into English and reported as evidence of the findings.

Rigor or Trustworthiness

To increase the credibility, dependability, confirmability, and transferability or fit of the data,25,26 the following measures were taken.

Credibility. The researchers spent sufficient time collecting and analyzing the data. They had long-term engagement with the phenomenon under study, were fully immersed in the data, and followed established techniques for conducting individual and group interviews. All researchers were faculty members of medical sciences universities with expertise and prior experience in qualitative research. The researchers had no role in the design or implementation of the OSCE. Several methods were used to collect and analyze data. The extracted codes, subcategories, and categories were approved by some participants. Two faculty members supervised the study process. Given the high-stakes institutional context of OSCE assessment, reflexivity was carefully considered. Recognizing the potential for power asymmetry between the researchers and participants, several steps were taken to minimize coercion and social desirability bias. Participation was voluntary, and students were assured that their decision to participate or decline would have no impact on their academic standing or OSCE results. Interviews were conducted after completion of the OSCE process and grading. Interviews were conducted by the first researcher, who was not directly involved in OSCE design, scoring, or students’ training.

Dependability. The study protocol and all documents were registered. An effort was made to accurately code the data, and the researchers agreed on the subcategories and categories extracted from the data.

Confirmability. Several meetings were held between the researchers, and a consensus was reached regarding the findings. Also, the quotes of the participants were used to confirm the subcategories and categories.

Transferability or fit. An attempt was made to use an appropriate sampling approach and provide an accurate description of the participants. Also, researchers searched for refuting evidence and analyzed negative cases, and tried to consider the opinions and experiences of all participants.

Data Analysis

Data were collected and analyzed simultaneously using Graneheim and Lundman's method. 27 First, the focus group and individual interviews were transcribed verbatim. Transcripts were reviewed alongside the audio files to ensure completeness and accuracy. Field notes taken during and immediately after interviews were used to contextualize the transcripts during analysis. All transcripts were anonymized before analysis: identifiable information, such as names, was removed and replaced with codes. The recorded interviews were listened to, and the transcribed texts were reviewed several times. Then, meaning units were extracted from the text of the interviews, and the data were coded. Explicit and implicit concepts in participants’ accounts were identified as sentences or paragraphs in their own words and labeled with codes, while the data were condensed and abstracted. Coding was inductive: the researchers avoided imposing existing concepts or theoretical perspectives and allowed the concepts, subcategories, and categories to emerge from the data rather than being predetermined. Coding and category development were discussed among the researchers to enhance analytic credibility, and discrepancies within the research team were resolved through consensus. An audit trail documenting analytic decisions, code development, and revisions was maintained throughout the study. This study was designed to explore participants’ experiences rather than formally evaluate feasibility or establish validity evidence. However, participants’ narratives of technical, organizational, and assessment-related issues were analyzed as experiential insights relevant to future implementation and evaluation.

Results

Participants’ Characteristics

Forty-three medical students (30 males and 13 females) and 2 female evaluators who were at stations with simulators participated. The students’ average score was 17.93 at the OS station, 16.58 at the VPS station, and 15.73 for the OSCE overall, all out of 20 points. Four students failed the exam.

Main Findings

“Change management,” “Facilitative role of the simulator,” “Role of the human agent,” “Integration/coordination as the missing link,” “Challenges in utilizing the VPS,” and “Progress toward enhancement” were the 6 main categories extracted from the data (Figure 3). Participants’ quotations supporting the findings are provided in Table 1 (Supplemental 2).

Figure 3. Categories and subcategories extracted from the data.

Change Management

Resistance to Change

Some students resisted using technology because of their previous habits. The data showed that some students were unable to communicate with the VPS, had no positive feeling toward it, and found it confusing and strange.

Stress of Utilizing Simulators

Some students reported experiencing high stress while using the VPS because simulators such as the VPS and OS were new to high-stakes OSCEs in Iran and because they had not had sufficient practice.

Positive Adaptation to Change

In contrast, all students had a positive opinion of the OS, and some of them welcomed the VPS. They described the use of these simulators as suitable for assessing students. The ease of utilizing the simulators and communicating with them, the alignment of the simulators’ goal with the OSCE experience, and the lack of challenges in utilizing the OS were mentioned by students and evaluators.

The Facilitative Role of Simulators

Simulators Overcome Real-World Limitations

Participants acknowledged that simulators can overcome many real-world limitations. They mentioned that some examinations cannot be performed on real patients as well as on simulators, that simulators make it possible to experience conditions rarely seen in the hospital, and that they can mimic symptoms an SP is unable to perform. They also mentioned that simulators allow heart and lung sounds to be auscultated more clearly than in a real patient, accurately assess the comprehensive examination method, allow the difficulty of the exam to be changed, allow pathologies to be examined, and greatly reduce the likelihood of students cheating on the OSCE.

Simulator as a Facilitator, not a Substitute for the Clinical Setting

The participants emphasized that although the use of simulators has many benefits in the training and evaluation process, they can never replace the real setting, and simulators’ role is more as a facilitator. Being forced to examine the eyes of other students during the educational program due to a lack of a simulator, the possibility of assessing the skills and examination methods of students using simulators, and the fact that it is easier to examine a real patient than a VP were extracted from the data. One of the evaluators emphasized the need for the intern to be present in the clinical setting and use the simulator to strengthen skills.

The Role of the Human Agent

The Evaluator as Facilitator/Interventionist

Most students were willing to seek guidance and assistance from the evaluator at the simulator stations, whereas some said they did not need the evaluator's guidance because they had mastered the simulator. Students expressed satisfaction with the facilitator's role and pointed out that, without an evaluator at the stations, students could make serious errors. However, a few students expressed dissatisfaction with guidance that the evaluator at one of the simulator stations gave to speed up their work. They claimed that this led to increased stress and confusion.

Blended Scoring

Students expressed satisfaction with the OS scoring system and pointed out the need for blended scoring and multimethod assessment to increase the exam's flexibility and rigor. Their concerns included the simulator's failure to recognize the examination process, the need for the evaluator to be involved in scoring, and dissatisfaction with evaluation by the simulator. They considered the evaluator's opinion preferable to the simulator's and preferred a combination of scoring by the human agent and the simulator. They regarded the evaluator's greater understanding, compared with the software, as a factor in fairer scoring, while acknowledging the possibility of human error if only a human evaluator is used. They also suggested a graded (spectrum-based) scoring system rather than a dichotomous one (0 and 1) and noted the usefulness of the simulator's scoring system for learning the software.

One evaluator pointed out that if the students received sufficient training in how to use the simulators and the exam was standardized, it would be sufficient to have a technician at the OSCE stations instead of an expert evaluator.

Feedback

An evaluator noted that although evaluators in the OSCE are required not to give feedback to students, the students, who were under high stress, expected feedback from the evaluator, and it was very difficult for the evaluator to withhold it, which indicates the supportive role of the evaluator. She sometimes had to provide feedback to the students because they were not familiar with the simulator. Some students also noted that feedback is essential during training and practice with simulators but illogical during testing.

Integration/Coordination as a Missing Link

Simulator as a Factor in Vertical Integration

The use of simulators, especially for training and assessing students, can be a strong factor in vertical integration and in filling the gap between theory and clinical practice. Participants noted that externs (trainees) experience a sense of alienation, fear, and stress when entering the hospital. Also, there may not be enough real patients for all students to learn from. Participants therefore suggested using simulators in students’ training courses (especially in the years before entering the clinical setting, such as semiology and physiopathology courses, and internships) to reduce students’ fear, stress, and sense of strangeness in the clinical setting and to increase their self-confidence. They also strongly emphasized the need to train students before simulators are used in the OSCE.

Simulator as a Factor in Horizontal Integration

The need for interprofessional coordination. Students, and especially evaluators, emphasized the need for interprofessional coordination between (a) the question designer and the evaluator, (b) the evaluator and the simulator designer, and (c) the simulator designer and medical professionals.

Despite the presence of the VPS designer as a supporter at the VPS station, students and evaluators faced challenges at this station. One evaluator noted that evaluators had no role in the design of the VPS. She mentioned that the main challenge of the OSCE was the lack of coordination between the question designer, the evaluator, and the simulator designer, which seemed to be the “missing link” and the main cause of confusion for students and the evaluator at the VPS station. She emphasized the need to seek advice from a specialist or subspecialist when designing the simulators.

Students emphasized the need to integrate medical and computer knowledge and skills to facilitate the use of simulators.

The need for scenario and exam question coordination. Students, and especially evaluators, emphasized the need for scenario and exam question coordination. One evaluator noted the lack of coordination between the clinical symptoms in the VPS and the final diagnosis, which led to student confusion. Students also noted this challenge and emphasized that it would be better for the scenario to focus on the process of examining students rather than on the final diagnosis of the disease. They emphasized that the VPS is suitable for taking a medical history and pointed out the extent of the examination process at the station. Suggestions for limiting tasks, introducing a targeted scenario, and the need for a clear assessment process at the VPS station were mentioned by the participants.

Challenges in Utilizing the VPS

Participants pointed out the challenges they faced while utilizing the simulators and suggested solutions to overcome them and improve the quality of utilizing the VPS, which are mentioned in this section.

Lack of Mastery Learning Before the Exam

Participants mentioned that they had been given a VPS prebriefing session and emphasized the need for exposure to the simulator before the OSCE. However, most students criticized the lack of mastery learning and the lack of sufficient practice before the OSCE. All students acknowledged that they had no challenges during the exam due to receiving sufficient training on the OS. In contrast, some of them indicated that they had encountered the VPS for the first time in the OSCE and had not received sufficient training on how to use it. The reason for the lack of sufficient practice for some students was that the software demo had not been implemented, and there was not enough time before the exam. In contrast, others admitted that they had received sufficient training and had no problems using the VPS.

Not Having Enough Time to Work With the Simulator

Most students believed that they did not have enough time at the VPS station and emphasized the need to allocate enough time according to the scenario.

Lack of a Holistic Approach

The need for a holistic approach was another issue mentioned by the students. Having a holistic approach leads to closer proximity to reality, coordination of the training and evaluation process, and better communication between the participants and the simulator. Oversimplification, limited examination and diagnosis process, inability to take a medical history, and inability to perform elective examinations of all organs in the VPS due to lack of time were among the issues criticized by the students and the evaluator. Some students attributed this problem to the characteristics of the OSCE, not the simulator.

Low Fidelity

The need for simulators to resemble reality, the preference for device-based simulators over VPSs, and the preference for VPSs over moulages were emphasized by most participants. In contrast, some participants were reluctant to use purely software-based simulators and preferred a combination of device-based and software-based simulators. Participants noted the high fidelity of the OS and its closeness to reality. Also, students were satisfied with the appearance of the VPS.

Students believed that the excessive clarity of the VP's cardiopulmonary sounds reduced the degree of fidelity, which made it difficult for students to think and make decisions. They noted that the high clarity of signs and symptoms in the VPS improved the learning process, but did not have a favorable effect on evaluation.

Technical Problems of VPS

The participants expressed satisfaction with the OS; however, they emphasized the need to fix the technical problems of the VPS. The failure to run the software demo for some students, the inability to connect to the server for practice, the similarity of some icons, the inability to move the mouse properly and the low accuracy of the stethoscope, the inability to examine the way murmurs are emitted, the inability to compare lung sounds, the slight delay in the playback of heart and lung sounds, and the inability to communicate with the VP were challenges raised by the students and the evaluator. Therefore, they emphasized that these technical issues should be resolved before readministering the simulator. Some students mentioned the possibility of communicating with an SP as opposed to a VPS and preferred that an SP be used in the OSCE.

Progress Toward Enhancement

Comparison With Previous Experiences

During the interviews, students compared their experience utilizing simulators in the OSCE with their previous experiences using VR and gamification. Some participants noted the similarity of the OS to VR and their desire to use VR in the OSCE. Some students pointed out that Cyber Patient Software is more advanced than the VPS. In contrast, others rated the VPS more favorably than foreign software.

A Step Forward

Despite the challenges of using simulators, participants described their use as a step forward. They believed that the conditions and facilities available to students had improved compared to previous years. Students pointed out that the VP software could learn and gradually improve as more data are collected and its intelligence increases over time, which would bring the simulator's evaluation closer to that of the human agent.

One evaluator and some students suggested using higher-quality images in the VPS. Also, the suggestion of using the VP for drug therapy, prescription writing, and procedures such as pericardiocentesis was raised.

Infrastructure and Prerequisites of Virtual OSCE

The participants suggested that if the infrastructure (such as high-speed internet) is provided, the OSCE could be held virtually. Some admitted that, given sufficient infrastructure, control of distractors, the presence of an evaluator, and feedback, they would prefer a virtual OSCE to an in-person one. On the other hand, some preferred the in-person exam to the virtual one due to better student–evaluator interaction and less likelihood of errors and student objections.

Discussion

This study explored the experiences of students and evaluators with utilizing a VPS and OS in the OSCE at an Iranian medical university. It highlights the complex interplay between technology, human agents, and systemic factors in simulation-based OSCEs.

“Change management” was one of the main categories. Some students showed resistance to the new situation of using simulators and reported high stress caused by insufficient familiarity with it. Stress experienced while performing skills in the OSCE,7,12 and feelings of negativity, anxiety, vulnerability, and insecurity during simulation-based training, have also been reported in other studies. 28 The stress associated with VPS stations may reflect heightened perceived stakes and reduced opportunities for real-time clarification, illustrating how modality influences consequential validity and student agency. In contrast, some students welcomed the change and felt very good about the experience of utilizing simulators. In Ataro et al's study, satisfaction with OSCE was also a theme that supported its implementation. 11 The change management category illustrates how innovation in assessment systems produces emotional and behavioral consequences among stakeholders. According to modern validity frameworks, such consequences are a legitimate source of evidence, as stakeholders’ perceptions and engagement can influence both performance and the perceived fairness of decisions. 29

The “facilitating role of the simulator” was another category. The participants mentioned the facilitating role of simulators in the teaching–learning and evaluation process. They noted that nothing replaces teaching–learning and assessment activities in the real setting. Simulated assessments enable the testing of preparedness for scenarios that candidates would rarely or never encounter in practice. 30 Arabpur et al stated that simulators function both as an educational method and as a tool for performance assessment, facilitating the acquisition and refinement of clinical competencies within a structured setting that enables learners to translate theoretical knowledge into practice without direct patient interaction. 14 The facilitative role of simulators emphasizes their contribution to authentic learning and assessment experiences by situating trainees in realistic clinical contexts where they can develop and refine technical and nontechnical skills in a safe, controlled environment that overcomes real-world limitations and enhances clinical competence compared with traditional methods.31-33

“The role of human agent” was another category. All students pointed out the necessity of the presence of the human agent and the very important role of the evaluator in OSCE, especially in stations with simulators. The existence of interaction between the student and the evaluator was emphasized by most of the students. They suggested blended scoring (evaluator and simulator) and the use of multimethod evaluation in stations with simulators. Nevertheless, the necessity of the dominant role of the evaluator in scoring the stations was agreed upon by all the participants. These findings suggest that hybrid OSCE models must balance standardization with opportunities for human interaction to preserve authenticity and support defensible high-stakes decisions. OSCEs are inherently complex and significantly reliant on the evaluative quality of examiners assessing the candidates’ performance. 34 In Saeed et al's study, all students emphasized the importance of the facilitator's involvement in conducting the sessions and his/her important role in creating scenarios and communicating with the mannequin. 20 According to the protocol of most educational institutions, it is forbidden to provide feedback to the student during the OSCE. In this study, the participants wanted to provide feedback on the simulator both in the training process and in the evaluation, which can lead to better learning, strengthening their clinical reasoning, and better decision-making. In Saeed et al's study, students were satisfied with the effect of feedback in improving their clinical skills. 20 In Jaafarpour et al's study, which explained the lived experiences of undergraduate midwifery students on OSCE, feedback to students about their learning activities was the most important concept extracted from the data. OSCE helps students to identify their weaknesses and thereby improve their skills in clinical training, and is a valuable tool for learning necessary professional skills. 35 The role of human agents underscores the critical influence of human judgment on assessment outcomes and the reliability of high-stakes decisions. Evidence on rater cognition indicates that impersonal judgments and personal preferences can affect the judgment processes of raters. 36 The blended scoring approach reflects the need to reconcile standardization with the inherently interpretive nature of clinical performance assessment. While automated OS and VPS metrics enhanced reliability and consistency, evaluator ratings preserved the capacity to evaluate nuanced competencies such as clinical reasoning and communication. This hybrid model aligns with contemporary assessment theory, which emphasizes the role of expert judgment in ensuring validity and defensibility in high-stakes OSCEs.

In this study, “integration/coordination as the missing link” was a main category. Participants recommended that simulators be used to train students before entering the real clinical setting. The use of simulators can be a factor in vertical integration and linking theory and practice. Vertical integration leads to the integration of basic and clinical sciences. 37 One study reported that the most valuable feature of simulation-based training from the perspective of students was learning a new skill under supervision, applying prior knowledge to a clinical scenario, and identifying gaps in knowledge and skills. 28 In this study, the lack of coordination between the evaluator, the question designer, and the simulator designer, the scenario and exam questions, and the requirement to integrate medical and computer knowledge were mentioned by the participants, which indicates the need for interprofessional coordination and horizontal integration. Ataro et al also mentioned that the poor organization of the OSCE hurt its implementation. 11 In contrast, according to the report by Fisseha and Desalegn, the majority of students and evaluators had a positive opinion about the features, structure, organization, and validity of the OSCE. 12 Horizontal integration can bring different disciplines, subjects, and titles closer together, eliminate redundancy, and create coherence and interconnectedness. 38 Yeates et al believed that authenticity in an OSCE cannot be taken for granted; rather, it seems to arise from the interplay of station design, personal preferences, and the contextual expectations surrounding assessment. They believe that a deeper understanding of candidates’ engagement with simulation and scenario immersion in summative assessment is necessary. 30 The challenges identified in integration and coordination reflect broader systemic consequences, underscoring the need for alignment across curricular levels to support meaningful and authentic learning and assessment experiences.

One of the most important findings of this study was “challenges in utilizing the VPS.” The students indicated that they had been given a VP prebriefing session, but one of the most important challenges for most of them was their lack of familiarity with the VP due to a lack of sufficient practice and a lack of mastery. In fact, although students were trained to use the VPS software beforehand, this training does not seem to have been sufficient for all of them. Some students needed more educational sessions to achieve mastery. However, the evaluators were well-trained and proficient. One study reported that most students felt that a briefing session was useful for simulation-based training, and all participants were in favor of regular debriefing sessions. 28 Saeed et al also noted the impact of simulation-based learning on student performance on the OSCE. In their study, students considered hands-on experience during simulation-based learning of clinical skills and the provision of opportunities for deliberate practice to be critical to their learning. 20 Fisseha and Desalegn reported that approximately 80% of students recommended practice sessions before administering the OSCE, and more than 20% of raters had no prior training on the OSCE. 12 Adequate training and practice before the exam are essential for students to be successful in the OSCE.

In this study, most students reported not having enough time at the VPS station. Lack of time to perform skills at some OSCE stations has also been reported in other studies.7,8,11,12 Inadequate “task time agreement” (ie, time pressure) leads candidates to rush, which modifies their engagement with (simulated) patients and/or hinders the assessment of numerous realistic scenarios. 30 Therefore, time management, whether in simulator or nonsimulator stations, should be a particular focus for OSCE designers.

In this study, the lack of a holistic approach in scenarios, including simulator stations, and the lack of alignment of the training during the course with the OSCE due to a lack of time were criticized by students. In OSCE stations, specific skills are assessed and may not accurately reflect the entire experience of real patients. Analyzing the whole patient is more than just examining individual parts. 9 Students in the study by Saeed et al emphasized that each station should focus on 1 skill. 20

Findings indicated that most students preferred the device-based simulator to the VP and the VPS to the moulage. The high level of technology of the OS, with its high fidelity, allowed it to closely mimic the functioning of the human eyes, enabling students to fully engage in the designed scenario and exercise sound clinical judgment. Adequate pre-OSCE training was key to students’ positive experience of this station. This finding is consistent with a recently published systematic review. 39 In contrast, some participants noted that the VPS was not very realistic. Some studies have also noted the lack of true-reflection scenarios that mirrored real patients. 7 Some researchers have pointed out that “decoupling” users from reality is one of the challenges of virtual simulation that should be considered. 40 While participants valued the standardization afforded by the VPS, their narratives revealed a perceived reduction in authenticity and relational engagement compared with OS stations. This tension highlights a core challenge in OSCE design: optimizing reliability without undermining authenticity, a construct closely linked to validity in performance-based assessment.

Technical problems with the VPS, including the lack of a pretest demo for some students, the similarity of the icons, software limitations such as the low accuracy of the stethoscope, and the inability to communicate with the software, were the most important challenges for students. Addressing these shortcomings could lead to a better experience for students utilizing the VPS. The challenges in utilizing the VPS illustrate that technical and logistical constraints can adversely affect both engagement and fairness, reinforcing that validity encompasses the impact of assessment on stakeholders. 29

In this study, “progress toward enhancement” was another category. The students compared utilizing simulators with their previous experiences. It seems that utilizing simulators led to the activation of students’ cognitive drive. Ausubel calls the most important motivational factor affecting meaningful learning “cognitive drive.” Cognitive drive is an intrinsic motivation that stems from a pervasive curiosity and interest in discovering, manipulating, understanding, and interacting with one's environment. 41

Despite the challenges, participants described the use of simulators as a step forward. Students also suggested that, subject to the provision of infrastructure, a virtual OSCE could be possible in the not-too-distant future. In this study, the SBMU was the first in Iran to use simulators in the OSCE, which could indicate the lack of adequate infrastructure in most Iranian medical universities and possible inequities in digital medical education. The lack of infrastructure and some students’ lack of access to simulation training resources have also been criticized in other studies. 40 Virtual OSCE was one of the alternatives to traditional assessment and certification for students and trainees during the COVID pandemic to meet the high demand for healthcare workers. 42 In the process of improvement, medical education policymakers should strive to incorporate the use of simulators and virtual OSCE elements into student evaluation systems, given the increasing role of telehealth in recent years. However, the experiences of other educational institutions in implementing virtual OSCE, such as reduced social interaction with the evaluator and students, students’ inability to perform physical examination maneuvers, and the desire of most students to interact face-to-face with patients,1,15 need to be considered. The category “progress toward enhancement” demonstrates that iterative improvements, infrastructure development, and comparisons with past experiences shape perceptions and foster adaptation, emphasizing that the consequences of assessment are dynamic and ongoing.

Taken together, the findings suggest that assessment modalities operate within a sociotechnical system in which technological mediation reshapes student agency, examiner judgment, and the consequential meaning of scores. Interpreting these dynamics illuminates how simulation design choices influence the defensibility of high-stakes assessment decisions.

Limitations

One of the limitations of this study was the possibility that participants did not share their entire experiences during the interviews. The researchers attempted to overcome this limitation by gaining their trust. In addition, the evaluator sample was small, reflecting the limited number of faculty involved in VPS-based OSCE implementation at the study setting. While their perspectives provided valuable expert insights, data saturation cannot be assumed, and findings related to evaluator experiences should be interpreted cautiously. Future studies that include a larger and more diverse group of evaluators are recommended to enhance transferability. The limited generalizability of the findings, a characteristic of qualitative research, is another limitation. Another limitation was the possibility of a “halo effect” during focus group interviews. The researchers attempted to overcome this by observing interview principles and having the findings checked by participants and peer reviewers. Another limitation was that the VPS and OS evaluators did not design scenarios and checklists for their stations, which caused some of the challenges identified after analyzing the data. Also, although training on how to use simulators was provided to students before the OSCE, the lack of proficiency of some of them in utilizing simulators may have affected the results. Therefore, providing sufficient training to students before using simulators in OSCEs is emphasized again. On the other hand, stakeholders’ experiences suggest that simulator-based OSCEs may be feasible under appropriate conditions and may support aspects of assessment validity; however, these interpretations arise from experiential data and require further empirical validation.

Conclusion

Overall, these findings illustrate that assessment innovation extends beyond technical implementation to a sociotechnical process in which perceived authenticity, consequences of change, and human judgment shape stakeholder experiences and have implications for assessment practice. Additionally, these findings indicate that simulators’ integration in OSCEs is a promising yet context-sensitive innovation shaped by technological reliability, infrastructure, and human judgment. This study also demonstrated the inescapable link between training and evaluation. Through targeted training and practice and feedback through simulators, students will gain more confidence without compromising patient safety, which can lead to improved performance on the high-stakes OSCE examination at the end of their internship. Although the use of simulators in OSCE is a suitable method for clinical assessment, there are drawbacks in their implementation and training that require special attention. The contribution of OSCEs to assessment programs would be significantly enhanced if their authenticity were to be improved. Greater use of student-centered methods in teaching and assessment can help them improve their clinical decision-making skills while enhancing their competencies. Therefore, it is recommended that simulation-based training and assessment be more widely incorporated into the medical education curriculum. Given the single-site design and technical limitations observed, the transferability of results should be interpreted cautiously. Further multi-institutional studies are required to explore the experiences of students, evaluators, and simulator designers regarding their use in the OSCE. By analyzing their experiences, challenges, opportunities, and areas for improvement, a basis is provided for increasing the efficacy, efficiency, and effectiveness of these student-centered methods.

Supplemental Material

sj-docx-1-mde-10.1177_23821205261435371 - Supplemental material for Experiences of Medical Students and Evaluators on Utilizing Simulators in Objective Structured Clinical Exam: A Qualitative Study

Supplemental material, sj-docx-1-mde-10.1177_23821205261435371 for Experiences of Medical Students and Evaluators on Utilizing Simulators in Objective Structured Clinical Exam: A Qualitative Study by Zahra Farsi, Babak Sabet, Soleiman Ahmady, Ali Kheradmand and Mahdi Aghabagheri in Journal of Medical Education and Curricular Development

Supplemental Material

sj-docx-2-mde-10.1177_23821205261435371 - Supplemental material for Experiences of Medical Students and Evaluators on Utilizing Simulators in Objective Structured Clinical Exam: A Qualitative Study

Supplemental material, sj-docx-2-mde-10.1177_23821205261435371 for Experiences of Medical Students and Evaluators on Utilizing Simulators in Objective Structured Clinical Exam: A Qualitative Study by Zahra Farsi, Babak Sabet, Soleiman Ahmady, Ali Kheradmand and Mahdi Aghabagheri in Journal of Medical Education and Curricular Development

Footnotes

Acknowledgments

This article is part of a research project approved by the Shahid Beheshti University of Medical Sciences. This project was designed and implemented in response to the request of the Undergraduate Medical Education Council of the Ministry of Health and Medical Education, Iran. The authors are grateful to the officials of this center and the Shahid Beheshti University of Medical Sciences. Also, the authors thank the participants and OSCE organizers for their sincere cooperation.

Ethical Considerations

This study was approved by the Research Ethics Committee of SBMU (IR.SBMU.SME.REC.1403.029; Date: 20/05/2024). Also, the principle of confidentiality regarding the mention of quotations and data, the principles of the Committee on Publication Ethics, and the Helsinki Declaration were observed.

Consent to Participate

The participants were informed about the objectives and method of the study and were assured of the confidentiality of their identities when invited to take part. They participated in the study voluntarily and provided written informed consent. Also, participants had the right to withdraw at any stage of the study. Data collection did not harm the social, educational, and professional status of the participants.

Author Contributions

ZF: conceptualization, planning, data collection, transcription, data analysis and interpretation, writing the initial draft of the manuscript, and revising the manuscript during the review process. BS, SA, and MA: conceptualization, planning, data interpretation, and critical revision of the manuscript. AKh: conceptualization, planning, data collection, interpretation, and critical revision of the manuscript. All authors collaborated in the study, and they read and approved the final manuscript.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Information will be available upon reasonable request from the corresponding author in Persian.

AI Statement

Artificial intelligence was used to improve the manuscript's language; the authors reviewed and verified all outputs for accuracy and integrity.

Supplemental Material

Supplemental material for this article is available online.

References
