Abstract

Higher education is a fundamental social institution that supports the latest theoretical developments and builds advanced skills. All institutions of higher education require that undergraduate and postgraduate students acquire performance skills such as communication. Despite the fact that nearly every higher education program and discipline includes such skills among their learning outcomes, there are few instruments available with which to assess such complex competences. This article presents the findings of a study in which an instrument to measure communication skills was operationalized according to Habermas’ theory of communication, which distinguishes between strategic and understanding-oriented communication. The instrument introduced and used was based on role-plays that included standardised instructions and standardised observation forms. In addition to the empirical investigation into the theoretical dimensions of strategic and understanding-oriented communication, this study also examined two correlations: the correlation between performance-based testing and self-assessments and the correlation between performance-based testing and non-verbal communication. A confirmatory factor analysis supported the two dimensions. Furthermore, the study found a medium correlation with non-verbal skills, but a low correlation with self-assessments. Following a presentation of the study, the article concludes with a discussion of the benefits as well as limitations of such an instrument.

Educational objectives and quality assurance at universities

Higher education is a fundamental social institution that supports the latest theoretical developments and builds advanced skills (Hüther & Krücken, 2018; Schofer et al., 2021). Its importance has only increased in light of its responsibility for teaching skills that have become essential to dealing with the many challenges facing the world today. Further evidence of its growing importance is also provided by the rise in the number of enrolled students and institutions of higher education that have emerged in the past two decades (Gu et al., 2018; Organisation for Economic Co-operation and Development, 2018, 2019). Such growth is the direct result of massive state effort and investment worldwide. But are such investments justified? Effective? To be able to answer such questions, it is necessary to develop tools for measuring the quality and legitimacy of such expenditures (Coates, 2016).

As part of a larger move at universities to strengthen quality-assurance measures, a discussion has recently emerged in relation to university policy and the scientific development of a novel test format, competence-oriented examinations. This approach to testing aims to provide empirical evidence that students are achieving clearly defined learning objectives. Referred to as “performance-based” or “competence-oriented tests” within the field of competence research, such tests seek to represent holistically the individual’s capabilities to act (Blömeke et al., 2015; Shavelson et al., 2018). Thus, even the designation of a “competence-oriented examination” of communication skills, for example, suggests that standard forms of testing, like written examinations, are not appropriate if the purpose is not so much to demonstrate a student’s knowledge of the relevant subject but to measure their capacity to act. Competence-oriented exams emphasize the specificity of the situation and the student’s capacity to adapt. They measure skills by introducing situations that involve complex interactions that are as authentic as possible (Braun & Mishra, 2016).

In the field of higher education, research is currently underway that aims to develop such performance-based tools for future use in higher education (Hyytinen & Toom, 2019; Shavelson et al., 2018). The discussion and findings presented in this article are situated within this context. The article begins by discussing communication skills as a particular competence to act. It then presents the research findings of a test designed to evaluate the effectiveness of competence-oriented learning.

Communication skills as a competence to act

In a literature review, Blömeke et al. (2015) proposed a seemingly paradoxical notion of the term “competence” in its scientific use. On the one hand, in keeping with the analytic tradition, domain-specific competences are understood as cognitive dispositions. Based on this concept, competences are documented using standardized written (achievement) tests that are usually developed specifically for a certain discipline (e.g. Zlatkin-Troitschanskaia et al., 2019). By extension, this notion of competence focuses on cognitive achievement, in particular on declarative and procedural knowledge. On the other hand, Blömeke et al. (2015) argue that competence is more than achievement; it is also competence to act. According to this holistic understanding of the competence to act, observing behavior in a complex (situational) context that is as authentic as possible is essential. Competence to act is about the ability to adapt effectively to one’s social environment or to behave appropriately according to the situation. The development of context and culturally sensitive competence to act, Masten and Coatsworth (1998, p. 206) argue, is something an individual can learn. According to this notion of competence, individuals are considered competent if they are able to reach their goals with their situation-specific behavior and at the same time act within the boundaries of socially accepted behavior. The ability to communicate is one of the most important components of successful social interaction.

Types of communication

Habermas (2015) postulated a theory of communication. As part of his theory, he differentiates between two types of communicative action that can be characterized in terms of the goals and intentions of the participants in a conversation. The goal of strategic communication, he argues, is to attain certain interests, a purpose that the means of communication serves. Communication-oriented-toward-understanding, by contrast, presupposes that all participants will pursue interaction as the most transparent course of action. Such a course ensures that participants reach a common understanding and, if possible, agree upon a common solution that is based on the best arguments (Habermas, 2015).

For example, the goal of an employee’s conversation with a supervisor can be to obtain permission to attend a professional development event, even though this event may serve as the springboard for the employee’s applying for other jobs. In a case like this, the strategic communication technique entails emphasizing the employee’s and the supervisor’s common interests. They thus avoid any mention of the fact that the employee may achieve personal advantages through their professional development. After all, a supervisor is not likely to support any sort of professional development that increases the likelihood that the employee would leave their current position. For this reason, what the employee says in this case does not serve common understanding. In order to increase the likelihood that the supervisor will approve of the employee’s participation in such an event, the employee thus puts forward arguments from the supervisor’s perspective. An example of a situation of communication-oriented-toward-understanding can be illustrated by the joint preparation of guidelines in which one of the colleagues introduces content that others deem unacceptable. In this case, the colleagues who disapprove of such content raise objections to these ideas in a polite, but firm manner. Since, in this case, the goal is understanding, the opinions of all the partners in the conversation are given equal weight and can only be accepted or rejected on the basis of reason. If there are factual reasons for rejecting certain parts of a manual, the partners participating in the conversation should agree not to retain them.

Habermas (2015) adopts a normative stance with respect to “successful communication” and equates it with communication-oriented-toward-understanding. Most scholars, however, consider both types of communication promising, depending on specific situational requirements, and thus, under certain circumstances, appropriate for developing a testing tool.

As stated above, Habermas formulated a theory of communication, which is more complex than what is introduced here. For the purposes of our study, we focus solely on the two types of communication he proposed.

Non-verbal communication

In addition to what is said, non-verbal signals are also crucial to successful communication. They include all of the physical signals that occur when a person talks, apart from actual words. What matters here is how something is said, independent of the content. More specifically, this includes haptic signals (e.g. touching), body language (e.g. posture, mimicry), proxemics (e.g. the chosen spatial proximity to one another), or physical characteristics (e.g. clothing and cosmetics). Body language in particular is relevant to communication (Röhner & Schütz, 2015).

Measuring communication skills

On the subject of aspects of non-verbal communication, there exist a number of empirical studies that are based on observational data (Conrad & Newberry, 2011; Klein et al., 2006; Spitzberg & Adams, 2007). Scholars have also applied Habermas’s theories of communication to their research on leadership and organizations (Hallahan et al., 2007; Retallick, 1991; Werder et al., 2018). Such studies have provided further evidence that communication plays an essential role in the professional world today. In contrast to pre-existing studies based only on non-verbal communication or those focused on leadership and organizations, our study considers both verbal and non-verbal forms. Moreover, we apply Habermas’s notion of two types of communication specifically to the field of higher education, considering whether his ideas can be fruitfully applied to this context.

Research questions

On the basis of the discussions outlined above about the theories and higher education policies developed in relation to performance-based assessments, there emerge the following key research questions, which we tested empirically:

Is it possible to apply and to empirically prove the efficacy of the two conceptual dimensions, i.e., strategic communication and communication-oriented-toward-understanding, to the context of higher education?

Is there an empirically verifiable correlation between these dimensions of communication and other assessments of communication, such as self-assessments of communication skills and observational data on non-verbal communication?

Methodology

Role-playing games

Role-playing games are considered a suitable method for conducting conversations that are as authentic as possible and thus for simulating concrete communication situations (Braun & Mishra, 2016; Gulikers et al., 2008; Stevens, 2015). Based on discussions about the use of role-playing games for simulating communication situations, we developed 10 role-playing games. Five are oriented toward strategic communication; five are oriented toward understanding-oriented communication. Table 1 contains a short description of each role-play.

Ten role plays and descriptive statistics.

In addition to the written instructions for the study participants and the trained interlocutors, the games also included standardized observation sheets in which trained raters recorded and evaluated the communication behavior displayed.

Instructions: The instructions were at most one page long and indicated the goal to be attained as well as certain information about the context. The goal of the conversation was either strategic or oriented-toward-understanding; no specifications were made concerning specific behavior toward achieving this goal. This made it possible to observe how students used the information provided in the instructions during the conversation. If all of the information was provided openly, in order to allow the students to weigh different arguments, then communication was deemed more oriented-toward-understanding. By contrast, if certain information was provided in a nontransparent way or omitted entirely, then it tended to be a strategic conversation.

Observation sheets: For all 10 role-playing games, observation sheets were developed that included between 9 and 16 items and a four-point rating scale. All of the items were worded in such a way that strong agreement indicated strong communication competence in accordance with the theoretical concept. The observation sheets were completed directly following the end of each conversation in order to enable evaluation of the entire course of the conversation. Besides these observation sheets, each of which was allocated to a specific role-playing game, after conducting all of the role-playing games, four highly inferential items were used, each of which was evaluated on a four-point response scale for strategic conversational situations as well as ones oriented-toward-understanding. These global items are as follows:

The student displays good communication skills in the conversational situations overall.

The student is a competent speaker in the conversational situations.

The student communicates appropriately in the conversational situations.

The student communicates in a goal-oriented fashion in the conversational situations.

There were in total six scales for each communication style, five role-plays plus one global scale (see Table 2).

Two-dimensional confirmatory factor analysis.

Results of confirmatory factor analyses; full information maximum-likelihood-estimation. CFI: comparative fit index; RMSEA: root mean square error of approximation; ρ: rho, estimation for reliability.

Conducting the role-playing games, and thus the test, required three individuals: the student as the study participant, an interlocutor as the conversation partner, and a rater. During the conversation between the student and the interlocutor, the rater remained in the background and, accordingly, did not participate in the conversation. The rater focused exclusively on evaluating the study participant’s communication skills as shown during the conversation using the standardized observation sheet.

Data-gathering locations and the sample

The role-playing games developed were conducted at 11 universities across Germany (six universities, four universities of applied sciences, and one private university; one each in Baden-Wuerttemberg, Bavaria, Berlin, Brandenburg, Hesse, Mecklenburg-Western Pomerania, Saxony, and two each in North Rhine-Westphalia and Saxony-Anhalt).

At these universities, we made an open call to participate in the role plays using flyers, social media, and announcements in lecture courses. Participation was voluntary. A total of 549 students in various phases of their studies participated in the role-playing games. Study participants were, on average, 24.7 years old; the youngest were 19 years old and the oldest 39 years old. Gender was nearly balanced, with 50.21 percent female and 49.79 percent male students. Almost half of the students were undergraduates (48.95%). About one-third were postgraduates (30.86%). Some students were enrolled in programs for state examinations (8.64%), and roughly another tenth (11.11%) were enrolled in programs that existed prior to the Bologna reforms (diploma, magister degree).

Research design for the use of the role-playing games

Each student participant received a folder with four randomly chosen role-playing games (out of the ten developed); two each were strategic and oriented-toward-understanding. By design, each student ran four out of the ten role-plays. This produced observable data of 40 percent for each of the 10 role-playing games, and thus 60 percent randomly missing values, a consequence that we had anticipated. In all cases, the order in which the four role-playing games were to be played was determined at random. This made it possible to apply Full Information Maximum Likelihood (FIML) to estimate missing values (Lüdtke et al., 2007). All data could therefore be processed and used. If every student played each of the ten role plays, then the assessment of one student would have taken almost two hours, which exceeded our time resources.
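The planned-missingness design described above can be illustrated with a minimal sketch. It assumes only what the text states (ten role plays, five per communication type, four drawn per student with two of each type, random order). The labels `S1`–`S5` and `U1`–`U5`, the function names, and the fixed seed are hypothetical and are not taken from the study materials.

```python
import random

# Hypothetical labels: five strategic and five understanding-oriented role plays.
STRATEGIC = [f"S{i}" for i in range(1, 6)]
UNDERSTANDING = [f"U{i}" for i in range(1, 6)]


def assign_role_plays(rng):
    """Draw two role plays of each type and return them in random order."""
    chosen = rng.sample(STRATEGIC, 2) + rng.sample(UNDERSTANDING, 2)
    rng.shuffle(chosen)
    return chosen


def observed_share(folders):
    """Share of students who played each role play (about 0.4 by design)."""
    counts = {rp: 0 for rp in STRATEGIC + UNDERSTANDING}
    for folder in folders:
        for rp in folder:
            counts[rp] += 1
    return {rp: count / len(folders) for rp, count in counts.items()}


rng = random.Random(42)  # fixed seed only to make the sketch reproducible
folders = [assign_role_plays(rng) for _ in range(549)]
shares = observed_share(folders)
```

Because every student receives exactly four of the ten role plays, the shares always sum to 4, and each role play is expected to be observed for roughly 40 percent of the sample; the remaining 60 percent are missing by design rather than through dropout.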

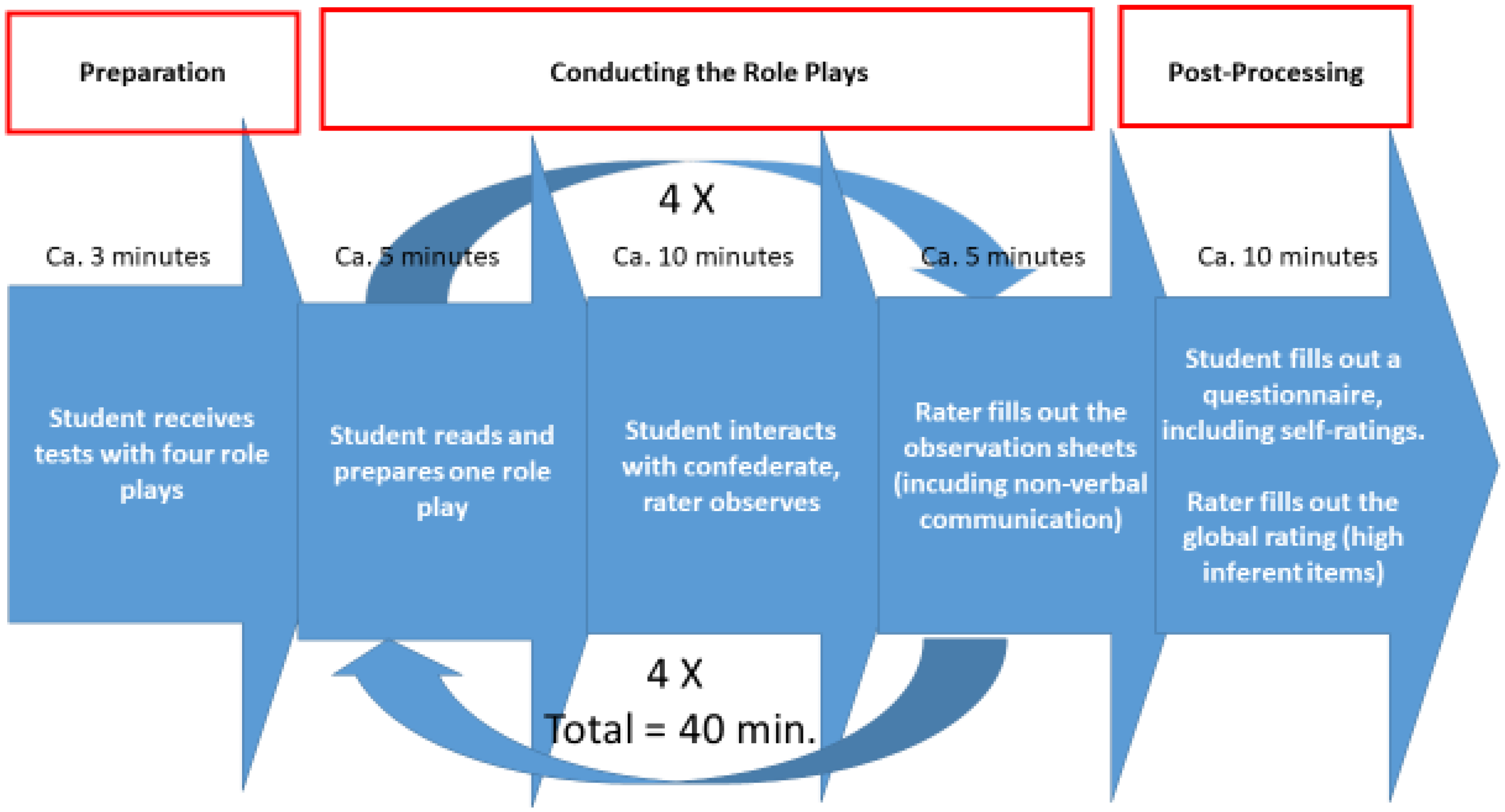

All of the interlocutors and observers were also assigned at random. The students were given the instructions just before the role-playing game and had up to 5 min to prepare for the conversation. Then, the interactions lasted up to 10 min. This was repeated four times. Each student was thus observed for up to 40 min (Figure 1).

Role-play procedure.

Additional instruments

In addition to the role-playing games, for each individual student, the raters completed the observational scale “Conversational Skills Rating Scale (CSRS)” (Spitzberg & Adams, 2007) to document non-verbal communication after each role-play.

In addition, students rated themselves by using a subscale for communication skills from the “Evaluation in Higher Education: Self-Assessed Competences” (‘HEsaCom’; Braun & Leidner, 2009). These items for self-evaluation were included in the questionnaire that the students completed after finishing the role-playing games.

In total, there were three different measurements of communication: first, the observation of performance in role-playing games of two communication styles (strategic communication and communication-oriented-toward-understanding); second, the observation of the use of non-verbal communication; and third, the self-ratings of communication.

Analysis of the data

A confirmatory factor analysis, with two latent constructs, was constructed in order to answer the first research question, namely empirically testing the two types of communication. Since, as mentioned above, the complex test design resulted in a large number of randomly missing values, the model was developed in two steps. First, a manifest indicator for strategic communication and one for understanding-oriented-communication were constructed for each role-playing game. In order to model the latent dimensions of strategic and understanding-oriented-communication skills simultaneously, confirmatory factor analyses (CFA) were calculated in a next step with the latent dimensions of the 10 role-playing games. This meant: strategic communication and understanding-oriented-communication consisted of five indicators each from the relevant role-playing games, and additionally of a highly inferential overall evaluation (see above). Conforming to theory, a two-dimensional model with correlated factors was specified. Because of the randomly missing values produced by the research design, the models were estimated using Full Information Maximum Likelihood (FIML) estimation (Lüdtke et al., 2007). The reliability of the correlated factor model was calculated as ρCF as proposed by Cho (2016) following McDonald (1985, 1999), in which the subscript CF stands for “correlated factors.” A subscript for each subscale was placed as a prefix: SρCF stands for strategic communication competences, VρCF for understanding-oriented ones.
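To give a rough sense of how a factor-based reliability coefficient is obtained from loadings, the following sketch computes the congeneric (omega-type) reliability of a single factor from standardized loadings. This is an assumption-laden simplification: it is not the exact ρCF for correlated factors reported in this article, the function name is hypothetical, and the loadings are invented illustration values, not the study's estimates.

```python
def congeneric_reliability(standardized_loadings):
    """Omega-type reliability for ONE factor with standardized loadings.

    Assumes uncorrelated residuals, so each residual variance is 1 - loading**2.
    This is a single-factor simplification, not the correlated-factors coefficient.
    """
    common = sum(standardized_loadings) ** 2
    unique = sum(1.0 - loading ** 2 for loading in standardized_loadings)
    return common / (common + unique)


# Hypothetical loadings for six indicators (five role plays plus one global scale).
hypothetical_loadings = [0.5, 0.6, 0.7, 0.6, 0.5, 0.8]
rho = congeneric_reliability(hypothetical_loadings)
```

The coefficient rises when loadings are higher or when more indicators are included, which is one reason why a global rating scale was added to the five role-play indicators of each factor may matter for the reported reliabilities.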

In order to answer the second research question, the correlations between the strategic/understanding-oriented-communication skills, on the one hand, and (a) the self-reports of communication skills (HEsaCom), and (b) the observational data of non-verbal communication (CSRS), on the other, were analyzed.

To this end, the expected a posteriori estimators (EAPs) (Embretson & Reise, 2013) were calculated first for strategic and understanding-oriented-communication since they were estimated as latent constructs. Total scores were calculated for the self-reports of communication skills (HEsaCom, see above) and for the non-verbal aspects of communication (CSRS) (Spitzberg & Adams, 2007). The correlations of the values determined were estimated using Pearson correlations between the manifest variables.
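The final step, a Pearson correlation between two manifest score variables, can be sketched as follows. The function name and the idea of passing plain lists of scores are assumptions made for illustration; the original analyses were run in Stata, and no data from the study are reproduced here.

```python
import math


def pearson_r(x, y):
    """Pearson product-moment correlation between two equally long score lists."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sums of cross-products and squared deviations around the means.
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant variable")
    return sxy / math.sqrt(sxx * syy)
```

In the setting described above, `x` would hold one EAP estimate per student (strategic or understanding-oriented communication) and `y` the corresponding total score on the self-report or non-verbal scale, restricted to students with both values.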

All the analyses were conducted using the statistical software package Stata 15 (StataCorp, 2017).

Findings

Findings 1: Two-dimensional structure of communication skills

A confirmatory factor analysis (CFA) was used to estimate a two-dimensional model (strategic communication, communication-oriented-toward-understanding) with correlated factors. Six indicators each (five role-playing games and the total value of the highly inferential items) were used for evaluating the strategic and the understanding-oriented-communication construct, respectively. Overall, the empirical data confirmed the theoretical model well (χ² = 103.5; df = 53; p < 0.001; CFI = 0.95; RMSEA = 0.04). The reliability of the model was also good, at ρCF = 0.89. The items load on strategic communication with values of 0.43 to 0.89 and on understanding-oriented-communication with values of 0.55 to 0.93. At SρCF = 0.80 for strategic and VρCF = 0.84 for understanding-oriented-communication, reliability is good. Table 2 shows the results for the two-dimensional model.
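As a plausibility check on the reported fit statistics, the sketch below recomputes the degrees of freedom and the RMSEA from the values given in the text. Several points are assumptions rather than details from the article: the parameter count (12 loadings, 12 residual variances, 12 intercepts, and one factor correlation, with factor variances fixed to 1), the use of the full sample size N = 549, and the common textbook formula with N − 1 in the denominator (some software uses N instead).

```python
import math


def cfa_degrees_of_freedom(n_indicators, n_free_parameters, with_means=True):
    """Observed moments minus free parameters.

    With FIML the indicator means are modelled as well, so they count as moments.
    """
    moments = n_indicators * (n_indicators + 1) // 2
    if with_means:
        moments += n_indicators
    return moments - n_free_parameters


def rmsea(chi2, df, n):
    """Point estimate of the root mean square error of approximation."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


# Assumed parameterization: 12 loadings + 12 residual variances
# + 12 intercepts + 1 factor correlation = 37 free parameters.
df = cfa_degrees_of_freedom(n_indicators=12, n_free_parameters=37)
fit = rmsea(chi2=103.5, df=53, n=549)
```

Under these assumptions the degrees of freedom come out at 53, and the RMSEA rounds to 0.04, both in line with the values reported above.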

Findings 2: Correlations with self-evaluations as well as non-verbal communication

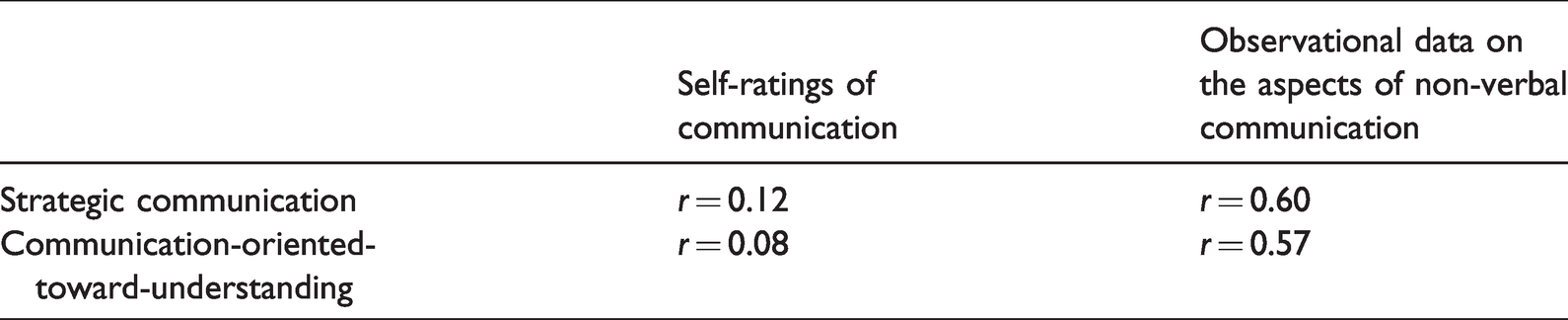

In subsequent analyses, the correlation between self-evaluations and non-verbal communication, on the one hand, and the two types of communication, on the other, was examined.

Neither strategic nor understanding-oriented-communication correlated significantly with the students’ self-evaluations. The correlation between the self-evaluation and strategic communication skills was r = 0.12; the correlation between self-evaluation and understanding-oriented-communication skills was r = 0.08. Thus, there was only minor systematic covariance between the two dimensions of the performance-based test and the students’ self-evaluations.

When using the observational data on the aspects of non-verbal communication, the correlations were considerably stronger for both strategic and understanding-oriented-communication. Strategic communication correlated with the associated non-verbal communication at r = 0.60. For understanding-oriented-communication, the correlation was r = 0.57. The two newly developed scales thus correlated significantly with an established observation sheet on non-verbal communication. All correlations can be seen in Table 3.

Correlation between two types of communication and self-ratings of communication and non-verbal communication.

Discussion

Strategic communication and communication-oriented-toward-understanding are essential interaction skills (Hallahan et al., 2007; Retallick, 1991; Werder et al., 2018). Building on this premise, this article presents empirical findings on the development of a performance-based test in higher education to determine students’ communication skills. First, the empirical findings confirmed the operationalization of strategic and understanding-oriented-communication. Second, the observational data did not correlate significantly with students’ self-evaluations, but they did with observed aspects of non-verbal communication.

These findings raise some research questions, and directly associated with them, practical questions.

Habermas’s theory of communication goes beyond merely positing that there are two basic types of communication. He also, for instance, takes the particularities of the context into account, i.e. the “ideal speech situation,” a factor which we did not consider. There is also a psychometric issue related to the high rate of missing values. If every student carried out all ten role plays, the assessment of one student would have taken almost two hours, which exceeded our time resources. We therefore randomised as many aspects as possible (assignment of raters, of interlocutors, of role plays) to compensate for the fact that we could not process each student for each role play. We cannot, however, be sure that this approach was completely successful and further research is needed to control for the situation.

Self-evaluations are widespread in the field of ascertaining skills in higher education, specifically in the context of quality assurance procedures for teaching and degree programs (Braun & Mishra, 2016). Since results of these procedures also lead to practical recommendations for future application, it should be clarified whether the insignificant correlation found here is a robust empirical finding.

To strengthen the validity of the test, it should be explicitly noted that both the interlocutor and the rater have to be familiar with the testing tool and the theoretical background on which it is based. This requires comprehensive training in advance. Further research could also include evaluation of raters and interlocutors, to consider the two types of communication from their perspective.

Finally, this contribution has presented insights that are theoretically founded and empirically confirmed in order to be able to document and evaluate communication skills that are explicitly formulated as learning objectives for teaching in higher education. Such procedures, and their further development, are necessary to enable longitudinal, quantitative proof of learning achievements in the area of transdisciplinary competences. In light of the sobering conclusion that there does not appear to be any noteworthy correlation with students’ self-evaluations, one has to ask if the considerable resources required for performance-based testing (compared with self-evaluations) are, in fact, justified.

Another argument against the performance-based test is the question of how such competences can be supported in higher education. There is an underlying connection between the assessment of competences and their promotion. The notion of “constructive alignment” (Biggs & Tang, 2015) provides a theoretical framework for understanding this connection. Constructive alignment assumes that students primarily learn what will be graded on the exams. In contrast to some lecturers, who see this conjecture as a negative learning process, constructive alignment uses the exams as a tool for managing learning objectives. If lecturers clearly defined the intended objectives that students should achieve, then they would be in a better position to align those intended objectives with their teaching and assessment practices. Moreover, if the exams were to cover activities that represent the learning objectives, the student’s learning would be geared toward the intended objectives. Our study confirms that researchers can capture student competences of mastering complex, authentic, and challenging situations with the use of performance assessments (Hyytinen & Toom, 2019; Shavelson et al., 2018).

Performance-based tests are, therefore, capable of measuring not only essential learning objectives, but also of supporting the teaching and learning of them within higher education. Were institutions of higher education to use performance-based tests on a regular basis, student learning could be more aligned with assessments.

Footnotes

Acknowledgments

I would like to thank the members of the KomPrue team who have supported this labor-intensive project: Georgios Athannassiou, Daniel Klein, Kathleen Pollerhof, and Ulrike Schwabe, as well as Michelle Standley for her constructive feedback on the article.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the German Federal Ministry of Education and Research [BMBF] [grant number FKZ: 01PK14001].