Abstract

Background

Undergraduate research (UR) plays a pivotal role in medical education, fostering scientific thinking and professional development. At present, China lacks standardized tools to effectively appraise UR experiences among medical undergraduates. The Undergraduate Research Student Self-Assessment (URSSA), widely used in Western countries, has not been validated for Chinese populations and culture. This study aimed to translate and adapt the URSSA into Chinese and assess its psychometric properties for use with medical undergraduates.

Methods

The URSSA was translated following Brislin's model, including forward-backward translation, expert panel review, and pilot testing. The final Chinese version was administered to 165 medical undergraduates from Peking University Health Science Center with UR experience. Reliability was assessed using Cronbach's α and Guttman split-half coefficients. Confirmatory factor analysis (CFA) tested the four-factor structure (Skills, Thinking and Working Like a Scientist, Personal Gains, and Attitudes and Behaviors). Discriminant validity was examined by comparing scores across distinct subgroups.

Results

A total of 165 medical undergraduates were included, 66.7% of whom were majoring in clinical medicine. After rigorous translation and back-translation, we obtained a URSSA questionnaire suitable for the Chinese context. The Chinese URSSA demonstrated excellent reliability (Cronbach's α = .972; Guttman split-half coefficient = 0.956). CFA retained the original four-factor structure, with overall model fit indices of χ2/df = 2.858, CFI = 0.790, and RMSEA = 0.106. Students with higher research engagement scored significantly higher on all four subscales (P<.01), supporting the ability of the URSSA to differentiate among groups.

Conclusions

The Chinese URSSA is a valid and reliable tool for assessing UR gains among medical undergraduates. Its adoption can aid in program evaluation and curriculum development. Future research should validate the tool nationally and explore its predictive validity for long-term academic outcomes.

Introduction

Undergraduate research (UR) experience is an important part of scientific education in colleges and universities and has received increasing attention in recent years. During a UR internship, students can assist their scientist mentors in designing projects, collecting and analyzing data, and writing up and presenting research findings, which is a valuable opportunity to conduct authentic research in the laboratory. 1 It is widely believed that UR training benefits the undergraduate education and career development of participants, not only in science, technology, engineering, and mathematics but also in medical education.2,3 In China, most medical undergraduates participate in UR voluntarily, mainly through summer research projects and undergraduate innovation projects initiated by the medical school. Students can also seek collaboration with mentors by email or in-person meetings to join research programs in their areas of interest. 4 Chinese scholars have also begun to address the lack of UR evaluation tools. For example, Huang et al developed a self-rating questionnaire for medical students’ scientific research ability.

UR needs to be evaluated properly. However, evaluating UR across different disciplines, programs, backgrounds, and experiences is challenging. 5 Currently, there is a lack of systematic, scientific, and effective tools for evaluating the UR experiences of medical undergraduates in China. The Undergraduate Research Student Self-Assessment (URSSA) was developed through a multistep, empirical approach to survey development spanning several years; its creators pilot tested it with students and revised it gradually before wider use.6–8 By measuring and evaluating students’ research experience, research access, and research satisfaction, the questionnaire eventually settled on four categories: skills, thinking and working like a scientist, personal gains, and attitudes and behaviors as a researcher. 6 The full survey instrument template contains 134 items, grouped in 17 blocks. As a relatively mature scale, the URSSA has been widely used in the United States and has been applied to both science, technology, engineering, and mathematics (STEM) and non-STEM undergraduates. 9

At present, the reliability and validity of the URSSA have been confirmed by several studies.10,11 However, there is no standardized Chinese version of the URSSA available yet. Considering the diversity of Chinese cultural contexts and the complexity of medical education, our research aims to translate the URSSA into a Chinese version and examine the psychometric properties of the Chinese version URSSA as a self-report survey for UR programs. Because the survey has been taken by more than 160 students, we can test some aspects of how the survey is structured and how it functions under a Chinese medical educational background.

Methods

Translation of the URSSA

The URSSA is a validated, full survey instrument template that contains 134 items grouped in 17 blocks. 6 In adapting the URSSA for the Chinese context, we selected the 35 core items originally established during URSSA development as the foundational assessment dimensions. These items are organized into 4 fixed, noneditable core blocks and ask students to rate how much they have gained in Skills, Thinking and Working Like a Scientist, Personal Gains, and Attitudes and Behaviors as a Researcher. 6 Their relevance to the Chinese medical education setting was further evaluated during the expert panel review to ensure cultural and contextual appropriateness. The remaining 13 blocks (comprising 99 items) are customizable: program administrators may edit, delete, or rearrange them as needed. These sections are typically used to gather background information and collect feedback on the program. In accordance with the instrument's guidelines, the URSSA scoring procedure involves the following steps. First, negatively worded items are reverse coded so that higher scores uniformly reflect more favorable attitudes across all items. Subsequently, subscale scores are computed by summing the constituent items, with higher values indicating greater experiential gains. The reporting of this study conforms to the Consensus-based Standards for the selection of health Measurement Instruments statement (Supplemental File 1). 12
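The two scoring steps described above (reverse coding, then summing items within each subscale) can be sketched as follows. This is a minimal illustration only: the item names, the subscale membership, the choice of reversed item, and the 1 to 5 response range are hypothetical assumptions, not the published URSSA item key.

```python
# Hypothetical response range; the actual URSSA anchors may differ.
SCALE_MIN, SCALE_MAX = 1, 5


def reverse_code(value, lo=SCALE_MIN, hi=SCALE_MAX):
    """Mirror a response around the scale midpoint (1 <-> 5, 2 <-> 4, ...)."""
    return lo + hi - value


def score_subscales(responses, subscales, reversed_items=frozenset()):
    """Sum item responses per subscale after reverse coding flagged items.

    responses: dict mapping item name -> numeric response
    subscales: dict mapping subscale name -> list of item names
    reversed_items: names of negatively worded items (assumed, illustrative)
    """
    scores = {}
    for name, items in subscales.items():
        total = 0
        for item in items:
            value = responses[item]
            if item in reversed_items:
                value = reverse_code(value)
            total += value
        scores[name] = total
    return scores


# Illustrative call with made-up item names.
example = score_subscales(
    {"s1": 4, "s2": 2, "a1": 1},
    {"Skills": ["s1", "s2"], "Attitudes": ["a1"]},
    reversed_items={"a1"},
)
```

With these made-up inputs, the Skills score is 4 + 2 and the Attitudes score is the reverse-coded value of the single item.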

The translation and construction process of the Chinese version of the URSSA is shown in Figure 1. This study rigorously adhered to Brislin's translation guidelines for the cross-cultural adaptation of the URSSA into Chinese. The specific procedures were as follows: (1) Forward translation: The original English version of the scale, including all items, dimension definitions, and response options, was independently translated by one medical doctoral candidate and one professional translator. The research team then compared and analyzed the 2 translated versions to develop the initial Chinese draft of the URSSA. (2) Back translation: The preliminary Chinese version was back-translated into English by a separate medical doctoral candidate and a professional English translator. (3) Expert consultation: 2 medical education experts and 3 clinical practitioners were invited to evaluate the translated scale. They comprehensively assessed the original English version, the initial Chinese draft, and the back-translated English version, providing feedback on item relevance, semantic clarity and fluency, cultural appropriateness, and cross-cultural adaptation. Based on expert feedback, the revisions included (a) rephrasing of colloquial expressions to align with academic Chinese; (b) adjustment of response anchors to enhance interpretability; and (c) minor reordering of items within subscales to improve logical flow. No items were removed or substantively altered in meaning. On this basis, a consensus Chinese version of the URSSA was produced. The consensus version was pretested on 30 students, randomly selected from the participant population, to evaluate its understandability, acceptability, and clarity. After the experts modified the Chinese version based on feedback from the student participants, the final Chinese version of the URSSA was distributed in the validation study.

The Flowchart of Study Procedures.

Data Collection for Validation and Reliability of the Chinese Version of the URSSA

We conducted the study in accordance with the Declaration of Helsinki. All participants were informed and consented to the study prior to the investigation. Participants were recruited through convenience sampling via departmental announcements and student networks at Peking University Health Science Center. The questionnaire was distributed electronically to undergraduate students from various schools between October 2024 and November 2024. Inclusion criteria comprised: (1) enrollment as an undergraduate student at Peking University Health Science Center with recent participation in short-term research programs; and (2) voluntary and conscientious completion of the questionnaire. Exclusion criteria included (1) incomplete demographic information; and (2) careless or insincere responses through quality control checks by investigators.

Participants who had recently engaged in short-term research programs such as summer research projects or undergraduate innovation initiatives were invited to complete the survey (Supplemental File 2). The survey consisted of 2 components: the general information questionnaire and the Chinese version of URSSA. The general information questionnaire was applied to collect demographic characteristics and academic background data, including participants’ gender, major, prior research experience, publication experience, and potential academic outputs generated through the short-term research program.

Rigorous quality control procedures were implemented. All returned questionnaires underwent systematic validation checks, with exclusion criteria applied to eliminate incomplete submissions and responses exhibiting detectable measurement errors (eg, patterned answering, logical inconsistencies, or missing critical demographic fields). A nominal incentive of 2 RMB was provided upon questionnaire completion as an encouragement measure. Responses were collected through a free online survey platform in China (“Survey Star,” www.wjx.cn). Each survey took ∼3 to 5 min to complete.

Sample Size Consideration

While a formal a priori sample size calculation was not performed, our sample of 165 participants with 35 items corresponds to a participant-to-item ratio of ∼4.7:1, which approaches the commonly recommended ratio of 5:1 for factor analysis (Hair et al, 2014). This sample size was therefore deemed adequate for a preliminary validation study.

Statistical Analysis

Data were analyzed using IBM SPSS Statistics, version 24 (IBM Corporation, Armonk, NY) and Amos Graphics, version 26 (IBM Corporation, Armonk, NY). A prespecified significance level of P<.05 was used for all statistical tests. Normally distributed measurements are expressed as mean ± standard deviation (mean ± SD), and counts are expressed as the number of cases (N) and the constituent ratio (%). Cronbach's α and the Guttman split-half coefficient were used to establish the reliability of the scale. 13 Confirmatory factor analysis (CFA) was performed on the participants' data to test the factor structure and the scale's construct validity. 14 To provide evidence for discriminant validity, known-groups validity was assessed by comparing URSSA total and subscale scores across subgroups defined by relevant participant characteristics (eg, research engagement intensity and publication output) using independent-samples t tests and mixed-effects models.
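For readers who want to see how the two reliability coefficients named above are defined, the following sketch computes Cronbach's α and the Guttman split-half coefficient from a small respondents-by-items matrix. It is an illustration using the standard formulas, not the SPSS procedure used in this study; the first-half versus second-half item split and the toy data are assumptions.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha: k/(k-1) * (1 - sum of item variances / total variance).

    rows: list of respondents, each a list of k numeric item scores.
    """
    k = len(rows[0])
    item_vars = [variance([r[i] for r in rows]) for i in range(k)]
    total_var = variance([sum(r) for r in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


def guttman_split_half(rows):
    """Guttman split-half: 2 * (1 - (var(A) + var(B)) / var(A + B)).

    Assumption: items are split into a first half and a second half in
    their listed order; other splits give different values.
    """
    k = len(rows[0])
    half = (k + 1) // 2
    part_a = [sum(r[:half]) for r in rows]
    part_b = [sum(r[half:]) for r in rows]
    total = [a + b for a, b in zip(part_a, part_b)]
    return 2 * (1 - (variance(part_a) + variance(part_b)) / variance(total))


# Toy data (3 respondents, 2 items), purely illustrative.
toy = [[1, 2], [2, 1], [3, 3]]
toy_alpha = cronbach_alpha(toy)
toy_split_half = guttman_split_half(toy)
```

Both functions use the sample variance, so they need at least two respondents and at least two items.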

Results

Participants Characteristics

A total of 175 questionnaires were collected, of which 165 were valid (valid response rate, 94.3%). Of the 10 invalid questionnaires, 2 were blank and 8 had the same response across more than 80% of all items. All participants were undergraduates; 72 were male and 93 were female. Most participants majored in clinical medicine (66.7%), nursing (11.5%), or basic medicine (6.7%). Participants reported previous research experience (82.4%), prior publication experience (21.2%), and a paper published after the project was completed (36.4%). The general characteristics of participants are shown in Table 1.
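The two exclusion rules reported above (blank questionnaires, and identical responses across more than 80% of items) and the resulting valid response rate can be expressed as a short sketch. The function names and the use of `None` for an unanswered item are illustrative assumptions; the investigators' actual quality-control checks may have involved additional criteria.

```python
from collections import Counter


def is_valid(response, max_same_share=0.80):
    """Return False for blank questionnaires and for straight-lined ones.

    response: list of item answers, with None marking an unanswered item.
    A questionnaire is rejected when one answer option accounts for more
    than max_same_share of all items.
    """
    answered = [v for v in response if v is not None]
    if not answered:
        return False  # blank questionnaire
    most_common_count = Counter(answered).most_common(1)[0][1]
    return most_common_count / len(response) <= max_same_share


def valid_response_rate(n_valid, n_collected):
    """Valid response rate as a percentage, rounded to one decimal place."""
    return round(100 * n_valid / n_collected, 1)


rate = valid_response_rate(165, 175)
```

Applied to the counts reported in this section (165 valid of 175 collected), the rate rounds to 94.3%.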

Characteristics of the Participants.

Item Scores and Screening

The average score of each item of the Chinese version scale ranged from 2.19 to 3.66, and the total score ranged from 47 to 168. The average score for each subscale was as follows: Thinking and Working Like a Scientist (24.79 ± …), … (… ± 9.98), and Attitudes and Behaviors (22.78 ± 7.54).

To avoid misunderstanding or loss of precision in responses, items with high omission rates (> 5%) and low discrimination (standard deviation of the item score < 0.85) were to be removed after careful panel discussion. The standard deviations of all 35 items were greater than 1, and the factor loadings of all items were > 0.4 and < 0.9. No item met the exclusion criteria. With Cronbach's α coefficients of .908 and .894, the removal of any URSSA item could decrease the Cronbach's α coefficient for the scale. Thus, all 35 items were deemed suitable for inclusion in the translated Chinese version of the URSSA (Table 2).
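The item screening rule described above can be illustrated with a short sketch that flags items by omission rate and by the standard deviation of the item score. The thresholds are taken from the text; treating either criterion alone as sufficient to flag an item, and using `None` to mark an omitted answer, are assumptions made for illustration.

```python
from statistics import stdev


def flag_items(rows, max_omission=0.05, min_sd=0.85):
    """Return indices of items flagged for panel discussion.

    rows: list of respondents, each a list of item answers (None = omitted).
    An item is flagged if its omission rate exceeds max_omission or if the
    sample standard deviation of its answered scores is below min_sd.
    """
    flagged = []
    n_items = len(rows[0])
    for i in range(n_items):
        answers = [r[i] for r in rows if r[i] is not None]
        omission_rate = 1 - len(answers) / len(rows)
        sd = stdev(answers) if len(answers) > 1 else 0.0
        if omission_rate > max_omission or sd < min_sd:
            flagged.append(i)
    return flagged


# Toy data: item 0 varies, item 1 is constant, item 2 is often omitted.
toy_rows = [[1, 3, None], [5, 3, 2], [2, 3, None], [4, 3, 4]]
toy_flags = flag_items(toy_rows)
```

In the toy data, the constant item and the frequently omitted item are flagged, while the item with varied responses is retained.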

Descriptive Statistics for Each Item According to the Original 4 Subscales of URSSA.

Abbreviation: URSSA, Undergraduate Research Student Self-Assessment.

Reliability

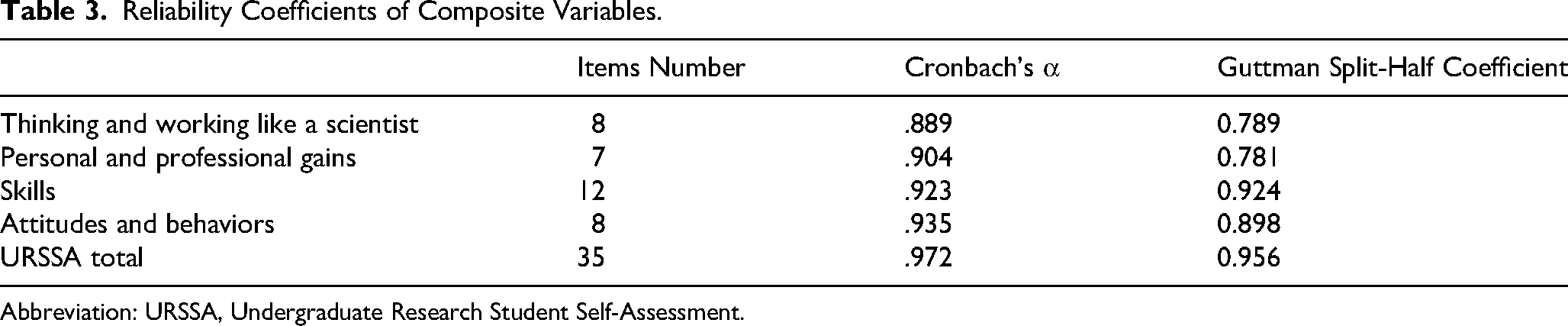

For surveys, reliability is usually regarded as the internal consistency of the items within each scale, which reflects the degree of interrelationship among students’ responses to the scale's items. In this study, Cronbach's α and the Guttman split-half coefficient were .972 and 0.956, respectively, for all 35 items of the Chinese version URSSA, indicating good internal consistency (Table 3). Cronbach's α for the subscales ranged from .889 to .935, and the Guttman split-half coefficient for the subscales ranged from 0.781 to 0.924 (Table 3).

Reliability Coefficients of Composite Variables.

Validity

CFA was implemented by using Amos Graphics (version 22). The Chinese version of URSSA followed the subscale division established by the original version. The parameter estimates of the CFA of the simplified Chinese version of the URSSA are shown in Figure 2, which presents the standardized factor loadings for the four-factor model of the Chinese URSSA and illustrates the relationships between observed items and their respective latent constructs. All standardized factor loadings were statistically significant and > 0.40, and all items loaded significantly onto their respective factors. We also calculated indices of the overall fit of the structural equation model. 15 The chi-square degree of freedom ratio (χ2/df) was 2.858, the comparative fit index (CFI) was 0.790, the root mean square error of approximation (RMSEA) was 0.106, and the incremental fit index (IFI) was 0.792 (Table 4).
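As a consistency check on the fit indices reported above, the RMSEA can be recovered from the χ2/df ratio and the sample size with the standard point-estimate formula, RMSEA = sqrt(max(χ2/df − 1, 0) / (N − 1)). The sketch below is an illustration rather than the Amos computation; some software divides by N instead of N − 1, so the function exposes that choice as a parameter.

```python
from math import sqrt


def rmsea_from_ratio(chi2_df_ratio, n, use_n_minus_1=True):
    """Point estimate of RMSEA from the chi-square/df ratio and sample size.

    chi2_df_ratio: chi-square statistic divided by its degrees of freedom
    n: sample size
    use_n_minus_1: divide by N - 1 (True) or by N (False); conventions vary
    """
    denominator = n - 1 if use_n_minus_1 else n
    return sqrt(max(chi2_df_ratio - 1.0, 0.0) / denominator)


# Values reported in this section: chi2/df = 2.858, N = 165.
rmsea_check = round(rmsea_from_ratio(2.858, 165), 3)
```

With the reported χ2/df of 2.858 and N = 165, both denominator conventions round to 0.106, which matches the reported RMSEA.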

Four-Factor Model of the Chinese Version URSSA. Abbreviation: URSSA, Undergraduate Research Student Self-Assessment.

Confirmatory Factor Analysis of the Chinese Version of URSSA's Invariance.

Abbreviations: χ2/df, chi-square degree of freedom ratio; RMSEA, root mean square error of approximation; CFI, comparative fit index; NFI, normed fit index; IFI, incremental fit index; TLI, Tucker-Lewis index.

Discriminant Analysis

We explored discriminant validity in relation to participants' basic characteristics. All 4 subscale scores differed significantly according to hours per week spent on research and whether a paper was published after the project was completed (all P < .01). Female students tended to have lower scores on the Thinking and Working Like a Scientist, Personal and Professional Gains, and Attitudes and Behaviors subscales, which is consistent with reports in the literature on the invariance of the factor structure across gender. Moreover, the Attitudes and Behaviors subscale score differed significantly across different research experiences (P < .01). Similarly, students with prior publication experience had higher Skills subscale scores than those without a publication history (Table 5).

Discriminant Validity of the Subscale Scores According to Participant Characteristics.

Discussion

This study is the first to strictly follow Brislin's translation principles to adapt the URSSA into Chinese, forming a questionnaire for evaluating UR experiences that is suitable for the Chinese cultural context. We then preliminarily examined the validity and reliability of the Chinese version of the URSSA to verify the survey structure and its use as a reliable measurement tool. The validity analyses addressed outstanding questions about the core indicators of the survey and its future application to medical undergraduates.

Through research participation, students acquire essential skills such as experimental design, data analysis, and academic writing, and develop critical thinking and clinical problem-solving abilities, which are vital for their future academic and clinical careers.16,17 However, the lack of standardized assessment tools in China to evaluate students’ gains from research training not only hinders objective measurement of training outcomes but also limits evidence-based curriculum optimization and individualized student development. The introduction of assessment scales such as the URSSA enables systematic and scientific quantification of students’ progress across multiple dimensions. 2 Such tools are crucial because they provide educators with reliable feedback to identify students’ strengths and weaknesses, thereby refining research training programs. Furthermore, standardized assessments facilitate cross-institutional and cross-cultural comparisons, contributing to the overall improvement of medical education quality.

Currently, the absence of validated assessment tools impedes objective evaluation of research training effectiveness, which may negatively impact the selection and cultivation of future scientific talent. There is an urgent need to develop or adapt culturally appropriate assessment scales, such as the Chinese version of URSSA, to address this gap. This initiative would not only provide a scientific foundation for medical education but also promote international exchange in research training methodologies, ultimately strengthening the framework for nurturing research competence among Chinese medical undergraduates.

There are few quantitative criteria to assess the detailed roles and effects of UR for undergraduate medical students in China. 4 Although previous research has clearly articulated multiple benefits of UR participation for students, it has not illuminated how the scientific growth of undergraduate researchers differs. 11 A better understanding of students’ intellectual, personal, and professional development through research is important for the guidance and supervision of undergraduate researchers. 11 The simplified URSSA has 4 dimensions: thinking and working like a scientist (8 items), personal and professional gains (7 items), skills (12 items), and attitudes and behaviors (8 items), each assessing a different aspect of gains from UR.

In addition to the URSSA, other questionnaires are used to assess UR experiences and skill acquisition.18,19 Huang et al 4 developed a self-administered questionnaire that assessed the scientific research ability and qualities of students. Our reliability coefficient (Cronbach's α = .972) is comparable to those reported in the original URSSA validation study (α = .94-.96). 10 Although the CFI (0.790) and RMSEA (0.106) did not meet the conventional thresholds for excellent fit, these indices can be considered acceptable in early stage validation studies within distinct cultural contexts. The moderate fit may reflect differences in how Chinese medical students perceive certain items, particularly those related to “thinking like a scientist,” which may be influenced by curricular emphasis on clinical application over pure research. Unlike the URSSA, the questionnaire of Huang et al was self-designed and contained more targeted questions. For instance, when asking the participants involved in UR about the number of procedures they have mastered, the options listed include laboratory sterilization technique, cell culture, bacterial culture, animal modeling, MTT, PCR, ELISA, Western blot, meta-analysis, biopsy, and other techniques that are often required in biology and medicine. 4 The broader scope of the URSSA makes it applicable to students from diverse disciplines. Some studies have applied it simultaneously to both STEM and non-STEM undergraduate students and found that the experience of UR is beneficial for both groups. 9

In this study, we implemented standardized back-translation and cross-cultural adaptation procedures to ensure the content validity of the Chinese version of the URSSA. 20 We included 165 undergraduate students from various colleges of the Peking University School of Medicine who had recently participated in a short-term research project, most of whom were from the School of Clinical Medicine (66.7%). Through online electronic questionnaires, we collected the original data for validating the Chinese version of the URSSA as a tool for measuring gains from UR experiences. The Cronbach's α coefficients for all four domains of the URSSA were > .8. In addition, the overall Cronbach's α coefficient and Guttman split-half coefficient were .972 and 0.956, respectively, indicating that the Chinese version of the URSSA has favorable internal consistency and stability overall and in each dimension. 21 We conducted CFA to test the structural equation model of the Chinese version of the URSSA. The results suggested that the original four-factor structure was retained, although the fit indices (CFI = 0.790; RMSEA = 0.106) indicated only moderate fit. Furthermore, we found that the longer the weekly commitment to a short-term research project, the higher the scores on the URSSA dimensions. Undergraduate students who had an article published at the end of the project also had higher scores on the 4 URSSA dimensions. This suggests that the questionnaire has good discriminant validity. This finding is conceptually aligned with the intended purpose of the URSSA as a tool to differentiate gains based on research engagement intensity. 10 While publication is but one tangible outcome, it often signifies deeper involvement in the research process. 11 Thus, higher self-assessed gains among students with publications provide convergent evidence for the scale's discriminant validity.

This study has some limitations. First, we did not assess the test-retest reliability or concurrent validity of the simplified Chinese version of the URSSA. Furthermore, discriminant validity was assessed primarily through group mean comparisons rather than interconstruct correlations; future studies could strengthen discriminant validity testing by examining correlations between URSSA subscales and theoretically distinct constructs. Second, this study relied on a convenience sample from a single medical school campus in China, so extrapolation to other populations requires further validation. The moderate sample size (N = 165) and the absence of probability sampling also constrain external validity, and future studies should draw a nationwide random sample to further confirm the reliability and validity of the questionnaire. Third, as with all self-report instruments, responses may be influenced by social-desirability bias, potentially inflating reported gains. Fourth, this study did not perform a rigorous a priori power analysis to determine the sample size; future confirmatory studies could use pilot data for more precise sample size planning. Future researchers can also collaborate with faculty mentors in UR programs, who can invite students to fill out questionnaires and thereby encourage wider participation. Finally, this study did not establish a total cutoff score for the Chinese URSSA to differentiate between “acceptable” and “inadequate” research skill levels. This absence may limit the instrument's immediate utility in contexts requiring binary decisions, such as curriculum evaluation or individual student assessment. Future research should aim to develop normative scoring and establish validated cutoff values using larger, multi-institutional samples.

Conclusions

In conclusion, the Chinese version of URSSA is a reliable and valid instrument for evaluating UR experiences among medical undergraduates in China. Its implementation can guide educators in optimizing UR programs, ultimately enriching students’ academic and professional trajectories. This study contributes to the global discourse on UR assessment, offering a culturally adapted tool that bridges methodological gaps and promotes cross-cultural comparisons in higher education research.

Supplemental Material

sj-docx-1-mde-10.1177_23821205261416307 - Supplemental material for Reliability and Validity of the Chinese Version of the Undergraduate Research Student Self-Assessment Among Chinese Medical Undergraduates

Supplemental material, sj-docx-1-mde-10.1177_23821205261416307 for Reliability and Validity of the Chinese Version of the Undergraduate Research Student Self-Assessment Among Chinese Medical Undergraduates by Shirong Xu, Lina Chen, Yangzi Tan, Yan Yan and Dawei Wu in Journal of Medical Education and Curricular Development

Supplemental Material

sj-docx-2-mde-10.1177_23821205261416307 - Supplemental material for Reliability and Validity of the Chinese Version of the Undergraduate Research Student Self-Assessment Among Chinese Medical Undergraduates

Supplemental material, sj-docx-2-mde-10.1177_23821205261416307 for Reliability and Validity of the Chinese Version of the Undergraduate Research Student Self-Assessment Among Chinese Medical Undergraduates by Shirong Xu, Lina Chen, Yangzi Tan, Yan Yan and Dawei Wu in Journal of Medical Education and Curricular Development

Footnotes

Acknowledgments

We would like to thank the medical students who participated in this study. We thank Shixian Gu and Wenqing Yuan for their guidance on the project.

Ethical Approval

This study was conducted using a questionnaire survey method in accordance with the ethical principles for medical research outlined in the Declaration of Helsinki. This study was approved by the Ethics Committee of Peking University Health Science Center (No. 20250813).

Consent to Participate

Informed written consent was obtained from all the participants.

Author Contributions

SX, LC, and YT: methodology, data collection and analysis, writing, and draft preparation. DW and YY: conceptualization, supervision, and critical revision.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The datasets generated and analyzed during the current study are available from the corresponding author on reasonable request.

Supplemental Material

Supplemental material for this article is available online.

References
