Abstract

Background

Point-of-care ultrasound (POCUS) is endorsed by multiple national societies as an important skill in internal medicine (IM). Despite its growing importance, current evaluation methods for POCUS competence focus primarily on image acquisition and interpretation, overlooking clinical decision-making.

Objectives

We developed and evaluated an objective structured clinical examination (OSCE) that assesses IM residents’ ability to select appropriate ultrasound examinations, interpret pathological images, and integrate these findings into clinical decision-making.

Methods

In this cross-sectional study, 110 postgraduate year-2 IM residents participated in a longitudinal POCUS curriculum over the 2022 and 2023 academic years. Eighty-one of these residents completed a 40-min OSCE station 9 months later. In the OSCE, residents encountered a clinical case involving a patient with systemic lupus erythematosus and respiratory distress, requiring both lung and cardiac ultrasounds. Residents’ performance was evaluated using a 50-question rubric that assessed image quality, interpretation, and clinical reasoning.

Results

Most residents successfully identified the appropriate examinations and interpreted pathological images, with 91% performing a lung ultrasound and 96% performing a cardiac ultrasound. However, many residents did not conduct a comprehensive lung exam, and many faced challenges obtaining certain cardiac views. Despite these gaps, most residents articulated appropriate differential diagnoses and management plans.

Conclusions

Our OSCE was able to evaluate the scanning patterns of the residents and test their ability to apply abnormal findings to a case, despite variability in the residents’ scanning skills. This OSCE highlights opportunities to improve our POCUS curriculum in emphasizing comprehensive examination techniques and integration of clinical reasoning.

Introduction

Point-of-care ultrasound (POCUS) has become integral in medical education, endorsed by national organizations such as the Alliance of Academic Internal Medicine, the Society of Hospital Medicine, and the American College of Physicians.1–3 A national residency consensus curriculum has recently been published. 4 Despite this, current POCUS assessment methods do not evaluate all necessary clinical skills.

Clinical application of POCUS requires 4 subskills: selecting the appropriate exam, obtaining high-quality images, interpreting those images in real time, and integrating findings into clinical decision-making. 5 Current evaluations typically use healthy models and emphasize only the first 3 subskills, omitting assessment of clinical reasoning based on POCUS findings.6,7 A tool to assess all 4 subskills, especially clinical integration (Kirkpatrick level 3), remains lacking. Inadequate integration can lead to misinformed decisions and patient harm.

To address this gap in assessment, we developed an objective structured clinical examination (OSCE) case aimed at evaluating residents’ abilities to demonstrate the core 4 POCUS subskills. Our guiding research question asks, “Can an Objective Structured Clinical Examination (OSCE) effectively identify deficiencies in IM residents’ utilization and contextualization of POCUS-derived information in clinical practice that are not adequately assessed by conventional POCUS evaluation methods?”

Materials and Methods

Setting and Participants

This cross-sectional study of 2 cohorts, conducted between 2022 and 2023, included 110 postgraduate year-2 internal medicine (IM) residents at New York University (NYU). All had completed prior POCUS training based on our previously described faculty course, 8 with the exception of the procedural skills portion. The curriculum included 2 h of online content followed by two 4-h workshops on image interpretation and hands-on practice with standardized patients (SPs), covering cardiac, abdominal, lung, and lower extremity ultrasound. Residents had ongoing opportunities for hands-on scanning with faculty support. Ultrasound machines were made available in the wards and intensive care units.

Implementation

Nine months after completing their training, residents participated in a 40-min POCUS OSCE station (25 min for the case and 15 min for feedback). This POCUS station was part of a larger multistation OSCE that included 4 additional non-POCUS cases. Residents rotated through all stations on a scheduled sequence within a simulation center.

Description of Innovation

During the POCUS station, each resident was presented with a clinical case scenario in which they were asked to evaluate and manage a middle-aged immunosuppressed patient with systemic lupus erythematosus, presenting with shortness of breath, hypoxemia, and fever. After the initial assessment, the patient would progress into undifferentiated shock. Residents selected which organ to scan and demonstrated scanning technique on an SP. If they obtained interpretable images, they were shown a corresponding pathologic clip via slideshow. Otherwise, the preceptor was asked not to show the associated pathologic image. The sequence is described pictorially in Figure 1.

Objective structured clinical examination (OSCE) walkthrough.

In the first portion of the case, referred to henceforth as the “lung portion,” the expected action was for the resident to perform a complete lung ultrasound with a phased array probe to work up undifferentiated dyspnea and hypoxemia. Findings included B-lines and pleural effusion. For the shock portion of the case, referred to henceforth as the “cardiac portion,” the expected action was for the resident to perform a cardiac ultrasound and identify a pericardial effusion on the pathologic images. If a resident selected an incorrect examination, the preceptor would guide them to perform the appropriate one.

Residents were evaluated using a 50-question rubric (see Supplemental Information 2), adapted from the validated ultrasound competency assessment tool 9 and expanded with case-specific questions. Preceptors were trained to conduct the OSCE by 1 author (NCSK), who reviewed the content of the case, the images, and the rubric with each preceptor, including what constitutes a “well done,” “partly done,” and “poorly done” image.

Anatomy-based questions were scored on a binary scale (1 = yes, 0 = no). Image quality was scored on a 3-point scale (0 = poorly done, 1 = partly done, 2 = well done) or “Not Attempted” if the resident did not attempt that view. A “well done” view showed all relevant structures clearly; “partly done” images had minor flaws but were interpretable; “poorly done” meant most structures were not identifiable.

Image interpretation was given a score (0, 1, or 2 as above), or “not attempted” if the resident did not attempt the view on the SP and thus was not shown the pathologic image. “Well done” was defined as the identification of all findings and anatomic structures. “Partly done” was defined as the identification of key findings pertaining to the case and most anatomic structures. “Poorly done” was defined as identification of no relevant findings or misinterpretation of the image.

Residents’ differential diagnoses and management plans were recorded verbatim. For the cardiac portion, success was defined by suspecting tamponade and proposing appropriate management (IV fluids and pericardiocentesis). Management was scored as “well done” if the resident articulated the need for both pericardiocentesis and IV fluids, “partly done” if the resident named only one, or “poorly done” if neither was mentioned. Residents were also asked to explain the rationale for suspecting tamponade. Their responses were recorded as multiple-choice selections (hypotension, pericardial effusion, right atrium (RA) free wall collapse, right ventricular (RV) free wall collapse, and inferior vena cava (IVC) plethora).
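The management scoring rule described above can be written out as a short Python sketch. This is only an illustration of the stated rule, not the scoring instrument itself; the function name and boolean inputs are hypothetical.

```python
def score_tamponade_management(named_pericardiocentesis: bool,
                               named_iv_fluids: bool) -> str:
    """Map the two expected management elements to a rubric label.

    Hypothetical helper: both elements named -> "well done",
    exactly one -> "partly done", neither -> "poorly done".
    """
    elements_named = int(named_pericardiocentesis) + int(named_iv_fluids)
    labels = {2: "well done", 1: "partly done", 0: "poorly done"}
    return labels[elements_named]


# Example: a resident who proposes pericardiocentesis but omits IV fluids.
example_label = score_tamponade_management(True, False)
```

Under this rule a resident who names either element alone receives the same “partly done” label, regardless of which one was omitted.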

Using the 2018 Consensus Framework for Good Assessment, 10 we designed this OSCE primarily to be feasible and acceptable to stakeholders, given its novelty. Additionally, we developed the rubric to detail specific behaviors or image characteristics that would constitute an acceptable or unacceptable effort, with the goal of optimizing reproducibility. A single author reviewed the rubric with each preceptor to promote consistent scoring.

Participants in this study provided written informed consent to use of their data for research as part of a medical education research registry. This registry was approved under NYU Grossman School of Medicine's Institutional Review Board (i06-683). Data were analyzed descriptively. The reporting of this study conforms to the STROBE statement on observational cross-sectional studies. 11

Results

A total of 81 residents completed the OSCE. After the first session, 2 questions regarding image quality for the lung portion of the examination were revised for clarity. Results from those questions were excluded from the final analysis (7 of 81 results in the lung image acquisition portion were excluded, leaving n = 74).

Most residents selected the correct study to perform in the given scenario: 67 of 74 residents (91%) opted to perform a lung ultrasound during the first phase, and 78 of 81 residents (96%) chose to scan the heart in the second.
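Because the two phases use different denominators, a brief arithmetic check may help readers follow the reported percentages. The Python sketch below simply recomputes them from the counts given in the text; the helper name `percent` is ours and purely illustrative.

```python
def percent(count: int, denominator: int) -> int:
    """Return count/denominator as a whole-number percentage."""
    return round(100 * count / denominator)


total_residents = 81
excluded_lung_results = 7  # lung-portion results dropped after the rubric revision
lung_denominator = total_residents - excluded_lung_results  # 74

lung_selected_pct = percent(67, lung_denominator)    # lung ultrasound chosen
cardiac_selected_pct = percent(78, total_residents)  # cardiac ultrasound chosen
```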

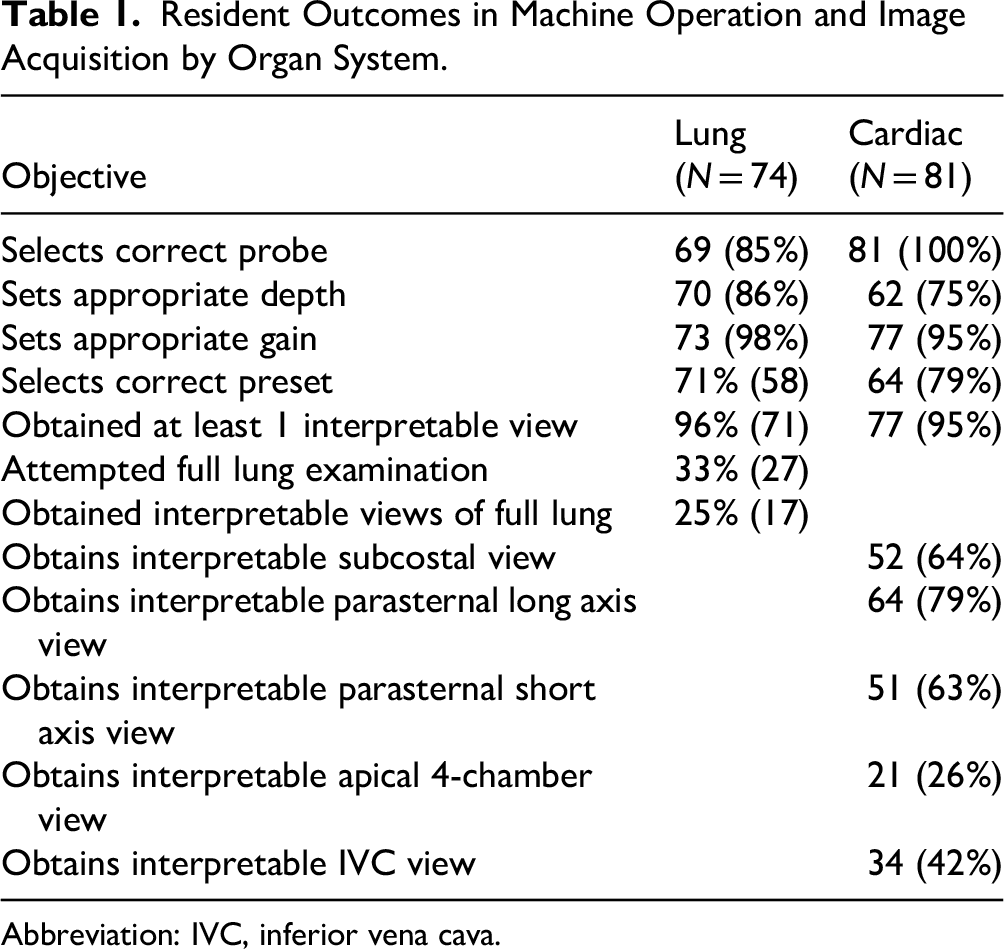

The majority of residents succeeded in selecting the correct probe, setting the depth and gain, and selecting an appropriate preset for both lung and cardiac views. Every resident except 1 obtained at least 1 interpretable view of the lung; however, only 33% completed a comprehensive lung exam. A comprehensive examination was defined as examining 3 points on each hemithorax: anterior lung, lateral lung, and lung base at its most posterior point, with a view of the diaphragm. Results are summarized in Table 1.
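The definition of a comprehensive lung examination given above can be expressed as a simple check. The Python sketch below is a minimal illustration under stated assumptions: the zone labels, the side labels, and the idea of recording each examined point as a (side, zone) pair are hypothetical and are not taken from the rubric.

```python
from typing import Set, Tuple

# Hypothetical labels for the 3 points described in the text.
REQUIRED_ZONES = ("anterior", "lateral", "posterior_base_with_diaphragm")
SIDES = ("left", "right")


def is_comprehensive_lung_exam(examined: Set[Tuple[str, str]]) -> bool:
    """True only if all 3 points were examined on both hemithoraces."""
    return all((side, zone) in examined
               for side in SIDES
               for zone in REQUIRED_ZONES)


# Example: every point scanned on the right, but only the anterior point on the left.
partial_exam = {("right", zone) for zone in REQUIRED_ZONES} | {("left", "anterior")}
full_exam = {(side, zone) for side in SIDES for zone in REQUIRED_ZONES}
```

A resident could therefore obtain several interpretable views and still not meet this definition if any one of the 6 points was omitted.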

Resident Outcomes in Machine Operation and Image Acquisition by Organ System.

Abbreviation: IVC, inferior vena cava.

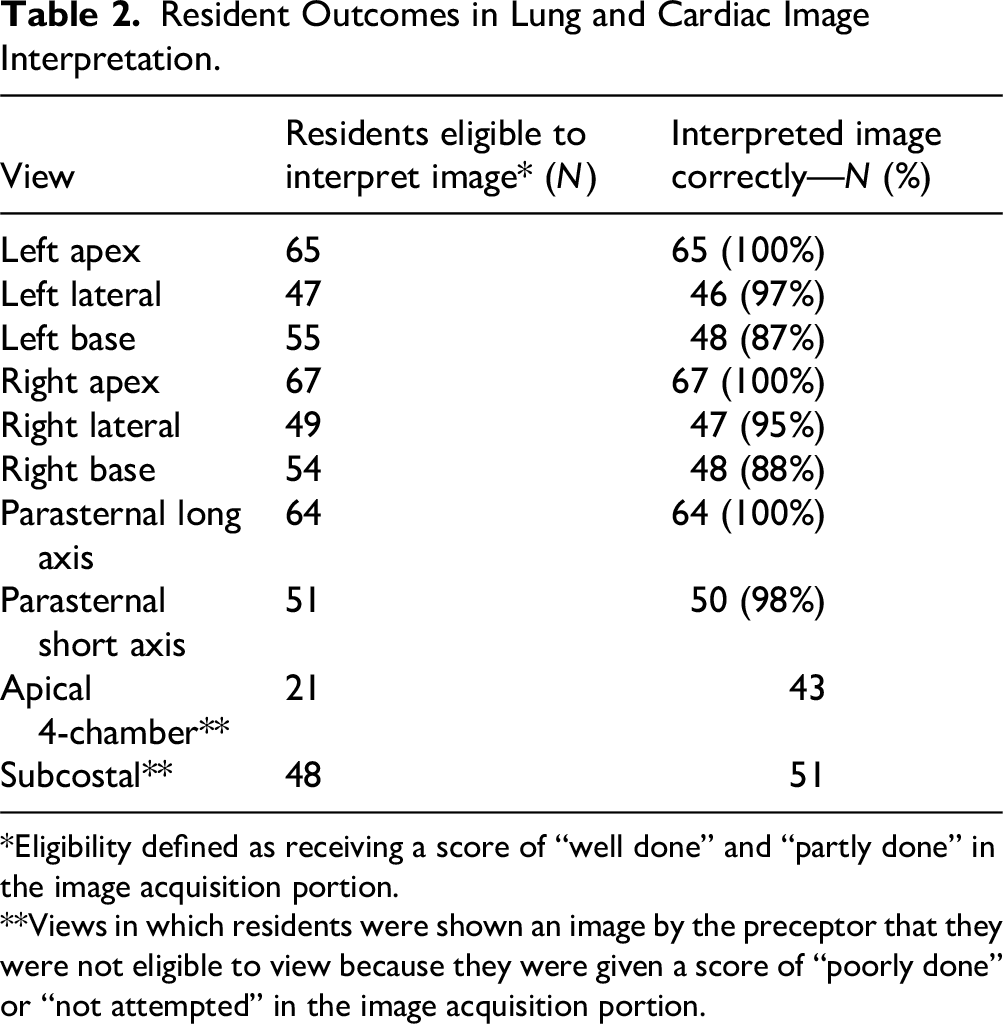

Residents who obtained interpretable images of specific lung locations were asked to interpret the corresponding lung images from the case. Scores for image interpretation were high across all views. Results are summarized in Table 2. In total, the residents generated 6 diagnoses that were supported by the information provided in the imaging (pleural effusion/parapneumonic effusion, empyema, pneumonia, lupus pleuritis/serositis, volume overload, and interstitial lung disease) and 6 diagnoses that were not supported by the imaging thus far (pneumothorax, chronic obstructive pulmonary disease/asthma, tamponade, pulmonary embolism, pericarditis, and acute renal failure).

Resident Outcomes in Lung and Cardiac Image Interpretation.

*Eligibility defined as receiving a score of “well done” or “partly done” in the image acquisition portion.

**Views in which residents were shown an image by the preceptor that they were not eligible to view because they were given a score of “poorly done” or “not attempted” in the image acquisition portion.

In the cardiac phase, 76 residents (94%) obtained at least 1 interpretable view. The pericardial effusion was visible from all views, though a subcostal view is of particular interest for assessing the RV free wall for echocardiographic signs of tamponade; two-thirds of residents obtained an interpretable subcostal view. Results are summarized in Table 2. All residents except 1 were given at least 1 image to interpret. Some residents were inappropriately shown images to interpret by the preceptor even though they did not meet the image acquisition quality threshold to view the image, particularly in the apical and subcostal views. Overall, most residents articulated concern for tamponade and proposed appropriate management plans. Many residents identified tamponade based on the case's mention of hypotension and identification of a pericardial effusion. Fewer identified more specific signs of echocardiographic tamponade, such as chamber collapse and IVC plethora. Results are summarized in Table 3.

Resident Outcomes in Clinical Reasoning.

Abbreviations: RA, right atrium; RV, right ventricular.

We evaluated internal validity by examining the results for “not attempted” in our rubric. In the OSCE, a resident should only be presented with a clip if the scan they obtained on the SP was “well done” or “partly done.” This implies that residents whose images were deemed “poorly done” or “not attempted” should not see the clip and should thus be marked as “not attempted” in the image interpretation portion for that view. We expected that the number of “not attempted” entries in clip interpretation would equal the number of “poorly done” plus “not attempted” entries in image acquisition; however, this was not true for any of the questions on the rubric. The results are summarized in Table 4. We were unable to calculate inter-rater reliability due to logistical issues with matching the preceptor with the test-taker post hoc.
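The consistency check described above can be sketched in Python as follows. This is an illustration only: the function name is hypothetical, the example labels are invented rather than study data, and it assumes that acquisition and interpretation scores can be paired per resident for a given view.

```python
from typing import List, Tuple

ELIGIBLE = {"well done", "partly done"}  # acquisition scores that unlock the clip


def gating_check(acquisition: List[str],
                 interpretation: List[str]) -> Tuple[int, int, int]:
    """Compare expected vs observed "not attempted" interpretation entries.

    Returns (expected_not_attempted, observed_not_attempted, shown_in_error),
    where shown_in_error counts residents who were scored on interpretation
    despite an ineligible acquisition score.
    """
    expected = sum(1 for a in acquisition if a not in ELIGIBLE)
    observed = sum(1 for i in interpretation if i == "not attempted")
    shown_in_error = sum(
        1 for a, i in zip(acquisition, interpretation)
        if a not in ELIGIBLE and i != "not attempted"
    )
    return expected, observed, shown_in_error


# Invented example for one view and 4 residents (not study data).
acquisition_scores = ["well done", "poorly done", "not attempted", "partly done"]
interpretation_scores = ["well done", "partly done", "not attempted", "not attempted"]
result = gating_check(acquisition_scores, interpretation_scores)
```

In this invented example the expected and observed counts happen to match even though one resident was shown a clip in error, which suggests that a paired, per-resident comparison is more informative than comparing totals alone.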

Internal Validity.

Abbreviation: IVC, inferior vena cava.

Discussion

This OSCE assessed IM residents’ ability to apply POCUS clinically and addressed all 4 subskills in a single testing session. Most residents correctly chose which system to scan (subskill 1), interpreted images accurately (subskill 3), and integrated findings into management (subskill 4). However, we found that our residents inconsistently performed complete examinations (subskill 2) when it would have been appropriate in a clinical scenario, such as in the lung portion of the case, where 67% of residents did not perform a full examination.

In our OSCE, we expected our residents to acquire and interpret images and also determine which ultrasound views are appropriate at specific points during a simulated clinical case. For example, an adequate view of the lung in 1 study 8 required only 1 location to be scanned; our case structure assumes a more comprehensive clinical application of POCUS, with full lung field assessment typically expected. This subskill of study selection has not been explicitly investigated, though Kelm et al 12 noted that all residents in their OSCE were able to name 3 indications for cardiac ultrasound. Clinical integration of POCUS has also not been studied. Our case seeks to simulate how a resident might interpret POCUS findings even when their knowledge is incomplete. Nearly all residents succeeded in acquiring at least a single cardiac view on the SP, and all but 1 could identify a pericardial effusion on the corresponding image. All except this 1 cited pericardial effusion and hypotension as grounds for suspecting tamponade. Despite fewer than 25% of residents identifying classic echocardiographic signs of tamponade, their clinical suspicion remained appropriately elevated. We argue that our residents were synthesizing available findings with clinical context rather than anchoring on the presence or absence of any 1 echo feature. This high index of suspicion is the desired outcome.

Most existing POCUS assessments focus on image interpretation and acquisition via online testing.12,13,14 Reported performance ranges from 70% to 100% for lung interpretation and up to 90%–100% for cardiac interpretation.12,15 In regard to image acquisition, most IM residents (78%–90%) were able to acquire various cardiac structures adequately. 15 Our findings are broadly consistent, despite using a more integrated, OSCE-based approach. Still, it is difficult to draw direct comparisons, as our OSCE integrates both image acquisition and clinical integration, and previous testing separates the 2. Our assessment may favor practical over theoretical skills, and the use of curated pathologic images could inflate diagnostic accuracy.

Internal validity issues were evident, particularly around inconsistent preceptor behavior. Inconsistencies were observed between raters across all scanning locations, resulting in some residents being incorrectly shown images despite inadequate scans. Further analysis of rater grading patterns is needed to determine whether this reflects a systemic issue, suggesting the need for improved pre-OSCE rater training, or whether it is attributable to individual raters, in which case the implementation of immediate postsession feedback may help correct deviations in real time. The single-center nature, lack of a control group, and influence of local curriculum limit generalizability. Faculty familiarity with residents may introduce bias (eg, halo effect, contrast effect, and observer bias),5,16 as documented in OSCE literature. A structured rubric can mitigate some of these biases but not eliminate them.

We plan to evaluate the content validity of our rubric to ensure it reflects all 4 POCUS subskills. Data gathered in this evaluation may enhance future efforts to incorporate clinical reasoning into standardized POCUS competency assessment. Qualitative research is also needed to understand residents’ decision-making around comprehensive scans and the integration of findings into clinical reasoning. Calculating and optimizing the inter-rater reliability of this OSCE will be our first step. Longer-term goals include longitudinal tracking of residents’ skill retention and performance and establishing cutoff scores for adequate performance by training year.

From a curricular standpoint, our findings point to gaps, particularly in performing comprehensive lung exams. Many residents obtained diagnostic views but did not scan all relevant areas. This suggests that the current curriculum, which focuses on acquisition and interpretation, needs enhancement to better simulate clinical applications. Incorporating case-based learning for common scenarios such as dyspnea or shock may help address this.

Conclusion

This OSCE successfully assessed all 4 core POCUS subskills within a realistic clinical scenario. While most IM residents demonstrated competency in choosing exams, interpreting images, and integrating findings, many did not perform comprehensive scans. The OSCE also highlighted issues with rater consistency and the need for more robust preceptor training. Future research should refine this tool, explore longitudinal competency tracking, and address curriculum design to improve real-world POCUS application in residency training.

Supplemental Material

sj-docx-1-mde-10.1177_23821205251411172 - Supplemental material for Assessing Clinical Integration of Point-of-Care Ultrasound With an Objective Structured Clinical Examination

Supplemental material, sj-docx-1-mde-10.1177_23821205251411172 for Assessing Clinical Integration of Point-of-Care Ultrasound With an Objective Structured Clinical Examination by N. Caroline Srisarajivakul-Klein, Jennifer Dong, Aron Mednick, Isaac Holmes, Lauren Comisar, Anne Dembitzer, Harald Sauthoff and Michael Janjigian in Journal of Medical Education and Curricular Development

Footnotes

Acknowledgements

The authors would like to acknowledge the Program for Medical Education Innovations and Research (PrMEIR), NYSIM, and the Division of General Internal Medicine & Clinical Innovation. Administrative support was provided by Deborah Cooke.

Ethical Considerations

Data used for the analyses described were obtained through the Institutional Review Board-approved NYU Grossman School of Medicine Graduate Medical Education Research Registry (No. 06-683).

Consent to Participate

Participants in this study consented to the use of their data for research as part of a medical education research registry. This registry was approved under our Institutional Review Board (No. 06-683).

Consent for Publication

Not applicable.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This program was supported by a generous donation from the Goodman Family Foundation, which did not participate in any aspect of this project, including study design, collection, analysis, or data interpretation, or in the writing of the manuscript.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Preprints

This manuscript has not been submitted for preprint elsewhere.

Supplemental Material

Supplemental material for this article is available online.
