Abstract

Introduction

The American Cancer Society (ACS) estimates over 2 million new cancer cases will be diagnosed in the United States in 2024. We utilize the Extension for Community Healthcare Outcomes (ECHO) Model® developed by the University of New Mexico's ECHO Institute to address cancer-related knowledge gaps between healthcare professionals in underserved communities and specialists. By connecting healthcare professionals through a virtual telementoring community, ECHO programs are designed to increase local expertise and improve patient care.

Methods

This quantitative investigation evaluates the effectiveness of four ACS ECHO programs focused on cancer care across 2023 and 2024 by examining participants’ engagement, likelihood to use what was presented, as well as changes in knowledge and confidence.

Results

The ACS ECHO programs engaged 431 unique participants, averaging 108 participants per program. Of those participants, 59% planned to use the information presented within a month. On average, participants’ knowledge and confidence increased by +0.84 and +0.77, respectively, on a 5-point scale, reflecting participants’ readiness and ability to apply what they learned in practice.

Conclusion

These findings can inform strategies for promoting ECHO program sustainability and directions for future research, including integrating qualitative insights to deepen our contextual understanding of ACS ECHO program effectiveness. While previous evaluations of ECHO programs have relied predominantly on qualitative approaches, the quantitative methods used in this study can offer an objective approach to evaluating model implementation and program impact.

Introduction

Initially developed by Dr. Sanjeev Arora with the University of New Mexico's Health Sciences Center, Project Extension for Community Healthcare Outcomes (ECHO) was launched in 2003 to address disparities in healthcare access and quality. 1 ECHO program participants engage in a virtual community with peers and subject matter experts through didactic and case-based presentations, sharing support, guidance, and feedback in a way that fosters an “all-teach, all-learn” approach.

The American Cancer Society (ACS) works to improve the lives of people with cancer and their families through advocacy, research, and patient support, to ensure everyone has an opportunity to prevent, detect, treat, and survive cancer. 2 In 2018, ACS and the ECHO Institute partnered to launch the first ACS ECHO program, which focused on the continuum of care for lung cancer patients. 3 Since then, ACS has launched 62 ACS ECHO programs reaching 4350 unique participants.

Previous evaluations of Project ECHO programs have highlighted the success of the ECHO Model® in improving healthcare professionals’ self-reported confidence and knowledge in areas such as managing chronic diseases and implementing best practices. For example, research by Arora et al showed that participants reported significant improvements in their knowledge and ability to care for patients with hepatitis. 4 Similar findings have been reported across a range of medical disciplines, from end-of-life care to chronic pain.5,6 However, many of these studies relied predominantly on qualitative methods, such as interviews and focus groups, to assess program outcomes.

Given the rapid proliferation of ECHO programs, there is a pressing need for structured, quantitative evaluations that clearly define the data collected, the analytical strategies applied, and the measurable outcomes achieved. However, as detailed by Moss et al, limited access to research and evaluation resourcing presents a significant barrier for implementation teams. 7 This constraint hinders the ability to capture and present implementation outcomes with scientific rigor, ultimately affecting their ability to showcase their local impact. A robust quantitative evaluation enables the planning team, as well as other stakeholders, to measurably and objectively confirm the extent to which the programs are achieving their intended goals and learning objectives, such as increasing participant knowledge and confidence. Quantitative data can also highlight areas of improvement and inform strategies for ECHO program sustainability and expansion into new domains or regions. This study seeks to build the evidence base by applying a clear, structured methodology to quantitatively evaluate four ACS ECHO programs focused on cancer care, while addressing key limitations and considerations.

This article was prepared in alignment with the STrengthening the Reporting of OBservational studies in Epidemiology guidelines to ensure transparency and quality in reporting observational data. 8

Materials and Methods

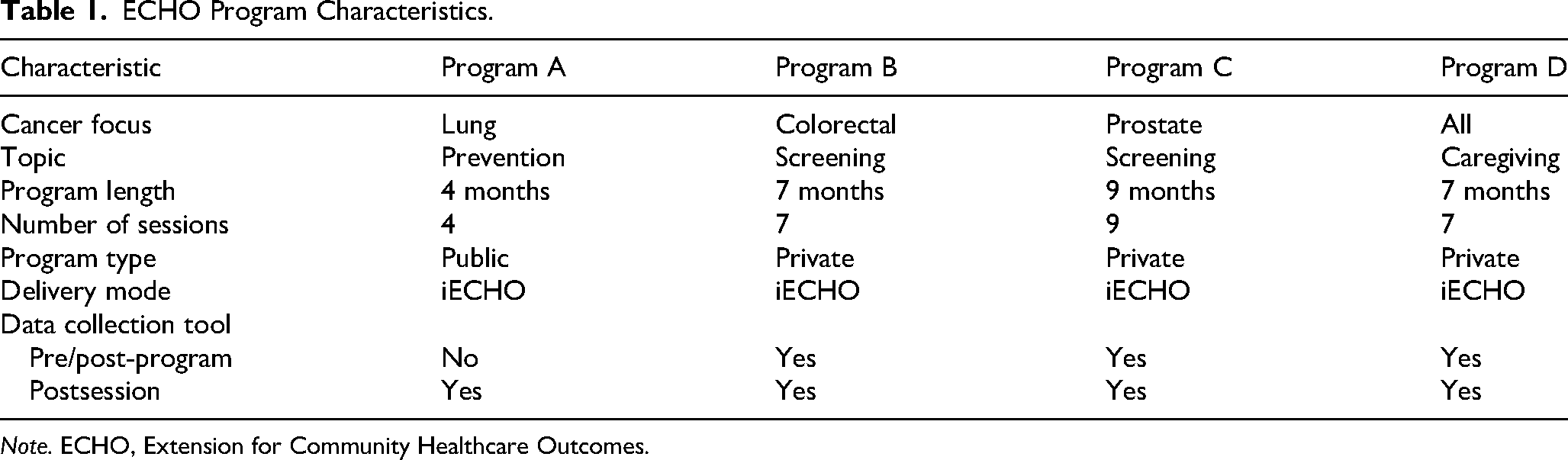

This investigation used a quantitative approach across four ACS ECHO programs focused on cancer care conducted between June 2023 and September 2024 to assess the effectiveness of the ECHO Model. Each program was designed and implemented to address either a specific cancer, a topic across the cancer continuum, or both. ACS ECHO programs were deidentified and labeled as Program A, Program B, Program C, and Program D for reporting purposes. Each session was held over iECHO, Project ECHO's technology platform, and included both a didactic presentation and case presentation, per the ECHO Model.

ACS categorizes ECHO programs as either public or private, each with distinct recruitment strategies and attendance expectations. Public programs are open access, allowing anyone to view details and register for the program. These programs, such as Program A, strongly encourage but do not require attendance at all sessions. In contrast, private programs, such as Programs B, C, and D, are invitation-only with personalized invitations sent to selected participants. These private programs require attendance at all sessions and promote comprehensive data collection, enabling the use of pre- and post-program assessments to track participant progress more effectively.

Program A was conducted from February 2024 through April 2024 and had 195 unique participants. The four monthly sessions were intended to increase healthcare professional capacity to assess and provide evidence-based services related to tobacco cessation. Participants were recruited through direct outreach between ACS and our health system partners. Additionally, because Program A was a public program, recruitment through iECHO was also available.

Program B was conducted from January 2024 through June 2024 and engaged 45 unique participants. The 7 monthly sessions were intended to empower participants within their healthcare role to help increase colorectal cancer screening rates. Program C was conducted from January 2024 through September 2024 and had 59 unique participants. The 9 monthly sessions were intended to increase awareness of appropriate prostate cancer screening and increase the utilization of shared decision-making tools. Program D was conducted from June 2023 through November 2023 and engaged 132 unique participants. The 7 monthly sessions were intended to educate participants on the unique needs of caregivers. Participants for Programs B, C, and D were recruited through direct outreach to health system partners.

All participants consented to involvement through their registration and attendance to an ECHO program. Participation in any ECHO program is completely voluntary, and participants can withdraw at any time.

Data Collection

To evaluate ACS ECHO programs, data was collected through attendance forms and brief surveys and included participant session attendance, the likelihood of participants to use the information presented, and self-reported changes in knowledge and confidence. ACS ECHO programs also gathered self-reported demographic data, including gender, clinical profession, and years of experience, to understand audience reach. Indirect measures of patient outcomes, such as barriers to implementation, and open-ended questions were also included so that participants could provide details that the closed-ended quantitative questions could not capture. Each data collection tool assessed likelihood to use what was presented, as well as self-reported changes in knowledge and confidence.

Data collection tools included pre- and post-program surveys as well as post-session surveys. For private Programs B, C, and D, pre- and post-program surveys were distributed at the beginning and end of the program, while post-session surveys were administered at the end of each session. Public Program A participants received only post-session surveys. All surveys were administered online via Microsoft Forms and remained open for 1 week. Participants could access the surveys through a provided QR code or survey link.

Measures were collaboratively developed by ACS data and evaluation teams following guidance from the ECHO Institute. Prior to implementation, all survey tools were tested. Self-reported change in knowledge and confidence questions utilized a 5-point Likert scale from 1 (not at all) to 5 (extremely) and were reviewed internally for face validity. Change in knowledge questions measured participants’ self-perceived understanding of specific cancer topics addressed in each session (eg, “understanding of tobacco cessation protocols” and “knowledge about prostate cancer screening”), while change in confidence questions assessed comfort in applying those insights in their professional work.

Statistical Analysis

Quantitative survey data, including changes in self-reported knowledge and confidence, were summarized using descriptive statistics. Item-level survey responses were based on a 5-point Likert scale range and converted into a score of 1 through 5. For example, the response items of “1 = not at all knowledgeable” and “5 = extremely knowledgeable” were used. The average score for each session was calculated and aggregated across each ACS ECHO program, with mean differences determined by subtracting the pre-program scores from the post-program scores.
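The mean-difference calculation described above can be sketched in a few lines of Python. This is a minimal illustration only: the response values and the names `pre_scores`, `post_scores`, and `mean_difference` are hypothetical and are not study data or the authors' actual workflow, which used Excel and GraphPad Prism.

```python
from statistics import mean

# Hypothetical item-level responses coded 1-5 on the Likert scale
# (1 = not at all knowledgeable, 5 = extremely knowledgeable).
# These values are illustrative only and are not study data.
pre_scores = [2, 3, 3, 2, 4]
post_scores = [3, 4, 4, 3, 4]


def mean_difference(pre, post):
    """Return the post-program mean minus the pre-program mean."""
    return mean(post) - mean(pre)


# A positive value indicates an increase from pre- to post-program.
knowledge_change = round(mean_difference(pre_scores, post_scores), 2)
print(f"Mean change in knowledge: {knowledge_change:+.2f}")
```

A positive result corresponds to the "mean difference increase" reported in the Results; the same function could be applied to confidence items.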

Percentage data was derived by dividing the number of participants in each category by the total sample size and multiplying by 100. Likert scale shifts were represented as mean differences, calculated by comparing pre- and post-survey responses, with positive shifts indicating an increase.
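As a brief illustration of the percentage calculation, the sketch below applies it to the gender counts reported in the Results (34 male and 242 female among 280 respondents). The third category count is an assumption derived here as the remainder, and the helper name `percentages` is hypothetical.

```python
# Gender counts for the 280 respondents reported in the Results.
# The "nonbinary_or_undisclosed" count is derived here as the
# remainder (280 - 34 - 242) and should be read as an assumption.
counts = {"male": 34, "female": 242, "nonbinary_or_undisclosed": 4}


def percentages(category_counts):
    """Divide each category count by the total sample size and multiply by 100."""
    total = sum(category_counts.values())
    return {name: 100 * n / total for name, n in category_counts.items()}


shares = percentages(counts)
for name, share in shares.items():
    print(f"{name}: {share:.0f}%")
```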

All four ACS ECHO programs were analyzed individually and aggregated whenever possible. All statistical analyses were performed using Excel and GraphPad Prism software.

These studies operated under research ethics approval from the Morehouse College Institutional Review Board: #2053363-1, #2153720-1, and #2190195-1.

Results

Across the four ACS ECHO programs depicted in Tables 1 and 2, 431 unique participants were engaged with an average of 108 participants per program. These programs collectively conducted 27 sessions, ranging from a low of 4 sessions to a high of 9 sessions, and averaging 20.15 participants per session.
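The participant and session totals can be checked directly from the per-program figures given in Materials and Methods. The short sketch below does only that arithmetic; the variable names are illustrative, and the per-session average is not recomputed because it depends on session-level attendance data that are not listed in the text.

```python
# Unique participants and session counts per program, as stated in
# Materials and Methods.
participants = {"A": 195, "B": 45, "C": 59, "D": 132}
sessions = {"A": 4, "B": 7, "C": 9, "D": 7}

total_participants = sum(participants.values())
mean_per_program = total_participants / len(participants)
total_sessions = sum(sessions.values())

print(f"Total unique participants: {total_participants}")
print(f"Average participants per program: {mean_per_program:.0f}")
print(f"Total sessions: {total_sessions}")
```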

Table 1. ECHO Program Characteristics.

Note. ECHO, Extension for Community Healthcare Outcomes.

Table 2. ECHO Participant Characteristics.

Note. ECHO, Extension for Community Healthcare Outcomes.

Clinical professionals are defined as those who directly provide patient/client care or counseling, such as physicians, advanced practice providers, nurses, community health workers, and patient navigators.

Of the 280 participants who reported their gender across the 3 of 4 ACS ECHO programs that collected this information, 34 (12%) identified as male and 242 (86%) identified as female. Two participants identified as nonbinary and two did not disclose their gender, together making up the remaining 4 respondents (about 1%). Table 2 shows, for 3 of the 4 ACS ECHO programs, the percentage of participants who directly provide care or counseling, defined here as clinical professionals, as well as the range of years of experience.

Survey response rates varied across the ACS ECHO programs as shown in Table 3. The post-session response rate for public Program A was 52%, while private Programs B, C, and D had higher response rates of 82%, 66%, and 55%, respectively, averaging 67% across these 3 programs.

Table 3. Survey Response Rates by Program Type and ECHO Program.

Note. ECHO, Extension for Community Healthcare Outcomes.

Table 4 illustrates the likelihood of participants in Programs B, C, and D to apply the information presented during the program in practice. Program D showed the highest immediate likelihood of use, with 68% of participants planning to use the information within the next month. Overall, on average, 59% of participants intended to use the information within the next month, a cumulative 91% within the next 6 months, 8% sometime in the future beyond 6 months, and only 1% reported they will never use it.

Table 4. Likelihood to Use Presented Information in Practice.

Note. ECHO, Extension for Community Healthcare Outcomes.

Table 5 compares pre- and post-program knowledge mean scores across all 4 ACS ECHO programs. Program B had the largest increase in knowledge (+1.06), while Program D had the smallest increase (+0.61). Overall, on average, participants’ knowledge experienced a mean difference increase of +0.84 post-program.

Table 5. Pre- and Post-Knowledge.

Note. ECHO, Extension for Community Healthcare Outcomes.

Table 6 compares changes in pre- and post-program confidence mean scores for 2 of the 4 ACS ECHO programs. Program C had the largest increase in confidence (+0.84), while Program A had the smallest increase (+0.69). Overall, on average, participants experienced a mean difference increase of +0.77 post-program. Data for programs B and D are missing as confidence changes were not collected for those programs.

Table 6. Pre- and Post-Confidence.

Note. ECHO, Extension for Community Healthcare Outcomes.

Table 7 integrates qualitative themes and supporting quotes alongside each of the key outcomes and quantitative findings. Within likeliness to use, participants described being able to share information and resources with colleagues along with a boosted awareness of both internal and external processes. Within knowledge, which had the highest gain among outcomes, participants described the importance of case studies for building insight into the topic. Confidence, which also showed an increase, was credited to the exposure of participants to the topic along with the safe space that the ECHO Model enabled.

Table 7. Supplementary Qualitative Themes.

Note. ECHO, Extension for Community Healthcare Outcomes; ACS, American Cancer Society.

Discussion

The findings from this quantitative evaluation measurably demonstrate the effectiveness of the ECHO Model in improving participant competencies and, indirectly, patient outcomes of cancer programs. The improvements in participant knowledge and confidence suggest that the ECHO Model is successfully addressing gaps in healthcare professional education. These findings are consistent with previous studies, such as those by Arora et al and Katzman et al, which qualitatively reported improvements in healthcare professional knowledge and clinical practice following participation in ECHO programs.4,9 Additionally, Cinko et al reported increased confidence and knowledge among healthcare professionals participating in an ECHO program. 10

The quantitative data from this evaluation provides objective metrics on an ECHO program's reach, participant engagement, and impact. For example, Table 4 indicates that, on average, 59% of participants across ACS ECHO programs reported a likelihood of implementing the information presented within the next month, while 91% anticipated doing so within the next 6 months. Differences between programs may be due to the nature of each ACS ECHO program, as some topics could be easier to integrate into practice while others may require administrative support. These high rates suggest a strong, immediate commitment among participants to apply learned knowledge, which is crucial for timely improvements in patient care, particularly in the field of cancer. Moreover, Table 5 reveals an average mean difference increase in knowledge of +0.84 post-program, with the highest gains observed in Program B (+1.06), underscoring the program's success in enhancing understanding in the key areas presented. The size of the knowledge gain is potentially due to the targeted audience and their years of experience. Confidence levels also improved notably, as evidenced in Table 6, which shows an average increase of +0.77, with the highest increase of +0.84 in Program C, indicating that participants felt more capable of applying their knowledge effectively.

These metrics offer tangible, comparable data points that expose potential trends and inform programmatic decisions. The high percentage of participants planning to use the knowledge soon after the program, coupled with increases in both knowledge and confidence, aligns with the ECHO Model's goal of fostering continuous education and knowledge transfer, ultimately aiming to improve patient care in targeted communities.

The results of this quantitative investigation suggest that ACS ECHO programs, including Programs A, B, C, and D, have a positive impact on healthcare professional competencies and, indirectly, patient outcomes.

Attendance Rates

ACS ECHO programs with fewer participants, particularly Programs B and C, demonstrated the highest gains in knowledge and confidence, respectively. Program B, with only 45 participants, showed the highest knowledge gain (+1.06), while Program C, with 59 participants, reported the highest confidence gain (+0.84). Conversely, Program D, with 132 participants, showed the lowest knowledge gain (+0.61), while Program A, with 195 participants, reported the lowest confidence gain (+0.69). This trend suggests that smaller programs may create more effective learning environments, potentially allowing for greater retention, personalized engagement, and attention. Smaller programs may therefore maximize participant benefits, including gains in knowledge and confidence.

Survey Response Rates and Data Collection Consistency

The variability in survey response rates across ACS ECHO programs suggests that program format may play a significant role in participant engagement. As shown in Table 3, private programs demonstrated consistently higher response rates, averaging 67%, compared to the public program, which had a lower response rate of 52%. This difference underscores the potential influence of structured, closed-group learning environments in fostering participant accountability and engagement.

A possible explanation for this trend is the environment of private ACS ECHO programs. Smaller, more structured groups may create a greater sense of community and mutual responsibility among participants, leading to more consistent engagement. Additionally, private programs may facilitate closer interactions between subject matter experts and participants. These insights highlight the need to consider program structure when developing evaluations, as higher response rates can yield more robust and reliable outcome assessments. Future studies might explore strategies to enhance response rates in public programs, such as increasing participant follow-up or incorporating incentives.

Years of Experience Compared to Knowledge and Confidence Gains

Participants’ years of experience show an observable inverse relationship with knowledge and confidence gains across ACS ECHO programs. Program A, the largest program with 195 participants, has a high proportion (41%) of highly experienced professionals, or those with over 20 years of experience. Meanwhile, Program B is the smallest program, with 45 participants, but has the largest percentage of participants (43%) with less than 2 years of experience. ACS ECHO programs with a higher proportion of early-career professionals, such as Program B, show more substantial gains in knowledge (+1.06) compared to programs with a higher proportion of experienced professionals (+0.72). This suggests that less experienced participants may gain the most from ACS ECHO programs, which is likely due to the foundational knowledge being presented. ACS ECHO programs with a mix of experience levels also reported positive outcomes, indicating that inclusion of both early-career and more experienced professionals may enhance the learning environment by fostering a diverse range of perspectives that feed into productive conversation.

Program B had the highest knowledge gain (+1.06), while Program C had the highest confidence gain (+0.84), suggesting that knowledge and confidence improvements may not always increase uniformly. Confidence gains are highest (+0.84) in Program C, where a moderate mix of years of experience in addition to a smaller participant count appears to create an effective learning environment that builds confidence in applying the new skills being presented.

Inclusion of Qualitative Data

Through the integration of qualitative insights, we provide a broader and more holistic view of an ECHO program's effectiveness. The quotes, while anecdotal, revealed that awareness of services and resources may be an underlying factor behind participants’ likeliness to use the information presented in the ECHO session. Similarly, many of the themes helped validate the quantitative findings, as some participants openly reported that they were not knowledgeable or confident in the topic until they attended the sessions. Altogether, the synthesis of themes and quotes alongside the primary quantitative outcomes helps to humanize the data through the lens of ECHO participants and provides deeper contextual understanding of why these positive outcomes occurred.

Limitations of Data Collection

A major limitation of this study is the potential bias from a low response rate, with only 40% of participants completing surveys across the four ACS ECHO programs. Nonresponse bias may have skewed findings, as those who struggled with ECHO recommendations or saw minimal patient improvements might have been less likely to participate, overrepresenting positive outcomes.

Additionally, while carefully developed, the measures used have inherent limitations, including response bias in self-reported data. The lack of survey completion further exacerbates nonresponse bias, as individuals with less favorable experiences may have opted out. Future studies should focus on improving response rates to enhance the representativeness of findings.

Limitations of Data Analysis Methods

ACS ECHO programs were analyzed primarily using descriptive statistics, which are useful for evaluating trends over time but are constrained by the small sample size. This limitation prevents the use of inferential statistics, reducing the statistical power of the findings. 11 While the sample size is sufficient for detecting broad trends, its small scale restricts subgroup analyses, potentially obscuring differences among healthcare professionals based on experience or specialty.

The low response rate further impacts the generalizability of findings, as a small, potentially nonrepresentative sample of clinical professionals makes it difficult to draw broader conclusions. 12 Additionally, missing data adds another challenge, as its handling—whether through imputation or exclusion—could influence outcomes. 13 Given the relatively small dataset, missing values may have an amplified effect, warranting careful consideration in future studies. Sensitivity analyses and alternative strategies for addressing missing data should be explored to strengthen the reliability of findings. 14 Future investigations should aim to increase response rates and sample sizes to enhance statistical robustness and allow for more nuanced analyses of ECHO program participation.
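One simple form of sensitivity analysis for nonresponse is to recompute the mean change under different assumptions about participants who did not complete surveys. The sketch below is illustrative only: the change scores, the nonrespondent count, and the function `mean_with_assumed_change` are hypothetical, and this bounding approach is an example technique rather than a method used in this study.

```python
from statistics import mean

# Hypothetical pre-to-post change scores for survey respondents
# (illustrative only, not study data).
observed_changes = [1, 1, 0, 2, 1, 0]

# Hypothetical number of participants who returned no survey data.
n_nonrespondents = 4


def mean_with_assumed_change(observed, n_missing, assumed_change):
    """Mean change if every nonrespondent had the same assumed change."""
    return mean(observed + [assumed_change] * n_missing)


# Complete-case estimate: respondents only.
complete_case = mean(observed_changes)

# Pessimistic scenario: nonrespondents experienced no change at all.
pessimistic = mean_with_assumed_change(observed_changes, n_nonrespondents, 0)

# Neutral scenario: nonrespondents changed as much as the average respondent.
neutral = mean_with_assumed_change(observed_changes, n_nonrespondents, complete_case)

print(f"Complete-case mean change: {complete_case:+.2f}")
print(f"If nonrespondents had no change: {pessimistic:+.2f}")
print(f"If nonrespondents matched respondents: {neutral:+.2f}")
```

The gap between the complete-case and pessimistic estimates gives a rough sense of how strongly a conclusion depends on who responded.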

Conclusion

This study suggests that ACS ECHO programs enhance knowledge on critical cancer topics and boost healthcare professional confidence, positively impacting patients across the cancer continuum. These programs address the burden of cancer by equipping professionals with tailored knowledge and best practices to help patients prevent, detect, treat, and survive cancer.

To sustain impact, ACS is implementing standardized evaluation protocols and quality benchmarks to ensure program consistency. A structured and robust quantitative evaluation framework strengthens implementation and provides insights for replication and scaling to other areas of cancer care.

While this study highlights the effectiveness of ACS ECHO programs, addressing limitations in future evaluations is essential. Expanding participation, incorporating objective data sources, and mitigating missing data and nonresponse bias will provide stronger evidence of impact. These steps will ensure ACS ECHO programs continue to democratize healthcare knowledge, enhance professional capacity, and improve outcomes for underserved communities affected by cancer.

Supplemental Material

Supplemental material, sj-docx-1-mde-10.1177_23821205251358088 for A Quantitative Approach to Data Collection and Analysis of ECHO Programs Focused on Cancer Care by Jennifer McBride, Patrick Edwards, Mindi Odom and Rich Killewald in Journal of Medical Education and Curricular Development

Footnotes

Acknowledgements

The authors would like to extend sincere gratitude to the ACS for their visionary leadership in advancing healthcare education and the commitment to improving the lives of people with cancer and their families through advocacy, research, and patient support, to ensure everyone has an opportunity to prevent, detect, treat, and survive cancer.

We are deeply grateful to the ECHO and Data and Impact Reporting Teams for their invaluable support and contribution to ACS ECHO programs. Your expertise and commitment to fostering knowledge sharing have been integral to this study. We are also deeply grateful to all ACS team members across the organization that support and work on our ever-growing ECHO portfolio.

We also acknowledge all ACS ECHO program participants, whose dedication to professional growth and patient care continues to drive and inspire our efforts.

Ethical Considerations

All ACS ECHO programs were approved by Morehouse College Institutional Review Board: #2053363-1 on May 1, 2023; #2153720-1 on January 24, 2024; and #2190195-1 on May 8, 2024.

Consent to Participate

All participants consented to participation through their registration and attendance to an ECHO program. Participation in any ECHO program is completely voluntary, and participants can withdraw at any time.

Author Contributions

JM and RK conceptualized and designed the study. JM, MO, and PE collected the data. JM and PE analyzed and interpreted the data. JM and MO produced the first draft of the paper, but all authors contributed to drafting and refinement. All authors approved the final manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: We gratefully acknowledge the support of the ACS, CEOs Against Cancer, EMD Serono, Merck, and the National Football League. Their contributions, and continued support, made this work possible. The funders were not involved in the study design, data collection, data analysis, manuscript preparation, or decision to publish.

Declaration of Conflicting Interest

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Due to participant confidentiality, the datasets generated and analyzed in this study are not publicly available. However, summaries of the findings are provided within the manuscript, and further details may be made available upon reasonable request to the corresponding author.

Supplemental Material

Supplemental material for this article is available online.

References
